DOI:10.32604/cmc.2022.021299

Computers, Materials & Continua

Article

Artificial Intelligence Enabled Apple Leaf Disease Classification for Precision Agriculture

1Department of Computer Science, King Khalid University, Muhayel Aseer, Saudi Arabia

2Faculty of Computer and IT, Sana'a University, Yemen

3Department of Information Systems, College of Computer and Information Sciences, Princess Nourah Bint Abdulrahman University, Saudi Arabia

4Department of Computer and Self Development, Preparatory Year Deanship, Prince Sattam bin Abdulaziz University, Alkharj, Saudi Arabia

*Corresponding Author: Fahd N. Al-Wesabi. Email: falwesabi@kku.edu.sa

Received: 28 June 2021; Accepted: 29 July 2021

Abstract: Precision agriculture uses recent technological advances in the farming sector to observe, measure, and analyze the requirements of individual fields and crops. Recent developments in computer vision and artificial intelligence (AI) enable the effective detection of plants, diseases, weeds, pests, etc. In particular, detecting plant diseases such as apple leaf diseases with AI techniques can improve productivity and reduce crop loss, and early and precise detection can limit the spread of the disease. Earlier works rely on traditional image processing techniques, which cannot assure a high detection rate for apple leaf diseases. With this motivation, this paper introduces a novel AI enabled apple leaf disease classification (AIE-ALDC) technique for precision agriculture. The proposed AIE-ALDC technique involves orientation based data augmentation and Gaussian filtering based noise removal. In addition, it includes a Capsule Network (CapsNet) based feature extractor to generate a useful set of feature vectors. Moreover, the water wave optimization (WWO) technique is employed as a hyperparameter optimizer for the CapsNet model. Finally, a bidirectional long short term memory (BiLSTM) model is used as a classifier to determine the appropriate class labels of the apple leaf images. The novelty of the work lies in the design of the AIE-ALDC technique, which combines the WWO based CapsNet model with the BiLSTM classifier. A wide range of experiments was performed to evaluate the AIE-ALDC technique, and the results demonstrate its promising performance over recent state-of-the-art methods.

Keywords: Artificial intelligence; apple leaf; plant disease; precision agriculture; deep learning; data augmentation

Plant phenotyping and precision agriculture are information and technology based domains with specific challenges and demands for the detection and diagnosis of plant diseases [1]. Their aim is to achieve a robust, real-world mapping of environment, crop, and soil parameters to facilitate management decisions [2]. In contrast, conventional agricultural management assumes that the parameters in crop fields are homogeneous, which leads to crop health management and pesticide application that are not clearly related to the current disease condition. Enhancing agricultural productivity needs advanced solutions that provide improved quality and yield for outdoor and indoor farming [3]. Farmers require precise techniques for obtaining and interpreting data in order to control crop development, avoid losses caused by adverse climate conditions or infectious pests, and thereby secure a return on investment [3]. Every year, plant diseases cause substantial damage to the global harvest, costing an estimated US$220 billion. Plant disease assessment, i.e., the measurement of pathogens or disease (phytopathometry), is essential for estimating crop loss and disease intensity. The abundant use of chemicals such as nematicides, bactericides, and fungicides to control plant diseases has had adverse impacts on several agroecosystems. A reliable and accurate method is therefore required to improve plant disease detection and severity estimation.

Traditionally, plant diseases were diagnosed through visual observation by professionals. However, this carries a risk of error because of subjective perception [4]. For this reason, several spectroscopic and imaging methods have been investigated for plant disease detection. However, they need precise, bulky sensors and instruments, which leads to higher costs and lower performance. Recently, with the popularization of digital cameras and other electronic devices, automated plant disease diagnosis through machine learning (ML) has been broadly employed as a suitable alternative [5]. ML methods are well suited to plant images with uniform backgrounds taken under ideal laboratory conditions. In recent times, deep learning (DL) and convolutional neural networks (CNNs) have brought significant progress in computer vision, and several related practical and theoretical achievements have been reported [6]. Since CNNs can extract features directly and automatically from the input images, thereby avoiding complicated image preprocessing, they have become a focus of research in object identification. However, real-world apple leaf disease detection (ALDD) remains difficult for the following reasons. Firstly, several diseases might occur on the same leaf [7]. Additionally, the spots of apple leaf disease are tiny. Lastly, environmental factors such as soil, shadow, and illumination also affect apple leaf disease detection.

This paper introduces a novel AI enabled apple leaf disease classification (AIE-ALDC) technique for precision agriculture. The proposed AIE-ALDC technique encompasses orientation based augmentation and Gaussian filtering (GF) based noise removal. In addition, the AIE-ALDC technique includes a Capsule Network (CapsNet) based feature extractor to generate a useful set of feature vectors. Moreover, the water wave optimization (WWO) technique is employed as a hyperparameter optimizer for the CapsNet model. Finally, a bidirectional long short term memory (BiLSTM) model is used as a classifier to determine the appropriate class labels of the apple leaf images. A wide range of experiments was carried out to evaluate the performance of the AIE-ALDC technique.

The paper organization is given as follows. Section 2 offers the related works, Section 3 elaborates the proposed model, Section 4 provides the performance validation, and Section 5 concludes the paper.

Liu et al. [8] presented an accurate detection technique for apple leaf diseases based on a deep CNN (DCNN). It consists of generating sufficient pathological images and designing a new DCNN framework based on AlexNet for detecting apple leaf diseases. Chouhan et al. [9] presented a technique called the bacterial foraging optimization based radial basis function neural network (BRBFNN) for the automatic identification and classification of plant leaf diseases. Bacterial foraging optimization is used to assign optimal weights to the radial basis function NN, which further raises the accuracy and speed of the network in identifying and classifying the areas affected by distinct diseases on the plant leaves. The region growing method increases the performance of the network by searching for and grouping seed points that share common attributes during feature extraction.

In Khan et al. [10], a novel technique is presented for apple disease identification and recognition. Initially, the apple leaf spots are enhanced by a hybrid technique that combines de-correlation, three-dimensional box filtering, Gaussian, and median filters. Next, the lesion spots are segmented by a strong correlation based technique, and the result is refined by combining it with expectation maximization (EM) segmentation. The experiments are carried out on the PlantVillage dataset. In Yu et al. [11], a novel DL framework is presented that considers a leaf spot attention mechanism. To realize this, two subnetworks are used. The first is a feature segmentation subnetwork that provides more discriminative features for the spot areas, background, and leaf areas in the feature map.

Xie et al. [12] proposed a real-time detector for grape leaf diseases based on an improved DCNN. The study first expands the grape leaf disease images via digital image processing techniques, creating the grape leaf disease dataset (GLDD). In Agarwal et al. [13], a CNN model was developed for identifying diseases in apple; it has 3 convolution layers, 3 max pooling layers, and 2 densely connected layers. The model was obtained after varying the number of convolution layers from two to six, which showed that three layers gave the best accuracy. For comparison, conventional ML methods were also applied to the same dataset, along with prominent pretrained CNN models such as InceptionV3 and VGG16. Bi et al. [14] proposed a low-cost, stable, and high-accuracy apple leaf disease detection technique based on the MobileNet model. Firstly, compared with common DL models, it is low cost since it can easily be deployed on mobile devices. Secondly, anyone can carry out apple leaf disease inspection stably with this approach, without a skilled expert. Lastly, the accuracy of MobileNet is almost the same as that of existing complex DL models. Some other methods are also available in the literature [15–19].

The workflow involved in the AIE-ALDC technique is demonstrated here. The proposed AIE-ALDC technique encompasses orientation based augmentation, GF based preprocessing, CapsNet based feature extraction, WWO based parameter tuning, and BiLSTM based classification. The detailed working processes involved in the AIE-ALDC technique are offered in the succeeding sections.

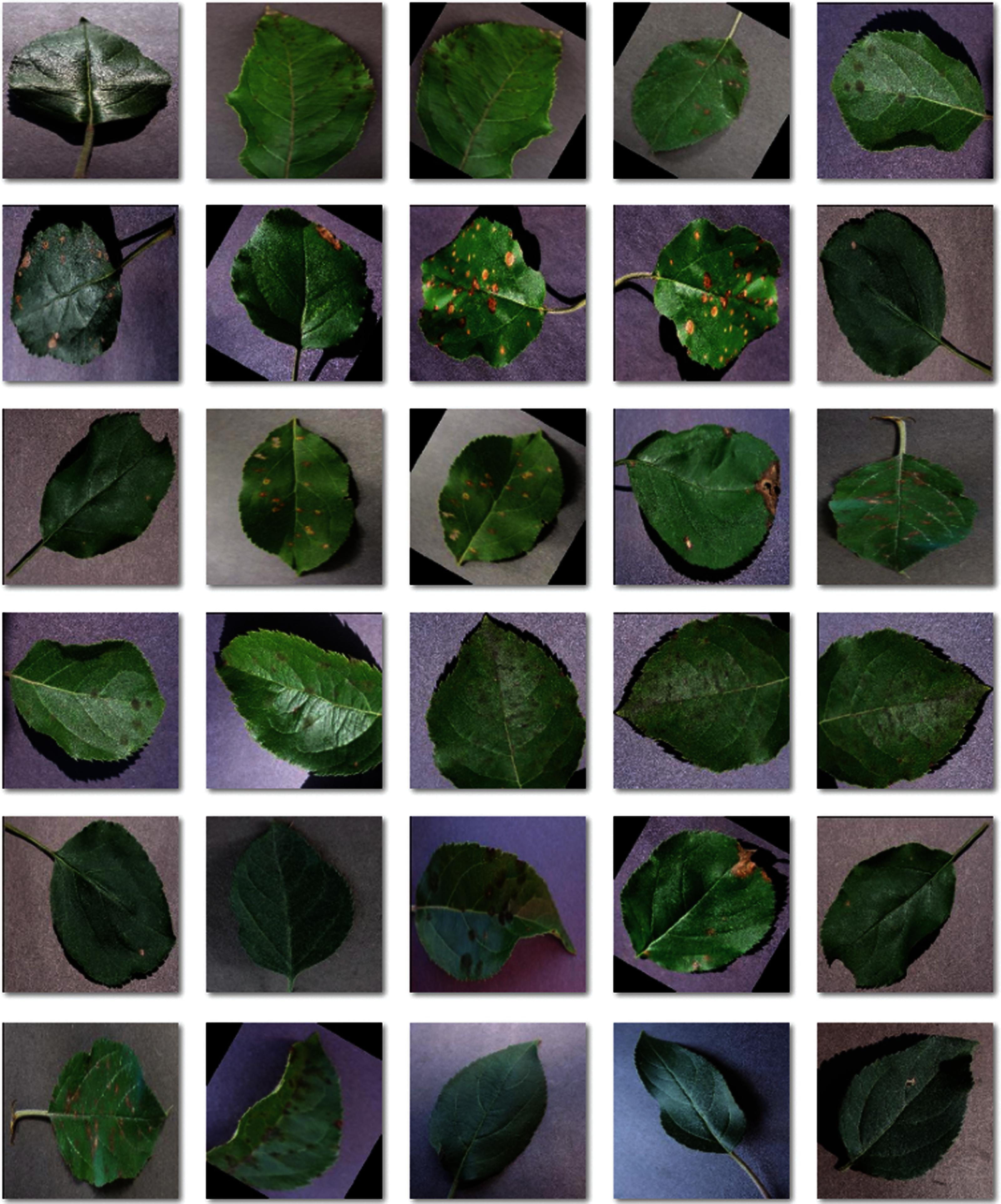

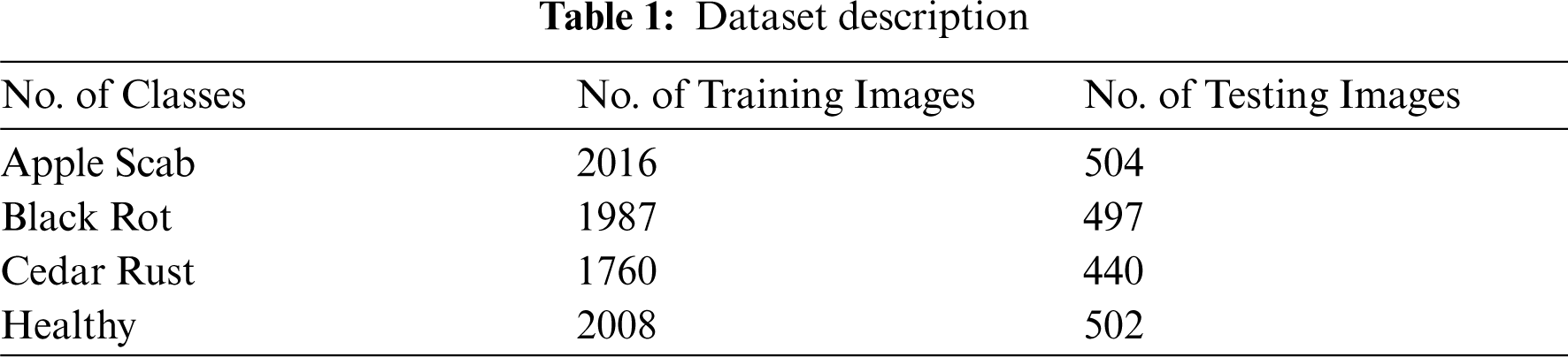

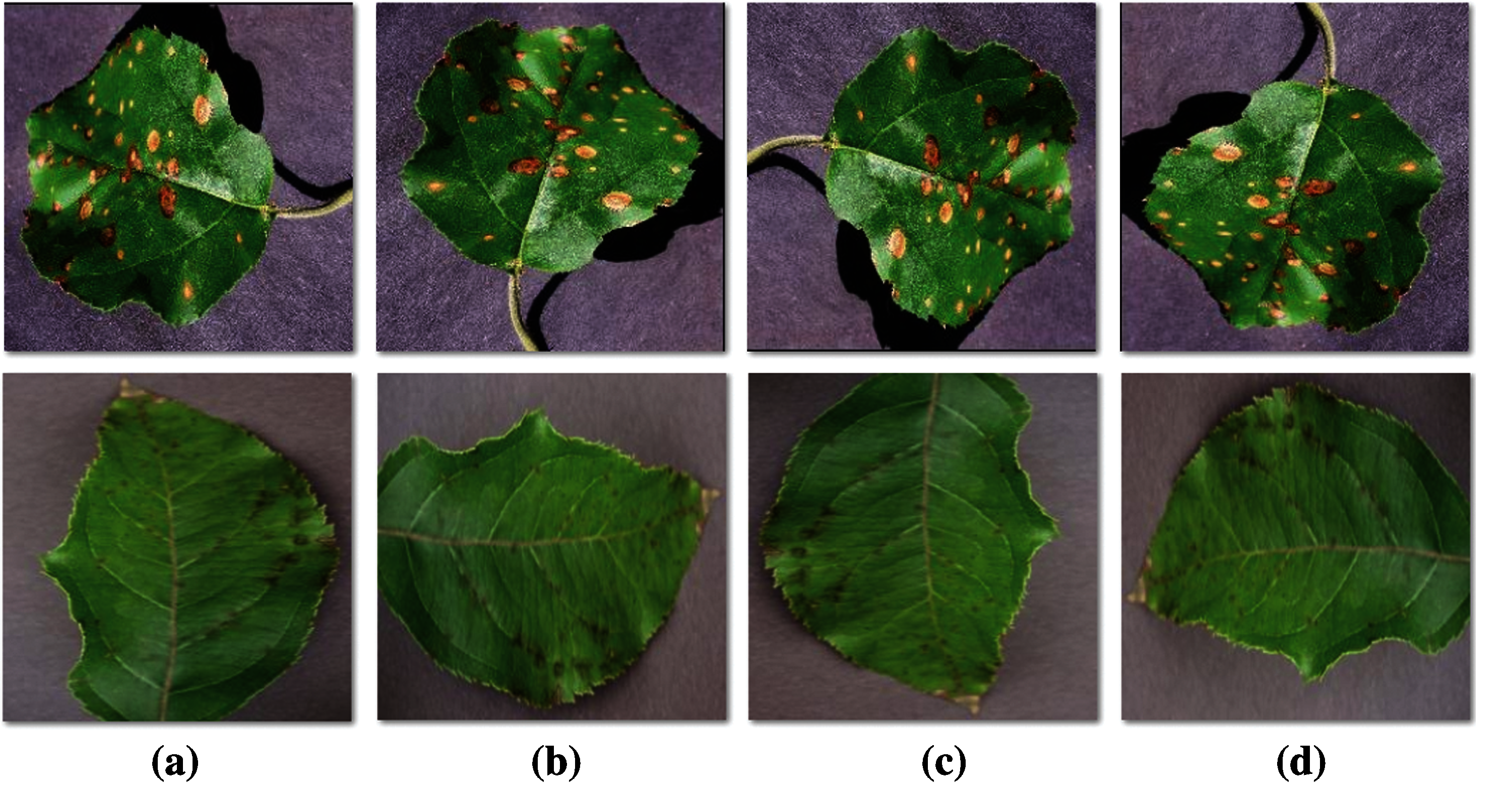

The performance of the AIE-ALDC technique is validated using a new plant disease dataset [20]. The dataset comprises images from four classes: Apple Scab, Black Rot, Cedar Rust, and Healthy. The Apple Scab class contains 2016 training images and 504 testing images. The Black Rot class includes 1987 training images and 497 testing images. The Cedar Rust class encompasses 1760 training images and 440 testing images. Finally, the Healthy class contains 2008 training images and 502 testing images. The details of the dataset are shown in Tab. 1, and some sample test images are illustrated in Fig. 1.
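As a quick check of the figures quoted above, the per-class counts can be tallied to obtain the overall split. The dictionary below simply restates the numbers from the paragraph; the variable names are illustrative and not taken from the original work.

```python
# Per-class (training, testing) image counts as quoted in the text.
counts = {
    "Apple Scab": (2016, 504),
    "Black Rot": (1987, 497),
    "Cedar Rust": (1760, 440),
    "Healthy": (2008, 502),
}

total_train = sum(train for train, _ in counts.values())
total_test = sum(test for _, test in counts.values())

# Fraction of each class that is held out for testing.
test_fraction = {
    name: test / (train + test) for name, (train, test) in counts.items()
}

print(total_train, total_test)
for name, fraction in test_fraction.items():
    print(f"{name}: {fraction:.3f}")
```

Under these counts the dataset holds 7771 training and 1943 testing images, which corresponds to roughly an 80/20 split within every class.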

Figure 1: Sample images

3.2 Data Augmentation and Data Pre-Processing

In an apple orchard, the position of the image acquisition device relative to the object under study is determined by their spatial relation at the shooting location. It is therefore difficult to photograph apple leaf pathological images from every angle to cover all possibilities. In this subsection, to build and test the adaptability of the CNN based method, an expanded image dataset is created from the natural images using rotation and mirror symmetry transformations. An image is rotated when all of its pixels are rotated by a given angle about the centre of the image. Let P0(x0, y0) denote an arbitrary point of the image; after rotating by θ° anticlockwise, the coordinates of the point are P(x, y). The coordinates of the two points are related as given in Eqs. (1) and (2).

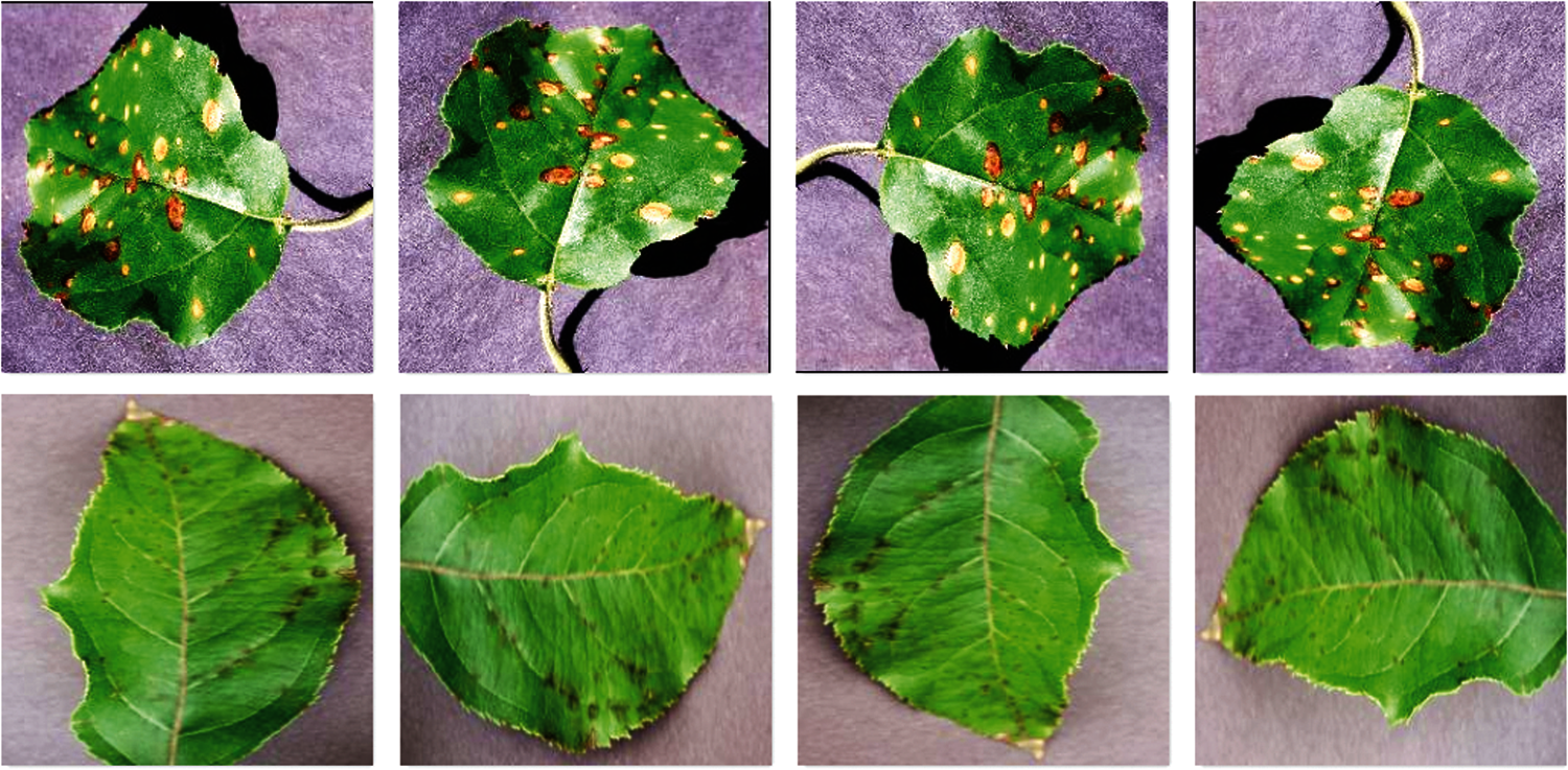

As displayed, a pathological image is rotated and mirrored to generate 4 pathological images, where the rotation angles are 90°, 180°, and 270°.
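The augmentation described above can be sketched in a few lines of standard-library Python. This is a minimal illustration and not the authors' implementation: images are represented as lists of rows, the function names are hypothetical, and `rotate_point` uses the textbook anticlockwise rotation about the origin in a right-handed coordinate system, so its sign convention may differ from the one used in Eqs. (1) and (2).

```python
import math

def rotate_point(x0, y0, theta_deg):
    """Rotate (x0, y0) anticlockwise about the origin by theta_deg degrees."""
    t = math.radians(theta_deg)
    x = x0 * math.cos(t) - y0 * math.sin(t)
    y = x0 * math.sin(t) + y0 * math.cos(t)
    return x, y

def rot90(img):
    """Rotate a 2-D image (list of rows) by 90 degrees anticlockwise."""
    # Transpose the image, then reverse the order of the rows.
    return [list(row) for row in zip(*img)][::-1]

def mirror(img):
    """Mirror an image horizontally (left-right flip)."""
    return [row[::-1] for row in img]

def augment(img):
    """Return the 90/180/270 degree rotations and the mirrored image."""
    r90 = rot90(img)
    r180 = rot90(r90)
    r270 = rot90(r180)
    return {"rot90": r90, "rot180": r180, "rot270": r270, "mirror": mirror(img)}

sample = [[1, 2],
          [3, 4]]
augmented = augment(sample)
for name, view in augmented.items():
    print(name, view)
```

Rotations by multiples of 90° only permute pixels, so no interpolation is needed; arbitrary angles would require resampling, which this sketch does not cover.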

Fig. 2 shows sample images produced by the data augmentation process. Fig. 2a depicts the original image, and Figs. 2b–2d depict the images produced after rotation by 90°, 180°, and 270°. The figure shows that the input images are effectively augmented into different views.

Figure 2: Sample augmented images (a) Original image (b) 90° Rotated image (c) 180° Rotated image (d) 270° Rotated image

Two dimensional Gaussian filters are widely used for noise removal and smoothing. Their implementation requires considerable processing resources, and efficient implementation remains an active research topic. The convolution operator is defined by the Gaussian operator, and Gaussian smoothing is achieved through convolution. The Gaussian operator in one dimension is given by:

$$G_{1D}(x) = \frac{1}{\sqrt{2\pi}\,\sigma}\, e^{-\frac{x^2}{2\sigma^2}}$$

The optimal smoothing filter for an image is localized in both the spatial and frequency domains, where the uncertainty relation is satisfied:

$$\Delta x\, \Delta\omega \geq \frac{1}{2}$$

The Gaussian operator in two dimensions is given by:

$$G_{2D}(x, y) = \frac{1}{2\pi\sigma^2}\, e^{-\frac{x^2 + y^2}{2\sigma^2}}$$

where σ (sigma) denotes the standard deviation of the Gaussian function. The larger its value, the stronger the image smoothing.
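To make the smoothing step concrete, the following sketch builds a normalized two-dimensional Gaussian kernel and applies it to a small grayscale image. It is an illustration under stated assumptions: the kernel size, the value of σ, the edge-replication border handling, and the function names are all choices made here, since the text does not specify them.

```python
import math

def gaussian_kernel(size, sigma):
    """Build a normalized size x size Gaussian kernel (size must be odd)."""
    half = size // 2
    kernel = [[math.exp(-(x * x + y * y) / (2.0 * sigma * sigma))
               for x in range(-half, half + 1)]
              for y in range(-half, half + 1)]
    total = sum(sum(row) for row in kernel)
    # Normalizing keeps the overall image brightness unchanged.
    return [[value / total for value in row] for row in kernel]

def gaussian_filter(img, size=3, sigma=1.0):
    """Smooth a grayscale image (list of rows) using edge replication."""
    kernel = gaussian_kernel(size, sigma)
    half = size // 2
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for di in range(-half, half + 1):
                for dj in range(-half, half + 1):
                    # Clamp indices so border pixels reuse the nearest edge value.
                    ii = min(max(i + di, 0), h - 1)
                    jj = min(max(j + dj, 0), w - 1)
                    acc += kernel[di + half][dj + half] * img[ii][jj]
            out[i][j] = acc
    return out

# A single bright "noise" pixel on a flat background.
noisy = [[10, 10, 10],
         [10, 100, 10],
         [10, 10, 10]]
smoothed = gaussian_filter(noisy, size=3, sigma=1.0)
print(round(smoothed[1][1], 2))
```

Because the kernel is symmetric, sliding it over the image is the same as convolving with it. Increasing `sigma` spreads the weights more evenly across the window and therefore smooths more strongly, in line with the remark on σ above.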

Fig. 3 displays the sample set of pre-processed images produced by the GF technique. From the figure, it is obvious that the quality of the images is considerably enhanced by the GF technique.

Figure 3: Preprocessed images

3.3 Feature Extraction: WWO-Capsnet Model

Convolutional neural networks (CNNs), or ConvNets for short, are a specific kind of feed-forward neural network (FFNN). Like ordinary neural networks, they are composed of neurons with learnable weights and biases. The significant difference is that the CNN architecture makes the explicit assumption that the input is an image, which permits encoding specific properties into the architecture. In particular, convolution captures translation invariance (viz., the filter is independent of position). This in turn makes the forward function more efficient, massively decreases the number of parameters, and thus makes the network easier to optimize and less dependent on the size of the data. Like an MLP, a CNN consists of a sequence of layers, in which every layer transforms the activations or outputs of the previous layer through another differentiable function. Many kinds of layers are used in CNNs, but the most common building blocks found in almost every CNN architecture are the convolutional layer, the pooling layer, and the fully connected layer. Essentially, these layers act as feature extractors, dimensionality reduction layers, and classification layers, respectively. These layers are stacked to form a full convolutional network. The sliding filters are generally implemented as a convolution which, being a linear operator, can be expressed as a dot product for efficient implementation. Every convolution layer has a whole set of filters, each of which generates a distinct activation map. These activation maps are stacked to obtain the output map or activation volume of the layer.
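The idea of sliding filters that each produce one activation map can be illustrated with a small example. The sketch below is only a toy: the image, the two hand-written edge filters, and the function names are assumptions made for illustration, and, as in most DL frameworks, the kernel is applied without flipping (cross-correlation).

```python
def conv2d_valid(img, kernel):
    """Slide one kernel over the image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    h, w = len(img), len(img[0])
    out = []
    for i in range(h - kh + 1):
        row = []
        for j in range(w - kw + 1):
            # Each output value is the dot product of the kernel and one patch.
            row.append(sum(kernel[a][b] * img[i + a][j + b]
                           for a in range(kh) for b in range(kw)))
        out.append(row)
    return out

def conv_layer(img, filters):
    """Apply every filter and stack the resulting activation maps."""
    return [conv2d_valid(img, f) for f in filters]

# Toy image with a vertical edge between the second and third columns.
image = [[0, 0, 1, 1],
         [0, 0, 1, 1],
         [0, 0, 1, 1],
         [0, 0, 1, 1]]

filters = [
    [[-1, 1], [-1, 1]],   # responds to vertical edges
    [[-1, -1], [1, 1]],   # responds to horizontal edges
]

activation_maps = conv_layer(image, filters)
for fmap in activation_maps:
    print(fmap)
```

The same filter weights are reused at every position, which is why the vertical-edge filter responds wherever the edge appears and why a convolution layer needs far fewer parameters than a fully connected one.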

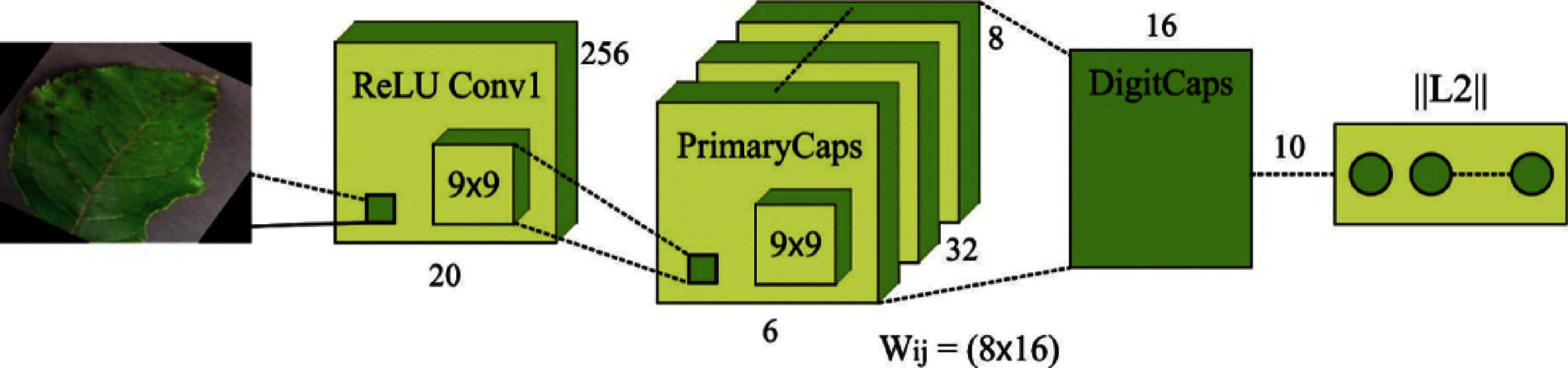

CapsNet is an active area of research [21]. The fundamental unit of CapsNet is the capsule, which is a group of neurons. The length of a capsule's output vector provides the invariant part of the representation, whereas the features needed to recreate the image provide the equivariant part. Capsules can thus produce vectors of the same magnitude with distinct orientations. Following the formulation in [21], the output of capsule i is denoted $u_i$, and its prediction for a capsule j in the next layer is

$$\hat{u}_{j|i} = W_{ij}\, u_i$$

where $W_{ij}$ is a learned weight matrix.

Figure 4: Structure of CapsNet

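An activation function called squashing shrinks the output vector towards 0 when it is short and towards a unit vector when it is long, so that the capsule length lies between 0 and 1. Following [21], the activity vector $v_j$ of capsule j is computed from its total input $s_j$ as

$$v_j = \frac{\|s_j\|^2}{1 + \|s_j\|^2}\, \frac{s_j}{\|s_j\|}$$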
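The squashing behaviour described above can be checked numerically. The sketch below assumes the squashing function from the original CapsNet formulation [21], in which a vector s is scaled by ‖s‖² / (1 + ‖s‖²) along its own direction; the function names and the small `eps` guard against division by zero are additions made here.

```python
import math

def squash(s, eps=1e-9):
    """Scale vector s so that its length lies in [0, 1), keeping its direction."""
    sq_norm = sum(v * v for v in s)
    norm = math.sqrt(sq_norm)
    # eps avoids a division by zero for the all-zero vector.
    scale = sq_norm / (1.0 + sq_norm) / (norm + eps)
    return [scale * v for v in s]

def length(v):
    """Euclidean length of a vector."""
    return math.sqrt(sum(x * x for x in v))

short_out = squash([0.01, 0.0])   # a short input is shrunk towards zero
long_out = squash([30.0, 40.0])   # a long input approaches unit length

print(length(short_out), length(long_out))
```

The direction of the vector is unchanged, so the orientation information carried by the capsule is preserved while its length can be read as a value between 0 and 1.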

Once the images are augmented and preprocessed, the WWO-CapsNet technique is applied to generate a useful set of feature vectors.

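WWO is an effective and well-known metaheuristic that has attracted considerable attention from researchers; it maps the search space of a problem to a seabed [22]. Each water wave (WW) represents a solution and has a height $h$ and a wavelength $\lambda$, and its fitness is inversely related to the depth of the seabed below it.

In each iteration, all WWs are propagated. Following the formulation in [22], propagation creates a new WW $x'$ from the current WW $x$ by shifting each dimension $d$:

$$x'(d) = x(d) + \mathrm{rand}(-1, 1)\cdot\lambda\, L(d)$$

where $\mathrm{rand}(-1, 1)$ is a uniform random number in $[-1, 1]$ and $L(d)$ is the length of the d-th dimension of the search space. If $x'$ is fitter than $x$, it replaces $x$ and its height is reset to $h_{max}$; otherwise $x$ is kept and its height is decreased by one. After each generation, the wavelength of every WW is updated as

$$\lambda = \lambda\cdot\alpha^{-\left(f(x) - f_{min} + \epsilon\right)/\left(f_{max} - f_{min} + \epsilon\right)}$$

where $f_{max}$ and $f_{min}$ are the maximum and minimum fitness values in the current population, $\alpha$ is the wavelength reduction coefficient, and $\epsilon$ is a small positive number that avoids division by zero.

In the standard WW model, the increasing energy of a WW makes its crest steeper. When the crest velocity exceeds the wave speed, the WW breaks into a train of solitary waves. In WWO, the breaking operation is applied to a newly found best solution $x^*$ once the propagation operation has finished. A number $k$ of dimensions is chosen at random, and a solitary wave $x'$ is generated at each chosen dimension $d$:

$$x'(d) = x^*(d) + N(0, 1)\cdot\beta\, L(d)$$

where $\beta$ is the breaking coefficient and $N(0, 1)$ is a standard Gaussian random number.

A WW may also be refracted, particularly in steep regions with deep-sea features. To model the energy dissipation of a WW during propagation, the wave height is decreased after an unsuccessful propagation, and a WW whose height reaches zero is refracted to avoid search stagnation. Eq. (14) is applied for updating the position of each dimension after WW refraction:

$$x'(d) = N\left(\frac{x^*(d) + x(d)}{2},\ \frac{|x^*(d) - x(d)|}{2}\right) \tag{14}$$

where $x^*$ is the best solution found so far and $N(\mu, \sigma)$ is a Gaussian random number with mean $\mu$ and standard deviation $\sigma$.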
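The propagation, breaking, and refraction operators can be put together in a compact sketch. Everything below is an assumption made for illustration rather than the authors' implementation: the update rules follow the original WWO description in [22], adapted here to minimize a non-negative cost; the parameter defaults are arbitrary; and the `sphere` function is a hypothetical stand-in for the validation error of a CapsNet configuration.

```python
import random

def wwo_minimize(cost, bounds, n_waves=10, iters=200, h_max=6,
                 alpha=1.01, beta=0.1, k_max=3, seed=0):
    """Minimal WWO sketch that minimizes a non-negative cost inside bounds."""
    rng = random.Random(seed)
    dim = len(bounds)
    span = [hi - lo for lo, hi in bounds]  # L(d): length of each dimension
    eps = 1e-12

    def clip(x):
        return [min(max(v, lo), hi) for v, (lo, hi) in zip(x, bounds)]

    # Each wave stores a position x, its cost f, a height h and a wavelength lam.
    waves = []
    for _ in range(n_waves):
        x = [rng.uniform(lo, hi) for lo, hi in bounds]
        waves.append({"x": x, "f": cost(x), "h": h_max, "lam": 0.5})
    start = min(waves, key=lambda w: w["f"])
    best_x, best_f = start["x"][:], start["f"]

    for _ in range(iters):
        for w in waves:
            # Propagation: move every dimension by at most lam * L(d).
            x_new = clip([w["x"][d] + rng.uniform(-1.0, 1.0) * w["lam"] * span[d]
                          for d in range(dim)])
            f_new = cost(x_new)
            if f_new < w["f"]:
                w["x"], w["f"], w["h"] = x_new, f_new, h_max
                if f_new < best_f:
                    # Breaking: probe solitary waves around the new best wave.
                    k = rng.randint(1, min(k_max, dim))
                    for d in rng.sample(range(dim), k):
                        x_b = x_new[:]
                        x_b[d] += rng.gauss(0.0, 1.0) * beta * span[d]
                        x_b = clip(x_b)
                        f_b = cost(x_b)
                        if f_b < w["f"]:
                            w["x"], w["f"] = x_b, f_b
                    best_x, best_f = w["x"][:], w["f"]
            else:
                w["h"] -= 1
                if w["h"] == 0:
                    # Refraction: resample between the wave and the best solution.
                    x_r = clip([rng.gauss((best_x[d] + w["x"][d]) / 2.0,
                                          abs(best_x[d] - w["x"][d]) / 2.0)
                                for d in range(dim)])
                    f_r = cost(x_r)
                    w["lam"] *= (f_r + eps) / (w["f"] + eps)
                    w["x"], w["f"], w["h"] = x_r, f_r, h_max
                    if f_r < best_f:
                        best_x, best_f = x_r[:], f_r
        # Wavelength update: better (lower-cost) waves get shorter wavelengths.
        f_lo = min(w["f"] for w in waves)
        f_hi = max(w["f"] for w in waves)
        for w in waves:
            w["lam"] *= alpha ** (-(f_hi - w["f"] + eps) / (f_hi - f_lo + eps))
    return best_x, best_f


def sphere(x):
    """Toy cost standing in for a validation error (hypothetical)."""
    return sum(v * v for v in x)

best_x, best_f = wwo_minimize(sphere, bounds=[(-5.0, 5.0), (-5.0, 5.0)])
print(best_x, best_f)
```

In a hyperparameter-tuning setting, each dimension of `bounds` would correspond to one tunable quantity and `cost` would train and validate a model for the candidate values, which is far more expensive than the toy function used here.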

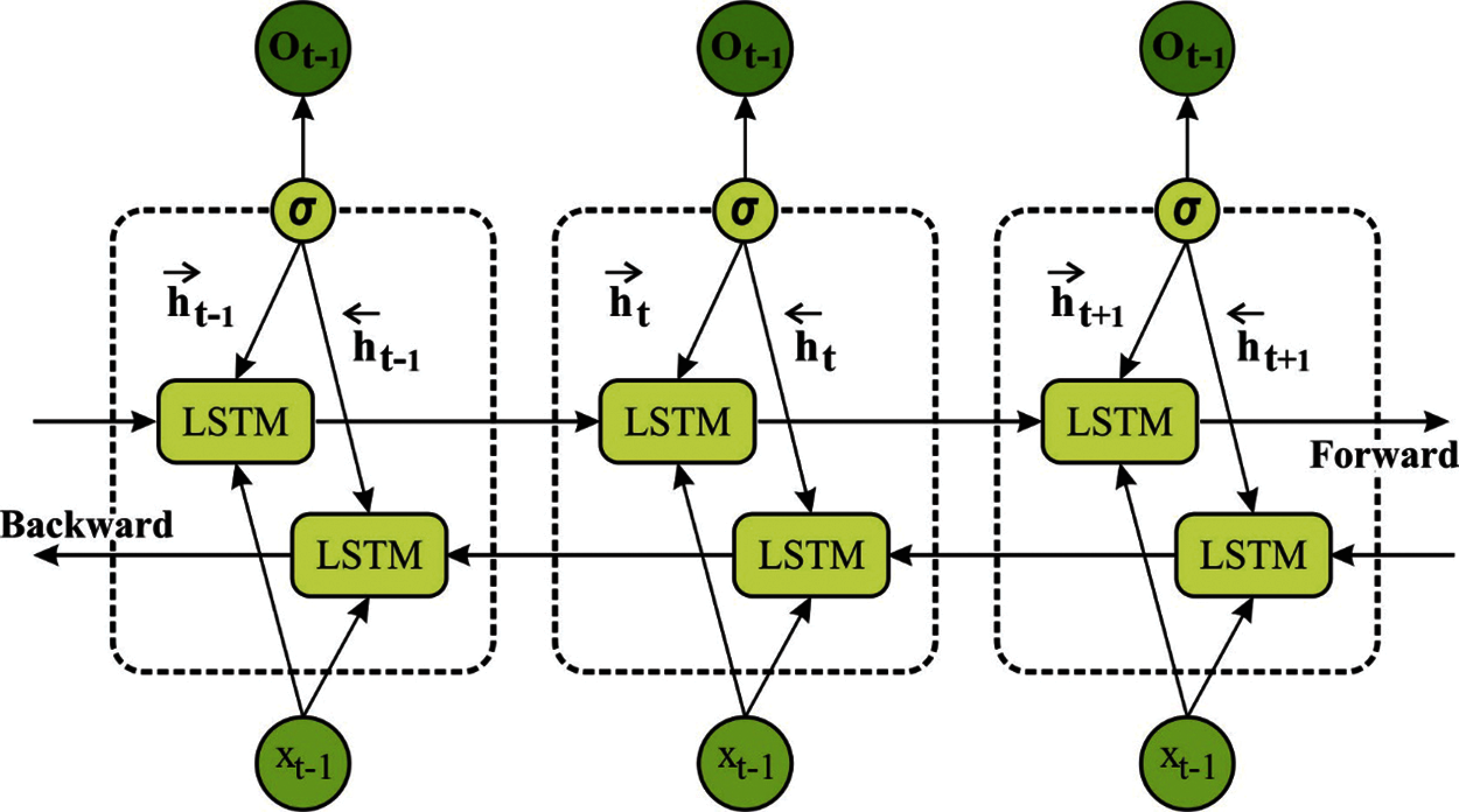

Finally, the BiLSTM model is used as a classifier to assign proper class labels to the input apple leaf images. In general, an RNN has difficulty learning long-term dependencies [24]. The LSTM is an extension of the RNN that can handle the vanishing gradient problem. To this end, the LSTM learns which data are important to preserve and which to remove. An LSTM cell typically has input, forget, and output gates. Fig. 5 demonstrates the architecture of LSTM.

Figure 5: Structure of LSTM

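Forget Gate. The sigmoid function is normally used to decide what should be removed from the LSTM memory. In the standard LSTM formulation, this decision depends on the values of $h_{t-1}$ and $x_t$. The output of this gate is $f_t$, a value between 0 and 1:

$$f_t = \sigma\left(W_f\,[h_{t-1}, x_t] + b_f\right)$$

where $W_f$ is the weight matrix and $b_f$ is the bias of the forget gate.

Input Gate. This gate decides which new data are stored in the LSTM memory. It is made up of two layers: a sigmoid layer and a “tanh” layer. The outputs of the 2 layers are computed by:

$$i_t = \sigma\left(W_i\,[h_{t-1}, x_t] + b_i\right)$$

$$\tilde{c}_t = \tanh\left(W_c\,[h_{t-1}, x_t] + b_c\right)$$

where $i_t$ indicates whether a value should be updated and $\tilde{c}_t$ is a vector of new candidate values. The memory is then updated by combining both layers:

$$c_t = f_t \odot c_{t-1} + i_t \odot \tilde{c}_t$$

where $f_t$ is the result of the forget gate and $\odot$ denotes element-wise multiplication.

Output Gate. It uses a sigmoid layer to decide which part of the LSTM memory contributes to the output. Next, a nonlinear tanh function maps the memory values to the range between $-1$ and $1$, and the result is multiplied by the output of the sigmoid layer:

$$o_t = \sigma\left(W_o\,[h_{t-1}, x_t] + b_o\right)$$

$$h_t = o_t \odot \tanh(c_t)$$

where $o_t$ is the output gate value and $h_t$ is the resulting hidden state.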
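The gate operations described above can be sketched as a single LSTM step, and a bidirectional layer then simply runs one LSTM forwards and one backwards over the sequence. The code assumes the standard LSTM cell equations; the weights are toy values, and how the CapsNet feature vectors are arranged into a sequence for the BiLSTM is not specified in the text, so the `features` list is purely hypothetical.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x_t, h_prev, c_prev, params):
    """One LSTM step. params[name] = (W, b), with W applied to [h_prev, x_t]."""
    z = h_prev + x_t  # concatenate previous hidden state and current input

    def gate(name, activation):
        W, b = params[name]
        return [activation(sum(w * v for w, v in zip(row, z)) + b_k)
                for row, b_k in zip(W, b)]

    f_t = gate("f", sigmoid)      # forget gate
    i_t = gate("i", sigmoid)      # input gate
    g_t = gate("g", math.tanh)    # candidate memory values
    o_t = gate("o", sigmoid)      # output gate
    c_t = [f * c + i * g for f, c, i, g in zip(f_t, c_prev, i_t, g_t)]
    h_t = [o * math.tanh(c) for o, c in zip(o_t, c_t)]
    return h_t, c_t

def bilstm(seq, params_fwd, params_bwd, hidden):
    """Run one LSTM forwards and one backwards, then concatenate their states."""
    def run(sequence, params):
        h, c = [0.0] * hidden, [0.0] * hidden
        outputs = []
        for x_t in sequence:
            h, c = lstm_step(x_t, h, c, params)
            outputs.append(h)
        return outputs

    forward = run(seq, params_fwd)
    backward = run(seq[::-1], params_bwd)[::-1]
    return [f + b for f, b in zip(forward, backward)]

# Hypothetical toy weights: hidden size 1, input size 1.
toy = {name: ([[0.5, -0.3]], [0.1]) for name in ("f", "i", "g", "o")}
features = [[0.2], [0.7], [-0.4]]
states = bilstm(features, toy, toy, hidden=1)
print(states)
```

In a classifier, the concatenated states (or only the final ones) would typically be passed to a dense softmax layer with one output per class; that part is omitted here.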

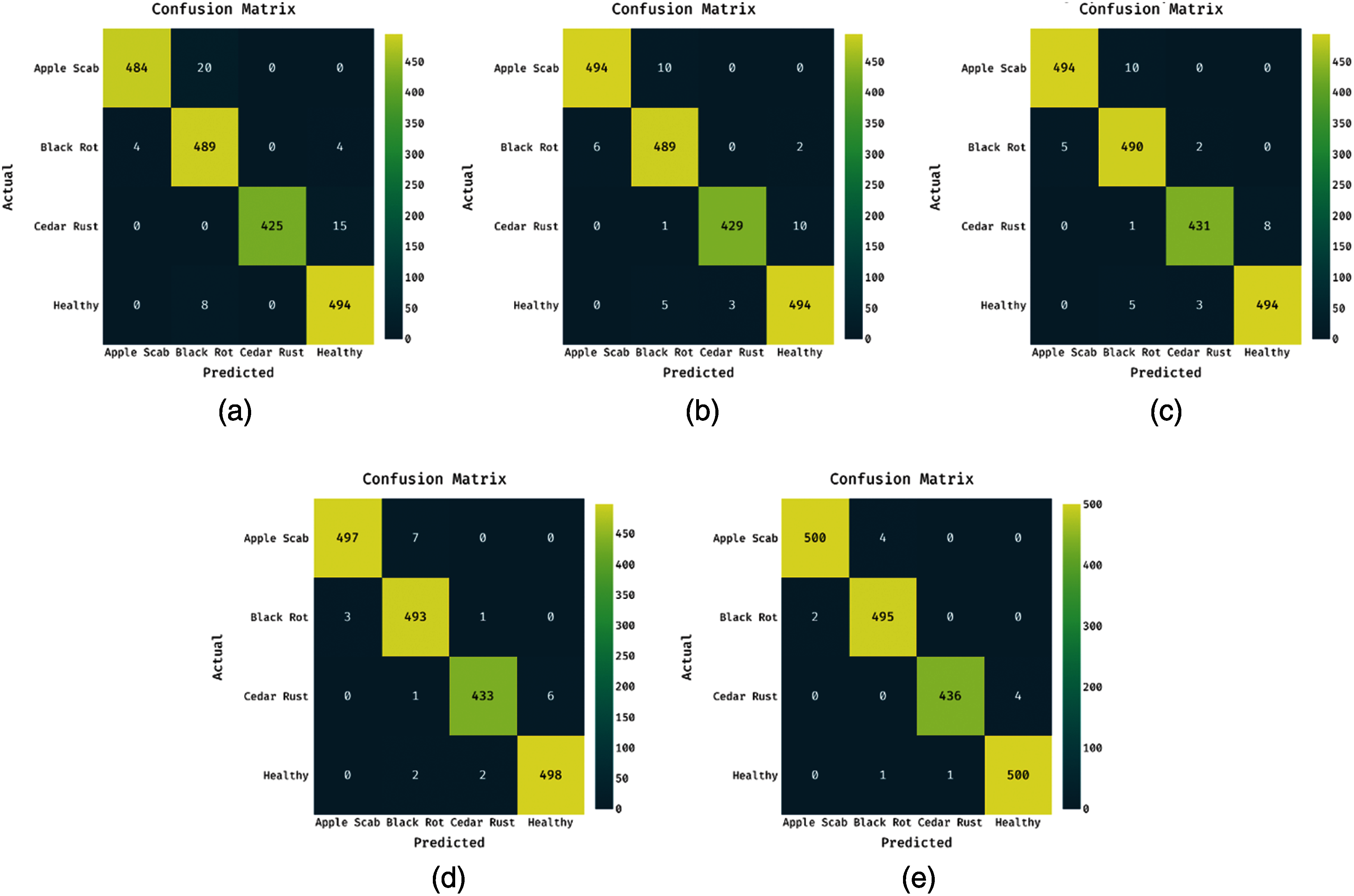

Fig. 6 displays the confusion matrices generated by the AIE-ALDC technique under different runs. In run-1, the AIE-ALDC technique classified 484 images as Apple Scab, 489 images as Black Rot, 425 images as Cedar Rust, and 494 images as Healthy. In run-2, it classified 494 images as Apple Scab, 489 images as Black Rot, 429 images as Cedar Rust, and 494 images as Healthy. In run-3, it classified 494 images as Apple Scab, 490 images as Black Rot, 431 images as Cedar Rust, and 494 images as Healthy. In run-4, it classified 497 images as Apple Scab, 493 images as Black Rot, 433 images as Cedar Rust, and 498 images as Healthy. Finally, in run-5, it classified 500 images as Apple Scab, 495 images as Black Rot, 436 images as Cedar Rust, and 500 images as Healthy.
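Measures such as precision, recall, specificity, and F-score are commonly derived from a confusion matrix in a one-vs-rest fashion and then averaged over the classes. The sketch below illustrates that computation on a small made-up matrix; the matrix values are toy numbers and not the ones in Fig. 6, and whether the reported results were averaged in exactly this way is an assumption.

```python
def per_class_metrics(cm):
    """One-vs-rest metrics; cm[i][j] counts class-i samples predicted as class j."""
    n = len(cm)
    total = sum(sum(row) for row in cm)
    metrics = []
    for k in range(n):
        tp = cm[k][k]
        fn = sum(cm[k]) - tp
        fp = sum(cm[r][k] for r in range(n)) - tp
        tn = total - tp - fn - fp
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        specificity = tn / (tn + fp) if tn + fp else 0.0
        f_score = (2 * precision * recall / (precision + recall)
                   if precision + recall else 0.0)
        metrics.append({
            "accuracy": (tp + tn) / total,
            "precision": precision,
            "recall": recall,
            "specificity": specificity,
            "f_score": f_score,
        })
    return metrics

def macro_average(metrics):
    """Average every measure over the classes."""
    return {key: sum(m[key] for m in metrics) / len(metrics) for key in metrics[0]}

# Toy two-class confusion matrix (illustrative values only).
toy_cm = [[8, 2],
          [1, 9]]
per_class = per_class_metrics(toy_cm)
summary = macro_average(per_class)
print(summary)
```

With one-vs-rest accuracy, true negatives of the other classes count as correct, so the averaged accuracy can be higher than the plain fraction of correctly classified images when there are more than two classes.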

Figure 6: Confusion matrices of AIE-ALDC technique. a) Run-1 b) Run-2 c) Run-3 d) Run-4 e) Run-5

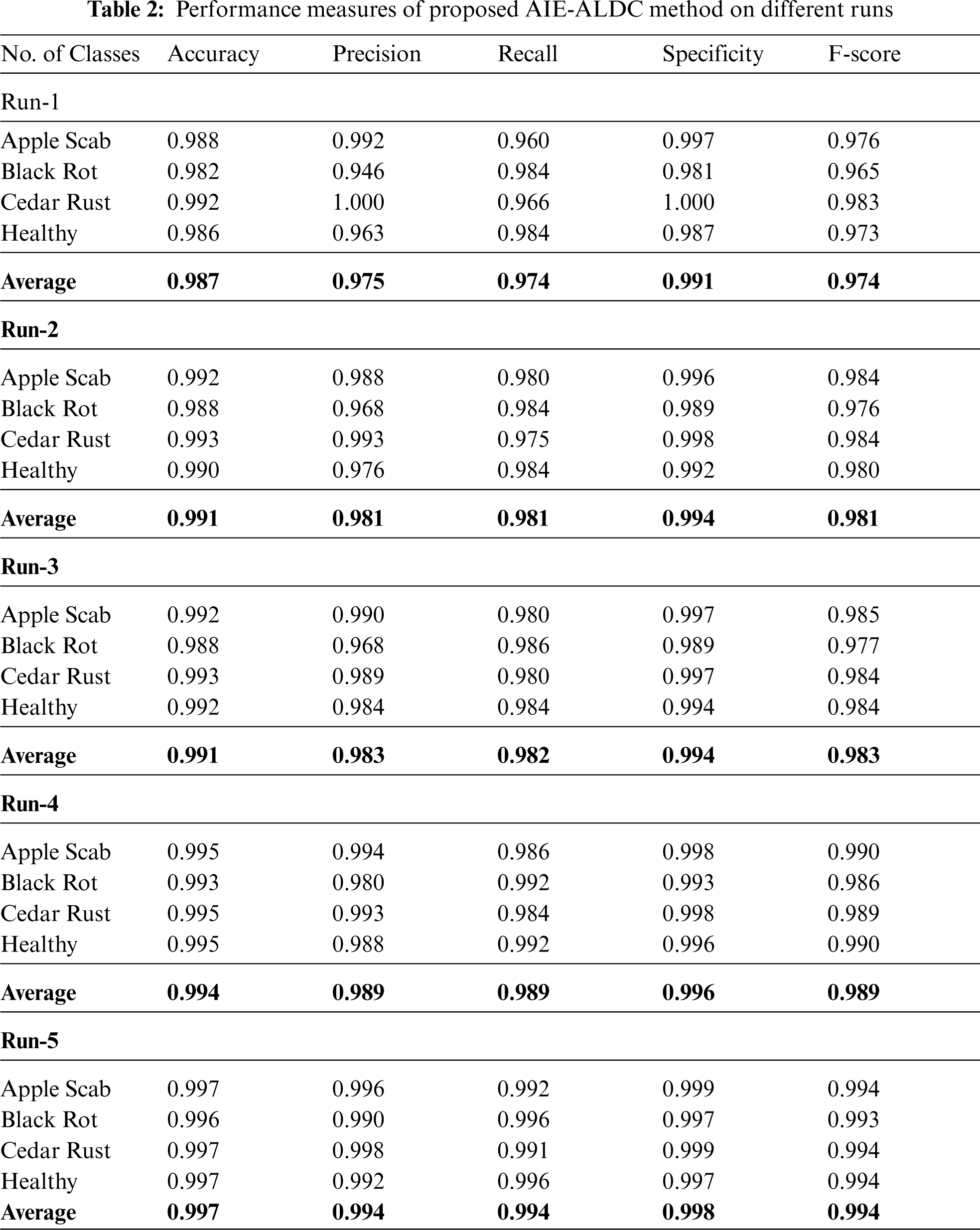

The proposed AIE-ALDC technique is simulated using Python 3.6.5. A detailed analysis of the classification results of the AIE-ALDC technique under different runs is given in Tab. 2. The results show that the AIE-ALDC approach achieves high apple leaf disease detection performance in every run. For instance, in run-1, the AIE-ALDC technique obtained an accuracy of 0.987, precision of 0.975, recall of 0.974, specificity of 0.991, and F-score of 0.974. In run-2, it obtained an accuracy of 0.991, precision of 0.981, recall of 0.981, specificity of 0.994, and F-score of 0.981. In run-3, it obtained an accuracy of 0.991, precision of 0.983, recall of 0.982, specificity of 0.994, and F-score of 0.983. In run-4, it obtained an accuracy of 0.994, precision of 0.989, recall of 0.989, specificity of 0.996, and F-score of 0.989. Finally, in run-5, it obtained an accuracy of 0.997, precision of 0.994, recall of 0.994, specificity of 0.998, and F-score of 0.994.
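The per-run values quoted above can be averaged to summarize the five runs. The snippet only restates the numbers from the paragraph; treating the overall accuracy reported later in the paper as the mean over the runs is an inference made here, not something the text states.

```python
from statistics import mean

# Per-run results for run-1 to run-5, as quoted in the text.
runs = {
    "accuracy":    [0.987, 0.991, 0.991, 0.994, 0.997],
    "precision":   [0.975, 0.981, 0.983, 0.989, 0.994],
    "recall":      [0.974, 0.981, 0.982, 0.989, 0.994],
    "specificity": [0.991, 0.994, 0.994, 0.996, 0.998],
    "f_score":     [0.974, 0.981, 0.983, 0.989, 0.994],
}

averages = {name: round(mean(values), 4) for name, values in runs.items()}
for name, value in averages.items():
    print(f"{name}: {value}")
```

The mean accuracy over the five runs comes out at 0.992, which matches the overall accuracy quoted for the AIE-ALDC technique in the comparison with existing methods.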

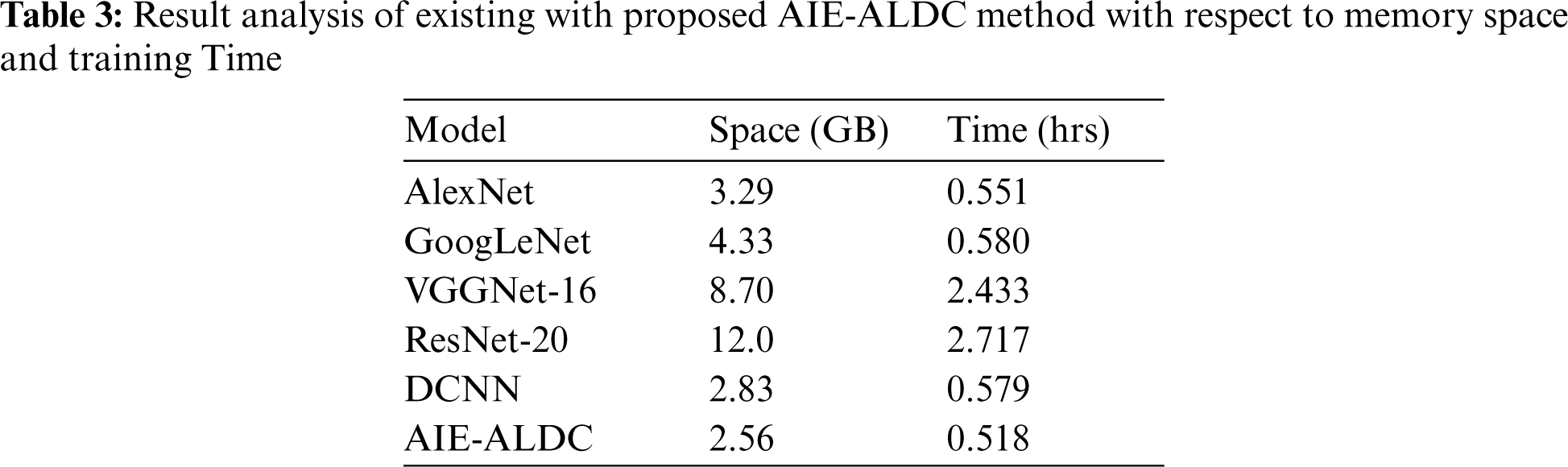

Tab. 3 offers the memory space and training time analysis of the AIE-ALDC technique and other techniques. The table shows that the ResNet-20 model is the least efficient, with the highest memory space of 12 GB and training time of 2.717 h. The VGGNet-16 model is slightly better, with a memory space of 8.70 GB and training time of 2.433 h. The GoogleNet and AlexNet models show moderate memory space and training time. The DCNN model requires a considerably lower memory space of 2.83 GB and training time of 0.579 h. Finally, the AIE-ALDC technique achieves the lowest training time of 0.551 h with a memory space of 3.29 GB.

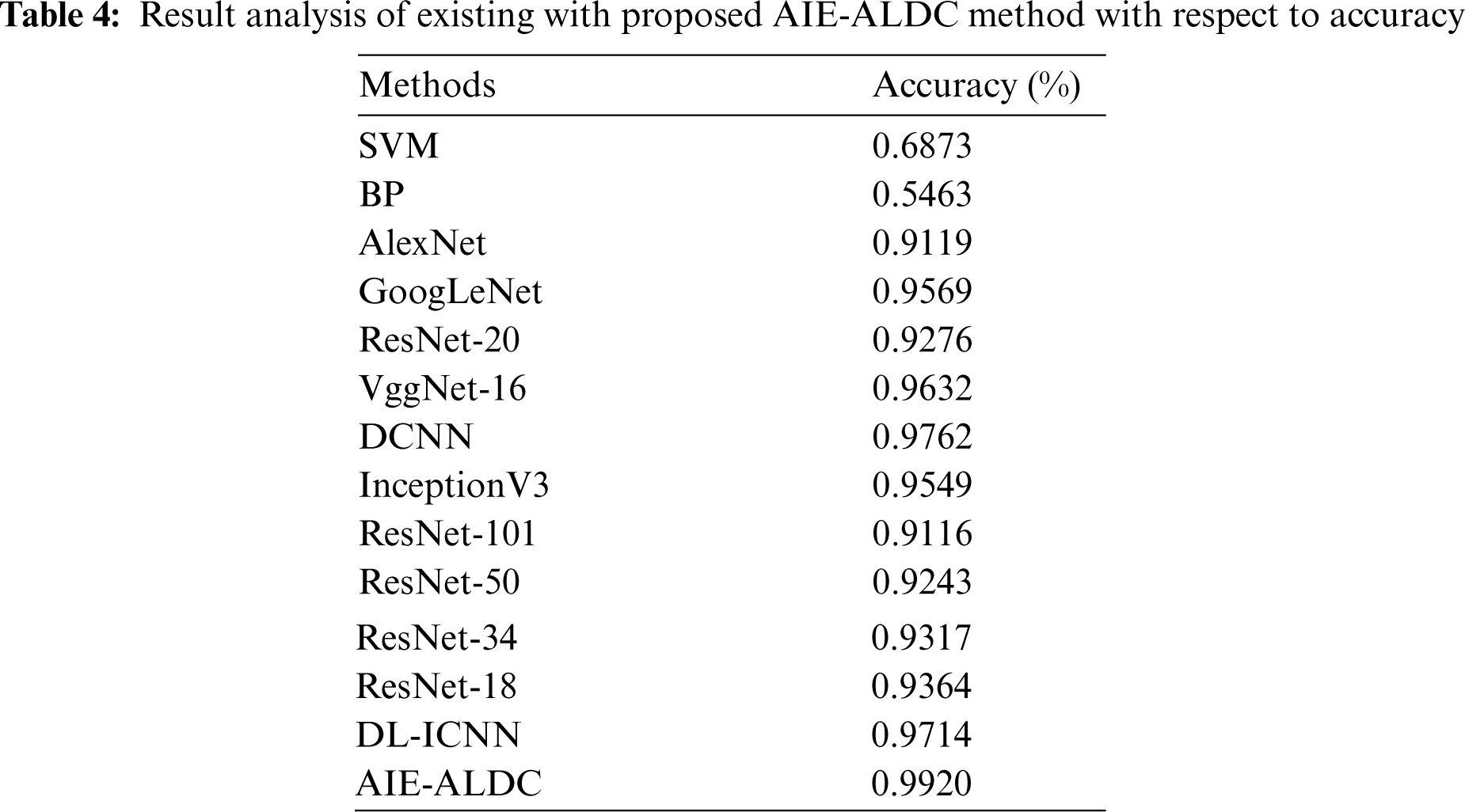

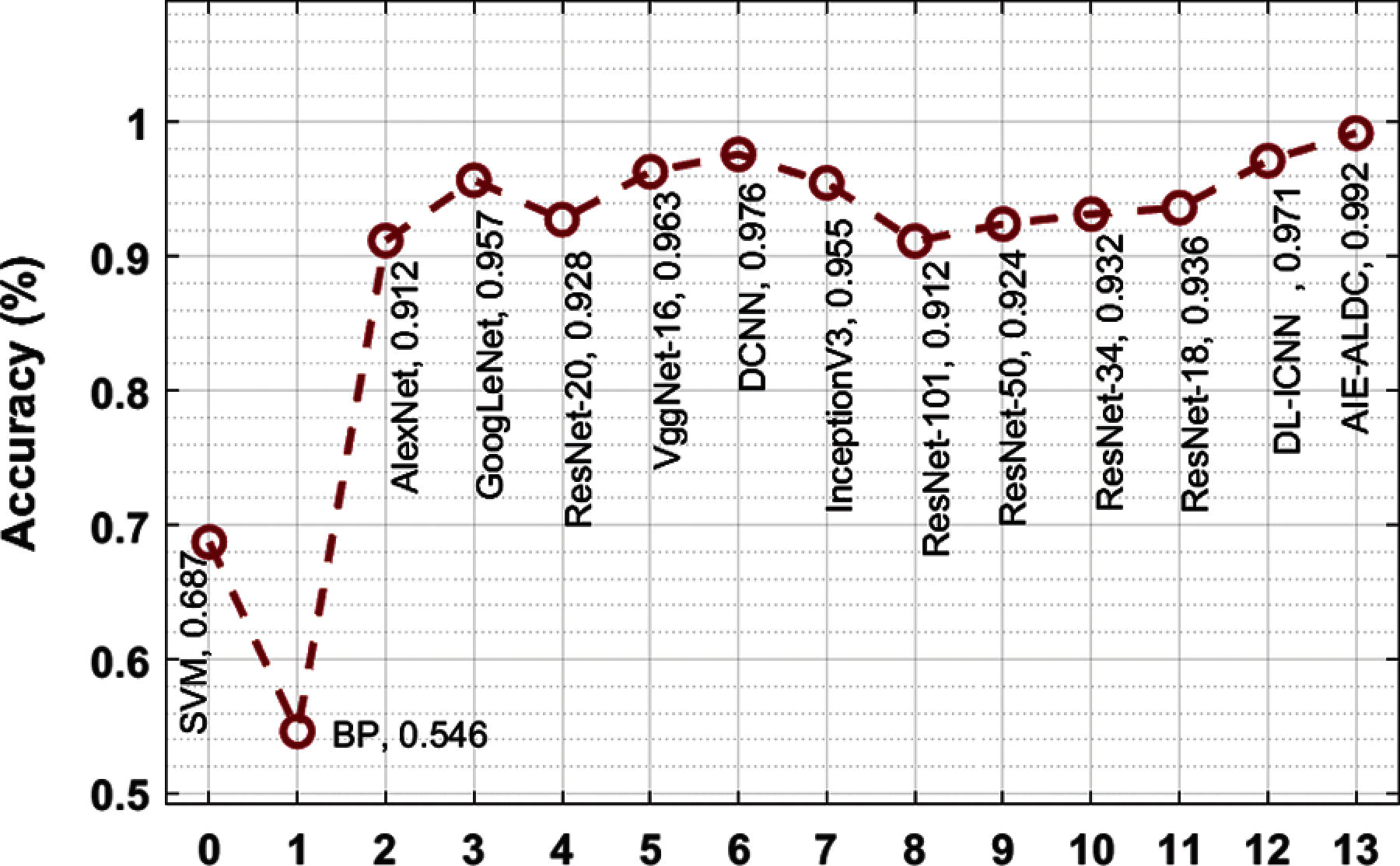

To confirm the performance of the AIE-ALDC technique, a detailed comparison is made with recent methods in Tab. 4 and Fig. 7 [25]. The BP and SVM models yield the lowest accuracies of 0.5463 and 0.6873. The ResNet-101 and AlexNet models perform better, with accuracies of 0.9116 and 0.9119. The ResNet-50, ResNet-20, ResNet-34, and ResNet-18 models show moderate accuracies of 0.9317, 0.9364, 0.9549, and 0.9569, respectively. The VGGNet-16 model follows with an accuracy of 0.9632. Though the DL-ICNN and DCNN models reach competitive accuracies of 0.9714 and 0.9762, the proposed AIE-ALDC technique surpasses all the other techniques with a maximum accuracy of 0.992.

Figure 7: Accuracy analysis of AIE-ALDC model with existing techniques

From the aforementioned tables and figures, it can be concluded that the AIE-ALDC methodology is an appropriate technique for apple leaf disease detection and classification.

This paper has devised an efficient AIE-ALDC technique for the detection and classification of apple leaf diseases. The proposed AIE-ALDC technique encompasses orientation based augmentation, GF based preprocessing, CapsNet based feature extraction, WWO based parameter tuning, and BiLSTM based classification. The WWO algorithm is used to optimally adjust the hyperparameters of the CapsNet model. A wide range of experiments was performed to evaluate the AIE-ALDC approach. The experimental outcomes demonstrate the promising performance of the AIE-ALDC technique over recent state-of-the-art methods, with a higher accuracy of 0.9920. In future, the proposed AIE-ALDC technique can be deployed in a real time environment using internet of things (IoT) and imaging sensors.

Funding Statement: The authors extend their appreciation to the Deanship of Scientific Research at King Khalid University for funding this work under Grant Number (RGP2/209/42), https://www.kku.edu.sa. This research was funded by the Deanship of Scientific Research at Princess Nourah bint Abdulrahman University through the Fast-Track Path of Research Funding Program.

Conflicts of Interest: The authors declare that they have no conflicts of interest to report regarding the present study.

1. A.-K. Mahlein, “Plant disease detection by imaging sensors–parallels and specific demands for precision agriculture and plant phenotyping,” Plant Disease, vol. 100, no. 2, pp. 241–251, 2016.

2. M. Elhoseny, K. Shankar and M. Abdel-Basset (Eds.), Artificial Intelligence Applications for Smart Societies: Recent Advances, Cham, Switzerland: Springer International Publishing, pp. 1–251, 2021.

3. I. V. Pustokhina, D. A. Pustokhin, T. Vaiyapuri, D. Gupta, S. Kumar et al., “An automated deep learning based anomaly detection in pedestrian walkways for vulnerable road users safety,” Safety Science, vol. 142, pp. 105356, 2021.

4. M. Dutot, L. M. Nelson and R. C. Tyson, “Predicting the spread of postharvest disease in stored fruit, with application to apples,” Postharvest Biology and Technology, vol. 85, pp. 45–56, 2013.

5. K. Shankar, E. Perumal, P. Tiwari, M. Shorfuzzaman and D. Gupta, “Deep learning and evolutionary intelligence with fusion-based feature extraction for detection of COVID-19 from chest X-ray images,” Multimedia Systems, vol. 27, pp. 1–13, 2021.

6. D. Venugopal, T. Jayasankar, M. Y. Sikkandar, M. I. Waly, I. V. Pustokhina et al., “A novel deep neural network for intracranial haemorrhage detection and classification,” Computers, Materials & Continua, vol. 68, no. 3, pp. 2877–2893, 2021.

7. T. Vaiyapuri, S. N. Mohanty, M. Sivaram, I. V. Pustokhina, D. A. Pustokhin et al., “Automatic vehicle license plate recognition using optimal deep learning model,” Computers, Materials & Continua, vol. 67, no. 2, pp. 1881–1897, 2021.

8. B. Liu, Y. Zhang, D. He and Y. Li, “Identification of apple leaf diseases based on deep convolutional neural networks,” Symmetry, vol. 10, no. 1, pp. 11, 2017.

9. S. Chouhan, A. Kaul, U. Singh and S. Jain, “Bacterial foraging optimization based radial basis function neural network (BRBFNN) for identification and classification of plant leaf diseases: An automatic approach towards plant pathology,” IEEE Access, vol. 6, pp. 8852–8863, 2018.

10. M. A. Khan, M. I. U. Lali, M. Sharif, K. Javed, K. Aurangzeb et al., “An optimized method for segmentation and classification of apple diseases based on strong correlation and genetic algorithm based feature selection,” IEEE Access, vol. 7, pp. 46261–46277, 2019.

11. H.-J. Yu and C.-H. Son, “Leaf spot attention network for apple leaf disease identification,” in 2020 IEEE/CVF Conf. on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, WA, USA, pp. 229–237, 2020.

12. X. Xie, Y. Ma, B. Liu, J. He, S. Li et al., “A deep-learning-based real-time detector for grape leaf diseases using improved convolutional neural networks,” Frontiers in Plant Science, vol. 11, pp. 751, 2020.

13. M. Agarwal, R. K. Kaliyar, G. Singal and S. Kr. Gupta, “FCNN-LDA: A faster convolution neural network model for leaf disease identification on apple's leaf dataset,” in 2019 12th Int. Conf. on Information & Communication Technology and System (ICTS), Surabaya, Indonesia, pp. 246–251, 2019.

14. C. Bi, J. Wang, Y. Duan, B. Fu, J.-R. Kang et al., “Mobilenet based apple leaf diseases identification,” Mobile Networks and Applications, vol. 8, pp. 1–12, 2020.

15. J. Tamang et al., “Dynamical properties of ion-acoustic waves in space plasma and its application to image encryption,” IEEE Access, vol. 9, pp. 18762–18782, 2021.

16. C. L. Chowdhary, P. V. Patel, K. J. Kathrotia, M. Attique, K. Perumal et al., “Analytical study of hybrid techniques for image encryption and decryption,” Sensors, vol. 20, no. 18, pp. 5162, 2020.

17. R. Panigrahi et al., “A consolidated decision tree-based intrusion detection system for binary and multiclass imbalanced datasets,” Mathematics, vol. 9, no. 7, pp. 751, 2021.

18. Mustaqeem and S. Kwon, “1D-CNN: Speech emotion recognition system using a stacked network with dilated CNN features,” Computers, Materials & Continua, vol. 67, no. 3, pp. 4039–4059, 2021.

19. Mustaqeem and S. Kwon, “CLSTM: Deep feature-based speech emotion recognition using the hierarchical ConvLSTM network,” Mathematics, vol. 8, no. 12, pp. 2133, 2020.

20. Plant Diseases Dataset. [Online]. Available: https://www.kaggle.com/vipoooool/new-plant-diseases-dataset/data#.

21. S. Sabour, N. Frosst and G. E. Hinton, “Dynamic routing between capsules,” arXiv preprint arXiv:1710.09829, 2017.

22. Y.-J. Zheng, “Water wave optimization: A new nature-inspired metaheuristic,” Computers & Operations Research, vol. 55, pp. 1–11, 2015.

23. A. A. Hematabadi and A. A. Foroud, “Optimizing the multi-objective bidding strategy using min–max technique and modified water wave optimization method,” Neural Computing and Applications, vol. 31, no. 9, pp. 5207–5225, 2019.

24. Z. Cui, R. Ke, Z. Pu and Y. Wang, “Stacked bidirectional and unidirectional LSTM recurrent neural network for forecasting network-wide traffic state with missing values,” Transportation Research Part C: Emerging Technologies, vol. 118, pp. 102674, 2020.

25. P. Jiang, Y. Chen, B. Liu, D. He and C. Liang, “Real-time detection of apple leaf diseases using deep learning approach based on improved convolutional neural networks,” IEEE Access, vol. 7, pp. 59069–59080, 2019.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.