Open Access

ARTICLE

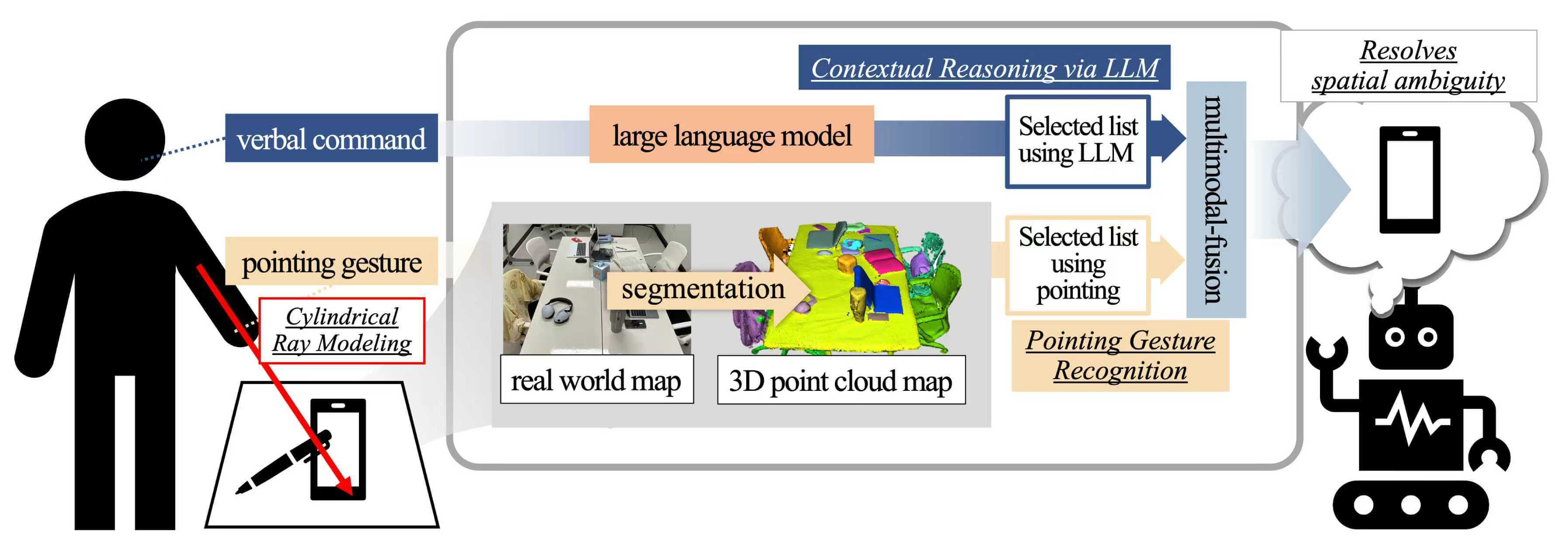

Resolving Ambiguity in Pointing Gestures Using Contextual Reasoning from Large Language Models

Department of Computer Science and Engineering, Gyeongsang National University, Jinju-Si, Republic of Korea

* Corresponding Author: Suwon Lee. Email:

Computer Modeling in Engineering & Sciences 2026, 147(1), 33 https://doi.org/10.32604/cmes.2026.079954

Received 31 January 2026; Accepted 17 March 2026; Issue published 27 April 2026

Abstract

In everyday life, people effectively convey their intentions through pointing gestures without explicitly naming objects. In particular, pointing gestures used in conjunction with linguistic expressions such as “this” and “that” play a crucial role in intuitively indicating objects or locations in space. Although research on the recognition of such nonverbal gestures has been actively pursued within the field of human-computer interaction (HCI), accurately interpreting a user’s intent remains challenging in situations where the pointing gesture is ambiguous. This paper proposes an integrated system that combines a large language model (LLM), capable of understanding complex human language expressions, with pointing gestures designed to designate targets in space, thereby effectively processing multimodal user commands. The system is designed to accurately recognize user intentions even in complex and uncertain environments (e.g., indoor spaces with multiple objects) by synergistically leveraging spatial information obtained from pointing gestures and contextual reasoning provided by the LLM. To validate the proposed approach, we constructed a dataset comprising complex real-world environments and diverse utterances, and conducted experiments to meticulously analyze the system’s performance and limitations. This study demonstrates the potential for natural expansion of language-based spatial understanding within HCI, and suggests avenues for future research in related fields.
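As a rough illustration of the idea summarized in the abstract, the sketch below filters scene objects by their angular offset from a pointing ray and then assembles a text prompt that an LLM could use to pick among the remaining candidates. It is not the authors' implementation: the `SceneObject` type, the 15-degree threshold, the coordinate convention, and the prompt wording are all assumptions made for this example, and the LLM call itself is deliberately left out.

```python
import math
from dataclasses import dataclass


@dataclass
class SceneObject:
    """Hypothetical scene entry: a label and a 3D position in metres."""
    name: str
    position: tuple


def angle_to_ray(origin, direction, point):
    """Angle in degrees between the pointing ray and the origin-to-point vector."""
    v = [p - o for p, o in zip(point, origin)]
    norm = math.hypot(*direction) * math.hypot(*v)
    if norm == 0:
        return 180.0  # degenerate input: treat as pointing away
    dot = sum(a * b for a, b in zip(direction, v))
    cos = max(-1.0, min(1.0, dot / norm))  # clamp against rounding error
    return math.degrees(math.acos(cos))


def find_candidates(origin, direction, objects, max_angle_deg=15.0):
    """Return (angle, object) pairs within the assumed cone, nearest first."""
    scored = [(angle_to_ray(origin, direction, o.position), o) for o in objects]
    kept = [pair for pair in scored if pair[0] <= max_angle_deg]
    return sorted(kept, key=lambda pair: pair[0])


def build_prompt(utterance, candidates):
    """Assemble an illustrative prompt; the wording is an assumption."""
    listing = "\n".join(
        f"- {obj.name} (angular offset {angle:.1f} deg)"
        for angle, obj in candidates
    )
    return (
        f'The user said: "{utterance}" while pointing.\n'
        f"Objects near the pointing direction:\n{listing}\n"
        "Which single object does the user most likely mean?"
    )


if __name__ == "__main__":
    scene = [
        SceneObject("cup", (1.0, 0.05, 0.0)),
        SceneObject("remote", (1.0, -0.1, 0.0)),
        SceneObject("lamp", (0.0, 1.0, 0.0)),
    ]
    cands = find_candidates((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), scene)
    print(build_prompt("Turn that on", cands))
```

In this toy scene the cup lies closest to the ray, yet the utterance "Turn that on" fits the remote better, which is the kind of ambiguity that geometry alone cannot settle and that contextual reasoning is meant to resolve.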

Copyright © 2026 The Author(s). Published by Tech Science Press. This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
