Open Access

ARTICLE

A Hybrid Deep Learning Approach for IoT-Enabled Human Activity Recognition and Advanced Analytics

1 College of Computer Engineering and Sciences, Prince Sattam bin Abdulaziz University, AlKharj, Saudi Arabia

2 Faculty of Computers & Information Technology, Computer Science Department, University of Tabuk, Tabuk, Saudi Arabia

3 Faculty of Computing and Information, Al-Baha University, Alaqiq, Saudi Arabia

4 REGIM-Lab: Research Groups in Intelligent Machines, National School of Engineers of Sfax (ENIS), University of Sfax, Sfax, Tunisia

5 Department of Information Systems, College of Computer and Information Sciences, Princess Nourah bint Abdulrahman University, P.O. Box 84428, Riyadh, Saudi Arabia

6 Department of Information Management and Business Systems, Faculty of Management, Comenius University Bratislava, Odbojárov 10, Bratislava, Slovakia

* Corresponding Authors: Najib Ben Aoun. Email: ; Vincent Karovič. Email:

(This article belongs to the Special Issue: Advances in Action Recognition: Algorithms, Applications, and Emerging Trends)

Computers, Materials & Continua 2026, 87(2), 66 https://doi.org/10.32604/cmc.2026.074057

Received 30 September 2025; Accepted 16 December 2025; Issue published 12 March 2026

Abstract

Human Activity Recognition (HAR) is integral to applications built on Internet of Things (IoT)-enabled devices, particularly in healthcare, fitness tracking, and smart environments. Data streams from wearable sensors are rich in information, yet their high dimensionality and variability make accurate classification difficult. To address this problem, this paper proposes hybrid architectures that integrate traditional machine learning models with a deep neural network (DNN) to improve HAR performance. Several hybrid models were systematically evaluated on multi-sensor HAR data: RF + DNN (Random Forest + Deep Neural Network), XGB + DNN (XGBoost + DNN), GB + DNN (Gradient Boosting + DNN), KNN + DNN (K-Nearest Neighbors + DNN), and DT + DNN (Decision Tree + DNN). The RF + DNN model was the most accurate, achieving 97.03% accuracy with correspondingly high precision, recall, and F1-score. These findings suggest that hybrid machine learning and deep learning systems are promising for IoT-based HAR applications. The proposed approach offers a basis for developing smart, trustworthy monitoring systems that support real-time analytics, patient surveillance, and other IoT applications.

The Internet of Things (IoT) has transformed how contemporary societies engage with technology by establishing a ubiquitous network of interconnected sensors capable of sensing, processing, and transmitting data in real time [1]. With applications in healthcare, transportation, smart cities, and industrial automation, the IoT is reshaping everyday life by enabling autonomous decisions, optimizing resources, and facilitating predictive analytics [2]. A key feature of IoT ecosystems is that they generate continuous, heterogeneous, and high-dimensional data streams from sensors in smartphones, wearables, and other smart objects [3]. Processing this immense volume of sensor data is critical to unlocking the full potential of the IoT and delivering intelligent, adaptive services. HAR is one of the most powerful IoT-based applications, used in personalized healthcare, elderly care, physical rehabilitation, fitness tracking, workplace safety, and home automation [4]. HAR relies on motion sensors (e.g., accelerometers, gyroscopes, and magnetometers) to recognize not only simple behaviors (e.g., walking, running, sitting) but also more complex ones (e.g., exercising, cooking, driving) [5].

Over the past several years, deep learning has shown remarkable potential in HAR by providing automatic feature extraction and hierarchical representation learning [6–8]. Convolutional Neural Networks (CNNs) are well suited to modeling spatial patterns, whereas Recurrent Neural Networks (RNNs), Long Short-Term Memory (LSTM) networks, and attention-based networks are more effective at modeling temporal dynamics. Although these single deep learning models have their benefits, they can generalize poorly and typically capture either spatial or temporal correlations rather than both. These shortcomings motivate hybrid deep learning models that combine complementary networks to improve recognition accuracy and robustness. In practice, convolutional layers can extract local spatial features, while LSTM or attention mechanisms model long-term temporal dependencies. Integrating these elements into a single framework yields richer representations of activities and improves flexibility and scalability in real-world IoT contexts.

• The paper proposes a hybrid architecture that combines strategically selected classical machine learning algorithms, both ensemble (RF, XGB, GB) and non-ensemble (KNN, DT), with a Deep Neural Network (DNN), pairing the interpretability and decision boundaries of traditional ML models with the hierarchical feature learning of deep networks. This combination improves accuracy, robustness, and generalization, especially for complex, high-dimensional IoT sensor data, an aspect that traditional HAR models do not always consider.

• Unlike previous methods, which merely stack ML and DL implementations, the proposed method emphasizes an IoT-friendly analytical pipeline with computational flexibility and scalability across disparate sensor modalities. This emphasis aligns with the practical needs of real-world IoT-based healthcare and intelligent monitoring systems, where inference efficiency is essential and accuracy is paramount.

• Moreover, we compare several hybrid variants (RF + DNN, XGB + DNN, GB + DNN, KNN + DNN, and DT + DNN) and present empirical evidence of complementarity between the models. RF + DNN achieved the best overall performance (accuracy exceeding 97%), providing a strong baseline for IoT-based HAR applications.

In [9], a dual-purpose IoT architecture was developed that simultaneously handled localization and HAR in noisy, sensor-rich scenarios. Signals were denoised with a Chebyshev Type-I filter and then windowed, followed by parallel feature extraction, Boruta feature selection, and PSO optimization; two RNNs, specifically designed for HAR and localization, were then trained. On the ExtraSensory and SHL datasets, the system outperformed state-of-the-art methods, with HAR accuracies of 89.25% and 95.75% and localization accuracies of 90.50% and 91.50%, respectively. The authors of [10] surveyed HAR methods, bridging classical algorithms (Fourier Transform, Wavelet Transform, PCA) and deep learning methods (CNNs, RNNs, Transformers). The survey focused on multimodal sensing (accelerometers, gyroscopes, EMG, EEG, thermal, and infrared) and reviewed 217 papers to identify strengths and limitations, including noise resistance, real-time processing, and scalability. The authors also proposed a roadmap that supports multimodal data fusion and lightweight architectures for next-generation HAR systems.

The authors of [11] present a tag-free indoor fall detector that uses a transformer encoder network with data fusion. The study collects received signal strength indicator (RSSI) and phase data from passive ultra-high-frequency (UHF) RFID tags to monitor elderly people contactlessly. Because the transformer models long-range dependencies with minimal preprocessing, the framework improves the accuracy of activity recognition and fall detection. The technique performs well compared with traditional deep learning methods such as CNN, RNN, and LSTM and remains reliable beyond a 3-m distance, indicating its potential for practical, low-cost, and non-invasive deployment in real-life elderly care settings. The authors of [12] proposed a hybrid transformer framework for accurately identifying human activity using consumer electronic devices. To overcome the computational bottlenecks of these devices, the model uses a lightweight MobileNetV3 to learn salient spatiotemporal features and a residual-based Transformer Network (SRTN) to learn long-range temporal dependencies. The SRTN's residual connections and multi-head self-attention refine information and suppress irrelevant content, yielding a more efficient representation of video sequences. Experiments on three standard HAR datasets showed that the framework is robust and efficient, achieving 76.14%, 96.63%, and 97.31%, respectively.

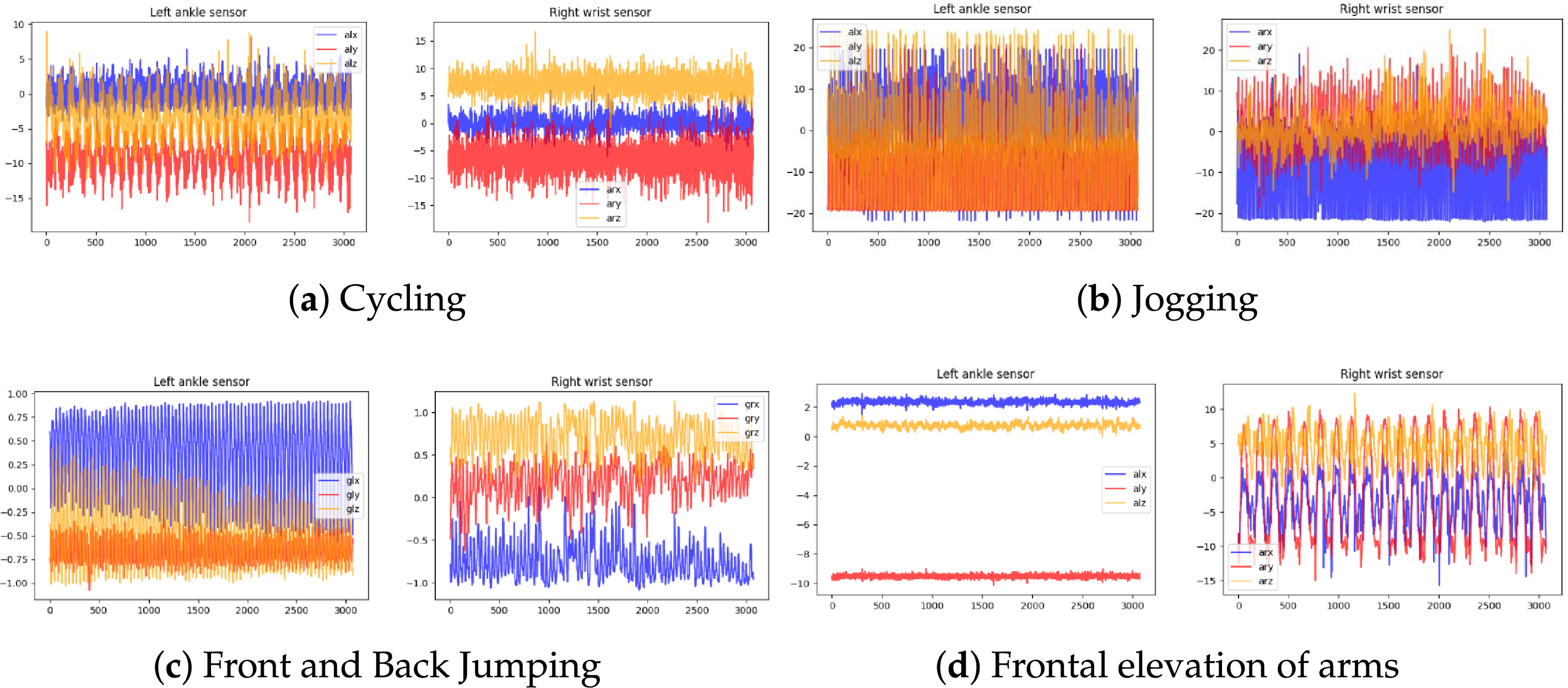

To address the scarcity of real-world fall data, the authors of [13] proposed a GAN-enhanced IoT system for fall detection and HAR. GAN-generated synthetic fall samples were added to the training data, and a 1D CNN was used to extract features from accelerometer and gyroscope data. The system achieved high fall-detection accuracy with few false positives and classified 15 types of falls and 19 types of daily activities. In the context of elder care, the authors of [14] present an IoT-based HAR system that combines preprocessing, a GRU model, and federated distillation for privacy-preserving monitoring. GRU networks processed filtered and transformed raw sensor data, and knowledge was shared in a decentralized manner via federated learning. The system achieved 95% accuracy with an F1-score of 0.94, demonstrating its usefulness for scalable, privacy-sensitive HAR. In [15], Chatty Factories were proposed, in which IoT-connected products actively report their usage to designers and manufacturers. A prototype gathered daily sensor data on six activities, which were preprocessed, labeled, and clustered using four unsupervised algorithms. Fuzzy C-Means yielded the best result, with an F-measure of 0.87 and an MCC of 0.84, indicating that unsupervised HAR can support Industry 4.0 product-use analytics. The authors of [16] introduced an ensemble learning model for HAR that combines multiple classifiers. After preprocessing, KNN, Decision Tree, and Random Forest base classifiers were trained and optimized, and their predictions were combined by majority voting. Experiments on four HAR datasets (WISDM, HAPT, HAR, and KU-HAR) showed improved accuracy, precision, recall, and F1-score, highlighting the potential of ensemble methods. Table 1 summarizes the discussed studies.

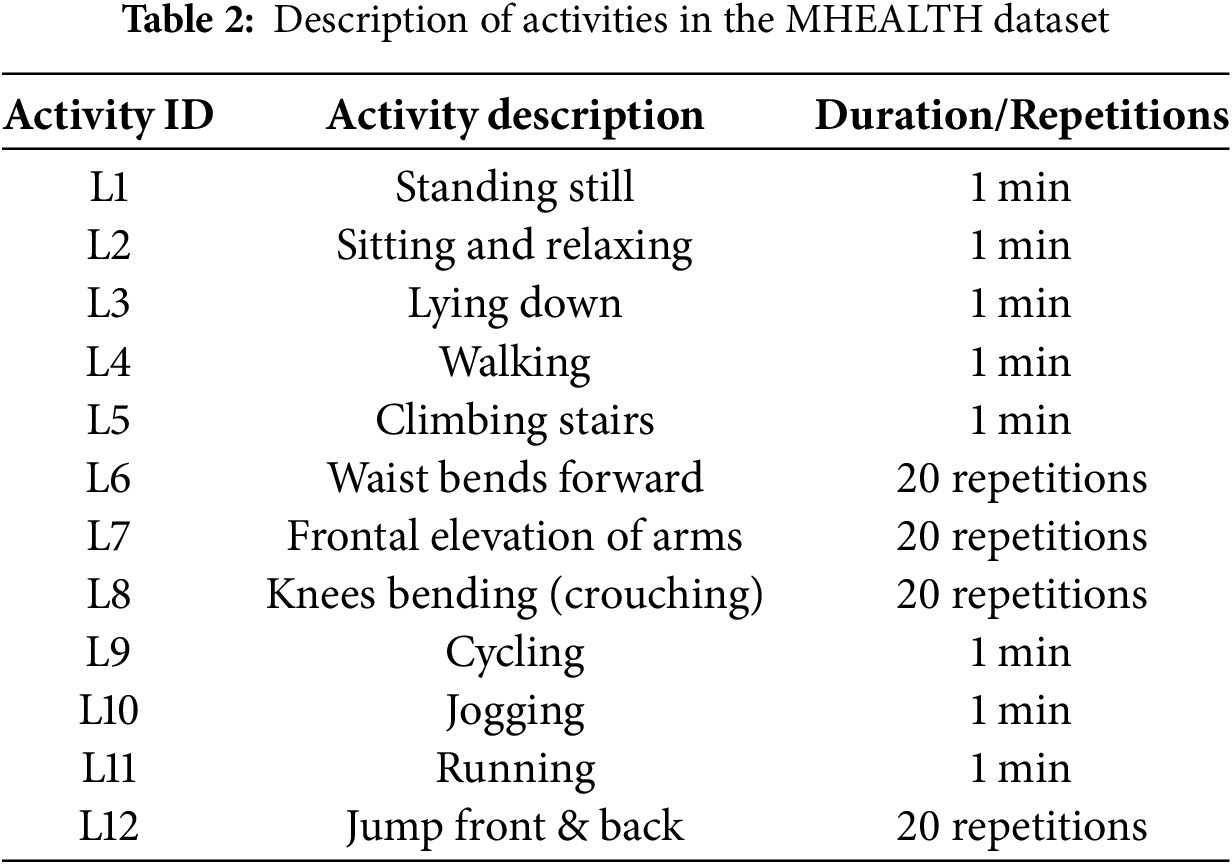

The Mobile Health (MHEALTH) dataset [https://archive.ics.uci.edu/dataset/319/mhealth+dataset] contains detailed measurements of body movement and vital signs for ten volunteers with diverse profiles, recorded while they performed 12 physical activities at varying times and durations. The activities include static postures, such as standing, sitting, and lying down, as well as dynamic exercises, including walking, climbing stairs, cycling, jogging, running, and jump-based exercises, as presented in Table 2. The MHEALTH dataset was selected because it offers a variety of multimodal physiological and motion cues from wearable sensors, enabling a robust assessment of hybrid deep learning models for human activity recognition. Its standardized collection protocol and widespread use in prior HAR research support the comparability and reproducibility of the results. During data collection, three Shimmer2 wearable sensors were placed on the chest, right wrist, and left ankle to measure body dynamics at multiple sites. All three sensors recorded triaxial acceleration at 50 Hz, and the wrist and ankle sensors additionally recorded gyroscope angular velocity and magnetic field orientation. The chest-mounted sensor also provided two-lead ECG signals, which were not used in this research but could support future healthcare-related applications. The dataset was gathered outside the lab under natural conditions, in which the intensity, style, and pace of the activities varied. This design makes the dataset representative of real-world daily living and a strong reference point for human activity recognition studies in IoT-based environments.

The raw MHEALTH data were first inspected to assess data quality and structure. Next, a statistical summary of each numerical attribute was generated to examine its range and distribution. Data quality checks were performed by identifying and removing null values and duplicates. A sampling strategy was used to address class imbalance, which arises mainly because one label (label 0, the null class) accounts for a disproportionately large share of the samples. Specifically, 40,000 samples of this dominant class were randomly selected and combined with all samples of the remaining activities, resulting in a more balanced class distribution. Formally, assume that the dataset is given by Eq. (1).
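The cleaning and undersampling steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' code: the `(features, label)` row format, the function name `clean_and_balance`, and the fixed random seed are hypothetical; only the 40,000-sample cap on label 0 comes from the text.

```python
import random

def clean_and_balance(rows, dominant_label=0, cap=40_000, seed=42):
    """Drop incomplete rows and exact duplicates, then randomly undersample
    the dominant class to at most `cap` rows (other classes are kept whole).

    Each row is assumed to be a (features_tuple, label) pair, with None
    marking a missing value (a hypothetical format for illustration).
    """
    # 1. Remove rows that contain a missing feature value or label.
    complete = [r for r in rows
                if r[1] is not None and all(v is not None for v in r[0])]

    # 2. Remove exact duplicate rows, keeping the first occurrence.
    seen, unique = set(), []
    for r in complete:
        if r not in seen:
            seen.add(r)
            unique.append(r)

    # 3. Randomly keep at most `cap` rows of the dominant class.
    rng = random.Random(seed)
    dominant = [r for r in unique if r[1] == dominant_label]
    others = [r for r in unique if r[1] != dominant_label]
    if len(dominant) > cap:
        dominant = rng.sample(dominant, cap)

    # 4. Recombine and shuffle so that classes are interleaved.
    balanced = dominant + others
    rng.shuffle(balanced)
    return balanced
```

Undersampling discards data from the majority class rather than synthesizing minority samples, which keeps every retained row a real measurement at the cost of some information about label 0.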

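This transformation kept the data compatible with downstream machine learning algorithms. Lastly, the features were scaled with a Robust Scaler, which subtracts the median of each feature and divides the result by its interquartile range (IQR), as in Eqs. (4) and (5):

$$x' = \frac{x - \operatorname{median}(x)}{\mathrm{IQR}(x)} \quad (4)$$

$$\mathrm{IQR}(x) = Q_3(x) - Q_1(x) \quad (5)$$

Here, $Q_1(x)$ and $Q_3(x)$ are the 25th and 75th percentiles of feature $x$. Because the median and IQR are insensitive to extreme values, this scaling is robust to outliers in the sensor signals.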
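A dependency-free sketch of Eqs. (4) and (5) follows. It is illustrative rather than the authors' implementation: features are stored column by column, the helper names are hypothetical, and replacing a zero IQR with 1.0 is an assumption (scikit-learn's `RobustScaler`, with its default `quantile_range=(25.0, 75.0)`, applies the same formula and handles zero scales the same way). In practice the median and IQR would be estimated on the training split only and reused for the test split; the text does not state which split the scaler was fit on.

```python
import statistics

def fit_robust_scaler(columns):
    """Estimate (median, IQR) for each feature column, cf. Eqs. (4) and (5).

    Quartiles use linear interpolation between order statistics
    (statistics.quantiles with method="inclusive"). A zero IQR, e.g. for a
    constant feature, is replaced by 1.0 to avoid division by zero.
    """
    params = []
    for col in columns:
        q1, median, q3 = statistics.quantiles(col, n=4, method="inclusive")
        iqr = q3 - q1
        params.append((median, iqr if iqr != 0 else 1.0))
    return params

def robust_transform(columns, params):
    """Apply x' = (x - median) / IQR column by column."""
    return [[(x - median) / iqr for x in col]
            for col, (median, iqr) in zip(columns, params)]

# One feature with an outlier (100) and one constant feature.
train_cols = [[1, 2, 3, 4, 100], [5, 5, 5, 5, 5]]
params = fit_robust_scaler(train_cols)
print(robust_transform(train_cols, params))
# [[-1.0, -0.5, 0.0, 0.5, 48.5], [0.0, 0.0, 0.0, 0.0, 0.0]]
```

The outlier (100) does not shift the median (3) or the IQR (2), so the other values keep a sensible scale; min-max scaling of the same column would compress them toward zero.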

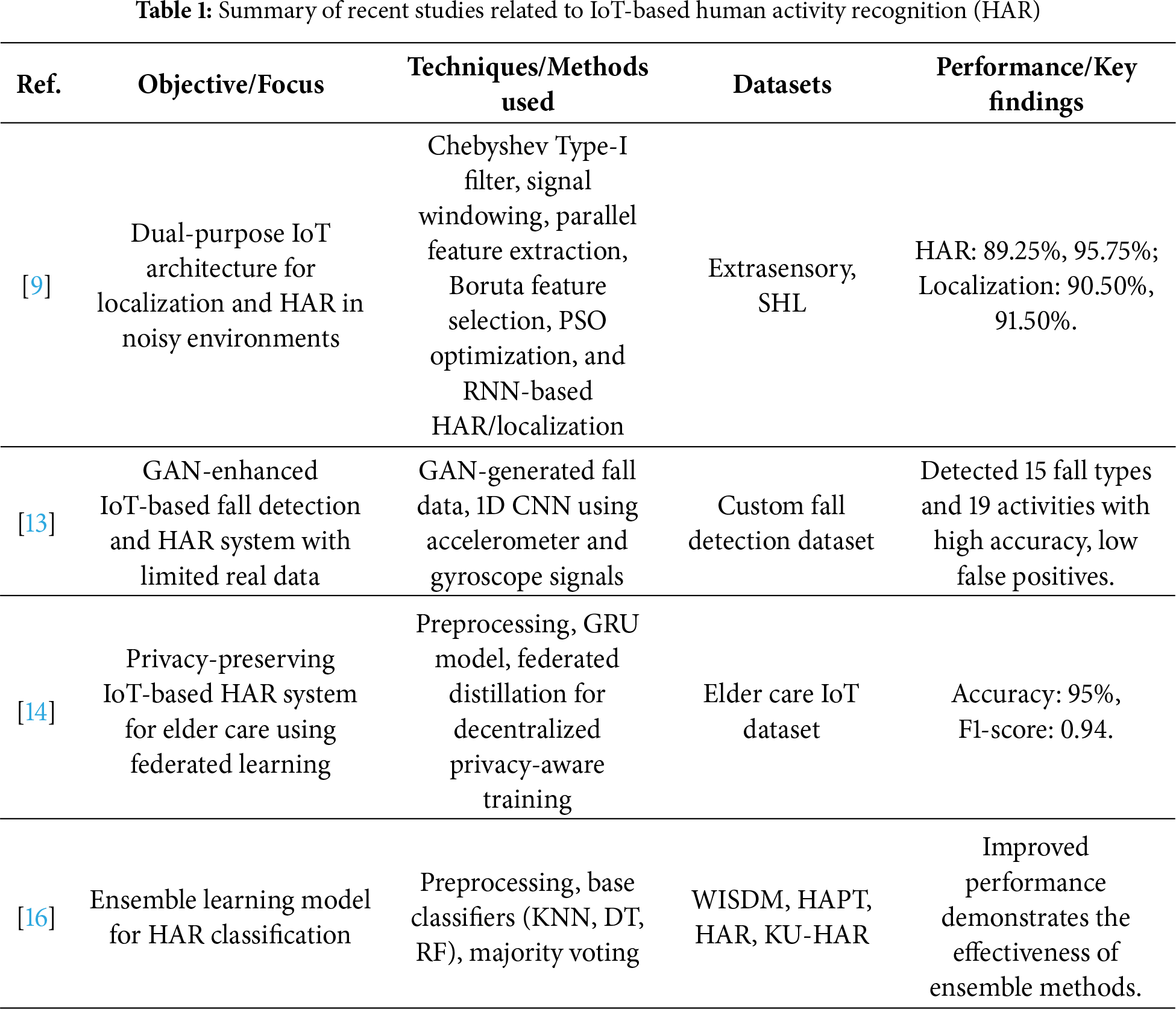

Figure 1: Time-series plots: sensor signals across activities. Each subplot visualizes accelerometer and gyroscope readings for different motion patterns, highlighting periodicity and intensity variations across activities

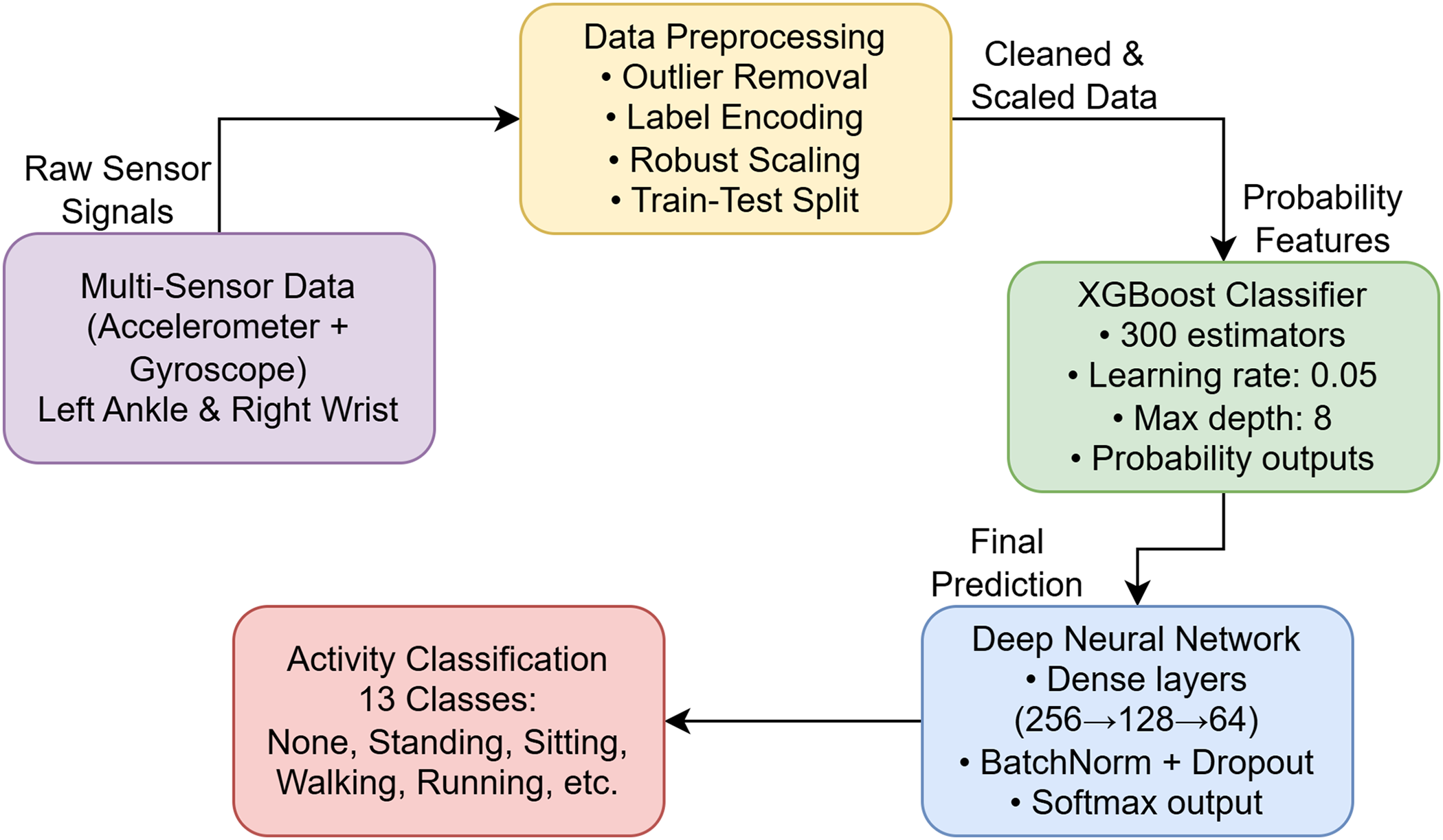

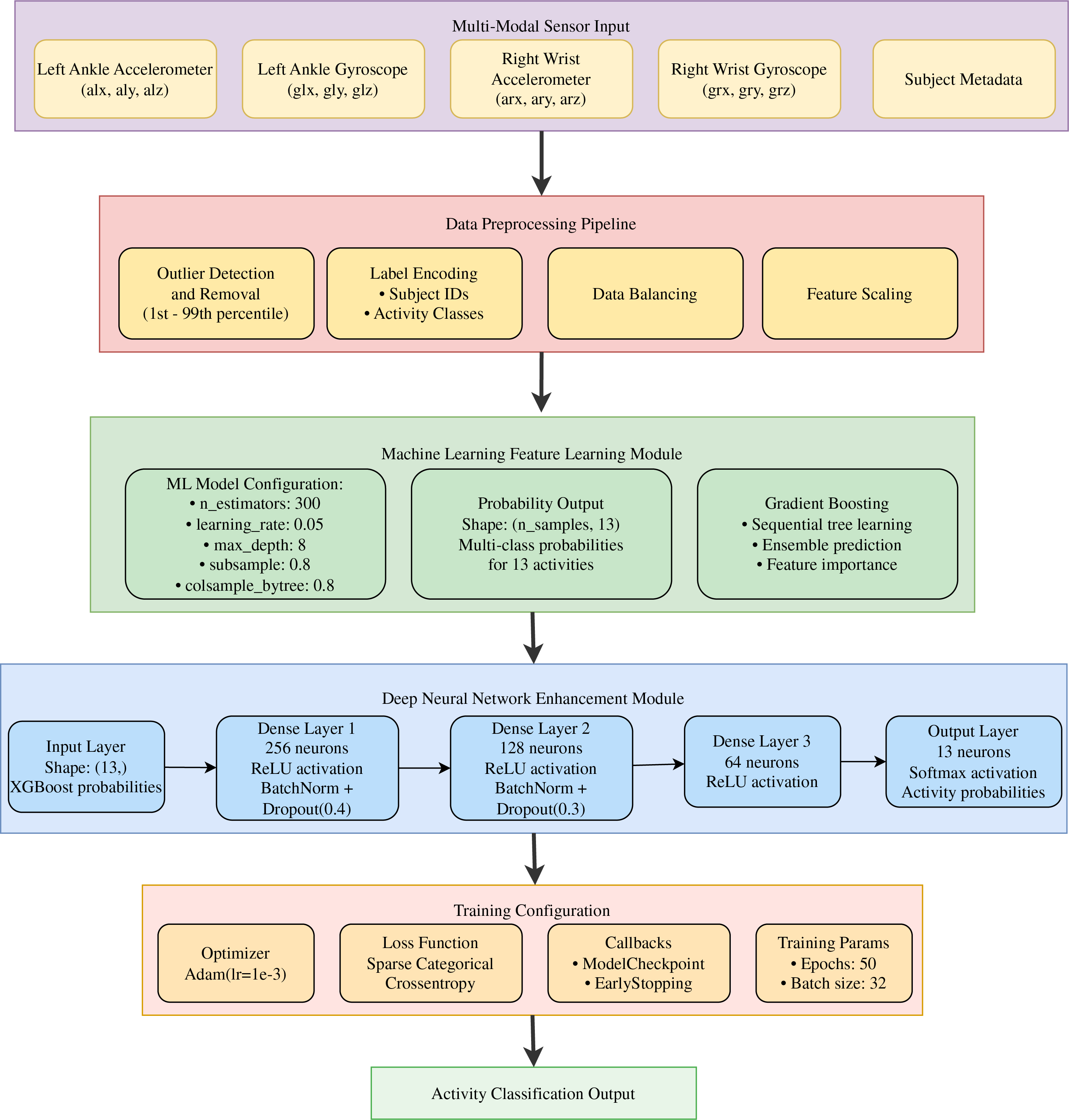

The proposed HAR framework, shown in Fig. 2, consists of several stages. Accelerometer and gyroscope sensors placed on the left ankle and right wrist record lower-limb and upper-limb movements, respectively. In the preprocessing stage, the raw signals are cleaned by removing outliers, labeled, normalized using robust scaling, and divided into training and testing sets so that all inputs reach the classifier at consistent quality. The processed data are then passed to the XGBoost classifier, which produces probability-based feature representations of the activities via an ensemble of gradient-boosted decision trees. These probability features are then fed into a Dense Neural Network (DNN), which performs deep feature learning through a series of dense layers with batch normalization and dropout regularization, followed by a final softmax layer. The network predicts 13 activity categories covering both static and dynamic states, such as standing, sitting, walking, and running. The result is a hybrid machine learning and deep learning pipeline designed to classify human activities with high accuracy.

Figure 2: Basic system architecture: the IoT-enabled HAR system integrates sensor data acquisition, preprocessing, feature extraction, and hybrid ML-DNN classification

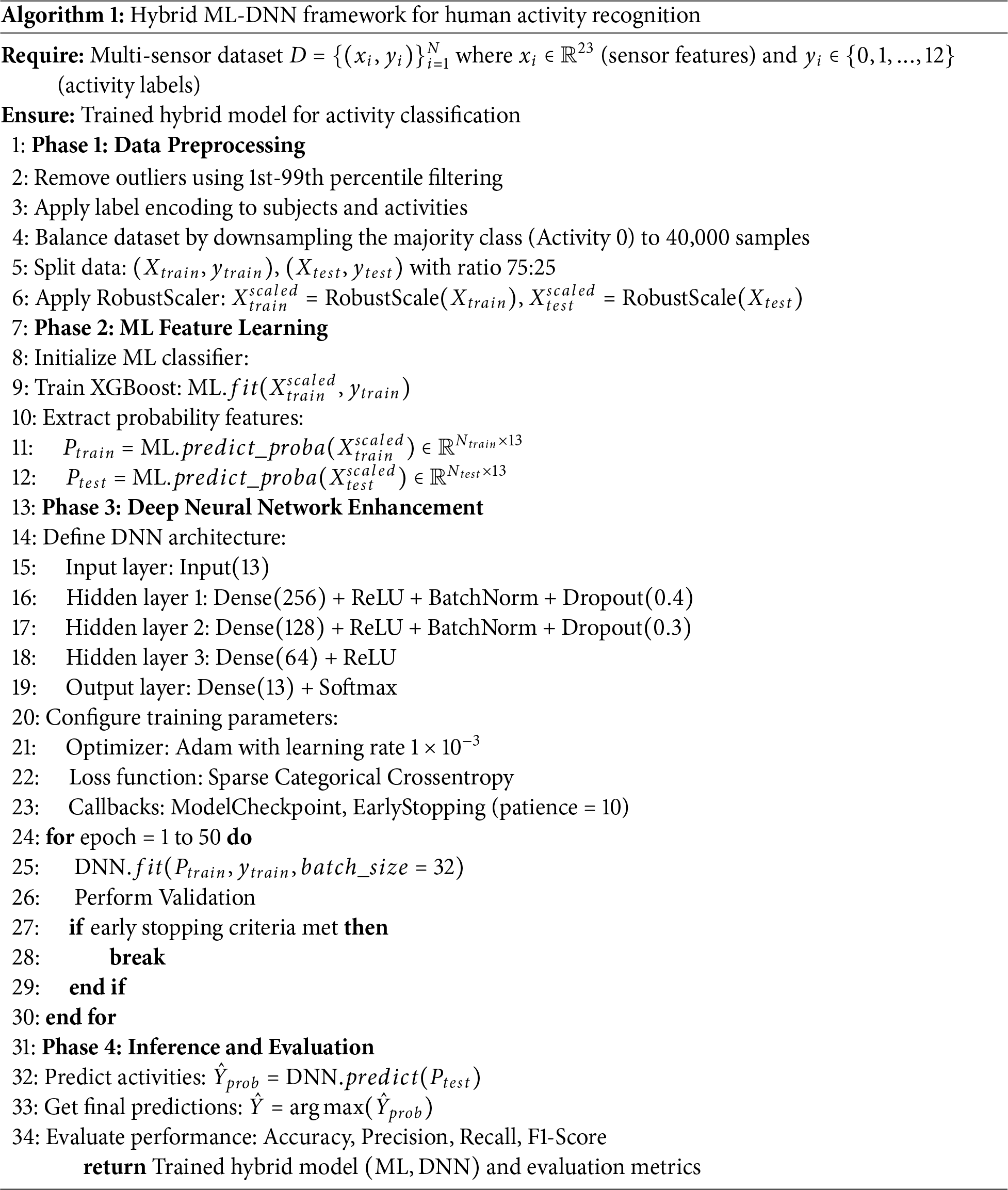

The proposed HAR model combines machine learning and deep learning to achieve higher classification accuracy and better generalization. Fig. 3 illustrates the complete workflow, which begins with raw signals from the accelerometer and gyroscope modules and proceeds through a structured preprocessing pipeline that converts them into cleaned, normalized data. The pipeline follows the steps outlined in Algorithm 1: probability-based features are extracted with an Extreme Gradient Boosting (XGBoost) model and then fed to a Dense Neural Network (DNN) for final classification. This hybrid architecture combines the interpretability and scalability of feature-based ensemble algorithms with the ability of deep neural networks to learn powerful representations, enabling effective recognition of human activities across a wide range of sensor modalities.

Figure 3: Detailed Framework for Hybrid ML-DNN-based Human Activity Recognition: This framework outlines the complete workflow, including sensor signals, preprocessing, classical ML model training, probability generation, and final deep neural network-based refinement

Rather than feeding the DNN raw feature embeddings, the outputs of the machine learning (ML) models, in the form of class probabilities, were used as the DNN's input, leveraging the discriminative ability of both ensemble and non-ensemble learners. ML models can represent nonlinear interactions among features and provide class-level probabilities that reflect the decision boundaries learned during training. These probabilistic outputs are compact, task-specific representations of the input data that help reduce the noise and redundancy of the original feature space. By feeding these class probabilities into the DNN, the network can learn higher-order correlations and refine decision patterns rather than re-learning lower-level feature interactions, resulting in more stable and efficient classification.
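A minimal sketch of how a classifier's class probabilities can serve as the DNN's input is shown below. It uses a hypothetical pure-Python KNN (`knn_class_probabilities`) as a stand-in for any model's probability output; the models, hyperparameters, and libraries the authors actually used are not reproduced here. Each sample is mapped to a length-C probability vector, the compact representation described above.

```python
import math
from collections import Counter

def knn_class_probabilities(train_X, train_y, x, classes, k=3):
    """Probability vector over `classes` for query x: the share of its k
    nearest training points (Euclidean distance) that belong to each class."""
    nearest = sorted(range(len(train_X)),
                     key=lambda i: math.dist(train_X[i], x))[:k]
    votes = Counter(train_y[i] for i in nearest)
    return [votes[c] / k for c in classes]

def probability_features(train_X, train_y, X, classes, k=3):
    """Map every sample in X to a length-C probability vector; these
    vectors, not the raw features, become the DNN's input."""
    return [knn_class_probabilities(train_X, train_y, x, classes, k) for x in X]

train_X = [(0, 0), (0, 1), (1, 0), (5, 5), (5, 6), (6, 5)]
train_y = [0, 0, 0, 1, 1, 1]
print(probability_features(train_X, train_y, [(0.2, 0.2), (5, 5.5)], classes=[0, 1]))
# [[1.0, 0.0], [0.0, 1.0]]
```

One design point worth flagging (an assumption, not stated in the text): if the same samples are used both to fit the ML model and to train the DNN on its probabilities, those probabilities tend to be overconfident, so generating them out-of-fold is a common safeguard.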

This study examines several classical machine learning models for their interpretability, efficiency, and ability to handle complex data relationships. The Random Forest (RF) model combines the predictions of multiple decision trees through ensemble learning, reducing overfitting by averaging their outputs or by majority voting. XGBoost constructs decision trees sequentially, with each tree correcting the errors of its predecessors, and uses a well-defined objective function to improve speed and performance. Gradient Boosting (GB) also builds trees sequentially to minimize errors but is generally more resource-intensive than Random Forest. K-Nearest Neighbors (KNN) classifies a data point based on the majority label of its k nearest neighbors in the feature space.
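The two aggregation rules mentioned for Random Forest, majority voting and averaging, can be contrasted in a short sketch. The per-tree outputs are made-up inputs and the function names are hypothetical. For reference, scikit-learn's `RandomForestClassifier` averages per-tree class probabilities (soft voting), and an averaged vector of this kind is what an RF + DNN hybrid would pass to the DNN.

```python
from collections import Counter

def hard_vote(tree_labels):
    """Majority vote over per-tree predicted labels; ties go to the smallest
    label (an arbitrary, deterministic choice for this sketch)."""
    counts = Counter(tree_labels)
    top = max(counts.values())
    return min(label for label, n in counts.items() if n == top)

def soft_vote(tree_probas):
    """Average per-tree class-probability vectors (each of length C)."""
    n_trees = len(tree_probas)
    n_classes = len(tree_probas[0])
    return [sum(p[c] for p in tree_probas) / n_trees for c in range(n_classes)]

# Three hypothetical trees scoring one sample over C = 2 classes.
tree_probas = [[0.6, 0.4], [0.6, 0.4], [0.0, 1.0]]
tree_labels = [max(range(len(p)), key=p.__getitem__) for p in tree_probas]  # [0, 0, 1]
avg = soft_vote(tree_probas)                      # approximately [0.4, 0.6]
print(hard_vote(tree_labels))                     # 0
print(max(range(len(avg)), key=avg.__getitem__))  # 1
```

The example shows that the two rules can disagree: two mildly confident trees outvote one very confident tree under hard voting, whereas averaging lets the confident tree tip the decision.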

4.2 Dense Neural Network (DNN)

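A Dense Neural Network (also called a fully connected neural network) is one of the simplest deep learning architectures. It consists of multiple layers in which every neuron in one layer is connected to every neuron in the next layer. When applied to IoT-based HAR, DNNs can learn nonlinear relationships between raw or processed sensor features and activity categories, and this dense connectivity lets the model capture cross-sensor dependencies and correlations among the input features. Eq. (6) gives the forward propagation of a DNN layer:

$$\mathbf{h}^{(l)} = \sigma\left(\mathbf{W}^{(l)}\mathbf{h}^{(l-1)} + \mathbf{b}^{(l)}\right) \quad (6)$$

where $\mathbf{h}^{(l-1)}$ is the input to layer $l$ (with $\mathbf{h}^{(0)}$ the input feature vector), $\mathbf{W}^{(l)}$ and $\mathbf{b}^{(l)}$ are the layer's weight matrix and bias vector, and $\sigma(\cdot)$ is a nonlinear activation function. The output layer applies a softmax to the logits $z_c$ to obtain class probabilities,

$$\hat{y}_c = \frac{e^{z_c}}{\sum_{j=1}^{C} e^{z_j}}, \quad c = 1, \dots, C,$$

where $C$ is the total number of activity classes.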
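Eq. (6) and the softmax output can be made concrete with a dependency-free forward pass. This is a toy sketch only: the layer sizes and weights are arbitrary, ReLU is assumed as the activation $\sigma$ (the text does not name it), and the batch normalization and dropout layers of the actual network are omitted.

```python
import math

def relu(v):
    return max(0.0, v)

def dense_layer(h_prev, W, b, activation=None):
    """Fully connected layer, cf. Eq. (6): activation(W h_prev + b).
    With activation=None the raw pre-activations (logits) are returned."""
    z = [sum(w * h for w, h in zip(row, h_prev)) + bias
         for row, bias in zip(W, b)]
    return [activation(v) for v in z] if activation else z

def softmax(z):
    """Numerically stable softmax over the C logits."""
    m = max(z)                                   # shift by the max to avoid overflow
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

# Toy network: 2 input probabilities -> 3 hidden units -> C = 2 classes.
x = [0.7, 0.3]                                   # e.g. class probabilities from an ML model
hidden = dense_layer(x, W=[[1.0, -1.0], [0.5, 0.5], [-1.0, 1.0]],
                     b=[0.0, 0.0, 0.0], activation=relu)
logits = dense_layer(hidden, W=[[2.0, 0.0, 0.0], [0.0, 0.0, 2.0]], b=[0.0, 0.0])
probs = softmax(logits)
print(probs)
```

Subtracting the largest logit before exponentiating leaves the softmax unchanged mathematically but prevents overflow when logits are large.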

To obtain a thorough and reliable estimate of the proposed hybrid model's generalization, K-fold cross-validation was included in the evaluation stage. The dataset is divided into K equally sized folds; one fold is used as the test set and the remaining K − 1 folds as the training set. This rotation continues until every fold has served once as the test set, and the final performance is the mean across the K runs. This approach reduces both the risk of biased estimates from a single train-test split and the variance of the reported performance, giving a more reliable assessment of model stability across varied data distributions. K-fold cross-validation thus enables rigorous, consistent evaluation in both the ML-only and the hybrid ML + DNN settings.
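The K-fold rotation can be sketched with plain index bookkeeping. The helper names are hypothetical, and folds are formed by shuffling without stratification; scikit-learn's `KFold(n_splits=3, shuffle=True)` splits the same way, while `StratifiedKFold` would additionally preserve class proportions in every fold. The text does not say which variant was used; `k=3` matches the 3-fold setup reported in the results.

```python
import random

def k_fold_indices(n_samples, k=3, seed=0):
    """Yield (train_idx, test_idx) pairs; each sample lands in exactly one
    test fold. Fold sizes differ by at most one when k does not divide n."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = idx[start:start + size]
        train = idx[:start] + idx[start + size:]
        yield train, test
        start += size

def cross_validate(n_samples, evaluate, k=3, seed=0):
    """Average the score returned by evaluate(train_idx, test_idx) over k folds."""
    scores = [evaluate(tr, te) for tr, te in k_fold_indices(n_samples, k, seed)]
    return sum(scores) / len(scores)
```

In a hybrid setting, `evaluate` would fit the ML model and the DNN on `train_idx` only and score them on `test_idx`, so that no test sample influences either stage.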

4.4 Hyperparameter Optimization

The hyperparameters of all machine learning models, namely XGBoost (XGB), Random Forest (RF), Gradient Boosting (GB), K-Nearest Neighbors (KNN), and Decision Tree (DT), were tuned manually through iterative experimentation. For the ensemble-based models (XGB, RF, and GB), parameters such as the number of estimators, maximum tree depth, learning rate, and sub-sampling ratio were tuned based on validation-set performance to balance bias and variance. For KNN, repeated trials were used to choose the number of neighbors and the distance metric that improved classification accuracy. For DT, the maximum depth and splitting criteria were constrained to prevent overfitting and improve generalization. Manual tuning relied on systematic observation of validation metrics (accuracy, F1-score, and loss trends) to select the most stable and effective configuration for each model.
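The validation-driven tuning described above amounts to scoring candidate settings on held-out data and keeping the best. The sketch below shows this for the KNN neighbor count only; the candidate grid, toy data, and helper names are hypothetical, and the authors' actual search was manual rather than scripted.

```python
import math
from collections import Counter

def knn_predict(train_X, train_y, x, k):
    """Majority label among the k nearest training points (Euclidean)."""
    nearest = sorted(range(len(train_X)), key=lambda i: math.dist(train_X[i], x))[:k]
    return Counter(train_y[i] for i in nearest).most_common(1)[0][0]

def select_k(train_X, train_y, val_X, val_y, candidates=(1, 3, 5, 7)):
    """Try each candidate k and keep the one with the best validation
    accuracy; on ties the earlier (smaller) k is kept."""
    best_k, best_acc = None, -1.0
    for k in candidates:
        preds = [knn_predict(train_X, train_y, x, k) for x in val_X]
        acc = sum(p == y for p, y in zip(preds, val_y)) / len(val_y)
        if acc > best_acc:
            best_k, best_acc = k, acc
    return best_k, best_acc

# One mislabeled training point (0.6 -> class 1) makes k = 1 fragile.
train_X = [(0,), (1,), (2,), (10,), (11,), (12,), (0.6,)]
train_y = [0, 0, 0, 1, 1, 1, 1]
val_X, val_y = [(0.5,), (11.5,)], [0, 1]
print(select_k(train_X, train_y, val_X, val_y))   # (3, 1.0)
```

The toy data illustrate the bias-variance tradeoff the tuning targets: k = 1 follows the noisy point, while k = 7 over-smooths toward the larger class.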

5 Experimental Analysis and Results

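To evaluate the proposed model's performance, we use accuracy, precision, recall, and F1-score. Accuracy is the fraction of correctly classified samples; for each class, precision is $TP/(TP+FP)$, recall is $TP/(TP+FN)$, and the F1-score is their harmonic mean, $2 \cdot \text{Precision} \cdot \text{Recall}/(\text{Precision} + \text{Recall})$, where $TP$, $FP$, and $FN$ denote true positives, false positives, and false negatives.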
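These metrics can be computed directly from label lists. The sketch assumes macro averaging (an unweighted mean over classes) and scores zero denominators as 0.0; the text does not state which averaging scheme was used for precision, recall, and F1-score, and `classification_metrics` is a hypothetical helper.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy plus macro-averaged precision, recall, and F1-score."""
    classes = sorted(set(y_true) | set(y_pred))
    pairs = list(zip(y_true, y_pred))
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    precisions, recalls, f1s = [], [], []
    for c in classes:
        # Per-class counts, treating class c as "positive" (one-vs-rest).
        tp = sum(t == c and p == c for t, p in pairs)
        fp = sum(t != c and p == c for t, p in pairs)
        fn = sum(t == c and p != c for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1s.append(f1)
    n = len(classes)
    return {"accuracy": accuracy,
            "precision": sum(precisions) / n,
            "recall": sum(recalls) / n,
            "f1": sum(f1s) / n}

print(classification_metrics([0, 0, 1, 1], [0, 1, 1, 1]))
```

Macro averaging weights every activity equally, so weak performance on a minority class lowers the score even when overall accuracy is high; a weighted average would instead follow the class frequencies.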

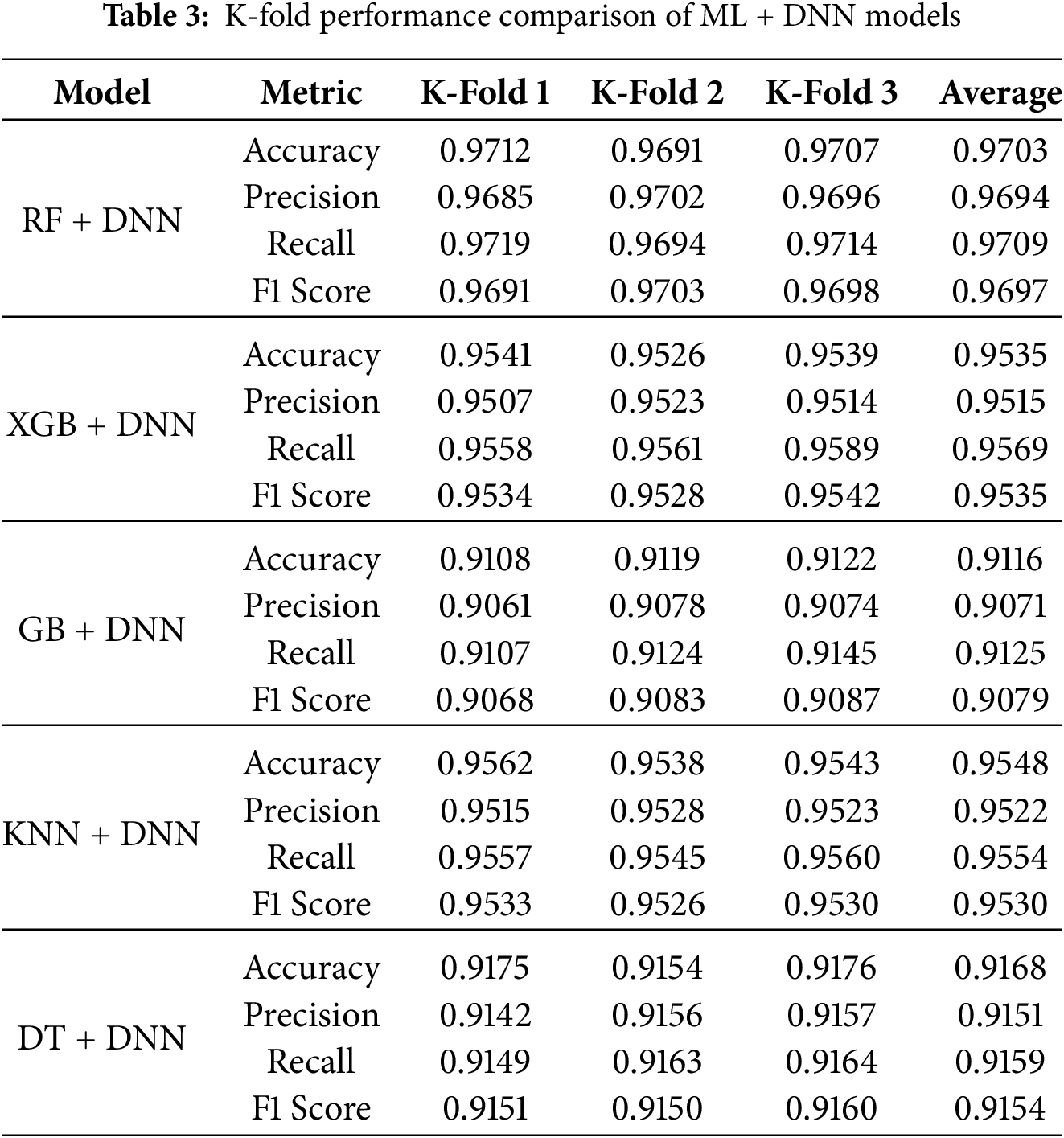

This subsection summarizes the experimental results of the hybrid HAR models that combine machine learning classifiers with deep neural networks. Table 3 compares the five hybrid ML + DNN models evaluated with 3-fold cross-validation, giving insight into their predictive power and stability. RF + DNN is clearly the most effective, with the highest accuracy, precision, recall, and F1-score across all folds, indicating a superior ability to learn interactions among complex features by combining ensemble learning with deep representations. XGB + DNN and KNN + DNN also deliver competitive, stable results with slight variation across folds, suggesting that both gradient-boosted and distance-based learners benefit substantially from deep feature refinement. Conversely, GB + DNN and DT + DNN perform relatively worse, although their scores are consistent across folds, implying reliable but less discriminative learning behavior. Overall, the results demonstrate the value of incorporating DNN components into conventional ML frameworks and underscore the relevance of hybrid modeling for achieving accurate, reliable predictions.

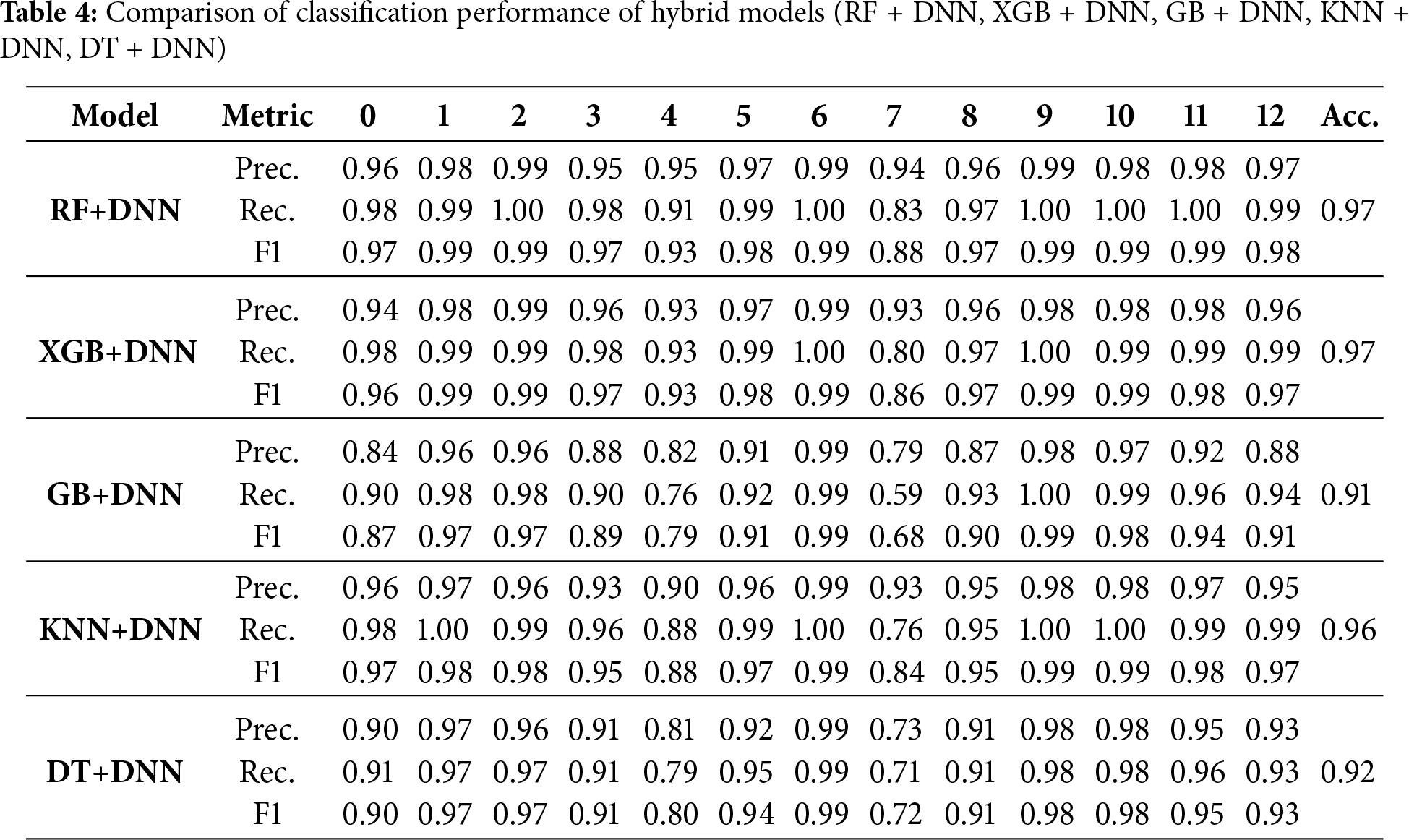

As shown in Table 4, the RF + DNN model achieved 97% accuracy with strong performance across most activity classes, maintaining precision and recall above 0.97 even for the minority class 4. The second-best model, XGB + DNN, also reached 97% accuracy and performed well across several activities, although it showed slight sensitivity to class imbalance in class 7. The GB + DNN hybrid scored 91%, underperforming the other models, particularly in classes 7 and 4, which suggests sensitivity to data imbalance. The KNN + DNN model achieved 96% accuracy, excelling at frequent activities but showing limitations in class 7 due to its uneven data distribution. Lastly, the DT + DNN hybrid achieved 92% accuracy, performing well on classes with ample samples but struggling with minority classes, which indicates difficulty handling imbalanced data. Overall, RF + DNN and XGB + DNN emerged as the most effective models.

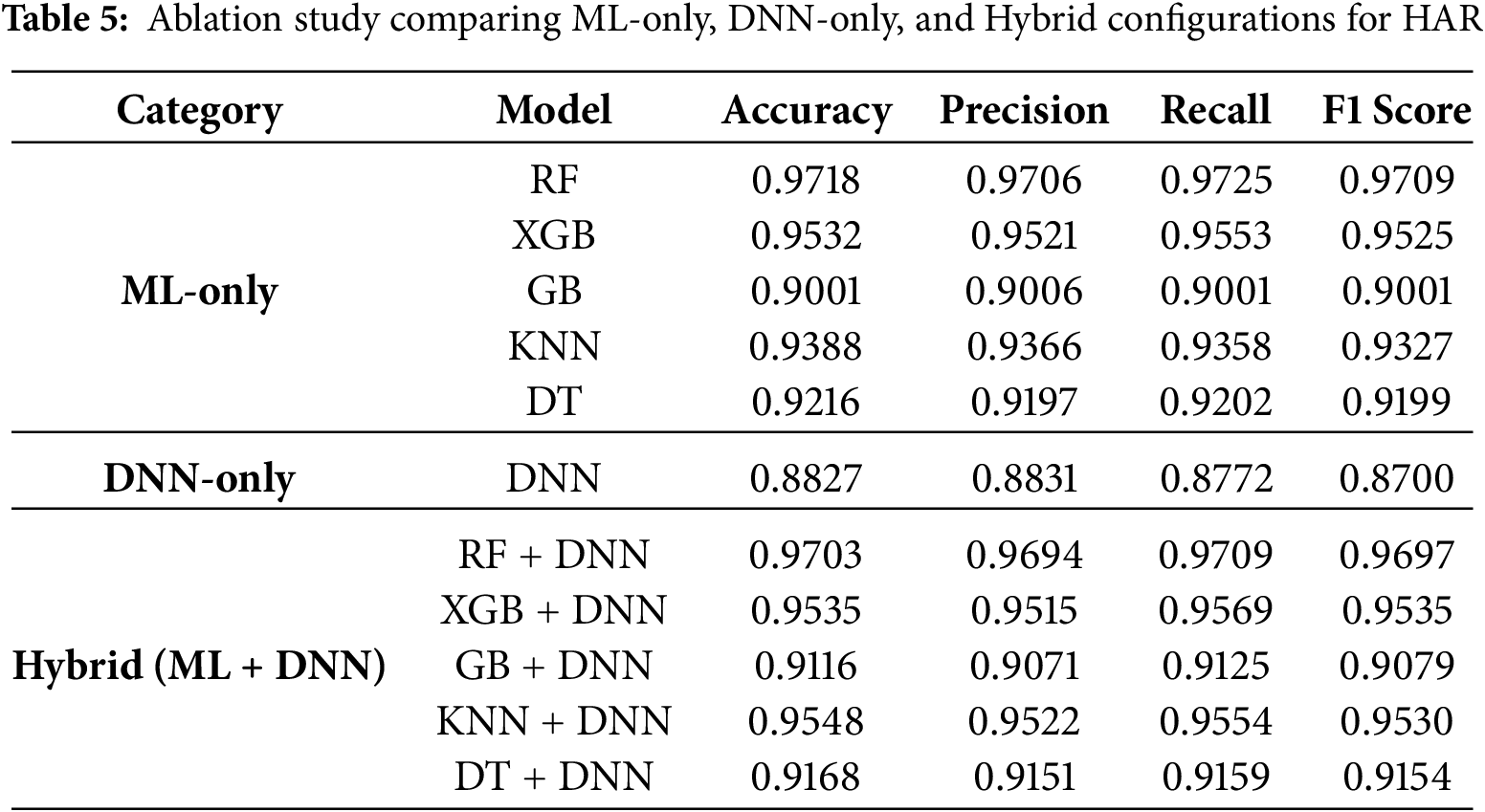

Table 5 compares the models in an ablation study. RF + DNN was the most successful model, followed closely by XGB + DNN and KNN + DNN. GB + DNN and DT + DNN had lower accuracies, suggesting that they integrate less effectively with the DNN stage and capture less of the complexity of HAR data. Together, the results indicate that ensemble-based approaches (RF and XGB) combined with DNNs offer better generalization and more balanced recognition across all activity classes.

Table 5 presents a multifaceted ablation study of HAR across ML-only, DNN-only, and hybrid (ML + DNN) setups, revealing distinct performance trends across model categories. Among the ML-only models, Random Forest achieves the highest accuracy and F1-score, followed by XGBoost and KNN, while Gradient Boosting and Decision Tree perform relatively poorly. The standalone DNN performs markedly worse than most ML methods, suggesting that deep learning alone may not fully capture the discriminative structure of this feature space. In hybrid configurations, however, where the ML models' class probabilities are refined by a DNN, performance becomes more stable and, in some cases, better. RF + DNN and XGB + DNN achieve strong, competitive results, demonstrating the usefulness of combining deep feature learning with classical ML decision boundaries. Even the weaker GB and DT models show improved stability and slightly higher recall and F1-scores when combined with DNNs. In general, the ablation results indicate that although ML models tend to outperform the DNN alone, the hybrid models offer a balanced and, in many cases, superior approach, combining the respective strengths to produce more resilient and generalizable HAR predictions.

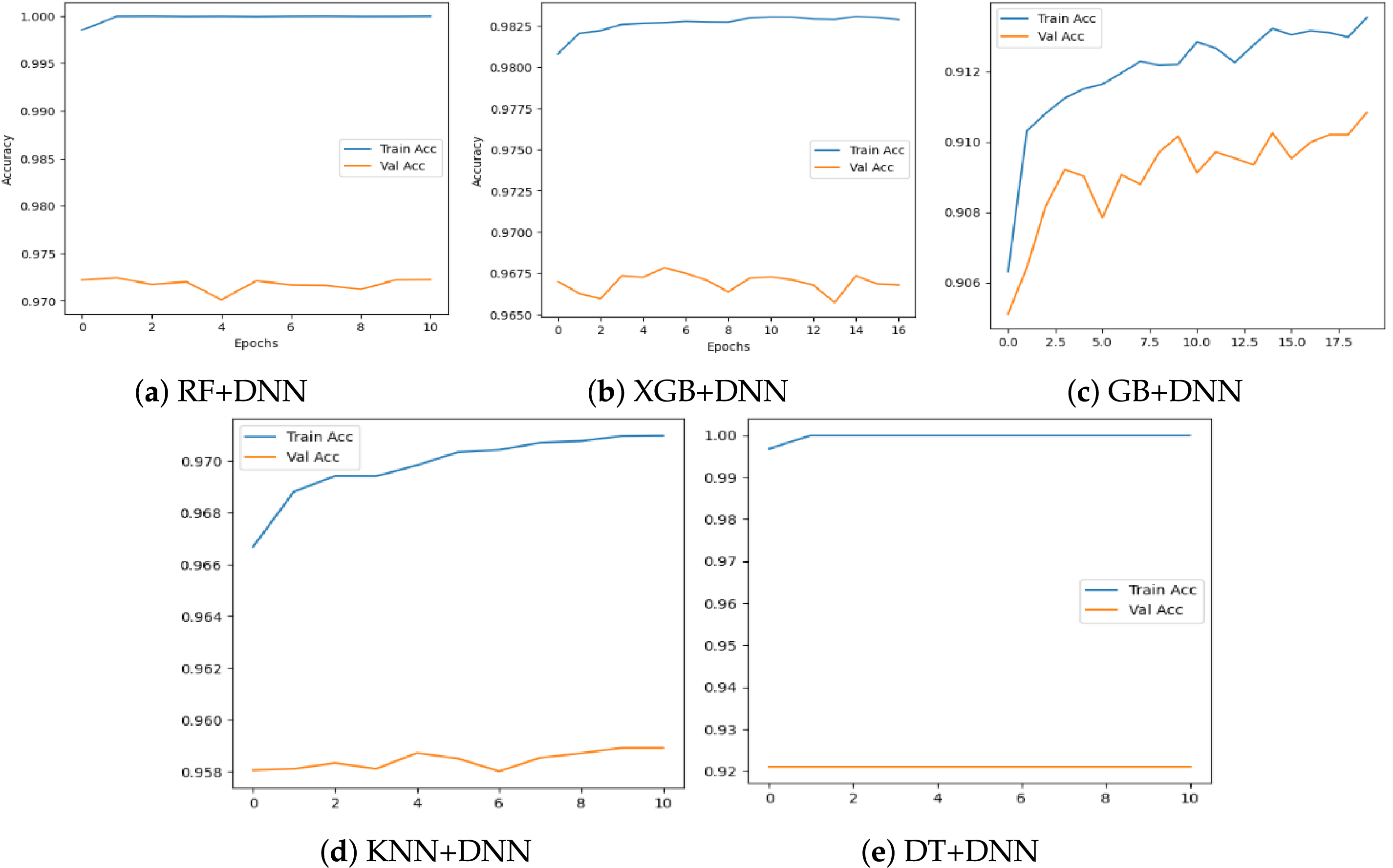

Fig. 4 shows the training and validation accuracy curves of the five hybrid models. In Fig. 4a, the RF + DNN model reaches near-perfect training accuracy, but the gap between training and validation accuracy indicates slight overfitting. Similarly, the XGB + DNN (Fig. 4b) and GB + DNN (Fig. 4c) models improve gradually as training progresses, although validation accuracy remains below training accuracy. Fig. 4d shows no significant variation in the accuracy of the KNN + DNN model, indicating stable training. Finally, in Fig. 4e, the DT + DNN model is highly accurate from the start and maintains that performance across epochs. In general, the curves indicate that all models learn effectively, but some generalize better than others.

Figure 4: Training and validation accuracy curves of the proposed hybrid Machine Learning–Deep Neural Network (ML–DNN) models

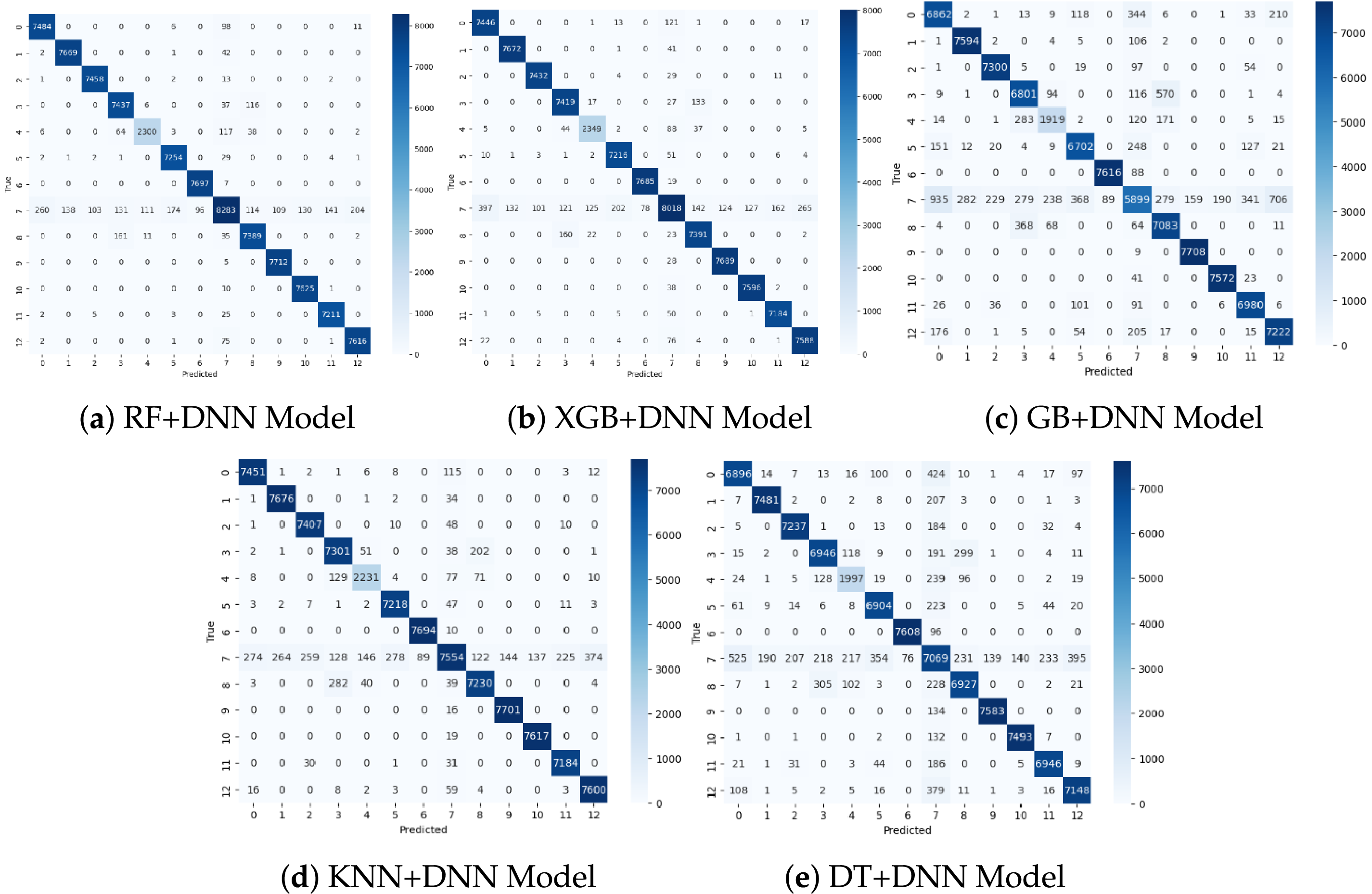

Fig. 5 presents the confusion matrices of each hybrid model across 12 activity classes. RF + DNN (Fig. 5a) shows a sharply dominant diagonal, indicating that most activities are classified correctly. XGB + DNN (Fig. 5b) shows a similar pattern, with a few misclassifications scattered among neighboring classes. The GB + DNN matrix (Fig. 5c) exhibits some confusion among overlapping activities, consistent with its lower performance relative to RF and XGB. The KNN + DNN model (Fig. 5d) achieves high accuracy but exhibits a few systematic confusions, confined to the mid-range classes. Lastly, DT + DNN (Fig. 5e) performs credibly but noticeably worse than RF and XGB, with more off-diagonal errors. Overall, RF + DNN and XGB + DNN consistently yield the cleanest confusion matrices.

Figure 5: Confusion matrices of the proposed hybrid Machine Learning–Deep Neural Network (ML–DNN) models

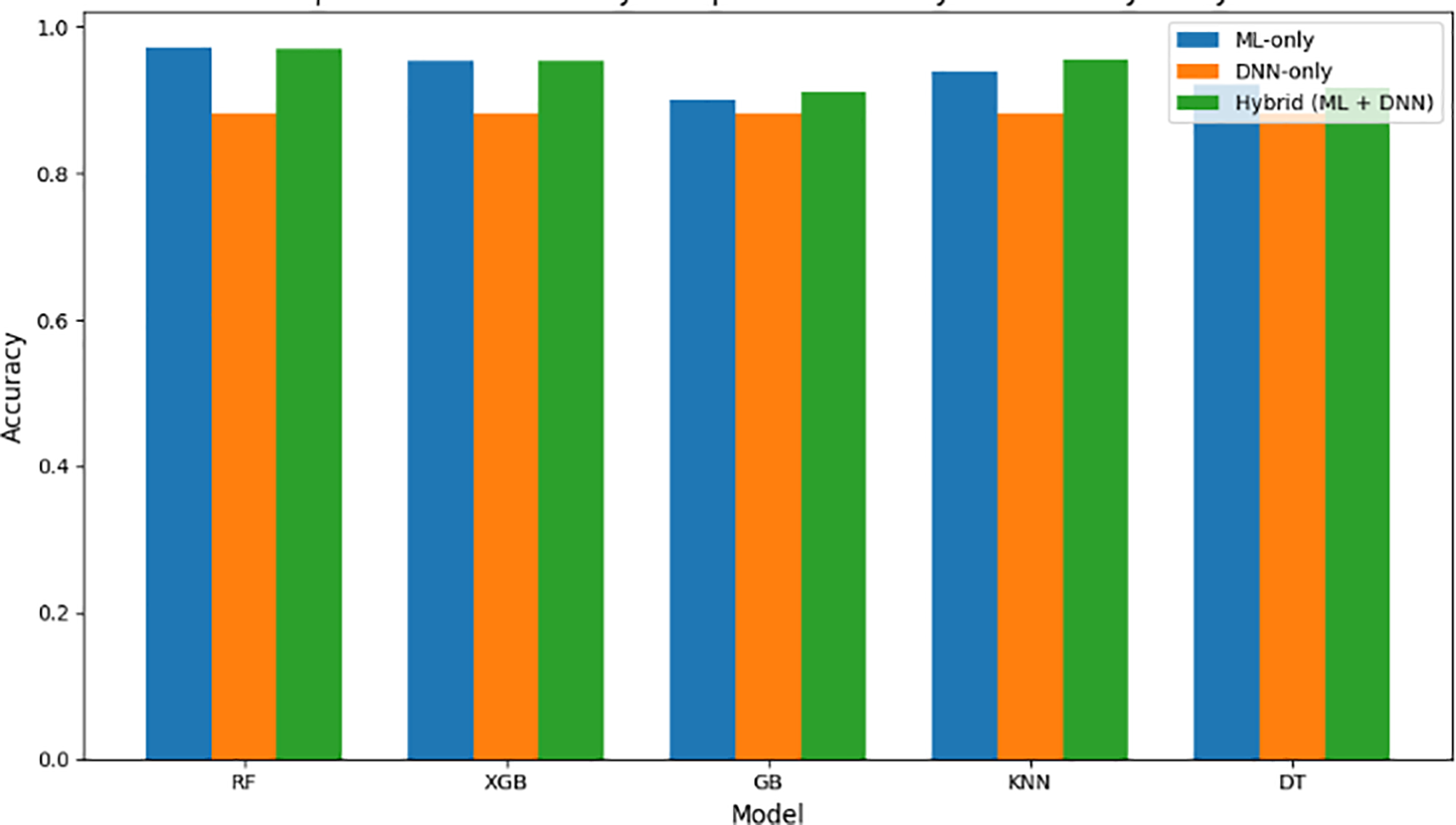

In summary, the ablation table and the comparative accuracy plot in Fig. 6 together indicate the advantages of combining deep learning with classical machine learning for Human Activity Recognition. Although ML-only models, especially RF and XGB, provide excellent baseline performance, hybrid ML + DNN configurations offer a more balanced and robust alternative that clearly outperforms the DNN-only configuration and compares favorably with the best ML baselines. The hybrid models leverage DNN-based representations while preserving the structured decision-making advantages of conventional ML classifiers. This yields more stable metrics and improved generalization, indicating that the proposed hybrid structure is a more effective and stable solution for HAR than either ML or DNN models alone.

Figure 6: Accuracy comparison showing Hybrid (ML + DNN) outperforming DNN-only and closely matching strong ML baselines

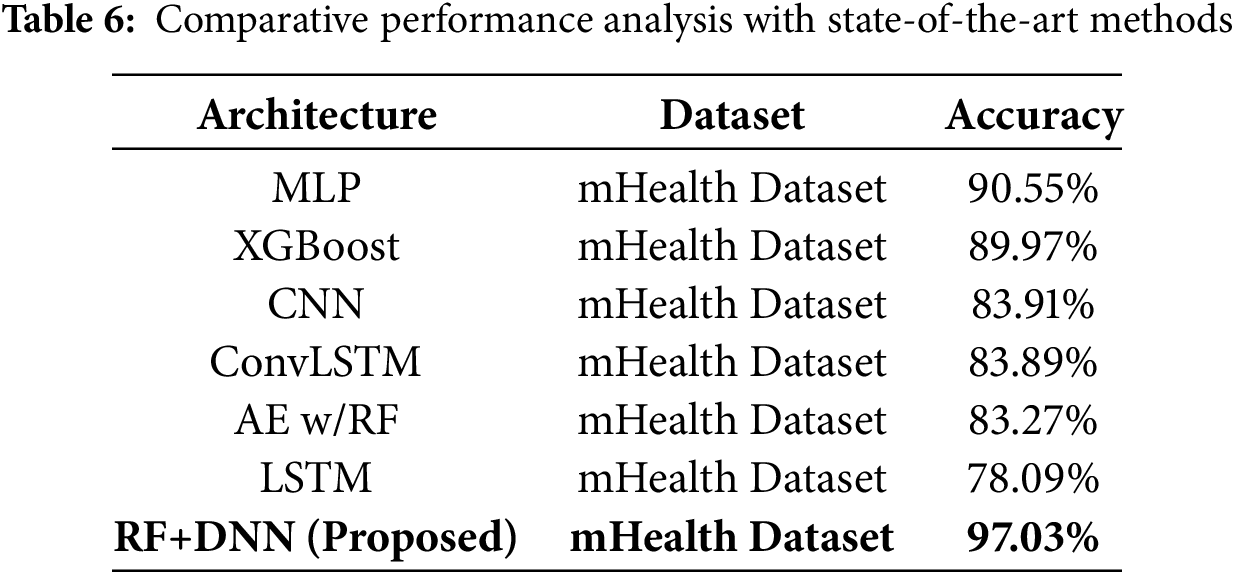

As shown in Table 6, the proposed hybrid ML + DNN models were compared with a recent study [17] on wearable sensor-based assistive technologies. The results show that the proposed method achieves superior performance. The substantial improvement achieved by the RF + DNN approach can likely be attributed to its hybrid architecture, which combines the discriminative class-probability outputs of RF with the deep representation learning of a neural network.

Compared with the base study, which reported much greater computational requirements (7632 s and 4632 s of training time and 4421 MB and 3611 MB of memory for the mHealth and ScientISST MOVE datasets, respectively), our hybrid machine learning and deep learning models were considerably more efficient. The Decision Tree + DNN model was the fastest to train (161.68 s), followed by KNN + DNN (218.26 s), Random Forest + DNN (345.85 s), Gradient Boosting + DNN (556.29 s), and XGBoost + DNN (1265.85 s). Although training time varies across configurations, every hybrid model finished training within about 21 min, a substantial reduction in computational cost compared with the base approach. This efficiency makes the proposed framework better suited to real-world, resource-limited contexts, such as mobile and wearable healthcare systems, where reduced computation time and lower memory consumption are crucial for continuous, scalable activity recognition.

5.3 Computational Performance Evaluation

All hybrid models were trained on a CPU-based system with an x86_64 architecture (4 cores) and 31.35 GB of RAM, without dedicated GPU acceleration. TensorFlow was run on the CPU device for all deep learning operations to ensure consistent hardware conditions across experiments. Training time varied considerably depending on the machine learning model paired with the DNN. The XGB + DNN hybrid had the longest training time, about 1265.85 s (21.10 min). This can be explained by the iterative, additive nature of XGBoost boosting, in which weak learners are optimized sequentially, followed by backpropagation-based training of the DNN. The GB + DNN model also had a relatively high computational cost (556.29 s; 9.27 min) due to sequential tree construction and gradient-based optimization, although it was cheaper than XGBoost because of its simpler ensemble configuration. The RF + DNN hybrid offered an acceptable compromise between computational cost and performance, taking 345.85 s (5.76 min) to train. Because the trees of a random forest are trained independently, training can be parallelized, avoiding the sequential cost of boosting-based algorithms without compromising predictive performance. The non-parametric KNN + DNN model, at 218.26 s (3.64 min), further reduced computational requirements because KNN requires no iterative training before the DNN stage. The DT + DNN model trained fastest, at 161.68 s (2.69 min), because a single decision tree requires far fewer recursive partitions than an ensemble.
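Wall-clock training times like those above can be measured with a simple timer around each training call. This is a hedged sketch: `timed` is a hypothetical helper rather than the authors' instrumentation, and the reported seconds are converted to minutes only to check the figures quoted in the text.

```python
import time

def timed(fn, *args, **kwargs):
    """Run fn(*args, **kwargs) and return (result, elapsed wall-clock seconds)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    return result, time.perf_counter() - start

# Training times reported in the text, in seconds.
REPORTED_SECONDS = {
    "XGB + DNN": 1265.85,
    "GB + DNN": 556.29,
    "RF + DNN": 345.85,
    "KNN + DNN": 218.26,
    "DT + DNN": 161.68,
}

def to_minutes(seconds):
    """Convert seconds to minutes, rounded to two decimals as in the text."""
    return round(seconds / 60, 2)

for name, secs in REPORTED_SECONDS.items():
    print(f"{name}: {secs} s = {to_minutes(secs)} min")
```

`time.perf_counter` is a monotonic, high-resolution clock, which makes it preferable to `time.time` for measuring elapsed durations.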

In general, the findings show that boosting-based hybrids such as XGB + DNN and GB + DNN have strong learning ability due to their iterative refinement mechanism but incur significantly higher computational costs. In contrast, simpler hybrids such as DT + DNN and KNN + DNN train quickly and use fewer resources, so they can be deployed in real time or on resource-constrained IoT systems. The RF + DNN model is balanced, combining high predictive accuracy (97.03%) with a moderate training time, which makes it the preferred tradeoff between computational complexity and model performance.

This study proposes a hybrid HAR framework that combines traditional machine learning and DNNs to leverage both handcrafted statistical features and deep feature representations of wearable sensor data. Together, the two paradigms provided more comprehensive feature learning, stronger classification, and better generalization. RF + DNN achieved the highest performance, with an accuracy of 97.03%, precision of 97.12%, recall of 97.25%, and F1-score of 97.15%, outperforming the XGB + DNN, GB + DNN, KNN + DNN, and DT + DNN combinations. Visual analysis using confusion matrices and ROC curves also showed the benefits of RF + DNN and XGB + DNN, which achieved greater separation of activity classes and fewer misclassifications across a broad range of human behaviors. These findings suggest that combining ensemble learning with deep neural networks can achieve a balanced tradeoff between interpretability, feature diversity, and learning capacity. Future work could use attention-based graph neural networks and transformer architectures to improve temporal pattern recognition. Moreover, deploying lightweight hybrid models on edge devices and wearables for real-time HAR would make the framework more relevant to fields such as healthcare monitoring, fitness tracking, and smart IoT-based assistive systems.

Acknowledgement: We acknowledge the support via funding from Princess Nourah bint Abdulrahman University Researchers Supporting Project number (PNURSP2026R909), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Funding Statement: This research has been supported by Princess Nourah bint Abdulrahman University Researchers Supporting Project number (PNURSP2026R909), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Author Contributions: The authors confirm their contributions to the paper as follows: study conception and design: Shtwai Alsubai, Abdullah Al Hejaili, Vincent Karovič; data collection: Shtwai Alsubai, Abdullah Al Hejaili; analysis and interpretation of results: Shtwai Alsubai, Abdullah Al Hejaili, Najib Ben Aoun, Amina Salhi, Vincent Karovič; draft manuscript preparation: Shtwai Alsubai, Abdullah Al Hejaili, Najib Ben Aoun, Amina Salhi, Vincent Karovič. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: The data that support the findings of this study are openly available in the UCI Machine Learning Repository at https://archive.ics.uci.edu/dataset/319/mhealth+dataset.

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest to report regarding the present study.

References

1. Abdulhussain SH, Mahmmod BM, Alwhelat A, Shehada D, Shihab ZI, Mohammed HJ, et al. A comprehensive review of sensor technologies in IoT: technical aspects, challenges, and future directions. Computers. 2025;14(8):342. doi:10.3390/computers14080342.

2. Malik S, Rana A. The rise of smart technologies: empowering the IoT revolution in everyday life. AN-WESH Int J Manag Inf Technol. 2025;10(1):34.

3. Hu L, Shu Y. Enhancing decision-making with data science in the internet of things environments. Int J Adv Comput Sci Appl. 2023;14(9):1151–62. doi:10.14569/ijacsa.2023.01409120.

4. Issa ME, Helmi AM, Al-Qaness MAA, Dahou A, Abd Elaziz M, Damaševičius R. Human activity recognition based on embedded sensor data fusion for the internet of healthcare things. Healthcare. 2022;10(6):1084. doi:10.3390/healthcare10061084.

5. Ni J, Tang H, Haque ST, Yan Y, Ngu AH. A survey on multimodal wearable sensor-based human action recognition. arXiv:2404.15349. 2024.

6. Kumar P, Chauhan S, Awasthi LK. Human activity recognition (HAR) using deep learning: review, methodologies, progress and future research directions. Arch Comput Meth Eng. 2024;31(1):179–219. doi:10.1007/s11831-023-09986-x.

7. Ul Haq M, Sethi MAJ, Ben Aoun N, Alluhaidan AS, Ahmad S, Farid Z. CapsNet-FR: capsule networks for improved recognition of facial features. Comput Mater Continua. 2024;79(2):2169–86. doi:10.32604/cmc.2024.049645.

8. Mzoughui MC, Ben Aoun N, Naouali S. A review on kinship verification from facial information. Vis Comput. 2024;41(3):1789–809. doi:10.1007/s00371-024-03493-1.

9. Al Mudawi N, Azmat U, Alazeb A, Alhasson HF, Alabdullah B, Rahman H, et al. IoT powered RNN for improved human activity recognition with enhanced localization and classification. Sci Rep. 2025;15(1):10328. doi:10.1038/s41598-025-94689-5.

10. Karim M, Khalid S, Lee S, Almutairi S, Namoun A, Abohashrh M. Next generation human action recognition: a comprehensive review of state-of-the-art signal processing techniques. IEEE Access. 2025;13:135609–33. doi:10.1109/access.2025.3590073.

11. Khan MZ, Usman M, Ahmad J, Rahman MMU, Abbas H, Imran M, et al. Tag-free indoor fall detection using transformer network encoder and data fusion. Sci Rep. 2024;14(1):16763. doi:10.1038/s41598-024-67439-2.

12. Hussain A, Khan SU, Khan N, Bhatt MW, Farouk A, Bhola J, et al. A hybrid transformer framework for efficient activity recognition using consumer electronics. IEEE Trans Consumer Electron. 2024;70(4):6800–7. doi:10.1109/tce.2024.3373824.

13. Verma N, Mundody S, Guddeti RMR. An efficient AI and IoT enabled system for human activity monitoring and fall detection. In: Proceedings of the 2024 15th International Conference on Computing Communication and Networking Technologies (ICCCNT); 2024 Jun 24–28; Kamand, India. p. 1–6.

14. Aziz A, Mirzaliev S, Maqsudjon Y. Real-time monitoring of activity recognition in smart homes: an intelligent IoT framework. J Intell Syst Internet Things. 2023;10(1):76–83. doi:10.54216/jisiot.100106.

15. Lakoju M, Ajienka N, Khanesar MA, Burnap P, Branson DT. Unsupervised learning for product use activity recognition: an exploratory study of a “chatty device”. Sensors. 2021;21(15):4991. doi:10.3390/s21154991.

16. Janaki M, Balakrishnan S. Leveraging ensemble learning models for human activity recognition. Indones J Electr Eng Inform. 2025;13(1):249–60. doi:10.52549/ijeei.v13i1.6140.

17. O’Halloran J, Curry E. A comparison of deep learning models in human activity recognition and behavioural prediction on the MHEALTH dataset. AICS. 2019;2563:212–23.

Copyright © 2026 The Author(s). Published by Tech Science Press.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
