Open Access

REVIEW

Review of Deep Learning-Based Intelligent Inspection Research for Transmission Lines

1 College of Mechanical and Electrical Engineering, Chizhou University, Chizhou, China

2 College of Electronic and Information Engineering, Nanjing University of Aeronautics and Astronautics, Nanjing, China

* Corresponding Authors: Chuanyang Liu. Email: ,

(This article belongs to the Special Issue: Advances in Deep Learning and Neural Networks: Architectures, Applications, and Challenges)

Computers, Materials & Continua 2026, 87(2), 5 https://doi.org/10.32604/cmc.2026.075348

Received 30 October 2025; Accepted 21 January 2026; Issue published 12 March 2026

Abstract

Intelligent inspection of transmission lines enables efficient, automated fault detection by integrating artificial intelligence, robotics, and related technologies. It plays a key role in ensuring power grid safety, reducing operation and maintenance costs, driving the digital transformation of the power industry, and helping achieve the dual-carbon goals. This review focuses on vision-based power line inspection and takes deep learning as its core perspective to systematically analyze the latest research advances in this field. First, at the level of technical foundations, it describes the image-perception-based deep learning algorithms used for intelligent transmission line inspection, covering object detection, semantic segmentation, and other relevant methods. Second, at the level of application practice, it summarizes deep learning-based intelligent inspection applications across six dimensions: detection of power insulators and their defects, transmission tower detection, power line feature extraction, metal fitting and defect detection, thermal fault diagnosis of power components, and safety hazard detection in power scenarios; it also lists relevant public datasets. Finally, in response to current challenges, it identifies five key future research directions: the deep integration of multiple learning paradigms, multi-modal data fusion, collaborative application of large and small models, cloud-edge-end collaborative integration, and multi-agent cluster control. By reviewing and analyzing numerous deep learning-based intelligent detection methods for aerial images and exploring the application of deep learning in Unmanned Aerial Vehicle (UAV) inspection scenarios, this paper aims to provide theoretical and practical references for researchers working on automated smart grid inspection.

As the economic lifeline of a nation, the power industry shoulders increasingly heavy responsibilities and plays an irreplaceable role in meeting the electricity demands of industrial production and daily life, driving national economic development, and safeguarding social security and stability. With rapid socio-economic growth and continuously improving living standards, society’s demand for electricity has shown a rigid upward trend, which has directly accelerated the large-scale expansion of power grid construction. By deeply integrating cutting-edge information technologies such as artificial intelligence, the Internet of Things, big data, and cloud platforms, this expansion has produced a robust smart grid infrastructure that provides crucial technical support and a hardware foundation for the large-scale application of deep learning and machine vision technologies in power systems [1,2].

Transmission lines, serving as the pivotal infrastructure for power transmission, are crucial to maintaining the stability of national livelihoods. Ensuring their operational reliability and safety is an essential prerequisite for the continuous supply of electrical energy [3]. However, owing to the inherent characteristics of power transmission and distribution systems, these lines are predominantly deployed in complex terrains such as dense forests and rugged mountains. Persistently exposed to environmental stressors including extreme weather events, topographical variations, and seasonal shifts, they also face challenges ranging from internal design flaws to external mechanical damage. Consequently, regular inspections are indispensable for ensuring the safe and stable operation of transmission lines, and the integration of deep learning and machine vision technologies is now providing an entirely new solution for efficient inspection in such complex environments [4].

The core objective of intelligent inspection for transmission lines is to monitor the status and diagnose faults of key components such as transmission towers, insulators, anti-vibration hammers, wire clamps, grading rings, bolts, and connection plates, while identifying potential hazards including bird nests on towers, suspended objects on lines (e.g., kites, balloons, garbage bags, and ice accretions), and vegetation or engineering vehicles within the transmission corridor [5]. Traditional manual inspection not only struggles to cover complex terrains but also has limited accuracy in detecting minor defects. In contrast, machine vision-based intelligent detection technology can achieve automated recognition of multiple component types and hazards through image feature extraction and pattern recognition [6]. For instance, it can identify cracking defects by analyzing texture changes on insulator surfaces and distinguish between iced and normal lines using color features, thereby significantly enhancing the comprehensiveness and accuracy of inspections.

With the rapid development of image processing and UAV control technologies, the model of “UAV inspection as the primary approach and manual inspection as a supplement” has become the mainstream mode for power transmission line inspection [7]. Major power grid enterprises have widely deployed UAVs equipped with visible light or infrared imaging devices, generating massive volumes of image and video data that lay a solid data foundation for intelligent analysis. Compared with infrared images, visible light images—rich in shape, color, and texture features—have emerged as the preferred data source for machine vision algorithms. However, UAV aerial images present challenges for component recognition and defect detection, such as complex backgrounds (including forests, fields, rivers, and buildings), variable target scales (e.g., small insulators captured from a long distance vs. large towers photographed at close range), and target occlusion (e.g., wires blocked by tree branches). Traditional manual identification is not only time-consuming and labor-intensive but also prone to missed or false detections due to visual fatigue. Thus, deep learning-based automated detection methods have become key to breaking this bottleneck: through the multi-layer feature extraction capability of Convolutional Neural Networks (CNNs), interference from complex backgrounds can be effectively suppressed, enabling accurate recognition of multi-scale and multi-morphological targets [8].

Over the past decade, with the maturation of UAV control technologies, power transmission line inspection has gradually evolved from mere image acquisition and transmission to the deep integration of UAVs and visual detection technologies. Its core lies in achieving intelligent recognition of power components and automated detection of defects [9–11]. Deep learning has demonstrated revolutionary advantages in this process: leveraging large-scale annotated datasets and high-performance computing hardware, target detection algorithms such as You Only Look Once (YOLO), Faster Region-Based Convolutional Neural Network (Faster R-CNN), and Single Shot MultiBox Detector (SSD) can automatically extract deep semantic features from images, enabling end-to-end detection that maps raw images to target coordinates and categories [12]. Instance segmentation algorithms like Mask Region-Based Convolutional Neural Network (Mask R-CNN) can further output pixel-level contours of targets, providing more granular information for defect localization (e.g., the precise range of insulator cracks). Compared with traditional image processing methods based on edge detection and threshold segmentation, deep learning methods exhibit stronger robustness to illumination variations and background interference, with significantly improved detection accuracy and speed [13,14].

Against the strategic backdrop of new digital infrastructure development, the ubiquitous power Internet of Things, and other power big data initiatives, the integrated advantages of UAV inspection and machine vision technologies continue to gain prominence. Through the efficient processing of massive inspection data by deep learning models, the workload of manual inspection can be significantly reduced, while the efficiency of equipment inspection and the accuracy of defect recognition are improved, thus driving power operation and maintenance toward automation and intelligent transformation [15,16]. For instance, visual models based on the Transformer architecture leverage a global attention mechanism to better capture correlations between transmission line components (e.g., spatial positional constraints between conductors and insulators), further enhancing detection stability in complex scenarios. The deployment of lightweight models (e.g., MobileNet, ShuffleNet) enables real-time on-edge analysis on UAVs, facilitating the “inspect-while-analyze” immediate response paradigm. Deep learning-based UAV autonomous inspection technology not only significantly reduces the operation and maintenance costs of power grid enterprises but also enhances the ability to identify tiny defects in aerial images (e.g., loose bolts and broken conductor strands), thereby providing more reliable technical safeguards for the safe operation of transmission lines [17,18]. Therefore, in-depth research on this technology holds great practical significance for comprehensively improving the efficiency and quality of power grid equipment inspection, as well as reducing the workload intensity and safety risks faced by inspectors.

To date, significant progress has been made in intelligent detection technologies for transmission line inspection images based on image perception. However, due to the wide variety of power components and their significant differences in morphological structures, a universal algorithm capable of effectively detecting all power components in images has not yet been developed. Therefore, in order to select suitable deep learning algorithms for the detection of specific power components, it is urgent to conduct a systematic analysis and summary of the detection methods for different power components. Against this backdrop, this paper reviews and analyzes a large number of intelligent detection methods for aerial images based on deep learning, comprehensively explores the application practices of deep learning in UAV inspection scenarios, and provides researchers engaged in smart grid automated inspection research with valuable theoretical and practical references to support their related work.

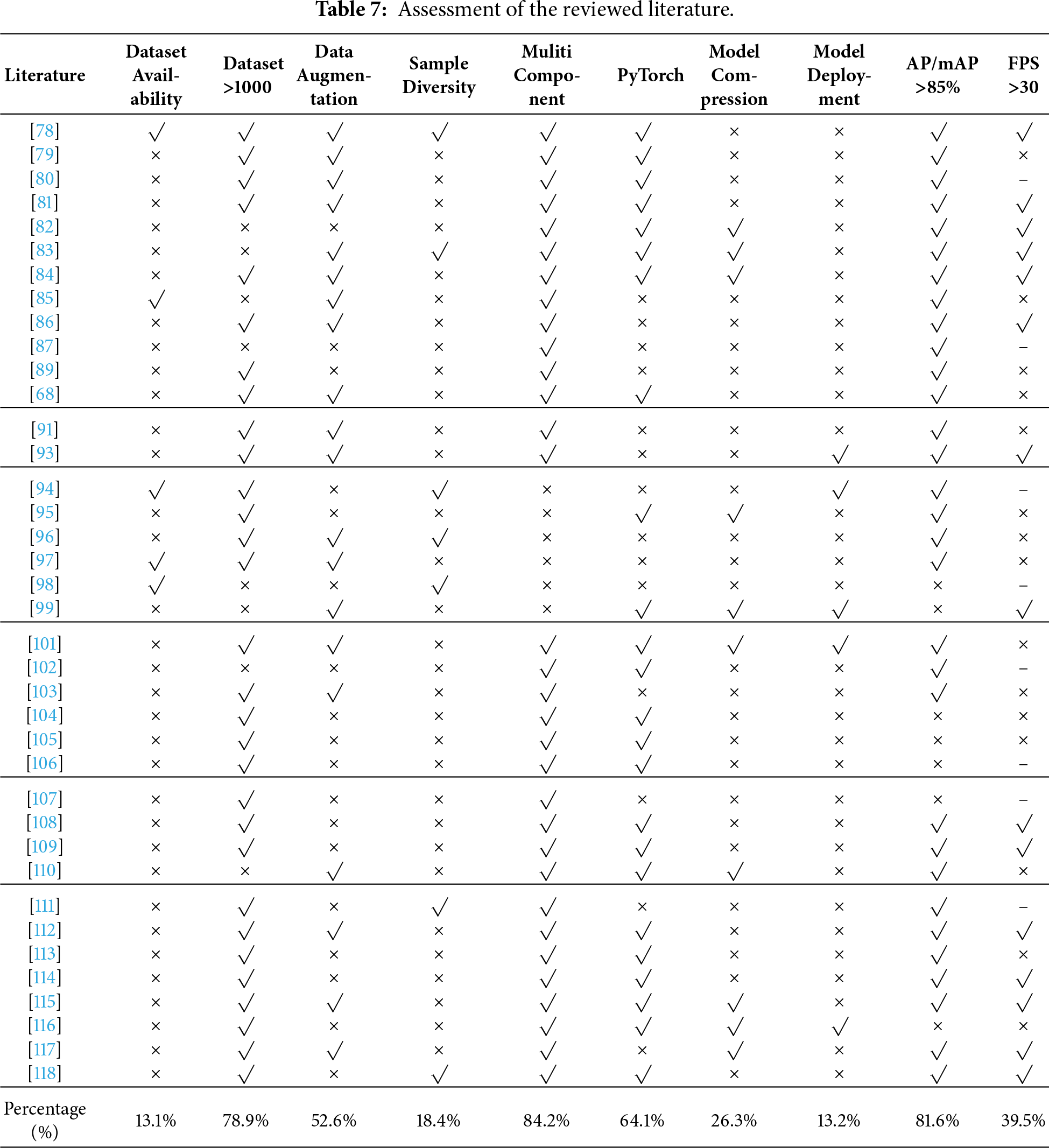

In terms of application practice, the paper highlights deep learning-based intelligent inspection applications, comprehensively summarizing them across six dimensions: power insulator and defect detection, transmission tower detection, power line feature extraction, metal fitting and defect detection, heating fault diagnosis of power components, and safety hazard detection in power scenarios. Meanwhile, it conducts a quantitative evaluation of all selected research papers, establishing a comparative framework from dimensions including model performance, dataset applicability, and engineering practicability to provide an objective reference for technology selection. Additionally, the paper systematically lists public datasets available for research on intelligent transmission line inspection.

In response to current challenges, the paper identifies five future research directions: promoting the deep integration of deep learning with multiple learning paradigms to enhance model adaptability; strengthening the deep integration of multimodal data (visible light, infrared, laser, etc.) to enrich feature dimensions; exploring the collaborative application of object detection algorithms and large language models to improve scene understanding capabilities; constructing a cloud-edge-end collaborative integration architecture to optimize data processing efficiency; and developing multi-agent collaborative inspection and cluster optimization technologies to enhance the coverage and reliability of large-scale inspections. These directions provide a clear development path for research in the field.

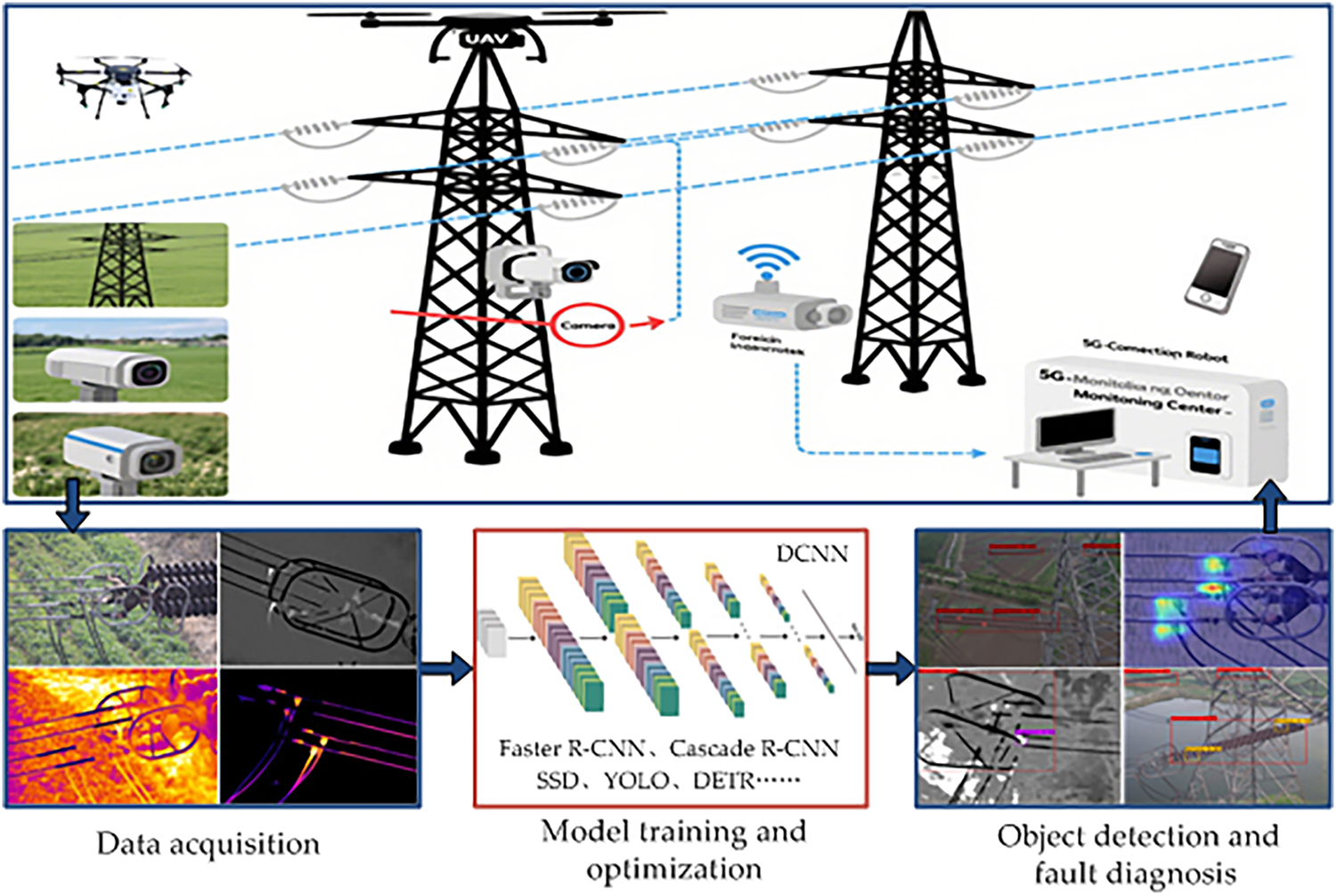

With the continuous deepening of the smart grid’s digital transformation, image acquisition devices based on high-definition cameras have undergone continuous iteration and upgrading. Meanwhile, supporting technologies such as image processing, machine vision, and deep learning have matured, laying a solid foundation for intelligent transmission line inspection. Currently, UAVs equipped with visible-light cameras, infrared cameras, and Light Detection and Ranging (LiDAR) sensors are widely applied in transmission line inspection. Intelligent transmission line inspection combines the advantages of computer vision and deep learning, effectively enhancing the safety, reliability, and operational efficiency of transmission systems [19,20]. Fig. 1 illustrates the workflow of image perception-based intelligent inspection for transmission lines, which comprises three core stages: data acquisition; deep learning model training and optimization; and transmission line target detection and fault diagnosis.

Figure 1: Intelligent inspection flowchart for transmission lines.

Nguyen et al. [21] systematically constructed a technical framework for the intelligent inspection of transmission lines. They comprehensively reviewed the applicable scenarios and limitations of traditional inspection methods (manual and helicopter inspection) and emerging technologies (UAV and robot inspection), and analyzed in depth the characteristics of various data sources, including visible light images, infrared thermal imaging data, and LiDAR point clouds, together with their value for inspection. Crucially, the study offered a forward-looking summary of the research status of deep learning in power inspection, clarified the potential of models such as CNNs and Recurrent Neural Networks (RNNs) in tasks such as image classification, object detection, and defect recognition, and laid a theoretical foundation for the subsequent deep integration of deep learning with inspection scenarios.

Yang et al. [22] focused on the core issues of image detection technology for transmission line inspection. They first categorized typical detection tasks in aerial inspection images, such as power component localization, defect recognition, and obstacle detection. Then, by comparing the technical parameters and actual performance of different inspection platforms (fixed-wing UAVs, multi-rotor UAVs, tethered balloons, etc.), they analyzed how well each platform adapts to complex terrain (mountainous areas, jungles, river crossings) and harsh operating conditions (heavy rain, fog, strong electromagnetic interference). In addition, the study systematically summarized the functional modules and detection processes of several deployed automatic inspection systems, pointed out the shortcomings of current systems in handling blurred images, avoiding missed detection of small targets, and achieving real-time performance, and proposed future research directions such as improving the robustness of machine vision algorithms and building multi-sensor fusion systems, thus providing practical technical paths for engineering applications.

Liu et al. [23] focused on the data level and comprehensively reviewed deep learning-based data analysis technologies for transmission line inspection. They divided visual detection into two core tasks: power component recognition and fault diagnosis. They compared in detail the accuracy and efficiency of different deep learning models (such as YOLO, SSD, Faster R-CNN, and Mask R-CNN) in detecting components such as towers, insulators, conductors, and metal fittings. In parallel, they analyzed the advantages and disadvantages of two approaches to defect recognition: direct detection, and indirect recognition based on prior component detection. Notably, the study identified four core challenges facing current technologies. In data quality, the issues include sample imbalance, high labeling costs, and severe data noise in harsh environments. In small target detection, tiny components such as bolts and pins occupy a small fraction of image pixels and have blurred features, which makes accuracy difficult to improve. In embedded applications, the high computing power required by deep learning models conflicts with the hardware limitations of terminal devices such as UAVs and robots. In evaluation benchmarks, the lack of unified datasets and evaluation metrics hinders horizontal comparison of research results. These insights clarified the key directions for subsequent research.

Liu et al. [24] further focused on insulators, a critical component, and traced the evolution of defect detection methods from a technical standpoint. They compared the fundamental methodological differences between traditional image processing methods, which rely on manually designed features such as color, shape, and texture, and deep learning methods, which learn end to end and automatically extract high-level features, noting that traditional methods adapt poorly and generalize weakly in complex scenarios. In contrast, deep learning methods, leveraging their strong feature learning capabilities, demonstrate higher accuracy and robustness in tasks such as detecting missing insulator pieces and self-explosion and evaluating contamination levels. The study also addressed challenges unique to insulator defect detection, such as differences in defect characteristics among insulators of different materials (porcelain, glass, composite) and image quality degradation under harsh weather conditions (e.g., icing, thunderstorms), and proposed future trends including model improvement integrated with domain knowledge and lightweight network design.

Luo et al. [25] proposed a “full-process intelligent” technical framework centered on the mainstream scenario of UAV inspection. Their research encompasses the entire workflow of UAV inspection: in the path planning phase, they analyzed the autonomous obstacle avoidance algorithm based on environmental perception and the strategy for generating globally optimal paths; in the trajectory tracking phase, they discussed high-precision positioning and attitude control technologies which are designed to ensure the stability of inspection routes; in the fault detection and diagnosis phase, they summarized the application of deep learning models in real-time defect recognition and the method of multi-modal data fusion (visible light and infrared images) to improve diagnostic accuracy. Furthermore, this study identified the practical challenges faced by UAV inspection, such as limited inspection range caused by battery capacity constraints, interference from strong electromagnetic environments on communication signals, and difficulties in autonomous takeoff and landing in complex terrains. It also proposed potential solutions including lightweight energy systems, anti-interference communication protocols, and intelligent auxiliary takeoff and landing systems, thereby providing a systematic approach for the full-process optimization of UAV inspection.

Although the aforementioned review studies all center on intelligent transmission line inspection, each has a distinct research focus: Nguyen et al. [21] concentrated on constructing technical frameworks; Yang et al. [22] focused on core issues of image detection technology for inspection; Liu et al. [23] emphasized data-level analysis; Liu et al. [24] focused on the evolution of insulator defect detection methods; and Luo et al. [25] centered on the full-process framework of UAV inspection. By comparison, this review takes deep learning algorithms as its core backbone, closely integrating the technical principles, application scenarios, and future directions of intelligent inspection into a closed-loop analytical logic of algorithm, application, evaluation, and outlook, and thus highlights the central driving role of deep learning in intelligent inspection.

This review systematically categorizes six application directions (e.g., power insulator defect detection and transmission tower detection), covering the full spectrum of transmission line inspection demands from component level to scenario level and from single-target to multi-target tasks, thus forming a more complete application system. This integration not only provides comprehensive coverage of domain applications but also responds to the comprehensive detection needs of complex engineering scenarios through directions such as multi-target recognition and defect detection, filling gaps left by single-scenario research. Existing reviews mostly focus on surveying techniques and lack systematic evaluation of research results and integration of data resources. This paper comprehensively evaluates the retrieved and screened papers on deep learning-based intelligent inspection applications, quantifying the performance of different studies through an evaluation system and providing an objective reference standard for researchers in the field. Although the aforementioned reviews mention technical challenges, their discussions of future directions are mostly limited to a single technical dimension. This review proposes five future directions from the perspective of interdisciplinary integration, combining deep learning with multiple learning paradigms, multimodal data, large language models, cloud-edge-end architectures, and multi-agent technologies; it thereby breaks through the traditional idea of upgrading a single technology and emphasizes an innovative path of collaborative integration.

3 Intelligent Inspection Based on Deep Learning Algorithms

In the field of computer vision, the emergence of deep convolutional neural networks has given deep learning strong feature extraction capabilities, allowing it to learn highly complex and abstract feature representations from massive image datasets. This capability underpins key tasks such as image classification, object detection, and semantic segmentation. Moreover, drawing on large-scale public datasets and high-performance computing hardware, researchers have developed a series of advanced backbone and object detection networks, driving breakthroughs in deep learning-based image classification, object detection, and segmentation. Notably, many researchers have also developed deep learning frameworks, fostering a technical ecosystem typified by Darknet, MXNet, Convolutional Architecture for Fast Feature Embedding (Caffe), TensorFlow, Keras, PaddlePaddle, and PyTorch. With their comprehensive functionality and user-friendly design, these frameworks offer strong technical support for the large-scale deployment and cross-domain application of deep learning technologies.

This section provides a systematic overview of deep learning technologies for image-based intelligent inspection of transmission lines, classified according to object detection networks, semantic segmentation networks, and other methods.

3.1 Object Detection Algorithms

In recent years, deep learning-based object detection has emerged as a research hotspot in machine vision. Since the advent of AlexNet in 2012, researchers have driven innovations in feature extraction networks by reconstructing architectures and designing novel modules, achieving a series of landmark breakthroughs. Specifically, early networks such as Zeiler-Fergus Network (ZF-Net) and Visual Geometry Group Network (VGG-Net) effectively increased the depth of deep CNNs while controlling computational complexity by using smaller filters. GoogLeNet introduced the Inception module, which fuses multi-scale features while keeping computational cost low. Residual Neural Network (ResNet) overcame the training bottleneck of very deep networks through cross-layer shortcut connections (residual structures), greatly extending network depth and becoming the most widely adopted backbone to date. Building on this foundation, researchers have successively developed derivative networks such as ResNeXt (aggregated residual transformations), Inception-ResNet, Res2Net, and ResNeSt (split-attention networks), which continue to enhance feature extraction performance. Additionally, to address the computational constraints of mobile and embedded devices, some researchers have focused on lightweight backbones such as MobileNet, Xception, and ShuffleNet. These networks use techniques such as depth-wise separable convolution and channel shuffling to drastically reduce model size while preserving core performance, making them suitable for portable applications.
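To make the savings from depth-wise separable convolution and channel shuffling concrete, the sketch below counts the weights of a standard convolution versus a depth-wise separable one and shows the channel reordering performed by ShuffleNet-style shuffling. It is an illustrative sketch under stated assumptions (bias terms ignored, hypothetical function names), not code taken from any of the cited networks.

```python
def standard_conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights in a k x k convolution mapping c_in to c_out channels (bias ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k: int, c_in: int, c_out: int) -> int:
    """Weights in a k x k depth-wise convolution followed by a 1 x 1 point-wise one (bias ignored)."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 convolution mixes information across channels
    return depthwise + pointwise


def channel_shuffle(channels: list, groups: int) -> list:
    """Interleave channels from `groups` groups, as in a ShuffleNet-style shuffle.

    Equivalent to reshaping to (groups, n), transposing to (n, groups), and flattening.
    """
    n = len(channels) // groups
    if n * groups != len(channels):
        raise ValueError("channel count must be divisible by groups")
    return [channels[g * n + i] for i in range(n) for g in range(groups)]


if __name__ == "__main__":
    std = standard_conv_params(3, 256, 256)
    sep = depthwise_separable_params(3, 256, 256)
    print(std, sep, round(sep / std, 3))              # 589824 67840 0.115
    print(channel_shuffle(list(range(6)), groups=2))  # [0, 3, 1, 4, 2, 5]
```

For a 3 x 3 layer with 256 input and output channels, the separable version needs roughly 1/c_out + 1/k² of the original weights, which is why such layers suit UAV-side deployment.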

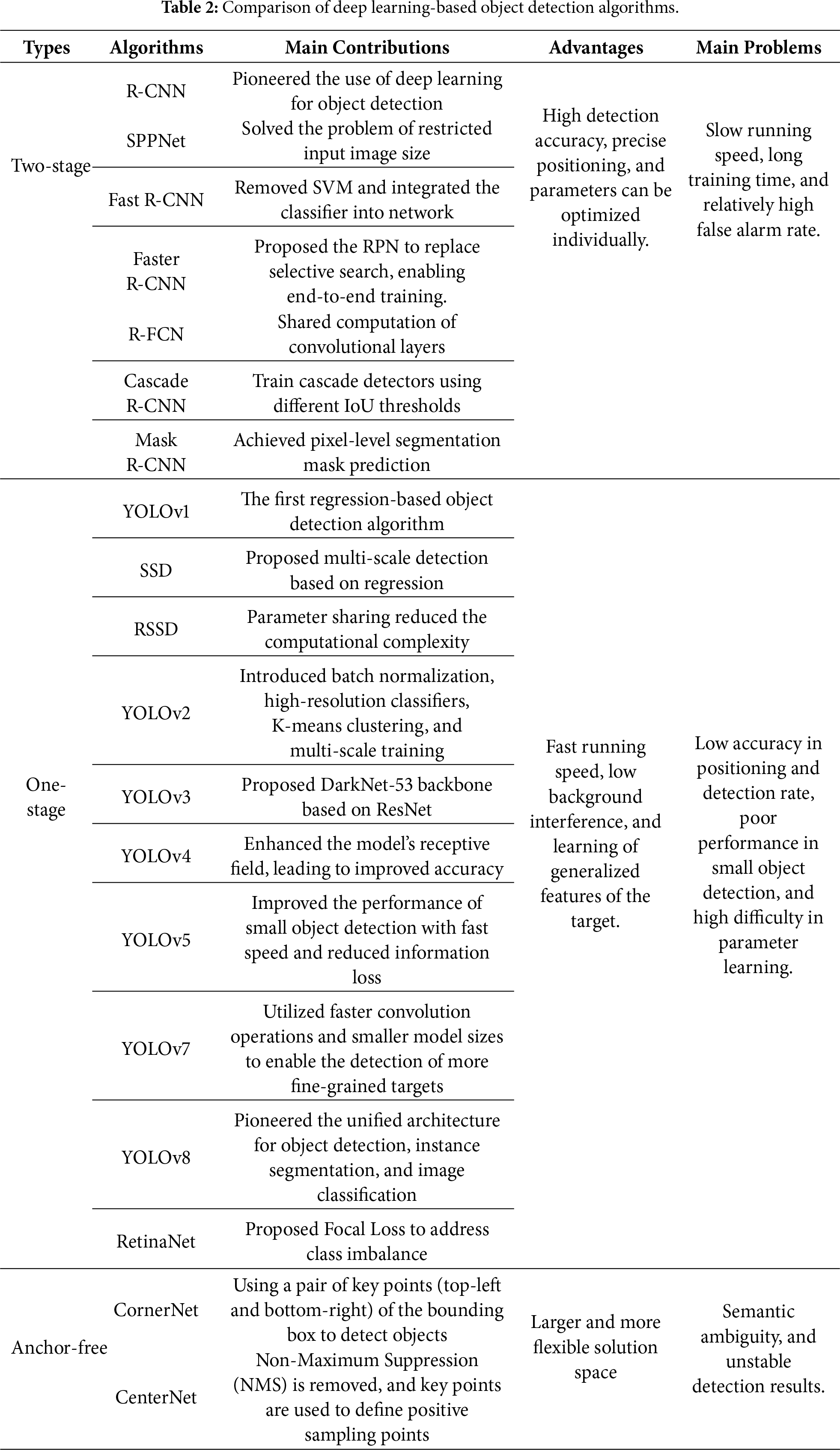

As deep learning theory continues to advance, academia and industry have developed a wealth of high-performance object detection algorithms. Based on the detection mechanism used during inference, these algorithms can be broadly categorized into three types: two-stage detectors, one-stage detectors, and anchor-free detectors, which differ significantly in detection accuracy, processing speed, and applicable scenarios.

3.1.1 Two-Stage Detection Algorithms

Two-stage object detection algorithms localize and classify objects in two sequential steps: they first generate candidate regions, then classify these regions and refine their bounding boxes. Typical algorithms include Faster R-CNN, Spatial Pyramid Pooling Network (SPPNet), Cascade R-CNN, and Region-based Fully Convolutional Network (R-FCN). SPPNet improves feature extraction efficiency through spatial pyramid pooling; Cascade R-CNN improves detection accuracy by iteratively refining candidate boxes; and R-FCN combines the advantages of fully convolutional networks and region-based methods. In addition, Mask R-CNN, a representative extension of Faster R-CNN, performs object detection and also generates instance segmentation masks, providing more detailed object information.
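Spatial pyramid pooling, which lets SPPNet turn a feature map of any spatial size into a fixed-length vector, can be sketched as follows. This is a minimal NumPy illustration that assumes a (channels, height, width) feature map, max pooling, and pyramid levels of 1 x 1, 2 x 2, and 4 x 4 bins; the function name and level choice are illustrative assumptions, not the configuration of any cited work.

```python
import math

import numpy as np


def spatial_pyramid_pool(feature_map: np.ndarray, levels=(1, 2, 4)) -> np.ndarray:
    """Max-pool a (C, H, W) feature map into a vector of length C * sum(n * n for n in levels).

    For each level n the map is split into an n x n grid of bins (neighbouring bins may
    overlap by one cell when H or W is not divisible by n); each bin is max-pooled per channel.
    """
    _, h, w = feature_map.shape
    pooled = []
    for n in levels:
        for i in range(n):
            # floor for the start and ceil for the end, so every bin is non-empty
            y0, y1 = (i * h) // n, math.ceil((i + 1) * h / n)
            for j in range(n):
                x0, x1 = (j * w) // n, math.ceil((j + 1) * w / n)
                pooled.append(feature_map[:, y0:y1, x0:x1].max(axis=(1, 2)))
    return np.concatenate(pooled)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    for h, w in [(13, 17), (7, 9)]:
        vec = spatial_pyramid_pool(rng.random((256, h, w)))
        print((h, w), vec.shape)  # both sizes give (5376,) = 256 * (1 + 4 + 16)
```

Because the output length depends only on the channel count and the pyramid levels, regions of different sizes can feed the same fully connected classifier.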

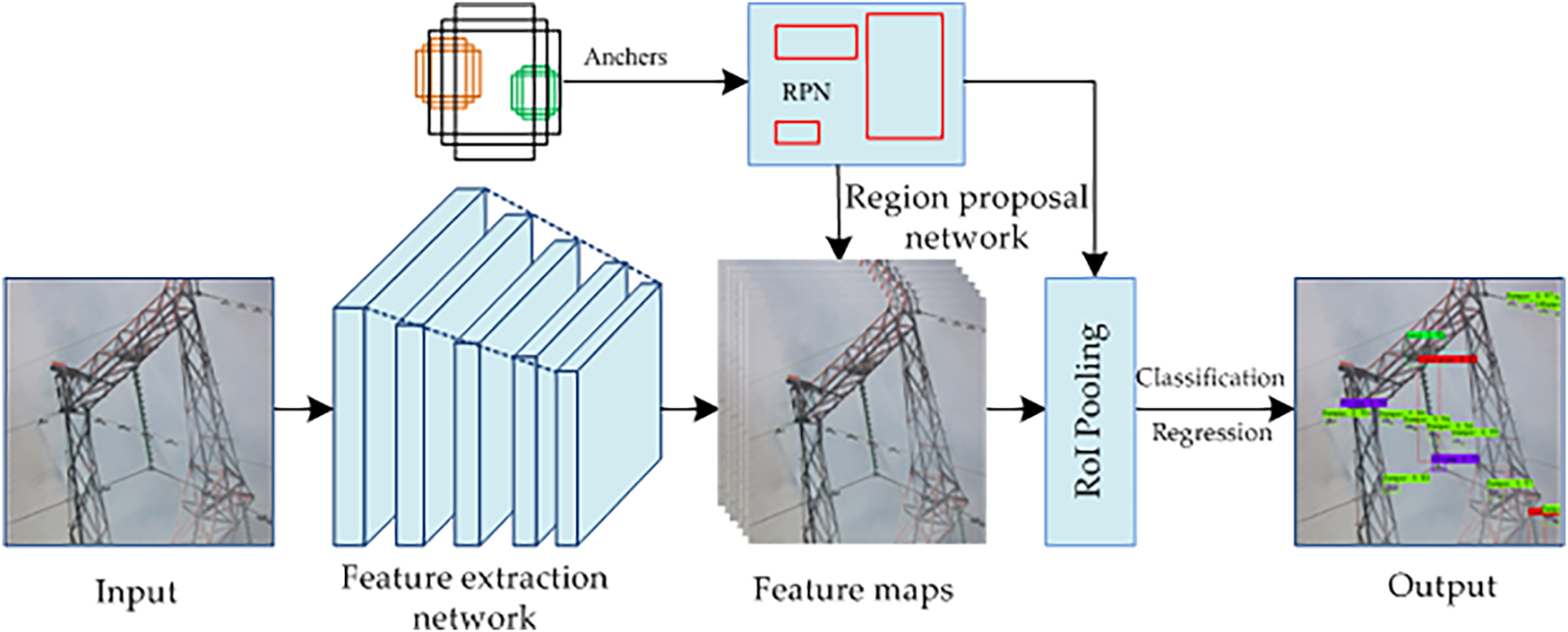

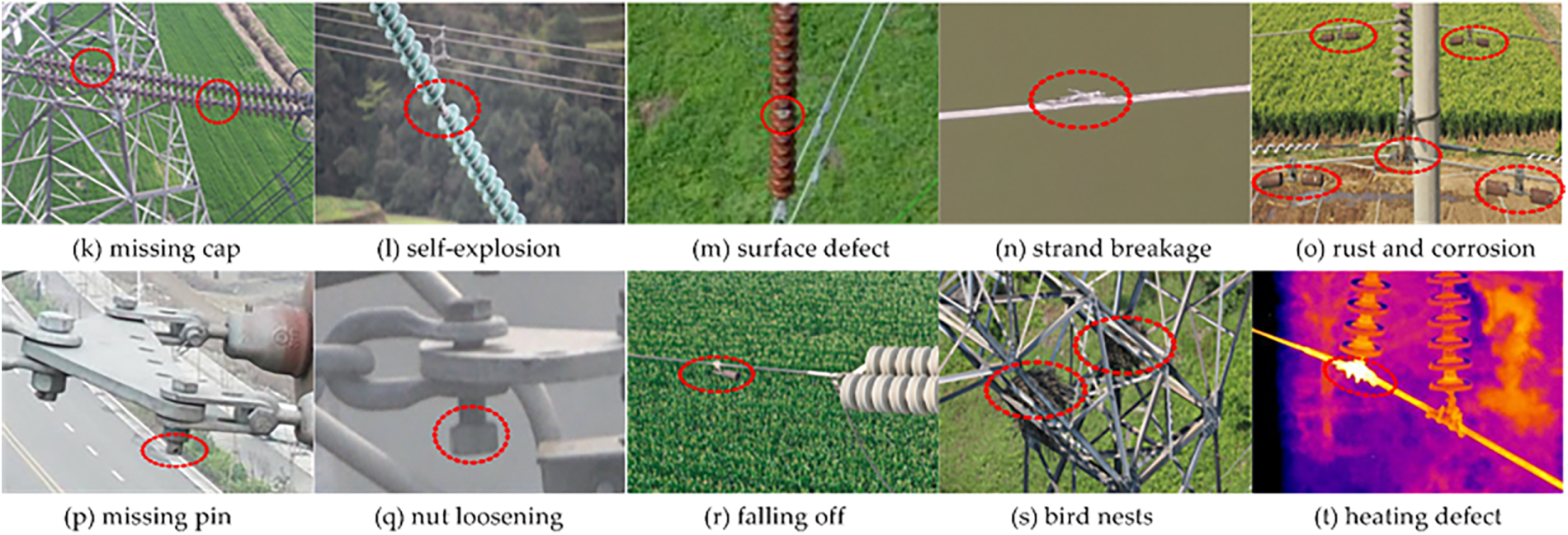

Faster R-CNN, a mainstream high-accuracy object detector, is among the most widely adopted two-stage frameworks. As shown in Fig. 2, its architecture has three core components: a feature extraction network, a Region Proposal Network (RPN), and Region of Interest (RoI) Pooling. The feature extraction network applies convolutional operations to the input image to produce a shared feature map. The RPN slides a small window over this map to generate candidate regions: its classification branch identifies anchor boxes that contain objects, and its regression branch refines their positions and sizes. RoI Pooling then converts these variable-sized regions into fixed-size features, which fully connected layers process for object classification and fine-grained bounding box regression. This design allows Faster R-CNN to achieve both accurate classification and precise localization, giving it higher detection accuracy than many contemporary one-stage algorithms. As a result, researchers widely apply the algorithm and its improved variants to identify power components and detect defects in aerial images.

Figure 2: Faster R-CNN architecture.
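The anchor mechanism of the RPN can be sketched in a few lines. The sketch assumes the settings reported in the original Faster R-CNN paper (anchor sides of 128, 256, and 512 pixels with aspect ratios 1:2, 1:1, and 2:1, giving nine anchors per feature-map location) and the usual center/size parameterization of the regression offsets. The function names are hypothetical, and a real RPN predicts the offsets with convolutional layers rather than receiving them as arguments.

```python
import math


def make_anchors(sizes=(128, 256, 512), ratios=(0.5, 1.0, 2.0)):
    """Return (w, h) for every anchor centred on one feature-map location.

    Each anchor keeps area size ** 2 and has aspect ratio h / w == ratio.
    """
    anchors = []
    for size in sizes:
        for ratio in ratios:
            w = size / math.sqrt(ratio)
            h = size * math.sqrt(ratio)
            anchors.append((w, h))
    return anchors


def decode(anchor, deltas):
    """Apply regression offsets (dx, dy, dw, dh) to an anchor given as (cx, cy, w, h).

    Centre shifts are relative to the anchor size; width and height are scaled in
    log space, so dw = log(2) doubles the width.
    """
    cx, cy, w, h = anchor
    dx, dy, dw, dh = deltas
    return (cx + dx * w, cy + dy * h, w * math.exp(dw), h * math.exp(dh))


if __name__ == "__main__":
    print(len(make_anchors()))  # 9 anchors per location
    print(decode((100.0, 100.0, 50.0, 50.0), (0.1, 0.0, math.log(2), 0.0)))
```

Predicting log-scale size offsets keeps the regression targets well conditioned for both small fittings and large towers.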

Reference [26] employed Faster R-CNN for insulator detection and defect localization in aerial images. The trained model achieved a detection accuracy of 94%, a recall rate of 88%, and a detection speed of 10 Frames Per Second (FPS) on the test set. To address the remaining problem of missed small defects in complex aerial backgrounds, reference [27] proposed a cascaded insulator defect recognition framework that integrates global detection with local segmentation. By introducing ResNeXt-101, a Feature Pyramid Network (FPN), and the Online Hard Example Mining (OHEM) strategy into Faster R-CNN, this method raised insulator defect detection accuracy to 97.3%. Reference [28] presented an insulator defect detection method based on ResNeSt and a multi-scale RPN. By optimizing the ResNeSt architecture, implementing adaptive feature fusion, and integrating a multi-scale RPN design, the method enhanced small-target detection and reduced missed detection of small defects in complex scenes. For high-resolution UAV scenarios with complex background interference and small targets, reference [29] proposed two improved Faster R-CNN variants (Exact-RCNN and CME-CNN), and experiments demonstrated that both can effectively detect insulator defects in high-resolution UAV imagery. To further improve multi-defect detection accuracy, reference [30] proposed a method based on the Multi-Geometric Reasoning Network (MGRN). By incorporating Spatial Geometric Reasoning (SGR), Appearance Geometric Reasoning (AGR), and Parallel Feature Transformation (PFT) submodules into the Faster R-CNN framework, the approach significantly improved detection accuracy when defect samples are limited. Reference [31] proposed a Faster R-CNN-based pointer meter recognition method, presented as the first attempt to apply deep learning to both meter localization and reading inference. By calculating the geometric angle between the pointer and the scale marks, this method effectively overcame recognition challenges caused by complex lighting and viewing angles. Reference [32] put forward I2D-Net for detecting small insulator defects. It integrates a Three-Path Feature Fusion Network (TFFN), an Enhanced Receptive Field Attention (RFA+) module, and a Context Perception Module (CPM) to strengthen feature extraction and localization for small-scale defects. Reference [33] proposed GFRF-RCNN, an improved Faster R-CNN variant, for detecting small power components. By replacing the backbone with AdvResNet50 and adopting Guided Feature Refinement (GFR) to enhance small targets, GFRF-RCNN effectively detected small insulators, anti-vibration hammers, bolts, and other components.
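The angle-based reading step described for reference [31] can be illustrated with a simple linear mapping. This sketch is not the authors' implementation: it assumes a detector has already located the dial center and pointer tip in pixel coordinates (y pointing down), that the scale is linear, and that the angles of the zero and full-scale marks are known. All names and the example gauge (0 to 1.6 MPa over a 270 degree arc) are hypothetical.

```python
import math


def pointer_angle(center, tip):
    """Clockwise angle in degrees from 12 o'clock to the pointer, in image coordinates (y down)."""
    dx = tip[0] - center[0]
    dy = center[1] - tip[1]  # flip y so that "up" in the image is positive
    return math.degrees(math.atan2(dx, dy)) % 360.0


def meter_reading(angle, zero_angle, full_angle, v_min, v_max):
    """Linearly map a pointer angle onto the scale swept clockwise from zero_angle to full_angle."""
    span = (full_angle - zero_angle) % 360.0
    if span == 0:
        raise ValueError("zero and full-scale marks must differ")
    swept = (angle - zero_angle) % 360.0
    return v_min + swept / span * (v_max - v_min)


if __name__ == "__main__":
    angle = pointer_angle(center=(100, 100), tip=(100, 40))  # pointer straight up
    print(angle, meter_reading(angle, 225.0, 135.0, 0.0, 1.6))
```

With the zero mark at 225 degrees and full scale at 135 degrees (both measured clockwise from 12 o'clock), a pointer pointing straight up lies halfway along the 270 degree arc and reads 0.8 MPa.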

In addition, two-stage detection algorithms such as Cascade R-CNN, R-FCN, and Mask R-CNN have also been widely applied to intelligent inspection tasks for transmission lines. Among them, Cascade R-CNN significantly enhances the detection accuracy of subtle defects through multi-stage iterative refinement of candidate boxes, rendering it particularly suitable for inspection scenarios in complex terrains [34]. R-FCN integrates the advantages of fully convolutional networks and regional features, ensuring stable recognition of small-scale power components while maintaining high detection speed. Mask R-CNN extends object detection with an instance segmentation function, which can accurately delineate the contours of power components like insulator strings and conductors, thereby providing more detailed spatial information for defect localization [35]. These algorithms effectively complement Faster R-CNN and its variants, thus collectively driving the evolution of intelligent transmission line inspection technology from single defect detection to full-component, multi-dimensional state assessment.
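The idea behind Cascade R-CNN's multi-stage refinement can be illustrated by its training rule: each successive stage labels a proposal as positive only if its Intersection over Union (IoU) with a ground-truth box reaches a higher threshold (0.5, 0.6, and 0.7 in the original Cascade R-CNN paper). The sketch below is a deliberate simplification: it applies all three thresholds to one fixed set of boxes, whereas in the real detector each stage receives the boxes already regressed by the previous stage. The function names are hypothetical.

```python
def iou(a, b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def positives_per_stage(proposals, gt_box, thresholds=(0.5, 0.6, 0.7)):
    """For each cascade stage, keep the proposals whose IoU with gt_box reaches that stage's threshold."""
    return [[p for p in proposals if iou(p, gt_box) >= t] for t in thresholds]


if __name__ == "__main__":
    gt = (0, 0, 10, 10)
    proposals = [(0, 0, 10, 10), (0, 0, 10, 8), (0, 0, 10, 6), (0, 0, 10, 5), (5, 5, 15, 15)]
    print([len(stage) for stage in positives_per_stage(proposals, gt)])  # [4, 3, 2]
```

Later stages therefore train only on better-localized boxes, which is what allows the cascade to tighten localization of subtle defects step by step.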

3.1.2 One-Stage Detection Algorithms

One-stage object detection methods occupy a prominent position in computer vision owing to their concise and efficient architecture. Unlike two-stage methods, they omit region proposal generation and the subsequent per-region feature processing, which greatly simplifies the detection workflow. Leveraging the feature extraction capability of CNNs, they predict object classes and positions directly and simultaneously over a dense set of locations across the whole input image, which markedly increases detection speed and makes them well suited to scenarios with strict real-time requirements. Typical one-stage algorithms include SSD [36], YOLO [37], and RetinaNet [38], of which YOLO is the most widely favored in engineering applications. YOLO achieves efficient end-to-end inference by partitioning the input image into a spatial grid, where the grid cell containing an object’s center is responsible for detecting that object. By comparison, SSD detects objects of varying sizes using multi-scale feature maps, while RetinaNet introduces the focal loss to address the extreme foreground-background class imbalance of dense detectors.
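The focal loss mentioned for RetinaNet can be written as FL(p_t) = -α_t (1 - p_t)^γ log(p_t). The minimal sketch below assumes the binary form with α = 0.25 and γ = 2 (the settings reported for RetinaNet) and compares it with plain cross-entropy to show how easy background samples are down-weighted. Function names are illustrative.

```python
import math


def focal_loss(p, y, alpha=0.25, gamma=2.0):
    """Binary focal loss for one prediction.

    p: predicted foreground probability in (0, 1); y: 1 for object, 0 for background.
    """
    p_t = p if y == 1 else 1.0 - p            # probability given to the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha
    # (1 - p_t)^gamma shrinks the loss of well-classified (easy) samples
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


def cross_entropy(p, y):
    p_t = p if y == 1 else 1.0 - p
    return -math.log(p_t)


# An easy background sample (p = 0.01) vs. a hard one (p = 0.6)
for p in (0.01, 0.6):
    ratio = focal_loss(p, 0) / cross_entropy(p, 0)
    print(f"p={p}: focal/CE = {ratio:.6f}")
```

With these settings, the easy negative keeps only a fraction 7.5e-5 of its cross-entropy loss, while the hard negative keeps 27%, so training is dominated by hard examples rather than by the many easy background locations.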

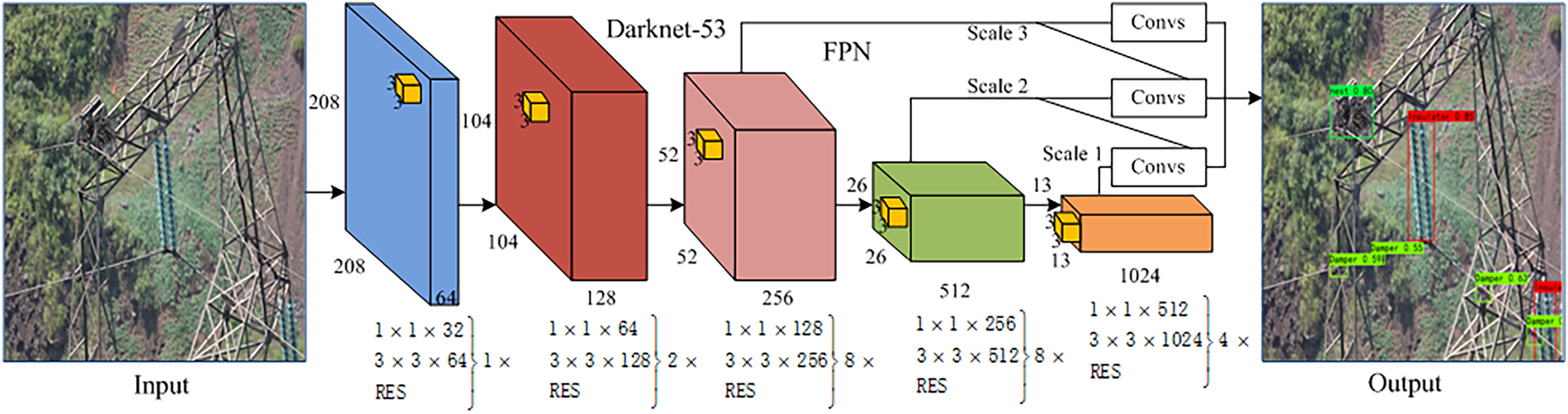

As a typical one-stage object detection framework, YOLO outputs the locations and category labels of target objects directly and simultaneously from the input image in a single end-to-end pass. Since YOLOv1 established this one-stage paradigm in 2016, the series has gone through many iterations up to YOLOv11, forming a family of algorithms that addresses diverse scenario requirements [39]. A pivotal milestone in this evolution is YOLOv3 (Fig. 3), which introduced several key advances. It adopted Darknet-53 as its backbone, strengthening deep feature extraction through residual connections, and it implemented an FPN-style multi-scale prediction mechanism that operates on three feature maps of different resolutions, tailored to large, medium, and small objects, respectively. This substantially improved small-object detection accuracy, a weakness of earlier versions. As the series has continued to evolve, it now offers model scales ranging from lightweight to high-performance variants, enabling flexible adaptation to mobile deployment, real-time field monitoring, and high-precision industrial inspection. In engineering practice, numerous studies have shown that YOLO applied to transmission line inspection images can efficiently identify key power components and various defect types, providing robust technical support for the intelligent operation and maintenance of power systems.
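The multi-scale head of YOLOv3 can be made concrete with a little arithmetic. The sketch assumes the common 416 × 416 input, strides of 32, 16, and 8, three anchors per scale, and the 80 COCO classes; each anchor predicts 4 box offsets, 1 objectness score, and one score per class. The helper name is hypothetical.

```python
def yolov3_head_shapes(input_size=416, num_classes=80, anchors_per_scale=3):
    """Grid size and channel count of each YOLOv3 prediction map.

    Each anchor predicts 4 box offsets + 1 objectness score + class scores.
    """
    channels = anchors_per_scale * (5 + num_classes)
    shapes = []
    for stride in (32, 16, 8):  # coarse grid -> large objects, fine grid -> small objects
        grid = input_size // stride
        shapes.append((grid, grid, channels))
    return shapes


print(yolov3_head_shapes())  # [(13, 13, 255), (26, 26, 255), (52, 52, 255)]
```

For a hypothetical three-class insulator dataset at 640 × 640 input, the finest map would be 80 × 80 × 24, which is where most small defects are predicted.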

Figure 3: The architecture of YOLOv3.

To enhance the accuracy and speed of insulator defect detection, reference [40] improved YOLOv4 through data augmentation and K-means anchor clustering. Experimental results indicate that the improved model’s detection accuracy is 37.2% higher than that of the original YOLOv4 and that, compared with benchmark algorithms such as SSD and Faster R-CNN, it is markedly more robust under varying lighting conditions and complex backgrounds. Reference [41] developed an enhanced YOLOv5 algorithm integrating the C2f module and the SimAM attention mechanism. This model tackles the low accuracy caused by small target proportions and complex backgrounds in insulator defect detection, making it better suited to low-altitude UAV-based inspection. Reference [42] proposed MFI-YOLO, a multi-fault insulator detection algorithm based on YOLOv8. By constructing an MSA-GhostBlock module to enhance feature extraction against complex backgrounds and designing a ResPANet residual pyramid structure to optimize multi-scale feature fusion, the algorithm addresses multi-scale target detection in complex scenarios. Reference [43] proposed IF-YOLO based on YOLOv10, which enhances small-target feature representation and extraction via a Group Collaborative Attention (GCA) module, alleviating the low accuracy and frequent missed detections in UAV inspections of insulator defects. Reference [44] proposed the ID-YOLO algorithm by integrating the GConv, C3-GPF, MSIF, and WFIF modules into the YOLOv5 framework. It improves the detection of multiple and small targets in complex backgrounds while meeting the requirements of real-time power system inspection.
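The clustering details of [40] are not given in this review, so the following is a minimal sketch of the widely used YOLOv2-style procedure. It assumes the distance d = 1 - IoU between (width, height) pairs, cluster means as centroids, and a deterministic initialization from the first k boxes; the helper names and box sizes are hypothetical.

```python
def wh_iou(box, anchor):
    """IoU of two (w, h) boxes aligned at a common corner."""
    inter = min(box[0], anchor[0]) * min(box[1], anchor[1])
    return inter / (box[0] * box[1] + anchor[0] * anchor[1] - inter)


def kmeans_anchors(boxes, k, iters=100):
    """Cluster ground-truth (w, h) pairs into k anchors using d = 1 - IoU."""
    anchors = list(boxes[:k])  # deterministic init: first k boxes
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for b in boxes:
            # highest IoU == smallest 1 - IoU distance
            best = max(range(k), key=lambda j: wh_iou(b, anchors[j]))
            clusters[best].append(b)
        # new centroid = mean width/height; empty clusters keep their anchor
        new = [
            (sum(w for w, _ in c) / len(c), sum(h for _, h in c) / len(c)) if c else anchors[j]
            for j, c in enumerate(clusters)
        ]
        if new == anchors:  # converged
            break
        anchors = new
    return sorted(anchors, key=lambda a: a[0] * a[1])


boxes = [(10, 12), (12, 10), (11, 11), (100, 40), (96, 44), (104, 42)]
print(kmeans_anchors(boxes, k=2))
```

On these toy boxes the two anchors converge to (11, 11) and (100, 42), one per size group. Using 1 - IoU rather than Euclidean distance keeps large boxes from dominating the clustering.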

For foreign object intrusion detection on transmission lines, reference [45] proposed KM-YOLO, based on an improved YOLOv5. By integrating the C3GC attention module into the backbone, adopting a dynamic decoupled detection head, and using the SIoU loss function, the model improves the detection of foreign objects such as bird nests and kites, providing a practical solution for transmission line safety monitoring. Reference [46] improved YOLOv5 by fusing the coordinate attention mechanism with FasterNet, yielding the FusionNet model, which maintains accurate detection under severe weather while balancing accuracy against lightweight design requirements. Reference [47] developed another foreign object detection model based on an improved YOLOv5. It adopts the C3CG module to enhance feature extraction, uses Space-to-Depth Convolution (SPD-Conv) to mitigate information loss during downsampling, and introduces the Simple Attention Module (SimAM) to improve the detection of small targets in complex backgrounds, thereby achieving effective detection of small foreign objects in complex transmission line scenes. Wang et al. [48] proposed a foreign object detection model based on an improved YOLOv8. It introduces the Efficient Channel Attention (ECA) mechanism to strengthen inter-channel feature dependencies and adds a small-target detection module to the detection head, improving accuracy while preserving high speed, which makes it suitable for intelligent UAV inspection.
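SPD-Conv, as introduced in the literature, replaces strided convolution or pooling with a space-to-depth rearrangement followed by a non-strided convolution. The sketch below shows only the rearrangement step on nested lists (a hypothetical helper with block size 2): spatial resolution halves while the channel count quadruples, so no pixel values are discarded.

```python
def space_to_depth(fmap, block=2):
    """Rearrange a C x H x W map into (C*block*block) x (H/block) x (W/block).

    Unlike a stride-2 convolution or pooling, every input value is kept;
    spatial detail is moved into extra channels instead of being dropped.
    """
    c, h, w = len(fmap), len(fmap[0]), len(fmap[0][0])
    assert h % block == 0 and w % block == 0
    out = []
    for dy in range(block):
        for dx in range(block):
            for ch in range(c):
                # sub-sample with offset (dy, dx); each offset becomes a new channel
                out.append([[fmap[ch][y][x] for x in range(dx, w, block)]
                            for y in range(dy, h, block)])
    return out


fmap = [[[4 * r + c for c in range(4)] for r in range(4)]]  # 1 x 4 x 4, values 0..15
for channel in space_to_depth(fmap):
    print(channel)
```

In SPD-Conv, a non-strided convolution is then applied to the stacked channels, so the network itself learns how to compress the fine detail.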

To identify hardware (metal fitting) defects in UAV inspection images quickly and accurately, Zou et al. [49] proposed an improved YOLOv5-based method that fuses the Convolutional Block Attention Module (CBAM) with Omni-Dimensional Dynamic Convolution (ODConv), enhancing the detection accuracy of transmission line hardware defects. Shi et al. [50] developed SONet, a small-target detection network based on YOLOv8. It employs a Multi-Branch Dilated Convolution Module (MDCM) to capture features across diverse receptive fields and replaces PANet with an Adaptive Attention Feature Fusion (AAFF) structure, significantly boosting the detection accuracy of small hardware defects in UAV imagery. Liu et al. [51] proposed RepYOLO, a two-stage cascaded model for small-target pin loss detection that optimizes YOLOv5 with RepVGG, Diverse Branch Block, and ECA modules. With an inference speed four times that of YOLOv5 and a 1.2% accuracy improvement, the model enables low-latency, real-time deployment on resource-constrained edge devices.
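RepVGG-style structural re-parameterization, used in RepYOLO [51], trains parallel 3 × 3, 1 × 1, and identity branches and then merges them into a single 3 × 3 convolution for inference. The sketch below checks this equivalence for one channel under simplifying assumptions: batch normalization is omitted (in practice it is first folded into each branch), and the helper names, kernels, and image values are hypothetical.

```python
def conv2d_same(img, kernel):
    """Single-channel 2D cross-correlation with zero padding (output size = input size)."""
    h, w, k = len(img), len(img[0]), len(kernel)
    r = k // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            s = 0.0
            for i in range(k):
                for j in range(k):
                    yy, xx = y + i - r, x + j - r
                    if 0 <= yy < h and 0 <= xx < w:
                        s += kernel[i][j] * img[yy][xx]
            out[y][x] = s
    return out


def fuse_branches(k3, k1):
    """Merge 3x3, 1x1 and identity branches into one equivalent 3x3 kernel."""
    fused = [row[:] for row in k3]
    fused[1][1] += k1 + 1.0  # 1x1 kernel and identity both act only on the centre tap
    return fused


img = [[1.0, 2.0, 0.0], [0.5, -1.0, 3.0], [2.0, 1.0, 1.0]]
k3 = [[0.1, 0.2, 0.0], [0.0, 0.5, -0.3], [0.2, 0.1, 0.4]]
k1 = 0.7

# Training-time form: three parallel branches, outputs summed
a = conv2d_same(img, k3)
b = conv2d_same(img, [[k1]])
train_out = [[a[y][x] + b[y][x] + img[y][x] for x in range(3)] for y in range(3)]
# Inference-time form: a single 3x3 convolution
infer_out = conv2d_same(img, fuse_branches(k3, k1))
```

Because convolution is linear, the fused kernel reproduces the multi-branch output exactly (up to floating-point rounding), which is why the inference model runs faster without losing accuracy.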

To address slow detection caused by the limited computing capacity of edge devices, Hou et al. [52] proposed the YOLO-GSS algorithm, which replaces the backbone with G-GhostNet and optimizes the neck with an S-FPN structure, enhancing feature fusion while reducing overall computational complexity. Han et al. [53] developed TD-YOLO based on YOLOv7-Tiny, which runs at 23.5 FPS on a Jetson Xavier NX and thus offers a useful reference for low-power edge deployment in power inspection. Wu et al. [54] proposed the lightweight GMPPD-YOLO model, which uses channel pruning and knowledge distillation to reduce model size by 66.4% and enables real-time, high-precision detection on edge devices. Wang et al. [55] put forward a lightweight YOLOv8-based method for multi-type defect detection that balances high detection accuracy with low-latency, real-time inference. Xiang et al. [56] introduced BN-YOLO for bird’s nest detection; its lightweight C2f structure raises inference speed from 61 FPS to 83 FPS (roughly 16.4 ms to 12.0 ms per frame), addressing real-time detection in complex environments.
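GMPPD-YOLO [54] combines channel pruning with distillation, but its exact pruning criterion is not described here. One common criterion, ranking output channels by the L1 norm of their filter weights, can be sketched as follows; the helper name and weights are hypothetical. In practice, the pruned model is then fine-tuned, often with distillation from the unpruned model.

```python
def l1_prune(filters, keep_ratio):
    """Return indices of output channels to keep, ranked by filter L1 norm.

    filters: list of flattened filter weights, one list per output channel.
    """
    norms = [sum(abs(w) for w in f) for f in filters]
    n_keep = max(1, round(len(filters) * keep_ratio))
    # channels with the smallest L1 norm are assumed to contribute least
    ranked = sorted(range(len(filters)), key=lambda i: norms[i], reverse=True)
    return sorted(ranked[:n_keep])


filters = [[0.5, -0.4], [0.01, 0.02], [-0.9, 0.3], [0.05, -0.03]]
print(l1_prune(filters, keep_ratio=0.5))  # [0, 2]
```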

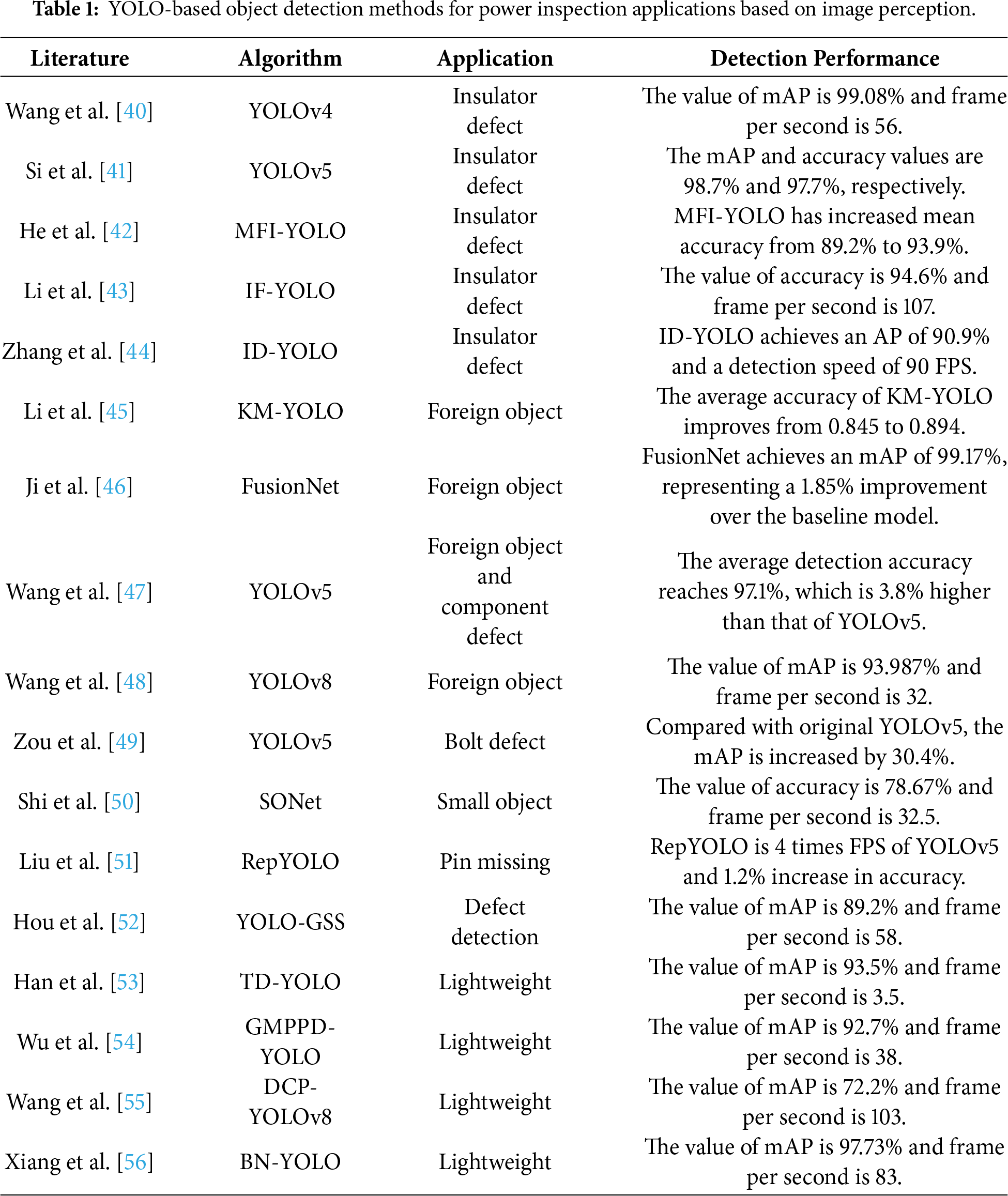

In summary, YOLO-based object detection methods for power transmission line inspection image perception have demonstrated significant advantages in scenarios such as insulator defect detection, transmission line foreign object intrusion detection, metal fittings defect identification, and edge device adaptation. Existing studies have effectively enhanced detection accuracy, speed, and adaptability to complex environments by optimizing backbone networks, upgrading feature fusion architectures, integrating attention mechanisms, and implementing lightweight optimization technologies. For instance, to address the long-standing challenge of small-target defect detection, detection performance is enhanced via modules like multi-branch convolution and adaptive feature fusion; to adapt to edge devices, real-time deployment is achieved via model compression and lightweight design. These methods provide diverse technical support for the intelligent operation and maintenance of power systems, with their specific performance metrics and application scenarios summarized in Table 1, serving as a valuable technical reference for subsequent academic research and field engineering practice.

Since the anchor mechanism was introduced in Faster R-CNN, anchor-based two-stage and one-stage detectors have become the mainstream paradigms in object detection. However, these algorithms require a large number of predefined anchors whose sizes must be tailored to the characteristics of a specific dataset, which inherently limits generalization. Moreover, the vast majority of anchors fall on background regions and only a small fraction overlap actual targets, which readily induces a severe positive-negative sample imbalance and lets negative samples dominate classification training. To mitigate these drawbacks, anchor-free detectors have gradually gained traction. Instead of placing predefined anchors at every feature-map location, they predict objects directly from feature-map points (such as keypoints or object centers), which reduces model complexity and improves generality across multi-category detection tasks. According to their sample assignment strategies, anchor-free approaches fall into two main types: keypoint grouping and center-point regression.
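The positive-negative imbalance of dense anchors is easy to quantify. The sketch below assumes a 256 × 256 image, a stride-16 grid, three square anchor sizes, and the IoU thresholds used by RetinaNet (at least 0.5 for positives, below 0.4 for negatives), and labels every anchor against one hypothetical ground-truth box. Helper names are illustrative.

```python
def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union


def dense_anchors(img_size, stride, sizes):
    """Square anchors of each size centred on every cell of a stride-spaced grid."""
    anchors = []
    for cy in range(stride // 2, img_size, stride):
        for cx in range(stride // 2, img_size, stride):
            for s in sizes:
                anchors.append((cx - s / 2, cy - s / 2, cx + s / 2, cy + s / 2))
    return anchors


anchors = dense_anchors(img_size=256, stride=16, sizes=(16, 32, 64))
gt = (100, 100, 140, 130)  # one hypothetical 40 x 30 insulator box
pos = sum(1 for a in anchors if iou(a, gt) >= 0.5)
neg = sum(1 for a in anchors if iou(a, gt) < 0.4)
print(len(anchors), pos, neg)
```

Of the 768 anchors, only one is positive and 766 are negatives; the remaining anchor falls between the thresholds and is ignored. This ratio is the imbalance that focal loss and anchor-free designs try to address.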

In the keypoint grouping-based pipeline, a feature extraction network first produces feature maps. Multiple keypoint prediction modules then identify and localize keypoints (pixels that characterize typical features of targets, such as corner points, center points, or extreme points), candidate bounding boxes are formed from these keypoints, and detection is completed by regressing and grouping them. For instance, Law and Deng [57] proposed CornerNet, which converts bounding box prediction into the detection and pairing of top-left and bottom-right corner points. However, matching valid corner pairs is computationally expensive and prone to mismatches, which degrades the accuracy of the resulting boxes. Building upon CornerNet, Duan et al. [58] proposed CenterNet, which uses three keypoints (top-left corner, bottom-right corner, and center) to constrain box generation and refines predictions through cascade corner pooling and center pooling modules. In the center-point regression-based pipeline, a feature extraction network likewise produces feature maps, after which the detection head predicts three properties for each target: the center-point location, the bounding box size, and the center-point offset. A post-processing step then selects the final bounding boxes from the predicted centers.
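The decoding step of the center-point regression pipeline (center heatmap, box size, center offset) can be sketched as follows. It assumes an output stride of 4, box sizes predicted directly in input-image pixels, and a 3 × 3 local-maximum check in place of box-level NMS. All names, thresholds, and map values are hypothetical, and the sketch is not the implementation of [59] or [60].

```python
def decode_centers(heatmap, sizes, offsets, stride=4, threshold=0.3):
    """Turn center-point head outputs into boxes (x1, y1, x2, y2, score).

    heatmap[y][x]: object-centre confidence; sizes[y][x] = (w, h) in pixels;
    offsets[y][x] = (dx, dy) sub-cell correction lost by downsampling.
    """
    h, w = len(heatmap), len(heatmap[0])
    boxes = []
    for y in range(h):
        for x in range(w):
            s = heatmap[y][x]
            neighbours = [heatmap[j][i] for j in range(max(0, y - 1), min(h, y + 2))
                          for i in range(max(0, x - 1), min(w, x + 2))]
            if s < threshold or s < max(neighbours):
                continue  # not a confident 3x3 local peak
            cx = (x + offsets[y][x][0]) * stride
            cy = (y + offsets[y][x][1]) * stride
            bw, bh = sizes[y][x]
            boxes.append((cx - bw / 2, cy - bh / 2, cx + bw / 2, cy + bh / 2, s))
    return boxes


heatmap = [[0.1, 0.2, 0.5, 0.1],
           [0.1, 0.4, 0.9, 0.2],
           [0.0, 0.1, 0.3, 0.1],
           [0.0, 0.0, 0.0, 0.0]]
sizes = [[(8.0, 6.0)] * 4 for _ in range(4)]
offsets = [[(0.25, 0.5)] * 4 for _ in range(4)]
print(decode_centers(heatmap, sizes, offsets))  # [(5.0, 3.0, 13.0, 9.0, 0.9)]
```

Here the 0.9 peak at cell (x = 2, y = 1) yields a single box; the neighboring 0.5, 0.4, and 0.3 responses are suppressed because they are not local maxima, so no pairing or anchor matching is needed.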

Compared with keypoint grouping, center-point regression eliminates the keypoint matching step, which simplifies post-processing and improves efficiency. CenterNet-style detectors and their improved variants offer high detection accuracy and excellent real-time performance and have been widely applied to fault detection in power inspection. To detect small targets and low-contrast internal defects in X-ray images, Wang et al. [59] proposed an improved CenterNet-based architecture. By incorporating a cross-scale feature fusion module and a defect-region attention mechanism, it enhances feature extraction for minute defects such as core rod microcracks and fiber delamination, and a refined loss function mitigates defect sample imbalance. Benchmarked against threshold segmentation, Faster R-CNN, and the vanilla CenterNet, the improved model outperformed these baselines in mean Average Precision (mAP), defect miss rate, and detection speed. Also building on CenterNet, Meng [60] proposed an improved model called CenterNet plus, which introduces a context clustering feature enhancement module, adds a multi-scale defect detection branch, and refines the loss function. Compared with the original CenterNet, it raised the mAP of insulator defect recognition by 8%–12% and reduced the missed detection rate of small defects by more than 15%, while retaining a detection speed that meets the real-time demands of UAV-based inspection.

Two-stage detection algorithms, with their step-by-step processing mechanism, offer significant advantages such as accurate localization, shared computation across stages, and module-wise parameter optimization. The combination of region proposal and fine-grained classification allows them to capture detailed target features and achieve high detection accuracy in complex scenarios. However, because proposal generation and classification are performed in separate stages, training requires coordinated optimization of multiple modules, which considerably lengthens the training cycle. In addition, the two-pass computation at inference time sharply reduces detection speed, making it difficult to meet strict real-time requirements.

One-stage detection algorithms, with their end-to-end detection process, offer significant advantages such as fast inference, strong resistance to background interference, and a robust capability to learn generalized target representations, and are therefore widely used in scenarios with strict real-time requirements. However, they often struggle with small targets: because small targets occupy few pixels and carry limited feature information, they are easily obscured or misclassified against complex backgrounds. In addition, fine-grained details tend to be lost during deep feature extraction, leading to low detection accuracy and high missed detection rates. This limitation is particularly pronounced in scenes with dense small targets, such as UAV-based power line inspection and aerial remote sensing image analysis, and has become a key bottleneck to their wider application [61,62].

Anchor-based algorithms generate candidate regions from predefined anchor boxes and then perform target classification and bounding box regression relative to those anchors. By encoding prior knowledge of typical target scales and aspect ratios, they can achieve higher recall, especially for small targets. However, the hand-designed anchor hyperparameters (e.g., scales and aspect ratios) and the dense anchor layout tend to produce a severe imbalance between positive and negative samples. In contrast, anchor-free algorithms eliminate anchor box generation, providing a more flexible solution space and lower computational overhead. Nevertheless, they often face severe foreground-background imbalance, semantic ambiguity, and unstable training convergence. Despite the limitations of anchor-based designs noted above, anchor-based detectors such as Faster R-CNN, SSD, and the anchor-based YOLO versions remain widely deployed in practical computer vision applications. The advantages and disadvantages of these algorithms are summarized in Table 2.

3.2 Semantic Segmentation Algorithms

The core of semantic segmentation is assigning a category label to every pixel in an image. Deep learning-based semantic segmentation networks must therefore accomplish two tasks: extracting hierarchical, multi-scale target features in a bottom-up manner, and restoring the spatial resolution of the image in a top-down manner to enable pixel-wise classification. Such networks typically adopt an encoder-decoder architecture: the encoder performs hierarchical feature extraction with a backbone network (e.g., VGG or ResNet), while the decoder restores the original image size through upsampling and skip connections that fuse features across layers. Classic algorithms including Fully Convolutional Networks (FCN), U-Net, SegNet, and DeepLab v1 to v3+ leverage the feature learning capability of deep convolutional neural networks to model both global and local context, enabling high-accuracy pixel-level segmentation across diverse tasks.
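Because every pixel receives a label, segmentation quality is usually measured per class with intersection over union (IoU) and averaged into mean IoU (mIoU). A minimal sketch, assuming integer label maps of equal size (here a hypothetical two-class case, 0 = background and 1 = power line):

```python
def mean_iou(pred, target, num_classes):
    """Mean intersection-over-union over classes for two label maps of equal size."""
    inter = [0] * num_classes
    union = [0] * num_classes
    for prow, trow in zip(pred, target):
        for p, t in zip(prow, trow):
            if p == t:
                inter[p] += 1  # pixel counted once in both intersection and union
                union[p] += 1
            else:
                union[p] += 1  # false positive for class p
                union[t] += 1  # false negative for class t
    ious = [i / u for i, u in zip(inter, union) if u > 0]  # skip classes absent from both maps
    return sum(ious) / len(ious)


pred = [[0, 1, 1], [0, 0, 1]]
target = [[0, 1, 0], [0, 1, 1]]
print(mean_iou(pred, target, num_classes=2))  # 0.5
```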

Since Chen et al. [63] proposed DeepLab v1, dilated (atrous) convolution has become a standard component of advanced semantic segmentation networks: it enlarges the receptive field without reducing feature-map resolution, and combining several dilation rates captures context at multiple scales. Focusing on deep learning-based pixel-level segmentation, this section summarizes mainstream algorithms across architecture categories and analyzes their practical value for power component recognition and defect detection in intelligent transmission line inspection. For instance, the power line segmentation algorithm for multi-spectral UAV images proposed by Hota et al. [64] fuses visible-light and infrared features to resolve confusion between power lines and background in complex terrain, providing precise power line contours for hazard investigation and early warning in transmission line corridors. The insulator instance segmentation and defect detection scheme of Antwi-Bekoe et al. [65] identifies damaged shed (umbrella skirt) areas through pixel-level classification, offering a quantitative basis for insulation performance evaluation. Other studies have also achieved notable results: Ye et al. [66] realized substation insulator defect detection based on CenterMask, and Hu et al. [67] performed ice-cover segmentation on transmission lines using an improved U-Net combined with Generative Adversarial Networks (GANs).
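The effect of the dilation rate can be shown in one dimension. In the sketch below (hypothetical helper names), a kernel of size k with rate r spans k + (k - 1)(r - 1) input positions while keeping only k weights; the rates 6, 12, and 18 are those used by the ASPP module of DeepLabv3.

```python
def dilated_conv1d(signal, kernel, rate):
    """1D dilated convolution (valid padding): kernel taps are spaced `rate` apart."""
    span = (len(kernel) - 1) * rate + 1  # effective kernel size
    return [sum(k * signal[i + j * rate] for j, k in enumerate(kernel))
            for i in range(len(signal) - span + 1)]


def effective_kernel(k, rate):
    return k + (k - 1) * (rate - 1)


# A 3-tap kernel covers 13, 25 and 37 positions at rates 6, 12 and 18
# without adding parameters.
print([effective_kernel(3, r) for r in (6, 12, 18)])  # [13, 25, 37]
print(dilated_conv1d(list(range(10)), [1, 0, -1], rate=2))
```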

These algorithms have been validated in scenarios such as UAV inspection and autonomous robotic operation and maintenance, which confirms their segmentation performance in practice and provides key technical support for shifting transmission line inspection from manual patrols to intelligent, unmanned monitoring.

Beyond conventional intelligent inspection based on object detection and semantic segmentation, approaches built on Transformer architectures, 3D point cloud processing, lightweight network models, and GANs are steadily improving the environmental adaptability and operational efficiency of power line inspection. Through global feature modeling, 3D spatial perception, edge deployment optimization, and data augmentation, respectively, these four technologies address key challenges in transmission line inspection, namely complex scene adaptation, high-precision geometric measurement, real-time edge-side deployment, and defect sample scarcity, thus providing multiple technical pathways for intelligent power grid operation and maintenance.

(1) Transformers efficiently aggregate global spatial information through multi-head self-attention, overcoming the limitation of the local receptive fields on which feature modeling in traditional CNNs relies. Their core advantage in transmission line inspection is modeling long-range correlations between components, such as the topological relationships between conductors and transmission towers or between metal fittings and insulator strings, which enables more comprehensive scene understanding and helps counter complex background interference. For instance, the end-to-end insulator string defect detection method for complex backgrounds proposed by Xu et al. [68] employs a Vision Transformer (ViT) to capture global insulator features amid cluttered backgrounds, raising defect recognition accuracy to 91.7% and significantly reducing false detections caused by vegetation occlusion. Cheng and Liu [69] achieved high-precision power line insulator defect detection by improving the Detection Transformer (DETR), precisely locating damaged shed areas via bidirectional attention and reducing the missed detection rate by 15% relative to traditional methods. Shi et al. [70] combined the strengths of CNNs and Transformers to address power line detection under occlusion, improving accuracy by 20% in cross-line scenarios. These results demonstrate the strong environmental adaptability of Transformer-based models in complex inspection scenes.
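The global interaction described above comes from scaled dot-product self-attention, softmax(Q K^T / sqrt(d_k)) V. The single-head sketch below omits multi-head splitting, positional encodings, and training; the helper names, projection matrices, and token values are hypothetical.

```python
import math


def softmax(v):
    m = max(v)
    e = [math.exp(x - m) for x in v]
    s = sum(e)
    return [x / s for x in e]


def self_attention(tokens, wq, wk, wv):
    """Single-head scaled dot-product self-attention.

    tokens: n x d list of patch embeddings; wq/wk/wv: d x d_k projection matrices.
    Every output token is a weighted mix of all tokens, so distant image patches
    can interact directly in a single layer.
    """
    def matmul(a, b):
        return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

    q, k, v = matmul(tokens, wq), matmul(tokens, wk), matmul(tokens, wv)
    d_k = len(wq[0])
    # attention weights: one softmax-normalized row per query token
    weights = [softmax([sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k])
               for qi in q]
    return matmul(weights, v), weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
out, weights = self_attention(tokens, identity, identity, identity)
```

Each row of `weights` sums to 1, and each output token mixes information from every token regardless of its position in the image.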

(2) 3D point cloud technology overcomes the planar limitations of 2D images by reconstructing and fusing depth information, providing accurate 3D spatial perception for fine-grained transmission line inspection. Using Structure-from-Motion (SfM) with monocular or binocular cameras, or high-precision LiDAR ranging, a high-precision 3D model of the transmission line corridor (including spatial coordinates and key physical attributes) can be constructed. Chen et al. [71] proposed an insulator extraction method for UAV LiDAR point clouds based on multi-type, multi-scale feature histograms, achieving 96.3% extraction accuracy in high-density point clouds and outperforming traditional 2D image-based methods. Ni et al. [72] used UAV LiDAR to detect and predict tree-related risks to transmission lines by computing the minimum tree-to-conductor distance from point cloud models, enabling hazard warnings 7 days in advance and a 5-fold efficiency improvement over traditional manual patrols.
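The tree-to-conductor clearance check in [72] can be approximated by a point-to-segment distance search. The sketch below assumes that conductor points have already been extracted from the LiDAR cloud and simplified into a 3D polyline; real systems typically fit catenary curves and use spatial indexes such as k-d trees for large clouds. Helper names, coordinates (in meters), and the safety threshold are hypothetical.

```python
import math


def point_segment_distance(p, a, b):
    """Euclidean distance from 3D point p to segment ab."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    denom = sum(c * c for c in ab)
    # projection parameter clamped to [0, 1] so the closest point stays on the segment
    t = 0.0 if denom == 0 else max(0.0, min(1.0, sum(ap[i] * ab[i] for i in range(3)) / denom))
    closest = [a[i] + t * ab[i] for i in range(3)]
    return math.dist(p, closest)


def min_clearance(tree_points, conductor):
    """Smallest tree-to-conductor distance; the conductor is a polyline of 3D vertices."""
    segments = list(zip(conductor[:-1], conductor[1:]))
    return min(point_segment_distance(p, a, b) for p in tree_points for a, b in segments)


SAFETY_DISTANCE_M = 7.0  # hypothetical threshold, not a standard value
conductor = [(0.0, 0.0, 20.0), (50.0, 0.0, 20.0), (100.0, 0.0, 20.0)]  # sag ignored
trees = [(50.0, 3.0, 16.0), (20.0, 0.0, 10.0), (120.0, 0.0, 20.0)]
d = min_clearance(trees, conductor)
print(d, "hazard" if d < SAFETY_DISTANCE_M else "ok")
```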

(3) Lightweight network architectures and model compression techniques target the deployment bottlenecks of deep neural networks on resource-constrained edge devices such as UAV terminals and inspection robots [73]. As detection and segmentation networks grow deeper, their parameter counts and computational costs rise rapidly, making it difficult to meet the strict real-time inference requirements of on-site power line inspection. Zhao et al. [74] proposed a bolt defect classification method for transmission lines based on dynamic supervision knowledge distillation, which transfers discriminative knowledge from a complex teacher model to a lightweight student model. While maintaining 92% accuracy in bolt looseness detection, the student model was 60% smaller, fitting the computing constraints of mobile terminals.
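Reference [74] uses a dynamic supervision scheme whose details are not reproduced here. The standard soft-target distillation loss on which such methods build can be sketched as follows, assuming a temperature T = 4 and weight α = 0.7 (illustrative values) and hypothetical three-class logits: the student matches the teacher's softened class distribution while still fitting the hard label, and the T² factor keeps gradient magnitudes comparable across temperatures.

```python
import math


def softmax(logits, T=1.0):
    """Softmax with temperature T (higher T gives a softer distribution)."""
    m = max(logits)
    e = [math.exp((z - m) / T) for z in logits]
    s = sum(e)
    return [x / s for x in e]


def distillation_loss(student_logits, teacher_logits, label, T=4.0, alpha=0.7):
    """alpha * T^2 * KL(teacher_T || student_T) + (1 - alpha) * CE(label, student)."""
    p_teacher = softmax(teacher_logits, T)
    p_student = softmax(student_logits, T)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student))
    ce = -math.log(softmax(student_logits)[label])  # hard-label term at T = 1
    return alpha * T * T * kl + (1.0 - alpha) * ce


teacher = [4.0, 1.0, -2.0]  # hypothetical logits from a large teacher
student = [2.0, 1.5, -1.0]  # hypothetical logits from a lightweight student
print(distillation_loss(student, teacher, label=0))
```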

(4) Generative Adversarial Networks address the scarcity of defect samples and insufficient robustness to environmental variation in transmission line inspection through adversarial training between a generator and a discriminator. Wang et al. [75] generated UAV aerial images of high-voltage transmission line components with a multi-level GAN, synthesizing photorealistic insulator and conductor defect samples via multi-scale feature fusion and style transfer; this effectively expanded small datasets and raised component recognition accuracy by 18%. Wu et al. [76] used a multi-scenario diverse sample generation model for foreign object intrusion detection, synthesizing foreign object samples under varying lighting and weather conditions, which raised detection accuracy from 72% to 90%. Zhang et al. [77] combined GAN-based image generation with deep defect detection networks for substation equipment defect identification, using the GAN to synthesize diverse defect samples (e.g., casing damage and overheated joints) and thereby mitigating the long-standing scarcity of real-world defect data in substation scenarios.
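The adversarial training mentioned above optimizes two opposing objectives. The sketch below writes them per sample, assuming the discriminator outputs probabilities D(x) in (0, 1) and using the common non-saturating generator loss; it is a generic illustration, not the specific architecture of [75], [76], or [77].

```python
import math


def discriminator_loss(d_real, d_fake):
    """-[log D(x) + log(1 - D(G(z)))]: D learns to score real samples high, fakes low."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))


def generator_loss(d_fake):
    """Non-saturating generator loss -log D(G(z)): G learns to make fakes look real."""
    return -math.log(d_fake)


# At the ideal equilibrium the discriminator outputs 0.5 everywhere
print(discriminator_loss(0.5, 0.5), generator_loss(0.5))  # 2 ln 2 and ln 2
```

During training the two losses are minimized alternately; when synthetic defect images become indistinguishable from real ones, the discriminator can do no better than 0.5.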

4 Applications of Intelligent Inspection Based on Deep Learning

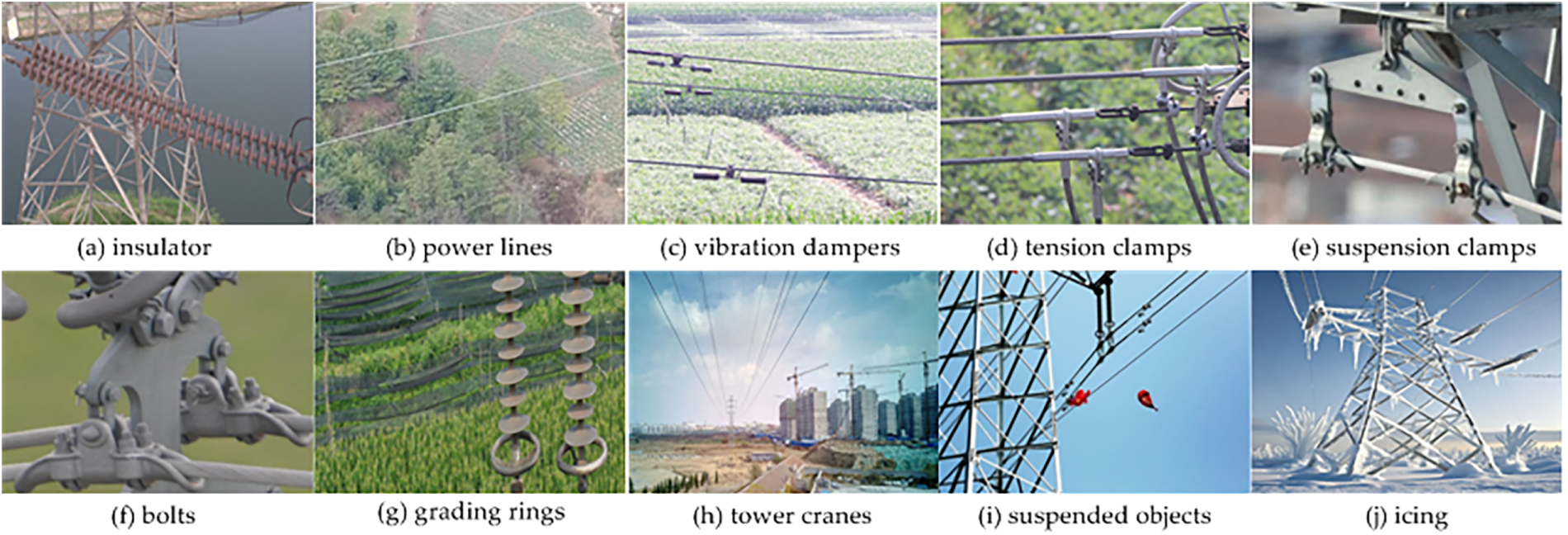

With the rapid advancement of machine vision and deep learning fusion technology, deep learning-driven target detection methods have been widely applied in the intelligent inspection of transmission lines. Numerous researchers have conducted in-depth studies on various detection networks, proposed scenario-adaptive improved algorithms for typical inspection tasks, and attained high-precision and real-time detection results that meet engineering application requirements. This section systematically explores the application of computer vision and deep learning technologies in power component identification and transmission line fault diagnosis (Fig. 4), reviewing the following six aspects: detection of power insulators and defects, detection of transmission towers and structural defects, power line feature extraction and spatial positioning, detection of metal fittings and defects, diagnosis of heating faults in power components, and safety hazard detection in power scenarios.

Figure 4: Different types of power components and defects.

4.1 Detection of Power Insulators and Defects

As core insulating components of transmission lines, insulators are indispensable to the stable operation of power systems. They are numerous, widely distributed, and diverse in type, including porcelain, glass, and composite insulators, each suited to specific voltage levels and environmental conditions. Long-term exposure to complex and variable outdoor environments subjects insulators to both natural and anthropogenic stresses that gradually degrade their mechanical and electrical performance. Extreme weather (e.g., strong winds, heavy rainfall, thunderstorms, extreme heat, and severe cold), combined with accumulated contaminants such as industrial dust and bird droppings, can cause a variety of defects. These include insulator string detachment, self-explosion of glass insulators, surface cracking of porcelain insulators, and surface pollution, a defect common to all insulator types. If not detected and addressed in time, such defects can at best degrade the insulation performance of the line and at worst trigger severe faults such as short circuits and tripping, directly undermining a continuous and stable power supply.

Accurate and efficient monitoring of insulator operating status is therefore pivotal to the safe and stable operation of power systems. To improve the efficiency and accuracy of insulator condition monitoring in aerial inspection, researchers have widely adopted mainstream deep learning-based detectors such as Faster R-CNN, SSD, and YOLO. To cope with small insulator targets, complex backgrounds, and indistinct defect features in aerial images, they have integrated attention modules to strengthen discriminative features in key defect regions, used multi-scale feature fusion to recognize insulators and defects of varying sizes, designed lightweight networks to speed up deployment and inference on mobile devices, and applied multi-stage detection strategies to raise defect detection rates. As a result, research on insulator recognition and defect detection in UAV aerial images has made substantial progress that meets engineering application requirements.

(1) Attention mechanisms, which highlight regions of interest through dynamic weighting, are widely used in target tracking, image recognition, and image classification. SENet's Squeeze-and-Excitation (SE) mechanism squeezes each channel's 2D feature map into a single descriptor by global average pooling and then learns per-channel weights that enhance informative features, enabling the model to focus on critical image content. Building on this, Zhang et al. [78] integrated SENet into YOLOv5 to obtain SE-YOLOv5, which increased insulator defect detection accuracy by 1.9% compared with the original YOLOv5. For detecting multiple insulator defects in aerial images, Kang et al. [79] adopted ACmix, a hybrid of self-attention and convolution, to prioritize key target information, enabling the model to distinguish defects such as self-explosion, contamination, and damage. Overall, attention modules enhance feature representation against complex backgrounds, improving the saliency and detection accuracy of inspected objects.
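The squeeze, excitation, and scale steps of an SE block can be sketched on nested lists as follows. The sketch assumes a reduction ratio r such that `w1` has C/r rows, omits biases, and uses hypothetical weights; it illustrates the mechanism rather than the SE-YOLOv5 implementation in [78].

```python
import math


def se_block(fmap, w1, w2):
    """Squeeze-and-Excitation on a C x H x W feature map (nested lists).

    w1: (C/r) x C and w2: C x (C/r) weights of the two fully connected layers.
    """
    # Squeeze: global average pooling gives one descriptor per channel
    z = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]
    # Excitation: FC -> ReLU -> FC -> sigmoid yields a weight in (0, 1) per channel
    hidden = [max(0.0, sum(w * zi for w, zi in zip(row, z))) for row in w1]
    s = [1.0 / (1.0 + math.exp(-sum(w * hi for w, hi in zip(row, hidden)))) for row in w2]
    # Scale: reweight every value of each channel by its channel weight
    return [[[v * s[c] for v in row] for row in ch] for c, ch in enumerate(fmap)], s


fmap = [[[1.0, 1.0], [1.0, 1.0]], [[2.0, 2.0], [2.0, 2.0]]]  # C = 2, H = W = 2
out, s = se_block(fmap, w1=[[1.0, 1.0]], w2=[[1.0], [-1.0]])  # r = 2
print(s)
```

With these weights the first channel is amplified relative to the second (weights of about 0.95 and 0.05), showing how the block emphasizes some channels and suppresses others.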

(2) Multi-scale feature fusion is a core feature enhancement technique in deep learning-based detection and segmentation models that integrates feature maps from different network layers and spatial resolutions. As network depth increases, high-level features capture richer semantic information but lose spatial resolution and fine-grained detail, whereas low-level features retain high resolution and rich detail but carry comparatively weak semantics. This trade-off between semantic richness and spatial detail is a key bottleneck for detection performance. Multi-scale feature fusion combines the complementary strengths of features from different layers, strengthening the understanding of overall target semantics while improving the precision of local detail perception. It has therefore become a core method for boosting the performance of detection and segmentation models, especially for small-target and multi-scale defect detection in power inspection.
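A minimal sketch of the top-down fusion used in Feature Pyramid Networks: the coarse, semantically strong map is upsampled by a factor of 2 and added element-wise to the finer, detail-rich map. It assumes single-channel maps whose channels were already aligned by a 1 × 1 lateral convolution and omits the 3 × 3 smoothing convolution; the helper names and values are hypothetical, and the fusion paths in [80] and [81] differ in detail.

```python
def upsample2x(fmap):
    """Nearest-neighbour 2x upsampling of an H x W map."""
    out = []
    for row in fmap:
        wide = [v for v in row for _ in range(2)]  # repeat each value horizontally
        out.append(wide)
        out.append(list(wide))                     # and each row vertically
    return out


def top_down_fuse(coarse, fine_lateral):
    """FPN-style merge: upsampled high-level (semantic) map + same-size low-level (detailed) map."""
    up = upsample2x(coarse)
    return [[u + f for u, f in zip(ur, fr)] for ur, fr in zip(up, fine_lateral)]


coarse = [[1, 2], [3, 4]]                                      # deep, low resolution
fine = [[0, 1, 0, 1], [1, 0, 1, 0], [0, 1, 0, 1], [1, 0, 1, 0]]  # shallow, high resolution
for row in top_down_fuse(coarse, fine):
    print(row)
```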

Li et al. [80] proposed a multi-scale feature fusion detection algorithm based on an enhanced SSD framework. The method uses a residual attention network to extract multi-scale insulator defect features and fuses them through cross-layer connections between deconvolution layers and multi-branch detection networks. Compared with the original SSD algorithm, it improves defect detection accuracy by 2.7%, supporting its advantage in handling complex defect patterns. Hao et al. [81] proposed a high-precision insulator defect detection method based on a modified YOLOv4 architecture. The method adopts Cross Stage Partial-Residual Network with Split-Attention (CSP-ResNeSt) as the backbone and embeds the SimAM attention mechanism into a multi-scale bidirectional pyramid network, constructing an efficient cross-scale feature fusion path. This design targets the long-standing difficulty of accurately identifying small-scale insulator defects (e.g., micro-cracks and pinhole damage) and improves the overall detection accuracy and robustness of the model in real-world power inspection scenarios.
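SimAM, the module Hao et al. [81] embed in their pyramid network, is parameter-free: it weights each activation by how strongly it stands out from the other activations in its channel. The NumPy sketch below follows the closed-form energy commonly used for SimAM; the regularizer value 1e-4 is an assumed default, and the code is illustrative rather than the authors' implementation.

```python
import numpy as np

def simam(x, lam=1e-4):
    """Parameter-free SimAM attention on a (C, H, W) feature map."""
    _, h, w = x.shape
    n = h * w - 1
    # Squared deviation of each activation from its channel mean.
    d = (x - x.mean(axis=(1, 2), keepdims=True)) ** 2
    # Per-channel variance estimate using n = H*W - 1.
    v = d.sum(axis=(1, 2), keepdims=True) / n
    # Inverse minimal energy: more distinctive activations get larger values (>= 0.5).
    e_inv = d / (4.0 * (v + lam)) + 0.5
    # Sigmoid gate in (0.62, 1) rescales every activation.
    return x * (1.0 / (1.0 + np.exp(-e_inv)))

# A flat channel with one isolated spike (e.g. a small defect response).
x = np.zeros((1, 4, 4))
x[0, 0, 0] = 5.0
y = simam(x)
print(y[0, 0, 0] / x[0, 0, 0])  # gate applied to the spike, close to 1
```

Because the weights come from channel statistics rather than learned layers, SimAM adds no parameters, which suits the small-defect setting described above without enlarging the model.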

(3) Network lightweighting is a pivotal approach in modern deep learning. Through techniques such as network architecture redesign, parameter pruning, and low-rank decomposition, it can substantially reduce computational cost and model storage while maintaining, or even slightly improving, core detection performance. In target detection, traditional algorithms often adopt classification networks such as VGGNet and ResNet as backbones. Although these backbones excel at extracting high-dimensional semantic features, their large parameter counts and high inference complexity make them ill-suited to resource-constrained edge devices (e.g., UAV onboard terminals and portable inspection edge nodes), so they often cannot meet the strict real-time requirements of field inspection. Advancing the lightweighting of detection networks is therefore of great practical significance for low-latency, real-time detection of power components in UAV aerial images under on-site resource constraints.
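Low-rank decomposition, one of the techniques listed above, replaces a weight matrix with the product of two thin factors. The NumPy sketch below uses truncated SVD on a synthetic, deliberately near-low-rank matrix; the sizes and rank are hypothetical, and trained layers are not guaranteed to be this compressible, so the usable rank for a real layer has to be checked empirically.

```python
import numpy as np

def low_rank_factorize(w, rank):
    """Approximate w (m x n) by a @ b, with a: (m x rank) and b: (rank x n), via truncated SVD."""
    u, s, vt = np.linalg.svd(w, full_matrices=False)
    a = u[:, :rank] * s[:rank]      # fold the singular values into the left factor
    b = vt[:rank, :]
    return a, b

rng = np.random.default_rng(0)
m, n, true_rank = 512, 256, 16
# Synthetic weight matrix: exactly rank 16 plus small noise (hypothetical data).
w = rng.standard_normal((m, true_rank)) @ rng.standard_normal((true_rank, n))
w += 0.01 * rng.standard_normal((m, n))

a, b = low_rank_factorize(w, rank=16)
orig_params = w.size                  # 512 * 256
new_params = a.size + b.size          # 512 * 16 + 16 * 256
rel_err = np.linalg.norm(w - a @ b) / np.linalg.norm(w)
print(orig_params, new_params)  # 131072 12288
```

Here the two factors hold 12,288 weights instead of 131,072 (about 9.4% of the original), and a layer computing `w @ x` becomes two cheaper products, `a @ (b @ x)`.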

Yang et al. [82] proposed a lightweight YOLOv3 detector built on spatial pyramid pooling and a MobileNetV2 backbone. Compared with the baseline YOLOv3, it reduces the number of model parameters by 98% and speeds up inference nearly fivefold. To localize and recognize insulator defects in aerial images quickly and accurately, Zan et al. [83] improved YOLOv4-tiny by replacing its standard Conv-BatchNorm-LeakyReLU (CBL) blocks with MobileViT hybrid feature extraction modules. Compared with the original YOLOv4-tiny, the improved algorithm increases defect detection accuracy by 1.64% and reaches an inference speed of 80.61 FPS under the same hardware test environment, making it well suited to on-site real-time monitoring. Beyond depthwise separable convolutions and lightweight backbones (e.g., SqueezeNet, MobileNet), researchers have also explored model compression techniques (e.g., pruning, knowledge distillation, and parameter quantization) to further reduce the deployment overhead of lightweight detectors on edge devices.
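The savings from depthwise separable convolutions, the building block of MobileNet-style backbones, follow from simple parameter arithmetic. The sketch below counts weights for a single 3x3 layer with 256 input and 256 output channels (illustrative sizes, with biases and batch-normalization parameters ignored). It is not meant to reproduce the 98% whole-model reduction reported by Yang et al. [82], which depends on the full architecture.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights of a k x k standard convolution (bias omitted)."""
    return k * k * c_in * c_out

def depthwise_separable_params(k, c_in, c_out):
    """k x k depthwise conv (one filter per input channel) plus a 1x1 pointwise conv."""
    return k * k * c_in + c_in * c_out

k, c_in, c_out = 3, 256, 256
std = standard_conv_params(k, c_in, c_out)        # 9 * 256 * 256
dws = depthwise_separable_params(k, c_in, c_out)  # 9 * 256 + 256 * 256
print(std, dws, round(std / dws, 2))  # 589824 67840 8.69
```

In general the ratio is k²·C_out / (k² + C_out), so for 3x3 kernels it approaches 9 as the channel count grows.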

For example, Xie et al. [84] applied comprehensive pruning to eliminate redundant channels and convolutional kernels, while Zhao et al. [74] employed dynamic supervision knowledge distillation to train smaller models, effectively balancing accuracy and resource consumption. Together, these efforts have advanced the development of efficient deep learning-based power component detection systems. However, several key technical challenges persist in practical engineering deployment. Future research could explore integrated hybrid optimization strategies to further improve system performance, including: (1) combining multiple lightweighting techniques for synergistic efficiency gains; (2) optimizing the trade-off among accuracy, computation, and resource usage in complex on-site inspection scenarios; and (3) improving the cross-domain adaptability of models to diverse power components and defect categories.
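Zhao et al. [74] use a dynamic-supervision variant of knowledge distillation. As a simpler reference point, the NumPy sketch below implements the classic static soft-target loss, in which a student matches a teacher's temperature-softened class distribution in addition to the ground-truth labels. The temperature t = 4, the weight alpha = 0.7, and the three-class example are illustrative assumptions, not values from the cited work.

```python
import numpy as np

def softmax(z, t=1.0):
    z = z / t
    z = z - z.max(axis=-1, keepdims=True)   # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def kd_loss(student_logits, teacher_logits, labels, t=4.0, alpha=0.7):
    """Soft-target distillation: alpha * t^2 * KL(teacher || student) + (1 - alpha) * CE."""
    p_t = softmax(teacher_logits, t)
    log_p_s = np.log(softmax(student_logits, t) + 1e-12)
    # KL divergence between temperature-softened distributions, scaled by t^2.
    kl = np.sum(p_t * (np.log(p_t + 1e-12) - log_p_s), axis=-1).mean() * t * t
    # Ordinary cross-entropy of the student against the hard labels.
    p_s = softmax(student_logits)
    ce = -np.log(p_s[np.arange(len(labels)), labels] + 1e-12).mean()
    return alpha * kl + (1.0 - alpha) * ce

# Hypothetical logits for three classes (e.g. normal, broken, flashover).
teacher = np.array([[4.0, 1.0, -2.0], [0.5, 3.0, 0.0]])
student = np.array([[2.0, 1.5, -1.0], [0.0, 1.0, 0.5]])
labels = np.array([0, 1])
print(kd_loss(student, teacher, labels))
```

The softened teacher distribution carries information about which wrong classes are "almost right", which is what lets a small student learn more than the hard labels alone provide.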

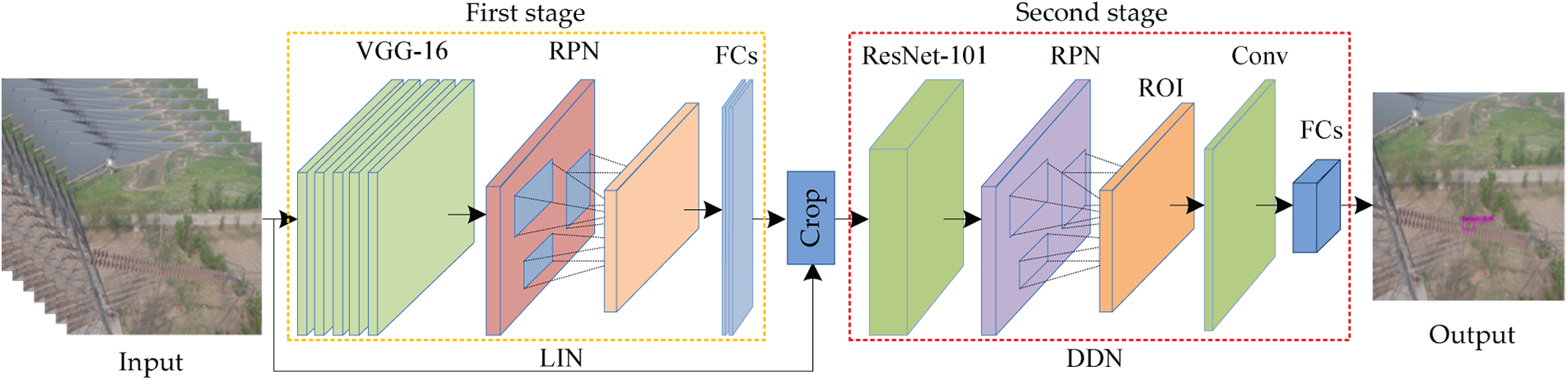

(4) Multi-stage target detection is a hierarchical framework that progressively filters, refines, and optimizes candidate target regions through successive cascaded stages to achieve more accurate localization and classification. To detect tiny or small-scale insulator defects in complex aerial inspection images, researchers have used network cascading to improve detection precision. Tao et al. [85] developed a two-stage framework by cascading an insulator localization network (ILN) and a defect detection network (DDN) (Fig. 5), achieving 91% accuracy and 96% recall on the Insulator Defect dataset. Liu et al. [86] proposed a cascaded approach combining an enhanced YOLOv3-dense and YOLOv4-tiny, which raised the overall insulator defect detection accuracy to 98.4%, a 2-percentage-point absolute improvement over the 96.4% of the baseline YOLOv4 model. Beyond purely cascading detection networks, hybrid approaches that integrate detection and segmentation have also been explored. For instance, Ling et al. [87] combined Faster R-CNN (for coarse insulator localization and defect detection) and U-Net (for fine-grained pixel-level defect segmentation) in a unified cascaded multi-task pipeline, enabling simultaneous target localization and pixel-level defect segmentation.

Figure 5: The cascaded model based on ILN and DDN.
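The crop-and-refine logic shared by these cascades can be sketched in a few lines. In the Python sketch below, the two detector functions are hypothetical stubs returning fixed boxes (in a real system they would be trained networks, such as an insulator locator and a defect detector), and the 16-pixel crop padding is an assumption. The point is the bookkeeping that maps defect boxes found in a crop back to full-image coordinates.

```python
import numpy as np

def pad_and_clip(box, pad, img_w, img_h):
    """Expand an (x1, y1, x2, y2) box by `pad` pixels and clip it to the image."""
    x1, y1, x2, y2 = box
    return (max(0, x1 - pad), max(0, y1 - pad),
            min(img_w, x2 + pad), min(img_h, y2 + pad))

def cascade_detect(image, locate_insulators, detect_defects, pad=16):
    """Stage 1 finds insulators on the full image; stage 2 searches each crop."""
    img_h, img_w = image.shape[:2]
    results = []
    for box in locate_insulators(image):
        cx1, cy1, cx2, cy2 = pad_and_clip(box, pad, img_w, img_h)
        crop = image[cy1:cy2, cx1:cx2]
        for dx1, dy1, dx2, dy2, score in detect_defects(crop):
            # Shift crop-local coordinates back into the full-image frame.
            results.append((dx1 + cx1, dy1 + cy1, dx2 + cx1, dy2 + cy1, score))
    return results

# Hypothetical stand-ins for the two trained networks.
def fake_locator(image):
    return [(100, 50, 220, 300)]

def fake_defect_detector(crop):
    return [(40, 60, 70, 90, 0.88)]

image = np.zeros((480, 640, 3), dtype=np.uint8)
print(cascade_detect(image, fake_locator, fake_defect_detector))
# [(124, 94, 154, 124, 0.88)]
```

Running the second stage on a crop means a small defect occupies a much larger fraction of that network's input, which is the main reason cascades help with tiny targets.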

In addition to the techniques above, various specialized deep learning approaches have been developed for insulator recognition and defect detection. Li et al. [88] addressed inaccurate insulator positioning caused by arbitrary orientations in oblique aerial images. By adding an angle parameter to axis-aligned bounding boxes to form rotated bounding boxes, they developed a directional insulator recognition algorithm that aligns more accurately with arbitrarily oriented targets in aerial inspection images. Jiang et al. [89] built an ensemble of heterogeneous SSD-based detection models that perceives multi-scale and multi-level features, improving defect recognition accuracy and environmental robustness. Xu et al. [68] used the DETR network for end-to-end insulator defect detection, removing the need for prior anchor design and streamlining the overall detection pipeline. To mitigate the scarcity of labeled data in practical power inspection scenarios, Shi and Huang [90] proposed a weakly supervised method for detecting insulator string drop defects, reducing the model’s reliance on large-scale, high-quality labeled defect datasets.
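Rotated bounding boxes such as those used by Li et al. [88] are often parameterized as (cx, cy, w, h, θ). The Python sketch below converts that form to corner points and compares the box's area with that of the smallest axis-aligned box enclosing it. The radians-based angle convention is only one of several in use (libraries differ), and the 200 x 20 pixel insulator at 45° is a made-up example.

```python
import math

def rotated_box_corners(cx, cy, w, h, theta):
    """Corner points of a w x h box centred at (cx, cy), rotated by theta radians
    using the standard 2-D rotation matrix."""
    c, s = math.cos(theta), math.sin(theta)
    offsets = [(-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2)]
    # Rotate each corner offset about the centre, then translate to (cx, cy).
    return [(cx + dx * c - dy * s, cy + dx * s + dy * c) for dx, dy in offsets]

def enclosing_axis_aligned(corners):
    """Smallest axis-aligned (x1, y1, x2, y2) box containing all corners."""
    xs, ys = zip(*corners)
    return min(xs), min(ys), max(xs), max(ys)

# Hypothetical slender insulator string seen obliquely.
corners = rotated_box_corners(320, 240, 200, 20, math.radians(45))
x1, y1, x2, y2 = enclosing_axis_aligned(corners)
aabb_area = (x2 - x1) * (y2 - y1)
print(round(aabb_area), 200 * 20)  # 24200 4000
```

Roughly five-sixths of the axis-aligned box is background in this example, which is why angle-aware boxes fit oblique, elongated insulators much more tightly.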

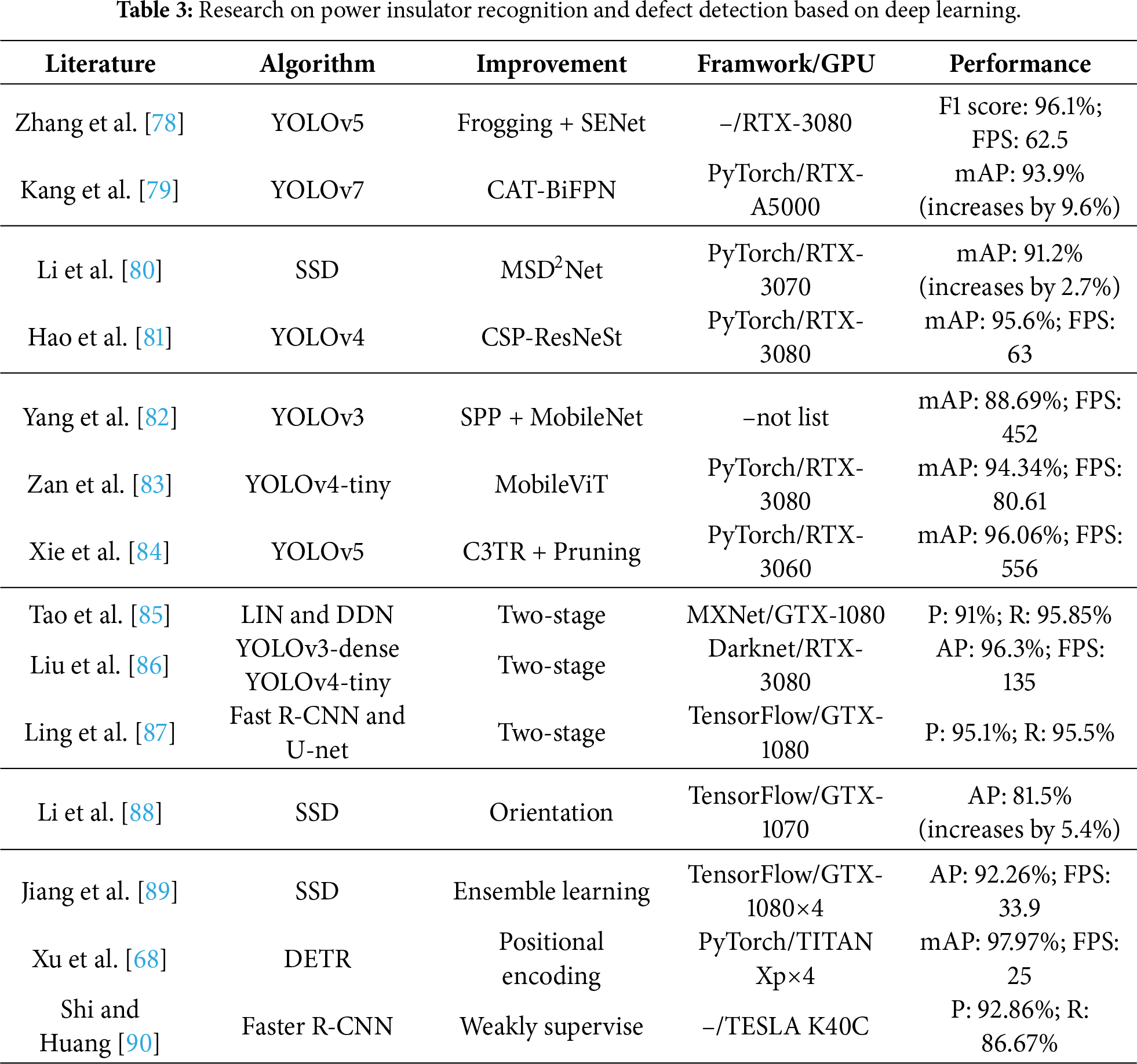

Table 3 summarizes research on deep learning-based power insulator recognition and defect detection.

4.2 Detection of Transmission Towers

As core supporting infrastructure for overhead high-voltage transmission lines, transmission towers carry the conductors and maintain the required safe clearances between the conductors and the ground, as well as among the three phase conductors. In post-disaster emergency repair, rapidly identifying and precisely locating damaged or collapsed towers in large-scale UAV aerial inspection imagery is critical for efficient damage assessment and timely emergency maintenance of transmission line systems.

Guo et al. [91] proposed a real-time transmission tower detection method based on an improved YOLOv3. By simplifying the network structure from 106 layers to 23, they reduced the number of model parameters. The improved model gave up about 1 percentage point of detection accuracy (from 95.13% to 94.09%) but increased detection speed by 50% (from 20 FPS to 30 FPS), providing auxiliary decision-making information for power maintenance personnel during post-disaster repairs. Bian et al. [92] introduced Tower R-CNN, a transmission tower detector adapted from the baseline Faster R-CNN framework through targeted parameter optimization and a hierarchical staged training strategy. Compared with the baseline Faster R-CNN, it maintained the same 89.8% tower detection accuracy while increasing inference speed from 0.8 FPS to 5 FPS. To enable quantitative post-disaster damage assessment, Hosseini et al. [93] developed an intelligent transmission tower damage classification and severity estimation method (IDCE) that uses four parallel ResNet-18 models for tower collapse detection, flame detection, damage classification, and damage state assessment. The model achieved accuracies of 94.18% for tower collapse detection, 97.14% for flame detection, and 98.78% for damage classification and state assessment.

4.3 Feature Extraction of Power Lines

Power lines are the core carriers of electric energy transmission and appear as faint linear features against cluttered natural backgrounds (e.g., vegetation, buildings, and mountainous terrain). UAV patrol inspections typically capture sequential aerial images along the power lines in transmission line corridors. Accurately extracting power lines from these images enables autonomous UAV obstacle avoidance and helps ensure the safety of low-altitude inspection flight paths. Yetgin et al. [94] proposed a power line classification model using VGG-19 and ResNet-50 backbones. Although this model could effectively determine whether power lines were present in an image, it could not localize power line segments at the pixel or sub-pixel level, which limits its practical use for UAV obstacle avoidance.

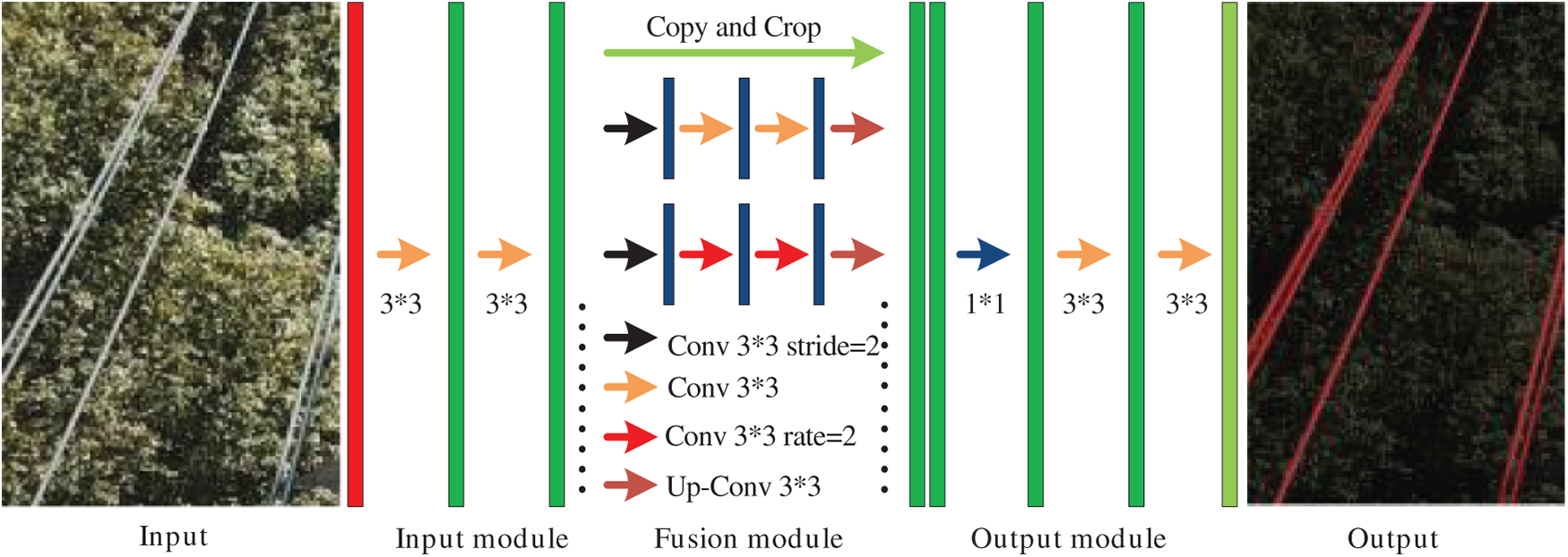

Because power lines are extremely narrow in high-altitude UAV aerial images, power line detection is essentially fine-grained semantic segmentation: both require pixel-level localization of linear targets. Many researchers have therefore applied advanced semantic segmentation algorithms to power line detection. Nguyen et al. [95] developed LS-Net, a dedicated power line segmentation network comprising a fully convolutional feature extractor, a classifier, and a line segment regressor. It reached an inference speed of 20.4 FPS, enabling near-real-time power line segmentation and detection in complex outdoor corridor scenarios. Under complex background clutter and noise (e.g., overlapping vegetation, light glare, and motion blur), traditional filtering and gradient-based methods often fail to capture continuous and complete power line structures. To address this limitation, Zhang et al. [96] proposed a segmentation method that combines multi-scale convolutional features with power line-specific structural priors, using multi-scale context and structural constraints to detect power lines accurately and efficiently. Beyond these supervised methods, researchers have also explored low-label and label-free learning to address the scarcity of labeled data. For example, Lee et al. [97] proposed a weakly supervised CNN-based method for power line localization, while Chen et al. [98] proposed SaSnet (Fig. 6), a self-supervised learning framework for real-time power line semantic segmentation.

Figure 6: The structure of SaSnet.
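Since these methods treat power lines as a pixel-level segmentation target, they are commonly evaluated with pixel-wise precision, recall, F1, and IoU. The NumPy sketch below computes these metrics from binary masks. The synthetic 100 x 100 masks are hypothetical and simply show how harshly strict pixel matching scores one-pixel-wide structures, one reason some thin-structure benchmarks allow a small distance tolerance (not implemented here).

```python
import numpy as np

def mask_metrics(pred, gt):
    """Pixel-wise precision, recall, F1 and IoU for binary masks."""
    pred, gt = pred.astype(bool), gt.astype(bool)
    tp = int(np.logical_and(pred, gt).sum())
    fp = int(np.logical_and(pred, ~gt).sum())
    fn = int(np.logical_and(~pred, gt).sum())
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    return precision, recall, f1, iou

gt = np.zeros((100, 100), dtype=bool)
gt[50, :] = True                       # a one-pixel-wide horizontal "power line"

shifted = np.roll(gt, 1, axis=0)       # prediction off by a single row
thick = gt | shifted                   # prediction two pixels wide

print(mask_metrics(shifted, gt))  # (0.0, 0.0, 0.0, 0.0)
print(mask_metrics(thick, gt))    # precision 0.5, recall 1.0, IoU 0.5
```

A one-pixel offset drives every metric to zero even though the line is visually in the right place, so reported scores for thin-line segmentation should always be read together with the matching rule used.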

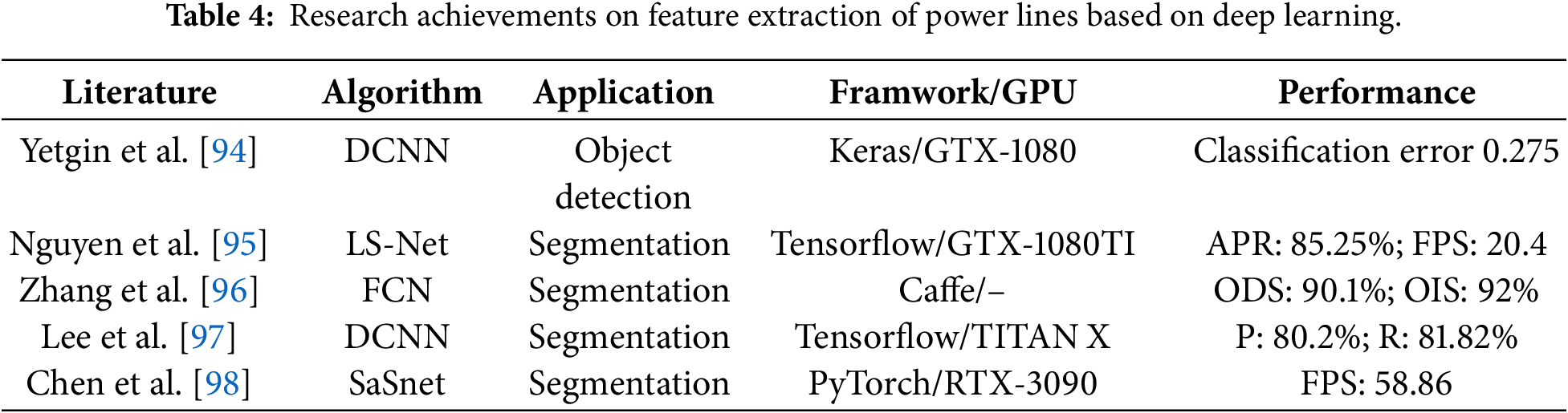

Table 4 systematically summarizes research on deep learning-based power line feature extraction and segmentation, covering the algorithm, application, framework, GPU, and key performance metrics of each method.

4.4 Detection of Metal Fittings and Defects

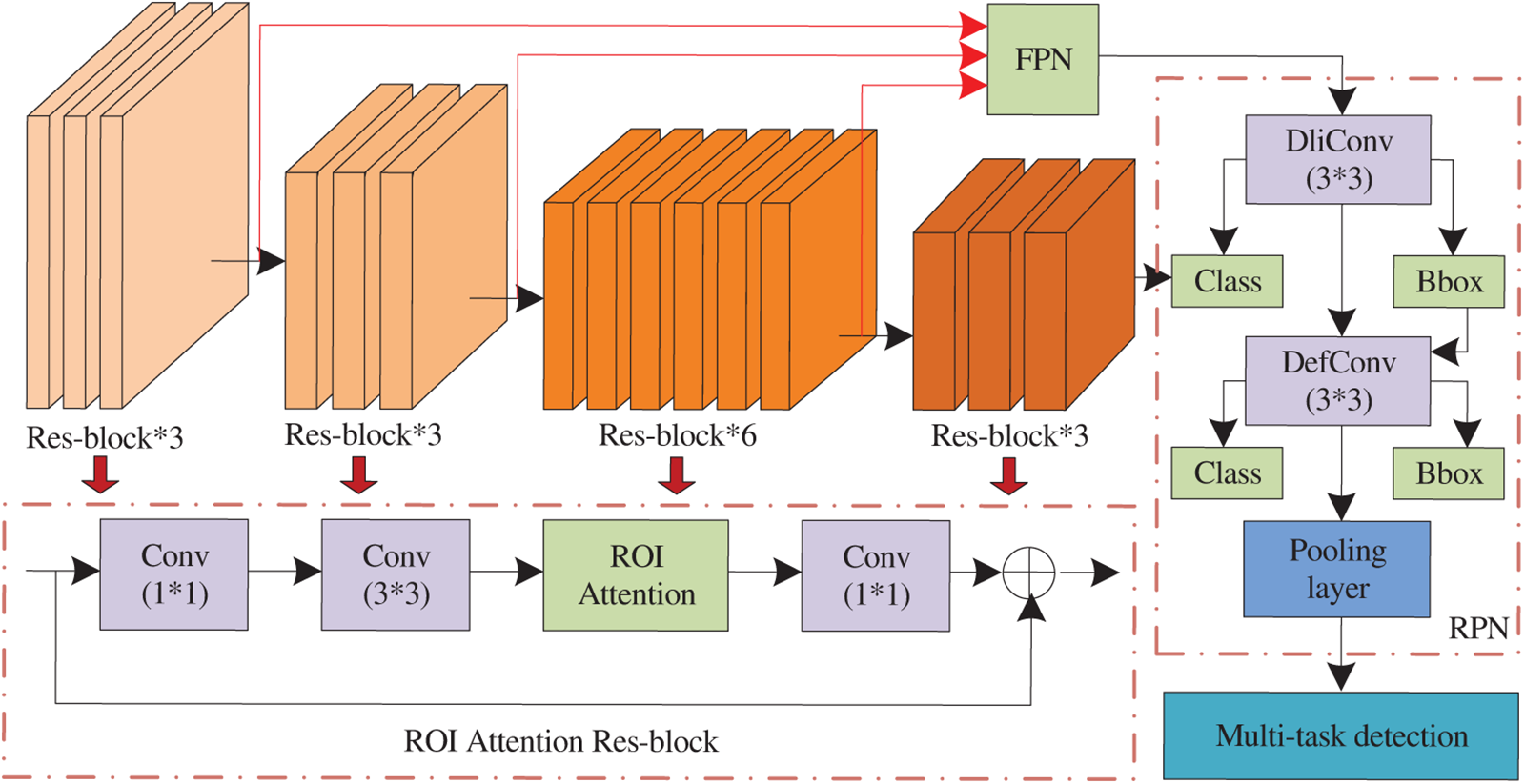

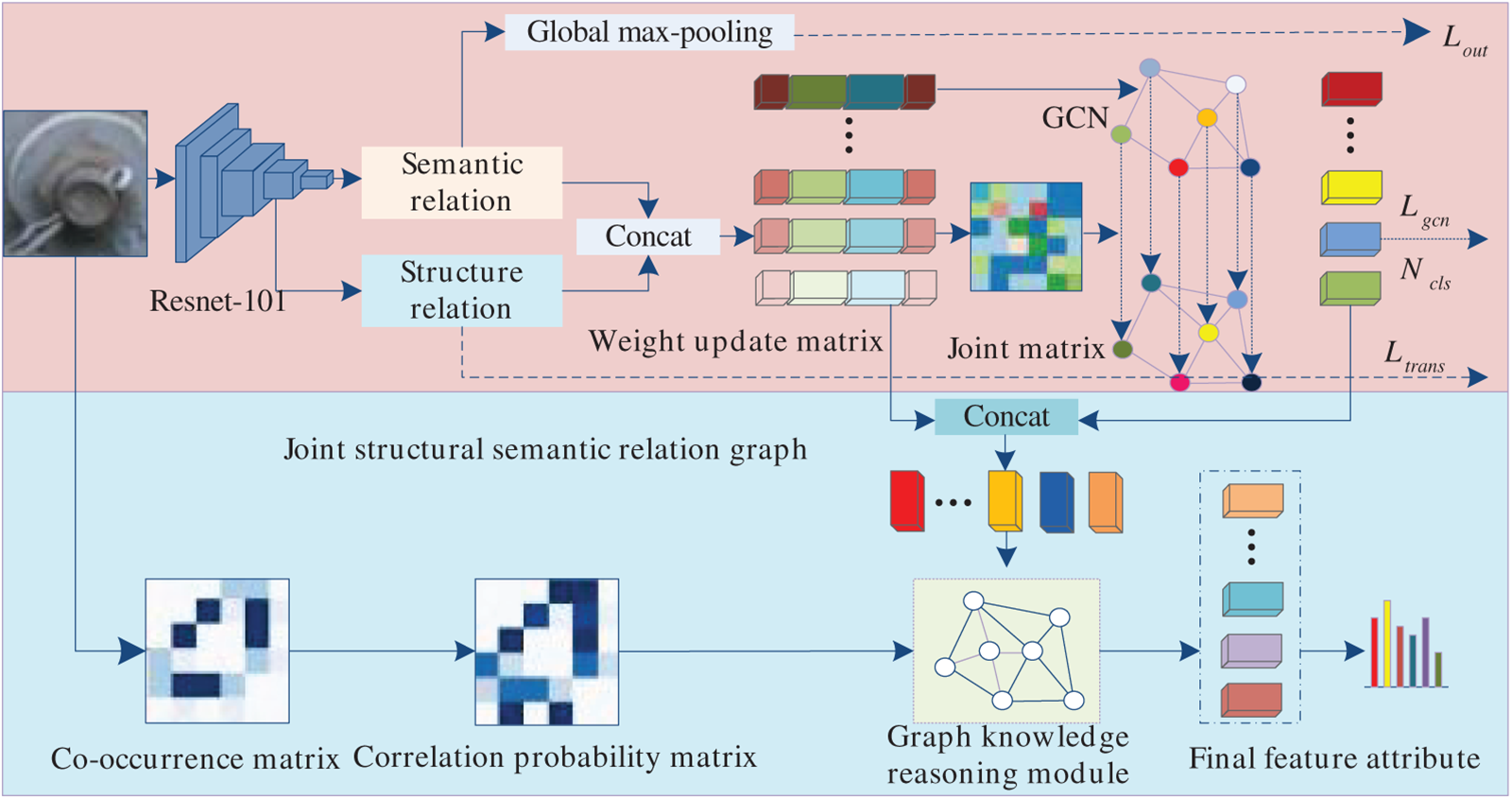

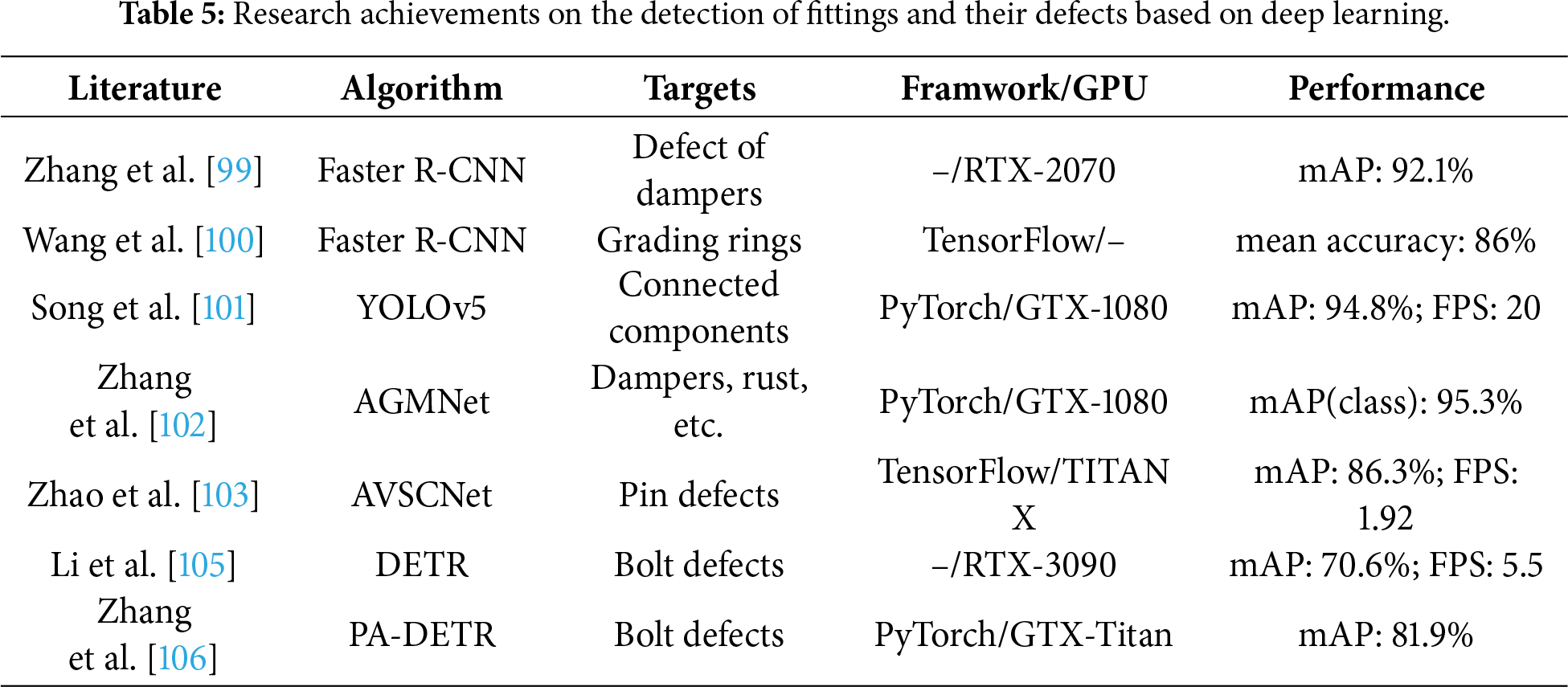

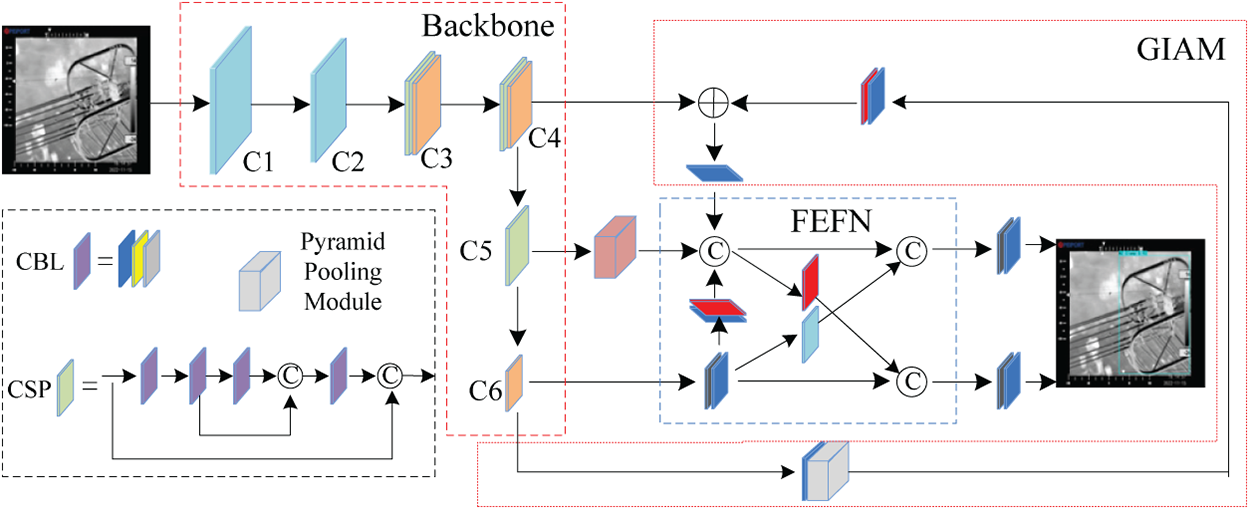

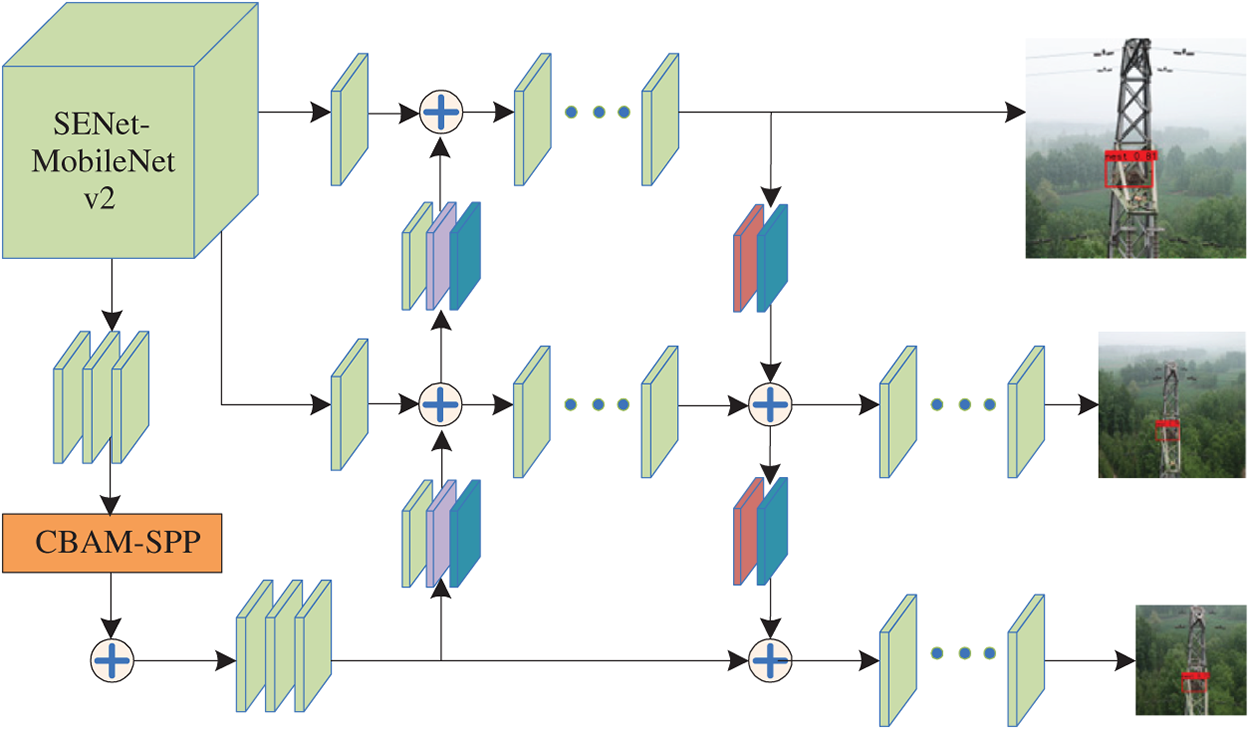

Power fittings are specialized metal accessories used to connect and assemble devices in power systems. They transmit mechanical loads (e.g., tension and compression) and electrical loads (e.g., current conduction) while providing specific protective functions. Transmission lines incorporate many types of power fittings, and researchers have mainly focused on detecting key components such as vibration dampers, spacers, grading rings, and bolts in UAV aerial inspection images. To address the low efficiency and poor accuracy of traditional vibration damper detection under challenging aerial inspection conditions (e.g., uneven lighting, cluttered backgrounds, and small target sizes), Zhang et al. [99] enhanced Faster R-CNN with the Retinex low-light image enhancement algorithm and an FPN module, enabling intelligent detection of vibration damper damage and rust defects in complex on-site scenarios. Wang et al. [100] implemented a Faster R-CNN-based detection system in the TensorFlow framework, achieving accurate grading ring recognition and localization in complex transmission line environments. Song et al. [101] introduced a fitting recognition and rust defect detection method based on the YOLO algorithm with dual attention mechanisms (fusing channel attention and Vision Transformer-based spatial attention). Zhang et al. [102] proposed an attention-guided multi-task detection network (AGMNet, Fig. 7) for grading rust severity and identifying abnormal states of power line fittings.

Figure 7: The structure of AGMNet.
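Zhang et al. [99] pair Faster R-CNN with Retinex-based low-light enhancement. Retinex is a family of methods and the variant is not specified here, so the NumPy sketch below shows only textbook single-scale Retinex (SSR): the log of the image minus the log of a Gaussian-smoothed illumination estimate, stretched to [0, 255]. The Gaussian scale sigma, the +1 offset that avoids log(0), and the synthetic scene are illustrative assumptions, and the code handles a single grayscale channel.

```python
import numpy as np

def gaussian_kernel1d(sigma):
    """Normalized 1-D Gaussian kernel truncated at 3 sigma."""
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1, dtype=float)
    k = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return k / k.sum()

def gaussian_blur(img, sigma):
    """Separable Gaussian blur of a 2-D array, replicating edge pixels."""
    k = gaussian_kernel1d(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    # Blur along rows, then along columns; "valid" mode restores the input size.
    rows = np.apply_along_axis(np.convolve, 1, padded, k, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, k, mode="valid")

def single_scale_retinex(gray, sigma=30.0):
    """SSR on a grayscale image: log(I) - log(G_sigma * I), stretched to uint8."""
    img = gray.astype(float) + 1.0                 # offset avoids log(0)
    reflectance = np.log(img) - np.log(gaussian_blur(img, sigma))
    lo, hi = reflectance.min(), reflectance.max()
    stretched = (reflectance - lo) / (hi - lo + 1e-12)
    return np.round(stretched * 255).astype(np.uint8)

# Synthetic low-light scene: a bright bar under illumination fading to the right.
h, w = 64, 96
scene = np.full((h, w), 40.0)
scene[28:36, :] = 200.0
illumination = np.linspace(1.0, 0.1, w)[None, :]
dark = (scene * illumination).astype(np.uint8)
enhanced = single_scale_retinex(dark, sigma=15.0)
print(enhanced.shape, enhanced.dtype, enhanced.min(), enhanced.max())
```

Because SSR divides out a local illumination estimate, the bar stays distinguishable in the dim right-hand region; multi-scale Retinex variants combine several such outputs computed with different sigma values.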