Open Access

ARTICLE

Computational Assessment of Information System Reliability Using Hybrid MCDM Models

1 Department of Information Security, L.N. Gumilyov Eurasian National University, Astana, Kazakhstan

2 Research Institute of Information Security and Cryptology, L.N. Gumilyov Eurasian National University, Astana, Kazakhstan

3 Department of Information Systems, Vilnius Gediminas Technical University, Vilnius, Lithuania

* Corresponding Authors: Nurbek Sissenov; Gulden Ulyukova

(This article belongs to the Special Issue: Software, Algorithms and Automation for Industrial, Societal and Technological Sustainable Development)

Computers, Materials & Continua 2026, 87(2), 76 https://doi.org/10.32604/cmc.2026.075504

Received 03 November 2025; Accepted 12 January 2026; Issue published 12 March 2026

Abstract

The reliability of information systems (IS) is a key factor in the sustainable operation of modern digital services. However, existing assessment methods remain fragmented and are often limited to individual indicators or expert judgments. This paper proposes a hybrid methodology for a comprehensive assessment of IS reliability based on the integration of the international standard ISO/IEC 25010:2023, multicriteria analysis methods (ARAS, CoCoSo, and TOPSIS), and the XGBoost machine learning algorithm for missing data imputation. The structure of the ISO/IEC 25010 standard is used to formalize reliability criteria and subcriteria, while the AHP method allows for the calculation of their weighting coefficients based on expert assessments. The XGBoost algorithm ensures the correct filling of gaps in the source data, increasing the completeness and reliability of the subsequent assessment. The resulting weighted indicators are aggregated using three MCDM methods, after which an integral reliability indicator is formed as a percentage. The methodology was tested on six real-world information systems with different architectures. The results demonstrated high consistency between the ARAS, CoCoSo, and TOPSIS methods, as well as the stability of the final rating when the criterion weights vary by ±10%. The proposed approach provides a reproducible, transparent, and objective assessment of information system reliability and can be used to identify system bottlenecks, make modernization decisions, and manage the quality of digital infrastructure.

Modern information systems (IS) form the foundation of digital infrastructure in government, commercial, and mission-critical industries. The reliability of such systems directly impacts business continuity, data security, and user trust. Despite a significant amount of research devoted to assessing the quality and reliability of IS, existing approaches remain fragmented and focused either on individual technical indicators or subjective expert assessments [1–3].

Most modern studies use either standardized quality models without quantitative formalization or multi-criteria decision making (MCDM) methods without strict adherence to international standards [4]. Furthermore, a significant portion of the studies do not take into account the problem of incomplete data, which is typical for real-world IS, especially when analyzing operational and failure indicators [5,6]. This significantly reduces the reliability of the final assessment and limits the practical applicability of the proposed methods.

This study proposes a comprehensive approach aimed at overcoming these limitations by integrating the international standard ISO/IEC 25010:2023, hybrid MCDM models, and machine learning methods. This synthesis not only allows for the structuring of reliability as a multidimensional property but also provides a quantitative, reproducible, and robust assessment adapted to the real-world operating conditions of information systems.

1.1 ISO/IEC 25010:2023 as a Normative Basis for Assessing the Reliability of Information Systems

The ISO/IEC SQuaRE (Software Product Quality Requirements and Evaluation) series of international standards has been the basis for formalized quality assessment of software products and information systems for several decades. The evolution of this series of standards began with the ISO/IEC 9126 model, which first proposed a systematic representation of software quality as a set of characteristics and subcharacteristics [7]. Despite its widespread use, ISO/IEC 9126 had several limitations related to insufficient detail in its metrics and poor adaptation to complex, distributed information systems.

To address these shortcomings, ISO/IEC 9126 was replaced by ISO/IEC 25010, which became a key element of the SQuaRE family. It offered a more rigorous and logically structured quality model, focusing not only on software but also on information systems as a whole [8]. The latest edition of the standard, ISO/IEC 25010:2023, reflects the modern requirements of digital transformation, including issues of reliability, security, performance, and resilience of systems under high load and distributed architecture.

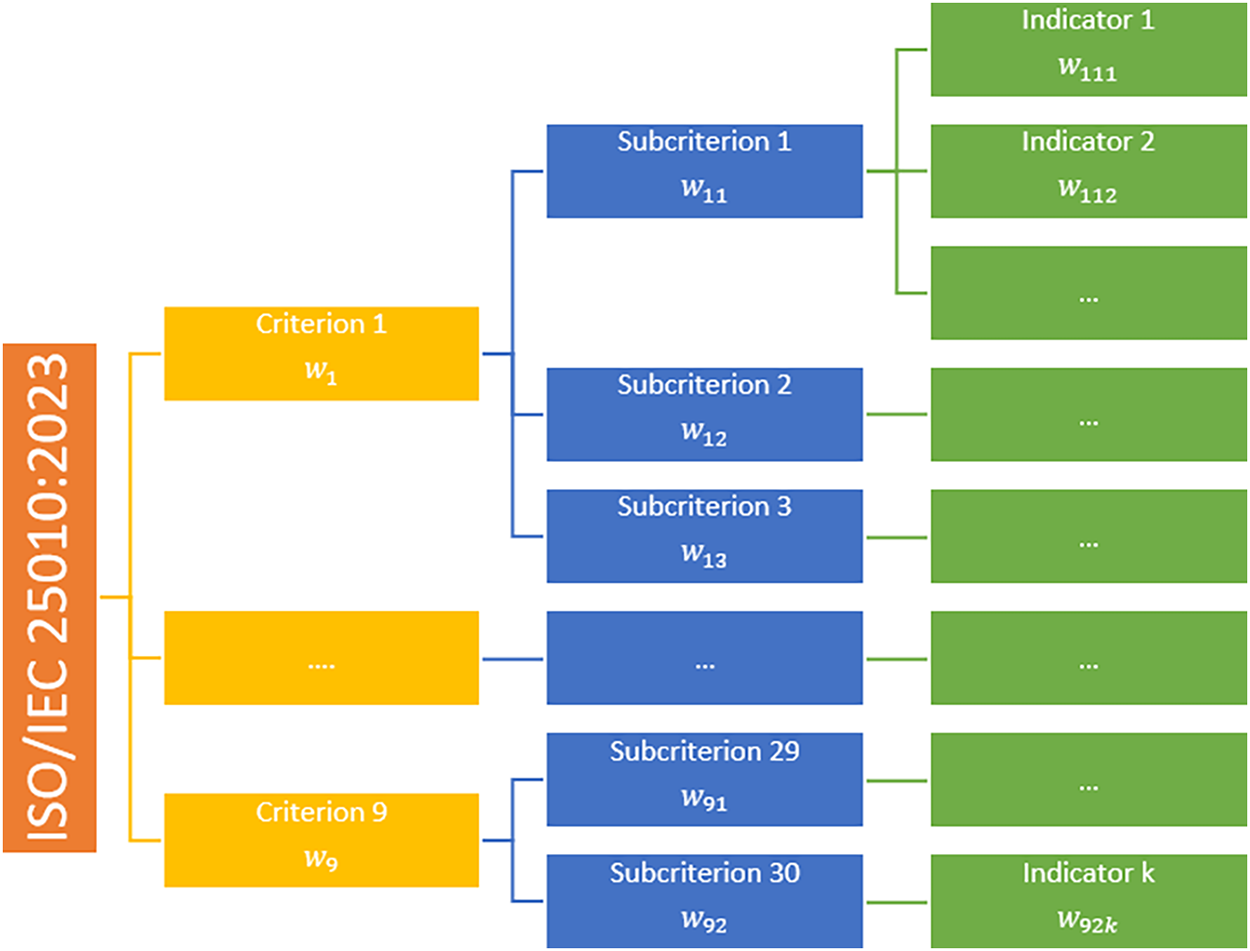

ISO/IEC 25010:2023 defines nine core quality characteristics: functional suitability, performance efficiency, compatibility, interaction capability (formerly usability), reliability, security, maintainability, flexibility (formerly portability), and safety [8,9]. A key advantage of this model is its hierarchical structure, which allows abstract quality properties to be decomposed into subcharacteristics and then into measurable indicators. This makes the standard a universal basis for formalizing complex quality properties of information systems.

The ISO/IEC 25010:2023 standard was selected for this study because it is the most relevant and internationally recognized model for assessing the quality of information systems, widely used in both scientific research and industrial practice [4,10]. Unlike many applied models, ISO/IEC 25010 ensures conceptual integrity and consistency of criteria, which is especially important for a comprehensive assessment of the reliability of information systems. The ISO/IEC 25010:2023 standard itself does not offer a quantitative mechanism for an integrated assessment of reliability. It defines what should be assessed, but does not determine how to aggregate indicators into a single numerical indicator. In most existing studies, the standard is used only as a descriptive or auxiliary model without rigorous mathematical formalization [4,9]. This creates a significant methodological gap, especially for comparative analysis and management decision-making.

Within the framework of ISO/IEC 25010, reliability is considered a comprehensive property, including subcharacteristics such as availability, fault tolerance, maturity, and recoverability. However, in existing studies, these subcharacteristics are typically analyzed in isolation or at a qualitative level, without developing a comprehensive numerical indicator of information system reliability [2,3].

In this study, ISO/IEC 25010:2023 is used not only as a conceptual model but also as a formal basis for developing a computational methodology for reliability assessment. The standard’s hierarchical structure is used to create a three-level model of “criterion, subcriterion, and measurable indicator” which allows for linking the standard’s requirements to actual operational data of information systems. This approach ensures a rigorous interpretation of reliability in accordance with international standards and simultaneously creates a foundation for further quantitative analysis.

The use of ISO/IEC 25010:2023 in this work is justified and methodologically sound. It serves as a fundamental framework for integrating multicriteria analysis and machine learning methods, enabling a transition from declarative quality assessment to a reproducible and quantifiable assessment of information system reliability.

1.2 Multicriteria Decision Making Models (MCDM)

Multi-criteria decision making methods are widely used to analyze complex systems characterized by the presence of many heterogeneous and often conflicting criteria [11,12]. Classical MCDM approaches, such as AHP, TOPSIS, VIKOR, ELECTRE, and DEMATEL, have become widely used in problems of assessing the efficiency, risk, quality, and sustainability of various technical and organizational systems [13–16].

The evolution of MCDM methods in recent years has led to the active development of hybrid approaches aimed at increasing the stability of results, reducing the subjectivity of expert assessments, and taking into account the interrelations between criteria [17–19]. The most common combinations are AHP–TOPSIS, AHP–DEMATEL, FAHP–VIKOR, and DEMATEL–ANP, which allow for consideration of both the hierarchical structure of criteria and their mutual influence [19,20]. Such hybrid models have proven their effectiveness in tasks such as supplier selection, investment project evaluation, and risk analysis.

Hybridization of MCDM methods has become a trend over the past decade, aimed at overcoming the limitations of individual methods. A literature review identifies several key types of hybrid approaches and their applications in related fields:

1. Combinations for accounting for uncertainty: Integration of fuzzy set theory methods with MCDM. For example, F-AHP in combination with F-TOPSIS or F-VIKOR allows for the effective formalization of linguistic expert assessments. The study [15] successfully applied FAHP-Interval TOPSIS to IT strategy selection, increasing the solution’s robustness to subjectivity. Similarly, in [21], the use of AHP-FTOPSIS for bank evaluation allowed for the integration of qualitative and quantitative criteria;

2. Combinations for analyzing criteria interdependencies: Combining weighting methods (AHP) with structural modeling methods (DEMATEL, ANP). AHP-DEMATEL is used when the criteria are not independent. Such a combination helped not only to determine the weight of supplier evaluation criteria but also to identify key causal factors. A more complex DEMATEL-ANP-VIKOR chain was used to select Six Sigma projects, taking into account the mutual influence and feedback between requirements;

3. Combinations for decision aggregation: Using several ranking methods (e.g., TOPSIS, VIKOR, WASPAS) followed by synthesis of their results to obtain a more robust final ranking.

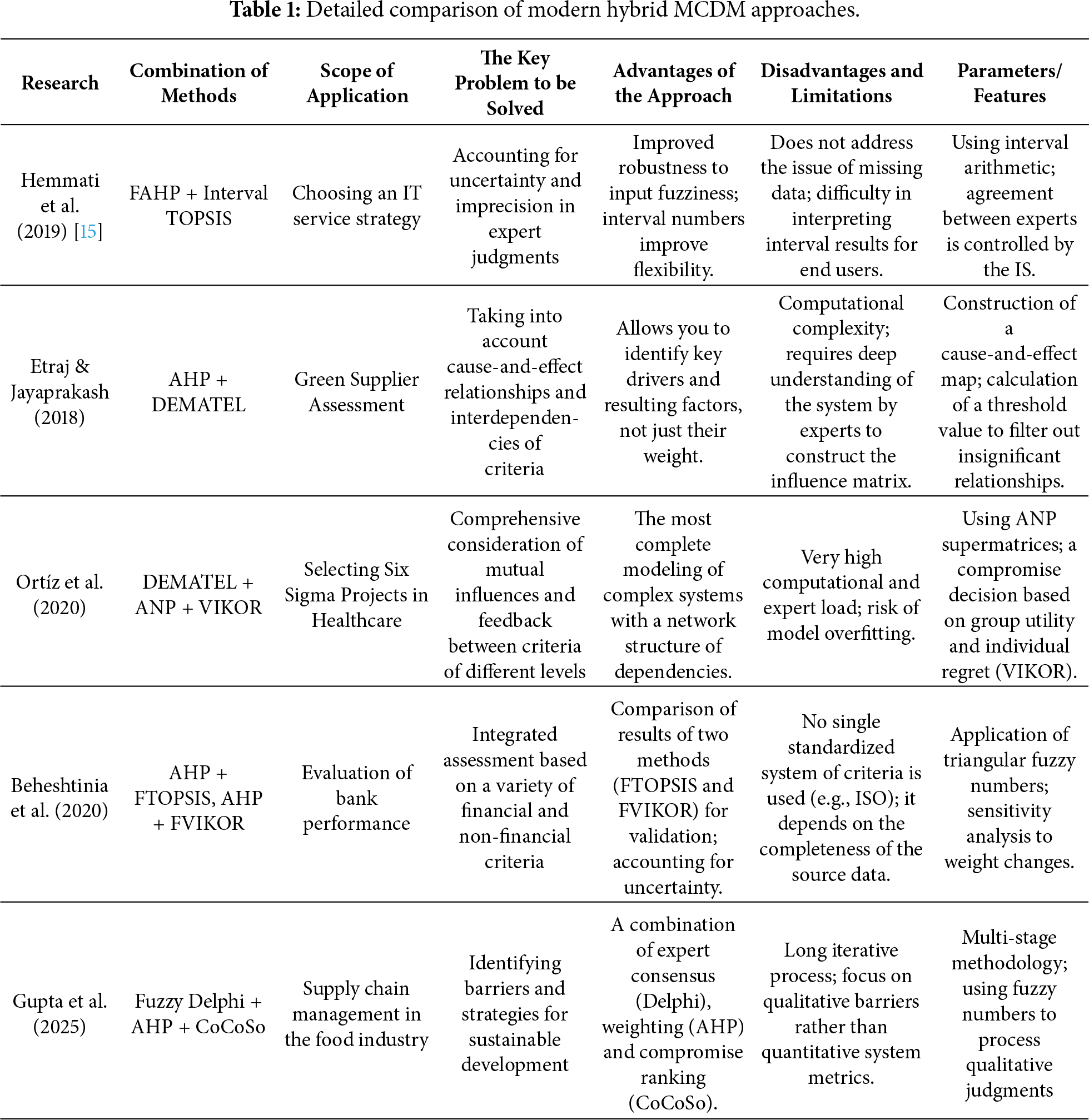

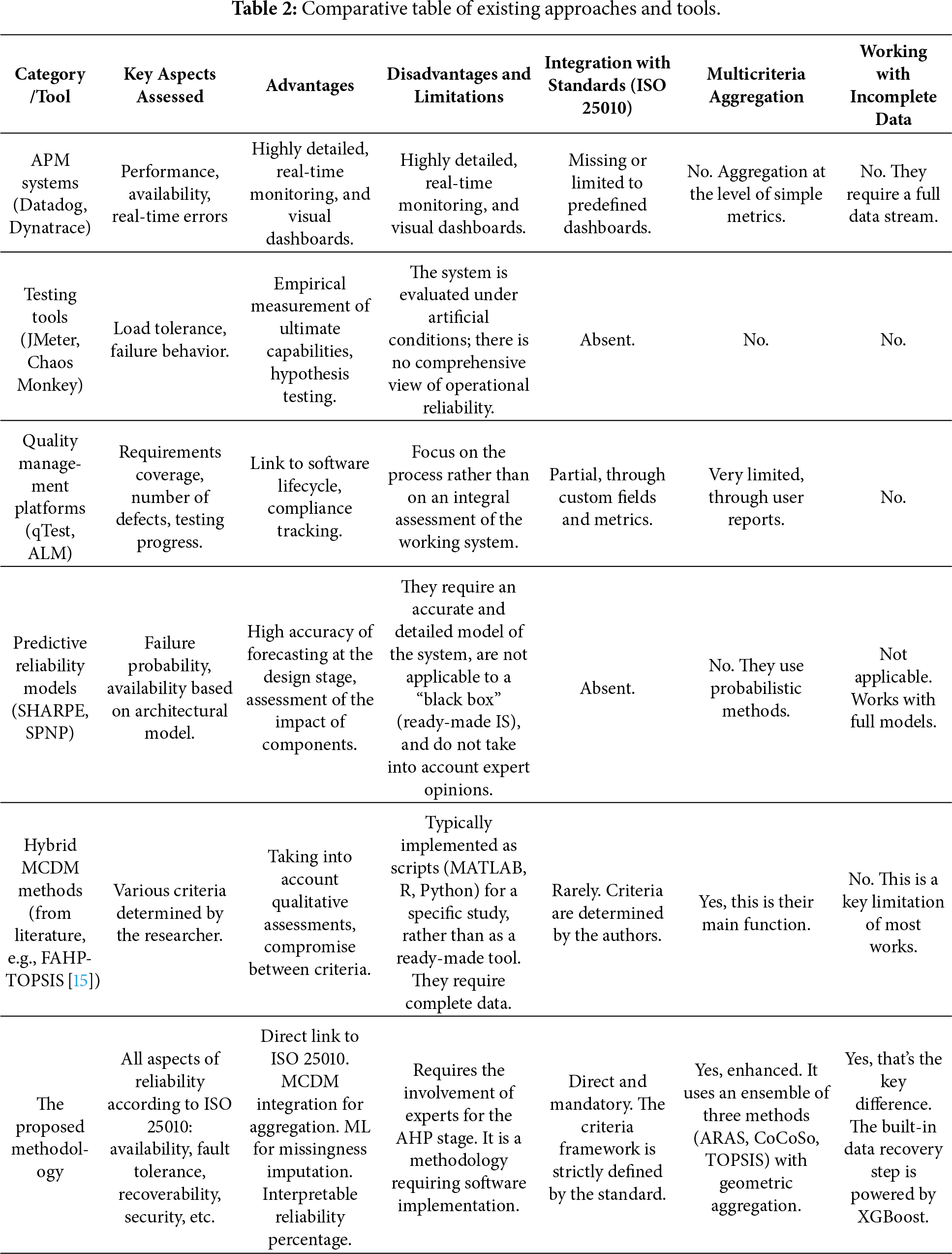

A literature review (Table 1) shows that existing hybrid methods successfully address the challenges of accounting for uncertainty or interrelationships among criteria. However, they rarely combine standardized quality models with machine learning capabilities. This paper aims to fill this gap.

In the field of information systems, MCDM methods are used primarily for comparative analysis of alternatives, including the selection of software platforms, cloud services, and architectural solutions [13,22]. In a number of studies, MCDM is used to evaluate individual aspects of IS quality, such as performance, security, or usability [4,23]. However, in most cases, reliability is considered either as one of the secondary criteria or as an abstract property without a rigorous quantitative interpretation.

An analysis of existing research shows that MCDM methods are rarely used for direct quantitative assessment of information system reliability in accordance with international quality standards. In the vast majority of studies, the result of applying MCDM is the ranking of alternatives, rather than the calculation of an absolute numerical reliability indicator suitable for monitoring, comparison with standard values, or management decision-making [21,24].

Preliminary studies utilized the modern MABAC and CODAS methods, which have attracted the attention of researchers in recent years due to their robustness to changes in criterion weights and computational simplicity. Despite their analytical advantages, the results of applying these methods showed that they are primarily focused on obtaining the relative preference order of alternatives. The resulting values generated by MABAC and CODAS lack direct interpretability and do not allow the reliability of an information system to be represented as an unambiguous quantitative indicator.

This aspect is a significant limitation in assessing the reliability of information systems, as practical tasks require not only comparing systems with each other but also determining their compliance with specified requirements or benchmarks. The lack of an absolute assessment scale complicates the analysis of reliability dynamics over time and the development of recommendations for system upgrades.

Unlike the aforementioned approaches, this study examines MCDM methods not as a standalone ranking tool, but as a component of a hybrid computational framework. The ARAS, CoCoSo, and TOPSIS methods are used together to generate an integrated reliability indicator, normalized as a percentage. This approach allows for the integration of the advantages of various MCDM models, mitigating the impact of their individual limitations, and moving from relative ranking to a quantitative assessment of information system reliability.

An analysis of the evolution and application of MCDM approaches shows that, despite their widespread use, existing methods do not provide a comprehensive quantitative assessment of IS reliability. This confirms the need to develop a hybrid methodology integrating MCDM with a formalized quality model and additional computational tools, as implemented in this study.

1.3 Machine Learning as a Tool for Ensuring Data Integrity

Practical assessment of information system reliability inevitably faces the problem of incomplete source data. Performance indicators such as MTBF, MTTR, failure rate, and resource utilization often contain gaps due to monitoring limitations, logging failures, or organizational factors [5]. Using such incomplete data in multicriteria analysis leads to distortion of the final assessments and a decrease in their reliability, making the task of data recovery critical.

In recent years, machine learning methods have been actively used to improve the accuracy of reliability analysis and the processing of complex, nonlinear dependencies in technical and information systems [25,26]. Unlike traditional statistical imputation methods, machine learning allows for the consideration of hidden relationships between indicators, thereby generating more realistic and consistent input information for subsequent analysis [6]. In this study, machine learning is used specifically as a tool for ensuring data integrity, rather than as a replacement for analytical or expert methods.

Various approaches have been considered for missing value imputation, including linear regression, k-NN imputation, random forests, and ensemble methods. Linear models have low computational complexity but are unable to adequately model nonlinear relationships between reliability metrics. k-NN methods are sensitive to the choice of distance metric and data scaling, while random forests, despite their high accuracy, demonstrate lower robustness with limited sample size [27].

In this study, we selected the XGBoost algorithm, which belongs to the class of gradient boosting and combines high accuracy with robust results. Compared to alternative methods, XGBoost effectively handles tabular data, models nonlinear relationships, and contains built-in regularization mechanisms that reduce the risk of overfitting [28,29]. Several comparative studies show that XGBoost outperforms traditional imputation methods in terms of reconstruction accuracy and robustness when working with incomplete and heterogeneous data [30].

Using XGBoost as a data recovery tool is a methodologically sound choice. Its integration into the proposed hybrid model addresses a key limitation of MCDM methods, namely the requirement for a complete decision matrix, and thus supports a more accurate, reproducible, and objective assessment of information system reliability.

1.4 Comparative Analysis of Existing Methods and Software Tools for Assessing the Reliability of Information Systems

In addition to methodological approaches, an important aspect is the existing software tools and practical methods used to assess IS reliability. Their analysis allows us to understand the current technological landscape and identify gaps that the proposed hybrid methodology fills.

Existing solutions can be roughly divided into several categories:

Monitoring and APM (Application Performance Management) tools: These include platforms such as Datadog, Dynatrace, New Relic, Nagios, and Zabbix. These systems provide extensive real-time data on availability, response time, resource utilization (CPU, RAM), error rates, and transactions. They are effective for collecting metrics and detecting incidents, but their focus is biased toward performance and observability rather than a comprehensive assessment of reliability as an integral property encompassing security, fault tolerance, and compliance. They do not perform multi-criteria aggregation based on expert weights and do not provide a single reliability index.

Specialized software for reliability testing: Tools for load testing (JMeter, LoadRunner, Gatling) and stress testing, as well as for fault tolerance assessment (Chaos Monkey, Gremlin). They allow for the empirical determination of metrics such as MTBF (mean time between failures), MTTR (mean time to repair), and the point of system degradation. However, these tools provide a point-by-point, technical assessment of individual aspects under load and are not intended for comparative analysis of dissimilar IS based on a standardized quality model.

Software quality management platforms and frameworks: Solutions that support quality standards, such as Tricentis qTest and Micro Focus ALM/Quality Center, help with test planning, defect tracking, and, to some extent, comparing results with requirements. Some support reliability-related metrics. However, they remain testing process management tools and do not include built-in algorithms for multi-criteria decision making or machine learning-based data imputation.

Academic and proprietary models for metric calculation: Mathematical models and specialized software (e.g., RELIABILITY, SHARPE, SPNP) exist for calculating probabilistic reliability metrics for complex systems based on architectural models (e.g., Markov chains, Petri nets). These tools are powerful for quantitative forecasting at the design stage, but require detailed system models, are difficult to use for existing IS, and do not take into account subjective expert assessments of business criteria [9,24].

As Table 2 shows, a wide variety of tools and approaches are used in practice to assess the reliability of information systems, but each addresses only a limited range of tasks. Monitoring systems and APM are well suited for monitoring the system’s state in real time, while testing tools allow for testing its behavior under load. However, neither approach provides a comprehensive understanding of reliability as an integral property. In contrast, the proposed methodology combines the requirements of the ISO/IEC 25010 standard, multi-criteria analysis, and machine learning methods, resulting in a clear and interpretable indicator of information system reliability.

1.5 Research Gap and Contribution of the Study

The literature analysis reveals a clear research gap: there is no comprehensive, standardized, and practice-oriented methodology for assessing the reliability of information systems that combines:

1. Normative framework (ISO/IEC 25010:2023) for structuring the task;

2. Expert-analytical procedures for weighting and aggregation;

3. Algorithms for intelligent data processing to ensure the completeness and reliability of the initial information;

4. A combination of MCDM methods that not only provides robust rankings but also generates a final, interpretable percentage reliability score.

The contribution and novelty of this study lies precisely in the development of such a comprehensive hybrid methodology. Specific scientific and practical contributions can be summarized as follows:

Conceptual and methodological contribution: For the first time, an assessment model has been proposed and formalized in which the ISO/IEC 25010:2023 standard is used as a direct and mandatory basis for constructing a hierarchy of criteria, ensuring consistency and objectivity.

Methodological contribution: A consistent integration of three disparate paradigms (standardization, MCDM, ML) into a single workflow is proposed.

Technological contribution: A machine learning algorithm (XGBoost) is adapted and applied specifically to the imputation problem in the context of multi-criteria evaluation, which makes it possible to work with real, incomplete data.

Practical contribution: The practical significance of the proposed methodology lies in its ability to comprehensively determine the reliability level of an information system, taking into account all key criteria and indicators regulated by the ISO/IEC 25010:2023 standard, including availability, fault tolerance, recoverability, performance, and security. The integration of diverse criteria into a single model provides a holistic view of information system reliability as a system property, and the final result, presented as a percentage (0%–100%), makes the resulting assessment clear, interpretable, and convenient for direct comparison of information systems with different architectures and purposes.

Contribution to verification: The combination of methods and the sensitivity analysis conducted confirm the reliability, robustness and practical applicability of the developed methodology.

This study contributes not new algorithms but their integration in a new applied context: a comprehensive, standardized assessment of IS reliability. The proposed methodology fills the identified gap, offering researchers and practitioners a transparent, reproducible, and robust tool that overcomes the fragmentation of existing approaches and delivers the final result in a format convenient for management decision-making.

Assessing the reliability of information systems is a complex, multidimensional task, as this indicator depends on a combination of functional, operational, and quality characteristics. To ensure the objectivity and reproducibility of results, a methodological framework based on recognized international standards is necessary. This paper uses ISO/IEC 25010:2023 as such a framework, which offers a systematic approach to describing the quality of software and information systems. Its structure allows for the identification of individual criteria and subcriteria that can be formalized and used for quantitative reliability assessment.

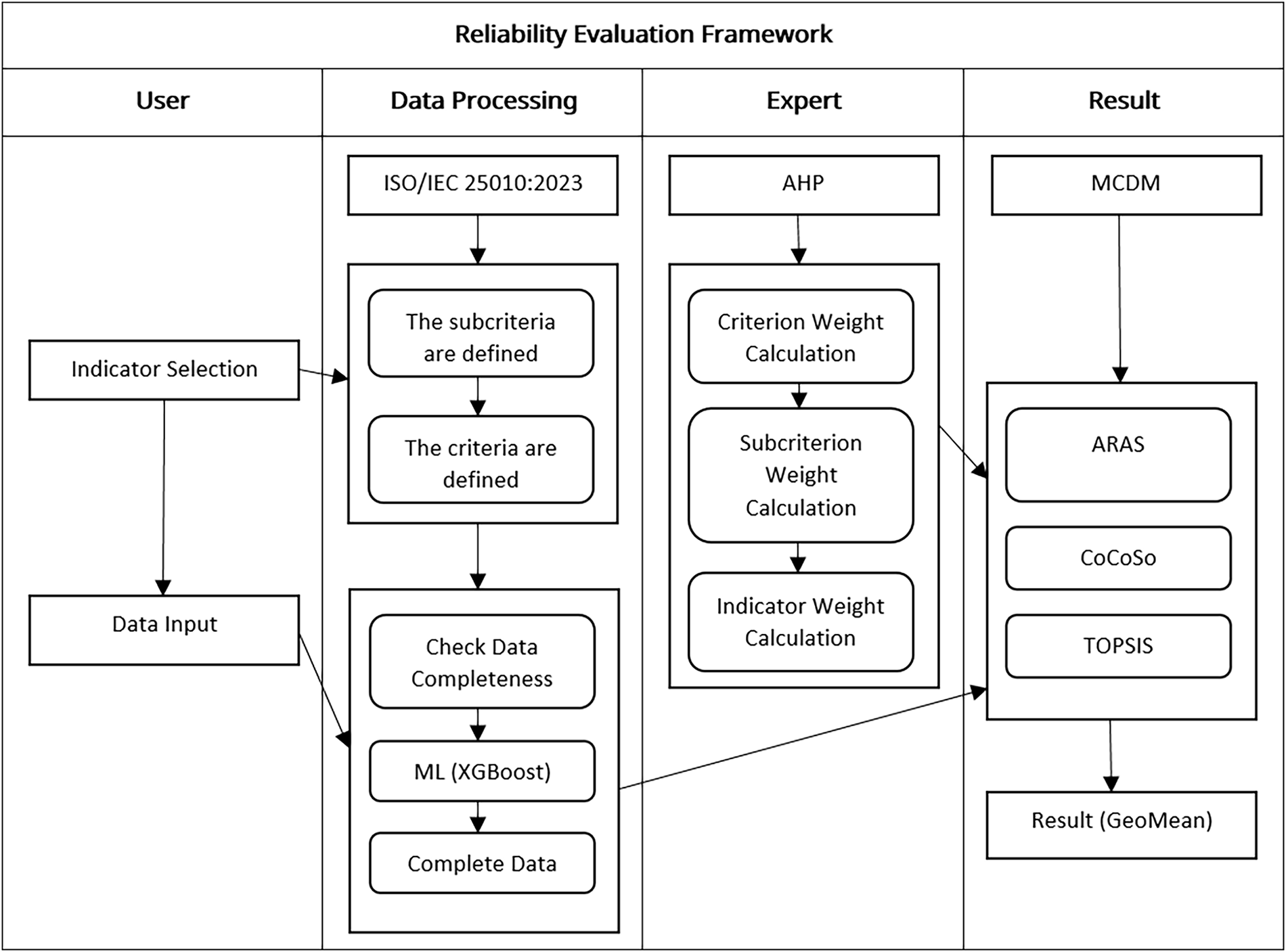

However, using standards alone is insufficient to obtain an integrated assessment, as criteria have varying weights and may conflict with one another. To address this issue, MCDM methods are used, which allow for the consideration of weighting factors and the aggregation of disparate indicators. The study selected the ARAS, CoCoSo, and TOPSIS methods, which differ in their calculation logic but, when combined, provide a more robust and objective assessment.

Machine learning methods are also integrated into the methodology. The use of the XGBoost algorithm allows for the recovery of missing data, thereby eliminating the problem of incomplete information typical of practical research. The combination of the ISO/IEC 25010:2023 standard, MCDM methods, and machine learning forms the basis for a comprehensive and robust methodology for assessing information systems.

The ISO/IEC 25010:2023 standard provides an internationally recognized model for evaluating software and information system quality, comprising nine key criteria and over thirty subcriteria. This hierarchical model makes it possible to translate the complex concept of quality into measurable components. This approach enables the quantitative assessment of multidimensional properties of information systems and their comparison with one another.

For the purposes of this study, the criteria and subcriteria most directly related to the reliability of information systems were identified from the standard’s overall structure. At the first tier, the model identifies the overarching attribute of Reliability; the second tier specifies its key elements (availability, fault tolerance, and recoverability), while the third tier links each subcriterion to concrete, measurable indicators such as mean time to recovery, availability ratio, and failure frequency.

To obtain an objective, integrated assessment of the reliability of information systems, it is necessary to determine the weighting factors for criteria, subcriteria, and indicators. For this purpose, the AHP is used, which allows for the consideration of expert assessments and the specifics of the subject area.

As shown in Fig. 1, the model has a three-level hierarchical structure:

Figure 1: Three-level model for assessing the reliability of information systems.

Level 1—criteria defined by ISO/IEC 25010:2023;

Level 2—corresponding subcriteria;

Level 3—measurable indicators that enable quantitative assessment of the subcriteria.

At each level, a pairwise comparison of elements is conducted based on their significance. Experts create judgment matrices, the values of which are taken from the Saaty scale (from 1 to 9). Local weights are calculated based on these matrices.

To ensure the correctness of the weights, the following normalization conditions are met:

• the sum of the weights of all criteria is equal to 1;

• the sum of the weights of subcriteria within each criterion is equal to 1;

• the sum of the weights of the indicators within each subcriterion is equal to 1.

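Hierarchical consistency and the correct distribution of weights across all model levels are thereby ensured. To verify the reliability of expert judgments, the consistency ratio (CR) is also computed as part of the validation process. If the CR does not exceed the accepted threshold (conventionally 0.1 in Saaty’s method), the results are accepted and used for further calculations.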
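The weighting and validation step can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the priority vector is approximated by the row geometric mean method (a common substitute for the principal eigenvector), the function names and the example judgment matrix are hypothetical, and the random index values are those commonly tabulated for Saaty's method.

```python
import math

# Commonly tabulated random consistency index (RI) for matrix orders 1..9.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}


def ahp_weights(matrix):
    """Approximate local weights by the row geometric mean method."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]


def consistency_ratio(matrix, weights):
    """Return CR = CI / RI, with lambda_max estimated from the product A*w."""
    n = len(matrix)
    if n <= 2:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    aw = [sum(a * w for a, w in zip(row, weights)) for row in matrix]
    lambda_max = sum(x / w for x, w in zip(aw, weights)) / n
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]  # orders above 9 are not covered in this sketch


# Hypothetical expert judgments on the Saaty scale for three subcriteria:
# availability, fault tolerance, recoverability.
pairwise = [
    [1.0, 3.0, 5.0],
    [1 / 3, 1.0, 2.0],
    [1 / 5, 1 / 2, 1.0],
]
weights = ahp_weights(pairwise)
cr = consistency_ratio(pairwise, weights)
print([round(w, 3) for w in weights], round(cr, 4))
```

If the computed CR exceeded the threshold, the judgment matrix would be returned to the experts for revision before the weights are used.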

In the next step, decision matrices are formed for each criterion, with rows corresponding to alternatives and columns corresponding to indicators. The matrix elements reflect quantitative or qualitative assessments of the alternatives for each indicator.

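Let there be a set of alternatives (the information systems being assessed) and a set of indicators. Each alternative is assessed according to each indicator, forming a decision matrix in which each element is the value of a given alternative for a given indicator.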

The global weight of each indicator is defined as the product of the weight of its criterion, the weight of its subcriterion, and its own local weight. Each indicator thus receives an integral weight that takes into account the significance of all three levels of the hierarchy. The sum of the global weights of all indicators equals one, ensuring model consistency.
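The multiplication of weights along the hierarchy can be illustrated with a short sketch. The hierarchy, the indicator names, and all weight values below are hypothetical and serve only to show that the global weights sum to one when the local weights are normalized at each level.

```python
# Hypothetical three-level hierarchy:
# criterion -> (weight, {subcriterion -> (weight, {indicator -> local weight})})
hierarchy = {
    "Reliability": (1.0, {
        "Availability": (0.5, {"availability_ratio": 0.7, "uptime_hours": 0.3}),
        "Fault tolerance": (0.3, {"failure_frequency": 1.0}),
        "Recoverability": (0.2, {"mean_time_to_recovery": 1.0}),
    }),
}


def global_weights(tree):
    """Multiply the weights along each criterion/subcriterion/indicator path."""
    result = {}
    for w_criterion, subcriteria in tree.values():
        for w_subcriterion, indicators in subcriteria.values():
            for name, w_indicator in indicators.items():
                result[name] = w_criterion * w_subcriterion * w_indicator
    return result


indicator_weights = global_weights(hierarchy)
print(indicator_weights)
print(sum(indicator_weights.values()))  # close to 1.0 when every level is normalized
```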

The next step involves checking the evaluation matrix for missing elements. If the matrix contains an element without a value, this element is replaced with the value predicted by the XGBoost model.
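The replacement step can be sketched as column-wise, model-based imputation. The sketch below is not the authors' pipeline: `impute_missing` is a hypothetical helper that accepts any regressor exposing `fit(X, y)` and `predict(X)`, and the demonstration uses a trivial stand-in model (`MeanRegressor`) so that it runs without third-party packages. In the setting described here, a gradient boosting regressor such as `xgboost.XGBRegressor` would be passed instead, with the inputs converted to NumPy arrays. The data values are invented, and the sketch assumes at most one gap per row.

```python
class MeanRegressor:
    """Stand-in model with a fit/predict interface; predicts the training mean."""

    def fit(self, X, y):
        self.mean_ = sum(y) / len(y)
        return self

    def predict(self, X):
        return [self.mean_ for _ in X]


def impute_missing(matrix, make_model):
    """Fill None entries column by column with predictions from a regressor.

    Each model is trained on the rows that have no gaps, using the other
    indicators as predictors of the column being completed.
    """
    filled = [list(row) for row in matrix]
    complete = [row for row in matrix if None not in row]
    n_cols = len(matrix[0])
    for j in range(n_cols):
        targets = [i for i, row in enumerate(matrix) if row[j] is None]
        if not targets:
            continue
        predictors = [k for k in range(n_cols) if k != j]
        x_train = [[row[k] for k in predictors] for row in complete]
        y_train = [row[j] for row in complete]
        model = make_model()
        model.fit(x_train, y_train)
        x_missing = [[matrix[i][k] for k in predictors] for i in targets]
        for i, value in zip(targets, model.predict(x_missing)):
            filled[i][j] = float(value)
    return filled


# Hypothetical indicators per system: MTBF (h), MTTR (h), availability (%).
data = [
    [720.0, 2.0, 99.5],
    [650.0, None, 99.1],
    [800.0, 1.5, 99.8],
    [None, 3.0, 98.7],
]
completed = impute_missing(data, MeanRegressor)
print(completed)
```

Keeping the regressor pluggable separates the imputation logic from the choice of model, so the same loop can be reused when comparing alternative imputers.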

After data supplementation, final decision matrices are formed, which additionally include the optimal value of each indicator.

If optimal values are not present in the initial data, they are determined according to the following rule:

• if the indicator is of the max type (the higher the better), then the highest value among all alternatives is considered optimal;

• if the indicator is of the min type (the smaller the better), then the smallest value is optimal.

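Formally, if $x_{ij}$ denotes the value of alternative $i$ for indicator $j$ and $x_{0j}$ denotes the optimal value of indicator $j$:

$$
x_{0j} =
\begin{cases}
\max_{i} x_{ij}, & \text{if indicator } j \text{ is of the max type},\\
\min_{i} x_{ij}, & \text{if indicator } j \text{ is of the min type}.
\end{cases}
$$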

2.1 Application of the ARAS (Additive Ratio Assessment) Method

The ARAS method is used to obtain an integrated assessment of alternatives based on their relative proximity to the optimal solution. The basic idea of the method is that each alternative is normalized relative to the sum of all values for each indicator, after which a weighted sum of the normalized values is determined. The higher the value of the integrated indicator, the more preferable the alternative.

Each element of the matrix is normalized relative to the sum of the values for the corresponding indicator. The normalized values are then multiplied by the weights of the indicators.

For each alternative, the total priority is determined as the sum of its weighted normalized values.

The utility of each alternative is calculated as the ratio of its total value to the value of the optimal alternative.

The alternatives are ordered in descending order of utility.

Advantages of the ARAS method:

• Ease of computation and clarity in interpreting the results;

• A clear assessment of the utility of each alternative compared to the optimal one;

• Wide applicability in economics, management, engineering, and information systems evaluation.

The ARAS method is an effective tool for multi-criteria analysis, allowing for informed decision-making based on a comprehensive assessment of alternatives.
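The ARAS steps can be sketched in a few lines. The sketch follows the commonly published formulation of the method, in which cost-type indicators are inverted before normalization and the optimal alternative is added as an extra row; whether the authors handle cost indicators in exactly this way is an assumption. The function name, data, and weights are hypothetical.

```python
def aras(matrix, weights, is_benefit):
    """Return the ARAS utility degree of each alternative relative to the optimum."""
    n = len(weights)
    # Optimal row: best observed value per indicator (max for benefit, min for cost).
    optimal = [
        (max if is_benefit[j] else min)(row[j] for row in matrix)
        for j in range(n)
    ]
    extended = [optimal] + [list(row) for row in matrix]
    # Invert cost indicators so that larger is always better (values must be nonzero).
    oriented = [
        [v if is_benefit[j] else 1.0 / v for j, v in enumerate(row)]
        for row in extended
    ]
    # Normalize by the column sum, apply weights, and sum per alternative.
    col_sums = [sum(row[j] for row in oriented) for j in range(n)]
    totals = [
        sum(weights[j] * row[j] / col_sums[j] for j in range(n))
        for row in oriented
    ]
    # Utility degree: total of each alternative divided by the total of the optimum.
    return [t / totals[0] for t in totals[1:]]


# Hypothetical data: availability (%), MTTR (h), failures per month.
systems = [
    [99.9, 1.0, 2.0],
    [99.5, 2.0, 4.0],
    [99.0, 4.0, 8.0],
]
utility = aras(systems, weights=[0.5, 0.3, 0.2], is_benefit=[True, False, False])
print([round(k, 3) for k in utility])
```

Because the utility degree is a ratio to the optimal alternative, it lies between 0 and 1 and can be read directly as a share of the best achievable value.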

2.2 Application of the CoCoSo (Combined Compromise Solution) Method

The CoCoSo method is a modern approach to multicriteria analysis that combines the principles of weighted sum and weighted tradeoffs to obtain an integrated assessment of alternatives. Each alternative in the CoCoSo method is normalized by each indicator, after which aggregate indices are calculated that take into account both average values and relative priorities. The final ranking is based on a combined utility measure, which allows for the balance between benefit and cost indicators to be considered.

The indicators are first normalized, taking into account whether each criterion is to be maximized or minimized. The normalized values are then weighted by the weights of the indicators.

Two aggregate functions are calculated for each alternative: an additive (sum) function and a multiplicative (product) function.

Based on these, combined indices are formed and merged into a final score.

The alternatives are ranked in descending order of their final scores.

The advantages of the CoCoSo method include high stability under weight changes, a compromise between additive and multiplicative schemes, and broad applicability in engineering, economics, and reliability assessment of information systems.
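A compact sketch of CoCoSo is given below. It follows the commonly published formulation (min-max normalization, a weighted-sum measure S, a power-weighted measure P, three appraisal indices, and their combination); the balancing parameter `lam = 0.5`, the function name, and the data are assumptions made for illustration, not values taken from this study.

```python
def cocoso(matrix, weights, is_benefit, lam=0.5):
    """Return the final CoCoSo score of each alternative (higher is better)."""
    m, n = len(matrix), len(weights)
    lo = [min(row[j] for row in matrix) for j in range(n)]
    hi = [max(row[j] for row in matrix) for j in range(n)]

    def normalize(value, j):
        span = hi[j] - lo[j]
        if span == 0:
            return 1.0  # all alternatives are equal on this indicator
        return (value - lo[j]) / span if is_benefit[j] else (hi[j] - value) / span

    r = [[normalize(row[j], j) for j in range(n)] for row in matrix]
    # Additive measure S and power-weighted (multiplicative) measure P.
    s = [sum(weights[j] * r[i][j] for j in range(n)) for i in range(m)]
    p = [sum(r[i][j] ** weights[j] for j in range(n)) for i in range(m)]
    if min(s) == 0 or min(p) == 0:
        raise ValueError("an alternative is worst on every indicator; k_b is undefined")
    # Three appraisal indices combining S and P in different ways.
    total = sum(s) + sum(p)
    k_a = [(s[i] + p[i]) / total for i in range(m)]
    k_b = [s[i] / min(s) + p[i] / min(p) for i in range(m)]
    denom = lam * max(s) + (1 - lam) * max(p)
    k_c = [(lam * s[i] + (1 - lam) * p[i]) / denom for i in range(m)]
    # Final score: geometric mean plus arithmetic mean of the three indices.
    return [(a * b * c) ** (1 / 3) + (a + b + c) / 3
            for a, b, c in zip(k_a, k_b, k_c)]


# Hypothetical data: availability (%), MTTR (h), failures per month.
systems = [
    [99.9, 1.0, 4.0],
    [99.5, 2.0, 2.0],
    [99.0, 1.5, 8.0],
]
scores = cocoso(systems, weights=[0.5, 0.3, 0.2], is_benefit=[True, False, False])
print([round(k, 3) for k in scores])
```

Unlike the ARAS utility degree, the raw CoCoSo score is not bounded by 1, so it needs rescaling before it can be combined with the other methods on a common scale.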

2.3 Application of the TOPSIS (Technique for Order of Preference by Similarity to Ideal Solution) Method

Regarded as one of the leading tools in multicriteria analysis, the TOPSIS method operates on the notion of choosing the alternative that exhibits the smallest distance to the ideal solution while remaining the farthest from the anti-ideal reference point. Thus, it first constructs a normalized, weighted decision matrix and then defines ideal and anti-ideal vectors corresponding to each indicator. For each alternative, the distances to these reference points are calculated, after which a proximity coefficient is calculated. Alternatives are ranked by the magnitude of this coefficient: the higher the coefficient, the more preferable the alternative.

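Vector normalization is applied, after which the normalized values are weighted by the weights of the indicators.

Then the ideal solutions are determined:

• Positive ideal solution (PIS): the best weighted normalized value of each indicator, that is, the maximum for max-type indicators and the minimum for min-type indicators;

• Negative ideal solution (NIS): the worst weighted normalized value of each indicator, that is, the minimum for max-type indicators and the maximum for min-type indicators.

The distance of each alternative to the PIS and to the NIS is then calculated.

The relative closeness of an alternative to the ideal solution is defined as the ratio of its distance to the NIS to the sum of its distances to the PIS and the NIS.

Alternatives are sorted in descending order of relative closeness.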

Advantages of the TOPSIS method:

• Simplicity and clarity—the method is distinguished by clear mathematical procedures and easily interpretable results;

• Taking into account ideal and anti-ideal solutions—allows you to objectively evaluate alternatives, focusing on both the best and the worst solution;

• The incorporation of criterion weights provides flexibility in decision-making by accounting for the varying importance of individual indicators.
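The TOPSIS procedure described above can be sketched as follows. The sketch assumes Euclidean distances, as in the standard formulation of the method; the function name, data, and weights are hypothetical.

```python
import math


def topsis(matrix, weights, is_benefit):
    """Return the relative closeness of each alternative to the ideal solution."""
    m, n = len(matrix), len(weights)
    # Vector normalization followed by weighting.
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) for j in range(n)]
    v = [[weights[j] * row[j] / norms[j] for j in range(n)] for row in matrix]
    columns = [[v[i][j] for i in range(m)] for j in range(n)]
    # Positive and negative ideal solutions per indicator.
    pis = [max(c) if is_benefit[j] else min(c) for j, c in enumerate(columns)]
    nis = [min(c) if is_benefit[j] else max(c) for j, c in enumerate(columns)]
    closeness = []
    for row in v:
        d_pos = math.dist(row, pis)  # Euclidean distance to the PIS
        d_neg = math.dist(row, nis)  # Euclidean distance to the NIS
        # Undefined only if all alternatives are identical (both distances zero).
        closeness.append(d_neg / (d_pos + d_neg))
    return closeness


# Hypothetical data: availability (%), MTTR (h), failures per month.
systems = [
    [99.9, 1.0, 2.0],
    [99.5, 2.0, 4.0],
    [99.0, 4.0, 8.0],
]
closeness = topsis(systems, weights=[0.5, 0.3, 0.2], is_benefit=[True, False, False])
print([round(c, 3) for c in closeness])
```

In this example the first system coincides with the positive ideal solution and the third with the negative ideal solution, so their closeness values fall at the two ends of the 0 to 1 scale.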

At the concluding stage, the measured values of the indicators are evaluated in relation to their optimal counterparts within the decision matrix. Each alternative’s divergence from the optimal value is determined, which makes it possible to represent its reliability degree as a percentage from 0% to 100%.

Next, an integrated reliability assessment is formed by aggregating the results obtained using various multicriteria analysis methods (ARAS, CoCoSo, TOPSIS). To ensure a balanced final metric, the geometric mean of the integrated indicators is calculated:

where

Based on the

As illustrated in Fig. 2, the proposed reliability assessment framework outlines the complete workflow of the hybrid methodology, which integrates the ISO/IEC 25010:2023 quality model, machine learning, and MCDM techniques. The process begins with the selection of indicators and data entry, followed by a validation step that checks the completeness of the data. To obtain a complete dataset, missing entries are imputed with values predicted by the XGBoost algorithm. Expert judgment, processed with AHP, is used to determine the weights of the criteria, subcriteria, and indicators. The calculated weights are then applied within the ARAS, CoCoSo, and TOPSIS methods, which return integrated reliability scores. The final reliability index is the geometric mean of these results, a single number that summarizes the reliability of the information system.

Figure 2: Reliability evaluation framework for information systems.

In summary, the methodology combines the most recent international standard ISO/IEC 25010:2023, multicriteria analysis methods (ARAS, CoCoSo, TOPSIS), AHP for weight determination, and a machine learning algorithm (XGBoost) for the imputation of missing values. By integrating measurable indicators with their weighted significance, the hybrid model delivers a thorough and impartial assessment of information system reliability, expressed as an integrated percentage value. The final composite score, obtained as the geometric mean across the MCDM approaches, yields stable and interpretable results, which supports the methodology’s suitability for both applied and scholarly use.
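The final aggregation step can be illustrated with a short sketch. It assumes that each method's result has already been expressed as a percentage between 0 and 100, as described above; the function name and the sample percentages are hypothetical.

```python
import math


def integrated_reliability(aras_pct, cocoso_pct, topsis_pct):
    """Geometric mean of the three method-level reliability percentages."""
    scores = [aras_pct, cocoso_pct, topsis_pct]
    if any(score < 0 for score in scores):
        raise ValueError("reliability percentages must be non-negative")
    return math.prod(scores) ** (1 / len(scores))


# Hypothetical method-level results for one information system.
example_index = integrated_reliability(90.0, 80.0, 70.0)
```

Unlike the arithmetic mean, the geometric mean is pulled down by a single low score, so a system cannot compensate for a poor result in one method with high results in the others to the same extent.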

For our research, we needed real data. To this end, we contacted several IT companies, who agreed to assist us and provided information about the information systems they supported. The companies strictly insisted on confidentiality: we cannot disclose the names of the systems themselves or the companies. We were provided only with system descriptions and measurement results for the indicators necessary for assessing reliability.

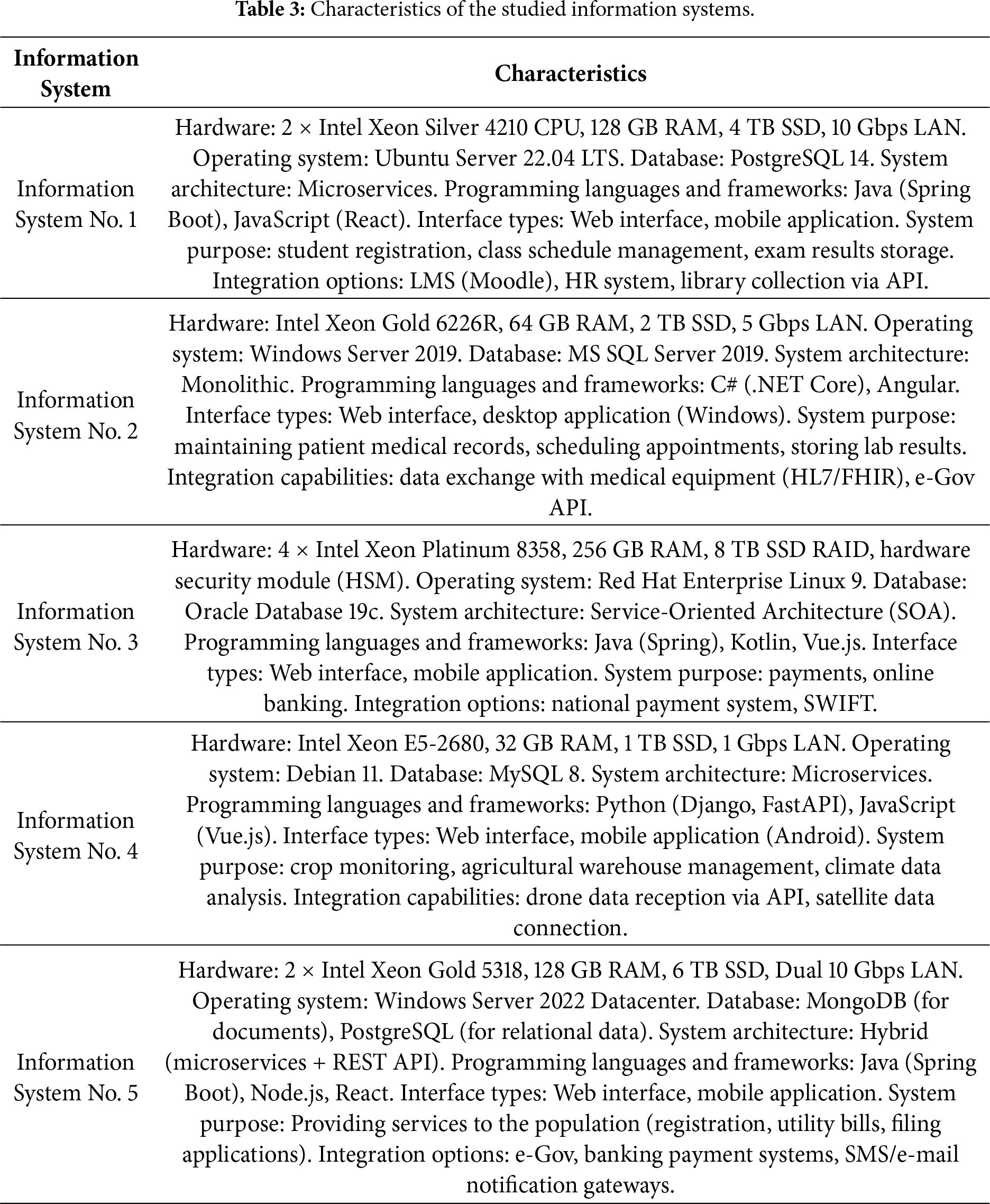

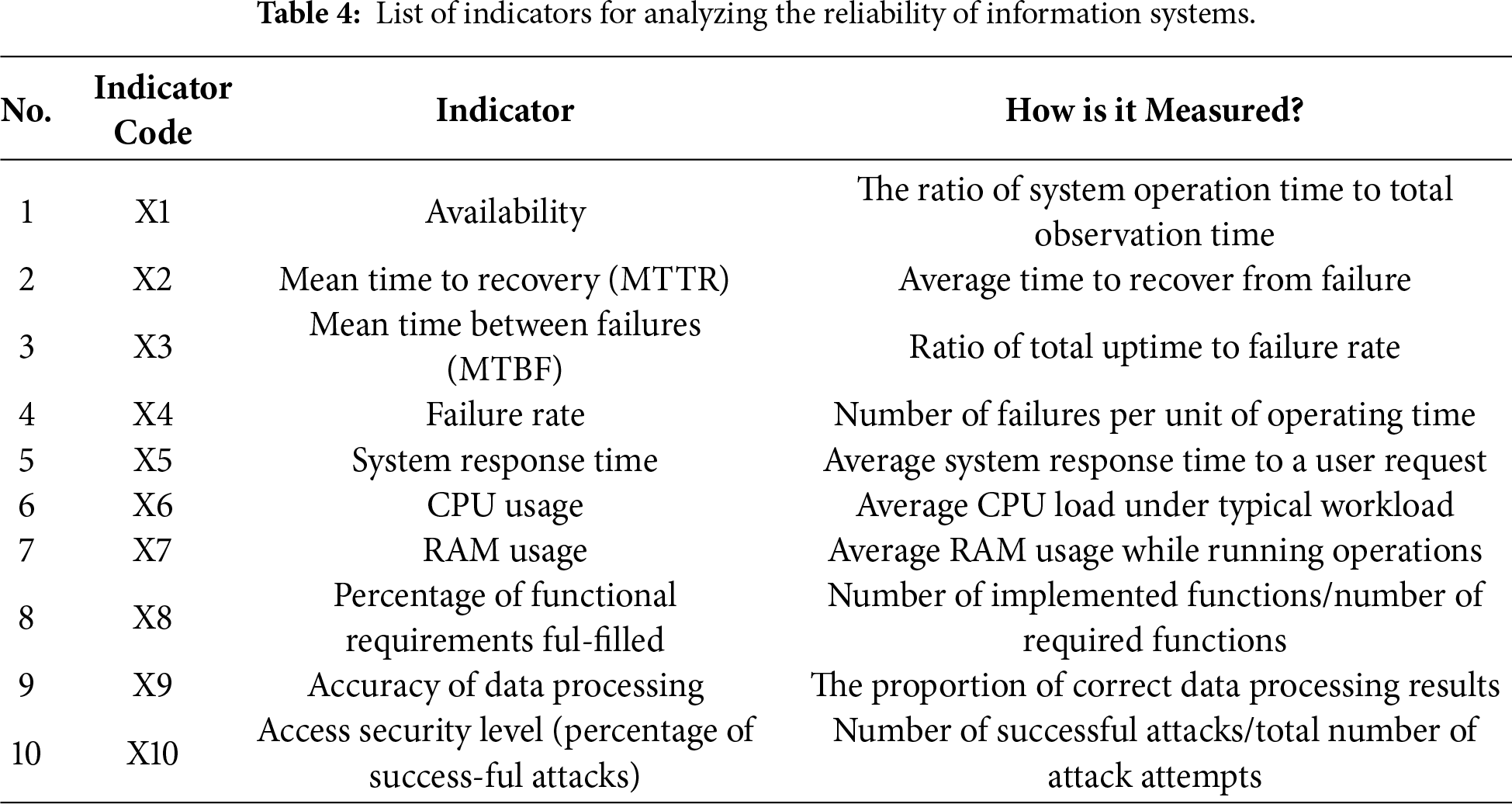

Based on this data, we created a dataset that we use for machine learning and multicriteria analysis. For the experiment, we included data from five different information systems. Their key characteristics are presented in Table 3.

For a clearer presentation of the experimental data, the information systems described above are considered in simplified form. Their reliability is analyzed using a set of key indicators that characterize the systems most fully and systematically; the indicators are listed in Table 4. This makes it possible to present the data compactly while performing the multicriteria analysis and visualizing the results.

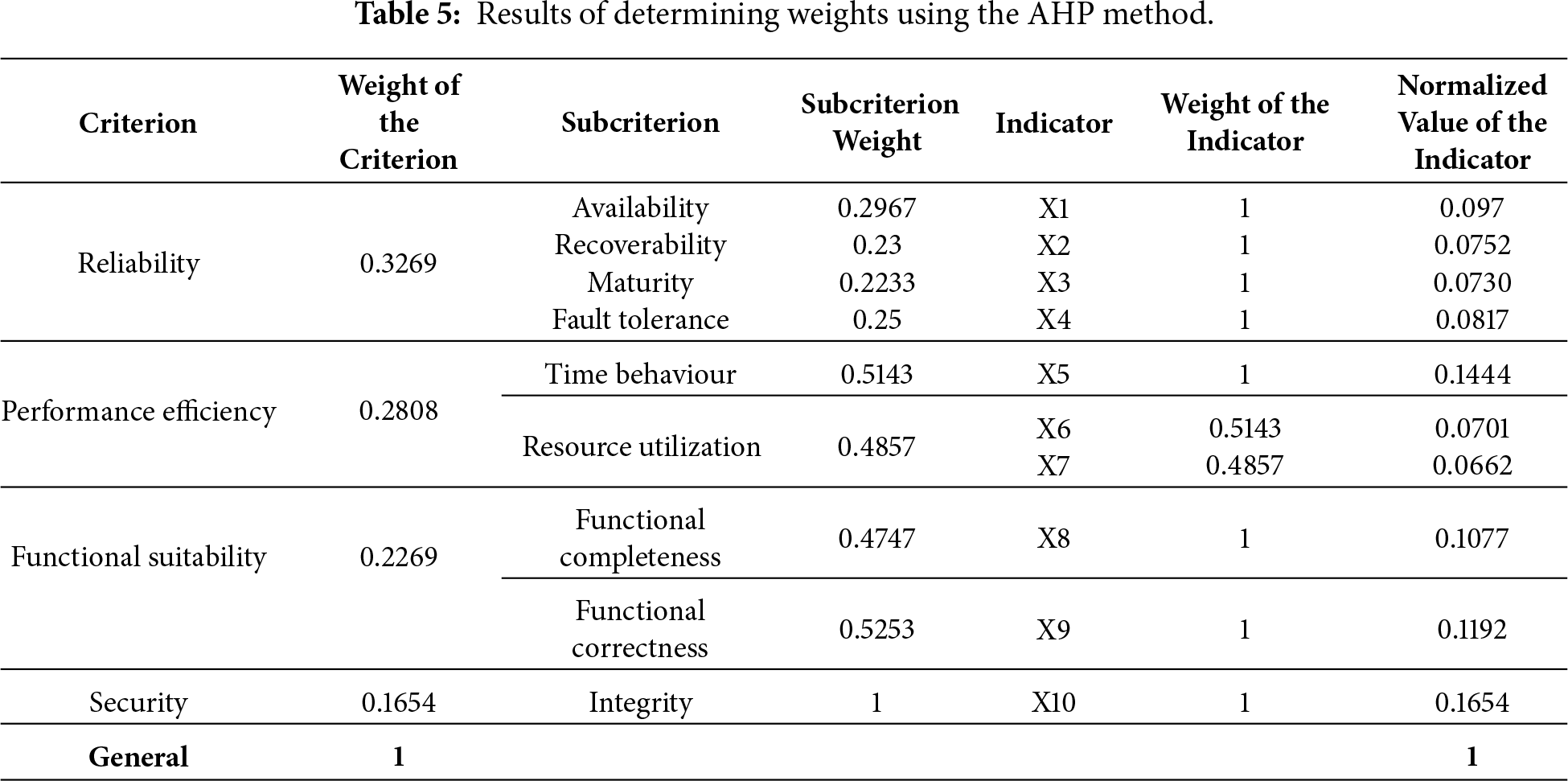

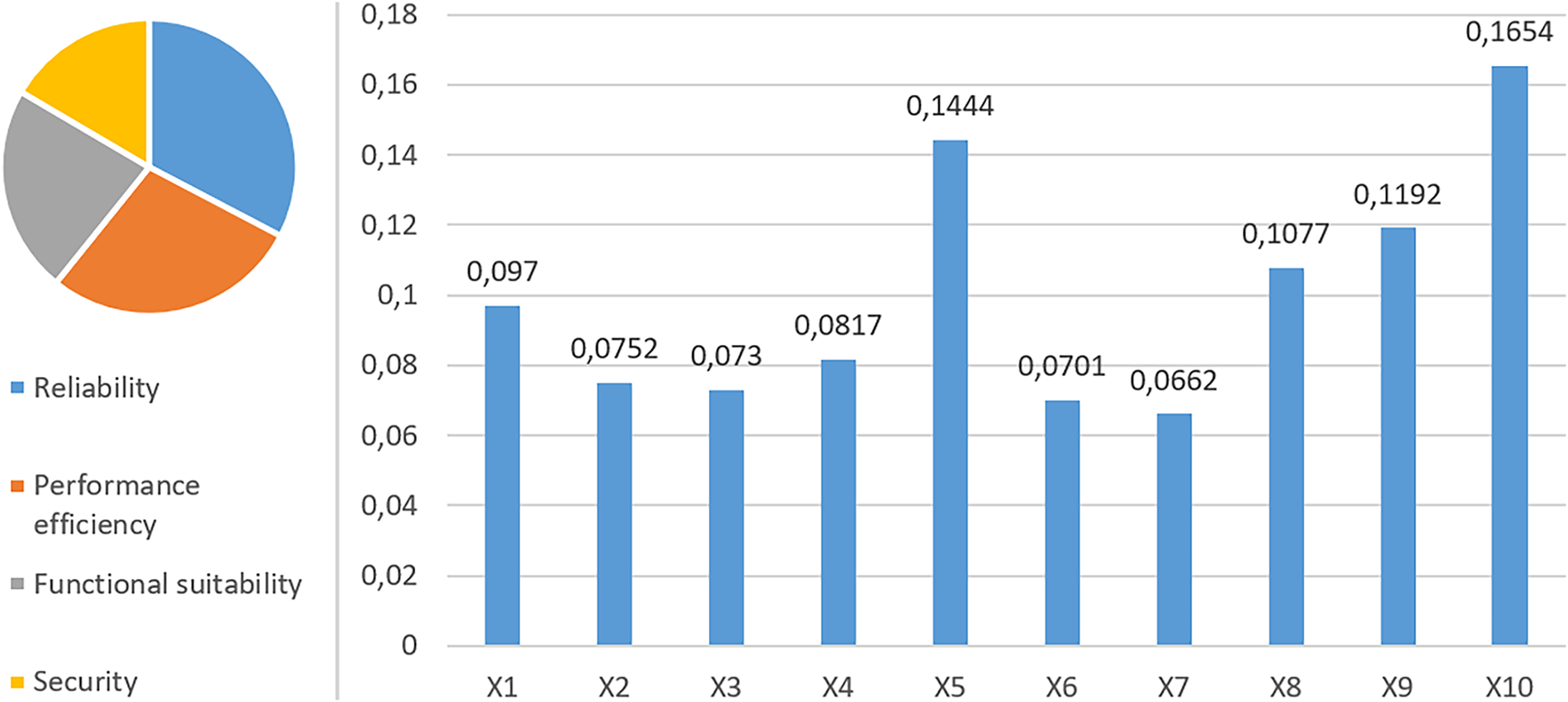

The AHP pairwise comparison method was used to calculate the weights. The evaluation used the average values obtained from a panel of 25 experts: IT system architects (8), software engineers (7), information security specialists (5), and academic researchers in information systems (5). All experts had at least 7 years of professional experience and had previously participated in system evaluation or quality assurance projects. The consistency ratio (CR) of all matrices was below 0.1, indicating acceptable consistency of the pairwise judgments.
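The weight calculation and consistency check can be sketched as follows for a single pairwise comparison matrix. The sketch uses power iteration to approximate the principal eigenvector and Saaty's commonly tabulated random index values; how the 25 expert judgments were averaged is not specified here, so that step is omitted. The function name and the example matrix are hypothetical.

```python
# Saaty's random index (RI) for matrices of order 1 to 9.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}


def ahp_weights(pairwise, iterations=100):
    """Return (weights, consistency_ratio) for a reciprocal pairwise matrix."""
    n = len(pairwise)
    weights = [1.0 / n] * n
    # Power iteration converges to the principal eigenvector.
    for _ in range(iterations):
        product = [sum(pairwise[i][j] * weights[j] for j in range(n)) for i in range(n)]
        total = sum(product)
        weights = [value / total for value in product]

    # Estimate the principal eigenvalue from A*w = lambda_max * w.
    product = [sum(pairwise[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(p / w for p, w in zip(product, weights)) / n

    consistency_index = (lambda_max - n) / (n - 1) if n > 1 else 0.0
    random_index = RANDOM_INDEX[n]
    consistency_ratio = consistency_index / random_index if random_index else 0.0
    return weights, consistency_ratio


# Hypothetical, perfectly consistent judgments for three criteria.
example_matrix = [[1.0, 5 / 3, 2.5],
                  [0.6, 1.0, 1.5],
                  [0.4, 2 / 3, 1.0]]
example_weights, example_cr = ahp_weights(example_matrix)
```

A matrix is conventionally accepted when the returned ratio is below 0.1, which is the threshold cited above.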

Based on this data, the criteria, subcriteria, and indicators were prioritized, after which the values were normalized. The final results are summarized in Table 5, and a graphical representation of the structure and distribution of the weights is shown in Fig. 3.

Figure 3: Graphical representation of the distribution of weights of criteria and indicators.

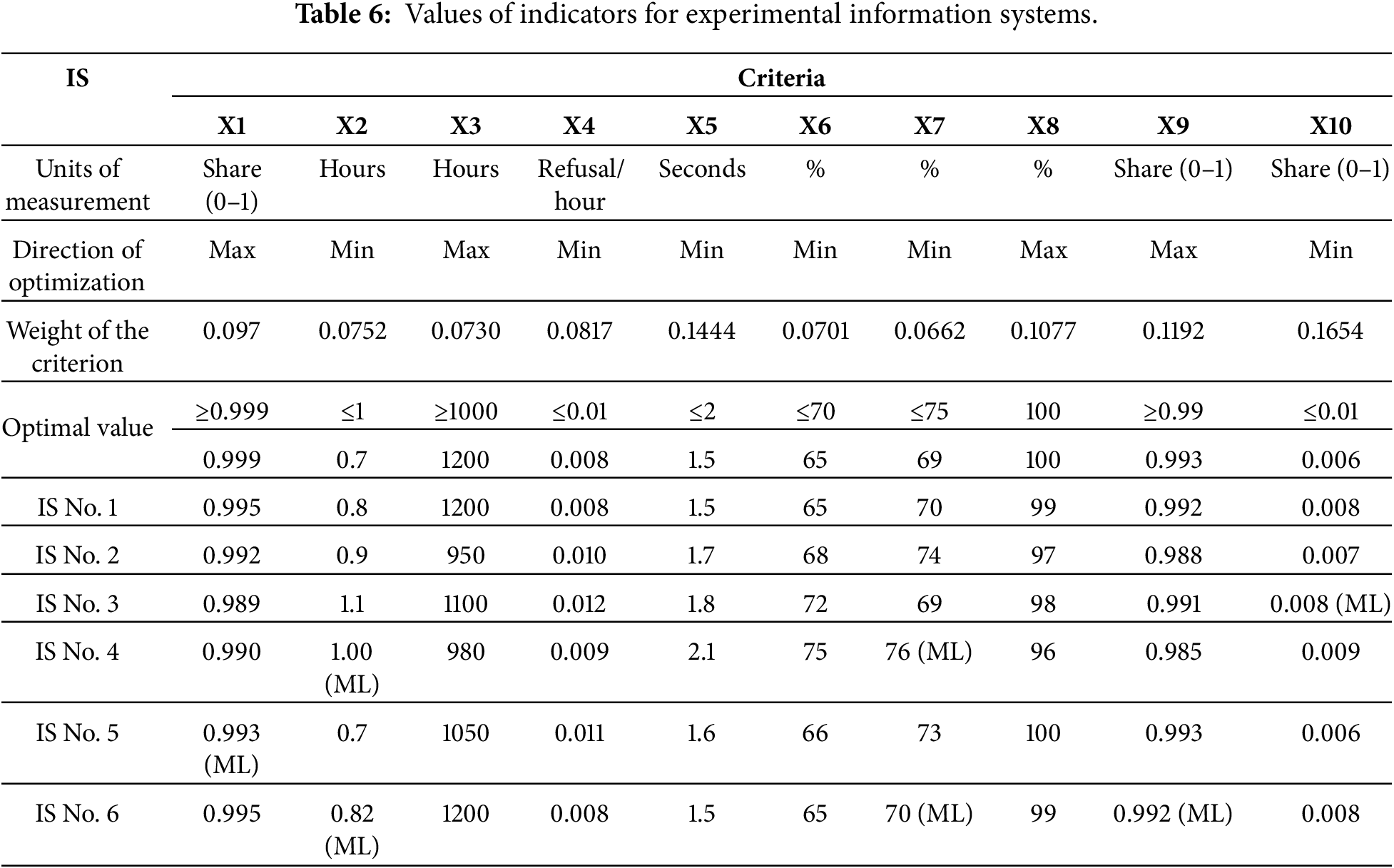

Table 6 presents the indicator values for the five experimental information systems, along with their optimal values and optimization directions. A sixth system was also created, similar to the first. Several gaps were intentionally left in its data; these are later reconstructed using machine learning methods to demonstrate the handling of incomplete data and to compare the obtained results.

For indicators with a “max” optimization direction, if a measured value exceeds the specified optimal value, the highest measured value is taken as the reference value; otherwise, the original optimal value is kept. For indicators with a “min” optimization direction, the same logic applies in reverse: if a measured value is smaller than the specified optimal value, the lowest measured value is used as the reference, while otherwise the original optimal value is preserved. This approach allows the specific characteristics of the indicators to be taken into account accurately during the comparative analysis and the formation of the summary assessment.
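The reference-value rule described above can be expressed compactly. The sketch below is one reading of that rule; the function name and the sample values are hypothetical.

```python
def reference_value(measured, optimal, direction):
    """Pick the reference value for one indicator.

    measured  -- measured values of the indicator across all systems
    optimal   -- the specified optimal value
    direction -- "max" if larger is better, "min" if smaller is better
    """
    if direction == "max":
        # A measurement above the specified optimum becomes the new reference.
        return max(optimal, max(measured))
    if direction == "min":
        # A measurement below the specified optimum becomes the new reference.
        return min(optimal, min(measured))
    raise ValueError("direction must be 'max' or 'min'")


# Hypothetical availability values (%), specified optimum 99.7.
example_reference = reference_value([99.5, 99.9, 99.2], 99.7, "max")
```

With this rule no measured value can exceed the reference in the favorable direction, so a ratio to the reference stays within 0% to 100%.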

As shown in Fig. 4, a final matrix was generated, reflecting the impact of each indicator on the overall reliability of the systems. This data presentation format allows for comparison not only of alternatives but also of the optimal value, highlighting the strengths and weaknesses of each information system. The obtained results served as the basis for further calculation of integrated indicators using the ARAS method and their visualization in the form of a diagram.

Figure 4: Results of applying the ARAS method.

The final results calculated using the CoCoSo method are presented in Fig. 5. These values represent composite scores of all indicators and permit relative analysis among the six information systems.

Figure 5: Results of applying the CoCoSo method.

Fig. 6 shows the results of TOPSIS. When alternatives are compared, the optimization direction of each criterion is taken into account: for indicators with a “max” direction, higher values indicate better system quality, whereas for indicators with a “min” direction, lower values are preferable. This makes the comparison of alternatives fair and exposes the strong and weak points of the information systems under study more objectively.

Figure 6: Results of applying the TOPSIS method.

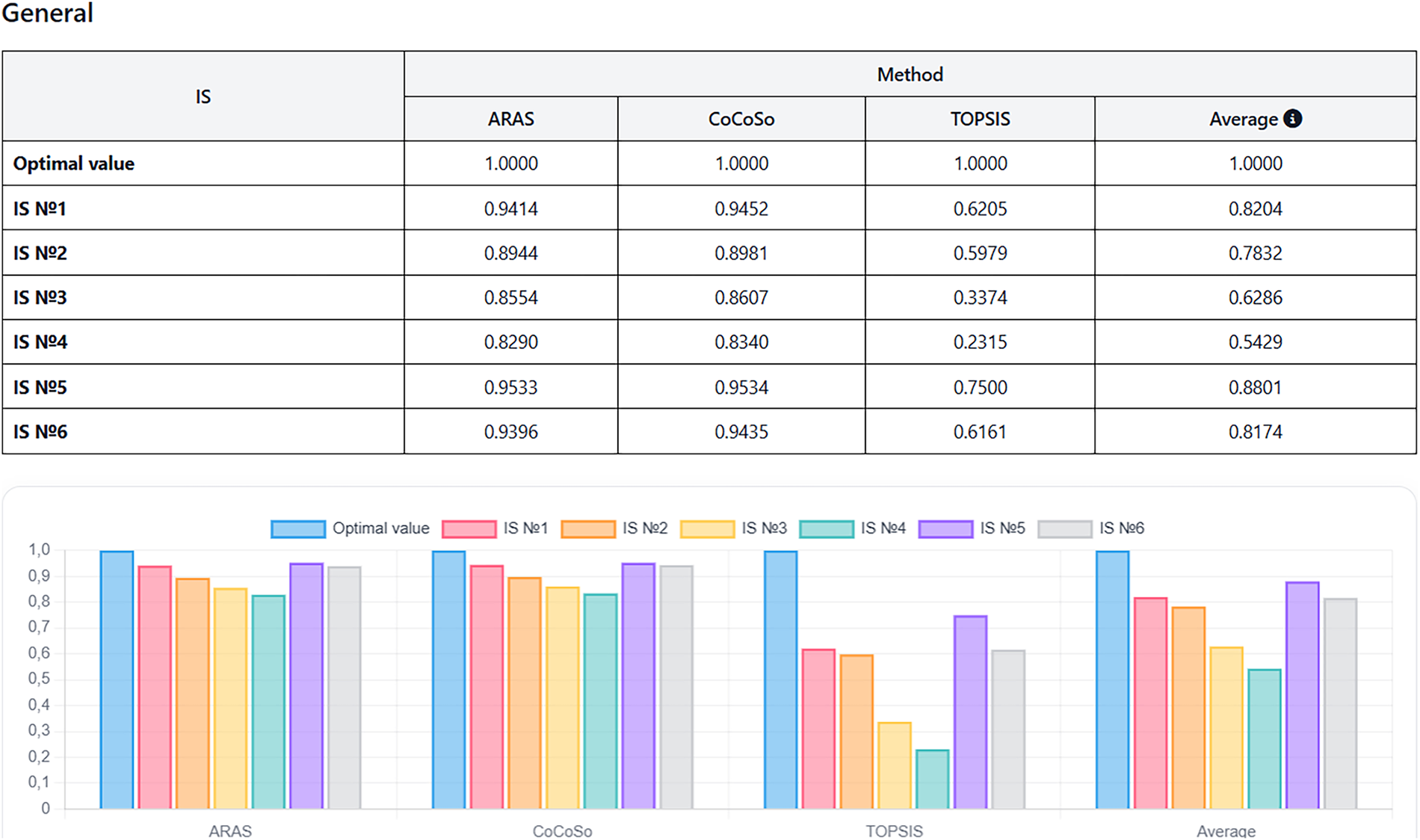

Reliability assessment was conducted using three multicriteria analysis methods: ARAS, CoCoSo, and TOPSIS. The results showed that, according to the ARAS and CoCoSo methods, all six systems under study had values close to optimal, indicating a relatively high level of reliability. IS No. 1 and No. 5 demonstrated the most consistent results, while IS No. 3 and No. 4 showed relatively low values (Fig. 7).

Figure 7: Final results of the information systems reliability assessment.

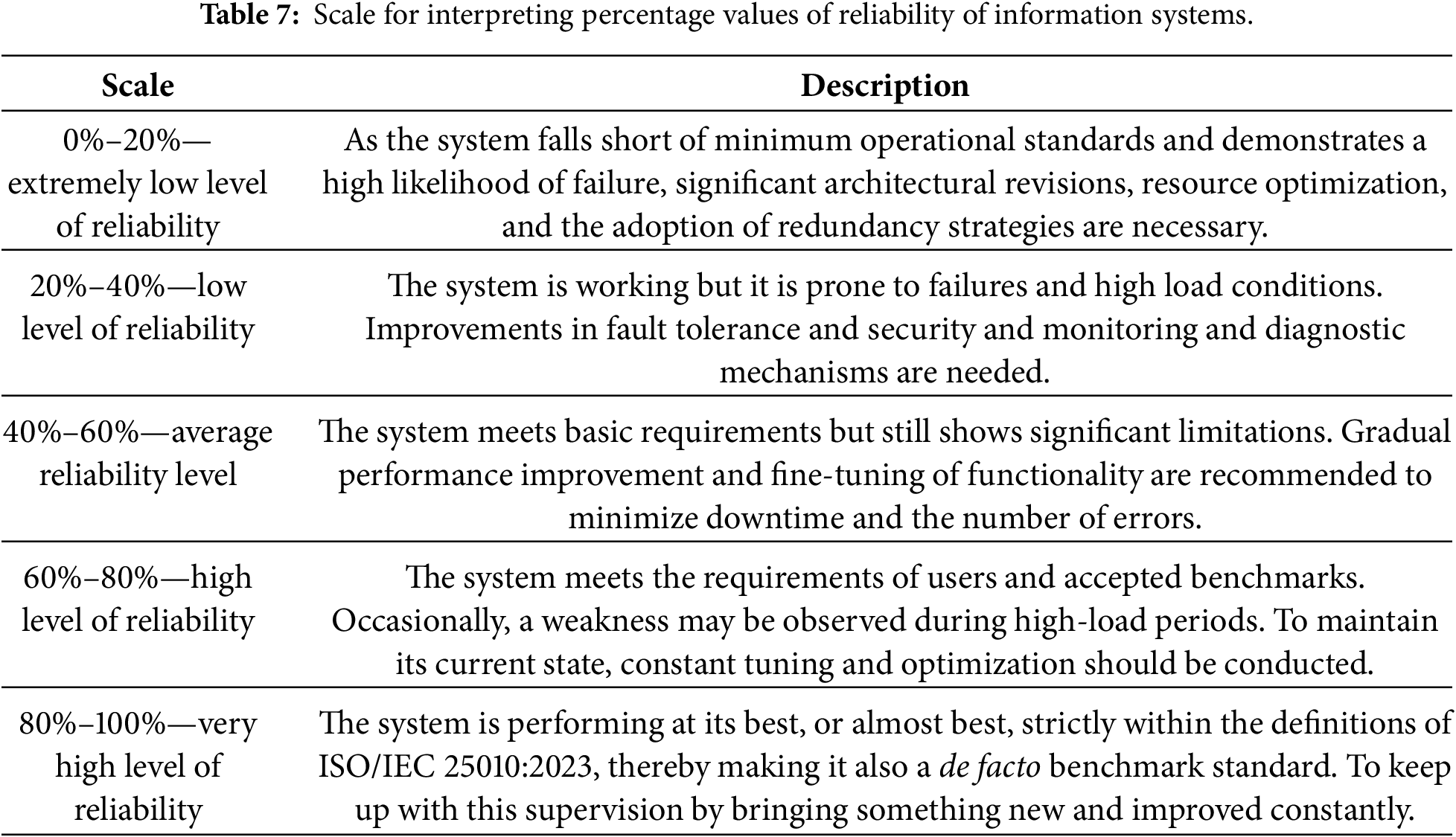

To interpret the obtained reliability percentage values, a scale based on recommendations from international software quality standards was used. This scale allows for the classification of information systems by reliability levels and the development of practical recommendations (Table 7).

An additional sensitivity test was carried out to check the stability of the hybrid methodology under varying weighting conditions. The indicator weights obtained with the AHP method were varied by ±10%, and the composite scores were then recalculated with the TOPSIS and CoCoSo methods. The analysis showed that these small weight changes did not alter the final ranking of the information systems. Only slight changes were observed in a few borderline cases, mostly for the “Security” and “Response Time” metrics. This supports the robustness and stability of the model when the weighting configuration changes within small limits.
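A sensitivity check of this kind can be sketched as follows. The exact perturbation scheme is not detailed here, so the sketch assumes that one weight at a time is scaled by ±10% and the weights are then renormalized to sum to 1. A simple weighted sum stands in for the scoring method; in practice a TOPSIS or CoCoSo scoring function would be passed as `scorer`. All names and numbers are hypothetical.

```python
def weighted_sum(matrix, weights):
    """Stand-in scoring method: weighted sum of already normalized values."""
    return [sum(w * x for w, x in zip(weights, row)) for row in matrix]


def ranking(scores):
    """Indices of the alternatives ordered from best to worst score."""
    return sorted(range(len(scores)), key=lambda i: -scores[i])


def perturbed_weights(weights, index, factor):
    """Scale one weight by `factor` and renormalize so the weights sum to 1."""
    adjusted = list(weights)
    adjusted[index] *= factor
    total = sum(adjusted)
    return [w / total for w in adjusted]


def ranking_is_stable(matrix, weights, scorer=weighted_sum, delta=0.10):
    """True if no single-weight change of +/- delta alters the ranking."""
    baseline = ranking(scorer(matrix, weights))
    for j in range(len(weights)):
        for factor in (1 - delta, 1 + delta):
            trial = perturbed_weights(weights, j, factor)
            if ranking(scorer(matrix, trial)) != baseline:
                return False
    return True


# Hypothetical normalized indicator values for three systems.
example = [[0.9, 0.8, 0.7], [0.6, 0.5, 0.9], [0.3, 0.4, 0.2]]
example_stable = ranking_is_stable(example, [0.5, 0.3, 0.2])
```

Comparing full rankings is a strict criterion; a softer variant could instead report a rank correlation between the baseline and each perturbed ranking.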

This paper proposes and explores a hybrid methodology for assessing the reliability of information systems based on the combined use of ISO/IEC 25010:2023, multicriteria analysis methods (ARAS, CoCoSo, and TOPSIS), the Analytic Hierarchy Process (AHP), and machine learning algorithms. The primary goal of the study was to overcome the fragmentation of existing approaches and develop a holistic, quantitatively interpretable reliability assessment applicable to real-world information system operations.

ISO/IEC 25010:2023 was chosen as the conceptual basis for the methodology, allowing reliability to be viewed not as a standalone indicator, but as a systemic property encompassing availability, fault tolerance, maturity, and recoverability. The standard’s hierarchical structure ensured a logical decomposition of requirements and linked abstract quality characteristics to specific measurable indicators. The use of the AHP method made it possible to incorporate expert knowledge and accurately determine the relative importance of criteria and indicators. A significant practical aspect of the work was addressing the problem of data incompleteness, which is typical when assessing real-world information systems. To this end, machine learning was integrated into the methodology for missing value imputation. Experiments demonstrated that using machine learning at the data preparation stage improves the completeness and consistency of the initial data without distorting the final assessment results.

To generate the final reliability assessment, three different multicriteria analysis methods (ARAS, CoCoSo, and TOPSIS) were used. Their combined use reduced the impact of the limitations of individual methods and increased the robustness of the results. The resulting assessments demonstrated high consistency between the methods, and the sensitivity analysis confirmed the stability of the final conclusions when the criterion weights change within acceptable limits.

The practical value of the proposed methodology lies in its ability to comprehensively determine the reliability level of an information system, taking into account all the key criteria regulated by the ISO/IEC 25010:2023 standard. The final assessment, presented in a visual and interpretable form, facilitates the analysis of system status, the identification of weaknesses, and the comparison of information systems with different architectures and purposes. This makes the developed approach a convenient tool for supporting decisions in quality management, modernization, and the development of digital systems.

Overall, the proposed methodology represents a reproducible and practice-oriented tool for assessing the reliability of information systems. This work does not aim to create new algorithms, but rather demonstrates the sound integration of existing methods into a single model adapted to modern requirements and real-world operating conditions, which determines its scientific and practical significance.

Acknowledgement: Not applicable.

Funding Statement: The work was carried out with the financial support of the Ministry of Science and Higher Education of the Republic of Kazakhstan, grant No. AP25793823.

Author Contributions: The authors confirm contribution to the paper as follows: Conceptualization, Nurbek Sissenov and Dina Satybaldina; methodology, Nurbek Sissenov and Dina Satybaldina; software, Nurbek Sissenov and Gulden Ulyukova; validation, Nurbek Sissenov and Gulden Ulyukova; formal analysis, Nurbek Sissenov; investigation, Nurbek Sissenov and Gulden Ulyukova; resources, Gulden Ulyukova; data curation, Nurbek Sissenov, Dina Satybaldina and Nikolaj Goranin; writing—original draft preparation, Nurbek Sissenov and Gulden Ulyukova; writing—review and editing, Dina Satybaldina and Nikolaj Goranin; visualization, Nurbek Sissenov; supervision, Nurbek Sissenov and Dina Satybaldina; project administration, Nurbek Sissenov and Dina Satybaldina; funding acquisition, Nurbek Sissenov and Dina Satybaldina. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: Data available within the article.

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest.

Abbreviations

| Abbreviation | Meaning |
| --- | --- |
| AHP | Analytic Hierarchy Process |
| ARAS | Additive Ratio Assessment |
| CoCoSo | Combined Compromise Solution |
| TOPSIS | Technique for Order of Preference by Similarity to Ideal Solution |
| XGBoost | Extreme Gradient Boosting |
| MCDM | Multi-Criteria Decision-Making |
| IS | Information System |
| ML | Machine Learning |
| CR | Consistency Ratio |

References

1. Carpitella S, Carpitella F, Certa A, Brentan B, Izquierdo J. Advanced risk management software for multi-criteria decision-making in uncertain scenarios. Comput Appl Math. 2025;44(7):379. doi:10.1007/s40314-025-03338-0. [Google Scholar] [CrossRef]

2. Tworek K. Reliability of information systems in organization in the context of banking sector: empirical study from Poland. Cogent Bus Manage. 2018;5(1):1522752. doi:10.1080/23311975.2018.1522752. [Google Scholar] [CrossRef]

3. Dubey SK, Jasra B. Reliability assessment of component based software systems using fuzzy and ANFIS techniques. Int J Syst Assur Eng Manage. 2017;8(2):1319–26. doi:10.1007/s13198-017-0602-z. [Google Scholar] [CrossRef]

4. Foidl H, Felderer M. Integrating software quality models into risk-based testing. Softw Qual J. 2018;26(2):809–47. doi:10.1007/s11219-016-9345-3. [Google Scholar] [CrossRef]

5. Grote T, Genin K, Sullivan E. Reliability in machine learning. Philos Compass. 2024;19(5):e12974. doi:10.1111/phc3.12974. [Google Scholar] [CrossRef]

6. Vinodh S, Swarnakar V. Lean Six Sigma project selection using hybrid approach based on fuzzy DEMATEL–ANP–TOPSIS. Int J Lean Six Sigma. 2015;6(4):313–38. doi:10.1108/ijlss-12-2014-0041. [Google Scholar] [CrossRef]

7. Budiman E, Wati M, Widians JA, Puspitasari N, Firdaus M, Alameka F. ISO/IEC 9126 quality model for evaluation of student academic portal. In: proceedings of 2nd International Conference on Business, Finance, Management and Economics (BIZFAME); 2023 Jun 22–23;Kedah, Malaysia. doi:10.21834/e-bpj.v8iSI15.5104. [Google Scholar] [CrossRef]

8. ISO/IEC 25010:2023. Systems and software engineering—systems and software Quality Requirements and Evaluation (SQuaRE)—product quality model. Geneva, Switzerland: International Organization for Standardization; 2023. [Google Scholar]

9. Tamura Y, Kawakami M, Yamada S. Reliability modeling and analysis for open source cloud computing. Proc Inst Mech Eng Part O J Risk Reliab. 2013;227(2):179–86. doi:10.1177/1748006x12475110. [Google Scholar] [CrossRef]

10. Gobov D, Zuieva O. Software quality attributes in requirements engineering. Int J Inf Technol Comput Sci. 2025;17(4):38–48. doi:10.5815/ijitcs.2025.04.04. [Google Scholar] [CrossRef]

11. Raut RD, Bhasin HV, Kamble SS. Evaluation of supplier selection criteria by combination of AHP and fuzzy DEMATEL method. Int J Bus Innov Res. 2011;5(4):359. doi:10.1504/ijbir.2011.041056. [Google Scholar] [CrossRef]

12. Băcescu Ene GV, Stoia MA, Cojocaru C, Todea DA. SMART multi-criteria decision analysis (MCDA)-one of the keys to future pandemic strategies. J Clin Med. 2025;14(6):1943. doi:10.3390/jcm14061943. [Google Scholar] [PubMed] [CrossRef]

13. Yazdani M, Zarate P, Kazimieras Zavadskas E, Turskis Z. A combined compromise solution (CoCoSo) method for multi-criteria decision-making problems. Manag Decis. 2019;57(9):2501–19. doi:10.1108/md-05-2017-0458. [Google Scholar] [CrossRef]

14. Ershadi MJMJ, Forouzandeh M. Information security risk management of research information systems: a hybrid approach of fuzzy FMEA, AHP, TOPSIS and Shannon entropy. J Digit Inf Manage. 2019;17(6):321. doi:10.6025/jdim/2019/17/6/321-336. [Google Scholar] [CrossRef]

15. Hemmati N, Rahiminezhad Galankashi M, Imani DM, Mokhatab Rafiei F. An integrated fuzzy-AHP and TOPSIS approach for maintenance policy selection. Int J Qual Reliab Manage. 2020;9(10):1275–99. doi:10.1108/ijqrm-10-2018-0283. [Google Scholar] [CrossRef]

16. Pamucar D, Ecer F, Gligorić Z, Gligorić M, Deveci M. A novel WENSLO and ALWAS multicriteria methodology and its application to green growth performance evaluation. IEEE Trans Eng Manage. 2024;71:9510–25. doi:10.1109/TEM.2023.3321697. [Google Scholar] [CrossRef]

17. Mardani A, Jusoh A, Zavadskas EK. Fuzzy multiple criteria decision-making techniques and applications–Two decades review from 1994 to 2014. Expert Syst Appl. 2015;42(8):4126–48. doi:10.1016/j.eswa.2015.01.003. [Google Scholar] [CrossRef]

18. Kazimieras Zavadskas E, Kazimieras Zavadskas E, Antucheviciene J, Adeli H, Turskis Z, Adeli H. Hybrid multiple criteria decision making methods: a review of applications in engineering. Sci Iran. 2016;23(1):1–20. doi:10.24200/sci.2016.2093. [Google Scholar] [CrossRef]

19. Demir G, Chatterjee P, Pamucar D. Sensitivity analysis in multi-criteria decision making: a state-of-the-art research perspective using bibliometric analysis. Expert Syst Appl. 2024;237:121660. doi:10.1016/j.eswa.2023.121660. [Google Scholar] [CrossRef]

20. Saraygord Afshari S, Enayatollahi F, Xu X, Liang X. Machine learning-based methods in structural reliability analysis: a review. Reliab Eng Syst Saf. 2022;219:108223. doi:10.1016/j.ress.2021.108223. [Google Scholar] [CrossRef]

21. Chatterjee K, Zavadskas EK, Tamošaitienė J, Adhikary K, Kar S. A hybrid MCDM technique for risk management in construction projects. Symmetry. 2018;10(2):46. doi:10.3390/sym10020046. [Google Scholar] [CrossRef]

22. Mostafa AM. An MCDM approach for cloud computing service selection based on best-only method. IEEE Access. 2021;9:155072–86. doi:10.1109/ACCESS.2021.3129716. [Google Scholar] [CrossRef]

23. Grimmelikhuijsen S. Explaining why the computer says no: algorithmic transparency affects the perceived trustworthiness of automated decision-making. Public Adm Rev. 2023;83(2):241–62. doi:10.1111/puar.13483. [Google Scholar] [CrossRef]

24. Boranbayev A, Boranbayev S, Sissenov N, Goranin N. Method and software system for assessing the reliability of information systems. J Theor Appl Inf Technol. 2021;99(19):4436–47. doi:10.1007/978-3-030-89912-7_38. [Google Scholar] [CrossRef]

25. Jaiswal A, Malhotra R. Software reliability prediction using machine learning techniques. Int J Syst Assur Eng Manage. 2018;9(1):230–44. doi:10.1007/s13198-016-0543-y. [Google Scholar] [CrossRef]

26. Tamascelli N, Campari A, Parhizkar T, Paltrinieri N. Artificial Intelligence for safety and reliability: a descriptive, bibliometric and interpretative review on machine learning. J Loss Prev Process Ind. 2024;90:105343. doi:10.1016/j.jlp.2024.105343. [Google Scholar] [CrossRef]

27. Thomas T, Rajabi E. A systematic review of machine learning-based missing value imputation techniques. Data Technol Appl. 2021;55(4):558–85. doi:10.1108/dta-12-2020-0298. [Google Scholar] [CrossRef]

28. Bakurova A, Bilyi V. Development of an approach for predicting the cost of damaged infrastructure recovery with microservice implementation. Technol Audit Prod Reserv. 2025;5(2(85)):33–9. doi:10.15587/2706-5448.2025.339773. [Google Scholar] [CrossRef]

29. Levitin G, Finkelstein M, Xiang Y. Optimal inspections and mission abort policies for multistate systems. Reliab Eng Syst Saf. 2021;214:107700. doi:10.1016/j.ress.2021.107700. [Google Scholar] [CrossRef]

30. Deng Y, Lumley T. Multiple imputation through XGBoost. J Comput Graph Stat. 2024;33(2):352–63. doi:10.1080/10618600.2023.2252501. [Google Scholar] [CrossRef]

Copyright © 2026 The Author(s). Published by Tech Science Press.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
