Open Access

REVIEW

A Review on Penetration Testing for Privacy of Deep Learning Models

1 School of Mathematics, Physics and Computing, University of Southern Queensland, Toowoomba, QLD, Australia

2 Department of Engineering, La Trobe University, Melbourne, VIC, Australia

3 School of Computer and Data Science, Minjiang University, Fuzhou, China

4 School of Security Science and Engineering, Civil Aviation University of China, Tianjin, China

* Corresponding Author: Wencheng Yang. Email:

(This article belongs to the Special Issue: Artificial Intelligence Methods and Techniques to Cybersecurity)

Computers, Materials & Continua 2026, 87(2), 2 https://doi.org/10.32604/cmc.2026.076358

Received 19 November 2025; Accepted 22 January 2026; Issue published 12 March 2026

Abstract

As deep learning (DL) models are increasingly deployed in sensitive domains (e.g., healthcare), concerns over privacy and security have intensified. Conventional penetration testing frameworks, such as OWASP and NIST, are effective for traditional networks and applications but lack the capabilities to address DL-specific threats, such as model inversion, membership inference, and adversarial attacks. This review provides a comprehensive analysis of penetration testing for the privacy of DL models, examining the shortfalls of existing frameworks, tools, and testing methodologies. Through systematic evaluation of existing literature and empirical analysis, this review makes three major contributions: (i) a critical assessment of the inadequacies of traditional penetration testing frameworks when applied to DL-specific privacy vulnerabilities, (ii) a comprehensive evaluation of state-of-the-art privacy-preserving methods and their integration with penetration testing workflows, and (iii) the development of a structured framework that combines reconnaissance, threat modeling, exploitation, and post-exploitation phases specifically tailored for DL privacy assessment. Moreover, this review evaluates popular solutions such as the IBM Adversarial Robustness Toolbox and TensorFlow Privacy, alongside privacy-preserving techniques (e.g., Differential Privacy, Homomorphic Encryption, and Federated Learning), which we systematically analyze through comparative studies of their effectiveness, computational overhead, and practical deployment constraints. While these techniques offer promising safeguards, their adoption is hindered by accuracy loss, performance overheads, and the rapid evolution of attack strategies. Our findings reveal that no single existing solution provides comprehensive protection, which leads us to propose a hybrid approach that strategically combines multiple privacy-preserving mechanisms. 
The findings of this survey underscore an urgent need for automated, regulation-compliant penetration testing frameworks specifically tailored to DL systems. We argue for hybrid privacy solutions that combine multiple protective mechanisms to ensure both model accuracy and privacy. Building on our analysis, we present actionable recommendations for developing adaptive penetration testing strategies that incorporate automated vulnerability assessment, continuous monitoring, and regulatory compliance verification.

Penetration testing, also known as pen testing or ethical hacking, is the systematic process of identifying and analyzing security vulnerabilities within a system, network, or application in order to uncover weaknesses before malicious actors can exploit them [1,2]. It has become essential to cybersecurity because it enables organizations to proactively identify security gaps and strengthen their defenses against cyber threats [3–5]. Its primary goal is to discover and evaluate security flaws before they can be leveraged for unauthorized access or data exfiltration [6]. The process typically involves simulating various attack scenarios, evaluating the system’s response, and providing actionable recommendations to mitigate the identified vulnerabilities [7–9]. Through systematic penetration testing, organizations can effectively prioritize and remediate vulnerabilities, which significantly reduces the risk of data breaches, information theft, financial damage, and reputational harm.

Deep Learning (DL), a specialized branch of machine learning, has fundamentally transformed numerous industries through its ability to process and analyze vast quantities of data. By leveraging multi-layered neural networks, DL excels in domains requiring complex pattern recognition, such as image recognition, predictive analytics, and natural language processing [10]. These neural architectures enable advanced decision-making capabilities that have transformed critical sectors [11], including healthcare (e.g., disease diagnosis [12–14], medical image analysis [15–17], and electronic health record analysis [18–20]), banking (e.g., algorithmic trading, fraud detection, and risk management [21,22]), autonomous systems (e.g., robotics and self-driving vehicles [23,24]), and identity management (e.g., biometric recognition [25–28] and identity privacy protection [29–31]). However, these platforms inherently process and store sensitive data, which makes them attractive targets for malicious attacks. Consequently, the widespread deployment of DL systems has raised substantial privacy concerns.

While prior research has examined individual DL privacy threats including model inversion, model extraction, membership inference attacks, data poisoning, and adversarial exploitation, such studies typically analyze each attack separately and therefore do not provide a holistic evaluation of a deployed model’s privacy posture [6]. Traditional privacy-attack analyses often demonstrate the feasibility of specific attacks under controlled laboratory settings, without considering how these threats manifest across the full lifecycle of real-world systems [32]. In practice, DL models can leak private information through outputs, gradients, confidence scores, and latent representations, making them vulnerable in ways fundamentally different from classical software applications. These diverse leakage pathways underscore the need for more rigorous and comprehensive privacy-evaluation methodologies.

Penetration testing (PT) is therefore essential because it offers a structured, multi-phase, adversarial methodology capable of evaluating privacy leakage under realistic operational conditions. Unlike standalone attack demonstrations, PT incorporates reconnaissance, threat modeling, exploitation, and post-exploitation analysis, enabling a full-spectrum understanding of how privacy vulnerabilities emerge, propagate, and interact across interconnected DL components. Furthermore, PT evaluates practical, deployment-specific factors such as API exposure, query limits, gradient access, and system integration, which traditional privacy-attack research often overlooks. This distinction highlights why PT is critical for assessing DL privacy and why existing privacy analyses alone are insufficient for securing real-world deployments.

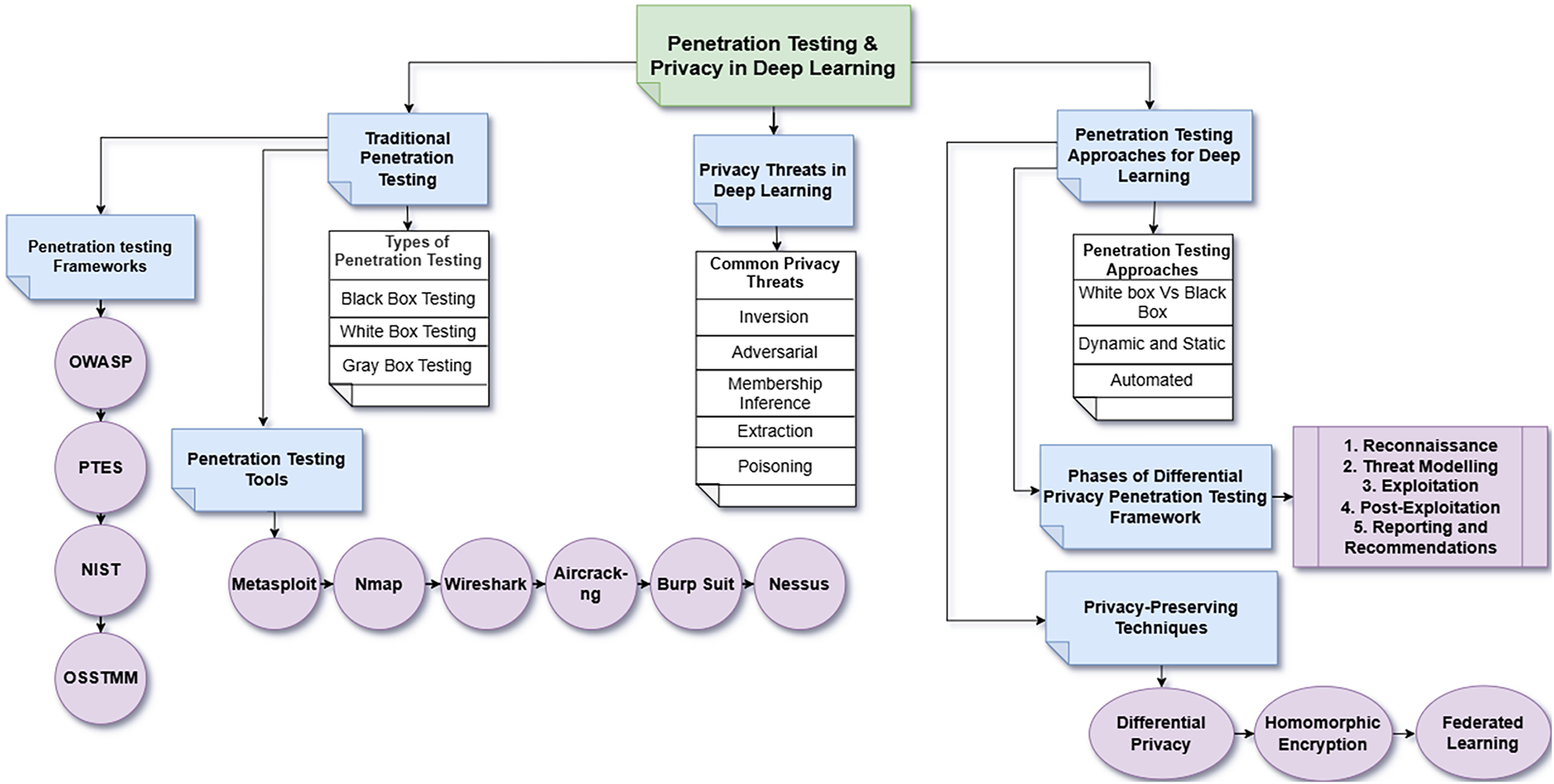

This review addresses a critical gap in the literature by providing a comprehensive analysis that specifically examines penetration testing methodologies for DL privacy, which distinguishes it from previous surveys that have focused either solely on general DL security [10] or on traditional penetration testing without DL considerations [4]. The motivation for this review arises from the recognition that existing privacy-attack research does not systematically incorporate attacker behaviour, lifecycle-based evaluation, or operational constraints, all of which are central components of penetration testing. While prior research on DL-related security risks and privacy protection has established a foundational understanding, it has not systematically evaluated how traditional penetration testing can be adapted to DL-specific privacy threats. By integrating these disparate resources, this review aims to establish a unified framework for developing systematic, reliable penetration testing strategies that target the privacy vulnerabilities inherent in DL systems. The overall analytical framework of this review is illustrated in Fig. 1, which presents a conceptual and methodological framework that integrates traditional penetration testing principles with deep learning–specific privacy threats and defenses. The components shown in the figure (e.g., privacy threats, evaluation objectives, and lifecycle-based assessment stages) are iterative and interdependent, and they can be revisited or adapted depending on the model architecture, threat assumptions, and evaluation objectives. This framework serves as a systematic guideline for designing, conducting, and interpreting privacy penetration tests of deep learning systems.

Figure 1: Overall workflow diagram illustrating the penetration testing framework for deep learning privacy.

This survey aims to fill this gap by providing a comprehensive review of penetration testing for DL privacy. Specifically, the contributions of this work are fourfold:

1. A critical analysis of the limitations of traditional penetration testing frameworks when applied to DL privacy.

2. A taxonomy of DL privacy threats and their mapping to penetration testing phases.

3. A comparative evaluation of state-of-the-art privacy-preserving methods and associated tools (e.g., IBM ART, TensorFlow Privacy, PySyft, FATE).

4. Actionable recommendations and a future research agenda for hybrid, automated, and regulation-compliant penetration testing tailored to DL systems.

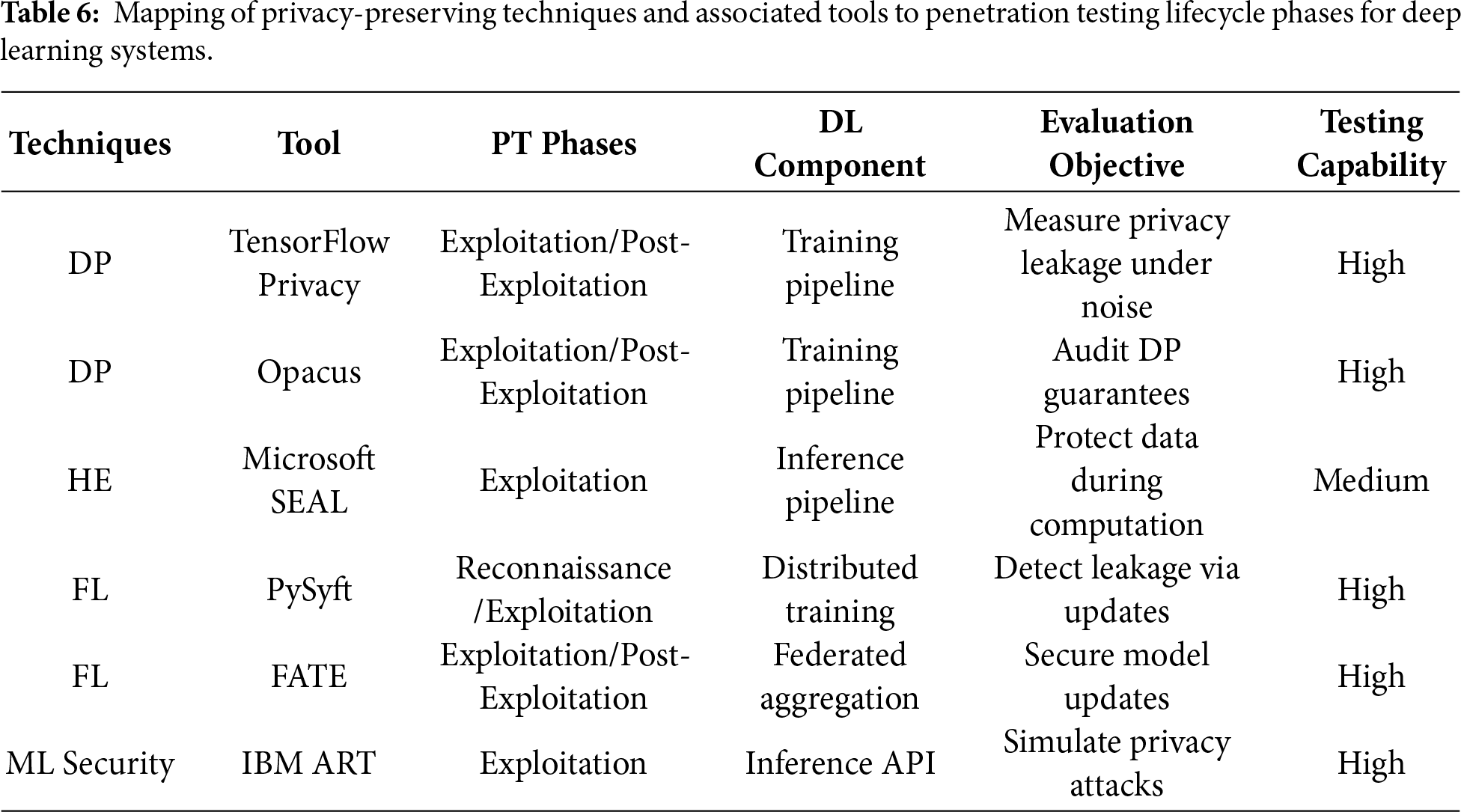

Unlike existing DL privacy and federated learning surveys, this review integrates privacy attacks, defenses, and evaluation within a penetration testing life-cycle tailored to deep learning systems, which is not jointly addressed in prior surveys. The comparison of our survey with some related existing surveys is listed in Table 1. The comparison highlights differences in scope and methodological focus rather than overall completeness or depth.

In contrast to AI red-teaming studies, which typically evaluate adversarial behaviours in isolated or robustness-focused scenarios, this review adopts a formal penetration testing life-cycle with explicit consideration of privacy leakage, system-level impact, and regulatory compliance for deep learning models.

In Table 1, “Yes” indicates explicit coverage, “Partial” denotes limited discussion, and “No” indicates that the aspect is not addressed.

Literature Review Methodology

This review adopts a structured literature analysis to identify relevant studies on penetration testing and deep learning privacy. The primary databases searched were IEEE Xplore, ACM Digital Library, ScienceDirect, Scopus, and Google Scholar. The search combined targeted keywords with logical operators to retrieve relevant studies. Core search terms included deep learning privacy, penetration testing, penetration testing in deep learning, model inversion, membership inference, adversarial attacks, differential privacy, homomorphic encryption, federated learning, and privacy-preserving machine learning. These terms were iteratively refined to ensure broad coverage while maintaining relevance to the research scope.

The inclusion criteria covered peer-reviewed journal articles, conference papers, and authoritative technical reports focusing on DL privacy threats, penetration testing methodologies, or privacy-preserving techniques; preprint repositories were also consulted to capture recent and emerging research developments. Studies unrelated to machine learning, lacking security relevance, or focused solely on traditional network security were excluded. Reference management and duplicate removal were conducted in Zotero to ensure consistency. After screening titles, abstracts, and full texts, more than 80 peer-reviewed studies were selected for in-depth analysis. The selected literature was then organized into thematic categories covering deep learning privacy threats, penetration testing strategies, privacy-preserving mechanisms, evaluation tools, and emerging research challenges. This systematic selection process supports the comprehensive and balanced nature of the review.

The remainder of this paper follows a structured progression from foundational concepts to practical applications and future directions. Section 2 establishes the theoretical foundation by examining traditional penetration testing, with particular emphasis on key methodologies, including black-box testing, white-box testing, and gray-box testing, alongside critical evaluation of established penetration testing frameworks including OWASP, PTES, NIST, and OSSTMM. Section 3 provides a comprehensive taxonomy of privacy risks specific to deep learning models, encompassing model inversion, adversarial attacks, membership inference, model extraction, and data poisoning. Drawing from these identified privacy vulnerabilities, Section 4 investigates the adaptation and enhancement of penetration testing methodologies to effectively evaluate and strengthen DL privacy, which includes detailed analysis of targeted testing strategies, attack simulation tools, and the integration of privacy-preserving techniques such as differential privacy, homomorphic encryption, and federated learning. Section 5 articulates the research questions that frame this investigation, while Section 6 critically analyzes existing challenges and presents future research directions. Section 7 concludes this work by synthesizing the key findings and contributions of this comprehensive review.

2 Traditional Penetration Testing

The growing complexity of modern technologies and the increasing sophistication of attack vectors continually reshape cybersecurity risks. Penetration testing is one of the most widely adopted methodologies for analyzing security vulnerabilities: it replicates real-world attacks to evaluate a system’s security posture, assessing configuration errors, weak passwords, insecure coding practices, and unpatched software. It is an essential component of proactive security frameworks (e.g., ISO 27001 and NIST SP 800-53) [39–42] and helps ensure organizational compliance with security standards and regulatory requirements. The process involves launching targeted attacks against the system to evaluate its security controls and determine its resilience against potential threats [43]. Although traditional penetration testing methods are extensively employed in information technology security, their effectiveness is limited when addressing privacy-specific vulnerabilities in DL models.

The following sections provide an examination and analysis of penetration testing types, tools, and frameworks.

2.1 Types of Penetration Testing

Penetration testing techniques are generally classified into three distinct categories based on the tester’s level of system knowledge [45,46], as shown in Fig. 2 (adapted from [44]). Although these classifications are well established in traditional cybersecurity assessments, their direct applicability to deep learning (DL) privacy evaluation is limited, as they do not inherently capture model-specific privacy leakage or training-data exposure.

Figure 2: Three types of penetration testing.

In Black-Box Testing, testers have no prior knowledge of the target system’s internal architecture, simulating real-world external attackers who rely on reconnaissance and observable system behaviour [39,47,48]. The methodology is particularly effective for evaluating the security posture of public-facing systems, such as firewalls, web applications, and public Application Programming Interfaces (APIs). Despite its effectiveness in simulating genuine attack vectors, black-box testing provides limited visibility into DL model internals and cannot directly assess privacy leakage arising from learned representations.

White-Box Testing, alternatively referred to as clear-box, glass-box, structural-box, or transparent-box testing [47–49], grants testers full access to the system architecture, source code, and configurations, enabling detailed identification of design flaws and implementation weaknesses. This methodology resembles an internal security audit, in which testers examine all system components, such as access control configurations, encryption implementations, and program logic. However, it incurs higher operational costs and requires extensive access to internal system information.

Gray-Box Testing, also designated as translucent-box testing, provides testers with partial information about the target system. This scenario simulates conditions where attackers possess limited insider knowledge, such as compromised credentials or restricted Application Programming Interface (API) access [47,49]. Gray-box testing enables testers to focus on high-risk areas without the extensive resource requirements of white-box testing, thus representing a strategic balance between effectiveness and operational authenticity. Nevertheless, without ML-specific evaluation techniques, gray-box testing remains insufficient for systematically identifying deep learning privacy threats such as membership inference or model inversion.

From an overall perspective, although these testing models remain foundational to penetration testing, their applicability to DL privacy assessment is limited unless extended with DL-specific attack methodologies and evaluation metrics.
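To make the three access levels concrete for a DL target, the following Python sketch models what a tester can observe in each setting. It is a minimal illustration under stated assumptions: the toy linear-softmax classifier and the functions `black_box_query`, `gray_box_query`, and `white_box_access` are hypothetical and do not correspond to any particular tool or API.

```python
import numpy as np

# Hypothetical toy "victim": a linear-softmax classifier (4 features -> 3 classes).
rng = np.random.default_rng(0)
W = rng.normal(size=(4, 3))
b = np.zeros(3)


def softmax(z):
    z = z - z.max()  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()


def black_box_query(x):
    """Label-only access: the tester observes just the predicted class."""
    return int(np.argmax(x @ W + b))


def gray_box_query(x):
    """Partial access, e.g. an API that also returns confidence scores."""
    return softmax(x @ W + b)


def white_box_access(x, y):
    """Full access: parameters plus the cross-entropy gradient w.r.t. the input."""
    p = softmax(x @ W + b)
    onehot = np.eye(len(b))[y]
    grad_x = W @ (p - onehot)  # dL/dx for logits x @ W + b
    return {"weights": W.copy(), "bias": b.copy(), "grad_x": grad_x}


x = rng.normal(size=4)
print(black_box_query(x))                 # a single class index
print(gray_box_query(x).round(3))         # a probability vector that sums to 1
print(white_box_access(x, 0)["grad_x"])   # input gradient, exposed only in white-box mode
```

In this sketch, label-only access exposes the least signal, returned confidence scores already support simple membership inference heuristics, and white-box access exposes the input gradients on which model inversion and gradient-based adversarial attacks rely.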

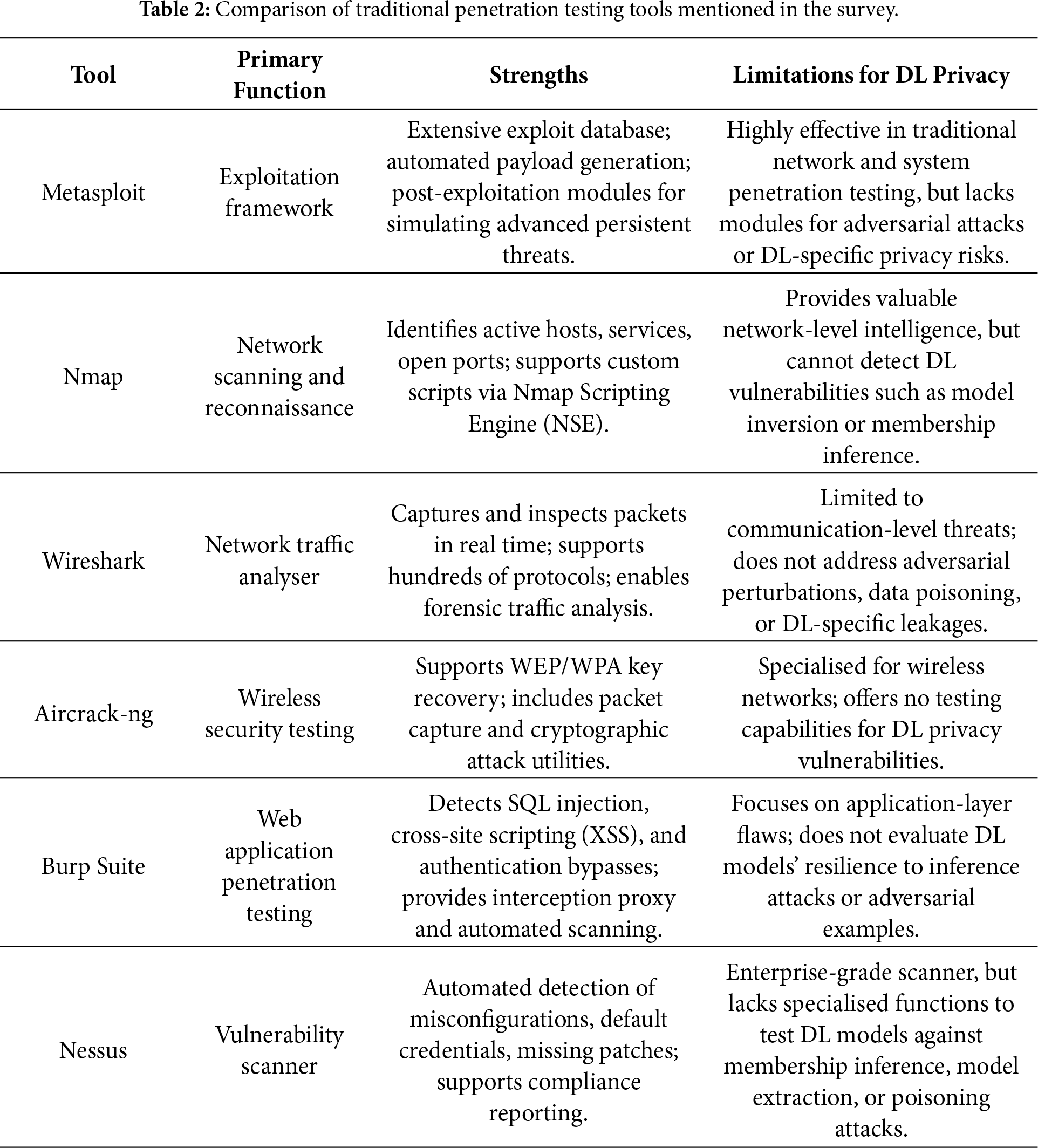

A range of established penetration testing tools is routinely used to assess system security across diverse technological environments. The following paragraphs present some of the most widely adopted tools in contemporary penetration testing practice. However, these tools are primarily designed for network-, application-, and protocol-level vulnerabilities and offer limited support for evaluating DL-specific privacy risks. Table 2 compares these penetration testing tools.

Metasploit is a robust and comprehensive penetration testing framework that supports automated exploitation, payload delivery, and post-exploitation analysis through an extensive library of attack modules. It serves as a flexible platform for replicating diverse attack scenarios [11,50]. Its advanced features, such as post-exploitation modules, payload encoding mechanisms, and evasion techniques, allow testers to simulate advanced persistent threats. Metasploit is particularly useful for discovering security vulnerabilities in network services and evaluating the effectiveness of existing security policies through controlled exploitation attempts. However, despite its strengths in traditional network and system security assessment, Metasploit does not provide native support for evaluating deep learning–specific threats, such as adversarial attacks, membership inference, or privacy leakage arising from model inference behavior.

Nmap (Network Mapper) functions as a sophisticated network scanning tool that facilitates comprehensive network reconnaissance through identification of active hosts, running services, open ports, and operating system fingerprinting, which collectively provide valuable intelligence for identifying potential attack vectors [11,51]. Its scripting engine further supports service enumeration and vulnerability detection across large-scale infrastructures. However, while Nmap is effective for mapping network-level attack surfaces, it does not support evaluation of deep learning–specific privacy risks, such as inference-based data leakage or adversarial manipulation of model behaviour.
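As a minimal illustration of the port-level reconnaissance that Nmap automates, the sketch below performs a plain TCP connect() scan using Python's standard `socket` module. Conceptually it resembles Nmap's TCP connect scan type (`-sT`), but it omits host discovery, service and version detection, timing control, and OS fingerprinting. The function name `tcp_connect_scan` is hypothetical, and such scans should only be run against systems one is authorized to test.

```python
import socket


def tcp_connect_scan(host, ports, timeout=0.5):
    """Return the ports on `host` that accept a full TCP connection."""
    open_ports = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
            s.settimeout(timeout)
            # connect_ex returns 0 on success instead of raising an exception
            if s.connect_ex((host, port)) == 0:
                open_ports.append(port)
    return open_ports


if __name__ == "__main__":
    # Self-contained demo: open a local listener, then scan it.
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as listener:
        listener.bind(("127.0.0.1", 0))  # port 0 lets the OS choose a free port
        listener.listen()
        port = listener.getsockname()[1]
        print(tcp_connect_scan("127.0.0.1", [port]) == [port])  # True
```

The sketch makes the limitation discussed above concrete: the output is a list of reachable ports, which reveals where a model-serving API is exposed but says nothing about what the model behind it leaks.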

Wireshark operates as an advanced network protocol analyzer that assists security experts in identifying suspicious network traffic patterns through real-time packet capture and detailed protocol analysis [11,52]. The application provides deep packet inspection capabilities that enable forensic analysis of network communications, protocol violations, and anomalous traffic behaviors [53]. It is commonly employed for traffic monitoring and post-incident forensic analysis in traditional network security assessments. However, Wireshark operates at the communication layer and does not provide visibility into deep learning model behavior or support assessment of privacy risks arising from inference outputs, gradient leakage, or adversarial manipulation.
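To illustrate the protocol dissection performed by an analyzer such as Wireshark, the following sketch decodes a fixed 20-byte IPv4 header (no options) with Python's `struct` module and verifies its ones'-complement header checksum as defined in RFC 791. It is a teaching sketch only: `parse_ipv4_header` is a hypothetical helper, the sample bytes are a commonly used textbook header rather than captured traffic, and real analyzers also handle options, fragmentation, and many higher-layer protocols.

```python
import socket
import struct


def ipv4_checksum_ok(header: bytes) -> bool:
    """A valid header's 16-bit ones'-complement sum (checksum included) is 0xFFFF."""
    total = sum(word for (word,) in struct.iter_unpack("!H", header))
    while total >> 16:  # fold carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return total == 0xFFFF


def parse_ipv4_header(header: bytes) -> dict:
    """Decode the fixed part of an IPv4 header (network byte order)."""
    (ver_ihl, _tos, total_len, _ident, _flags_frag,
     ttl, proto, checksum, src, dst) = struct.unpack("!BBHHHBBH4s4s", header[:20])
    return {
        "version": ver_ihl >> 4,
        "header_bytes": (ver_ihl & 0x0F) * 4,  # IHL is counted in 32-bit words
        "total_length": total_len,
        "ttl": ttl,
        "protocol": proto,  # e.g. 6 = TCP, 17 = UDP
        "checksum": checksum,
        "src": socket.inet_ntoa(src),
        "dst": socket.inet_ntoa(dst),
        "checksum_ok": ipv4_checksum_ok(header[:20]),
    }


sample = bytes.fromhex("45000073000040004011b861c0a80001c0a800c7")
print(parse_ipv4_header(sample))
```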

Aircrack-ng comprises a comprehensive suite of tools specifically designed for wireless network security assessment, network monitoring, and WEP/WPA key recovery operations, which includes specialized packet capture utilities and cryptographic attack implementations [11,51]. It is commonly used to assess vulnerabilities in wireless configurations through passive monitoring and active attack techniques. However, Aircrack-ng focuses exclusively on wireless communication security and does not support evaluation of deep learning–specific privacy risks or attacks targeting model inference or training processes.

Burp Suite serves as a specialized web application security testing platform that facilitates detection of application-layer vulnerabilities through integrated features supporting session management analysis, automated scanning, and manual testing capabilities [54]. The platform excels at identifying common web application vulnerabilities such as cross-site scripting (XSS), SQL injection, and authentication bypass vulnerabilities. Burp Suite’s proxy functionality enables testers to intercept, modify, and analyze HTTP/HTTPS traffic to identify security weaknesses in web application logic and input validation mechanisms. However, Burp Suite is limited to web application logic and does not provide mechanisms for evaluating deep learning–specific privacy risks, including inference-based data leakage or adversarial manipulation of model outputs.

Nessus functions as an enterprise-grade vulnerability scanner that systematically identifies potential attack vectors, security misconfigurations, and system vulnerabilities through automated assessment protocols [11,55]. The scanner employs comprehensive vulnerability databases and performs automated assessments to identify weaknesses such as default credentials, misconfigured systems, and missing security patches. It is effective for prioritizing remediation based on exploitability and compliance requirements. However, this established vulnerability scanner lacks the specialized capabilities required for assessing DL-specific security risks, such as adversarial perturbations or data poisoning attacks that target machine learning model integrity.

Despite their proven effectiveness and widespread adoption in traditional cybersecurity contexts, these conventional penetration testing tools are not optimally suited for addressing the unique security challenges and privacy concerns associated with DL-related system assessments. The emergence of AI-specific vulnerabilities necessitates the development of specialized testing frameworks and tools designed specifically for deep learning model security evaluation.

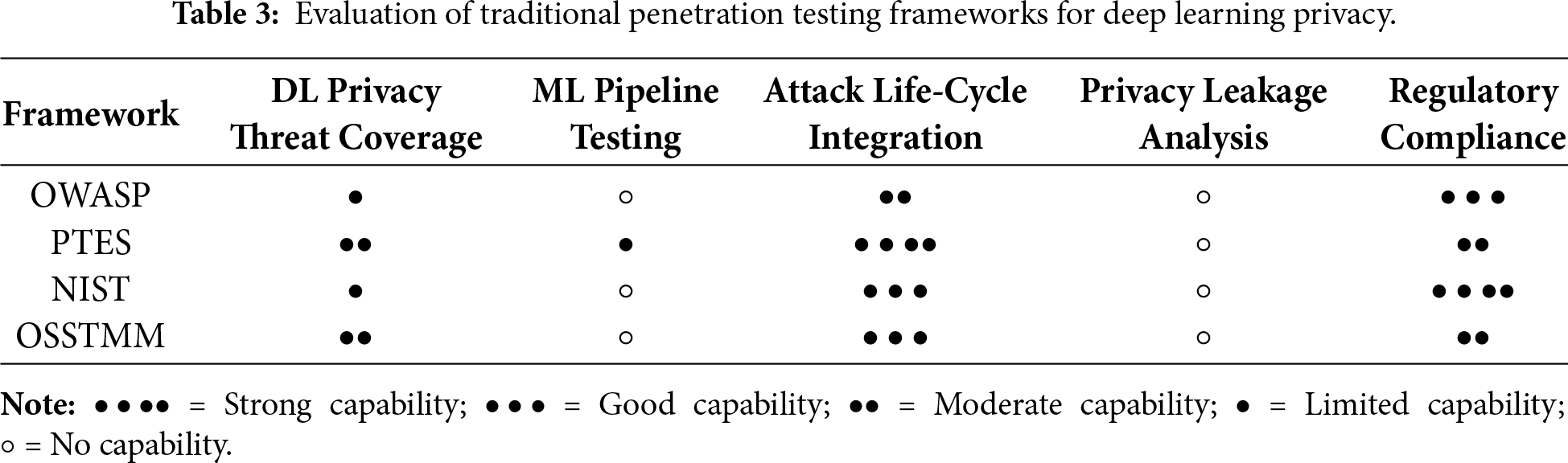

2.3 Penetration Testing Frameworks

The scoring in Table 3 is derived from documented capabilities in each framework’s official guidelines, including the OWASP Testing Guide and ASVS, PTES Technical Guidelines, NIST SP 800-53, the NIST AI Risk Management Framework, and OSSTMM 3. Each framework was evaluated against five DL-relevant criteria: (1) coverage of DL-specific privacy threats (e.g., inversion, extraction, membership inference), (2) support for ML pipeline assessment, (3) alignment with structured attack life-cycle methodologies, (4) mechanisms for evaluating privacy-leakage behaviors, and (5) regulatory alignment. Higher scores were assigned when a framework demonstrated explicit support for AI/ML security considerations or model-centric testing procedures; lower scores reflect limited or no applicability to DL privacy risks. This structured scoring method is intended to make the ratings transparent, reproducible, and directly relevant to DL privacy assessment.

OWASP (Open Web Application Security Project) provides extensive methodologies for web and application penetration testing, including vulnerability identification, authentication failures, and configuration weaknesses [44,56]. However, OWASP offers minimal support for evaluating model-centric vulnerabilities and lacks mechanisms for analyzing privacy leakage in DL models, such as training data exposure, gradient-based attacks, or inference-time exploitation. Its partial alignment with the PT attack life-cycle through structured testing phases such as threat enumeration, exploitation, and reporting explains its moderate score for life-cycle integration, while its strong emphasis on compliance-oriented security practices supports its high regulatory rating [57,58]. Despite OWASP’s proven effectiveness for traditional application security evaluations, it does not address specialized DL-specific vulnerabilities, including model inversion, membership inference, and adversarial perturbation attacks that specifically target neural-network integrity. Consequently, OWASP remains a strong framework for conventional application security but provides only limited applicability to DL privacy risks, reflected in its low scores for DL privacy-threat coverage, ML pipeline testing, and privacy leakage analysis.

PTES (Penetration Testing Execution Standard) defines a highly structured seven-phase methodology, ranging from intelligence gathering to exploitation and reporting. This structured workflow aligns well with adversarial evaluation processes [59]. Although PTES is valuable for modelling attacker behaviour, it does not address DL-specific vulnerabilities such as gradient leakage, shadow modelling, or model-extraction techniques. Consequently, it receives a high score for lifecycle support but only moderate relevance for DL privacy assessments [4].

NIST (the National Institute of Standards and Technology) provides comprehensive, compliance-oriented frameworks, including SP 800-53 and the NIST AI Risk Management Framework. These guidelines incorporate data-governance principles and acknowledge adversarial machine-learning risks [4,54]. However, NIST does not define penetration testing procedures for identifying privacy leakage in DL models or assessing model-specific attack vectors. Therefore, it scores strongly on regulatory alignment but only moderately on DL relevance.

OSSTMM (the Open Source Security Testing Methodology Manual) provides a quantitative, metrics-driven approach to security testing and is widely recognized for its structured and scientifically grounded assessment methodology. While OSSTMM is highly effective for evaluating organizational security across traditional domains, such as operational processes, physical controls, and data handling, it does not include specialized assessment criteria for machine-learning systems [44,54]. In particular, it lacks mechanisms for analysing ML pipelines or identifying privacy leakage pathways such as model inversion or gradient exposure. As a result, although it performs reasonably well in structured security measurement, its applicability to DL privacy testing remains limited [60].

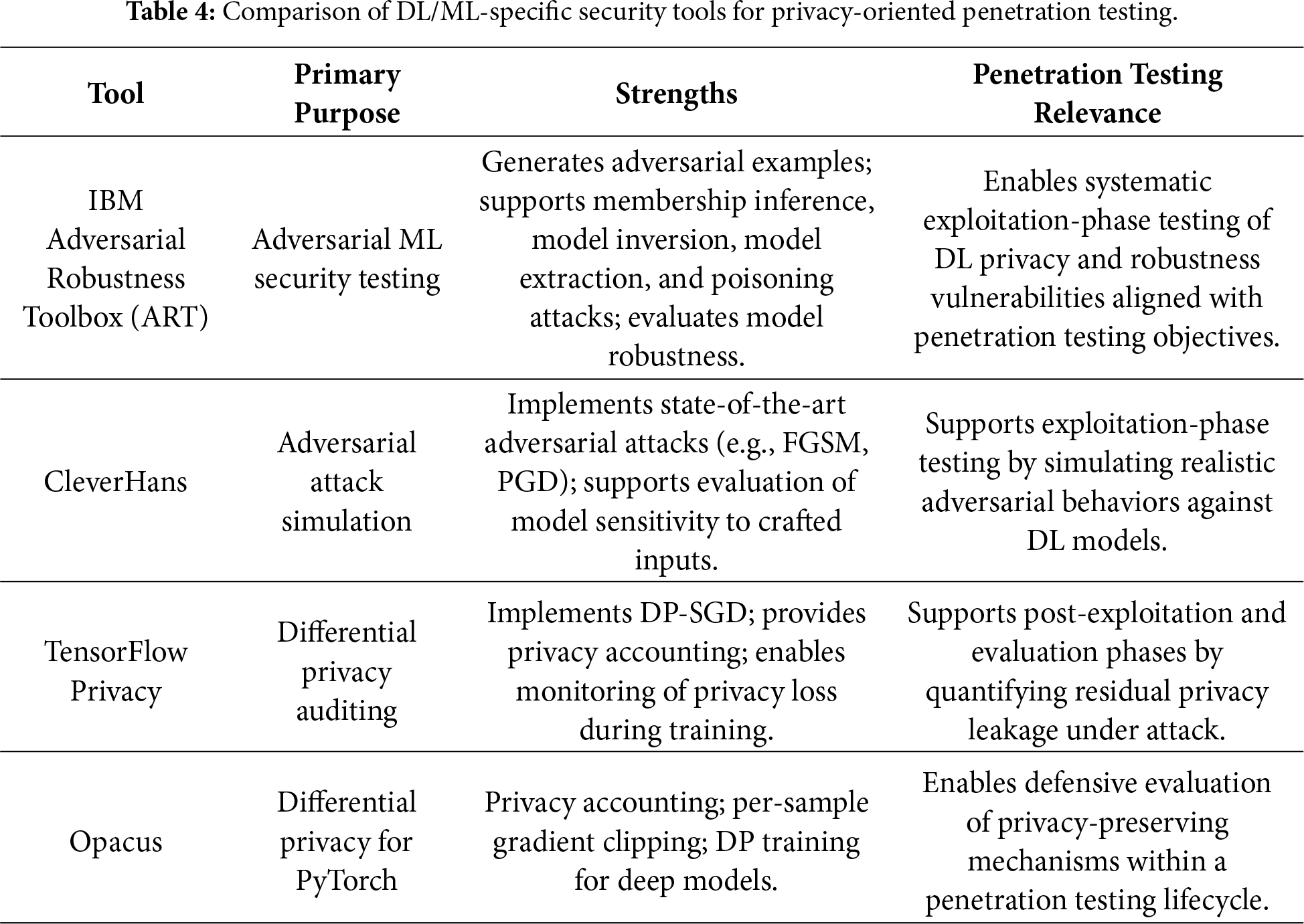

This subsection highlights DL/ML-specific security tools that complement traditional penetration testing by enabling direct evaluation of deep learning privacy threats that conventional tools cannot assess. To address the shortcomings of traditional penetration testing frameworks, it is necessary to incorporate DL/ML-specific security toolkits that directly target vulnerabilities unique to deep learning systems [61]. In particular, libraries such as IBM ART [62,63] and CleverHans provide capabilities for generating adversarial examples and evaluating model robustness against them, and IBM ART additionally implements membership inference and model inversion attacks designed for neural network models. Similarly, TensorFlow Privacy and Opacus [64] support differentially private training with privacy accounting, offering mechanisms to quantify and monitor the privacy budget consumed during training. Together, these tools complement conventional PT methodologies by allowing a systematic evaluation of DL-specific attack surfaces that traditional cybersecurity frameworks are not designed to assess.
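The core mechanism behind differentially private training in libraries such as Opacus and TensorFlow Privacy is DP-SGD: each example's gradient is clipped to a fixed L2 norm, and Gaussian noise calibrated to that norm is added to the summed gradient before the update. The NumPy sketch below shows one such step under stated assumptions. The function `dp_sgd_step` and its default `clip_norm` and `noise_multiplier` values are illustrative and are not the API of either library. The privacy accounting that converts these settings into an (epsilon, delta) guarantee is deliberately omitted.

```python
import numpy as np


def dp_sgd_step(params, per_example_grads, lr=0.1, clip_norm=1.0,
                noise_multiplier=1.1, rng=None):
    """One DP-SGD update (hypothetical helper). Returns new params and clipped grads."""
    rng = np.random.default_rng() if rng is None else rng
    # 1) Clip each example's gradient to an L2 norm of at most clip_norm.
    norms = np.linalg.norm(per_example_grads, axis=1, keepdims=True)
    scale = np.minimum(1.0, clip_norm / np.maximum(norms, 1e-12))
    clipped = per_example_grads * scale
    # 2) Sum, add Gaussian noise with std = noise_multiplier * clip_norm, then average.
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=params.shape)
    noisy_mean = (clipped.sum(axis=0) + noise) / len(per_example_grads)
    # 3) Ordinary gradient-descent update using the privatized gradient.
    return params - lr * noisy_mean, clipped


rng = np.random.default_rng(0)
per_example_grads = rng.normal(size=(32, 10))  # stand-in gradients for a batch of 32
params, _ = dp_sgd_step(np.zeros(10), per_example_grads, rng=rng)
print(params.shape)  # (10,)
```

From a penetration testing perspective, the relevant audit question is whether a deployed training pipeline actually applies per-example clipping and noise. For instance, setting `noise_multiplier=0` in this sketch reduces the step to clipped but non-private SGD.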

Although the tools discussed in this subsection primarily support adversarial testing and attack simulation, complementary DL/ML-specific security tools that enable defensive evaluation of privacy-preserving mechanisms, such as differential privacy, homomorphic encryption, and federated learning, are examined in Section 4.3 within a penetration testing life-cycle context.

Unlike Table 2, which highlights the limitations of conventional penetration testing tools for deep learning privacy assessment, Table 4 emphasizes the penetration testing relevance of DL/ML-specific security tools that directly support adversarial testing, privacy leakage evaluation, and defensive validation.

DL models depend on massive datasets, many of which contain private or sensitive data [9]. Despite their success, DL models can unintentionally memorize and disclose details about their training data, which raises serious privacy issues. This is particularly concerning when the training data include personally identifiable information or other sensitive data. As DL models grow in scale and are deployed in more sensitive domains, addressing these privacy issues becomes crucial. To ensure relevance to penetration testing methodologies, the privacy attacks discussed in this section are later mapped to standard penetration testing phases in Section 3.2.

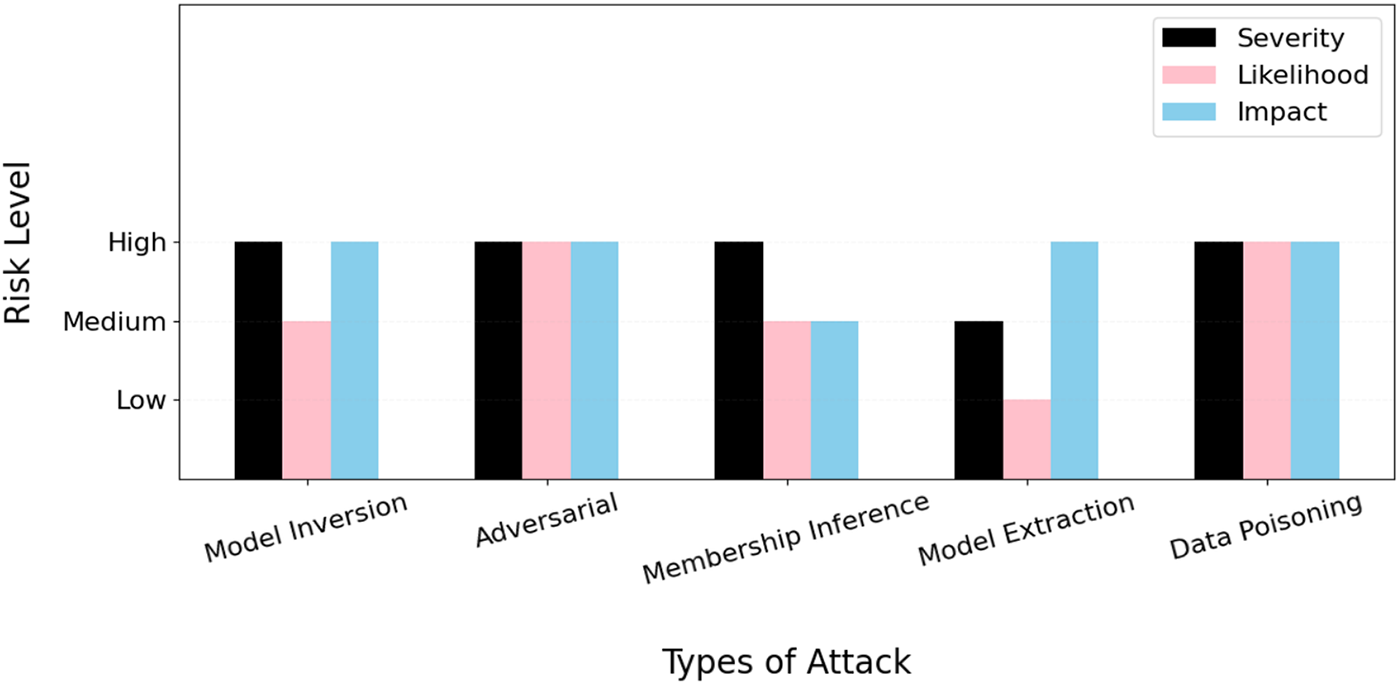

This section presents some common privacy threats to DL models. The severity levels, likelihood of occurrence, and potential impact of these attacks are systematically compared in Fig. 3, which provides a comprehensive risk assessment framework for understanding the relative threat landscape facing modern deep learning deployments. In Fig. 3, risk severity is assessed based on three core dimensions: (i) attack feasibility, reflecting the technical effort required to execute the attack; (ii) privacy impact, capturing the extent of sensitive information exposure; and (iii) regulatory and operational consequences, particularly in compliance-sensitive domains. The scoring criteria are informed by established security risk assessment methodologies, including OWASP risk rating principles, NIST SP 800-53 controls, and prior literature on deep learning privacy attacks. Each dimension is evaluated using an ordinal scale, facilitating consistent and reproducible comparison of privacy risks across different attack categories [65,66].
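Because the aggregation rule behind Fig. 3 is not stated in the text, the following Python sketch shows only one plausible way to combine the three ordinal dimensions into a single rating. Every choice in it is an assumption for illustration: a three-level scale, equal weights, and hypothetical band cut-offs at 1.5 and 2.5. The helper `risk_rating` is likewise hypothetical.

```python
# Hypothetical ordinal scale and equal weighting; not the scheme used for Fig. 3.
LEVELS = {"low": 1, "medium": 2, "high": 3}


def risk_rating(feasibility, privacy_impact, regulatory):
    """Average three ordinal ratings and map the mean to a coarse risk band."""
    scores = [LEVELS[feasibility], LEVELS[privacy_impact], LEVELS[regulatory]]
    mean = sum(scores) / len(scores)
    if mean >= 2.5:
        band = "high"
    elif mean >= 1.5:
        band = "medium"
    else:
        band = "low"
    return round(mean, 2), band


print(risk_rating("high", "high", "medium"))  # (2.67, 'high')
```

A weighted sum or a likelihood-by-impact matrix, as in OWASP-style risk rating, would be equally reasonable. The point of the sketch is that fixing the scale and the aggregation rule explicitly is what makes such comparisons reproducible.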

Figure 3: Privacy attack risk comparison regarding DL models.

Model Inversion Attacks. In this category of attack, adversaries leverage the outputs and behavioral patterns of trained DL models to reconstruct sensitive input data used during training [67]. By combining publicly accessible data with carefully crafted queries to a model’s prediction interface, attackers can reconstruct sensitive attributes or representative samples of the training data and thereby recover highly private information, such as patient health data in medical diagnostic applications or facial images in biometric authentication systems. DL models that process biometric data or personally identifiable information are particularly vulnerable to this attack vector because deep neural networks tend to memorize aspects of their training data [39]. Attackers exploit this capacity of DL models to retain and encode data-specific characteristics within their learned representations, which makes robust privacy protection measures critical for mitigating the associated risks. The success of model inversion attacks often depends on the model’s complexity, the amount of training data, and the sensitivity of the information encoded within the model parameters.
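A minimal white-box illustration of this idea is gradient-based inversion. Starting from an uninformative input, the attacker repeatedly adjusts the input to increase the model's confidence in a target class, recovering an input that reflects features the model associates with that class. The NumPy sketch below assumes a toy linear-softmax model with inputs scaled to [0, 1]. `invert_class` is a hypothetical helper. Practical attacks on deep networks typically add image priors or regularizers and may rely only on black-box confidence scores.

```python
import numpy as np


def softmax(z):
    e = np.exp(z - z.max())  # numerically stable softmax
    return e / e.sum()


def invert_class(W, b, target, steps=200, lr=0.1):
    """Projected gradient ascent on log p(target | x) for a linear-softmax model."""
    x = np.zeros(W.shape[0])  # uninformative starting input
    for _ in range(steps):
        p = softmax(x @ W + b)
        # d/dx log p[target] = W[:, target] - W @ p for logits x @ W + b
        grad = W[:, target] - W @ p
        x = np.clip(x + lr * grad, 0.0, 1.0)  # stay within the valid input range
    return x, softmax(x @ W + b)[target]


rng = np.random.default_rng(0)
W = rng.normal(scale=0.3, size=(16, 4))  # hypothetical "trained" weights
b = np.zeros(4)
x_rec, conf = invert_class(W, b, target=2)
print(round(float(conf), 3))  # target confidence after inversion, above the initial 0.25
```

The reconstructed `x_rec` is a class-representative input rather than a specific training record. This is why inversion is most damaging when each class corresponds to a single individual, as in face recognition.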

Adversarial Attacks. In this category of attack, adversaries deliberately perturb input data such as text, audio, or images so that the altered inputs still appear legitimate to human observers but deceive DL models into producing incorrect predictions or classifications [39], compromising system reliability and decision-making. Beyond causing functional failures, these attacks can enable confidentiality breaches by revealing patterns in the training data and internal model information. Adversarial attacks are commonly divided into two categories: (1) black-box attacks, where attackers interact with the system without prior knowledge of its internal architecture or parameters; and (2) white-box attacks, where attackers have full access to the model's architecture, weights, and training procedures. Consequently, mission-critical applications that depend on reliable decisions, such as unmanned vehicle navigation systems and electronic health platforms, are particularly exposed, and successful attacks could result in catastrophic failures.
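As a concrete white-box example, the sketch below applies the Fast Gradient Sign Method (FGSM) to a toy logistic-regression model. Each input feature moves by at most `epsilon` in the direction that increases the loss, which is often enough to flip the prediction. The model weights and inputs are illustrative assumptions. For deep networks the same input gradient is computed with automatic differentiation.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(w, b, x):
    """Return the predicted class (0 or 1) of a logistic-regression model."""
    return int(sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5)


def sign(v):
    return (v > 0) - (v < 0)


def fgsm(w, b, x, y, epsilon):
    """FGSM: x_adv = x + epsilon * sign(dLoss/dx) for binary cross-entropy loss."""
    p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
    # For logistic regression with cross-entropy loss, dLoss/dx = (p - y) * w.
    grad = [(p - y) * wi for wi in w]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


if __name__ == "__main__":
    w, b = [1.0, -2.0, 0.5], 0.0          # hypothetical model parameters
    x = [0.5, 0.1, 0.2]                   # correctly classified as class 1
    x_adv = fgsm(w, b, x, y=1, epsilon=0.2)
    print(predict(w, b, x), predict(w, b, x_adv))
```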

Membership Inference Attacks. In this attack, adversaries determine whether a specific record was included in a model's training dataset by analyzing the model's responses and behavioral patterns [37,39,68]. Attackers query the DL model with carefully selected samples and examine its outputs, confidence levels, and prediction patterns. Because models often respond more confidently to data they were trained on, these signals can reveal whether particular records were used in training. For example, malicious actors could identify whether a specific patient's health records were used to train a medical diagnostic system, thereby revealing that individual's participation in a research study or clinical trial. Such privacy violations are of particular concern in highly regulated sectors such as healthcare and financial services, where unauthorized disclosure of sensitive or confidential information carries significant legal consequences and financial penalties for organizations.
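A common baseline for this attack is a confidence threshold: records on which the model is unusually confident are flagged as likely training members. The sketch below assumes the tester can obtain the model's top-class confidence for each queried record and, for evaluation, holds small sets of known members and non-members. The threshold and the confidence values in the usage are illustrative, not measurements.

```python
def infer_membership(confidences, threshold):
    """Predict 'member' when the model's top-class confidence meets the threshold."""
    return [c >= threshold for c in confidences]


def attack_metrics(member_conf, nonmember_conf, threshold):
    """Evaluate the attack on records whose true membership the tester knows."""
    tp = sum(infer_membership(member_conf, threshold))
    fp = sum(infer_membership(nonmember_conf, threshold))
    tpr = tp / len(member_conf)
    fpr = fp / len(nonmember_conf)
    return {"tpr": tpr, "fpr": fpr, "advantage": tpr - fpr}


if __name__ == "__main__":
    members = [0.99, 0.97, 0.95, 0.80]      # illustrative confidences on training records
    nonmembers = [0.70, 0.96, 0.60, 0.55]   # illustrative confidences on held-out records
    print(attack_metrics(members, nonmembers, threshold=0.9))
```

An advantage near zero means the model's outputs leak little membership signal at this threshold. Testers usually sweep the threshold and report the full ROC curve rather than a single operating point.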

Model Extraction Attacks. In this intellectual property-focused attack vector, adversaries systematically and repeatedly interrogate a deployed DL model through its public interface to reverse-engineer, recreate, or approximate its functionality without requiring direct access to the original training data or model architecture [39]. Through this systematic extraction process, attackers can retrieve confidential algorithmic information, steal valuable intellectual property, or create unauthorized replications that enable further malicious activities. The extracted model copies can then be used for competitive advantage, sold to unauthorized parties, or serve as a foundation for developing more sophisticated attacks against the original system or similar deployments.
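For simple models, extraction can even be exact. The sketch below assumes a hypothetical prediction API that returns only the probability output of a hidden logistic-regression model, and it recovers the model's parameters by equation solving. Because the logit of the returned probability equals `w·x + b`, querying the origin and each unit vector reveals all parameters with `d + 1` queries. Deep networks cannot be recovered this way; they are instead approximated by training a surrogate model on many query-response pairs. `make_victim` and `extract_logistic` are illustrative names.

```python
import math


def make_victim(w, b):
    """Simulated prediction API: returns only P(y=1|x) for a hidden logistic model."""
    def api(x):
        z = sum(wi * xi for wi, xi in zip(w, x)) + b
        return 1.0 / (1.0 + math.exp(-z))
    return api


def logit(p):
    return math.log(p / (1.0 - p))


def extract_logistic(api, dim):
    """Equation-solving extraction using dim + 1 black-box queries."""
    b = logit(api([0.0] * dim))          # query the origin: logit = b
    w = []
    for i in range(dim):
        e_i = [0.0] * dim
        e_i[i] = 1.0
        w.append(logit(api(e_i)) - b)    # query unit vector e_i: logit = w_i + b
    return w, b


if __name__ == "__main__":
    api = make_victim([0.8, -1.5, 2.0], 0.3)   # parameters unknown to the attacker
    print(extract_logistic(api, 3))
```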

Data Poisoning Attacks. In this supply chain-focused attack methodology, malicious actors deliberately inject corrupted, biased, or strategically modified data into the original training dataset to fundamentally alter the system’s behavior, thereby degrading performance or creating hidden backdoors that enable future exploitation [39]. This attack vector poses particularly severe threats to DL models whose operational effectiveness depends heavily on user-generated content or crowdsourced data, such as fraud detection systems, content moderation platforms, or recommendation engines. The injected poisoned data can remain dormant until specific trigger conditions are met, making detection extremely challenging and enabling long-term compromise of system integrity and reliability.
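Penetration testers often emulate backdoor poisoning with a trigger-stamping routine such as the sketch below. A fraction of training samples receives a fixed trigger value in one feature and is relabeled to an attacker-chosen target class. After training on the poisoned copy, the tester checks whether triggered inputs are mapped to the target label. The trigger feature, trigger value, poisoning rate, and function name are illustrative assumptions.

```python
import random


def inject_backdoor(dataset, rate, trigger_index, trigger_value, target_label, seed=0):
    """Return a poisoned copy of `dataset` plus the indices that were modified.

    dataset: list of (features, label) pairs, where features is a list of floats.
    A fraction `rate` of samples has the trigger stamped into one feature and
    is relabeled to `target_label`; the original dataset is left unchanged.
    """
    rng = random.Random(seed)
    n_poison = int(rate * len(dataset))
    chosen = set(rng.sample(range(len(dataset)), n_poison))
    poisoned = []
    for i, (features, label) in enumerate(dataset):
        if i in chosen:
            features = list(features)          # copy so the original stays intact
            features[trigger_index] = trigger_value
            label = target_label
        poisoned.append((features, label))
    return poisoned, sorted(chosen)


if __name__ == "__main__":
    clean = [([float(i), 0.0, 1.0], i % 2) for i in range(100)]
    poisoned, idx = inject_backdoor(clean, rate=0.1, trigger_index=2,
                                    trigger_value=9.0, target_label=1)
    print(len(idx), idx[:5])
```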

3.2 Mapping Deep Learning Privacy Attacks to Penetration Testing Phases

While the privacy attacks discussed in Section 3 are well-established threats to deep learning (DL) models, their relevance to penetration testing lies in evaluating them within a structured adversarial life-cycle. Accordingly, these attacks are mapped to the traditional penetration testing phases of reconnaissance, exploitation, and post-exploitation to enable systematic and reproducible DL privacy assessments [33].

Model inversion attacks primarily occur during reconnaissance and exploitation, where exposed prediction APIs and confidence scores are identified and leveraged to reconstruct sensitive training data. Post-exploitation analysis quantifies privacy leakage and assesses confidentiality violations.

Adversarial attacks are typically executed during the exploitation phase by injecting crafted perturbations that induce incorrect model behavior. Reconnaissance focuses on understanding input constraints and decision boundaries, while post-exploitation evaluates system reliability and safety impacts.

Membership inference attacks span all penetration testing phases, beginning with reconnaissance of output patterns, followed by exploitation through systematic querying, and post-exploitation assessment of regulatory and privacy implications.

Model extraction attacks align with iterative reconnaissance and exploitation, where repeated queries are used to approximate or replicate deployed models. Post-exploitation evaluates intellectual property exposure and risks of secondary attacks.

Data poisoning attacks are primarily assessed during exploitation, targeting training or update pipelines. Reconnaissance identifies data ingestion and trust assumptions, while post-exploitation examines backdoor persistence and long-term integrity degradation.
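The mapping above can be captured as a lookup table from which a phase-ordered test plan is generated. The sketch below encodes the activities described in this section. The dictionary layout and the `build_test_plan` helper are illustrative assumptions, not part of any cited framework.

```python
PHASES = ("reconnaissance", "exploitation", "post-exploitation")

# Activities summarized from the attack-to-phase mapping in this section.
ATTACK_PHASE_MAP = {
    "model_inversion": {
        "reconnaissance": "identify exposed prediction APIs and confidence scores",
        "exploitation": "reconstruct sensitive training data from model outputs",
        "post-exploitation": "quantify privacy leakage and confidentiality violations",
    },
    "adversarial": {
        "reconnaissance": "study input constraints and decision boundaries",
        "exploitation": "inject crafted perturbations that induce incorrect behavior",
        "post-exploitation": "evaluate system reliability and safety impacts",
    },
    "membership_inference": {
        "reconnaissance": "analyze output patterns",
        "exploitation": "query systematically to infer training membership",
        "post-exploitation": "assess regulatory and privacy implications",
    },
    "model_extraction": {
        "reconnaissance": "probe the deployed model's query interface",
        "exploitation": "issue repeated queries to approximate or replicate the model",
        "post-exploitation": "evaluate intellectual property exposure and secondary attack risk",
    },
    "data_poisoning": {
        "reconnaissance": "identify data ingestion points and trust assumptions",
        "exploitation": "target training or update pipelines with poisoned data",
        "post-exploitation": "examine backdoor persistence and integrity degradation",
    },
}


def build_test_plan(attacks):
    """Return (phase, attack, activity) steps, ordered phase by phase."""
    return [
        (phase, attack, ATTACK_PHASE_MAP[attack][phase])
        for phase in PHASES
        for attack in attacks
    ]


if __name__ == "__main__":
    for step in build_test_plan(["membership_inference", "model_extraction"]):
        print(step)
```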

By explicitly mapping DL privacy attacks to penetration testing phases, this section demonstrates how penetration testing enables structured evaluation and mitigation of privacy risks throughout the DL system life-cycle.

3.3 PT-Oriented Privacy Attacks and Defences in Large Models

Recent advances in large-scale deep learning models, particularly large language models (LLMs) and foundation models, introduce new privacy risks that extend beyond those observed in conventional deep learning systems. Due to their scale, extensive pretraining on heterogeneous data sources, and widespread deployment via public APIs, large models present a substantially expanded attack surface from a penetration testing perspective [69].

Privacy attacks against large models commonly exploit memorization and generative capabilities to extract sensitive training data. Empirical studies have demonstrated that carefully crafted prompts can induce models to reveal personally identifiable information, proprietary text, or verbatim training samples, constituting a form of training data extraction. Membership inference attacks have also been adapted to large models, where attackers assess whether specific data records were included in pretraining or fine-tuning corpora based on output confidence, response patterns, or likelihood scores [70]. Additionally, prompt injection and jailbreaking techniques can be leveraged to bypass safety constraints, enabling unauthorized access to sensitive model behaviors or hidden system prompts [71].

From a penetration testing perspective [72], these attacks align primarily with the reconnaissance and exploitation phases, where testers probe exposed model interfaces, analyze response variability, and systematically craft adversarial prompts to induce privacy leakage. Post-exploitation analysis focuses on quantifying the severity of disclosed information, assessing regulatory impact, and evaluating the robustness of deployed safeguards.
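One way testers operationalize this probing is a canary test. Unique secret strings are planted in the training or fine-tuning data, or are already known to exist there, and extraction prompts are checked to see whether the model reproduces them verbatim. The sketch assumes a hypothetical `query_model` callable that wraps the target model's API. Real assessments also vary prompts and sampling settings extensively.

```python
def probe_for_leakage(query_model, prompts, canaries):
    """Send each prompt and report any known canary string found in the response.

    query_model: hypothetical callable taking a prompt string and returning text.
    canaries: secret strings the tester planted in, or knows to be in, training data.
    """
    findings = []
    for prompt in prompts:
        response = query_model(prompt)
        for canary in canaries:
            if canary in response:
                findings.append({"prompt": prompt, "canary": canary})
    return findings


if __name__ == "__main__":
    # Stand-in model that has memorized one canary.
    def fake_model(prompt):
        if "account" in prompt:
            return "Sure, the account code is ZX-4471-QP."
        return "I cannot help with that."

    print(probe_for_leakage(fake_model,
                            ["Complete: the account code is", "Hello"],
                            ["ZX-4471-QP"]))
```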

Defences against large-model privacy attacks include differential privacy-based training and fine-tuning, output filtering and redaction mechanisms, prompt sanitization, rate limiting, and continuous monitoring of anomalous query patterns. While these techniques can reduce privacy risk, they introduce trade-offs in utility, accuracy, and deployment cost [73]. Penetration testing plays a critical role in evaluating the effectiveness of these defences by simulating realistic adversarial behaviors and measuring residual leakage under controlled attack conditions.
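Output redaction is one defence that can be tested directly. The sketch below shows a minimal regex-based redaction filter of the kind a tester would then try to bypass, for example by asking the model to spell out an address or insert separators. The patterns are illustrative assumptions and deliberately simple; production filters need broader, locale-aware rules.

```python
import re

# Illustrative patterns only; real deployments need far more robust detection.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text):
    """Replace e-mail addresses and phone-like digit runs in model output."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


if __name__ == "__main__":
    print(redact("Contact alice@example.com or +61 7 4631 2100."))
```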

Incorporating large-model privacy attacks into penetration testing frameworks is therefore essential for modern AI deployments. Unlike traditional security assessments, PT for large models must account for prompt-based interaction, probabilistic output behavior, and lifecycle-specific risks spanning pretraining, fine-tuning, and inference [74]. This highlights the need for adaptive, AI-aware penetration testing methodologies that extend beyond conventional network and application security paradigms.

4 Penetration Testing for DL-Related Privacy

As a security assessment that identifies weaknesses in a system by replicating real-world attacks [75], penetration testing for DL models requires evaluating the models' defenses against possible adversarial threats and privacy violations through ethical (authorized) attacks. As AI-driven systems become more integrated into cybersecurity, DL-specific penetration testing is necessary to analyze the security and privacy of DL systems.

4.1 Penetration Testing Approaches to DL Models

This section presents the main categories of penetration testing methods for DL models.

4.1.1 White-Box and Black-Box Testing

In white-box testing, testers have full access to DL models' structure, parameters, and training data. While white-box penetration testing is highly effective at detecting internal flaws in model architectures, it may not accurately represent real-world attack scenarios in which attackers lack such direct access [34,76].

In black-box testing, testers are given no prior information about the model's parameters, training data, or structure. Testers interact with the system's API or external user interface and examine its outputs. Although more realistic, black-box testing may be less effective than white-box methods at identifying underlying architectural flaws [34,76].

Potential solution: Merging black-box and white-box testing into a combined (grey-box) approach can pair the realism of external probing with insight into internal flaws, helping to restrict unauthorized access and strengthen model checks. Additionally, active learning strategies can reduce the number of queries required for adversarial security assessment and model extraction.

4.1.2 Dynamic and Static Testing

Dynamic Penetration Testing examines DL models in real time under realistic attack scenarios [77]. This type of testing detects anomalies, unauthorized access, and adversarial attacks at runtime to assess a system's security strength, and it continuously monitors model behavior and compliance with safety standards. Because dynamic testing provides real-time security assurance, it requires automated assessment tools and continuous monitoring.
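A typical runtime check in dynamic testing flags clients whose query volume exceeds a threshold within a sliding time window. This pattern is associated with model extraction and membership inference probing. The sketch assumes timestamps in seconds and a fixed per-client limit. The class name and thresholds are illustrative.

```python
from collections import defaultdict, deque


class QueryRateMonitor:
    """Flag clients that send more than `max_queries` within `window_seconds`."""

    def __init__(self, max_queries, window_seconds):
        self.max_queries = max_queries
        self.window = window_seconds
        self._history = defaultdict(deque)   # client_id -> recent query timestamps

    def record(self, client_id, timestamp):
        """Log one query; return True if the client is now over the limit."""
        q = self._history[client_id]
        q.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while q and q[0] <= timestamp - self.window:
            q.popleft()
        return len(q) > self.max_queries


if __name__ == "__main__":
    monitor = QueryRateMonitor(max_queries=3, window_seconds=10)
    print([monitor.record("client-a", t) for t in (0, 1, 2, 3, 20)])
```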

Static Penetration Testing encompasses the examination of a system's architecture, training data, and source code [77] without executing the deployed model. In this form of testing, code analysis is used to identify architectural flaws in a DL model, data scanning detects possible data poisoning, and adversarial training before deployment aims to improve resilience. Although static testing contributes to the early detection of potential vulnerabilities, it does not account for real-time adversarial threats.

4.1.3 Automated Security Testing

Owing to the complexity of AI-related penetration testing, automated tools have been developed to analyze security and privacy issues in models.

IBM's Adversarial Robustness Toolbox (ART) is an open-source library for AI security testing that allows researchers and developers to evaluate, defend, certify, and verify machine learning models [78]. ART also implements inference attacks, such as membership inference, together with defence methods, which can be used to assess privacy risks.

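Google's TensorFlow Privacy is one of the most widely used libraries for assessing and improving the privacy of DL models. It supports training with differential privacy, which limits the model's ability to memorize private information and reduces the risk of membership inference attacks, and it includes tests for measuring membership inference risk that can support regulatory compliance [79].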

Although automated AI penetration testing methods are essential for expanding security tests, their capacity to manage unpredictable adversarial threats remains limited.

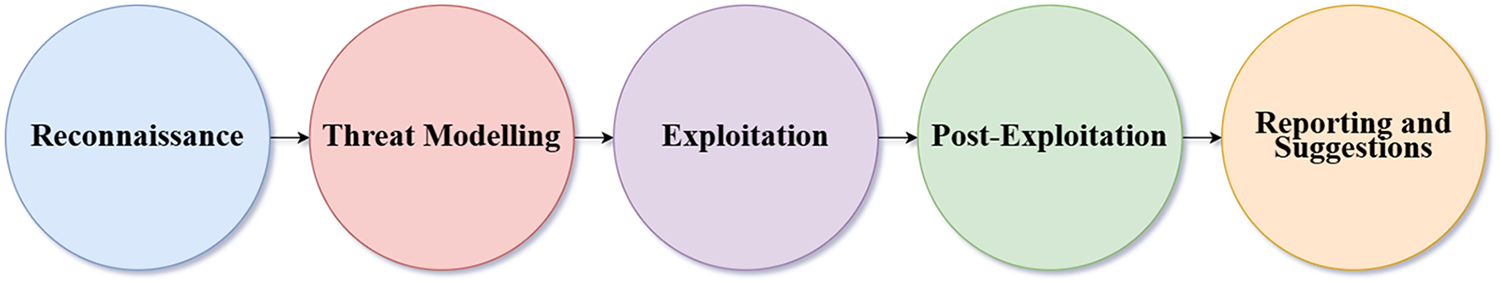

4.2 Key Phases of Penetration Testing for DL-Related Privacy

A penetration testing framework for DL-related privacy should follow a methodical process to systematically assess the security of DL models and detect flaws. The key phases of such a framework are illustrated in Fig. 4 and explained below.

Figure 4: Penetration testing workflow for DL-related privacy.

(1) Reconnaissance. Before launching any attacks, it is vital to gather comprehensive information about the target model, its structure, and any potential weaknesses [80–82]. This phase helps uncover vulnerabilities that could be used to launch privacy attacks. This phase includes:

– Identifying the model type (e.g., transformer-based models, convolutional neural networks, and recurrent neural networks).

– Examining the characteristics of the dataset to determine whether personally identifiable information, health records, or financial transactions are present.

– Mapping attack vectors, taking into account all endpoints, execution platforms, and public APIs.

– Analysing metadata to gather details about hyperparameters and training methods.

Note that public APIs may enable unauthorized access to the model and open the door to malicious requests. With publicly accessible models, model inversion and membership inference attacks can be mounted more easily.
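During reconnaissance, a tester can check how much a prediction endpoint reveals per query. The sketch below inspects one parsed API response and flags exposure that eases inversion and membership inference: a full probability vector, raw logits, or scores reported with high numerical precision. The response field names (`label`, `probabilities`, `logits`) and the four-decimal threshold are hypothetical assumptions about the target API.

```python
from decimal import Decimal


def assess_output_exposure(response, max_decimals=4):
    """Flag response properties that make inversion or membership inference easier.

    response: parsed JSON from a prediction endpoint, assumed (hypothetically)
    to contain "label" and optionally "probabilities" and/or "logits".
    """
    findings = []
    probs = response.get("probabilities")
    if probs is not None and len(probs) > 1:
        findings.append("probability_vector")   # full confidence vector exposed
    if "logits" in response:
        findings.append("logits")               # raw pre-softmax scores exposed
    scores = list(probs or []) + list(response.get("logits") or [])
    # Count decimal places via Decimal exponents (also handles values like 1e-05).
    if any(-Decimal(str(s)).as_tuple().exponent > max_decimals for s in scores):
        findings.append("high_precision")
    return findings


if __name__ == "__main__":
    print(assess_output_exposure({"label": "cat",
                                  "probabilities": [0.912345678, 0.087654322]}))
```

Findings like these feed directly into threat modeling. For example, a label-only endpoint pushes attackers toward costlier label-only membership inference.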

Although the workflow in Fig. 4 follows a classical penetration testing structure, it is explicitly adapted for deep learning systems by incorporating model-centric assessment activities. Reconnaissance includes inference API discovery, model architecture identification, and dataset sensitivity analysis. Threat modeling focuses on DL-specific attacks such as model inversion, membership inference, extraction, and poisoning. Exploitation evaluates these attacks against training and inference pipelines, while post-exploitation quantifies privacy leakage, model integrity degradation, and regulatory impact. This adaptation ensures that the workflow reflects DL-specific attack surfaces rather than traditional network security testing.

(2) Threat Modeling. This phase pays particular attention to privacy issues, such as model exploitation, data leaks, and malicious attacks, which are likely to put DL models at risk [80,83]. This phase includes:

– Identifying potential attacks and determining whether they originate from insiders, outsiders, or fraudulent users.

– Detecting the threat type (e.g., Adversarial Attacks, Model Inversion Attacks, Membership Inference Attacks, Model Extraction Attacks, or Data Poisoning Attacks).

– Assessing risks by estimating the likelihood and severity of each attack.

The absence of strong privacy-preserving measures makes DL models vulnerable to model inversion and membership inference attacks. Also, adversaries may use model APIs to extract decision-making parameters, which could result in model theft.

(3) Exploitation. In this phase, the model is subjected to simulated attacks to expose its weaknesses [80,84]. This phase measures the model's ability to withstand genuine privacy risks. This phase includes:

– Implementing active attacks (e.g., adversarial attacks, membership inference attacks, model inversion attacks, and data poisoning attacks) to evaluate the model’s robustness and weaknesses.

– Assessing, for each attack, its likelihood of success and its impact on security and privacy.

Active attacks might weaken model performance, leading to inaccurate decision-making.

(4) Post-Exploitation. This phase determines how serious privacy leaks are and how well privacy-preserving methods work. It also reveals the model's vulnerability to abuses such as data reconstruction and inference of private information [80,84,85].

(5) Reporting and Suggestions. This final stage records the outcomes of penetration testing and offers practical recommendations, ensuring that privacy-related regulations are followed [84,86]. Thorough penetration testing reports allow testers to quantify security weaknesses, attack success rates, and the impact of mitigation strategies.

Based on these reports, compliance with safety standards, legal requirements, and industry best practices can be assessed, and further recommendations for enhancing privacy protection can be made. Inadequate reporting, or failure to implement recommended privacy protection measures, could leave DL models exposed to ongoing risks and regulatory violations [42,87–89].
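The quantities recorded in post-exploitation (phase 4) and reporting (phase 5) can be made concrete. For a membership inference test run against known members and non-members, the sketch below computes the attacker's advantage (TPR minus FPR). It also computes an empirical lower bound on the differential-privacy parameter ε, derived from the hypothesis-testing characterization of (ε, δ)-DP. Point estimates are used for brevity; a rigorous audit would use confidence intervals (e.g., Clopper-Pearson) over many trials. The function names are illustrative.

```python
import math


def membership_advantage(tpr, fpr):
    """Attacker advantage: true-positive rate minus false-positive rate."""
    return tpr - fpr


def empirical_epsilon_lower_bound(tpr, fpr, delta=0.0):
    """Smallest epsilon consistent with the observed attack error rates.

    Under (epsilon, delta)-DP every membership test satisfies
    FPR + e^epsilon * FNR >= 1 - delta  and  FNR + e^epsilon * FPR >= 1 - delta,
    so each observed (FPR, FNR) pair implies a lower bound on epsilon.
    """
    fnr = 1.0 - tpr
    bounds = [0.0]
    for numerator, denominator in ((1.0 - delta - fpr, fnr), (1.0 - delta - fnr, fpr)):
        if numerator <= 0:
            continue                      # this inequality gives no information
        if denominator == 0:
            return math.inf               # zero error rate: no finite epsilon fits
        bounds.append(math.log(numerator / denominator))
    return max(bounds)


if __name__ == "__main__":
    tpr, fpr = 0.75, 0.25                 # illustrative attack results
    print(membership_advantage(tpr, fpr), empirical_epsilon_lower_bound(tpr, fpr))
```

If the empirical bound exceeds the ε a DP-trained model claims, the DP implementation or its accounting is likely flawed. That is a high-value finding for the report.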

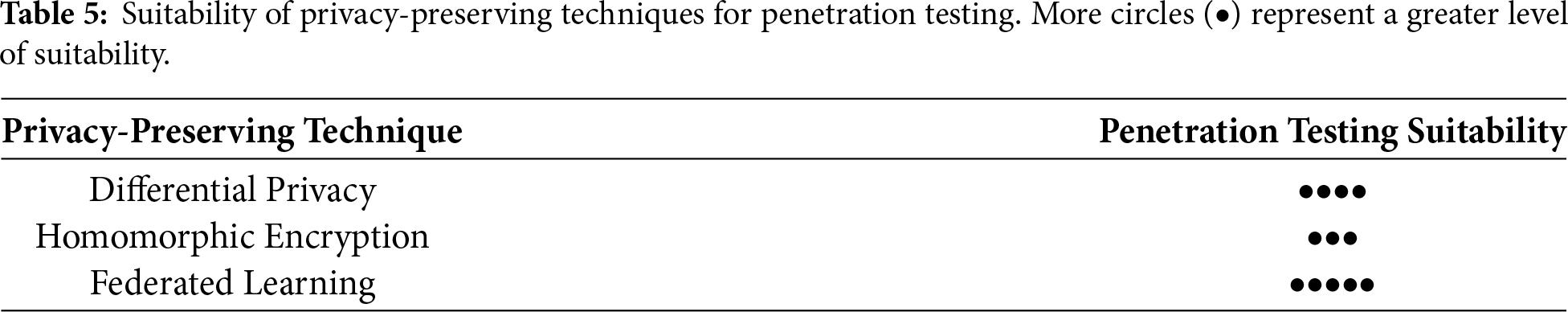

4.3 Privacy-Preserving Methods

While differential privacy, homomorphic encryption, and federated learning are commonly described as privacy-preserving techniques, the tools implementing these methods also function as AI/ML-specific security mechanisms when evaluated through a penetration testing lens. In contrast to adversarial testing libraries that actively simulate attacks (e.g., model inversion or membership inference), DP, HE, and FL-based tools support defensive evaluation by enabling testers to measure privacy leakage, robustness under adversarial conditions, and compliance-related risk throughout the deep learning life-cycle. In this review, these tools are therefore treated as complementary AI/ML-specific security tools that extend penetration testing beyond attack execution to systematic assessment of privacy protection effectiveness. Since sensitive data is frequently handled by DL systems, privacy protection has become a priority. Many privacy-preserving schemes have been proposed in recent years to reduce the risks of membership inference attacks, model inversion attacks, and data leaks [90,91]. These schemes aim to preserve DL models’ efficiency and performance while securing sensitive data and protecting privacy. Based on key factors such as accessibility, tool availability, and known areas of attack, Table 5 shows the suitability of the most well-known privacy-preserving techniques for penetration testing.

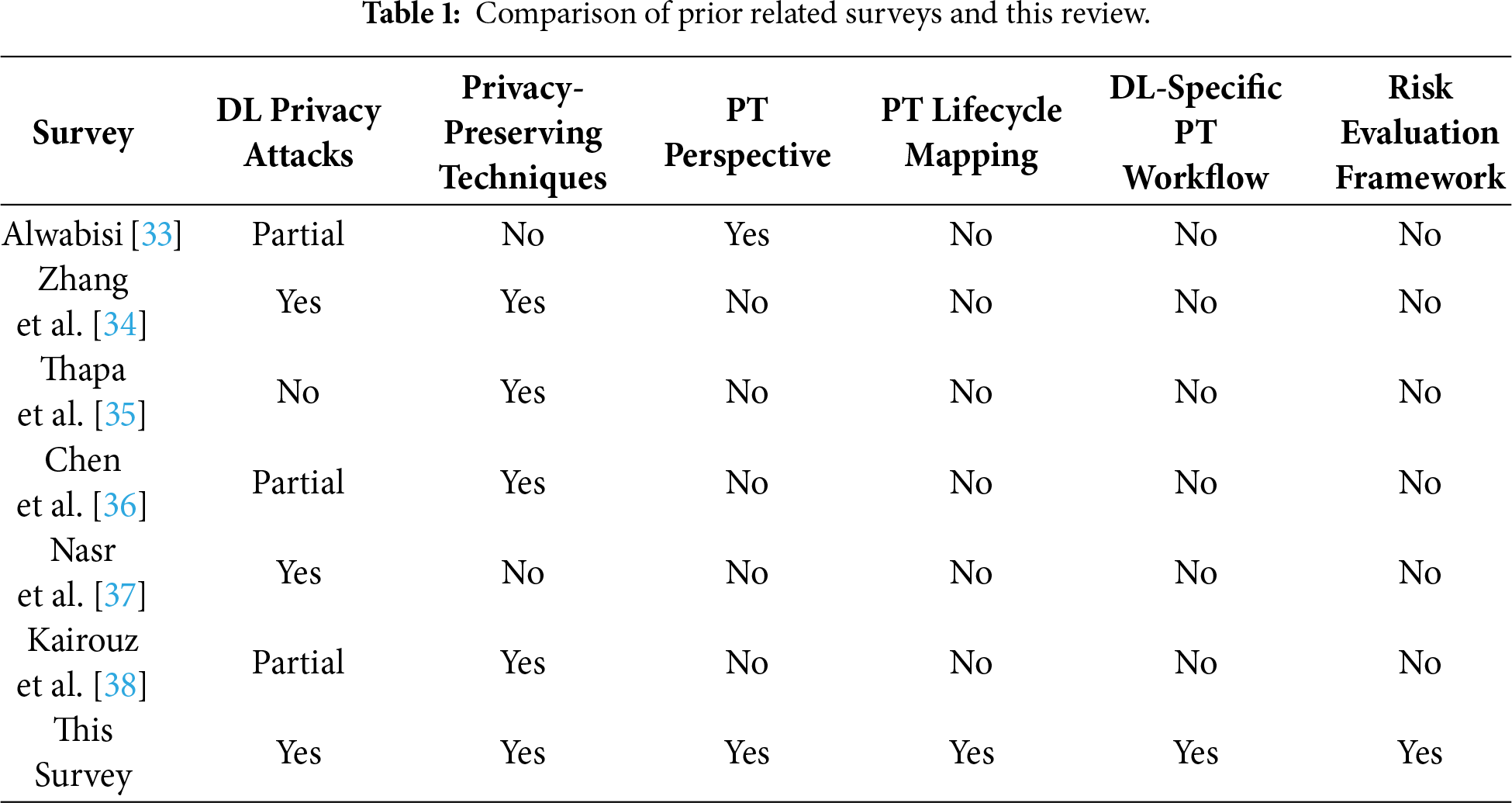

These privacy-preserving techniques are discussed below, and an overview of the techniques and associated tools for deep learning systems is given in Table 6.

4.3.1 DP (Differential Privacy)

DP is a mathematical framework that adds calibrated noise to datasets or model outputs to provide robust privacy guarantees [90,92,93]. Whereas penetration testing is an organized process for identifying security weaknesses, DP is a statistical technique for protecting against them [94,95]. Penetration testing and DP are therefore complementary for privacy protection, especially in DL systems that handle private or sensitive data. Overall, DP can serve both as a barrier against privacy violations and as a tool for assessing system flaws during penetration testing.
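The Laplace mechanism is the textbook way to add calibrated noise. A query with sensitivity Δ, the most that one individual's record can change its answer, is released with noise drawn from Laplace(0, Δ/ε), which satisfies ε-DP. The sketch below applies it to a counting query, whose sensitivity is 1, and draws Laplace noise as the difference of two exponential samples. The ε value and the data are illustrative. Python's `random` module is not a cryptographically secure noise source for production use.

```python
import random


def laplace_mechanism(true_value, sensitivity, epsilon, rng=random):
    """Release true_value + Laplace(0, sensitivity / epsilon) noise (epsilon-DP)."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = sensitivity / epsilon
    # The difference of two i.i.d. Exponential(rate = 1/scale) draws is Laplace(0, scale).
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_value + noise


def private_count(records, predicate, epsilon, rng=random):
    """Counting query: adding or removing one record changes the count by at most 1."""
    return laplace_mechanism(sum(1 for r in records if predicate(r)), 1.0, epsilon, rng)


if __name__ == "__main__":
    ages = [34, 71, 52, 68, 45, 80]   # hypothetical patient ages
    print(private_count(ages, lambda a: a >= 65, epsilon=0.5))
```

Smaller ε means larger noise and stronger privacy. A penetration tester can check both that the noise scale matches the claimed ε and that repeated queries do not silently exhaust the privacy budget.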

According to [90], “Penetration testing techniques, such as adversarial attack simulations and differential privacy analysis, play a crucial role in identifying vulnerabilities but frequently do not provide full coverage across all stages of a deep learning system’s lifecycle”. DP restricts the influence of any individual data point by inserting controlled noise into the data or model outputs, lowering the risk of privacy breaches when models are under attack [90,96]. On the one hand, penetration testing frequently assesses the correctness of DP implementations, verifying that the added noise is sufficient to prevent inference attacks while preserving the model's utility. Penetration testing results also guide the setting of DP parameters, balancing privacy against performance. On the other hand, DP shapes the direction and purpose of penetration testing by indicating which components need additional examination because of security concerns. Key penetration testing activities for DP-protected DL systems include:

– Examining the effectiveness of DP and addressing the issue of performance decline if needed.

– Figuring out how well the added noise hides confidential information to ensure that DP offers the required privacy protection.

– Confirming that the DP implementation satisfies regulatory requirements (e.g., GDPR and HIPAA).

In summary, penetration testing is useful for verifying that DP strikes the appropriate balance among privacy, performance, and usability in DL models.

Tools for DP: From a penetration testing perspective, DP tools enable quantitative evaluation of privacy leakage and adversarial resilience under controlled attack scenarios. The adoption of DP has led to several powerful tools that make it easier to apply DP in DL systems, where the privacy of the training data is vital. This study covers four popular DP tools: TensorFlow Privacy, OpenDP, Opacus, and DiffPrivLib.

TensorFlow Privacy is an open-source library created by Google that extends TensorFlow with differentially private stochastic gradient descent (DP-SGD). This key algorithm clips per-example gradients and adds controlled noise during training so that each data point has minimal impact on the overall model, providing formal privacy guarantees [79]. TensorFlow Privacy also helps developers apply DP at scale, for example in production-grade DL scenarios.
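The core DP-SGD update can be sketched in a few lines. The version below is written in plain Python for a linear-regression loss and does not use TensorFlow Privacy's API. It clips each per-example gradient to an L2 bound and adds Gaussian noise scaled by a noise multiplier before averaging. The privacy accounting step, which converts the noise multiplier, sampling rate, and number of steps into an (ε, δ) guarantee, is omitted. The function names and the loss are illustrative assumptions.

```python
import math
import random


def clip(grad, clip_norm):
    """Scale a per-example gradient so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    factor = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [g * factor for g in grad]


def dp_sgd_step(weights, batch, lr, clip_norm, noise_multiplier, rng):
    """One DP-SGD step for linear regression with loss 0.5 * (w.x - y)^2.

    1. compute each example's gradient, 2. clip it to clip_norm,
    3. sum and add Gaussian noise with std noise_multiplier * clip_norm,
    4. average over the batch and take a gradient step.
    """
    summed = [0.0] * len(weights)
    for x, y in batch:
        residual = sum(w * xi for w, xi in zip(weights, x)) - y
        per_example = clip([residual * xi for xi in x], clip_norm)
        summed = [s + g for s, g in zip(summed, per_example)]
    noisy = [s + rng.gauss(0.0, noise_multiplier * clip_norm) for s in summed]
    return [w - lr * g / len(batch) for w, g in zip(weights, noisy)]


if __name__ == "__main__":
    rng = random.Random(0)
    batch = [([1.0, 2.0], 3.0), ([2.0, 0.5], 1.0)]   # illustrative data
    w = [0.0, 0.0]
    for _ in range(5):
        w = dp_sgd_step(w, batch, lr=0.1, clip_norm=1.0, noise_multiplier=1.1, rng=rng)
    print(w)
```

Clipping bounds each record's contribution so that the Gaussian noise can mask it. This is why testers verify that clipping is applied per example and not per batch.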

OpenDP is an open-source project led by Harvard University in partnership with Microsoft that uses DP as a flexible and extensible framework for privacy-preserving data analysis. It supports data analysts and organizations that require strong data privacy while preserving analytical utility [64]. OpenDP supports the analysis of confidential data by adding precisely calibrated noise. It also enables customized privacy-oriented analyses built from modular components while keeping track of the privacy cost incurred.

Opacus is Facebook's library for training PyTorch models with DP. It offers both simple defaults and advanced features [64,79] to serve machine learning practitioners and experienced DP researchers alike. Its main objective is to protect the privacy of each training sample while minimizing the impact on the accuracy of the trained model. Opacus also provides privacy accounting and privacy budget tracking, making it suitable for medical, financial, and other domains involving confidential information.

DiffPrivLib, created by IBM for machine learning applications, provides DP variants of popular scikit-learn algorithms [64,79]. It includes a collection of tools for machine learning and data analytics with built-in privacy mechanisms. DiffPrivLib also emphasizes accessibility and compliance with legislative frameworks (e.g., GDPR) that require robust privacy protections in data handling.

4.3.2 HE (Homomorphic Encryption)

HE is a cryptographic technique that enables computations to be performed on encrypted data without decrypting it [97]. It can be applied to DL systems to secure data during inference and training, ensuring that confidential data remains hidden.
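To show what computing on encrypted data means, the sketch below implements a toy version of the Paillier cryptosystem. Paillier is additively (partially) homomorphic: multiplying two ciphertexts yields an encryption of the sum of their plaintexts. It is not one of the lattice-based schemes (BFV, BGV, CKKS) used by the libraries discussed in this section, and the tiny primes make it completely insecure. It only illustrates the homomorphic property a tester would check, namely that the server computes on ciphertexts without ever holding the decryption key.

```python
import math
import random


def keygen(p=17, q=19):
    """Toy Paillier key generation (tiny primes: insecure, for illustration only)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)   # lcm(p - 1, q - 1)
    mu = pow(lam, -1, n)          # valid because the generator is g = n + 1
    return n, (lam, mu)


def encrypt(n, m, rng=random):
    """c = g^m * r^n mod n^2 with g = n + 1 and random r coprime to n (0 <= m < n)."""
    n2 = n * n
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2


def decrypt(n, priv, c):
    """m = L(c^lam mod n^2) * mu mod n, where L(u) = (u - 1) // n."""
    lam, mu = priv
    u = pow(c, lam, n * n)
    return ((u - 1) // n) * mu % n


def add_encrypted(n, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts (mod n)."""
    return (c1 * c2) % (n * n)


def scale_encrypted(n, c, k):
    """Raising a ciphertext to the power k multiplies the plaintext by k (mod n)."""
    return pow(c, k, n * n)


if __name__ == "__main__":
    n, priv = keygen()
    c_sum = add_encrypted(n, encrypt(n, 20), encrypt(n, 22))   # server side, no key
    print(decrypt(n, priv, c_sum))                            # client side
```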

Penetration testing and HE are connected: penetration testing detects security loopholes, especially those related to information disclosure during data processing, while HE offers a strong cryptographic method to address them [90]. Penetration testing techniques for DL systems aim to uncover whether a model's secret parameters or confidential training data can be exposed. Once such flaws are identified, HE can be applied as a strong protective measure, allowing the server to process data during analysis without ever seeing the original plaintext inputs.

HE ensures that both input and output data remain encrypted throughout computation, thereby preventing access to sensitive information during processing [90]. Penetration testing can verify whether HE is correctly integrated into the model's pipeline by confirming that no information leaks during training or updates. Moreover, when assessing the capacity and efficiency of DL models, penetration testing should take into account the computational cost that HE imposes. Coordinating penetration testing and HE is therefore important for the security and privacy protection of DL systems.

The purpose of penetration testing for HE-based DL systems is to inspect the privacy protection provided by the HE scheme and its effect on computational cost. The relevant testing areas are:

– Evaluating the encryption scheme's resistance to cryptographic attacks, checking whether the encryption could be compromised, and determining whether any resulting harm can be mitigated.

– Analyzing how much computational complexity is added by HE.

– Verifying that encrypted computation produces accurate results and that HE works well with state-of-the-art DL frameworks (e.g., TensorFlow and PyTorch).

Tools for HE: Within penetration testing workflows, HE tools support assessment of confidentiality guarantees during inference and data processing under adversarial observation. Several HE libraries have been developed to facilitate its application in practical scenarios. Some of the most widely used are described below:

Microsoft SEAL (Simple Encrypted Arithmetic Library) is an open-source C++ library for HE developed by Microsoft Research. It supports the CKKS (Cheon-Kim-Kim-Song) scheme for approximate floating-point computations and the BFV (Brakerski/Fan-Vercauteren) scheme for exact integer arithmetic [98]. Its efficient and flexible design makes it suitable for privacy-preserving programs and secure cloud services, and it is widely adopted in both academic research and practical applications due to its performance and adaptability.

PALISADE is a lattice cryptography library written in C++, developed with DARPA funding, that implements a variety of HE schemes, such as BFV, BGV, CKKS, FHEW, and TFHE [99]. PALISADE was designed with a modular architecture, making it suitable for both research and production contexts, especially in industry and government settings where powerful encryption features are needed. Its broad scheme support, which lets developers experiment with various HE techniques, makes PALISADE an effective tool for advanced cryptography research.

HElib (Homomorphic-Encryption Library) is an open-source C++ library developed by IBM that focuses on the BGV and CKKS schemes. It places particular emphasis on bootstrapping, a technique that enables an unlimited number of computations on encrypted data [100]. HElib is frequently used in academic and industrial research when fine-grained control over fully homomorphic encryption (FHE) is required, owing to its optimizations for complex computations. These strengths make it a strong option for sophisticated FHE experiments and implementations, although it can have a steeper learning curve than SEAL or TenSEAL.

As a next-generation successor to PALISADE, OpenFHE (Open Fully Homomorphic Encryption) is an open-source C++ library for FHE that offers enhanced flexibility, modularity, and efficiency [97]. It supports a wide range of FHE schemes, such as BFV, BGV, and CKKS for arithmetic operations, as well as FHEW and TFHE. In addition to its modular design, OpenFHE allows researchers to seamlessly incorporate new cryptographic methods and improvements, while maintaining backward compatibility with PALISADE to simplify migration.

4.3.3 FL (Federated Learning)

In Federated Learning (FL), training data is not stored on a single central server but remains on personal devices such as smartphones and IoT devices, where deep learning models are trained locally [90,101,102]. Instead of sharing raw data, clients send only model updates to a central server for aggregation. Since raw data never leaves local devices, this approach reduces the risk of data disclosure [36,95,103,104].
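Server-side aggregation in FL commonly uses Federated Averaging (FedAvg): the global model is the average of the client models, weighted by each client's number of training samples. The sketch below shows only that aggregation step on flattened weight vectors. Local training, communication, and client sampling are omitted, and the function name is illustrative.

```python
def fed_avg(client_updates):
    """Federated Averaging aggregation step.

    client_updates: list of (weights, num_samples) pairs, where weights is a
    flat list of floats trained locally by one client. Returns the global
    weights as the sample-size-weighted mean of the client weights.
    """
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [
        sum(w[i] * n for w, n in client_updates) / total
        for i in range(dim)
    ]


if __name__ == "__main__":
    # Two hypothetical clients holding 1 and 3 training samples respectively.
    print(fed_avg([([1.0, 2.0], 1), ([3.0, 4.0], 3)]))
```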

FL has emerged as an effective approach for privacy-preserving deep learning [105–108]. It is especially beneficial in sensitive industries such as banking and healthcare, where regulatory frameworks like HIPAA and GDPR demand strong data protection and compliance [109,110]. However, its distributed, collaborative structure introduces new privacy and security vulnerabilities, which makes penetration testing critical for assessing and improving the resilience of FL systems.

Penetration testing in FL is necessary to assess vulnerabilities such as gradient leakage, client or server manipulation, poisoning attacks, membership inference, and model inversion attacks. These attacks can either degrade model accuracy or steal private information by exploiting the data shared during training (e.g., model updates or gradients). For instance, a malicious actor posing as a legitimate participant in the FL system might attempt to infer private user data from the updates or inject poisoned gradients to corrupt the global model.
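Gradient leakage can be surprisingly direct. For a fully connected layer trained on a single example, the weight gradient is the outer product of the output error δ and the input x, while the bias gradient is δ itself. Dividing any row of the weight gradient by the matching bias gradient therefore returns x exactly. The sketch below demonstrates this with a toy layer and squared-error loss. Layer sizes and values are illustrative; attacks on batched updates of deep networks instead rely on optimization-based reconstruction.

```python
def linear_layer_gradients(W, b, x, y):
    """Gradients of 0.5 * ||W x + b - y||^2 for a single training example."""
    out = [sum(wij * xj for wij, xj in zip(row, x)) + bi for row, bi in zip(W, b)]
    delta = [oi - yi for oi, yi in zip(out, y)]       # dLoss/d(out)
    grad_W = [[di * xj for xj in x] for di in delta]  # outer product delta x^T
    grad_b = list(delta)                              # dLoss/db = delta
    return grad_W, grad_b


def reconstruct_input(grad_W, grad_b, eps=1e-12):
    """Recover x from a shared single-example update: row_i(grad_W) / grad_b[i]."""
    for row, gb in zip(grad_W, grad_b):
        if abs(gb) > eps:
            return [g / gb for g in row]
    return None   # all output errors were zero, so nothing can be recovered


if __name__ == "__main__":
    W = [[0.5, -0.2, 0.1], [0.3, 0.8, -0.4]]   # hypothetical layer weights
    b = [0.1, -0.1]
    private_x = [1.5, -2.0, 0.25]             # a client's private input
    gW, gb = linear_layer_gradients(W, b, private_x, [0.0, 1.0])
    print(reconstruct_input(gW, gb))
```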

Incorporating penetration testing into FL helps to actively assess the effectiveness of privacy-enhancing technologies such as HE, secure multi-party computation (SMPC), secure aggregation, and differential privacy. Although these methods aim to hide or secure private information, their implementations may still contain vulnerabilities [35,111]. Penetration testing helps verify whether hostile tactics can still compromise security and whether the deployed protections offer adequate security against realistic attack models. Penetration testing thus acts as a safeguard to ensure that FL continues to uphold privacy even when attackers attempt to break it.
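The core idea of secure aggregation can be sketched with pairwise additive masks. Each pair of clients derives a shared random mask that one client adds and the other subtracts, so individual masked updates look random while the masks cancel in the server's sum. The sketch assumes integer-quantized updates, arithmetic modulo 2^32, and a hypothetical `shared_seed(i, j)` function standing in for a key-agreement protocol. Real protocols also handle client dropouts, a case a tester should probe explicitly.

```python
import random

MODULUS = 2 ** 32  # updates are assumed to be integer-quantized


def mask_update(client_id, update, all_ids, shared_seed):
    """Add pairwise masks: +mask for each peer with a larger id, -mask otherwise.

    shared_seed(i, j) with i < j is a hypothetical stand-in for a secret that
    clients i and j derive via key agreement; the server never learns it.
    """
    masked = [u % MODULUS for u in update]
    for other in all_ids:
        if other == client_id:
            continue
        lo, hi = min(client_id, other), max(client_id, other)
        pair_rng = random.Random(shared_seed(lo, hi))   # identical stream for both peers
        mask = [pair_rng.randrange(MODULUS) for _ in update]
        sign = 1 if client_id == lo else -1
        masked = [(m + sign * r) % MODULUS for m, r in zip(masked, mask)]
    return masked


def aggregate(masked_updates):
    """Server-side sum; the pairwise masks cancel, revealing only the total."""
    total = [0] * len(masked_updates[0])
    for upd in masked_updates:
        total = [(t + u) % MODULUS for t, u in zip(total, upd)]
    return total


if __name__ == "__main__":
    seed = lambda i, j: 1000 * i + j     # illustrative only; not a real key exchange
    updates = {1: [5, 10, 15], 2: [1, 2, 3], 3: [100, 200, 300]}
    masked = [mask_update(cid, u, list(updates), seed) for cid, u in updates.items()]
    print(masked[0], aggregate(masked))
```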

The objective of penetration testing in FL is to evaluate the privacy as well as security of data exchanges between the central server and participating devices [112,113]. The key testing areas include:

– Verifying that model updates are transmitted over encrypted channels (e.g., TLS) to protect against eavesdropping.

– Inspecting model updates for potential disclosure of private information about clients' local data. A penetration tester can assess whether attackers could reconstruct original data from observable model updates.

– Assessing how resilient the system is to malicious clients that could damage the model by submitting corrupted updates.
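To measure resilience to malicious clients, a tester can compare how far a crafted update moves the aggregate under different aggregation rules. The sketch below contrasts plain averaging with the coordinate-wise median, one robust aggregation rule from the literature. The update values are illustrative, and the review does not prescribe a particular defence.

```python
import statistics


def mean_aggregate(updates):
    """Coordinate-wise mean of client update vectors."""
    return [statistics.fmean(col) for col in zip(*updates)]


def median_aggregate(updates):
    """Coordinate-wise median of client update vectors (a robust aggregator)."""
    return [statistics.median(col) for col in zip(*updates)]


def malicious_influence(aggregate, honest, malicious):
    """Largest per-coordinate shift in the aggregate caused by malicious updates."""
    clean = aggregate(honest)
    attacked = aggregate(honest + malicious)
    return max(abs(a - c) for a, c in zip(attacked, clean))


if __name__ == "__main__":
    honest = [[1.0, 1.0], [1.2, 0.9], [0.9, 1.1]]
    malicious = [[100.0, -100.0]]            # crafted poisoning update
    for agg in (mean_aggregate, median_aggregate):
        print(agg.__name__, malicious_influence(agg, honest, malicious))
```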

Through such assessments, penetration testing facilitates the identification of weaknesses in FL systems and helps preserve privacy during the collaborative training process [38].

Tools for FL: FL frameworks provide an evaluation surface for penetration testing by enabling analysis of leakage, poisoning, and manipulation risks in distributed training environments. A number of frameworks and tools have been created to simplify the implementation of FL. These frameworks make it easier to train models across distributed devices while preserving privacy and keeping data secure [114]. An overview of key tools and their features is provided below:

TensorFlow Federated (TFF), developed by Google, is a Python-based framework for implementing FL algorithms with TensorFlow [115]. It offers high-level APIs for testing and simulating FL ideas, making it particularly useful for research, and it simplifies experimentation by simulating multiple clients with their own datasets [116]. It allows users to add privacy features such as differential privacy and includes widely used training algorithms such as Federated Averaging (FedAvg). It also works seamlessly with TensorFlow models, which makes it easy to reuse existing machine learning architectures in a privacy-friendly environment.

PySyft, developed by OpenMined, is a Python-based library that integrates FL with techniques such as differential privacy, homomorphic encryption, and secure multiparty computation (SMPC) to enable privacy-preserving machine learning. It integrates well with widely used machine learning frameworks such as TensorFlow and PyTorch [115]. It helps preserve user confidentiality by enabling models to be trained on distributed data, and it also supports confidential inference without disclosing private information.
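The SMPC idea behind such libraries can be illustrated with additive secret sharing. The sketch below is plain Python and is not PySyft's API: each client splits its fixed-point-encoded update into random shares modulo a prime, each of three hypothetical, non-colluding aggregators sums only the shares it receives, and only the combination of all aggregator totals reveals the aggregate. The modulus and fixed-point scale are illustrative assumptions.

```python
import secrets

PRIME = 2**61 - 1   # field modulus for share arithmetic (illustrative choice)
SCALE = 10**6       # fixed-point scale for encoding real-valued updates

def encode(x):
    return round(x * SCALE) % PRIME

def decode(v):
    # Map from the field back to a signed integer, then undo the scaling.
    if v > PRIME // 2:
        v -= PRIME
    return v / SCALE

def share(value, n_parties):
    """Split an encoded value into n additive shares that sum to it mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares

def secure_sum(client_values, n_parties=3):
    # party_totals[j] is all aggregator j ever sees: a sum of uniformly random-looking shares.
    party_totals = [0] * n_parties
    for x in client_values:
        for j, s in enumerate(share(encode(x), n_parties)):
            party_totals[j] = (party_totals[j] + s) % PRIME
    return decode(sum(party_totals) % PRIME)

updates = [0.25, -1.5, 0.75]   # one model-update coordinate per client
print(secure_sum(updates))     # only the aggregate is revealed
```

Running it prints -0.5, the sum of the three updates. A penetration test of such a setup would focus on the assumptions the sketch makes explicit, for example whether aggregators can collude or whether the revealed aggregate alone still leaks information.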

Flower is a flexible, framework-agnostic FL library suited to both large-scale experiments and real-world deployments. It is compatible with a variety of machine learning frameworks, including PyTorch, TensorFlow, and scikit-learn [115]. Flower supports deployment across heterogeneous devices and allows developers to design custom strategies for aggregating updates from different clients.
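Custom aggregation is also where a penetration tester probes the malicious-client scenario listed among the key testing areas. The NumPy sketch below does not use Flower's strategy API; it simply compares plain averaging with coordinate-wise median aggregation (a standard robust alternative, chosen here for illustration) when one of five hypothetical clients submits a boosted, poisoned update. The honest target and attack values are assumptions.

```python
import numpy as np

def mean_aggregate(updates):
    return np.mean(updates, axis=0)

def median_aggregate(updates):
    # Coordinate-wise median: each parameter is aggregated independently,
    # so a minority of extreme values cannot drag the result arbitrarily far.
    return np.median(updates, axis=0)

rng = np.random.default_rng(2)
target = np.array([1.0, 1.0])               # what honest clients roughly agree on
honest = [target + 0.05 * rng.normal(size=2) for _ in range(4)]
poisoned = np.array([-50.0, 50.0])          # attacker's boosted malicious update
updates = np.stack(honest + [poisoned])

for name, agg in [("mean", mean_aggregate), ("median", median_aggregate)]:
    result = agg(updates)
    deviation = np.linalg.norm(result - target)
    print(f"{name:>6}: {np.round(result, 3)}  deviation from honest target: {deviation:.3f}")
```

A single attacker moves the mean far from the honest consensus while the median barely changes, which is the kind of measurable contrast a tester would report when assessing an aggregation strategy.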

FATE (Federated AI Technology Enabler), an industrial-grade FL platform developed by WeBank AI, enables secure data processing and model training across multiple organizations. Depending on how data are distributed, FATE supports horizontal FL, vertical FL, and federated transfer learning. It protects training data with privacy techniques including secure multiparty computation (SMPC) and differential privacy (DP) [115]. Furthermore, FATE supports a variety of machine learning models, such as neural networks, logistic regression, and decision trees.

OpenFL (Open Federated Learning), developed by Intel, enables different organizations to collaboratively train machine learning models without sharing their raw data [117]. OpenFL uses a decentralized approach in which data remain local, thereby enhancing privacy. It is straightforward to set up in practical environments because it supports container-based deployments. Moreover, OpenFL emphasizes data security by using hardware-protected memory regions known as enclaves, together with secure computation, to protect data during processing.

Table 6 maps privacy-preserving techniques and associated tools to standard penetration testing life-cycle phases (reconnaissance, exploitation, and post-exploitation) following PTES and NIST methodologies. The table highlights the deep learning components under evaluation and the primary testing objectives for assessing privacy leakage, model robustness, and regulatory impact.

By integrating DP, HE, and FL within a penetration-testing-oriented evaluation framework, the proposed hybrid approach addresses the limitations of individual tools by jointly targeting privacy leakage, robustness under adversarial testing, and deployment-level constraints across the deep learning life-cycle.

4.4 An Illustrative Case Study: Privacy-Oriented Penetration Testing of a DL Model

To demonstrate the practical applicability of the proposed penetration testing framework, we present an illustrative case study of a deep learning image classification model deployed via a public inference API in a healthcare-like setting [118]. The model is assumed to be trained on sensitive medical imaging data and made accessible to external users for prediction services [119]. For clarity and brevity, the case study focuses on the core penetration testing phases in which privacy leakage manifests (reconnaissance, exploitation, and post-exploitation), while threat modeling and reporting are incorporated implicitly through attack selection and evaluation metrics.

Reconnaissance Phase: The penetration testing process begins by identifying exposed model interfaces, available output information (e.g., confidence scores), and access constraints. Public API documentation and response formats are analyzed to determine potential privacy leakage vectors [120].
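A central reconnaissance question is how much of the model's output distribution the API returns, since confidence scores are the main signal for the attacks used later. The sketch below classifies a sample JSON response by exposure level. The field names (`label`, `scores`, `logits`) and the example payloads are hypothetical; a real API will use its own schema, which the tester reads from the documentation.

```python
import json

def output_exposure(response_text):
    """Return a coarse exposure level for one (hypothetical) inference API response."""
    body = json.loads(response_text)
    if "logits" in body:
        return "raw logits (highest leakage risk)"
    scores = body.get("scores")
    if isinstance(scores, dict) and scores:
        values = list(scores.values())
        # Several scores summing to ~1 suggests the full softmax vector is returned.
        if len(values) > 1 and abs(sum(values) - 1.0) < 1e-3:
            return "full probability vector"
        return f"top-{len(values)} confidence scores"
    if "label" in body:
        return "label only (lowest leakage risk)"
    return "unknown format"

samples = [
    '{"label": "pneumonia"}',
    '{"label": "pneumonia", "scores": {"pneumonia": 0.91}}',
    '{"scores": {"normal": 0.07, "pneumonia": 0.91, "other": 0.02}}',
]
for s in samples:
    print(output_exposure(s))
```

The resulting exposure level informs attack selection: full probability vectors enable confidence-based membership inference and inversion, whereas label-only outputs push the tester toward costlier label-only attacks.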

Exploitation Phase: During exploitation, adversarial techniques such as membership inference and model inversion attacks are executed using DL-specific security tools (e.g., IBM Adversarial Robustness Toolbox). These attack classes are well-established mechanisms for inferring whether specific data records were included in the training set or for reconstructing sensitive features of the training data from model outputs [37]. Attack success is evaluated using metrics including membership inference accuracy, reconstruction similarity, and attack success rate.
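The exploitation step can be illustrated with the simplest membership inference attack, a confidence-threshold test, shown here in plain NumPy rather than through ART's attack classes. The sketch assumes the tester has already queried the model and collected its confidence on the true label for audit records known to be members and non-members. The threshold is chosen on that labeled audit set, and the function reports membership inference accuracy and the attack advantage (true positive rate minus false positive rate). The confidence arrays are made-up placeholders, not measurements.

```python
import numpy as np

def threshold_attack(member_conf, nonmember_conf):
    """Predict 'member' when confidence on the true label is at least a threshold.

    Choosing the threshold on the labeled audit set gives an optimistic
    (worst case for the defender) estimate of leakage.
    """
    conf = np.concatenate([member_conf, nonmember_conf])
    is_member = np.concatenate([np.ones(len(member_conf)), np.zeros(len(nonmember_conf))])
    best_acc, best_t = 0.0, None
    for t in np.unique(conf):
        acc = np.mean((conf >= t) == is_member)
        if acc > best_acc:
            best_acc, best_t = acc, t
    tpr = np.mean(member_conf >= best_t)
    fpr = np.mean(nonmember_conf >= best_t)
    return {"threshold": float(best_t), "accuracy": float(best_acc),
            "advantage": float(tpr - fpr)}

# Placeholder confidences: members tend to receive higher confidence (overfitting).
members = np.array([0.99, 0.97, 0.95, 0.92, 0.88, 0.60])
nonmembers = np.array([0.91, 0.75, 0.70, 0.64, 0.55, 0.40])
print(threshold_attack(members, nonmembers))
```

An accuracy near 0.5 and an advantage near 0 indicate little measurable leakage on balanced audit sets; re-running the same function after a defense is applied gives the before/after comparison described in the post-exploitation phase.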

Post-Exploitation Phase: Post-exploitation analysis assesses the severity of privacy leakage by quantifying the amount of sensitive information inferred and evaluating potential regulatory impact under data protection frameworks. Defensive mechanisms, such as differential privacy–based training and output filtering, are then evaluated by re-running attacks and measuring reductions in leakage metrics and corresponding impacts on model accuracy [34].
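The differential-privacy defense re-tested in this phase is commonly DP-SGD, whose core step clips each per-example gradient to an L2 norm bound C and adds Gaussian noise with standard deviation σ·C to the summed gradients before averaging. The sketch below shows that single step in NumPy for illustration only; it is not TensorFlow Privacy's API, the gradients are random placeholders, and it omits the privacy accountant that converts σ, the sampling rate, and the number of steps into an (ε, δ) guarantee.

```python
import numpy as np

def dp_sgd_step(w, per_example_grads, lr, clip_norm, noise_multiplier, rng):
    """One DP-SGD update: per-example clipping, Gaussian noise, then averaging."""
    norms = np.linalg.norm(per_example_grads, axis=1, keepdims=True)
    # Scale down any gradient whose L2 norm exceeds clip_norm; leave others unchanged.
    clipped = per_example_grads * np.minimum(1.0, clip_norm / np.maximum(norms, 1e-12))
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=w.shape)
    noisy_mean = (clipped.sum(axis=0) + noise) / len(per_example_grads)
    return w - lr * noisy_mean, clipped

rng = np.random.default_rng(3)
w = np.zeros(4)
grads = rng.normal(scale=3.0, size=(8, 4))   # placeholder per-example gradients

w_new, clipped = dp_sgd_step(w, grads, lr=0.1, clip_norm=1.0, noise_multiplier=1.1, rng=rng)
print("max clipped norm:", np.linalg.norm(clipped, axis=1).max())
print("updated weights:", np.round(w_new, 3))
```

Clipping bounds each record's influence on the update and the noise masks what remains; in a post-exploitation re-test, the model retrained with this step is attacked again and both the drop in leakage metrics and the drop in accuracy are recorded.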

This illustrative case study demonstrates how the proposed framework extends beyond a conceptual workflow by enabling structured, lifecycle-based assessment of privacy risks and defenses under realistic attack scenarios. The case study is intended to showcase practical applicability rather than provide exhaustive empirical benchmarking.

Research questions provide the framework that guides systematic investigation of complex challenges in deep learning privacy and security. In this review, they serve three purposes: synthesizing the diverse findings of the literature analysis, identifying critical knowledge gaps that require immediate attention, and establishing clear directions for future research. Well-targeted research questions also help researchers and practitioners focus on the most pressing issues, provide measurable objectives for evaluating proposed solutions, and facilitate the translation of theoretical insights into practical implementations for real-world deep learning deployments.

The following research questions have been systematically derived based on the literature review findings and represent the most critical areas where current knowledge and practice fall short of addressing the evolving privacy and security landscape in deep learning systems.

Q1. What limitations do existing privacy-preserving mechanisms exhibit when evaluated through penetration testing across the deep learning lifecycle?

Penetration testing reveals that existing privacy-preserving mechanisms provide partial, phase-dependent protection across the deep learning life-cycle [36,120,121]. Differential privacy is effective at reducing inference-based leakage from trained models, but it does not prevent model extraction through inference-time queries or poisoning of the training data. Homomorphic encryption protects data during computation but does not prevent privacy leakage through exposed model outputs or APIs. Federated learning limits raw data sharing; however, penetration testing of the aggregation and update phases exposes susceptibility to gradient leakage, membership inference, and poisoning attacks. These findings demonstrate that no individual privacy mechanism provides comprehensive protection when assessed under adversarial testing conditions, underscoring the need for lifecycle-aware evaluation.

Q2. How can penetration testing be used to evaluate and balance the trade-off between privacy protection and model accuracy in deep learning systems under realistic attack scenarios?

Penetration testing enables quantitative evaluation of the privacy–accuracy trade-off by subjecting models to realistic adversarial conditions. Testing shows that stronger privacy controls, such as increased noise in differential privacy, reduce attack success rates but introduce measurable accuracy degradation [122]. Conversely, configurations optimized for performance exhibit higher vulnerability to inference and extraction attacks. By systematically varying privacy parameters and measuring outcomes such as attack success probability, privacy leakage magnitude, and accuracy loss, penetration testing supports evidence-based calibration of privacy mechanisms that balances security requirements with operational utility.
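The direction of this trade-off can be seen in the classical Gaussian mechanism, whose noise standard deviation is σ = Δ · sqrt(2 ln(1.25/δ)) / ε for L2 sensitivity Δ and 0 < ε < 1. The sketch below sweeps ε for a mean query over values bounded in [0, 1] and reports σ and the empirical error. It illustrates only the utility side of the trade-off; in a penetration test each setting would also be paired with re-running the attacks to measure leakage. The dataset, δ, and the ε grid are assumptions.

```python
import math
import numpy as np

def gaussian_sigma(sensitivity, epsilon, delta):
    """Noise scale of the classical Gaussian mechanism (valid for 0 < epsilon < 1)."""
    if not 0 < epsilon < 1:
        raise ValueError("classical bound assumes 0 < epsilon < 1")
    return sensitivity * math.sqrt(2 * math.log(1.25 / delta)) / epsilon

rng = np.random.default_rng(4)
data = rng.uniform(0.0, 1.0, size=1000)   # values bounded in [0, 1]
true_mean = data.mean()
sensitivity = 1.0 / len(data)             # replacing one record moves the mean by at most 1/n
delta = 1e-5

for eps in (0.1, 0.3, 0.5, 0.9):
    sigma = gaussian_sigma(sensitivity, eps, delta)
    noisy_means = true_mean + rng.normal(0.0, sigma, size=2000)   # 2000 independent releases
    error = np.abs(noisy_means - true_mean).mean()
    print(f"epsilon={eps:.1f}  sigma={sigma:.5f}  mean abs error={error:.5f}")
```

Smaller ε (stronger privacy) means proportionally larger σ and larger error, which mirrors the accuracy degradation observed when DP noise is increased during training.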

Q3. Which deep learning–specific attack vectors are most effective at different penetration testing phases, and what system-level factors contribute to their success?

Penetration testing indicates that the effectiveness of deep learning–specific attacks varies across testing phases and system configurations. Model inversion and membership inference attacks are most effective during exploitation and post-exploitation phases, particularly in systems exposing probabilistic outputs via public APIs [67,120]. Model extraction attacks primarily succeed during iterative exploitation, where unrestricted querying enables functional replication. Data poisoning attacks are most impactful during training and update phases, especially in distributed or federated learning environments lacking robust validation. Attack success is strongly influenced by model architecture, API exposure, training pipeline design, and data governance assumptions, highlighting the importance of system-level analysis in penetration testing.
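The observation that unrestricted querying enables functional replication can be shown with an equation-solving extraction attack on the simplest possible target: a hypothetical black-box linear scoring API that returns raw real-valued scores. With d input features, d + 1 linearly independent queries determine the weights and bias exactly. Deep models require far more queries and yield only approximate replicas, so this sketch illustrates the principle rather than a realistic query budget; the class and function names are illustrative.

```python
import numpy as np

class BlackBoxScorer:
    """Hypothetical victim API: exposes only predict(), hiding w and b."""
    def __init__(self, rng, d):
        self._w = rng.normal(size=d)
        self._b = rng.normal()
        self.queries = 0

    def predict(self, X):
        self.queries += len(X)
        return X @ self._w + self._b

def extract_linear(api, d, rng):
    # d + 1 random (almost surely independent) queries, then solve [X, 1] @ theta = y.
    X = rng.normal(size=(d + 1, d))
    y = api.predict(X)
    A = np.hstack([X, np.ones((d + 1, 1))])
    theta, *_ = np.linalg.lstsq(A, y, rcond=None)
    return theta[:-1], theta[-1]

rng = np.random.default_rng(5)
d = 10
api = BlackBoxScorer(rng, d)
w_hat, b_hat = extract_linear(api, d, rng)
used = api.queries   # queries spent on extraction only

X_test = rng.normal(size=(500, d))
agreement = np.mean(np.sign(api.predict(X_test)) == np.sign(X_test @ w_hat + b_hat))
print(f"queries used for extraction: {used}, test-set label agreement: {agreement:.3f}")
```

The query counter is the quantity a tester would compare against the API's rate limits; returning only labels or rounding scores raises the number of queries an attacker needs.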

6 Challenges and Future Directions

The intersection of deep learning technologies with cybersecurity presents unprecedented challenges that fundamentally diverge from traditional security paradigms. As DL systems become increasingly integrated into critical infrastructure and sensitive applications, the security community faces a complex landscape of emerging vulnerabilities that conventional defensive strategies cannot adequately address. These challenges are compounded by the rapid pace of AI advancement, which often outpaces the development of corresponding security measures, creating persistent gaps in protection capabilities. The following analysis examines the primary obstacles that currently impede effective security assessment and privacy protection in deep learning environments, each representing a critical area where current approaches fall short of addressing the unique requirements of AI system security.

1. Limitations of Traditional Penetration Testing Techniques. The unique vulnerabilities inherent in deep learning models, such as data poisoning, membership inference, and model inversion attacks, are not adequately addressed by traditional cybersecurity frameworks (e.g., OWASP, PTES, NIST, and OSSTMM), which were originally designed for conventional software systems and network infrastructures [32]. These established frameworks lack the specialized methodologies and assessment criteria required to evaluate AI-specific attack vectors that target model training processes, inference mechanisms, and data handling procedures. Consequently, these frameworks often fail to provide a comprehensive evaluation of deep learning system security, leaving critical vulnerabilities undetected and organizations exposed to sophisticated AI-targeted attacks.

2. Barriers to Implementing Privacy Methods. Although numerous privacy-preserving strategies have been proposed and validated in theoretical contexts, their real-world implementation is substantially complicated by high computational cost, system complexity, and difficulty integrating them into production environments [123]. The gap between theoretical privacy guarantees and practical deployment remains substantial, and many organizations struggle to balance privacy requirements with operational efficiency and system performance. Furthermore, the lack of robust, evidence-based validation studies and standardized implementation guidelines hampers wider adoption and undermines organizational confidence in these methods, creating a cycle in which promising privacy technologies remain underutilized despite their theoretical benefits.

3. Continuous Evolution of Attacks. DL systems are increasingly vulnerable to powerful, dynamic threats that extend beyond traditional threat models and evolve at an unprecedented pace [124]. The adversarial landscape in AI security is characterized by rapid innovation in attack methodologies, so defensive measures quickly become obsolete as attackers develop more sophisticated techniques. Meanwhile, most existing security tools remain reactive, typically responding only after a DL system has been compromised, and they lack the predictive capabilities required to identify and prevent new, advanced attacks, particularly those that exploit the unique characteristics of machine learning algorithms and training processes.

4. Lack of Tools and Technologies. Current privacy-preserving toolkits, including PySyft, TensorFlow Privacy, and ART, provide only limited support for comprehensively securing deep learning systems in production environments [123]. These tools, while valuable for research and proof-of-concept implementations, often lack essential enterprise features such as scalability, interoperability, and seamless integration with diverse deep learning architectures and deployment platforms. As a result, continuous security monitoring and comprehensive testing remain significant challenges for organizations, particularly those operating large-scale AI systems that require robust, automated security assessment.

5. Regulatory Compliance. DL systems face considerable challenges in complying with stringent regulatory standards such as GDPR and HIPAA, which impose strict requirements for data protection, user privacy, and system accountability. To implement technical measures that ensure privacy protection, DL systems must support complex capabilities such as continuous user activity monitoring, comprehensive action logging, and sustained compliance with evolving legal requirements [125]. However, integrating these capabilities into existing DL architectures and workflows is often difficult in practice, particularly when organizations must balance compliance requirements against model performance, operational efficiency, and development timelines.