Open Access

ARTICLE

Painted Wolf Optimization: A Novel Nature-Inspired Metaheuristic Algorithm for Real-World Optimization Problems

Faculty of Information Technology and Electrical Engineering, University of Oulu, Oulu, Finland

* Corresponding Author: Saeid Sheikhi. Email:

(This article belongs to the Special Issue: Artificial Intelligence Algorithms and Applications, 2nd Edition)

Computers, Materials & Continua 2026, 87(2), 7 https://doi.org/10.32604/cmc.2026.077788

Received 17 December 2025; Accepted 16 January 2026; Issue published 12 March 2026

Abstract

Metaheuristic optimization algorithms remain essential for solving complex real-world problems, yet existing methods often struggle to balance exploration and exploitation across diverse problem landscapes. This paper proposes a novel nature-inspired metaheuristic optimization algorithm named the Painted Wolf Optimization (PWO) algorithm. PWO is inspired by the group behavior and hunting strategy of painted wolves, also known as African wild dogs, and in particular by their unique consensus-based voting rally, a behavior fundamentally distinct from the social dynamics of grey wolves. In this process, pack members explore different areas to find prey and then hold a pre-hunting voting rally, influenced by the alpha member, to determine who will begin the hunt and attack the prey. The efficiency of PWO is evaluated against other well-known optimization algorithms on 33 test functions, including the Congress on Evolutionary Computation (CEC) 2017 suite, and on several real-world engineering design cases. The algorithm is further tested on optimization problems with large, unknown search spaces, including a cybersecurity application: training a machine learning-based intrusion detection system (ML-IDS), where it achieves an accuracy of 0.90 and an F-measure of 0.9290. Statistical analyses using the Wilcoxon signed-rank test (all

Keywords

In recent years, rapid technological development has increased the complexity of real-life problems and heightened the need for more effective metaheuristic techniques to find optimal solutions [1,2]. A metaheuristic is a higher-level procedure that generates or guides heuristics to find the best combination of decision variables for a complex optimization problem [3]. The popularity of these algorithms has increased dramatically because, compared with classical techniques, they perform well on large-scale, complex problems [4]. In general, metaheuristic techniques can be categorized into four classes: evolutionary-based, human-based, physics-based, and swarm-based algorithms [5].

Evolutionary-based algorithms are primarily inspired by the theory of natural selection. In these algorithms, an initial population evolves through evolutionary processes that improve its fitness: parents are selected at random from the current population and used to produce offspring, which form a new generation that inherits their characteristics [6]. The most common operators in these processes are selection, crossover, and mutation. Widely used evolutionary-based optimization algorithms include Genetic Algorithms (GA) [10], Differential Evolution (DE) [11], Forest Optimization Algorithm (FOA) [12], Firefly Algorithm (FA) [13], Evolution Strategy (ES) [14], and Biogeography-Based Optimizer (BBO) [15]. Recent population-based optimizers, such as the Chaotic Arithmetic Optimization Algorithm (CAOA) [7], Crested Porcupine Optimizer (CPO) [8], and Hippopotamus Optimization (HO) [9], have further advanced population evolution strategies on complex benchmark suites. Evolutionary algorithms often provide near-optimal solutions for many problems because they make no assumptions about the underlying fitness landscape.

Human-based methods are algorithms explicitly inspired by human social behavior or by concepts that humans have developed [16]. Popular human-based techniques proposed on this basis include Poor and Rich Optimization (PRO) [19], Group Teaching Optimization Algorithm (GTOA) [20], Teaching-Learning-Based Optimization (TLBO) [21], Queuing Search Algorithm (QSA) [22], and Group Counseling Optimization (GCO) [23]. More recently, the Preschool Education Optimization Algorithm (PEOA) [17] and Dragon Boat Optimization (DBO) [18] have been proposed to emulate teacher-student dynamics and coordinated team-paddling behavior, respectively.

Physics-based approaches encompass algorithms inspired by physical or chemical phenomena. These methods direct search agents using rules and formulae derived from physical laws. Recent physics-driven optimizers, including the Energy Valley Optimizer (EVO) [24] and the Snow Ablation Optimizer (SAO) [25], have demonstrated effective search behaviors by modeling particle decay processes and heat-induced phase changes. Other optimization algorithms based on this principle include Galactic Swarm Optimization (GSO) [26], Henry Gas Solubility Optimization (HGSO) [27], Atom Search Optimization (ASO) [28], Electro-Search algorithm (ES) [29], Optics Inspired Optimization (OIO) [30], Water Evaporation Optimization (WEO) [31], Equilibrium Optimizer (EO) [32], Lévy flight distribution (LFD) [33], Multi-Verse Optimizer (MVO) [34], Water Cycle Algorithm (WCA) [35], Thermal Exchange Optimization (TEO) [36], and Gravitational Search Algorithm (GSA) [37].

Swarm intelligence-based algorithms emulate the social behavior, interaction, and communication within groups of plants, animals, or other creatures. The social behaviors most often modeled in this approach are hunting, foraging, memorizing, and mating [38]. Because communication and information sharing are vital to interactions between animals, swarm-based algorithms share information among multiple agents across iterations to search efficiently [39]. New swarm-based approaches such as the Special Forces Algorithm (SFA) [40], the Squid Game Optimizer (SGO) [41], the Artificial Satellite Search Algorithm (ASSA) [42], the Dhole Optimization Algorithm (DOA) [43], and the Chaotic Evolution Optimization (CEO) [44] have recently been introduced, drawing on tactical, game-based, and chaotic group behaviors. In recent years, swarm-based methods have grown in popularity in both algorithm development and application. Well-known algorithms in this class include Particle Swarm Optimization (PSO) [45], Squirrel Search Algorithm (SSA) [46], Harris Hawks Optimization (HHO) [47], Sine Cosine Algorithm (SCA) [48], Seagull Optimization Algorithm (SOA) [49], Whale Optimization Algorithm (WOA) [50], Moth-flame optimization (MFO) [51], Pathfinder algorithm (PFA) [52], Grey Wolf Optimizer (GWO) [53], and Emperor Penguin Optimizer (EPO) [54].

Furthermore, metaheuristic algorithms can be categorized by the number of agents they employ in the search process [55]. Population-based algorithms use multiple search agents, whereas individualist (single-solution) methods use a single search agent in each iteration. A population-based method gathers more information from its multiple agents and can therefore explore the search space more thoroughly to find the optimum. However, it requires many more objective-function evaluations, which becomes costly when each evaluation is computationally intensive. Well-known individualist metaheuristics include Tabu Search (TS) [56], Hill Climbing [57], and Simulated Annealing (SA) [58]. Metaheuristics are widely applied to optimization problems across many domains; however, no single algorithm provides the optimal solution in all cases. Some metaheuristic methods are particularly suited to specific types of problems but may be ineffective for others [59]. There is therefore a continuing need for new optimization techniques that can address a broad spectrum of current and future problems that are difficult to solve with existing algorithms.

This paper introduces a new swarm-based metaheuristic algorithm, named Painted Wolf Optimization (PWO), inspired by the social hierarchy and hunting behaviors of painted wolves, also known as African wild dogs. PWO draws on the intelligent behaviors of painted wolves in hunting and exploring as a pack, as well as on their unique pre-hunt voting system, which contributes to their success as hunters in the wild. Although several wolf-inspired algorithms exist in the literature, most notably the Grey Wolf Optimizer (GWO) [53], the proposed PWO algorithm is fundamentally distinct.

The painted wolf (Lycaon pictus) is a biologically separate species from the grey wolf (Canis lupus) and exhibits unique social behaviors, including consensus-based decision-making through sneezing rallies. PWO introduces novel algorithmic mechanisms, including a rally-based exploration-exploitation switching mechanism, dynamic alpha influence modulation, and dual probabilistic exploration strategies, that are not present in GWO or its variants.

The main contributions of this work are outlined as follows:

• A novel optimization algorithm named Painted Wolf Optimization is proposed for solving a diverse array of optimization problems.

• The efficacy of the PWO algorithm is evaluated across various domains, including 33 benchmark functions (classical and CEC 2017), three engineering design problems, and a cybersecurity application.

• The performance of the PWO algorithm is compared against well-established metaheuristic algorithms.

• The results demonstrate that the PWO algorithm provides the best solutions and outperforms the other benchmarked algorithms in efficiency.

The remainder of this paper is organized as follows: Section 2 introduces the biological inspiration behind the proposed PWO algorithm, including the pre-hunting rally, exploration, and exploitation phases, as well as the pseudocode and flowchart of PWO. Section 3 presents the experimental results and discussion, detailing the benchmark set, compared algorithms, experimental setup, and performance analyses (exploitation, exploration, local minima avoidance, convergence, scalability, and stagnation). This section also provides a summary of the performance comparisons. Section 4 explores the application of the PWO algorithm to engineering benchmark problems, while Section 5 focuses on its utility in a cybersecurity context, covering data collection, model training, experiment setup, and evaluation. Finally, Section 6 concludes the paper by summarizing the key findings and contributions.

This section presents the system model, optimization framework, and working mechanism of the proposed Painted Wolf Optimization algorithm. The mathematical formulation translates the unique biological behaviors of painted wolves into computational procedures for optimization.

The painted wolf (Lycaon pictus), also known as the African wild dog or Cape hunting dog, is an endangered species found in various African countries [60]. Renowned for their intricate social structures, painted wolves live in packs of 6 to 20 individuals. This social living confers significant advantages, particularly in hunting, where the pack's success depends largely on the cumulative endurance of its members [61]. Unlike predators that rely on stealth, speed, and strength, painted wolves use endurance-based predation, pursuing prey until it is exhausted [62]. They typically hunt larger prey as a pack, while solitary individuals may hunt smaller animals such as hares and rodents. Painted wolves are also nomadic, often covering distances of up to 50 km per day [63]. Fig. 1 shows photographs of painted wolves in their natural habitat.

Figure 1: Pictures of the individual painted wolf and its pack.

A sophisticated behavior observed in painted wolves is their consensus-driven decision-making process for hunting. Before a hunt, they engage in a pre-hunting ritual known as a rally, during which each pack member may express their willingness to participate by sneezing [64]. This process does not limit the number of sneezes per individual, allowing for a form of voting that is influenced by the social hierarchy within the pack. When an alpha painted wolf initiates the rally, a smaller number of affirmative signals (often just three sneezes) are needed for the pack to proceed with the hunt. In contrast, if a lower-ranking member starts the rally, the minimum for the hunt’s commencement typically requires ten sneezes [64]. This demonstrates that while dominant individuals can sway the decision-making process, they are not essential for initiating a rally.
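As a toy illustration of the hierarchy-dependent quorum described above (not part of the PWO algorithm itself), the rule can be written as a small Python function. The thresholds of 3 and 10 sneezes are the typical values reported in [64]; treating them as hard cut-offs is a simplifying assumption, and the name `rally_passes` is hypothetical.

```python
def rally_passes(initiator_is_alpha: bool, sneezes: int) -> bool:
    """Toy quorum rule: about 3 sneezes suffice when the alpha starts the rally,
    about 10 are needed when a lower-ranking member starts it (values from [64])."""
    quorum = 3 if initiator_is_alpha else 10
    return sneezes >= quorum
```

For example, `rally_passes(True, 5)` returns `True`, whereas `rally_passes(False, 5)` returns `False`: the same level of support is enough only when the dominant wolf initiates.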

The intricate group behavior and consensus decision-making of painted wolf packs, particularly their pre-hunting rally ritual, are the primary inspirations for the proposed Painted Wolf Optimization (PWO) algorithm. This algorithm draws on the complex hunting behaviors and strategies of painted wolves, translating these into a mathematical model that underpins the development of a novel optimization approach. In the following subsections, we detail how the natural behaviors of painted wolves are mathematically modeled and how this model informs the structure and function of the PWO algorithm.

Painted wolves search for large prey, exploring to identify potential targets before initiating a rally. In our algorithm, the current best position (the one with the best objective value found so far) is considered the alpha, or leader, of the pack. The leader's influence is modeled by a dynamic parameter, Alpha_influence, which changes at each iteration:

where t is the current iteration, VOTE_INCREMENT is a constant base influence factor (set to 0.04 in the implementation), and a(t) is a control parameter that decreases linearly from 2 to 0 over the course of the iterations.
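The linear schedule for a(t) can be written out explicitly. Assuming iterations are indexed t = 0, 1, ..., T, where T is the maximum number of iterations (the indexing convention is an assumption), one form consistent with the description is:

$$a(t) = 2\left(1 - \frac{t}{T}\right), \qquad a(0) = 2, \quad a(T) = 0.$$

For example, with T = 1000 (the setting used in the experiments), a(500) = 1.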

During the rally, each pack member contributes to a collective rally_strength based on their performance relative to the leader. A vote is cast if the following condition is met:

If the condition is true for the

2.2 Search for Prey (Exploration Phase)

After the rally, the pack decides whether to hunt or continue searching. This decision is mediated by a calculated RallyThreshold:

where

Strategy 1: The pack follows a randomly chosen member. This strategy employs a unique scalar-to-vector update. For each dimension j of the wolf’s position vector, a new position for the entire vector is calculated:

where

Strategy 2: The pack coordinates its search around the current alpha. The position is updated as follows:

where

2.3 Hunting (Exploitation Phase)

If rally_strength meets or exceeds RallyThreshold, the pack begins the hunt, converging on the leader's position. This exploitation phase is modeled as a per-dimension update:

where the update is performed for each dimension

2.4 Pseudocode of the PWO Algorithm

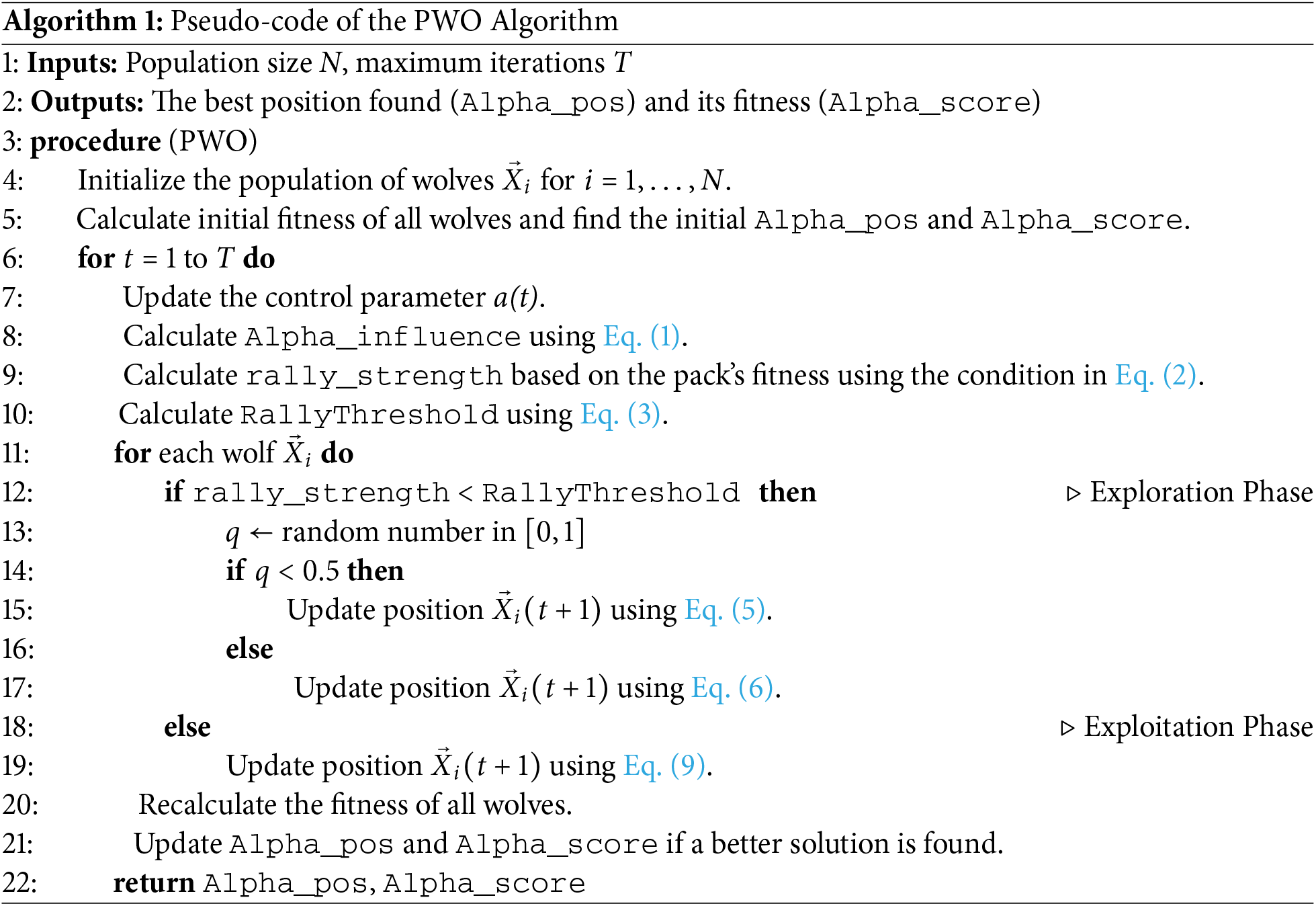

Algorithm 1 summarizes the pseudocode of the proposed PWO algorithm.

2.5 Steps and Flowchart of PWO

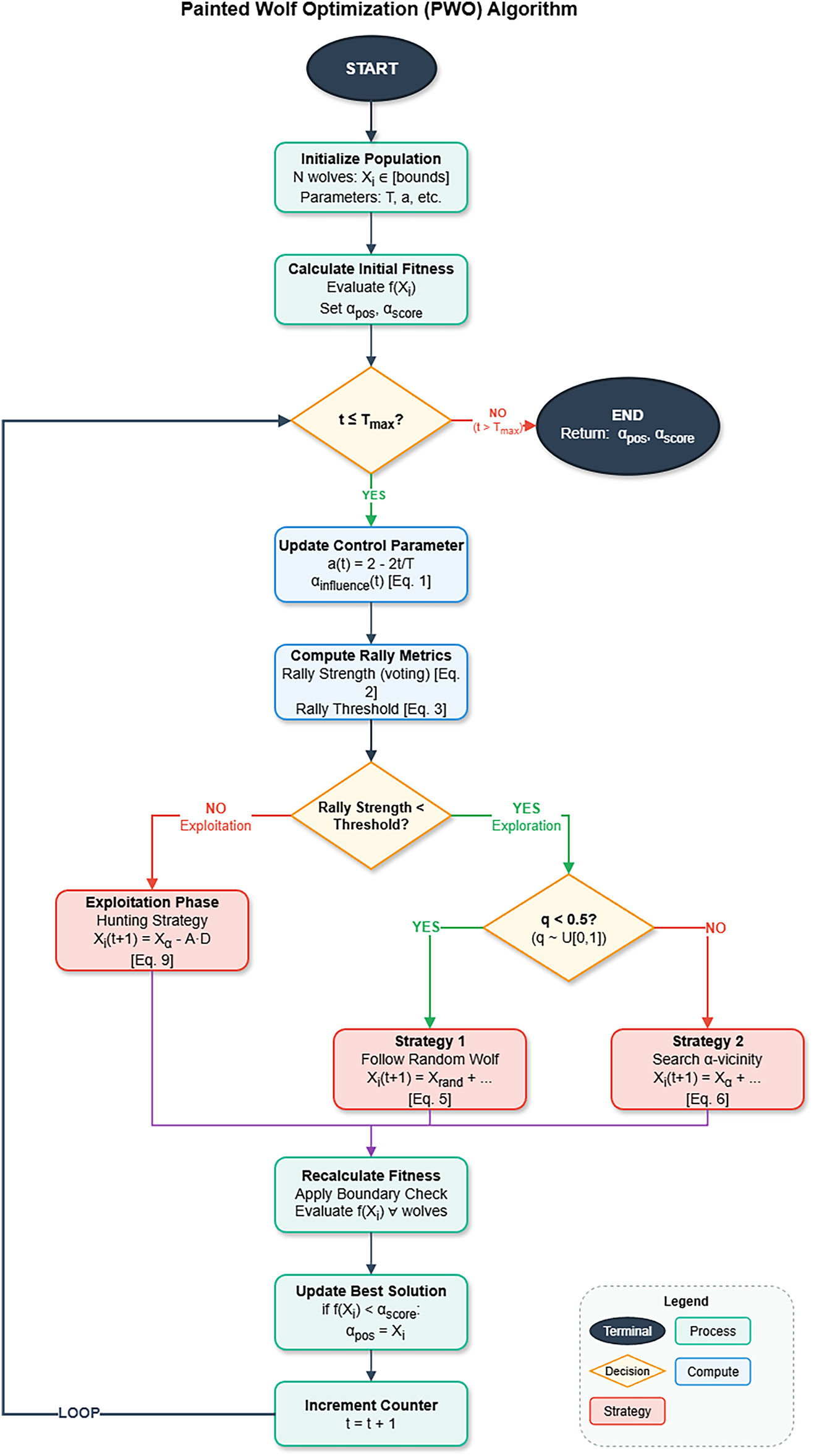

The flowchart of the proposed PWO is presented in Fig. 2, and the steps of the algorithm are summarized as follows:

1. Step 1: Initialize the population of N painted wolves with random positions in the search space.

2. Step 2: Set the initial parameters, including the maximum number of iterations T.

3. Step 3: Calculate the fitness value for each wolf and initialize Alpha_pos and Alpha_score with the best solution found.

4. Step 4: For each iteration, calculate the rally_strength based on the pack’s performance relative to the alpha using the condition in Eq. (2).

5. Step 5: Calculate the RallyThreshold using Eq. (3) to determine the pack’s next action.

6. Step 6: Compare rally_strength to RallyThreshold.

• If rally_strength is lower than RallyThreshold (exploration), randomly choose between updating positions based on a random leader using Eq. (5) or based on the alpha using Eq. (6).

• If rally_strength meets or exceeds RallyThreshold (exploitation), update positions by converging on the alpha's location using Eq. (9).

7. Step 7: Recalculate the fitness for each wolf after the position update. If a better solution is found, update Alpha_pos and Alpha_score.

8. Step 8: Check if the termination criterion (maximum iterations T) is met. If not, repeat from Step 4.

9. Step 9: Return the final Alpha_pos and Alpha_score as the optimum solution.
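To make the control flow of Steps 1 to 9 concrete, the following Python sketch implements the loop structure with NumPy. It is not the author's implementation (the official source code is linked under Availability of Data and Materials). The equations for Alpha_influence, the vote condition, RallyThreshold, and the position updates are not reproduced in this text, so every update rule below is a hypothetical placeholder, marked as such in the comments. The function name `pwo_sketch`, the median-based vote rule, and the specific step formulas are assumptions chosen only to make the skeleton runnable.

```python
import numpy as np


def pwo_sketch(f, lb, ub, n_wolves=20, max_iter=1000, vote_increment=0.04, seed=None):
    """Control-flow sketch of PWO Steps 1-9. Update rules are HYPOTHETICAL placeholders."""
    rng = np.random.default_rng(seed)
    lb, ub = np.asarray(lb, dtype=float), np.asarray(ub, dtype=float)
    dim = lb.size

    # Steps 1-3: random pack inside the bounds, fitness, initial alpha
    pos = rng.uniform(lb, ub, size=(n_wolves, dim))
    fit = np.array([f(x) for x in pos], dtype=float)
    best = int(np.argmin(fit))
    alpha_pos, alpha_score = pos[best].copy(), float(fit[best])
    curve = []

    for t in range(max_iter):  # Step 8: loop until T iterations
        a = 2.0 * (1.0 - t / max_iter)        # a(t): linear decay from 2 toward 0
        alpha_influence = vote_increment * a  # HYPOTHETICAL stand-in for Alpha_influence

        # Step 4, HYPOTHETICAL vote rule: wolves no worse than the pack median vote
        rally_strength = float(np.mean(fit <= np.median(fit)))
        # Step 5, HYPOTHETICAL threshold: shrinks from 1 to 0 as a(t) decays
        rally_threshold = a / 2.0

        for i in range(n_wolves):
            x = pos[i]
            if rally_strength < rally_threshold:      # Step 6: exploration
                if rng.random() < 0.5:                # Strategy 1: follow a random member
                    leader = pos[rng.integers(n_wolves)]
                    new = x + rng.uniform(-a, a, dim) * (leader - x)
                else:                                 # Strategy 2: search around the alpha
                    new = alpha_pos + rng.uniform(-a, a, dim) * np.abs(alpha_pos - x)
            else:                                     # Step 6: exploitation (hunt)
                step = rng.random(dim) + alpha_influence * rng.standard_normal(dim)
                new = x + step * (alpha_pos - x)      # move toward the alpha
            pos[i] = np.clip(new, lb, ub)             # keep the wolf inside the bounds
            fit[i] = f(pos[i])                        # Step 7: re-evaluate
            if fit[i] < alpha_score:                  # Step 7: update the alpha
                alpha_pos, alpha_score = pos[i].copy(), float(fit[i])
        curve.append(alpha_score)                     # best-so-far value per iteration

    return alpha_pos, alpha_score, curve              # Step 9
```

With a stand-in objective such as the sphere function, `pwo_sketch(lambda x: float(np.sum(x ** 2)), [-10] * 5, [10] * 5, seed=0)` returns the best position, its score, and the best-so-far curve. Because the alpha only changes when a wolf finds a strictly better point, the returned curve is non-increasing by construction.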

Figure 2: Flowchart of the proposed PWO algorithm.

3 Experimental Results and Discussion

This section describes the experimental setup and analyzes the results of the proposed algorithm. The effectiveness of PWO is evaluated on 33 standard test functions. Section 3.1 describes the benchmark functions, Section 3.2 explains the experimental setup and parameters, and Section 3.3 analyzes the results of PWO and compares them with those of well-known metaheuristic algorithms.

3.1 Benchmark Set and Compared Algorithms

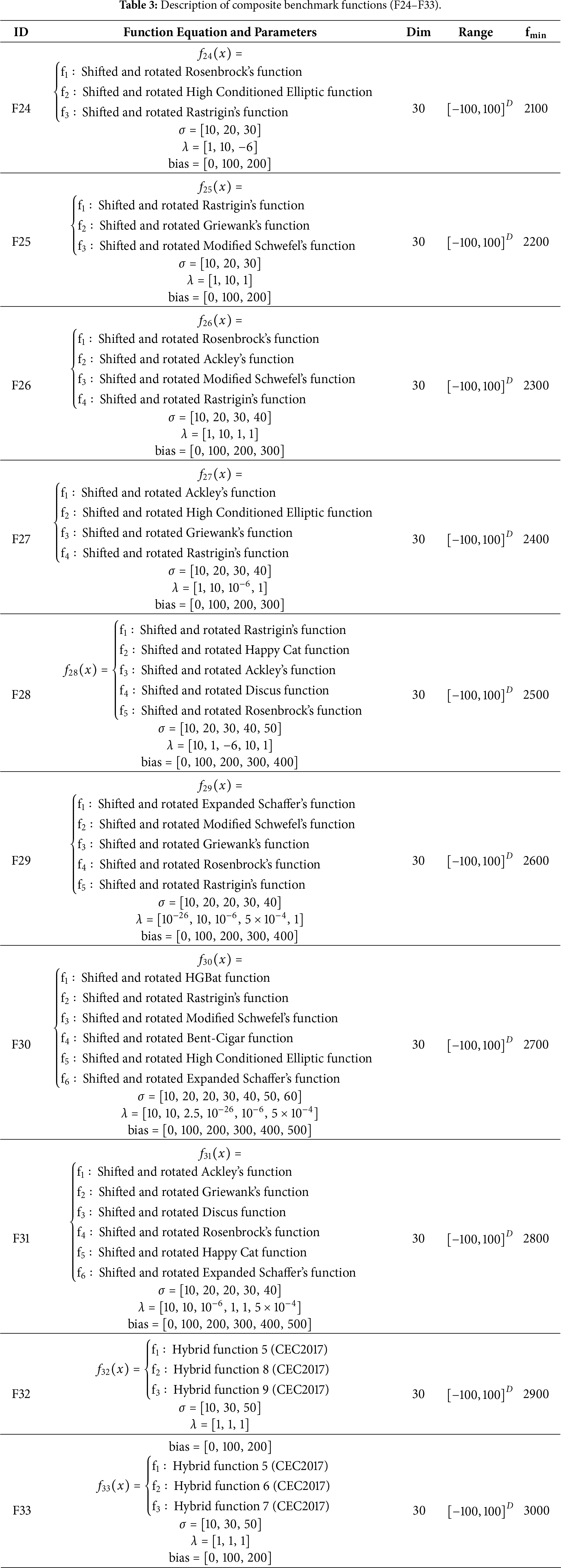

In this research, we conducted experiments on 33 benchmark functions, grouped into three categories: unimodal [65], fixed-dimension multimodal [13], and composite functions [66].

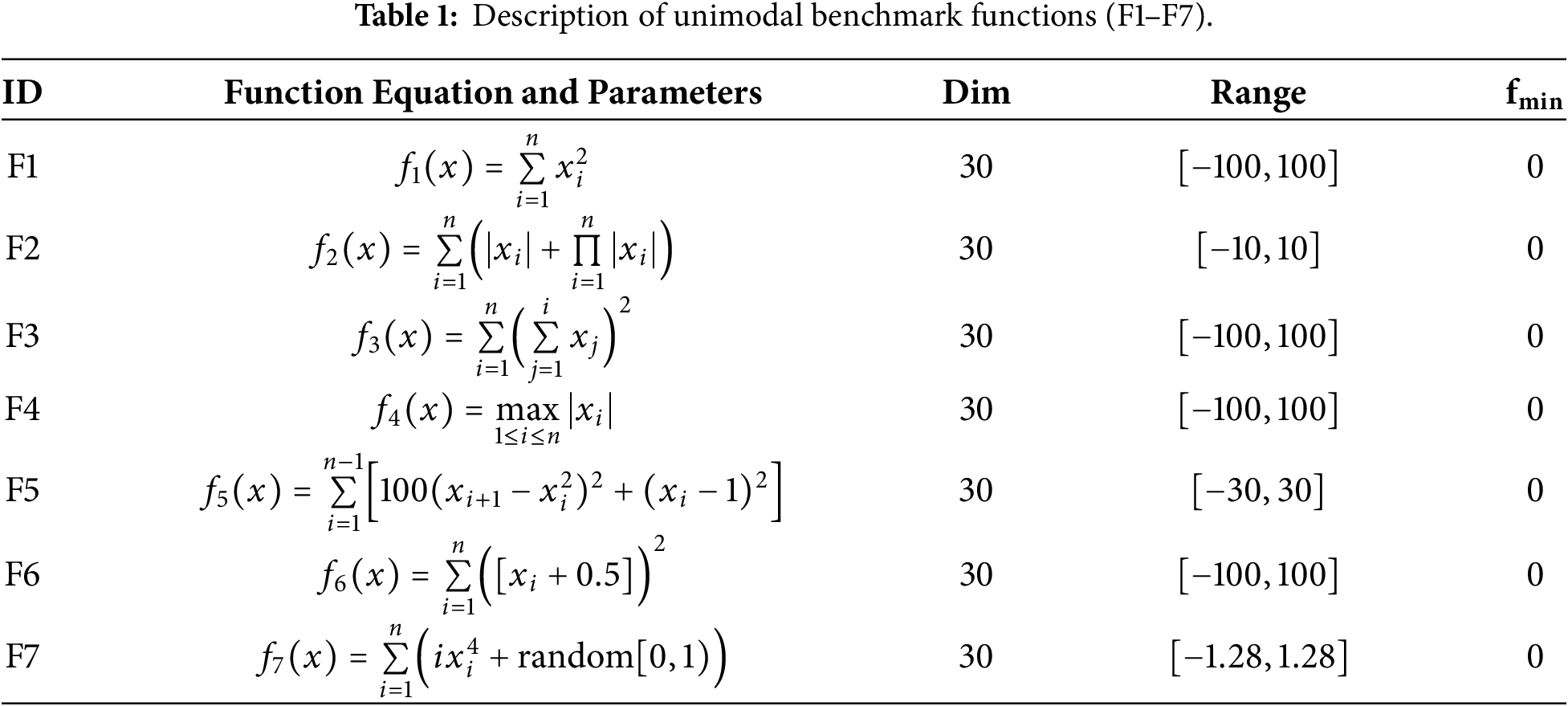

• Unimodal functions (F1–F7): These functions have only one global optimum and are used to examine the exploitation capability of metaheuristic algorithms. Their description is given in Table 1.

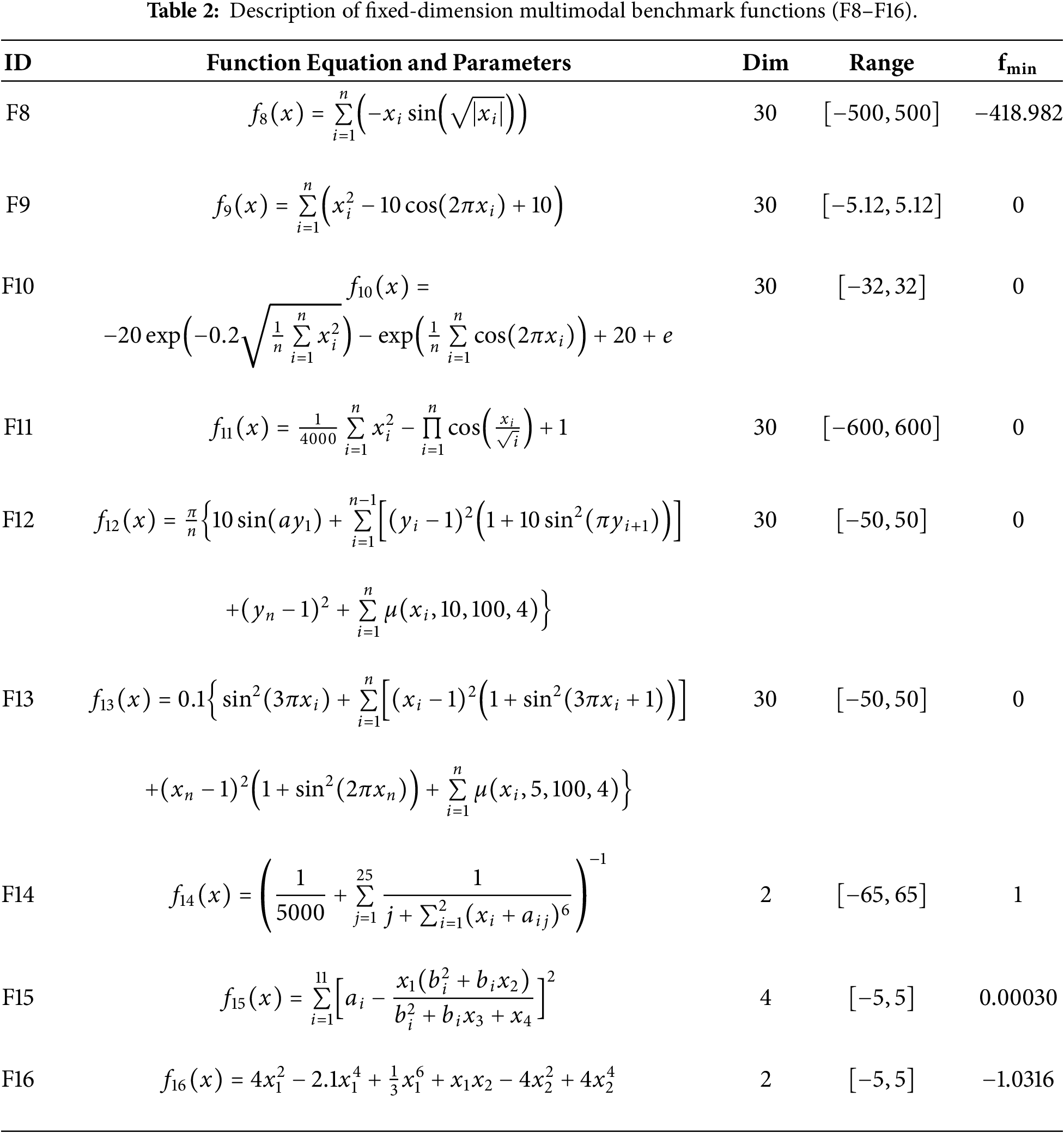

• Fixed-dimension multimodal functions (F8–F23): These functions contain multiple local optima. Their description is given in Table 2.

• Composite functions (F24–F33): These functions are typically shifted, rotated, and biased combinations of classical unimodal and multimodal functions. Their description is provided in Table 3. Additional information about these functions can be found in [66].
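Tables 1 to 3 are available only as images here. As an illustration of the unimodal and multimodal categories, the sketch below defines two textbook benchmark functions in Python: the sphere function, usually listed as F1 in the classical 23-function suite, and the Rastrigin function, a standard multimodal example. Whether these match the exact definitions in Tables 1 and 2 is an assumption.

```python
import numpy as np


def sphere(x):
    """Unimodal: a single global minimum f(0) = 0 and no local minima."""
    x = np.asarray(x, dtype=float)
    return float(np.sum(x ** 2))


def rastrigin(x):
    """Multimodal: global minimum f(0) = 0, surrounded by a regular grid of local minima."""
    x = np.asarray(x, dtype=float)
    return float(10 * x.size + np.sum(x ** 2 - 10 * np.cos(2 * np.pi * x)))
```

Both functions reach their global minimum of 0 at the origin. The cosine term in Rastrigin adds many local minima, which is what makes such functions useful for testing exploration and local-optima avoidance, while the sphere function isolates exploitation.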

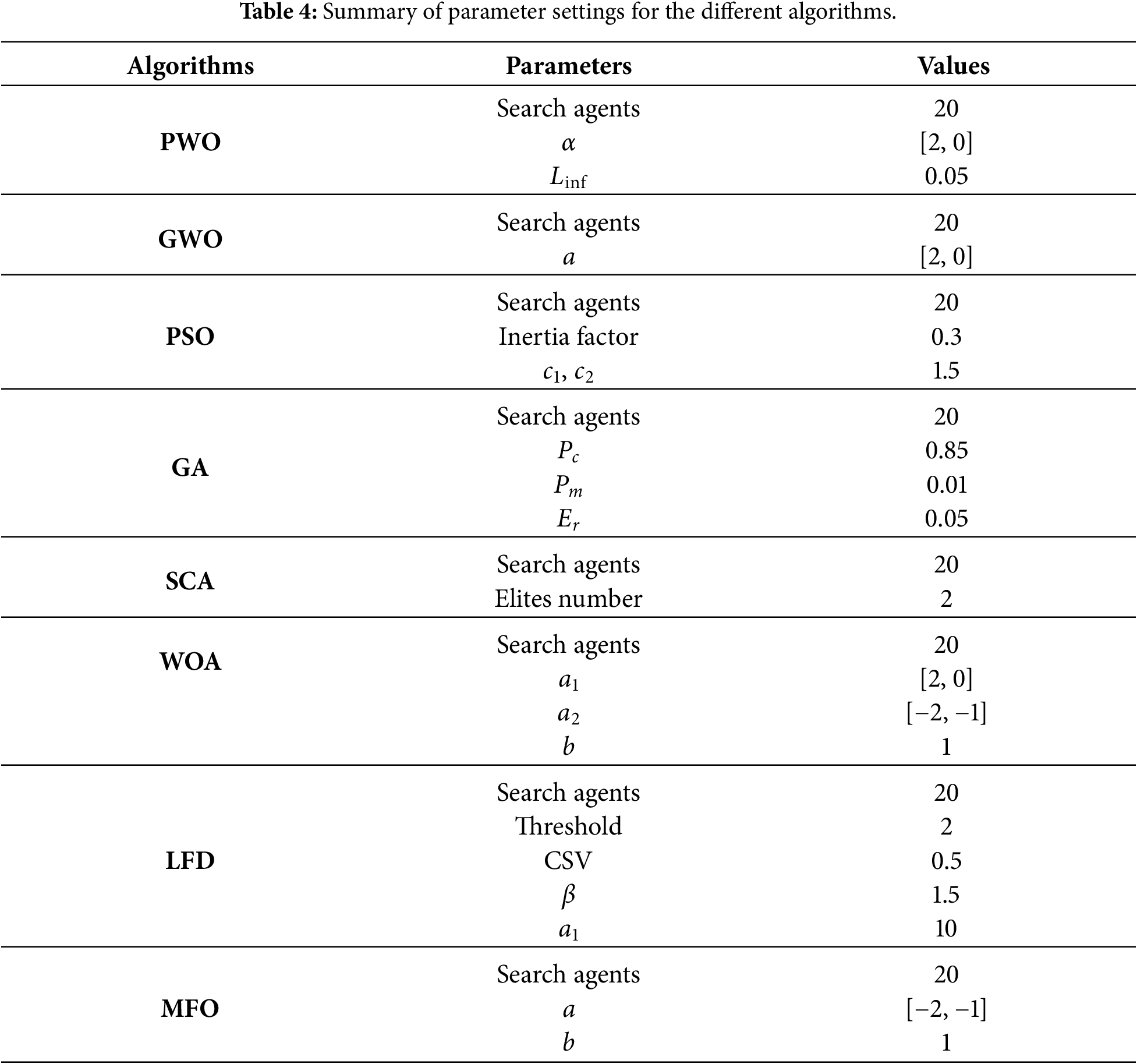

The experiments were run on a system equipped with an Intel i5-9400 CPU and 16 GB of RAM, using MATLAB R2020a on Windows 10 (64-bit). In each experiment, the following settings were used:

• 20 search agents.

• Maximum iterations: 1000.

• Each algorithm was run 30 independent times with different random seeds to ensure statistical validity.

• All algorithms were evaluated using the same number of fitness evaluations per run to ensure fair comparison.

Table 4 summarizes the parameter settings for the proposed PWO algorithm and the compared algorithms.

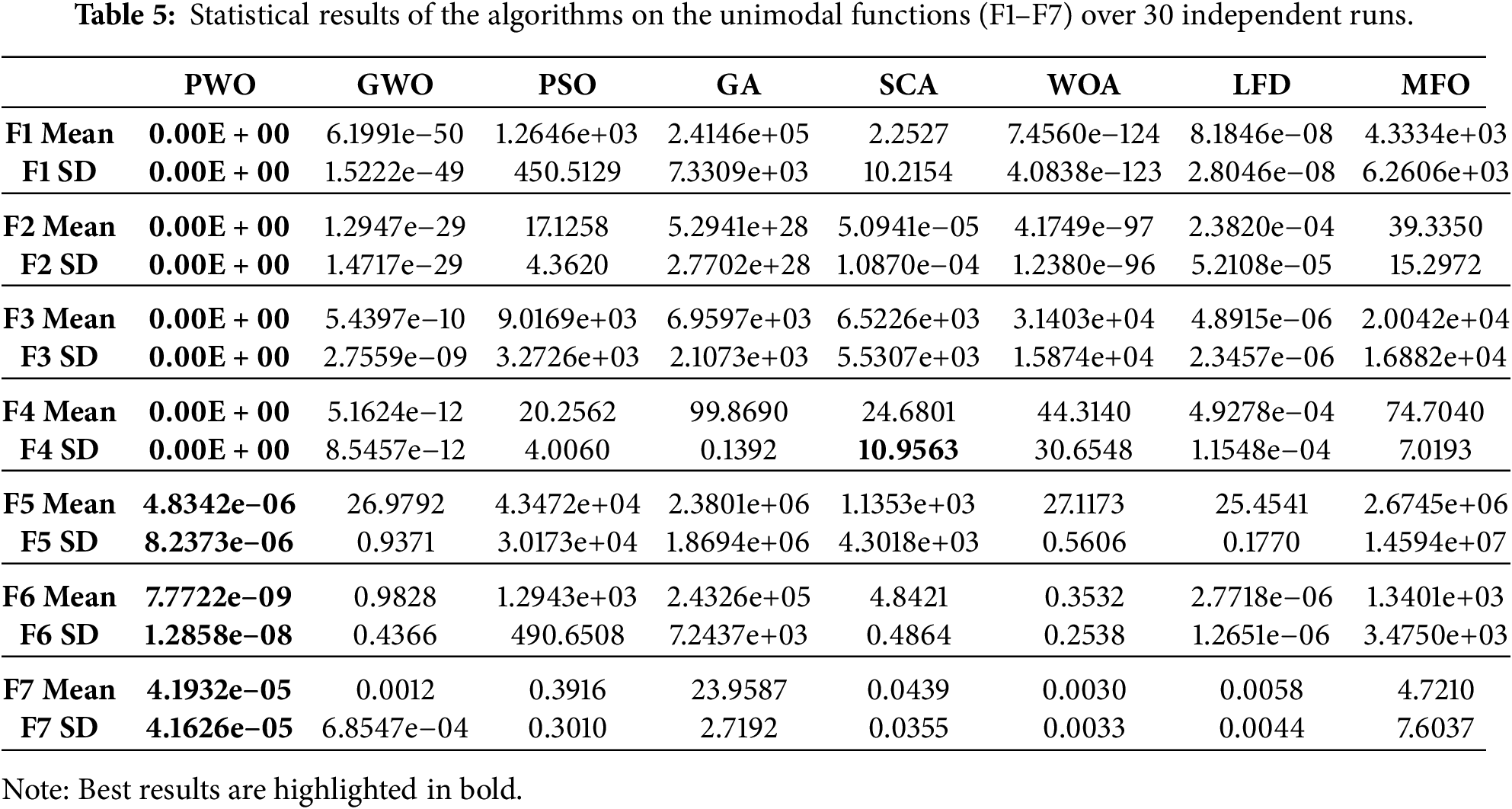

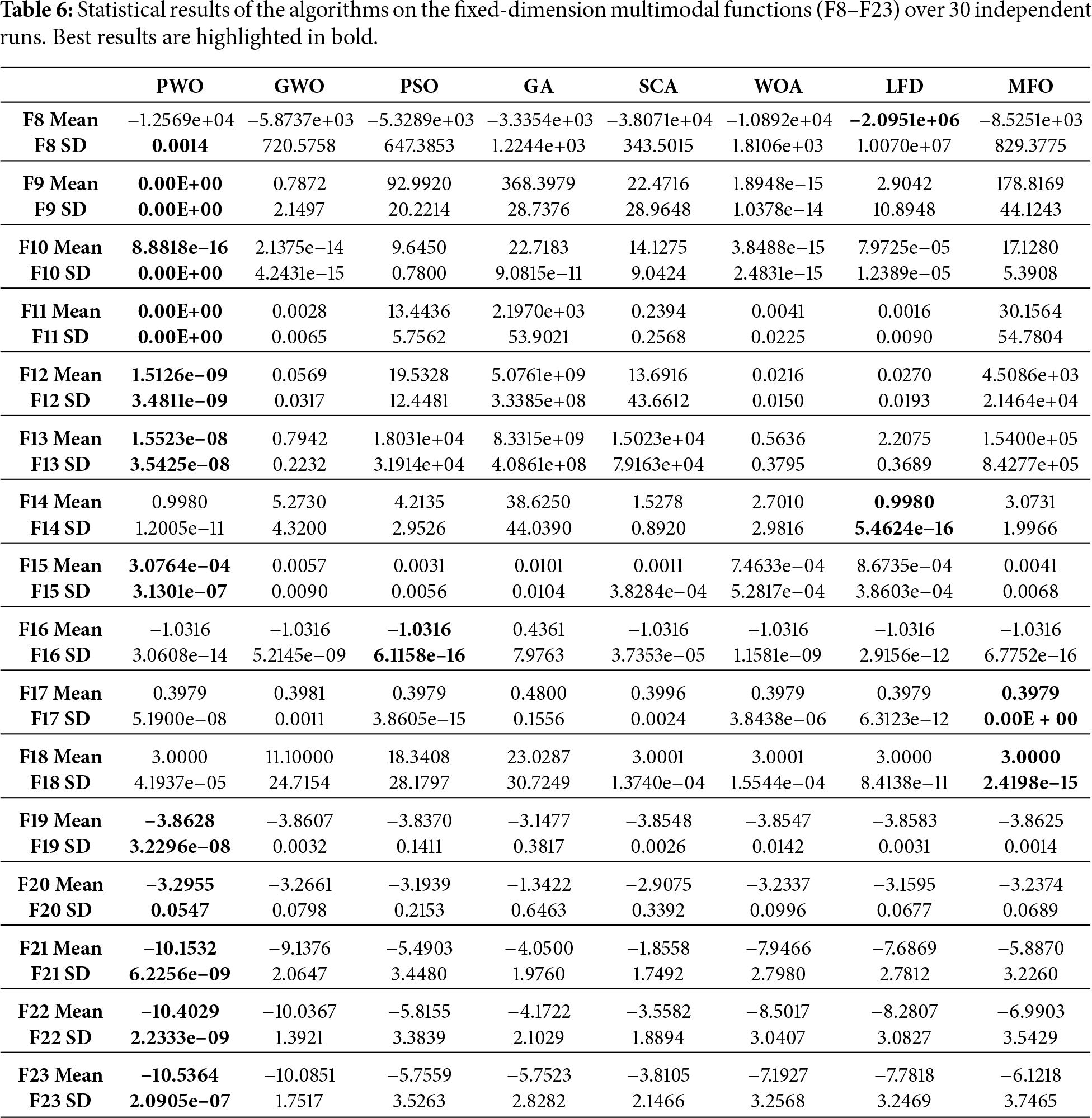

The efficiency of the proposed PWO algorithm is evaluated on the 33 benchmark functions (grouped as unimodal, multimodal, and composite) and on several real-world engineering design cases. The results are compared with those of seven well-established algorithms (GWO, PSO, GA, SCA, WOA, LFD, and MFO). Performance is reported as the mean and standard deviation (SD) over 30 independent runs.
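The reporting protocol (20 search agents, 1000 iterations, 30 independent runs, mean and SD) implies a budget of 20 × 1000 = 20,000 objective evaluations per run if the initial population is not counted, and 30 × 20,000 = 600,000 per algorithm per function. The Python sketch below shows one way to compute the mean and SD over seeded runs. It uses plain random search as a stand-in optimizer (not PWO or any of the compared algorithms) and the sample SD (`ddof=1`, which matches MATLAB's default `std`); both are assumptions, since the text does not state how SD was computed.

```python
import numpy as np


def random_search(f, lb, ub, n_agents, max_iter, rng):
    """Stand-in optimizer: n_agents uniform samples per iteration, returns the best value."""
    lb, ub = np.asarray(lb, dtype=float), np.asarray(ub, dtype=float)
    best = np.inf
    for _ in range(max_iter):
        for x in rng.uniform(lb, ub, size=(n_agents, lb.size)):
            best = min(best, f(x))
    return best


def mean_sd_over_runs(optimizer, f, lb, ub, n_runs=30, n_agents=20, max_iter=1000):
    """Run the optimizer n_runs times with seeds 0..n_runs-1; return mean and sample SD."""
    finals = np.array([
        optimizer(f, lb, ub, n_agents, max_iter, np.random.default_rng(seed))
        for seed in range(n_runs)
    ])
    return float(finals.mean()), float(finals.std(ddof=1))


# Evaluation budget implied by the settings above
print(20 * 1000, 30 * 20 * 1000)  # 20000 600000
```

Giving every algorithm the same `n_agents` and `max_iter` keeps the evaluation budget identical, which is the fairness condition stated in the experimental settings; fixing one seed per run makes the 30 runs reproducible.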

3.3.1 Analysis of Functions F1–F7 (Exploitation)

The unimodal functions (F1–F7) contain one global optimum and serve to assess the exploitation capability of the algorithms. Table 5 shows that the proposed PWO algorithm provided competitive results in these tests.

3.3.2 Analysis of Functions F8–F23 (Exploration)

The fixed-dimension multimodal functions (F8–F23) are characterized by multiple local optima. Table 6 reports that the PWO algorithm outperformed many other algorithms in most test cases.

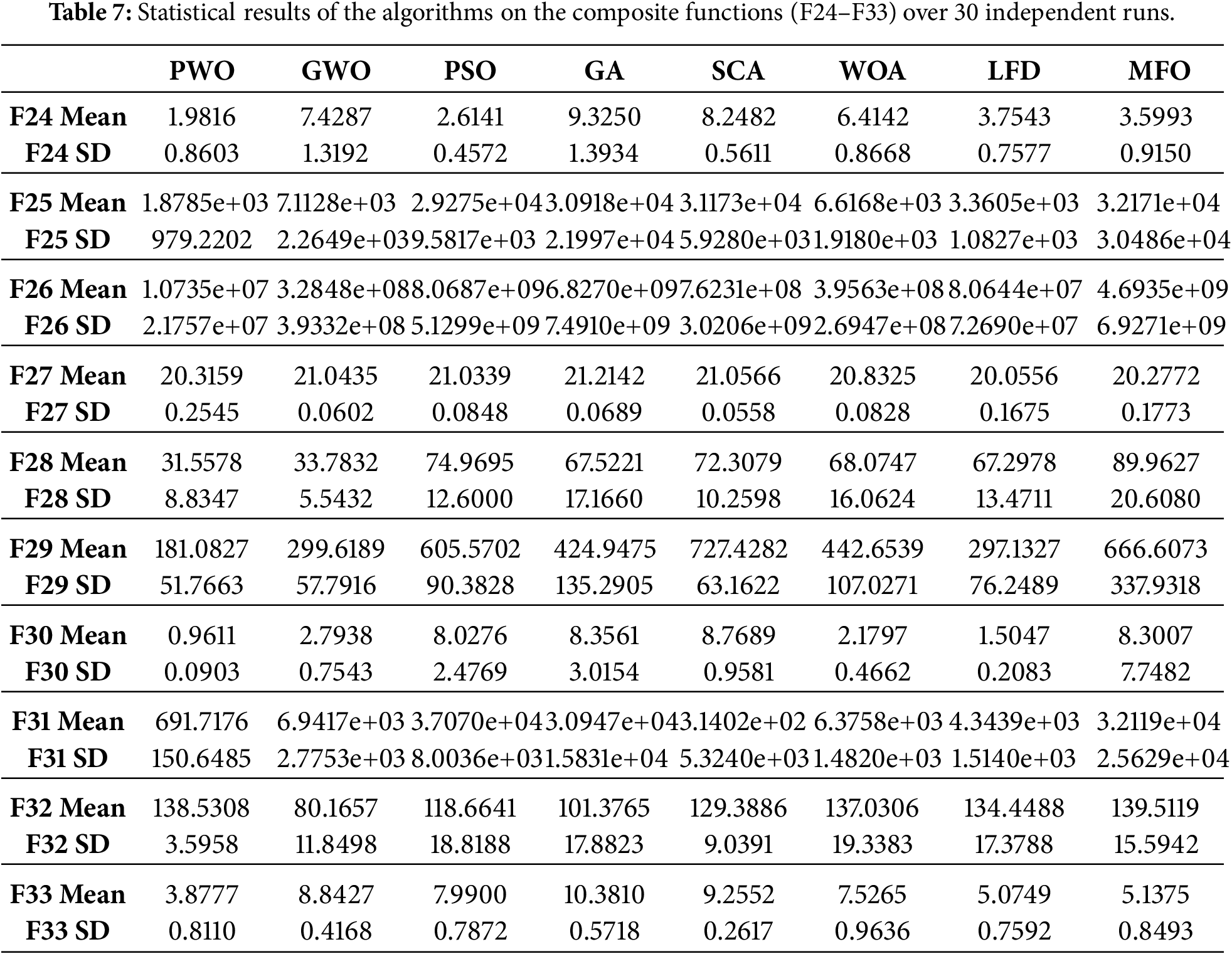

3.3.3 Analysis of Functions F24–F33 (Local Minima Avoidance)

Composite functions (F24–F33) require balancing exploration and exploitation. Table 7 indicates that the proposed PWO algorithm achieved the best or highly competitive results compared to the other algorithms.

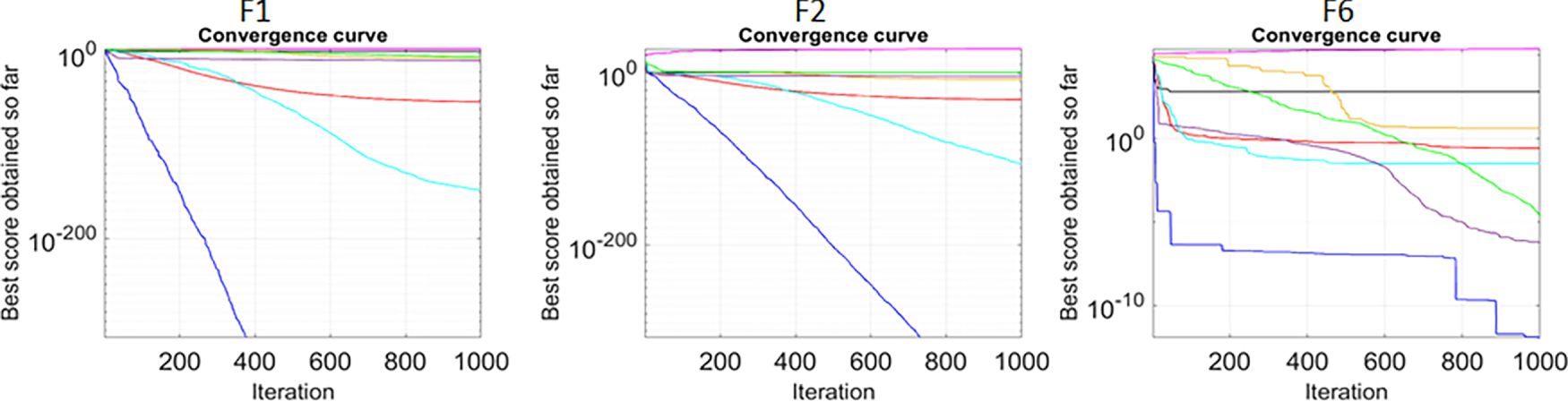

The convergence curves, which plot fitness value against iteration number, are used to compare the convergence rates of the algorithms visually. Fig. 3 shows the convergence curves of PWO and several competing methods (over 30 runs of 1000 iterations). Three distinct behaviors are observed:

1. Gradual convergence: As seen in functions F1, F2, and F7.

2. Late-stage convergence: As observed in functions F6, F12, and F13.

3. Rapid initial convergence: As shown in functions F24, F26, and F29.
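A convergence curve of this kind is usually built from the best-so-far fitness at each iteration, averaged over runs. The sketch below assumes each run records the best fitness found in each iteration as one row of a `(runs, iterations)` array; averaging over the 30 runs is an assumption about how Fig. 3 was produced, and `mean_convergence_curve` is a hypothetical helper.

```python
import numpy as np


def mean_convergence_curve(histories):
    """histories: shape (runs, iterations), best fitness found in each iteration of each run.
    Returns the best-so-far curve averaged over runs."""
    h = np.asarray(histories, dtype=float)
    best_so_far = np.minimum.accumulate(h, axis=1)  # non-increasing within each run
    return best_so_far.mean(axis=0)


print(mean_convergence_curve([[3, 1, 2], [4, 4, 0]]))  # [3.5 2.5 0.5]
```

Curves that span several orders of magnitude are typically drawn on a logarithmic y-axis (for example with matplotlib's `semilogy`), which makes the gradual, late-stage, and rapid patterns easier to tell apart.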

Figure 3: Convergence curves for the PWO algorithm compared to other methods on different benchmark functions.

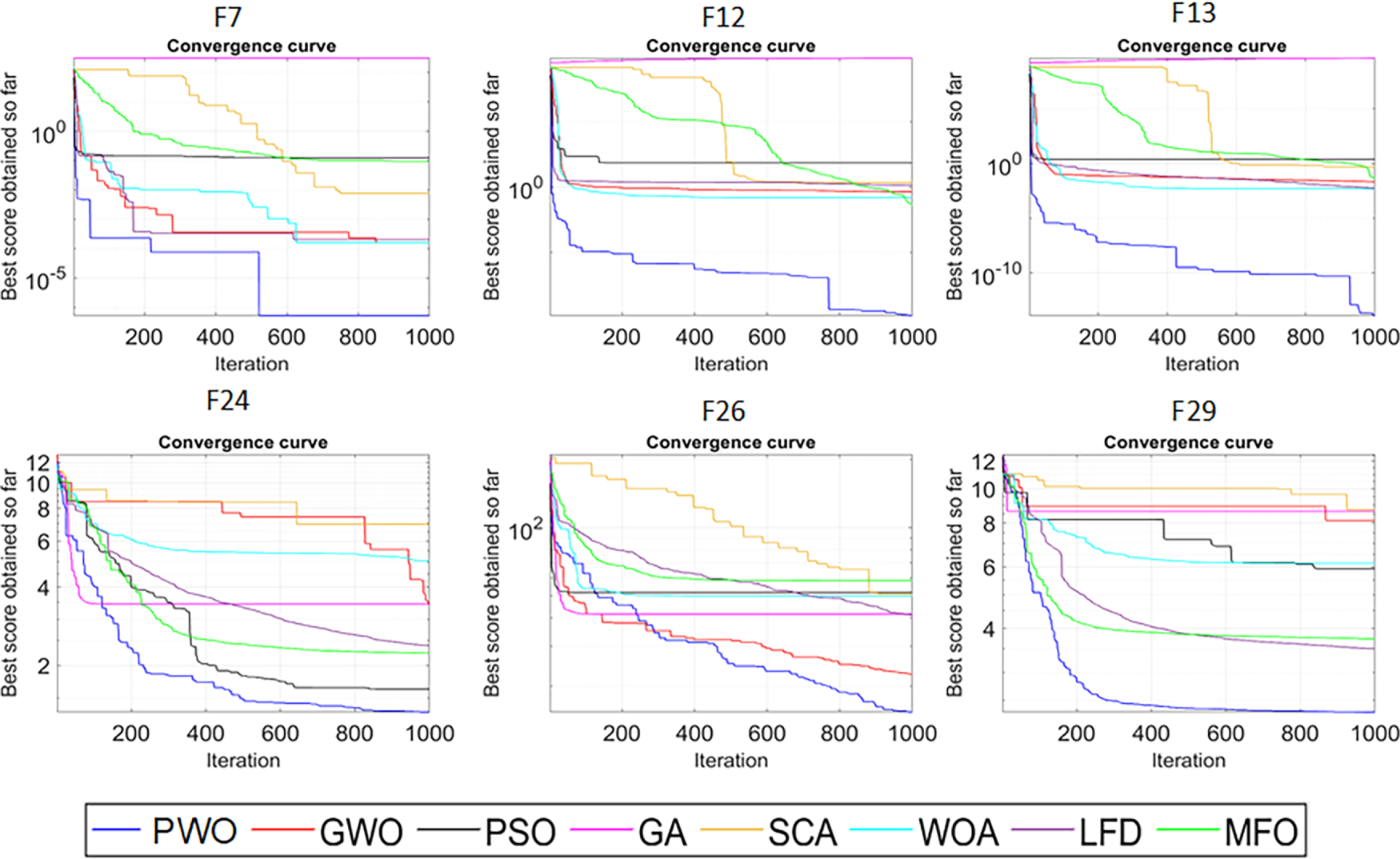

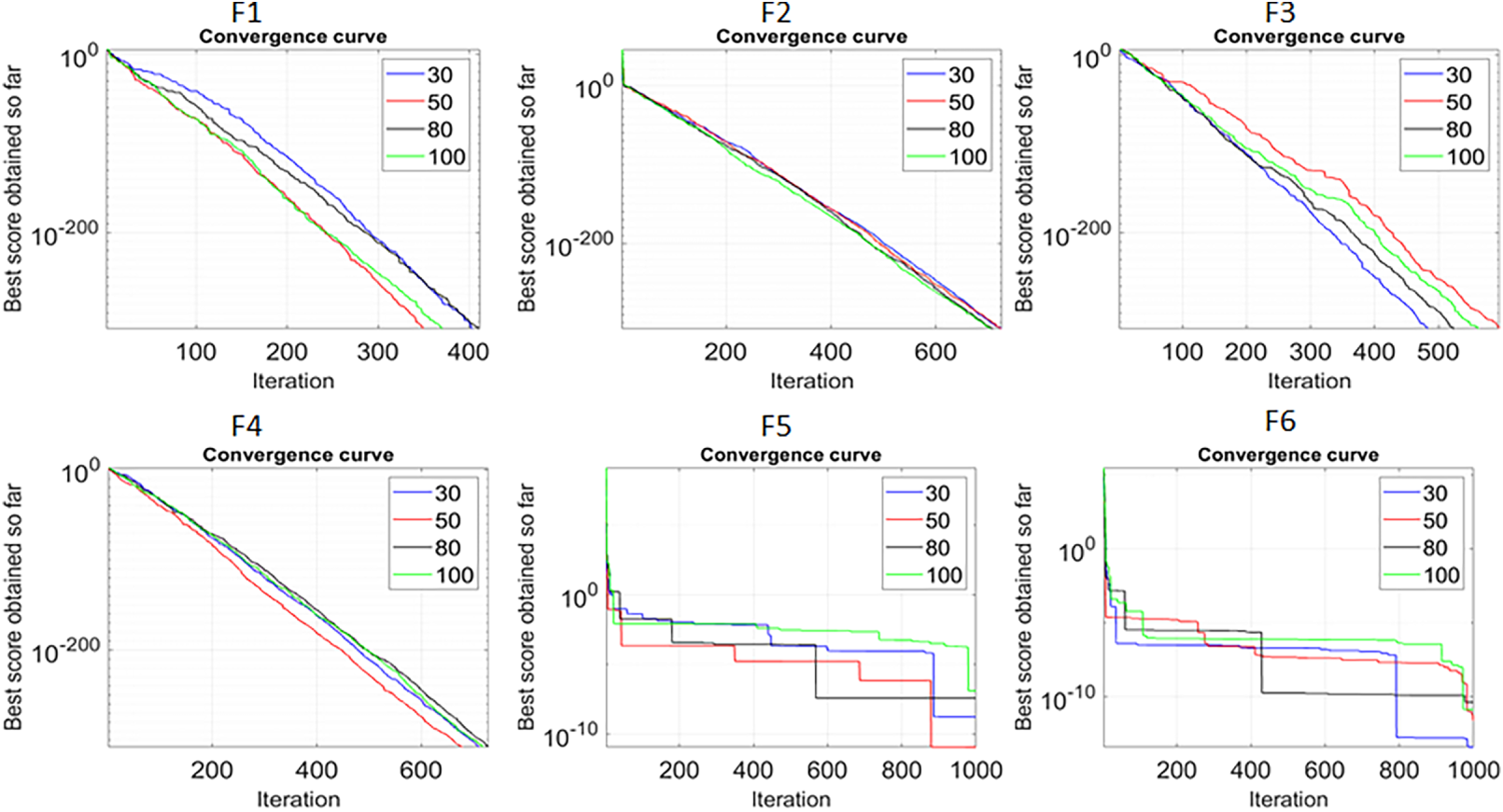

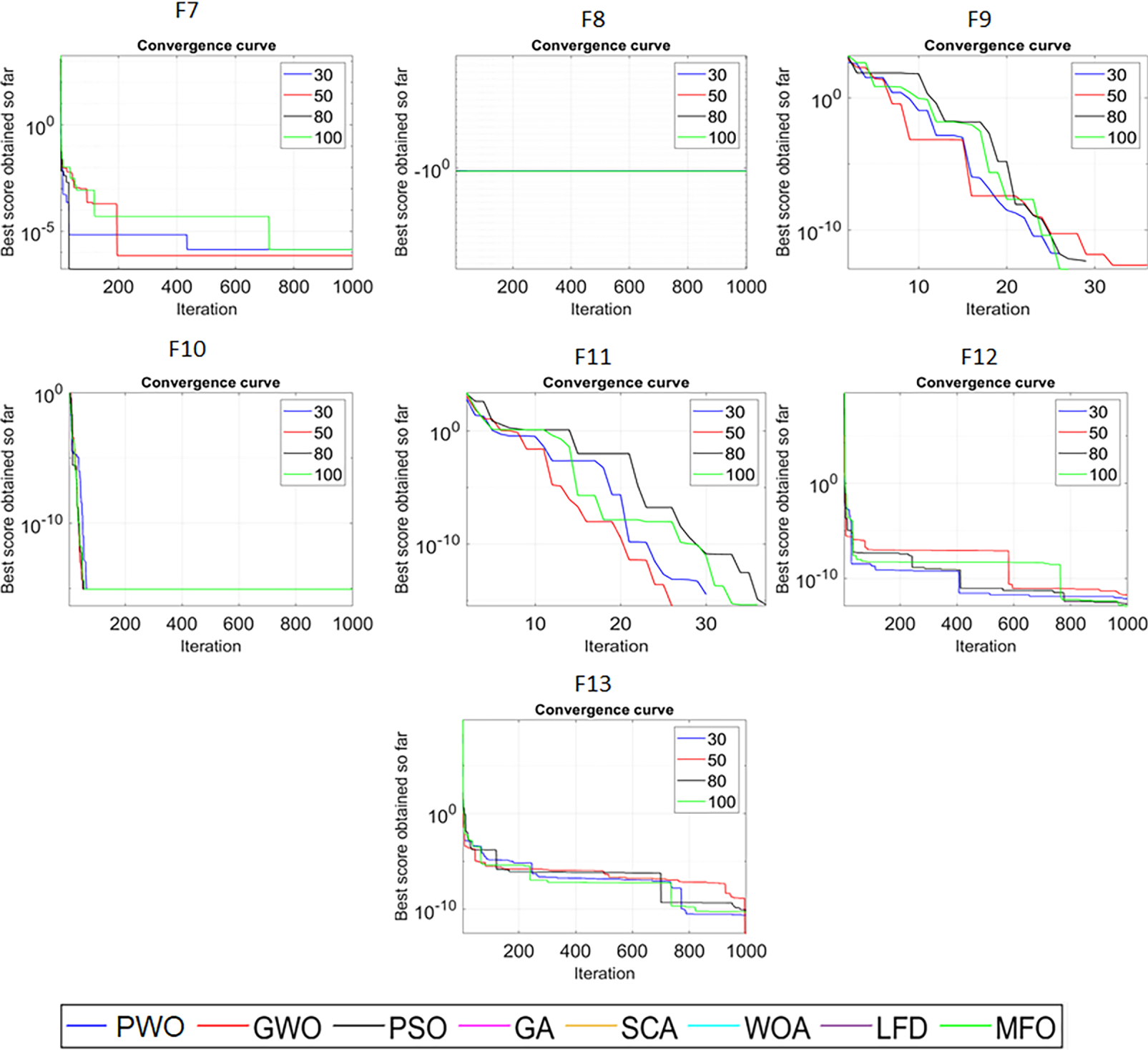

To evaluate scalability, experiments were conducted using benchmark functions with 30, 50, 80, and 100 dimensions. Fig. 4 shows that although some degradation is observed at higher dimensions, the PWO algorithm maintains a good balance between exploration and exploitation.

Figure 4: Scalability results of the PWO algorithm on the F1–F13 functions with different dimensions.

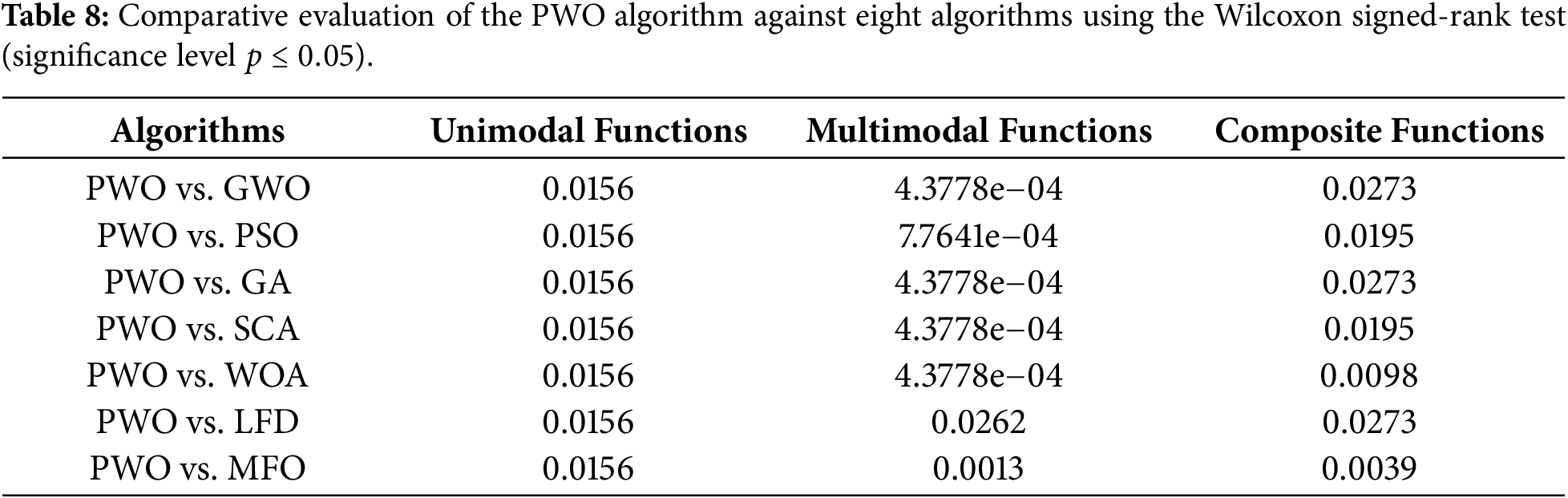

3.3.6 Statistical Test Analysis

The Wilcoxon signed-rank test is used to evaluate the statistical significance of the PWO algorithm compared to the benchmark algorithms. The null hypothesis (
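The Wilcoxon signed-rank test compares two algorithms on paired samples, for example the 30 best-fitness values each obtains on the same function. The sketch below is a dependency-free Python version using the normal approximation, which is reasonable for about 30 pairs. It drops zero differences, assigns average ranks to ties, and omits the tie and continuity corrections that library implementations such as `scipy.stats.wilcoxon` can apply; the name `wilcoxon_signed_rank` is hypothetical.

```python
import math


def wilcoxon_signed_rank(x, y):
    """Two-sided Wilcoxon signed-rank test on paired samples (normal approximation).
    Returns (W+, W-, p). Zero differences are dropped; ties get average ranks."""
    d = [a - b for a, b in zip(x, y) if a != b]
    n = len(d)
    if n == 0:
        raise ValueError("all paired differences are zero")
    order = sorted(range(n), key=lambda k: abs(d[k]))
    ranks = [0.0] * n
    i = 0
    while i < n:  # walk over groups of tied |d| values
        j = i
        while j + 1 < n and abs(d[order[j + 1]]) == abs(d[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, di in zip(ranks, d) if di > 0)
    w_minus = sum(r for r, di in zip(ranks, d) if di < 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (min(w_plus, w_minus) - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return w_plus, w_minus, p
```

At a conventional 0.05 level (an assumption, since the text is truncated here), p < 0.05 rejects the null hypothesis of no difference. If `w_minus` is the larger sum, the values in `x` tend to be lower, that is, better for a minimization problem.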

3.4 Summary of Performance Comparison

Overall, the experimental results show that the proposed PWO algorithm consistently obtains competitive or superior performance across different types of benchmark functions and real-world engineering problems. Its balanced exploration and exploitation, as evidenced by the convergence and scalability analyses and the statistical tests, validate its effectiveness compared to current state-of-the-art methods.

4 PWO for Engineering Benchmark Tests

To evaluate the performance of the PWO algorithm on real-world engineering problems, this section applies it to three well-known engineering design problems. Metaheuristic algorithms have been widely used to solve engineering design problems in previous studies, because such problems are nonlinear and constrained, which makes metaheuristics a suitable choice. In this study, PWO was applied to the following engineering benchmark problems: welded beam design, tension/compression spring design, and pressure vessel design. In all experiments, PWO is compared with the other well-known optimization techniques used in the previous sections.

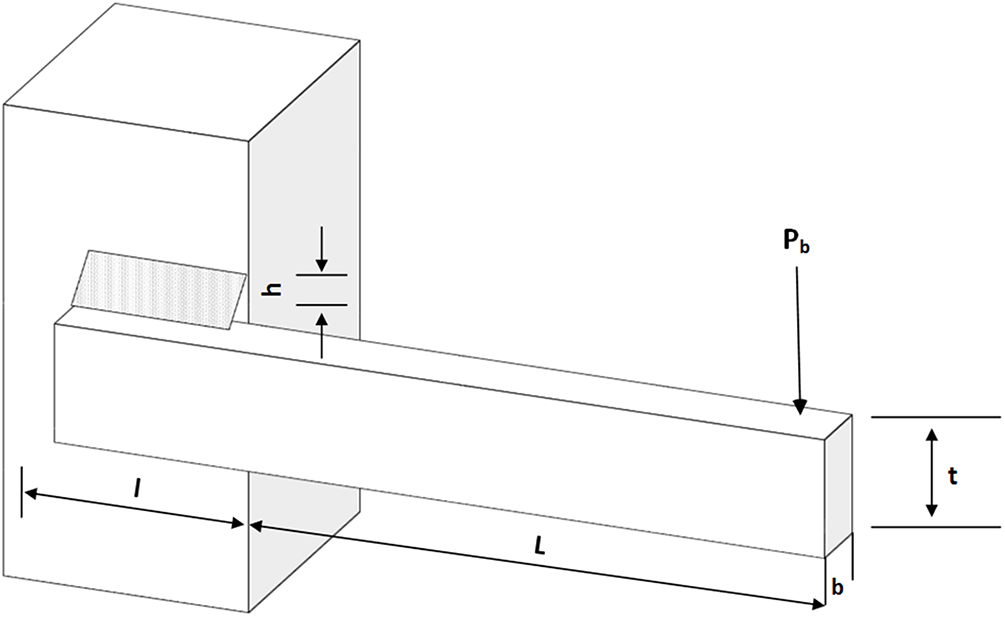

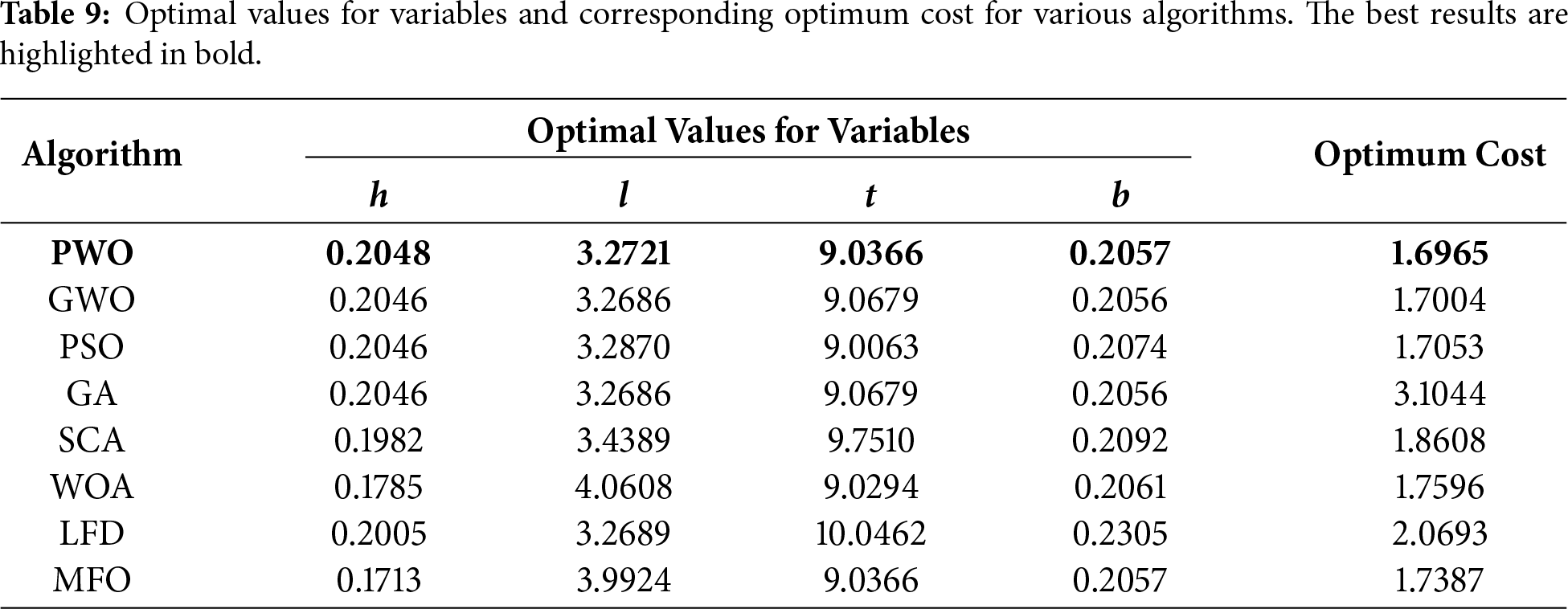

The welded beam design problem aims to minimize the manufacturing cost of a welded beam. The problem involves four design variables: beam width (

Figure 5: The schematic illustration of the welded beam design problem.

Consider

Minimize

Subject to

Variable Range

Where

Table 9 reports the optimal results obtained by PWO and the competing algorithms for the welded beam design problem. The results show that PWO outperforms the other metaheuristic algorithms considered and provides the best solution for this problem. They also show that PWO can effectively solve complex problems with multiple nonlinear constraints.
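The formulation is not reproduced in this version of the text. For reference, the Python sketch below encodes one formulation commonly used in the literature, with x = [h, l, t, b] (weld thickness, weld length, bar height, bar thickness), P = 6000 lb, L = 14 in, E = 30 × 10^6 psi, G = 12 × 10^6 psi, τ_max = 13,600 psi, σ_max = 30,000 psi, and δ_max = 0.25 in. Published variants differ in details (for example, some use x2²/4 instead of x2²/12 in the polar moment J, or a different deflection expression), so this may not be the exact version behind Table 9. The usual bounds are 0.1 ≤ h, b ≤ 2 and 0.1 ≤ l, t ≤ 10.

```python
import math

# Constants of one common formulation (units: lb, in, psi)
P, L, E, G = 6000.0, 14.0, 30e6, 12e6
TAU_MAX, SIGMA_MAX, DELTA_MAX = 13600.0, 30000.0, 0.25


def welded_beam(x):
    """Return (cost, constraints) for x = [h, l, t, b]; feasible iff every g <= 0."""
    h, l, t, b = x
    cost = 1.10471 * h**2 * l + 0.04811 * t * b * (14.0 + l)
    tau_p = P / (math.sqrt(2) * h * l)                      # primary shear stress
    m = P * (L + l / 2)                                     # bending moment at the weld
    r = math.sqrt(l**2 / 4 + ((h + t) / 2) ** 2)
    j = 2 * (math.sqrt(2) * h * l * (l**2 / 12 + ((h + t) / 2) ** 2))
    tau_pp = m * r / j                                      # secondary (torsional) shear stress
    tau = math.sqrt(tau_p**2 + 2 * tau_p * tau_pp * l / (2 * r) + tau_pp**2)
    sigma = 6 * P * L / (b * t**2)                          # bending stress in the bar
    delta = 4 * P * L**3 / (E * t**3 * b)                   # end deflection
    p_c = (4.013 * E * math.sqrt(t**2 * b**6 / 36) / L**2) * (
        1 - t / (2 * L) * math.sqrt(E / (4 * G)))           # buckling load
    g = [
        tau - TAU_MAX,
        sigma - SIGMA_MAX,
        h - b,
        0.10471 * h**2 + 0.04811 * t * b * (14.0 + l) - 5.0,
        0.125 - h,
        delta - DELTA_MAX,
        P - p_c,
    ]
    return cost, g
```

A metaheuristic such as PWO can then minimize a penalized objective, for example `cost + 1e6 * sum(max(0.0, gi) ** 2 for gi in g)`; a static penalty is one common choice, and the text does not state which constraint-handling scheme was used. The design x ≈ (0.20573, 3.47049, 9.03662, 0.20573), commonly reported as the best-known solution for this formulation, has a cost of about 1.7249.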

4.2 Tension/Compression Spring Design

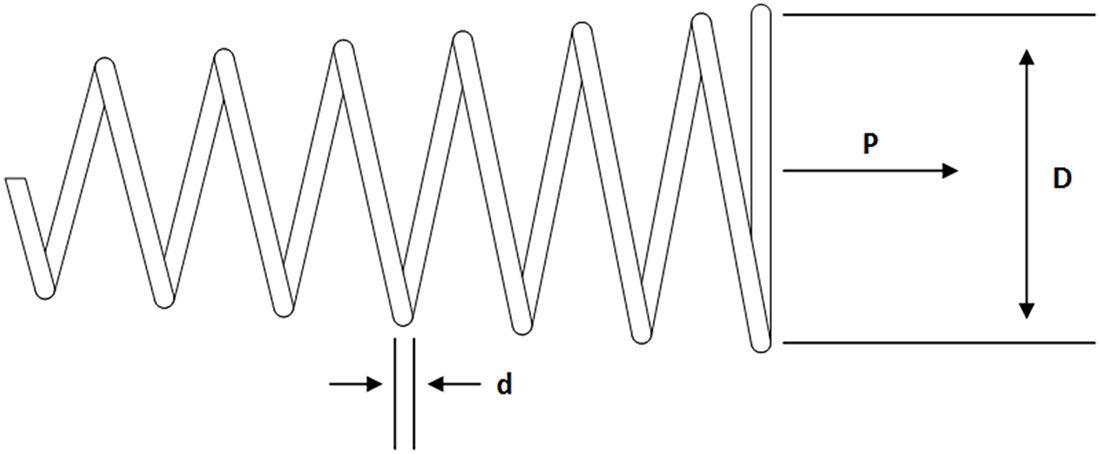

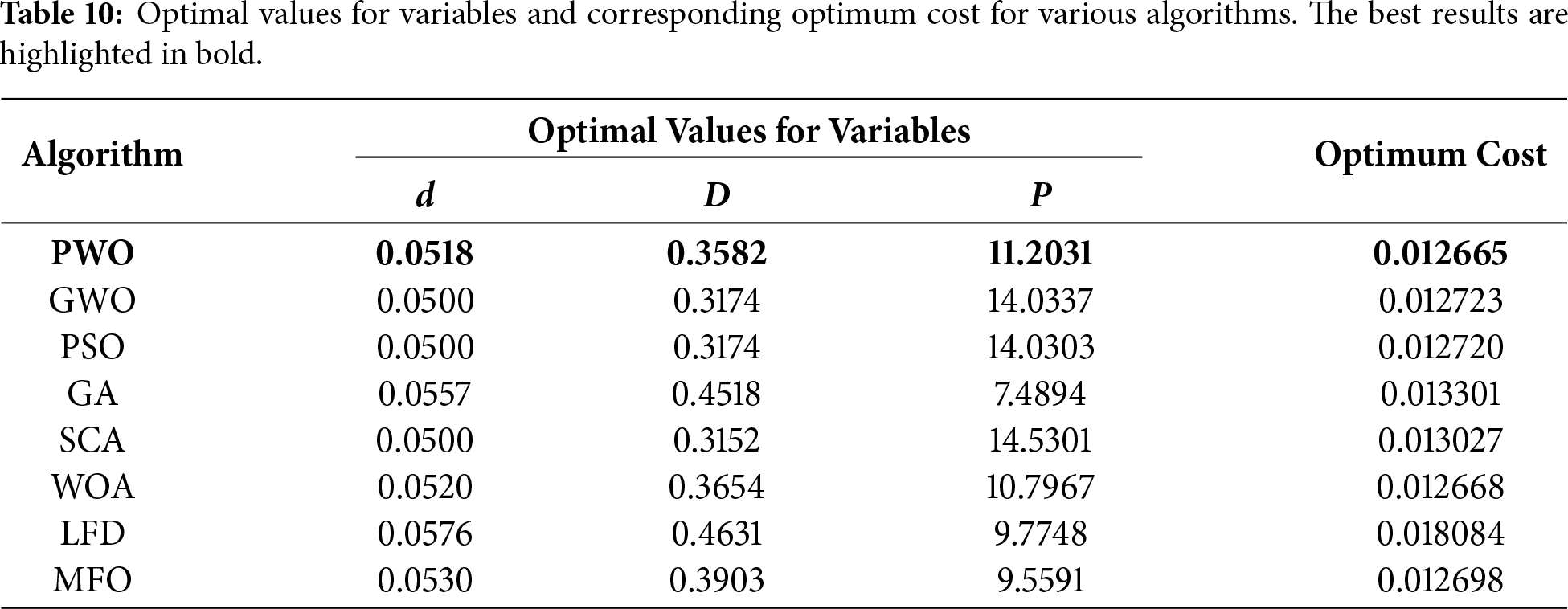

The aim of this problem is to minimize the total weight of the tension/compression spring illustrated in Fig. 6. The problem has three design variables: the number of active coils (N), the wire diameter (

Figure 6: The schematic illustration of the tension/compression spring design problem.

Consider

Minimize

Subject to

Table 10 presents the optimal results obtained by PWO and other well-known algorithms for the tension/compression spring design problem. The results show that PWO outperforms the competing algorithms on this problem and provides the best solution.
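As with the welded beam, the formulation is not reproduced here. The sketch below encodes the standard version of this problem, with x = [d, D, N] (wire diameter, mean coil diameter, number of active coils), weight (N + 2)·D·d², four inequality constraints (minimum deflection, shear stress, surge frequency, and outer diameter), and the usual bounds 0.05 ≤ d ≤ 2, 0.25 ≤ D ≤ 1.3, 2 ≤ N ≤ 15. That this is the exact version used for Table 10 is an assumption.

```python
def spring(x):
    """Return (weight, constraints) for x = [d, D, N]; feasible iff every g <= 0."""
    d, D, N = x
    weight = (N + 2) * D * d**2
    g = [
        1 - D**3 * N / (71785 * d**4),                                     # minimum deflection
        (4 * D**2 - d * D) / (12566 * (D * d**3 - d**4)) + 1 / (5108 * d**2) - 1,  # shear stress
        1 - 140.45 * d / (D**2 * N),                                       # surge frequency
        (d + D) / 1.5 - 1,                                                 # outer diameter
    ]
    return weight, g


BOUNDS = [(0.05, 2.0), (0.25, 1.3), (2.0, 15.0)]  # (low, high) for d, D, N
```

The widely reported best-known design, x ≈ (0.05169, 0.35675, 11.2871), has a weight of about 0.012665.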

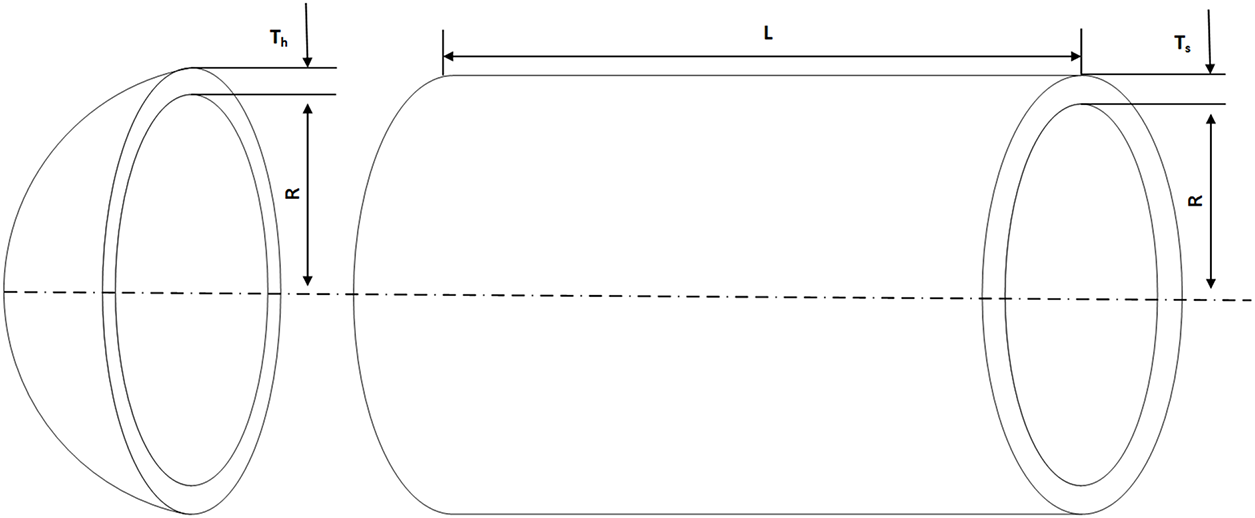

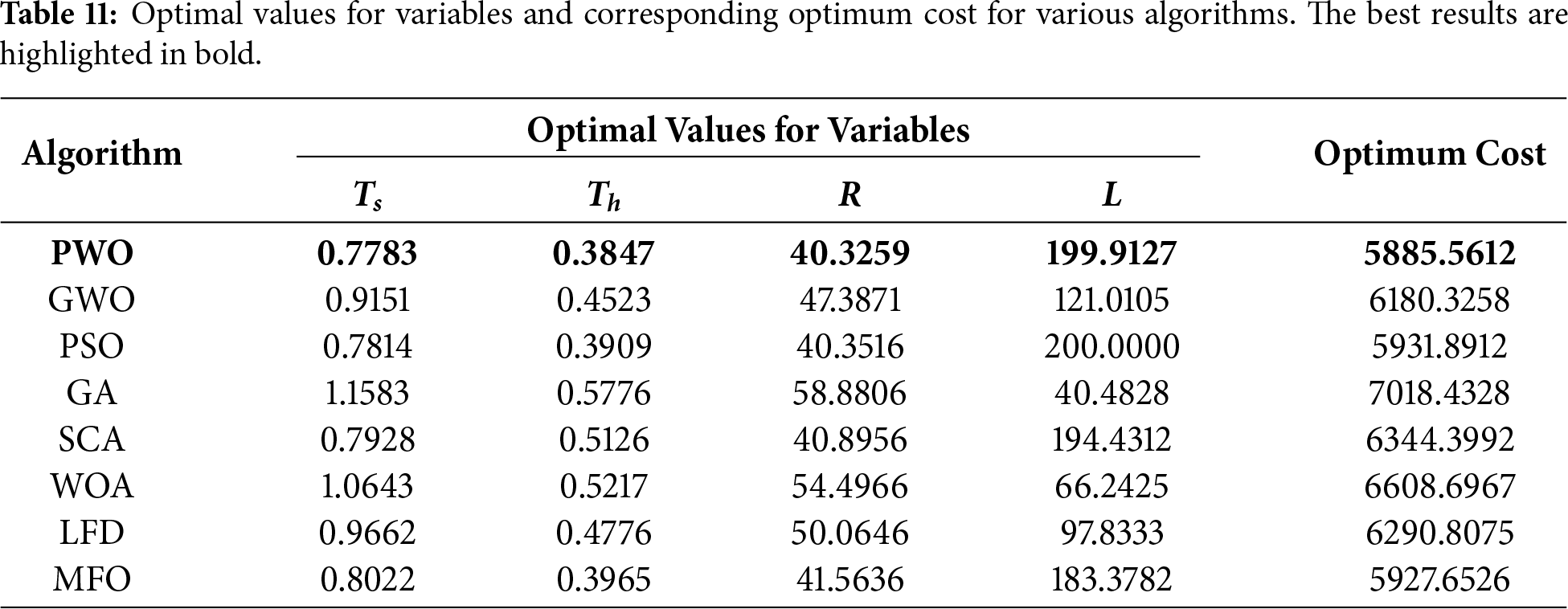

This problem minimizes the total cost of a cylindrical pressure vessel, including the costs of material, forming, and welding, as illustrated in Fig. 7. The problem has four variables: the thickness of the head (

Figure 7: The schematic illustration of the pressure vessel design problem.

Consider

Minimize

Subject to

Variable Range

Table 11 lists the optimal results obtained by PWO and the competing algorithms for the pressure vessel design problem. The results demonstrate that PWO performs better than the other well-established optimization algorithms and provides the best solution for this problem.
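The sketch below encodes the standard pressure vessel formulation, again as an assumption about the version behind Table 11: x = [Ts, Th, R, L] (shell thickness, head thickness, inner radius, length of the cylindrical section), with four constraints and the common bounds 0 ≤ Ts, Th ≤ 99 and 10 ≤ R, L ≤ 200. Some formulations additionally restrict Ts and Th to integer multiples of 0.0625 in.

```python
import math


def pressure_vessel(x):
    """Return (cost, constraints) for x = [Ts, Th, R, L]; feasible iff every g <= 0."""
    ts, th, r, length = x
    cost = (0.6224 * ts * r * length + 1.7781 * th * r**2
            + 3.1661 * ts**2 * length + 19.84 * ts**2 * r)
    g = [
        -ts + 0.0193 * r,                                                # shell thickness
        -th + 0.00954 * r,                                               # head thickness
        -math.pi * r**2 * length - (4 / 3) * math.pi * r**3 + 1296000,   # minimum volume
        length - 240,                                                    # maximum length
    ]
    return cost, g
```

The frequently reported design x ≈ (0.8125, 0.4375, 42.0984, 176.6366) costs about 6059.71.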

To evaluate the performance of the PWO algorithm on a cybersecurity problem, this section applies it to the training of machine learning-based intrusion detection systems (ML-IDS). Using metaheuristic optimization algorithms to train ML-IDS models can overcome limitations of current training methods and improve model performance [67], because metaheuristics search complex, high-dimensional spaces effectively and efficiently. Training an artificial neural network (ANN) involves adjusting its weights and biases to minimize an objective function, typically the error between the network's output and the desired output, which makes metaheuristic algorithms suitable candidates for this task. In this study, PWO was applied to train the weights and biases of an ML-IDS on the NSL-KDD dataset. In all experiments, PWO is compared with the other well-known optimization techniques used in the previous sections.

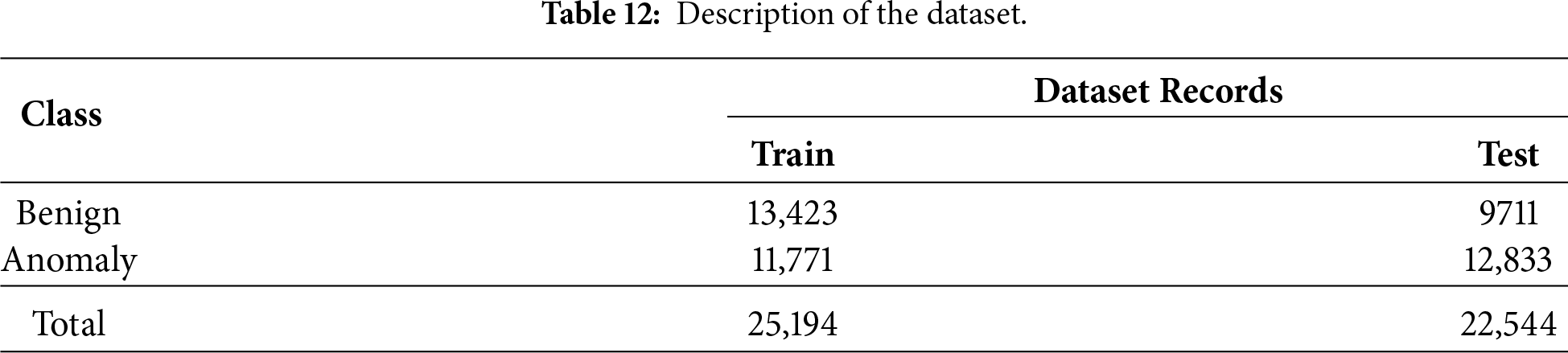

The evaluation of AI-based intrusion detection systems relies on an appropriate dataset, and the NSL-KDD dataset plays a key role in evaluating intrusion detection systems. KDD Cup 99, available from the UCI machine learning repository, is a well-established dataset for validating network security solutions. NSL-KDD is an improved version of the KDD Cup dataset, modified to overcome significant limitations of its predecessor. Its main improvements include the removal of noisy, irrelevant, and duplicate records, which improves the evaluation process. In addition, NSL-KDD provides separate training and test sets, enabling comprehensive experiments, and uses a sampling strategy in which the number of records selected from each difficulty-level group is inversely proportional to that group's share of the original dataset. The NSL-KDD dataset was therefore selected for the experiments. Table 12 presents the details of the dataset records.

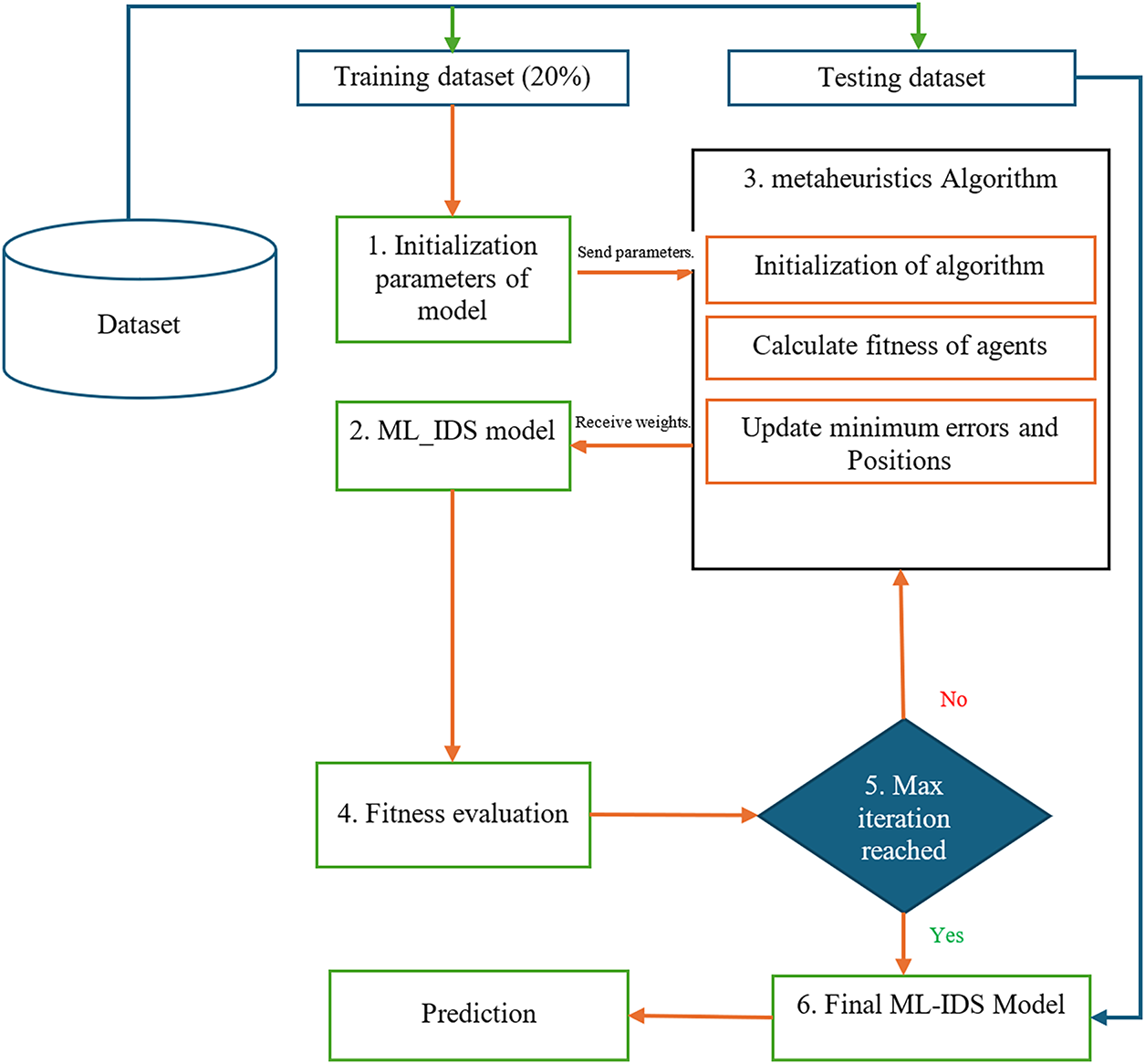

The following section presents the training process of the ML-IDS model, in which a metaheuristic algorithm optimizes the weights and thresholds of the neural network. This hybrid model uses the metaheuristic algorithm to train the network while avoiding local minima, thereby improving classification accuracy. The main structure of the proposed model is illustrated in Fig. 8.

Figure 8: The main structure of the ML-IDS model training process using PWO.

Step 1: First, the structure of the neural network is defined, including the number of hidden layers and neurons, and the network is initialized with starting values for its weights and thresholds.

Step 2: The parameters of the metaheuristic algorithm, including the population size and the upper and lower bounds, are initialized. The fitness of each candidate solution is then calculated.

Step 3: In each iteration, the metaheuristic algorithm assigns updated weights to the artificial neural network (ANN). The ANN evaluates the fitness of these weights on the training dataset and returns the result to the algorithm.

Step 4: The algorithm then updates its internal state, including the search-agent positions and their fitness values, and checks whether the maximum number of iterations has been reached.

Step 5: Steps 3 and 4 repeat until the termination condition is satisfied. The metaheuristic algorithm then returns the optimal weights and thresholds, which are loaded into the ANN; the trained network is applied to the test dataset, and the results are reported.
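The interface between the optimizer and the network in Steps 1 to 5 amounts to decoding each search agent's position vector into weights and thresholds and returning a training error as its fitness. The NumPy sketch below assumes a single hidden layer with sigmoid activations, one sigmoid output for the binary normal/anomaly label, and mean squared error as the fitness; the architecture and loss used in the paper are not specified here, and the helper names are hypothetical. The length of the position vector, n_in·H + H + H + 1 for H hidden neurons, is the dimension of the search space the optimizer works in.

```python
import numpy as np


def n_params(n_in, n_hidden):
    """Search-space dimension: W1 (n_in x H), b1 (H), W2 (H x 1), b2 (1)."""
    return n_in * n_hidden + n_hidden + n_hidden + 1


def decode(vec, n_in, n_hidden):
    """Split one agent's position vector into weights and thresholds (biases)."""
    vec = np.asarray(vec, dtype=float)
    k1 = n_in * n_hidden
    k2 = k1 + n_hidden
    k3 = k2 + n_hidden
    W1 = vec[:k1].reshape(n_in, n_hidden)
    b1 = vec[k1:k2]
    W2 = vec[k2:k3].reshape(n_hidden, 1)
    b2 = vec[k3:k3 + 1]
    return W1, b1, W2, b2


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def predict_proba(vec, X, n_hidden):
    """Forward pass: sigmoid hidden layer, single sigmoid output (probability of anomaly)."""
    W1, b1, W2, b2 = decode(vec, X.shape[1], n_hidden)
    hidden = sigmoid(X @ W1 + b1)
    return sigmoid(hidden @ W2 + b2).ravel()


def fitness(vec, X, y, n_hidden):
    """Value the optimizer minimizes: mean squared error on the training set."""
    return float(np.mean((predict_proba(vec, X, n_hidden) - y) ** 2))
```

Any of the compared optimizers can then minimize `lambda v: fitness(v, X_train, y_train, n_hidden)` within the stated bounds, where `X_train` and `y_train` stand for preprocessed NSL-KDD training features and binary labels. After training, thresholding `predict_proba` at 0.5 (an assumed cut-off) gives the predicted class.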

The objective of the problem is to optimize the weights and thresholds of the neural network so as to minimize its training error and thereby improve the performance of the ML-IDS. The same parameter settings were used for all algorithms: the population size was set to 20, the maximum number of iterations to 100, and the upper and lower bound values were defined as (2.0,

The objective of this study is to address the training and optimization of network weights and thresholds within a neural network.

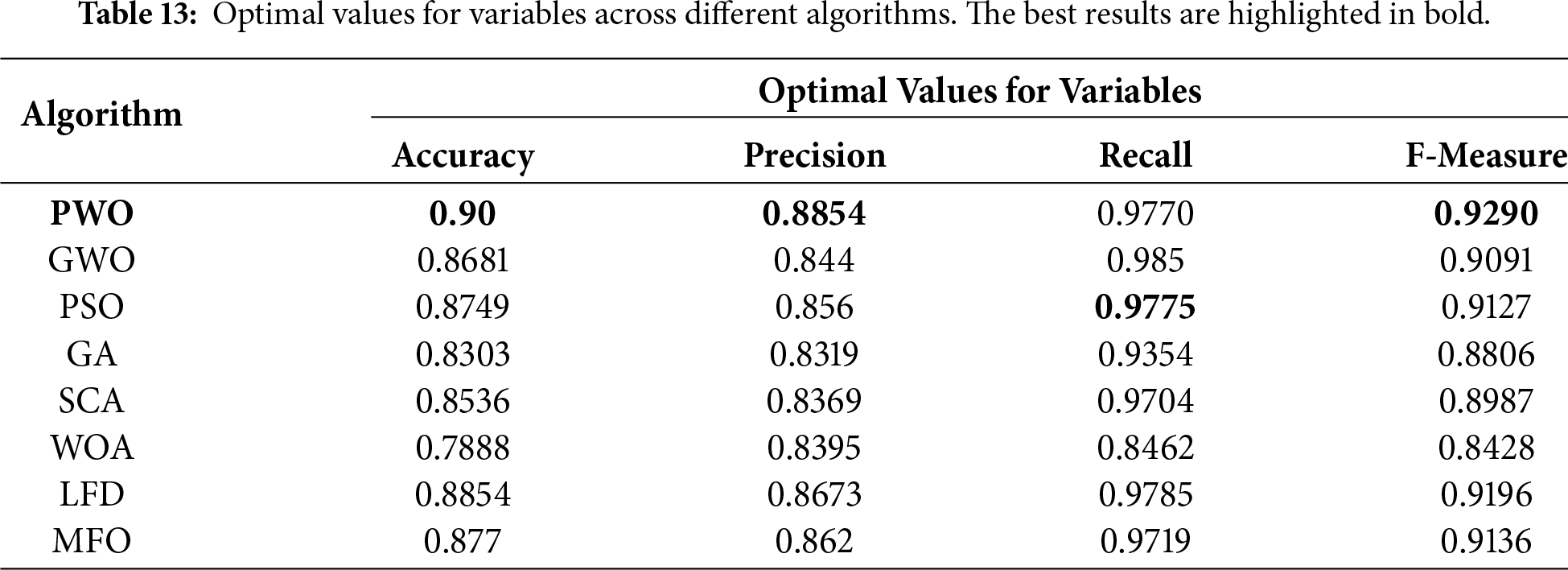

In this section, we present the results of the evaluation, which assesses the effectiveness of the proposed algorithm in optimizing neural network weights and thresholds. Its performance is compared with that of other established algorithms using metrics including accuracy and F1-score. Table 13 shows the best results achieved by PWO and the competing algorithms on the ML-IDS training problem for detecting anomalous network traffic.

Table 13 provides a comparative performance analysis of PWO against other well-known algorithms for training the ML-IDS model to detect anomalous network traffic. The results indicate that the ML-IDS model trained with PWO performs robustly across multiple evaluation metrics, achieving an accuracy of 0.90, a precision of 0.8854, a recall of 0.9770, and an F-measure of 0.9290.
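The four metrics follow from the confusion-matrix counts in the usual way, with anomalous traffic as the positive class (an assumption about the labeling convention). The Python sketch below computes them and also checks the reported figures: the F-measure is the harmonic mean 2PR/(P + R), and with P = 0.8854 and R = 0.9770 it gives about 0.92895, which rounds to 0.9289 against the reported 0.9290. A difference in the last digit is consistent with P and R having been rounded before reporting.

```python
def classification_metrics(tp, fp, tn, fn):
    """Accuracy, precision, recall, and F-measure from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return accuracy, precision, recall, f_measure(precision, recall)


def f_measure(precision, recall):
    """Harmonic mean of precision and recall (F1)."""
    return 2 * precision * recall / (precision + recall)


# Consistency check against the values reported in Table 13
print(round(f_measure(0.8854, 0.9770), 4))  # 0.9289
```

Because the F-measure is a harmonic mean, it is pulled toward the lower of precision and recall, which is why a model with comparable accuracy but unbalanced precision and recall ends up with a lower F-measure.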

Comparatively, while other algorithms, such as GWO, PSO, GA, SCA, WOA, LFD, and MFO, exhibit competitive accuracy rates, they fall short in achieving the same level of precision, recall, and F-measure as the PWO algorithm. For instance, while some algorithms may achieve comparable accuracy levels, their precision and recall values are lower, leading to a diminished F-measure, which is critical for comprehensive model evaluation.

These results suggest that PWO has a distinct advantage over the other algorithms in training models effectively, achieving higher accuracy and a better balance between precision and recall. Its superior performance highlights its potential for training ML-IDS models to identify anomalous network traffic and its applicability to the domain of cybersecurity.

In this study, we proposed a new nature-inspired metaheuristic, Painted Wolf Optimization (PWO), for solving a broad range of optimization problems. PWO is motivated by the social organization and cooperative hunting behavior of painted wolves, and these behaviors are translated into mathematical operators that guide the search process. The performance of PWO was evaluated on 33 benchmark functions, including the CEC2017 suite, which spans unimodal, multimodal, and composite scenarios, to examine exploration, exploitation, and the ability to avoid local optima. Additionally, PWO was validated in a cybersecurity application by optimizing a machine-learning intrusion detection system (ML-IDS) for accurate detection of abnormal traffic. Overall, the experimental results indicate that PWO achieves competitive and often superior performance compared with several recent, well-established metaheuristics across the tested benchmarks. In the ML-IDS task, PWO demonstrated a strong detection capability, achieving high accuracy and F-measure compared to the other methods. Furthermore, experiments on three standard real-world engineering design problems showed that PWO can produce high-quality solutions and compares favorably with existing optimization techniques.

Future research directions include: (1) extending PWO to large-scale and multi-objective optimization problems; (2) hybridizing PWO with other metaheuristics to further enhance performance; (3) evaluating PWO on additional real-world domains such as healthcare, finance, and smart grid optimization; (4) conducting systematic parameter sensitivity and robustness analyses; and (5) benchmarking against more recently proposed optimization algorithms.

Acknowledgement: Not applicable.

Funding Statement: The authors received no specific funding for this study.

Availability of Data and Materials: This study used publicly available benchmark functions (CEC 2017) and the NSL-KDD dataset. The source code for the proposed Painted Wolf Optimization (PWO) algorithm is publicly available on GitHub at: https://github.com/saeidsheikhi/Painted-Wolf-Optimization.

Ethics Approval: Not applicable.

Conflicts of Interest: The author declares no conflicts of interest.

References

1. Rezk H, Olabi AG, Wilberforce T, Sayed ET. Metaheuristic optimization algorithms for real-world electrical and civil engineering application: a review. Results Eng. 2024;23:102437. doi:10.1016/j.rineng.2024.102437.

2. Sheikhi S, Kostakos P. Safeguarding cyberspace: enhancing malicious website detection with PSO-optimized XGBoost and firefly-based feature selection. Comput Secur. 2024;142:103885. doi:10.1016/j.cose.2024.103885.

3. Li G, Zhang T, Tsai CY, Yao L, Lu Y, Tang J. Review of the metaheuristic algorithms in applications: visual analysis based on bibliometrics. Expert Syst Appl. 2024;255:124857. doi:10.1016/j.eswa.2024.124857.

4. Sattar D, Salim R. A smart metaheuristic algorithm for solving engineering problems. Eng Comput. 2021;37(3):2389–417. doi:10.1007/s00366-020-00951-x.

5. Zhang J, Wei L, Guo Z, Sun H, Hu Z. A survey of meta-heuristic algorithms in optimization of space scale expansion. Swarm Evol Comput. 2024;84:101462. doi:10.1016/j.swevo.2023.101462.

6. Gu Q, Wang D, Jiang S, Xiong N, Jin Y. An improved assisted evolutionary algorithm for data-driven mixed integer optimization based on Two_Arch. Comput Ind Eng. 2021;159:107463. doi:10.1016/j.cie.2021.107463.

7. Li XD, Wang JS, Hao WK, Zhang M, Wang M. Chaotic arithmetic optimization algorithm. Appl Intell. 2022;52(14):16718–57. doi:10.1007/s10489-021-03037-3.

8. Abdel-Basset M, Mohamed R, Abouhawwash M. Crested Porcupine Optimizer: a new nature-inspired metaheuristic. Knowl-Based Syst. 2024;284(1):111257. doi:10.1016/j.knosys.2023.111257.

9. Amiri MH, Mehrabi Hashjin N, Montazeri M, Mirjalili S, Khodadadi N. Hippopotamus optimization algorithm: a novel nature-inspired optimization algorithm. Sci Rep. 2024;14(1):5032. doi:10.21203/rs.3.rs-3503110/v1.

10. Holland JH. Genetic algorithms. Sci Am. 1992;267(1):66–73. doi:10.1038/scientificamerican0792-66.

11. Storn R, Price K. Differential evolution: a simple and efficient heuristic for global optimization over continuous spaces. J Glob Optim. 1997;11:341–59. doi:10.1023/a:1008202821328.

12. Ghaemi M, Feizi-Derakhshi MR. Forest optimization algorithm. Expert Syst Appl. 2014;41(15):6676–87. doi:10.1016/j.eswa.2014.05.009.

13. Yang XS. Firefly algorithm, stochastic test functions and design optimisation. Int J Bio-Inspired Comput. 2010;2(2):78–84. doi:10.1504/ijbic.2010.032124.

14. Beyer HG, Schwefel HP. Evolution strategies: a comprehensive introduction. Nat Comput. 2002;1:3–52.

15. Simon D. Biogeography-based optimization. IEEE Trans Evol Comput. 2008;12(6):702–13. doi:10.1109/tevc.2008.919004.

16. Kalananda VKRA, Komanapalli VLN. A combinatorial social group whale optimization algorithm for numerical and engineering optimization problems. Appl Soft Comput. 2021;99:106903. doi:10.1016/j.asoc.2020.106903.

17. Trojovský P. A new human-based metaheuristic algorithm for solving optimization problems based on preschool education. Sci Rep. 2023;13(1):21472. doi:10.1038/s41598-023-48462-1.

18. Li X, Lan L, Lahza H, Yang S, Wang S, Yang W, et al. Dragon boat optimization: a meta-heuristic for intelligent systems. Expert Syst. 2025;42(2):e13785. doi:10.1111/exsy.13785.

19. Moosavi SHS, Bardsiri VK. Poor and rich optimization algorithm: a new human-based and multi populations algorithm. Eng Appl Artif Intell. 2019;86:165–81. doi:10.1016/j.engappai.2019.08.025.

20. Zhang Y, Jin Z. Group teaching optimization algorithm: a novel metaheuristic method for solving global optimization problems. Expert Syst Appl. 2020;148(2):113246. doi:10.1016/j.eswa.2020.113246.

21. Rao RV, Savsani VJ, Vakharia DP. Teaching-learning-based optimization: a novel method for constrained mechanical design optimization problems. Comput-Aided Des. 2011;43(3):303–15. doi:10.1016/j.cad.2010.12.015.

22. Zhang J, Xiao M, Gao L, Pan Q. Queuing search algorithm: a novel metaheuristic algorithm for solving engineering optimization problems. Appl Math Model. 2018;63(1):464–90. doi:10.1016/j.apm.2018.06.036.

23. Eita M, Fahmy M. Group counseling optimization. Appl Soft Comput. 2014;22:585–604. doi:10.1016/j.asoc.2014.03.043.

24. Azizi M, Aickelin U, Khorshidi HA, Baghalzadeh Shishehgarkhaneh M. Energy valley optimizer: a novel metaheuristic algorithm for global and engineering optimization. Sci Rep. 2023;13(1):226. doi:10.1038/s41598-022-27344-y.

25. Deng L, Liu S. Snow ablation optimizer: a novel metaheuristic technique for numerical optimization and engineering design. Expert Syst Appl. 2023;225(1):120069. doi:10.1016/j.eswa.2023.120069.

26. Muthiah-Nakarajan V, Noel MM. Galactic swarm optimization: a new global optimization metaheuristic inspired by galactic motion. Appl Soft Comput. 2016;38:771–87. doi:10.1016/j.asoc.2015.10.034.

27. Hashim FA, Houssein EH, Mabrouk MS, Al-Atabany W, Mirjalili S. Henry gas solubility optimization: a novel physics-based algorithm. Future Gener Comput Syst. 2019;101:646–67. doi:10.1016/j.future.2019.07.015.

28. Zhao W, Wang L, Zhang Z. Atom search optimization and its application to solve a hydrogeologic parameter estimation problem. Knowl-Based Syst. 2019;163:283–304. doi:10.1016/j.knosys.2018.08.030.

29. Tabari A, Ahmad A. A new optimization method: electro-search algorithm. Comput Chem Eng. 2017;103:1–11. doi:10.1016/j.compchemeng.2017.01.046.

30. Kashan AH. A new metaheuristic for optimization: optics inspired optimization (OIO). Comput Oper Res. 2015;55:99–125. doi:10.1016/j.cor.2014.10.011.

31. Kaveh A, Bakhshpoori T. Water evaporation optimization: a novel physically inspired optimization algorithm. Comput Struct. 2016;167(1):69–85. doi:10.1016/j.compstruc.2016.01.008.

32. Faramarzi A, Heidarinejad M, Stephens B, Mirjalili S. Equilibrium optimizer: a novel optimization algorithm. Knowl-Based Syst. 2020;191:105190. doi:10.1016/j.knosys.2019.105190.

33. Houssein EH, Saad MR, Hashim FA, Shaban H, Hassaballah M. Lévy flight distribution: a new metaheuristic algorithm for solving engineering optimization problems. Eng Appl Artif Intell. 2020;94:103731. doi:10.1016/j.engappai.2020.103731.

34. Mirjalili S, Mirjalili SM, Hatamlou A. Multi-verse optimizer: a nature-inspired algorithm for global optimization. Neural Comput Appl. 2016;27:495–513. doi:10.1007/s00521-015-1870-7.

35. Eskandar H, Sadollah A, Bahreininejad A, Hamdi M. Water cycle algorithm: a novel metaheuristic optimization method for solving constrained engineering optimization problems. Comput Struct. 2012;110(1):151–66. doi:10.1016/j.compstruc.2012.07.010.

36. Kaveh A, Dadras A. A novel meta-heuristic optimization algorithm: thermal exchange optimization. Adv Eng Softw. 2017;110:69–84. doi:10.1016/j.advengsoft.2017.03.014.

37. Rashedi E, Nezamabadi-Pour H, Saryazdi S. GSA: a gravitational search algorithm. Inf Sci. 2009;179(13):2232–48. doi:10.1016/j.ins.2009.03.004.

38. Zedadra O, Guerrieri A, Jouandeau N, Spezzano G, Seridi H, Fortino G. Swarm intelligence-based algorithms within IoT-based systems: a review. J Parallel Distrib Comput. 2018;122(1):173–87. doi:10.1016/j.jpdc.2018.08.007.

39. Nguyen BH, Xue B, Zhang M. A survey on swarm intelligence approaches to feature selection in data mining. Swarm Evol Comput. 2020;54:100663. doi:10.1016/j.swevo.2020.100663.

40. Zhang W, Pan K, Li S, Wang Y. Special Forces Algorithm: a novel meta-heuristic method for global optimization. Math Comput Simul. 2023;213:394–417. doi:10.1016/j.matcom.2023.06.015.

41. Azizi M, Baghalzadeh Shishehgarkhaneh M, Basiri M, Moehler RC. Squid game optimizer (SGO): a novel metaheuristic algorithm. Sci Rep. 2023;13(1):5373. doi:10.1038/s41598-023-32465-z.

42. Cheng MY, Sholeh MN. Artificial satellite search: a new metaheuristic algorithm for optimizing truss structure design and project scheduling. Appl Math Model. 2025;143:116008. doi:10.1016/j.apm.2025.116008.

43. Romeh AE, Snášel V, Mirjalili S. Dholes-inspired optimization (DIO): a nature-inspired algorithm for engineering optimization problems. Clust Comput. 2025;28(13):853. doi:10.1007/s10586-025-05543-2.

44. Dong Y, Zhang S, Zhang H, Zhou X, Jiang J. Chaotic evolution optimization: a novel metaheuristic algorithm inspired by chaotic dynamics. Chaos Solitons Fractals. 2025;192(1):116049. doi:10.1016/j.chaos.2025.116049.

45. Kennedy J, Eberhart R. Particle swarm optimization. In: Proceedings of ICNN’95-International Conference on Neural Networks. Vol. 4. Piscataway, NJ, USA: IEEE; 1995. p. 1942–8.

46. Jain M, Singh V, Rani A. A novel nature-inspired algorithm for optimization: squirrel search algorithm. Swarm Evol Comput. 2019;44:148–75. doi:10.1016/j.swevo.2018.02.013.

47. Heidari AA, Mirjalili S, Faris H, Aljarah I, Mafarja M, Chen H. Harris hawks optimization: algorithm and applications. Future Gener Comput Syst. 2019;97:849–72. doi:10.1016/j.future.2019.02.028.

48. Mirjalili S. SCA: a sine cosine algorithm for solving optimization problems. Knowl-Based Syst. 2016;96:120–33. doi:10.1016/j.knosys.2015.12.022.

49. Dhiman G, Kumar V. Seagull optimization algorithm: theory and its applications for large-scale industrial engineering problems. Knowl-Based Syst. 2019;165:169–96. doi:10.1016/j.knosys.2018.11.024.

50. Mirjalili S, Lewis A. The whale optimization algorithm. Adv Eng Softw. 2016;95:51–67. doi:10.1016/j.advengsoft.2016.01.008.

51. Mirjalili S. Moth-flame optimization algorithm: a novel nature-inspired heuristic paradigm. Knowl-Based Syst. 2015;89:228–49. doi:10.1016/j.knosys.2015.07.006.

52. Yapici H, Cetinkaya N. A new meta-heuristic optimizer: pathfinder algorithm. Appl Soft Comput. 2019;78:545–68. doi:10.1016/j.asoc.2019.03.012.

53. Mirjalili S, Mirjalili SM, Lewis A. Grey wolf optimizer. Adv Eng Softw. 2014;69:46–61. doi:10.1016/j.advengsoft.2013.12.007.

54. Dhiman G, Kumar V. Emperor penguin optimizer: a bio-inspired algorithm for engineering problems. Knowl-Based Syst. 2018;159(2):20–50. doi:10.1016/j.knosys.2018.06.001.

55. Yang XS, Karamanoglu M. Nature-inspired computation and swarm intelligence: a state-of-the-art overview. In: Nature-inspired computation and swarm intelligence: algorithms, theory and applications. London, UK: Academic Press; 2020. p. 3–18. doi:10.1016/b978-0-12-819714-1.00010-5.

56. Glover F. Future paths for integer programming and links to artificial intelligence. Comput Oper Res. 1986;13(5):533–49. doi:10.1016/0305-0548(86)90048-1.

57. Fisher DH. Knowledge acquisition via incremental conceptual clustering. Mach Learn. 1987;2:139–72. doi:10.1023/a:1022852608280.

58. van Laarhoven PJM, Aarts EHL. Simulated annealing: theory and applications. 1st ed. Dordrecht, The Netherlands: Springer; 1987.

59. Wolpert DH, Macready WG. No free lunch theorems for optimization. IEEE Trans Evol Comput. 1997;1(1):67–82. doi:10.1109/4235.585893.

60. Fraser-Celin VL, Hovorka AJ. Compassionate conservation: exploring the lives of African wild dogs (Lycaon pictus) in Botswana. Animals. 2019;9(1):16. doi:10.3390/ani9010016.

61. Chengetanai S, Bhagwandin A, Bertelsen MF, Hård T, Hof PR, Spocter MA, et al. The brain of the African wild dog. II. The olfactory system. J Comp Neurol. 2020;528(18):3285–304. doi:10.1002/cne.25007.

62. Hubel TY, Myatt JP, Jordan NR, Dewhirst OP, McNutt JW, Wilson AM. Energy cost and return for hunting in African wild dogs and cheetahs. Nat Commun. 2016;7(1):11034. doi:10.1038/ncomms11034.

63. Cozzi G, Behr DM, Webster HS, Claase M, Bryce CM, Modise B, et al. African wild dog dispersal and implications for management. J Wildl Manag. 2020;84(4):614–21. doi:10.1002/jwmg.21841.

64. Walker RH, King AJ, McNutt JW, Jordan NR. Sneeze to leave: African wild dogs (Lycaon pictus) use variable quorum thresholds facilitated by sneezes in collective decisions. Proc R Soc B Biol Sci. 2017;284(1862):20170347. doi:10.1098/rspb.2017.0347.

65. Digalakis JG, Margaritis KG. On benchmarking functions for genetic algorithms. Int J Comput Math. 2001;77(4):481–506. doi:10.1080/00207160108805080.

66. Awad N, Ali M, Liang J, Qu B, Suganthan P. Problem definitions and evaluation criteria for the CEC 2017 special session and competition on single objective real-parameter numerical optimization. Technical Report. Singapore: Nanyang Technological University; 2016.

67. Sheikhi S, Kostakos P. A novel anomaly-based intrusion detection model using PSOGWO-optimized BP neural network and GA-based feature selection. Sensors. 2022;22(23):9318. doi:10.3390/s22239318.


Copyright © 2026 The Author(s). Published by Tech Science Press.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
