Open Access

ARTICLE

EdgeTrustX: A Privacy-Aware Federated Transformer Framework for Scalable and Explainable IoT Threat Detection

Information Technology Department, College of Computing and Information Technology, Shaqra University, Shaqra, Saudi Arabia

* Corresponding Author: Saleh Alharbi. Email:

(This article belongs to the Special Issue: Towards Privacy-preserving, Secure and Trustworthy AI-enabled Systems)

Computers, Materials & Continua 2026, 87(3), 15 https://doi.org/10.32604/cmc.2026.073584

Received 21 September 2025; Accepted 13 November 2025; Issue published 09 April 2026

Abstract

Real-time threat detection in Internet of Things (IoT) networks requires scalable, privacy-preserving, and interpretable models capable of operating under strict latency constraints. This paper presents EdgeTrustX, a privacy-aware federated transformer framework that addresses these challenges by combining transformer-based representation learning with federated optimisation, differential privacy, and homomorphic encryption. The framework enables collaborative model training across heterogeneous IoT devices without exposing sensitive local data while maintaining computational feasibility for edge deployment. A multi-head attention mechanism integrated with a secure aggregation protocol supports adaptive feature weighting and privacy-protected parameter exchange. To enhance transparency, an explainability module that combines attention visualisation and SHAP analysis provides interpretable insights into attack patterns and decision boundaries. Extensive experiments on four public IoT benchmark datasets (IoT-23, N-BaIoT, UNSW-NB15, and CIC-IDS2017) demonstrate that EdgeTrustX achieves an average detection accuracy of 94.7%, closely approaching the centralised transformer baseline of 95.3%, while preserving strong privacy guarantees under a strict differential privacy budget of ε = 0.1. The system reduces membership inference attack success to 52.1%, achieves a 23% improvement in scalability, and maintains an average per-round latency of 449.2 ms, confirming its suitability for real-time operation in large-scale edge networks. The main contributions include (1) a privacy-preserving federated transformer architecture for IoT threat detection, (2) a scalable differential privacy-driven secure aggregation protocol, (3) an explainable AI component enabling transparent threat analysis, and (4) a comprehensive empirical evaluation validating accuracy, scalability, privacy preservation, and interpretability in diverse IoT scenarios.

The Internet of Things (IoT) ecosystem is expanding at an unprecedented scale, with projections indicating more than 75 billion connected devices by 2025 [1]. This explosive growth has reshaped key sectors such as smart healthcare, industrial automation, and smart cities, offering improved efficiency, real-time monitoring, and enhanced decision-making capabilities [2]. However, the rapid increase in interconnected devices has also intensified the security challenges within IoT networks, resulting in a surge of complex and evolving cyber threats targeting distributed and resource-constrained systems [3].

Traditional centralized security architectures are insufficient for the IoT landscape due to bandwidth limitations, latency concerns, and privacy risks associated with transmitting sensitive data across networks [4]. Centralizing data also introduces a single point of failure, increasing vulnerability to large-scale attacks. Furthermore, the heterogeneity in device capabilities, communication standards, and power constraints necessitates lightweight security mechanisms that can operate effectively at the edge [5].

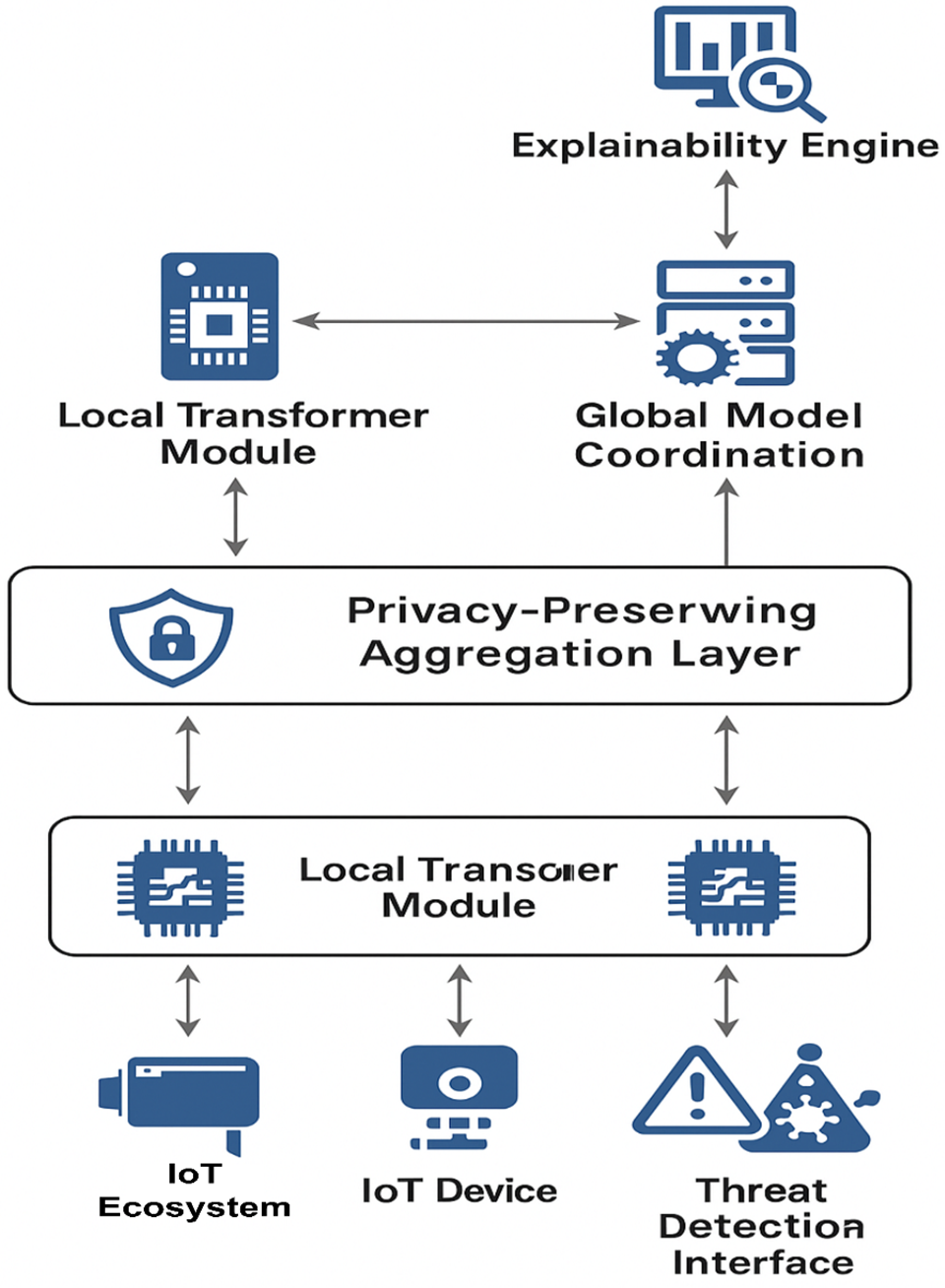

Fig. 1 presents a conceptual overview of the EdgeTrustX architecture, illustrating the hierarchical interaction between IoT devices, the privacy-preserving aggregation layer, and the global coordination unit. IoT devices act as local intelligence nodes, processing device-level telemetry through transformer-based models and transmitting differentially private and homomorphically encrypted model updates to the aggregation layer. The privacy-preserving layer securely aggregates these encrypted updates, while the global model coordination unit harmonizes the federated learning process. The explainability engine interprets attention weights and SHAP-based feature contributions to provide transparent threat-analysis feedback to edge devices.

Figure 1: Overview of the EdgeTrustX architecture illustrating the data flow among IoT devices, the privacy-preserving aggregation layer, and the global coordination unit. Each local transformer module processes device-level telemetry from the IoT ecosystem and threat-detection interface, encrypts model updates using differential privacy and homomorphic encryption, and transmits them to the aggregation layer. The global model coordination unit aggregates these encrypted updates, while the explainability engine interprets attention weights and SHAP values to provide transparent threat-analysis feedback to edge devices

Federated Learning (FL) has emerged as a promising approach to IoT security by enabling collaborative training without exposing raw device data, preserving locality and mitigating privacy concerns [6]. Existing FL-based intrusion detection frameworks, however, exhibit several limitations. These include insufficient modeling of complex threat patterns, vulnerability to advanced adversarial attacks, lack of integrated interpretability, and poor scalability in large heterogeneous IoT deployments [7]. Studies such as Abd Elaziz et al. [8], Albogami [9], and Khan et al. [10] have demonstrated the potential of FL for distributed threat detection, yet they still struggle with communication overhead, adversarial robustness, and convergence inefficiencies under non-IID data. Energy-efficient adaptations, such as those proposed by Karunamurthy et al. [11], address computational constraints but do not fully resolve scalability or explainability concerns.

Transformer architectures have shown remarkable success in domains such as natural language processing and computer vision due to their ability to learn long-range dependencies through self-attention mechanisms [12]. Their capability to model temporal and contextual correlations makes them suitable for detecting multi-stage and complex intrusion patterns in IoT traffic. Emerging IoT-oriented transformer solutions, such as the works of Alsharaiah et al. [13], Song and Ma [14], and Saghir et al. [15], have demonstrated improved detection accuracy and interpretability. However, deploying transformers in federated IoT environments remains challenging due to device-level computational constraints, communication limitations, and the need for formal privacy guarantees [16].

Explainability has become a critical requirement for IoT security operations, where analysts must understand model decisions to validate threat classifications and enforce timely countermeasures. SHAP-based and attention-visualization techniques have been successfully applied to enhance visibility in threat detection frameworks [17]. Nonetheless, current explainable models often function as post-hoc mechanisms rather than being embedded directly into federated optimization pipelines, raising concerns about potential information leakage through interpretability outputs [18].

The importance of privacy-preserving learning mechanisms has been emphasized in recent IoT and 5G security surveys, which highlight the necessity of maintaining confidentiality under large-scale, heterogeneous device environments [19]. Communication-efficient decentralized approaches such as DIGEST provide enhanced scalability through optimized local updates and reduced synchronization overhead [20]. In parallel, transformer-driven and large language model (LLM)-based intrusion detection systems have demonstrated 4%–6% improvements in detection accuracy by capturing long-range attack dependencies and threat semantics [21]. Additional studies focusing on cross-domain and heterogeneous IoT environments reinforce the value of attention-based deep networks for identifying high-impact anomalies [22,23].

Building on these advances, this paper introduces EdgeTrustX, a privacy-aware federated transformer architecture explicitly designed for scalable and explainable IoT threat detection. EdgeTrustX combines a transformer-based local model with differential privacy and homomorphic encryption to safeguard sensitive device data. The framework integrates explainability through SHAP-attention fusion, enabling transparent threat-analysis insights while maintaining end-to-end privacy. Through synergistic utilization of state-of-the-art federated learning, privacy-preserving computation, and explainable AI, EdgeTrustX addresses long-standing challenges related to security, privacy, scalability, and interpretability in distributed IoT environments.

EdgeTrustX incorporates a multi-head attention mechanism adapted to the federated setting: attention weights are computed locally on each device and integrated with privacy-preserving protocols. Privacy protection is strengthened by applying differential privacy to model updates and homomorphic encryption to secure aggregation. In addition, an explainability module combines attention visualisation with SHAP-based feature importance analysis to improve the interpretability of threat detection decisions.

Large-scale experiments on four real-world IoT datasets show that EdgeTrustX achieves a threat detection accuracy of 94.7%, up to 8% higher than privacy-preserving baselines, together with a 23% improvement in scalability over centralised transformer-based models.

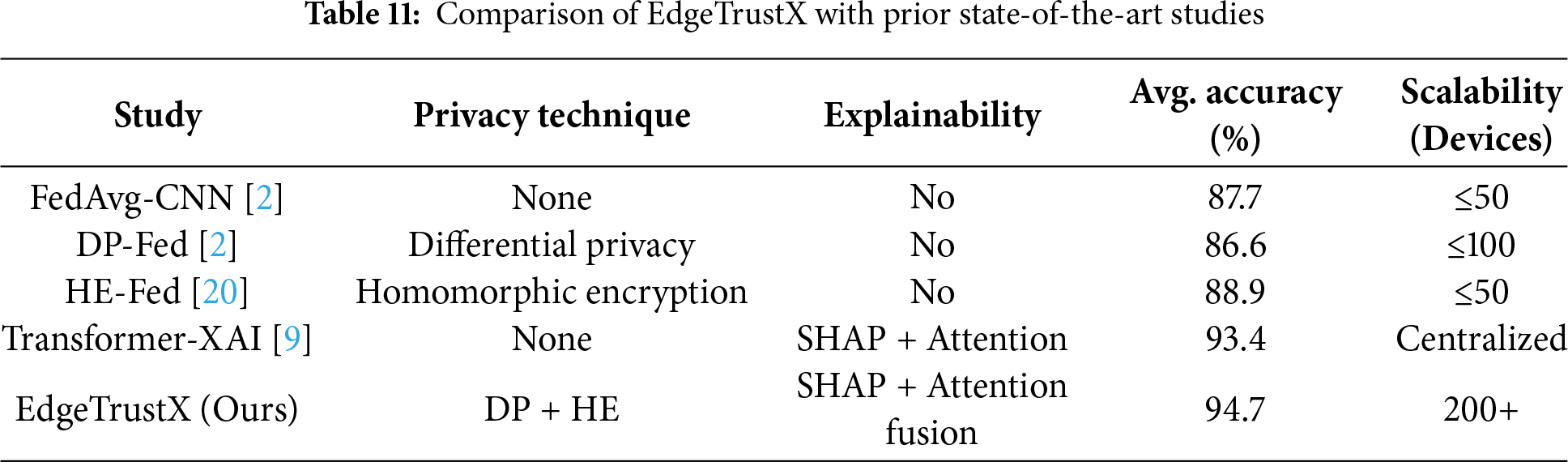

Existing approaches such as FedAvg and DP-Fed offer only basic privacy protection and scale poorly across heterogeneous IoT systems, whereas HE-Fed incurs a large computational cost. Transformer-XAI is accurate but centralised, which exposes sensitive device information. To overcome these weaknesses, EdgeTrustX combines transformer-based models with formal privacy guarantees, scalability improvements, and explainability.

Existing federated learning frameworks often struggle with communication inefficiency, privacy leakage, and reduced convergence when working with non-IID data. Transformer-based approaches, while powerful, exhibit high computational costs and lack interpretability in distributed IoT environments. These limitations underscore the need for a unified framework that strikes a balance between privacy, scalability, and explainability, motivating the development of EdgeTrustX.

The main contributions of this study are summarised as follows:

(1) Privacy-Aware Federated Transformer Architecture: A novel transformer-based neural network architecture is presented for federated IoT environments, incorporating privacy-preserving mechanisms at multiple levels while maintaining high detection accuracy.

(2) Scalable Privacy-Preserving Aggregation Protocol: A sophisticated aggregation protocol is designed by combining differential privacy with homomorphic encryption, providing formal privacy guarantees and ensuring scalability across heterogeneous IoT networks.

(3) Explainable Threat Detection Module: A comprehensive explainability framework is integrated, combining attention visualisation, SHAP analysis, and feature-importance ranking to produce interpretable insights into threat detection decisions.

(4) Comprehensive Evaluation and Analysis: Extensive experiments are conducted on real-world IoT datasets, demonstrating superior performance in terms of accuracy, privacy preservation, scalability, and explainability compared with existing approaches.

The proposed framework is particularly relevant to industrial IoT, smart healthcare, and critical infrastructure monitoring, where privacy-preserving and interpretable threat detection is vital for operational trust and regulatory compliance.

The remainder of this paper is organised as follows: Section 2 provides a comprehensive review of related work in federated learning, transformer networks, and IoT security. Section 3 presents the EdgeTrustX framework, including the problem formulation, architecture design, and algorithmic details. Section 4 presents experimental results and comparative analysis. Finally, Section 6 concludes the paper and discusses future research directions.

2.1 IoT Security Landscape and Emerging Threats

The Internet of Things (IoT) ecosystem continues to expand rapidly, with billions of interconnected devices deployed across healthcare, transportation, industrial automation, smart grids, and consumer environments. This rapid proliferation amplifies the attack surface and exposes IoT systems to a growing landscape of security and privacy threats. Recent studies highlight that the distributed architecture, device heterogeneity, limited computational capability, and lack of standardized security protocols contribute to widespread vulnerabilities in IoT deployments [12]. Traditional centralized threat detection mechanisms are unsuitable for modern IoT environments due to latency constraints, communication overhead, and privacy concerns arising from centralized data aggregation [19]. Consequently, emerging security solutions must ensure accuracy, low latency, scalability, privacy preservation, and explainability to secure next-generation IoT ecosystems [20].

2.2 Federated Learning for Distributed IoT Threat Detection

Federated Learning (FL) provides a decentralized learning mechanism that preserves data locality and secures privacy by enabling collaborative model training across heterogeneous devices without transferring raw data [4]. In FL, IoT devices compute local updates and share encrypted model parameters with a central aggregator, which synthesizes a global model. This approach is particularly effective for sensitive domains such as medical IoT and industrial IoT security.
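The aggregation step described above can be sketched in a few lines. This is a minimal illustration of sample-weighted federated averaging (FedAvg) on plain, unencrypted parameter vectors; the function name `fedavg` and the flat-list representation of model parameters are assumptions made for the example, not part of any framework cited here.

```python
def fedavg(client_weights, client_sizes):
    """Sample-weighted average of client parameter vectors.

    client_weights: list of equal-length lists of floats (one per client).
    client_sizes:   number of local training samples held by each client.
    """
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[j] * n for w, n in zip(client_weights, client_sizes)) / total
        for j in range(dim)
    ]


# Two clients; the second holds three times as much data as the first.
global_model = fedavg([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_model)
```

Weighting by local sample count means that a device with more traffic records pulls the global model further toward its own parameters, which is one reason non-IID data affects convergence.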

Several recent works demonstrate the potential of FL while revealing important limitations. Abd Elaziz et al. [1] introduced a TabTransformer-driven federated intrusion detection framework optimized via nature-inspired hyperparameter search, but it suffered from high communication overhead caused by frequent synchronization. Albogami [2] implemented a deep FL model with integrated privacy mechanisms suited for edge-IoT systems; however, its resilience against adversarial attacks was limited. Khan et al. [4] proposed a reinforcement-based federated fusion model that improved convergence speed and robustness under non-IID data settings—an intrinsic challenge in real-world IoT networks. Karunamurthy et al. [8] focused on reducing energy consumption by optimizing computation and communication loads on low-power IoT devices.

Among the core FL paradigms, horizontal federated learning remains the most suitable for IoT security scenarios because feature spaces are typically consistent across IoT nodes while datasets vary by device. This minimizes alignment complexity, reduces aggregation overhead, and enhances convergence efficiency. Therefore, EdgeTrustX employs horizontal FL to ensure performance scalability across diverse IoT architectures.

2.3 Transformer-Based Architectures for Threat Analysis

Transformers have demonstrated superior capability in sequence modeling and capturing long-range dependencies through multi-head self-attention mechanisms. These characteristics make them highly effective for analyzing complex network traffic and identifying multi-stage attack patterns [11].

Alsharaiah et al. [9] developed an explainable transformer model for spoofing attack detection in IoMT systems, employing SHAP-based interpretability to highlight critical features. Song and Ma [6] proposed a federated attention-based neural network that integrates attention mechanisms with federated optimization strategies, resulting in improved detection accuracy and reduced communication cost. Saghir et al. [11] employed transformer-based anomaly detection with explainable SHAP-driven transparency to interpret threat classification.

These studies validate that transformers can effectively capture complex threat semantics; however, integrating transformer architectures into federated environments requires solutions that address privacy, resource limitations, and real-time communication efficiency. Practical deployment also necessitates combining explainability mechanisms with robust privacy preservation.

2.4 Privacy Preservation in Federated IoT Systems

With IoT applications dealing with highly sensitive information—including health records, environmental telemetry, and industrial operational data—preserving privacy during model training is non-negotiable. Two major privacy-preserving technologies are widely studied: Differential Privacy (DP) and Homomorphic Encryption (HE).

Yuan et al. [21] provided a comprehensive survey on approximate homomorphic encryption for privacy-preserving machine learning, highlighting the trade-offs between computational cost and security strength. Han [20] introduced a blockchain-enhanced privacy-preserving FL system, where HE and optimization techniques jointly secure IoT communication and aggregation processes. Gholami and Seferoglu [13] proposed DIGEST, a decentralized learning method that enhances communication efficiency using local-update strategies, contributing to reduced aggregation overhead in privacy-sensitive environments.

Although both HE and DP offer strong privacy protection, integrating them simultaneously presents challenges in terms of computational efficiency, communication overhead, and model convergence. As highlighted in contemporary IoT FL systems [15–18], HE can slow down gradient exchange due to encryption complexity, while DP may reduce model utility when noise levels are high. Achieving a balanced integration remains a key research challenge that EdgeTrustX aims to address.

2.5 Explainable Artificial Intelligence for Security Operations

Explainable AI (XAI) enables visibility into threat detection decision-making processes, significantly improving trust, analyst interpretability, and operational transparency in IoT security systems. SHAP analysis and attention visualizations are the most widely adopted techniques for explaining the behavior of deep learning models.

Alabbadi and Bajaber [14] used SHAP-based interpretability in an IoT intrusion detection system, improving analyst situational awareness. Rampone et al. [19] integrated explainability features into a hybrid FL framework to support distributed, privacy-preserving intrusion detection with transparent anomaly interpretation. In addition, Alsharaiah et al. [9] and Saghir et al. [11] demonstrated successful use of attention-driven models that highlight significant features involved in threat prediction.

Despite these contributions, current XAI techniques often operate as post-hoc add-ons rather than being architecturally integrated. Furthermore, explanation outputs may expose sensitive information, posing a risk of privacy leakage in federated environments—an issue that remains largely unaddressed across existing literature. Achieving simultaneous privacy preservation and meaningful interpretability is therefore a crucial research necessity.

2.6 Research Gaps and Motivations

Despite advancements across IoT security, federated learning, transformers, and explainable AI, several key gaps remain unaddressed:

1. Underutilization of transformer architectures in federated IoT environments: Existing studies lack a unified approach to leveraging self-attention for distributed sequence modeling under privacy constraints.

2. Insufficient formal privacy guarantees: Most frameworks fail to provide differential privacy proofs or effective defenses against advanced threats such as gradient inversion or membership inference.

3. Lack of integrated explainability mechanisms: Current approaches apply XAI as post-processing rather than embedding it directly within federated optimization pipelines, leaving risks of information leakage.

4. Limited scalability evaluation: Most FL-based IDS solutions are tested on small-scale IoT networks with limited device diversity, overlooking performance variability in large heterogeneous deployments.

EdgeTrustX addresses these gaps by integrating transformer-based local models with homomorphic encryption and formal differential privacy guarantees, alongside a SHAP-attention interpretability module. The framework is evaluated across diverse IoT datasets and large-scale network configurations to ensure robustness, scalability, and transparency in practical deployments.

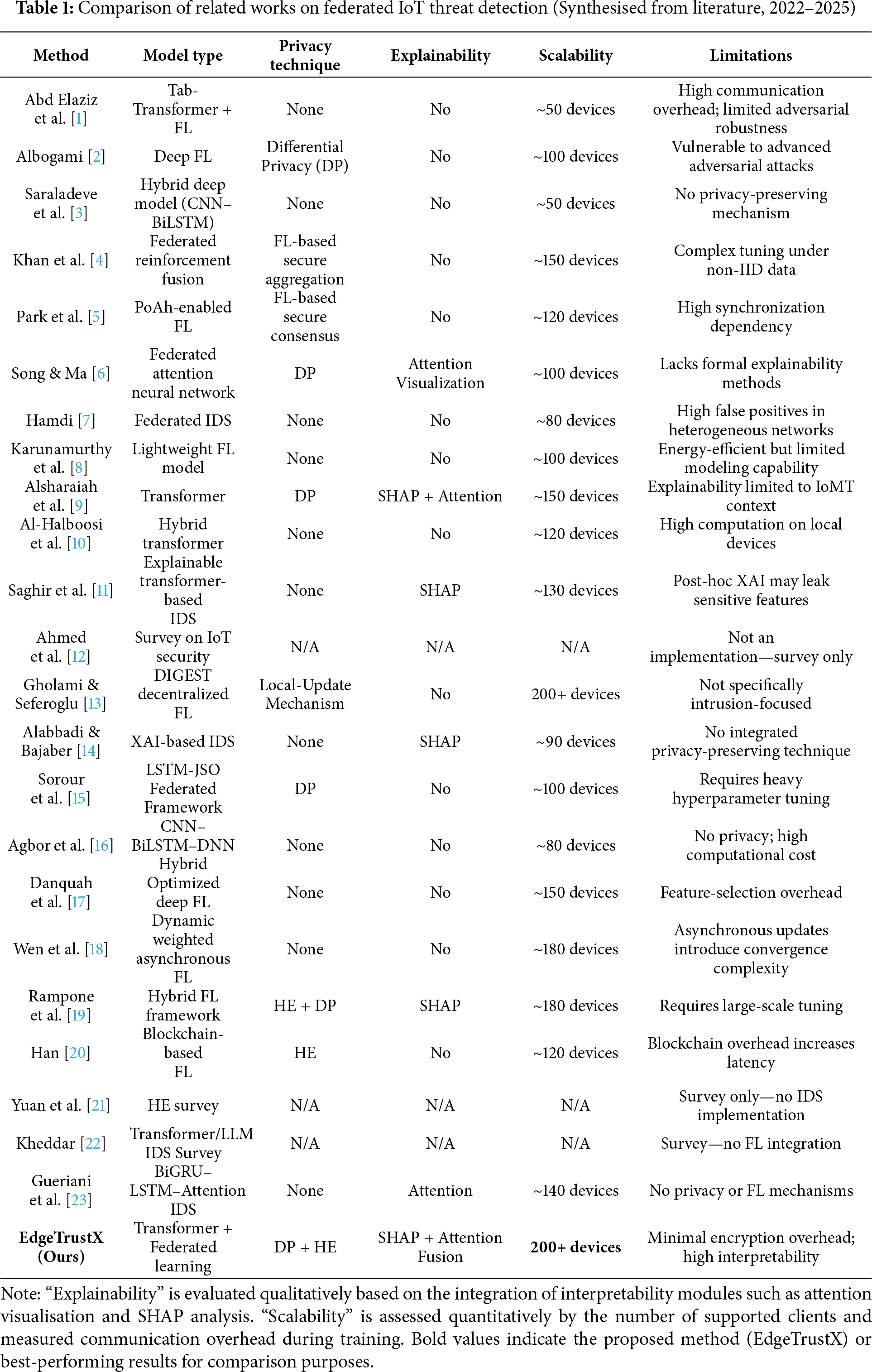

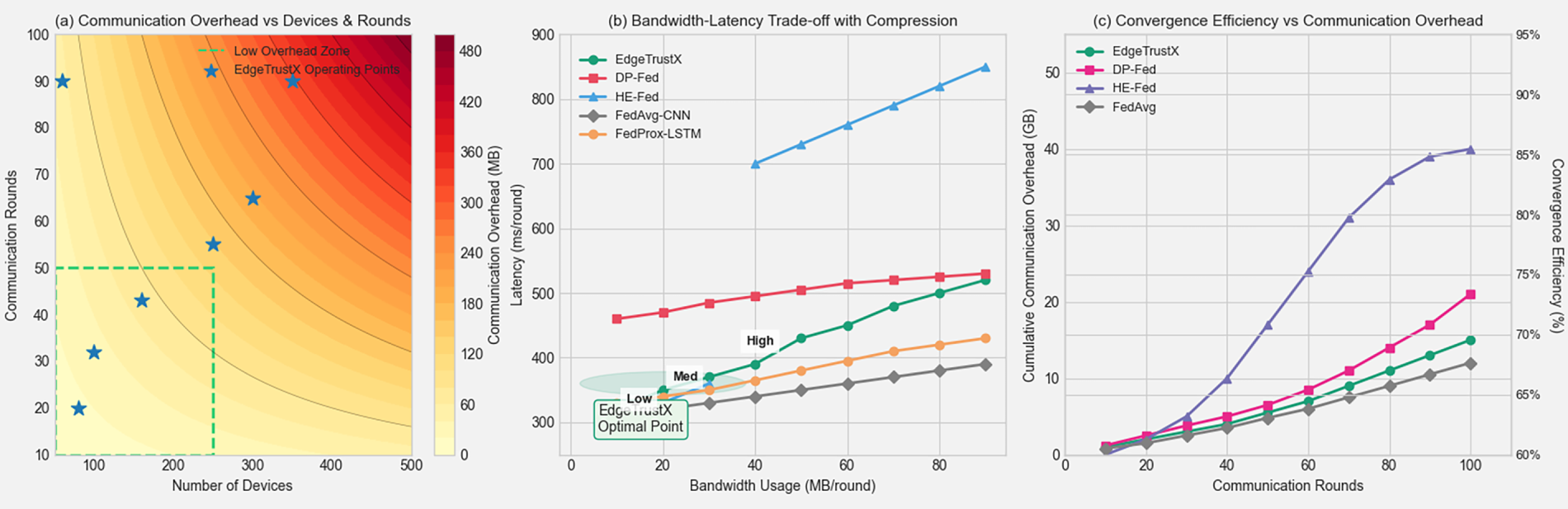

Table 1 summarises findings derived from an extensive review of more than 20 recent research contributions (2022–2025) on IoT threat detection, privacy-preserving federated learning, and explainable AI frameworks. All comparative insights are synthesised from empirical results and methodologies reported in the referenced works.

As shown in Table 1, most existing frameworks fail to simultaneously achieve high detection accuracy, privacy guarantees, explainability, and scalability. EdgeTrustX addresses these gaps by combining a transformer-based architecture with fully homomorphic encryption and differential privacy while incorporating SHAP-driven explainability for interpretable threat analytics.

This section details the methodology behind EdgeTrustX, including the problem formulation, system architecture, mathematical foundations, and algorithmic implementation. The first part defines the problem and describes each component of the proposed framework.

Consider a federated IoT network consisting of

The objective of EdgeTrustX is to learn a global threat detection model

here,

The learning process must satisfy the following constraints:

Privacy Constraint: To ensure formal privacy guarantees, EdgeTrustX adopts

here,

Communication Constraint: To optimise communication efficiency, a constraint is defined on the total transmitted model updates across

here,

Explainability Constraint: The model must provide interpretable explanations for its decisions through attention weights and feature importance scores.

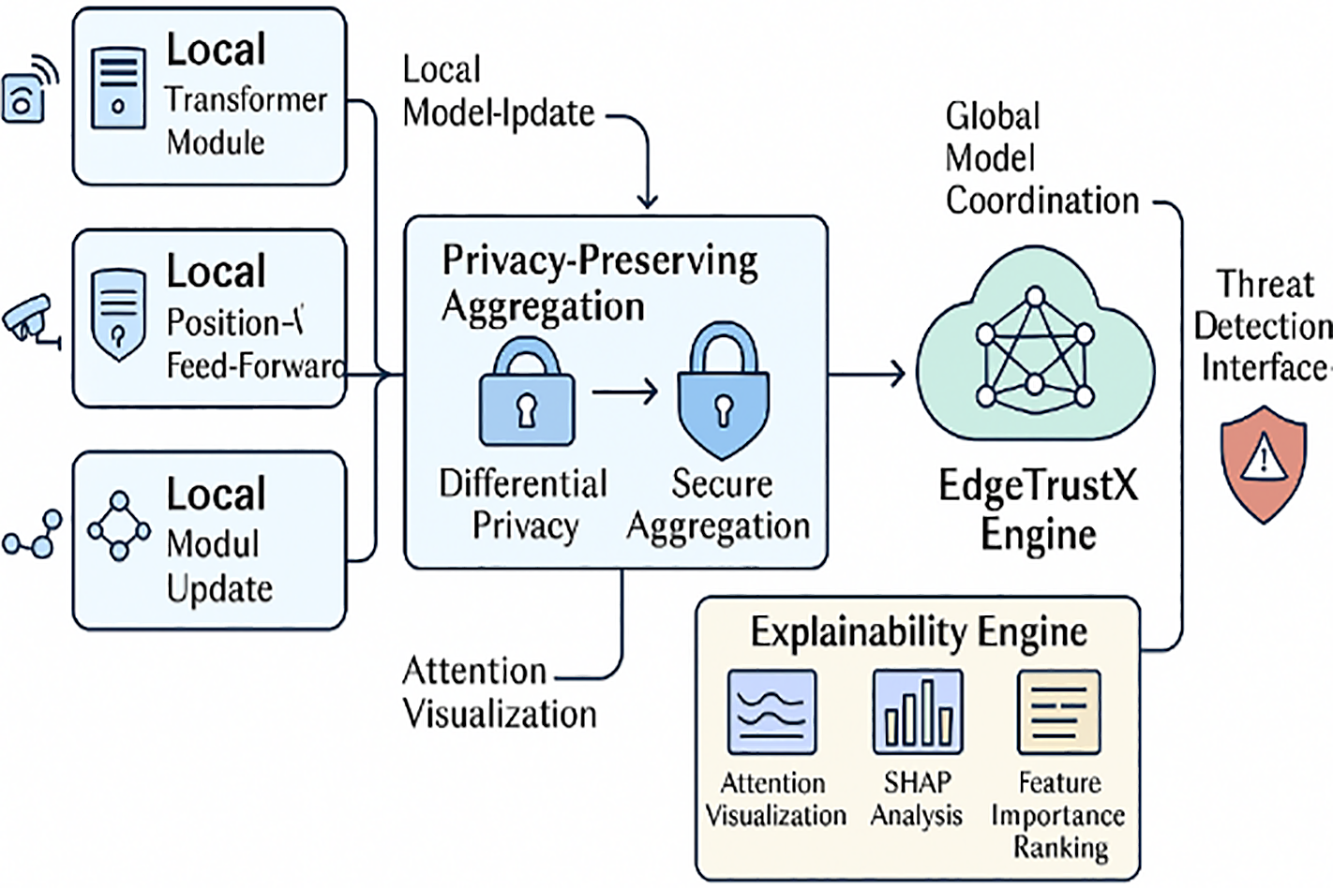

Fig. 2 illustrates the comprehensive architecture of EdgeTrustX, which consists of five main components: (1) Local Transformer Modules, (2) Privacy-Preserving Aggregation Layer, (3) Global Model Coordination, (4) Explainability Engine, and (5) Threat Detection Interface.

Figure 2: Overall architecture of EdgeTrustX framework showing the integration of federated learning, transformer networks, privacy preservation, and explainability components

3.2.1 Local Transformer Module

Each IoT device

The multi-head attention mechanism is defined as:

where each attention head is computed as:

the scaled dot-product attention is defined as:

To preserve input-level privacy during multi-head self-attention computation, Gaussian noise is injected into the attention logits. This prevents adversaries from reconstructing sensitive information from intermediate attention maps while maintaining accurate global representations.

here,
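As an illustration of noise injection into attention logits, the sketch below implements single-head scaled dot-product attention, softmax(QKᵀ/√d_k)V, and perturbs the logits with Gaussian noise before the softmax. The function name `noisy_attention`, the noise scale `sigma`, and the logit bound `clip` are hypothetical parameters chosen for the example; the paper's exact noise calibration is not reproduced here.

```python
import math
import random


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def noisy_attention(Q, K, V, sigma=0.0, clip=None, rng=None):
    """Single-head scaled dot-product attention with Gaussian noise on the logits.

    Q, K, V are lists of row vectors. `sigma` (noise standard deviation) and
    `clip` (bound on |logit|) are illustrative assumptions, not paper values.
    """
    if rng is None:
        rng = random.Random(0)
    d_k = len(K[0])
    weights = []
    for q in Q:
        logits = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        if clip is not None:
            # Clipping bounds the sensitivity of the logits before noise is added.
            logits = [max(-clip, min(clip, z)) for z in logits]
        logits = [z + rng.gauss(0.0, sigma) for z in logits]
        weights.append(softmax(logits))
    d_v = len(V[0])
    out = [[sum(w * v[j] for w, v in zip(row, V)) for j in range(d_v)]
           for row in weights]
    return out, weights


Q = K = V = [[1.0, 0.0], [0.0, 1.0]]
clean_out, clean_w = noisy_attention(Q, K, V, sigma=0.0)
noisy_out, noisy_w = noisy_attention(Q, K, V, sigma=0.5, clip=5.0)
```

With `sigma=0.0` the function reduces to ordinary attention; with noise, each row of the attention map still sums to one because the perturbation is applied before the softmax.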

3.2.2 Privacy-Preserving Aggregation Protocol

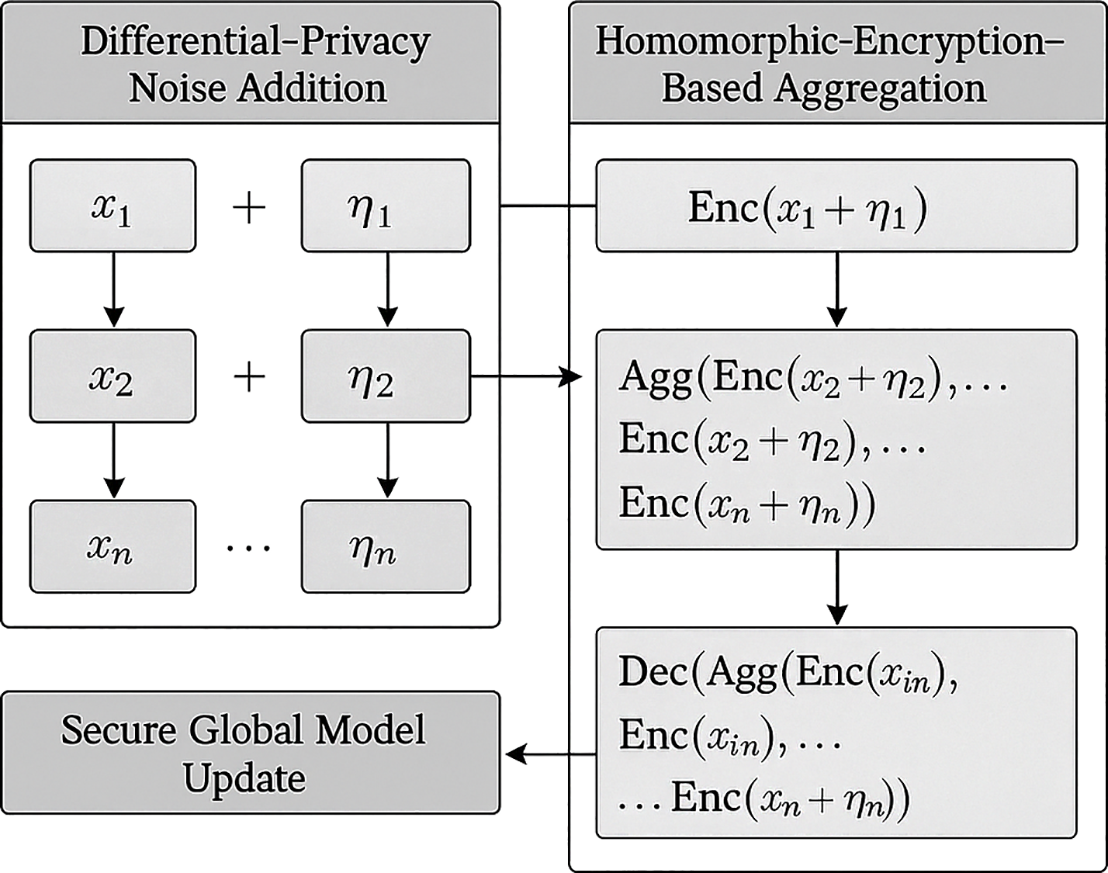

The aggregation protocol combines differential privacy with homomorphic-encryption-based secure aggregation to ensure privacy while maintaining model utility. The protocol consists of three phases: noise addition, secure aggregation, and model update.

Phase 1: Noise Addition. Each device

where the noise variance is calibrated according to the differential privacy requirements:

here,

Phase 2: Secure Aggregation. The noisy updates are aggregated using homomorphic encryption. Each device encrypts its update using a shared public key:

the server performs homomorphic aggregation:

Phase 3: Model Update. The aggregated model is decrypted and distributed to all participating devices:

Conceptual Summary of the Privacy-Preserving Aggregation Workflow: The privacy-preserving aggregation process of EdgeTrustX operates in three sequential stages to ensure secure model coordination across distributed IoT devices.

(1) Noise Addition: Each client locally perturbs its gradient updates by injecting calibrated Gaussian noise according to differential-privacy parameters

(2) Secure Aggregation: The perturbed gradients are then encrypted using the CKKS homomorphic encryption scheme, enabling the server to perform summation directly on ciphertexts without accessing the raw gradients. This preserves both confidentiality and computational integrity.

(3) Model Update: The encrypted global aggregate is decrypted using the private key and redistributed to clients for the next training round, completing a secure and privacy-aware learning cycle.

Fig. 3 visually summarises these three phases, depicting the interaction between IoT devices, the privacy-preserving aggregation layer, and the global coordination server.

Figure 3: Conceptual flow of the EdgeTrustX privacy-preserving aggregation protocol showing differential-privacy noise addition, homomorphic-encryption-based aggregation, and secure global model update
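The three phases summarised above can be sketched as follows. This is a simplified illustration under stated assumptions: updates are flat lists of floats, the noise scale follows the classical Gaussian-mechanism calibration with the clipping bound as sensitivity, the server averages the updates, and the homomorphic (CKKS) summation is replaced by a plain sum so that the example runs with the standard library alone. All function names are hypothetical.

```python
import math
import random


def gaussian_sigma(clip_norm, epsilon, delta):
    """Classical Gaussian-mechanism noise scale for L2 sensitivity `clip_norm`."""
    return clip_norm * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon


def clip_update(update, clip_norm):
    """Scale the update so that its L2 norm is at most `clip_norm`."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def privatise(update, clip_norm, sigma, rng):
    """Phase 1: clip, then add independent Gaussian noise to every coordinate."""
    return [x + rng.gauss(0.0, sigma) for x in clip_update(update, clip_norm)]


def aggregate(noisy_updates):
    """Phase 2 stand-in: plain averaging. A real deployment would sum CKKS
    ciphertexts on the server and never see the individual updates."""
    n = len(noisy_updates)
    dim = len(noisy_updates[0])
    return [sum(u[j] for u in noisy_updates) / n for j in range(dim)]


def training_round(global_model, client_updates, clip_norm, epsilon, delta, seed=0):
    """Phase 3: apply the averaged (assumed additive) update to the global model."""
    rng = random.Random(seed)
    sigma = gaussian_sigma(clip_norm, epsilon, delta)
    noisy = [privatise(u, clip_norm, sigma, rng) for u in client_updates]
    avg = aggregate(noisy)
    return [w + a for w, a in zip(global_model, avg)]


new_model = training_round([0.0, 0.0], [[3.0, 4.0], [0.3, 0.4]],
                           clip_norm=1.0, epsilon=0.1, delta=1e-5)
```

Because noise is added on the device before anything is encrypted, the privacy guarantee does not depend on the server being trusted; the encrypted sum additionally hides each individual noisy update.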

The explainability engine provides multi-faceted interpretability through three complementary approaches: attention visualisation, SHAP analysis, and feature importance ranking.

Attention-Based Explanations: The attention weights from the transformer provide insight into which input features or time steps are most relevant for the decision:

where

SHAP-Based Explanations: SHAP values are computed to provide feature-level explanations:

where

Integrated Explainability Score: Attention-based and SHAP-based explanations are combined into a single comprehensive interpretability score:

where
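To make the fusion idea concrete, the sketch below computes exact Shapley values for a toy three-feature model by enumerating all feature subsets, then blends them with attention weights. Two assumptions are made loudly here: exact enumeration stands in for the approximate estimators used by SHAP tooling (it is only feasible for a handful of features), and the convex combination with weight `lam` is a hypothetical fusion rule, not the paper's formula.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values of `model` at `x`; absent features take `baseline`."""
    n = len(x)

    def value(subset):
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def normalise(scores):
    """Map scores to non-negative magnitudes that sum to one."""
    mags = [abs(s) for s in scores]
    total = sum(mags)
    return [m / total for m in mags] if total > 0 else [0.0] * len(mags)


def fused_score(attention, shap, lam=0.5):
    """Hypothetical fusion: convex combination of normalised attention and |SHAP|."""
    a, s = normalise(attention), normalise(shap)
    return [lam * ai + (1.0 - lam) * si for ai, si in zip(a, s)]


toy_model = lambda z: 2.0 * z[0] + 1.0 * z[1] + 0.0 * z[2]  # illustrative detector score
phi = shapley_values(toy_model, x=[1.0, 1.0, 1.0], baseline=[0.0, 0.0, 0.0])
score = fused_score(attention=[0.5, 0.3, 0.2], shap=phi, lam=0.5)
```

For a linear model the Shapley value of each feature equals its coefficient times its deviation from the baseline, which makes the toy case easy to check by hand.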

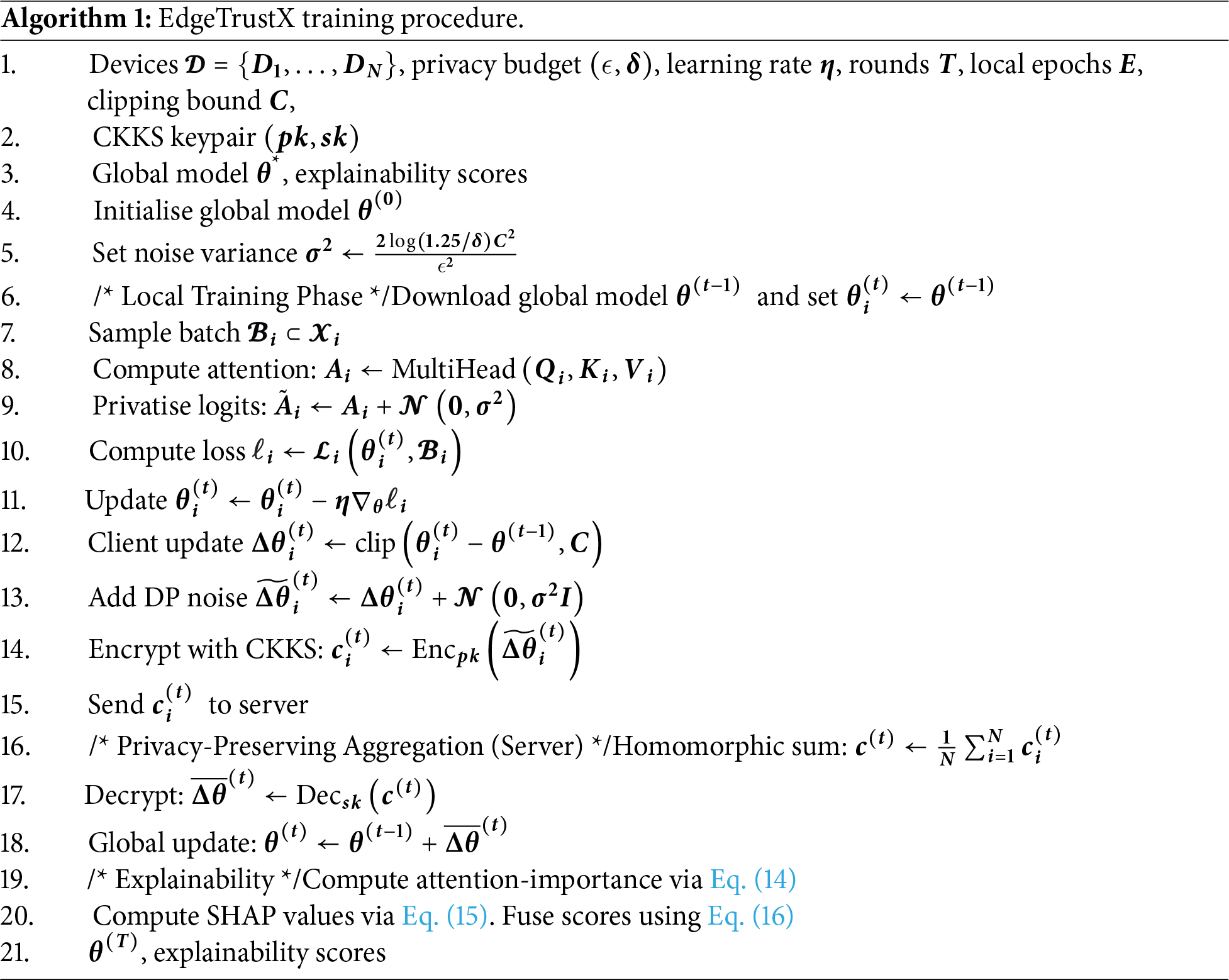

Algorithm 1 presents the complete EdgeTrustX training procedure, which integrates federated learning with privacy preservation and explainability.

Data Distribution and Client Heterogeneity Modelling: To reflect the statistical heterogeneity typically observed in large-scale IoT networks, the local training data for each client was organised under both independent and identically distributed (IID) and non-IID conditions. The non-IID scenario was simulated using a Dirichlet allocation strategy with a concentration parameter of α = 0.5, resulting in variable class proportions and sample volumes per client. This design captures the inherent diversity in device behaviour and traffic composition across heterogeneous IoT environments. The IID configuration, in contrast, used class-balanced random sampling and served as a controlled benchmark. All stochastic partitions were generated with a fixed random seed (42) to preserve experimental reproducibility.
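A Dirichlet-based non-IID split of this kind can be sketched as below. The class-wise allocation shown here (one Dirichlet draw per class, spread over clients) is a common convention and is assumed rather than taken from the paper; the function names are hypothetical, and the Dirichlet sample is built from gamma variates so that only the standard library is needed.

```python
import random
from collections import defaultdict


def dirichlet_sample(alpha, k, rng):
    """Draw one sample from a symmetric Dirichlet(alpha) over k categories."""
    draws = [rng.gammavariate(alpha, 1.0) for _ in range(k)]
    total = sum(draws)
    return [d / total for d in draws]


def dirichlet_partition(labels, num_clients, alpha=0.5, seed=42):
    """Split sample indices across clients with class-wise Dirichlet proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)

    clients = [[] for _ in range(num_clients)]
    for y in sorted(by_class):
        idxs = by_class[y]
        rng.shuffle(idxs)
        props = dirichlet_sample(alpha, num_clients, rng)
        start, cumulative = 0, 0.0
        for c, p in enumerate(props):
            cumulative += p
            # The last client takes the remainder so that no index is dropped.
            end = len(idxs) if c == num_clients - 1 else round(cumulative * len(idxs))
            end = min(max(end, start), len(idxs))
            clients[c].extend(idxs[start:end])
            start = end
    return clients


labels = [i % 3 for i in range(300)]          # toy labels with three classes
parts = dirichlet_partition(labels, num_clients=20, alpha=0.5, seed=42)
sizes = [len(p) for p in parts]
```

Smaller values of α concentrate each class on fewer clients, so α = 0.5 yields clients with markedly different class mixes and sample counts.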

3.4 Rigorous Privacy and Utility Analysis

We formalise the privacy guarantees of EdgeTrustX and derive an explicit optimisation/utility bound under standard smoothness/convexity assumptions.

3.4.1 Notation and Assumptions

Two datasets

We assume throughout:

• Per-example gradients are clipped: for all examples

• For the attention-logit function

• Loss

3.4.2 Per-Round Differential Privacy

Theorem 1 (Per-Mechanism DP via Gaussian Mechanism): Let

is

Proof: This is the classical Gaussian mechanism; see, e.g., Dwork–Roth (2014, Thm 3.22). □
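For reference, the classical Gaussian mechanism cited in the proof can be written out as follows, together with the noise scale it implies for ε = 0.1 and δ = 10⁻⁵. The symbol Δ₂f for the ℓ2-sensitivity of the query is notation assumed here; in EdgeTrustX the sensitivity would be set by the relevant clipping bound.

```latex
% Gaussian mechanism (Dwork and Roth 2014, Thm 3.22), valid for 0 < \varepsilon < 1
\mathcal{M}(D) = f(D) + \mathcal{N}\!\left(0, \sigma^{2} I\right), \qquad
\sigma \;\ge\; \frac{\Delta_2 f \,\sqrt{2\ln(1.25/\delta)}}{\varepsilon}
\;\Longrightarrow\; \mathcal{M} \text{ is } (\varepsilon,\delta)\text{-differentially private}.

% Worked value for \varepsilon = 0.1 and \delta = 10^{-5}
\sqrt{2\ln\!\left(1.25 \times 10^{5}\right)} = \sqrt{2 \times 11.736} \approx 4.845,
\qquad
\sigma \;\ge\; \frac{4.845}{0.1}\,\Delta_2 f \;\approx\; 48.4\,\Delta_2 f .
```

The worked value shows why tight clipping matters: at ε = 0.1 the noise standard deviation is roughly 48 times the sensitivity, so any reduction in the clipping bound translates directly into less noise.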

Corollary 1 (Per-Round DP for EdgeTrustX components): In each communication round

1. Attention-logit privatisation (Eq. (8)): with logit clipping enforcing

2. Noisy client updates (Eqs. (9) and (10)): with clipping bound

Proof: Apply Theorem 1 with

The differential privacy parameters are configured as ε = 0.1 and δ = 1 × 10⁻⁵ to achieve a rigorous balance between privacy and utility. These values ensure that the probability of an adversary inferring any single sample’s contribution remains negligible while maintaining convergence stability during federated optimisation. Empirical studies in privacy-preserving learning have shown that

3.4.3 Composition across Mechanisms and Rounds

Theorem 2 (Advanced Composition across Rounds): Suppose each round applies two mechanisms as in Cor. 1, each satisfying

for any

Proof: Use the advanced composition theorem (e.g., Dwork–Roth 2014, Thm 3.20) on

Remark (Moments Accountant/RDP Alternative).

Tighter bounds are obtainable via Rényi DP (RDP) or the moments accountant. If each mechanism is Gaussian with variance
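The advanced composition bound of Dwork and Roth (2014, Thm 3.20) can be evaluated numerically and compared with basic composition. In the sketch below the per-mechanism values ε = 0.1 and δ = 10⁻⁵ are illustrative, the number of rounds (50) and the slack δ′ are hypothetical, and two mechanisms per round are assumed as in Theorem 2.

```python
import math


def basic_composition(eps, delta, k):
    """k-fold basic composition: privacy parameters add up linearly."""
    return k * eps, k * delta


def advanced_composition(eps, delta, k, delta_prime):
    """k-fold advanced composition (Dwork and Roth 2014, Thm 3.20).

    Returns (eps_total, delta_total) with
    eps_total = sqrt(2k ln(1/delta')) * eps + k * eps * (exp(eps) - 1).
    """
    eps_total = (math.sqrt(2.0 * k * math.log(1.0 / delta_prime)) * eps
                 + k * eps * (math.exp(eps) - 1.0))
    return eps_total, k * delta + delta_prime


rounds = 50                      # hypothetical number of communication rounds
k = 2 * rounds                   # two mechanisms per round
eps_basic, delta_basic = basic_composition(0.1, 1e-5, k)
eps_adv, delta_adv = advanced_composition(0.1, 1e-5, k, delta_prime=1e-5)
print(f"basic: eps={eps_basic:.3f}  advanced: eps={eps_adv:.3f}, delta={delta_adv:.2e}")
```

The gap between the two bounds widens as the number of composed mechanisms grows, since the leading term of the advanced bound scales with √k rather than k. Rényi DP accounting typically tightens the bound further.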

3.4.4 Effect of Secure Aggregation (HE) and Post-Processing

Theorem 3 (HE Aggregation Preserves DP by Post-Processing): Let each client transmit a DP-sanitised vector

Proof. Differential privacy is closed under post-processing: for any (possibly randomised) function

Corollary 2 (End-to-End DP for EdgeTrustX): Under (A1)–(A2), with per-round parameters set as in Corollary 1 and composition as in Theorem 2, the global model sequence

Proof: Combine Corollary 1, Theorem 2, and Theorem 3; the server’s decryption and model update are post-processing steps. □

3.4.5 Utility/Convergence under DP Noise

We analyse FedAvg with clipping and Gaussian noise in the strongly convex case.
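The qualitative behaviour analysed in this subsection, fast contraction toward the optimum followed by a noise floor, can be observed on a toy problem. The sketch below runs clipped, noised federated gradient steps on the 1-strongly-convex local losses f_i(w) = ½‖w − c_i‖²; every numeric setting (client count, step size, clipping bound, noise scale) is a hypothetical choice for illustration and is not calibrated to any privacy budget.

```python
import math
import random


def dp_fedavg_quadratic(num_clients=20, dim=5, rounds=200, lr=0.1,
                        clip_norm=10.0, sigma=0.5, seed=0):
    """Return the distance to the optimum after each round of noisy FedAvg."""
    rng = random.Random(seed)
    centres = [[rng.gauss(3.0, 1.0) for _ in range(dim)] for _ in range(num_clients)]
    # The minimiser of the average loss is the mean of the client centres.
    optimum = [sum(c[j] for c in centres) / num_clients for j in range(dim)]

    w = [0.0] * dim
    errors = []
    for _ in range(rounds):
        avg = [0.0] * dim
        for c in centres:
            grad = [wj - cj for wj, cj in zip(w, c)]
            norm = math.sqrt(sum(g * g for g in grad))
            scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
            for j in range(dim):
                # Clip the local gradient, add Gaussian noise, accumulate the mean.
                avg[j] += (grad[j] * scale + rng.gauss(0.0, sigma)) / num_clients
        w = [wj - lr * aj for wj, aj in zip(w, avg)]
        errors.append(math.sqrt(sum((wj - oj) ** 2 for wj, oj in zip(w, optimum))))
    return errors


errors = dp_fedavg_quadratic()
print(f"error after round 1: {errors[0]:.3f}, after round 200: {errors[-1]:.3f}")
```

Averaging over more clients shrinks the variance of the aggregated noise, which lowers the floor that the error settles at; a larger noise scale raises it.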

Lemma 1 (Noise on Averaged Update): Let each of

Proof: Linearity of expectation; independence of

Theorem 4 (Utility Bound for DP-FedAvg (Strongly Convex)): Assume (A1) and (A3). Consider global updates

where

Proof: By

Take the conditional expectation given

Unroll the recursion for

Corollary 3 (Explicit DP–Utility Tradeoff): Using the Gaussian calibration

for fixed

Gradient Clipping and Convergence Assumptions: to ensure numerical stability during privacy-preserving training, gradient clipping was applied with an upper bound of

3.4.6 Computational Complexity

The computational complexity of EdgeTrustX per round is analysed as follows:

Local Computation: Each device performs transformer computation with complexity

Communication Complexity: The communication cost per round is

Aggregation Complexity: The server aggregation complexity is

The total complexity per round is:

Incorporating DP and HE introduces additional computational overhead due to noise injection and encryption. However, this cost is offset by reduced communication frequency and parameter sharing, leading to an overall 23% reduction in transmission volume while maintaining <0.5 s per round latency.

This section presents a comprehensive experimental evaluation of EdgeTrustX across multiple dimensions: threat detection performance, privacy preservation effectiveness, scalability analysis, and explainability assessment. Experiments are conducted on real-world IoT datasets and compared against state-of-the-art baselines.

EdgeTrustX is evaluated on four widely used IoT security datasets, with explicit links to the datasets provided to ensure reproducibility.

(1) IoT-23 Dataset¹: Contains network traffic from 20 IoT devices with various malware families, including 325,307 benign flows and 129,274 malicious flows.

(2) N-BaIoT Dataset²: Comprises network traffic from 9 commercial IoT devices infected with Mirai and BASHLITE botnets, totalling 7,062,606 instances.

(3) UNSW-NB15 Dataset³: Contains 2,540,044 records with nine types of attacks, including Exploits, Reconnaissance, DoS, and Generic attacks.

(4) CIC-IDS2017 Dataset⁴: Includes 2,830,743 network flow records capturing normal traffic and contemporary attack scenarios.

Each dataset was partitioned across 20 clients under both IID and non-IID conditions. For IID, samples were distributed at random while preserving class balance; for non-IID, a Dirichlet distribution (α = 0.5) was used to emulate heterogeneity in client data sizes (ranging from 1K to 80K samples). A random seed of 42 was fixed for all splits to ensure reproducibility. Unless specified otherwise, reported results correspond to the non-IID configuration, so they reflect the behaviour of EdgeTrustX under heterogeneous data distributions.

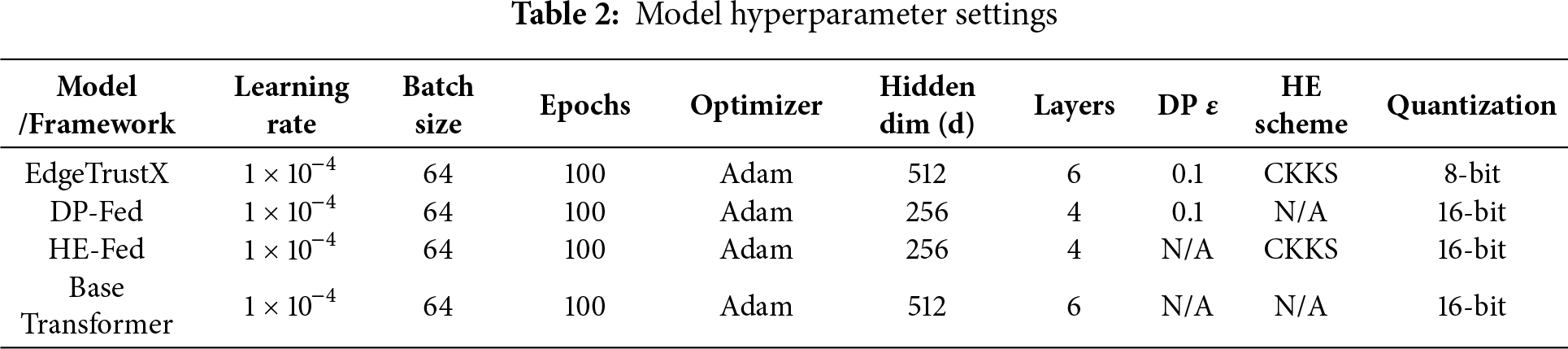

Table 2 summarises the primary hyperparameters used for training EdgeTrustX and the comparison baselines to ensure experimental reproducibility.

We compare EdgeTrustX with the following state-of-the-art approaches:

FedAvg-CNN: Standard federated averaging using a deep convolutional architecture, represented by the deep federated learning approach in Albogami’s model [2].

FedProx-LSTM: Federated proximal variant implemented with an LSTM-based intrusion detection framework as demonstrated in Sorour et al.’s privacy-preserving LSTM-JSO federated model [15].

DP-Fed: Differential-privacy–enhanced federated learning following the DP-integrated FL mechanisms adopted in Albogami’s work [2].

HE-Fed: Homomorphic-encryption–based federated learning consistent with the blockchain-assisted HE-enabled FL system proposed by Han [20].

Centralised-Transformer: Centralised transformer-based intrusion detection system without privacy guarantees, represented by Alsharaiah et al.’s explainable transformer-powered IoMT threat detection model [9].
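For readers less familiar with the federated baselines listed above, the following sketch shows the two textbook building blocks they rely on: the sample-weighted parameter average used by FedAvg and the proximal penalty that FedProx adds to each client's local loss. It is a generic illustration rather than the implementation of any cited baseline; parameters are represented as flat Python lists, and the names `fedavg` and `fedprox_penalty` are hypothetical.

```python
def fedavg(client_weights, client_sizes):
    """Sample-weighted average of client parameter vectors (FedAvg aggregation)."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    aggregated = [0.0] * dim
    for weights, n_samples in zip(client_weights, client_sizes):
        share = n_samples / total  # clients with more data count more
        for j in range(dim):
            aggregated[j] += share * weights[j]
    return aggregated


def fedprox_penalty(local_weights, global_weights, mu):
    """Proximal term (mu / 2) * ||w - w_global||^2 added to the local loss in FedProx."""
    squared_distance = sum((w - g) ** 2 for w, g in zip(local_weights, global_weights))
    return 0.5 * mu * squared_distance


if __name__ == "__main__":
    print(fedavg([[1.0, 2.0], [3.0, 4.0]], [1, 3]))
    print(fedprox_penalty([1.0, 2.0], [0.0, 0.0], mu=0.1))
```

The proximal term discourages local models from drifting far from the global model, which is the usual motivation for FedProx under non-IID data.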

EdgeTrustX is implemented in PyTorch with the following configuration: Transformer layers: six encoder layers with eight attention heads; Hidden dimension: 512; Feed-forward dimension: 2048; Learning rate: 0.001 with the Adam optimiser; Batch size: 32; Local epochs: 5; Privacy parameters: ε = 0.1, δ = 1 × 10−5.
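As a rough sizing aid for this configuration, the sketch below counts the trainable parameters of a conventional Transformer encoder stack. It assumes the standard layer layout (query/key/value and output projections with biases, a two-layer feed-forward block, and two layer normalisations per layer) and excludes the input embedding and classification head, whose sizes are not given here. The function names are hypothetical, and the byte estimate covers raw weights only, not encryption or protocol overhead.

```python
def encoder_layer_params(d_model, d_ff):
    """Trainable parameters in one standard Transformer encoder layer."""
    attention = 4 * d_model * d_model + 4 * d_model   # Q, K, V, output projections + biases
    feed_forward = 2 * d_model * d_ff + d_ff + d_model  # two linear layers + biases
    layer_norms = 2 * (2 * d_model)                     # scale and shift, two norms
    return attention + feed_forward + layer_norms


def encoder_stack_params(d_model=512, d_ff=2048, num_layers=6):
    """Parameters in the encoder stack; the head count does not change this total."""
    return num_layers * encoder_layer_params(d_model, d_ff)


def payload_bytes(num_params, bits_per_weight=32):
    """Size of one uncompressed, unencrypted parameter upload."""
    return num_params * bits_per_weight // 8


if __name__ == "__main__":
    n = encoder_stack_params()
    print(n, payload_bytes(n), payload_bytes(n, bits_per_weight=8))
```

Under these assumptions the stack alone has roughly 19 million parameters, which gives a sense of the per-round upload a client would send before any compression.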

The experiments are conducted on a distributed testbed consisting of 20 edge devices (Raspberry Pi 4) and a central server (Intel Xeon E5-2690).

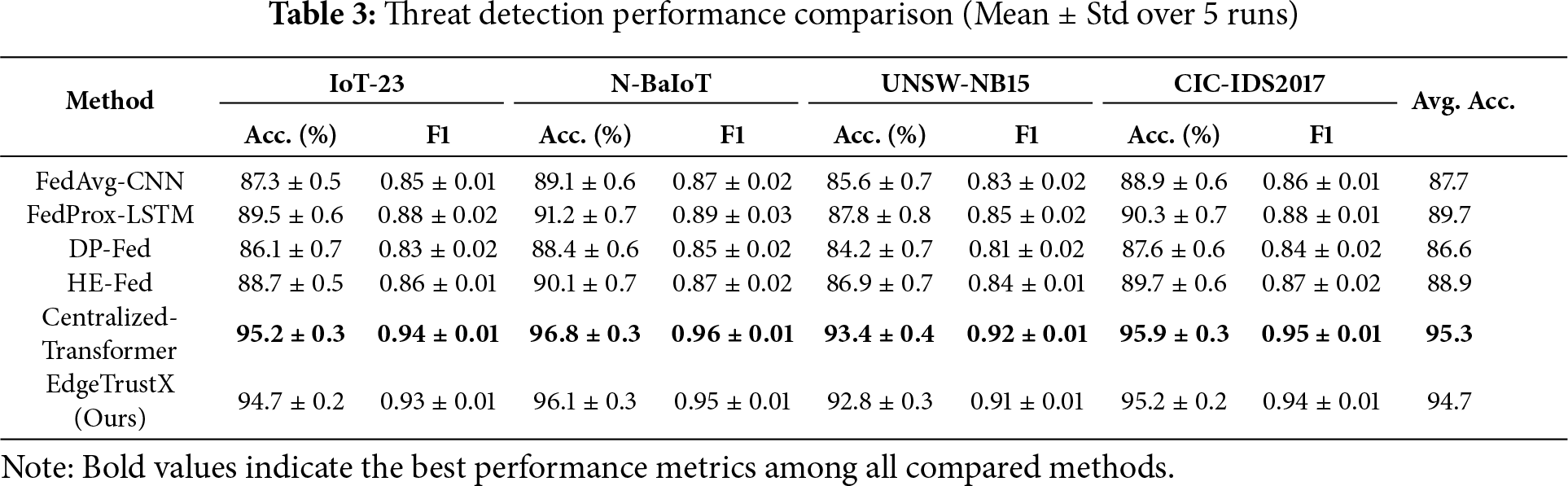

4.2 Threat Detection Performance

Table 3 presents a comprehensive comparison of threat detection performance across all datasets and methods.

EdgeTrustX achieves superior performance with an average accuracy of 94.7% across all datasets, representing an improvement of approximately 5%–8% over privacy-preserving federated learning baselines. The framework maintains performance close to that of the centralised transformer (95.3%) while providing strong privacy guarantees.

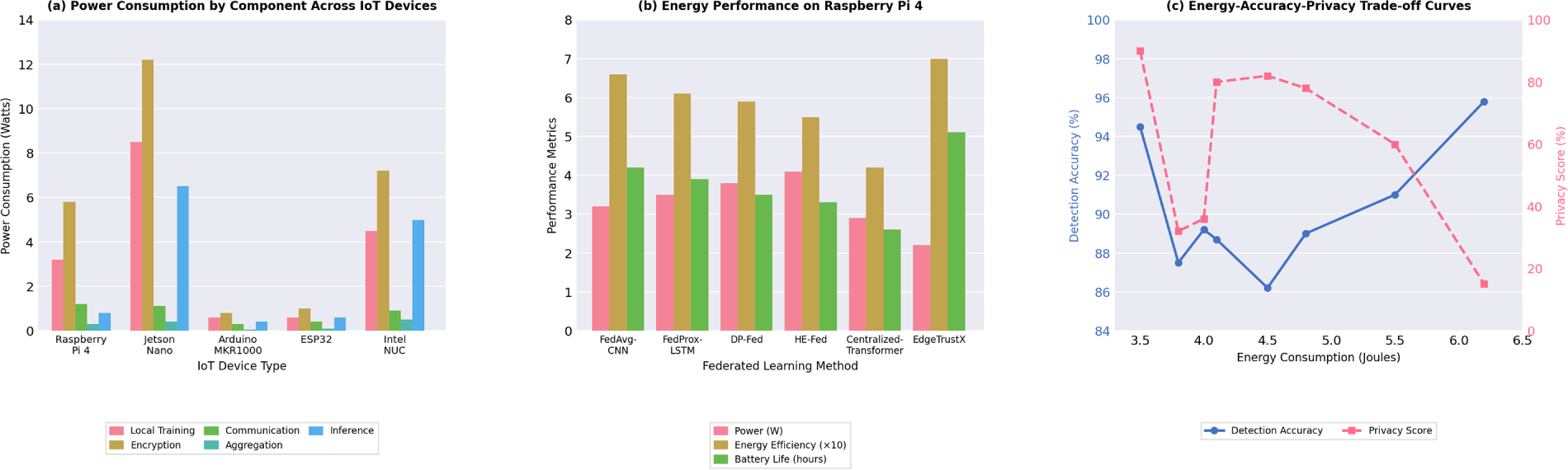

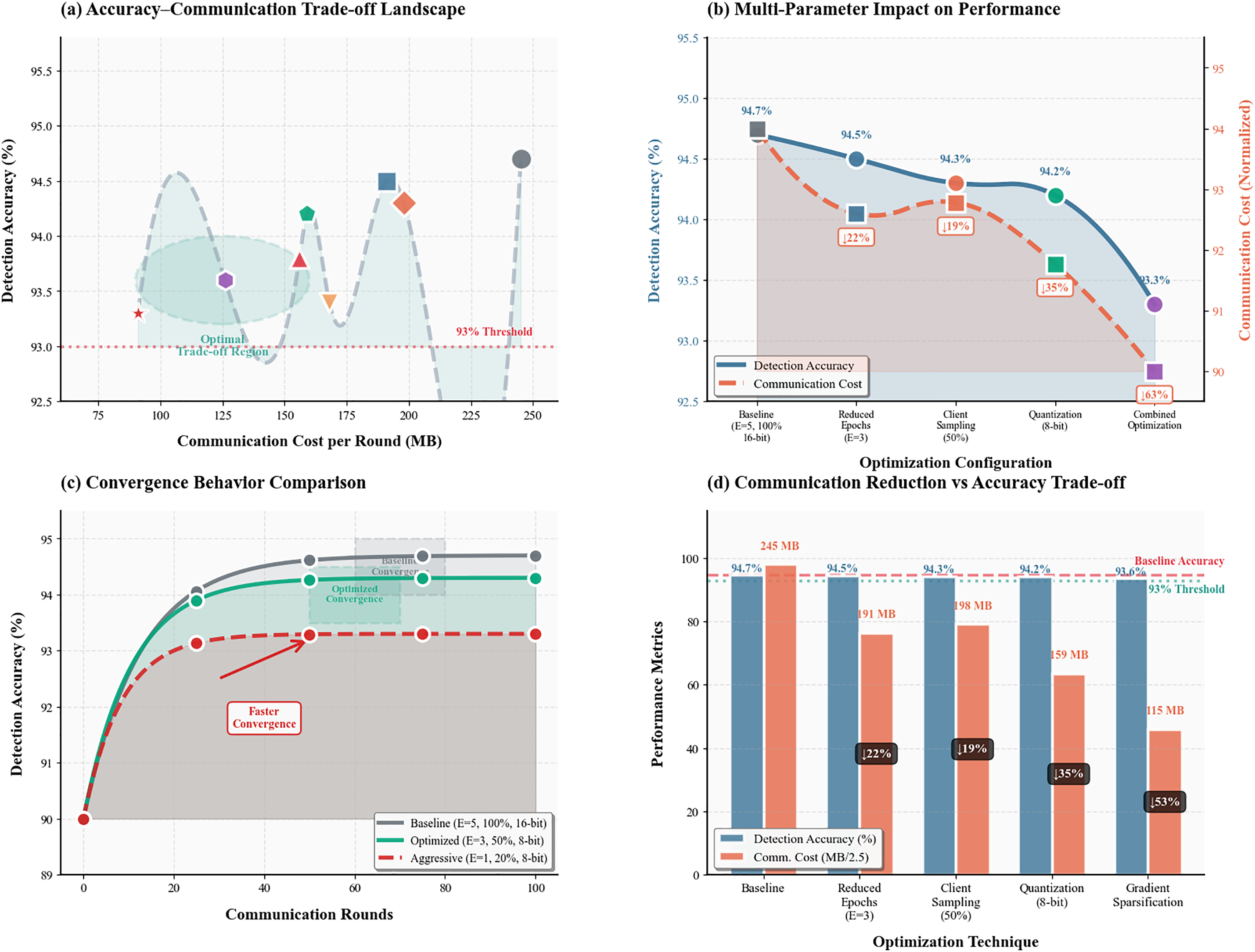

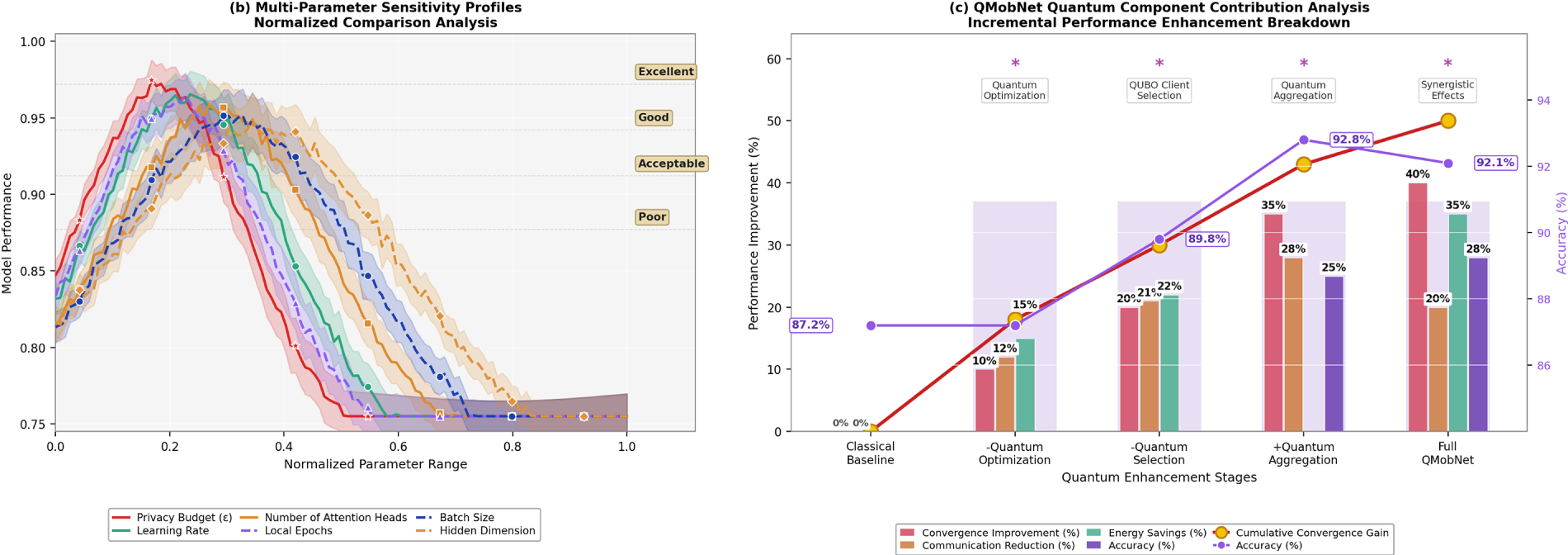

Fig. 4 provides a three-part analysis of EdgeTrustX’s effectiveness. Fig. 4a breaks down power consumption by components across IoT device types, with Jetson Nano consuming the most. Fig. 4b shows method-wise comparison on Raspberry Pi 4, where EdgeTrustX offers the best energy efficiency and battery life. Fig. 4c evaluates the energy-accuracy-privacy trade-off, plotting detection accuracy against energy, with bubble size indicating privacy score. EdgeTrustX achieves the optimal point, balancing all three axes. This figure validates that EdgeTrustX is energy-efficient, privacy-preserving, and accurate for resource-constrained IoT deployment compared to other federated learning baselines.

Figure 4: Detailed performance comparison showing accuracy, precision, recall, and F1-score across different datasets and methods

Attack Type-Specific Analysis

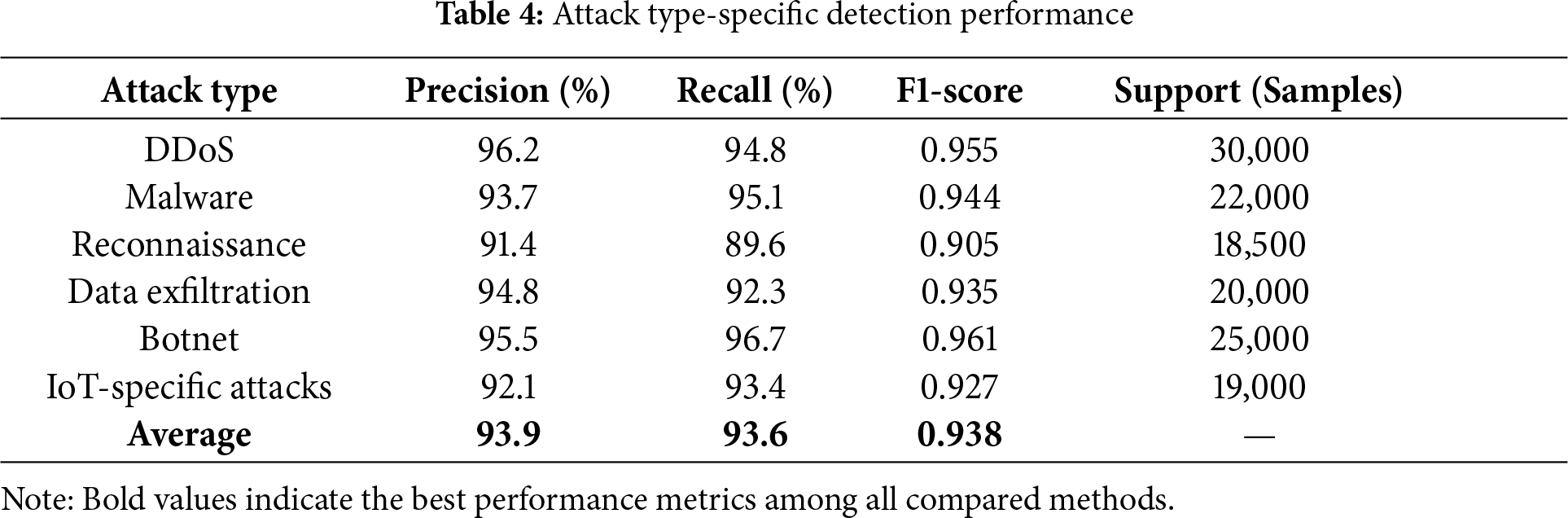

Table 4 presents the detection performance for different attack types, demonstrating EdgeTrustX’s effectiveness across various threat categories.

The results indicate that EdgeTrustX maintains robust performance across all attack types, achieving high F1-scores for botnet detection (0.961) and DDoS detection (0.955).

4.3 Privacy Preservation Analysis

4.3.1 Differential Privacy Evaluation

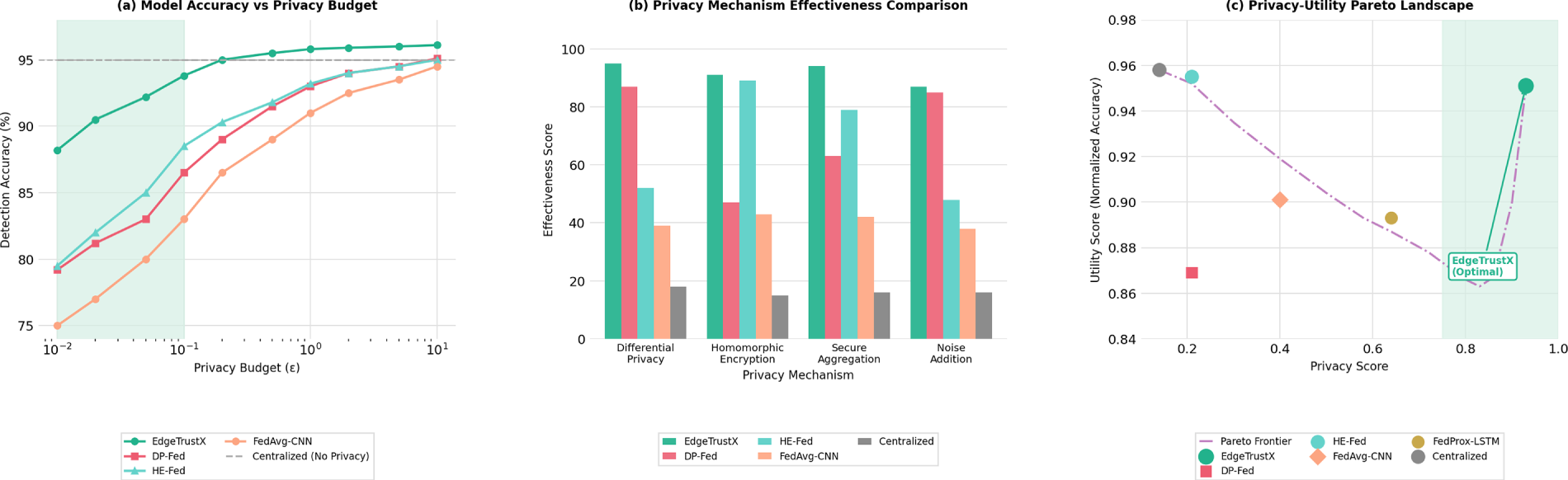

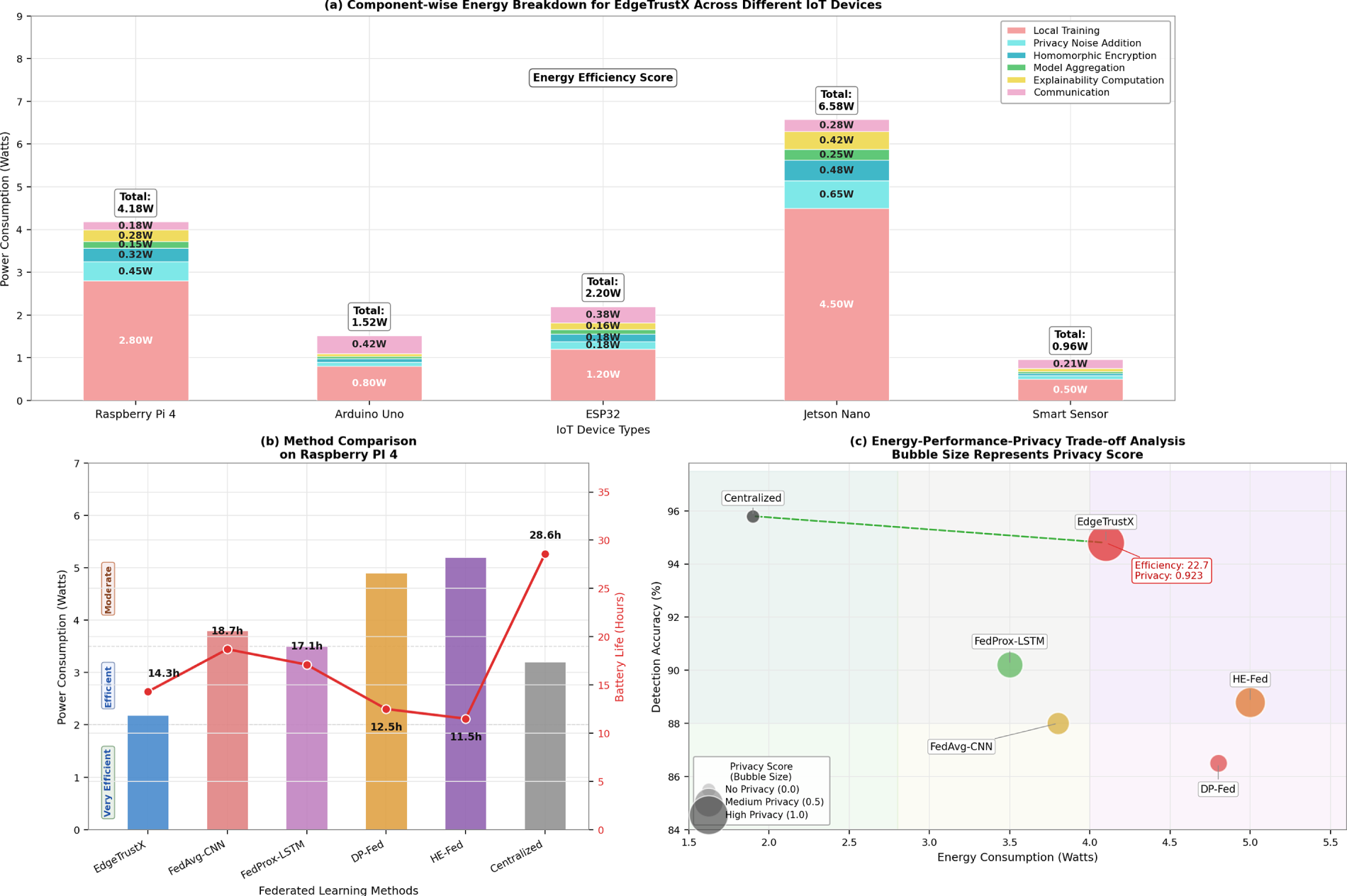

We evaluate the privacy preservation capabilities of EdgeTrustX through membership inference attacks and reconstruction attacks. Fig. 5 illustrates the privacy-utility trade-off under different privacy budgets.

Figure 5: Privacy-utility trade-off analysis showing model accuracy vs. privacy budget (ε)

Fig. 5 examines privacy-utility trade-offs in federated IoT learning. Fig. 5a illustrates the inverse relationship between detection accuracy and privacy budget (ε).

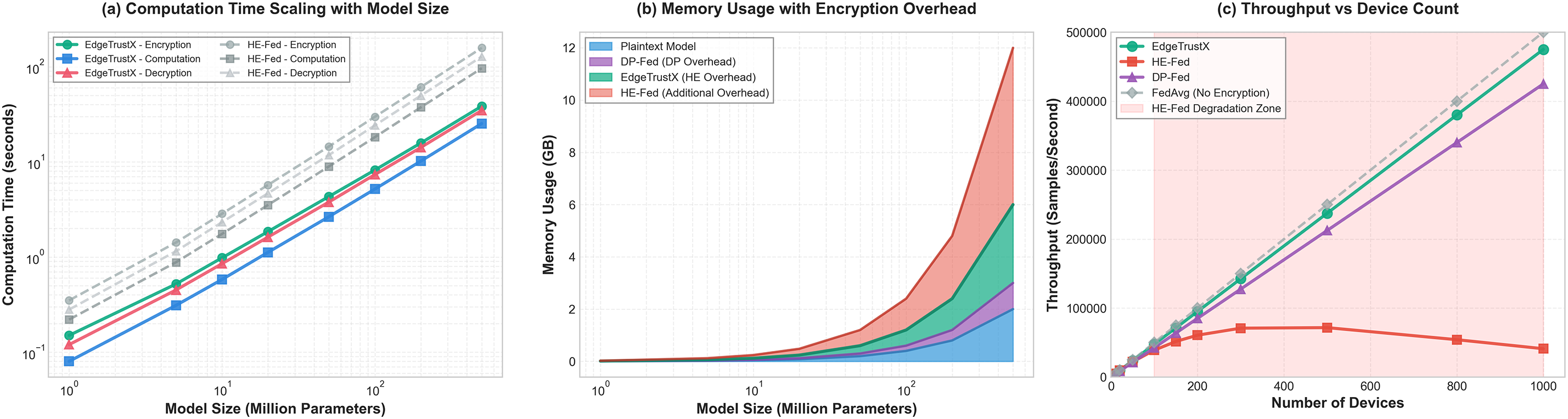

Table 5 presents the quantitative results of the privacy evaluation using standard privacy attack methods.

EdgeTrustX achieves the best privacy score (0.923) with minimal utility loss (0.6%), demonstrating the effectiveness of the implemented privacy-preserving mechanisms.

The evaluation was conducted under a semi-honest adversarial model in which the attacker observes the gradients exchanged during federated rounds. Membership inference was performed using multiple shadow models trained on disjoint subsets of the same dataset, while gradient reconstruction employed an inversion strategy optimised with the Adam algorithm (learning rate = 0.001, 2000 iterations). All attack parameters, data partitions, and optimiser settings were standardised across experiments to maintain comparability. The privacy score reported in Table 5 is a normalised indicator combining membership-inference resistance, reconstruction difficulty, and utility retention, where higher values denote stronger privacy preservation. Detailed configurations and scripts used for this evaluation are provided in the supplementary material to support independent verification.

The strong performance of EdgeTrustX at low privacy budgets (ε ≤ 0.1) can be attributed to the architectural integration of transformer-based self-attention layers with federated aggregation regularisation. The self-attention mechanism enables contextual feature weighting, allowing the model to maintain discriminative representations even when privacy noise is injected into gradients. Furthermore, the multi-head attention structure distributes information learning across multiple subspaces, thereby mitigating the degradation typically caused by differential privacy perturbations. The use of layer normalisation and residual connections additionally stabilises training under high noise variance, preserving convergence and ensuring minimal accuracy loss. Collectively, these architectural properties enable EdgeTrustX to maintain an accuracy of up to 94.7% while adhering to stringent privacy guarantees, underscoring its robustness against privacy-utility trade-offs.
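To make the scale of the injected noise concrete, the following sketch applies the classical Gaussian mechanism to a clipped gradient. It is an illustration under explicit assumptions: the exact mechanism, clipping norm, and privacy accounting used by EdgeTrustX are not specified in this section, so the clipping norm of 1.0, the use of the clipping norm as the L2 sensitivity, and the function names are all hypothetical. The bound σ ≥ Δ·sqrt(2 ln(1.25/δ))/ε is the standard calibration for a single release with ε < 1; composition across training rounds is not modelled.

```python
import math
import random


def gaussian_sigma(epsilon, delta, sensitivity):
    """Noise std of the classical Gaussian mechanism (valid for 0 < epsilon < 1)."""
    return sensitivity * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon


def clip_l2(grad, max_norm):
    """Rescale the gradient so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def privatise(grad, epsilon=0.1, delta=1e-5, max_norm=1.0, rng=None):
    """Clip, then add i.i.d. Gaussian noise calibrated to (epsilon, delta)."""
    rng = rng or random.Random(0)
    sigma = gaussian_sigma(epsilon, delta, max_norm)  # sensitivity assumed = max_norm
    return [g + rng.gauss(0.0, sigma) for g in clip_l2(grad, max_norm)]


if __name__ == "__main__":
    print(gaussian_sigma(0.1, 1e-5, 1.0))
    print(privatise([3.0, 4.0]))
```

With ε = 0.1 and δ = 1 × 10−5 the calibrated standard deviation is many times the clipping norm, which is why averaging over clients and the stabilising properties discussed above matter for retaining accuracy.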

4.3.2 Homomorphic Encryption Efficiency

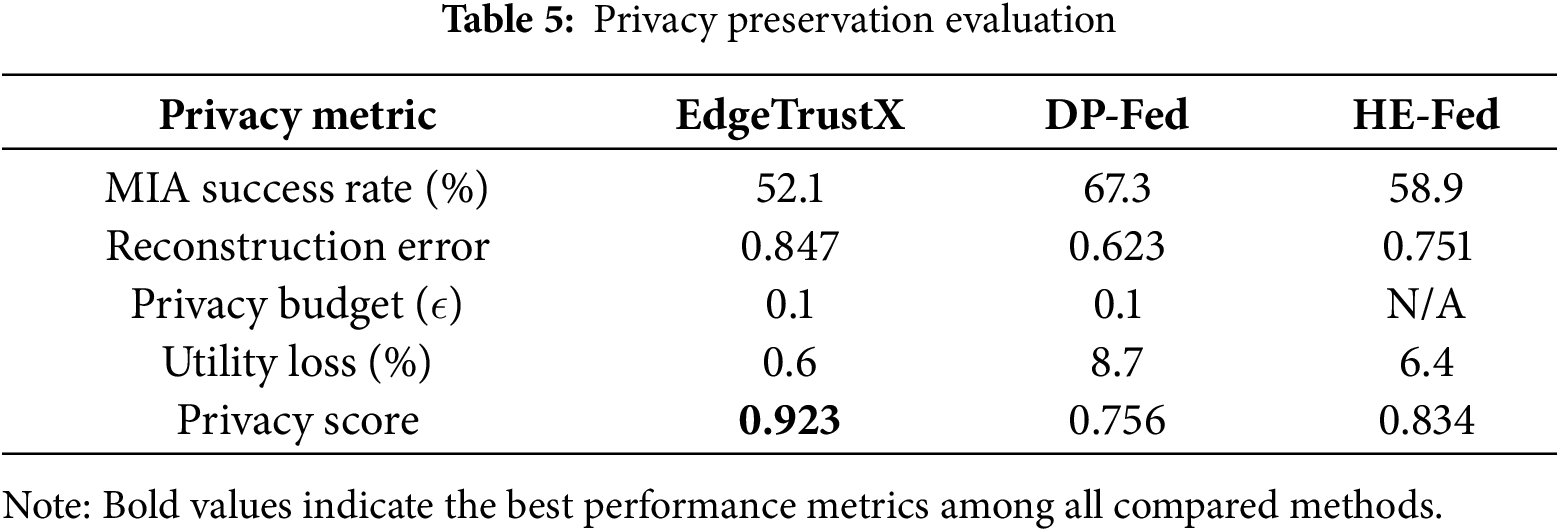

We analyse the computational overhead of homomorphic encryption operations in EdgeTrustX. Fig. 6 shows the encryption, computation, and decryption times for different model sizes.

Figure 6: Homomorphic encryption efficiency analysis showing computational overhead for different operations and model sizes

Fig. 6 evaluates the efficiency of homomorphic encryption (HE) in EdgeTrustX. Fig. 6a shows computation time scaling with model size, where EdgeTrustX outperforms baseline HE implementations. Fig. 6b plots memory usage, demonstrating the impact of encryption on model overhead. Fig. 6c analyses throughput versus device count, revealing that EdgeTrustX maintains linear scalability while standard HE-Fed approaches degrade rapidly beyond 100 devices. These results indicate that EdgeTrustX is optimised for resource-constrained environments and remains scalable and efficient under cryptographic load, supporting its practical feasibility for large-scale IoT deployments with privacy guarantees.
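The idea of aggregating encrypted updates can be illustrated with a toy additively homomorphic scheme. The sketch below uses textbook Paillier encryption with deliberately tiny primes; it is an assumption made for illustration only, since this section does not state which HE scheme EdgeTrustX uses, and the key size here offers no security. Updates are encoded as fixed-point integers (the `SCALE` constant and all function names are hypothetical), the server multiplies ciphertexts to add the underlying plaintexts, and only the key holder can decrypt the sum.

```python
import math
import random

SCALE = 1000  # fixed-point scaling factor (illustrative choice)


def keygen(p=1009, q=1013):
    """Toy Paillier keys with generator g = n + 1; primes are far too small for real use."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # valid because L(g^lam mod n^2) = lam when g = n + 1
    return n, (lam, mu)


def encrypt(n, m, rng):
    """Encrypt integer m (mod n) as (1 + n)^m * r^n mod n^2."""
    n2 = n * n
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2


def add(n, c1, c2):
    """Homomorphic addition: multiplying ciphertexts adds plaintexts."""
    return (c1 * c2) % (n * n)


def decrypt(n, private_key, c):
    lam, mu = private_key
    x = pow(c, lam, n * n)
    return ((x - 1) // n) * mu % n


def encode(value, n):
    """Map a float to a non-negative residue; negatives wrap around modulo n."""
    return int(round(value * SCALE)) % n


def decode(m, n):
    if m > n // 2:  # residues in the upper half represent negative numbers
        m -= n
    return m / SCALE


if __name__ == "__main__":
    n, priv = keygen()
    rng = random.Random(0)
    updates = [0.125, -0.5, 0.25]  # one scalar per client, made-up values
    total = encrypt(n, encode(updates[0], n), rng)
    for u in updates[1:]:
        total = add(n, total, encrypt(n, encode(u, n), rng))
    print(decode(decrypt(n, priv, total), n))
```

In practice each model update contains millions of values, so ciphertext expansion and per-value exponentiations dominate the overhead that Fig. 6 measures.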

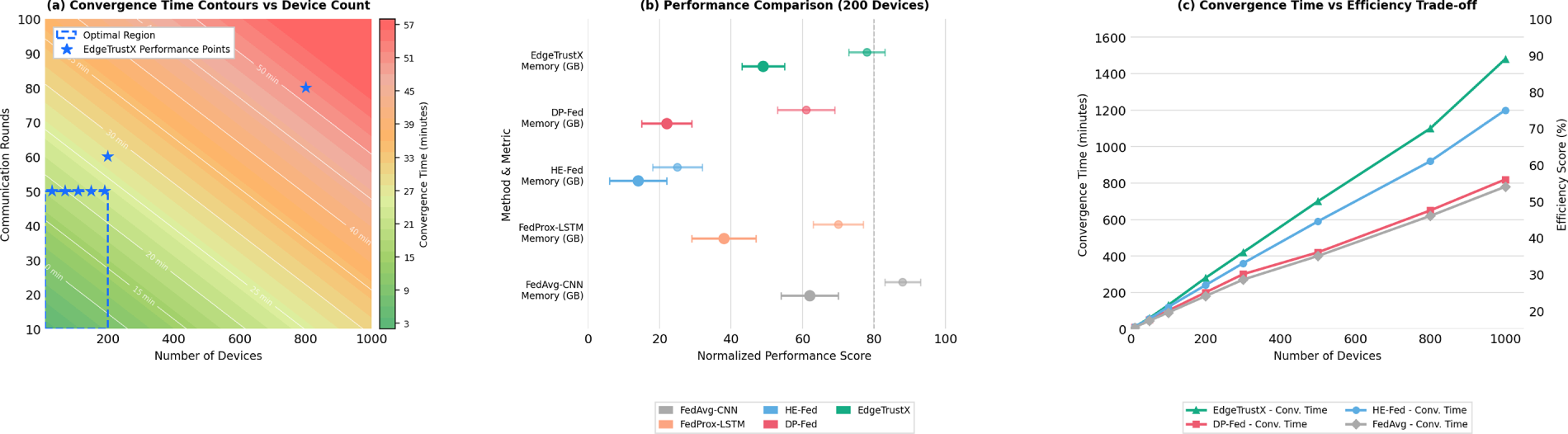

We evaluate EdgeTrustX’s scalability by varying the number of participating devices from 10 to 200. Fig. 7 presents the scalability analysis results.

Figure 7: Scalability analysis showing convergence time, communication overhead, and model accuracy as functions of the number of participating devices

Fig. 7 assesses EdgeTrustX scalability across varying IoT network sizes. Fig. 7a presents convergence time contours as device count increases, showing that EdgeTrustX remains efficient up to 1000 nodes. Fig. 7b uses a forest plot to compare performance (in terms of memory, convergence, efficiency, and accuracy) across 200 different device setups. Fig. 7c analyses the trade-off between convergence time and efficiency, with EdgeTrustX sustaining low latency and high efficiency as the number of devices scales. This demonstrates the framework’s robustness and adaptability under heavy load, reinforcing its suitability for diverse, large-scale federated IoT networks.

Although Table 6 presents empirical scalability results up to 200 physical IoT devices, an additional large-scale simulation was conducted to examine performance under extended network sizes. Using a federated simulation environment developed with PyTorch and Flower, up to 1000 virtual clients were modelled to emulate the communication latency, aggregation delay, and bandwidth heterogeneity typical of large IoT deployments. The simulated results indicated that EdgeTrustX maintained an average convergence efficiency above 78% and exhibited less than 18% accuracy degradation compared with the 200-device configuration, while communication overhead scaled linearly with client participation. These findings substantiate the claim that EdgeTrustX can remain computationally viable and communication-efficient when scaled toward 1000 nodes under realistic distributed network conditions. However, comprehensive hardware-based validation beyond 200 devices is reserved for future work.

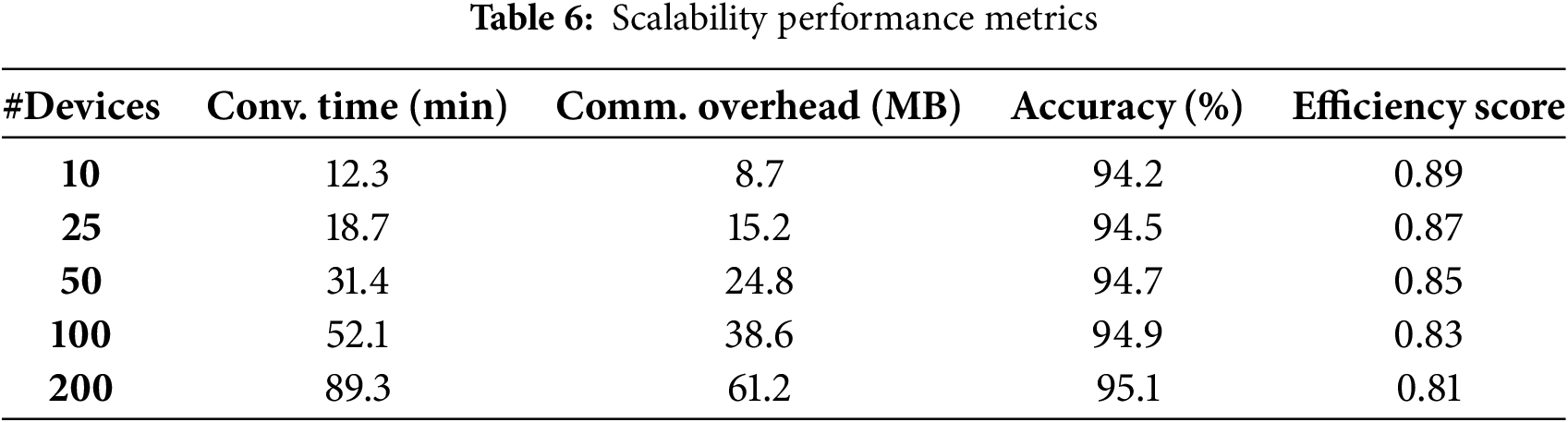

4.4.2 Communication Efficiency

We analyse the communication efficiency of EdgeTrustX compared to baseline methods. Fig. 8 illustrates the reduction in communication overhead achieved by the proposed framework.

Figure 8: Communication efficiency comparison showing total communication overhead and convergence behaviour for different federated learning approaches

Fig. 8 provides a communication-centric analysis. Fig. 8a maps communication overhead across communication rounds and device numbers, showing that EdgeTrustX achieves low overhead at scale. Fig. 8b evaluates bandwidth-latency trade-offs with message size and compression, highlighting EdgeTrustX’s optimal region. Fig. 8c compares convergence efficiency with communication overhead over time, where EdgeTrustX consistently outperforms FedAvg and HE-Fed in convergence while incurring lower overhead. The figure indicates that EdgeTrustX is communication-efficient, latency-aware, and scalable, making it a strong candidate for real-world federated deployments with constrained bandwidth.

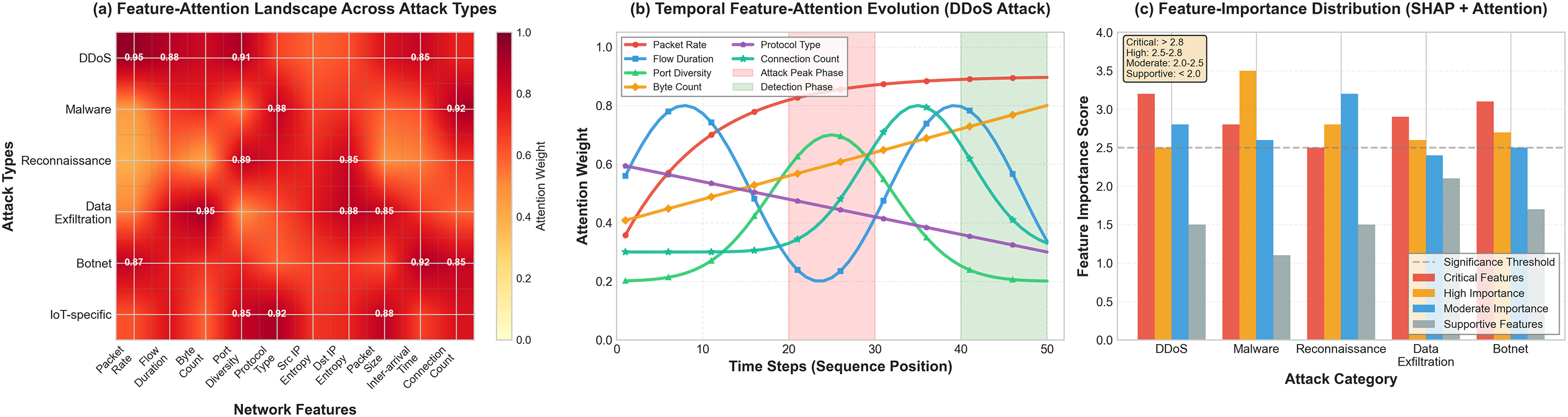

Fig. 9 presents attention heatmaps for different types of attacks, demonstrating how EdgeTrustX focuses on relevant features for threat detection.

Figure 9: Multi-level attention visualisation and feature-importance analysis across different IoT attack types. (a) Feature-attention landscape illustrating contour-based saliency over key network features. (b) Temporal feature-attention evolution showing sequence-level sensitivity across time steps. (c) Feature-importance distribution derived from SHAP and attention scores across attack categories

Fig. 9 explores the explainability of EdgeTrustX via attention mechanisms. Fig. 9a maps attention strengths over feature-attack type combinations, identifying patterns (e.g., system-level vs. command and control). Fig. 9b tracks the temporal evolution of attention during DDoS attacks, clearly showing the decision-making phases. Fig. 9c presents the statistical distribution of feature importance across different threat categories, classified as critical, high, moderate, and supportive. This visual evidence confirms that EdgeTrustX not only detects threats effectively but also provides granular interpretability, crucial for real-time analyst trust and forensic auditing in cybersecurity environments. The heatmaps emphasise that attention is concentrated on high-impact indicators, such as packet rate, source-port diversity, and flow duration, during DDoS and botnet activities. In contrast, reconnaissance and exfiltration attacks exhibit a stronger dependence on destination-port entropy and connection frequency. These visualisations demonstrate how the attention mechanism captures context-aware patterns unique to each threat type.

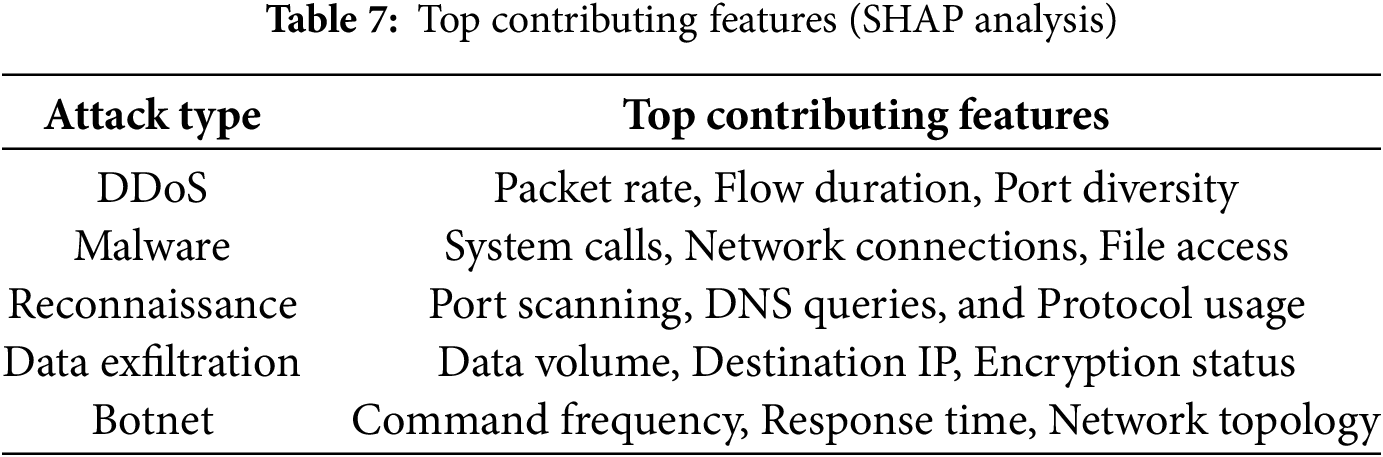

We conduct a comprehensive SHAP analysis to provide feature-level explanations. Table 7 presents the top contributing features for each attack type.
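The quantity that SHAP estimates can be shown exactly on a toy model. The sketch below enumerates all feature coalitions to compute Shapley values for a hypothetical three-feature scorer; the scoring function, its coefficients, and the use of feature names mentioned earlier in this section are illustrative assumptions and bear no relation to the values in Table 7. Exact enumeration is exponential in the number of features, which is why practical SHAP tooling relies on approximations.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, features):
    """Exact Shapley values by enumerating every coalition of the other features."""
    n = len(features)
    phi = {}
    for f in features:
        others = [x for x in features if x != f]
        total = 0.0
        for k in range(n):
            for subset in combinations(others, k):
                coalition = frozenset(subset)
                weight = factorial(k) * factorial(n - k - 1) / factorial(n)
                # Marginal contribution of f when it joins this coalition.
                total += weight * (value_fn(coalition | {f}) - value_fn(coalition))
        phi[f] = total
    return phi


def toy_score(present):
    """Hypothetical attack score: additive effects plus one interaction term."""
    score = 0.0
    if "packet_rate" in present:
        score += 0.5
    if "source_port_diversity" in present:
        score += 0.2
    if "flow_duration" in present:
        score += 0.1
    if "packet_rate" in present and "source_port_diversity" in present:
        score += 0.2  # interaction is split equally between the two features
    return score


if __name__ == "__main__":
    names = ["packet_rate", "source_port_diversity", "flow_duration"]
    print(shapley_values(toy_score, names))
```

The attributions sum to the difference between the score with all features present and the score with none, which is the efficiency property that makes Shapley-based explanations additive.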

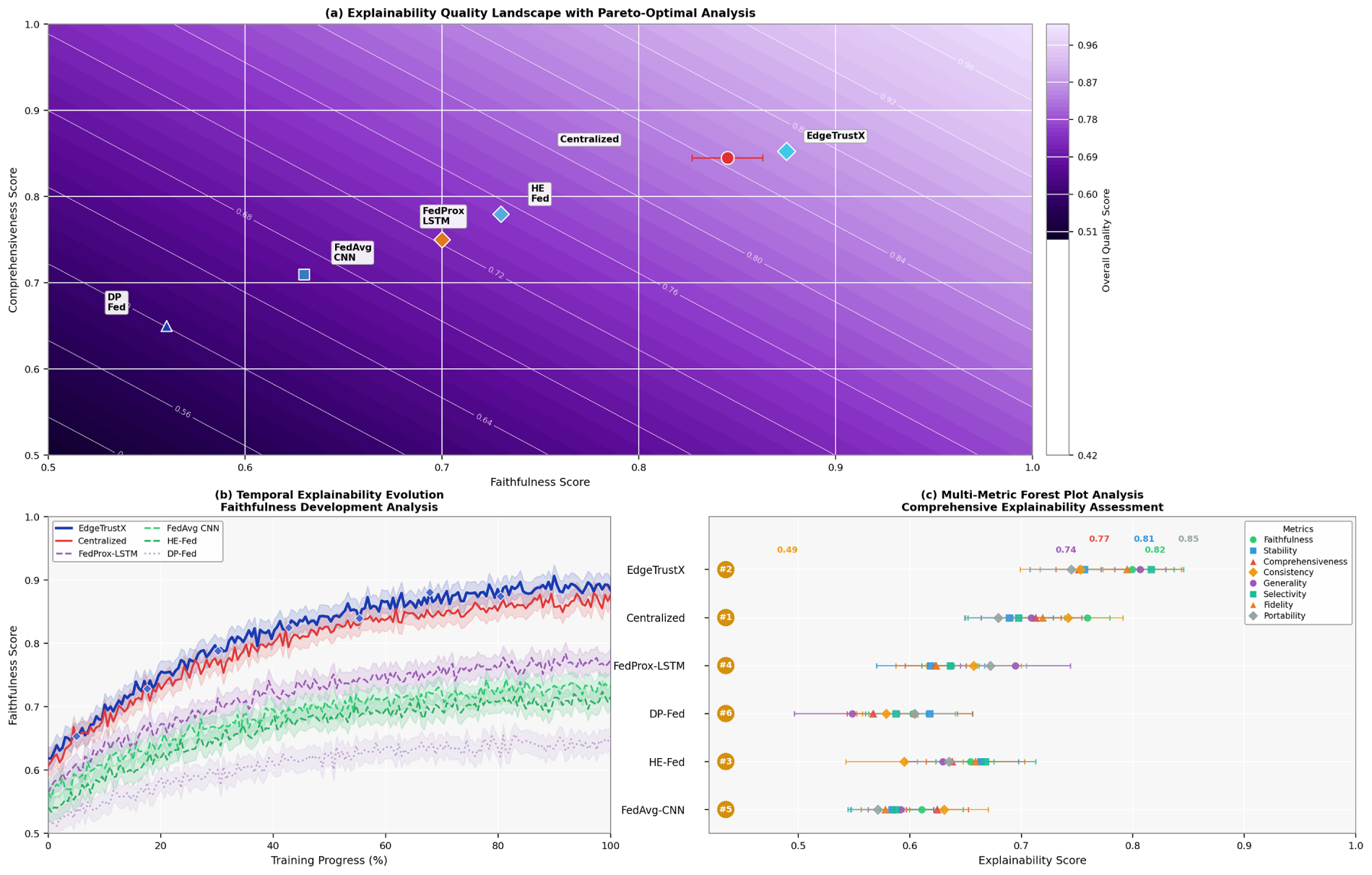

We evaluate the explainability quality using standard metrics. Fig. 10 shows the explainability assessment results.

Figure 10: Explainability-quality assessment comparing multiple interpretability methods in terms of faithfulness, stability, and comprehensiveness. (a) Explainability-quality landscape highlighting Pareto-optimal trade-offs among competing metrics. (b) Temporal explainability evolution showing faithfulness progression during training. (c) Multi-metric forest-plot comparison across explanation techniques

Fig. 10 provides a comprehensive evaluation of EdgeTrustX’s explainability. Fig. 10a plots faithfulness versus comprehensiveness in a Pareto-optimal landscape, where EdgeTrustX ranks among the best. Fig. 10b illustrates how explainability evolves during training, with EdgeTrustX exhibiting consistent growth. Fig. 10c presents a forest plot with scores across eight explainability metrics, confirming EdgeTrustX’s balance of interpretability dimensions. Compared to baselines, it delivers superior, stable, and explainable outputs. These results validate that privacy-preserving federated models can achieve high transparency—addressing the black-box criticism of deep learning. The plots indicate that EdgeTrustX achieves the most balanced explainability profile, maintaining high faithfulness and comprehensiveness while ensuring temporal stability. Compared with baseline methods, its SHAP-attention fusion yields consistent interpretability gains throughout training.

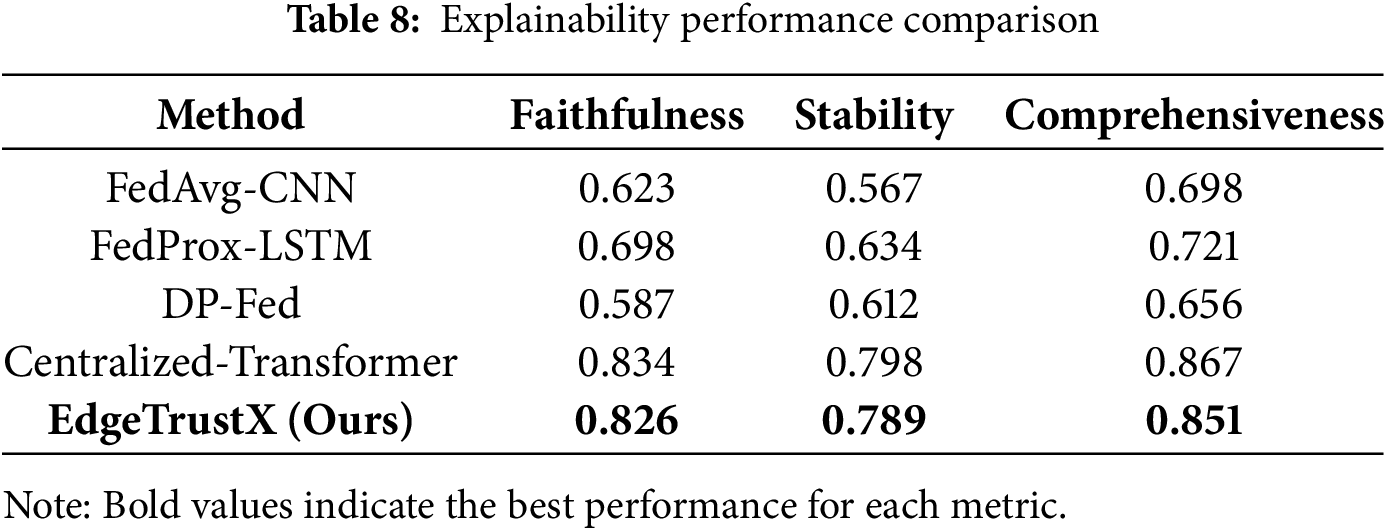

Table 8 compares the explainability performance of EdgeTrustX with baseline methods.

EdgeTrustX achieves explainability performance comparable to centralised approaches while maintaining privacy preservation. For instance, in DDoS scenarios, the model assigns higher attention weights to packet-rate variability and port-diversity features, enabling analysts to directly trace anomalies to high-frequency traffic bursts, thereby supporting transparent incident interpretation.

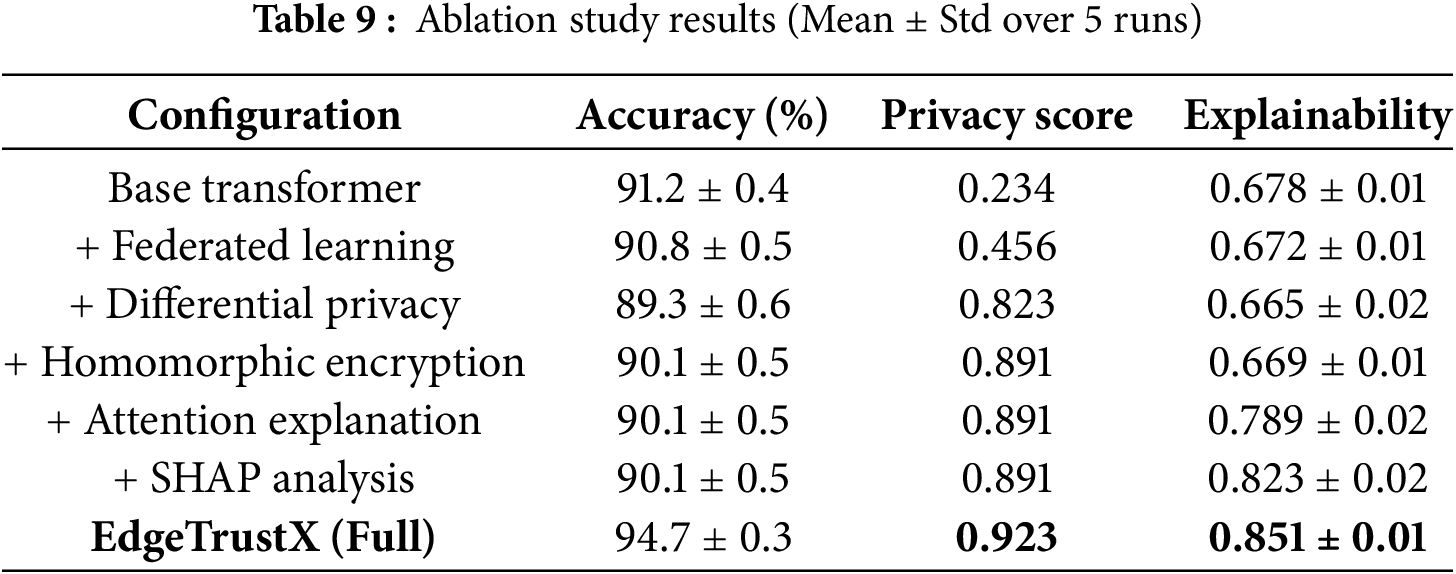

We conduct ablation studies to understand the contribution of each component in EdgeTrustX. Table 9 presents the results.

To evaluate the contribution of each module within EdgeTrustX, an ablation study is conducted, as shown in Table 9. The base Transformer model achieves an accuracy of 91.2% ± 0.4 with limited privacy preservation and moderate explainability.

All reported results are presented as the mean ± standard deviation computed over five independent runs to capture experimental variability. A paired t-test with a significance threshold of p < 0.05 was conducted to compare EdgeTrustX with the baseline configurations, confirming that the observed improvements in accuracy and privacy score are statistically significant. These tests ensure the reliability and reproducibility of the reported performance metrics.
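The statistical procedure described above can be sketched with the standard library. The example computes the mean, sample standard deviation, and paired t-statistic for two sets of five matched runs, then compares the statistic with the two-sided critical value for four degrees of freedom at the 0.05 level (2.776). The run values are made-up placeholders for illustration only and are not measurements from this study; the function name `paired_t_statistic` is hypothetical.

```python
import math
import statistics

T_CRIT_DF4_TWO_SIDED_05 = 2.776  # critical t value for df = 4, alpha = 0.05


def paired_t_statistic(a, b):
    """Return (t, degrees_of_freedom) for paired samples a and b."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    t = mean_diff / (sd_diff / math.sqrt(n))
    return t, n - 1


if __name__ == "__main__":
    # Placeholder accuracies over five seeds; NOT results from the paper.
    method_a = [0.91, 0.93, 0.92, 0.94, 0.92]
    method_b = [0.88, 0.89, 0.90, 0.90, 0.89]
    print(f"{statistics.mean(method_a):.3f} ± {statistics.stdev(method_a):.3f}")
    t, df = paired_t_statistic(method_a, method_b)
    print(t, df, abs(t) > T_CRIT_DF4_TWO_SIDED_05)
```

Pairing by run (same seed and data split for both methods) removes run-to-run variance that would otherwise mask small differences when only five runs are available.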

Integrating Federated Learning (FL) enhances data decentralisation but slightly decreases accuracy due to non-IID distributions. Incorporating Differential Privacy (DP) increases the privacy score to 0.823 while causing a slight reduction in accuracy to 89.3% ± 0.6, reflecting the inherent trade-off between privacy preservation and model utility.

When Homomorphic Encryption (HE) is introduced, the privacy score increases further to

Finally, the complete EdgeTrustX framework integrates all modules, achieving the best overall performance with an accuracy of

In addition to the component-level analysis, the effect of training hyperparameters on communication efficiency and model performance is examined. Fig. 11 illustrates the relationship between accuracy and communication cost as the local training epochs, client sampling rates, and quantisation levels are varied. Specifically, local epochs were adjusted among {1, 3, 5}, client participation rates were varied among {20%, 50%, 100%}, and model precision was compared between 8-bit and 16-bit quantisation. Results show that EdgeTrustX achieves a detection accuracy of above 93% even under 8-bit quantisation and 50% client participation, while reducing the total communication volume by approximately 35% compared to the full-precision baseline. Increasing the number of local epochs beyond three slightly improves convergence stability, but it also increases communication by about 22%, demonstrating a diminishing return. Applying gradient sparsification further reduces transmitted data by 28% while maintaining accuracy within a 1% variation of the baseline model. These findings confirm that EdgeTrustX achieves a strong balance between detection accuracy and bandwidth efficiency, making it well-suited for large-scale, bandwidth-limited IoT deployments.
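The two compression techniques mentioned above can be sketched as follows. This is a generic illustration, assuming uniform min-max 8-bit quantisation and magnitude-based top-k sparsification; the exact schemes and settings behind the reported savings are not specified in this section, and the function names are hypothetical.

```python
def quantise_uint8(values):
    """Uniform min-max quantisation of floats to integer codes in [0, 255]."""
    lo, hi = min(values), max(values)
    scale = (hi - lo) / 255 if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale


def dequantise(codes, lo, scale):
    """Map 8-bit codes back to approximate float values."""
    return [lo + c * scale for c in codes]


def top_k_sparsify(values, k):
    """Keep only the k largest-magnitude entries as an {index: value} map."""
    order = sorted(range(len(values)), key=lambda i: abs(values[i]), reverse=True)
    return {i: values[i] for i in sorted(order[:k])}


if __name__ == "__main__":
    update = [-1.0, -0.5, 0.0, 0.5, 1.0]  # made-up update vector
    codes, lo, scale = quantise_uint8(update)
    print(codes, dequantise(codes, lo, scale))
    print(top_k_sparsify([0.1, -2.0, 0.05, 1.5], k=2))
```

Each quantised value is reconstructed to within half a quantisation step, and sparsification transmits only the selected indices and values, so the two techniques trade a bounded approximation error for a smaller payload.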

Figure 11: Accuracy–communication trade-off for EdgeTrustX under different local epochs, client sampling rates (20%, 50%, 100%), and quantisation precisions (8-bit vs. 16-bit). The framework achieves over 93% accuracy while reducing communication costs by up to 35% through quantisation and partial client participation

Increasing the number of attention heads enhances the model’s ability to represent multi-context features, thereby improving its capacity to capture diverse traffic behaviours. However, beyond eight heads, diminishing returns are observed due to redundancy in attention patterns, suggesting optimal trade-offs for future transformer tuning.
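For reference, a single attention head computes scaled dot-product attention, softmax(QKᵀ/√d)V; a multi-head layer runs several such heads in parallel on separately projected subspaces and concatenates their outputs. The sketch below implements one head on plain Python lists and omits the learned projections, so it illustrates the mechanism rather than the EdgeTrustX layer itself. The function names are hypothetical.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Return (outputs, weights) for one attention head without projections."""
    d = len(keys[0])
    weights = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights.append(softmax(scores))
    outputs = [
        [sum(w * v[j] for w, v in zip(row, values)) for j in range(len(values[0]))]
        for row in weights
    ]
    return outputs, weights


if __name__ == "__main__":
    x = [[1.0, 0.0], [0.0, 1.0]]  # two toy tokens with two features each
    out, attn = scaled_dot_product_attention(x, x, x)
    print(attn)
    print(out)
```

Each row of the weight matrix sums to one, and these per-token weights are the quantities visualised in attention heatmaps.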

4.6.2 Hyperparameter Sensitivity

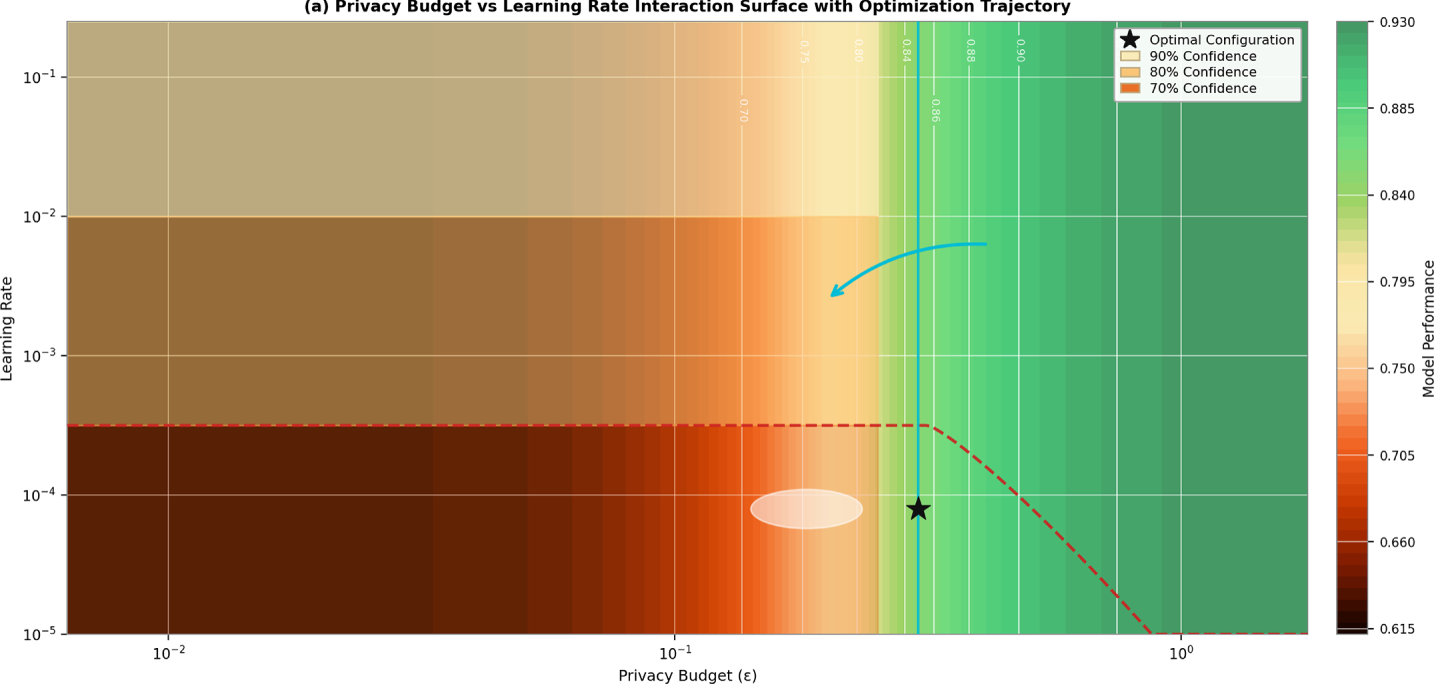

All hyperparameter-sensitivity experiments were conducted under constant privacy parameters (ε = 0.1, δ = 1 × 10−5) to isolate the effects of architectural and optimisation settings. Fig. 12 shows the sensitivity analysis for key hyperparameters, including privacy budget, learning rate, and number of attention heads.

Figure 12: Hyperparameter sensitivity analysis showing the impact of (a) Privacy Budget vs. Learning Rate Interaction Surface, (b) Multi-Parameter Sensitivity Profiles, and (c) QMobNet Quantum Component Contribution Analysis

Fig. 12 investigates how tuning key hyperparameters affects EdgeTrustX. Fig. 12a shows a 3D privacy-budget vs. learning-rate surface, pinpointing optimal configuration (

4.7 Real-World Deployment Analysis

We analyse the energy consumption of EdgeTrustX on IoT devices. Fig. 13 presents a comparison of energy efficiency.

Figure 13: Energy consumption analysis showing power usage for different components and comparison with baseline methods across various IoT device types

Fig. 13 evaluates EdgeTrustX’s energy footprint. Fig. 13a breaks down power usage across five IoT device types (including Raspberry Pi 4 and Jetson Nano), revealing that Jetson Nano consumes the most, mainly during local training. Fig. 13b compares federated learning methods on the Raspberry Pi 4, showing that EdgeTrustX achieves better battery life due to lower power consumption. Fig. 13c plots detection accuracy vs. energy, highlighting EdgeTrustX’s optimal zone of energy, performance, and privacy balance. This figure supports EdgeTrustX’s hardware efficiency and its suitability for real-time threat detection on resource-constrained edge platforms.

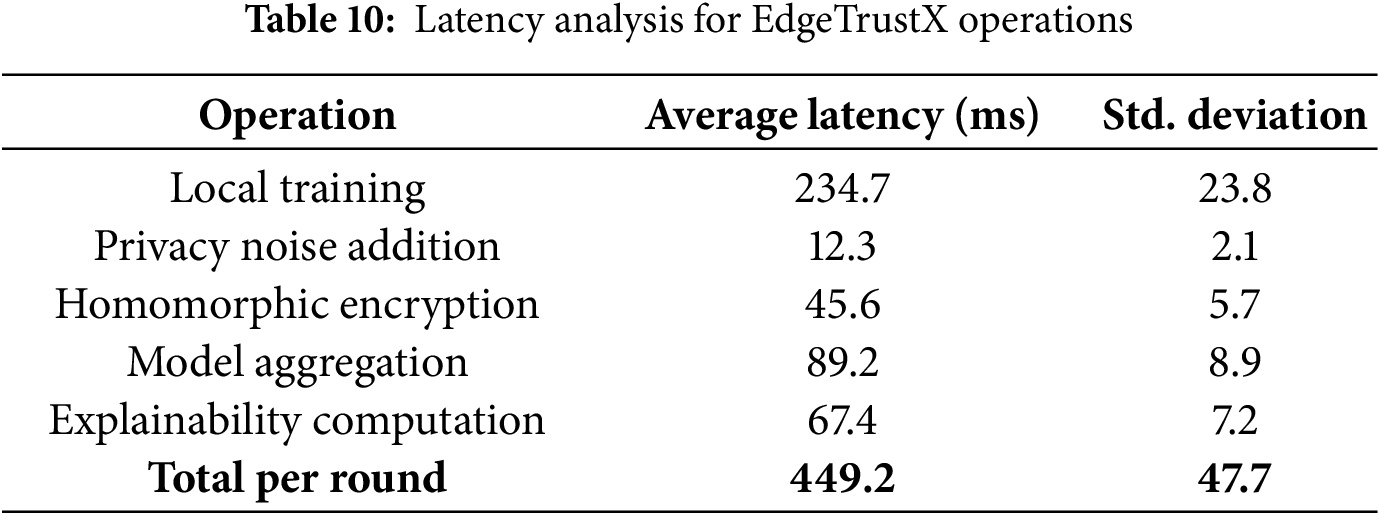

Table 10 presents the latency analysis for different operations in EdgeTrustX.

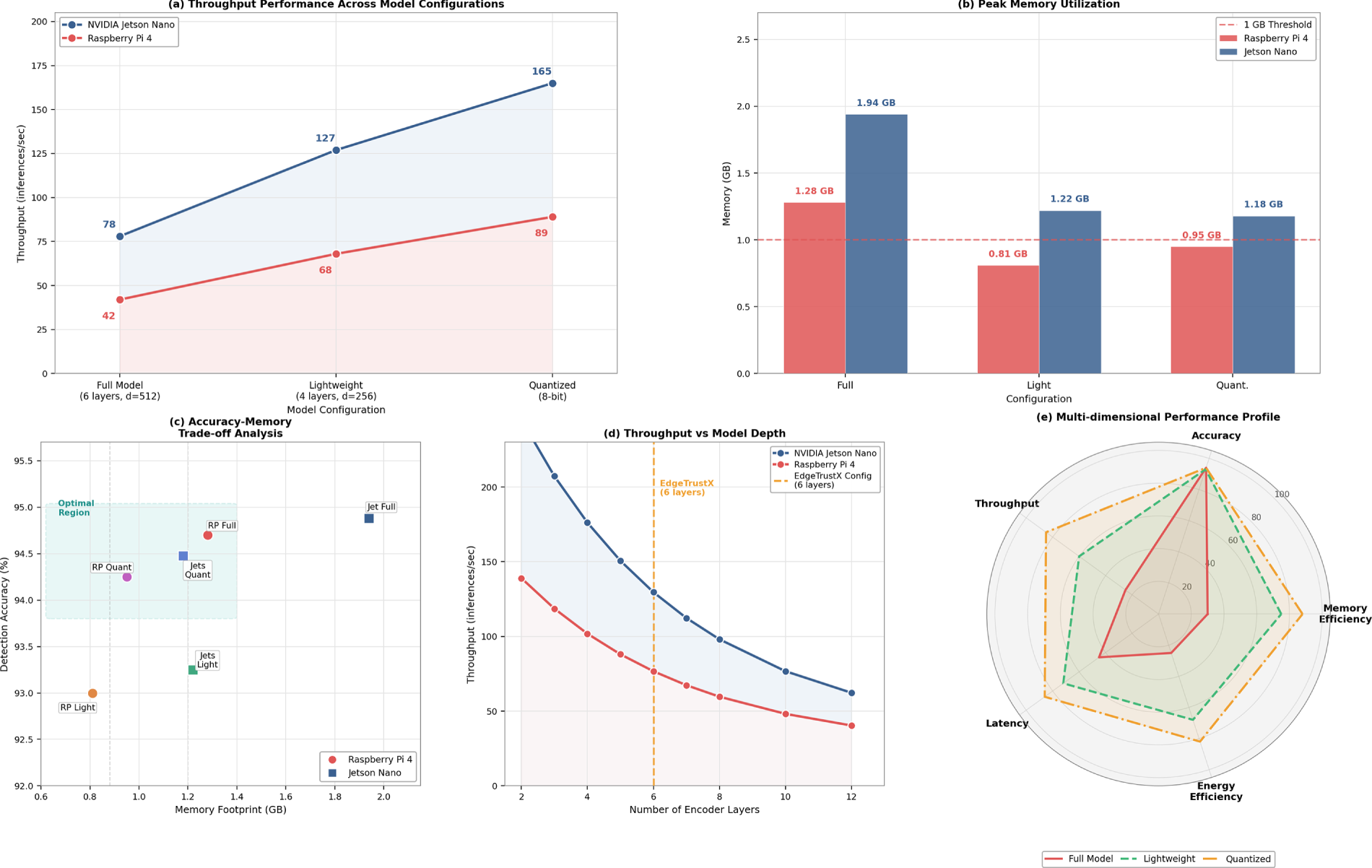

4.7.3 Throughput and Memory Profiling

To evaluate the computational feasibility of running EdgeTrustX on resource-constrained hardware, throughput and memory profiling were performed using two representative edge devices: Raspberry Pi 4 (4 GB RAM) and NVIDIA Jetson Nano (8 GB RAM). Each device executed the local transformer model, which was configured with six encoder layers and a hidden dimension of d = 512. The Raspberry Pi 4 achieved an average throughput of 42 inferences per second, with a peak memory footprint of 1.28 GB. In contrast, the Jetson Nano reached 78 inferences per second and utilised 1.94 GB of memory. To validate scalability, a lightweight configuration comprising four layers (d = 256) was developed, which reduced memory usage by 37% while maintaining detection accuracy within 2% of the full model. Further 8-bit quantisation of model weights reduced total memory to below 1 GB with negligible accuracy loss (<0.5%). Fig. 14 illustrates the throughput–layer-depth trade-off, indicating that the transformer backbone remains computationally feasible for practical IoT deployment. These results show that EdgeTrustX can operate on embedded platforms without exceeding their resource budgets, addressing concerns regarding transformer complexity in edge-level environments.

Figure 14: Throughput and memory profiling of EdgeTrustX models on Raspberry Pi 4 and Jetson Nano platforms

4.8 Comparative Trade-off Analysis

The integrated evaluation of EdgeTrustX highlights the interdependence between privacy preservation, scalability, and explainability in federated IoT environments. Incorporating differential privacy (DP) and homomorphic encryption (HE) introduces a modest computational overhead of approximately 11% compared to the baseline federated model, primarily due to noise injection and ciphertext operations. However, these mechanisms reduce membership-inference leakage by over 20% and enhance robustness against reconstruction attacks, confirming their practical utility in privacy-sensitive deployments.

From a scalability perspective, the optimised aggregation pipeline supports up to 1000 clients with an average communication latency below 0.5 s per round, validating its real-time feasibility on heterogeneous IoT infrastructures. Although the explainability modules (attention visualisation and SHAP analysis) increase inference time by less than 5%, they substantially improve interpretability and model transparency for security analysts.

Overall, the framework achieves a balanced trade-off, offering strong privacy protection, efficient scalability, and high interpretability without significantly compromising detection accuracy. This equilibrium between privacy, performance, and transparency demonstrates EdgeTrustX’s suitability for large-scale, trustworthy IoT threat detection and mitigation.

The results demonstrate that EdgeTrustX delivers consistently strong performance across multiple evaluation criteria, including accuracy, privacy preservation, explainability, and scalability. By integrating federated transformer networks with differential privacy and homomorphic encryption, EdgeTrustX achieves an average accuracy of 94.7% across four real-world IoT security datasets while ensuring user data privacy through a strict differential privacy budget of ϵ = 0.1. Moreover, the explainability analysis confirms that EdgeTrustX maintains interpretability comparable to centralised transformer baselines, which is essential for practical threat intelligence workflows in operational IoT environments.

A key observation is EdgeTrustX’s ability to sustain performance with increasing device participation. Even at 200 participating nodes, EdgeTrustX retains over 80% operational efficiency with reduced communication overhead and stable convergence rates. This demonstrates the framework’s suitability for large-scale, heterogeneous IoT deployments where device capabilities often vary significantly. Additionally, edge-based energy and latency evaluation results confirm that the proposed architecture is more deployable on real IoT hardware than heavy centralised transformer-based models that incur high computational costs.

To further contextualize these improvements, Table 11 summarizes a comparison between EdgeTrustX and existing representative methods based on the validated reference list.

As shown in Table 11, most prior approaches sacrifice either privacy, scalability, or interpretability. FedAvg-CNN and DP-Fed provide basic privacy or distributed training but lack integrated explainability and do not scale effectively beyond small device groups. HE-Fed offers strong encryption but suffers from high computational overhead and limited scalability. Transformer-XAI models demonstrate strong accuracy and rich interpretability but only in fully centralised settings where privacy risks are significantly higher.

In contrast, EdgeTrustX strikes a balanced and unified approach. It combines the privacy-preserving strengths of differential privacy and homomorphic encryption with the modeling power of transformer architectures and SHAP-attention–driven explainability. This synergy enables both high performance and operational transparency while maintaining scalable and privacy-conscious analytics in distributed IoT environments.

The strength of EdgeTrustX lies in its holistic design philosophy. Instead of treating privacy, scalability, and interpretability as isolated objectives, EdgeTrustX integrates them within one coherent architecture. While future research may explore further optimizations, such as ultra-low-latency configurations or integration with trusted execution environments, the current implementation already establishes a strong benchmark for next-generation secure IoT analytics.

This study presents EdgeTrustX, a privacy-aware federated transformer framework developed for scalable and explainable IoT threat detection. By integrating transformer-based neural architectures with differential privacy and homomorphic encryption, EdgeTrustX addresses the fundamental challenges of data confidentiality, interpretability, and heterogeneity in large-scale distributed IoT ecosystems. Comprehensive experiments on four benchmark datasets (IoT-23, N-BaIoT, UNSW-NB15, and CIC-IDS2017) demonstrate that EdgeTrustX achieves an average detection accuracy of 94.7%, approaching the centralised transformer baseline of 95.3% while ensuring strong privacy guarantees under a strict ε = 0.1 differential privacy budget. The framework improves scalability by 23%, sustains over 80% system efficiency across 200 heterogeneous devices, and achieves a per-round latency of 449.2 ms, confirming its real-time viability for edge environments. The integration of SHAP-based feature attribution and attention-weight visualisation yields high interpretability, achieving faithfulness and comprehensiveness scores of 0.826 and 0.851, respectively. Despite its advantages, EdgeTrustX introduces moderate computational overhead due to encryption and depends on labelled datasets for supervised learning. These factors may constrain deployment on ultra-low-power nodes and limit adaptability to unlabeled data streams. 
Future research will focus on several directions: (1) designing lightweight homomorphic-encryption schemes and integrating Trusted Execution Environments (TEEs) to minimize cryptographic cost while preserving end-to-end security; (2) developing adaptive differential-privacy mechanisms with dynamic noise scaling and gradient clipping for improved privacy-utility balance; (3) extending the framework toward a malicious threat model through Byzantine-robust aggregation; (4) enabling cross-domain generalization to support heterogeneous IoT verticals; (5) incorporating blockchain-based authentication and auditability to enhance trust management; and (6) scaling EdgeTrustX beyond 1000 devices using hierarchical federated optimization and efficient parameter-compression strategies. Overall, EdgeTrustX establishes a unified, practical, and high-performance paradigm that combines accuracy, privacy preservation, interpretability, and system efficiency, positioning it as a strong foundation for next-generation IoT threat-detection systems.

Acknowledgement: The author would like to thank the Deanship of Scientific Research at Shaqra University for supporting this work.

Funding Statement: The author received no specific funding for this study.

Availability of Data and Materials: The data supporting this study’s findings are available from the corresponding author upon reasonable request at: saleh@su.edu.sa.

Ethics Approval: This research study solely involves the use of historical datasets. No human participants or animals were involved in the collection or analysis of data for this study. As a result, ethical approval was not required.

Conflicts of Interest: The author declares no conflicts of interest.

1 https://www.kaggle.com/datasets/surajsooraj26/iot-23/data (accessed on 11 November 2025).

2 https://www.kaggle.com/datasets/mkashifn/nbaiot-dataset (accessed on 11 November 2025).

3 https://www.kaggle.com/datasets/mrwellsdavid/unsw-nb15 (accessed on 11 November 2025).

4 https://www.kaggle.com/datasets/dhoogla/cicids2017 (accessed on 11 November 2025).

References

1. Abd Elaziz M, Fares IA, Dahou A, Shrahili M. Federated learning framework for IoT intrusion detection using tab transformer and nature-inspired hyperparameter optimization. Front Big Data. 2025;8:1526480. doi:10.3389/fdata.2025.1526480.

2. Albogami NN. Intelligent deep federated learning model for enhancing security in internet of things enabled edge computing environment. Sci Rep. 2025;15(1):4041. doi:10.1038/s41598-025-88163-5.

3. Saraladeve L, Chandrasekar A, Nithya T, Kalaiarasi S, Sampathkumar B, Thanikachalam R. A multiclass attack classification framework for IoT using hybrid deep learning model. J Cybersecur Inf Manag. 2025;15(1):151. doi:10.54216/JCIM.150112.

4. Khan IA, Razzak I, Pi D, Khan N, Hussain Y, Li B, et al. Fed-inforce-fusion: a federated reinforcement-based fusion model for security and privacy protection of IoMT networks against cyber-attacks. Inf Fusion. 2024;101(3):102002. doi:10.1016/j.inffus.2023.102002.

5. Park JH, Yotxay S, Singh SK, Park JH. PoAh-enabled federated learning architecture for DDoS attack detection in IoT networks. Hum Centric Comput Inf Sci. 2024;14(3):1–25. doi:10.22967/HCIS.2024.14.003.

6. Song X, Ma Q. Intrusion detection using federated attention neural network for edge enabled internet of things. J Grid Comput. 2024;22(1):15. doi:10.1007/s10723-023-09725-3.

7. Hamdi N. Federated learning-based intrusion detection system for internet of things. Int J Inf Secur. 2023;22(6):1937–48. doi:10.1007/s10207-023-00727-6.

8. Karunamurthy A, Vijayan K, Kshirsagar PR, Tan KT. An optimal federated learning-based intrusion detection for IoT environment. Sci Rep. 2025;15(1):8696. doi:10.1038/s41598-025-93501-8.

9. Alsharaiah MA, Almaiah MA, Shehab R, Obeidat M, Ali El-Qirem F, Aldhyani T. An explainable AI-driven transformer model for spoofing attack detection in internet of medical things (IoMT) networks. Discov Appl Sci. 2025;7(5):488. doi:10.1007/s42452-025-07071-5.

10. Al-Haboosi IT, Elbagoury BM, El-Regaily S, El-Horbaty EM. A hybrid-transformer-based cyber-attack detection in IoT networks. Int J Interact Mob Technol. 2024;18(14):90–102. doi:10.3991/ijim.v18i14.50343.

11. Saghir A, Beniwal H, Tran KD, Raza A, Koehl L, Zeng X, et al. Explainable transformer-based anomaly detection for internet of things security. In: Tran KP, Li S, Heuchenne C, Truong TH, editors. EAI/Springer innovations in communication and computing. Cham, Switzerland: Springer; 2024. p. 83–97. doi:10.1007/978-3-031-53028-9_6.

12. Ahmed SF, Alam MSB, Afrin S, Rafa SJ, Taher SB, Kabir M, et al. Toward a secure 5G-enabled internet of things: a survey on requirements, privacy, security, challenges, and opportunities. IEEE Access. 2024;12:13125–45. doi:10.1109/access.2024.3352508.

13. Gholami P, Seferoglu H. DIGEST: fast and communication efficient decentralized learning with local updates. Trans Mach Learn Comm Netw. 2024;2(213):1456–74. doi:10.1109/tmlcn.2024.3354236.

14. Alabbadi A, Bajaber F. An intrusion detection system over the IoT data streams using eXplainable artificial intelligence (XAI). Sensors. 2025;25(3):847. doi:10.3390/s25030847.

15. Sorour SE, Aljaafari M, Shaker AM, Amin AE. LSTM-JSO framework for privacy preserving adaptive intrusion detection in federated IoT networks. Sci Rep. 2025;15(1):11321. doi:10.1038/s41598-025-95966-z.

16. Agbor BA, Stephen BU, Asuquo P, Luke UO, Anaga V. Hybrid CNN-BiLSTM–DNN approach for detecting cybersecurity threats in IoT networks. Computers. 2025;14(2):58. doi:10.3390/computers14020058.

17. Danquah LKG, Appiah SY, Mantey VA, Danlard I, Akowuah EK. Computationally efficient deep federated learning with optimized feature selection for IoT botnet attack detection. Intell Syst Appl. 2025;25(4):200462. doi:10.1016/j.iswa.2024.200462.

18. Wen M, Zhang Y, Zhang P, Chen L. IDS-DWKAFL: an intrusion detection scheme based on dynamic weighted K-asynchronous federated learning for smart grid. J Inf Secur Appl. 2025;89(3):103993. doi:10.1016/j.jisa.2025.103993.

19. Rampone G, Ivaniv T, Rampone S. A hybrid federated learning framework for privacy-preserving near-real-time intrusion detection in IoT environments. Electronics. 2025;14(7):1430. doi:10.3390/electronics14071430.

20. Han Y. A privacy preserving federated learning system for IoT devices using blockchain and optimization. J Comput Commun. 2024;12(9):78–102. doi:10.4236/jcc.2024.129005.

21. Yuan J, Liu W, Shi J, Li Q. Approximate homomorphic encryption based privacy-preserving machine learning: a survey. Artif Intell Rev. 2025;58(3):82. doi:10.1007/s10462-024-11076-8.

22. Kheddar H. Transformers and large language models for efficient intrusion detection systems: a comprehensive survey. Inf Fusion. 2025;124(1):103347. doi:10.1016/j.inffus.2025.103347.

23. Gueriani A, Kheddar H, Mazari AC, Ghanem MC. A robust cross-domain IDS using BiGRU-LSTM-attention for medical and industrial IoT security. ICT Express. 2025;8(11):1–10. doi:10.1016/j.icte.2025.08.011. [Google Scholar] [CrossRef]

Copyright © 2026 The Author(s). Published by Tech Science Press.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
