Open Access

ARTICLE

Handoff Decision-Making in 5G Cellular Networks Using Deep Learning

1 Faculty of Computer Science and Mathematics, Universiti Malaysia Terengganu, Kuala Nerus, Terengganu, Malaysia

2 Faculty of Engineering and Technology, Sunway University, No. 5, Jalan Universiti, Bandar Sunway, Selangor Darul Ehsan, Malaysia

3 School of Computing Sciences, NFC Institute of Engineering and Fertilizer Research, Faisalabad, Pakistan

4 Department of Information Technology, The University of Haripur, Haripur, Pakistan

* Corresponding Authors: Ahmad Shukri Mohd Noor. Email: ; Muhammad Junaid. Email:

Computers, Materials & Continua 2026, 87(3), 104 https://doi.org/10.32604/cmc.2026.076246

Received 17 November 2025; Accepted 17 March 2026; Issue published 09 April 2026

Abstract

The increasing adoption of 5G cellular networks has introduced significant challenges for network operators. The main challenge is managing seamless handoff (HO), which has become harder owing to the rapid growth in devices, data, and network complexity. To address this challenge, a model named optimal HO management deep learning neural network (OHMDLNN) is proposed. The model is trained on network activity data and uses key performance indicators (KPIs) and system-level parameters to make HO decisions. As demonstrated in the article, OHMDLNN successfully analyzes the effect and interdependence of KPIs from both the network and user equipment (UE) perspectives. Moreover, the model is evaluated for accuracy (the percentage of correct decisions made by a model on a dataset) in comparison with existing neural network-based HO decision models, namely temporal convolutional networks (TCN), recurrent neural networks (RNN), long short-term memory (LSTM), gated recurrent units (GRU), and convolutional neural networks (CNN). The dataset used to evaluate the model consisted of 65,000 records. The model demonstrates superior performance, with an average accuracy improvement of 8 percent over TCN, 18 percent over RNN, 6 percent over LSTM, 14 percent over GRU, and 4 percent over CNN. In addition to accuracy, the model is tested on key performance indicators during handover, including packet loss rate, success rate, latency, and throughput. These results affirm its efficiency in the HO decision-making process. Future research will explore more advanced deep learning architectures and simplify the integration of system-level inputs to optimize system performance during HO events.

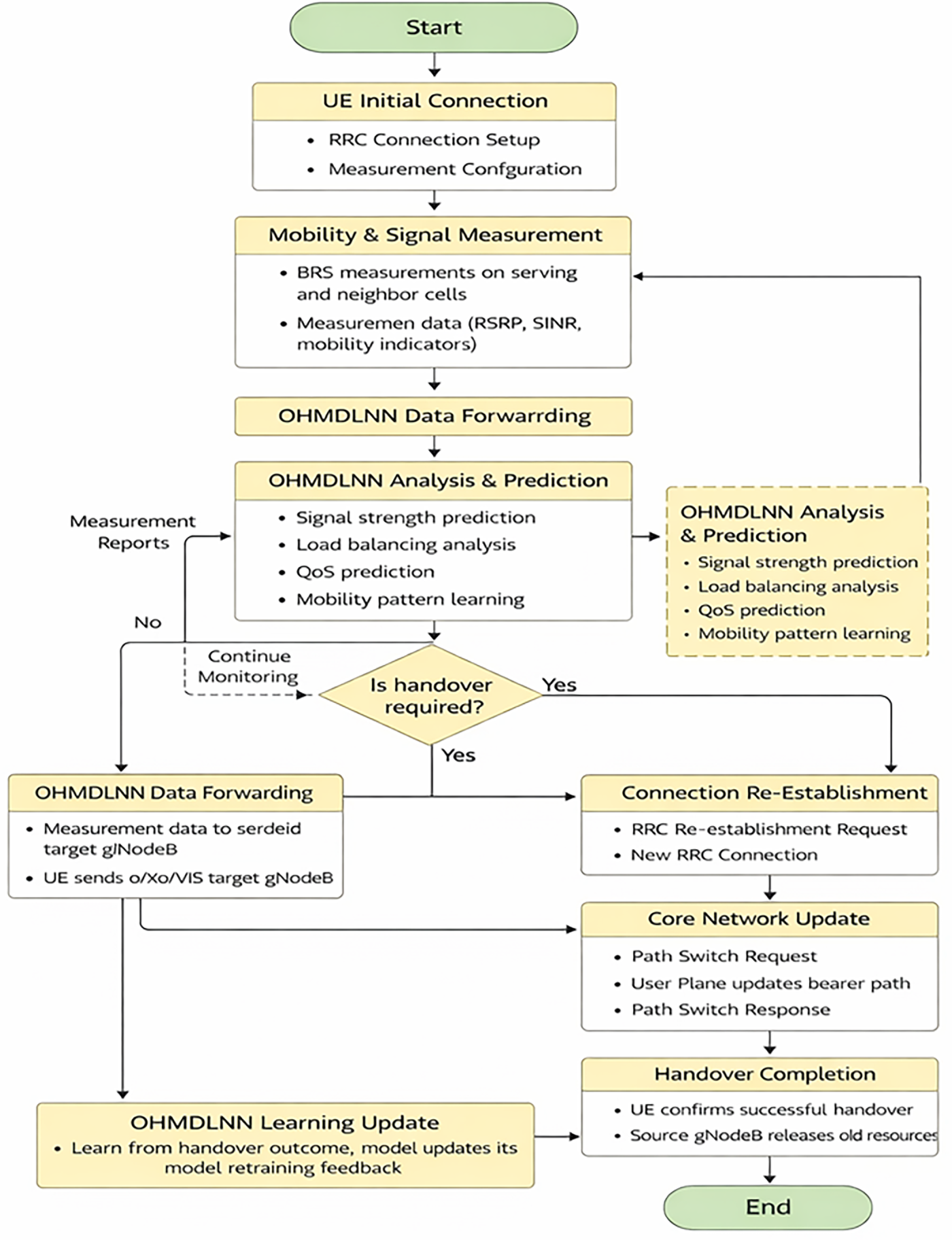

A recent study shows a 25% rise in new mobile broadband subscriptions [1]. Similarly, worldwide traffic demand is projected to grow by 65% by 2025 compared with preceding years [1]. This rapid growth has created numerous challenges for current networks. To address them, 3GPP has laid the groundwork for 5G networks through recent standardization efforts, which primarily focus on the 5G new radio (NR) and the 5G next-generation core (NGC) architecture. One of the most challenging aspects of 5G network design is HO management, which is necessary for efficient network management and smooth user mobility [2]. Fig. 1 illustrates how the UE or the network can initiate the transmission of handover messages. Broadly, HO management involves two main steps: handover decision and handover signaling [3]. The HO decision process has been investigated using multi-attribute decision methods, fuzzy logic, and artificial intelligence-based algorithms.

Figure 1: Handover mechanism flow after incorporating the OHMDLNN model in a 5G cellular network.

However, optimizing the handover signaling phase has long remained a challenge. Some studies use network function virtualization (NFV) and software-defined networking (SDN) to optimize handover signaling. Other authors have proposed models that address HO optimization but do not reflect the transitional characteristics of the relevant network architectures. As a result, existing research on HO signaling optimization is inadequate for the scenarios that arise in current 3GPP and 5G applications.

Artificial intelligence (AI), and especially artificial neural networks (ANN), plays an important role in HO decisions [4]. These models analyze contextual information such as application requirements, network capability, device strength, and user location to make optimal HO decisions [5]. They can also be used to detect and recover from network errors, allowing the network to handle unanticipated malfunctions and adapt its decisions during HO events. Moreover, these models are scalable and can analyze extensive volumes of traffic, which makes them suitable for large and complex networks [6]. Integrating neural network models into existing network management systems therefore supports robust, reliable, and effective network management [7]. Recent research has shown that mobility is the primary cause of handovers, but other factors can also trigger them [1]. These include backhaul compatibility, network congestion or blocking, network reliability, quality of service (QoS), signal-to-interference-plus-noise ratio (SINR), received signal strength indicator (RSSI), and fade duration (FD). This article introduces OHMDLNN, a new artificial neural network-based model designed to support HO decisions in 5G networks. The model combines network-side and UE-side parameters to make improved, adaptive, and efficient handover decisions. Its performance was assessed using a large-scale dataset of 65,000 records.

It is benchmarked against traditional, machine learning, hybrid, and advanced neural network-based methods, including TCN, RNN, LSTM, GRU, and CNN. The evaluation shows that OHMDLNN consistently outperforms these baselines, achieving up to a 9% improvement in accuracy as well as better handover success rate, latency, packet loss, and throughput. The remainder of the article presents the literature review, details the system model and data description, introduces the proposed model, discusses the results, evaluates its performance, and concludes with future research directions.

This section reviews state-of-the-art technologies and methods for handoff management in future wireless networks. One study proposes a novel mechanism to enhance HO signaling within the 5G service-based architecture [8]. It recommends evolving the 3GPP-specified access and mobility management function (AMF) block to manage radio resource control (RRC) and radio resource management (RRM) functions. Similarly, a novel HO preparation signaling methodology and an evolved architecture have been proposed. This architecture employs SDN principles and intelligent message handling procedures, which can reduce latency by up to 49.42% and transmission and processing costs by up to 40% and 28.57%, respectively.

Researchers have also proposed a correlation-based adaptive compressed sensing (CBACS) mechanism for 5G, which is promising for millimeter-wave (mmWave) propagation [9]. However, when the base station has more antennas than there are users, it is more effective to use the uplink angle of arrival (AoA) than the downlink angle of departure (AoD) to obtain accurate channel state information (CSI). In addition, an assisted handover signaling method has been studied [10]; it provides seamless connectivity and enhances the overall user experience.

Next, a pre-selection allocation strategy is proposed in which macro eNodeBs (eNBs) handle high-speed UEs, while pico eNBs handle low-speed UEs [11,12]. This strategy distributes the workload effectively between macro and pico eNBs. Another approach uses a centralized reinforcement learning agent to oversee handovers between base stations [13]. This agent processes radio measurement reports from UEs and selects appropriate handover actions based on the reinforcement learning architecture [14]. Further, in a study of densely populated small-cell heterogeneous networks, the authors apply a game-theoretical approach [15], implementing and evaluating it to confirm that handover delays are reduced. Likewise, a weighted fuzzy self-optimization (WFSO) strategy has been proposed to address unnecessary handovers and the ping-pong effect [16]. This strategy optimizes handover control parameters (HCPs) and considers factors including SINR and the traffic load of the serving and target base stations [16].

The performance of X2-based HO has been optimized using reference signal received power (RSRP)- and reference signal received quality (RSRQ)-based algorithms [17]. These algorithms have also been compared with standard SINR-based handover procedures used in cellular networks and with FDOP-based handover criteria [18]. Further, the authors investigate how the handover margin (HOM), A3 offset, and time-to-trigger (TTT) interact with UE velocity in achieving seamless HO, analyzing UEs at different distances from the base station to obtain a clear picture of the network's response. Another contribution modifies the control signalling carried in radio resource control messages, describing system-level architectural improvements for both the UE and the network. Beyond traditional HO management approaches, researchers have also proposed intelligent approaches for handoff optimization based on machine learning and deep learning models [19].

For intelligent approaches, the dataset plays a crucial role in training, since it must capture several significant factors: excessive handovers, their timing and location, and the need to update neighbour lists. It also covers the power levels of each eNB at different locations, RF conditions, and UE behaviour. Additionally, the dataset reflects regular mobility patterns, uplink power levels of eNBs, UE bandwidth requirements, and the specific applications in use. Finally, it considers diverse service parameters from both the network and RF perspectives [20].

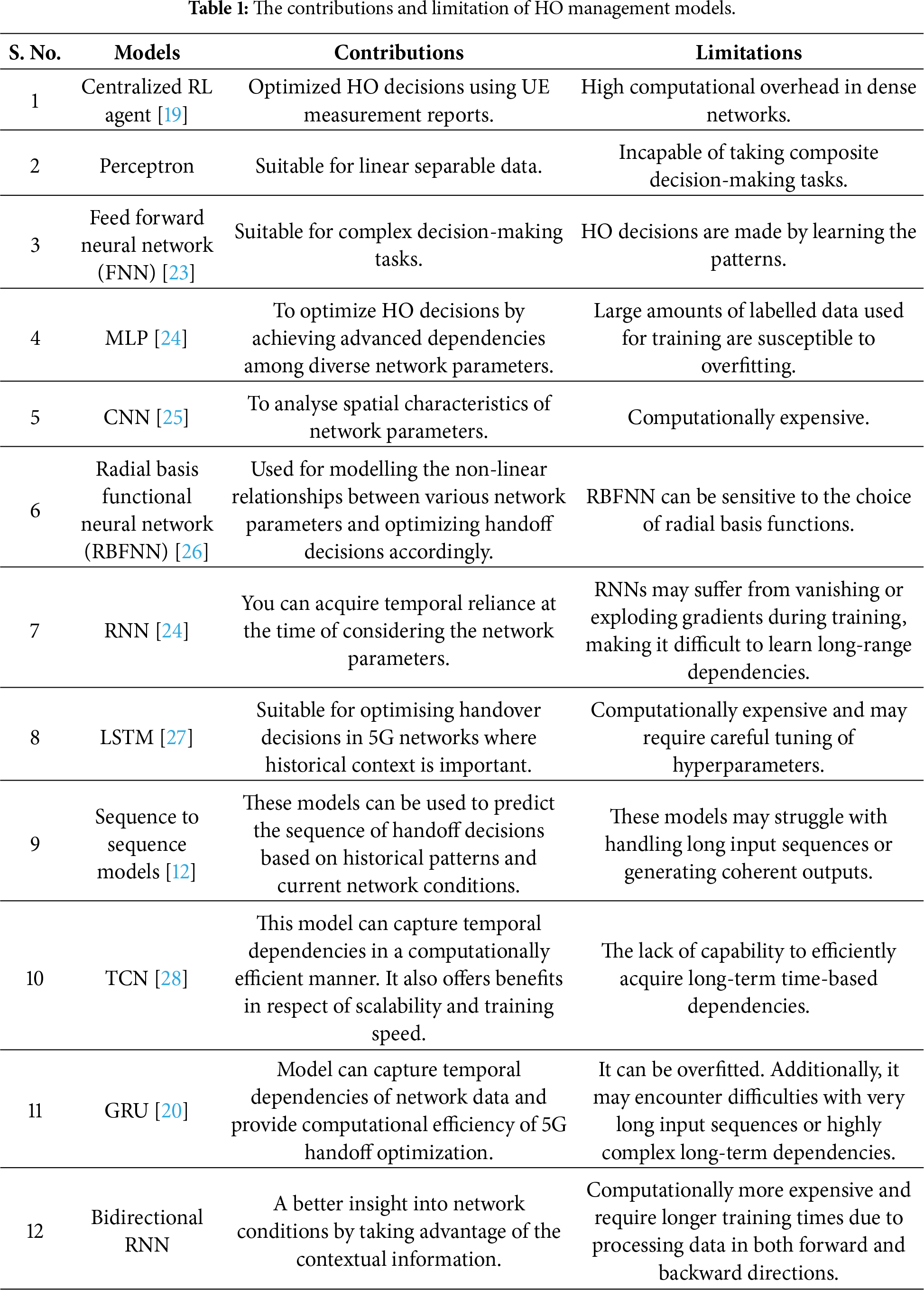

Intelligent approaches are particularly important when service providers deploy ultra-dense networks [21], which include picocells, femtocells, UE relays, distributed antenna systems (DAS), and cellular and Wi-Fi hotspots. Further deep learning neural network (DLNN) approaches used for handoff optimization are summarized in Table 1, which presents the findings and limitations of various neural network models. For instance, the perceptron in Table 1 performs well on basic tasks but falls short in complex decision-making.

Multilayer perceptrons (MLPs) and convolutional neural networks (CNNs) can capture complex spatial patterns. However, they demand significant labelled data and computational resources. Recurrent neural networks (RNNs) and long short-term memory (LSTM) networks excel at capturing temporal dependencies but may suffer from vanishing or exploding gradients during training. One research group deployed a centralized RL agent to optimize HO decisions using UE measurement reports [22]. Similarly, WFSO reduces unnecessary HOs by optimizing parameters such as SINR and UE velocity [16]. However, these models scale poorly in ultra-dense 5G environments. Therefore, deep learning (DL) models such as MLPs, CNNs, and RNNs were later adopted to capture spatial-temporal dependencies. In this regard, TCNs offered computational efficiency for temporal sequences but lacked long-term dependency modelling. Meanwhile, sequence-to-sequence models faltered when dealing with complex input–output mappings.

Outcomes of Literature

Several studies have sought to improve HO decision-making, as summarized in Table 1. According to the literature, HO management in 5G networks has improved, driven mainly by the emergence of SDN, machine learning and deep learning algorithms, and other architectural improvements [2]. Nevertheless, addressing the primary concerns in HO decision-making while accounting for many interacting factors remains difficult. The literature also indicates that TCNs can efficiently process temporal sequences and are therefore a valid candidate for HO-related decisions in 5G networks. Even so, a more sophisticated model is needed to tackle the complexities involved in predicting HOs in 5G and future wireless networks. Although current approaches have improved HO decisions, they typically emphasize a single dimension, such as signalling or beamforming, and lack comprehensive, intelligent designs. The proposed OHMDLNN model bridges these gaps by using deep learning to integrate both network-side and UE KPIs, achieving superior accuracy and overall performance.

This section provides an overview of the proposed model, its algorithmic workflow, and the dataset used in the proposed approach. It also describes the detailed architecture design and the execution of the training process.

The OHMDLNN handoff decision model is assessed in a 5G heterogeneous cellular network environment. The system considers multiple base stations and mobile users with diverse mobility patterns in a dense user scenario, where handoffs are expected to occur frequently. Furthermore, UEs are modelled to move along cell boundaries, creating dynamic handover events. The mobility management (MM) problem is formulated as a multi-class classification task whose goal is to predict the optimal HO decision at each timestamp. This decision takes into account both system-level indicators and radio link quality parameters. By considering both types of parameters, the model aims to capture temporal dependencies and maximize HO success while reducing packet loss, signalling overhead, and latency. The algorithmic workflow of the proposed model is summarized as follows:

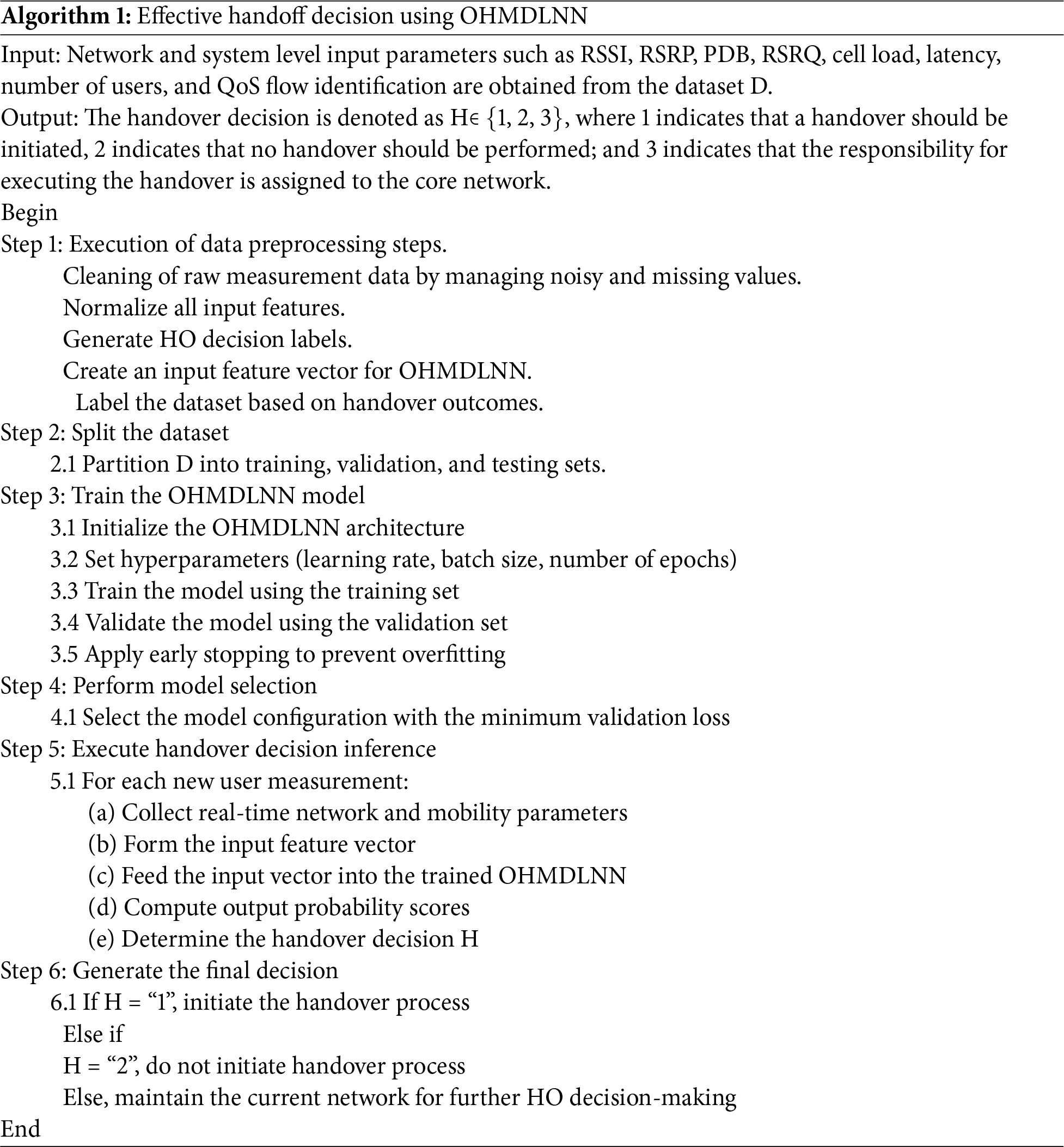

Algorithm 1 gives a high-level description of the model's operational flow and shows how it generates intelligent and reliable handover decisions by systematically combining important inputs such as QoS parameters, mobility context, and radio measurements. The algorithm aims to maximize network performance by reducing packet loss, handover delay, and signalling overhead. This formulation forms the basis for model training and for the performance evaluation presented in the subsequent sections.

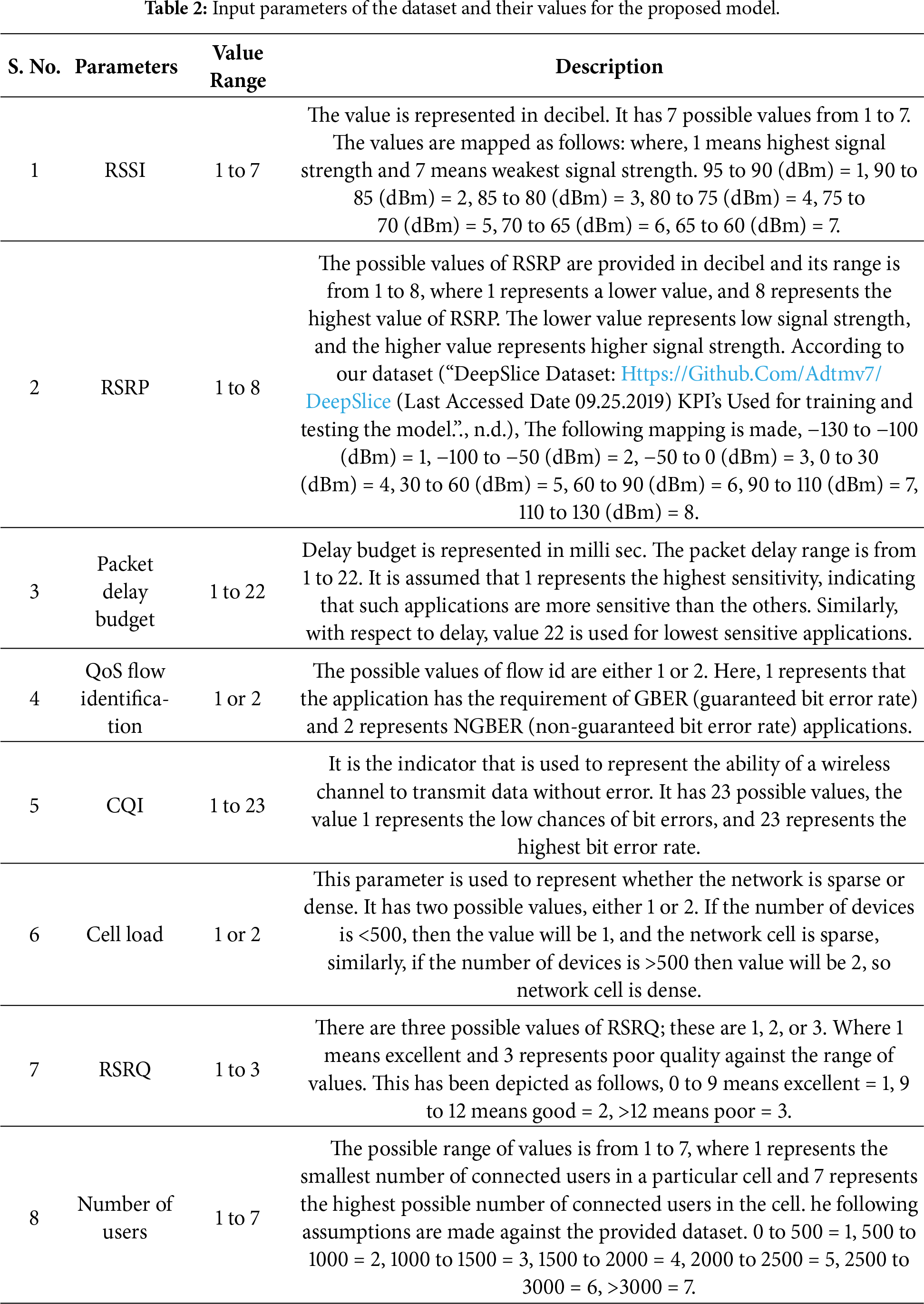

To ensure realistic simulation, key 5G parameters such as handover thresholds, mobility statistics, and path loss characteristics are derived from the DeepSlice dataset [29]. The DeepSlice data is first used to train the model. However, operational 5G network data was not available for testing, so we created a simulated 5G environment to generate synthetic data for testing the model. The experimental workflow therefore consists of training on the DeepSlice dataset, followed by testing and evaluation on the simulated 5G dataset. DeepSlice was chosen for its relevance to the problem domain of this article. The HO indicators in this dataset include RSSI, RSRQ, RSRP, and the channel quality indicator (CQI), which are significant measures of channel quality and strength. Earlier deep learning models have also used these parameters to control HO in 5G and cellular networks [30]. The dataset also includes the user count, QoS flow identification, and other values that provide detailed information about network conditions and user requirements. Every record describes the state of the network at the time of a HO event, and network-side and user-side features are unified to ensure a comprehensive representation of the MM environment. The dataset indicators (input parameters) can be categorized as follows:

• Signal-based indicators include RSSI, RSRP, and RSRQ.

• System-level indicators include latency, HO success rate, packet loss ratio, and throughput.

• Mobility parameters include time, direction, and the speed of the user.

• Environmental factors include user density per cell and interference.

The above set of parameters provides a balanced representation of user experience and network performance, enabling the model to learn effective decision-making under diverse conditions. Further details of the input parameters in the dataset and their corresponding values are presented in Table 2.

The implementation of the proposed model uses several libraries and processing tools. NumPy and Pandas are used because they efficiently combine data-handling capabilities with numerical operations and provide strong support for organising and evaluating data [31]. Additionally, the Matplotlib and Seaborn libraries are used for data visualization. The input data are preprocessed as follows:

• Normalization: The LabelEncoder and StandardScaler classes of the scikit-learn library are used to encode categorical values and standardize the input features.

• Reshaping: The features are organized into a shape of (1, 8), i.e., one time step with eight features, to match the model's input layer.

• Split: The dataset is divided into separate subsets with the train_test_split function so that the model can be assessed on data it was not trained on. During training, the EarlyStopping and ModelCheckpoint callbacks are used to avoid overfitting and to preserve the best-performing version of the model.
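The preprocessing steps above can be sketched in plain NumPy. This is a minimal illustration under stated assumptions, not the authors' code: the eight column names are hypothetical stand-ins for the features fed to the input layer, the data are random, and the helpers only mirror what scikit-learn's `LabelEncoder` (sorted integer codes), `StandardScaler` (zero mean and unit variance, fitted on the training split) and `train_test_split` do. The 70/15/15 ratio is the one the article reports for training.

```python
import numpy as np

# Hypothetical column names for the eight model inputs; the real names may differ.
FEATURES = ["rsrp", "packet_delay_budget", "qfi", "rssi",
            "cqi", "cell_load", "rsrq", "num_users"]


def label_encode(labels):
    """Map labels to integers 0..K-1 in sorted order, as LabelEncoder does."""
    classes, codes = np.unique(np.asarray(labels), return_inverse=True)
    return classes, codes


def fit_standardizer(x_train):
    """Return a function that z-scores data using training-split statistics only."""
    mean = x_train.mean(axis=0)
    std = x_train.std(axis=0)
    std[std == 0] = 1.0  # leave constant columns unscaled instead of dividing by zero
    return lambda x: (x - mean) / std


def split_70_15_15(n, seed=0):
    """Shuffle row indices and cut them into train/validation/test index arrays."""
    idx = np.random.default_rng(seed).permutation(n)
    n_train, n_val = n * 70 // 100, n * 15 // 100  # integer arithmetic, no rounding surprises
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


# Usage on synthetic stand-in data (1,000 rows).
rng = np.random.default_rng(42)
X = rng.normal(loc=-90.0, scale=10.0, size=(1000, len(FEATURES)))
y_raw = rng.choice(["conditional", "handover", "no_handover"], size=1000)

classes, y = label_encode(y_raw)
train_idx, val_idx, test_idx = split_70_15_15(len(X))
scale = fit_standardizer(X[train_idx])
# Reshape to (samples, 1 time step, 8 features) to match the (1, 8) input layer.
X_train = scale(X[train_idx]).reshape(-1, 1, len(FEATURES))
X_val = scale(X[val_idx]).reshape(-1, 1, len(FEATURES))
```

Fitting the scaler on the training split only keeps validation and test statistics out of the preprocessing, which is what makes the later hold-out estimate unbiased.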

In addition, the model was trained with both the stochastic gradient descent (SGD) and Adam optimization algorithms. Pandas DataFrames were used to load and manage the CSV datasets. Data quality was assessed with two methods: unique-value counts and missing-value analysis.
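The two data quality checks can be illustrated without any dependencies. In this sketch, which is an assumption about how such checks might be implemented, each CSV row is a dict, and `None`, empty strings, and NaN count as missing. With pandas, `df.nunique()` and `df.isna().sum()` give roughly corresponding per-column counts (pandas does not treat empty strings as missing).

```python
import math


def is_missing(value):
    """Treat None, empty strings, and float NaN as missing."""
    return value is None or value == "" or (isinstance(value, float) and math.isnan(value))


def quality_report(records):
    """Per-column counts of distinct non-missing values and of missing values."""
    columns = sorted({key for row in records for key in row})
    report = {}
    for col in columns:
        values = [row.get(col) for row in records]  # absent keys also count as missing
        present = [v for v in values if not is_missing(v)]
        report[col] = {"unique": len(set(present)), "missing": len(values) - len(present)}
    return report


rows = [
    {"rsrp": -95.0, "cqi": 12},
    {"rsrp": -101.5, "cqi": None},
    {"rsrp": -95.0, "cqi": 9},
]
report = quality_report(rows)
print(report)
```

A column with very few unique values may be categorical (a candidate for label encoding), while a high missing count flags a column that needs imputation or removal before training.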

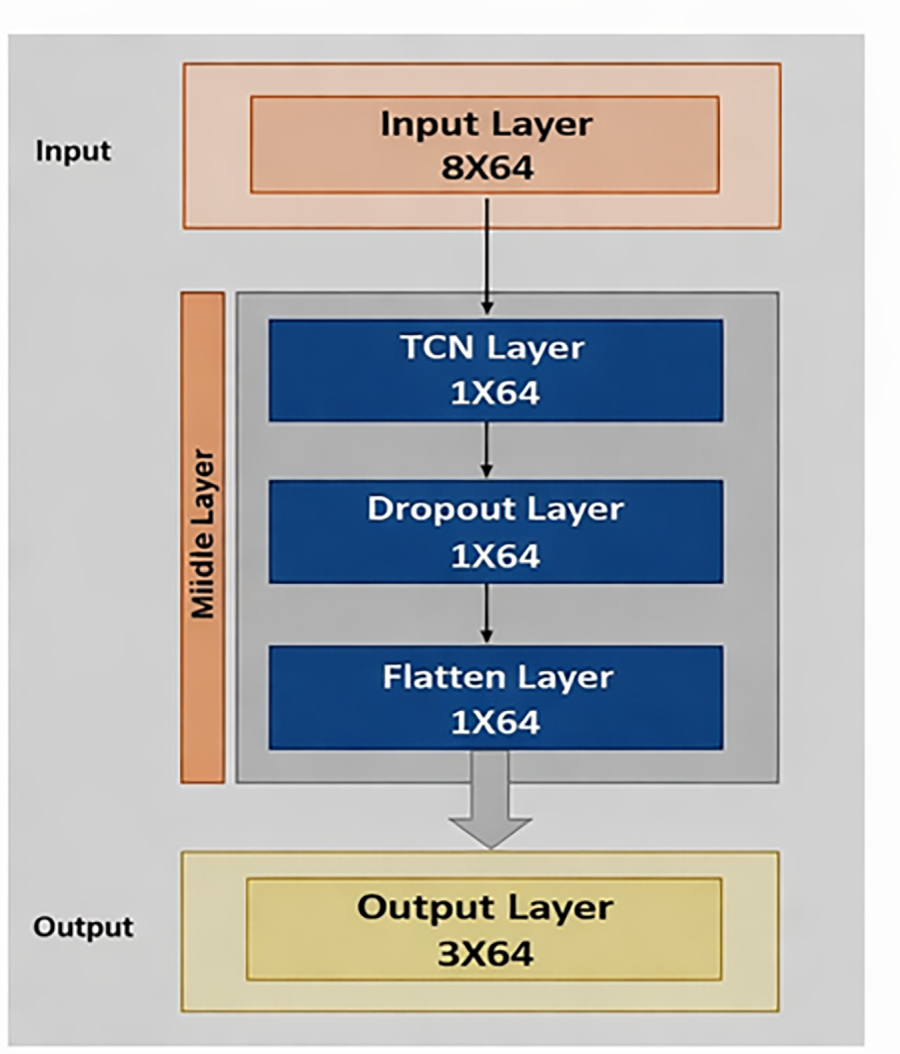

The model performs classification by processing the input through structured layers: an input layer, a sequence of hidden layers (a TCN layer, a dropout layer, and a flatten layer), and an output layer, as shown in Fig. 2. The function of each layer is as follows:

Figure 2: Representation of an optimal HO management model, using deep learning neural networks.

This is the first layer of the model and receives data from external sources [32]. As a deep learning neural network, OHMDLNN is loosely inspired by the structure and operation of the human brain: its layers consist of artificial neurons that learn to recognize patterns from raw data. First, the input data are restructured to fit the model's structural requirements. The structured data normally include time series of network characteristics such as RSRP, packet delay budget, QoS flow identification, RSSI, channel quality indicator, cell load, RSRQ, and number of users. These features are integrated into the training dataset to capture network state information, which then serves as input to the OHMDLNN model. The input layer is configured with one time step and eight features, i.e., a shape of (1, 8). It is followed by a TCN layer with 64 filters, a kernel size of 6, and dilation rates of (1, 2, 4, 8, 16, 32, 64), which allows the model to process sequences of varying lengths.
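As a worked check of what this dilation schedule implies, the receptive field of stacked dilated causal convolutions grows by (k − 1) · d per convolution. The sketch assumes a single stack and shows both one and two convolutions per dilation level; two per residual block is the layout of the generic TCN residual block, but the article does not say which layout OHMDLNN uses.

```python
def tcn_receptive_field(kernel_size, dilations, convs_per_level=1, stacks=1):
    """Receptive field, in time steps, of stacked dilated causal convolutions.

    Each causal convolution with kernel size k and dilation d lets an output
    see (k - 1) * d further steps into the past.
    """
    return 1 + (kernel_size - 1) * convs_per_level * stacks * sum(dilations)


DILATIONS = [1, 2, 4, 8, 16, 32, 64]  # dilation rates stated for OHMDLNN
rf_one_conv = tcn_receptive_field(6, DILATIONS)                      # 1 + 5 * 127 = 636
rf_two_convs = tcn_receptive_field(6, DILATIONS, convs_per_level=2)  # 1 + 2 * 5 * 127 = 1271
print(rf_one_conv, rf_two_convs)
```

With a kernel size of 6 and dilations summing to 127, the field spans 636 or 1,271 time steps, far longer than the single time step of the (1, 8) input shape.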

The hidden layer consists of a TCN layer, a dropout layer, and a flatten layer, described as follows:

TCN Layer

This layer is included because it handles sequential data effectively, which makes it particularly suitable for time-series data such as signal strength, network load, signal variations, and other dynamic metrics in a 5G network. Furthermore, it uses causal convolutions, so an output never depends on future time steps, supports long-range learning through dilation, and improves accuracy and training efficiency.
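The causal, dilated convolution at the core of a TCN layer can be illustrated for a single channel. This NumPy sketch is illustrative only (a real TCN layer uses many filters, learned weights, and residual connections); it shows that the output at time t depends only on inputs at t, t − d, t − 2d, and so on.

```python
import numpy as np


def causal_dilated_conv1d(x, w, dilation):
    """Single-channel causal convolution: y[t] = sum_i w[i] * x[t - i * dilation].

    Inputs before t = 0 are treated as zeros (left padding), so y[t] never
    depends on any x[t'] with t' > t.
    """
    x = np.asarray(x, dtype=float)
    k = len(w)
    pad = (k - 1) * dilation
    xp = np.concatenate([np.zeros(pad), x])  # left-pad only: this is what makes it causal
    y = np.zeros_like(x)
    for t in range(len(x)):
        for i in range(k):
            y[t] += w[i] * xp[pad + t - i * dilation]
    return y


y = causal_dilated_conv1d([1, 2, 3, 4, 5], w=[1.0, 1.0], dilation=2)
print(y)  # [1. 2. 4. 6. 8.]  (each output adds the value two steps earlier)
```

Changing the last input value leaves every earlier output untouched, which is the property that lets a TCN be trained on past measurements without leaking future information into a HO decision.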

Dropout Layer

This layer is employed to reduce overfitting, which improves HO decisions among base stations and the overall performance of 5G networks. The model incorporates the layer for the following purposes:

• Overfitting prevention: An overly complex model may learn signal strength patterns that fit the training data well but do not carry over to real-world deployments involving new or different scenarios. To address this issue, the dropout layer randomly deactivates a fraction of neurons during training and rescales the remaining activations so that their expected value is unchanged.

• Enhanced model robustness: The layer enables the model to learn more generalised representations of the network data (e.g., signal strength and mobility). This makes it more robust to fluctuations in real-time network conditions and, consequently, improves HO decisions.

• Reduced effective model complexity: Because no neuron can rely on specific other neurons being present, the layer discourages co-adaptation and makes the model less sensitive to irrelevant information (noise) in the data.
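A minimal NumPy sketch of dropout in its common "inverted" form, which is what mainstream frameworks such as Keras and PyTorch apply during training: each unit is zeroed with probability equal to the dropout rate, and the survivors are scaled by 1/(1 − rate), so the layer is simply the identity at inference time. The rate of 0.5 matches the value reported for OHMDLNN in the simulation setup; everything else is illustrative.

```python
import numpy as np


def inverted_dropout(activations, rate, rng, training=True):
    """Zero each unit with probability `rate` and scale survivors by 1 / (1 - rate).

    Scaling during training keeps each unit's expected value unchanged, so the
    layer is the identity at inference time.
    """
    a = np.asarray(activations, dtype=float)
    if not training or rate == 0.0:
        return a
    keep = rng.random(a.shape) >= rate  # True with probability 1 - rate
    return a * keep / (1.0 - rate)


rng = np.random.default_rng(0)
h = np.ones(10_000)
h_train = inverted_dropout(h, rate=0.5, rng=rng)                  # entries are 0.0 or 2.0
h_infer = inverted_dropout(h, rate=0.5, rng=rng, training=False)  # unchanged
print(h_train.mean())  # close to 1.0
```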

Flatten Layer

This layer consists of 64 units and a single output, and its purpose is to transform the data into a form compatible with the output layer. It receives multi-dimensional data, such as signal strength, location information, and user and network traffic patterns, and converts these features into a one-dimensional vector that is passed to the output layer for decision-making. This layer is used because it preserves the feature information while improving computational efficiency. In addition, the Tanh (hyperbolic tangent) and ReLU (rectified linear unit) activation functions are used in the hidden layers. The Tanh function, whose output ranges between −1 and 1, scales the input values and improves the stability of the neural network. Compared with the sigmoid function, Tanh is zero-centred and has a larger maximum gradient (1 versus 0.25), which can ease gradient flow during backpropagation, although it still saturates for large inputs.

The ReLU function is non-zero only for positive inputs, and its gradient is 1 for every positive input, which helps avoid the vanishing gradient problem and speeds up convergence during training. Together, these activations allow the hidden layers to capture complex patterns in signal strength variations and user movements, which helps the model predict the right time for a HO and thereby improves network reliability, user experience, and service quality.
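The flatten operation and the two activation functions are small enough to show directly. In this NumPy sketch the (2, 1, 64) array is a hypothetical batch of TCN outputs (two samples, one time step, 64 channels); it checks the output range of Tanh, its gradient 1 − tanh²(z), and that ReLU passes positive values unchanged while zeroing negatives.

```python
import numpy as np


def flatten(batch):
    """Collapse all axes except the batch axis: (N, d1, d2, ...) -> (N, d1 * d2 * ...)."""
    batch = np.asarray(batch)
    return batch.reshape(batch.shape[0], -1)


def relu(z):
    """max(z, 0): passes positive values through, zeros out the rest."""
    return np.maximum(z, 0.0)


# Hypothetical TCN output: 2 samples x 1 time step x 64 channels.
tcn_out = np.linspace(-3.0, 3.0, 2 * 1 * 64).reshape(2, 1, 64)
flat = flatten(tcn_out)   # shape (2, 64)
t = np.tanh(flat)         # bounded in (-1, 1) and zero-centred
r = relu(flat)

# Gradients: tanh'(z) = 1 - tanh(z)^2 peaks at 1 and shrinks as |z| grows,
# while relu'(z) = 1 for every z > 0.
tanh_grad = 1.0 - t ** 2
print(flat.shape, t.min(), t.max(), tanh_grad.max())
```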

This is the last layer of the model, where the Softmax activation function is used for classification. Softmax converts raw scores (logits) into class probabilities, and the output is interpreted by selecting the class with the highest probability. The model defines three output classes, interpreted as follows:

Class 1: indicates that a HO is needed; the handover preparation phase is started.

Class 2: indicates that no HO will occur; no action is required from the system, and standard measurement reports continue.

Class 3: indicates that a conditional boundary state has been identified; the system buffers the target information, starts a time-to-trigger timer, and increases the measurement report frequency.

All three classes contribute equally to the accuracy metric, and every prediction from each class, including class 3 predictions that trigger the enhanced monitoring state described above, is included in the performance evaluation.
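The output stage can be sketched as a numerically stable softmax followed by an arg-max and a lookup from class to system action. The action strings paraphrase the three class descriptions above; the `ACTIONS` table, the `decide` helper, and the example logits are hypothetical.

```python
import math

# Hypothetical mapping from class id to the action described for that class.
ACTIONS = {
    1: "start handover preparation",
    2: "no action; continue standard measurement reports",
    3: "buffer target info, start time-to-trigger timer, raise report frequency",
}


def softmax(logits):
    """Numerically stable softmax: subtracting the max logit avoids overflow."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decide(logits):
    """Return (class id 1..3, its probability, the associated action)."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)  # arg-max index 0..2
    return best + 1, probs[best], ACTIONS[best + 1]


cls, prob, action = decide([2.0, 0.5, 1.0])
print(cls, round(prob, 3), action)  # 1 0.629 start handover preparation
```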

3.5 Mathematical Representations of Proposed Model

The proposed model in mathematical form can be represented as follows:

In Eq. (1),

The output of OHMDLNN is computed using Eq. (2), where EL denotes the embedding layer. Similarly,

where,

here,

In Eq. (5),

HL represents the output of the hidden layer, after dropout regularization.

W0 represents the weight matrix of the output layer.

b0 is the bias vector of the output layer.

Tanh is the hyperbolic tangent activation function; it maps its input to the range (−1, 1), so the output can take both positive and negative values.

Finally, X denotes the residual connection that facilitates gradient flow through the deeper layers of the network.

The final output of the model is computed by applying the softmax operation to

The model is trained on a dataset of 65,000 records. To ensure rigorous development and assessment, the data are split into three separate subsets, training, validation, and testing, in the ratio 70:15:15. This conventional hold-out validation approach is needed for an unbiased assessment of the model. The model is trained with the following settings:

• Optimizer: SGD optimizer with momentum.

• Learning rate: 0.01.

• Loss function: sparse categorical cross-entropy.

• Batch size: 64.

• Epochs: 16 epochs, with early stopping implemented to prevent overfitting.
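The settings above imply some simple arithmetic and two update rules, sketched here in plain Python. The split sizes and mini-batch counts follow directly from 65,000 records, the 70/15/15 split, a batch size of 64, and 16 epochs. The momentum coefficient of 0.9 is an assumption (the article does not state it), and the update shown is the classical momentum form v ← μv − ηg, w ← w + v.

```python
import math

N_RECORDS, BATCH_SIZE, EPOCHS, LR = 65_000, 64, 16, 0.01

n_train = N_RECORDS * 70 // 100             # 45,500 records
n_val = N_RECORDS * 15 // 100               # 9,750 records
n_test = N_RECORDS - n_train - n_val        # 9,750 records
steps_per_epoch = math.ceil(n_train / BATCH_SIZE)  # 711 (the last batch is partial)
max_updates = steps_per_epoch * EPOCHS             # 11,376 if early stopping never fires


def sparse_categorical_crossentropy(probs, labels):
    """Mean of -log p[label] over a batch; labels are integer class indices."""
    return -sum(math.log(p[y]) for p, y in zip(probs, labels)) / len(labels)


def sgd_momentum_step(weights, velocity, grads, lr=LR, momentum=0.9):
    """Classical momentum (0.9 is an assumed value): v <- m*v - lr*g, w <- w + v."""
    velocity = [momentum * v - lr * g for v, g in zip(velocity, grads)]
    weights = [w + v for w, v in zip(weights, velocity)]
    return weights, velocity


loss = sparse_categorical_crossentropy([[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]], [0, 1])
w, v = sgd_momentum_step([1.0, -0.5], [0.0, 0.0], [2.0, -1.0])
print(n_train, n_val, n_test, steps_per_epoch, max_updates, round(loss, 4), w)
```

"Sparse" refers only to the label format: the loss takes integer class indices directly instead of one-hot vectors, which suits the three integer-coded HO classes.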

Validation Strategy

The validation set is a key part of model development. It is used for hyperparameter tuning, in which a grid search optimizes important hyperparameters and the model is selected according to its validation results. Validation accuracy and loss are monitored every epoch and serve as a good predictor of how the model will behave on unseen data. These metrics also trigger early stopping and help avoid overfitting during weight updates. Once training and hyperparameter optimization are complete, the model is evaluated on the held-out test set, which provides a final, objective estimate of how well the proposed model generalizes to new, unseen data.
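The interplay of early stopping and checkpointing can be simulated on a sequence of validation losses. This is a simplified sketch of the logic behind callbacks such as Keras `EarlyStopping` and `ModelCheckpoint(save_best_only=True)`, not their actual implementation; the loss values and patience are hypothetical.

```python
def early_stopping(val_losses, patience, min_delta=0.0):
    """Simulate early stopping with a best-model checkpoint.

    Returns (epoch at which training stops, epoch whose weights are kept), both 1-based.
    """
    best_loss, best_epoch, wait = float("inf"), 0, 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, wait = loss, epoch, 0  # checkpoint: save these weights
        else:
            wait += 1  # no improvement this epoch
            if wait >= patience:
                return epoch, best_epoch
    return len(val_losses), best_epoch


stop, best = early_stopping([0.90, 0.70, 0.60, 0.62, 0.61, 0.63], patience=3)
print(stop, best)  # 6 3
```

Training halts three epochs after the last improvement, but the weights restored for testing are those from epoch 3, which is why the checkpoint and the stopping rule are used together.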

3.7 Practical Use-Cases and Deployment Scenarios

The proposed model can be used in different intelligent handover management scenarios. It is particularly suited to providing dependable, low-latency communication [33]. In enhanced mobile broadband scenarios, it supports seamless connectivity for high-speed users by minimizing packet loss and increasing the handover success ratio [11]. In low-latency, high-reliability scenarios, such as remote sensing and self-driving cars, it provides reliable and continuous data transmission. Moreover, in heterogeneous networks with high user density, the model can make smart handover decisions based on network-level KPIs, improving overall quality of service and network stability. Together, these applications demonstrate the usefulness and feasibility of the proposed model in real 5G networks.

This section describes the simulation setup, the evaluation metrics, and the baseline models used for comparison, and then presents and discusses the results of the proposed model.

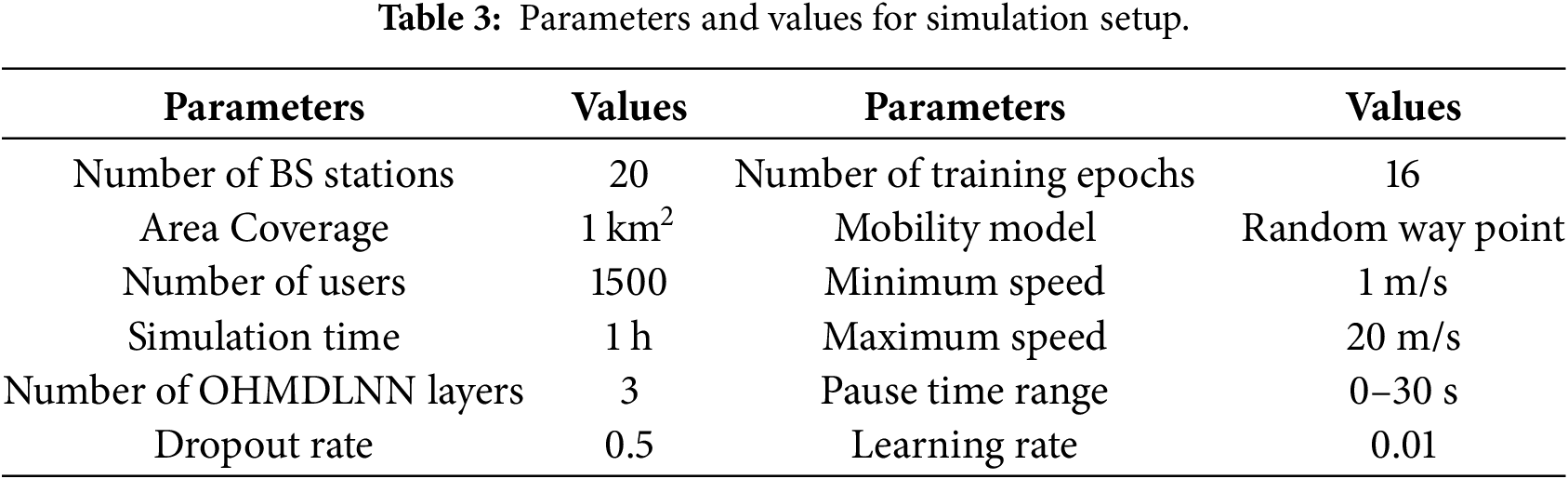

The OHMDLNN model was evaluated in a well-established 5G simulation environment to ensure its reliability and generalisability. Simu5G, a simulator based on OMNeT++ [34], enables realistic deployment of 5G NR and supports mobility management functionalities. It provides accurate modelling of base stations, user equipment behaviour, and radio channel conditions in both urban and suburban settings. Table 3 presents the simulation parameters selected to replicate real-world 5G network conditions. A simulation duration of one hour was used to enable detailed performance analysis. The model was trained for 16 epochs with a batch size of 64. To minimise overfitting and maintain stable convergence, the hyperparameters were tuned empirically, resulting in a dropout rate of 0.5 and a learning rate of 0.01. Multiple test runs were conducted to find the best balance between model complexity and predictive accuracy, which led to a configuration with three hidden layers.

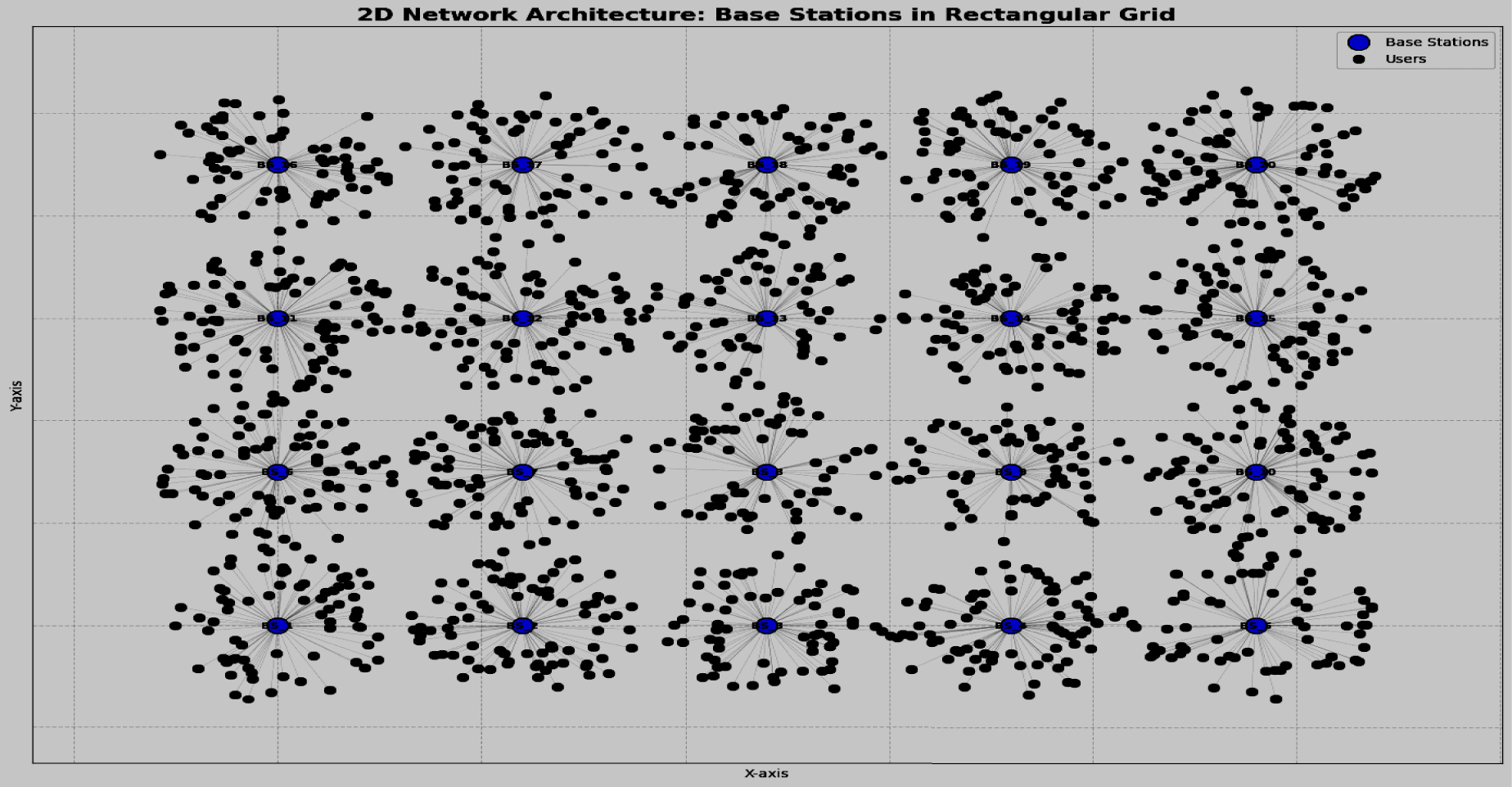

The random waypoint mobility model [35] is used to introduce realistic mobility into the simulation, as shown in Fig. 3.

Figure 3: Deployment of nodes and base stations.

The mobility model uses a minimum speed of 1 m/s, a maximum speed of 20 m/s, and a pause time of 0 to 30 s. A one-hour simulation is conducted to allow a detailed performance analysis of the OHMDLNN model, and the comparison is based on packet loss ratio, handover success rate, handover latency, and throughput.
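A simplified random waypoint trace generator using the stated parameters (speed drawn uniformly from 1 to 20 m/s, pause drawn from 0 to 30 s, one-hour duration). The 1,000 m × 1,000 m area and the 1 s sampling step are assumptions, since Table 3 is not reproduced here, and INET, on which Simu5G builds, provides its own random waypoint mobility module; this sketch only illustrates the model's logic.

```python
import math
import random


def random_waypoint(duration_s, area=(1000.0, 1000.0), v_min=1.0, v_max=20.0,
                    pause_max=30.0, dt=1.0, seed=0):
    """Simplified random waypoint trace for one UE.

    Repeatedly: pick a uniform random destination in `area` and a speed in
    [v_min, v_max], move there in a straight line, then pause for U(0, pause_max) s.
    Returns the sampled (time, x, y) trace and the (speed, pause) of each leg.
    """
    rng = random.Random(seed)
    x, y = rng.uniform(0, area[0]), rng.uniform(0, area[1])
    t, trace, legs = 0.0, [], []
    while t < duration_s:
        dest_x, dest_y = rng.uniform(0, area[0]), rng.uniform(0, area[1])
        speed, pause = rng.uniform(v_min, v_max), rng.uniform(0.0, pause_max)
        legs.append((speed, pause))
        # Move towards the waypoint, one dt step at a time.
        while t < duration_s:
            dist, step = math.hypot(dest_x - x, dest_y - y), speed * dt
            if dist <= step:
                x, y = dest_x, dest_y  # arrive (arrival time rounded up to the next step)
            else:
                x += (dest_x - x) * step / dist
                y += (dest_y - y) * step / dist
            t += dt
            trace.append((t, x, y))
            if (x, y) == (dest_x, dest_y):
                break
        # Pause at the waypoint, still sampling the (fixed) position every dt.
        pause_end = t + pause
        while t < min(pause_end, duration_s):
            t += dt
            trace.append((t, x, y))
    return trace, legs


trace, legs = random_waypoint(3600)  # one simulated hour
print(len(trace), len(legs))
```

Each sampled position is a point on a straight segment between two waypoints inside the area, so UEs never leave the deployment region, and consecutive samples are at most v_max · dt apart.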

The performance of the proposed model was evaluated using performance measures such as accuracy, loss, precision, and the confusion matrix during validation. It was compared with state-of-the-art deep learning neural network models. With respect to network-specific metrics, the model was assessed based on packet loss ratio, handover latency, handover success rate, and throughput. Further details of these metrics are provided in the results and discussion section.

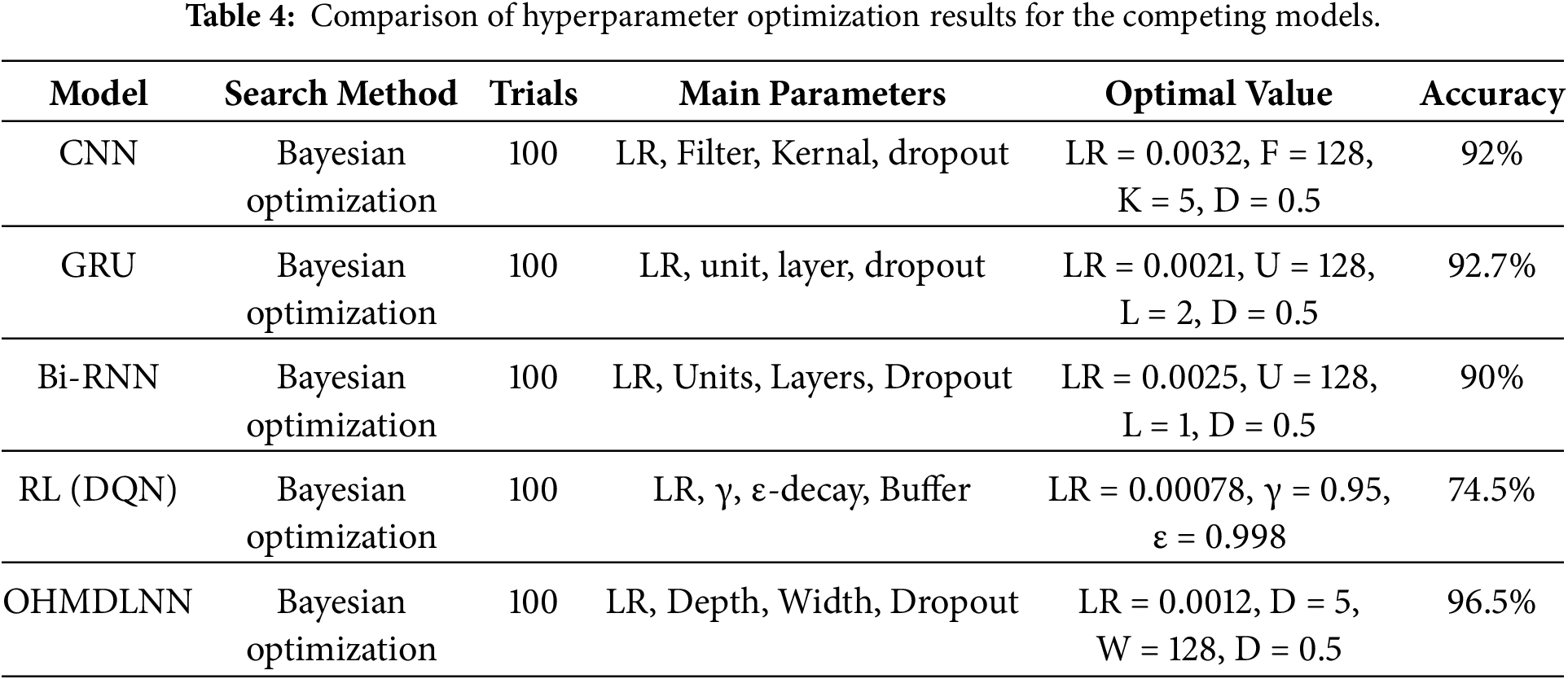

4.3 Baseline Models for Comparison

The OHMDLNN is compared against a set of baseline models that include both traditional and machine learning approaches. To make the comparison fair, each architecture was optimized with 100 trials under the same hyperparameter search settings using a Bayesian optimizer. In each trial, 5-fold cross-validation was applied during training with early stopping (patience = 50). All models were given the same computational budget, so that performance differences reflect architectural capabilities rather than tuning advantages. The optimal configurations are presented in Table 4. The performance of the proposed model was then tested on our simulated dataset, which comprises 65,000 records generated with Simu5G and derived from the DeepSlice dataset [29].
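The 5-fold cross-validation used in each tuning trial partitions the training data into five folds, each serving once as the validation fold. A minimal, unshuffled sketch; the fold sizes follow the same rule as scikit-learn's `KFold`, where the first n mod k folds are one element larger.

```python
def k_fold_indices(n, k=5):
    """Yield (train, validation) index lists for k contiguous, unshuffled folds.

    The first n % k folds get one extra element, the same sizing rule as
    scikit-learn's KFold.
    """
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, val
        start += size


folds = list(k_fold_indices(12, k=5))
print([len(val) for _, val in folds])  # [3, 3, 2, 2, 2]
```

A trial's score is then the mean validation metric over the five folds, so every training record contributes to validation exactly once.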

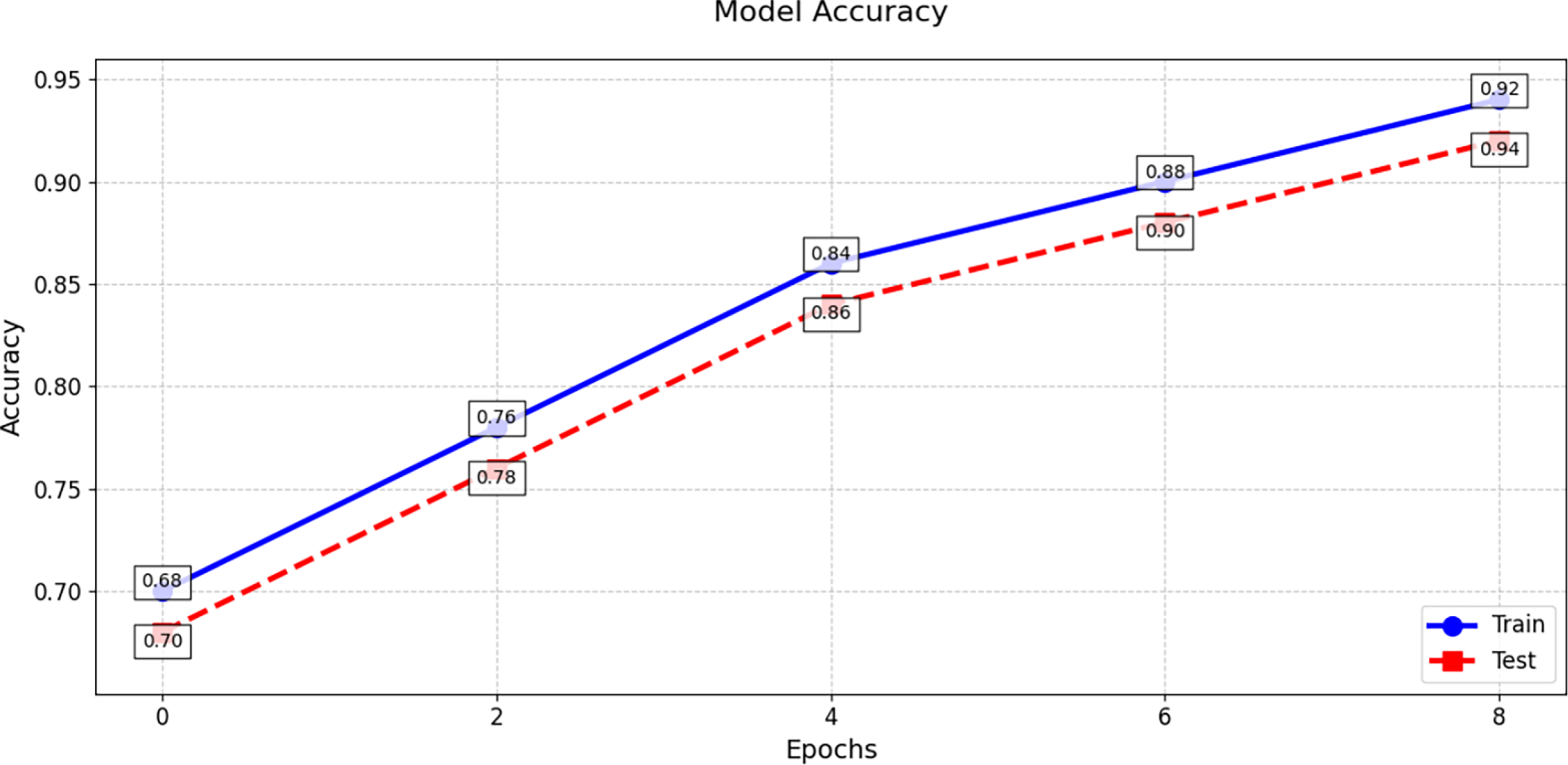

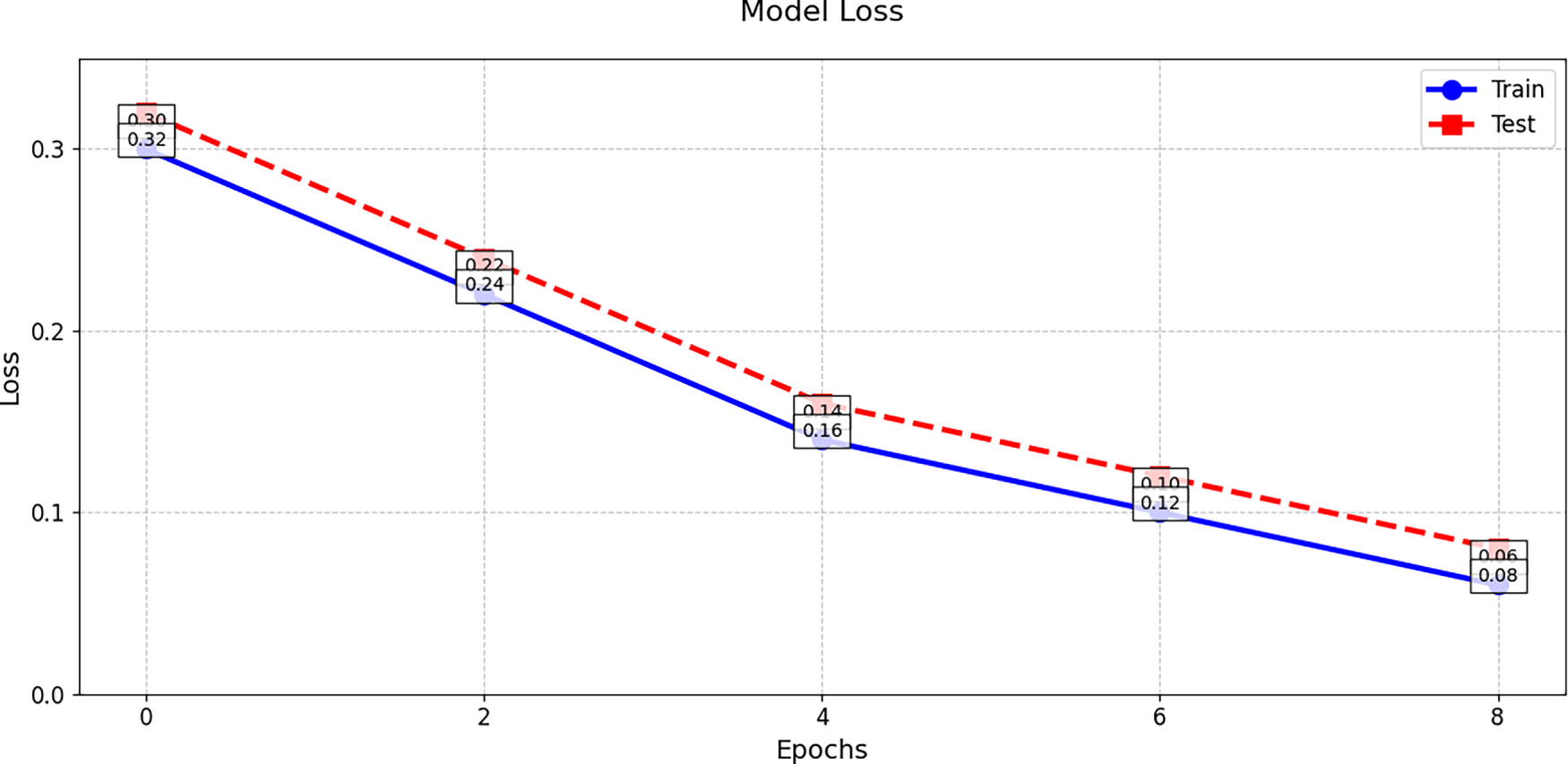

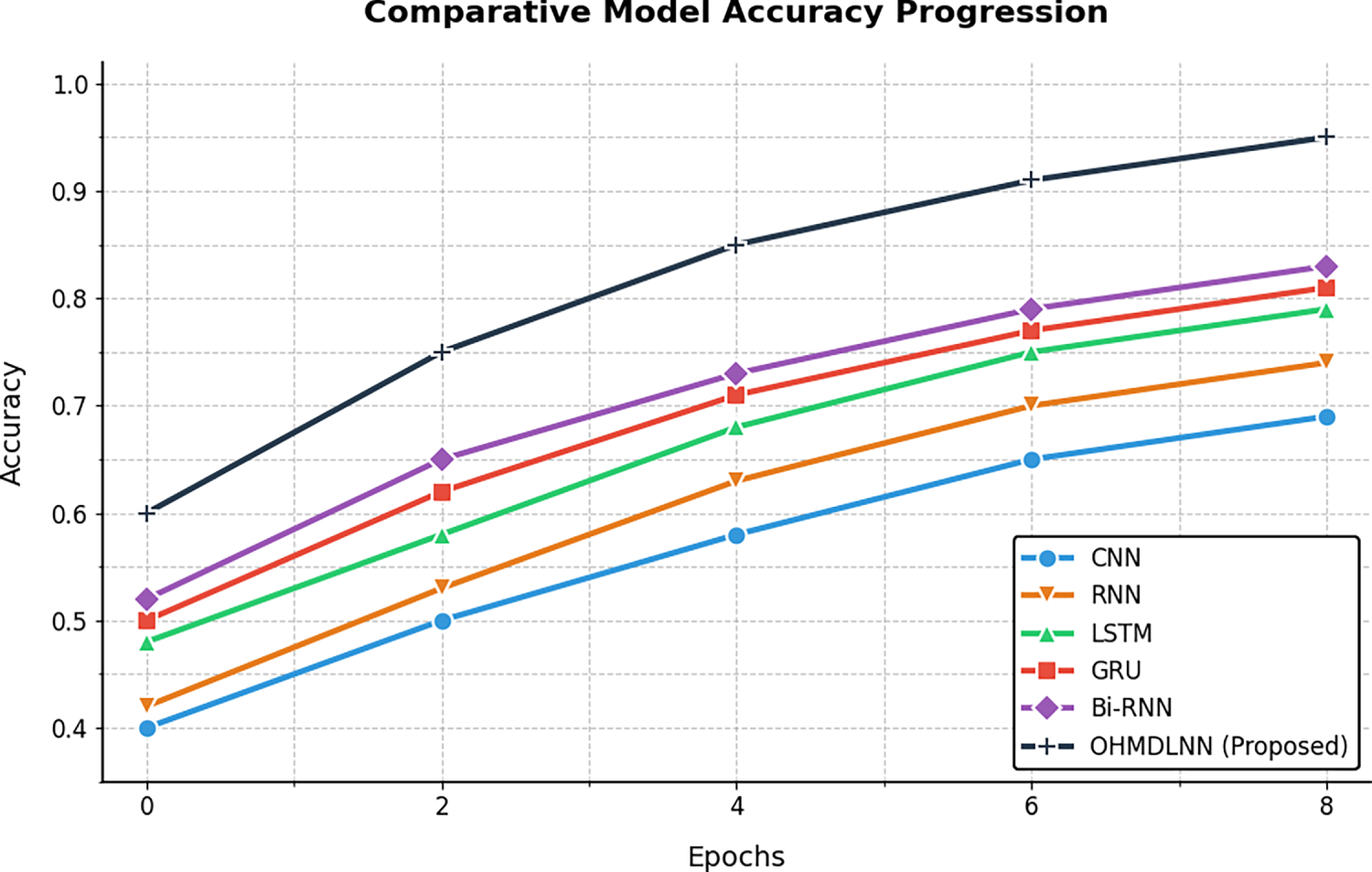

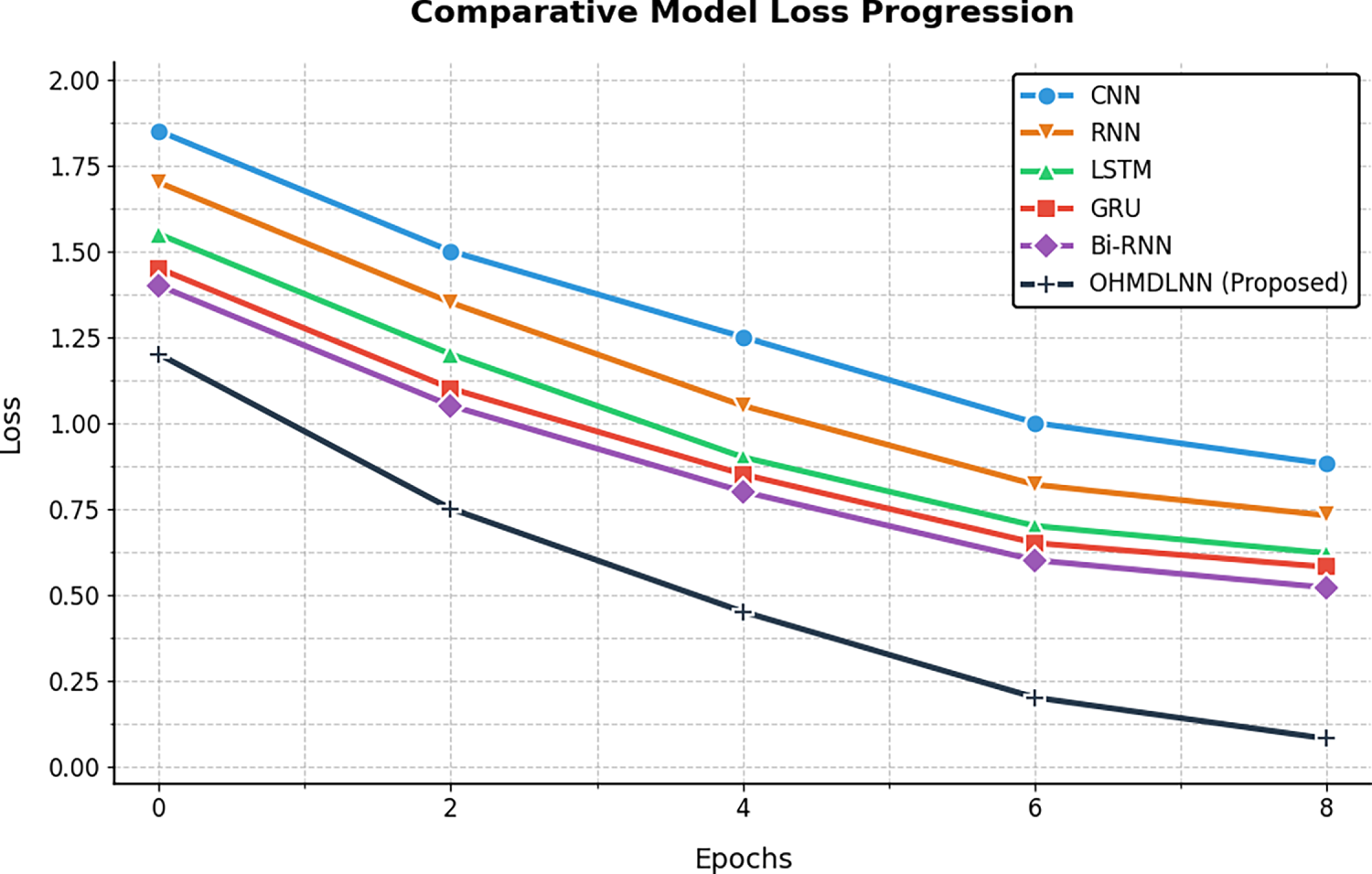

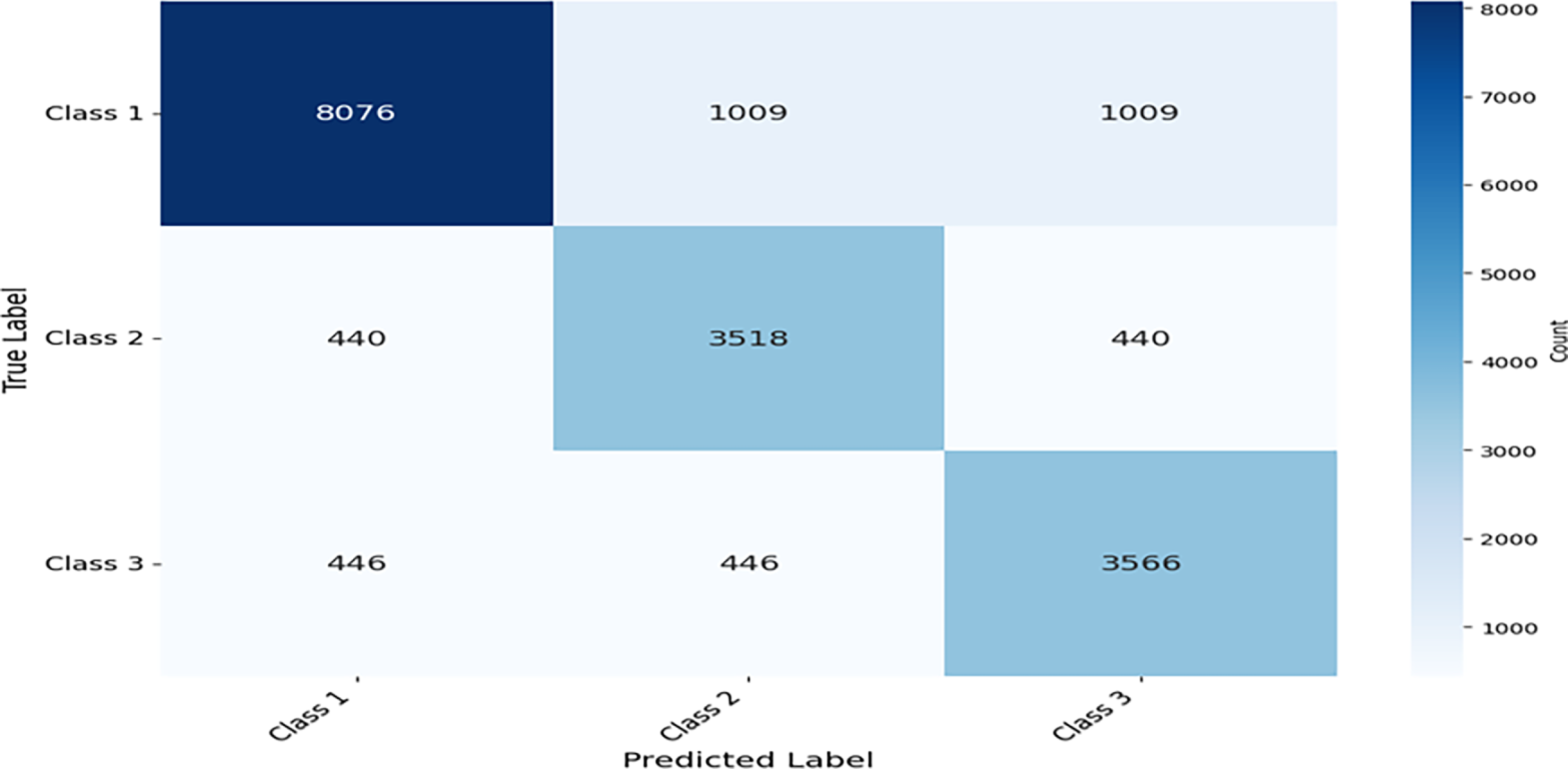

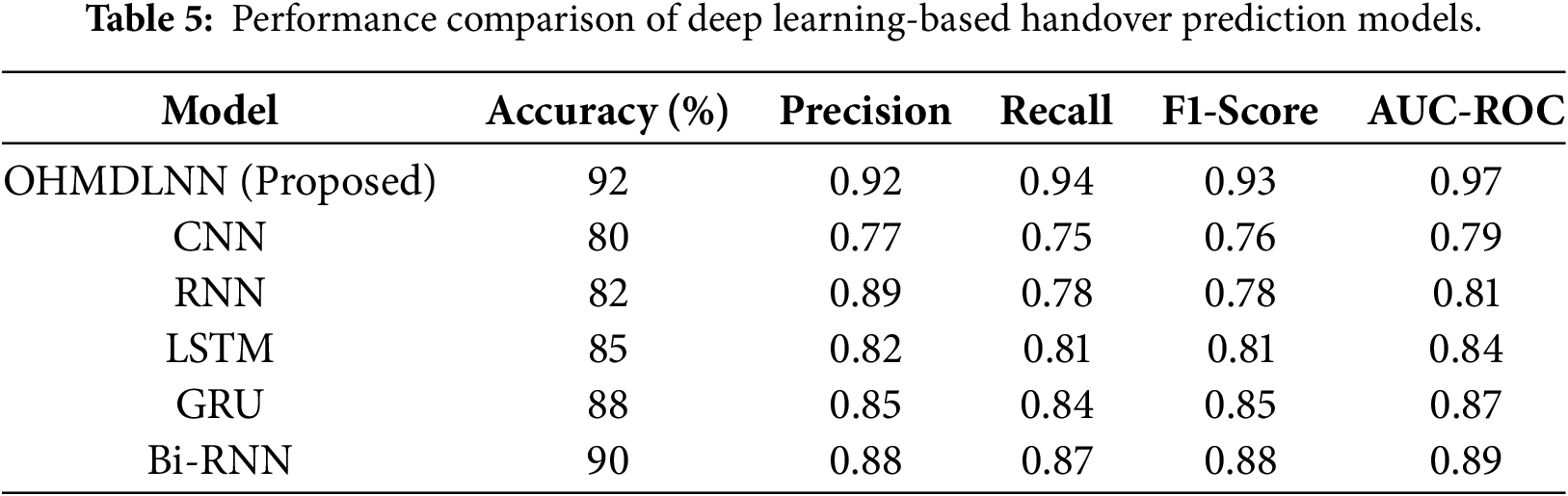

The training and validation accuracy and loss are plotted in Figs. 4 and 5, respectively, to illustrate the model's learning curves. Likewise, Figs. 6 and 7 compare the accuracy and loss of the OHMDLNN model with those of other neural network models for handover management on the same dataset.

Predictions on the test data were also used to build a confusion matrix that evaluates the model's classification performance across the three classes, as shown in Fig. 8. In this matrix, each row represents the actual class and each column the predicted class. The first row shows 8076 correct predictions (true positives) and 1009 incorrect predictions (false negatives). The second row shows that the model correctly predicted the second class (class B) 3518 times, with 440 misclassifications. Likewise, the third row shows that the model correctly predicted the third class (class C) 3518 times, with 446 misclassifications.

Next, the proposed model is compared with other deep learning models on multiple parameters, as described in Table 5. Although the RNN has low latency, it cannot handle long-term dependencies in time-series data, resulting in low accuracy and a low F1-score. Similarly, the RNN and GRU models are weak at multi-feature fusion and lack bidirectional context capture. Moreover, Bi-RNN cannot capture spatial features. In contrast, the proposed model achieves competitive accuracy and F1-score, making it suitable for real-time 5G handover.

Figure 4: Training and validation accuracy of OHMDLNN.

Figure 5: Training and validation loss of OHMDLNN.

Figure 6: Comparison of the accuracy of OHMDLNN with other neural network models for handoff management using the same dataset.

Figure 7: Comparison of the loss of OHMDLNN with other neural network models for handoff management using the same dataset.

Figure 8: Confusion matrix for OHMDLNN showing predicted vs. actual labels.
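The row totals reported for the confusion matrix in Fig. 8 are enough to compute per-class recall and overall accuracy. The small sketch below uses only those counts. Labelling the first class "A" is an assumption (the text names only classes B and C), and per-class precision cannot be derived here because the column-wise breakdown of the misclassifications is not given.

```python
# (correct, misclassified) per actual class, as reported for Fig. 8.
# The label "A" for the first class is an assumption.
ROW_COUNTS = {
    "A": (8076, 1009),
    "B": (3518, 440),
    "C": (3518, 446),
}


def per_class_recall(rows):
    """Recall = correct / all samples whose actual label is that class (one matrix row)."""
    return {label: correct / (correct + wrong) for label, (correct, wrong) in rows.items()}


def overall_accuracy(rows):
    """Accuracy = sum of diagonal entries / total number of test predictions."""
    correct = sum(c for c, _ in rows.values())
    total = sum(c + w for c, w in rows.values())
    return correct / total
```

With these counts, recall is about 0.889, 0.889, and 0.887 for the three classes, and overall accuracy is about 0.889 (15,112 correct out of 17,007 test predictions).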

In the following subsections, the performance of the OHMDLNN model is compared with speed-based algorithms, fuzzy logic methods, decision tree-based approaches, hybrid techniques, and other deep learning neural network-based HO management models. The comparison is based on four key performance metrics: packet loss ratio, HO latency, HO success rate, and throughput. The details and results for each metric are presented as follows:

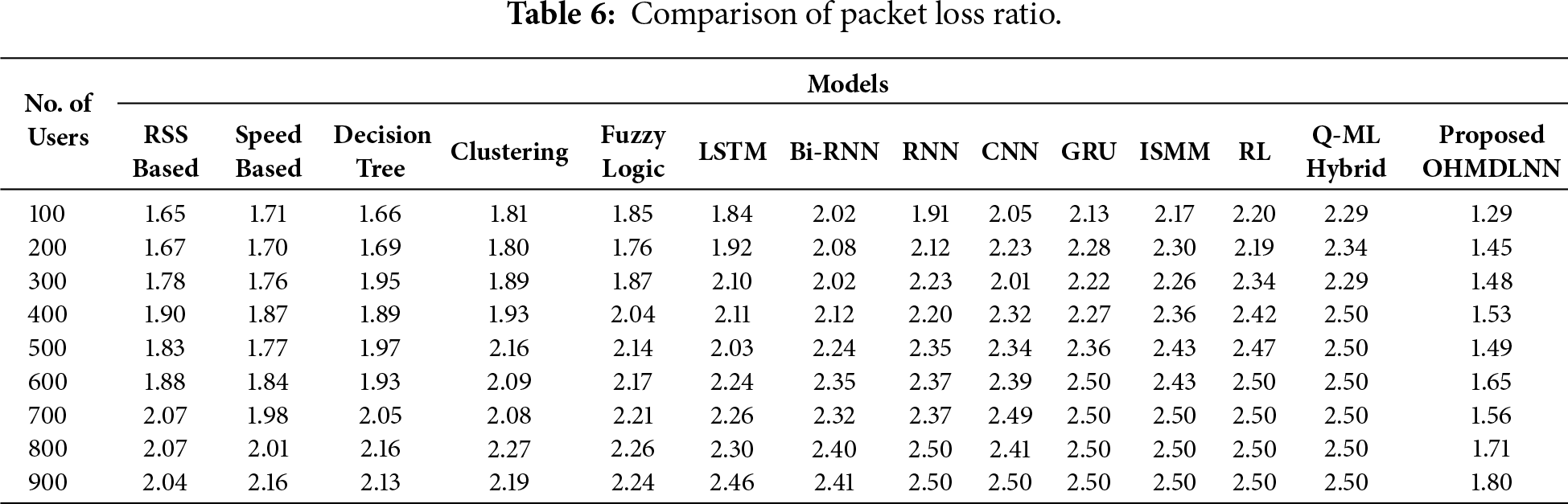

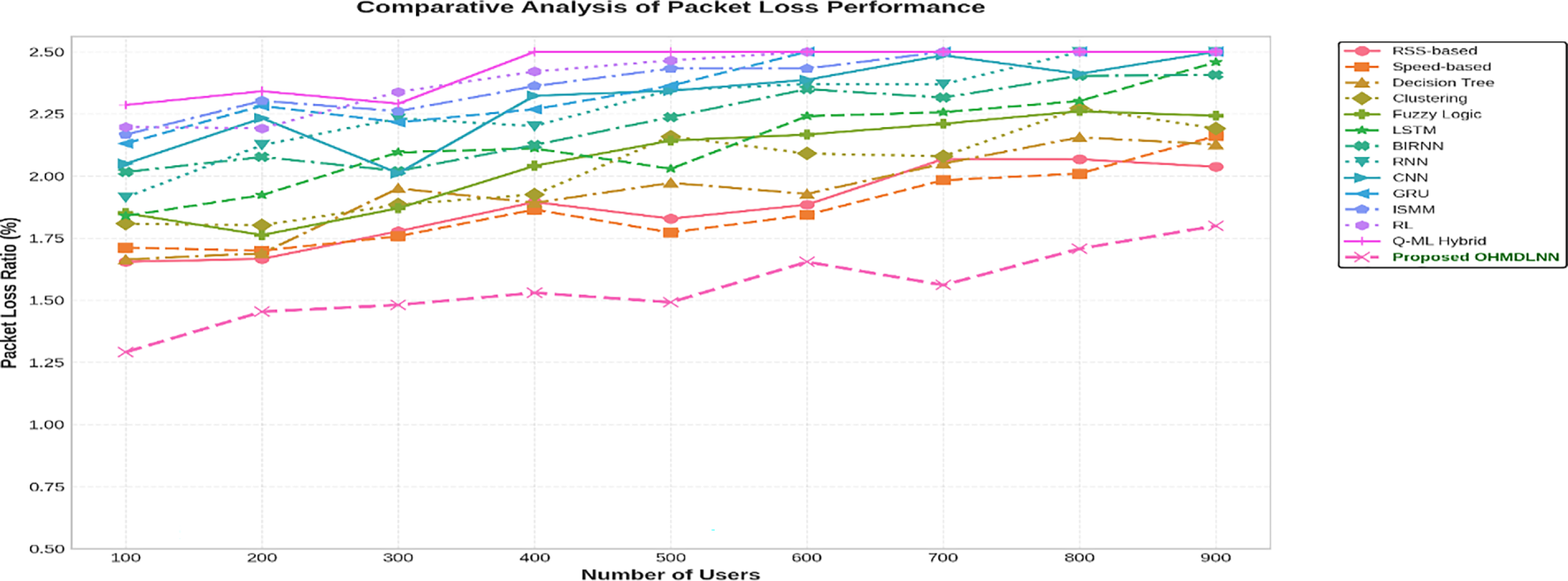

5.1 Assessment of Packet Loss Rate

In the context of mobility management (MM) in 5G networks, the packet loss rate is the percentage of data packets that are not successfully delivered or received during the handover process. The proposed model predicts the need for a handover in advance, which reduces packet loss and keeps packet transfer stable.
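As a worked illustration of this definition, the packet loss rate over one handover window can be computed from the sequence numbers that were sent and received. This is a sketch under assumptions: per-packet sequence numbers are available from the simulation trace, duplicates count once, and the window boundaries are chosen by the caller. The function name is hypothetical.

```python
def packet_loss_rate(sent_seq, received_seq):
    """Percentage of packets sent in a handover window that never arrived.

    sent_seq / received_seq: iterables of packet sequence numbers (hypothetical
    trace fields). Duplicates are counted once; packets received but not listed
    as sent are ignored.
    """
    sent = set(sent_seq)
    if not sent:
        raise ValueError("no packets were sent in the window")
    lost = sent - set(received_seq)
    return 100.0 * len(lost) / len(sent)
```

For example, if 1,000 packets are sent during a handover and 18 never arrive, the function returns 1.8 (%).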

Table 6 presents the estimated packet loss rate as the number of users increases and compares the results with those of other network models. As Table 6 and Fig. 9 (loss rate on the Y-axis) show, the proposed model achieves the lowest packet loss rate. The packet loss rates of the compared models are as follows: RSS-based 2.4%, speed-based 2.11%, decision tree 2.13%, clustering 2.19%, fuzzy logic 2.24%, LSTM 2.46%, Bi-RNN 2.41%, RNN 2.50%, CNN 2.50%, GRU 2.50%, ISMM 2.50%, RL 2.50%, Q-ML hybrid 2.50%, and the proposed OHMDLNN model 1.80%.

Figure 9: Packet loss comparison against the number of users.

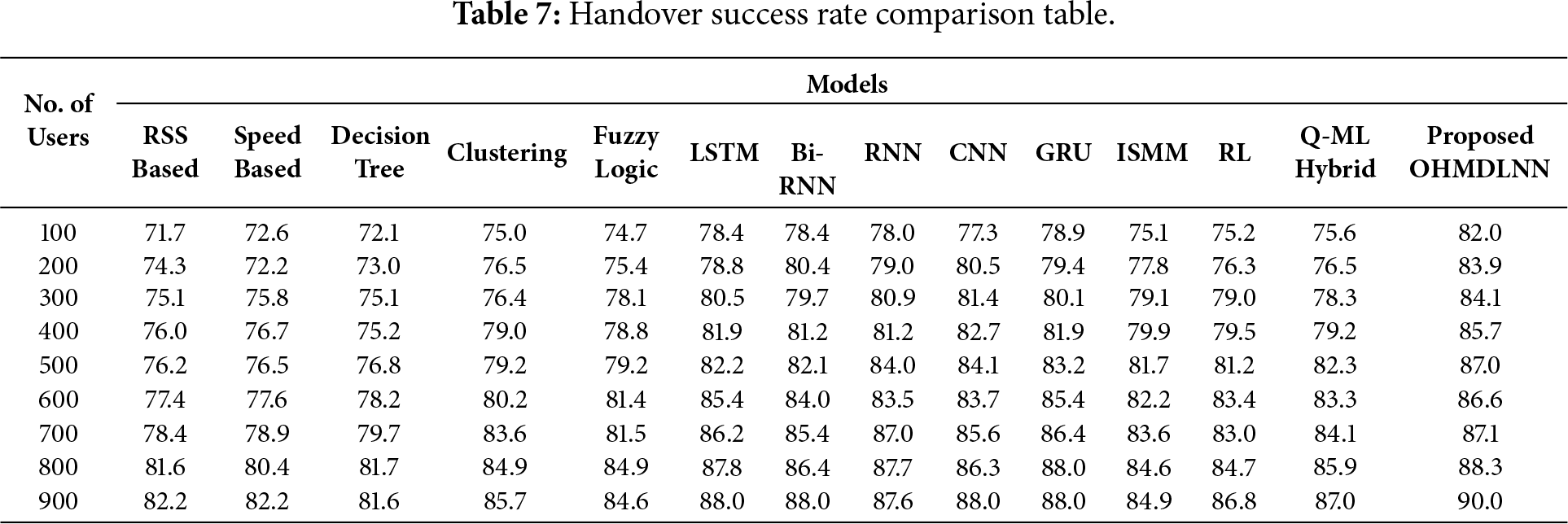

5.2 Assessment of HO Success Rate

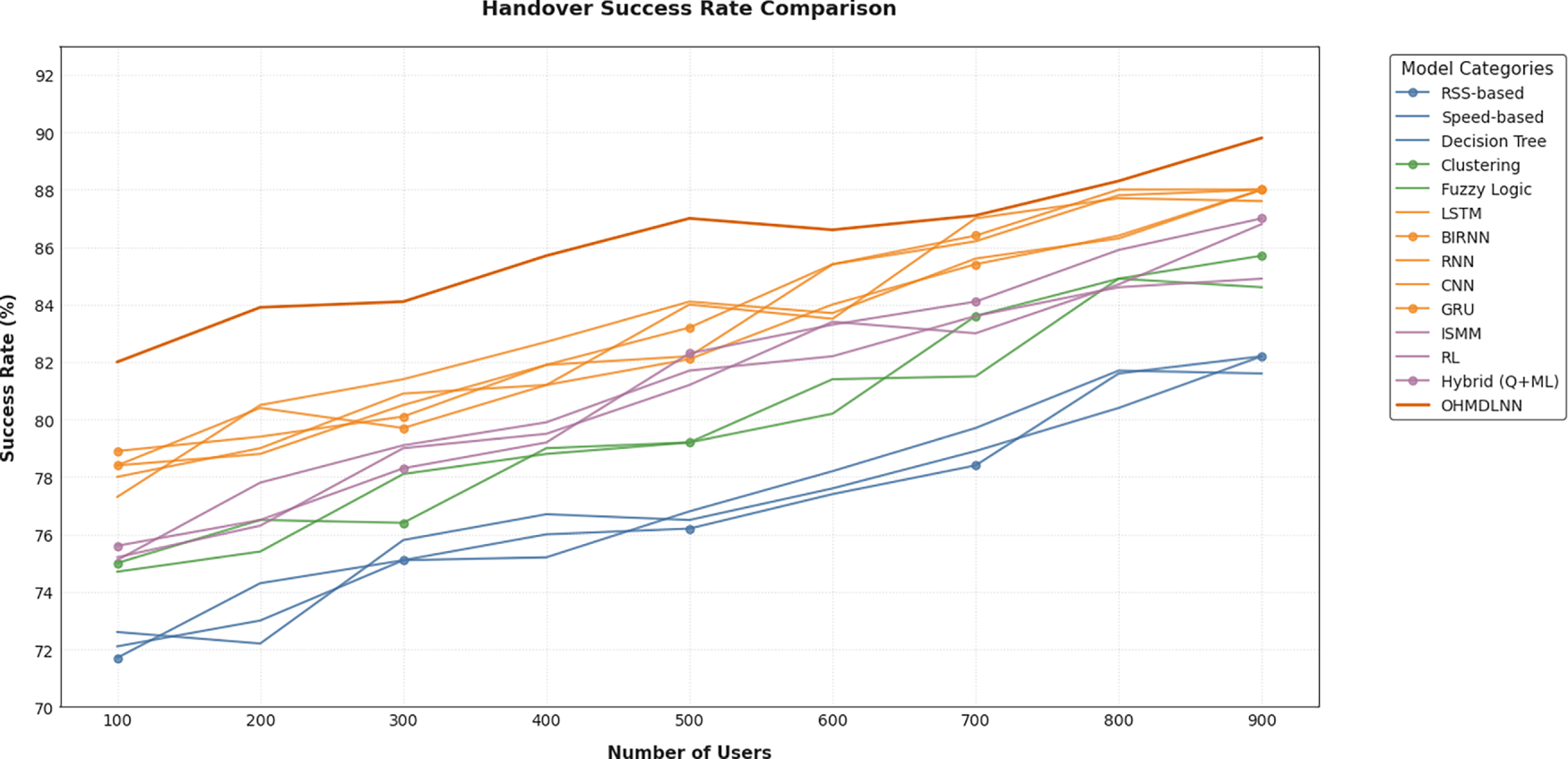

High success rates are essential for service continuity and a good user experience, particularly in high-mobility situations. The HO success rate measures the proportion of successful handovers out of all handover attempts. A higher success rate indicates that the OHMDLNN model classifies handover situations effectively and minimizes failures in heterogeneous and dense networks. Table 7 presents the estimated HO success rate as the number of users increases and compares the results with other models. As Table 7 and Fig. 10 show, the proposed model achieves the highest success rate. The HO success rates of the compared models are as follows: RSS-based 82.2%, speed-based 82.2%, decision tree 81.6%, clustering 85.7%, fuzzy logic 84.6%, LSTM 88.0%, Bi-RNN 88.0%, RNN 87.6%, CNN 88.0%, GRU 88.0%, ISMM 84.9%, RL 86.8%, Q-ML hybrid 87.0%, and the proposed OHMDLNN model 90.0%.

Figure 10: HO success rate comparison against the number of users.
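The HO success rate defined above is a simple ratio over handover attempts. The minimal sketch below assumes each attempt is logged with an outcome label; the labels `"success"` and `"failure"` are hypothetical, and any label other than `"success"` is treated as unsuccessful.

```python
from collections import Counter


def ho_success_rate(outcomes):
    """Percentage of handover attempts whose (hypothetical) outcome label is 'success'."""
    if not outcomes:
        raise ValueError("no handover attempts recorded")
    counts = Counter(outcomes)
    return 100.0 * counts["success"] / len(outcomes)
```

For instance, 90 successful attempts out of 100 give 90.0 (%).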

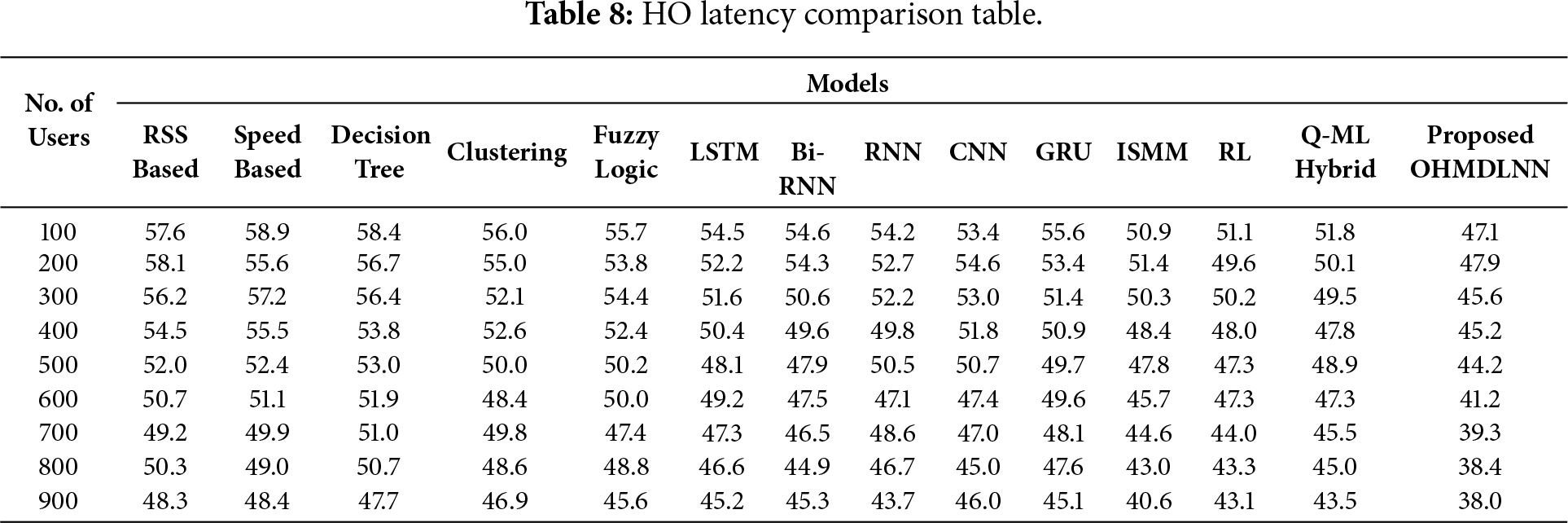

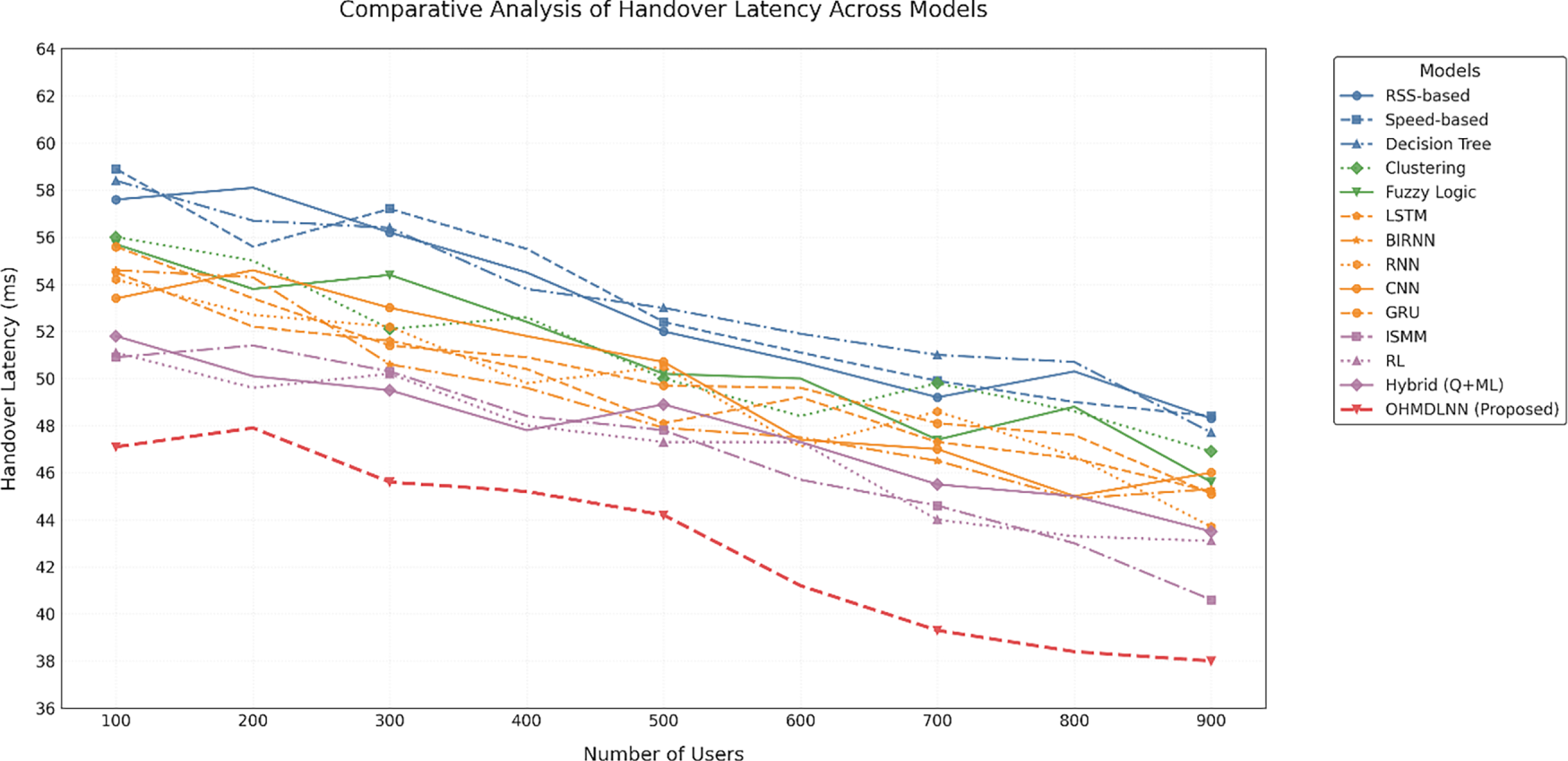

HO latency is defined as the time interval from the start of a handover decision until the user equipment connects to the target base station. Low HO latency is critical for continuous service provision and a good user experience in high-mobility scenarios. Because incorrect handover decisions can cause service interruptions, the proposed model accounts for both low and high user mobility. It reduces HO latency by making accurate handover predictions and executing the process efficiently. Table 8 presents the estimated HO latency as the number of users increases and compares it with other models. According to Table 8 and Fig. 11, the HO latency values of the compared models are as follows: RSS-based 48.3 ms, speed-based 48.4 ms, decision tree 47.7 ms, clustering 46.9 ms, fuzzy logic 45.6 ms, LSTM 45.2 ms, Bi-RNN 45.3 ms, RNN 43.7 ms, CNN 46.1 ms, GRU 45.1 ms, ISMM 40.6 ms, RL 43.1 ms, Q-ML hybrid 43.5 ms, and OHMDLNN 38.0 ms.

Figure 11: HO latency comparison against the number of users.
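Following the definition of HO latency as the interval from the handover decision to the UE's connection with the target base station, per-handover latency can be derived from two timestamps. The sketch below assumes timestamps in seconds are available for both events; the function names are hypothetical.

```python
from statistics import mean


def ho_latencies_ms(events):
    """Per-handover latency in ms from (decision_time_s, attach_time_s) pairs."""
    latencies = []
    for decision_t, attach_t in events:
        if attach_t < decision_t:
            raise ValueError("attach timestamp precedes decision timestamp")
        latencies.append((attach_t - decision_t) * 1000.0)
    return latencies


def mean_ho_latency_ms(events):
    """Average HO latency over all recorded handovers, in ms."""
    return mean(ho_latencies_ms(events))
```

Two handovers that take 36 ms and 40 ms, for example, give a mean HO latency of 38 ms.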

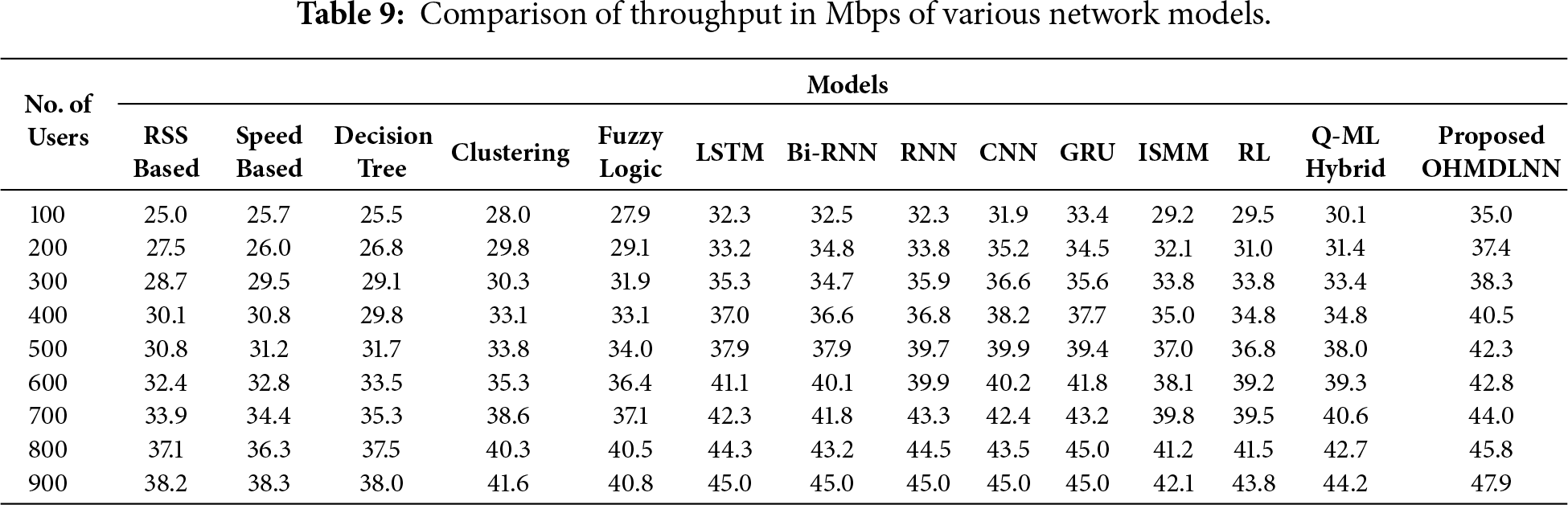

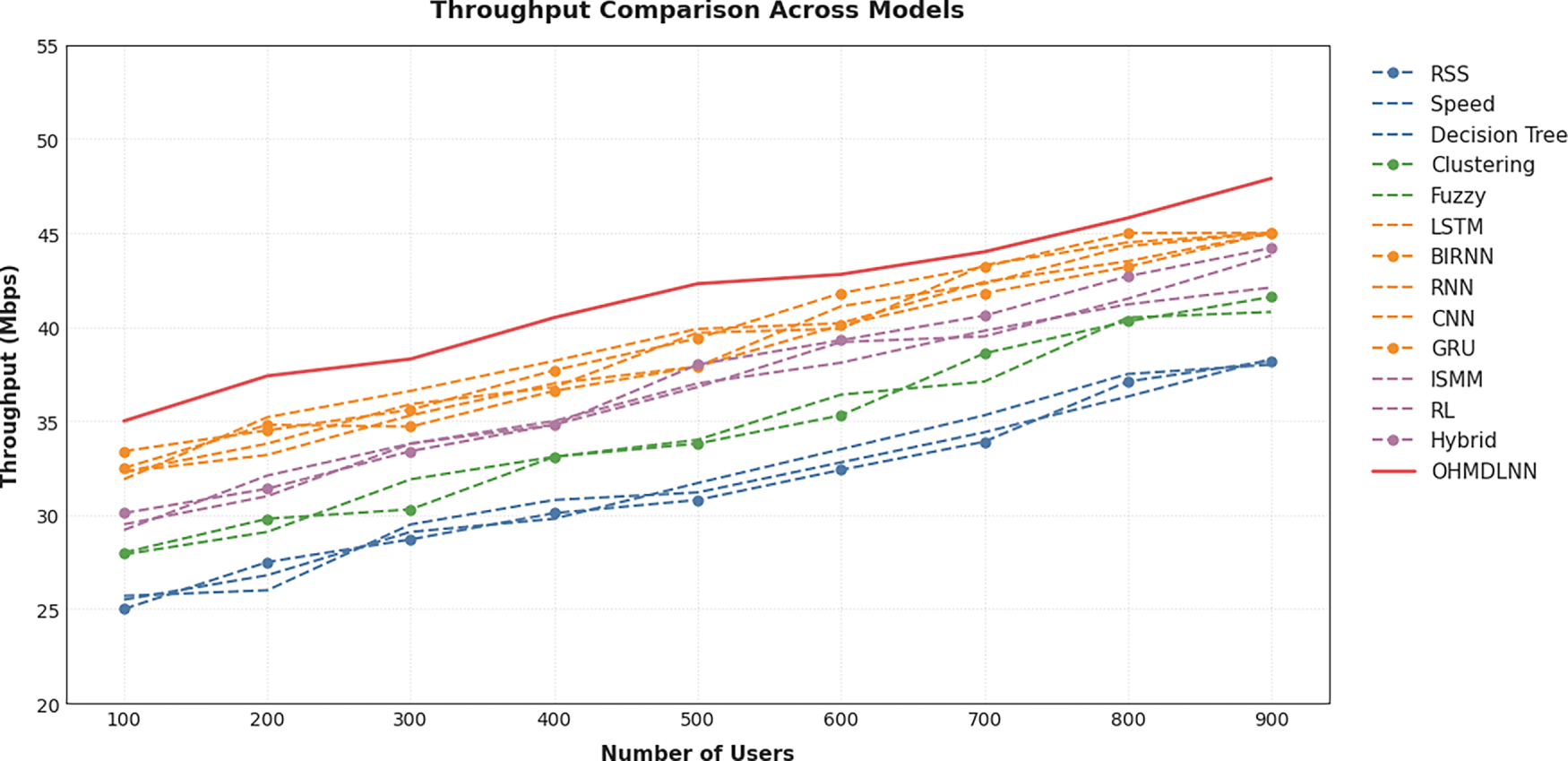

Throughput during HO is crucial for continuous service delivery and a good user experience, especially in high-mobility scenarios. A delayed or incorrect handover decision triggers packet retransmissions and, consequently, lowers throughput. The results indicate that the proposed model balances network load and maintains stability during handover, which enhances overall network performance. Table 9 presents the estimated throughput as the number of users increases and compares these results with other models, and Fig. 12 plots the throughput during HO based on Table 9. The throughput values of the compared models are as follows: RSS-based 38.2 Mbps, speed-based 38.3 Mbps, decision tree 38.0 Mbps, clustering 41.6 Mbps, fuzzy logic 40.8 Mbps, LSTM 45.1 Mbps, Bi-RNN 45.2 Mbps, RNN 45.3 Mbps, CNN 45.0 Mbps, GRU 45.1 Mbps, ISMM 42.1 Mbps, RL 43.8 Mbps, Q-ML hybrid 44.2 Mbps, and the proposed OHMDLNN model 47.9 Mbps.

Figure 12: Throughput comparison against the number of users.
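Throughput during HO can be estimated as the useful bits delivered within a handover window divided by the window length. The sketch below assumes the caller passes only unique (non-retransmitted) delivered payload sizes in bytes, so that retransmissions lower the result as described above; 1 Mbps is taken as 10^6 bit/s, and the function name is hypothetical.

```python
def ho_throughput_mbps(received_bytes, window_start_s, window_end_s):
    """Goodput over a handover window in Mbit/s.

    received_bytes: sizes of uniquely delivered packets (retransmitted copies
    excluded by the caller). 1 Mbit is taken as 10**6 bits.
    """
    duration = window_end_s - window_start_s
    if duration <= 0:
        raise ValueError("window must have a positive duration")
    return sum(received_bytes) * 8 / duration / 1e6
```

For example, 100 packets of 1,500 bytes delivered within a 30 ms window correspond to 40 Mbps.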

All reported results correspond to the best-performing configurations identified through the Bayesian optimization process. Because all models were tuned under the same budget, the consistent superiority of OHMDLNN across all evaluation metrics is attributed to the proposed architecture rather than to tuning effects. The performance of OHMDLNN was evaluated in a realistic 5G simulation environment against several baseline models, including traditional, machine learning, and neural network approaches such as CNN, RNN, LSTM, GRU, Bi-RNN, RL, and ISMM. The results consistently showed that OHMDLNN outperformed all of these models across the defined performance metrics. For instance, OHMDLNN achieved the lowest packet loss ratio across a wide range of user counts, starting at just 1.80% and remaining low (1.29%) even with up to 900 users. This result highlights its ability to maintain data integrity during handover events, which is also critical for latency-sensitive applications. In terms of HO success rate, OHMDLNN reached a peak of 90.0%, surpassing CNN (86.0%), RNN (87.0%), and LSTM (87.3%). In terms of latency, OHMDLNN had the lowest handover delay, 38 ms for 900 users, outperforming LSTM (45.2 ms) and Bi-RNN (45.3 ms). Such low latencies are especially useful for uRLLC in 5G networks.
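To put the summary figures on a common scale, the values quoted in Sections 5.1 and 5.2 and in the latency and throughput paragraphs can be converted into OHMDLNN's relative improvement over the strongest baseline for each metric. The sketch below uses only those reported numbers; the data layout and function names are illustrative.

```python
BASELINES = ["RSS-based", "speed-based", "decision tree", "clustering", "fuzzy logic",
             "LSTM", "Bi-RNN", "RNN", "CNN", "GRU", "ISMM", "RL", "Q-ML hybrid"]

# metric -> (lower_is_better, OHMDLNN value, baseline values in BASELINES order)
SUMMARY = {
    "packet_loss_pct": (True, 1.80, [2.40, 2.11, 2.13, 2.19, 2.24, 2.46, 2.41,
                                     2.50, 2.50, 2.50, 2.50, 2.50, 2.50]),
    "ho_success_pct": (False, 90.0, [82.2, 82.2, 81.6, 85.7, 84.6, 88.0, 88.0,
                                     87.6, 88.0, 88.0, 84.9, 86.8, 87.0]),
    "ho_latency_ms": (True, 38.0, [48.3, 48.4, 47.7, 46.9, 45.6, 45.2, 45.3,
                                   43.7, 46.1, 45.1, 40.6, 43.1, 43.5]),
    "throughput_mbps": (False, 47.9, [38.2, 38.3, 38.0, 41.6, 40.8, 45.1, 45.2,
                                      45.3, 45.0, 45.1, 42.1, 43.8, 44.2]),
}


def best_baseline(metric):
    """Return (name, value) of the strongest baseline; first one wins on ties."""
    lower_is_better, _, values = SUMMARY[metric]
    pick = min if lower_is_better else max
    return pick(zip(BASELINES, values), key=lambda pair: pair[1])


def improvement_over_best_pct(metric):
    """Relative improvement of OHMDLNN over the best baseline, in percent."""
    lower_is_better, ours, _ = SUMMARY[metric]
    _, ref = best_baseline(metric)
    gain = (ref - ours) if lower_is_better else (ours - ref)
    return 100.0 * gain / ref


for metric in SUMMARY:
    name, ref = best_baseline(metric)
    print(f"{metric}: {improvement_over_best_pct(metric):.2f}% better than {name} ({ref})")
```

On these values, the sketch gives about 14.7% lower packet loss than the speed-based scheme, a 2.3% higher success rate than the 88.0% shared by LSTM, Bi-RNN, CNN, and GRU, 6.4% lower latency than ISMM, and 5.7% higher throughput than RNN.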

Moreover, the OHMDLNN model maintained high throughput as the number of users increased, reflecting efficient bandwidth utilization in high-mobility scenarios. Overall, the results show that the model predicts network transitions accurately while executing handovers efficiently. This improved decision-making is enabled by combining network-side KPIs with UE KPIs, which helps mitigate handover failures and ensure uninterrupted service delivery. In addition, the model's architecture includes a time-convolving structure, dropout, and flattening layers, which enhance its responsiveness and generalization capability.
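The text names three architectural ingredients but not their sizes or settings. The following pure-Python sketch illustrates only what each layer type does to a KPI time series, assuming the "time-convolving structure" is a causal, TCN-style 1-D convolution with ReLU and that dropout is the usual inverted variant. It is not the OHMDLNN implementation, and all names and shapes are hypothetical.

```python
import random
from typing import List

Series = List[List[float]]  # [time_step][channel], e.g. one KPI per channel


def causal_conv1d(x: Series, kernels: List[Series], dilation: int = 1) -> Series:
    """Causal dilated 1-D convolution followed by ReLU.

    x has shape [T][in_ch]; kernels has shape [out_ch][k][in_ch].
    The output at step t uses only x[t], x[t - d], ..., x[t - (k-1)d];
    positions before the start are treated as zero padding.
    """
    out: Series = []
    for t in range(len(x)):
        row = []
        for kernel in kernels:
            k = len(kernel)
            acc = 0.0
            for j, weights in enumerate(kernel):
                src = t - (k - 1 - j) * dilation  # tap j = k-1 aligns with step t
                if src >= 0:
                    acc += sum(w * v for w, v in zip(weights, x[src]))
            row.append(max(acc, 0.0))  # ReLU
        out.append(row)
    return out


def dropout(h: Series, rate: float, rng: random.Random, training: bool = True) -> Series:
    """Inverted dropout: zero each activation with probability `rate`, rescale the rest."""
    if not training or rate == 0.0:
        return [row[:] for row in h]
    keep = 1.0 - rate
    return [[v / keep if rng.random() < keep else 0.0 for v in row] for row in h]


def flatten(h: Series) -> List[float]:
    """Concatenate all time steps into one feature vector for a dense classifier head."""
    return [v for row in h for v in row]
```

A dense softmax layer over the three handover classes would typically follow `flatten`; it is omitted here because the text does not describe it.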

The evolution of 5G networks presents challenges in managing HO between multiple cell sites, particularly when manual human intervention is required. In response, service providers are turning to diverse mobility management methods that incorporate intelligent decision-making without human involvement to achieve seamless connectivity and an optimised user experience. To address these challenges, we propose a handover prediction model that considers several parameters from both the network and UE sides. In the proposed approach, the test data were kept separate from the training and validation sets to avoid bias. The model's predictions were generated for each instance in the test dataset and compared with the ground-truth labels using evaluation metrics such as accuracy and precision. The evaluation reports the model's performance, including errors, areas of success, and areas of failure, and analyses misclassified examples to gain deeper insight into system behaviour. It also documents the selected evaluation measures, the data preprocessing steps, and any modifications made during the testing phase. The proposed model is particularly valuable because it achieves 92% test accuracy while supporting high performance and user satisfaction in the rapidly evolving wireless communication environment, which it does by considering multiple factors that influence the handover. In terms of handover success rate, OHMDLNN achieved a peak of 90.0%, surpassing CNN (86.0%), RNN (87.0%), and LSTM (87.3%). Regarding latency, OHMDLNN recorded the shortest handover delay, 38 ms for 900 users, outperforming LSTM (45.2 ms) and Bi-RNN (45.3 ms). Finally, the model has the potential to be extended to future generations of handover algorithms and is particularly suitable for applications such as machine-to-machine (M2M) communication, millimeter-wave (mmWave) technology, hyper-network densification, and ultra-reliable low-latency communication (uRLLC).
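The evaluation step described above (predicting on the held-out test set, scoring with accuracy and precision, and collecting misclassified examples for analysis) can be sketched as follows. This is a minimal illustration, assuming string class labels and reporting zero precision for a class that is never predicted; the function name and return layout are hypothetical.

```python
from typing import Dict, Sequence


def evaluate(y_true: Sequence[str], y_pred: Sequence[str]) -> Dict[str, object]:
    """Accuracy, per-class and macro precision, and indices of misclassified test samples."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    labels = sorted(set(y_true) | set(y_pred))
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    precision = {}
    for label in labels:
        # Actual labels of every sample the model assigned to `label`.
        predicted_as = [t for t, p in zip(y_true, y_pred) if p == label]
        precision[label] = (sum(t == label for t in predicted_as) / len(predicted_as)
                            if predicted_as else 0.0)
    misclassified = [i for i, (t, p) in enumerate(zip(y_true, y_pred)) if t != p]
    return {
        "accuracy": correct / len(y_true),
        "precision": precision,
        "macro_precision": sum(precision.values()) / len(labels),
        "misclassified": misclassified,
    }
```

The returned `misclassified` indices point back into the test set, so the corresponding KPI records can be inspected to see which handover situations the model confuses.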

Acknowledgement: We would like to take this opportunity to express our gratitude to all those who made a contribution to the completion of this article.

Funding Statement: The authors received no specific funding.

Author Contributions: Study conception and design: Muhammad Mukhtar, Farizah Yunus. Analysis and interpretation of the results: Muhammad Mukhtar, Ahmad Shukri Mohd Noor. Draft manuscript preparation: Muhammad Mukhtar, Farizah Yunus, Ahmad Shukri Mohd Noor, Muhammad Junaid, Zulfiqar Ali, Mehmood Ahmed. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: The data and materials used to support the findings of this study are available from the corresponding authors upon request.

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest.

References

1. Amirova A, Shayea I, Yedilkhan D, Aldasheva L, Zakirova A. Handover decisions for ultra-dense networks in smart cities: a survey. Technologies. 2025;13(8):313. doi:10.3390/technologies13080313.

2. Nguyen TT, Bonnet C, Harri J. SDN-based distributed mobility management for 5G networks. In: Proceedings of the 2016 IEEE Wireless Communications and Networking Conference; 2016 Apr 3–6; Doha, Qatar. doi:10.1109/WCNC.2016.7565106.

3. Mukhtar M, Yunus F, Alqahtani A, Arif M, Brezulianu A, Geman O. The challenges and compatibility of mobility management solutions for future networks. Appl Sci. 2022;12(22):11605. doi:10.3390/app122211605.

4. Papidas AG, Polyzos GC. Self-organizing networks for 5G and beyond: a view from the top. Future Internet. 2022;14(3):95. doi:10.3390/fi14030095.

5. Alraih S, Nordin R, Abu-Samah A, Shayea I, Abdullah NF. A survey on handover optimization in beyond 5G mobile networks: challenges and solutions. IEEE Access. 2023;11:59317–45. doi:10.1109/ACCESS.2023.3284905.

6. Chabira C, Shayea I, Nurzhaubayeva G, Aldasheva L, Yedilkhan D, Amanzholova S. AI-driven handover management and load balancing optimization in ultra-dense 5G/6G cellular networks. Technologies. 2025;13(7):276. doi:10.3390/technologies13070276.

7. Thantharate A, Paropkari R, Walunj V, Beard C, Kankariya P. Secure5G: a deep learning framework towards a secure network slicing in 5G and beyond. In: Proceedings of the 2020 10th Annual Computing and Communication Workshop and Conference (CCWC); 2020 Jan 6–8; Las Vegas, NV, USA. doi:10.1109/ccwc47524.2020.9031158.

8. Alraih S, Nordin R, Abu-Samah A, Shayea I, Abdullah NF, Alhammadi A. Robust handover optimization technique with fuzzy logic controller for beyond 5G mobile networks. Sensors. 2022;22(16):6199. doi:10.3390/s22166199.

9. Barzizza E, Salmaso L, Trolese F. Recent developments in machine learning techniques for handover optimization in 5G. In: Proceedings of the 6th International Conference on Statistics: Theory and Applications (ICSTA'24); 2024 Aug 19–21; Barcelona, Spain. doi:10.11159/icsta24.147.

10. Magagula LA, Chan HA, Falowo OE. Handover approaches for seamless mobility management in next generation wireless networks. Wirel Commun Mob Comput. 2012;12(16):1414–28. doi:10.1002/wcm.1074.

11. Raeisi M, Sesay AB. Handover reduction in 5G high-speed network using ML-assisted user-centric channel allocation. IEEE Access. 2023;11:84113–33. doi:10.1109/ACCESS.2023.3297982.

12. Yajnanarayana V. Proactive mobility management of UEs using sequence-to-sequence modeling. In: Proceedings of the 2022 National Conference on Communications (NCC); 2022 May 24–27; Mumbai, India. doi:10.1109/NCC55593.2022.9806726.

13. Fonseca DF, Guzman BG, Martena GL, Bian R, Haas H, Giustiniano D. Prediction-model-assisted reinforcement learning algorithm for handover decision-making in hybrid LiFi and WiFi networks. J Opt Commun Netw. 2024;16(2):159–70.

14. Jiang C, Zhu X. Reinforcement learning based capacity management in multi-layer satellite networks. IEEE Trans Wirel Commun. 2020;19(7):4685–99. doi:10.1109/TWC.2020.2986114.

15. Yadav AK, Singh K, Arshad NI, Ferrara M, Ahmadian A, Mesalam YI. MADM-based network selection and handover management in heterogeneous network: a comprehensive comparative analysis. Results Eng. 2024;21:101918. doi:10.1016/j.rineng.2024.101918.

16. Tashan W, Shayea I, Aldirmaz-Colak S, El-Saleh AA, Arslan H. Optimal handover optimization in future mobile heterogeneous network using integrated weighted and fuzzy logic models. IEEE Access. 2024;12:57082–102. doi:10.1109/ACCESS.2024.3390559.

17. Harja SL, Hendrawan. Evaluation and optimization handover parameter based X2 in LTE network. In: Proceedings of the 2017 3rd International Conference on Wireless and Telematics (ICWT); 2017 Jul 27–28; Palembang, Indonesia. doi:10.1109/icwt.2017.8284162.

18. Qureshi MM, Yunus FB, Li J, Ur-Rahman A, Mahmood T, Ali Abdelrahman Ali Y. Future prospects and challenges of on-demand mobility management solutions. IEEE Access. 2023;11:114864–79. doi:10.1109/ACCESS.2023.3324297.

19. Arzo ST, Scotece D, Bassoli R, Granelli F, Foschini L, Fitzek FHP. A new agent-based intelligent network architecture. IEEE Commun Stand Mag. 2022;6(4):74–9. doi:10.1109/MCOMSTD.0001.2100053.

20. Shrivastava VK, Sharma D, Raj R. Unified service delivery framework for 5G edge networks. In: Proceedings of the 2018 IEEE Wireless Communications and Networking Conference (WCNC); 2018 Apr 15–18; Barcelona, Spain. doi:10.1109/wcnc.2018.8377186.

21. Mangipudi PK, McNair J. SDN enabled mobility management in multi radio access technology 5G networks: a survey. arXiv:2304.03346. 2023.

22. Alhabo M, Zhang L, Nawaz N, Al-Kashoash H. Game theoretic handover optimisation for dense small cells heterogeneous networks. IET Commun. 2019;13(15):2395–402. doi:10.1049/iet-com.2019.0383.

23. Buenrostro-Mariscal R, Santana-Mancilla PC, Montesinos-López OA, Nieto Hipólito JI, Anido-Rifón LE. A review of deep learning applications for the next generation of cognitive networks. Appl Sci. 2022;12(12):6262. doi:10.3390/app12126262.

24. Ali Z, Miozzo M, Giupponi L, Dini P, Denic S, Vassaki S. Recurrent neural networks for handover management in next-generation self-organized networks. In: Proceedings of the 2020 IEEE 31st Annual International Symposium on Personal, Indoor and Mobile Radio Communications; 2020 Aug 31–Sep 3; London, UK. doi:10.1109/pimrc48278.2020.9217178.

25. Shelhamer E, Long J, Darrell T. Fully convolutional networks for semantic segmentation. IEEE Trans Pattern Anal Mach Intell. 2017;39(4):640–51. doi:10.1109/TPAMI.2016.2572683.

26. Ali Montazer G, Giveki D, Karami M, Rastegar H. Radial basis function neural networks: a review. Comput Rev J. 2018;1(1):52–74.

27. Balmuri KR, Konda S, Lai WC, Divakarachari PB, Gowda KMV, Kivudujogappa Lingappa H. A long short-term memory network-based radio resource management for 5G network. Future Internet. 2022;14(6):184. doi:10.3390/fi14060184.

28. Wang X, Wang Z, Yang K, Song Z, Bian C, Feng J, et al. A survey on deep learning for cellular traffic prediction. Intell Comput. 2024;3(2):54. doi:10.34133/icomputing.0054.

29. DeepSlice Dataset [Internet]. [cited 2019 May 25]. Available from: https://github.com/adtmv7/DeepSlice.

30. Kaur G, Goyal RK, Mehta R. An efficient handover mechanism for 5G networks using hybridization of LSTM and SVM. Multimed Tools Appl. 2022;81(26):37057–85. doi:10.1007/s11042-021-11510-x.

31. Paropkari RA. Optimization of handover, survivability, multi-connectivity and secure slicing in 5G cellular networks using matrix exponential models and machine learning [dissertation]. Kansas City, MO, USA: University of Missouri-Kansas City; 2022.

32. Rafiq MA. Handover optimization scheme for 5G heterogeneous network. Int J Adv Eng Manag Sci. 2025;11(2):38–56. doi:10.22161/ijaems.112.5.

33. Odeyomi OT, Akintade OO, Olowu TO, Zaruba G. A review of the convergence of 5G/6G architecture and deep learning. arXiv:2208.07643. 2022.

34. Nardini G, Sabella D, Stea G, Thakkar P, Virdis A. Simu5G—an OMNeT++ library for end-to-end performance evaluation of 5G networks. IEEE Access. 2020;8:181176–91. doi:10.1109/ACCESS.2020.3028550.

35. Koketsu Rodrigues T, Verma S, Kawamoto Y, Kato N, Fouda MM, Ismail M. Smart handover with predicted user behavior using convolutional neural networks for WiGig systems. IEEE Netw. 2024;38(4):190–6. doi:10.1109/MNET.2024.3353301.

Copyright © 2026 The Author(s). Published by Tech Science Press. This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
