Open Access

ARTICLE

Structured Random Cycle-Guided Algorithm (SRCA): An Adaptive Metaheuristic Combining Directionally-Guided and Stochastic Search Strategies

Department of Engineering, University of Palermo, Viale delle Scienze, Palermo, Italy

* Corresponding Author: Giuseppe Marannano. Email:

Computers, Materials & Continua 2026, 87(3), 76 https://doi.org/10.32604/cmc.2026.077884

Received 18 December 2025; Accepted 26 February 2026; Issue published 09 April 2026

Abstract

In response to the growing need for adaptive optimization algorithms capable of handling complex, multimodal, and high-dimensional search spaces, this paper introduces the Structured Random Cycle-guided Algorithm (SRCA). SRCA is not presented as a fundamentally new optimization paradigm, but rather as an architectural synthesis and a unified adaptive framework for dynamic operator selection. Within a cycle-structured architecture, directional and stochastic search behaviors are dynamically selected at the individual level. The algorithm orchestrates well-established structured movements with a diverse pool of stochastic exploration strategies, enabling a coherent and adaptive balance between exploration and exploitation throughout the optimization process. Unlike traditional metaheuristics that rely on fixed behavioral roles or static movement schemes, SRCA allows each individual to adapt its search strategy based on real-time population feedback, monitored through convergence and dispersion indicators. The performance of SRCA is quantitatively assessed under strictly identical experimental conditions on a comprehensive set of 23 benchmark functions, including multimodal and high-dimensional problems, as well as on six classical constrained engineering design problems. Numerical results demonstrate competitive convergence reliability and robustness across diverse optimization tasks, confirming the effectiveness of the proposed adaptive cycle-based framework.

In recent decades, optimization has become a central component in numerous fields, including structural engineering, artificial intelligence, and applied mathematics, serving as a foundational tool for the design and analysis of complex systems [1]. Whether the goal is to minimize energy consumption, maximize structural performance, or fine-tune parameters in machine learning models, the demand for robust and versatile optimization algorithms is increasingly critical. Although classical optimization techniques perform well in smooth, convex, and well-understood search spaces, they often struggle when applied to multimodal, non-convex, or poorly defined objective functions. To address these challenges, heuristic and metaheuristic algorithms have gained considerable attention [2]. Inspired by natural processes, biological evolution, and swarm intelligence, these approaches offer flexible frameworks for exploring high-dimensional search spaces without requiring gradient information or convexity assumptions [3]. Their stochastic nature and adaptability make them particularly effective in solving real-world optimization problems characterized by discontinuities, noise, or an undefined mathematical structure. Within heuristic optimization, numerous exploration strategies have been developed to balance the dual objectives of global search and local refinement. A common design philosophy among many nature-inspired algorithms is the incorporation of structured movement paradigms, such as linear guidance toward promising solutions, spiral navigation for controlled diversification, and stochastic sampling to ensure global search [4]. 
Each of these mechanisms fulfills a distinct functional role in the optimization process: linear movements are primarily exploitative, drawing individuals toward known optima; spiral trajectories promote controlled exploration around high-potential regions, maintaining diversity while progressively refining the search; and random sampling enables broad exploration of the solution space, improving the algorithm’s ability to escape local optima.

Several well-known metaheuristics embody these principles. For instance, the Group Search Optimizer (GSO) mimics animal group behavior by incorporating a scrounger phase, where individuals move linearly toward a leading member, termed the producer, to intensify search in promising areas [5–7]. The Whale Optimization Algorithm (WOA) [8–11] draws inspiration from the bubble-net feeding behavior of humpback whales, utilizing a spiral-shaped movement to encircle prey (solutions) with both exploitation and mild exploration properties. In contrast, simpler methods like Hill Climbing [12] rely on deterministic and greedy local improvements, which, while efficient in convex search spaces, tend to stagnate in multimodal problems due to their lack of exploratory capacity. To enhance global search capabilities, other algorithms like Cuckoo Search [4,13,14] and those employing Lévy flights introduce heavy-tailed stochastic steps, enabling long-range transitions across the search space. These mechanisms allow the algorithm to jump out of local basins of attraction and reach unexplored regions, significantly boosting exploratory power. Among swarm-based optimization methods, Particle Swarm Optimization (PSO) [15–17] and the Firefly Algorithm (FA) [18–21] have consistently shown strong performance in handling complex search spaces. PSO operates by updating each particle’s position using a blend of individual experience and global guidance, facilitating rapid convergence. However, because its update rules pull every particle toward the personal and global best positions, PSO may converge prematurely on fragmented or non-convex fitness landscapes. The Firefly Algorithm employs a light-intensity-based attraction scheme, where individuals are probabilistically drawn toward brighter (i.e., higher-quality) candidates in the population; this model balances intensification and diversification but remains sensitive to user-defined parameters that govern light decay and randomness. 
In a previous study [22], we introduced adaptive improvements to both GSO and the Firefly Algorithm to enhance their robustness in complex scenarios by promoting diversity and context-aware search. These enhancements highlighted the importance of strategic flexibility and responsiveness to the evolving population dynamics. Recent approaches, such as Hybrid Differential Evolution (DE) [23] and Adaptive PSO [24,25], combine multiple search strategies or adjust parameters based on population feedback. However, these methods often rely on tuning continuous parameters (e.g., inertia weights) or population size, rather than dynamically switching the fundamental search structure itself to match the landscape topology.

Rather than proposing a fundamentally new algorithmic paradigm, we introduce an architectural synthesis of existing concepts named the Structured Random Cycle-guided Algorithm (SRCA). SRCA acts as a unified adaptive framework for operator scheduling that modularly combines structured directionally-guided movements with a diverse pool of stochastic exploration strategies. The directionally-guided strategies are linear guidance, spiral navigation (inspired by the encircling behavior modeled in the Whale Optimization Algorithm), adaptive data-driven movement, and a directional ensemble that blends the three. These directional strategies are complemented by nine randomized exploration mechanisms: global, local, cycle-by-cycle hybrid, periodic hybrid, standard deviation-guided, memory-based, Gaussian, and Lévy flight sampling, plus adaptive strategy selection. By coherently integrating these structured and stochastic behaviors, SRCA maintains a balance between robust convergence and continuous exploration throughout the optimization process. This contrasts with many existing algorithms that are typically limited to a fixed set of movement rules. For example, the GSO statically assigns individuals to predefined behavioral roles (producer, scrounger, ranger), while the WOA relies primarily on encircling and spiral-based movements. Similarly, Cuckoo Search emphasizes long-range transitions through Lévy flights but lacks integrated mechanisms for localized refinement or structured adaptation. In contrast, SRCA enables each individual to explore the search space through a controlled application of diverse stochastic strategies, selected adaptively according to the search context and real-time population feedback. By monitoring convergence and variable dispersion, SRCA adapts its search focus, favoring exploration initially and shifting toward exploitation as convergence improves, thus maintaining a balanced search and mitigating premature convergence.

To accurately reflect the methodological positioning of this work, the main contributions are framed in terms of architectural synthesis rather than conceptual novelty: (i) the introduction of a unified adaptive framework for operator scheduling, where directional and stochastic operators are dynamically selected at the individual level; (ii) the implementation of an adaptive control mechanism based on population-level convergence and dispersion indicators; (iii) the rigorous quantitative validation of the framework’s internal architecture through a dedicated ablation study; and (iv) the demonstration of its highly competitive performance on both mathematical benchmarks and complex structural engineering problems under strictly normalized computational budgets.

From an artificial intelligence perspective, SRCA functions as an adaptive decision-making system governed by procedural rules. It is necessary to explicitly distinguish this approach from other adaptive paradigms. Unlike parameter control methods (which tune numerical coefficients like inertia weights) or operator probability adaptation (which updates roulette-wheel selection chances based on historical success), SRCA employs a direct, rule-based switching mechanism that completely alters the structural search behavior based on current population metrics. Furthermore, unlike hyper-heuristics, which often employ machine learning to generate heuristics over time, SRCA’s adaptivity relies entirely on a computationally lightweight, threshold-driven logic.

To objectively assess the performance of the proposed algorithm, SRCA is tested against state-of-the-art methods under strictly identical experimental conditions (normalized by maximum function evaluations) on a comprehensive set of multimodal and high-dimensional benchmark functions, along with a selection of six structural optimization problems. Compared to well-known metaheuristics, the algorithm proves particularly effective in maintaining consistent convergence behavior and robustness across diverse optimization tasks.

As mentioned in the Introduction, SRCA operates on a population of candidate solutions and evolves them over multiple iterations through a two-phase process: a directional movement phase and a stochastic generation phase. The directional movement is primarily designed to guide individuals toward promising areas of the search space. By default, this movement is directed toward the current best solution

At the beginning of the algorithm, a population array

where

2.2 Directionally-Guided Exploitation Strategies

All individuals except the best one are updated through a movement mechanism that draws them toward the current best solution. These directionally-guided moves are primarily responsible for exploitation, providing a more structured path toward optimality than pure random exploration. Owing to the modular architecture, new directional operators can be added to the move pool without altering the rest of the framework. Four distinct strategies have been implemented and tested. In the following formulations, the movement is described with respect to

Linear Movement. Each variable

where

Spiral Movement. Inspired by natural spiral navigation patterns [9], this strategy updates each variable by combining both radial and angular components, enabling the solution to follow a helical trajectory around a target point (see Eq. (3)).

where
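Because the equations for the linear and spiral moves are not reproduced here, the following Python sketch only illustrates the general shape of the two operators. It assumes a linear step of the form x_j + r(b_j - x_j) with r drawn from U(0, 1), and a WOA-style logarithmic spiral around the best solution b. The function names `linear_move` and `spiral_move`, the spiral constant `shape`, and both formulas are illustrative assumptions, not necessarily the paper's Eq. (3).

```python
import math
import random


def linear_move(x, best, rng):
    """Assumed linear move: each variable travels a random fraction r ~ U(0, 1)
    of the way from x toward the best solution."""
    return [xj + rng.random() * (bj - xj) for xj, bj in zip(x, best)]


def spiral_move(x, best, rng, shape=1.0):
    """Assumed WOA-style logarithmic spiral around the best solution:
    x_j' = |b_j - x_j| * exp(shape * l) * cos(2*pi*l) + b_j, with l ~ U(-1, 1)."""
    out = []
    for xj, bj in zip(x, best):
        l = rng.uniform(-1.0, 1.0)
        radius = abs(bj - xj)  # radial distance to the target
        out.append(radius * math.exp(shape * l) * math.cos(2.0 * math.pi * l) + bj)
    return out


rng = random.Random(0)
print(linear_move([4.0, -2.0], [0.0, 1.0], rng))
print(spiral_move([4.0, -2.0], [0.0, 1.0], rng))
```

The linear move always lands between x and the best solution, whereas the spiral can overshoot it by up to a factor e^shape of the current distance, which gives the operator its mild exploratory character.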

Adaptive Data-Driven Movement. This is a newly introduced strategy designed to enhance the responsiveness of the algorithm to the evolving state of the population. Instead of applying a fixed movement rule, each variable is updated through a directionally biased mechanism driven by real-time population statistics. The update formulation integrates two complementary indicators:

•

•

The procedure involves the computation of two dynamic coefficients,

where

This metric reflects the overall dispersion of the population in the decision space at iteration

where

Although

• If

• If

• If

To transition from empirical justification to a formally robust configuration, a targeted sensitivity analysis was conducted on the adjustment factors (e.g., the
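As a hedged illustration of the two population indicators that drive the adaptive movement, the sketch below computes a dispersion index (mean per-variable standard deviation, normalized by each variable's range) and a convergence index (gap between mean and best fitness, under minimization). Both definitions and the names `dispersion_index` and `convergence_index` are assumptions chosen for illustration; the paper's own coefficient formulas and thresholds may differ.

```python
import statistics


def dispersion_index(pop, lb, ub):
    """Assumed dispersion metric: mean over variables of std_j / (ub_j - lb_j).
    0 means every individual shares the same value of every variable."""
    dim = len(lb)
    total = 0.0
    for j in range(dim):
        column = [ind[j] for ind in pop]
        total += statistics.pstdev(column) / (ub[j] - lb[j])
    return total / dim


def convergence_index(fitness):
    """Assumed convergence metric (minimization): relative gap between mean and best
    fitness. Values near 0 indicate a converged population; the +1 avoids blow-ups
    when fitness values approach zero."""
    best = min(fitness)
    mean = statistics.fmean(fitness)
    return (mean - best) / (1.0 + abs(mean))


pop = [[0.0, 0.0], [2.0, 4.0]]
print(dispersion_index(pop, [0.0, 0.0], [4.0, 8.0]))
print(convergence_index([0.0, 2.0]))
```

Normalizing by the variable range makes the dispersion index comparable across variables with different bounds, which is what allows a single threshold to be applied to the whole population.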

Directional Ensemble Movement. A fourth strategy combines the strengths of the previous mechanisms in a unified ensemble update. Rather than relying on a single movement model, this approach blends the linear, spiral, and adaptive components into a single formulation:

By combining multiple directional components, the directional ensemble strategy enables a richer and more balanced navigation of the search space. This formulation mitigates the weaknesses of any single method and increases robustness across different search space topologies. The weights can either be fixed or adaptively adjusted according to real-time feedback from the search dynamics. To ensure a robust baseline performance without problem-specific tuning, a limited sensitivity analysis was conducted on a representative subset of structurally distinct functions (F1 for unimodality, F10 for multimodality, and F22 for asymmetrical local traps). The analysis evaluated variations in

While this static configuration demonstrated strong generalization capabilities and was adopted for all subsequent experiments, we explicitly acknowledge that an exhaustive, problem-specific tuning of the
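The ensemble update can be pictured as a convex combination of the positions proposed by the individual directional operators. The sketch below assumes that form; `ensemble_move` is a hypothetical helper, and the weights used in the example are arbitrary, not the tuned values adopted in the experiments.

```python
def ensemble_move(candidates, weights):
    """Blend the positions proposed by several directional operators
    (e.g. linear, spiral, adaptive) into one update via a convex combination.
    Weights are normalized so they sum to 1."""
    if len(candidates) != len(weights):
        raise ValueError("one weight per candidate is required")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    norm = [w / total for w in weights]
    dim = len(candidates[0])
    # Weighted average, variable by variable.
    return [sum(w * c[j] for w, c in zip(norm, candidates)) for j in range(dim)]


# Hypothetical proposals from the linear, spiral, and adaptive operators.
print(ensemble_move([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]], [1.0, 1.0, 2.0]))
```

Because the weights are normalized, fixed and adaptively adjusted weights can share the same update code: an adaptive scheme only needs to change the weight list between iterations.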

2.3 Stochastic Exploration Strategies

To maintain diversity and exploration, a second set of solutions is generated using random movements based on predefined or adaptive strategies. These new individuals are appended to the population P and evaluated using the objective function. The algorithm supports nine random movement strategies, selected via a control parameter. In automatic mode, the algorithm can also run fully adaptively (Strategy 9), choosing the strategy according to the convergence and diversity of the population: when the population stagnates, global exploration is favored; when it is highly dispersed, localized refinement is applied. The implemented strategies are:

1. Global Random Sampling (GRS): Uniform random sampling of each variable within the search bounds;

2. Local Random Sampling (LRS): Sampling around the best solution with shrinking amplitude;

3. Cycle-by-Cycle Hybrid: Alternating global and local at each iteration;

4. Periodic Hybrid: Periodic switching between global and local every three cycles;

5. Standard Deviation-Guided Sampling: Perturbation proportional to the inverse of variable-wise standard deviation;

6. Memory-Based Sampling: Centered around historical best values;

7. Gaussian Perturbation: Centered on the best solution with added Gaussian noise;

8. Lévy Flight: Long-tailed random steps for global jumps;

9. Adaptive Strategy Selection: Dynamic control of search behavior.

Each strategy, whose underlying mechanism is discussed in detail in Section 3, aims to address a specific trade-off between diversification and intensification, enabling a coherent balance between global exploration and local refinement.

2.4 Fitness Evaluation, Population Update, and Probabilistic Convergence

After each phase (first the directional movement, then the stochastic exploration), the algorithm merges the newly generated candidate solutions with the current population. The extended array is immediately re-sorted by fitness, and only the best N individuals are retained. This two-stage intra-generational elitism accelerates convergence by ensuring that the stochastic phase operates on the refined outcomes of the deterministic phase. Because the top-performing individuals are always retained, the sequence of best solutions is monotonically non-increasing in terms of fitness, i.e.,

Furthermore, the algorithmic architecture provides a formal probabilistic guarantee of global convergence. According to stochastic search theory, a metaheuristic converges to the global optimum in probability if two conditions are met: (1) the process is elitist, and (2) the probability of generating a solution in any subset of the search space with a positive measure (including the global optimum region) is strictly greater than zero at every iteration. In SRCA, condition (1) is satisfied by the aforementioned sorting and truncation mechanism. Condition (2) is mathematically guaranteed by the stochastic exploration phase: the continuous availability of the Global Random Sampling (Strategy 1) and the heavy-tailed Lévy flight (Strategy 8) ensures that every point in the domain S has a probability

The entire process (movement, exploration, fitness evaluation, and sorting) is repeated for a fixed number of iterations or until a convergence criterion is met (e.g., negligible improvement in fitness). Throughout the process, the best solution found so far is tracked and returned at the end of the optimization.
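A compact sketch of the two-stage elitist loop follows. It assumes minimization, uses an assumed linear move as the directional phase and global random sampling as a stand-in for the stochastic phase, and evaluates a sphere function. The names `elitist_merge`, `sphere`, and `run` are hypothetical. Because each merge keeps the N best individuals of the union, the recorded best fitness can never increase.

```python
import random


def elitist_merge(pop, fit, new_pop, new_fit, n):
    """Merge current and new candidates, sort by fitness (minimization), keep the best n."""
    merged = sorted(zip(fit + new_fit, pop + new_pop), key=lambda pair: pair[0])[:n]
    return [p for _, p in merged], [f for f, _ in merged]


def sphere(x):
    """Test objective: sum of squares, global minimum 0 at the origin."""
    return sum(v * v for v in x)


def run(iterations=50, n=10, dim=3, seed=1, lb=-5.0, ub=5.0):
    rng = random.Random(seed)
    pop = [[rng.uniform(lb, ub) for _ in range(dim)] for _ in range(n)]
    pop, fit = elitist_merge(pop, [sphere(p) for p in pop], [], [], n)  # sort initial population
    history = [fit[0]]
    for _ in range(iterations):
        best = pop[0]
        # Phase 1: move every non-best individual toward the best (assumed linear form).
        moved = [[x + rng.random() * (b - x) for x, b in zip(p, best)] for p in pop[1:]]
        pop, fit = elitist_merge(pop, fit, moved, [sphere(p) for p in moved], n)
        # Phase 2: stochastic exploration; global random sampling is used as a stand-in.
        sampled = [[rng.uniform(lb, ub) for _ in range(dim)] for _ in range(n)]
        pop, fit = elitist_merge(pop, fit, sampled, [sphere(p) for p in sampled], n)
        history.append(fit[0])
    return pop[0], history


best, history = run()
print(best, history[-1])
```

Sorting on the fitness key alone avoids comparing solution vectors when two candidates tie, and the monotone `history` is the empirical counterpart of the elitism property stated above.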

2.6 Computational Complexity and Cost

A critical aspect of any metaheuristic is its computational complexity. The time complexity of SRCA is primarily determined by the objective function evaluation, the sorting mechanism, and the update rules. Let N be the population size, D the problem dimension, and

The complexity of the initialization is

1. Deterministic Update: Requires

2. Stochastic Exploration: Generates an additional set of N candidates, requiring another

Consequently, SRCA evaluates

To synthesize the overall execution flow and facilitate full reproducibility, the step-by-step procedure of the proposed framework is detailed in Algorithm 1.
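Under a fixed MaxFEs budget, the number of affordable iterations follows directly from the per-iteration evaluation count. The sketch below assumes N evaluations for initialization, N - 1 for the directional update (the best individual is not moved), and N for the stochastic phase; each elitist merge additionally sorts at most 2N entries, which costs O(N log N) comparisons per iteration. `max_iterations` is a hypothetical helper, and this accounting is an assumption rather than the paper's exact count.

```python
def max_iterations(max_fes, n):
    """Complete iterations that fit in a MaxFEs budget under the assumed accounting:
    n evaluations at initialization, then (n - 1) + n evaluations per iteration."""
    init_cost = n
    per_iter = (n - 1) + n
    if max_fes < init_cost:
        return 0
    return (max_fes - init_cost) // per_iter


# Example: a 100,000-evaluation budget with a population of 50.
print(max_iterations(100_000, 50))  # 1009
```

Counting evaluations instead of iterations is what makes comparisons fair across algorithms whose iterations consume different numbers of objective calls.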

3 Description of the Random Strategies

This section details the nine implemented exploration strategies.

Strategy 1-Global Random Sampling (GRS). As the fundamental form of stochastic exploration, GRS generates each decision variable

where

Strategy 2-Local Random Sampling (LRS). LRS focuses on exploitation by generating solutions within a shrinking neighborhood of the current best individual. The update rule is defined as:

where

Here,
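Strategies 1 and 2 can be sketched as follows. GRS draws each variable uniformly inside its bounds; for LRS the sketch assumes a uniform neighborhood around the best solution whose radius shrinks linearly from a fraction `a0` of each variable's range to zero at the final iteration. The linear decay, `a0 = 0.1`, and the function names are illustrative assumptions.

```python
import random


def global_random_sampling(lb, ub, rng):
    """Strategy 1 (GRS): each variable drawn uniformly inside its bounds."""
    return [l + rng.random() * (u - l) for l, u in zip(lb, ub)]


def local_random_sampling(best, lb, ub, t, t_max, rng, a0=0.1):
    """Strategy 2 (LRS) sketch: uniform sampling around the best solution.
    The radius a0 * (1 - t / t_max) * range shrinks linearly (assumed schedule)."""
    shrink = a0 * (1.0 - t / t_max)
    out = []
    for b, l, u in zip(best, lb, ub):
        step = shrink * (u - l) * rng.uniform(-1.0, 1.0)
        out.append(min(max(b + step, l), u))  # keep the sample inside the bounds
    return out


rng = random.Random(3)
lb, ub = [-5.0, -5.0], [5.0, 5.0]
print(global_random_sampling(lb, ub, rng))
print(local_random_sampling([1.0, -1.0], lb, ub, t=5, t_max=10, rng=rng))
```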

Strategy 3-Cycle-by-Cycle Hybrid Sampling. This strategy alternates between global and local sampling at each iteration based on the cycle number

Strategy 4-Periodic Hybrid Sampling. Unlike the rapid switching of Strategy 3, this method introduces persistent phases of exploration or exploitation by alternating behavior every

This periodic modulation allows for more stable transitions, benefiting search in multimodal landscapes.
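The difference between the two hybrid schedules is easiest to see as a mapping from the cycle number t to a sampling mode. The sketch assumes that both schedules start in global mode and, for Strategy 4, uses the three-cycle period stated in Section 2.3; the function names are hypothetical.

```python
def cycle_by_cycle_mode(t):
    """Strategy 3: switch every iteration (assumed: even cycles global, odd cycles local)."""
    return "global" if t % 2 == 0 else "local"


def periodic_mode(t, period=3):
    """Strategy 4: keep the same mode for `period` consecutive cycles, then switch."""
    return "global" if (t // period) % 2 == 0 else "local"


print([cycle_by_cycle_mode(t) for t in range(4)])
print([periodic_mode(t) for t in range(8)])
```

With a period of 3, cycles 0 to 2 sample globally, cycles 3 to 5 locally, and so on, which produces the more persistent exploration and exploitation phases described above.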

Strategy 5-Standard Deviation-Guided Sampling. This strategy uses population statistics to modulate perturbations based on variable spread. For each variable

where
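One possible reading of Strategy 5, following the description of a perturbation proportional to the inverse of the variable-wise standard deviation, is sketched below: variables that have collapsed across the population receive the largest perturbation, which re-injects diversity where it has been lost. The Gaussian noise, the normalization of the inverse weights to a maximum of 1, `alpha`, `eps`, and the function name are all assumptions for illustration.

```python
import random
import statistics


def std_guided_sampling(pop, best, lb, ub, rng, alpha=0.05, eps=1e-12):
    """Strategy 5 sketch: Gaussian perturbation of the best solution whose per-variable
    scale is proportional to 1 / (std_j + eps), normalized so the least-spread variable
    receives the full scale alpha * (ub_j - lb_j)."""
    dim = len(best)
    sigmas = [statistics.pstdev([ind[j] for ind in pop]) for j in range(dim)]
    inv = [1.0 / (s + eps) for s in sigmas]
    max_inv = max(inv)
    out = []
    for j in range(dim):
        scale = alpha * (ub[j] - lb[j]) * inv[j] / max_inv
        out.append(min(max(best[j] + rng.gauss(0.0, scale), lb[j]), ub[j]))
    return out


rng = random.Random(5)
# Variable 0 has collapsed (zero spread); variable 1 is still spread out.
print(std_guided_sampling([[0.0, 0.0], [0.0, 4.0]], [1.0, 1.0], [-5.0, -5.0], [5.0, 5.0], rng))
```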

Strategy 6-Memory-Based Sampling. Instead of relying solely on the current best, this strategy utilizes an archive of historical elite solutions. The new solution is generated as:

where

This method exploits evolutionary memory to revisit promising regions that may not be adjacent to the current global best.
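A minimal sketch of memory-based sampling, assuming a bounded archive of the best (fitness, solution) pairs seen so far and a uniform perturbation around a randomly chosen archived elite. The archive capacity, the perturbation radius, and the names `update_archive` and `memory_based_sampling` are hypothetical.

```python
import random


def update_archive(archive, candidate, fitness, capacity=10):
    """Insert a (fitness, solution) pair and keep only the `capacity` best (minimization)."""
    archive.append((fitness, list(candidate)))
    archive.sort(key=lambda item: item[0])
    del archive[capacity:]


def memory_based_sampling(archive, lb, ub, rng, radius=0.05):
    """Strategy 6 sketch: perturb a randomly chosen archived elite instead of the current best."""
    _, anchor = rng.choice(archive)
    # Uniform perturbation of +/- radius * range around the chosen elite, clipped to bounds.
    return [min(max(a + radius * (u - l) * rng.uniform(-1.0, 1.0), l), u)
            for a, l, u in zip(anchor, lb, ub)]


rng = random.Random(7)
archive = []
update_archive(archive, [5.0], 5.0, capacity=2)
update_archive(archive, [1.0], 1.0, capacity=2)
update_archive(archive, [3.0], 3.0, capacity=2)
print(archive)
print(memory_based_sampling(archive, [0.0], [10.0], rng))
```

Because the anchor is drawn from the whole archive rather than fixed to the current best, samples can land near older elites that are not adjacent to the incumbent.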

Strategy 7-Gaussian Perturbation. This strategy performs probabilistic local search by perturbing the best solution with Gaussian noise to explore its immediate neighborhood:

where
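The Gaussian perturbation can be sketched in a few lines. The sketch assumes a noise standard deviation equal to a fixed fraction `sigma_frac` of each variable's range and clips the result to the bounds; both choices and the function name are assumptions.

```python
import random


def gaussian_perturbation(best, lb, ub, rng, sigma_frac=0.01):
    """Strategy 7 sketch: best solution plus zero-mean Gaussian noise, clipped to the bounds.
    sigma_frac (noise std as a fraction of each variable's range) is an assumed setting."""
    return [min(max(b + rng.gauss(0.0, sigma_frac * (u - l)), l), u)
            for b, l, u in zip(best, lb, ub)]


rng = random.Random(2)
print(gaussian_perturbation([1.0, 2.0], [0.0, 0.0], [4.0, 4.0], rng))
```

Unlike the bounded uniform neighborhood of LRS, Gaussian noise concentrates most samples close to the best solution while still occasionally producing larger steps.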

Strategy 8-Lévy Flight. Inspired by natural foraging behavior, Lévy flights introduce heavy-tailed random steps to enable long-distance jumps [26]. The update rule is:

where

with

This scale-free movement is particularly effective for escaping local minima in sparse search spaces.
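Lévy-distributed steps are commonly drawn with Mantegna's algorithm, step = u / |v|^(1/β) with u ~ N(0, σ_u²) and v ~ N(0, 1). The sketch below uses that technique; whether the paper uses exactly this generator is an assumption, as are the default β = 1.5, the step scaling `alpha * (ub - lb)`, and the function names.

```python
import math
import random


def mantegna_sigma(beta):
    """Standard deviation of u in Mantegna's algorithm for a Levy index beta."""
    num = math.gamma(1.0 + beta) * math.sin(math.pi * beta / 2.0)
    den = math.gamma((1.0 + beta) / 2.0) * beta * 2.0 ** ((beta - 1.0) / 2.0)
    return (num / den) ** (1.0 / beta)


def levy_step(beta, rng):
    """One heavy-tailed step: u / |v|^(1/beta)."""
    u = rng.gauss(0.0, mantegna_sigma(beta))
    v = rng.gauss(0.0, 1.0)
    return u / max(abs(v), 1e-300) ** (1.0 / beta)  # guard against v == 0


def levy_flight(x, lb, ub, rng, beta=1.5, alpha=0.01):
    """Strategy 8 sketch: move each variable by a scaled Levy step, clipped to the bounds."""
    return [min(max(xj + alpha * (u - l) * levy_step(beta, rng), l), u)
            for xj, l, u in zip(x, lb, ub)]


rng = random.Random(11)
print(mantegna_sigma(1.5))
print(levy_flight([0.0, 0.0], [-5.0, -5.0], [5.0, 5.0], rng))
```

Most steps are small, but the heavy tail occasionally yields jumps many times larger, which is the property the text relies on for escaping local minima.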

Strategy 9-Adaptive Strategy Selection. This fully adaptive mode dynamically selects the exploration strategy based on real-time population feedback, guided by convergence

• Stagnation detection: If

• Low convergence: If

• Continuous injection: Apply Strategy 8 (Lévy Flight) to a randomly selected subset of the population (e.g., 20%) at each iteration to guarantee persistent heavy-tailed diversification.

• High dispersion: If

• Default: Randomly select from

This mechanism provides context-sensitive control without the computational overhead of training a learning model. As demonstrated by the sensitivity analysis summarized in Section 2.2, the selected switching conditions represent robust operational boundaries verified to prevent premature stagnation across varied landscapes. By dynamically activating or deactivating specific search strategies based on their immediate impact on population metrics, Strategy 9 inherently performs continuous, run-time operator evaluation. While this dynamic behavior offers valuable insights into operator efficacy during the search process, it complements rather than replaces formal structural validation. Therefore, a dedicated static ablation study is explicitly provided in Section 4.3 to quantitatively assess and empirically justify the independent contribution of each algorithmic module.
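The threshold-driven logic of Strategy 9 can be sketched as a small rule table. The stall limit, the dispersion threshold, the default strategy pool, and the mapping of stagnation to GRS and of high dispersion to LRS are hypothetical choices consistent with the general description in Section 2.3. The low-convergence rule is omitted because its condition and action are not reproduced here. The continuous Lévy injection uses the 20% fraction quoted above.

```python
import random


def select_strategy(stagnant_iters, dispersion, rng, stall_limit=10,
                    high_dispersion=0.3, default_pool=(3, 4, 5, 6, 7)):
    """Return the stochastic strategy number for this iteration (hypothetical thresholds)."""
    if stagnant_iters >= stall_limit:
        return 1  # stagnation: favor global exploration (GRS)
    if dispersion > high_dispersion:
        return 2  # highly dispersed population: localized refinement (LRS)
    return rng.choice(default_pool)  # default: random pick from an assumed pool


def levy_injection_indices(n, rng, fraction=0.2):
    """Pick a uniformly random subset (about `fraction` of n) that also receives a Levy flight."""
    k = max(1, round(fraction * n))
    return sorted(rng.sample(range(n), k))


rng = random.Random(0)
print(select_strategy(stagnant_iters=12, dispersion=0.1, rng=rng))
print(levy_injection_indices(50, rng))
```

Each decision only compares a few scalars, which is why this kind of control adds negligible overhead compared with training a learning-based selector.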

4.1 Performance Evaluation on Benchmark Functions

SRCA was implemented entirely in Python 3.12. While platforms such as MATLAB are frequently used for numerical optimization due to their extensive libraries and integrated visualization tools, Python was chosen for its open-source nature, rich ecosystem, and flexibility. To ensure rigorous comparative conditions across all tested algorithms, the termination criterion was defined strictly by the maximum number of function evaluations (MaxFEs) rather than a fixed number of iterations. The MaxFEs budget was set according to the problem’s dimensionality (D): 100,000 for

To evaluate the performance and robustness of the SRCA algorithm, a comprehensive suite of benchmark functions was employed [9,27,28]. These functions (named F1-F23), widely adopted in the literature, are used to assess the global exploration capabilities, convergence speed, and resilience to local optima across various problem types, including unimodal, multimodal, separable, and non-separable search spaces. Table 2 reports the benchmark functions, including their respective domains and known global minima. For each function, a 3D plot in two variables is also included.

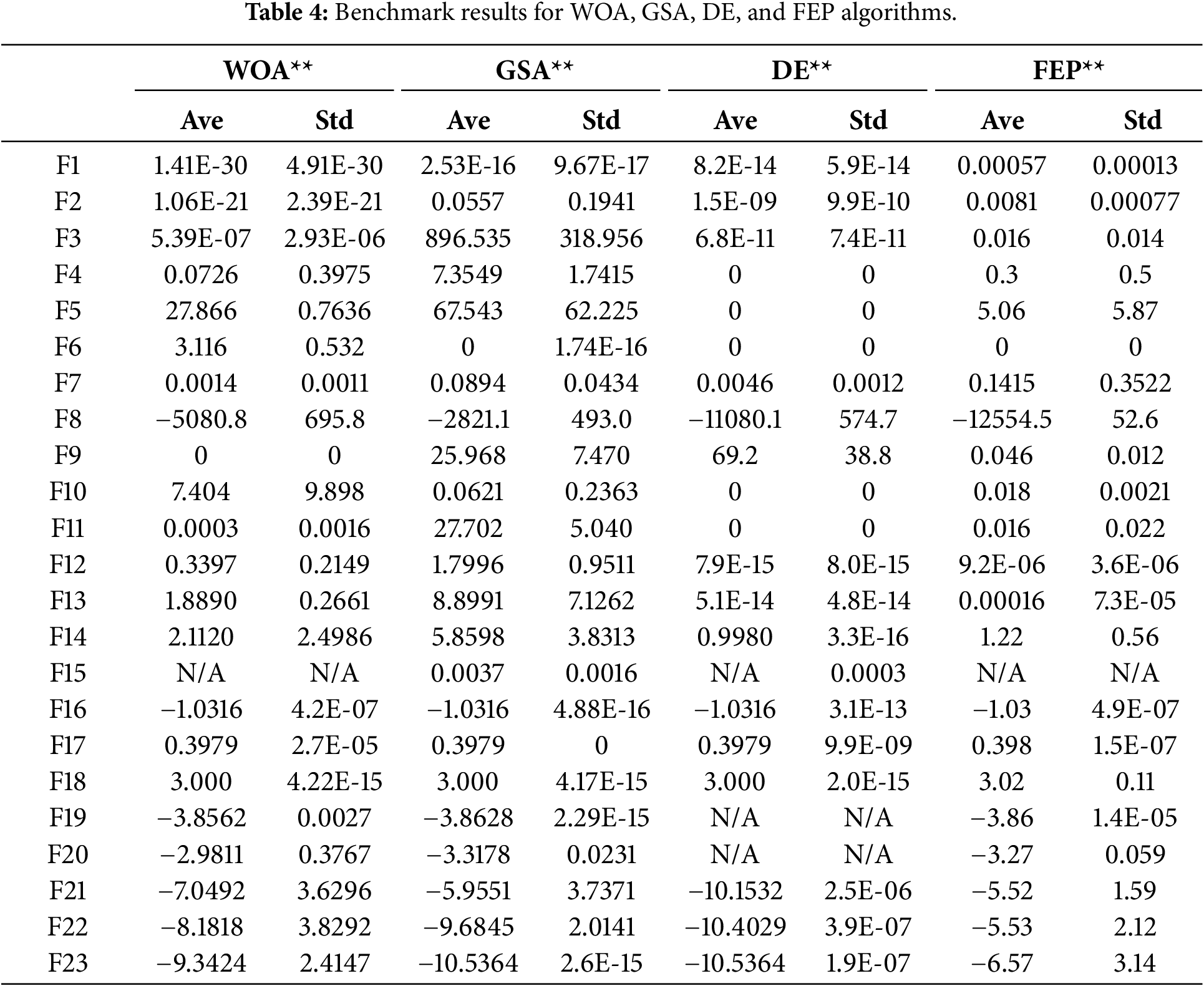

Tables 3 and 4 report the comparative performance of SRCA against well-established metaheuristic algorithms, highlighting its relative effectiveness on benchmark functions. In the tables, the symbol [*] refers to results obtained from Python implementations specifically developed as part of this study. Conversely, the symbol [**] denotes results sourced directly from established literature [9]. Relying on peer-reviewed baseline data for canonical algorithms (e.g., PSO, WOA, GSA) mitigates potential “implementation bias”, since these comparators are represented by their published results under the specified computational constraints. In contrast, the GSO and FA implementations required local execution because they incorporate specific recent extensions [22] for which comprehensive baseline data across all 23 functions were unavailable. In particular, the GSO algorithm was extended beyond its standard formulation through several methodological enhancements [22]. Four distinct exploration strategies were introduced for the rangers (global, local, cycle-by-cycle hybrid, and periodic switching), enabling a dynamic balance between exploration and exploitation. Additionally, producers execute directional searches via adaptive perturbations in spherical coordinates, with a decaying amplitude mechanism that encourages convergence while mitigating premature stagnation. A directional reset mechanism embedded within the producer simulates scouting behavior, further improving adaptability during extended optimization processes. The GSO algorithm was configured with 50 producers, each associated with 20 scroungers and 50 rangers, resulting in a structured and hierarchical search process that balances directed exploitation with distributed exploration. A customized Firefly Algorithm (FA) was also implemented in Python to reflect the exploration logic adopted in SRCA and the revised GSO. 
In addition to the canonical attractiveness-and-randomness scheme, the proposed FA integrates four interchangeable random exploration strategies controlled by the same parameters used in GSO. At each iteration, every firefly is also attracted toward the centroid of the

Analyzing the results presented in Tables 3 and 4, it can be observed that SRCA consistently approaches the global optimum across a wide variety of search spaces. It is important to objectively note that SRCA does not universally dominate the benchmarks. On certain unimodal and continuous functions (such as F1, F2, F3, and F9), highly specialized algorithms like DE and WOA exhibit superior precision, reaching extreme minima or exact zeroes. Similarly, GSO and PSO outperform SRCA on specific multimodal topologies (e.g., F7, F11, F20). However, SRCA achieves the best results among the compared methods on 14 out of the 23 functions, showcasing a highly balanced exploitation capacity and robust convergence behavior. The deterministic movement module, when combined with the stochastic cycle-based exploration and the adaptive strategy selection, creates a powerful synergy that prevents stagnation and fosters reliable general-purpose optimization.

The behavior observed on function F12, a benchmark characterized by sharp boundary effects and severe penalty terms, deserves closer analysis. The difficulty SRCA occasionally encounters here stems directly from its architecture: the heavy-tailed stochastic operators (such as the Lévy flight) frequently generate large exploratory steps, and in a landscape bounded by steep penalty walls these jumps cause frequent boundary violations. PSO limits this effect through velocity clamping, which caps the step size of each particle, whereas SRCA’s current boundary-handling mechanism simply truncates out-of-range variables at the bounds (clipping). Clipping maps every overshooting coordinate onto the same bound, which can temporarily concentrate solutions on the boundary and cause localized losses of population diversity. When SRCA is evaluated under a normalized and equitable computational budget (MaxFEs), however, its adaptive strategies are granted sufficient evaluations to recover from these boundary collisions, yielding significantly improved final results. As seen in Table 3, SRCA does not surpass the precision of GSO or PSO on this function, but adopting the proper MaxFEs budget substantially narrowed the performance gap, supporting the algorithm’s resilience on penalized spaces. Nevertheless, integrating more advanced boundary-handling or gradient-aware repair mechanisms remains a valuable future improvement for navigating sharp penalty boundaries.
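The boundary-concentration effect of clipping can be shown directly. The sketch contrasts clipping, the mechanism described for SRCA, with reflection, a common alternative repair that is not part of SRCA and is included only for comparison. Both function names are hypothetical.

```python
def clip(value, low, high):
    """Boundary handling described for SRCA: truncate to the nearest bound."""
    return min(max(value, low), high)


def reflect(value, low, high):
    """Alternative repair (not part of SRCA): mirror the overshoot back into [low, high]."""
    width = high - low
    # Fold onto a period of 2 * width, then mirror the second half back into the interval.
    offset = (value - low) % (2.0 * width)
    return low + (offset if offset <= width else 2.0 * width - offset)


overshoots = [5.5, 6.0, 9.0, 12.0]  # large jumps past the upper bound 5.0
print([clip(v, -5.0, 5.0) for v in overshoots])     # [5.0, 5.0, 5.0, 5.0]
print([reflect(v, -5.0, 5.0) for v in overshoots])  # [4.5, 4.0, 1.0, -2.0]
```

All four overshooting coordinates collapse onto the same point under clipping, whereas reflection keeps them distinct inside the domain, which illustrates why clipping can reduce diversity near steep penalty boundaries.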

Apart from this isolated case, SRCA demonstrates a competitive trade-off between convergence speed and solution quality, with low standard deviations on most functions, indicating high robustness and reliability.

4.2 Performance Evaluation on Benchmark Structural Optimization Problems

To rigorously assess the practical applicability and constraint-handling capabilities of the proposed SRCA framework, a suite of six classic structural engineering design problems was selected [29–31]. These problems are widely recognized in the literature for their highly non-convex search spaces, non-linear constraints, and mixed continuous-discrete decision variables, making them ideal stress tests for modern metaheuristics. The evaluated problems are: Tension/Compression Spring Design (F1), Pressure Vessel Design (F2), Three-Bar Truss Design (F3), Welded Beam Design (F4), Multiple Disk Clutch Brake Design (F5), and Speed Reducer Design (F6). The detailed mathematical formulations, including the objective functions, constraint inequalities, and decision variable bounds for all six optimization problems, are thoroughly documented in existing literature [32,33].

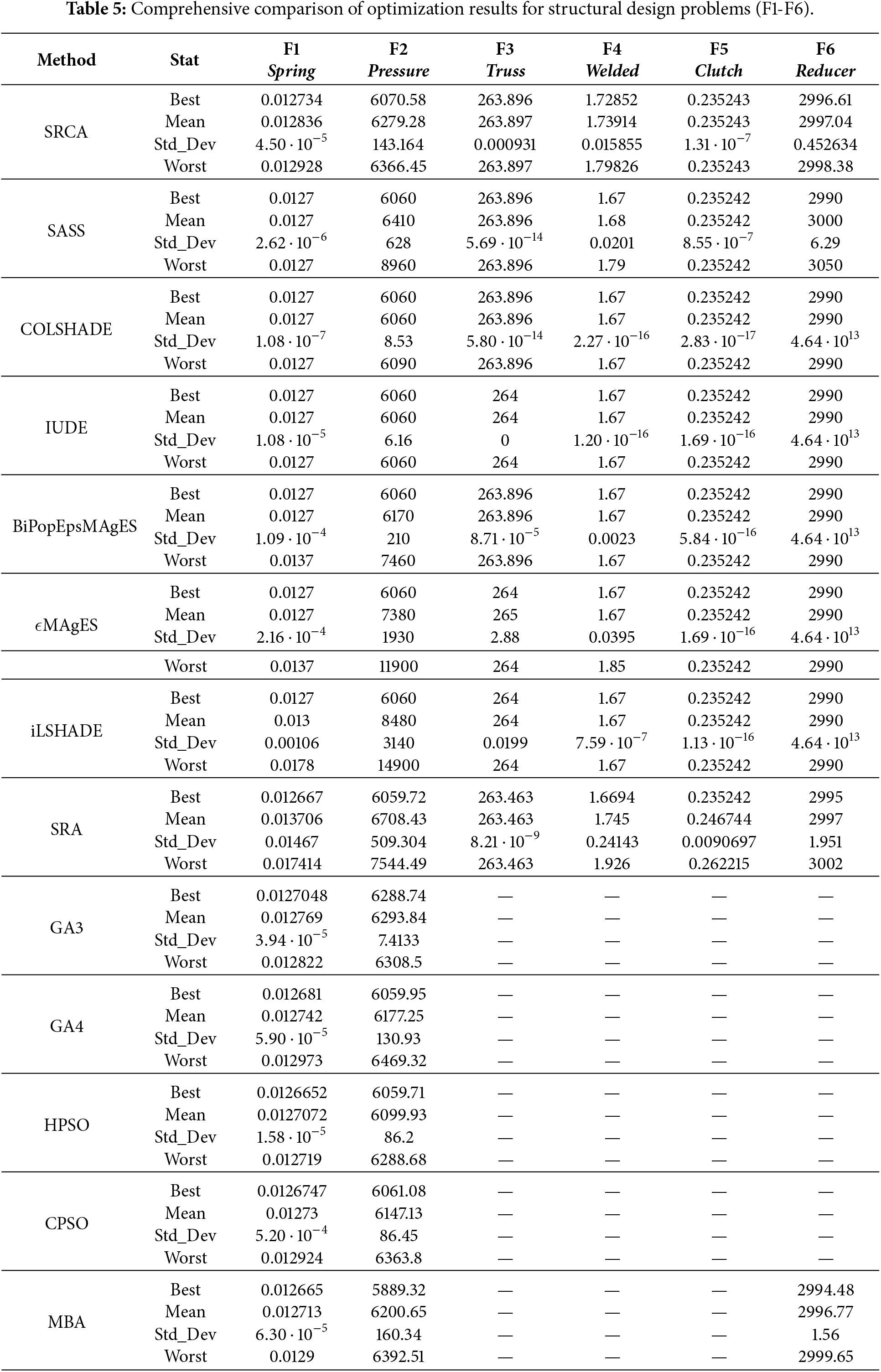

In this experimental phase, SRCA was comprehensively compared against an extensive set of advanced algorithms from the state-of-the-art, including SASS, COLSHADE, IUDE, BiPopEpsMAgES,

An analysis of the results presented in Table 5 reveals that SRCA is a reliable and competitive framework for real-world engineering design. While highly specialized algorithms occasionally report marginally lower extreme minima, SRCA distinguishes itself through operational stability and robustness across independent evaluations, which are critical features for industrial applications where reproducibility is paramount.

For the Tension/Compression Spring Design (F1), SRCA achieves a best cost of

In the Pressure Vessel Design (F2), the algorithm locates a highly competitive minimum of

The Three-Bar Truss Design (F3) highlights SRCA’s precision. The algorithm perfectly matches the optimal global value of

Regarding the Welded Beam Design (F4), SRCA records a best cost of

For the Multiple Disk Clutch Brake (F5), SRCA’s performance is virtually indistinguishable from the known global optimum. It identifies a minimum of

Finally, in the Speed Reducer Design (F6), SRCA achieves a best result of

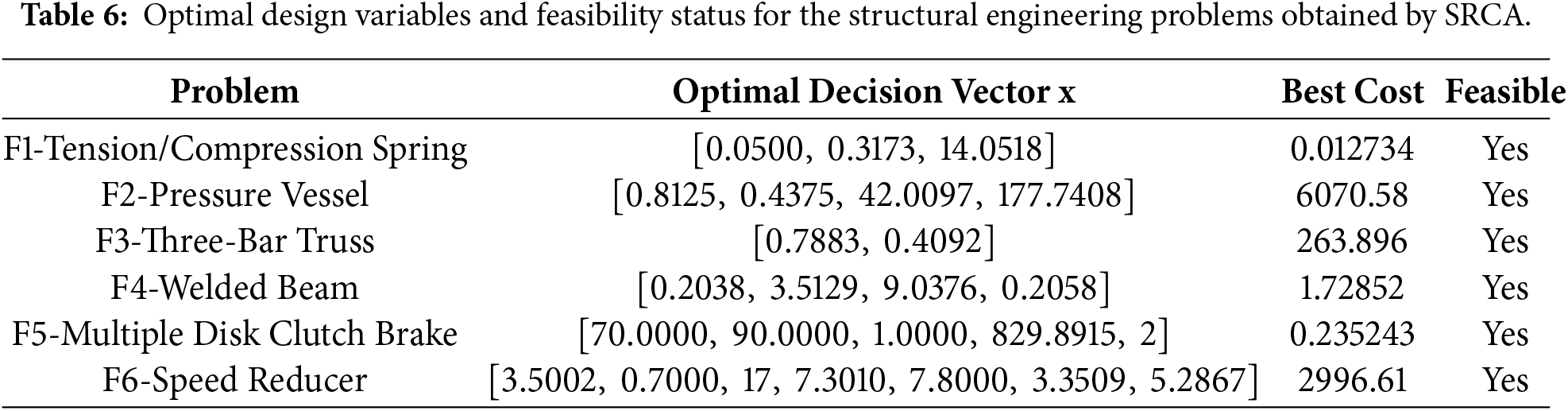

To verify the structural constraints and ensure full reproducibility, Table 6 reports the optimal decision vectors (design variables) corresponding to the best solutions achieved by SRCA for each evaluated problem. The table also reports the feasibility status, confirming that all reported optimal solutions satisfy the respective non-linear mechanical and geometric inequality constraints without violations.

In conclusion, the structural benchmark analysis confirms that the proposed integration of directional deterministic exploitation and dynamically scheduled stochastic exploration prevents premature convergence and ensures stable constraint satisfaction across varied engineering topologies.

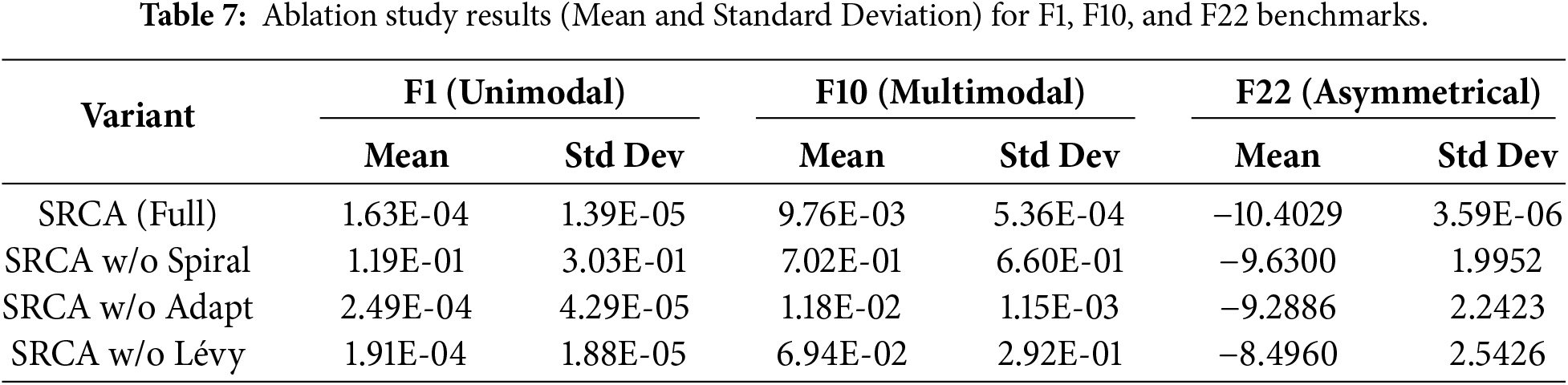

To justify the architectural complexity of SRCA and scientifically validate the inclusion of its distinct movement and exploration strategies, an ablation study was conducted. Following standard experimental practices, three representative benchmark functions were selected: F1 (unimodal, testing pure convergence speed), F10 (multimodal, testing local optima avoidance), and F22 (highly asymmetrical local traps).

The fully equipped SRCA (“Full”) was compared against three structurally disabled variants: SRCA “w/o Spiral” (disabling the spiral directional movement,

The data show that every architectural component contributes significantly to the framework’s overall robustness. Disabling the spiral movement (w/o Spiral) causes severe performance degradation and extreme variance across all landscape types, indicating its importance for localized spatial navigation. Disabling the heavy-tailed stochastic jumps (w/o Lévy) markedly impairs the algorithm’s ability to escape local minima, as evidenced by the dramatic loss of precision and stability on the multimodal F10 and F22 benchmarks. Finally, disabling the adaptive movement (w/o Adapt) leads to a consistent loss of precision and sharply increased standard deviations, particularly on asymmetrical landscapes. Overall, this ablation study indicates that the performance of SRCA relies on the synergistic integration of all its structural components.

This study introduced the Structured Random Cycle-guided Algorithm (SRCA), an architectural synthesis that acts as a unified adaptive framework for operator scheduling, combining structured directionally-guided movements with a diverse ensemble of randomized exploration strategies. By embedding these components in a cycle-based framework and enabling adaptive control based on population feedback, SRCA is designed to maintain a robust balance between global exploration and local exploitation across a wide range of optimization search spaces. Quantitative validation across 23 standard benchmark functions and six highly constrained engineering design problems was conducted under strictly identical experimental conditions (normalized by maximum function evaluations, MaxFEs) against state-of-the-art competitors, demonstrating the algorithm’s adaptability and potential for general-purpose optimization.
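The adaptive control described here, in which each individual selects its search behavior from population feedback (the abstract mentions convergence and dispersion indicators), is not given in closed form in this section. The sketch below is a hypothetical illustration of the idea only: the indicator definitions, the names `dispersion`, `relative_improvement`, `explore_probability`, and `choose_operator`, and the thresholds `d_ref`, `p_min`, and `p_max` are assumptions, not the published SRCA rules.

```python
import math
import random


def dispersion(population, bounds):
    """Hypothetical dispersion indicator: mean RMS distance to the centroid,
    normalized by the search range (0 when the population has collapsed)."""
    n, dim = len(population), len(bounds)
    centroid = [sum(ind[j] for ind in population) / n for j in range(dim)]
    spans = [hi - lo for lo, hi in bounds]
    total = 0.0
    for ind in population:
        sq = sum(((ind[j] - centroid[j]) / spans[j]) ** 2 for j in range(dim))
        total += math.sqrt(sq / dim)
    return total / n


def relative_improvement(best_history, window=10):
    """Hypothetical convergence indicator: relative drop of the best value
    (minimization) over the last `window` iterations."""
    if len(best_history) <= window:
        return math.inf  # too little history: treat the search as still improving
    old, new = best_history[-window - 1], best_history[-1]
    return (old - new) / (abs(old) + 1e-12)


def explore_probability(disp, improvement, d_ref=0.1, p_min=0.1, p_max=0.9, tol=1e-8):
    """Favor stochastic (exploratory) moves when diversity is low or progress has stalled."""
    low_diversity = max(0.0, 1.0 - disp / d_ref)  # 1 when collapsed, 0 once disp >= d_ref
    stalled = 1.0 if improvement < tol else 0.0
    return p_min + (p_max - p_min) * max(low_diversity, stalled)


def choose_operator(p_explore, rng=random):
    """Per-individual choice between a stochastic and a directional operator."""
    return "stochastic" if rng.random() < p_explore else "directional"
```

In this sketch the probability is recomputed every iteration, so a population that regains diversity or resumes improving automatically shifts back toward directional (exploitative) moves.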

On benchmark functions, SRCA achieved best-known or near-optimal solutions in 18 out of 23 cases, demonstrating consistent robustness across both unimodal and multimodal landscapes. It showed competitive performance compared to FA, PSO, WOA, and GSA, particularly in terms of average results and convergence stability. On challenging multimodal problems such as F3, F4, and F9, SRCA exhibited strong global search capabilities with low variance across runs. For high-dimensional or hybrid functions (e.g., F11, F13, F20-F23), it maintained a high level of precision and solution consistency, reflecting an effective balance between diversification and intensification achieved through its hybrid search framework.

The algorithm was also validated on six classical engineering design problems (including the Tension/Compression Spring, Speed Reducer, and Multiple Disk Clutch Brake). SRCA reached or matched the known global optima in several cases, with highly stable convergence and effective constraint satisfaction. Compared with advanced state-of-the-art metaheuristics:

• It matched or outperformed highly specialized algorithms (such as COLSHADE, IUDE, and BiPopEpsMAgES) in solution quality and precision;

• It displayed significantly greater operational robustness and lower variance (standard deviation) than several modern variants (e.g., iLSHADE, SASS), particularly in discrete or tightly constrained scenarios;

• It retained broad, general-purpose applicability without requiring problem-specific constraint-handling heuristics;

• It complemented classical algorithms like GSO and PSO by offering faster early-stage convergence and improved resilience against stagnation.
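This section notes that SRCA needs no problem-specific constraint-handling heuristics but does not say which generic scheme it uses. A static quadratic penalty is one common problem-agnostic choice; the sketch below shows it purely as an assumption, and the name `penalized` and the default weight `rho` are hypothetical.

```python
from typing import Callable, Sequence

Vector = Sequence[float]


def penalized(objective: Callable[[Vector], float],
              constraints: Sequence[Callable[[Vector], float]],
              rho: float = 1e6) -> Callable[[Vector], float]:
    """Turn min f(x) s.t. g_i(x) <= 0 into an unconstrained objective.

    Each violated constraint adds rho * g_i(x)^2; satisfied constraints add nothing.
    """
    def f(x: Vector) -> float:
        violation = sum(max(0.0, g(x)) ** 2 for g in constraints)
        return objective(x) + rho * violation
    return f


if __name__ == "__main__":
    # Toy problem: minimize x^2 subject to x >= 1, written as g(x) = 1 - x <= 0.
    f = penalized(lambda x: x[0] ** 2, [lambda x: 1.0 - x[0]])
    print(f([2.0]), f([1.0]), f([0.0]))
```

The same wrapper applies unchanged to any problem whose constraints are written as g_i(x) ≤ 0; the price is that `rho` must be large enough to dominate the objective near the feasible boundary without flattening the landscape elsewhere.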

Additionally, a dedicated ablation study validated the algorithmic architecture, showing that the combined use of spiral navigation, adaptive data-driven movements, and heavy-tailed Lévy flights is needed to prevent premature stagnation and ensure robust performance across diverse fitness landscapes.
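The spiral navigation and Lévy flights named here are not given explicit update rules in this section. Assuming SRCA uses their common forms, a logarithmic spiral as in the whale optimization algorithm [9] and Lévy steps drawn with Mantegna's algorithm [26], a minimal sketch follows; the function names, the spiral constant `b = 1`, and the stability index `beta = 1.5` are assumptions.

```python
import math
import random


def mantegna_sigma(beta: float = 1.5) -> float:
    """Scale of the numerator Gaussian in Mantegna's algorithm (denominator has unit scale)."""
    num = math.gamma(1.0 + beta) * math.sin(math.pi * beta / 2.0)
    den = math.gamma((1.0 + beta) / 2.0) * beta * 2.0 ** ((beta - 1.0) / 2.0)
    return (num / den) ** (1.0 / beta)


def levy_step(dim: int, beta: float = 1.5, rng=random) -> list:
    """Heavy-tailed step u / |v|^(1/beta), with u ~ N(0, sigma^2) and v ~ N(0, 1)."""
    sigma = mantegna_sigma(beta)
    return [rng.gauss(0.0, sigma) / abs(rng.gauss(0.0, 1.0)) ** (1.0 / beta)
            for _ in range(dim)]


def spiral_move(x: list, leader: list, b: float = 1.0, rng=random) -> list:
    """WOA-style logarithmic spiral around the leader:
    x' = |leader - x| * exp(b * l) * cos(2 * pi * l) + leader, with l ~ U(-1, 1)."""
    l = rng.uniform(-1.0, 1.0)
    factor = math.exp(b * l) * math.cos(2.0 * math.pi * l)
    return [abs(lj - xj) * factor + lj for xj, lj in zip(x, leader)]
```

In this form the spiral keeps each new position within e^b times the current distance from the leader, which suits localized navigation, whereas the Lévy step is usually scaled by a small coefficient and added to the current position, so that most moves stay local while occasional long jumps help the search leave a local basin.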

While boundary handling on landscapes with severe constraint penalties (e.g., F12) initially posed a structural challenge due to strict variable clipping, evaluation under equitable computational budgets (MaxFEs) confirmed the framework’s adaptive capacity to recover and yield highly competitive results. Nevertheless, future work will focus on integrating advanced boundary-handling and gradient-aware repair mechanisms to further streamline navigation in highly penalized regions. Furthermore, in terms of practical applicability, the adaptive nature of SRCA makes it particularly suitable for real-world engineering scenarios beyond the tested benchmarks. Its ability to dynamically balance exploration and exploitation without extensive parameter tuning suggests potential applications in the optimal sizing of mechanical components, the tuning of industrial control systems, and the design of smart structural devices, where objective functions are often non-differentiable or computationally expensive black-box models.
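The early difficulty on F12 is attributed above to strict variable clipping. The sketch below contrasts clipping with reflection, one common alternative boundary-repair rule; reflection is shown only as an example and is not claimed to be the mechanism the authors plan to adopt. With clipping, every overshooting coordinate lands exactly on a bound, so many individuals can accumulate on the boundary; reflection mirrors them back to distinct interior points.

```python
def clip_to_bounds(x, bounds):
    """Strict clipping: an out-of-range coordinate is set to the nearest bound."""
    return [min(max(xi, lo), hi) for xi, (lo, hi) in zip(x, bounds)]


def reflect_into_bounds(x, bounds):
    """Reflection: an out-of-range coordinate is mirrored back inside the range."""
    repaired = []
    for xi, (lo, hi) in zip(x, bounds):
        span = hi - lo
        t = (xi - lo) % (2.0 * span)  # position within one there-and-back period
        repaired.append(lo + (t if t <= span else 2.0 * span - t))
    return repaired


if __name__ == "__main__":
    bounds = [(0.0, 1.0)] * 3
    overshoot = [-0.25, 1.25, 3.25]
    print(clip_to_bounds(overshoot, bounds))       # each coordinate lands on a bound
    print(reflect_into_bounds(overshoot, bounds))  # coordinates are mirrored inside
```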

Overall, SRCA emerges as a flexible and effective optimizer for both continuous and discrete domains, capable of addressing complex real-world problems without the need for domain-specific heuristics. Rather than proposing a single new search operator, SRCA shows how structured cycling and adaptive operator selection can be combined into a coherent optimization scheme, offering an extensible foundation for future intelligent optimization frameworks. Further developments will focus on scaling SRCA to higher-dimensional problems and refining the adaptive controller to improve generalization.

Acknowledgement: The authors have no specific acknowledgements to declare.

Funding Statement: The authors received no specific funding for this study.

Author Contributions: The authors confirm their contribution to the paper as follows. Conceptualization, methodology design, investigation, and original draft writing: Giuseppe Marannano and Tommaso Ingrassia; software development, methodology validation, and visualization: Giuseppe Marannano and Antonino Cirello. All authors actively participated in managing and interpreting the results, manuscript revision, and technical discussions. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: The numerical data supporting the findings of this study are available from the corresponding author upon reasonable request.

Ethics Approval: Not applicable. This study does not involve human or animal subjects.

Conflicts of Interest: The authors declare no conflicts of interest.

References

1. Sarker RA, Newton CS. Optimization modelling: a practical approach. Boca Raton, FL, USA: CRC Press; 2007. doi:10.1201/9781420043112.

2. Kaveh A, Ghazaan MI. Meta-heuristic algorithms for optimal design of real-size structures. Cham, Switzerland: Springer; 2018. doi:10.1007/978-3-319-78780-0.

3. Kumar A, Nadeem M, Banka H. Nature inspired optimization algorithms: a comprehensive overview. Evol Syst. 2023;14(1):1–31. doi:10.1007/s12530-022-09432-6.

4. Yang X-S, Deb S. Engineering optimisation by cuckoo search. Int J Math Model Numer Optim. 2010;1(4):330–43. doi:10.1504/ijmmno.2010.035430.

5. He S, Wu QH, Saunders JR. A novel group search optimizer inspired by animal behavioural ecology. In: 2006 IEEE International Conference on Evolutionary Computation; 2006 Jul 16–21; Vancouver, BC, Canada. p. 1272–8. doi:10.1109/CEC.2006.1688455.

6. Li L, Liu F. Optimum design of structures with group search optimizer algorithm. In: Group search optimization for applications in structural design. Adaptation, Learning, and Optimization. Vol. 9. Berlin, Heidelberg, Germany: Springer; 2011. p. 123–64. doi:10.1007/978-3-642-20536-1_4.

7. Marannano G, Ingrassia T, Ricotta V, Nigrelli V. Numerical optimization of a composite sandwich panel with a novel bi-directional corrugated core using an animal-inspired optimization algorithm. In: Lecture notes in mechanical engineering. Cham, Switzerland: Springer; 2023. p. 565–75. doi:10.1007/978-3-031-15928-2_56.

8. Li X, Tian D. The importance of the whale optimization algorithm in addressing cloud computing issues. J Eng Appl Sci. 2025;72(1):162. doi:10.1186/s44147-025-00740-7.

9. Mirjalili S, Lewis A. The whale optimization algorithm. Adv Eng Softw. 2016;95:51–67. doi:10.1016/j.advengsoft.2016.01.008.

10. Mahmood S, Bawany NZ, Tanweer MR. A comprehensive survey of whale optimization algorithm: modifications and classification. Indones J Electr Eng Comput Sci. 2023;29(2):899–910. doi:10.11591/ijeecs.v29.i2.pp899-910.

11. Rahimnejad A, Akbari E, Mirjalili S, Gadsden SA, Trojovský P, Trojovská E. An improved hybrid whale optimization algorithm for global optimization and engineering design problems. PeerJ Comput Sci. 2023;9:e1557. doi:10.7717/peerj-cs.1557.

12. Rao SS. Engineering optimization: theory and practice. 4th ed. Hoboken, NJ, USA: Wiley; 2009. doi:10.1002/9780470549124.

13. Makhadmeh SN, Awadallah MA, Kassaymeh S, Al-Betar MA, Sanjalawe Y, Kouka S, et al. Recent advances in multi-objective cuckoo search algorithm, its variants and applications. Arch Comput Methods Eng. 2025;32(5):3213–40. doi:10.1007/s11831-025-10240-9.

14. Shlesinger MF, Zaslavsky GM, Klafter J. Strange kinetics. Nature. 1993;363(6424):31–7. doi:10.1038/363031a0.

15. Ma L, Dai C, Xue X, Peng C. A multi-objective particle swarm optimization algorithm based on decomposition and multi-selection strategy. Comput Mater Contin. 2025;82(1):997–1026. doi:10.32604/cmc.2024.057168.

16. Kennedy J, Eberhart R. Particle swarm optimization. In: Proceedings of ICNN’95 - International Conference on Neural Networks. Piscataway, NJ, USA: IEEE; 1995. Vol. 4, p. 1942–8. doi:10.1109/ICNN.1995.488968.

17. Wang D, Tan D, Liu L. Particle swarm optimization algorithm: an overview. Soft Comput. 2018;22(2):387–408. doi:10.1007/s00500-016-2474-6.

18. Yang XS. Firefly algorithm, stochastic test functions and design optimization. Int J Bio-Inspired Comput. 2010;2(2):78–84. doi:10.1504/IJBIC.2010.032124.

19. Fister I, Yang XS, Brest J. A comprehensive review of firefly algorithms. Swarm Evol Comput. 2013;13(3):34–46. doi:10.1016/j.swevo.2013.06.001.

20. Zare M, Ghasemi M, Zahedi A, Golalipour K, Mohammadi SK, Mirjalili S, et al. A global best-guided firefly algorithm for engineering problems. J Bionic Eng. 2023;20:1102–15. doi:10.1007/s42235-023-00386-2.

21. La Scalia G, Micale R, Giallanza A, Marannano G. Firefly algorithm based upon slicing structure encoding for unequal facility layout problem. Int J Ind Eng Comput. 2019;10:215–26. doi:10.5267/j.ijiec.2019.2.003.

22. Cirello A, Ingrassia T, Mancuso A, Marannano G, Mirulla AI, Ricotta V. Evaluating the efficiency of nature-inspired algorithms for finite element optimization in the ANSYS environment. Appl Sci. 2025;15(12):6750. doi:10.3390/app15126750.

23. Wei Y, Song P, Luo Q, Zhou Y. Differential evolution with improved equilibrium optimizer for combined heat and power economic dispatch problem. Comput Mater Contin. 2025;85(1):1235–65. doi:10.32604/cmc.2025.066527.

24. Zhan Z-H, Zhang J, Li Y, Chung HS-H. Adaptive particle swarm optimization. IEEE Trans Syst Man, Cybernet, Part B (Cybernetics). 2009;39(6):1362–81. doi:10.1109/TSMCB.2009.2015956.

25. Hop DC, Van Hop N, Anh TTM. Adaptive particle swarm optimization for integrated quay crane and yard truck scheduling problem. Comput Indust Eng. 2021;153:107075. doi:10.1016/j.cie.2020.107075.

26. Mantegna RN. Fast, accurate algorithm for numerical simulation of Lévy stable stochastic processes. Phys Rev E. 1994;49(5):4677–83. doi:10.1103/PhysRevE.49.4677.

27. Mohammed HM, Umar SU, Rashid TA. A systematic and meta-analysis survey of whale optimization algorithm. Comput Intell Neurosci. 2019. doi:10.1155/2019/8718571.

28. Tang J, Wang L. A whale optimization algorithm based on atom-like structure differential evolution for solving engineering design problems. Sci Rep. 2024;14(1):1730. doi:10.1038/s41598-023-51135-8.

29. Yang XS, Huyck C, Karamanoglu M, Khan N. True global optimality of the pressure vessel design problem: a benchmark for bio-inspired optimization algorithms. Int J Bio-Inspired Comput. 2013;5(6):329–35. doi:10.1504/ijbic.2013.058910.

30. Sandgren E. Nonlinear integer and discrete programming in mechanical design optimization. J Mech Des. 1990;112(2):223–9. doi:10.1115/1.2912596.

31. Cagnina LC, Esquivel SC, Coello Coello CAC. Solving engineering optimization problems with the simple constrained particle swarm optimizer. Informatica. 2008;32(3):319–26. doi:10.1080/03052150802265870.

32. Hussein NK, Qaraad M, El Najjar AM, Farag MA, Elhosseini MA, Mirjalili S, et al. Schrödinger optimizer: a quantum duality-driven metaheuristic for stochastic optimization and engineering challenges. Knowl-Based Syst. 2025;328:114273. doi:10.1016/j.knosys.2025.114273.

33. El-Shorbagy MA, Elazeem AMA. Convex combination search algorithm: a novel metaheuristic optimization algorithm for solving global optimization and engineering design problems. J Eng Res (Kuwait). 2025;13(3):2320–39. doi:10.1016/j.jer.2024.05.008.

Copyright © 2026 The Author(s). Published by Tech Science Press.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
