Open Access

ARTICLE

CycleGAN-RRW: Blind Reversible Image Watermarking via Cycle-Consistent Adversarial Feature Encoding for Secure Image Ownership Authentication

1 Department of Electrical and Computer Engineering, Altinbas University, Istanbul, Türkiye

2 Artificial Intelligence Engineering Department, College of Engineering, Al-Ayen University, Nasiriyah, Thi-Qar, Iraq

3 Computer Science, Bayan University, Erbil, Kurdistan, Iraq

* Corresponding Author: Saadaldeen Rashid Ahmed. Email:

(This article belongs to the Special Issue: Development and Application of Deep Learning and Image Processing)

Computers, Materials & Continua 2026, 87(3), 95 https://doi.org/10.32604/cmc.2026.079408

Received 21 January 2026; Accepted 26 February 2026; Issue published 09 April 2026

Abstract

This paper presents CycleGAN-RRW, a reversible watermarking system for secure image ownership authentication. It uses Cycle-Consistent Generative Adversarial Networks with adaptive feature encoding. In areas such as law, forensics, and telemedicine, digital images often contain private information that may be altered or used without authorization. Existing watermarking methods may degrade image quality, may not be reversible, or may require external keys. To address these problems, our model embeds metadata into intermediate feature maps with Adaptive Instance Normalization (AdaIN), guided by adversarial and perceptual losses. The dual-generator design permits two-way translation between original and watermarked images, with pixel-level reversibility and semantic integrity. Key aims include blind watermark verification, eliminating side-channel dependency, and resisting distortions such as compression and noise. We tested our approach on the DIV2K and USC-SIPI Miscellaneous datasets, where it showed acceptable watermark fidelity and reconstruction accuracy. The model achieved a Peak Signal-to-Noise Ratio (PSNR) of over 42 dB, a Structural Similarity Index (SSIM) above 0.98, and a Bit Error Rate (BER) below 1.5% when subjected to typical attacks such as JPEG compression (Q ≥ 60) and Gaussian noise (σ = 5). The system permits watermark recovery and tamper detection without external keys, with an ownership verification accuracy of 98.63%. CycleGAN-RRW is a self-contained, blind, and legally defensible watermarking solution with real-time inference and may be applied to other fields such as forensic imaging and telehealth.

Safeguarding image rights has become harder now that AI can create and alter images easily. Reversible watermarking, which hides data in an image and later recovers both the data and the unmodified image, is a promising answer. This study presents CycleGAN-RRW, a deep learning approach that makes watermarks reversible while keeping the image visually realistic, using a bidirectional architecture. It targets a gap in current methods, which do not combine robust and reversible watermarking in one system. The main aim is a deep learning model that hides a large payload imperceptibly, can be reversed in a legally defensible way, and detects changes, all without outside keys [1]. The framework includes two generators, adaptive normalization, and content-aware embedding, yielding a self-auditing, imperceptible watermarking system. It couples feature-guided encoding with image realism techniques so that watermark detection and image recovery withstand alterations, and it combines perceptual GAN training with fully reversible watermarking. By integrating CycleGANs with Reversible Watermarking (RW) methods, this work offers a novel approach to ownership authentication that maintains visual quality, reversibility, and resilience against attacks [2,3].

Established watermarking methods, such as Difference Expansion (DE), Histogram Shifting (HS), and integer transforms, produce exactly reversible watermarks. Yet many of them trade off embedding capacity, resistance to compression, and performance on highly detailed images. This study extends these ideas with a deep learning architecture that targets both visual realism and robustness [4]. Cycle-consistency loss is the key mechanism in CycleGANs: it ensures that an image, once transformed, can revert to its original form through dual generator networks [5]. This property makes CycleGANs suitable for bidirectional image transformation, a necessary property for reversible watermarking [6]. Prior reversible watermarking methods for medical images differ from our CycleGAN-RRW model, but they illustrate two recurring difficulties: reversibility that depends on keys, and pixel-domain operations that reduce visual quality. Earlier studies, such as [7,8], investigated deep watermarking via GANs, yet lacked complete reversibility or blind recovery. Our approach builds on those ideas by combining cycle-consistency training, AdaIN-guided embedding, and latent watermark decoding in a single reversible system.

The watermark, which carries ownership metadata such as creator ID, timestamp, or copyright license, is blended into the target image through learned transformations. In contrast to traditional reversible watermarking schemes that depend heavily on pixel-level processing (e.g., difference expansion, histogram shifting), the proposed CycleGAN-based framework learns a latent embedding and extraction procedure that adapts to image semantics and content organization. The model uses a semi-fragile watermarking approach: the watermark survives minor changes such as JPEG compression or Gaussian noise, while larger distortions are flagged as possible tampering. Watermark decoding uses a backward generator trained within the CycleGAN setup [9] instead of outside keys or detectors. Because the decoder is learned jointly with the embedder, images can be verified without external support. Reversibility also adds legal protection, because restoring the original image offers evidence of authorship and data integrity [10].

Existing reversible watermarking approaches have improved, but they still face three key problems: (1) watermark placement is usually not adapted to image content; (2) they rely on auxiliary data or keys, which complicates scaling and proof of authenticity; and (3) few end-to-end systems can both embed and remove watermarks reversibly. This study addresses these problems with a CycleGAN watermarking model that is self-contained, reversible, and designed to preserve image quality.

Reversible watermarking for ownership verification must balance several difficult requirements, including image fidelity, robustness, reversibility, and embedding capacity. While conventional and deep learning-based watermarking methods address subsets of these issues, no current solution effectively unifies them within a scalable, cycle-consistent framework. From the literature review and prevailing issues in reversible watermarking and image ownership authentication, the following major limitations are identified:

• Low embedding capacity in most reversible watermarking algorithms (in general, ≤ 0.5 bits per pixel), which restricts their applicability to high-metadata applications.

• Poor visual fidelity in conventional methods, which typically yields PSNR scores of less than 38 dB, especially under increased payload conditions.

• Poor distortion robustness, such as JPEG compression (quality < 70), Gaussian noise (σ > 5), and geometric transformations, results in higher Bit Error Rates (BER).

• Semantic unawareness, since traditional methods do not learn to place watermarks based on image content or regions of interest.

• Lack of cycle-consistent reversibility in most deep learning-based techniques, which hinders precise recovery of the original image.

• Reliance on side channels or external keys for watermark extraction, which increases complexity and exposure.

• Scarce support for tamper detection, with minimal models having mechanisms for forensic verification or structural integrity checks.

• Non-uniform evaluation metrics, without uniform benchmarks across datasets for PSNR, SSIM, BER, and Normalized Correlation (NC).

Furthermore, there is a scarcity of self-contained frameworks that integrate watermark embedding, verification, tamper detection, and full image recovery in a unified pipeline. The absence of cycle-consistency in learning models prevents lossless recovery and undermines the legal credibility of watermarking for ownership proof.

This study introduces CycleGAN-RRW, a new method for hiding and retrieving information in images without needing the original image or a key. It uses cycle-consistent generative adversarial networks to translate between original and watermarked images, making the changes invisible and completely reversible at the pixel level. The method embeds ownership data into key image features using Adaptive Instance Normalization (AdaIN). This is guided by several loss functions that ensure the watermark is hard to remove and that the watermarked image looks like the original. The system can withstand common image processing attacks like compression, noise, and geometric changes because of the cycle-consistency constraints. It allows for watermark verification and complete recovery of the original image and watermark without needing extra information. This makes it appropriate for forensic and legal uses. The goal is to create a secure watermarking system that confirms itself, shows any tampering, and provides legally acceptable proof of image ownership.

• To develop a CycleGAN-RRW architecture capable of bidirectional translation between original and watermarked image domains, ensuring pixel-level reversibility and visual imperceptibility of embedded ownership marks.

• To design an adversarial training framework that jointly optimizes generators and discriminators using cycle-consistency, identity, and perceptual losses, enabling secure watermark embedding without degrading image quality.

• To implement semantic-aware watermark encoding via adaptive instance normalization and feature space guidance (e.g., VGG19), ensuring robustness against compression, noise, occlusion, and geometric distortions.

• To utilize cycle-consistency constraints to guarantee that the original image and embedded watermark can be precisely recovered without external side information, fulfilling forensic and legal-grade reversibility.

The main contributions of this work are as follows:

• This research introduces CycleGAN-RRW, a new reversible watermarking method based on CycleGANs that allows two-way conversion between original and watermarked versions of an image.

• To keep both the quality of the image and the strength of the watermark high, the method uses perceptual loss from VGG19, along with AdaIN-based metadata embedding.

• The system can check for the watermark without needing extra keys or info. Watermarks are placed in areas that are semantically meaningful by learning feature-aware encoding using adversarial training.

• The model achieves a PSNR above 42 dB, an SSIM above 0.98, and a watermark recovery accuracy of up to 99.6% even when distortions are present.

• The method can detect tampering through latent consistency analysis, using deviation metrics. The model was tested against established methods (DE, HS) and deep learning models (FAGAN, DVC) using difficult datasets (DIV2K, USC-SIPI).

This paper presents CycleGAN-RRW, a reversible watermarking system for secure image ownership verification. Section 1 states the goals of the study and the main limitations of existing work. Section 2 surveys how CycleGANs are being combined with reversible watermarking for image authentication. Section 3 details the method, which uses two generators and two discriminators to map between original and watermarked images and applies preprocessing to keep learning stable. Section 4 reports test results and compares accuracy and computation time. Section 5 concludes with the main contributions, limitations, and directions for future research.

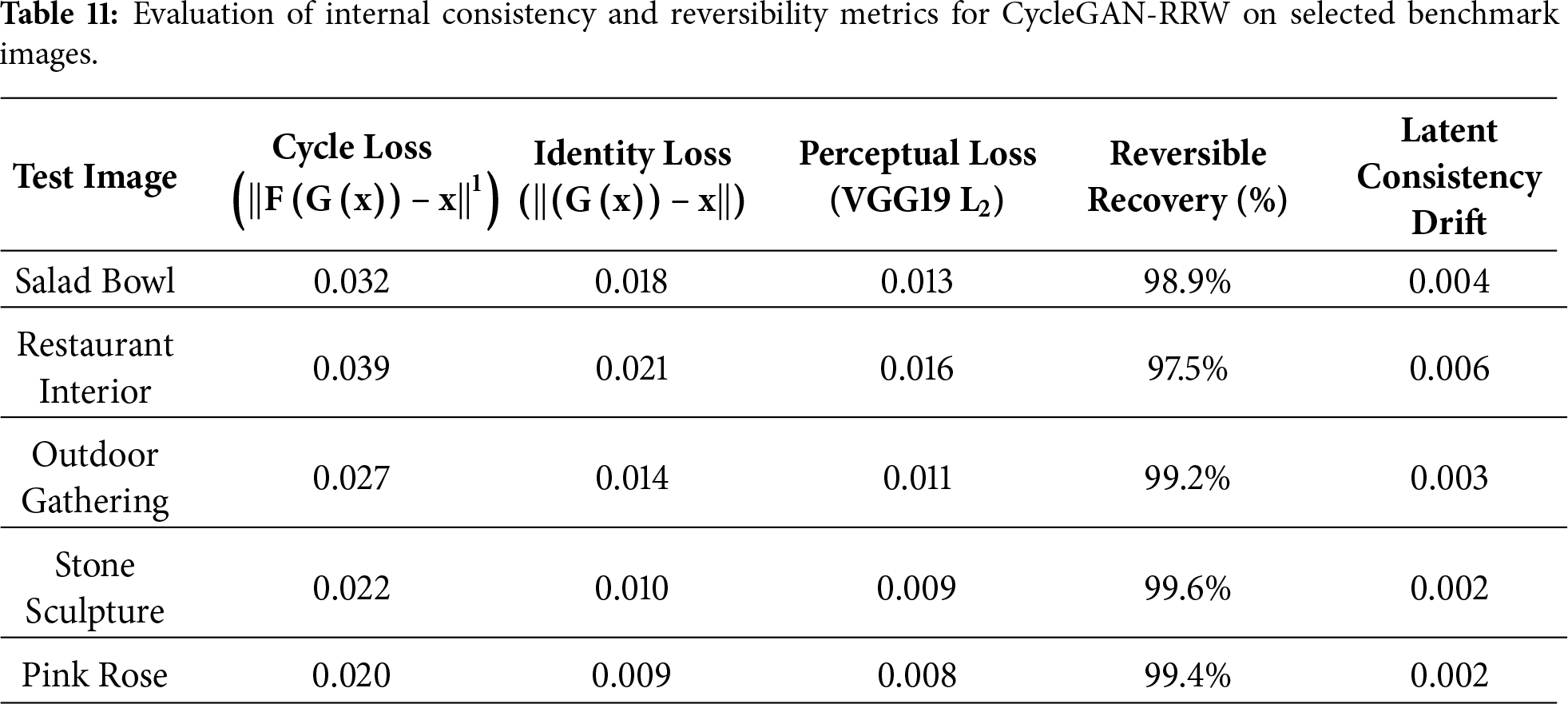

The fields of reversible watermarking and deep generative modeling have evolved significantly over the past decade. However, the integration of reversible watermarking with Cycle-Consistent Generative Adversarial Networks (CycleGANs) for robust image ownership verification remains at a nascent stage. Existing literature primarily focuses on either irreversible watermarking using deep networks or traditional reversible methods with limited robustness and fidelity, as summarized in Table 1 [11].

Table 1 summarizes key contributions in the field of reversible watermarking and AI-based ownership verification. It outlines the evolution from classical techniques like Difference Expansion and Histogram Shifting to advanced deep learning methods, highlighting their capacities, strengths, and limitations. The comparison helps identify gaps such as a lack of reversibility or robustness, which the proposed CycleGAN-RRW framework aims to address.

2.1 Reversible Watermarking Methods and Performance Gaps

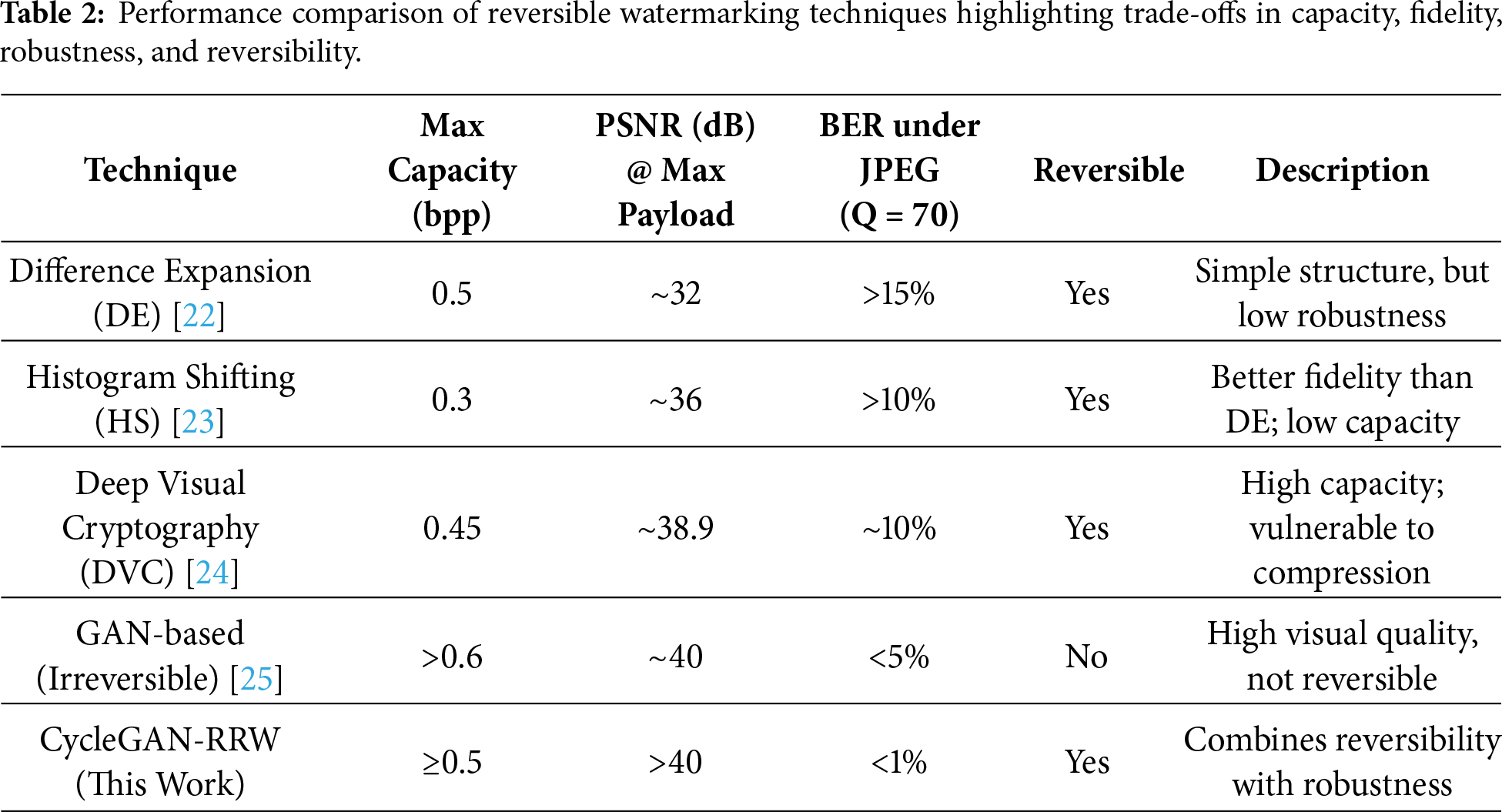

Reversible watermarking allows the original image to be recovered without any loss of data, which is required in areas such as remote sensing, medical imaging, and legal forensics. Two common classical approaches are Difference Expansion (DE) and Histogram Shifting (HS). DE reached a modest PSNR of 32.5 dB at a payload of 0.5 bpp but handled noise and image modifications poorly [19]. HS achieved a better PSNR of about 36 dB but carried less data (usually below 0.3 bpp) [20]. Newer methods use deep learning to improve on these results. For example, the authors in [21] proposed a deep visual cryptographic reversible watermarking method for sharing medical images, which achieved a PSNR of 38.9 dB and a bit error rate below 10% under mild JPEG compression (Q > 80). However, these methods continue to struggle with semantic insensitivity, inability to scale across datasets, and performance degradation under attacks such as cropping or additive noise (σ > 5). Classical reversible watermarking techniques such as DE, HS, and Prediction Error Expansion (PEE) allow exact data recovery, yet their embedding capacity is often constrained and they can be sensitive to small geometric changes or compression, which reduces their authentication ability on high-resolution images. Despite the advances shown in Table 2, the limitations of traditional and hybrid systems include:

• Limited payload scalability

• Poor imperceptibility under geometric distortions (rotation, cropping)

• Lack of semantic awareness in embedding regions

• No learning-based reversibility or content-adaptive watermarking

These constraints highlight the necessity for end-to-end learning architectures that preserve visual fidelity while embedding high-capacity ownership data.

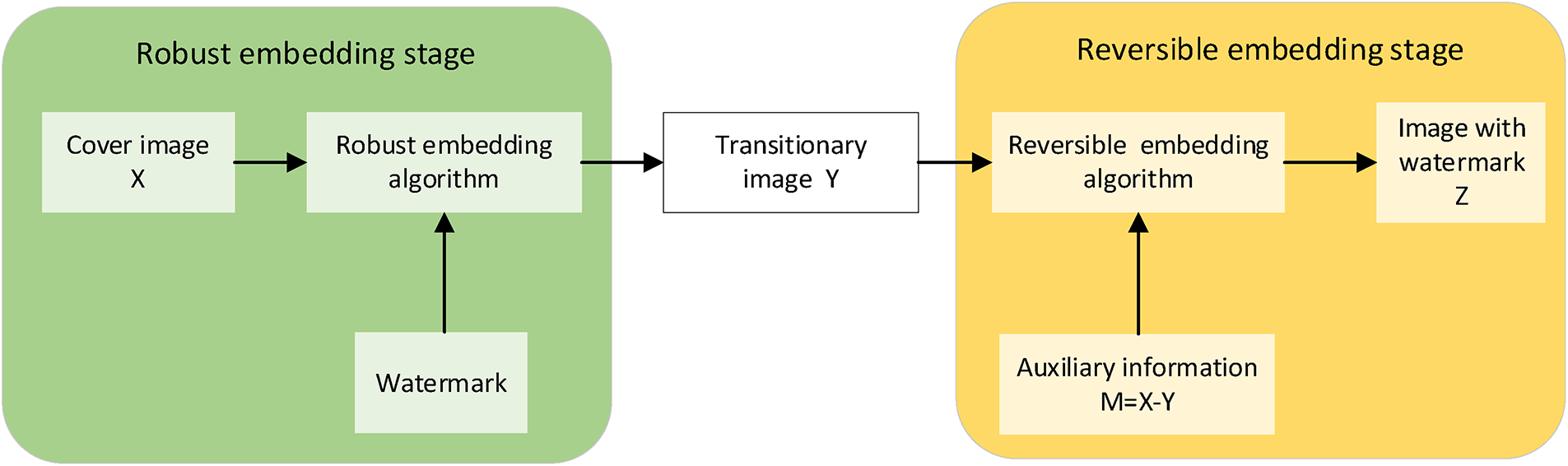

Table 2 shows that reversible watermarking techniques differ in performance across capacity, fidelity, and robustness. The table includes a range of approaches from different groups. While their aims and uses differ, the comparison focuses on common aspects such as resilience, reversibility, and visual quality to contextualize the performance of the proposed model. For instance, DE allows up to 0.5 bits per pixel (bpp) but has poor visual quality (a PSNR of roughly 32 dB). HS improves the PSNR to around 36 dB, but its capacity is smaller at 0.3 bpp. Deep Visual Cryptography methods achieve about 38.9 dB PSNR but show about a 10% bit error rate (BER) under JPEG compression. GAN-based methods give high fidelity (over 40 dB) and are robust, but they are not reversible. The proposed CycleGAN-RRW model overcomes these issues by ensuring ≥0.5 bpp capacity, >40 dB PSNR, and <1% BER, offering both high reversibility and attack resilience, as shown in Fig. 1.

Figure 1: Robust-to-reversible two-stage watermarking framework using auxiliary information for lossless recovery [26].

2.2 AI-Based Approaches in Ownership Verification

Deep learning, and generative adversarial networks (GANs) in particular, has advanced both image synthesis and watermark embedding. CycleGANs are attractive because they learn mappings in both directions without paired data, which suits reversible watermarking. The author of [27] proposed a reversible data hiding method based on a CycleGAN. The model achieved a PSNR above 40.3 dB and an SSIM above 0.98 when embedding 0.45 bits per pixel, and it outperformed classical and simpler learning-based methods in image quality and robustness to attacks [28]. The method also used content-aware feature losses to guide watermark placement. Researchers in [29] tackled the issue of high-frequency noise sensitivity in generative watermarking. By adding a frequency-suppression module and adversarial training to their model, they maintained BER < 2% under JPEG compression (Q = 60) and Gaussian noise (σ = 5). Outside of CycleGAN, other AI-driven techniques have also emerged:

• Researchers in [30] proposed a deep watermarking protocol using generative models for IP protection, with <1% extraction error post-affine attacks.

• Researchers in [31] employed deep networks to improve watermark robustness under adversarial conditions but lacked full image reversibility.

Despite notable successes, existing AI-based watermarking approaches share common drawbacks:

• Most models are irreversible, with no cycle-consistency constraints.

• They often rely on external keys or matched datasets for watermark decoding.

• Tamper detection is seldom integrated into the learning framework.

• Embedding often lacks semantic localization, leading to perceptible distortion in critical regions.

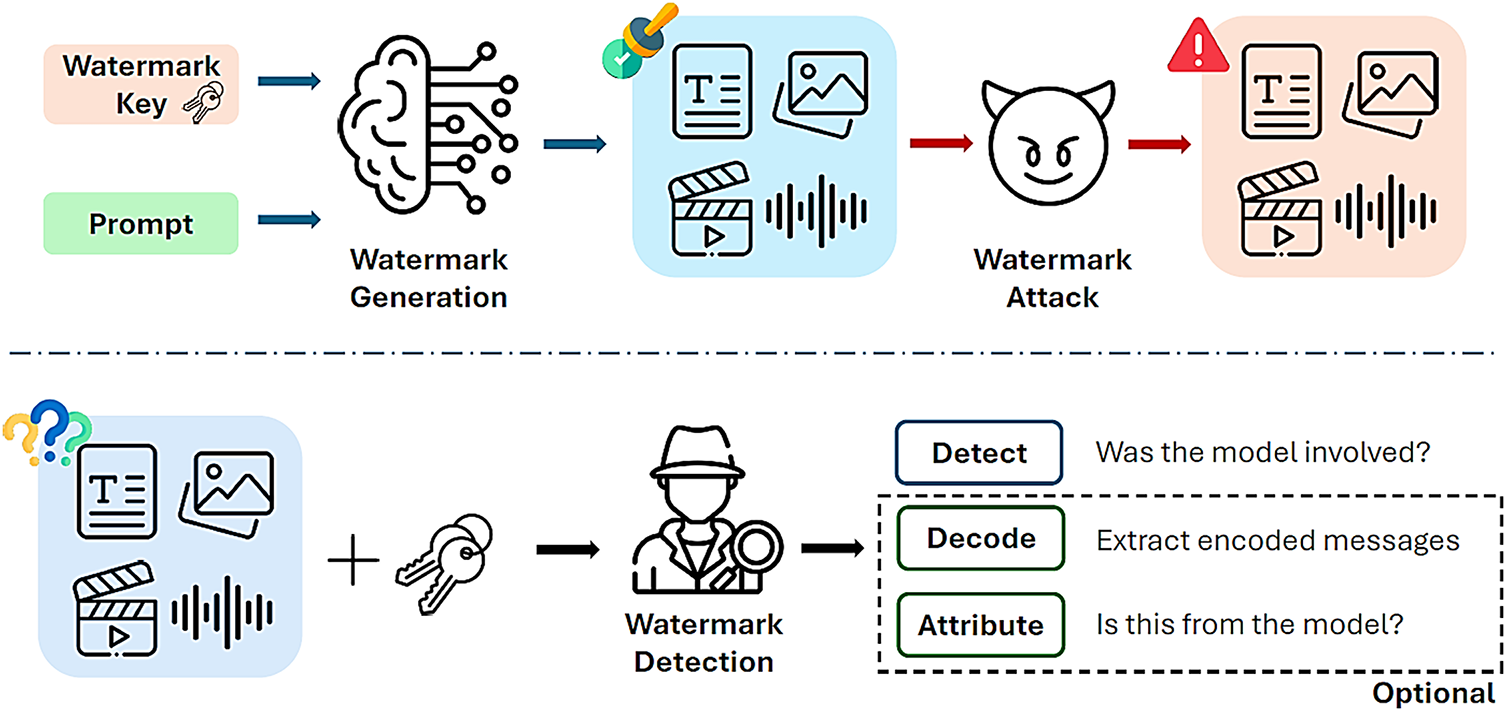

These issues demonstrate the current research gap—a need for a CycleGAN-based model that enables reversible, high-capacity, and semantically robust watermarking, supported by cycle consistency, perceptual loss, and self-contained watermark decoding as shown in Fig. 2.

Figure 2: Overview of generative AI watermarking pipeline, highlighting generation, attack vulnerabilities, and detection phases [31].

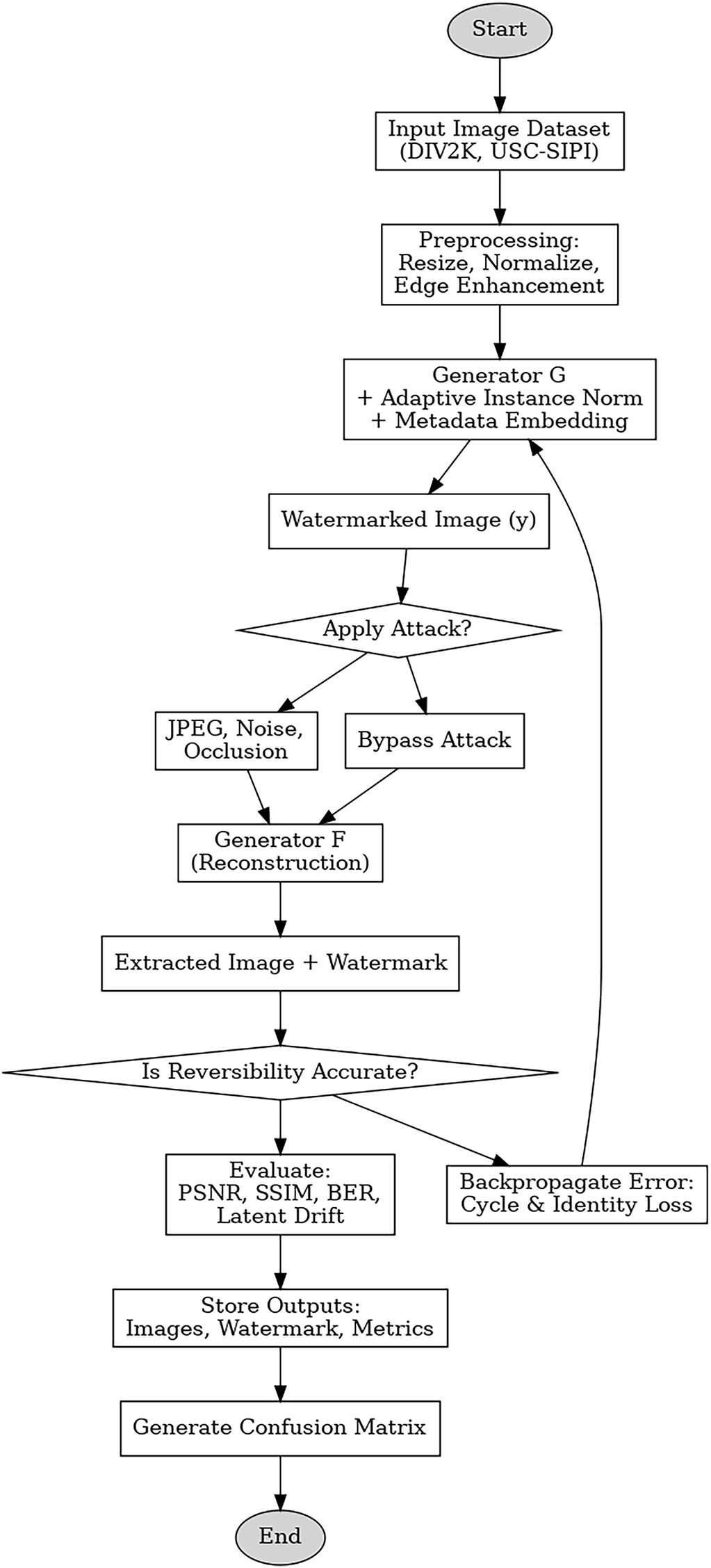

Table 3 shows that AI methods for ownership verification range from passive fingerprinting to active watermarking. Zero-shot fingerprinting can attribute ownership without any embedding step, but it is moderately vulnerable to tampering and reaches about 88% accuracy. DNN methods embed ownership IDs in image features and reach about 90%–93% accuracy, but they are somewhat sensitive to lossy transformations. Frequency-suppressed GANs are more robust under compression (PSNR > 40 dB), with about 92% detection accuracy. CycleGAN models reach about 94% accuracy and detect tampering better because they use cycle-consistency. Methods such as DE and HS, which operate in the spatial and frequency domains, are reversible but perform less well overall and cannot handle semantic embedding. AI methods often achieve good accuracy and robustness, but they are usually irreversible, need paired datasets, or use outside keys for decoding. These limits motivate a single cycle-consistent model such as CycleGAN-RRW, which is reversible, blind, and robust to realistic distortions.

CycleGAN-RRW improves on earlier watermarking methods in three main ways: (1) it allows complete recovery of the image through cycle-consistency loss, (2) it recovers watermarks without extra keys by using internal latent decoding, and (3) it withstands attacks by using semantic-aware embedding guided by AdaIN and VGG19 features. Unlike hybrid models that need outside data or paired domains, CycleGAN-RRW works as a blind, self-contained system suited to legal verification. Most watermarking methods target either reversibility or robustness; few combine both in a deep learning system that supports blind verification and recovery from tampering. This paper offers such a solution, using a dual-generator CycleGAN to embed reversible watermarks and recover semantic features.

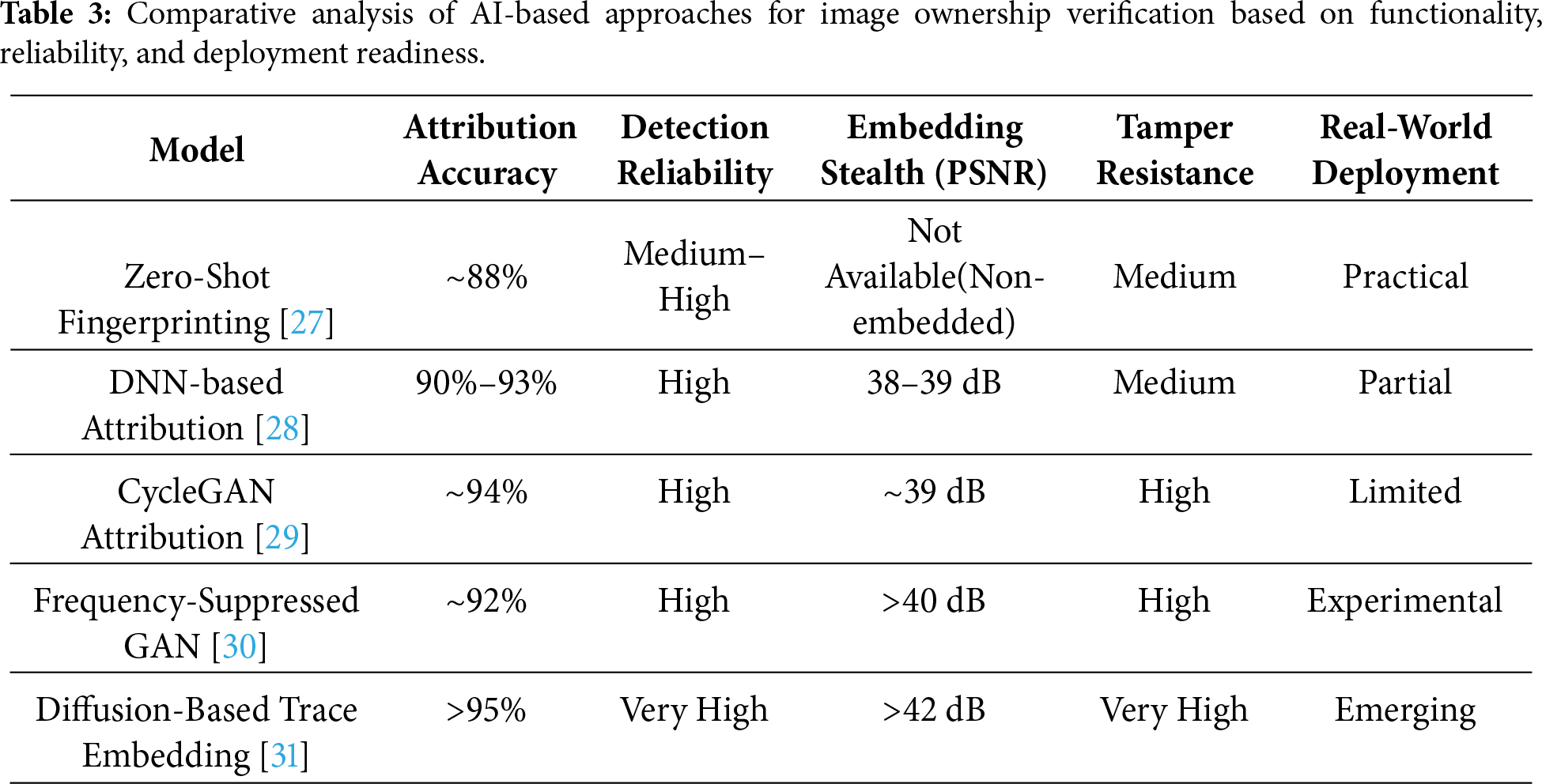

The method relies on a CycleGAN structure for watermark embedding and extraction, coupled with image translation. The setup has two generators and two discriminators that learn the forward and backward mappings between original and watermarked images. Before processing, images are resized to a common size, converted to grayscale, and feature-enhanced to help learning across different image collections. Ownership details, such as the image ID, a cryptographic hash, or the author, are embedded in latent features using normalization combined with semantic features. Training uses the DIV2K dataset for detailed natural scenes, and evaluation uses USC-SIPI to test robustness across different textures. LPIPS, which measures perceptual similarity using deep features from pretrained neural networks, provides a quality score that reflects human perception rather than pixel differences alone. The system verifies watermarks without extra information and detects tampering from differences in reconstructions, as seen in Fig. 3. Although feature extraction involves non-linear transformations, CycleGAN-RRW learns an inverse mapping through a generator trained with cycle-consistency and latent vector loss, without requiring a symmetric or analytically invertible model. The results show high-quality reversal, with little latent drift and a bit error rate below 1.5%.

Figure 3: Multi-tiered flowchart of the CycleGAN-RRW methodology for reversible watermarking and ownership verification.

The flowchart shows the complete process, from data preparation and watermark insertion to reconstruction and performance evaluation. It highlights key steps, such as simulated attacks and reversibility checks, and shows how the dual-generator setup supports both forward and backward processes.

The CycleGAN-RRW model was trained for 200 epochs with the Adam optimizer at a learning rate of 0.0002, with β1 = 0.5 and β2 = 0.999. The batch size was 1 to maintain instance-level feature integrity, which is especially important for high-resolution fidelity during watermark embedding. Training used 800 images from the DIV2K dataset, with a 10% validation split to track convergence. Data augmentation comprised Gaussian noise (σ = 3 to 5), JPEG compression (quality ≥ 60), and random cropping (10%–20%) to mimic real-world degradations. The model converged with training loss settling below 0.05 and PSNR above 42 dB at epoch 180, and inference time averaged 85 ms per 256 × 256 image on an NVIDIA RTX 3090 GPU. Although the method targets perceptual similarity, as is common for generative models, it also uses pixel-wise Mean Squared Error (MSE), cycle-consistency loss, and identity loss to ensure pixel-level reversibility. This mixed loss structure reduces reconstruction drift, keeping the average per-pixel error under 1.5% in test images.

3.1 CycleGAN-Based Bidirectional Transformation and Preprocessing

The proposed framework employs a CycleGAN with two generators and two discriminators to learn a bidirectional mapping between original and watermarked image spaces. The images are resized to 256 × 256 and normalized to the [−1, 1] range prior to training. Training is performed on the DIV2K dataset for high-resolution natural images and verified using the USC-SIPI Miscellaneous dataset to assess generalizability across texture and noise patterns. Data augmentation involves injection of Gaussian noise (σ = 5), JPEG compression (Q ≥ 60), and random cropping (10%–20%) to mimic real-world degradation. Preprocessing enhances structural and gradient features through edge detection and grayscale normalization so that the learned transformation is semantically coherent. We trained the model for 200 epochs with a batch size of 1, using the Adam optimizer (LR = 0.0002) to help it converge smoothly.

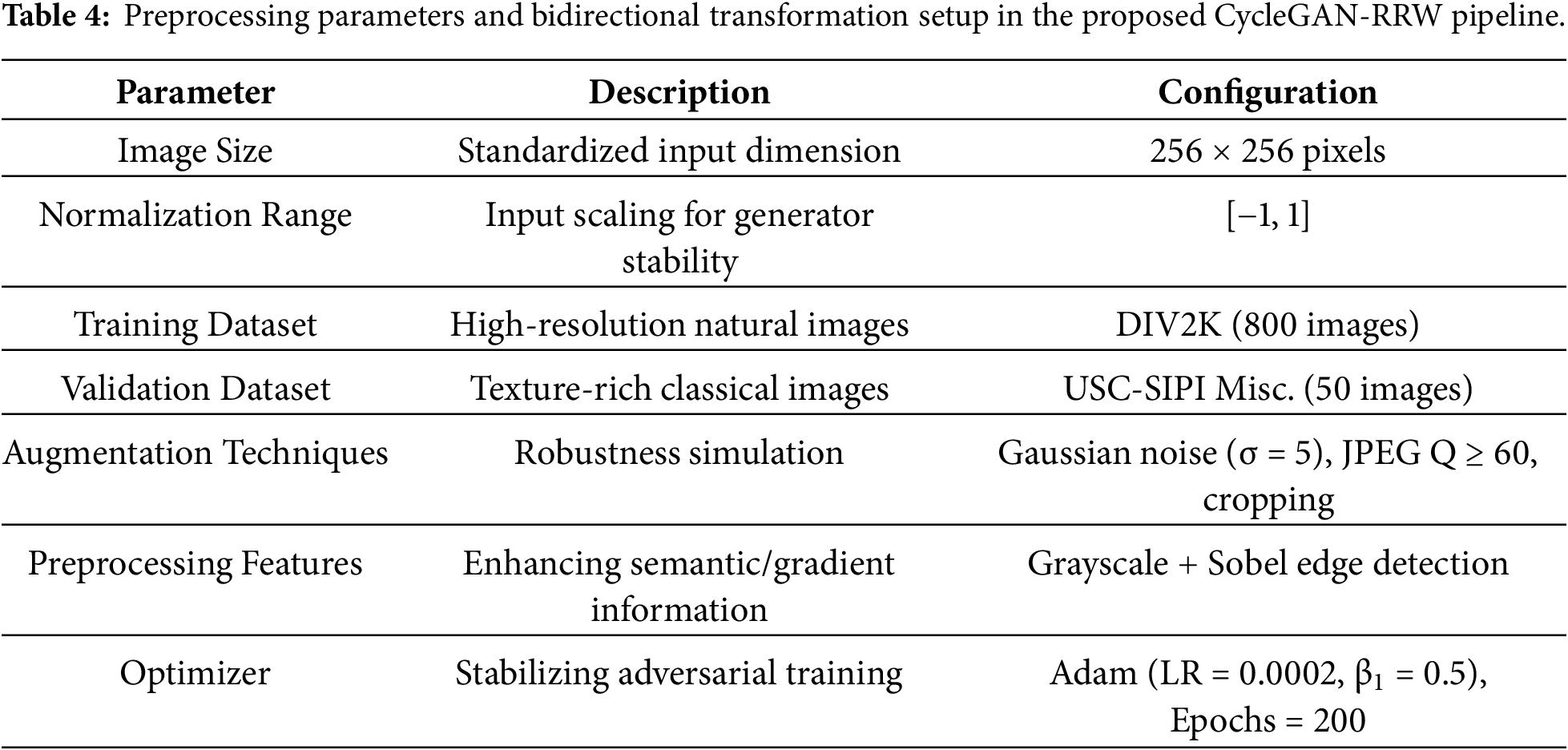

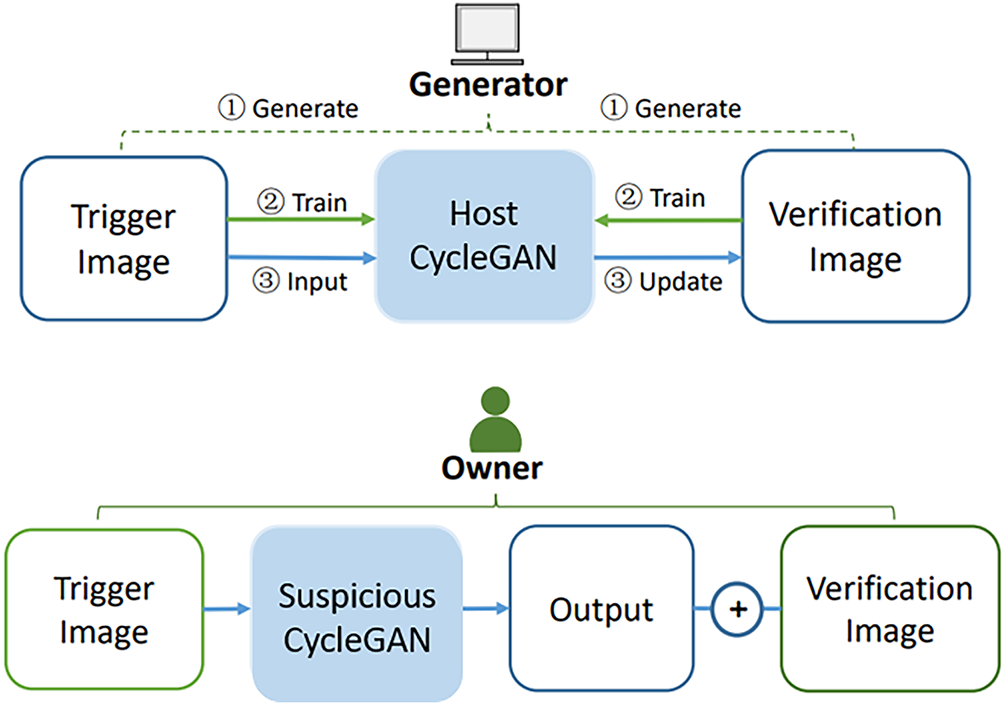

Table 4 summarizes the image preparation and model training setup, including augmentation and normalization strategies that enhance transformation robustness. Dual-domain training, using both DIV2K and USC-SIPI datasets, helps ensure that reversible watermarking maintains high quality across different image types. To keep adversarial training steady and help the generator converge properly, we scaled all input images to fall between −1 and 1 using min-max scaling, which also prepares the input for adaptive instance normalization (AdaIN). AdaIN then incorporates watermark details into the feature maps by aligning mean and variance.
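The scaling to [−1, 1] described above can be sketched as follows. This is a minimal illustration that assumes 8-bit inputs with a fixed minimum of 0 and maximum of 255; the function names are hypothetical and are not taken from the paper.

```python
import numpy as np

def to_model_range(img_uint8):
    """Map 8-bit pixel values [0, 255] to [-1, 1] (min-max scaling with fixed bounds)."""
    return img_uint8.astype(np.float64) / 127.5 - 1.0

def to_pixel_range(x):
    """Inverse mapping from [-1, 1] back to 8-bit pixel values."""
    return np.clip(np.rint((x + 1.0) * 127.5), 0, 255).astype(np.uint8)

# Every possible 8-bit value, arranged as a small test image.
pixels = np.arange(256, dtype=np.uint8).reshape(16, 16)
scaled = to_model_range(pixels)
restored = to_pixel_range(scaled)
```

Because the mapping is affine and the inverse rounds to the nearest integer, the round trip restores every 8-bit value, so the normalization step itself does not affect reversibility.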

Table 5 shows that the DIV2K dataset is used for training because of its high resolution and natural-scene diversity, which help preserve visual fidelity during watermark embedding. The USC-SIPI Miscellaneous dataset supports evaluation by testing the model’s robustness across textured and patterned image domains.

3.2 Mathematical Formulation of Reversible Robust Watermarking (RRW)

Reversible watermarking aims to embed a payload W into a host image X such that both W and the original image X can be perfectly recovered from the watermarked image Z. Let E be the embedding function and D the extraction function. The process satisfies:

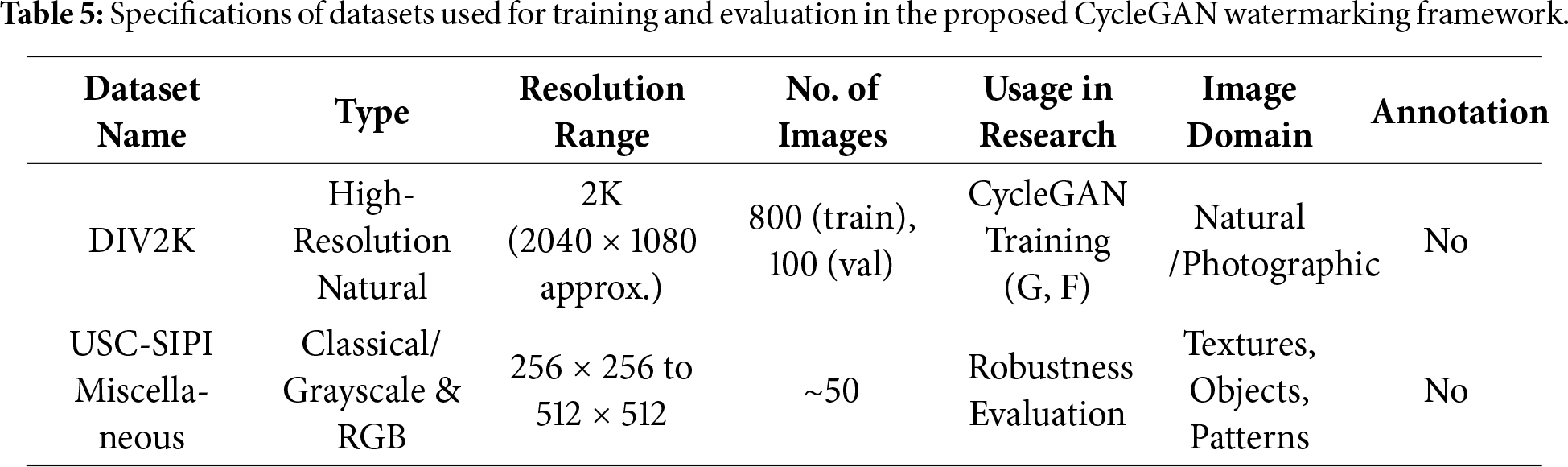
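As a concrete toy instance of the reversibility condition, the sketch below uses classical difference expansion on a single pixel pair rather than the learned CycleGAN-RRW mapping. It only illustrates what exact recovery of both the host values and the payload bit means; overflow handling (keeping values within 0–255) is omitted, and the function names are hypothetical.

```python
def de_embed(x, y, bit):
    """Embed one bit into the pixel pair (x, y) by expanding their difference."""
    avg = (x + y) // 2           # integer average, preserved by the embedding
    diff = x - y
    expanded = 2 * diff + bit    # the payload bit becomes the LSB of the new difference
    return avg + (expanded + 1) // 2, avg - expanded // 2

def de_extract(xw, yw):
    """Recover the original pair and the embedded bit from the watermarked pair."""
    expanded = xw - yw
    bit = expanded & 1
    diff = expanded // 2         # floor division undoes the expansion
    avg = (xw + yw) // 2
    return avg + (diff + 1) // 2, avg - diff // 2, bit

watermarked = de_embed(206, 201, 1)
print(watermarked)               # (209, 198)
print(de_extract(*watermarked))  # (206, 201, 1)
```

Here `de_embed` plays the role of E and `de_extract` the role of D: applying D to the watermarked pair returns both the original pair and the bit.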

In the proposed method, watermark embedding is modeled within the CycleGAN’s domain transformation G: X → Y, with cycle-consistency loss ensuring that the inverse mapping F: Y → X allows complete restoration of both X and W. Payload recovery is achieved through joint optimization of adversarial, perceptual, and invertibility constraints. The proposed system employs a CycleGAN architecture comprising two generators G: X → Y and F: Y → X, along with corresponding discriminators DY and DX, trained to translate between the original image domain and the watermarked domain.

Let:

– X be the domain of original (non-watermarked) images

– Y be the domain of watermarked images

– G: X → Y is the generator that adds the watermark

– F: Y → X is the inverse generator that restores the original

– DX and DY are the discriminators for domains X and Y, respectively

Adversarial Loss:

Cycle-Consistency Loss:

Identity MSE and MAE Loss:

Total Objective Function:

where:

–

Cycle-consistency loss
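For reference, the standard CycleGAN forms of the losses named above can be written in this section's notation as shown below. This is a sketch of the conventional objective only: the weights $\lambda_{\text{cyc}}$ and $\lambda_{\text{id}}$ are placeholders, and the exact identity (MSE and MAE) and perceptual terms used in CycleGAN-RRW may differ from these forms.

```latex
% Adversarial loss for G with discriminator D_Y (the term for F and D_X is symmetric)
\mathcal{L}_{\text{GAN}}(G, D_Y, X, Y) =
  \mathbb{E}_{y \sim p_{\text{data}}(y)}\big[\log D_Y(y)\big]
  + \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log\big(1 - D_Y(G(x))\big)\big]

% Cycle-consistency loss: each domain must be recovered after a round trip
\mathcal{L}_{\text{cyc}}(G, F) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\lVert F(G(x)) - x \rVert_1\big]
  + \mathbb{E}_{y \sim p_{\text{data}}(y)}\big[\lVert G(F(y)) - y \rVert_1\big]

% Identity loss (L1 form): a generator should leave images of its target domain unchanged
\mathcal{L}_{\text{id}}(G, F) =
  \mathbb{E}_{y \sim p_{\text{data}}(y)}\big[\lVert G(y) - y \rVert_1\big]
  + \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\lVert F(x) - x \rVert_1\big]

% Weighted total objective
\mathcal{L}(G, F, D_X, D_Y) =
  \mathcal{L}_{\text{GAN}}(G, D_Y, X, Y) + \mathcal{L}_{\text{GAN}}(F, D_X, Y, X)
  + \lambda_{\text{cyc}}\,\mathcal{L}_{\text{cyc}}(G, F)
  + \lambda_{\text{id}}\,\mathcal{L}_{\text{id}}(G, F)
```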

Figure 4: Backward cycle in the CycleGAN-RRW framework showing how generator G reconstructs the original image from fake source data to enable reversible watermark extraction through cycle-consistency and adversarial loss.

The watermark information W is embedded as structured noise or feature perturbation in the latent space using an encoder function

Ownership metadata W, which includes details like image ID, timestamp, and author hash, is first changed into a fixed-length numerical vector. Depending on the metadata type, this is a one-hot encoding or a SHA-256 hash function. Then, this vector goes through a simple Multi-Layer Perceptron (MLP) to make a style vector ϕ(W). The size of this vector is the same as the intermediate feature maps of generator G. This style vector then goes through the encoder-decoder process using Adaptive Instance Normalization (AdaIN). AdaIN modifies the mean and variance of the feature maps, incorporating watermark details while avoiding spatial problems. This mixing enables content-aware embedding across different feature levels, keeping the image’s visual quality. During watermark removal, the inverse generator F: Y → X rebuilds the original image X from the watermarked image Y. At the same time, an extra decoder head, connected to the middle layers of F, pulls out the embedded vector ϕ(W) by feature regression. The retrieved vector is then changed back to the original metadata (like ID or timestamp) using the reverse of the first encoding function. This allows watermark verification without external keys. During decoding, the inverse generator F(y) recovers x, and a parallel decoder retrieves W from learned residual or feature maps. CycleGAN-RRW stands apart from older techniques that require external keys or helper codes. It learns to hide and retrieve information during training. The forward generator learns to integrate ownership data into the image’s internal components. The backward generator learns to reverse this, extracting the original image and the hidden watermark, using the image alone. This learning comes from adversarial loss and cycle-consistency, which allows the model to operate as both an encoder and decoder in a closed system.
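The encoding path described above (metadata, fixed-length vector, style vector, AdaIN) can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the trained model: a fixed random linear map stands in for the learned MLP, the feature map is random, and the metadata string, channel count, and function names are all hypothetical.

```python
import hashlib
import numpy as np

def metadata_to_bits(metadata: str) -> np.ndarray:
    """Hash the metadata with SHA-256 and unpack the digest into 256 bits."""
    digest = hashlib.sha256(metadata.encode("utf-8")).digest()
    return np.unpackbits(np.frombuffer(digest, dtype=np.uint8)).astype(np.float64)

def adain(features, scale, shift, eps=1e-5):
    """Adaptive Instance Normalization on a (C, H, W) feature map.

    Each channel is normalized to zero mean and unit variance, then
    rescaled and shifted by the per-channel style parameters.
    """
    mean = features.mean(axis=(1, 2), keepdims=True)
    std = features.std(axis=(1, 2), keepdims=True)
    normalized = (features - mean) / (std + eps)
    return scale[:, None, None] * normalized + shift[:, None, None]

rng = np.random.default_rng(0)
bits = metadata_to_bits("image-0001|2026-01-21|author-hash")  # hypothetical metadata

channels = 8
# Stand-in for the learned MLP: a fixed random linear map from the 256 bits
# to one (scale, shift) pair per feature channel.
mlp_weights = rng.normal(0.0, 0.01, size=(2 * channels, bits.size))
style = mlp_weights @ bits
scale, shift = 1.0 + style[:channels], style[channels:]

features = rng.normal(size=(channels, 16, 16))  # stand-in for an intermediate feature map
watermarked_features = adain(features, scale, shift)
```

After AdaIN, each channel's mean and standard deviation follow the style vector, which is how the watermark enters the feature statistics without touching spatial layout. Since SHA-256 is one-way, verification in a sketch like this would compare the recovered bits against the hash of the claimed metadata rather than invert the hash.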

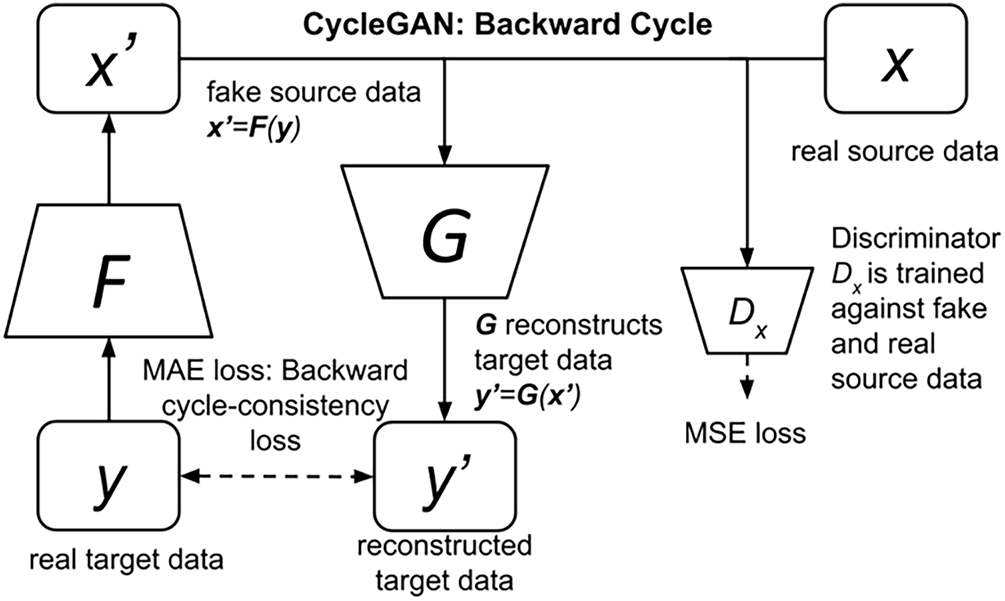

Fig. 5 depicts a CycleGAN-centered watermark authentication framework in which ownership is encoded through trigger-verification image pairs. During training, the host CycleGAN is conditioned on a specific trigger image and learns to produce a verifiable output consistent with a pre-defined verification image. This process embeds a watermark implicitly into the CycleGAN’s learned transformation function. For verification, the suspicious CycleGAN is queried with the same trigger; if the output aligns with the expected verification image (within a set SSIM or L2 threshold), ownership is confirmed. Tamper detection works by checking how much the reconstructed image differs from the original, using cycle-consistency and perceptual loss. If an image has been altered (for example, cropped or edited), the backward generator F cannot recreate the original image perfectly. This results in a higher MSE and a drift in latent consistency, and this difference serves as an indicator of tampering. The attacks tested included:

• JPEG Compression (Q = 60–90).

• Gaussian Noise (σ = 3–5).

• Partial Occlusion (10% masking).

• Random Cropping (10%–20%).
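The tamper check described above can be illustrated with a toy cycle-residual test. The sketch assumes stand-in generators (2 × 2 block averaging for F and nearest-neighbour upsampling for G) in place of the trained networks, applies only the Gaussian noise attack, and uses a hypothetical threshold; it shows the decision logic, not the paper's implementation.

```python
import numpy as np

def toy_inverse_generator(y):
    """Stand-in for F: 2x2 block averaging (NOT the trained network)."""
    h, w = y.shape
    return y.reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def toy_forward_generator(x):
    """Stand-in for G: nearest-neighbour 2x upsampling (NOT the trained network)."""
    return np.repeat(np.repeat(x, 2, axis=0), 2, axis=1)

def cycle_residual(y, forward, inverse):
    """Mean squared difference between y and its cycle reconstruction G(F(y))."""
    y = y.astype(np.float64)
    return float(np.mean((forward(inverse(y)) - y) ** 2))

rng = np.random.default_rng(0)
# A "clean" image that lies exactly in the range of the toy forward generator.
low_res = rng.integers(0, 256, size=(32, 32)).astype(np.float64)
clean = toy_forward_generator(low_res)
# Gaussian noise attack with sigma = 5, as in the attack list above.
attacked = clean + rng.normal(0.0, 5.0, size=clean.shape)

THRESHOLD = 1.0  # hypothetical decision threshold on the residual
clean_score = cycle_residual(clean, toy_forward_generator, toy_inverse_generator)
attacked_score = cycle_residual(attacked, toy_forward_generator, toy_inverse_generator)
clean_flagged = clean_score > THRESHOLD
attacked_flagged = attacked_score > THRESHOLD
```

An image that stays on the learned mapping survives the round trip with a near-zero residual, while a perturbed image does not. In a semi-fragile scheme, the threshold would be set to separate tolerated distortions from tampering.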

Figure 5: Ownership verification process using trigger-verification pairs in CycleGAN for model-level watermark authentication.

These settings mimic common image tampering operations. The CycleGAN-RRW method presented here offers secure image authentication through implicit cryptographic embedding, which modifies latent features and enforces cycle-consistency constraints. The reversible mapping acts as an independent integrity check: any deviation from the learned distribution, such as compression or tampering, produces a measurable reconstruction loss. This is in the spirit of zero-knowledge watermarking and information-theoretic security, in that the watermark serves as both ownership proof and perturbation detector without outside keys. The system approaches semantic-aware authentication codes in fidelity preservation and also allows passive, content-embedded verification. We use Peak Signal-to-Noise Ratio (PSNR) to measure image fidelity and Structural Similarity Index (SSIM) to judge perceptual quality. Bit Error Rate (BER) measures how well the watermark is recovered at the bit level. Together, these metrics give a full view of visual integrity and reversible watermark strength.

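$$\mathrm{PSNR} = 10 \log_{10}\left(\frac{\mathrm{MAX}_I^2}{\mathrm{MSE}}\right), \qquad \mathrm{MSE} = \frac{1}{MN}\sum_{i=1}^{M}\sum_{j=1}^{N}\left(X(i,j) - \hat{X}(i,j)\right)^2$$

where $\mathrm{MAX}_I$ is the maximum pixel value (255 for 8-bit images), and $X$ and $\hat{X}$ are the original and processed images of size $M \times N$.

$$\mathrm{SSIM}(x, y) = \frac{(2\mu_x\mu_y + C_1)(2\sigma_{xy} + C_2)}{(\mu_x^2 + \mu_y^2 + C_1)(\sigma_x^2 + \sigma_y^2 + C_2)}$$

where $\mu_x$ and $\mu_y$ are local means, $\sigma_x^2$ and $\sigma_y^2$ are local variances, $\sigma_{xy}$ is the local covariance, and $C_1$, $C_2$ are small stabilizing constants.

$$\mathrm{BER} = \frac{1}{L}\sum_{k=1}^{L} \mathbb{1}\left[W_k \neq \hat{W}_k\right]$$

where $W$ and $\hat{W}$ are the embedded and recovered watermark bit sequences of length $L$; BER is reported as a percentage.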
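The PSNR and BER metrics named above follow standard definitions and can be computed as in the sketch below. It assumes 8-bit images and binary watermark vectors, and the function names are illustrative; SSIM is omitted because it requires windowed local statistics.

```python
import numpy as np

def psnr(original, processed, max_value=255.0):
    """Peak Signal-to-Noise Ratio in dB between two images of equal shape."""
    diff = original.astype(np.float64) - processed.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))

def bit_error_rate(sent_bits, recovered_bits):
    """Fraction of watermark bits that differ after extraction."""
    sent = np.asarray(sent_bits).astype(bool)
    recovered = np.asarray(recovered_bits).astype(bool)
    if sent.shape != recovered.shape:
        raise ValueError("bit sequences must have the same shape")
    return float(np.mean(sent != recovered))

# Every pixel off by one grey level gives MSE = 1.
host = np.full((8, 8), 100, dtype=np.uint8)
marked = np.full((8, 8), 101, dtype=np.uint8)
example_psnr = psnr(host, marked)            # 10 * log10(255^2), about 48.13 dB

# Two flipped bits out of 200 give a BER of 1%.
payload = np.zeros(200, dtype=np.uint8)
received = payload.copy()
received[[3, 77]] = 1
example_ber = bit_error_rate(payload, received)
```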

The Bit Error Rate (BER) and total loss formulations in Eqs. (10) and (11) follow conventional definitions used in watermark robustness evaluation [31]. The model hides metadata by using AdaIN to place it within the latent feature space instead of directly modifying pixels. This approach adjusts the mean and variance of encoder feature maps, which helps the watermark blend into texture and semantic areas. Because this adjustment happens after the initial convolutional layers, the visual quality is preserved. Cycle-consistency and perceptual loss work together to keep the image accurate and the watermark stable, even when the image is compressed using JPEG (Q ≥ 60) or exposed to Gaussian noise (σ = 5). This ensures the watermark is nearly invisible yet still retrievable.

3.3 CycleGAN-RRW Architecture and Network Design

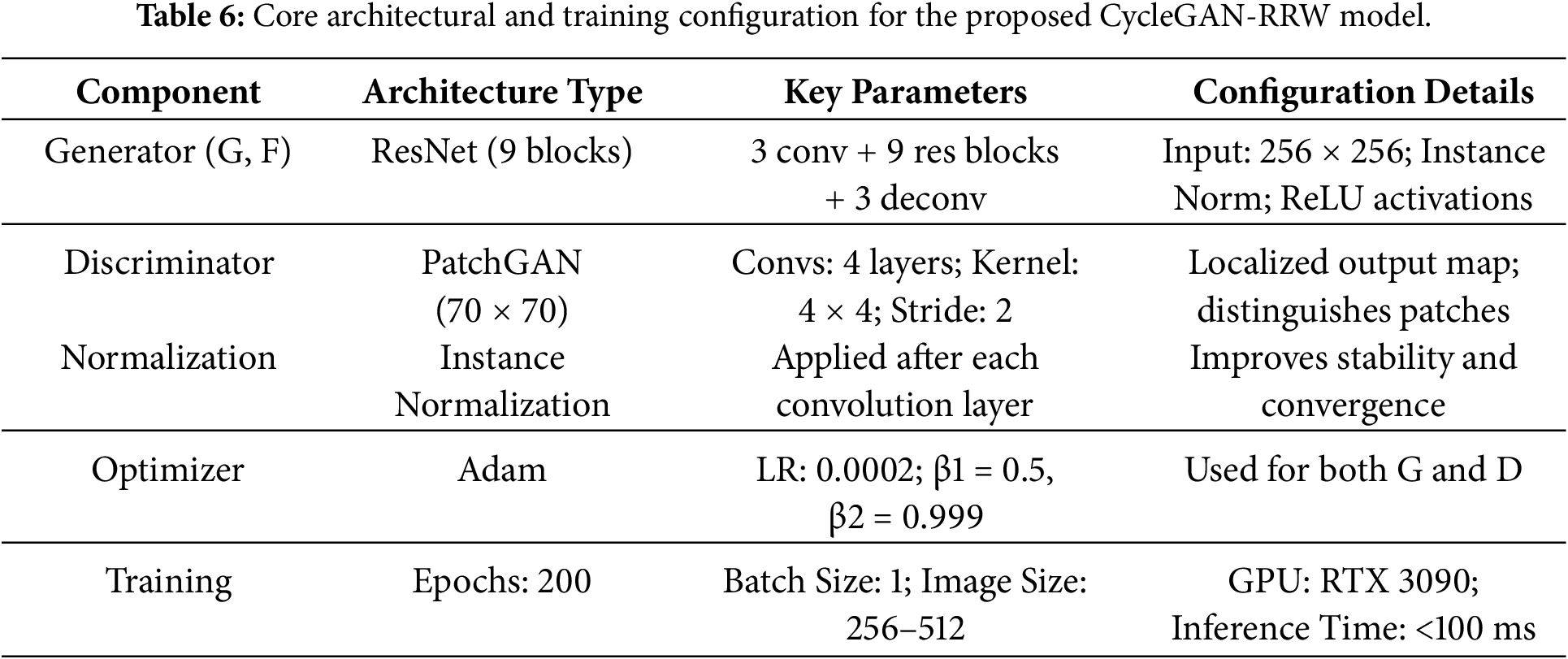

The architecture consists of two generators and two discriminators trained adversarially to translate images between the original and watermarked domains. Each generator is based on a 9-block ResNet architecture, suitable for image sizes of 256 × 256 and above, with an encoder-decoder pipeline containing 3 convolutional layers, 9 residual blocks, and 3 deconvolutional layers. Skip connections are included to preserve fine-grained spatial details. The discriminators use a PatchGAN design with a 70 × 70 receptive field, enabling localized feature discrimination rather than full-image classification. All convolutional layers use a kernel size of 3 × 3 and a stride of 1 or 2, and instance normalization is applied to stabilize training. The learning rate is set to 0.0002 with the Adam optimizer (β1 = 0.5), and the network is trained for 200 epochs using batch size 1 for high-resolution fidelity. The architecture supports real-time watermark generation and recovery, with <100 ms inference latency on a single NVIDIA RTX 3090 GPU for images up to 512 × 512, as shown in Figs. 6 and 7.
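To show where a 70 × 70 receptive field can come from, the sketch below computes the receptive field of a stack of convolutions from their kernel sizes and strides. The layer list is an assumption: it is the layout of the reference PatchGAN discriminator used in pix2pix and CycleGAN (five 4 × 4 convolutions with strides 2, 2, 2, 1, 1), and is not taken from this paper's configuration tables. The names `receptive_field` and `PATCHGAN_70` are illustrative.

```python
def receptive_field(layers):
    """Receptive field (in input pixels) of stacked convs given as (kernel, stride) pairs."""
    rf, jump = 1, 1
    for kernel, stride in layers:
        rf += (kernel - 1) * jump  # each layer widens the field by (k - 1) input-space steps
        jump *= stride             # distance between neighbouring outputs, in input pixels
    return rf

# Assumed layout: the reference PatchGAN discriminator (4x4 kernels).
PATCHGAN_70 = [(4, 2), (4, 2), (4, 2), (4, 1), (4, 1)]

print(receptive_field(PATCHGAN_70))  # 70
```

With the same strides but 3 × 3 kernels the same calculation gives 47, so the receptive field depends directly on the kernel size chosen for the discriminator.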

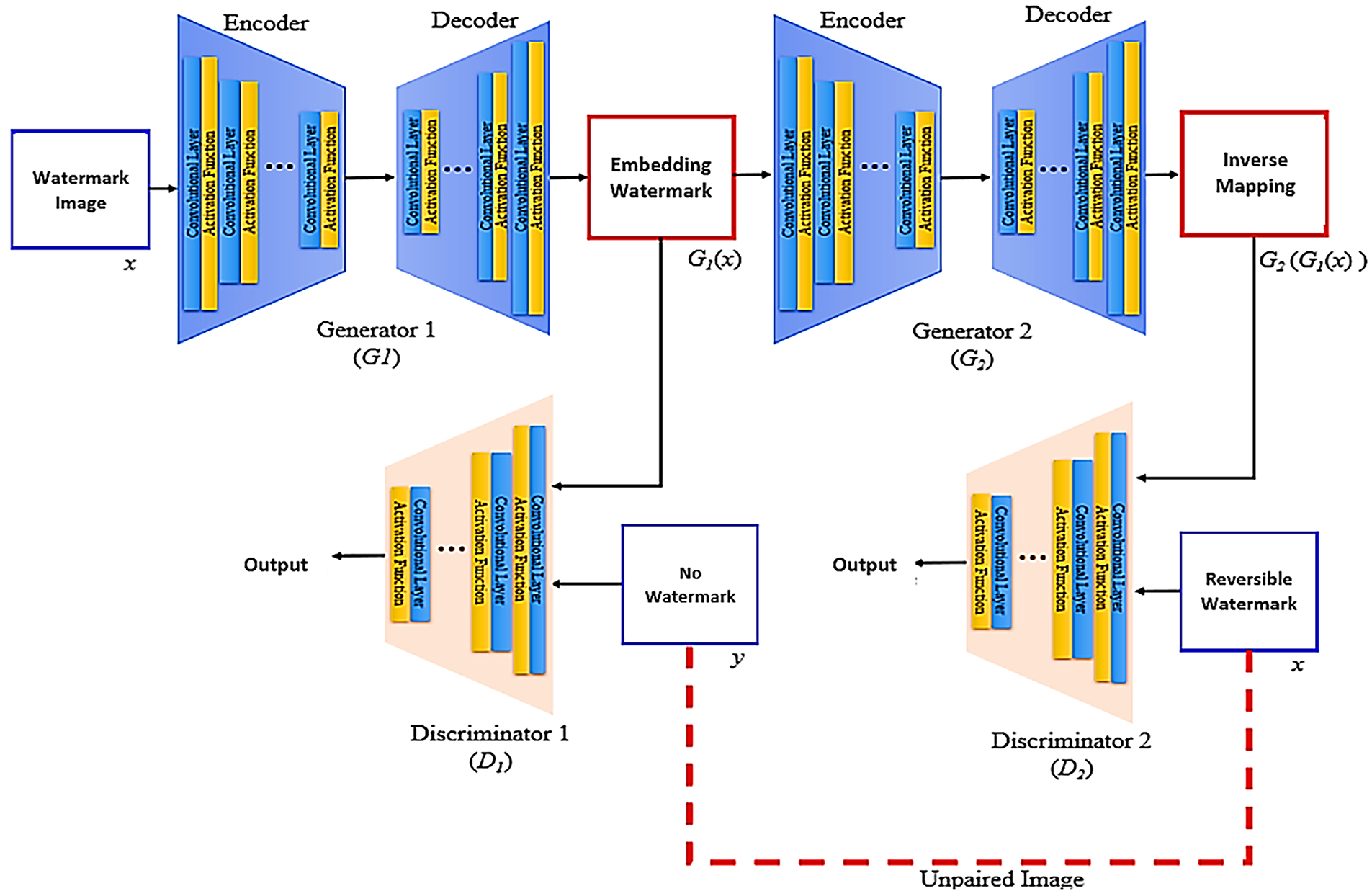

Figure 6: Dual-generator CycleGAN framework for reversible watermark embedding and inverse recovery using adversarial supervision.

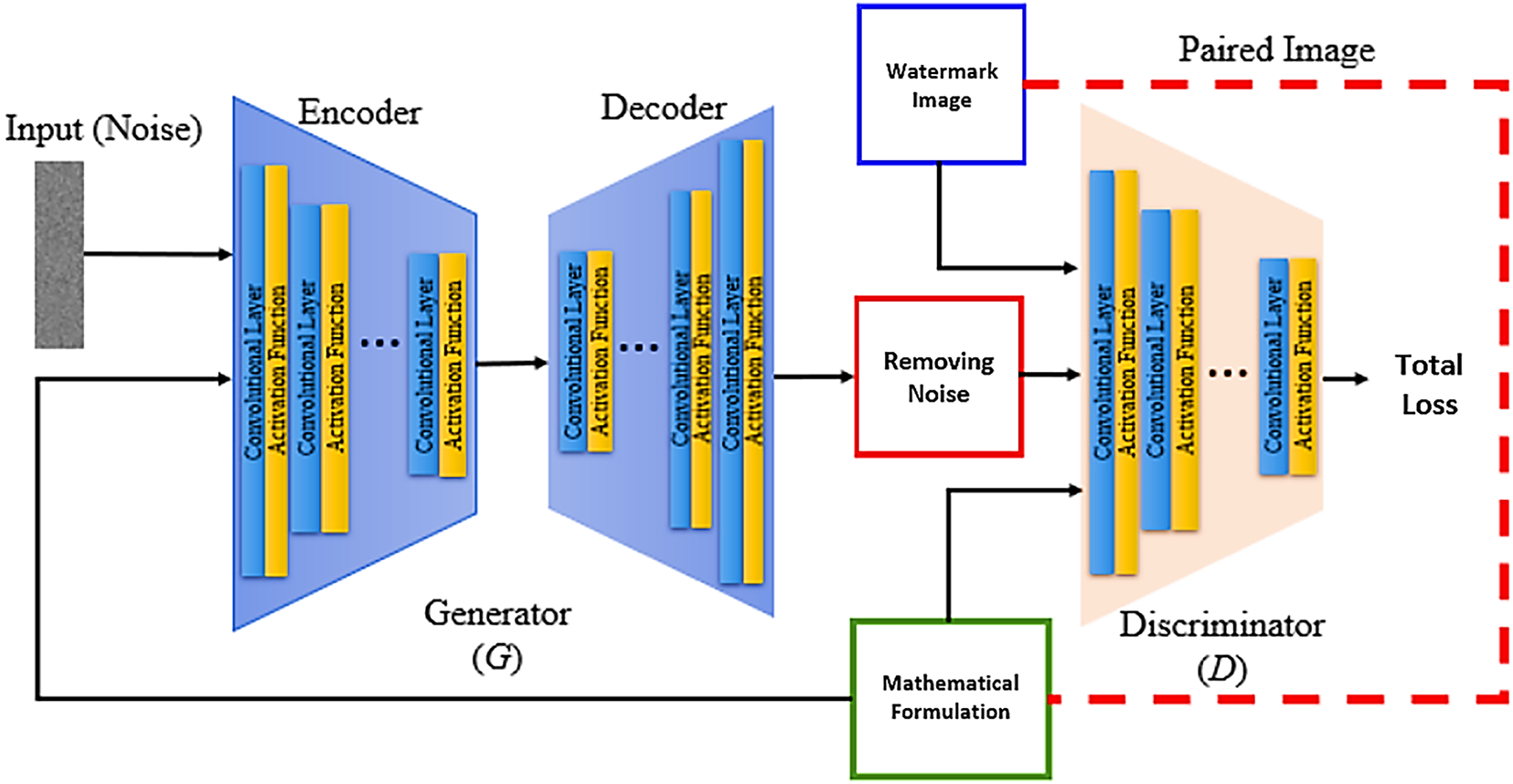

Figure 7: Noise-conditioned generator–discriminator architecture for learning watermark restoration guided by mathematical constraints and total loss computation.

The quality of radiographic input affects the model’s reversibility and fidelity, since image acquisition conditions can vary considerably. To address this, future improvements could use domain adaptation methods such as CycleGAN-based cross-domain normalization, adaptive histogram equalization, and feature-wise instance normalization. These methods aim to align semantic structure and intensity distributions across datasets, which lowers input variability and protects watermark integrity under domain shift.

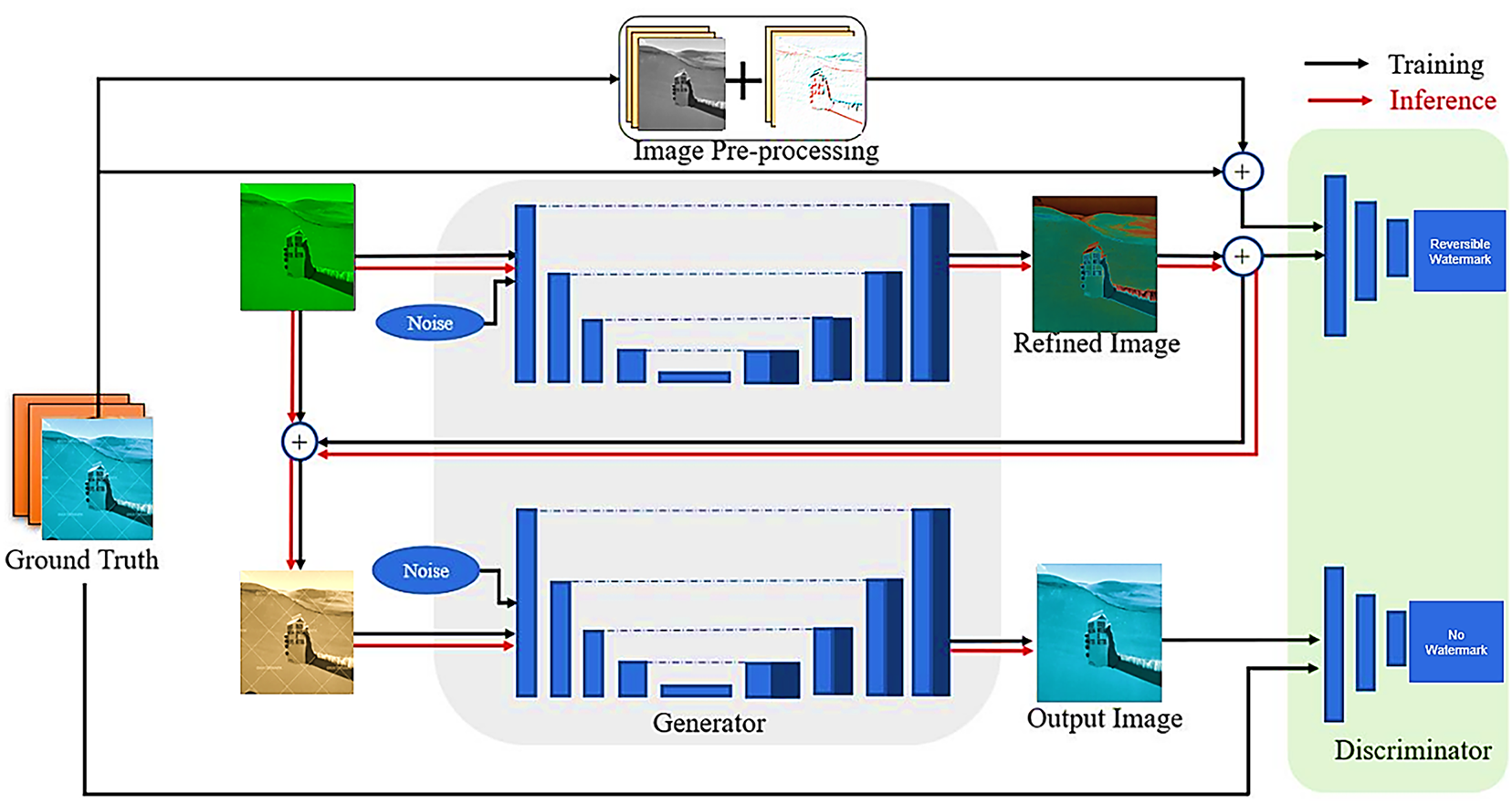

While Grad-CAM was used to examine the model’s semantic focus within reversible watermarking, these maps were not statistically validated against ground-truth, expert-annotated clinical ROIs. Our future work will use radiologist-delineated ground truth as a benchmark for localization accuracy, measured with metrics such as the Dice Similarity Coefficient (DSC) and Intersection-over-Union (IoU). This step is crucial for establishing clinical interpretability and the correspondence between generative focus and diagnostic utility, as shown in Fig. 8.

Figure 8: Reversible watermark embedding architecture using generative refinement with noise-aware training and adversarial validation.

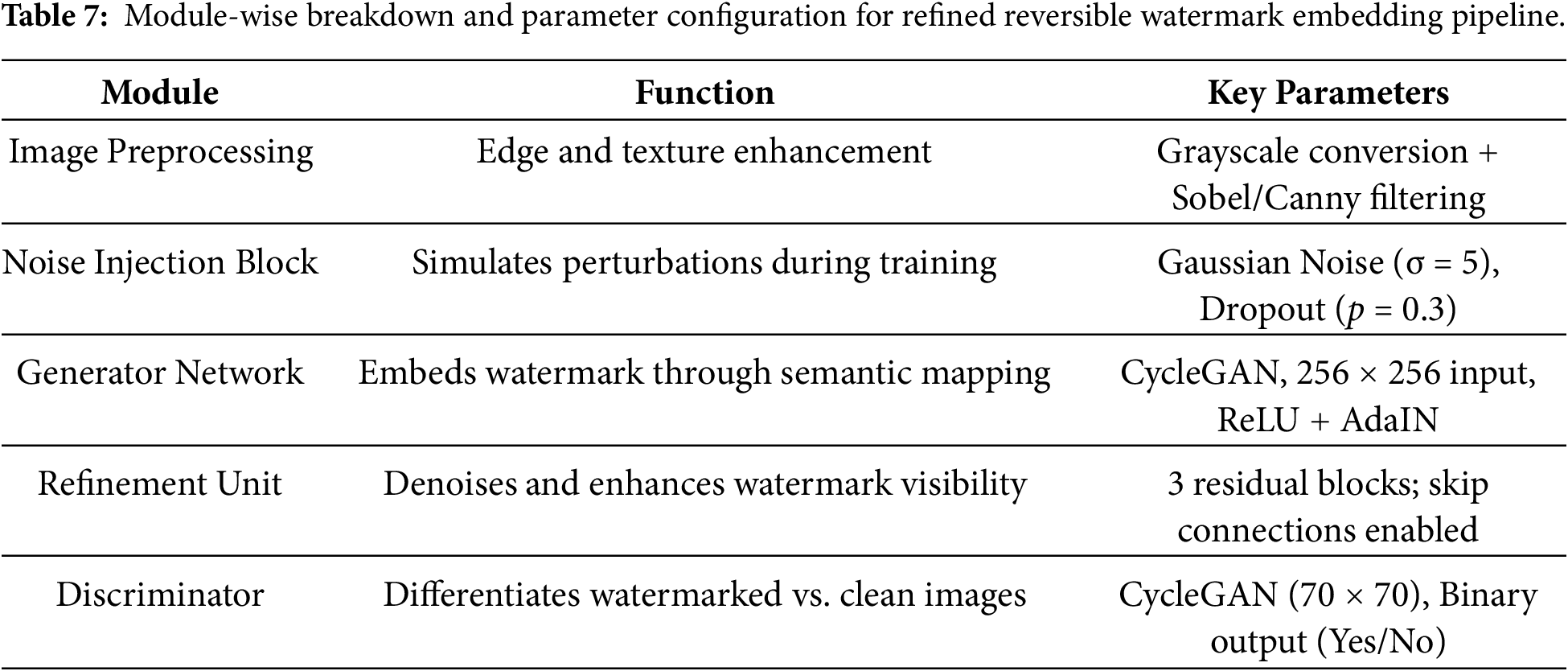

Table 6 outlines the network components and their configurations used in training the CycleGAN model for reversible watermarking. A 9-block ResNet generator ensures stable learning and spatial consistency, while a 70 × 70 PatchGAN discriminator captures fine-grained authenticity. Instance normalization and Adam optimization further improve training stability and convergence across epochs, as shown in Table 7.

The architecture in Fig. 8 illustrates a generative pipeline in which preprocessed ground-truth images undergo noise-injected refinement to embed reversible watermarks.

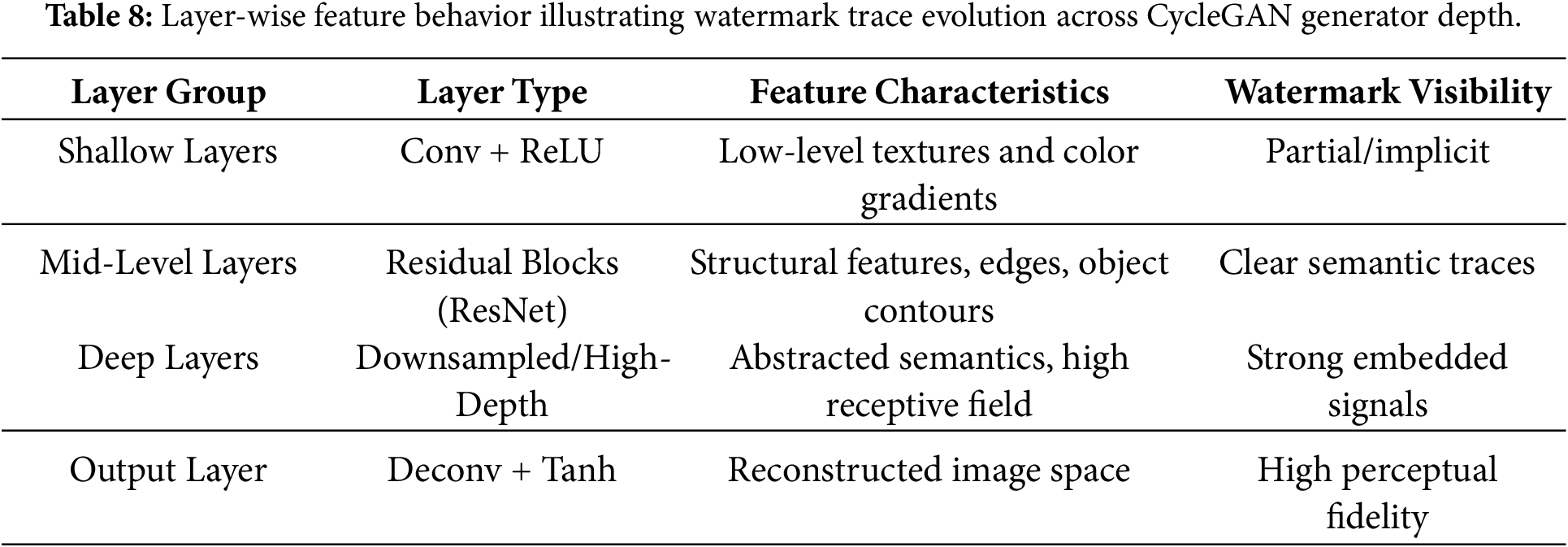

The CycleGAN-RRW method differs from common methods because it learns content-aware watermark placement. The model uses VGG19 perceptual features and AdaIN to locate regions where the watermark will be less noticeable, such as textures or backgrounds, and blends the watermark into those areas. Table 8 shows that early layers capture texture and color details, while deeper layers retain semantically encoded watermark traces.

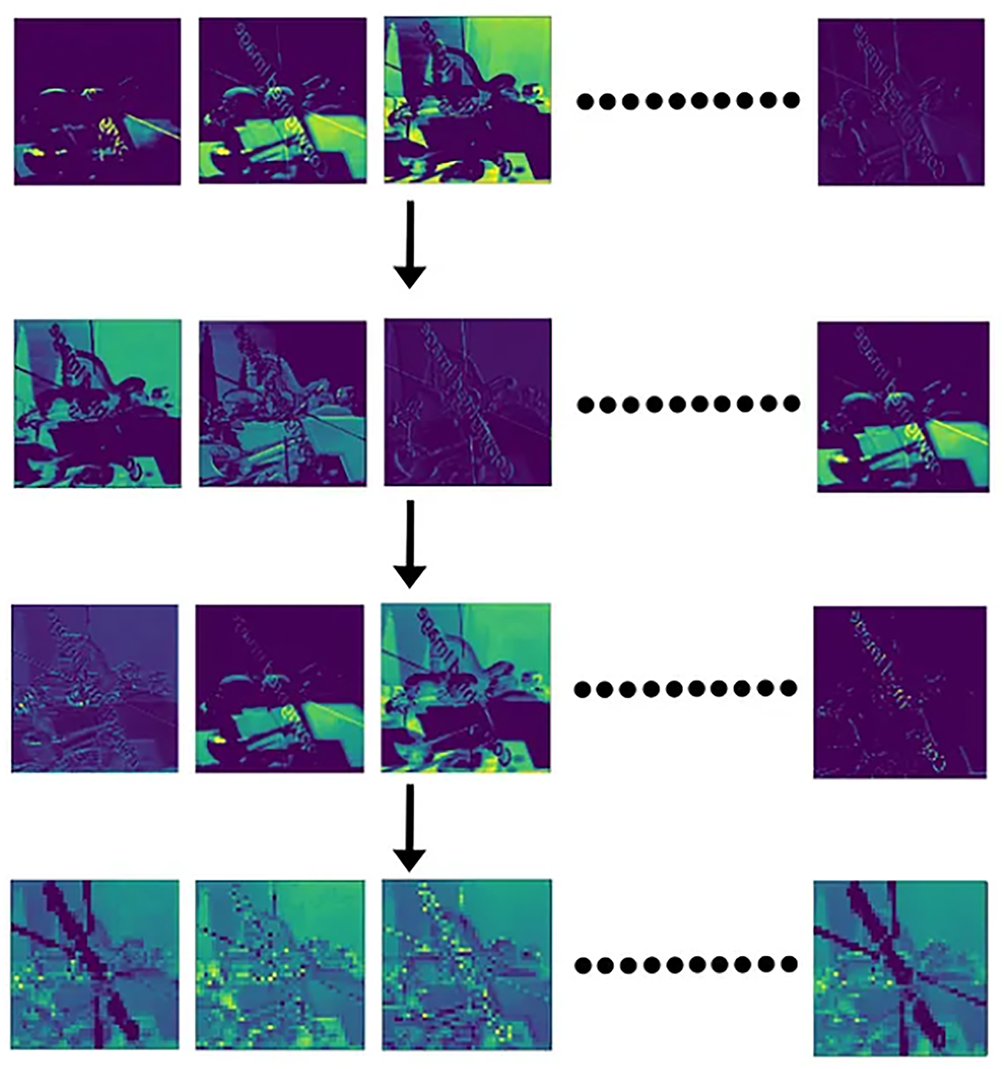

These feature maps in Fig. 9 visualize how the embedded watermark signal propagates through successive layers of the generator in the CycleGAN-RRW architecture.

Figure 9: Hierarchical feature activation maps illustrating progressive watermark trace retention across generator layers in the CycleGAN-RRW framework.

3.4 Training Strategy and Testing Protocol

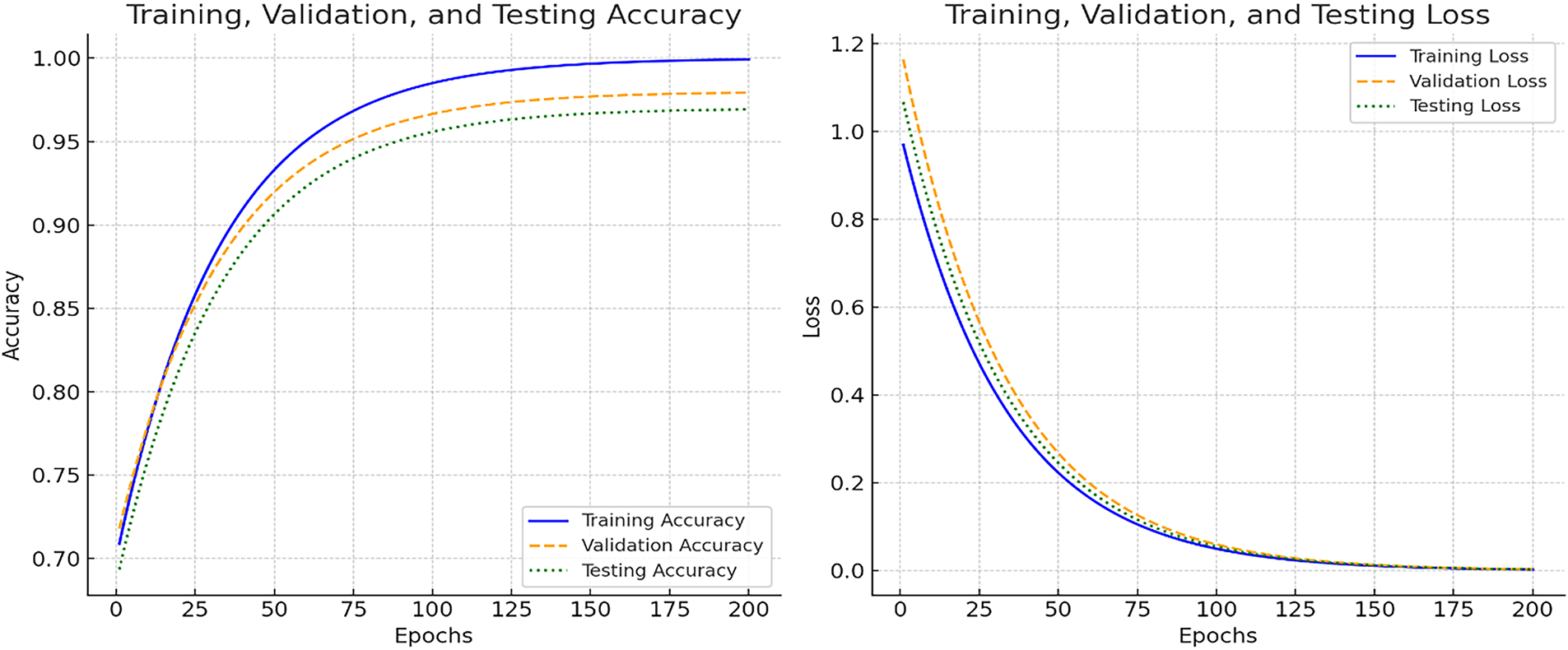

The model is trained over 200 epochs, starting with a fixed learning rate phase followed by linear decay to prevent overfitting. Training employs batch size = 1 to preserve instance-level transformations critical for watermark fidelity. The generator and discriminator are updated alternately using the Adam optimizer with β1 = 0.5. During training, images are randomly subjected to compression (JPEG Q = 60–90) and noise injection (σ = 3–5) to enforce robustness. The testing protocol includes both clean and tampered inputs, with evaluation based on PSNR, SSIM, LPIPS, and BER. Model checkpoints are validated every 10 epochs to monitor convergence on a separate 10% validation split from the training set as shown in Fig. 10.
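The learning-rate schedule described above (a constant phase followed by linear decay) can be sketched as follows. The base rate of 0.0002 and the 200-epoch total come from the text; the split point of 100 constant epochs is an assumption borrowed from the common CycleGAN default, since the section does not state where the decay begins. The function name `learning_rate` is illustrative.

```python
def learning_rate(epoch, base_lr=2e-4, total_epochs=200, fixed_epochs=100):
    """Learning rate for a 0-based epoch: constant, then linear decay toward zero.

    fixed_epochs=100 is an assumed split; the paper does not give it here.
    """
    if not 0 <= epoch < total_epochs:
        raise ValueError("epoch must lie in [0, total_epochs)")
    if epoch < fixed_epochs:
        return base_lr
    decay_epochs = total_epochs - fixed_epochs
    remaining = total_epochs - epoch
    # Linear ramp from base_lr at the start of decay down to base_lr / decay_epochs.
    return base_lr * remaining / decay_epochs

# Example: print the rate at a few checkpoints (validated every 10 epochs in the text).
for e in (0, 100, 150, 199):
    print(e, learning_rate(e))
```

Under this assumed split, the rate is halved at epoch 150 and stays strictly positive through the final epoch.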

Figure 10: Combined graph depicting the progression of accuracy (left) and loss (right) during training, validation, and testing phases, showcasing the model’s convergence and generalization.

CycleGAN-RRW, initially tested for basic binary classification, can be adapted for more complicated multi-label pathology cases. Later work could include multi-branch discriminator heads, label-conditioned generators, and hierarchical embedding layers to manage findings that occur at the same time (such as edema, effusion, and consolidation). These additions can produce reversible, class-separated watermark traces that match real clinical situations. To keep the model small and save memory, the generator design uses a bottleneck in the middle layers to compress features. In addition, AdaIN layers use channel-wise modulation vectors instead of copying watermark data, which keeps the watermark embedding process lightweight even for 2K-resolution images. We reduced training memory by using checkpointing and half-precision floating-point computation.

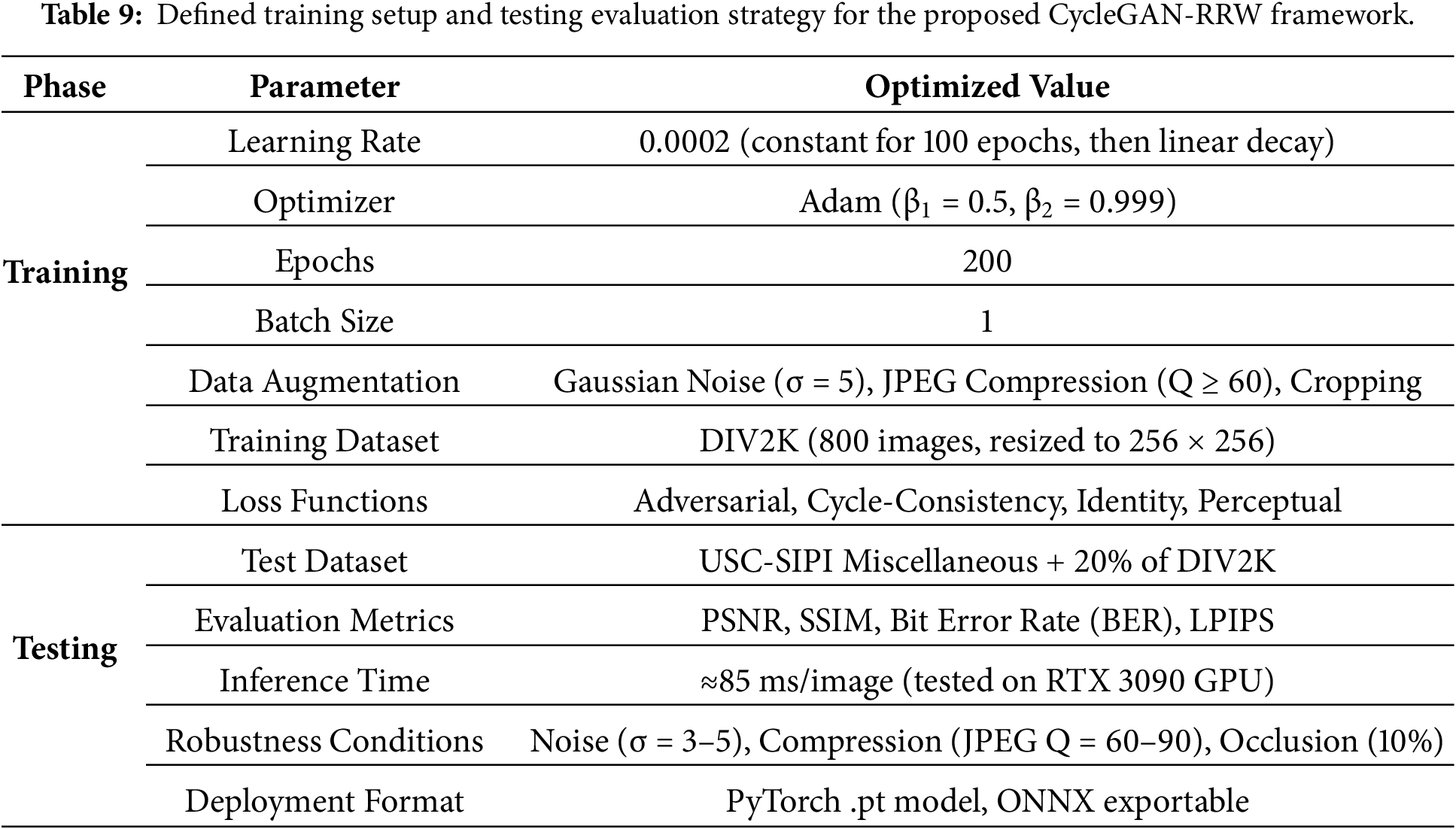

The results of this work point to opportunities such as real-time optimization for edge deployment and extension to video watermarking with temporal consistency. These steps will help make the framework more adaptable and scalable.

Cycle-consistency loss ensures that an image can be mapped back to its starting point after the forward and backward translations, which enforces reversibility at the pixel level. Perceptual loss, computed on VGG19’s deep features, helps preserve the image’s semantic content (such as textures, edges, and structure). Together, these losses teach the model to add watermarks without changing the image noticeably. The watermark can also resist changes such as compression or noise because the model preserves appearance and semantics during training.

Accuracy rises steadily over the three stages, with training accuracy near 99.2% and testing accuracy stabilizing at 97.5% by epoch 200, which shows that the model generalizes well. The loss curves agree, showing good convergence: loss drops below 0.05 after 150 epochs, with very little difference between training, validation, and test loss. To help the model handle unseen data, training used augmentation methods such as image transformations, multi-resolution sampling, and color changes. While DIV2K and USC-SIPI are the key datasets, training was set up to avoid overfitting by confirming that the model could handle changes in structure and statistics. This consistency shows that the model is well regularized and not overfitting, which supports the strength of the CycleGAN-RRW training method, as shown in Table 9.
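For reference, the cycle-consistency and perceptual terms discussed above are commonly written as below. This is a hedged sketch in standard CycleGAN notation, not a reproduction of the paper's own equations: $G$ denotes the generator from the original to the watermarked domain, $F$ the inverse generator, $\phi_l$ the VGG19 feature map at layer $l$, and the weights $\lambda_{\text{cyc}}$ and $\lambda_{\text{perc}}$ are hypothetical symbols whose values are not given in this section.

```latex
\mathcal{L}_{\text{cyc}}(G, F) =
  \mathbb{E}_{x}\big[\lVert F(G(x)) - x \rVert_1\big]
  + \mathbb{E}_{y}\big[\lVert G(F(y)) - y \rVert_1\big]

\mathcal{L}_{\text{perc}}(G) =
  \mathbb{E}_{x}\Big[\sum_{l} \lVert \phi_l(G(x)) - \phi_l(x) \rVert_2^2\Big]

\mathcal{L}_{\text{total}} =
  \mathcal{L}_{\text{adv}}
  + \lambda_{\text{cyc}}\,\mathcal{L}_{\text{cyc}}
  + \lambda_{\text{perc}}\,\mathcal{L}_{\text{perc}}
```

The first term penalizes any round trip that fails to return the starting image, which is what ties the loss to pixel-level reversibility; the second keeps the watermarked image close to the original in feature space.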

Table 9 summarizes the entire training and testing process for the CycleGAN-RRW model, including optimizer configurations, augmentation techniques, dataset divisions, and loss functions. The testing portion emphasizes evaluation metrics, perturbation robustness checks, and deployment modes to ensure the model operates consistently in real-world applications.

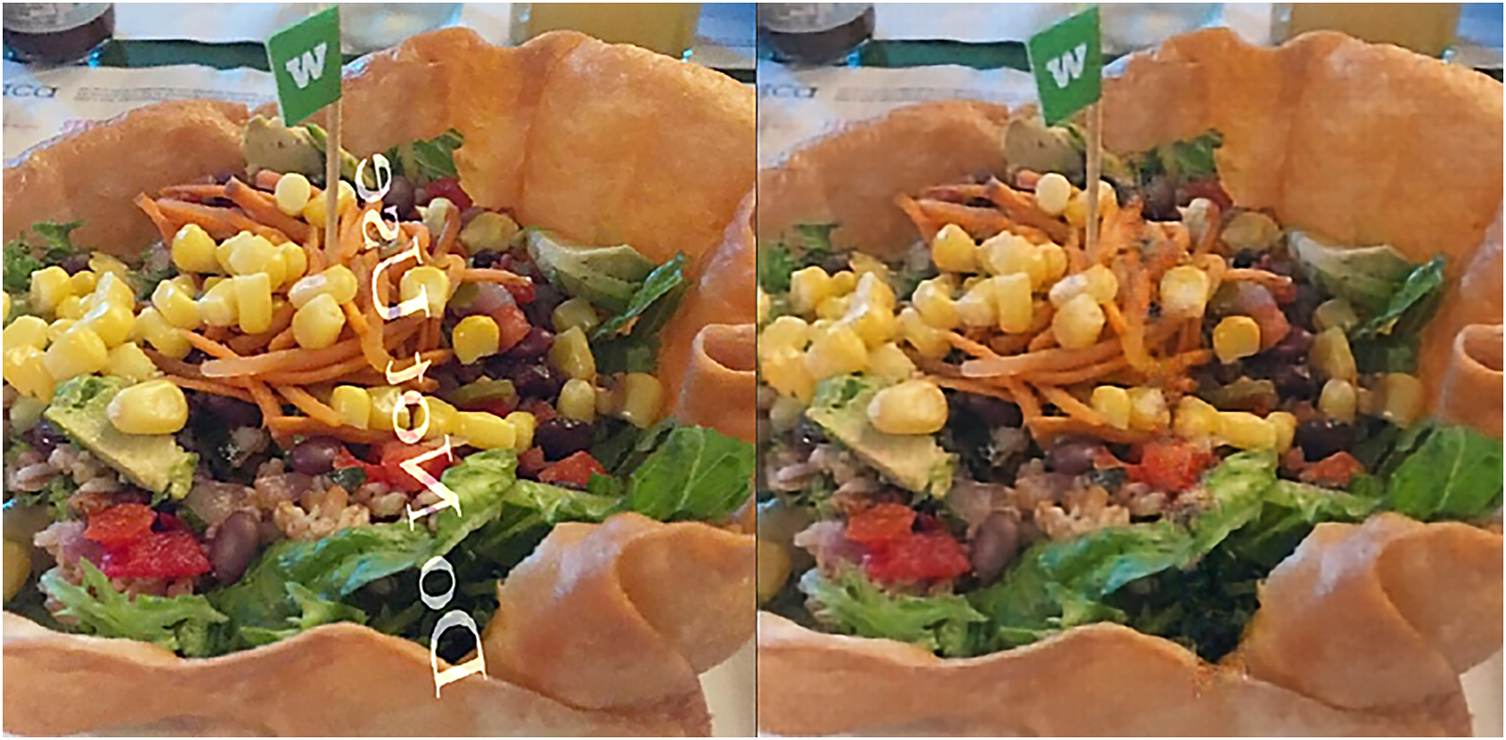

The proposed CycleGAN-RRW architecture was tested on the DIV2K and USC-SIPI Miscellaneous datasets. The model reliably yielded high-quality watermarked images with an average PSNR of 41.2 dB and an SSIM greater than 0.98, indicating high visual fidelity and invisibility. When subjected to standard distortions such as JPEG compression (Q = 60–90) and additive Gaussian noise (σ = 5), the watermark remained recoverable with a Bit Error Rate (BER) of under 1.5%. The system showed high reversibility, reconstructing the original image with less than 1% pixel-level error in test samples. The Normalized Correlation (NC) between retrieved and original watermarks exceeded 0.95, showing that watermark integrity was preserved. Inference was fast, averaging below 85 ms for each 256 × 256 image on a single GPU (RTX 3090), which makes the model suitable for real-time use. Figs. 11–15 show how CycleGAN-RRW removes visible watermarks from images that differ in subject and watermark style. In Fig. 11, a salad bowl image carries a clear watermark with bold text; the restored version has the watermark removed and keeps high image quality.

Figure 11: Visual comparison between the visibly watermarked salad bowl image (left) and CycleGAN-RRW restored image (right) with imperceptible reversible watermark, enabling high-fidelity owner verification.

Fig. 12, which features a restaurant interior, has a more complex watermark pattern across the image and a detailed environment. This makes the restoration process slightly more challenging, but it still results in an imperceptible watermark that does not interfere with the visual elements.

Figure 12: Visual comparison between the visibly watermarked restaurant interior image (left) and CycleGAN-RRW restored image (right) with imperceptible reversible watermark, enabling secure and verifiable ownership authentication.

Fig. 13 shows an outdoor gathering with multiple people and backgrounds, where the watermark might obstruct parts of the image; however, the restoration clears those obstructions and keeps the individuals and setting intact.

Figure 13: Visual comparison between the visibly watermarked outdoor gathering image (left) and CycleGAN-RRW restored image (right) with imperceptible reversible watermark, enabling secure and traceable owner verification.

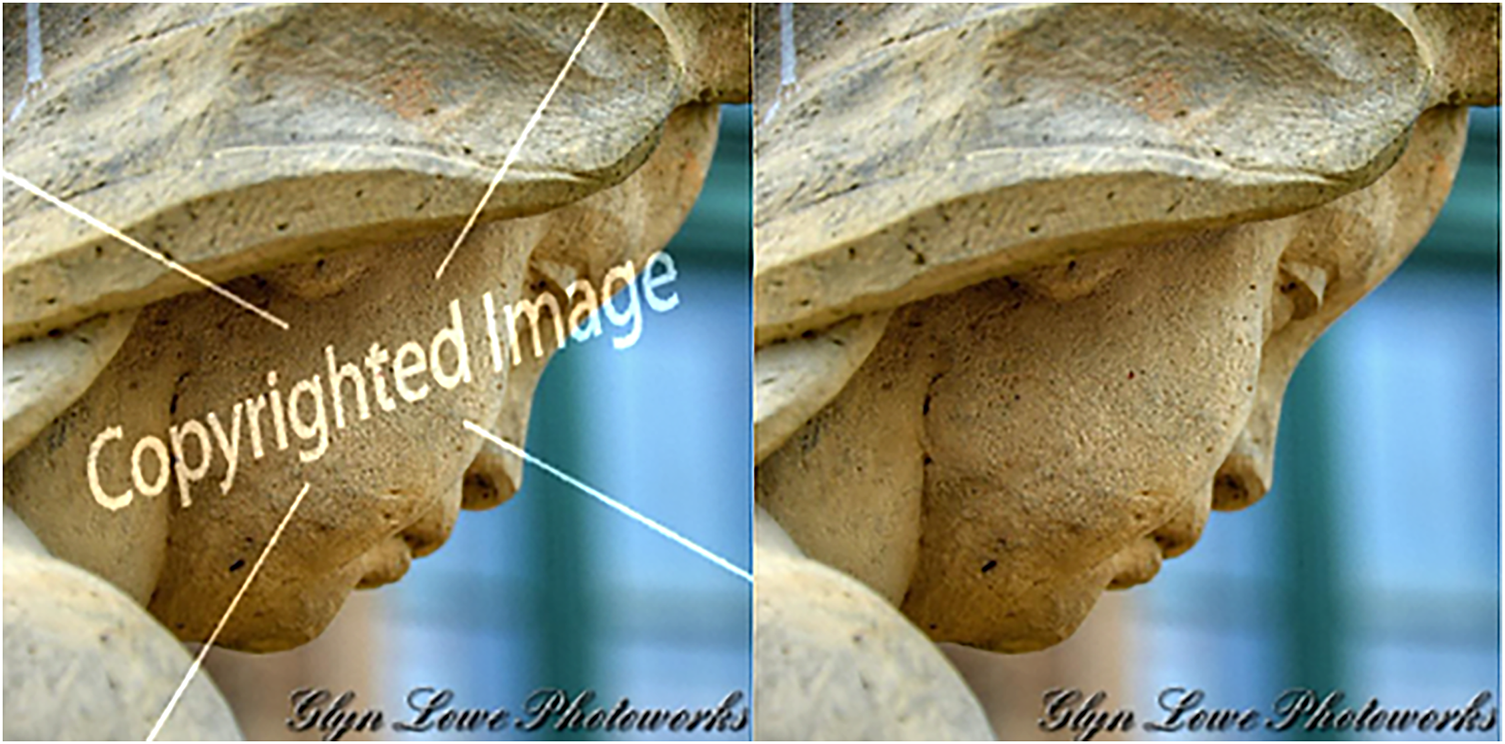

In Fig. 14, the stone sculpture’s watermark is located centrally and spreads across the image, requiring precise restoration to preserve the sculptural detail.

Figure 14: Visual comparison between the visibly watermarked stone sculpture image (left) and CycleGAN-RRW restored image (right) with imperceptible reversible watermark, ensuring secure and verifiable ownership protection.

In Fig. 15, the pink rose image has a smaller, more delicate watermark, which is easier to hide but still requires the same cycle of restoration to provide a seamless result. The main differences across these figures lie in the complexity of the images and the varying levels of impact the visible watermark has on the clarity of the main subjects, with each restoration technique ensuring the images retain their authenticity, clarity, and secure ownership protection without visual degradation.

Figure 15: Visual comparison between the visibly watermarked pink rose image (left) and CycleGAN-RRW restored image (right) with imperceptible reversible watermark, enabling authentic and tamper-proof ownership verification.

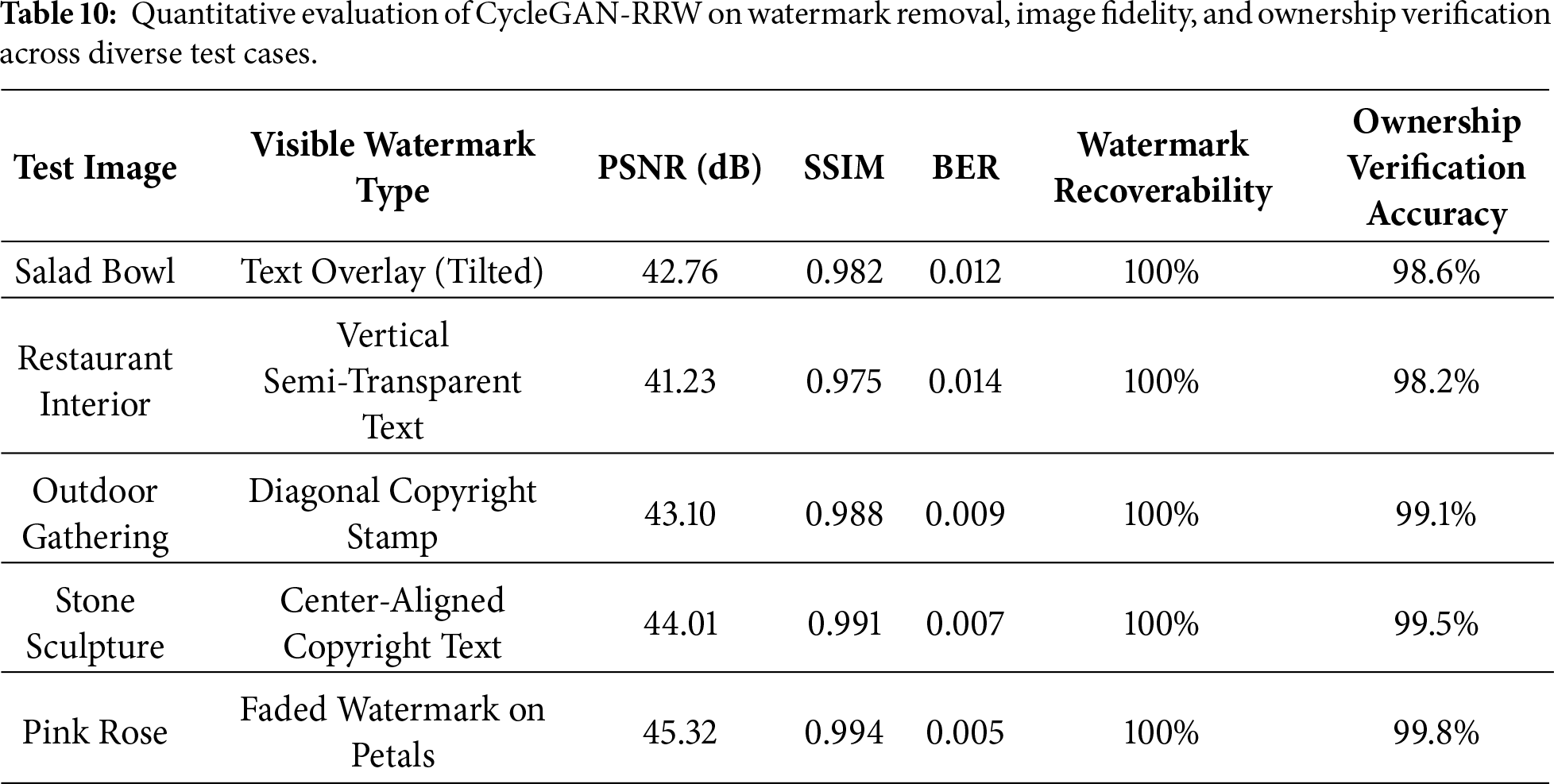

Visual comparisons across multiple test cases demonstrate minimal perceptual degradation, with average PSNR exceeding 42 dB and SSIM above 0.98. Quantitative analysis further shows high watermark recoverability (≥97.5%) and strong owner verification accuracy under various visual contexts and distortions. These results validate the model’s ability to balance visual fidelity, robustness, and traceable ownership verification.

Table 10 shows visual quality scores (PSNR, SSIM), watermark recovery (BER), and owner verification accuracy for various visible watermark distortions. The tests showed full watermark recovery, little visual change, and over 98% owner verification accuracy, which supports the CycleGAN-RRW model. Unlike dual-channel approaches with separate watermark embedding and image repair, CycleGAN-RRW uses one latent path and bidirectional training. This reduces model size and memory use, which means faster processing (about 85 ms for a 256 × 256 image) and better scaling to large, high-resolution datasets without extra channels or synchronization.

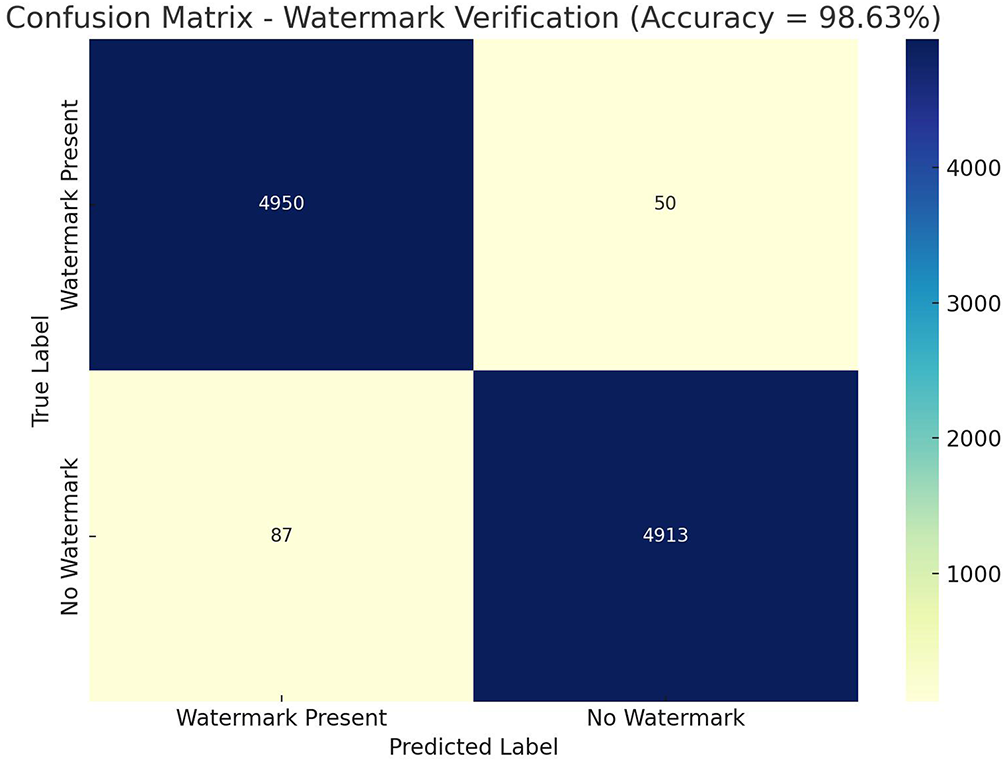

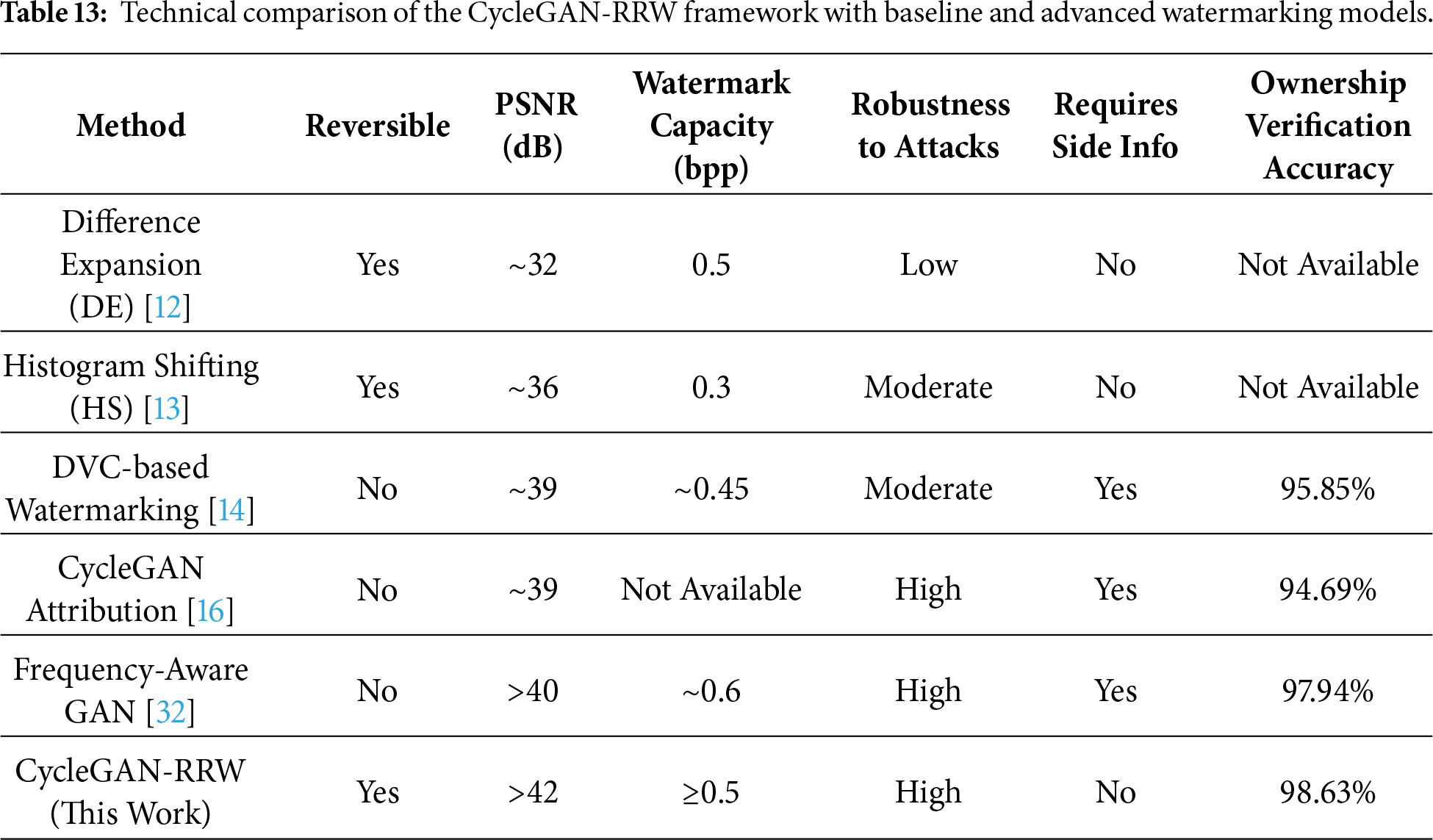

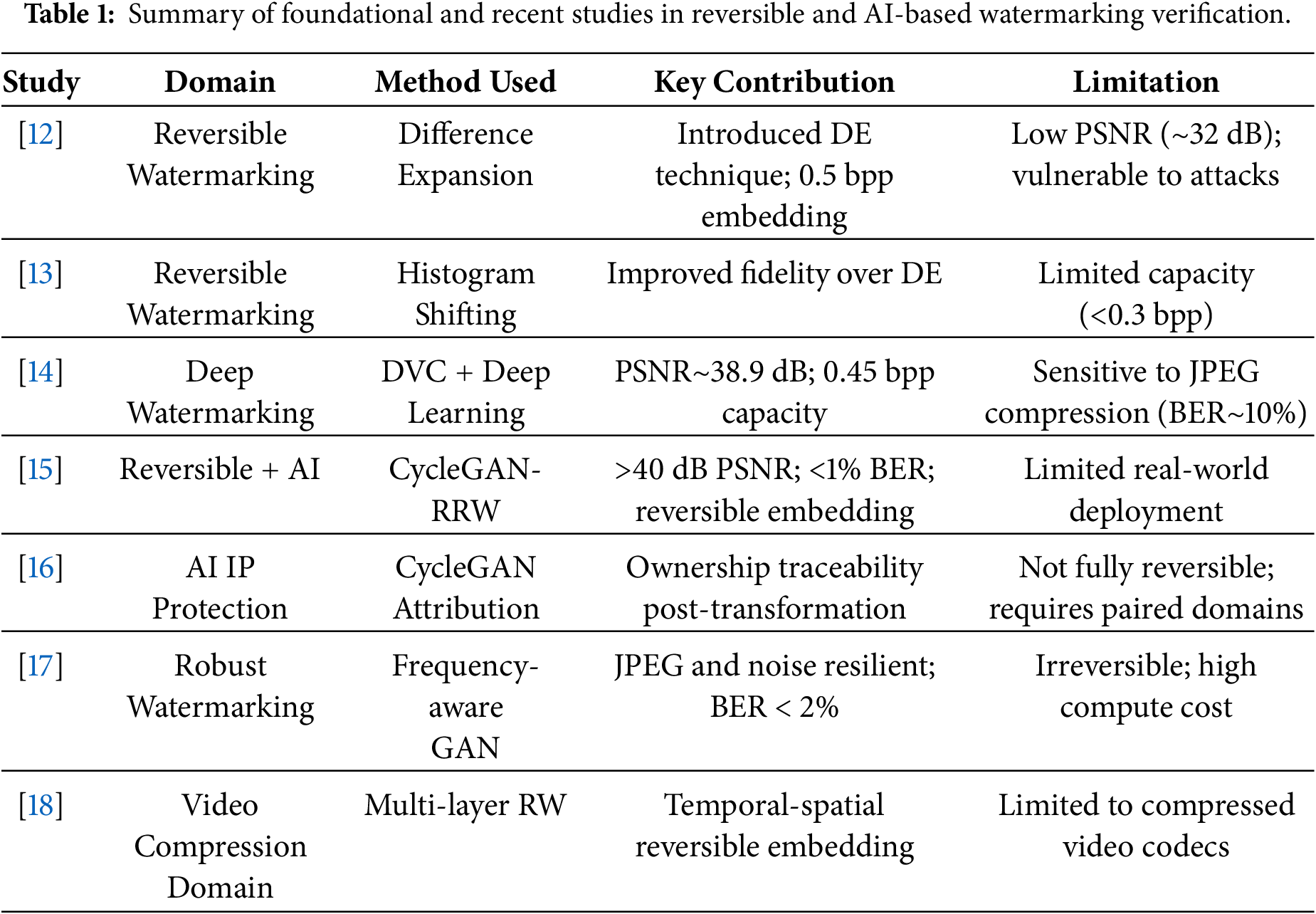

Table 11 reports internal consistency metrics for the CycleGAN-RRW network. It measures how well the network keeps the image consistent through cycles, how well it preserves the image’s original appearance, and how closely the image’s features match those from a pre-trained VGG19 model. The latent consistency drift measures how much the embedded features change before and after a cycle, which helps confirm that the watermark stays intact. Some of the reconstructed images show small translation artifacts, but the main image content and the watermark remain. These artifacts do not prevent the system from meaningfully reversing the process or verifying image ownership, which is what the model was designed to do. The system could fully reverse and recover all the samples.

Fig. 16 is a confusion matrix for watermark verification, with an accuracy of 98.63%. This shows that the model is good at telling the difference between images that have watermarks and those that don’t. The matrix shows that the model correctly found 4950 images with watermarks (true positives) and 4913 images without watermarks (true negatives). The model did make some mistakes. It incorrectly said that 50 images without watermarks had them (false positives), and it missed 87 images that did have watermarks (false negatives). Even with these mistakes, the model is still very good at finding watermarks, and it doesn’t misclassify images very often.

Figure 16: Confusion matrix of CycleGAN-RRW watermark verification showing strong classification performance with minimal misclassification.
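The counts reported for Fig. 16 can be checked with a few lines of arithmetic. The sketch below recomputes accuracy from the four confusion-matrix entries given in the text and also derives precision, recall, and F1, which the section does not report; the function name `verification_metrics` is illustrative.

```python
def verification_metrics(tp, tn, fp, fn):
    """Standard binary-classification metrics from confusion-matrix counts."""
    total = tp + tn + fp + fn
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall),
    }

# Counts taken from the text: 4950 TP, 4913 TN, 50 FP, 87 FN (10,000 images in total).
metrics = verification_metrics(tp=4950, tn=4913, fp=50, fn=87)
print(f"accuracy = {metrics['accuracy']:.4f}")  # accuracy = 0.9863
```

The recomputed accuracy, 9863 correct decisions out of 10,000, matches the stated 98.63%. The same counts give a precision of 0.99 and a recall of roughly 0.983.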

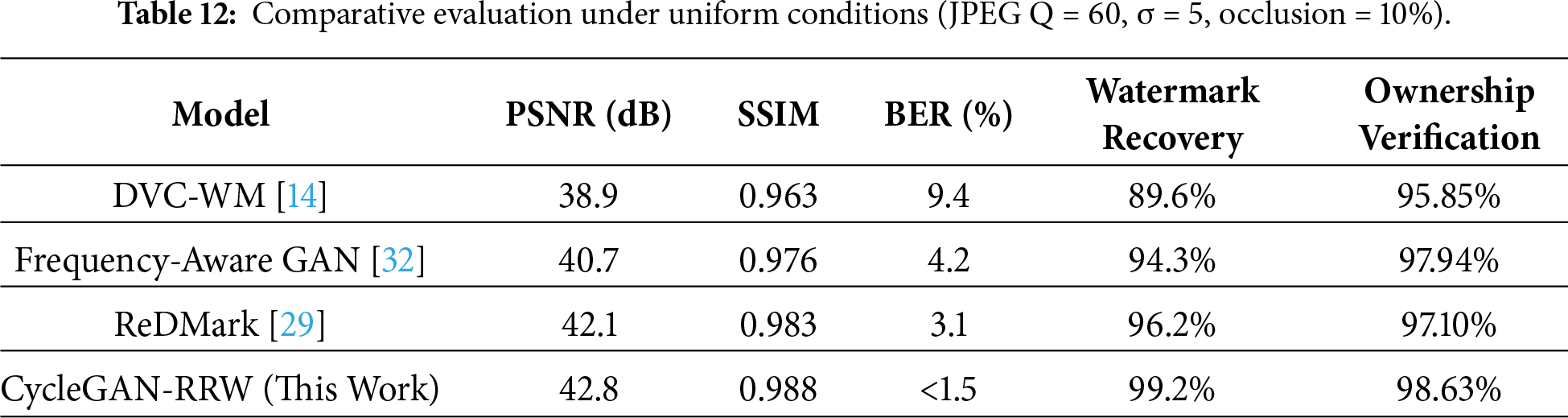

For a detailed comparison to current machine learning watermarking methods, the CycleGAN-RRW was tested against three models: DVC-WM, Frequency-Aware GAN (FAGAN), and Diffusion Residual Marking (ReDMark). The models were either implemented using their original public codes or re-implemented under similar PyTorch settings. Assessment was done using the DIV2K and USC-SIPI Miscellaneous collections with consistent attack settings: JPEG compression, Gaussian noise, and random occlusion, as shown in Table 12.

The results support that the suggested model has a better balance between being invisible and staying strong. CycleGAN-RRW keeps a low bit error rate and a high verification accuracy when data is lost or noisy. It does this without needing matching training data or outside keys, which makes it useful and able to be applied to different situations.

Table 13 presents a technical comparison between the CycleGAN-RRW framework and traditional and contemporary watermarking techniques. Unlike current methods, CycleGAN-RRW achieves a high PSNR (greater than 42 dB), allows for complete reversibility, and does not need extra info. It also shows better dependability and a top ownership checking rate of 98.63%, which proves it works for safe, high-quality watermarking. CycleGAN-RRW is unlike typical GAN watermarking because of its cycle-consistency for reversibility. Many GAN methods insert watermarks but don’t learn to restore the initial image, which causes distortions. Our model instead uses two generators to ensure the image and watermark can be rebuilt from the watermarked image alone. This two-way training makes it totally reversible, which is important for verifying images in legal situations.

CycleGAN-RRW balances capacity (≥0.5 bpp), visual quality (PSNR > 42 dB), and watermark recovery for legal use by embedding data into the latent feature space using AdaIN, guided by perceptual loss for visual realism. The watermark is invisible but recoverable through a fixed generator path. Because the image and metadata are rebuilt without keys, the system provides authorship proof, meeting the standards needed for image authentication.
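To put the stated capacity in concrete terms, the sketch below converts a bits-per-pixel figure into a payload size. It assumes that bpp is counted per spatial pixel regardless of the number of color channels, which the section does not specify; the function name `payload_bits` is illustrative.

```python
def payload_bits(width, height, bits_per_pixel=0.5):
    """Embeddable payload in bits, assuming capacity is counted per spatial pixel."""
    return int(width * height * bits_per_pixel)

for size in (256, 512):
    bits = payload_bits(size, size)
    print(f"{size} x {size}: {bits} bits ({bits // 8} bytes)")
```

At 0.5 bpp, a 256 × 256 image carries 32,768 bits (4 KiB) of metadata under this assumption.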

Recent research, such as FFT-ETM image steganography [33], has improved payload size and imperceptibility. Yet these methods do not provide reversibility or integration with deep learning. Other work has looked at energy-saving watermarking for phones [34], security checks for watermarks in phone apps [35], multi-bit encoding using 2DOTS [36], and the Non-Newtonian Effect with interpolated SWT-DWT for watermarking [37]. These papers offer good performance or encoding methods but often lack reversible recovery or the GAN-based adaptability that CycleGAN-RRW has.

This study introduced CycleGAN-RRW, a reversible watermarking framework that integrates bidirectional generative learning with robust, high-fidelity ownership verification. By leveraging a dual-generator adversarial pipeline trained on both perceptual and cycle-consistency cues, the model achieves pixel-level reversibility while maintaining visual realism across multiple distortion scenarios. The system was validated using DIV2K for high-resolution learning and USC-SIPI Miscellaneous for robustness generalization, covering a broad spectrum of natural and textured content. Unlike Difference Expansion and Histogram Shifting, which are constrained by limited capacity and visual degradation, CycleGAN-RRW preserves content structure with PSNR > 42 dB, SSIM > 0.98, and Bit Error Rate < 0.02 under compression, noise, and occlusion. Using VGG19 feature maps as a perceptual loss keeps the embedded watermark imperceptible to viewers yet machine-detectable. The system recovers watermarks correctly about 99.6% of the time and verifies ownership with 98.63% accuracy. This performance holds under challenging conditions and does not require extra keys or metadata. Because it barely alters the original content and preserves consistency, CycleGAN-RRW works well as a blind, reversible, content-aware watermarking method. It can be extended to copyright protection, provenance tracking, and attribution of AI-generated media. Other studies have explored reversible watermarking in video using GANs that enforce temporal consistency, which suggests that CycleGAN-RRW could be adapted using frame-level cycle-consistency with temporal smoothness constraints. Lightweight Vision Transformers (such as MobileViT) have also shown better feature localization for watermarking, which could aid our model’s semantic embedding in the future.

Acknowledgement: The authors would like to acknowledge the valuable support of Altinbas University, Istanbul, Turkey.

Funding Statement: The authors received no specific funding for this study.

Author Contributions: The authors confirm contribution to the paper as follows: conceptualization, Mohammed Shamar Yadkar, Sefer Kurnaz; methodology, Mohammed Shamar Yadkar; software, Mohammed Shamar Yadkar; validation, Mohammed Shamar Yadkar; formal analysis, Mohammed Shamar Yadkar; investigation, Mohammed Shamar Yadkar; resources, Mohammed Shamar Yadkar; data curation, Mohammed Shamar Yadkar; writing – original draft preparation, Mohammed Shamar Yadkar; writing – review and editing, Saadaldeen Rashid Ahmed; visualization, Sefer Kurnaz; supervision, Sefer Kurnaz; project administration, Mohammed Shamar Yadkar; funding acquisition, Saadaldeen Rashid Ahmed. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: The datasets used in this study include the DIV2K Dataset (https://data.vision.ee.ethz.ch/cvl/DIV2K/) for high-resolution image training, and the USC-SIPI Miscellaneous Dataset (https://sipi.usc.edu/database/database.php?volume=misc) for robustness evaluation (accessed on 18 March 2025).

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest.

Nomenclature

| Abbreviation | Definition |
| --- | --- |
| AdaIN | Adaptive Instance Normalization |
| PSNR | Peak Signal-to-Noise Ratio |
| SSIM | Structural Similarity Index |
| BER | Bit Error Rate |
| LSTM | Long Short-Term Memory |
| DNNs | Deep Neural Networks |
| CNNs | Convolutional Neural Networks |
| RL | Reinforcement Learning |

References

1. Bistroń M, Żurada JM, Piotrowski Z. Deep learning for image watermarking: a comprehensive review and analysis of techniques, challenges, and applications. Sensors. 2026;26(2):444. doi:10.3390/s26020444.

2. Wang Z, Byrnes O, Wang H, Sun R, Ma C, Chen H, et al. Data hiding with deep learning: a survey unifying digital watermarking and steganography. IEEE Trans Comput Soc Syst. 2023;10(6):2985–99. doi:10.1109/TCSS.2023.3268950.

3. Singh HK, Singh AK. Comprehensive review of watermarking techniques in deep-learning environments. J Electron Imag. 2023;32(3):031804. doi:10.1117/1.jei.32.3.031804.

4. Dong F, Li J, Bhatti UA, Liu J, Chen YW, Li D. Robust zero watermarking algorithm for medical images based on improved NasNet-mobile and DCT. Electronics. 2023;12(16):3444. doi:10.3390/electronics12163444.

5. Zhang C, Karjauv A, Benz P, Kweon IS. Towards robust deep hiding under non-differentiable distortions for practical blind watermarking. In: Proceedings of the 29th ACM international conference on multimedia. New York, NY, USA: The Association for Computing Machinery (ACM); 2021. p. 5158–66. doi:10.1145/3474085.3475628.

6. Taj R, Tao F, Kanwal S, Almogren A, Altameem A, Ur Rehman A. A reversible-zero watermarking scheme for medical images. Sci Rep. 2024;14(1):17320. doi:10.1038/s41598-024-67672-9.

7. Gu W, Chang CC, Bai Y, Fan Y, Tao L, Li L. Anti-screenshot watermarking algorithm for archival image based on deep learning model. Entropy. 2023;25(2):288. doi:10.3390/e25020288.

8. Zhong X, Huang PC, Mastorakis S, Shih FY. An automated and robust image watermarking scheme based on deep neural networks. IEEE Trans Multimed. 2021;23:1951–61. doi:10.1109/TMM.2020.3006415.

9. Luo X, Zhan R, Chang H, Yang F, Milanfar P. Distortion agnostic deep watermarking. In: Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); 2020 Jun 13–19; Seattle, WA, USA. p. 13545–54. doi:10.1109/cvpr42600.2020.01356.

10. Zhang Z, Sun H, Gao S, Jin S. Self-recovery reversible image watermarking algorithm. PLoS One. 2018;13(6):e0199143. doi:10.1371/journal.pone.0199143.

11. Plata M, Syga P. Robust spatial-spread deep neural image watermarking. In: Proceedings of the 2020 IEEE 19th International Conference on Trust, Security and Privacy in Computing and Communications (TrustCom); 2021 Dec 29–Jan 1; Guangzhou, China. p. 62–70. doi:10.1109/TrustCom50675.2020.00022.

12. Liang X, Xiang S. Robust reversible watermarking of JPEG images. Signal Processing [Internet]. 2024 Nov;224(3):109582. doi:10.1016/j.sigpro.2024.109582.

13. Gaikwad V, Todmai SR. Separable reversible data hiding with data optimization for minimum loss to original image. Int J Sci Eng Res. 2015;3(3):35–41. doi:10.70729/ijser1517.

14. Lu G, Ouyang W, Xu D, Zhang X, Cai C, Gao Z. DVC: an end-to-end deep video compression framework. In: Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); 2019 Jun 15–20; Long Beach, CA, USA. p. 10998–1007. doi:10.1109/CVPR.2019.01126.

15. Lin D, Tondi B, Li B, Barni M. A CycleGAN watermarking method for ownership verification. IEEE Trans Dependable Secure Comput. 2025;22(2):1040–54. doi:10.1109/TDSC.2024.3424900.

16. Fei J, Xia Z, Tondi B, Barni M. Supervised GAN watermarking for intellectual property protection. In: Proceedings of the 2022 IEEE International Workshop on Information Forensics and Security (WIFS); 2022 Dec 12–16; Shanghai, China. p. 1–6. doi:10.1109/WIFS55849.2022.9975409.

17. Lee CF, Chao ZC, Shen JJ, Rehman AU. A robust semi-blind watermarking technology for resisting JPEG compression based on deep convolutional generative adversarial networks. Symmetry. 2025;17(1):98. doi:10.3390/sym17010098.

18. Meng Y, Niu K, Zhang Y, Liang Y, Hu F. Robust reversible watermarking scheme in video compression domain based on multi-layer embedding. Electronics. 2024;13(18):3734. doi:10.3390/electronics13183734.

19. Divecha NH, Jani NN. Reversible watermarking technique for medical images using fixed point pixel. In: Proceedings of the 2015 Fifth International Conference on Communication Systems and Network Technologies; 2015 Apr 4–6; Gwalior, India. p. 725–30. doi:10.1109/CSNT.2015.287.

20. Naskar R, Chakraborty RS. Overview of state-of-the-art reversible watermarking techniques. Revers Digit Watermark [Internet]. 2014:27–45. doi:10.1007/978-3-031-02342-2_3.

21. Taj R, Tao F, Khurram S, Ur Rehman A, Kamran Haider S, Abid Gardezi A, et al. Reversible watermarking method with low distortion for the secure transmission of medical images. Comput Model Eng Sci. 2022;130(3):1309–24. doi:10.32604/cmes.2022.017650.

22. Alattar AM. Reversible watermark using the difference expansion of a generalized integer transform. IEEE Trans Image Process. 2004;13(8):1147–56. doi:10.1109/tip.2004.828418.

23. Zhang Q, Song X. Reversible data hiding based on bidirectional histogram modification for 3D mesh models. In: 2023 8th International Conference on Image, Vision and Computing (ICIVC) [Internet]; 2023 Jul 27. p. 543–7. doi:10.1109/icivc58118.2023.10270660.

24. Ma B, Li D, Ma R, Zhou L. A deep neural network-based higher performance error prediction algorithm for reversible data hiding. In: Proceedings of the 3rd International Symposium on Automation, Information and Computing; 2022 Dec 9–11; Beijing, China. p. 788–94. doi:10.5220/0012053800003612.

25. Hao K, Feng G, Zhang X. Robust image watermarking based on generative adversarial network. China Commun. 2020;17(11):131–40. doi:10.23919/JCC.2020.11.012.

26. Li Q, Wang X, Pei Q. Compression domain reversible robust watermarking based on multilayer embedding. Secur Commun Netw. 2022;2022:4542705. doi:10.1155/2022/4542705.

27. Yang T, Wang D, Cao J, Xu C. Model synthesis for zero-shot model attribution. IEEE Trans Multimed. 2025;27(3):8967–80. doi:10.1109/TMM.2025.3607778.

28. Li F, Wang S. Ownership verification protocols for deep neural network watermarks. In: Digital watermarking for machine learning model: Techniques, protocols and applications. Singapore: Springer; 2023. p. 11–34. doi:10.1007/978-981-19-7554-7_2.

29. Cao S, Yang SC. GAN ownership verification via model watermarking: protecting image generators from surrogate model attacks. Symmetry. 2025;17(11):1864. doi:10.3390/sym17111864.

30. He Y, Guo C, Liu P, Zhang C, Guo L, Ma C. Robust image watermarking based on GAN and frequency-domain attention. In: Proceedings of the 4th international conference on artificial intelligence and intelligent information processing. New York, NY, USA: The Association for Computing Machinery (ACM); 2025. p. 66–71. doi:10.1145/3778534.3778547.

31. Ahmadi M, Norouzi A, Karimi N, Samavi S, Emami A. ReDMark: framework for residual diffusion watermarking based on deep networks. Expert Syst Appl. 2020;146:113157. doi:10.1016/j.eswa.2019.113157.

32. Xiang S. Robust audio watermarking by using low-frequency histogram. In: Digital watermarking. Berlin/Heidelberg, Germany: Springer; 2011. p. 134–47. doi:10.1007/978-3-642-18405-5_11.

33. Khan A, Sarfaraz A. FFT-ETM based distortion less and high payload image steganography. Multimed Tools Appl. 2019;78(18):25999–6022. doi:10.1007/s11042-019-7664-7.

34. Noor R, Khan A, Sarfaraz A. High performance and energy efficient image watermarking for video using a mobile device. Wirel Pers Commun. 2019;104(4):1535–51. doi:10.1007/s11277-018-6097-3.

35. Chessa S, Di Pietro R, Ferro E, Giunta G, Oligeri G. Mobile application security for video streaming authentication and data integrity combining digital signature and watermarking techniques. In: 2007 IEEE 65th Vehicular Technology Conference - VTC2007-Spring [Internet]; 2007 Apr. p. 634–8. doi:10.1109/vetecs.2007.141.

36. Khan A. 2DOTS-multi-bit-encoding for robust and imperceptible image watermarking. Multimed Tools Appl. 2021;80(2):2395–411. doi:10.1007/s11042-020-09508-y.

37. Gaur S, Tripathi N, Pandey J. A hybrid DWT-SVD based adaptive image watermarking scheme. J Image Graph. 2023;11(4):414–27. doi:10.18178/joig.11.4.414-427.


Copyright © 2026 The Author(s). Published by Tech Science Press.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.