Open Access

ARTICLE

Promoting psychological well-being in AI-enhanced English as a foreign language learning: A mixed-methods study of motivation, language learning anxiety and trust in higher education

School of Foreign Languages, Nanyang Normal University, Nanyang, China

* Corresponding Author: Zhiyong Sun. Email:

Journal of Psychology in Africa 2026, 36(1), 33-43. https://doi.org/10.32604/jpa.2026.074741

Received 16 October 2025; Accepted 05 January 2026; Issue published 26 February 2026

Abstract

This mixed-methods study investigated how AI-enhanced English as a Foreign Language (EFL) learning environments influence students’ psychological well-being through the mediating roles of motivation and language learning anxiety and the moderating role of trust. Participants were Chinese university students (N = 310, 62% female, mean age = 18.9, SD = 0.8), of whom 15 completed interviews to supplement and clarify the survey evidence. Structural equation modeling results revealed that AI use had significant indirect effects on well-being through increased motivation and reduced language learning anxiety. Trust in AI significantly moderated both paths, amplifying the motivational benefits and anxiety reduction associated with AI use. Thematic analysis supported these results, identifying three experiential themes: (1) motivational empowerment through personalization, (2) anxiety regulation through safe practice and feedback, and (3) trust as the emotional bridge between AI and well-being. The study extends AI psychology applications by empirically linking technology engagement with affective outcomes and underscores the need for human-centered and trust-enhancing design in AI-supported education. From these findings, we conclude that adaptive, transparent, and autonomy-supportive AI systems promote self-determined motivation, emotional safety, and overall psychological health among EFL learners.

In today’s competitive academic environment, English as a Foreign Language (EFL) learning often entails considerable psychological pressure from high-stakes exams and fear of making language mistakes in public (Yan et al., 2025; Ding, 2024). To ease such stress, artificial intelligence (AI) technologies such as AI tutors, chatbots, and automated writing evaluators are increasingly integrated into EFL classrooms to provide personalized and adaptive learning support. The rapid integration of AI into education has reshaped language learning and raised critical questions about learners’ emotional and psychological health (Yang & Rui, 2025; Zhang & Liu, 2025). These tools provide individualized feedback, tailored exercises, and self-paced learning opportunities that could mitigate anxiety and enhance motivation (Wang et al., 2025a).

However, several studies have shown that AI systems may initially intensify this anxiety, especially when learners confront unfamiliar interfaces, automated feedback, or opaque algorithmic judgments (Xin & Derakhshan, 2024; Zhang & Liu, 2025). It therefore remains unclear to what extent AI increases or decreases anxiety in EFL learners, and how this relates to their trust in the system, that is, the belief that AI tools are reliable, fair, transparent and aligned with learning goals (Qin et al., 2020; Wang et al., 2025b). Moreover, understanding students’ motivation and emotional responses is essential for supporting their psychological well-being and promoting effective, learner-centered technology integration. This study aimed to examine how AI-enhanced EFL learning environments shape students’ psychological well-being by investigating the roles of motivation, language learning anxiety, and trust in AI.

AI in EFL learning and student well-being

As previously noted, in EFL contexts, intelligent tutors, adaptive vocabulary apps, automated writing evaluators and conversational chatbots provide personalized, interactive and self-paced learning opportunities. These tools deliver immediate feedback and level-appropriate tasks, which can enhance engagement and performance (Cengiz & Aptoula, 2025). Beyond achievement, recent work examines effects on well-being, motivation, and affect, reflecting a broader shift toward positive psychology in language education that prioritizes autonomy-supportive, strengths-based environments (Aydın & Tekin, 2023). For instance, EFL students’ acceptance of AI (capturing usefulness, ease, and emotional comfort) predicts greater classroom engagement via enhanced motivation (Yuan & Liu, 2024), aligning with the view that nurturing positive emotion and motivation promotes both learning and mental well-being (Brooks & Brooks, 2023).

Motivation and learning anxiety mediation

Motivation, and in particular its quality (autonomous or controlled), is a key determinant of success in English as a Foreign Language (EFL) learning. According to Noels et al. (2000), autonomous or intrinsic motivation promotes persistence and enjoyment, whereas controlled motivation is task specific. Within AI-enhanced learning environments, motivation flourishes when technology supports the basic psychological needs of autonomy and competence. For instance, Wei (2023) found that AI-mediated instruction significantly increased EFL learners’ L2 motivation and self-regulated learning compared to traditional instruction, suggesting that adaptive and immediate feedback can foster motivational growth. Similarly, Loewen et al. (2020) reported that learners’ motivation to use a language learning app predicted greater gains in receptive linguistic knowledge and communicative ability, illustrating the motivational role of app usage. Likewise, Stockwell and Reinders (2019) argued that technology contributes to motivation and learner autonomy when pedagogically aligned with learners’ goals, suggesting that technology’s benefits depend on how it is integrated into the learning context. These studies align with the view that thoughtfully designed technology can create autonomy-supportive, competence-enhancing environments that bolster learner motivation. Conversely, when AI tools are perceived as controlling or overly reliant on external rewards, they could frustrate autonomy and diminish intrinsic motivation. Thus, the motivational impact of AI in EFL learning depends not only on technological sophistication but also on the extent to which its design and implementation align with learners’ psychological needs.

Language learning anxiety refers to the tension, worry, or apprehension that learners experience in their learning endeavors (Horwitz et al., 1986), which, when excessive, could hinder students’ achievement. AI-supported tools may help lower anxiety by providing judgment-free practice opportunities, individualized feedback, and private rehearsal spaces that reduce the fear of social evaluation (Wang, 2025). At the same time, AI-generated feedback, unfamiliar interfaces, and uncertainty about how automated systems evaluate performance may introduce new sources of apprehension. The emotional impact of AI-enhanced learning, therefore, depends on learners’ prior experiences, their perceptions of the technology, and their trust in the accuracy and fairness of AI tools.

Trust refers to belief in the reliability, benevolence, and fairness of a system or procedure (Abdelazim et al., 2025). To be more specific, high trust amplifies the positive effect of perceived usefulness on behavioral intention while dampening the negative effect of perceived risk. Teachers’ trust in AI critically influences their willingness to integrate it. For students, transparency, perceived value, and data security shape trust, while clear functionality, user-friendliness and instructor endorsement enhance acceptance and sustained engagement (Cukurova, 2024; Nazaretsky et al., 2022). Trust also influences learners’ emotional and motivational states, as higher trust reduces anxiety and enhances willingness to engage, whereas distrust heightens worry and leads to disengagement (Payne et al., 2022). Trust-building levers include explanations, predictable performance, privacy assurances and institutional endorsement.

The present study adopts a unified framework that integrates Self-Determination Theory (SDT) with the Unified Theory of Acceptance and Use of Technology (UTAUT) through the bridging construct of trust in AI (Huang et al., 2023). UTAUT-based factors, such as perceived usefulness, ease of use, and perceived risk, shape learners’ willingness to engage with AI tools, while SDT explains how such engagement supports (or frustrates) basic psychological needs for autonomy, competence, and relatedness (Chen et al., 2024; Kenesei et al., 2025). Trust functions as the linking mechanism that determines whether students meaningfully engage with AI-based learning systems and thereby gain access to these need-supportive affordances. Within this integrated perspective, AI use is expected to influence motivation and anxiety through SDT-aligned processes, whereas trust moderates these effects by shaping the quality of learners’ engagement.

English as a Foreign Language (EFL) education occupies a central position in China’s higher education system, where English proficiency is closely linked to academic progression, employability and global engagement. At the university level, EFL instruction has traditionally been characterized by large class sizes, exam-oriented curricula and a strong emphasis on accuracy and test performance, factors that have been associated with elevated levels of language learning anxiety and limited opportunities for individualized practice (Zhao & Qi, 2022). In response to these structural constraints, Chinese universities have increasingly turned to educational technologies to supplement classroom instruction, aiming to enhance learner engagement, provide personalized feedback, and support autonomous learning beyond limited contact hours (Zhang et al., 2024a). Within this context, technology is often positioned not as a replacement for teachers but as a means of extending instructional support and alleviating pressures inherent in traditional EFL settings.

In recent years, artificial intelligence–enabled tools such as intelligent tutoring systems, automated writing evaluation, adaptive vocabulary platforms, and conversational chatbots have been rapidly adopted in Chinese EFL contexts (Guo, 2025). Empirical studies suggest that these technologies can improve learning efficiency and learner engagement by offering immediate feedback, adaptive difficulty levels, and opportunities for private rehearsal, which are particularly valued in environments where fear of negative evaluation is prevalent (e.g., Liu & Yu, 2022; Wang et al., 2024). At the same time, research indicates that students’ emotional responses to AI-based learning are mixed: while some learners report increased motivation and confidence, others express concerns about algorithmic transparency, data privacy and the perceived authority of automated feedback (Chen & Chen, 2024). These findings highlight the importance of trust and emotional acceptance as contextual factors shaping the effectiveness of AI-enhanced EFL learning in China. Accordingly, examining motivation, anxiety, and trust within this educational setting provides a meaningful lens for understanding how emerging technologies influence students’ psychological well-being in technology-mediated language learning environments.

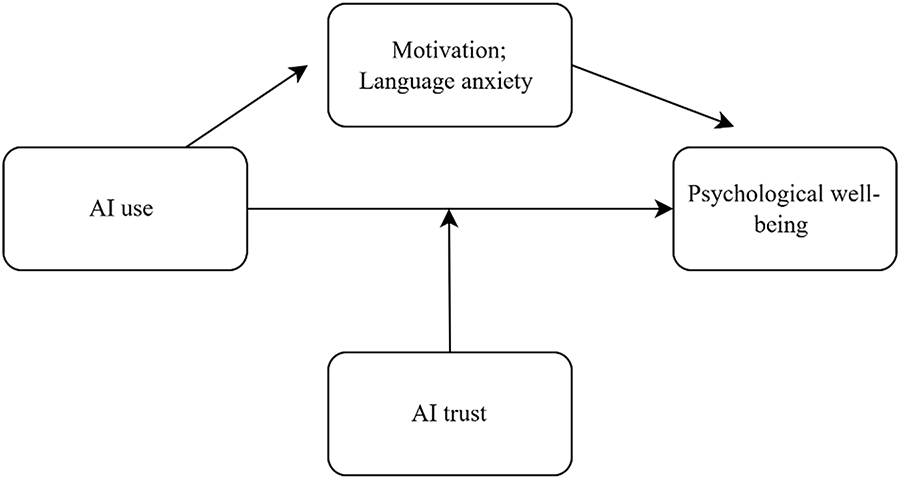

This study investigated whether engagement with AI-based learning tools is associated with increased motivation and reduced language learning anxiety, and whether learners’ trust in AI conditions these relationships (see Figure 1 for the conceptual framework). We tested the following hypotheses.

Figure 1: Conceptual framework

H1: The use of AI-based learning tools is associated with higher EFL learning motivation.

H2: The use of AI-based learning tools is associated with lower language learning anxiety.

H3: Motivation and language learning anxiety mediate the relationship between AI use and psychological well-being, with higher motivation and lower anxiety jointly contributing to improved well-being.

H4: Trust in AI moderates the effects of AI use on motivation and language learning anxiety, such that higher trust strengthens the positive association between AI use and motivation and weakens the negative association between AI use and anxiety.

This study employed a sequential explanatory mixed-methods design (Creswell & Creswell, 2017) consisting of a quantitative survey followed by qualitative interviews. The design enabled the researchers to first examine structural relationships among AI use, motivation, language learning anxiety, trust, and psychological well-being, and then explore students’ lived experiences in depth. This design was selected to allow triangulation and to validate the multidimensional latent structure later tested through higher-order confirmatory factor analysis (CFA).

Participants were 310 EFL learners from two Chinese universities (62% female, 38% male; ages 17–21, M = 18.9, SD = 0.8). The participants represented diverse majors, with 85% reporting weekly use of AI tools over the semester.

The mixed methods study utilized surveys with all participants and interviews with a subset of the participants. The surveys and interview data collection tools are described next.

The Language Learning Orientation Scale (LLOS, Noels et al., 2000) consists of 21 items to assess students’ motivational orientations for learning English. The scale domains included intrinsic motivation (e.g., “I learn English because I enjoy it”), identified regulation (e.g., “Learning English is important to me because I value being able to communicate globally”), introjected regulation (e.g., “I would feel guilty if I didn’t learn English well”), external regulation (e.g., “I study English to get rewards or avoid punishments”), and amotivation (e.g., “I’m not sure why I’m learning English; it feels pointless”). We translated and slightly modified some items to mention the use of AI tools where relevant (for instance, an intrinsic motivation item became “I enjoy using AI language apps to improve my English”). Students rated items on a 7-point Likert scale (1 = strongly disagree, 7 = strongly agree). An overall autonomous motivation score was obtained by averaging the intrinsic and identified items, and an external motivation score by averaging introjected and external items (amotivation was analyzed separately). In this study, the LLOS scores showed good reliability (Cronbach’s α = 0.88 for intrinsic-identified motivation composite; 0.82 for extrinsic composite; the amotivation subscale α = 0.75).
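The composite scoring described above can be sketched as follows. This is a minimal illustration only: the item keys (`q1` to `q10`) and their assignment to subscales are hypothetical, since the actual LLOS item numbering is not given here, and the function name `score_llos` is likewise an assumption.

```python
from statistics import mean

# Hypothetical item-to-subscale mapping (NOT the real LLOS item numbering).
SUBSCALES = {
    "intrinsic": ["q1", "q2"],
    "identified": ["q3", "q4"],
    "introjected": ["q5", "q6"],
    "external": ["q7", "q8"],
    "amotivation": ["q9", "q10"],
}


def score_llos(responses):
    """Return composite scores from one respondent's 1-7 Likert ratings."""

    def avg(*names):
        # Pool the items of the named subscales and average them.
        return mean(responses[q] for name in names for q in SUBSCALES[name])

    return {
        "autonomous": avg("intrinsic", "identified"),
        "external_composite": avg("introjected", "external"),
        "amotivation": avg("amotivation"),  # analyzed separately
    }


# Invented ratings for a single respondent.
example = {"q1": 6, "q2": 7, "q3": 5, "q4": 6, "q5": 3,
           "q6": 4, "q7": 2, "q8": 3, "q9": 1, "q10": 2}
scores = score_llos(example)
```

Pooling items before averaging, rather than averaging subscale means, follows the wording "averaging the intrinsic and identified items"; with equal-length subscales the two approaches give the same result.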

The Foreign Language Classroom Anxiety Scale (FLCAS, Horwitz et al., 1986; Liu & Jackson, 2008) comprises 12 items on learners’ affective response to classroom language use, capturing the intertwined dimensions of communication apprehension, test anxiety, and fear of negative evaluation. Items were rated on a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree), with higher scores indicating greater levels of language learning anxiety. Negatively phrased statements were reverse-coded to ensure consistent scoring direction. In the present study, FLCAS internal reliability was high (Cronbach’s α = 0.89), consistent with prior research reporting alpha values above 0.85 in Asian EFL contexts (Hu et al., 2021).
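Reverse-coding and the reliability estimate mentioned above can be illustrated with a short sketch. The data are invented toy values and the helper names are assumptions; the study itself computed Cronbach’s α in SPSS.

```python
from statistics import variance


def reverse_code(score, low=1, high=5):
    """Flip a Likert rating so that 1 <-> 5, 2 <-> 4, and so on."""
    return low + high - score


def cronbach_alpha(rows):
    """Cronbach's alpha for rows of respondents, each a list of k item scores."""
    k = len(rows[0])
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Toy data (invented): 4 respondents x 3 items; the third item is
# negatively phrased and must be reversed before scoring.
raw = [[4, 5, 2], [2, 2, 4], [3, 3, 3], [5, 4, 1]]
coded = [[r[0], r[1], reverse_code(r[2])] for r in raw]
alpha = cronbach_alpha(coded)
```

Skipping the reversal step would leave the third item negatively correlated with the others and would depress the α estimate, which is why the recoding is done first.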

Trust in AI was assessed using an adapted version of the 8 items from the Trust in AI-EdTech Scale (TAIET, Nazaretsky et al., 2025), which conceptualizes trust as a multidimensional construct comprising four dimensions: reliability, benevolence, transparency, and data security/privacy. These dimensions reflect students’ perceptions of the accuracy of AI feedback, the supportive intentions of the AI, clarity regarding how the system operates, and protection of personal data (e.g., “I trust the AI’s feedback on my English is accurate,” “I believe the AI system keeps my data secure”). Responses were rated on a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree). Composite reliability values for the four TAIET dimensions ranged from 0.78 to 0.88, and the higher-order construct demonstrated strong internal consistency (Cronbach’s α = 0.86).

AI use was measured using a brief three-item behavioral scale adapted from the Media and Technology Usage and Attitudes Scale (MTUAS, Rosen et al., 2013), which assesses frequency-based technology engagement. Responses were rated on a 1–5 Likert scale (1 = never, 5 = very often), with higher scores indicating more frequent and varied AI-supported learning behaviors. Internal consistency for the AI use scale was acceptable (α = 0.82), with item-total correlations ranging from 0.54 to 0.68, indicating that the scale reliably assessed students’ AI use in EFL learning contexts.

Students’ psychological well-being was assessed using the 14-item Warwick-Edinburgh Mental Well-being Scale (WEMWBS, Tennant et al., 2007), a measure of hedonic (e.g., happiness, life satisfaction) and eudaimonic (e.g., personal growth, positive functioning) aspects of well-being. Respondents rated each statement (e.g., “I’ve been feeling optimistic about the future,” “I’ve been feeling useful”) on a 5-point Likert scale ranging from 1 = none of the time to 5 = all of the time. Scores were summed to produce a composite well-being index, with higher scores indicating greater mental well-being. The WEMWBS scores yielded high internal reliability in the present study (Cronbach’s α = 0.91) and have been validated among Chinese university students (Fung, 2019).

A semi-structured interview guide was developed to explore students’ perceptions and experiences with AI-assisted EFL learning. Key questions addressed changes in motivation, emotional responses (e.g., anxiety, confidence), trust in AI, and perceived effects on learning and well-being. Participants were also asked to compare AI-based and traditional learning methods. Interviewers used probes and follow-up prompts to elicit concrete examples and reflections on changes over time. All interviews were audio-recorded, transcribed in Chinese, and translated into English with bilingual verification to ensure accuracy and meaning preservation.

The Nanyang Normal University Ethics Committee approved the study. Participants consented to the study. They were informed of the study’s purpose, confidentiality, and voluntary participation.

Quantitative data were analyzed using SPSS 26.0 and AMOS 24.0. Descriptive statistics were first computed to summarize demographic information and key variables (AI use, motivation, anxiety, trust, and well-being). Reliability analyses (Cronbach’s α) assessed internal consistency, and Pearson correlations examined bivariate relationships among constructs. To ensure measurement coherence across constructs, AI use was analyzed using the unified 3-item Frequency–Duration–Breadth (FDB) scale and all latent variables were evaluated using consistent Likert-type responses to avoid scale inconsistency issues.

Before estimating the structural model, Confirmatory Factor Analysis (CFA) was performed to assess construct validity. Both first-order and higher-order CFA models were tested to accommodate the multidimensional structure of motivation and language learning anxiety. The first-order four-factor model (AI use, motivation, language learning anxiety, trust) demonstrated good fit: CFI = 0.94, TLI = 0.92, RMSEA = 0.055. A second-order model was then estimated, specifying motivation, anxiety, and trust as first-order dimensions loading onto a higher-order construct of psychological experience. The higher-order model also showed acceptable fit (CFI = 0.93, TLI = 0.91, RMSEA = 0.057), and the chi-square difference test indicated no significant deterioration in fit (Δχ² = 12.1, Δdf = 9, p = 0.21). These results indicate that the multidimensional structure of the model is statistically sound and can be represented by both first- and higher-order latent structures.
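The p-value of the chi-square difference test can be checked from the reported Δχ² and Δdf alone. The sketch below is an illustration, not the AMOS procedure: it uses only the Python standard library, and the helper name `chi2_sf` is an assumption. It evaluates the upper tail of the chi-square distribution through the recurrence Q(a + 1, t) = Q(a, t) + tᵃe⁻ᵗ/Γ(a + 1) for the regularized upper incomplete gamma function, which is valid for integer degrees of freedom.

```python
from math import erfc, exp, gamma, sqrt


def chi2_sf(x, df):
    """Upper-tail probability P(X > x) for a chi-square variate, integer df."""
    t = x / 2.0
    if df % 2 == 0:
        a, q = 1.0, exp(-t)          # closed form for Q(1, t)
    else:
        a, q = 0.5, erfc(sqrt(t))    # closed form for Q(1/2, t)
    # Step a up to df / 2 using Q(a + 1, t) = Q(a, t) + t**a * exp(-t) / Gamma(a + 1).
    while a < df / 2.0:
        q += t ** a * exp(-t) / gamma(a + 1.0)
        a += 1.0
    return q


# Values reported in the text: delta chi-square = 12.1 on 9 degrees of freedom.
p_value = chi2_sf(12.1, 9)
```

Rounded to two decimals, `p_value` agrees with the reported p = 0.21, so the constrained higher-order structure does not fit significantly worse than the first-order model at conventional α levels.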

Because the higher-order model fit the data acceptably and without significant deterioration relative to the first-order model, higher-order latent constructs were used in the SEM. Model fit was evaluated using conventional indices (χ²/df, CFI, TLI, RMSEA, and SRMR).

To address potential common method bias (CMB), two statistical procedures were performed before model estimation. First, Harman’s single-factor test was conducted using an exploratory factor extraction to assess whether a single factor accounted for the majority of variance. Second, a confirmatory common latent factor (CLF) approach was applied in AMOS to evaluate inflation in standardized loadings attributable to method effects. The first unrotated factor accounted for 28.6% of the variance, below the conventional 40% threshold, suggesting that no single factor dominated the variance. In the CLF model, standardized factor loadings changed by less than 0.05 when the latent method factor was included. Together, these results indicate that common method variance was unlikely to substantially bias the study’s findings.

Structural equation modeling (SEM) was then conducted in AMOS to test the hypothesized direct and indirect relationships among AI use, motivation, language learning anxiety, trust and psychological well-being. To ensure the validity of the multidimensional constructs included in this model, the higher-order confirmatory factor analysis (CFA) was conducted prior to SEM, and its results supported the use of higher-order latent constructs for motivation and language learning anxiety. The final measurement model exhibited good fit to the data: χ²(220, N = 310) = 430.5, p < 0.001; χ²/df = 1.96; CFI = 0.94; TLI = 0.92; RMSEA = 0.055 (90% CI [0.046, 0.063]); SRMR = 0.049. These indices all met conventional cutoffs (CFI and TLI > 0.90; RMSEA < 0.06; SRMR < 0.08), indicating that the measurement structure adequately represented the observed covariance matrix.

The mediating effects of motivation and anxiety were examined using bias-corrected bootstrapping, and the moderating role of trust was assessed through a multi-group SEM comparison (high-trust vs. low-trust groups). All SEM paths and latent constructs were aligned with the revised, validated measurement structure to ensure conceptual and statistical coherence. Although structural equation modeling was used to examine hypothesized directional pathways, the cross-sectional nature of the quantitative data limits causal inference. The SEM paths, therefore, represent associations rather than causal effects.
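Two of the reported fit indices can be approximately recovered from the model χ², its degrees of freedom, and the sample size. The sketch below assumes the common single-group RMSEA point-estimate formula, sqrt(max(χ² − df, 0) / (df · (N − 1))); the function name `fit_summary` is hypothetical, and the exact computation used by the software may differ slightly.

```python
from math import sqrt


def fit_summary(chi2, df, n):
    """Normed chi-square and RMSEA point estimate from chi2, df and sample size."""
    normed = chi2 / df
    # Assumed formula: single-group RMSEA with N - 1 in the denominator.
    rmsea = sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))
    return normed, rmsea


# Values reported for the final measurement model.
normed, rmsea = fit_summary(430.5, 220, 310)
```

The normed chi-square rounds to the reported 1.96. The RMSEA point estimate comes out at roughly 0.056, close to the reported 0.055; a gap of this size may simply reflect rounding of the published χ² value.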

For the qualitative phase, thematic analysis was employed following Braun and Clarke’s (2006) six-step approach: familiarization, coding, theme generation, review, definition, and reporting. NVivo 12 software was used to organize and code the transcripts. Themes were developed inductively to capture students’ lived experiences regarding motivation, anxiety, trust, and well-being in AI-enhanced EFL learning. Triangulation between quantitative and qualitative findings strengthened the validity of interpretations.

Descriptive and correlational analyses

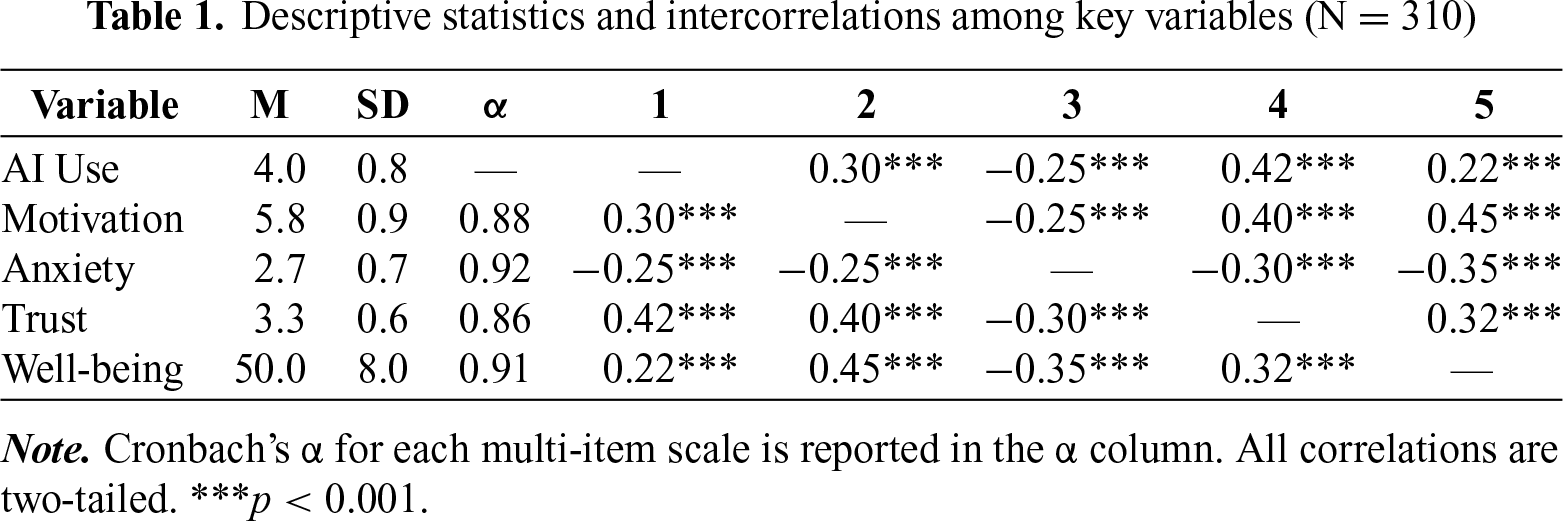

Descriptive statistics and reliability coefficients for major constructs are presented in Table 1. Students reported high autonomous motivation (M = 5.8, SD = 0.9, on a 7-point scale), moderate trust in AI tools (M = 3.3, SD = 0.6, on a 5-point scale), and mild to moderate language learning anxiety (M = 2.7, SD = 0.7). Cronbach’s α values ranged from 0.82 to 0.92, indicating good internal consistency.

As hypothesized, motivation and trust correlated positively with well-being, whereas language learning anxiety was negatively correlated (all p < 0.001). Specifically, motivation (r = 0.45) and trust (r = 0.32) were positively associated with well-being, while language learning anxiety was negatively associated (r = –0.35). Intercorrelations among predictors were also in expected directions: trust correlated positively with motivation (r = 0.40) and negatively with language learning anxiety (r = –0.30). Frequency of AI use correlated modestly with motivation (r = 0.30) and inversely with language learning anxiety (r = –0.25). Overall, these correlations suggested that higher trust and motivation, and lower language learning anxiety, were linked to greater psychological well-being in AI-assisted EFL learning contexts.

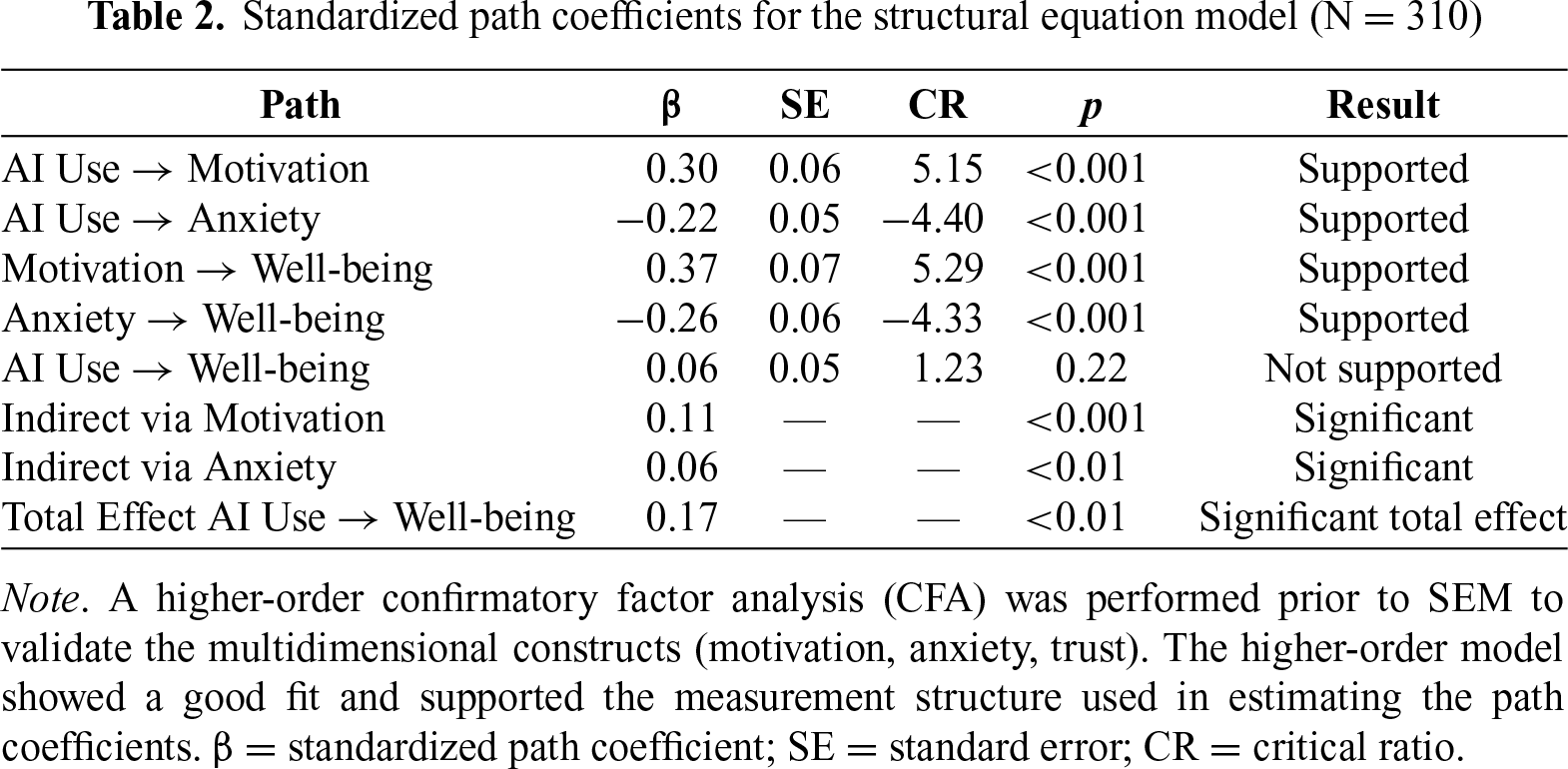

AI effects on motivation and anxiety. As predicted, AI use had significant direct effects on both motivation and anxiety. Specifically, higher engagement with AI-based EFL tools significantly increased motivation (β = 0.30, p < 0.001) and decreased anxiety (β = –0.22, p < 0.001). These findings suggest that frequent interaction with AI learning platforms may simultaneously stimulate learners’ enthusiasm and reduce apprehension toward English learning. Furthermore, motivation positively predicted psychological well-being (β = 0.37, p < 0.001), whereas anxiety negatively predicted well-being (β = –0.26, p < 0.001).

Motivation and anxiety mediation. As Table 2 illustrates, both mediation paths were significant based on 5000 bias-corrected bootstrap samples: the indirect effect of AI use on well-being via motivation was significant (β = 0.11, 95% CI [0.06, 0.18]), as was the indirect effect via anxiety reduction (β = 0.06, 95% CI [0.02, 0.12]). The total effect of AI use on well-being remained significant (β = 0.17, p < 0.01), whereas the direct path from AI use to well-being became nonsignificant once the mediators were included (β = 0.06, p = 0.22), indicating full mediation by motivation and anxiety.
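The logic of a bootstrapped indirect effect can be illustrated with a simplified sketch. Note the substitutions: the study used bias-corrected bootstrapping within a latent-variable SEM in AMOS, whereas the code below uses a plain percentile bootstrap with ordinary least squares on simulated observed scores. All data, parameter values, and function names are hypothetical.

```python
import random


def indirect_effect(x, m, y):
    """a*b from two OLS fits: m ~ x (slope a) and y ~ m + x (slope b on m)."""
    n = len(x)
    mx, mm, my = sum(x) / n, sum(m) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    smm = sum((mi - mm) ** 2 for mi in m)
    sxm = sum((xi - mx) * (mi - mm) for xi, mi in zip(x, m))
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    smy = sum((mi - mm) * (yi - my) for mi, yi in zip(m, y))
    a = sxm / sxx
    # Partial slope of y on m, controlling for x (two-predictor normal equations).
    b = (smy * sxx - sxy * sxm) / (smm * sxx - sxm ** 2)
    return a * b


def percentile_ci(x, m, y, n_boot=1000, level=0.95, seed=0):
    """Percentile bootstrap CI for the indirect effect (resampling cases)."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        estimates.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
    estimates.sort()
    lo = estimates[int((1 - level) / 2 * n_boot)]
    hi = estimates[int((1 + level) / 2 * n_boot) - 1]
    return lo, hi


# Simulated data (invented): x -> m -> y with true paths a = 0.5 and b = 0.5.
rng = random.Random(42)
x = [rng.gauss(0, 1) for _ in range(300)]
m = [0.5 * xi + rng.gauss(0, 1) for xi in x]
y = [0.5 * mi + rng.gauss(0, 1) for mi in m]
est = indirect_effect(x, m, y)
lo, hi = percentile_ci(x, m, y)
```

As in the reported analysis, an indirect effect is treated as significant when the confidence interval excludes zero. The bias-corrected variant additionally shifts the percentile cut points to account for asymmetry in the bootstrap distribution.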

Taken together, these results confirm that AI use influences learners’ psychological well-being primarily through motivational enhancement and anxiety reduction rather than through direct effects. The integration of the validated higher-order measurement structure strengthens confidence in these pathways, demonstrating that the latent variables operated consistently across the model.

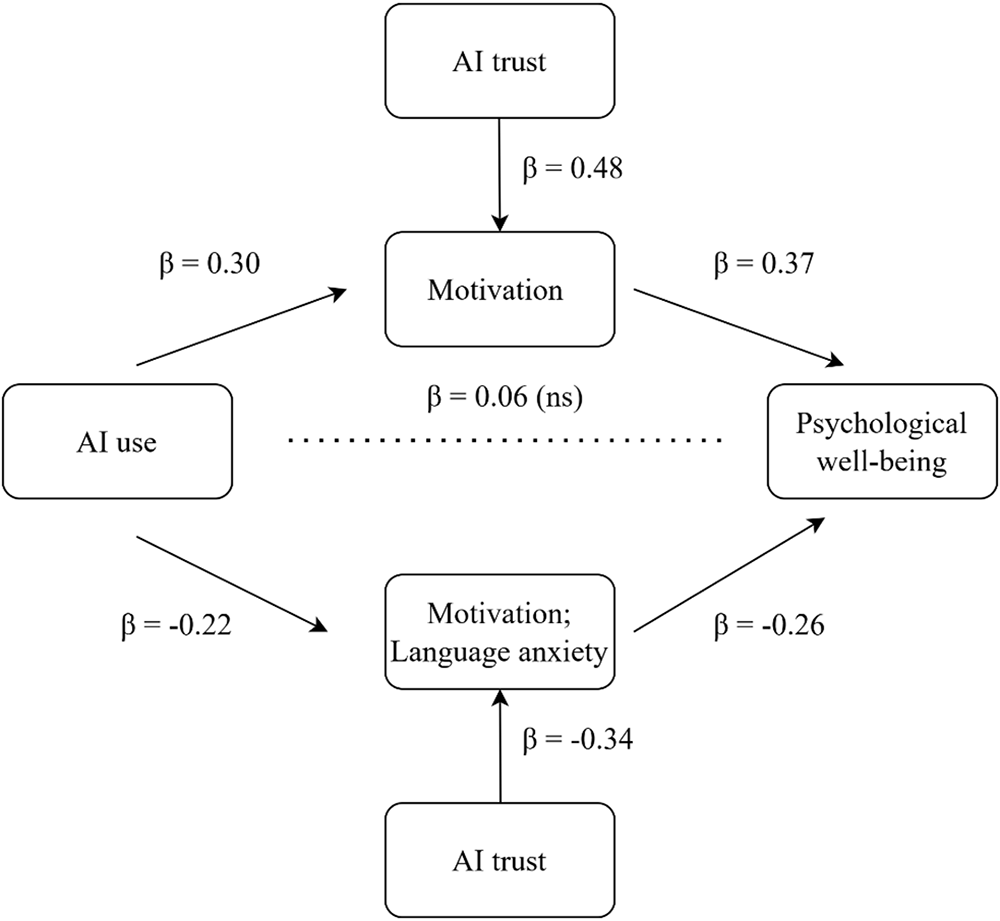

As shown in Figure 2, AI use had a significant positive effect on motivation (β = 0.30, p < 0.001) and a significant negative effect on anxiety (β = –0.22, p < 0.001). Motivation positively predicted psychological well-being (β = 0.37, p < 0.001), whereas anxiety negatively predicted well-being (β = –0.26, p < 0.001). The direct path from AI use to psychological well-being was small and nonsignificant (β = 0.06, p = 0.22), indicating that the effect of AI use on well-being operated primarily through motivation and anxiety. Overall, the findings support the proposed model. Hypotheses 1 and 2 were confirmed, indicating that greater AI use was associated with higher motivation and lower language learning anxiety. Hypothesis 3 was also supported, as motivation and anxiety fully mediated the relationship between AI use and psychological well-being, suggesting that AI exerts its influence primarily through affective-motivational pathways.
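The reported indirect effects follow from the product-of-coefficients rule, which can be verified with simple arithmetic on the standardized paths given in the text. The variable names below are illustrative only.

```python
# Standardized paths as reported in the text.
a_mot, b_mot = 0.30, 0.37     # AI use -> motivation, motivation -> well-being
a_anx, b_anx = -0.22, -0.26   # AI use -> anxiety, anxiety -> well-being
direct = 0.06                 # AI use -> well-being (nonsignificant)

ind_mot = a_mot * b_mot       # indirect effect via motivation
ind_anx = a_anx * b_anx       # indirect effect via anxiety (two negatives)
total_indirect = ind_mot + ind_anx

# Share of the combined (direct + indirect) effect carried by the mediators.
share_indirect = total_indirect / (total_indirect + direct)
```

The products round to 0.11 and 0.06, matching the reported indirect effects, and their sum rounds to 0.17, which coincides with the value the text gives for the total effect. Under the usual direct-plus-indirect decomposition, adding the nonsignificant direct path of 0.06 would yield about 0.23, with roughly three quarters of that carried by the two mediators.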

Figure 2: Structural equation model of AI-enhanced EFL learning

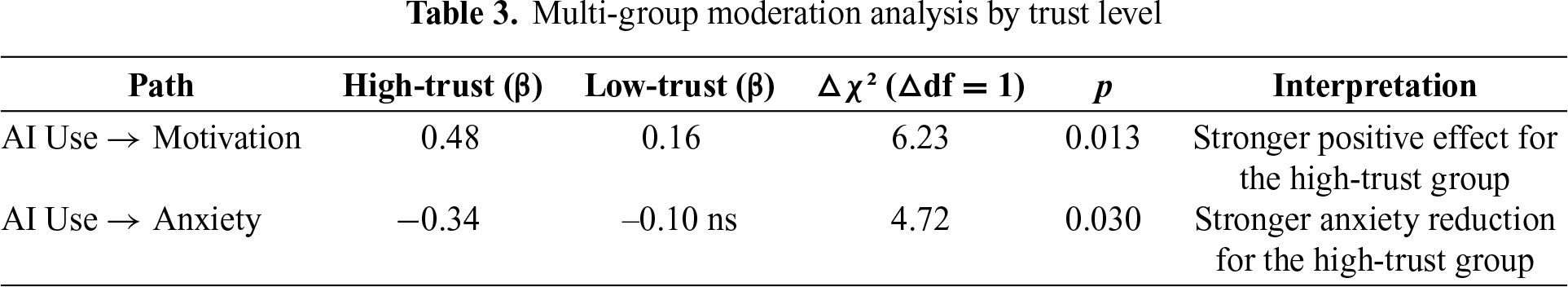

Trust in AI moderation. To test the moderating hypothesis, a multi-group SEM was performed by dividing participants into high-trust and low-trust groups (based on a median split of the trust scores). The freely estimated model fit the data significantly better than the constrained model (

Overall, these results suggest that trust in AI acts as a psychological catalyst in AI-enhanced language learning. When students trusted the AI tools, more frequent use translated into stronger motivation and reduced anxiety, thereby fostering a more positive emotional learning climate. Conversely, when trust was low, increased AI use did not yield comparable psychological benefits and sometimes provoked unease or resistance. Hypothesis 4 was confirmed, with trust in AI strengthening the positive effect of AI use on motivation and amplifying its anxiety-reducing effect.
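The grouping step behind the multi-group comparison can be sketched as below. The trust scores are invented, the function name is hypothetical, and the handling of ties is an assumption, since the text does not state how scores exactly at the median were assigned.

```python
from statistics import median


def median_split(trust_scores):
    """Label each respondent 'high' or 'low' relative to the sample median."""
    cut = median(trust_scores)
    # Assumption: scores equal to the median are placed in the low group.
    return ["high" if s > cut else "low" for s in trust_scores]


# Invented mean trust scores on the 1-5 scale for six respondents.
groups = median_split([2.5, 3.0, 3.3, 3.8, 4.1, 2.9])
```

The resulting labels would then define the two subsamples on which the freely estimated and constrained structural models are compared. A median split discards within-group variation in trust, so it is a coarser test of moderation than a continuous interaction term.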

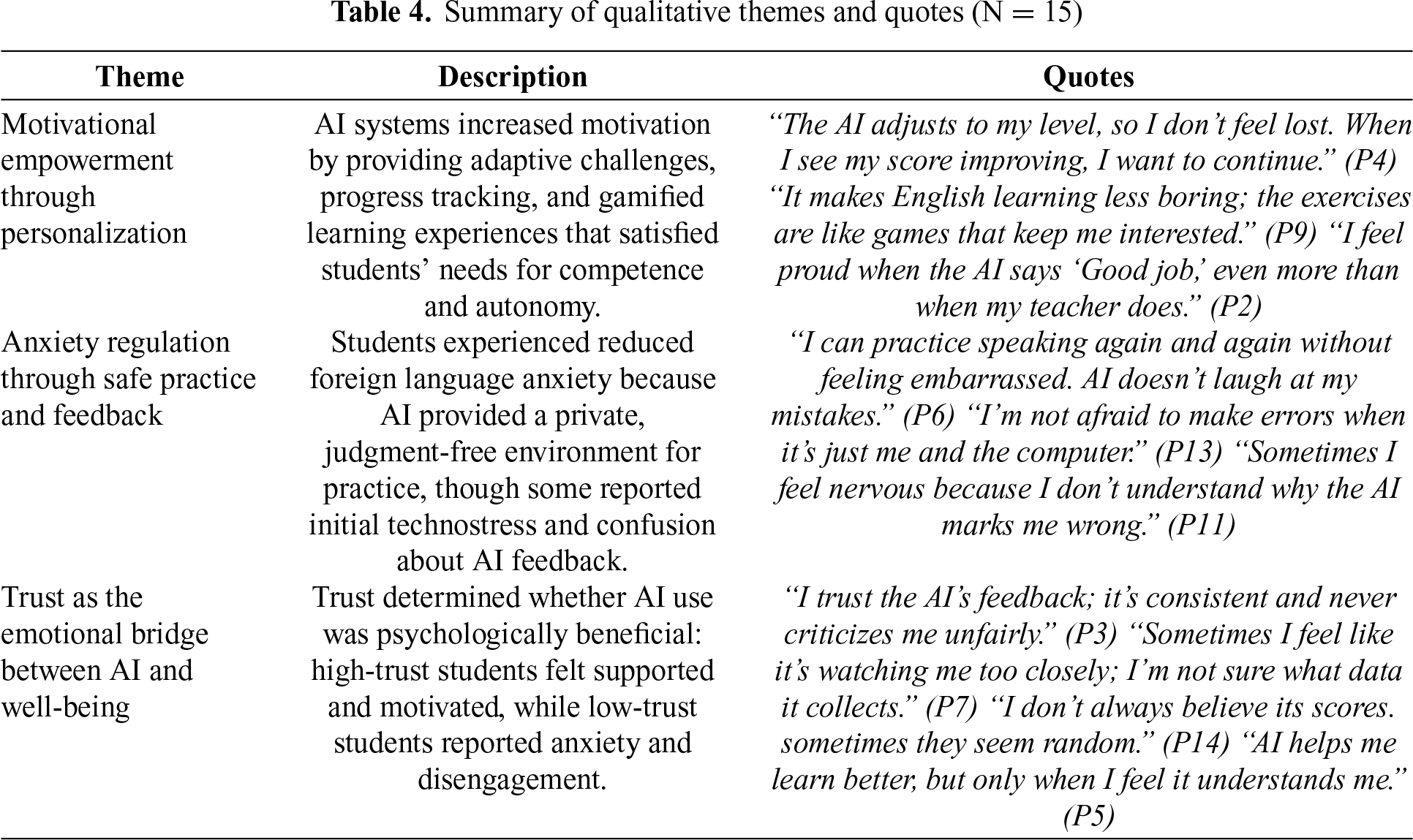

To complement the quantitative analysis, thematic examination of the 15 semi-structured interviews provided nuanced insight into students’ lived experiences with AI-enhanced English learning. As illustrated in Table 4, three major themes emerged: (1) Motivational Empowerment through Personalization, (2) Anxiety Regulation through Safe Practice and Feedback, and (3) Trust as the Emotional Bridge between AI and Well-being. These findings further illuminate how motivation, anxiety, and trust interact to influence learners’ psychological well-being in the AI-supported EFL environment.

Theme 1: Motivational empowerment through personalization. Most participants reported that AI tools fostered their motivation by providing adaptive challenges, clear progress tracking, and interactive learning experiences. As one student stated, “The AI adjusts to my level, so I don’t feel lost. When I see my score improving, I want to continue” (P4). Another remarked, “It makes English learning less boring; the exercises are like games that keep me interested” (P9). The adaptive design and gamified feedback appeared to satisfy students’ needs for competence and autonomy, reinforcing intrinsic motivation as predicted by Self-Determination Theory. Students who perceived the AI as fair and responsive described greater confidence and enjoyment, which translated into stronger engagement and well-being. For example, P2 commented, “I feel proud when the AI says ‘Good job,’ even more than when my teacher does.”

Theme 2: Anxiety regulation through safe practice and feedback. Participants widely agreed that AI-based systems reduced their traditional language learning anxiety by offering a private, judgment-free space. “I can practice speaking again and again without feeling embarrassed. AI doesn’t laugh at my mistakes,” explained P6. Similarly, P13 observed, “I’m not afraid to make errors when it’s just me and the computer.” These experiences suggest that AI tools can help students manage fear of negative evaluation, a central component of foreign language anxiety. However, several participants also reported language learning anxiety stemming from technological uncertainty. For instance, P11 admitted, “Sometimes I feel nervous because I don’t understand why the AI marks me wrong.” A few also expressed frustration over inconsistent feedback or system errors, reflecting temporary technostress during adaptation. Over time, most participants described reduced anxiety as familiarity grew, which was consistent with the quantitative evidence that frequent AI use was linked to lower overall anxiety.

Theme 3: Trust as the emotional bridge between AI and well-being. Across interviews, trust emerged as the decisive emotional factor determining whether AI use enhanced or hindered learning experiences. High-trust students viewed AI as a reliable and supportive partner. “I trust the AI’s feedback; it’s consistent and never criticizes me unfairly,” said P3. Another remarked, “I feel comfortable using it because it helps me fix my mistakes quickly” (P10). In contrast, low-trust participants expressed doubt about the AI’s reliability and privacy, which dampened motivation and heightened stress. “Sometimes I feel like it’s watching me too closely; I’m not sure what data it collects,” confessed P7. Similarly, P14 shared, “I don’t always believe its scores. Sometimes they seem random.” Collectively, these accounts illustrate that trust functions as an emotional moderator: when trust is high, AI reduces anxiety and strengthens motivation; when trust is low, it can provoke skepticism and disengagement.

In essence, the qualitative evidence reinforces the statistical findings, revealing that students’ psychological well-being in AI-enhanced EFL learning is co-constructed through personalization, emotional safety, and trust. AI technologies were most beneficial when perceived as empathetic learning partners that support autonomy and competence rather than as mechanical evaluators. As P5 aptly concluded, “AI helps me learn better, but only when I feel it understands me.”

Integration of the key findings

The integration of quantitative and qualitative findings demonstrates a coherent pattern: AI use enhances EFL learners’ psychological well-being indirectly through increased motivation and reduced anxiety, with trust functioning as a critical amplifier. The SEM results showed that motivation and anxiety fully mediated the effect of AI use on well-being, while trust moderated both pathways. Qualitative evidence enriched these results by revealing that adaptive feedback, gamified learning, and private practice environments fostered competence, autonomy, and emotional safety, which in turn strengthened motivation and reduced fear (P2, P4, P6). Yet, participants also highlighted that unclear AI feedback and data privacy concerns could provoke technostress (P7, P11, P14), explaining individual variability observed in the quantitative data. These qualitative insights triangulate with the validated higher-order quantitative model and address concerns about measurement coherence across constructs. Collectively, both strands converge on the conclusion that trust transforms AI from a neutral tool into a psychologically supportive partner, magnifying its positive impact on learners’ motivation, emotional balance, and overall well-being.
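To make the moderated mediation logic concrete, the following minimal sketch computes a conditional indirect effect at low, mean, and high trust. It assumes first-stage moderation, meaning that trust moderates the paths from AI use to each mediator, so the indirect effect at trust level W is (a_main + a_interaction × W) × b. All coefficient values and names (`ModeratedMediationPaths`, `conditional_indirect_effect`) are hypothetical illustrations and are not the estimates reported in this study.

```python
from dataclasses import dataclass


@dataclass
class ModeratedMediationPaths:
    """Hypothetical standardized path coefficients (NOT the study's estimates)."""
    a_main: float         # AI use -> mediator
    a_interaction: float  # (AI use x trust) -> mediator
    b: float              # mediator -> well-being


def conditional_indirect_effect(paths: ModeratedMediationPaths, trust: float) -> float:
    """Indirect effect of AI use on well-being at a given mean-centered trust level."""
    return (paths.a_main + paths.a_interaction * trust) * paths.b


# Illustrative values only, chosen to mimic the direction of the reported pattern.
motivation = ModeratedMediationPaths(a_main=0.30, a_interaction=0.10, b=0.40)
anxiety = ModeratedMediationPaths(a_main=-0.25, a_interaction=-0.10, b=-0.30)

# Trust is expressed in standard deviation units around its mean.
for label, trust in (("low trust (-1 SD)", -1.0),
                     ("mean trust", 0.0),
                     ("high trust (+1 SD)", 1.0)):
    via_motivation = conditional_indirect_effect(motivation, trust)
    via_anxiety = conditional_indirect_effect(anxiety, trust)
    print(f"{label}: via motivation = {via_motivation:.3f}, "
          f"via anxiety = {via_anxiety:.3f}")
```

Under these assumed values, both indirect effects are positive and grow as trust increases, which is the sense in which trust is described as an amplifier. In practice, such conditional effects would be estimated from the fitted model with bootstrapped confidence intervals rather than computed from point values.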

This study examined how AI-enhanced English learning environments affect students’ psychological well-being through the mediating roles of motivation and language learning anxiety, and the moderating influence of trust. Integrating quantitative and qualitative evidence revealed that AI implementation benefits learners primarily by fostering self-determined motivation and alleviating language learning anxiety, although these effects varied according to students’ trust in AI systems. The findings contribute to growing research at the intersection of artificial intelligence, psychology and positive education, offering a more differentiated account of how technology-mediated environments influence emotional functioning and mental well-being.

Consistent with SDT, AI-supported learning enhanced well-being when it satisfied students’ basic psychological needs for autonomy, competence, and relatedness. Quantitative analyses showed that motivation fully mediated the relationship between AI use and well-being, while interviews illustrated how adaptive feedback, individualized pacing, and gamified design reinforced students’ sense of progress and enjoyment. Learners felt empowered when AI tools allowed self-regulated learning and competence growth, reflecting autonomous motivation, which has been linked to persistence and happiness in prior language learning studies (e.g., Du, 2025; Yu & Tao, 2025). Moreover, this pattern aligns with emerging research showing that AI-driven personalization can strengthen intrinsic motivation by enhancing perceived mastery and meaningful goal progress in digital learning contexts (e.g., Almogren et al., 2024; Zhao et al., 2025). Thus, the current findings extend SDT into the AI-assisted context, demonstrating that when AI systems are designed to support psychological needs rather than impose control, they can serve as catalysts for both cognitive and emotional flourishing.

The present study also reinforces the significance of anxiety regulation in AI-enhanced EFL settings. Foreign language anxiety has long been recognized as a barrier to learning (Dryden et al., 2021), yet the qualitative narratives here suggest that AI can create emotionally safer spaces for practice. Students appreciated that AI feedback was private, impartial, and nonjudgmental, which reduced their fear of negative evaluation. This finding is consistent with evidence that low-stakes, technology-mediated rehearsal settings can reduce evaluation-related apprehension by fostering experimentation without social pressure (He et al., 2025). Nonetheless, a minority of students reported technology-related anxiety stemming from unclear evaluation mechanisms or fears of constant monitoring, which echoes the notion of technostress in educational technology (Tarafdar et al., 2017). These findings underscore the dual nature of AI: while it can mitigate traditional classroom stressors, it may also introduce new forms of psychological strain if transparency and usability are insufficient.

The most salient contribution of this study lies in confirming the central moderating role of trust. Trust emerged as both a technological and a psychological variable that conditioned whether AI use translated into positive or neutral emotional outcomes. Students with high trust perceived AI as a reliable and benevolent partner, reporting increased motivation and reduced anxiety, whereas those with low trust experienced skepticism and disengagement. These findings build on prior work demonstrating that trust is a core determinant of students’ acceptance of AI in education (Choung et al., 2022; Zhang et al., 2024b) and extend it by showing that trust also shapes the emotional consequences of AI use, not merely usage intention. Specifically, trust appeared to function as an interpretive filter: high-trust learners tended to attribute AI feedback to supportive intent, whereas low-trust learners construed similar feedback as overly critical or surveillance-oriented. Thus, cultivating trust in AI is not merely a technical matter but a pedagogical imperative that shapes learners’ emotional engagement.

In essence, the findings highlight that AI-enhanced learning environments can promote psychological well-being when they integrate technological functionality with human-centered design.

Implications for theory, research and practice

The findings underscore the importance of designing AI systems that foster students’ autonomy and competence while minimizing anxiety-provoking features. From a theoretical standpoint, the results support the integration of SDT and AI psychology, highlighting that AI-based learning environments can enhance psychological well-being when they satisfy basic psychological needs for autonomy, competence, and relatedness. The study also contributes to the emerging field of artificial intelligence psychology by bridging motivational theory, affective regulation, and trust dynamics. Beyond theory, the findings have several important implications for both educational practice and psychological research. First, integrating AI systems into EFL learning can promote psychological well-being when these tools are designed and implemented in ways that support learners’ basic psychological needs for autonomy and competence. Educators should therefore emphasize need-supportive pedagogical uses of AI, allowing students to set personalized learning goals, select learning modes, and receive adaptive feedback that reinforces progress rather than control. Second, trust in AI emerged as a decisive factor shaping motivation and anxiety.

Practically, the decisive role of trust calls for teacher training and institutional policies that encourage transparency, ethical data practices, and empathy-oriented AI design. Ensuring that learners understand how AI evaluates their performance, how their data are handled, and how AI feedback supports growth may further strengthen trust and maximize the emotional benefits of AI-assisted learning. When learners perceive AI systems as trustworthy, supportive, and autonomy-enhancing, these tools can move beyond efficiency to become genuine promoters of emotional resilience and mental health in education. Based on this evidence, institutions and developers should prioritize transparency in algorithmic feedback, provide clear data privacy assurances, and encourage teachers to mediate students’ understanding of AI as a supportive tool rather than a surveillance mechanism. Third, this study highlights the potential of AI psychology as a multidisciplinary research area linking educational technology, affective science, and mental health promotion. By examining both the motivational and affective consequences of AI use, the current study provides an integrated perspective that has been relatively overlooked in previous single-variable investigations.

Limitations and future directions

Despite its contributions, this study has several limitations. Firstly, the sample was restricted to undergraduate students from two universities in China, which may limit the generalizability of the findings to other educational levels or cultural contexts. Secondly, although the mixed-methods design provided both breadth and depth, the quantitative data were cross-sectional and cannot establish causality between AI use, motivation, anxiety, and well-being. In addition, self-reported measures, while validated and reliable, may have been influenced by social desirability or recall bias. Common method bias was mitigated through procedural remedies (e.g., anonymity, item diversification), and statistical tests suggested no significant CMB effects; however, the possibility of residual method variance cannot be fully excluded. Meanwhile, the qualitative component, though rich in insight, relied on voluntary participation, which may have overrepresented students more comfortable with AI, thereby limiting the range of negative perspectives. Finally, this study focused on AI use within English learning; future research should test whether similar mechanisms operate in other disciplines or under different forms of AI-mediated instruction.

Future studies should adopt longitudinal or experimental designs to trace how motivation, anxiety, and trust evolve over sustained exposure to AI-based learning tools. Including a control group using traditional instruction would help rule out confounding influences such as teacher style or course structure. Incorporating objective behavioral and physiological measures (e.g., eye-tracking, response latency, or stress biomarkers) could provide a more comprehensive view of learners’ affective responses to AI interaction. Comparative cross-cultural research would also enrich understanding of how sociocultural values and educational norms influence trust formation and emotional adaptation in AI environments. Moreover, collaboration between educators, psychologists, and AI developers is needed to design emotionally intelligent systems capable of recognizing learners’ affective states and providing empathetic feedback. Ultimately, future research should move toward an integrative framework of AI-assisted well-being, examining not only the cognitive and emotional outcomes of AI use but also its ethical, social, and developmental implications in diverse learning contexts.

This study contributes to the emerging field of artificial intelligence psychology by demonstrating how AI-enhanced English learning environments can simultaneously advance linguistic achievement and promote psychological well-being. Drawing upon Self-Determination Theory (SDT) and trust-based technology acceptance frameworks (UTAUT), the research revealed that AI use exerts its positive influence on well-being primarily through enhancing motivation and reducing anxiety, and that trust functions as a vital amplifier of these effects. The integration of structural equation modeling and thematic analysis provided convergent evidence that AI tools support students’ autonomy and competence when perceived as transparent, adaptive, and reliable. Furthermore, the validated higher-order measurement structure strengthened confidence that these psychological mechanisms operated consistently across constructs. Conversely, when trust is low or feedback opaque, technological benefits diminish and new anxieties may arise, including uncertainty about evaluation accuracy and concerns related to algorithmic opacity.

Collectively, these findings underscore that fostering motivation, managing anxiety, and cultivating trust are essential psychological mechanisms for optimizing students’ emotional engagement with AI in language education. This study also clarifies how these mechanisms interact, demonstrating that trust not only shapes students’ willingness to use AI but also conditions its emotional consequences, thereby extending existing models of educational AI engagement. Beyond confirming the psychological potential of AI, the study highlights a broader pedagogical imperative: that human-centered, ethically designed AI systems can serve not merely as instructional aids, but as catalysts of learner flourishing and mental well-being in the digital era. To maximize these benefits, future AI design and instructional practice should prioritize transparency, fairness and supportive feedback structures that align with learners’ psychological needs.

Acknowledgement: The author would like to thank all the participants in this survey.

Funding Statement: The author received no specific funding.

Availability of Data and Materials: Data for this study are available by emailing the corresponding author.

Ethics Approval: This study involving human participants was reviewed and approved by the Ethics Committee of Nanyang Normal University.

Informed Consent: Participants consented to the study. They were informed of the study’s purpose, confidentiality, and voluntary participation.

Conflicts of Interest: The author declares no conflicts of interest.

References

Abdelazim, A., Breiki, M. A., & Khlaif, Z. N. (2025). AI in education: The mediating role of perceived trust in adoption decisions of school leaders. Education and Information Technologies, 30(15), 20943–20975. https://doi.org/10.1007/s10639-025-13596-4

Almogren, A. S., Al-Rahmi, W. M., & Dahri, N. A. (2024). Integrated technological approaches to academic success: Mobile learning, social media, and AI in visual art education. IEEE Access, 12, 175391–175413. https://doi.org/10.1109/access.2024.3498047

Aydın, S., & Tekin, I. (2023). Positive psychology and language learning: A systematic scoping review. Review of Education, 11(3), e3420. https://doi.org/10.1002/rev3.3420

Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77–101. https://doi.org/10.1191/1478088706qp063oa

Brooks, R. B., & Brooks, S. (2023). Nurturing positive emotions in the classroom: A foundation for purpose, motivation, and resilience in schools. In: Handbook of resilience in children (pp. 549–568). Berlin/Heidelberg, Germany: Springer. https://doi.org/10.1007/978-3-031-14728-9_30

Cengiz, Y., & Aptoula, N. Y. (2025). Comparing AI and human feedback at higher education: Level appropriateness, quality and coverage. Language Teaching, 12, 1–6. https://doi.org/10.1017/s0261444825000199

Chen, T., & Chen, J. (2024). Exploring college students’ perceptions of privacy and security in online learning: A comprehensive questionnaire-based study. International Journal of Human-Computer Interaction, 41(10), 6481–6494. https://doi.org/10.1080/10447318.2024.2379722

Chen, S., Huang, L., Shadiev, R., & Hu, P. (2024). An extension of UTAUT model to understand elementary school students’ behavioral intention to use an online homework platform. Education and Information Technologies, 30(1), 229–255. https://doi.org/10.1007/s10639-024-12852-3

Choung, H., David, P., & Ross, A. (2022). Trust in AI and its role in the acceptance of AI technologies. International Journal of Human-Computer Interaction, 39(9), 1727–1739. https://doi.org/10.1080/10447318.2022.2050543

Creswell, J. W., & Creswell, J. D. (2017). Research design: Qualitative, quantitative, and mixed methods approaches. Thousand Oaks, CA, USA: SAGE Publications.

Cukurova, M. (2024). The interplay of learning, analytics and artificial intelligence in education: A vision for hybrid intelligence. British Journal of Educational Technology, 56(2), 469–488. https://doi.org/10.1111/bjet.13514

Ding, L. (2024). Exploring the causes, consequences, and solutions of Chinese EFL teachers’ psychological ill-being: A qualitative investigation. European Journal of Education, 59(4), e12739. https://doi.org/10.1111/ejed.12739

Dryden, S., Tankosić, A., & Dovchin, S. (2021). Foreign language anxiety and translanguaging as an emotional safe space: Migrant English as a foreign language learners in Australia. System, 101, 102593. https://doi.org/10.1016/j.system.2021.102593

Du, Q. (2025). How artificially intelligent conversational agents influence EFL learners’ self-regulated learning and retention. Education and Information Technologies, 30(15), 21635–21701. https://doi.org/10.1007/s10639-025-13602-9

Fung, S. (2019). Psychometric evaluation of the Warwick-Edinburgh mental well-being scale (WEMWBS) with Chinese university students. Health and Quality of Life Outcomes, 17(1), 46. https://doi.org/10.1186/s12955-019-1113-1

Guo, J. (2025). Factors affecting EFL students’ behavioral intention to use AI in EFL writing development. Innovation in Language Learning and Teaching, 19, 1–23. https://doi.org/10.1080/17501229.2025.2563695

He, M., Abbasi, B. N., & He, J. (2025). AI-driven language learning in higher education: An empirical study on self-reflection, creativity, anxiety, and emotional resilience in EFL learners. Humanities and Social Sciences Communications, 12(1), 1525. https://doi.org/10.1057/s41599-025-05817-5

Horwitz, E. K., Horwitz, M. B., & Cope, J. (1986). Foreign language classroom anxiety. Modern Language Journal, 70(2), 125–132. https://doi.org/10.1111/j.1540-4781.1986.tb05256.x

Hu, X., Zhang, X., & McGeown, S. (2021). Foreign language anxiety and achievement: A study of primary school students learning English in China. Language Teaching Research, 28(4), 1594–1615. https://doi.org/10.1177/13621688211032332

Huang, F., Teo, T., & Zhao, X. (2023). Examining factors influencing Chinese ethnic minority English teachers’ technology adoption: An extension of the UTAUT model. Computer Assisted Language Learning, 38, 928–950. https://doi.org/10.1080/09588221.2023.2239304

Kenesei, Z., Kökény, L., Ásványi, K., & Jászberényi, M. (2025). The central role of trust and perceived risk in the acceptance of autonomous vehicles in an integrated UTAUT model. European Transport Research Review, 17(1), 8. https://doi.org/10.1186/s12544-024-00681-x

Liu, M., & Jackson, J. (2008). An exploration of Chinese EFL learners’ unwillingness to communicate and foreign language anxiety. Modern Language Journal, 92(1), 71–86. https://doi.org/10.1111/j.1540-4781.2008.00687.x

Liu, M., & Yu, D. (2022). Towards intelligent E-learning systems. Education and Information Technologies, 28(7), 7845–7876. https://doi.org/10.1007/s10639-022-11479-6

Loewen, S., Isbell, D. R., & Sporn, Z. (2020). The effectiveness of app-based language instruction for developing receptive linguistic knowledge and oral communicative ability. Foreign Language Annals, 53(2), 209–233. https://doi.org/10.1111/flan.12454

Nazaretsky, T., Ariely, M., Cukurova, M., & Alexandron, G. (2022). Teachers’ trust in AI-powered educational technology and a professional development program to improve it. British Journal of Educational Technology, 53(4), 914–931. https://doi.org/10.1111/bjet.13232

Nazaretsky, T., Mejia-Domenzain, P., Swamy, V., Frej, J., & Käser, T. (2025). The critical role of trust in adopting AI-powered educational technology for learning: An instrument for measuring student perceptions. Computers and Education Artificial Intelligence, 8, 100368. https://doi.org/10.1016/j.caeai.2025.100368

Noels, K. A., Pelletier, L. G., Clément, R., & Vallerand, R. J. (2000). Why are you learning a second language? Motivational orientations and self-determination theory. Language Learning, 50(1), 57–85. https://doi.org/10.1111/0023-8333.00111

Payne, A. L., Stone, C., & Bennett, R. (2022). Conceptualising and building trust to enhance the engagement and achievement of under-served students. The Journal of Continuing Higher Education, 71(2), 134–151. https://doi.org/10.1080/07377363.2021.2005759

Qin, F., Li, K., & Yan, J. (2020). Understanding user trust in artificial intelligence-based educational systems: Evidence from China. British Journal of Educational Technology, 51(5), 1693–1710. https://doi.org/10.1111/bjet.12994

Rosen, L., Whaling, K., Carrier, L., Cheever, N., & Rokkum, J. (2013). The media and technology usage and attitudes scale: An empirical investigation. Computers in Human Behavior, 29(6), 2501–2511. https://doi.org/10.1016/j.chb.2013.06.006

Stockwell, G., & Reinders, H. (2019). Technology, motivation and autonomy, and teacher psychology in language learning: Exploring the myths and possibilities. Annual Review of Applied Linguistics, 39, 40–51. https://doi.org/10.1017/s0267190519000084

Tarafdar, M., Cooper, C. L., & Stich, J. (2017). The technostress trifecta - techno eustress, techno distress and design: Theoretical directions and an agenda for research. Information Systems Journal, 29(1), 6–42. https://doi.org/10.1111/isj.12169

Tennant, R., Hiller, L., Fishwick, R., Platt, S., Joseph, S. et al. (2007). The Warwick-Edinburgh mental well-being scale (WEMWBS): Development and UK validation. Health and Quality of Life Outcomes, 5(1), 63. https://doi.org/10.1186/1477-7525-5-63

Wang, Y. (2025). Reducing anxiety, promoting enjoyment and enhancing overall English proficiency: The impact of AI-assisted language learning in Chinese EFL contexts. British Educational Research Journal, 28(1), 270. https://doi.org/10.1002/berj.4187

Wang, Q., Amini, M., & Fu, Z. (2025a). AI acceptance and Chinese EFL learners’ behavioral engagement with mediating effects of motivation. Scientific Reports, 15(1), 33310. https://doi.org/10.1038/s41598-025-11305-2

Wang, G., Obrenovic, B., Gu, X., & Godinic, D. (2025b). Fear of the new technology: Investigating the factors that influence individual attitudes toward generative artificial intelligence (AI). Current Psychology, 44(9), 8050–8067. https://doi.org/10.1007/s12144-025-07357-2

Wang, J., Guo, L., Gao, J. Q., & Zhao, H. (2024). How to build better environments that reinforce adaptation of online learning? Evidence from a large-scale empirical survey of Chinese universities. Education and Information Technologies, 29(14), 1–23. https://doi.org/10.1007/s10639-024-12556-8

Wei, L. (2023). Artificial intelligence in language instruction: Impact on English learning achievement, L2 motivation, and self-regulated learning. Frontiers in Psychology, 14, 1261955. https://doi.org/10.3389/fpsyg.2023.1261955

Xin, Z., & Derakhshan, A. (2024). From excitement to anxiety: Exploring English as a foreign language learners’ emotional experiences in the artificial intelligence-powered classrooms. European Journal of Education, 60(1), 28. https://doi.org/10.1111/ejed.12845

Yan, J., Wu, C., Tan, X., & Dai, M. (2025). The influence of AI-driven personalized foreign language learning on college students’ mental health: A dynamic interaction among pleasure, anxiety, and self-efficacy. Frontiers in Public Health, 13, 1642608. https://doi.org/10.3389/fpubh.2025.1642608

Yang, H., & Rui, Y. (2025). Transforming EFL students’ engagement: How AI-enhanced environments bridge emotional health challenges like depression and anxiety. Acta Psychologica, 257, 105104. https://doi.org/10.1016/j.actpsy.2025.105104

Yu, J., & Tao, Y. (2025). To be in AI-integrated language classes or not to be: Academic emotion regulation, self-esteem, L2 learning experiences and growth mindsets are in focus. British Educational Research Journal, 30(2), 149. https://doi.org/10.1002/berj.4180

Yuan, L., & Liu, X. (2024). The effect of artificial intelligence tools on EFL learners’ engagement, enjoyment, and motivation. Computers in Human Behavior, 162, 108474. https://doi.org/10.1016/j.chb.2024.108474

Zhang, C., Hu, M., Wu, W., Kamran, F., & Wang, X. (2024a). Unpacking perceived risks and AI trust influences pre-service teachers’ AI acceptance: A structural equation modeling-based multi-group analysis. Education and Information Technologies, 30(2), 2645–2672. https://doi.org/10.1007/s10639-024-12905-7

Zhang, Y., Zhang, M., Wu, L., & Li, J. (2024b). Digital transition framework for higher education in AI-assisted engineering teaching. Science & Education, 34(2), 933–954. https://doi.org/10.1007/s11191-024-00575-3

Zhang, T., & Liu, X. (2025). Tracking the evolving impact of AI-driven learning platforms on EFL students’ burnout, emotional challenges, and well-being: A longitudinal growth curve analysis. Innovation in Language Learning and Teaching. https://doi.org/10.1080/17501229.2025.2503889

Zhao, C. G., & Qi, Q. (2022). Implementing learning-oriented assessment (LOA) among limited-proficiency EFL students: Challenges, strategies, and students’ reactions. TESOL Quarterly, 57(2), 566–594. https://doi.org/10.1002/tesq.3167

Zhao, H., Zhang, H., Li, J., & Liu, H. (2025). Performance motivation and emotion regulation as drivers of academic competence and problem-solving skills in AI-enhanced preschool education: A SEM study. British Educational Research Journal, 15(1), 59. https://doi.org/10.1002/berj.4196

Copyright © 2026 The Author(s). Published by Tech Science Press. This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
