Open Access

Open Access

REVIEW

Survey of AI-Based Threat Detection for Illicit Web Ecosystems: Models, Modalities, and Emerging Trends

1 Department of Immersive Media Engineering, Sungkyunkwan University, Seoul, Republic of Korea

2 Department of Computer Education/Social Innovation Convergence Program, Sungkyunkwan University, Seoul, Republic of Korea

* Corresponding Author: Moohong Min. Email:

(This article belongs to the Special Issue: The Evolution of Cybersecurity and AI: Surveys and Tutorials)

Computer Modeling in Engineering & Sciences 2026, 146(3), 6 https://doi.org/10.32604/cmes.2026.078940

Received 11 January 2026; Accepted 02 March 2026; Issue published 30 March 2026

Abstract

Illicit web ecosystems, encompassing phishing, illegal online gambling, scam platforms, and malicious advertising, have rapidly expanded in scale and complexity, creating severe social, financial, and cybersecurity risks. Traditional rule-based and blacklist-driven detection approaches struggle to cope with polymorphic, multilingual, and adversarially manipulated threats, resulting in increasing demand for Artificial Intelligence (AI)-based solutions. This review provides a comprehensive synthesis of research on AI-driven threat detection for illicit web environments. It surveys detection models across multiple modalities, including text-based analysis of Uniform Resource Locator (URL) and HyperText Markup Language (HTML), vision-based recognition of webpage layouts and logos, graph-based modeling of domain and infrastructure relationships, and sequence modeling using transformer architectures. In addition, the paper examines system architectures, data collection and labeling pipelines, real-time detection frameworks, and widely used benchmark datasets, while also discussing their inherent limitations related to imbalance, representativeness, and reproducibility. The review highlights critical challenges such as evasion strategies, cross-lingual detection barriers, deployment latency, and explainability gaps. Furthermore, it identifies emerging research directions, including the use of Generative Adversarial Network (GAN) for threat simulation, few-shot and self-supervised learning for data-scarce environments, Explainable Artificial Intelligence (XAI) for transparency, and predictive AI for proactive threat forecasting. By integrating technical, legal, and societal perspectives, this survey offers a structured foundation for researchers and practitioners to design resilient, adaptive, and trustworthy AI-based defense systems against illicit web threats.Keywords

In recent years, the quantitative and qualitative growth of web-based cybercrimes in cyberspace has become increasingly evident. Among them, phishing and illicit online gambling websites have emerged as representative digital threats that cause substantial social harm. In 2023, more than five million phishing attacks were reported worldwide, and in the first quarter of 2024 alone, 960,000 incidents were detected, underscoring the severity of the threat [1]. According to statistics from the Federal Bureau of Investigation’s Internet Crime Complaint Center (FBI IC3), approximately 200,000 phishing-related crimes are reported annually in the United States, while globally an estimated 3.4 billion phishing emails are transmitted every day [2,3].

The emergence of Large Language Model (LLM), such as ChatGPT, has triggered a surge in AI-driven, automated phishing attacks, with increasingly diverse techniques. Reports by cybersecurity companies Hoxhunt and KnowBe4 indicate that phishing attempts have risen by approximately 4151% since the release of ChatGPT in 2022 [4]. Polymorphic phishing campaigns, which modify content to evade detection, are also proliferating rapidly [5].

During the early phase of the COVID-19 pandemic, adversaries exploited widespread social instability to launch large-scale phishing campaigns, which in turn stimulated research on phishing detection [6]. Early foundational studies proposed taxonomies of phishing attacks by categorizing attack motivations, techniques, and defense mechanisms. Since then, numerous studies have advanced Machine Learning (ML)-based phishing detection, demonstrating the effectiveness of integrating URL-based, visual similarity-based, and behavioral approaches [7,8].

The online gambling industry has also expanded rapidly since the pandemic, leading to a parallel increase in illicit activities and cybersecurity threats. Min and Lee analyzed more than 11,000 illegal gambling websites and demonstrated that ML models integrating landing page features, domain information, WHOIS records, and images achieved high detection performance [9]. Musa et al., in their study of the Croatian lottery system, highlighted that vulnerabilities in online gambling platforms are distributed across networks, web applications, and user interactions [10].

Ultimately, phishing and illicit gambling threats are evolving within highly organized web ecosystems, and the limitations of traditional rule-based detection methods are becoming increasingly evident. As a result, there is a growing demand for the systematic adoption of AI-based detection techniques, coupled with a macro-level analysis of the structural characteristics and domain interconnections of the web ecosystem.

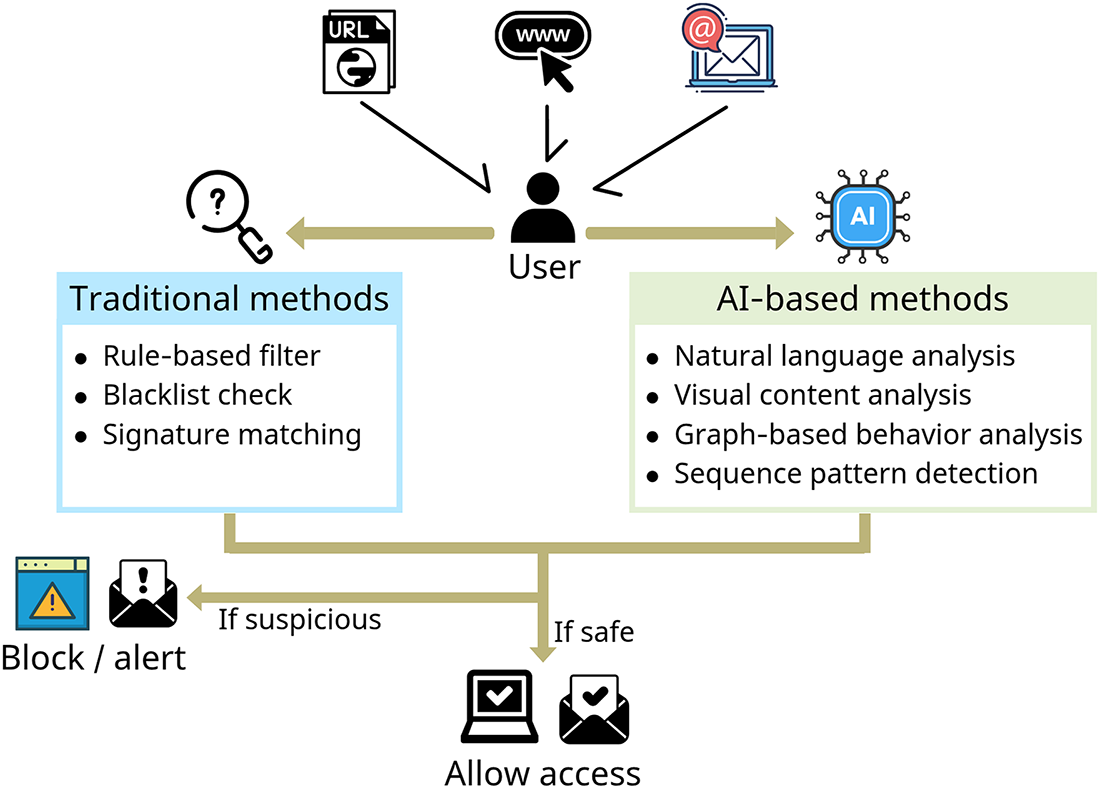

Fig. 1 is an overview of traditional and AI-based phishing detection. Most conventional detection systems have relied on blacklists, signatures, or static rule-based methods. While these methods are relatively simple to design and operate, they suffer from fundamental shortcomings in real-world environments. Chief among these is the inability to respond effectively to rapidly mutating threats. For example, when attackers slightly alter and re-register phishing domains or subtly modify content, static methods alone cannot identify them [11–13]. Moreover, blacklist-based approaches are hindered by detection delays and higher false positive/false negative rates. Their reliance on manual updates not only slows response times but also weakens adaptability to evolving attack scenarios [14]. Similarly, heuristic methods that rely on predefined keywords or patterns are easily disrupted by adversarial obfuscation strategies, and their performance deteriorates significantly under shifts in user behavior [8].

Figure 1: Overview of traditional vs. AI-based phishing detection.

To address these limitations, ML and Deep Learning (DL)-based detection methods have been actively explored. ML models are capable of generalizing classification rules from diverse input features, enabling them to adapt flexibly to changing attack patterns [15]. DL methods, such as Convolutional Neural Network (CNN), Recurrent Neural Network (RNN), and Long Short-Term Memory (LSTM) networks, can process multiple modalities—including URLs, HTML structures, images, and screenshots—achieving higher accuracy and lower error rates compared to rule-based approaches [16,17].

More recently, LLM-based detection techniques have garnered significant attention. By leveraging advanced contextual understanding, LLMs go beyond keyword matching to evaluate the semantic coherence of sentences. While phishing sentences generated by ChatGPT, Gemini, or Claude frequently bypass traditional ML detectors, LLM-based models have shown superior resilience [18]. In fact, rephrased phishing content generated by LLMs often degrades the accuracy of conventional detectors, reinforcing the importance of integrating LLM-based approaches as a complement to existing systems [19].

In addition, Reinforcement Learning (RL)-based adaptive detection systems have proven valuable in adversarial environments where evasion and detection strategies co-evolve. RL models learn reward/penalty structures in security contexts and can design proactive detection strategies that evolve autonomously [20].

This paper does not limit itself to analyzing individual attack techniques. Rather, it offers an integrated survey of illicit web ecosystems while comprehensively reviewing AI-based detection techniques and recent research trends. By extending beyond the scope of prior studies, which often focused narrowly on specific attacks or datasets, this work provides a multi-layered perspective encompassing models, modalities, datasets, system architectures, legal responses, and future directions.

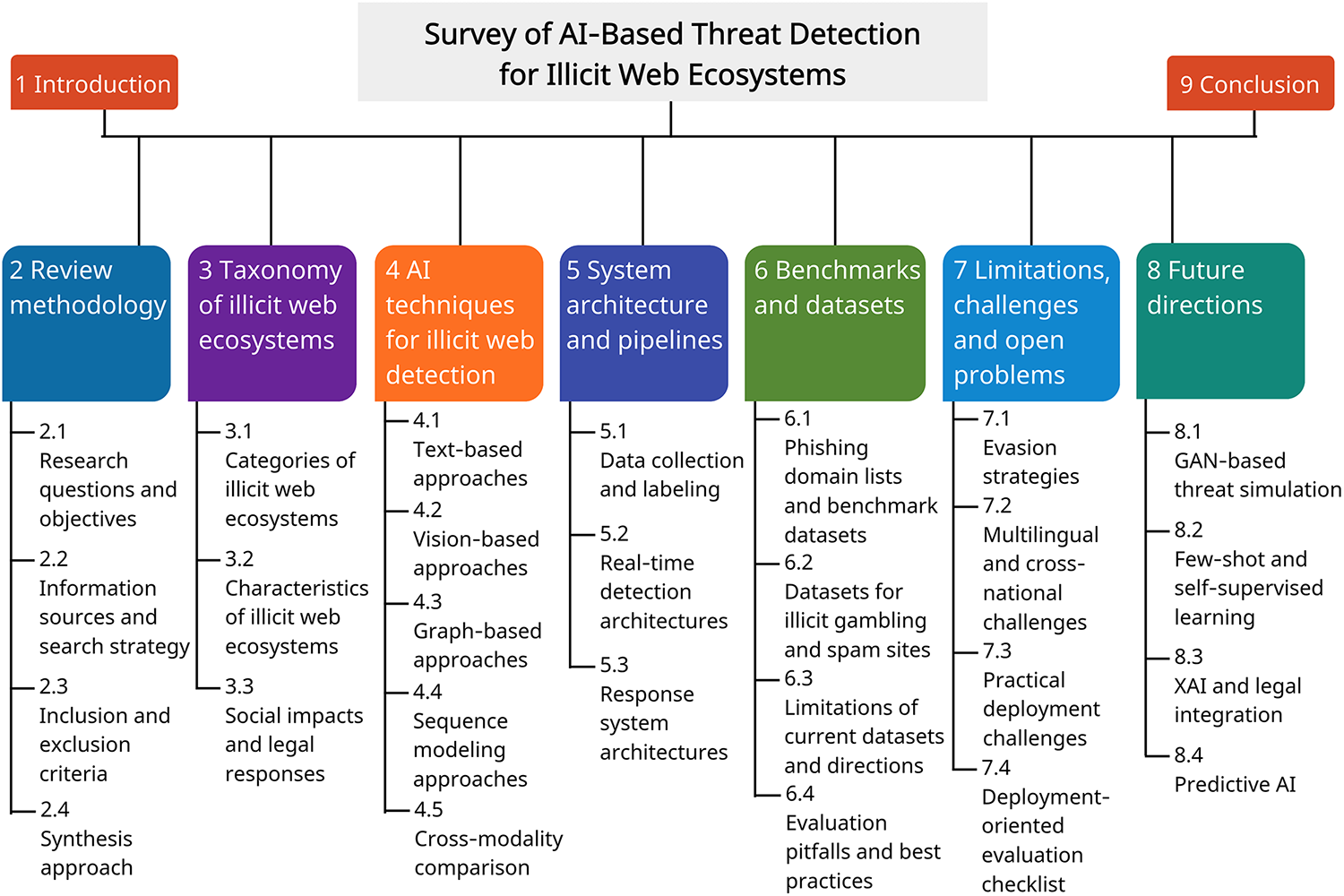

As outlined in Fig. 2, the remainder of this paper is organized as follows: Section 2 presents the review methodology. Section 3 categorizes illicit web ecosystems, presenting their technical and social characteristics along with legal responses. Section 4 reviews AI-based detection methods by modality, including text, vision, graph, and sequence models, and summarizes recent findings. Section 5 introduces system architectures and detection pipelines, covering data collection, labeling, real-time processing, and response mechanisms. Section 6 surveys public benchmarks and datasets, examining issues of data quality, representativeness, and reproducibility. Section 7 discusses current limitations and open challenges, including evasion strategies, multilingual issues, and practical deployment barriers. Section 8 outlines future research directions, such as GAN-based threat simulation, few-shot and self-supervised learning, XAI, and predictive AI. Finally, Section 9 concludes the paper by summarizing the overall discussion.

Figure 2: Overview of the content structure.

Through this structured approach, the paper aims to provide both macro-level and micro-level perspectives on AI-driven threat detection for illicit web ecosystems, offering researchers and practitioners a comprehensive reference for future studies and practical implementations.

This survey was conducted using a structured but iterative literature exploration process. Rather than following a strictly pre-registered systematic review protocol, the study employed an evolving search-and-refinement strategy to capture the rapidly developing research landscape of AI-based detection for illicit web ecosystems.

2.1 Research Questions and Objectives

To systematically synthesize the rapidly evolving body of research on AI-driven threat detection for illicit web ecosystems, this survey is guided by the following Research Questions (RQ):

• RQ1: How can AI-based detection techniques be systematically categorized for illicit web ecosystems, and what distinct threat assumptions does each modality address?

• RQ2: What factors in dataset construction and evaluation protocols critically influence reported detection performance and generalization to unseen or zero-day threats?

• RQ3: What architectural patterns and system-level designs enable scalable, real-time, and deployment-aware detection in practical environments?

• RQ4: What fundamental limitations constrain the robustness and adaptability of current AI-based detection systems in evolving illicit web ecosystems?

By addressing these questions, this survey aims not only to organize existing approaches across modalities and system designs, but also to critically examine their empirical validity, deployment feasibility, and resilience against zero-day and adversarial evolution.

In this survey, we use the term zero-day threats to refer to attack instances or infrastructures that have not been previously observed or labeled in the training data. The term unseen data denotes evaluation samples drawn from distributions not represented in the training split, while novel attacks may involve new Tactics, Techniques, or Procedures (TTPs) that differ structurally from known patterns. Although these terms are sometimes used interchangeably in prior work, we distinguish them conceptually for clarity.

2.2 Information Sources and Search Strategy

Literature was primarily retrieved from IEEE Xplore, ACM Digital Library, ScienceDirect, Scopus, Web of Science, and Google Scholar. Initial searches were performed using combinations of ecosystem-related and AI-related keywords (e.g., illicit, illegal, malicious, taxonomy, website detection, threat detection, machine learning, deep learning, AI).

During the review process, backward and forward snowballing techniques were also employed. Additional keyword searches (e.g., phishing, gambling, scam, malvertising, text, vision, graph, sequence, transformer, ensemble, dataset) were repeated to expand coverage. Among the search results, the review was conducted with a priority on relatively high-cited or recently published.

2.3 Inclusion and Exclusion Criteria

Studies were included if they met the following criteria:

• Proposed or evaluated AI-based detection mechanisms targeting illicit web content, infrastructure, or distribution channels.

• Provided empirical evaluation using publicly available or proprietary datasets.

• Clearly described model architecture or feature engineering techniques.

• Based on peer-reviewed journals or conference publications. Exceptionally, a limited number of preprint studies (e.g., large language model technical reports) were included due to their relevance to emerging trends.

Studies were excluded if they:

• Focused solely on traditional signature-based detection without AI components.

• Lacked experimental validation.

• Were non-English publications (excluding reports to verify local statistical data).

• Were duplicate reports of the same methodology.

A narrative synthesis approach was employed to identify cross-paper patterns, trade-offs, and recurring evaluation pitfalls. Given the interdisciplinary and fast-evolving nature of the topic, the review emphasizes conceptual synthesis and modal analysis rather than strict quantitative aggregation of performance metrics.

Particular attention was given to overlapping domains such as phishing, gambling, and scam. When studies addressed multiple illicit domains, they were classified based on their primary threat model and evaluation focus.

3 Taxonomy of Illicit Web Ecosystems

3.1 Major Categories of Illicit Web Ecosystems

The structure of the web-based cybercrime ecosystem has become increasingly complex, with blurred boundaries among different types of criminal activities. Accordingly, to effectively detect and counter illicit websites, it is essential to establish a clear taxonomy of categories and to systematically understand the characteristics and threat mechanisms of each. This section summarizes the representative types of illicit web ecosystems—namely phishing, illegal gambling, malicious advertising, and scam—focusing on their technical and socio-structural classification criteria.

Phishing is a representative social-engineering attack designed to steal sensitive user information or to install malware. Chiew et al. analyzed phishing attacks by categorizing their types, vectors, and technical implementations, thereby offering a systematic understanding of phishing ecosystems [13]. Fra̧szczak and Fra̧szczak systematized phishing detection techniques according to the types of detection features, such as URL-based, content-based, and visual similarity-based approaches [21].

Apea and Yin categorized phishing detection into list-based, HTML content similarity-based, and hybrid approaches, and comparatively analyzed their applicability and technical limitations [22]. From a practical perspective, phishing types are also distinguished by attack channels and media, including spear-phishing, Short Message Service (SMS) phishing (smishing), voice-based phishing (vishing), and pharming (phishing without a lure).

3.1.2 Illegal Gambling Ecosystems

Illegal gambling websites not only cause direct financial loss to users but also involve broader social issues such as money laundering, links to organized crime, and personal data breaches. Banks and Waugh classified gambling-related crimes into categories such as organized-crime related, financial-crime related, and user addiction-inducing, while elaborating on the proliferation structure through online platforms [23]. Gainsbury et al. proposed a game-content-based taxonomy of gambling, classifying gambling games according to platform, payment method, and the level of skill required [24].

In addition, the boundary between legal and illegal online gambling platforms is often ambiguous, with the same website potentially being legal in one jurisdiction while illegal in another. Therefore, a comprehensive taxonomy must consider not only technical analyses of platforms but also operational entities, payment flows, and distributed server infrastructures [23,24].

3.1.3 Malvertising and Scam Ecosystems

Scam sites and malicious advertising (malvertising) resemble phishing in that they lure users but primarily aim at fraudulent payments, click-through revenue, or malware distribution. Verma et al. proposed a domain-independent deception taxonomy that encompasses various fraudulent activities, including phishing, scams, fake news, and job fraud. Their framework introduces a multi-dimensional classification of deception, covering agents, stratagems, goals, exposure mechanisms, and communication modalities, thereby extending analysis beyond phishing alone [25].

Phillips and Wilder conducted clustering-based classification of advance-fee scams and cryptocurrency scam sites, revealing that such sites are often linked at the infrastructure level to phishing or illegal gambling sites [26]. Consequently, the taxonomy of malvertising and scam sites should adopt a multidimensional approach that considers not only content but also link structures, hosting environments, and backend domain connections.

3.2 Key Characteristics of Illicit Web Ecosystems

The illicit website ecosystem goes beyond a mere list of URLs; it comprises a multi-layered infrastructure that encompasses the dynamics of domain creation, alteration, and expiration, Internet Protocol (IP) distribution strategies, and the utilization of global hosting infrastructures. This section describes the principal features of web-based cyber threats in three aspects: domain lifetimes, IP distribution structures, and the concentration of multinational operations and hosting providers.

The average lifetime of malicious domains is generally very short, and in many cases they are abandoned or re-registered before being detected. Lee et al. analyzed 286,237 phishing URLs collected at 30-min intervals and reported an average lifetime of approximately 54 h, with a median lifetime of 5.46 h. Notably, Google Safe Browsing’s average detection time was 4.5 days, while 83.9% of phishing domains had already expired before detection [27].

Research by Foremski and Vixie and Affinito et al. further revealed that 9.3% of newly registered domains were deleted within seven days, most of them as part of automated phishing campaigns. Their reported median lifetime was only four hours and 16 min [28,29]. An analysis by the DNS Research Federation indicated that about 63% of newly registered domains were blocked within four days of registration, with an average lifetime of about 21 days [30].

3.2.2 IP Distribution and Fast-Flux Structures

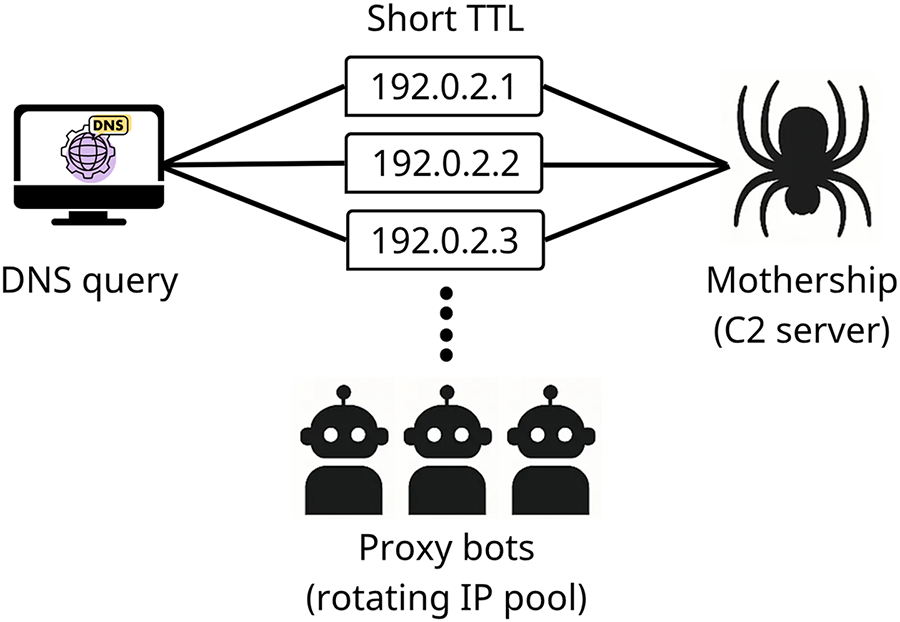

Malicious domains often employ the fast-flux technique to evade detection by rapidly rotating IP addresses for a single domain name. Fast-flux typically involves associating multiple IPs with a single domain and configuring a short Time-to-Live (TTL) so that each Domain Name System (DNS) query returns a different IP. Each IP corresponds to a pool of compromised zombie hosts (proxy bots), which relay requests to the actual control server (mothership, or Command and Control (C2) server), thereby concealing its location. As illustrated in Fig. 3, this process enables rapid IP replacement through short TTLs, while directing traffic through proxy bots to the hidden C2 server. Such structures are widely used to support load balancing, peer-to-peer C2 server operations, and domain evasion strategies, thereby undermining IP-based blocking policies [31,32].

Figure 3: Fast-flux IP rotation.

This IP distribution strategy complicates detection at the DNS level, as it combines rapid IP changes, geographic dispersion, short TTLs, and short-term registrations identifiable via WHOIS records, all of which favor detection evasion [33,34].

3.2.3 TLDs and Multinational Hosting Infrastructures

According to Interisle Consulting Group’s 2024 annual report, approximately 79% of domains used in phishing were maliciously registered, and many became operational within three days of registration. A substantial share of phishing domains were registered through the top five generic Top-Level Domain (TLD) registrars, and 42% of all phishing domains reported during the study period were concentrated in the top ten new generic TLDs. Moreover, half of all phishing attacks originated from IP address ranges belonging to the top ten hosting providers. Among them, Cloudflare alone accounted for 23% of cases (437,108 incidents), underscoring the severe concentration of registration and hosting infrastructures [35].

At the same time, infrastructures supporting malicious domains and phishing emails are becoming increasingly geographically distributed. For example, numerous malicious websites have been observed embedding U.S. city names into their domain names to target local users [36]. Furthermore, about 40% of phishing emails were routed through more than three countries before reaching recipients, with many originating from Eastern Europe, Central America, Middle East, and Africa [37]. Such routing practices hinder traceability and illustrate how attackers leverage globally dispersed infrastructures and multinational email routing paths to avoid detection, while tailoring attacks with geographic and linguistic features to target specific user groups.

3.3 Social Impacts and Legal Responses

Illegal web–driven cybercrimes extend beyond mere technical threats, leading to tangible societal harms and imposing significant burdens on legal and policy responses. This section analyzes the social damages and legal response frameworks with a focus on phishing, illegal online gambling, and scam/malicious advertising platforms.

3.3.1 Social Impacts and Legal Responses to Phishing

Phishing attacks cause far-reaching consequences, not only individual damages but also corporate financial losses, brand reputation degradation, and data breaches. According to a UK government survey, 79% of companies reported experiencing phishing within the past 12 months, with 56% responding that phishing was the most disruptive type of attack [38]. A Proofpoint report similarly revealed that 91% of companies experienced at least one email-based phishing attempt, and 26% suffered direct financial losses [39]. A 2025 Keevee research review indicated that social engineering–based cyberattacks account for 98% of all cyber incidents, of which phishing comprises 70%. On average, companies incur USD 17.7 million in annual financial losses from such attacks, with approximately 60% suffering reputational damage and an average of 200 days required for incident recovery [40].

Legal response systems have been progressively institutionalized. In the United States, the Anti-Phishing Act of 2005 was introduced [41]. In the United Kingdom, the Fraud Act 2006 established a general offense of fraud, thereby closing loopholes within preexisting statutory and common law frameworks [42]. National cybercrime legislations across multiple jurisdictions are evolving not only to impose criminal penalties on phishing perpetrators but also to incorporate victim protection, corporate accountability, and compensation frameworks [43–45].

3.3.2 Social Impacts and Legal Responses to Illegal Online Gambling

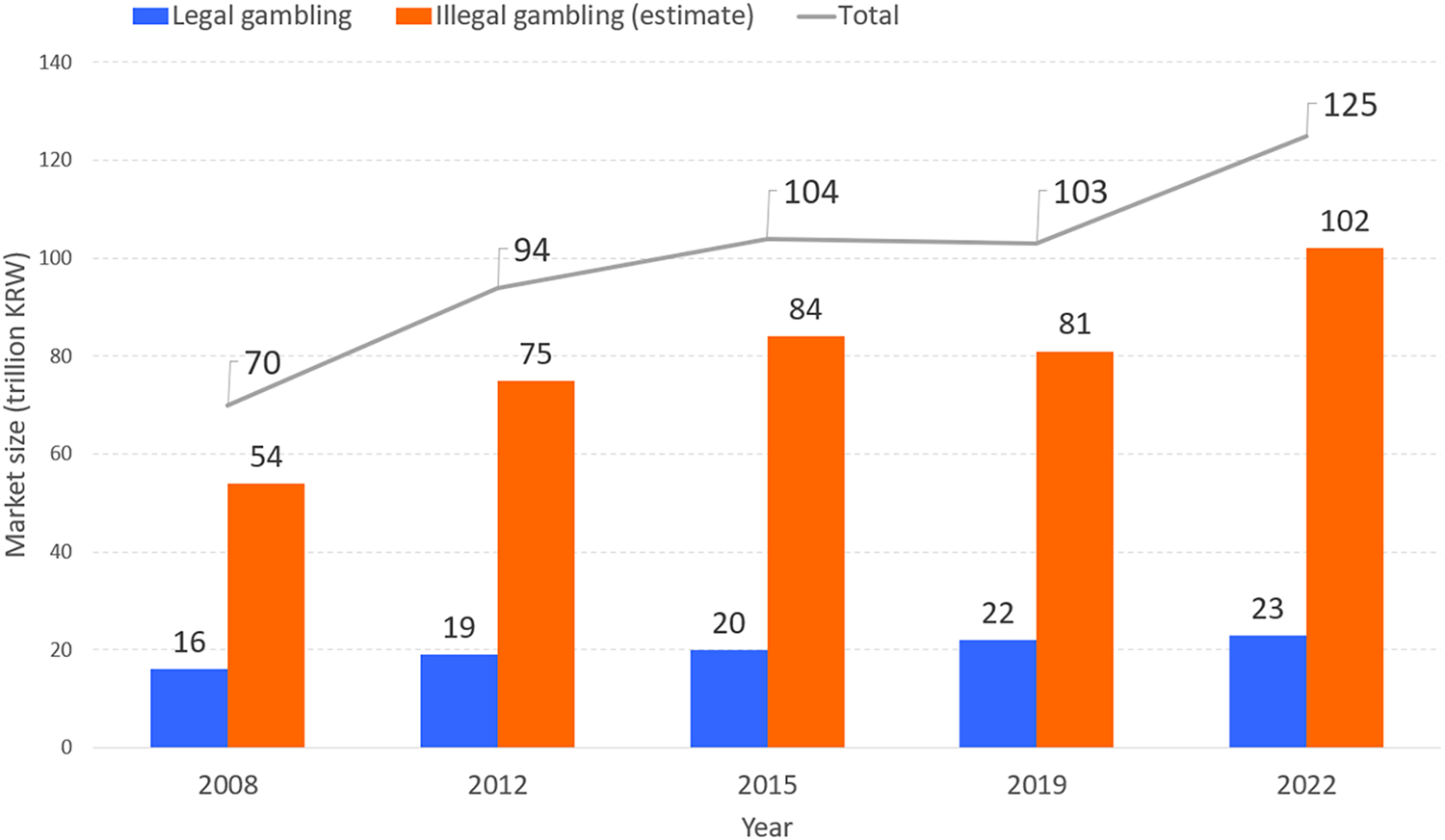

The determination of illegality in online gambling varies across nations and cultural contexts. In Asian cultural spheres, where social sensitivity toward gambling is particularly high, illegality criteria are applied more strictly. For example, research on illegal online gambling in South Korea indicates that, as shown in Fig. 4, the scale of the illegal market has grown annually and has already surpassed the legal gambling market [46]. According to a 2024 meta-analysis by Tran et al., gambling has become a globally prevalent activity, with approximately 46% of adults worldwide having engaged in gambling. Alongside the expansion of the online gambling sector, the prevalence of problematic gambling has increased. An estimated 8.7% of adults engage in risky gambling, and 1.41% meet the criteria for problematic gambling, which is closely associated with household financial deterioration, mental health decline, and the breakdown of interpersonal relationships [47]. Illegal online gambling is particularly harmful due to its structural characteristics, such as the availability of high-stakes betting, absence of age or time restrictions, and lack of minimal safeguards. Moreover, fraudulent operations, such as manipulated winning odds or misappropriation of funds, further exacerbate the risk of users falling into problematic gambling, amplifying socioeconomic harms [48].

Figure 4: Market growth trends of legal vs. illegal online gambling in South Korea [46].

Representative legal responses include the U.S. Unlawful Internet Gambling Enforcement Act of 2006 (UIGEA), which restricts payment flows related to illegal internet gambling. The act prohibits the acceptance of funds from unlawful gambling through credit cards, electronic transfers, or checks, and obligates payment networks and financial intermediaries to block such transactions [49]. Similarly, countries such as South Korea, Japan, Philippines, Singapore, and Indonesia apply national cybercrime statutes and gambling regulations to restrict online gambling through a combination of platform blocking, Internet Service Provider (ISP) controls, and criminal prosecutions [48,50].

3.3.3 Social Impacts and Legal Responses to Scam Platforms

Scam platforms combine phishing, malicious advertising, and ransomware, thereby causing multilayered damages: stealing personal information, encrypting systems for ransom, or harvesting identity data for secondary crimes [51–53]. According to Tanti’s 2023 study, Business Email Compromise (BEC) in the United States alone resulted in USD 2.9 billion in damages, with average losses per company ranging from USD 4.65 million to USD 5.01 million. Among surveyed companies, 60% reported experiencing data loss, 52% reported credential or account compromise, and 47% had encountered ransomware infections; 18% reported direct financial losses [54].

A notable case is LabHost, a global scam platform originating in Canada in 2021, which positioned itself as a “one-stop-shop” for fraud. LabHost is estimated to have caused more than 94,000 victims and USD 28 million in damages in Australia alone. Over 10,000 cybercriminals are reported to have used the platform to replicate legitimate websites of banks, government agencies, and major organizations, thereby producing large-scale victimization. The Australian Federal Police (AFP) continues to pursue the related infrastructure under the international investigation Operation Nebulae, highlighting the highly organized nature of the scam ecosystem [55].

Consequently, international cooperation has become both essential and increasingly active. A prime example is the takedown of the Avalanche infrastructure, one of the largest botnet dismantling operations in history. This four-year collaboration involved law enforcement agencies from 180 countries, resulting in the shutdown of approximately 800,000 malicious domains, the arrest of key operators, and the successful dismantling of the infrastructure [56].

4 AI Techniques for Illicit Site Detection

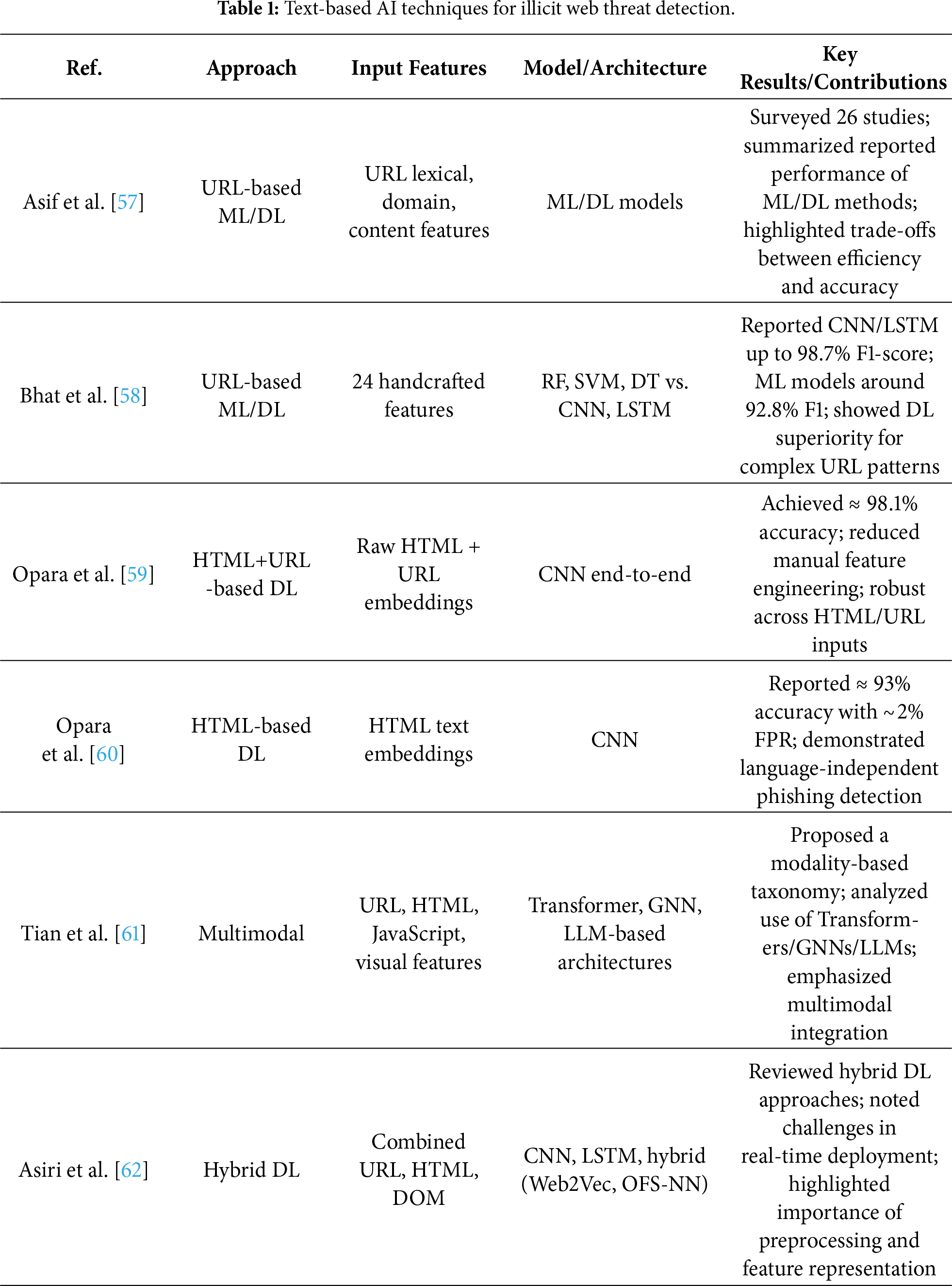

One of the most widely adopted approaches in illicit website detection is the analysis of text-based features, including URL strings, HTML content, email bodies, and headers. As shown in Table 1, this section provides a structured overview of detection techniques centered on text modalities, and compares representative model architectures.

4.1.1 Text-Based AI Techniques

Although a URL is short in length, it inherently contains various syntactic and structural characteristics—such as regular expression patterns, length, domain form, and the presence of special characters—which make it highly useful for malicious URL detection. Asif et al. conducted a comprehensive review of recent ML–based phishing detection algorithms focusing on structural URL features (e.g., token counts, number of subdomains, and suspicious TLDs), emphasizing the balance between accuracy and efficiency [57]. Bhat et al. extracted 24 structural URL features such as length, number of special characters, and the presence of IP addresses, and reported that traditional classifiers (Support Vector Machine (SVM), Random Forest (RF), Decision Tree (DT)) achieved an average F1-score of approximately 92.8%. In comparison, DL models such as CNN and LSTM achieved up to 98.7% F1-score, demonstrating superior detection performance on complex URL patterns [58].

At the HTML code level, multiple features can also be leveraged to detect phishing. Opara et al. designed a CNN-based DL model (WebPhish) that takes entire HTML documents as raw input, achieving a high accuracy of about 98.1%. This end-to-end model integrates both URL and HTML embeddings during training [59]. The same researchers also proposed HTMLPhish, which embeds HTML content at both character and word levels and uses a CNN for classification. This model incorporates all elements of HTML—including tags, attributes, links, and text—highlighting its ability to perform language-independent detection. In experiments with over 50,000 multilingual HTML documents, HTMLPhish achieved approximately 93% accuracy on test datasets [60]. The model demonstrated strong generalization to new HTML patterns without manual feature engineering, while maintaining a low False Positive Rate (FPR) (

More recently, multimodal detection models that integrate textual and non-textual inputs—such as URL strings, HTML structures, JavaScript execution, and webpage visual features—have gained attention. Tian et al. categorized these inputs into key modalities, systematically reviewed state-of-the-art detection techniques, and analyzed the architectures, advantages, and limitations of each. They also discussed the applicability of advanced DL techniques—including Transformers, Graph Neural Network (GNN), and LLMs—to malicious URL detection, and suggested directions for future research [61]. Notably, their study shifted the taxonomy from an algorithm-centric perspective to a modality-centric perspective, thereby offering a multidimensional view of multimodal information utilization in URL security research.

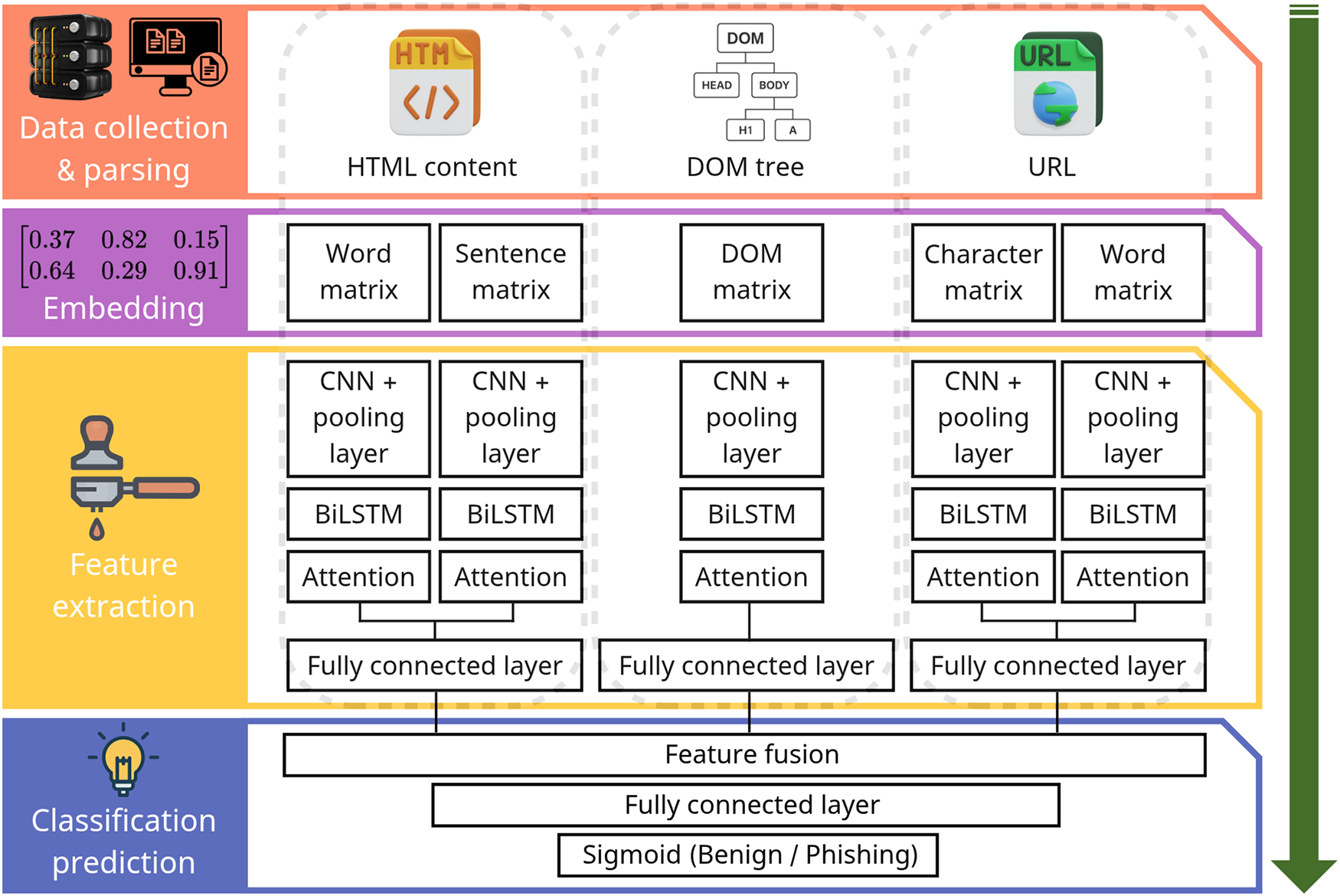

Asiri et al. provided a detailed analysis of hybrid DL–based phishing detection models (e.g., Web2Vec, Optimal Feature Selection and Neural Network (OFS-NN)). Among them, the Web2Vec model, illustrated in Fig. 5, integrates multiple inputs such as URLs, HTML content, and Document Object Model (DOM) structures, and employs CNN, Bidirectional LSTM (BiLSTM), and attention mechanisms to extract multi-layered features before performing final classification. While these models achieved high accuracy, the complexity of processing heterogeneous inputs introduces latency in real-time scenarios. As such, the trade-off between detection performance and processing time was emphasized as a major issue. Accordingly, they proposed model design strategies that combine lightweight CNN architectures with sequence-learning LSTM components to ensure both real-time performance and detection accuracy [62].

Figure 5: Architecture of the Web2Vec hybrid phishing detection model [62].

4.1.2 Synthesis and Implications of Text-Based Approaches

Text-based detection approaches—leveraging URL lexical features, HTML content, email headers, and textual embeddings—remain the most computationally efficient and widely deployable modality for illicit web threat detection. Their strengths lie in scalability, low-latency processing, and ease of integration into browser extensions, email gateways, and Application Programming Interface (API)-based filtering systems.

However, under zero-day or unseen attack conditions, purely supervised text-based models exhibit notable limitations. Because many models rely on statistical regularities within known lexical patterns, adversarial modifications—such as homoglyph substitutions, token obfuscation, multilingual drift, or LLM-generated rephrasing—can significantly degrade performance. While transformer-based and LLM-driven models demonstrate improved contextual generalization, their robustness remains dependent on training data diversity and evaluation protocols.

From a deployment perspective, text-based approaches offer low computational overhead and minimal crawling requirements, making them suitable for real-time detection scenarios. Nevertheless, their vulnerability to semantic obfuscation and evolving content manipulation suggests that text-only detection may be insufficient for resilient zero-day defense. These findings address RQ1 by clarifying the threat assumptions under which text modalities perform optimally, and highlight the need for multimodal or anomaly-aware integration to enhance robustness.

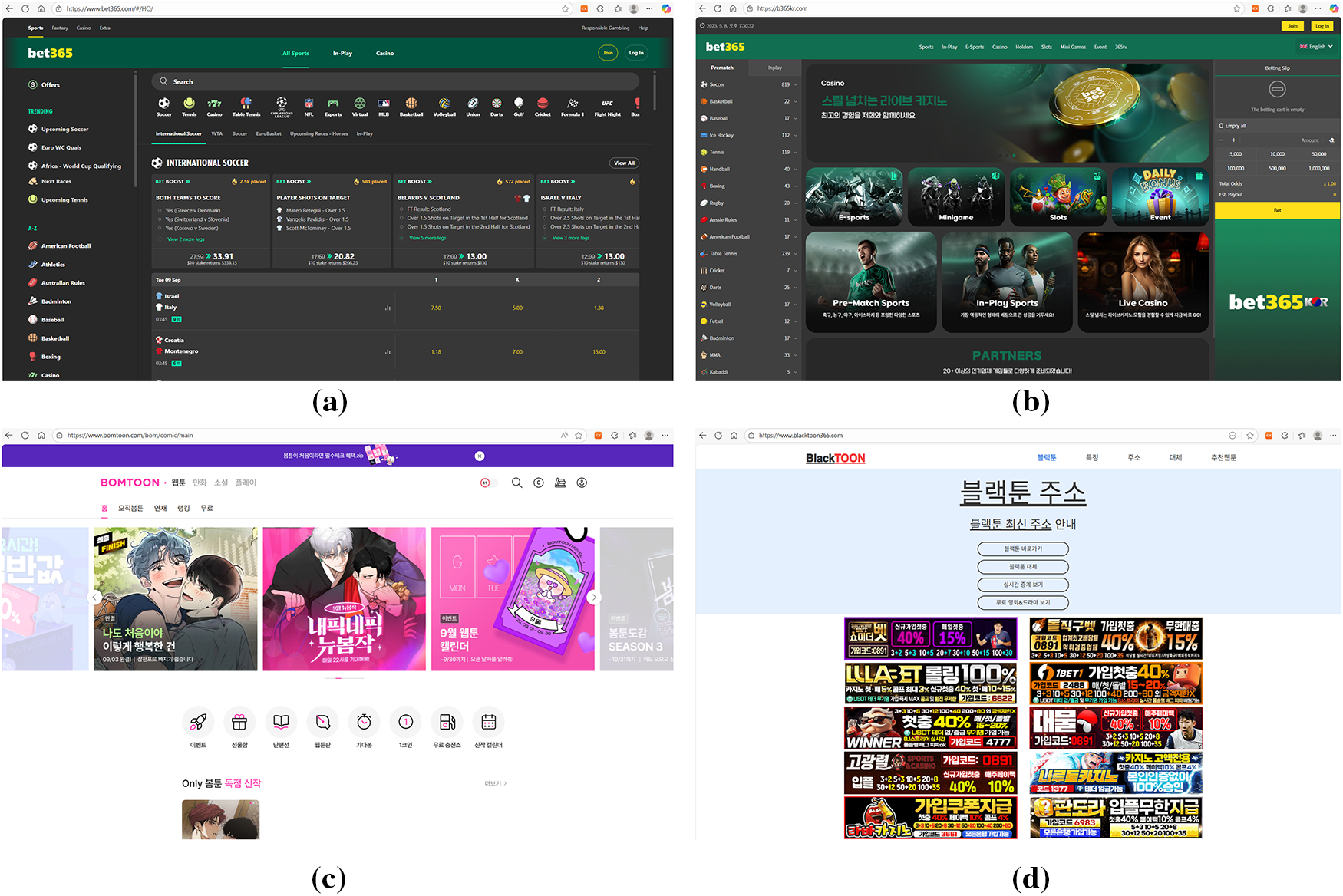

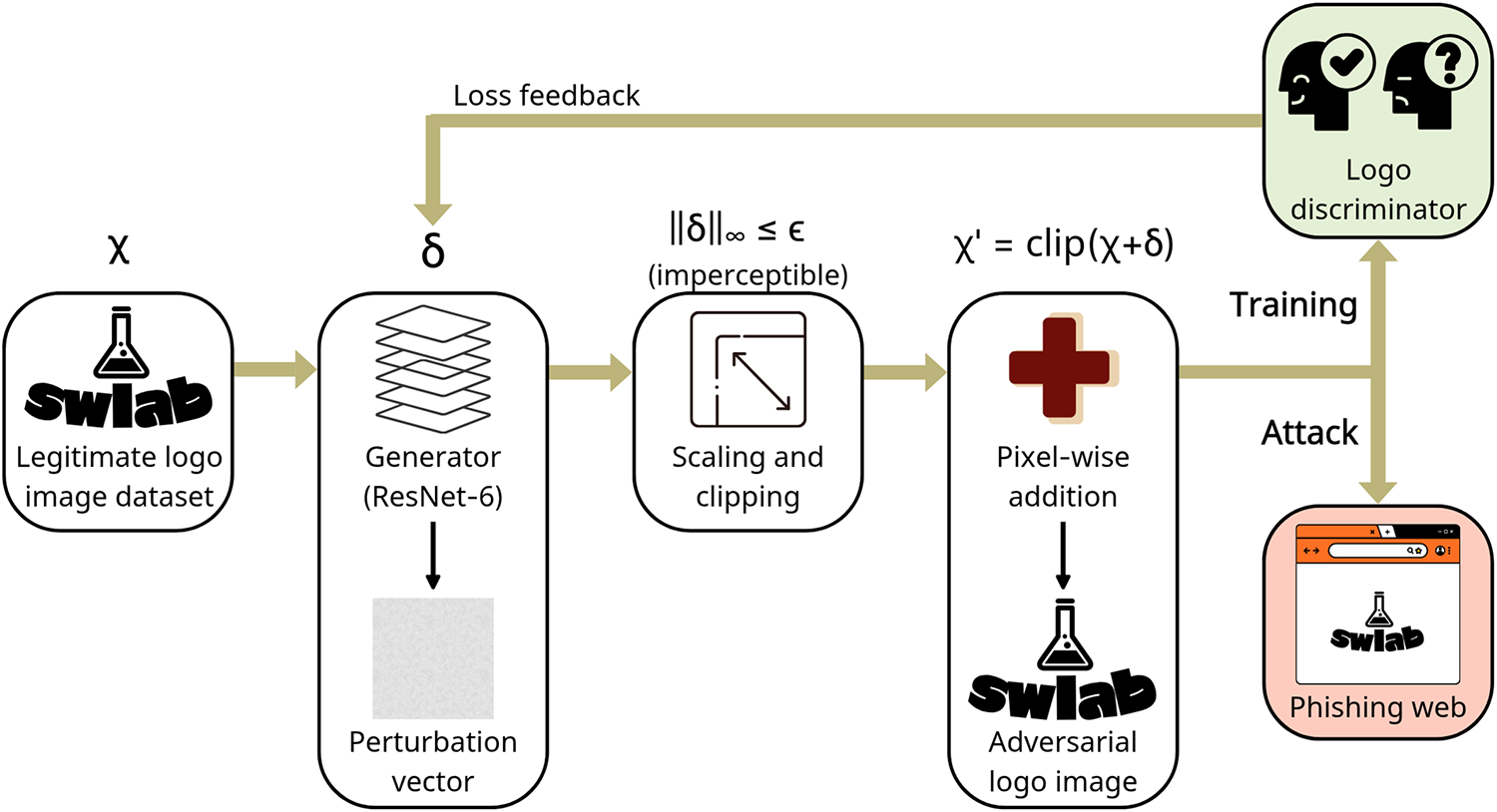

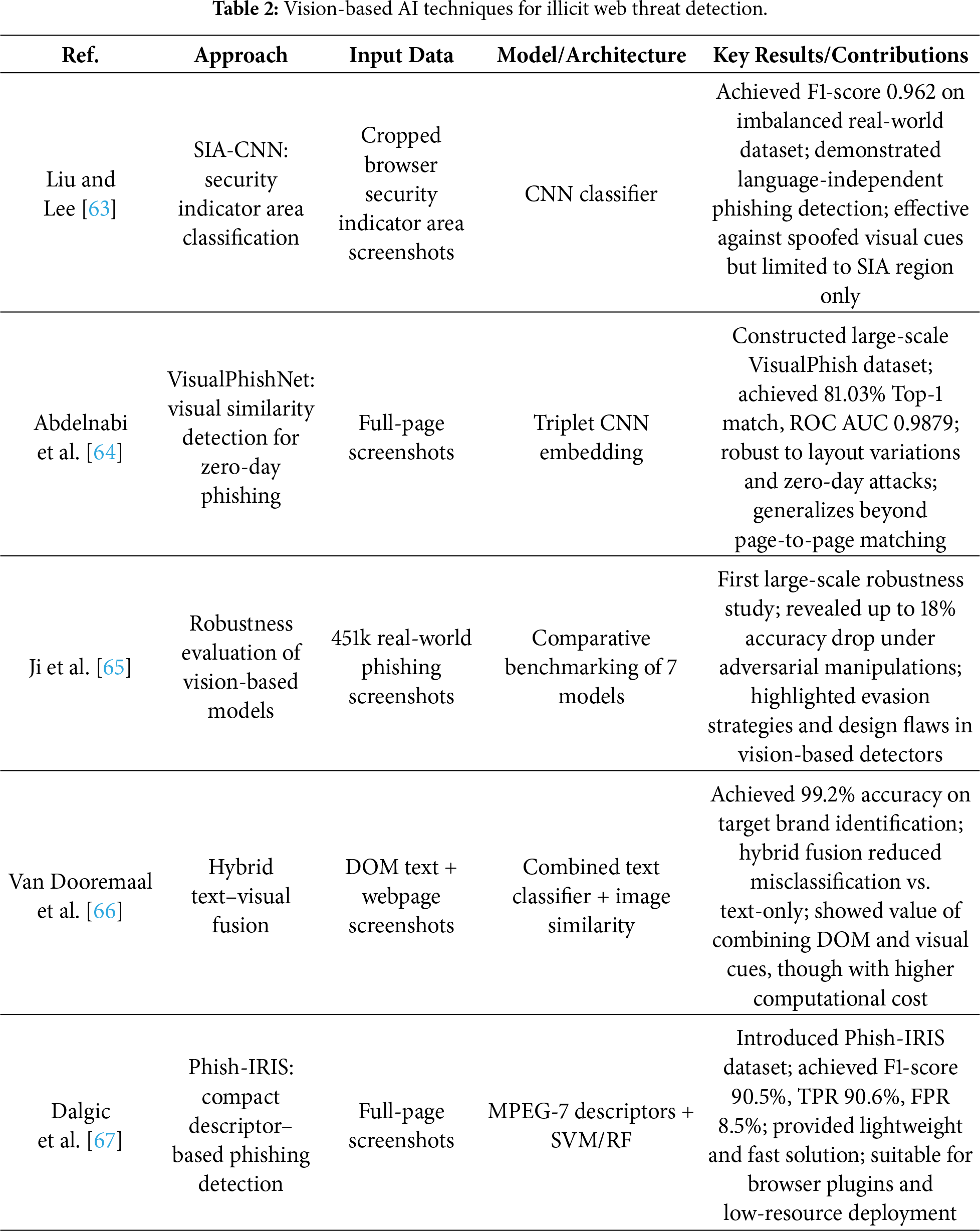

Text-based detection models suffer from inherent limitations, as they are highly sensitive to variations or crafted patterns in the strings used by attackers. To overcome this, recent studies have actively explored detection methods that leverage non-textual visual elements of websites, such as layout, logos, colors, and buttons. Such vision-based analysis is particularly effective for detecting illicit sites designed on the principle of “brand impersonation” from the user’s perspective. In this section, we provide a structured overview of vision modality–oriented detection techniques, as summarized in Table 2. By comparing the visual differences between legitimate and illicit websites, one can intuitively identify features such as layout, color schemes, and advertisement patterns that can be exploited by detection models. For instance, Fig. 6 presents screenshots of legitimate vs. illicit online gambling sites (a, b) and legitimate vs. illicit webtoon platforms (c, d), demonstrating visual cases that vision-based approaches can analyze.

Figure 6: Sample screenshots for vision-based detection. (a) Legitimate online gambling site; (b) Illicit online gambling site; (c) Legitimate webtoon platform; (d) Illicit webtoon platform. The visual differences across these categories (e.g., layout consistency, advertisement patterns, and design quality) illustrate how vision-based approaches can leverage screenshot-level cues for distinguishing between benign and malicious websites. Takeaway: Screenshot-level cues can expose impersonation patterns beyond lexical analysis.

4.2.1 Vision-Based AI Techniques

The Security Indicator Area (SIA) refers to the visual elements located in the upper portion of a browser, including the URL address bar, HyperText Transfer Protocol Secure (HTTPS) padlock, tab icons, and brand logos, which users intuitively rely on to assess the trustworthiness of a website. Liu and Lee proposed an approach that automatically captures this SIA region, converts it into images, and classifies them using a CNN model. By doing so, the method achieves phishing detection in a manner that is independent of language or textual content. Even on an imbalanced real-world dataset (3843 legitimate samples and 1593 phishing samples), the model achieved an F1-score of 0.962, demonstrating its ability to overcome the language dependency and spoofing weaknesses of conventional URL/HTML-based detectors [63].

Abdelnabi et al. proposed VisualPhishNet, a model that leverages a triplet CNN to learn visual similarities between webpages belonging to the same brand, and then classify phishing attempts based on this learned similarity space. Unlike simple image matching, the model generalizes visual characteristics across webpages with diverse layouts and components, thereby achieving strong robustness against zero-day attacks and user interface obfuscations. In experiments, the model recorded a Top-1 accuracy of 81.03% and an Receiver Operating Characteristic (ROC) Area Under the Curve (AUC) of 0.9879. The evaluation was conducted on the VisualPhish dataset, which included 155 legitimate websites and 1195 phishing pages targeting those websites [64].

Ji et al. systematically evaluated the effectiveness and robustness of seven vision-based phishing detection models using a large-scale dataset comprising over 450,000 real phishing screenshots. Their study analyzed various evasion strategies, including logo removal, font perturbation, background color imitation, and logo repositioning. The results revealed that some logo-based models exhibited accuracy drops of over 18% under certain manipulations, and that these models were particularly vulnerable even to simple techniques such as logo replacement or removal [65]. This work emphasizes the need for designing vision-based detectors that incorporate adversarial evasion strategies from the attacker’s perspective in order to enhance robustness.

To overcome the limitations of single-modality detection, hybrid models have recently been proposed that integrate textual information extracted from the DOM (e.g., title elements) with visual features obtained from webpage screenshots. Van Dooremaal et al. suggested a model that combines these two feature types to identify the legitimate websites being impersonated, thereby enabling phishing detection. Evaluated on a dataset of 2000 real phishing and benign websites, this model achieved a target identification accuracy of 99.2% [66]. Furthermore, the approach was designed to be deployable as a browser plugin, demonstrating its feasibility for real-time response in practical user environments.

Dalgic et al. introduced Phish-IRIS, a framework that summarizes webpage screenshots into Moving Picture Experts Group-7 (MPEG-7) and related compact visual descriptors (e.g., Scalable Color Descriptor (SCD), Color Layout Descriptor (CLD), Color and Edge Directivity Descriptor (CEDD)), which are then classified using lightweight ML models such as SVM or RF. Notably, the combination of the SCD with SVM achieved an F1-score of 90.5%, a True Positive Rate (TPR) of 90.6%, and an FPR of 8.5% [67]. With an average processing time of only 0.21 s per image, the approach is highly efficient and can operate without high-end Graphics Processing Unit (GPU), making it suitable for deployment as a browser plugin or phishing detector on mobile devices.

4.2.2 Synthesis and Implications of Vision-Based Approaches

Vision-based approaches address a distinct threat assumption: brand impersonation through visual mimicry. By analyzing webpage layouts, logos, and security indicator areas, these models operate independently of textual obfuscation and multilingual variation. As a result, vision-based systems are particularly effective against phishing campaigns that replicate legitimate brand identities.

However, empirical studies demonstrate that these models remain vulnerable to adversarial visual perturbations, including logo removal, color modification, background imitation, and subtle pixel-level attacks. Furthermore, the requirement for webpage rendering and screenshot acquisition introduces additional latency and infrastructure overhead, limiting deployment feasibility in high-throughput environments.

In zero-day scenarios, vision-based models may outperform lexical models when attacks rely on novel domain names but visually mimic trusted brands. Conversely, purely content-based scams lacking recognizable visual anchors may evade such systems. These observations clarify the operational boundaries of vision modalities in addressing RQ1 and underscore the importance of balancing robustness with deployment constraints (RQ3).

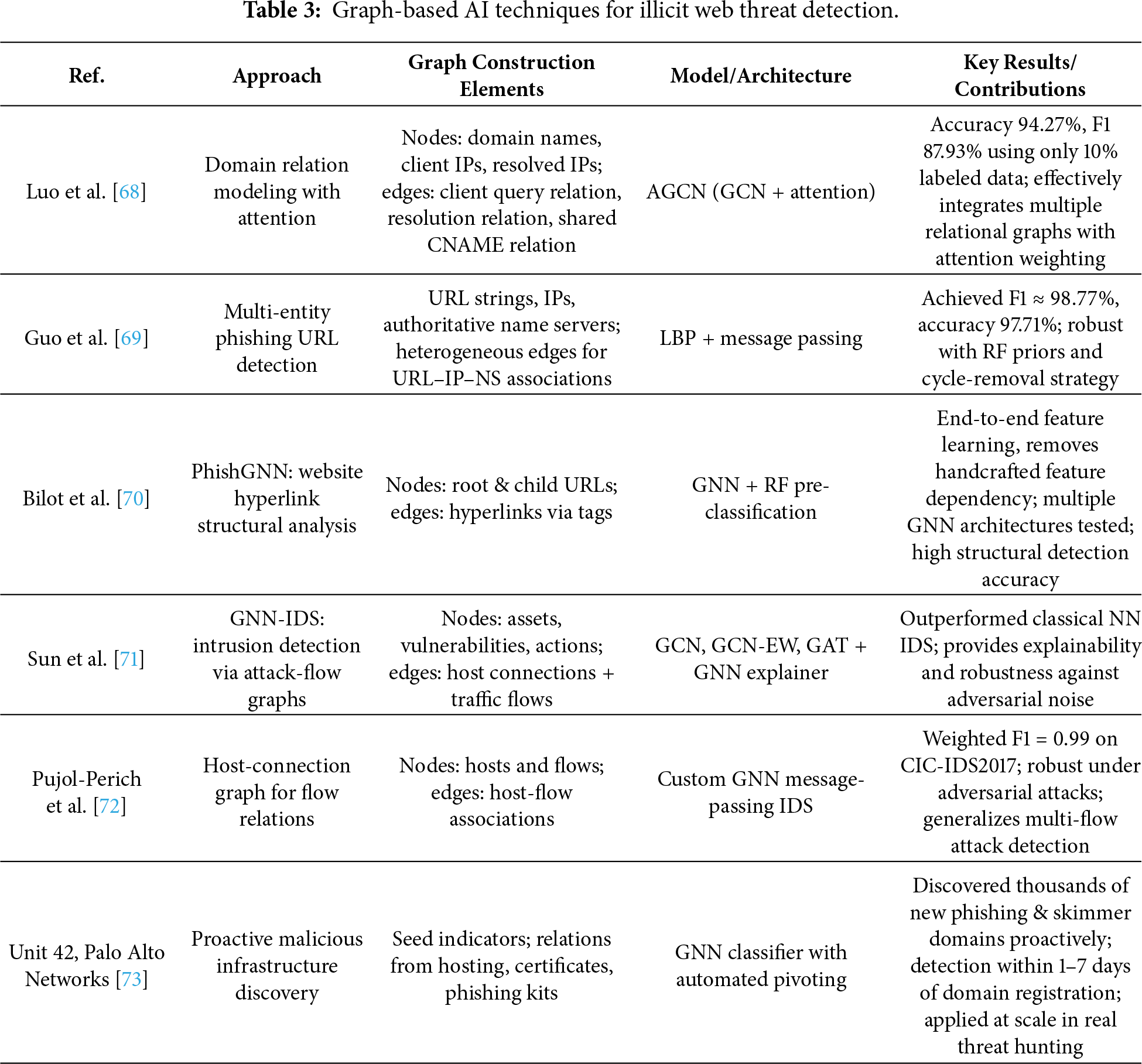

Illicit websites are not confined to a single URL or HTML page but are instead constructed upon a complex infrastructure ecosystem involving inter-domain connections, shared IP structures, and common name servers. Such relational structures are difficult to capture using conventional text- or image-based detection methods; hence, in recent years, GNN-based detection approaches have attracted significant attention. As shown in Table 3, this section summarizes the principal detection models leveraging GNNs, the corresponding graph construction strategies, and the advantages and limitations of GNNs.

4.3.1 Graph-Based AI Techniques

A key factor in GNN-based domain detection lies in the definition of nodes (entities) and edges (relations). The AGCN-Domain model proposed by Luo et al. defines domains, client IPs, and resolved IP addresses as nodes and models three principal relations—client relation, resolution relation, and Canonical Name (CNAME) relation—into graph structures. Here, the client relation connects domains queried by the same client, the resolution relation connects domains mapped to the same IP, and the CNAME relation connects domains sharing identical CNAME records. The proposed model applies a Graph Convolutional Network (GCN) to each relational graph and integrates them by assigning weights to each relation through an attention mechanism, thereby constructing unified feature vectors. Experimental results demonstrate that even with only 10% of the domains labeled, the model achieved 94.27% accuracy and an F1-score of 87.93%, thereby outperforming existing baselines [68].

Guo et al. proposed a method that constructs a heterogeneous graph integrating URL strings, IP addresses, and authoritative name servers, and applied a detection model based on Loopy Belief Propagation (LBP) to classify phishing URLs. The model dynamically adjusts edge potentials using similarity-based metrics and enhances stability through a combination of RF-based priors and a cycle-removal convergence strategy. On a large-scale dataset comprising 306,354 URLs, the approach recorded an F1-score of 98.77% and an accuracy of 97.71%, demonstrating superior performance and robustness compared with existing baselines [69].

PhishGNN introduces a framework that models the internal hyperlink structures of phishing websites as graphs, learning semantic relations via a GNN. Each node represents either a root URL or child URLs linked through internal hyperlinks, while edges are constructed from links extracted from HTML tags such as <a>, <form>, and <iframe>. PhishGNN incorporates initial labels for root and child nodes using a RF pre-classifier, which are subsequently integrated into the GNN for automatic embedding and message passing. In this way, the model effectively captures the structural characteristics of websites [70]. This framework reduces dependency on handcrafted features while supporting end-to-end learning and maintains extensibility to multiple GNN architectures.

GNN-IDS is an Intrusion Detection System (IDS) that integrates attack graphs with real-time measurements to construct input graphs, upon which models such as GCN, GCN with Edge Weights (GCN-EW), and Graph Attention Network (GAT) are applied. By combining static information within networks (e.g., assets, vulnerabilities) with dynamic behavior information (e.g., traffic, logs), the system is capable of learning complex attack scenarios. Experimental results reveal that the proposed GNN-IDS achieves superior accuracy and F1-scores compared to conventional neural network-based IDS. Furthermore, with the aid of GNNExplainer, the model provides interpretable detection results. It also demonstrates resilience under noisy or altered attack scenarios, indicating strong robustness for practical deployment [71].

GNN can enhance the detection of multi-flow attacks or interaction-based threats by modeling structural relations across multiple network flows. To overcome the limitations of traditional ML-based IDS that learn only individual flow features, Pujol-Perich et al. introduced the Host-Connection Graph (HCG), which represents flows as node–edge structures, and designed a specialized GNN message-passing architecture. Their model achieved an F1-score of 0.99 on the CIC-IDS2017 dataset and maintained detection accuracy even under adversarial attacks that manipulated packet sizes and inter-arrival times [72]. These findings demonstrate the potential of GNNs to capture structural patterns of emerging threats and ambiguous domains, enabling resilient detection. They further suggest that integration with IDS systems can substantially enhance flow-based detection. Beyond supervised classification, GNNs are also considered effective for unsupervised tasks such as graph anomaly detection, link prediction, and similarity assessment, as they can jointly exploit graph structures and node attributes to detect anomalous patterns at the node, edge, subgraph, or entire graph level [74]. This capability provides flexibility in identifying novel threats, ambiguous domain clusters, and anomalous flows arising from dynamic structural changes, thereby extending applicability to graph-based IDS research [75].

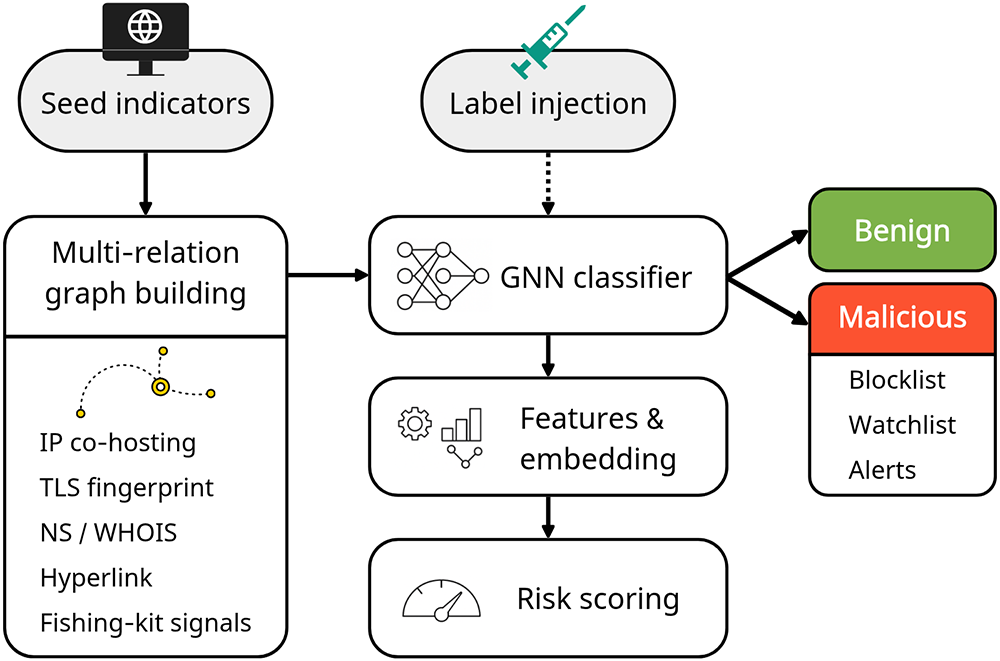

The Unit 42 project of Palo Alto Networks proposed a proactive discovery framework, illustrated in Fig. 7, that constructs graphs from a small set of known malicious domains (seed indicators) and applies a GNN-based classifier to identify related but previously undetected infrastructures. The system expands relational graphs using indicators such as IP co-hosting, Transport Layer Security (TLS) certificate similarity, and registrar information. By leveraging GNN learning, the framework reduced detection delays to within 1–7 days after domain registration, effectively identifying phishing and skimmer domains before they became weaponized [73]. This demonstrates the feasibility of proactive infrastructure hunting strategies that preemptively block malicious assets.

Figure 7: Proactive domain-infrastructure discovery model. Starting from a few seed indicators, a multi-relation graph is built by aggregating. Labels are injected for training only. The graph is processed by a GNN classifier to produce embeddings and risk scores, enabling early identification of related infrastructure and driving operational outputs (benign/malicious decision) [73]. Takeaway: Infrastructure-level graph modeling enables early identification of malicious domain clusters, supporting proactive rather than purely reactive threat mitigation.

While GNN-based detection techniques offer advantages in modeling the structural relationships of illicit web ecosystems, persistent challenges remain. These include the limitations of semi-supervised learning under label scarcity, performance degradation due to heterophily and noise within graphs, and class imbalance issues. Furthermore, GNNs often face challenges in interpretability, restricting their adoption in high-trust security analyses, and are vulnerable to privacy attacks (e.g., membership inference, model extraction) in distributed or collaborative detection environments. To address these issues, active research efforts are exploring strategies such as label denoising, structural redesign, imbalance correction, differential privacy, and federated GNN frameworks [76].

4.3.2 Synthesis and Implications of Graph-Based Approaches

Graph-based approaches shift the detection paradigm from single-instance classification to infrastructure-level analysis. By modeling domain-IP relationships, hosting clusters, certificate reuse, and hyperlink structures, GNN-based systems capture latent structural dependencies within illicit web ecosystems.

This modality is particularly advantageous under zero-day conditions, where newly registered domains may evade lexical and visual inspection but remain structurally linked to malicious infrastructure. However, graph construction requires large-scale data aggregation, label availability, and periodic updates, introducing computational and operational complexity.

Performance may degrade under conditions of graph noise, heterophily, or label scarcity. Additionally, interpretability and privacy concerns remain open challenges. These trade-offs highlight the ecosystem-level strengths of graph models while exposing scalability and deployment limitations, directly addressing RQ1 and RQ4.

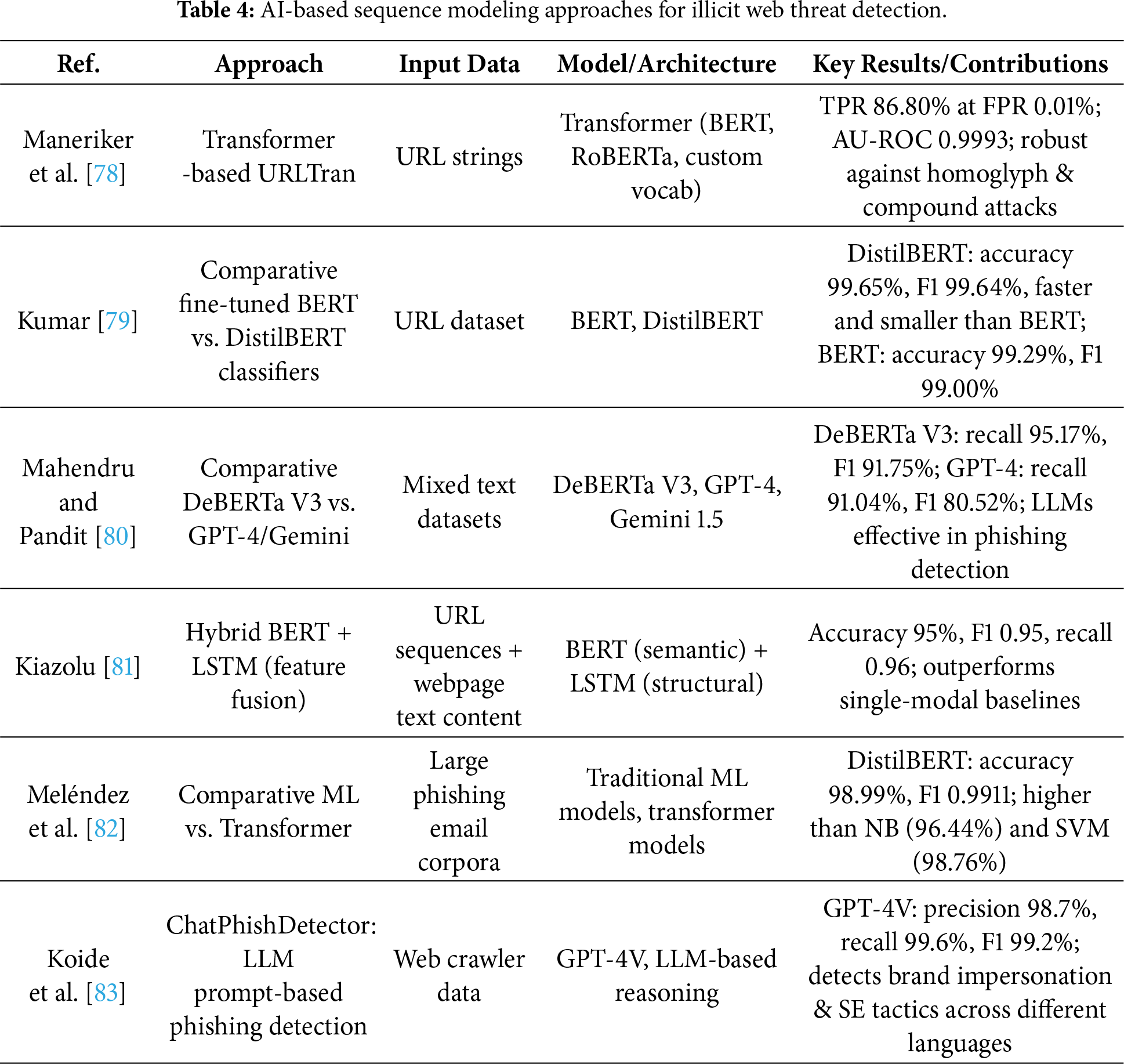

4.4 Sequence Modeling Approaches

Traditional URL-based rule filters and HTML analysis methods have inherent limitations, as they rely on fixed patterns that are difficult to adapt to complex and dynamic cyber threats. Against this backdrop, recent studies have applied Transformer-based Natural Language Processing (NLP) models, which process sequence data as input, to the detection of illicit websites. As shown in Table 4, this section reviews web content analysis approaches employing sequence models such as Transformer, Bidirectional Encoder Representations from Transformers (BERT), and Generative Pre-trained Transformer (GPT) families.

4.4.1 Sequence Modeling AI Techniques

Although URLs appear short and simple in structure, they actually contain information units at the character and token levels that reflect the attacker’s obfuscation strategies. Recent studies have considered this property and proposed Transformer-based models such as URLTran, which directly ingest URL strings as input for phishing classification. These approaches have been reported to achieve higher detection accuracy and robustness than conventional CNN- or RNN-based architectures [77]. In particular, the URLTran_BERT model achieved a TPR of 86.80% at a very low FPR of 0.01%, outperforming URLNet (71.20%) and Texception (52.15%). Moreover, by incorporating character-level and byte-level subword tokenization, URLTran demonstrated superior contextual understanding and generalization for phishing URLs with ambiguous word boundaries or deliberately manipulated structures [78].

In recent years, pre-trained language models such as BERT, RoBERTa, and DistilBERT have been widely studied for cybersecurity text analytics, including URL and email content. These models achieve significantly higher precision and recall compared to traditional keyword-based detection methods. Among them, the lightweight DistilBERT maintains more than 95% of BERT’s language understanding capability on the GLUE benchmark, while offering a smaller model size and faster inference speed. In Kumar’s comparative study on phishing URL detection, DistilBERT achieved 99.65% accuracy and 99.64% F1-score, slightly but consistently outperforming BERT (99.29% accuracy and 99.00% F1-score), thereby demonstrating practical detection performance even under resource-constrained environments [79].

The SecureNet project further compared multiple pre-trained language models for phishing detection. Results indicated that DeBERTa V3 achieved the best performance, with 95.17% recall and an F1-score of 91.75% on the HuggingFace phishing dataset. In contrast, GPT-4 recorded 91.04% recall and an F1-score of 80.52% under the same conditions, showing particular strength in web and HTML-based phishing scenarios [80]. These experiments demonstrate that LLMs can deliver meaningful detection performance beyond text generation, suggesting their potential in integrated detection strategies that span diverse data types such as URLs, HTML, emails, and SMS.

To leverage complementary information from both URL structure and web content, Kiazolu proposed a hybrid model combining LSTM and BERT. The model extracts structural features such as domain length, use of special characters, and subdomain patterns from URL sequences using LSTM, while simultaneously generating deep semantic embeddings of webpage content using BERT. The embeddings are fused through feature fusion, and the final classifier predicts phishing status based on the combined representation. Experimental results showed accuracy of 95%, F1-score of 0.95, and recall of 0.96, surpassing single-modal baselines [81]. These findings indicate that integrating sequential URL structures with semantic content features can enhance both detection accuracy and adaptability.

Meléndez et al. demonstrated that lightweight pre-trained language models such as DistilBERT also achieve excellent results in phishing email detection compared to traditional ML methods. For instance, DistilBERT achieved 98.99% accuracy and an F1-score of 0.9911, slightly but consistently outperforming Naive Bayes (NB) (96.44%) and SVM (98.76%) [82]. This suggests that Transformer-based models can effectively replace conventional approaches not only in terms of accuracy improvement but also in terms of model efficiency and deployability.

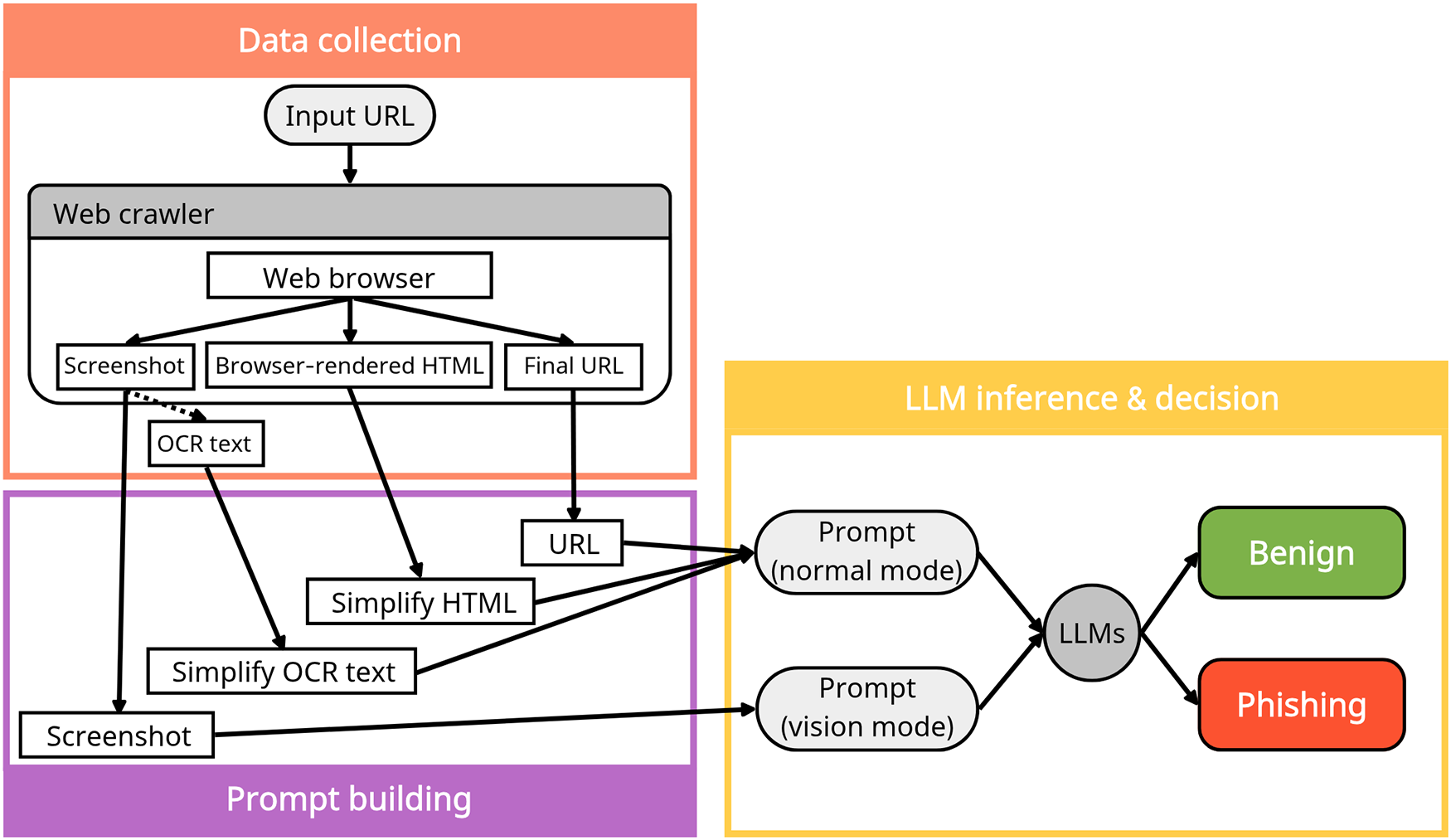

The emergence of LLMs has opened the possibility of zero-shot approaches for phishing detection without additional training. As illustrated in Fig. 8, ChatPhishDetector utilizes GPT-4V to determine phishing status based on prompts constructed from website screenshots, HTML, Optical Character Recognition (OCR) text, and URLs. This system was designed to overcome the limitations of conventional detection approaches. Experimental results demonstrated that GPT-4V achieved a precision of 98.7%, recall of 99.6%, and F1-score of 99.2%, outperforming other LLMs and traditional detection systems. Furthermore, it effectively identified brand impersonation and social engineering techniques [83]. Notably, the system demonstrated high practicality and scalability in real-world settings, as it can generalize across multiple languages and domains based solely on prior training.

Figure 8: LLM-based phishing detection model (ChatPhishDetector). URL/HTML/screenshot (+OCR) are fused into normal (text) or vision (text+image) prompts, yielding a benign/phishing decision [83]. Takeaway: Prompt-driven LLM architectures demonstrate how multimodal contextual reasoning can unify textual and visual signals for adaptive phishing detection.

4.4.2 Synthesis and Implications of Sequence Modeling Approaches

Transformer-based and sequence modeling approaches introduce contextual reasoning capabilities that extend beyond handcrafted features. By learning semantic dependencies within URLs, HTML structures, and phishing messages, these models exhibit improved resilience to superficial lexical manipulation.

In zero-day settings, sequence models demonstrate stronger generalization than traditional classifiers when trained on diverse corpora. However, their performance remains sensitive to domain shifts and adversarial prompt engineering. Moreover, the computational demands of large language models introduce latency and resource constraints, particularly in real-time detection environments.

While LLM-based detection frameworks show promise for adaptive and cross-lingual threat analysis, their reliability depends heavily on calibration, evaluation protocols, and robustness testing. These findings reinforce the need for evaluation rigor (RQ2) and deployment-aware model design (RQ3).

4.5 Cross-Modality Comparison of AI-Based Detection in Illicit Web Ecosystems

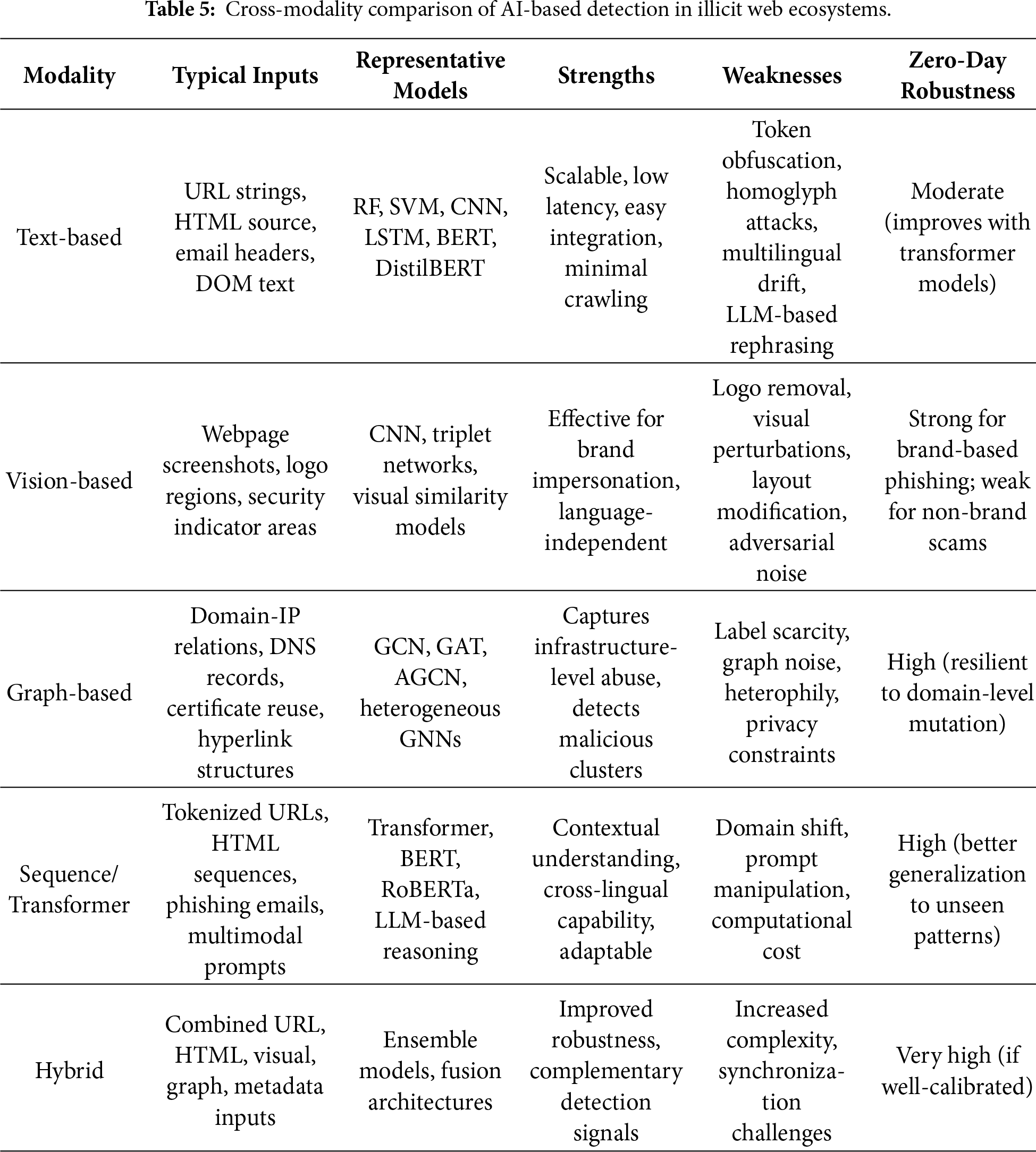

The comparative analysis between the modalities of AI-based detection in the illegal web ecosystem is shown in Table 5.

The comparative analysis highlights that no single modality provides comprehensive resilience across all illicit web threat scenarios. Text-based methods offer high scalability but remain vulnerable to semantic obfuscation. Vision-based approaches excel in brand impersonation detection yet incur rendering overhead. Graph-based models demonstrate strong ecosystem-level robustness but require substantial infrastructure data. Sequence models improve contextual generalization but introduce computational trade-offs. Hybrid architectures appear most promising for zero-day resilience, though their deployment complexity necessitates careful system design. These findings directly address RQ1 and RQ3 by clarifying the interplay between modality strengths, operational constraints, and generalization capacity.

5 System Architecture and Pipelines

5.1 Data Collection and Labeling

The performance of AI-based illicit website detection systems largely depends on the quality of the input data. To train high-performance models, it is essential to secure a sufficient amount of high-quality labeled data, and thus strategies for data collection and labeling must be carefully considered at the early stages of model design. This section reviews several dataset construction cases, focusing on key collection methods, labeling procedures, feature design principles, and quality evaluation techniques.

For data collection, widely used public phishing domain repositories such as PhishTank and OpenPhish are typically employed. In addition, domain lists scraped from Computer Emergency Response Team (CERT), security companies, research institutes, and public data sources can be incorporated. Based on these domain lists, a dedicated environment is configured to automatically collect webpage HTML sources and metadata, with various feature design and labeling methodologies being introduced to ensure data quality.

PhishStorm is a system built for real-time phishing URL detection. It constructed a balanced dataset of 96,018 entries using 48,009 phishing URLs collected from PhishTank and 48,009 legitimate URLs obtained from DMOZ. Each URL was split into its registered domain and the remaining part, and twelve features were extracted based on intra-URL relatedness and URL popularity using query data from Google Trends and Yahoo Clues. These features were computed within a distributed streaming architecture and Bloom filters to achieve real-time performance. Using a RF classifier, PhishStorm achieved high classification performance, with 94.91% accuracy and a 1.44% FPR [84].

Verma et al. emphasized that the performance of AI models in the security domain is heavily influenced by data quality, and to this end, they constructed a public dataset called IWSPA-AP for phishing email detection. The dataset was built by collecting phishing and legitimate emails from diverse sources, including university IT departments, Wikileaks, and the Nazario dataset. The collected emails underwent cleaning processes such as URL replacement, organization name normalization, signature removal, and HTML tag sanitization. However, from the earliest version, classification accuracy consistently exceeded 99%, and further refinements yielded little change. Consequently, they proposed a new quality indicator termed data difficulty [85]. This case highlights the importance not only of accurate labeling but also of identifying and correcting dataset construction issues that may lead to overly optimistic results.

Khan et al. applied and compared various ML algorithms to three publicly available datasets for phishing website detection. The first dataset comprised 10,000 webpages (5000 phishing and 5000 legitimate) collected between 2015 and 2017. The second was the University of California, Irvine (UCI) repository dataset containing 11,055 URLs (4898 phishing and 6157 legitimate). The third was a multi-class dataset of 1353 websites (702 phishing, 548 legitimate, and 103 suspicious). Each dataset included diverse attributes such as URL structure, domain information, Secure Sockets Layer (SSL)/TLS status, and page rank. Principal Component Analysis (PCA) was employed to assess attribute importance and eliminate redundant variables. Experiments were conducted using DT, SVM, RF, NB, K-Nearest Neighbors (KNN), and Artificial Neural Network (ANN), with RF and ANN achieving the best performance, recording over 97% accuracy on the first dataset [86]. This study underscores the importance of enhancing generalizability by leveraging datasets from multiple sources and conducting attribute analyses.

Aung and Yemana presented a detailed methodology for the construction and refinement of URL datasets for phishing detection. They collected phishing URLs from PhishTank and legitimate domains from IP2Location, subsequently crawling up to 100 webpages per domain and randomly extracting legitimate URLs. This process produced both a balanced dataset (10,000 phishing and 10,000 legitimate) and an imbalanced dataset (5000 phishing and 50,000 legitimate). Preprocessing steps included URL length restrictions and the removal of GET parameters. The proposed URL-Tokenizer, which combined BERT and WordSegment tokenizers, successfully segmented URLs into meaningful units, drastically reducing the number of out-of-vocabulary terms. As a result, the system achieved detection accuracies of 95.7% on the balanced dataset and 97.7% on the imbalanced dataset [87]. This case demonstrates that a high-quality dataset suitable for learning can be constructed solely from refined URLs, beyond mere collection.

Sánchez-Paniagua et al. introduced PILWD-134K, a dataset designed to ensure both timeliness and representativeness in phishing detection research. This dataset, collected between August 2019 and September 2020, contains 134,000 verified samples, including URLs, HTML code, screenshots, web technology analyses, offline replicas, and PhishTank metadata. Noting that 77% of phishing websites contain login forms, they ensured that legitimate sites in the dataset mirrored this proportion by crawling based on Quantcast and the Majestic Million. Phishing URLs were collected in real time from PhishTank, with content and final redirection URLs also stored. Following a three-stage filtering process, 66,964 phishing samples and 129,742 legitimate samples were retained. With 54 proposed features and a LightGBM classifier, the dataset achieved 97.95% accuracy [88]. This work demonstrates a practical and generalizable approach by reflecting login page distribution, employing multi-source data collection, and designing independent features.

Prasad and Chandra proposed the PhiUSIIL framework, which integrates a URL similarity index with incremental learning for phishing URL detection. A distinctive feature of this framework is its large-scale dataset construction, comprising 134,850 legitimate URLs and 100,945 phishing URLs. Legitimate domains were collected from Open PageRank, while phishing domains were obtained from PhishTank, OpenPhish, and MalwareWorld. Automated Java-based scripts were used to archive HTML content and extract dozens of features, including TLD, number of subdomains, HTTPS usage, iframes, and form submission methods. Additional derived features, such as CharContinuationRate, URLTitleMatchScore, and TLDLegitimateProb, were also designed to enhance dataset quality. Combining pre-training with incremental learning, the PhiUSIIL dataset achieved a detection accuracy of 99.79% [89]. Considering the short lifespan of phishing sites, this study is notable for establishing a real-time monitoring and immediate crawling environment, thereby providing a continuously expandable high-quality learning resource.

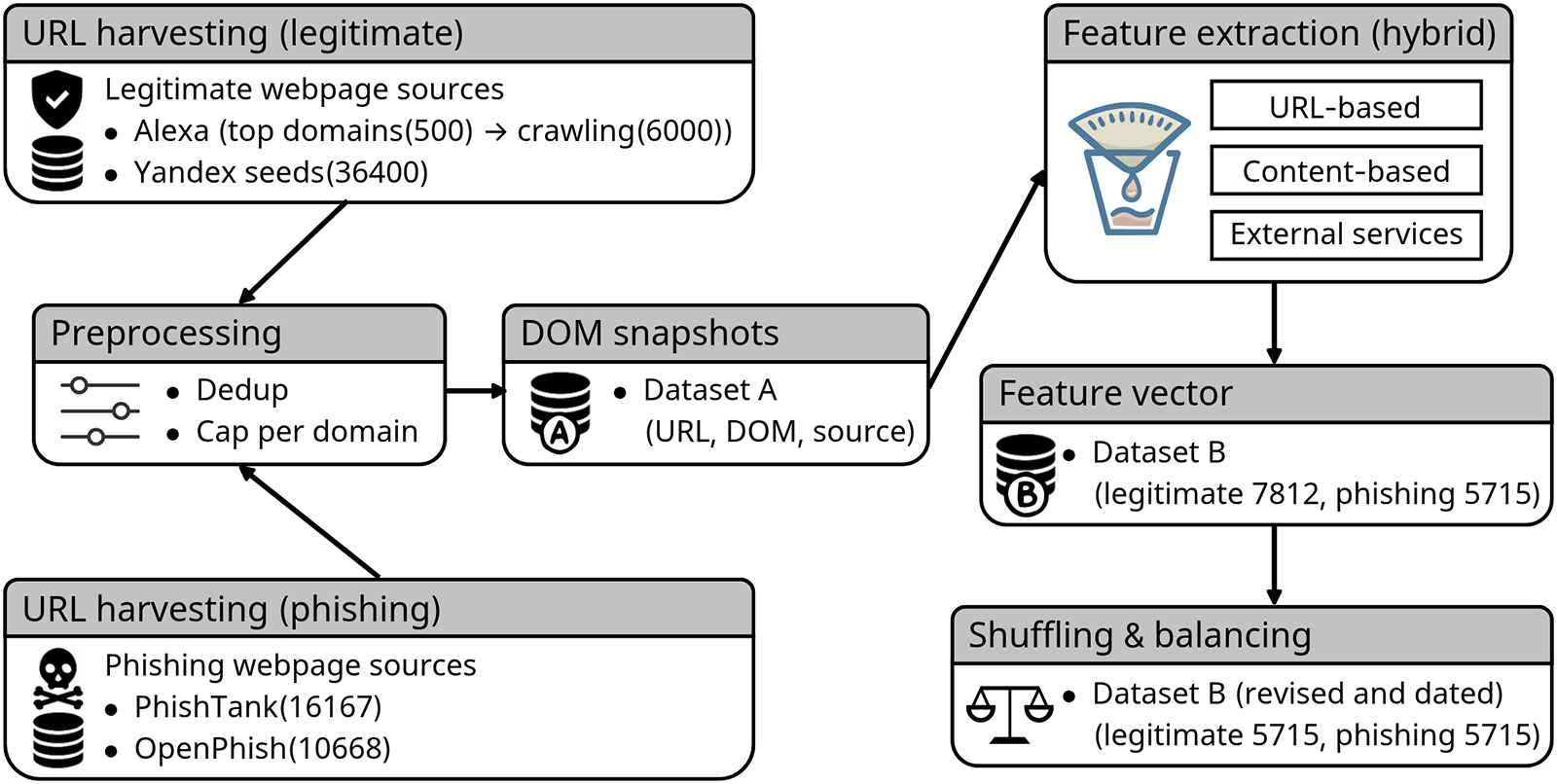

Hannousse and Yahiouche defined a total of 87 hybrid features by integrating URL-based, content-based, and external service-based attributes, and constructed a reproducible and extensible dataset for phishing detection, as shown in Fig. 9. The collected data included automated HTML content and external metadata. Experimental results demonstrated a detection accuracy of 96.83% using a RF classifier [90]. This study provides empirical evidence for the effectiveness of hybrid dataset-based approaches combining multiple feature categories.

Figure 9: Hybrid dataset construction pipeline for phishing detection. URLs are harvested from legitimate and phishing sources, preprocessed, and stored as DOM snapshots (dataset A). Hybrid features (URL, content, external services) are extracted to produce feature vectors (dataset B), which are then shuffled and balanced; the final dataset is dated for versioning [90]. Takeaway: A structured and version-controlled data pipeline improves dataset reproducibility and mitigates biases arising from asynchronous collection and feature inconsistency.

5.2 Real-Time Detection System Architectures

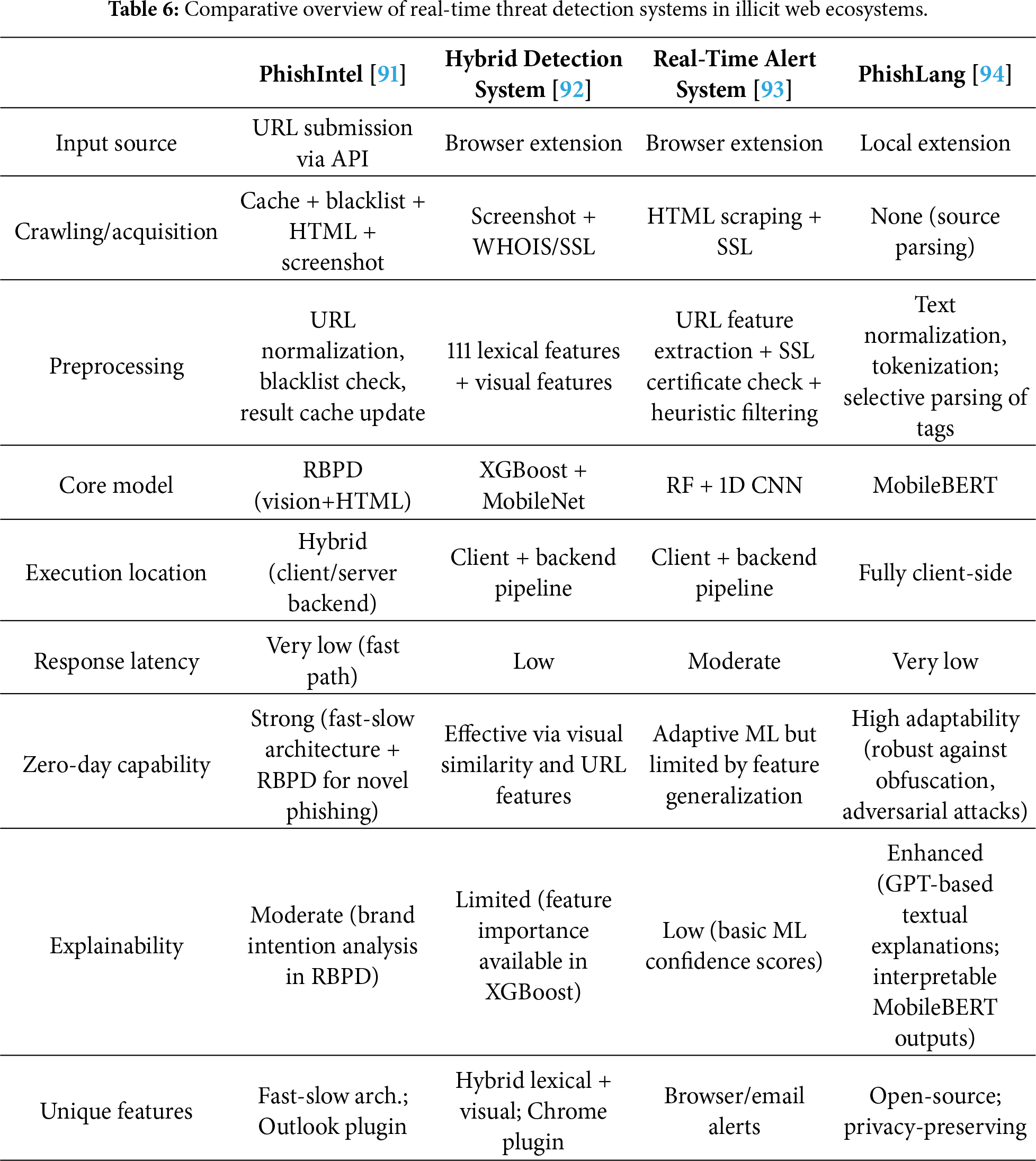

The detection of illicit websites must satisfy not only accuracy requirements but also practical considerations such as latency, adaptability, and deployment environments. Given that many malicious domains are short-lived and abandoned shortly after registration, the real-time capability of a detection system is a critical determinant of its overall detection rate. Consequently, recent years have witnessed the proposal of various real-time detection system architectures. As shown in Table 6, this section provides a comparative analysis of representative systems, focusing on their architectural components.

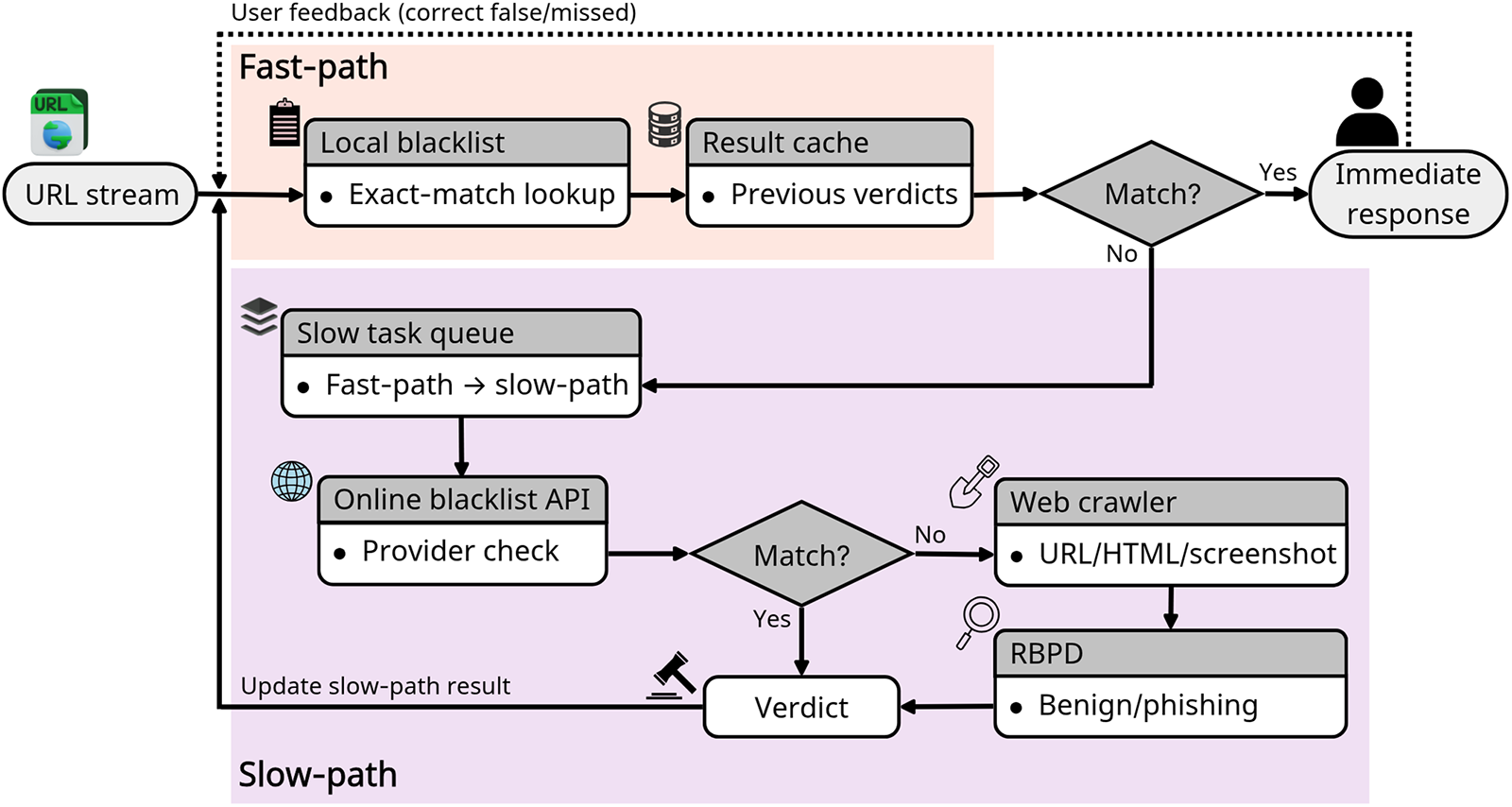

The PhishIntel system is based on a fast–slow task system architecture, in which the detection process is divided into fast and slow tasks (Fig. 10). The fast task quickly returns detection results using caches and blacklists, while URLs that remain unresolved are forwarded to the slow task for webpage crawling and model-based analysis. This design reduces the average response time to 0.016 seconds while maintaining robust zero-day phishing detection capability, thereby achieving a balance between responsiveness and detection performance [91]. The hybrid detection system by Gnanesh et al. and the real-time alert system by Pawar et al. both operate through browser extensions that collect URLs when users visit websites or open emails. On the backend, these URLs undergo further inspection, including HTML content crawling, SSL/TLS certificate validation, and screenshot-based visual similarity analysis. This enables more precise and real-time phishing detection [92,93].

Figure 10: Fast–slow task architecture for phishing detection (PhishIntel). Incoming URLs first take a latency-optimized fast path. Unmatched URLs are enqueued to the slow path for deep analysis using an online blacklist, a crawler, and RBPD. Slow-path verdicts update the cache, achieving sub-second response while retaining robust zero-day detection capability [91]. Takeaway: The fast–slow design balances sub-second response latency with deep inspection, illustrating a practical trade-off between real-time usability and zero-day robustness.

Input URLs are subjected to basic feature extraction, such as length, domain structure, and suspicious keywords, in addition to analyses of HTML structure, SSL/TLS certificate details, redirect logs, and iframe composition. The hybrid system by Gnanesh et al. combines XGBoost-based lexical analysis with MobileNet-driven visual similarity analysis to provide more resilient detection [92]. PhishLang executes all preprocessing on the client device. It parses meaningful elements directly from webpage HTML source code and feeds them into MobileBERT. The model tokenizes and embeds the input text to classify phishing attempts, offering effective resistance against previously unseen URLs and zero-day phishing attacks. With its lightweight architecture, MobileBERT achieves an average inference time of approximately 0.4 s and a memory footprint below 74 MB, ensuring stable real-time detection even in low-resource environments [94].

PhishIntel employs a Reference-Based Phishing Detection (RBPD) model, which classifies webpages by comparing their similarity with known phishing samples. Other systems adopt traditional ML, DL, or MobileBERT-based approaches, with some additionally incorporating CNN-based visual analysis. The execution environment varies among server-centric, client-centric, and hybrid client–server configurations, which influences latency, scalability, and privacy guarantees. Finally, inference results are communicated to users through browser extensions or backend APIs, and certain systems incorporate user feedback loops to refine models via continual retraining [91–94]. Such architectures enable continuous learning frameworks, thereby enhancing zero-day resilience.

5.3 Response System Architectures

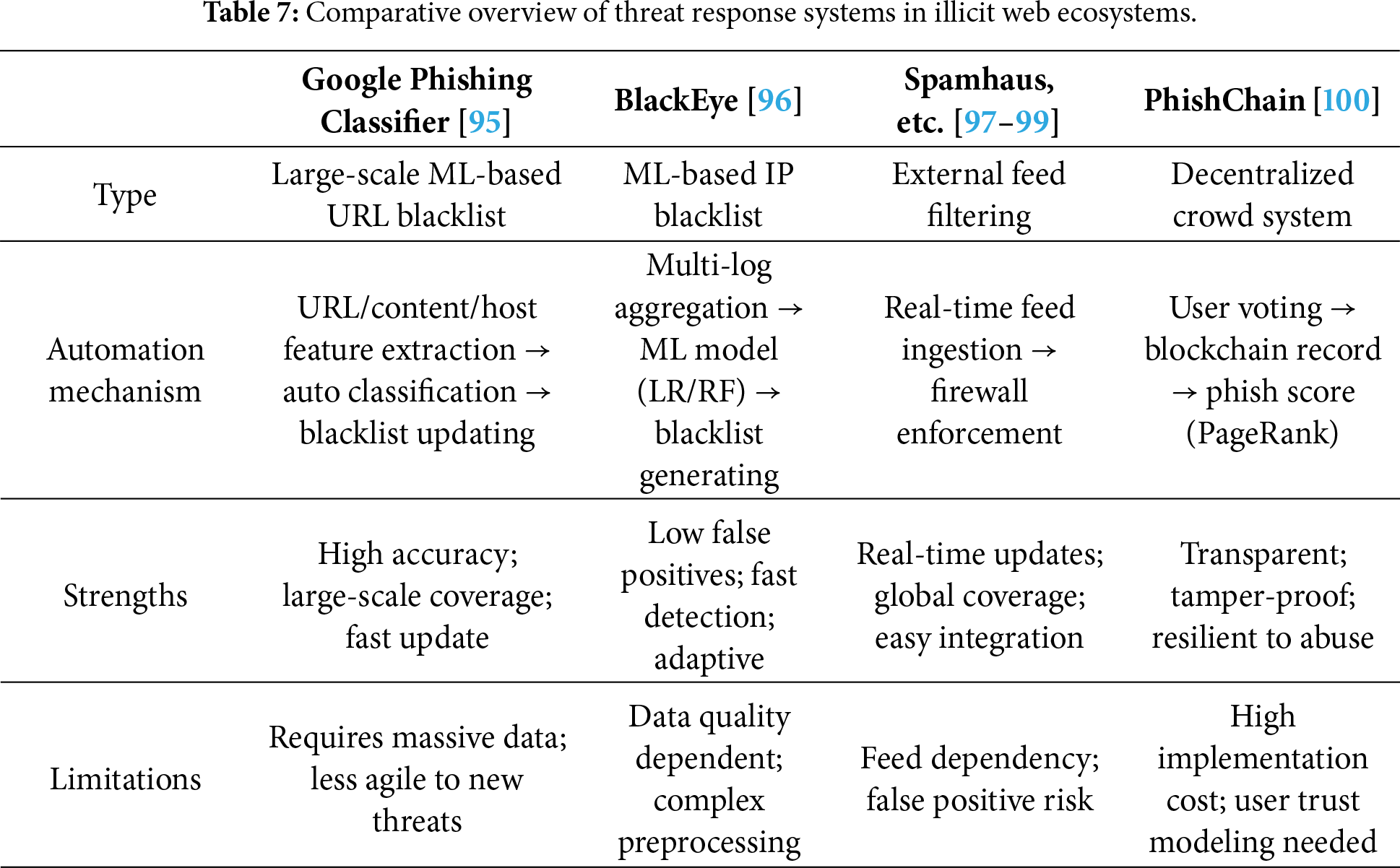

Illicit website detection systems should not merely stop at detection; they must be followed by actual blocking measures and coordinated responses at the network level. As shown in Table 7, this section examines various system architectures for response, including blacklist automation, network filtering, and user-driven blocking frameworks.

Google disclosed its large-scale ML-based phishing detection system in a 2010. This system automatically analyzes millions of webpages daily and classifies them as phishing or benign by leveraging a wide range of features such as URL, HTML, and hosting information. The classification results are reflected in real time in the Safe Browsing blacklist, which is integrated into Chrome, Firefox, Safari, as well as Gmail and the Google search engine. Remarkably, despite being trained on approximately 10 million noisy samples, the model consistently achieved a high performance with an average precision of 97.5% and recall of 94.9%, enabling automated detection and blocking of most URLs within a short time frame [95]. This design effectively counters the typically short lifespan of phishing webpages.

The BlackEye framework is designed to aggregate and analyze diverse security logs—such as those from web firewalls, network firewalls, and Intrusion Prevention Systems (IPS)—at the IP level. Using ML models, it automatically detects malicious IP addresses and generates blacklist candidates. Evaluations on datasets collected in operational security environments demonstrated that BlackEye identified malicious IPs on average 27 days earlier than manual analysis and reduced the rate of incorrectly blacklisted IPs by more than 89%, significantly lowering false positives. In particular, data refinement through Ridge regression improved training quality, while Logistic Regression (LR) and RF models were experimentally verified to effectively generate real-time blacklists [96].

At the level of network firewalls or ISPs, external security vendors such as Spamhaus and CrowdSec provide IP blocklist feeds that can be subscribed to for real-time filtering. For example, the Spamhaus data feed updates within an average of 30 s and offers a wide range of real-time integration solutions, enabling rapid response to emerging threats [97]. Palo Alto Networks’ firewall systems provide an External Dynamic List (EDL) feature, allowing administrators to dynamically import IP, URL, or domain lists managed externally. Security administrators can reference these lists directly in security policies to automatically block malicious IPs or URLs. The firewall then refreshes these lists according to configured intervals, ensuring up-to-date defenses against the latest threats [98]. Bellekens further emphasized that dynamic IP blocklist systems based on external threat feeds can update on a minute-level basis, thereby extending the scope of real-time threat response [99].

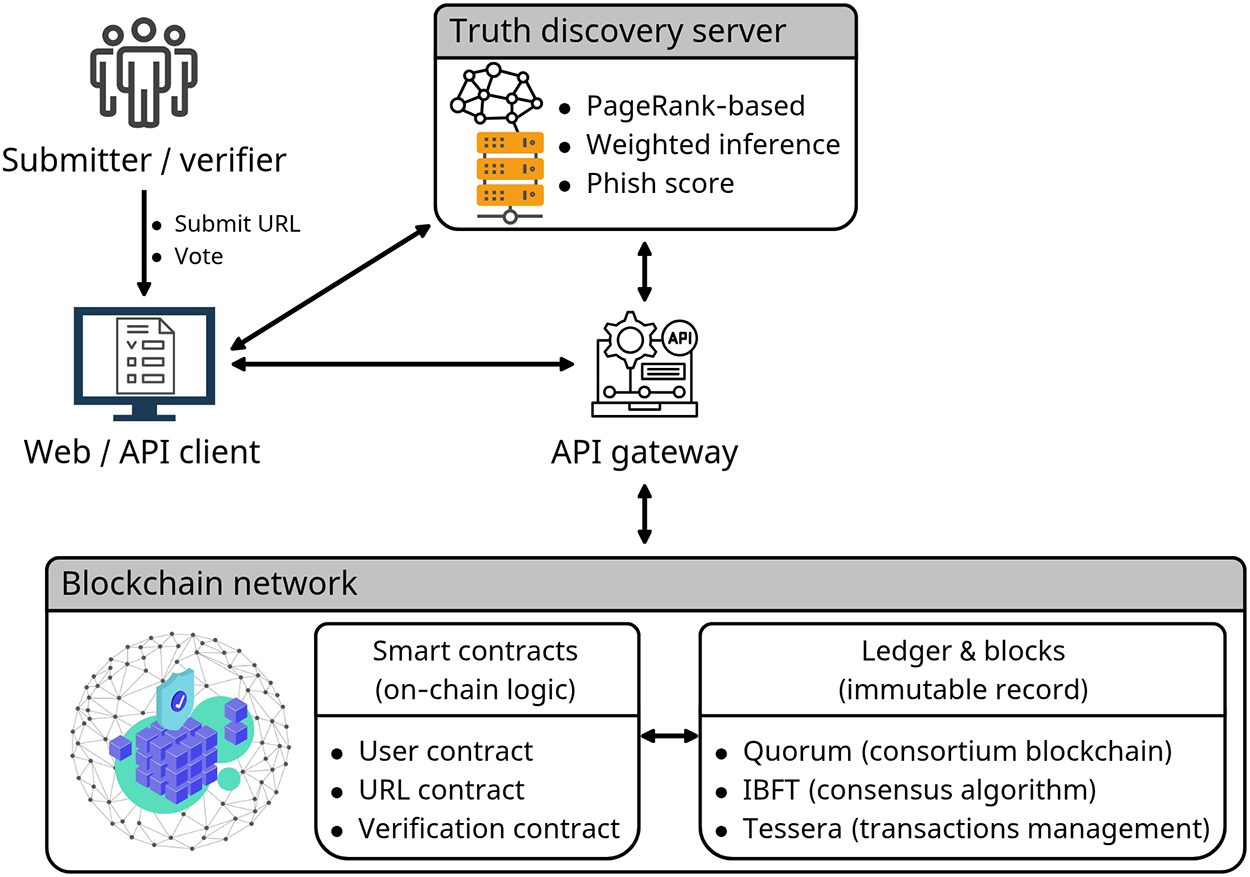

PhishChain is a decentralized URL blacklist system that employs blockchain technology to evaluate URLs submitted by users. Its basic architecture is illustrated in Fig. 11. Unlike centralized validators, it relies on majority user assessments and applies a PageRank-based truth discovery algorithm to compute a phishing score for each URL. All records are immutably stored on the blockchain, ensuring both transparency of blocking criteria and post-hoc auditability [100].

Figure 11: Decentralized crowd-sourced URL blacklisting architecture and workflow (PhishChain). The Web/API client submits URLs and votes via an API gateway; a PageRank-based truth discovery server runs off-chain, reads on-chain votes/status, and writes the computed phish score back on-chain through smart contracts. The consortium blockchain (Quorum with IBFT and Tessera) maintains the immutable ledger for transparency and auditability [100]. Takeaway: Decentralized validation mechanisms enhance transparency and auditability in phishing blacklisting, though they introduce complexity in trust modeling and scalability.

As summarized in Table 7, these response systems exhibit distinct strengths and limitations depending on their architectural design. Large-scale automated classifiers provide high accuracy and scalability, but may be slower to adapt to novel attacks. In contrast, real-time external feed integration offers immediacy but introduces dependency risks and potential false positives. Decentralized approaches ensure transparency and integrity, but pose challenges in system complexity and in designing robust models for user trust evaluation.

6.1 Public Phishing Domain Lists and Benchmark Datasets

The performance evaluation of AI-based illicit website detection models depends on the availability of consistent criteria and comparable datasets. To date, the most widely used public phishing domain lists are PhishTank and OpenPhish, upon which a variety of benchmark datasets have been constructed. This section summarizes the structure and utility of these lists, the characteristics of derivative datasets, and key considerations when employing them.

6.1.1 Public Phishing Domain Lists

PhishTank is a community-driven phishing domain verification platform operated by Cisco Talos. Users submit suspicious URLs, and the community determines their phishing status through voting. Since its launch in 2006, approximately 9.1 million suspicious URLs had been submitted and evaluated as of August 2025, with tens of thousands of them still active. PhishTank provides open access through APIs and JavaScript Object Notation (JSON)-based downloads, and its data are integrated into web browsers, email services, and various security solutions [101,102].

OpenPhish is an automated, ML-based phishing intelligence platform, primarily integrated into enterprise security solutions to detect and respond to phishing threats in real time. The platform continuously collects millions of webpages to identify phishing URLs and provides free as well as paid subscription plans, which differ in terms of update frequency and scope of phishing data. Supplied metadata include URL, target brand, IP and Autonomous System Number (ASN), SSL/TLS certificate information, country, language, and timestamp, making the dataset suitable for forensic analysis and threat intelligence [101,103]. Moreover, OpenPhish offers an academic use program, under which non-commercial users from accredited institutions such as universities and research centers may receive real-time phishing feeds and historical archives for a limited period. Such data may be employed in scholarly research with proper source acknowledgment and in compliance with legal and ethical requirements [104].

6.1.2 Public Phishing Benchmarks and Datasets

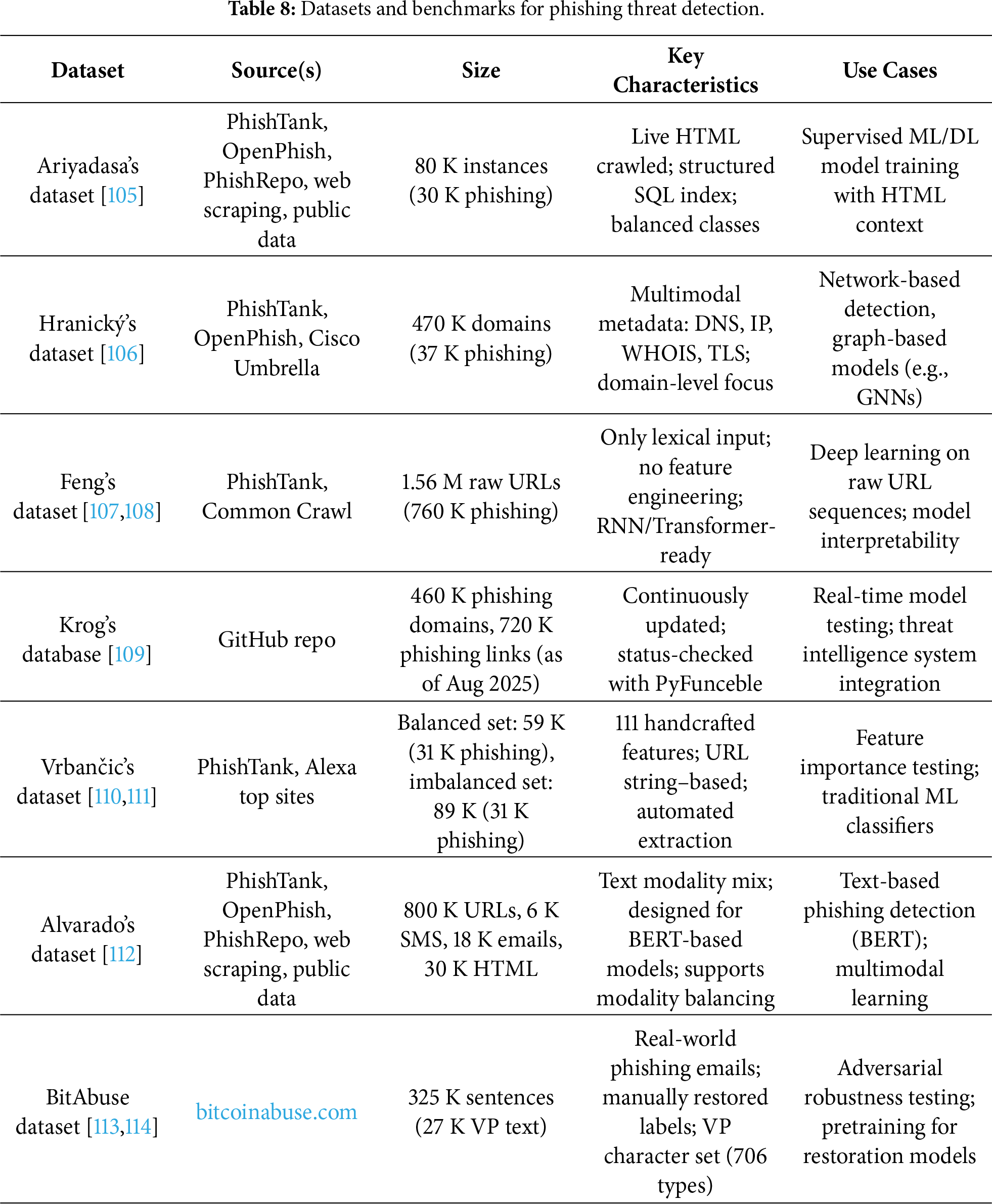

The phishing websites dataset by Ariyadasa et al. comprises approximately 80,000 instances, including 50,000 legitimate websites and 30,000 phishing websites. Each instance consists of a URL mapped to its corresponding HTML page, with the index.sql file containing five fields: rec_id, url, website, result (0: legitimate, 1: phishing), and created_date. Legitimate data were collected from Google searches and the Ebbu2017 Phishing Dataset, while phishing data were gathered from PhishTank, OpenPhish, and PhishRepo. Automated scripts continuously monitored PhishTank and OpenPhish to fetch pages promptly and minimize issues with disappearing resources [105].

The phishing and benign domain dataset by Hranický et al. consists of approximately 430,000 benign domains collected via Cisco Umbrella and 36,993 phishing domains sourced from PhishTank and OpenPhish. For each domain, it provides rich multidimensional features derived from DNS records, IP-related data, WHOIS/Registration Data Access Protocol (RDAP) information, SSL/TLS certificate fields, and GeoIP metadata [106]. This dataset is particularly useful for ML-based phishing detection studies focusing on network-level detection models.

The dataset developed by Feng and Yue integrates 800,000 legitimate URLs from Common Crawl with 759,485 phishing URLs from PhishTank. Designed for RNN-based models (LSTM, Gated Recurrent Unit (GRU), BiLSTM, BiGRU), it relies solely on raw URL strings as inputs without handcrafted feature extraction [107,108]. Its structure is also adaptable to Transformer-based models, making it suitable for research on pattern learning from raw sequences.

The phishing database by Krog and Chababy, maintained as an open-source threat intelligence repository on GitHub, periodically validates the activity status of known phishing domains using the PyFunceble tool. As of August 2025, it contained about 460,000 phishing domains and 720,000 phishing links [109]. Its continuously updated nature makes it valuable for real-time model testing and integration into operational threat-response systems.

Vrbančic et al. released a phishing detection dataset with 111 detailed features, 96 extracted directly from URL strings and 15 from external services. Two variants are provided: a balanced version (30,647 phishing, 27,998 legitimate; total 58,645) and an imbalanced version (30,647 phishing, 58,000 legitimate; total 88,647). Features include domain length, counts of various delimiters, presence of IP addresses, and inclusion of email addresses. Phishing URLs were sourced from PhishTank, while legitimate URLs came from Alexa top domains and community-labeled news sites. Data were acquired using a automated Python-based pipeline [110,111]. This dataset has been widely adopted for feature engineering and benchmarking of URL-based phishing detection models.

The phishing-dataset by Alvarado is a multimodal text dataset that includes URLs, emails, SMS, and HTML pages. Each sample contains text data with labels (0: benign, 1: phishing). It comprises more than 800,000 URLs, approximately 6000 SMS, about 18,000 emails, and 30,000 instances out of 80,000 collected HTML webpages. A combined_reduced version downscales URLs by 95% to balance across modalities, making it well-suited for BERT-based phishing detection experiments [112].

BitAbuse is a restoration-oriented dataset focusing on visually perturbed phishing sentences (VP sentences). It was constructed from more than 260,000 real phishing emails collected via bitcoinabuse.com, resulting in about 320,000 sentences, of which 26,591 VP sentences were manually annotated with original, non-perturbed labels. Restoration experiments demonstrated that Character BERT trained on BitAbuse achieved up to 96.56% accuracy in restoring VP sentences [113,114]. Owing to its real-world origin, manually restored labels, and diverse perturbation types, BitAbuse is valuable not only for adversarial robustness testing but also for applications in digital forensics, secure messaging, and high-performance text restoration.

Table 8 compares the key characteristics of these datasets.

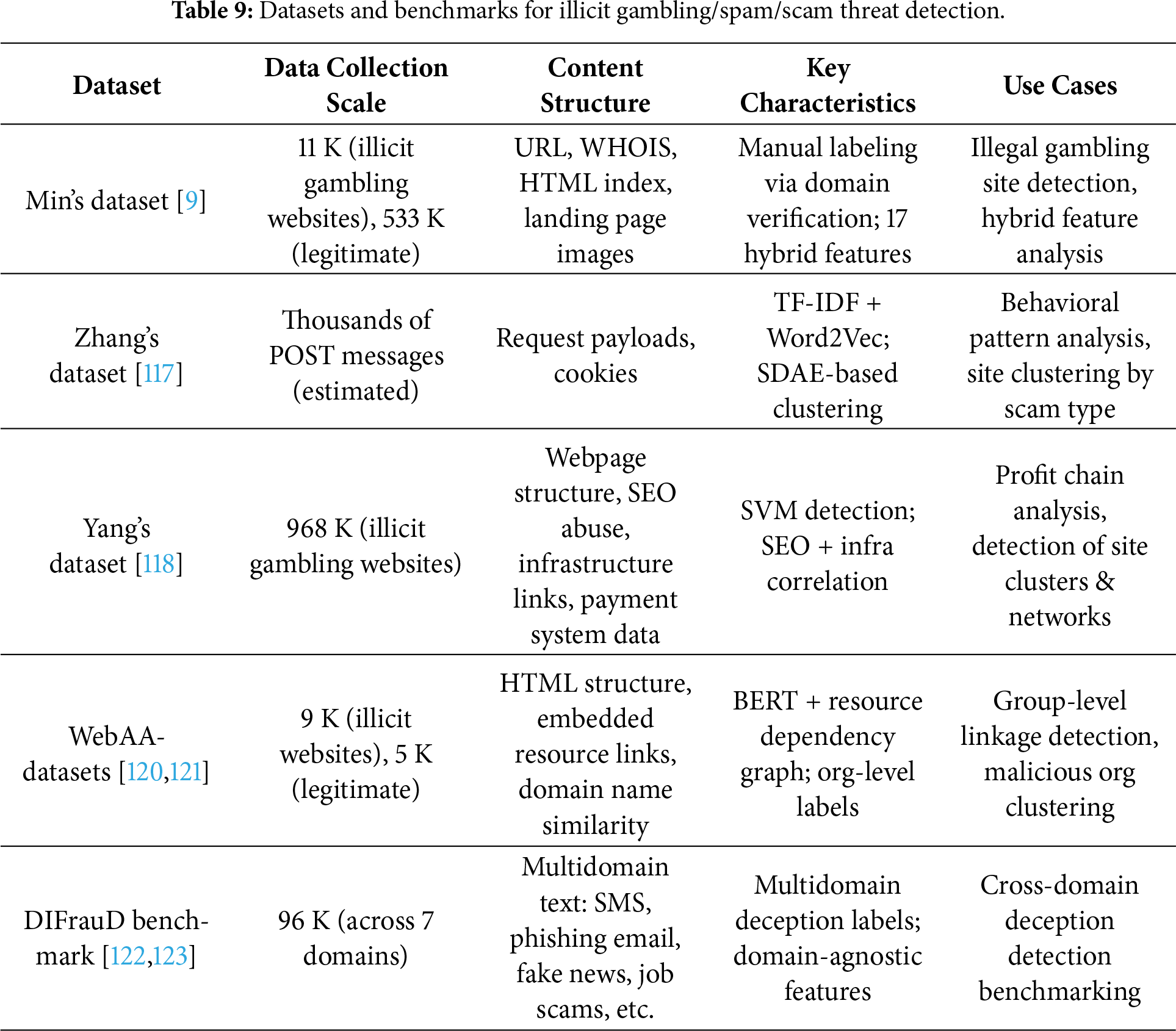

6.1.3 Considerations in Utilization