Open Access

REVIEW

A Review of Genetic Algorithms: Principles, Procedures, and Applications in Optimization

Department of Mathematics, College of Science and Humanities in Al-Kharj, Prince Sattam bin Abdulaziz University, Al-Kharj, Saudi Arabia

* Corresponding Author: M. A. El-Shorbagy. Email:

Computer Modeling in Engineering & Sciences 2026, 147(1), 6 https://doi.org/10.32604/cmes.2026.079859

Received 29 January 2026; Accepted 17 March 2026; Issue published 27 April 2026

Abstract

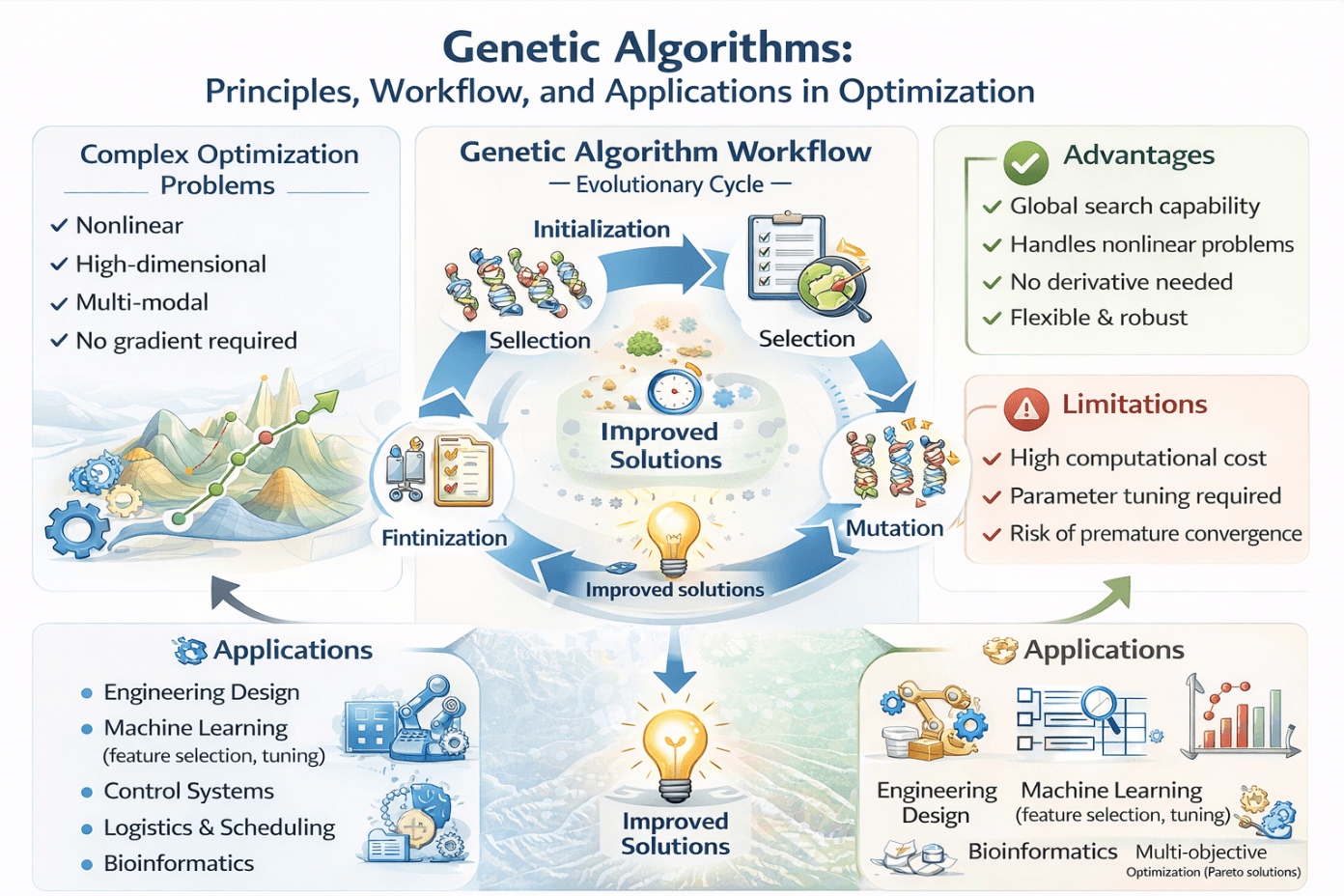

This paper provides a thorough examination of Genetic Algorithms (GAs), a category of evolutionary computation methods derived from the concepts of natural selection and genetics. The main concept and operational principle of GAs are elucidated, highlighting the evolution of populations of candidate solutions across multiple generations to get optimal or near-optimal solutions for complicated problems. The paper delineates the sequential phases of a conventional GA, encompassing problem formulation, solution encoding, initialization of population, fitness evaluation, selection, crossover, mutation, and termination criteria, so offering a coherent framework for comprehending the algorithm’s functionality. Moreover, numerous prominent genetic operators, including crossover and mutation, are examined, highlighting their distinct forms and processes for fostering diversity and exploration within the search space. Also, the paper emphasizes the benefits of GAs, including their capacity to address nonlinear, multi-modal, and high-dimensional optimization challenges without necessitating gradient information, along with their adaptability in resolving both continuous and discrete issues. The limitations and constraints of GAs, such as computing expense, parameter optimization, and the risk of premature convergence, are thoroughly analyzed. The paper examines various applications of GAs across fields, including engineering design, control systems, combinatorial optimization, machine learning, operations research, and multi-objective optimization, demonstrating the versatility and practical significance of this evolutionary method. This work establishes a robust basis for scholars and practitioners seeking to implement GAs in intricate optimization challenges. 
The review indicates that GAs have progressed considerably from Holland’s original formulation to specialized variants, such as real-valued, permutation, and tree-based encodings, each tailored to particular problem categories. The critical analysis indicates that although classical GAs are proficient in global exploration, their hybridization with local search techniques (memetic algorithms), swarm intelligence (GA-PSO), and surrogate models significantly improves convergence time and solution accuracy. The study highlights ongoing research gaps, such as the disparity between theoretical convergence proofs and the actual performance of algorithms, as well as the need for systematic guidelines in the design of hybrid algorithms.

Graphic Abstract

Keywords

1 Introduction

GAs are acknowledged as effective global optimization methods, especially adept at addressing nonlinear optimization challenges [1]. Numerous actual design and engineering optimization challenges cannot be efficiently resolved using conventional optimization techniques due to the presence of discontinuous, stochastic, highly nonlinear, or non-differentiable objective functions [2]. Classical optimization methods frequently demonstrate inefficiency, high computational costs, and often converge to a local optimum close to the initial starting point. Conversely, GAs can surmount these obstacles as they do not depend on linearization assumptions or derivative calculations. Their primary benefit over conventional approaches is their global sampling capabilities, allowing for broader exploration of the search space instead of being limited to local areas [3].

Numerous extensive surveys of GAs are present in the literature, each highlighting different aspects. Certain studies offer comprehensive summaries of essential GA techniques but do not systematically categorize hybrid methodologies. Others focus predominantly on engineering and practical applications, providing minimal theoretical synthesis. Earlier foundational reviews outline fundamental principles and classical formulations; nevertheless, they predate numerous current advancements in adaptive mechanisms and hybrid GAs. This review differs from previous studies in three key aspects. First, it presents a comprehensive taxonomy that categorizes GA variants across several dimensions, such as representation schemes, operator adaptation strategies, and hybridization structures. Second, it offers a critical examination of the development of GAs, tracing their evolution from traditional formulations to modern adaptive and hybrid models. Third, it provides a stringent conceptual definition of authentic algorithmic hybridization, clearly distinguishing substantive methodological integration from superficial or weakly connected combinations. This review complements prior surveys by offering historical continuity and methodological clarity for academics and practitioners aiming to understand and use contemporary advancements in GAs [4,5].

This review presents a new contribution via its systematic taxonomy that categorizes GA variants across several dimensions—representation, operators, and hybridization—offering researchers a formal framework for comprehending the links among various techniques. Furthermore, we present a stringent definition of authentic algorithmic hybridization that differentiates substantive integration from mere surface amalgamation, providing methodological clarity frequently absent in the literature.

Using concepts from genetics and natural selection, GAs perform stochastic search and optimization [6]. Instead of starting with a single point in the search space, as traditional deterministic search techniques do, GAs work with a population of potential solutions. The process begins with a population of randomly generated solutions (chromosomes) that satisfy the constraints of the problem. Every chromosome represents a potential solution [7].

Following the establishment of the first population, subsequent generations of solutions are generated through the utilization of genetic operators, which include:

• Selection identifies individuals according to their fitness values, favoring superior solutions.

• Crossover (recombination) merges segments of two parental chromosomes to generate novel offspring solutions.

• Mutation introduces minor random changes into chromosomes to maintain diversity and avert premature convergence.

By repeatedly using these operators, the algorithm generates subsequent generations of chromosomes. The mean quality of solutions in each successive generation is generally superior to that of its predecessor, progressively steering the population toward optimal or near-optimal solutions [8]. The evolutionary process persists until a stopping requirement is satisfied, such as attaining a maximum number of generations or achieving a certain convergence threshold. Upon completion of the process, the highest-performing chromosomes from the last generation are presented as the solution set [9].

This work aims to elucidate GAs as a potent optimization method derived from natural evolution. The paper specifically intends to:

• Elucidate the core concept and operating principles of GAs, demonstrating how populations of candidate solutions evolve over generations to converge towards optimal solutions.

• Describe the fundamental stages of a conventional GA, encompassing problem formulation, solution encoding, population initialization, fitness evaluation, selection mechanisms, and the application of genetic operators, including crossover and mutation.

• Present and examine frequently employed genetic operators, emphasizing their function in enhancing diversity, navigating the search space, and facilitating convergence to optimal solutions.

• Examine the benefits and drawbacks of GAs, highlighting their capacity to solve intricate, nonlinear, and multimodal problems, while also considering concerns such as computational expense, parameter tuning, and the risk of premature convergence.

• Examine the practical applications of GAs in various fields, such as engineering design, control systems, combinatorial optimization, machine learning, operations research, and multi-objective optimization, to illustrate their adaptability and significance in real-world scenarios.

This paper seeks to provide readers with both a theoretical framework and a practical viewpoint regarding the efficacy of GAs as potent instruments for addressing intricate real-world optimization challenges.

1.1 A Systematic Taxonomy of GAs

This paper employs a systematic taxonomy to classify GAs across several dimensions: representation scheme, evolutionary operators, population structure, and hybridization approach. GA variants are categorized into four principal classifications as follows:

1. Classical GAs: The standard model proposed by Holland [2], defined by binary encoding, proportional selection, single-point crossover, and bit-flip mutation. These constitute the basis upon which all future versions are constructed.

2. Representation-Based Variants: GAs customized for certain problem domains via specialized encoding methods, such as real-valued GAs for continuous optimization, permutation-based GAs for combinatorial challenges, and tree-based GAs (Genetic Programming) for program evolution.

3. Operator-Adaptive GAs: Algorithms that dynamically modify genetic operators or parameters during execution, encompassing self-adaptive GAs, feedback-based adaptive GAs, and parameter-less schemes.

4. Hybrid and Memetic Algorithms: Methodologies that amalgamate GAs with alternative optimization techniques, such as local search (memetic algorithms), swarm intelligence (GA-PSO), simulated annealing, and surrogate models.

This taxonomy offers a structured framework for comprehending the interrelations among various GA methodologies and directs the organization of this review. In the subsequent sections, we delineate the emergence of each branch of this taxonomy from the constraints of prior methodologies and how they collectively tackle the issues of contemporary optimization.

1.2 Origins and Evolutionary Development

GAs originate in research conducted by John Holland and his colleagues at the University of Michigan in the 1960s and 1970s. Holland’s main idea was to abstract natural evolution’s selection, crossover, and mutation into computational operators for adaptive search. His 1975 book, “Adaptation in Natural and Artificial Systems,” established the theoretical framework, including the Schema Theorem, which described how GAs implicitly process and combine promising building blocks.

GA evolution has numerous phases. Classical formulation began in the 1970s and 1980s. Holland formalized and Goldberg popularized the canonical GA, which had binary encoding, fitness-proportionate selection, and fixed crossover and mutation rates. Applications began with function optimization and minimal control. This time saw the first systematic parameter investigations, which set population sizing and operator probability for a generation of practitioners.

Specialization and encoding advancements characterized the second phase, in the 1980s–1990s. As researchers applied GAs to more diverse problems, the limits of binary encoding became evident. This led to real-valued encoding for continuous optimization, permutation encoding for sequencing problems, and tree-based encoding for genetic programming. Each representation required new crossover and mutation operators, resulting in problem-specific variants such as Partially Mapped Crossover and Order Crossover for permutation problems.

The 1990s–2000s third phase concentrated on adaptive and self-adaptive GAs. The realization that static parameters limit GA performance across issue instances and search phases spurred adaptive control research. Early research on dynamic mutation rates led to sophisticated self-adaptive processes where chromosome properties evolve with solutions. Feedback-based techniques adjusted parameters based on population diversity or convergence indicators.

From the 2000s to the present, hybridization and memetic algorithms dominated the fourth phase. GAs thrive at global exploration but may struggle with local refining, leading to hybrid algorithms. Memetic algorithms enable local search within GA by combining evolutionary search with gradient-based or neighborhood search. Combining swarm intelligence, simulated annealing, and ant colony optimization has created strong hybrid solvers for challenging real-world problems.

From 2010 until the present, the fifth phase covers recent GA research advances. Modern research tackles high-dimensional, computationally demanding, dynamic optimization problems. Surrogate-assisted GAs approximate fitness functions with machine learning models, lowering computational cost. Modern hardware allows parallel and distributed implementations to scale to previously intractable problem sizes. Integration with deep learning frameworks has advanced neural architecture search and automated machine learning.

This historical perspective shows a clear path from a single canonical algorithm to a broad family of specialized, adaptive, and hybrid algorithms that address specific shortcomings of their predecessors while maintaining evolutionary search principles.

The operation of a GA is primarily rooted in Darwin’s theory of survival of the fittest, which posits that more robust individuals within a population possess a greater likelihood of survival and reproduction [10]. In the realm of optimization, GA replicates this notion by progressively refining a population of potential solutions to achieve superior fitness values [11].

The primary elements of GAs consist of the chromosome, gene, population, fitness function, selection, crossover, and mutation [12]. A chromosome is an organized depiction of a potential solution, usually stored as a sequence of variables (genes). A population comprises a set of chromosomes that collectively embody various potential solutions to the optimization problem.

The GA process commences with the creation of an initial population, often generated randomly inside the problem’s search space while adhering to boundary constraints. Every chromosome in this population is assessed by a fitness function that quantifies the quality of the solution it embodies. Certain chromosomes are picked as parents for the subsequent generation based on their fitness values. This is accomplished by the utilization of genetic operators [13]:

• Selection emulates natural selection by prioritizing chromosomes with superior fitness values for reproduction.

• Crossover (recombination) merges genetic material from two parental chromosomes to generate offspring, hence facilitating exploration of the search space.

• Mutation introduces minor random alterations in chromosomes to sustain diversity and avert premature convergence.

The offspring produced via crossover and mutation constitute a new population that replaces the previous one. This evolutionary cycle of selection, crossover, mutation, and replacement is repeated over multiple iterations (generations). With each iteration, the population is expected to progress toward better solutions, ideally converging on the most “fit” individuals. The process continues until a specified termination criterion is satisfied, such as reaching a maximum number of generations, achieving an acceptable fitness level, or observing population convergence [14].

The fundamental technique of GA can be succinctly outlined as follows [15]:

1. Initialize the population with a set of randomly generated chromosomes.

2. Evaluate the fitness of each chromosome within the population.

3. Choose parent chromosomes according to their fitness values.

4. Apply crossover to parental pairs to produce offspring.

5. Apply mutation to the offspring based on a specified mutation probability.

6. Establish a new population by replacing the previous one with the offspring.

7. Repeat steps 2–6 until the termination criterion (e.g., maximum generations or an acceptable solution) is met.

8. Document the optimal chromosome(s) identified as the conclusive solution(s).
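
The eight steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not a reproduction of the paper's Algorithm 1: the bit-string encoding, binary tournament selection, single-point crossover, bit-flip mutation, and every name and default value (`run_ga`, `pop_size`, `pc`, `pm`, `seed`) are choices made here for brevity.

```python
import random


def run_ga(fitness, n_genes, pop_size=30, generations=50, pc=0.9, pm=0.02, seed=0):
    """Minimal generational GA over bit strings (maximization). All defaults are illustrative."""
    rng = random.Random(seed)
    # Step 1: initialize a random population of bit-string chromosomes.
    pop = [[rng.randint(0, 1) for _ in range(n_genes)] for _ in range(pop_size)]
    best = max(pop, key=fitness)[:]
    for _ in range(generations):                 # Step 7: repeat steps 2-6
        scores = [fitness(c) for c in pop]       # Step 2: evaluate fitness

        def pick():                              # Step 3: binary tournament (an assumption)
            i, j = rng.randrange(pop_size), rng.randrange(pop_size)
            return pop[i] if scores[i] >= scores[j] else pop[j]

        offspring = []
        while len(offspring) < pop_size:
            p1, p2 = pick(), pick()
            c1, c2 = p1[:], p2[:]
            if n_genes > 1 and rng.random() < pc:  # Step 4: single-point crossover
                cut = rng.randrange(1, n_genes)
                c1, c2 = p1[:cut] + p2[cut:], p2[:cut] + p1[cut:]
            for child in (c1, c2):               # Step 5: bit-flip mutation
                for g in range(n_genes):
                    if rng.random() < pm:
                        child[g] = 1 - child[g]
                offspring.append(child)
        pop = offspring[:pop_size]               # Step 6: replace the old population
        gen_best = max(pop, key=fitness)
        if fitness(gen_best) > fitness(best):    # remember the best chromosome seen so far
            best = gen_best[:]
    return best                                  # Step 8: report the best chromosome
```

For example, `run_ga(sum, n_genes=20)` maximizes the number of ones in a 20-bit string (the OneMax toy problem), since the built-in `sum` serves as the fitness function.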

2.1 Classical GA Pseudocode and Complexity Analysis

Algorithm 1 presents the classical GA in pseudocode form, followed by variants and computational complexity analysis.

Variants of the classical GA alter particular structural elements while maintaining the overarching evolutionary framework. In the steady-state GA, only a portion of the individuals is replaced in each generation instead of regenerating the complete population, which frequently produces more gradual convergence dynamics. The elitist GA implements a preservation mechanism that retains the top (

2.2 Computational Complexity Analysis

Let

Where

Table 2 indicates that for low to intermediate dimensional issues (

3 GA Procedure for Optimization Problems

The initial stage in employing a GA to address an optimization problem is to determine the representation of the design parameters for an individual [16]. This representation directly affects the efficacy of the search process, as it dictates the encoding, manipulation, and decoding of solutions into significant variables. In GA language, each solution is denoted as a chromosome, comprised of smaller components known as genes. The expression of genes and chromosomes is dictated by the encoding method [17].

Encoding techniques offer a systematic method for representing problem variables and may take various forms, such as binary strings, arrays, numerical values, symbolic trees, or other specific data structures [18]. The choice of encoding depends on the specific problem and must be made judiciously to guarantee both representational correctness and operational efficiency in the genetic operations (crossover and mutation).

Numerous encoding schemes have been suggested and effectively used for various optimization challenges over the years. The major approaches employed encompass [19]:

1. Binary Encoding: The conventional method in which variables are represented as sequences of binary digits (0s and 1s). Each chromosome comprises a sequence of bits, facilitating the implementation of crossover and mutation procedures. This method is especially effective for discrete optimization problems.

2. Permutation Encoding: Primarily utilized for ordering and sequencing challenges (e.g., the Traveling Salesman Problem). A chromosome is depicted as a series or permutation of integers, with each position representing a specific activity or city.

3. Value (Real-number) Encoding: Chromosomes are represented as arrays of real numbers rather than binary digits. This encoding is appropriate for continuous optimization problems, as it circumvents the precision challenges linked to binary representation.

4. Tree Encoding: Frequently utilized in Genetic Programming (GP), wherein chromosomes are depicted as trees with functions and terminals. Each tree structure represents a prospective solution program or mathematical expression.

5. Hybrid or Problem-specific Encoding: In certain instances, conventional encodings prove inefficient, prompting the creation of tailored encodings to address unique limitations or attributes of a problem (e.g., graph-based encodings for network design issues).

The selection of the encoding strategy is vital as it influences the representation of the search space and dictates the efficacy of GA operators in producing significant new solutions. An inadequate encoding selection may result in ineffective exploration and early convergence, whereas a judiciously selected encoding can markedly improve GA performance [20].

GAs operate in two spaces: the coding space (genotype) and the solution space (phenotype). The genotype encodes the solution, while the phenotype represents its expressed form, obtained through a mapping process [21,22]. A chromosome is a linear structure composed of genes, which are the smallest units of information. The GA search is conducted in the genotype space, whereas evaluation and selection occur in the corresponding phenotype space (Fig. 1).

Figure 1: Encoding—Decoding method.

In real-valued encoding, a chromosome is represented as a vector of real numbers, where each element directly corresponds to a design variable in the optimization problem [21–23]. This approach is particularly suitable for continuous optimization, as it preserves a direct mapping between variables and chromosome structure.

Unlike binary encoding, real-valued representation eliminates the need for encoding and decoding, reducing computational complexity and preventing precision loss. The chromosome length equals the number of design variables, leading to a compact and efficient representation that often improves convergence behavior.

Real-valued encoding is widely applied in structural design, parameter optimization, machine learning, and control systems, where decision variables are inherently continuous. Fig. 2 illustrates a chromosome represented in real-valued encoding.

Figure 2: Real-valued encoding.

Binary encoding is the predominant encoding method employed in GAs [24]. In binary encoding, each gene within a chromosome is a bit (0 or 1), and the chromosome is a sequence of these bits. This representation is suitable for problems where fitness depends on both the value and the inclusion or exclusion of specific elements [25].

Binary encoding has numerous benefits, such as straightforward implementation, compatibility with conventional genetic operators (crossover and mutation), and suitability for discrete optimization problems. Nonetheless, it may be less efficient for problems involving continuous variables, as precision is constrained by the length of the binary string. Fig. 3 depicts a chromosome represented by binary encoding.

Figure 3: Binary encoding.

The mathematical formulation of binary encoding enables the representation of continuous or discrete variables as binary strings appropriate for GAs. The binary encoding for the nth variable

Define the variable

where,

Permutation encoding is appropriate for ordering, sequencing, routing, or scheduling problems, where the solution is represented as an arrangement of elements. A typical example is the Travelling Salesman Problem (TSP) [22], though this encoding can introduce additional complexity [26].

In the permutation encoding, each chromosome is represented as a sequence (permutation) of numbers, with each number denoting a specific element (e.g., a city in the TSP or a task in a scheduling problem). The defining feature of permutation encoding is the prohibition of repetition within a chromosome, guaranteeing that each element is represented exactly once in the sequence. Fig. 4 depicts a chromosome in permutation encoding.

Figure 4: Permutation encoding.
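
The no-repetition property described above can be sketched in Python. The helper names (`random_permutation`, `is_valid_permutation`, `swap_mutation`, `tour_length`) are hypothetical, and swap mutation is used here only as one simple example of an operator that keeps a chromosome a valid permutation; the distance matrix `dist` is assumed to be a square list of lists.

```python
import random


def random_permutation(n, rng):
    """Return a chromosome that lists each of the n elements exactly once."""
    chrom = list(range(n))
    rng.shuffle(chrom)
    return chrom


def is_valid_permutation(chrom):
    """True when no element is repeated or missing."""
    return sorted(chrom) == list(range(len(chrom)))


def swap_mutation(chrom, rng):
    """Exchange two positions; the result is still a permutation."""
    child = chrom[:]
    i, j = rng.sample(range(len(child)), 2)
    child[i], child[j] = child[j], child[i]
    return child


def tour_length(chrom, dist):
    """Length of the closed TSP tour that visits cities in chromosome order."""
    n = len(chrom)
    return sum(dist[chrom[k]][chrom[(k + 1) % n]] for k in range(n))
```

Operators that copy genes position by position, such as single-point crossover on bit strings, would generally break this property, which is why permutation problems use dedicated operators.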

Tree encoding is aimed at evolving programs, symbolic expressions, or decision rules instead of mere numerical solutions [27]. In this representation, each chromosome is organized as a tree, with nodes symbolizing functions or operators, and leaves (terminals) denoting input variables or constants [21,22].

In tree encoding, chromosomes resemble syntax trees used in programming. Internal nodes represent operators (mathematical/logical) or functions (e.g., +, −, ×, ÷, AND, OR, sin, cos), while leaf nodes represent variables, parameters, or constants. For example, in symbolic regression, a tree chromosome may represent the expression x + (y × 3) (Fig. 5).

Figure 5: Chromosome representation using tree encoding.

Tree encoding allows exploration of complex solution spaces, with genetic operators applied directly to the tree: crossover exchanges subtrees between parents, and mutation replaces a node or subtree randomly. This makes it ideal for automatic program generation, symbolic regression, and expression evolution, though it can produce large, complex trees (“bloat”) that require additional management.
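
As a rough illustration, the expression x + (y × 3) can be stored as a nested tuple and manipulated directly. The tuple layout `(operator, left, right)`, the `OPS` table, and the helper names below are assumptions made for this sketch rather than a standard Genetic Programming API.

```python
import operator

# Internal nodes are tuples (op, left, right); leaves are variable names or constants.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def evaluate(tree, env):
    """Evaluate a tree chromosome for the variable bindings in env."""
    if isinstance(tree, tuple):
        op, left, right = tree
        return OPS[op](evaluate(left, env), evaluate(right, env))
    if isinstance(tree, str):
        return env[tree]          # leaf: input variable
    return tree                   # leaf: numeric constant


def size(tree):
    """Number of nodes, a simple quantity to monitor when controlling bloat."""
    if isinstance(tree, tuple):
        return 1 + size(tree[1]) + size(tree[2])
    return 1


def replace_subtree(tree, path, new_subtree):
    """Return a copy with the node at path replaced (1 = left child, 2 = right child)."""
    if not path:
        return new_subtree
    node = list(tree)
    node[path[0]] = replace_subtree(tree[path[0]], path[1:], new_subtree)
    return tuple(node)


expr = ("+", "x", ("*", "y", 3))  # x + (y * 3)
```

Subtree mutation then amounts to calling `replace_subtree` with a randomly generated subtree, and subtree crossover to calling it with a subtree taken from the other parent.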

The GA commences with a set of chromosomes known as the initial population. Each chromosome consists of genes and represents a potential solution to the problem [28]. These genes are generally generated randomly within defined boundary constraints to guarantee diversity in the search space. The quality and diversity of this initial population significantly influence the efficacy and convergence of the GA [29]. Two representation schemes are considered here:

• Real-valued representation denotes each gene by a real number, which makes it especially appropriate for optimization problems involving continuous variables.

• Binary encoding represents each gene as a binary digit (0 or 1). This method is frequently employed when the problem variables are discrete or when the objective function relies on combinations of binary states.

By integrating these methodologies, the GA can proficiently navigate both continuous and discrete solution spaces, hence augmenting its ability to address a diverse array of optimization challenges.

3.2.1 Initial Population for Real-Valued Encoding

The initial population in real-valued encoding is created as a collection of chromosomes, each represented by a vector of real values. Each gene in the chromosome represents one design variable of the optimization problem. Every gene is initialized at random within its designated bounds to guarantee feasibility.

A chromosome for a problem with

where:

•

•

•

•

Each variable

where

The initial population is represented as a matrix of dimensions
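
A minimal sketch of this initialization is given below. It assumes the common uniform rule in which each gene is set to its lower bound plus a random fraction of its range; the function name `init_real_population` and its parameters are hypothetical, and the population is stored as a list of rows (one chromosome per row).

```python
import random


def init_real_population(pop_size, lower, upper, seed=None):
    """Return pop_size chromosomes; gene i is drawn uniformly from [lower[i], upper[i]]."""
    rng = random.Random(seed)
    return [
        # gene = lower bound + r * (upper bound - lower bound), with r uniform in [0, 1)
        [lo + rng.random() * (hi - lo) for lo, hi in zip(lower, upper)]
        for _ in range(pop_size)
    ]
```

The result has one row per chromosome and one column per design variable, which matches the matrix view of the population described above.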

3.2.2 Initial Population for Binary Encoding

In binary encoding, a chromosome is represented by a sequence of binary digits (0s and 1s). Each gene within the chromosome is associated with a particular decision variable or a segment of its binary representation. The number of bits allocated to each variable depends on the precision required of the solution and the range of the decision variable.

In a problem involving

where:

•

•

•

•

The association between a binary string and its respective real value is defined as follows:

where
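
The sketch below illustrates one widely used form of this mapping, assumed here because the formula itself is not reproduced: the bits of a variable are read as an unsigned integer and scaled linearly onto the interval between the variable's lower and upper bounds. The names `init_binary_population`, `decode`, and `bits_per_var` are hypothetical.

```python
import random


def init_binary_population(pop_size, bits_per_var, seed=None):
    """Random bit-string chromosomes; total length is the sum of the bits per variable."""
    rng = random.Random(seed)
    length = sum(bits_per_var)
    return [[rng.randint(0, 1) for _ in range(length)] for _ in range(pop_size)]


def decode(chrom, bits_per_var, lower, upper):
    """Map each bit segment to a real value in [lower, upper] (assumed linear scaling)."""
    values, start = [], 0
    for n_bits, lo, hi in zip(bits_per_var, lower, upper):
        segment = chrom[start:start + n_bits]
        integer = int("".join(map(str, segment)), 2)   # unsigned integer value of the bits
        values.append(lo + (hi - lo) * integer / (2 ** n_bits - 1))
        start += n_bits
    return values
```

Under this assumed mapping, a variable encoded with `n_bits` bits can only take values spaced `(hi - lo) / (2 ** n_bits - 1)` apart, which is the precision limit of binary encoding mentioned earlier.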

After the initial population is created, the first step is to compute the fitness value of each chromosome. The fitness function quantifies the extent to which an individual solution meets the optimization objective. Chromosomes with higher fitness values represent better solutions and are more likely to be chosen for reproduction in the subsequent generation.

In real-valued encoding, fitness evaluation is direct: each chromosome (a vector of real values) is inserted into the objective function to calculate its fitness value.

The evaluation method for alternative encoding schemes, including binary, permutation, or tree encoding [21], comprises two primary steps:

1. Decoding the chromosome: The chromosome is first converted into a set of meaningful decision variables.

2. Evaluating the decoded variables: After decoding the chromosome, the resulting values of the decision variables are inserted into the objective function.

The calculated objective function value is thereafter designated as the chromosome’s fitness value or adjusted accordingly if the situation necessitates scaling for maximizing or minimizing.

During this procedure, each chromosome within the population is allocated a fitness value. Chromosomes exhibiting superior fitness are afforded an increased likelihood of selection for subsequent evolutionary processes, including selection, crossover, and mutation.
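
The decode-then-evaluate procedure can be sketched as follows. The conversion `1 / (1 + f)` used for minimization is only one common choice and assumes a nonnegative objective value; the function names and the `decode` and `objective` callables are hypothetical placeholders supplied by the user.

```python
def to_fitness(objective_value, minimize=True):
    """Map an objective value to a fitness where larger is better.

    Assumption: when minimizing, the objective is nonnegative, so 1 / (1 + f) lies in (0, 1].
    """
    if minimize:
        return 1.0 / (1.0 + objective_value)
    return objective_value


def evaluate_population(population, decode, objective, minimize=True):
    """Step 1: decode each chromosome. Step 2: evaluate the objective and convert to fitness."""
    return [to_fitness(objective(decode(c)), minimize) for c in population]
```

For real-valued encoding the decoding step is the identity, so `decode` can simply be `lambda c: c`.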

After evaluation, a new population of chromosomes is obtained by applying genetic search operators one after another. The genetic search operators are selection, mutation, and crossover. The expected quality of a new population over all the chromosomes is better than that of the current population.

Selection is a crucial operator in GAs, guided by the fitness evaluation [30]. The fittest chromosomes are chosen for breeding and recombination to produce a new generation that is expected to be better. During the selection phase, individuals are drawn from the current population of chromosomes. The selection procedure is the same for real-valued representation and for the other encoding techniques, because it operates on fitness values, which are already real numbers. The prevalent methods for chromosome selection include roulette wheel, stochastic universal sampling, rank selection, tournament selection, and steady-state selection [31,32].

(A) Roulette Wheel Selection

In the roulette wheel method, parents are chosen according to their fitness values. It is the most popular approach to fitness-proportionate selection. Every chromosome occupies an area on an imaginary roulette wheel that is proportional to its fitness value. Consequently, the fittest individuals take up more space on the wheel and have a greater chance of selection. Nevertheless, less fit chromosomes can still be selected, which helps maintain population diversity [31].

The selection process of the roulette wheel can be articulated as follows:

Step 1. The fitness values

Step 2. Calculate, for every individual, the selection probability

Step 3. Construct a cumulative probability distribution as

Step 4. Spin the roulette wheel: Generate a random number

Step 5. Select a chromosome: Select chromosome

Step 6. Repeat: Continue the process until the desired number of parents is selected.
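
The six steps can be sketched in Python as below. The sketch assumes nonnegative fitness values with a positive sum, takes the selection probability of an individual as its fitness divided by the total fitness, and returns indices into the population; the function name and parameters are hypothetical.

```python
import random
from bisect import bisect_right
from itertools import accumulate


def roulette_wheel_select(fitness, n_parents, rng):
    """Return the indices of n_parents individuals chosen in proportion to fitness."""
    total = sum(fitness)                          # Step 1: fitness values are given
    probs = [f / total for f in fitness]          # Step 2: selection probabilities
    cumulative = list(accumulate(probs))          # Step 3: cumulative distribution
    cumulative[-1] = 1.0                          # guard against floating-point round-off
    chosen = []
    for _ in range(n_parents):                    # Step 6: repeat until enough parents
        r = rng.random()                          # Step 4: spin the wheel, r in [0, 1)
        # Step 5: first index whose cumulative probability exceeds r
        chosen.append(bisect_right(cumulative, r))
    return chosen
```

Using `bisect_right` means an individual with zero fitness owns an empty slice of the wheel and is never returned.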

Fig. 6 depicts a roulette wheel representing five individuals with varying fitness levels. Each individual is allocated a sector (slice) of the wheel, with the dimensions of each sector being proportional to its fitness level. The likelihood of the pointer halting on a specific individual while the wheel is spun is contingent upon the dimensions of its segment.

Figure 6: Roulette wheel selection.

The figure clearly indicates that Individual 3 occupies the largest segment of the wheel. Consequently, it has a higher likelihood of being chosen than the other individuals. This illustrates the principle of fitness-proportionate selection: the greater the fitness of an individual, the higher the probability of its selection as a parent for the subsequent generation.

(B) Stochastic Universal Sampling (SUS) Selection

Stochastic Universal Sampling (SUS) was proposed by Baker (1987) [32] as an enhancement to the roulette wheel selection technique. Like roulette wheel selection, SUS allocates slots to individuals in accordance with their fitness values. Instead of using a single pointer to choose individuals, as in roulette wheel selection, SUS employs

The process operates as outlined below:

• The overall fitness of the population is computed, and individuals are allocated proportional positions on a line, like a roulette wheel.

• A random number

• The remaining

• All candidates who meet these criteria are selected concurrently.
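
A minimal Python sketch of this procedure follows. It assumes the usual formulation in which the pointers are spaced by the total fitness divided by the number of individuals to select, with a single random offset for the first pointer; the function name `sus_select` and its parameters are hypothetical, and fitness values are assumed to be nonnegative with a positive sum.

```python
import random


def sus_select(fitness, n_select, rng):
    """Return indices chosen by n_select equally spaced pointers on the fitness line."""
    total = sum(fitness)
    step = total / n_select                   # distance between neighbouring pointers
    start = rng.random() * step               # one random draw places the first pointer
    pointers = [start + k * step for k in range(n_select)]

    chosen, i, cumulative = [], 0, fitness[0]
    for p in pointers:
        # advance to the individual whose segment of the line contains pointer p
        while cumulative <= p and i < len(fitness) - 1:
            i += 1
            cumulative += fitness[i]
        chosen.append(i)
    return chosen
```

With fitness values `[1, 1, 2, 4]` and eight pointers, each individual is selected exactly as often as its share of the total fitness predicts (1, 1, 2, and 4 times), whatever the random offset; roulette wheel selection only achieves these counts on average.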

This technique guarantees a more equitable distribution of selection pressure throughout the population. In contrast to roulette wheel selection, which may exhibit stochastic fluctuations leading to bias towards specific individuals, Stochastic Universal Sampling (SUS) offers a more reliable and equitable depiction of selection possibilities.

Benefits of SUS Selection:

• Ensures a selection result that aligns more closely with the expected proportions.

• Minimizes the stochastic errors associated with roulette wheel selection.

• Guarantees equitable and uniform sampling throughout the population.

• Facilitates the selection of multiple individuals with a single rotation of the wheel.

Consequently, SUS selection enhances the dependability of fitness-proportionate selection while maintaining population variety [31,32]. Fig. 7 illustrates Stochastic Universal Sampling (SUS) Selection, wherein the line is segmented into proportionate slots for five individuals, accompanied by evenly spaced red indicators demonstrating the process of several selections in a single rotation.

Figure 7: Stochastic Universal Sampling (SUS) selection.
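A minimal sketch of SUS is given below, assuming non-negative fitness values and a first pointer drawn uniformly within one pointer spacing. The names `sus_select`, `n_select`, and `rng` are illustrative assumptions.

```python
import random

def sus_select(fitness, n_select, rng=random):
    """Select n_select indices using equally spaced pointers on the fitness line."""
    total = sum(fitness)
    spacing = total / n_select            # distance between adjacent pointers
    start = rng.uniform(0, spacing)       # random position of the first pointer
    selected = []
    index = 0
    cumulative = fitness[0]               # right edge of the current slot
    for k in range(n_select):
        pointer = start + k * spacing
        # advance to the slot that contains this pointer
        while pointer > cumulative and index < len(fitness) - 1:
            index += 1
            cumulative += fitness[index]
        selected.append(index)
    return selected
```

With fitness values `[2, 1, 1]` and four pointers, the first individual is selected twice and the others once each, which matches the expected proportions exactly.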

(C) Rank selection method

Roulette wheel selection may become ineffective when the fitness values of chromosomes exhibit significant variation. In these instances, the individual exhibiting the best fitness typically prevails in the selection process, whereas other individuals possess minimal likelihood of getting selected. This results in the algorithm’s premature convergence.

The rank selection approach resolves this issue by allocating probability according to the rank of individuals instead of their absolute fitness ratings. The protocol is as follows:

• The population is initially sorted by fitness value.

• Each individual is allocated a rank, with rank 1 assigned to the least fit individual and the highest rank assigned to the fittest.

• The chance of selection for each chromosome is assigned in proportion to its rank instead of its absolute fitness.

• Individuals are chosen based on these probabilities, guaranteeing that even less robust individuals maintain a reasonable opportunity for selection.

This approach inhibits quick convergence to a suboptimal solution and preserves variation throughout the population. While convergence may occur at a slower pace, the likelihood of premature stagnation is diminished.

Depiction:

- Fig. 8A: Roulette Wheel Selection—When the optimal individual possesses 80% of the fitness share, it occupies 80% of the wheel, significantly diminishing the probability of selection for other individuals.

- Fig. 8B: Rank Selection—The top individual is allocated a selection probability commensurate with its rank (e.g., 45%), whereas the remaining individuals are granted more equitable probabilities.

Figure 8: Rank selection.

Consequently, rank selection allocates selection probabilities more uniformly throughout the population, mitigating bias towards a singularly fit individual and promoting exploration of the solution space [32].
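The rank-based assignment of probabilities can be sketched as follows. The example assumes linear ranking, in which the selection probability is the rank divided by the sum of all ranks; other ranking schemes exist, and the function names are hypothetical.

```python
import random

def rank_probabilities(fitness):
    """Selection probability of each individual under linear ranking
    (rank 1 = least fit, rank n = fittest)."""
    n = len(fitness)
    order = sorted(range(n), key=lambda i: fitness[i])   # worst first
    total_rank = n * (n + 1) / 2
    probs = [0.0] * n
    for rank, i in enumerate(order, start=1):
        probs[i] = rank / total_rank
    return probs

def rank_select(fitness, rng=random):
    """Draw one index according to the rank-based probabilities."""
    probs = rank_probabilities(fitness)
    return rng.choices(range(len(fitness)), weights=probs, k=1)[0]
```

Under this assumption, an individual holding 80% of the total fitness in a population of five receives a selection probability of only 5/15, so weaker individuals keep a realistic chance of being chosen.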

(D) Tournament Selection

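Tournament selection was proposed by Goldberg (1989) [7] as a straightforward yet efficient selection technique in GAs. The procedure commences with the random selection of a subset of individuals from the population; the size of this subset is the tournament size. The steps are as follows:

• Select the tournament participants at random from the population.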

• Evaluate their fitness values.

• Choose the individual exhibiting the highest fitness to serve as a parent.

• Continue the procedure until the requisite number of parents is selected.

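The tournament size parameter governs the selection pressure:

- An increased tournament size raises the selection pressure and accelerates convergence, but reduces diversity.

- A reduced tournament size preserves greater diversity but slows convergence.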

Consequently, the tournament size establishes an equilibrium during the search process between exploration and exploitation.

Nonetheless, tournament selection can become computationally intensive when the population size is substantial, as numerous tournaments are required to choose an adequate number of parents for reproduction [32].

Fig. 9 illustrates the Tournament Selection Example; the blue boxes represent individuals along with their fitness values, the red lines delineate the tournament participants, and the gold box indicates the winner (the individual with the highest fitness among the selected).

Figure 9: Tournament selection example.
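A tournament can be sketched as below, assuming maximization and sampling of participants without replacement. The names `tournament_select` and `k` (the tournament size) are illustrative.

```python
import random

def tournament_select(fitness, k, rng=random):
    """Pick k distinct individuals at random and return the index of the fittest."""
    contestants = rng.sample(range(len(fitness)), k)
    return max(contestants, key=lambda i: fitness[i])
```

Setting `k` equal to the population size always returns the best individual (maximum selection pressure), whereas `k = 1` reduces to uniform random selection.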

(E) Steady-state selection

Steady-state selection is a GA replacement approach in which only a limited number of individuals are replaced in each generation, rather than creating an entirely new population. Generally, a small number of parents (often two) are chosen from the population. Crossover and mutation then produce offspring. Finally, the least fit members of the population are replaced by the new offspring.

This methodology guarantees that the majority of the population persists into the subsequent generation, effective solutions endure for an extended duration, and diversity is preserved, averting early convergence [31–33].

Crossover is a crucial genetic operator. It combines two chromosomes to produce offspring that inherit characteristics from both parents. New strings are generated by exchanging segments between the parent strings. In its simplest form, crossover begins by selecting a random cut point; the offspring is then produced by combining the segment to the left of the cut point from one parent with the segment to the right from the other. Choosing the right crossover operator is critical to solving a problem [34]. This section discusses crossover operators for different encoding schemes and optimization problems.

(A) Single Point Crossover

Single-point crossover is a prominent GA crossover method. This method randomly selects a chromosomal cut spot. Segments from two parents create offspring. The first set of offspring uses the segment to the left of the cut-point from one parent and the segment to the right from the other. The next set reverses this technique.

This strategy preserves genetic variety by giving all offspring a mix of genes from both parents. The position of the cut point greatly affects the operator's efficacy: a good placement can yield fitter offspring, while a bad cut point can reduce solution quality. Despite this limitation, single-point crossover is a simple and effective technique for maintaining genetic variety [35]. Figs. 10–13 show single-point crossover in real-valued, binary, value, and tree encodings, respectively.

Figure 10: Single-point crossover, real-valued encoding.

Figure 11: Single-point crossover, binary encoding.

Figure 12: Single-point crossover, value encoding.

Figure 13: Single-point crossover, tree encoding crossover.
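For list-based encodings (binary, real-valued, or value encoding), single-point crossover can be sketched as follows. The function name and the optional `cut` argument are assumptions made for illustration; tree encodings require subtree exchange and are not covered here.

```python
import random

def single_point_crossover(p1, p2, cut=None, rng=random):
    """Exchange the tails of two equal-length parents at one cut point."""
    if cut is None:
        cut = rng.randint(1, len(p1) - 1)   # cut strictly inside the chromosome
    child1 = p1[:cut] + p2[cut:]            # left part of p1, right part of p2
    child2 = p2[:cut] + p1[cut:]            # the reverse combination
    return child1, child2
```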

(B) N-Point Crossover

N-point crossover, introduced by De Jong in 1975, generalizes the single-point crossover technique [36]. This approach generates children by picking several cut points along the parent chromosomes and exchanging alternate segments between them. Despite the additional cut points, it follows the same principle as single-point crossover: mixing genetic material from two parents to create new solutions.

In two-point crossover, the parent chromosomes exchange the segment between two specified loci. Each additional cut point divides the chromosome into more segments, which may disrupt well-adapted building blocks, that is, subsets of genes that improve solution quality. Adding cut points may therefore increase population diversity, but it may also destroy key building blocks and lower algorithm performance. N-point crossover thus trades off exploration (diversity) against exploitation (preservation of effective genetic structures), and the number of cut points should be chosen carefully for the problem at hand. Figs. 14 and 15 show real-valued and binary two-point crossover, respectively.

Figure 14: Two-point crossover, real-valued encoding.

Figure 15: Two-point crossover, binary encoding.
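The alternation of segments between cut points can be sketched as below. The function name `n_point_crossover` and the explicit `cuts` argument are illustrative assumptions; in practice the cut points would be drawn at random.

```python
def n_point_crossover(p1, p2, cuts):
    """Swap every second segment delimited by the given cut points."""
    child1, child2 = list(p1), list(p2)
    swap = False
    previous = 0
    for cut in sorted(cuts) + [len(p1)]:
        if swap:
            # exchange the segment between the previous cut and this one
            child1[previous:cut] = p2[previous:cut]
            child2[previous:cut] = p1[previous:cut]
        swap = not swap
        previous = cut
    return child1, child2
```

Passing two cut points gives the two-point crossover of Figs. 14 and 15; passing one reproduces single-point crossover.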

(C) Uniform Crossover

Uniform crossover is a GA recombination method that does not divide the parent chromosomes into segments. Instead, it uses a binary crossover mask of the same length as the parent chromosomes, and a new random mask is generated for every pair of parents.

During uniform crossover, the corresponding bit of the crossover mask determines which parent supplies each offspring gene: the gene is taken from the first parent if the mask bit is 1 and from the second parent if it is 0. Thus, the offspring inherits genes from both parents, which boosts genetic diversity while retaining the parents' advantages. Figs. 16 and 17 show uniform crossover in real-valued and binary encoding, respectively [37].

Figure 16: Uniform crossover, real-valued encoding.

Figure 17: Uniform crossover, binary encoding.
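A mask-driven implementation might look as follows. Producing a second, complementary offspring is an assumption of this sketch, as are the function and parameter names.

```python
import random

def uniform_crossover(p1, p2, mask=None, rng=random):
    """Build offspring gene by gene according to a binary mask."""
    if mask is None:
        mask = [rng.randint(0, 1) for _ in p1]    # fresh random mask per pair
    # mask bit 1 -> gene from the first parent, 0 -> gene from the second
    child1 = [a if m == 1 else b for a, b, m in zip(p1, p2, mask)]
    child2 = [b if m == 1 else a for a, b, m in zip(p1, p2, mask)]
    return child1, child2
```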

(D) Three Parents Crossover

Three-parent crossover starts by randomly selecting three parent chromosomes from the population. If the first two parents carry the same gene at a given locus, the offspring inherits it. If the genes of the first two parents differ, the offspring inherits the third parent's gene at that locus.

This crossover strategy works well for binary-encoded chromosomes because it keeps the first two parents’ traits while adding the third parent’s. This method may increase offspring variety and GA search efficiency by integrating data from three sources.
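The gene-by-gene rule described above reduces to a single comparison per locus, as the following sketch shows (the function name is hypothetical).

```python
def three_parent_crossover(p1, p2, p3):
    """Keep the gene shared by the first two parents; otherwise take the third parent's."""
    return [a if a == b else c for a, b, c in zip(p1, p2, p3)]
```

For parents `11010`, `01001`, and `00111`, the first two agree at the second and third loci, so those genes are kept and the remaining loci are filled from the third parent.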

(E) Arithmetic Crossover

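Arithmetic crossover is a recombination operator for real-valued (continuous) encoding. This approach produces offspring by linearly combining two parent chromosomes: each offspring gene is a weighted average of the corresponding parent genes.

To calculate the offspring gene y, use the formula:

$$y = \alpha x_1 + (1 - \alpha) x_2, \qquad 0 \le \alpha \le 1,$$

where $x_1$ and $x_2$ denote the corresponding genes of the two parents and $\alpha$ is the weighting factor.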

Figure 18: Arithmetic crossover, binary encoding.
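A short sketch of arithmetic crossover follows, assuming the common weighted-average form with a single weight `alpha` in [0, 1] applied to every gene. The second offspring, built with the complementary weight, is an assumption of this example.

```python
def arithmetic_crossover(p1, p2, alpha):
    """Offspring genes are weighted averages of the parents' genes."""
    child1 = [alpha * a + (1 - alpha) * b for a, b in zip(p1, p2)]
    child2 = [(1 - alpha) * a + alpha * b for a, b in zip(p1, p2)]
    return child1, child2
```

Because every offspring gene lies between the two parental values, this operator never leaves the region spanned by the parents, which is why it is typically combined with mutation.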

(F) Partially mapped Crossover

Partially Mapped Crossover (PMX) is a popular crossover operator for permutation encoding, especially in the Travelling Salesman Problem. It was introduced by Goldberg and Lingle [38]. This crossover approach preserves as many gene positions from the parental chromosomes as possible while ensuring that the offspring remain valid permutations.

PMX selects two parent chromosomes and randomly chooses two crossover points. One parent passes the genes between these two loci directly to the offspring. To preserve the permutation structure and avoid gene duplication, the remaining genes are filled in from the other parent through a position-by-position mapping. Because each gene appears exactly once in the offspring, PMX is well suited to combinatorial optimization problems in which the sequence of elements is critical. Fig. 19 shows a partially mapped crossover with permutation encoding.

Figure 19: Partially mapped crossover, permutation encoding.
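The mapping step is easiest to follow in code. The sketch below builds one offspring and takes the two crossover points `a` and `b` (half-open interval) as explicit arguments; these names and the single-offspring form are assumptions for illustration.

```python
def pmx(p1, p2, a, b):
    """Partially mapped crossover: copy p1[a:b], fill the rest from p2 via mapping."""
    size = len(p1)
    child = [None] * size
    child[a:b] = p1[a:b]                      # segment inherited from parent 1
    segment = set(p1[a:b])
    pos_in_p2 = {gene: i for i, gene in enumerate(p2)}
    for i in range(a, b):
        gene = p2[i]
        if gene in segment:
            continue                          # already present in the child
        pos = i
        while a <= pos < b:                   # follow the mapping out of the segment
            pos = pos_in_p2[p1[pos]]
        child[pos] = gene
    for i in range(size):
        if child[i] is None:                  # untouched positions come from parent 2
            child[i] = p2[i]
    return child
```

For parents `1 2 3 4 5 6 7 8 9` and `9 3 7 8 2 6 5 1 4` with the segment `4 5 6 7` copied from the first parent, the result is `9 3 2 4 5 6 7 1 8`.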

(G) Order Crossover (OX)

Order Crossover (OX), described by Davis [39], is commonly used for permutation-encoded chromosomes. Instead of fixing gene positions, OX preserves the relative order of genes.

Choose two crossover spots on the parent chromosomes to start OX. The offspring inherit the genes between these two locations directly from the primary parent. Deriving the remaining genes from the second parent preserves their relative order and excludes genes from the copied section. This “slide” method ensures that the offspring retains maximal secondary parent ordering information and maintains a legal gene permutation.

Combinatorial optimization problems like the traveling salesman problem, where element sequencing is crucial for superior solutions, use Order Crossover extensively. Fig. 20 shows Order Crossover.

Figure 20: Illustration of the order crossover (OX).
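A sketch of OX for one offspring is shown below. It assumes the common variant in which filling starts just after the second crossover point and wraps around; the names `order_crossover`, `a`, and `b` are illustrative.

```python
def order_crossover(p1, p2, a, b):
    """Order crossover: copy p1[a:b], then fill with p2's genes in their relative order."""
    size = len(p1)
    child = [None] * size
    child[a:b] = p1[a:b]                          # segment from the primary parent
    copied = set(p1[a:b])
    # read the second parent starting after the second cut point, wrapping around
    donor = [p2[(b + i) % size] for i in range(size)]
    fill = [gene for gene in donor if gene not in copied]
    for i, gene in enumerate(fill):
        child[(b + i) % size] = gene              # write in the same wrapped order
    return child
```

With parents `1 2 3 4 5 6 7 8 9` and `9 3 7 8 2 6 5 1 4` and the segment `4 5 6 7`, the offspring is `3 8 2 4 5 6 7 1 9`.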

(H) Cycle Crossover (CX)

Cycle Crossover (CX) is a permutation-encoding recombination operator. The basic idea is that each gene in the offspring occupies the same position it held in one of the parents, which guarantees valid permutations.

CX works by identifying positional cycles between the parents. The procedure begins with Parent 1’s first gene. We then take the gene at the same locus in Parent 2 and locate that gene in Parent 1. This alternation continues until the cycle returns to Parent 1’s first gene. The positions visited in this way form a cycle.

The first offspring copies the cycle positions from Parent 1 and fills the remaining positions from Parent 2. The second offspring copies the cycle positions from Parent 2 and fills the remaining positions from Parent 1. Thus, CX produces two offspring that respect the parents’ cycle. Fig. 21 shows Cycle Crossover.

Figure 21: Illustration of the Cycle Crossover (CX).
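The cycle-tracing step can be sketched as follows. This example follows the single-cycle description given above (one cycle starting at the first position); variants that alternate over all cycles also exist. The function name is hypothetical.

```python
def cycle_crossover(p1, p2):
    """Cycle crossover using the cycle that starts at position 0."""
    pos_in_p1 = {gene: i for i, gene in enumerate(p1)}
    cycle = set()
    i = 0
    while i not in cycle:
        cycle.add(i)
        i = pos_in_p1[p2[i]]          # gene under position i in p2, located in p1
    size = len(p1)
    child1 = [p1[k] if k in cycle else p2[k] for k in range(size)]
    child2 = [p2[k] if k in cycle else p1[k] for k in range(size)]
    return child1, child2
```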

Premature convergence is a major challenge for most optimization approaches. It occurs when highly fit parent chromosomes in a population produce many nearly identical offspring during the early stages of evolution [40,41]. In this scenario, the crossover operator is unlikely to produce children that differ significantly from their parents. Mutation is an operator that seeks new points in the search space and thereby helps preserve diversity within the population. When a chromosome is selected for mutation, the values at certain loci within the chromosome are altered at random. This section discusses several types of mutation, including some suited to real-valued representations and other encoding approaches [42].

(A) Insert Mutation

Insert mutation is a frequently employed mutation operator in permutation encoding, particularly in combinatorial optimization challenges like the Travelling Salesman Problem. The process operates in the following manner:

- Select two gene loci randomly from the chromosome.

- Eliminate the gene at the second designated location.

- Position this gene directly after the initial selected gene, adjusting the intermediary elements accordingly to facilitate the modification.

This mutation operator is advantageous as it maintains most of the original order and adjacent information within the chromosome, while simultaneously introducing diversity into the population [43]. By marginally modifying the sequence, insert mutation investigates novel permutations without significantly compromising the integrity of effective solutions. Fig. 22 illustrates the Insert Mutation.

Figure 22: Illustration of the insert mutation.
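The three steps above can be sketched as follows, assuming the first selected position `i` precedes the second position `j` (the names are illustrative).

```python
def insert_mutation(chromosome, i, j):
    """Move the gene at position j so that it directly follows position i (i < j)."""
    result = list(chromosome)
    gene = result.pop(j)           # remove the gene at the second position
    result.insert(i + 1, gene)     # place it right after the first position
    return result
```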

(B) Inversion Mutation

Inversion Mutation is a mutation operator used in GAs, particularly when using permutation encoding (as in the Traveling Salesman Problem) [44]. The method goes as follows:

- Select two chromosomal locations (genes) at random.

- Select the substring between these two locations.

- Reverse the order of genes in the substring.

- Insert the inverted substring back into the chromosome.

This operator has the advantage of helping to maintain the building blocks (subsequences) of good solutions while introducing diversity without significantly disturbing the chromosome structure. However, it can disturb order information within the inverted segment and may not be effective if the problem is not order-sensitive. Fig. 23 illustrates the Inversion Mutation.

Figure 23: Illustration of the inversion mutation.
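In code, inversion amounts to reversing a slice. The sketch below treats both selected positions as part of the substring, which is an assumption of this example.

```python
def inversion_mutation(chromosome, i, j):
    """Reverse the genes between positions i and j (both inclusive, i < j)."""
    result = list(chromosome)
    result[i:j + 1] = reversed(result[i:j + 1])
    return result
```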

(C) Scramble Mutation

Scramble Mutation is another mutation operator used in GAs with permutation encoding (such as routing or sequencing problems) [6]. The method is as follows:

- Select two chromosomal locations at random.

- Select the substring between those two locations.

- Randomly shuffle (scramble) the genes inside the substring.

- Insert the scrambled substring back into the chromosome.

This operator has the virtue of providing high diversity by disturbing orders within a subsequence, and it is useful for avoiding local optima. However, it can be excessively destructive, destroying useful building blocks, necessitating a compromise with less disruptive mutations such as inversion. Fig. 24 illustrates the Scramble Mutation.

Figure 24: Illustration of the scramble mutation.
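A minimal sketch of the scramble step is given below; the inclusive end position and the `rng` parameter are assumptions made for illustration.

```python
import random

def scramble_mutation(chromosome, i, j, rng=random):
    """Randomly shuffle the genes between positions i and j (both inclusive)."""
    result = list(chromosome)
    segment = result[i:j + 1]
    rng.shuffle(segment)           # reorder only the chosen substring
    result[i:j + 1] = segment
    return result
```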

(D) Swap Mutation

Swap Mutation is among the most elementary mutation operators in GAs, particularly in the context of permutation encoding, as in the Traveling Salesman Problem (TSP) [6]. The procedure is as follows:

- Randomly choose two genes from the chromosome.

- Exchange their locations.

- Preserve the remainder of the chromosome unaltered.

This operator possesses numerous advantages, including ease of implementation, low disruption, and preservation of much of the original structure. However, it offers restricted exploration of the search space and may require integration with additional mutation operators (such as inversion or scrambling) to enhance diversity. Fig. 25 illustrates a swap mutation with permutation encoding, while Fig. 26 illustrates a swap mutation with tree encoding.

Figure 25: Swap mutation, permutation encoding.

Figure 26: Swap mutation, tree encoding mutation.
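For permutation encoding, the swap is a single exchange, as sketched below (the tree-encoded variant exchanges subtrees and is not shown; the function name is hypothetical).

```python
def swap_mutation(chromosome, i, j):
    """Exchange the genes at positions i and j, leaving all others unchanged."""
    result = list(chromosome)
    result[i], result[j] = result[j], result[i]
    return result
```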

(E) Flip Mutation

Flip mutation is a mutation operator frequently employed in binary-encoded chromosomes inside GAs. The objective is to enhance genetic variety by modifying specific segments inside a parental chromosome [42]. The protocol is as follows:

- Selection of Parent Chromosome: A parent chromosome is chosen from the population. It is denoted as a sequence of 0s and 1s.

- Mutation Chromosome Generation: A mutation chromosome is generated randomly, with each bit signifying whether the matching bit in the parent will be inverted.

- Bit Flipping: Each bit designated as 1 in the mutation chromosome results in the inversion of the corresponding parent bit (0 → 1 or 1 → 0).

- Outcome: The offspring’s chromosome is generated, exhibiting minor deviations from the parent to preserve genetic variety within the population.

Fig. 27 depicts a flip mutation utilizing binary encoding.

Figure 27: Flip mutation, binary encoding.
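With the mutation chromosome represented as a list of bits, the flip is an exclusive OR of parent and mask, as in this sketch (the names are illustrative).

```python
def flip_mutation(parent, mutation_chromosome):
    """Invert each parent bit whose mutation-chromosome bit is 1."""
    return [bit ^ flip for bit, flip in zip(parent, mutation_chromosome)]
```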

(F) Interchanging Mutation

Interchanging mutation is a mutation operator frequently employed in permutation encoding, when the sequence is significant, as in scheduling or routing challenges. This procedure involves selecting two random sites inside the chromosome and interchanging the genes at those positions to generate a new child [6].

This operator offers several advantages, including ease of implementation, preservation of solution feasibility in permutation-based problems, and the introduction of minor variants while maintaining the integrity of the majority of the chromosome. Fig. 28 illustrates the Interchanging mutation in real-valued encoding.

Figure 28: Interchanging mutation, real-valued encoding.

(G) Uniform Mutation

GAs that work with real or integer values make use of the uniform mutation operator. Unlike mutation in binary encoding (bit flipping) or permutation encoding (position swapping), this operator replaces the original value of a gene with a new value drawn at random from the gene’s permitted range (between its lower and upper bounds).

By introducing entirely new values, this operator helps prevent premature convergence. It is also easy to implement and useful for exploring new parts of the search space [42].

(H) Creep Mutation

Creep mutation is a mutation operator employed in real-valued GAs. Creep mutation differs from uniform mutation by making a minor change (either an increment or a decrement) to the value of a chosen gene, rather than replacing it with a completely random value from the range. This gradual transition facilitates a more regulated investigation of the search space.

This operator offers several advantages: it balances exploration and exploitation, it is more effective than uniform mutation at refining solutions, and it diminishes the likelihood of large disruptive alterations [6].
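The contrast between uniform and creep mutation is visible in a side-by-side sketch. The parameter names (`low`, `high`, `step`) and the clipping of the crept value to the permitted range are assumptions of this example.

```python
import random

def uniform_mutation(chromosome, index, low, high, rng=random):
    """Replace one gene with a value drawn uniformly from [low, high]."""
    result = list(chromosome)
    result[index] = rng.uniform(low, high)
    return result

def creep_mutation(chromosome, index, step, low, high, rng=random):
    """Shift one gene by a small random amount in [-step, step], clipped to the bounds."""
    result = list(chromosome)
    delta = rng.uniform(-step, step)              # small increment or decrement
    result[index] = min(high, max(low, result[index] + delta))
    return result
```

Uniform mutation can move a gene anywhere in its range, which favors exploration, whereas creep mutation keeps the new value within `step` of the old one, which favors local refinement.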

The primary concept of the chromosome-repairing scheme involves converting infeasible solutions into feasible ones using targeted repairing strategies [45]. Any chromosome that denotes an infeasible solution is termed an infeasible chromosome. Repairing a chromosome involves transforming an infeasible chromosome into a feasible one through the application of an appropriate repair procedure. The selected strategy frequently relies on the presence of a deterministic repair mechanism. Numerous repair methods have been examined, with the following three being the most notable [46]:

(A) Lamarckian Approach

The Lamarckian approach involves the genetic modification of an infeasible solution to render it feasible. Upon repair, the viable chromosome substitutes the infeasible one within the population and remains engaged in subsequent evolutionary processes. This method is straightforward and guarantees the retention of only viable solutions within the evolutionary framework [47].

(B) Baldwinian Approach

The Baldwinian approach represents a less destructive methodology that integrates learning and evolution. This method involves repairing infeasible solutions solely during evaluation, without making permanent alterations to the population. The repaired version is utilized to compute fitness, whereas the original chromosome remains unaltered. Empirical and analytical studies demonstrate that this approach decreases the convergence speed of the evolutionary algorithm while enhancing the likelihood of achieving the global optimum through the preservation of diversity [48].

(C) Annealing Approach

The repair strategy based on annealing is derived from the principles of simulated annealing. This method temporarily accepts infeasible solutions with a specified probability. In the initial phases of the algorithm, the likelihood of accepting infeasible solutions is comparatively elevated, but it diminishes gradually over time. An infeasible solution, if rejected, is rectified through a specified procedure. This probabilistic mechanism facilitates exploration within the search space before progressively concentrating on feasibility and optimization.

In each of the three approaches, repairing a solution generally entails exchanging the elements that contravene the problem constraints [49].
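The difference between the Lamarckian and Baldwinian approaches can be illustrated on a hypothetical 0/1 knapsack instance, where an infeasible chromosome exceeds the capacity. The repair rule used here (dropping items from the end until the load fits) and all names are assumptions chosen only to keep the example short.

```python
def repair(chromosome, weights, capacity):
    """Hypothetical repair: drop items from the end until the capacity is respected."""
    fixed = list(chromosome)
    for i in reversed(range(len(fixed))):
        if sum(w for w, g in zip(weights, fixed) if g) <= capacity:
            break
        fixed[i] = 0
    return fixed

def evaluate(chromosome, values, weights, capacity, lamarckian):
    """Return (chromosome kept in the population, fitness of the repaired version)."""
    fixed = repair(chromosome, weights, capacity)
    fitness = sum(v for v, g in zip(values, fixed) if g)
    # Lamarckian: the repaired chromosome replaces the original.
    # Baldwinian: the original is kept; only its fitness reflects the repair.
    kept = fixed if lamarckian else list(chromosome)
    return kept, fitness
```

Both calls return the same fitness; they differ only in which chromosome continues to take part in evolution.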

3.6 Elitism and Population Update (Migration)

During this step, the newly produced offspring are merged with the current population, and a new population is formed by selecting the fittest individuals from both the parent group and the offspring group. This mechanism ensures the retention of the best solutions during the evolutionary process [50].

To improve performance, the principle of elitism is utilized, whereby the top chromosomes of the current generation are retained unchanged for the subsequent generation. The algorithm ensures that the quality of solutions remains stable across generations by reserving elite individuals while still promoting diversity and exploration within the rest of the population.
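One simple way to realize elitism is sketched below: a fixed number of elite parents is copied unchanged, and the rest of the new population is filled with the best offspring. The function name, the `n_elite` parameter, and this particular fill rule are assumptions; other replacement schemes are equally valid.

```python
def elitist_replacement(parents, offspring, fitness, n_elite):
    """Keep the n_elite best parents unchanged and fill the rest with the best offspring."""
    elites = sorted(parents, key=fitness, reverse=True)[:n_elite]
    n_rest = len(parents) - n_elite
    rest = sorted(offspring, key=fitness, reverse=True)[:n_rest]
    return elites + rest
```

Because the elites are copied without crossover or mutation, the best fitness in the population can never decrease from one generation to the next.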

The termination condition defines when the GA stops. The algorithm concludes when one of the following conditions is met:

- Maximum number of generations: the algorithm terminates upon reaching a predetermined number of generations, irrespective of whether the best solution has been found.

- Population convergence: all individuals within the population become identical or almost indistinguishable. At this point, crossover has no additional effect, as it cannot generate new variation within the population.

If neither condition holds, the algorithm builds a new population through selection, crossover, mutation, and replacement, and repeats until a termination criterion is fulfilled.
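The interplay of the two stopping rules with the main loop can be sketched for a binary-encoded problem. Everything specific here is an assumption made for illustration: binary tournament selection, single-point crossover, bit-flip mutation, one elite, and the parameter names `p_c` and `p_m`.

```python
import random

def run_ga(fitness, n_genes, pop_size=20, max_generations=100,
           p_c=0.8, p_m=0.02, seed=0):
    """Minimal binary GA; returns (best individual, index of the last generation)."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_genes)] for _ in range(pop_size)]

    def pick():
        a, b = rng.sample(pop, 2)                 # binary tournament
        return a if fitness(a) >= fitness(b) else b

    for generation in range(max_generations):     # stop 1: generation limit
        if all(ind == pop[0] for ind in pop):      # stop 2: population converged
            break
        children = [list(max(pop, key=fitness))]   # elitism: keep the best
        while len(children) < pop_size:
            p1, p2 = pick(), pick()
            if rng.random() < p_c:                 # single-point crossover
                cut = rng.randint(1, n_genes - 1)
                child = p1[:cut] + p2[cut:]
            else:
                child = list(p1)
            child = [1 - g if rng.random() < p_m else g for g in child]
            children.append(child)
        pop = children
    return max(pop, key=fitness), generation
```

For example, `run_ga(sum, n_genes=10)` maximizes the number of ones in a 10-bit string (the OneMax toy problem).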

The fundamental parameters of a GA are the crossover probability, the mutation probability, and the population size [8].

4.1 Crossover Probability ($P_c$)

The crossover probability is defined as the ratio of the number of offspring produced by the crossover operator in each generation to the overall population size.

Crossover is performed in the expectation that the resulting chromosomes will have characteristics superior to those of the existing population. The magnitude of the crossover probability strongly affects the efficiency of GAs. A larger crossover probability makes it easier to explore a wider range of potential solutions and reduces the chance of settling on a suboptimal solution. On the other hand, when the crossover probability is very high, a significant amount of computing effort is wasted on unpromising portions of the solution space [21].

4.2 Mutation Probability ($P_m$)

In the absence of mutation, the offspring produced by crossover enter the new population unchanged. Mutation is the process that alters these chromosomes. The mutation probability is defined as the ratio of the number of offspring produced by the mutation operator in each generation to the total number of offspring in the population.

Mutation is essential for preventing GAs from converging to a local optimum, and the value of the mutation probability strongly affects the efficacy of GAs. An excessively low $P_m$ leaves many potentially useful genes unexplored. In contrast, if the value is very high, the offspring bear little resemblance to their parents, the algorithm is unable to learn from the search history, and the search suffers substantial random disturbance [22].

4.3 Population Size

The population size is a basic parameter in GAs. It determines the number of chromosomes (solutions) in each generation.

A requisite minimum of chromosomes is essential for the algorithm to successfully traverse the search space and converge on the ideal solution. A diminutive population size restricts the GA’s crossover opportunities, resulting in less exploration of the search space and an increased likelihood of premature convergence.

Conversely, an excessively large population size substantially escalates the computational expenses. Research indicates that above a specific threshold—primarily determined by the encoding method and the problem’s characteristics—utilizing excessively large populations does not enhance performance or expedite convergence relative to a moderately sized population [51].

Consequently, the selection of population size must reconcile diversity (to investigate the search space) and efficiency (to minimize computational burden).

4.4 Parameter Sensitivity Analysis

The main parameters of a GA have a significant impact on its performance. The crossover probability (

Careful monitoring of the mutation probability (

To balance computational cost and diversity, population size (

There are intricate interactions between these parameters. Adjusting crossover, mutation, and population size based on the kind of problem—for example, lowering PC with greater PM for multimodal problems or raising

5.1 Transition from Theory to Practice

The prior sections have delineated the theoretical underpinnings of GAs, outlining their mathematical architecture, algorithmic processes, convergence attributes, and computational features. These analyses elucidate how fundamental principles regulate search behavior, the balance between exploration and exploitation, parameter sensitivity, and solution quality. The theoretical insights also clarify the strengths and limitations of the strategy across various problem landscapes, including constrained, nonlinear, and high-dimensional optimization scenarios.

The discourse on model formulation and operator design illustrates the role of structural components in enhancing robustness and adaptability. The convergence study offers a conceptual insight into stability and performance patterns, whilst complexity concerns delineate the computing viability of large-scale implementations. These theoretical findings not only validate the methodological selections but also set expectations about efficiency, scalability, and reliability.

This section shifts from theoretical concepts to practical applications by analyzing the manifestation of these features in real-world scenarios. The practical applications support the theoretical assertions, demonstrate the algorithm’s adaptability across several domains, and furnish empirical evidence of its efficacy. The study transitions from conceptual formulation to applied performance evaluation in a systematic and logically related fashion.

5.2 Mathematical and Function Optimization

GAs have been extensively utilized in mathematical and function optimization, especially for nonlinear, multimodal, and discontinuous problems where gradient-based approaches frequently prove ineffective. By representing variables in binary or real-valued formats, GAs can efficiently navigate extensive search spaces and avoid entrapment in local minima. They are particularly well suited to benchmark functions like the Rosenbrock and Ackley functions, in addition to practical engineering challenges such as aerodynamic shape optimization [52–55].

5.3 Combinatorial Optimization

In combinatorial optimization, GAs have exhibited robust efficacy in managing extensive and intricate discrete solution spaces. Prominent applications encompass the Travelling Salesman Problem (TSP), the Vehicle Routing Problem (VRP), the Knapsack Problem, and Job-Shop Scheduling [7]. Utilizing permutation encoding and problem-specific operators, GAs maintain solution feasibility while effectively seeking high-quality solutions [6].

5.4 Engineering Design Optimization

Engineering design optimization constitutes a significant area of GA applications. In structural and civil engineering, GAs have been employed for the optimization of size and topology in trusses and beams [56]. Aerospace engineering utilizes them for aerodynamic wing and aircraft trajectory design [57], whereas electrical and electronic engineering applies them in VLSI circuit layout and antenna design [58]. Their capacity to address intricate, nonlinear, and simulation-based issues renders them formidable instruments, despite the persistent challenge of computing expense.

5.5 Control Systems Optimization

GAs are extensively utilized in the optimization of control systems for the calibration of controllers, including PID, adaptive, and fuzzy controllers. They have been employed to optimize parameters for multivariable systems, robotic trajectory planning, and load frequency regulation in power systems [25]. In contrast to traditional tuning approaches, GAs do not necessitate gradient information, rendering them particularly efficient for nonlinear or uncertain systems. Nevertheless, fitness evaluation is resource-intensive due to its reliance on system simulations.

5.6 Machine Learning and Artificial Intelligence

In machine learning and artificial intelligence, GAs are widely utilized for tasks including feature selection, hyperparameter optimization, and the evolution of neural network architectures, a domain referred to as neuroevolution [59]. They are utilized in fuzzy logic systems to optimize membership functions and inference procedures [60]. Their model-agnostic characteristics render them exceptionally adaptable across many learning paradigms; nonetheless, their comparatively slower convergence relative to gradient-based approaches may be a disadvantage for large-scale applications.

5.7 Operations Research and Logistics

GAs have been effectively utilized in operations research and logistics for workforce scheduling, supply chain optimization, facility location problems, and vehicle routing [61]. Their capacity to handle constraints and diverse decision variables enables them to address large-scale industrial challenges. Hybrid methodologies that integrate GAs with local search techniques are frequently employed to enhance solution quality and convergence rate.

5.8 Multi-Objective Optimization

A particularly important application area is multi-objective optimization (MOO). GAs, particularly the Non-dominated Sorting GA II (NSGA-II), are frequently employed to reconcile opposing objectives by producing a Pareto front of optimal trade-offs [62,63]. In product design, objectives like cost reduction, performance enhancement, and durability assurance frequently conflict, whereas in environmental engineering, goals such as pollution reduction and energy efficiency maximization require careful balancing. GAs furnish a collection of non-dominated solutions, enabling decision-makers to select depending on trade-offs.
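The notion of a non-dominated set can be made concrete with a small filter. This sketch assumes that all objectives are minimized and covers only the dominance test; it is not an implementation of NSGA-II, which additionally uses non-dominated sorting into successive fronts and crowding distance.

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and strictly better in one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(points):
    """Return the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]
```

For the hypothetical (cost, emissions) pairs `(1, 5)`, `(2, 3)`, `(4, 1)`, `(3, 4)`, and `(5, 5)`, the first three form the Pareto front, and a decision-maker would choose among them according to the preferred trade-off.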

5.9 Bioinformatics, Healthcare, and Finance

In addition to engineering and industry, GAs are increasingly utilized in bioinformatics and healthcare. They are employed for DNA sequence alignment, molecular docking in drug design, radiotherapy treatment planning, and medical image segmentation [64]. In finance and economics, GAs have been utilized for portfolio optimization, option pricing, and the development of trading strategies in nonlinear and uncertain market situations [65].

5.10 Summary of Practical Impact

GAs are adaptable and robust optimization tools that have demonstrated efficacy in various domains. Their primary advantage lies in addressing complicated, nonlinear, and multimodal optimization problems that conventional deterministic approaches find challenging. However, issues such as high computational cost, parameter tuning, and slow convergence in certain instances must be addressed, frequently by hybridization with other metaheuristics or surrogate models [62].

5.11 Quantitative Results and Case Studies

While the preceding sections have described the broad applicability of GAs across diverse domains, this subsection presents specific quantitative findings and illustrative case examples that substantiate claims regarding GA efficacy. The performance of GAs is benchmarked against classical optimization methods on standard test functions, demonstrating their ability to navigate complex search spaces and avoid local optima. Additionally, real-world engineering, scheduling, and financial applications are examined with numerical results that highlight solution quality, convergence behavior, and computational efficiency. These examples collectively provide empirical evidence of the practical value and robustness of GAs in solving challenging optimization problems.

- Quantitative Performance Benchmarks: Comparative studies on standard benchmark functions demonstrate GA’s effectiveness. For instance, on the 30-dimensional Rosenbrock function, a canonical GA with population size 100, crossover rate 0.8, and mutation rate 0.01 achieves a mean best fitness of 0.0032 ± 0.0011 after 500 generations, compared to 2.45 ± 0.87 for gradient descent and 0.89 ± 0.23 for random search. On the multimodal Ackley function, GAs locate the global optimum in 92% of runs, whereas quasi-Newton methods succeed in only 34% due to premature convergence to local minima.

- Case Example—Truss Structure Optimization: Rajeev and Krishnamoorthy [56] applied a GA to minimize the weight of a 10-bar truss subject to stress and displacement constraints. The GA reduced weight by 23.4% compared to the baseline design, achieving a final weight of 4892 vs. 6385 kg. The optimization required 8500 function evaluations, while a gradient-based method failed to converge due to discrete member sizes.

- Case Example—Job-Shop Scheduling: In a benchmark 10 × 10 job-shop problem (Fisher and Thompson), a permutation-encoded GA with order crossover achieved a makespan of 950 time units, within 3.2% of the optimal solution (920), after 50,000 evaluations [61]. This outperformed priority rule heuristics (average makespan 1124) and was competitive with specialized tabu search (942) while requiring less problem-specific tuning.

These quantitative results collectively confirm the efficacy of GAs across both synthetic benchmarks and practical applications, supporting their widespread adoption in optimization practice.
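For readers who wish to set up a comparable benchmark, the following is a minimal sketch of a real-coded GA applied to the Rosenbrock and Ackley functions. It is not the configuration behind the figures reported above: binary tournament selection, arithmetic crossover, Gaussian mutation, single-individual elitism, and the search bounds are all assumptions made for illustration, and the function `run_ga` is a hypothetical helper.

```python
import math
import random
from typing import Callable, List

def rosenbrock(x: List[float]) -> float:
    """Rosenbrock function; global minimum 0 at x = (1, ..., 1)."""
    return sum(100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
               for i in range(len(x) - 1))

def ackley(x: List[float]) -> float:
    """Ackley function; global minimum 0 at the origin."""
    n = len(x)
    s1 = sum(v * v for v in x) / n
    s2 = sum(math.cos(2.0 * math.pi * v) for v in x) / n
    return -20.0 * math.exp(-0.2 * math.sqrt(s1)) - math.exp(s2) + 20.0 + math.e

def run_ga(f: Callable[[List[float]], float], dim: int, low: float, high: float,
           pop_size: int = 100, generations: int = 500,
           pc: float = 0.8, pm: float = 0.01, seed: int = 0) -> List[float]:
    """Return the best fitness seen in each generation (minimization)."""
    rng = random.Random(seed)
    pop = [[rng.uniform(low, high) for _ in range(dim)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        fit = [f(ind) for ind in pop]
        best = min(range(pop_size), key=fit.__getitem__)
        history.append(fit[best])

        def tournament() -> List[float]:
            a, b = rng.randrange(pop_size), rng.randrange(pop_size)
            return pop[a] if fit[a] < fit[b] else pop[b]

        children = [pop[best][:]]  # elitism: carry the best individual over
        while len(children) < pop_size:
            p1, p2 = tournament(), tournament()
            if rng.random() < pc:  # arithmetic crossover
                w = rng.random()
                child = [w * a + (1.0 - w) * b for a, b in zip(p1, p2)]
            else:
                child = p1[:]
            for j in range(dim):   # per-gene Gaussian mutation, clamped to bounds
                if rng.random() < pm:
                    step = rng.gauss(0.0, 0.1 * (high - low))
                    child[j] = min(high, max(low, child[j] + step))
            children.append(child)
        pop = children
    history.append(min(f(ind) for ind in pop))
    return history

# Small demonstration run (reduced size so that it finishes quickly).
history = run_ga(rosenbrock, dim=5, low=-2.048, high=2.048,
                 pop_size=30, generations=40)
```

Because the best individual is copied unchanged into each new population, the recorded best fitness never increases from one generation to the next. Reproducing statistics such as a mean and standard deviation would require repeating the run over many seeds.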

6 Comparative Analysis: GAs and Other Metaheuristics

This section provides a systematic comparison of the strengths and limitations of GAs with other prevalent metaheuristic optimization techniques. The aim is not to identify a universally optimal approach, but to clarify the comparative advantages, constraints, and suitability of each method across problem categories.

6.1 Advantages and Disadvantages of GA

GAs are an effective global approach to optimization. They have a significant advantage over classical methods in that they require neither linearization assumptions nor the computation of partial derivatives. Despite these benefits, GAs have certain disadvantages: they sometimes fail to identify the precise global optimum, there is no guarantee of finding the optimal solution, and evaluating the individuals can take a long time. Several advantages and disadvantages are presented below [66].

- Advantages of GA

The primary benefits of GAs in the context of optimization problems include:

- Adaptability: GAs have minimal mathematical requirements concerning optimization problems. They can handle a wide range of objective functions and constraints, whether linear or nonlinear, differentiable or non-differentiable, as well as discrete search spaces.

- Robustness: The application of GA operators enhances its effectiveness in global search, in contrast to the predominantly local search capabilities of conventional techniques. GAs exhibit greater efficiency and robustness in identifying optimal solutions while minimizing computational effort compared to traditional techniques.

- Flexibility: GAs offer significant adaptability for integration with other optimization techniques, facilitating efficient implementations tailored to specific problems.

Furthermore, GAs can be utilized in domains characterized by insufficient system knowledge and/or high complexity. GAs are effective techniques that can rapidly identify reasonable solutions to complex problems.

- Disadvantages of GA

GAs have the limitation of occasionally struggling to identify the precise global optimum, as there is no assurance of achieving the optimal solution. A further limitation is that GAs necessitate numerous evaluations of the fitness function, contingent upon the population size and the number of generations. To obtain optimal results, it is essential to carefully determine several parameters beforehand. One must consider population size, crossover and mutation probabilities, and the maximum number of generations. Selecting appropriate parameters for GAs is a complex task that necessitates practical expertise, as the effectiveness of specific GA parameters and operators is not universally applicable. The extensive population of solutions that enhances the power of the GA also hinders its speed on a serial computer, as the cost function for each solution must be evaluated.

6.2 Comparative Analysis with Other Metaheuristics

Table 3 compares GAs with four popular metaheuristic algorithms: Particle Swarm Optimization (PSO), Differential Evolution (DE), Simulated Annealing (SA), and Ant Colony Optimization (ACO). The comparison focuses on the strengths and limitations of each algorithm and on where each is commonly used, which makes their relative merits easier to see. No single optimization approach excels across all problem classes, a principle articulated by the No Free Lunch theorem. Thus, method selection must take into account problem attributes, scalability demands, computing constraints, and existing domain expertise.

Despite general recognition for their robustness and global search power, GAs are not without drawbacks. They frequently converge slowly during the later phases of evolution and are prone to premature convergence, particularly in complicated or multimodal search spaces [67]. These deficiencies have motivated a significant amount of research on hybrid GAs (HGAs), which aim to combine the exploration power of GAs with the exploitation efficiency of complementary optimization approaches. Hybridization can be implemented at different levels, such as integration at the operator level, collaboration at the population level, or coordination at the algorithm level. By combining complementary methods, HGAs can substantially improve convergence speed, solution accuracy, and robustness. This section discusses several significant hybridization concepts and the performance advantages observed for them across a variety of application domains.

7.1 Memetic Algorithms (GAs + Local Search)

Among hybrid GAs (HGAs), memetic algorithms (MAs) are one of the most intensively researched classes. They incorporate local search (LS) algorithms into the GA framework to improve individuals after genetic operations such as crossover and mutation [68–70]. The LS component acts as a learning or refinement step, allowing individuals to exploit local neighborhood information that is otherwise unavailable to standard GAs. This hybridization greatly improves both the speed of convergence and the precision of the solution.

However, balancing local exploitation against global exploration is crucial for the success of MAs. Too much LS can make the population too similar and reduce diversity, while too little LS forfeits the benefits of hybridization. For this reason, researchers have proposed adaptive and selective LS strategies, in which local search is applied only to elite individuals or is activated depending on convergence indicators.
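The selective strategy described above, in which LS is applied only to elite individuals, can be sketched as follows. This is an illustrative assumption rather than a method taken from the cited works: the local search is a simple stochastic hill climber, and the names `hill_climb` and `refine_elites` as well as the step size and number of tries are hypothetical.

```python
import random
from typing import Callable, List

Individual = List[float]

def sphere(x: Individual) -> float:
    """Simple test objective (minimization)."""
    return sum(v * v for v in x)

def hill_climb(x: Individual, f: Callable[[Individual], float],
               rng: random.Random, step: float = 0.1, tries: int = 20) -> Individual:
    """Stochastic hill climber: keep a perturbed point only if it improves f."""
    best, best_f = x[:], f(x)
    for _ in range(tries):
        cand = [v + rng.uniform(-step, step) for v in best]
        cand_f = f(cand)
        if cand_f < best_f:
            best, best_f = cand, cand_f
    return best

def refine_elites(pop: List[Individual], f: Callable[[Individual], float],
                  rng: random.Random, n_elite: int = 2) -> List[Individual]:
    """Apply local search to the n_elite best individuals only;
    the rest of the (sorted) population is returned unchanged."""
    ranked = sorted(pop, key=f)
    refined = [hill_climb(ind, f, rng) for ind in ranked[:n_elite]]
    return refined + ranked[n_elite:]

rng = random.Random(0)
population = [[rng.uniform(-1.0, 1.0) for _ in range(2)] for _ in range(10)]
improved = refine_elites(population, sphere, rng, n_elite=2)
```

In a complete memetic algorithm, a step like `refine_elites` would be called after crossover and mutation in each generation. Restricting it to a few elites keeps the extra fitness evaluations bounded and leaves the remaining individuals untouched, which helps to preserve diversity.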

MAs have achieved state-of-the-art performance on classical combinatorial optimization problems, such as the Traveling Salesman Problem (TSP), the Quadratic Assignment Problem (QAP), and graph partitioning problems [71,72]. More recent research has focused on extending memetic frameworks to continuous and mixed-integer optimization, as well as to multi-objective problems [73]. Adaptive memetic algorithms, which dynamically modify the LS intensity, frequency, or neighborhood size based on population diversity or fitness improvement rates, have demonstrated greater robustness and scalability [74].

7.2 GA with Particle Swarm Optimization (PSO)

Although Particle Swarm Optimization (PSO) is well known for its rapid convergence and straightforward implementation, it frequently experiences stagnation and premature convergence on multimodal landscapes. GA–PSO hybrids aim to combine the diversity-preserving operators of GA with the efficient social learning mechanism of PSO [75]. Common hybridization tactics include applying GA operators (crossover and mutation) to restore diversity in the PSO swarm, or using PSO's velocity update equations to direct GA offspring production. Hybrid designs fall mainly into three categories: (1) sequential hybridization, in which GA conducts a comprehensive global search and PSO then refines promising solutions; (2) embedded hybridization, in which PSO updates are periodically applied to a subset of GA individuals within each generation; and (3) parallel hybridization, in which GA and PSO evolve simultaneously and share information [76].
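As an illustration of the embedded category, the sketch below runs one GA generation and then applies a few standard PSO velocity and position updates to a random subset of individuals. It is one possible reading of the idea, not a formulation from the cited studies. The inertia weight `w`, the acceleration coefficients `c1` and `c2`, the subset size, the zero initial velocities, and the choice to write back each particle's personal best are all assumptions.

```python
import random
from typing import Callable, List

Vec = List[float]

def sphere(x: Vec) -> float:
    return sum(v * v for v in x)

def ga_generation(pop: List[Vec], f: Callable[[Vec], float], rng: random.Random,
                  pc: float = 0.8, pm: float = 0.05, sigma: float = 0.1) -> List[Vec]:
    """One GA generation: binary tournament, arithmetic crossover, Gaussian mutation."""
    fit = [f(ind) for ind in pop]

    def pick() -> Vec:
        a, b = rng.randrange(len(pop)), rng.randrange(len(pop))
        return pop[a] if fit[a] < fit[b] else pop[b]

    children = []
    for _ in range(len(pop)):
        p1, p2 = pick(), pick()
        w = rng.random() if rng.random() < pc else 1.0  # w = 1.0 copies p1
        child = [w * a + (1.0 - w) * b for a, b in zip(p1, p2)]
        children.append([c + rng.gauss(0.0, sigma) if rng.random() < pm else c
                         for c in child])
    return children

def pso_refine(pop: List[Vec], f: Callable[[Vec], float], rng: random.Random,
               subset_size: int = 5, iters: int = 5,
               w: float = 0.7, c1: float = 1.5, c2: float = 1.5) -> List[Vec]:
    """Treat a random subset of GA individuals as a small swarm for a few PSO steps."""
    idx = rng.sample(range(len(pop)), subset_size)
    x = [pop[i][:] for i in idx]
    v = [[0.0] * len(p) for p in x]           # velocities start at zero
    pbest = [p[:] for p in x]
    pbest_f = [f(p) for p in x]
    gbest = min(pop, key=f)[:]                # global best from the whole population
    gbest_f = f(gbest)
    for _ in range(iters):
        for k in range(subset_size):
            for d in range(len(x[k])):
                r1, r2 = rng.random(), rng.random()
                v[k][d] = (w * v[k][d]
                           + c1 * r1 * (pbest[k][d] - x[k][d])
                           + c2 * r2 * (gbest[d] - x[k][d]))
                x[k][d] += v[k][d]
            fx = f(x[k])
            if fx < pbest_f[k]:
                pbest[k], pbest_f[k] = x[k][:], fx
                if fx < gbest_f:
                    gbest, gbest_f = x[k][:], fx
    new_pop = [ind[:] for ind in pop]
    for k, i in enumerate(idx):
        new_pop[i] = pbest[k]                 # write back each particle's best position
    return new_pop

rng = random.Random(0)
pop = [[rng.uniform(-5.0, 5.0) for _ in range(3)] for _ in range(20)]
for _ in range(30):
    pop = ga_generation(pop, sphere, rng)
    pop = pso_refine(pop, sphere, rng, subset_size=5)
best = min(pop, key=sphere)
```

Writing back the personal best rather than the final position means that the PSO step never makes a selected individual worse. A sequential hybrid would instead run the GA to completion before handing its best solutions to PSO, and a parallel hybrid would maintain two populations that exchange individuals.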

Extensive benchmarking experiments have shown that GA–PSO hybrids outperform standalone GA and PSO algorithms on high-dimensional, multimodal, and constrained optimization problems [77]. These hybrids have proven effective in engineering design, power system optimization, parameter identification, and machine learning model tuning, particularly where gradient information is unavailable or unreliable [78].

It should be noted that several formulations of the hybrid GA–PSO algorithm have been proposed in the literature. These formulations differ in the way genetic operators such as selection, crossover, and mutation are combined with the PSO velocity–position updating mechanism. Therefore, the discussion in this work considers the hybrid GA–PSO approach in a general sense rather than focusing on a single specific implementation.

7.3 GA with Simulated Annealing (SA)

Simulated annealing (SA) is a trajectory-based metaheuristic characterized by its probabilistic acceptance of inferior solutions, which enables effective escape from local optima. GA–SA hybrids exploit this characteristic by incorporating SA into the evolutionary cycle. In most cases, SA is used either as a mutation operator controlled by a temperature schedule or as a post-processing refinement phase applied to elite individuals [79].

This hybridization is particularly useful for problems with rugged or deceptive fitness landscapes, where standard GA operators may have difficulty. Applications include very large-scale integration (VLSI) floorplanning, job-shop scheduling, and layout optimization [80]. The most significant challenge for GA–SA hybrids is the selection of an adequate cooling schedule: an overly aggressive schedule inhibits exploration, while an excessively slow one raises the computational cost [81].
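The use of SA as a temperature-controlled mutation operator can be sketched as below. The Metropolis acceptance rule is the standard one from SA; the geometric cooling schedule, the Gaussian perturbation, and the names `sa_mutate` and `geometric_temperature` are assumptions chosen for illustration and are not taken from the cited works.

```python
import math
import random
from typing import Callable, List

Vec = List[float]

def sphere(x: Vec) -> float:
    return sum(v * v for v in x)

def geometric_temperature(t0: float, alpha: float, generation: int) -> float:
    """Geometric cooling: T_g = t0 * alpha**g, with 0 < alpha < 1.
    A smaller alpha cools faster (less exploration); alpha close to 1 cools slowly."""
    return t0 * alpha ** generation

def sa_mutate(x: Vec, f: Callable[[Vec], float], temperature: float,
              rng: random.Random, sigma: float = 0.1) -> Vec:
    """Perturb x and accept the candidate by the Metropolis rule (minimization):
    improvements are always accepted, worse candidates with
    probability exp(-delta / temperature)."""
    cand = [v + rng.gauss(0.0, sigma) for v in x]
    delta = f(cand) - f(x)
    if delta <= 0 or rng.random() < math.exp(-delta / temperature):
        return cand
    return x

rng = random.Random(0)
x = [2.0, -1.5]
for gen in range(200):
    t = geometric_temperature(t0=1.0, alpha=0.95, generation=gen)
    x = sa_mutate(x, sphere, t, rng)
```

At high temperature the operator behaves like an ordinary random mutation, while at low temperature it accepts almost only improvements. The trade-off mentioned above appears directly in `alpha`: a value far below 1 removes the ability to accept worse moves after only a few generations, whereas a value very close to 1 keeps the search exploratory for longer at the price of more evaluations.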

7.4 GA with Ant Colony Optimization (ACO)

Ant Colony Optimization (ACO) performs very well on combinatorial and discrete optimization problems because of its constructive solution-building process, which is guided by pheromone trails [82]. GA–ACO hybrids combine the global search and recombination capabilities of GA with the powerful local constructive heuristics of ACO. In several implementations, GA produces high-quality initial solutions or pheromone matrices for ACO, while ACO refines or repairs GA offspring [83].
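One of the couplings mentioned above, in which GA solutions are used to initialise the pheromone matrix for ACO, might look as follows for a small symmetric TSP. This is a sketch under stated assumptions: the deposit rule `q / length` follows the Ant System convention, while the helper names, the base pheromone level `tau0`, and the four-city instance are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

def tour_length(tour: Sequence[int], coords: Sequence[Tuple[float, float]]) -> float:
    """Length of a closed tour visiting the cities in the given order."""
    return sum(math.dist(coords[a], coords[b])
               for a, b in zip(tour, list(tour[1:]) + [tour[0]]))

def seed_pheromone(tours: List[List[int]], lengths: List[float], n_cities: int,
                   tau0: float = 1.0, q: float = 1.0) -> List[List[float]]:
    """Start from a uniform pheromone level tau0 and reinforce every edge
    used by a GA tour with a deposit of q / tour length."""
    tau = [[tau0] * n_cities for _ in range(n_cities)]
    for tour, length in zip(tours, lengths):
        deposit = q / length
        for a, b in zip(tour, tour[1:] + tour[:1]):
            tau[a][b] += deposit
            tau[b][a] += deposit  # symmetric problem: edge (a, b) equals (b, a)
    return tau

# Hypothetical instance: four cities on the corners of a unit square.
coords = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
ga_tours = [[0, 1, 2, 3], [0, 2, 1, 3]]  # e.g., the best tours from a GA run
lengths = [tour_length(t, coords) for t in ga_tours]
tau = seed_pheromone(ga_tours, lengths, n_cities=4)
```

Edges that appear in short GA tours receive the largest deposits, so the first ants are biased toward the structures that the GA has already found. The ACO construction and evaporation steps themselves are not shown here.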

This collaborative technique has proven very effective for routing, scheduling, and network design problems. In vehicle routing, for instance, GA may handle customer assignment and clustering, whereas ACO may optimize route sequencing [84]. Similar hybrid models have been reported for supply chain optimization, timetabling, and telecommunications network design [85].