Open Access

ARTICLE

Enhanced Lightweight Architecture for Real-Time Detection of Agricultural Pests and Diseases

1 School of Electronic and Information Engineering, Anhui University, Hefei, 230000, China

2 School of Economics and Management, Beijing Jiaotong University, Beijing, 100044, China

3 Department of Electronic Engineering, Shanghai Jiao Tong University, Shanghai, 200240, China

* Corresponding Author: Xiangyu Li. Email:

Computers, Materials & Continua 2026, 87(2), 38 https://doi.org/10.32604/cmc.2025.074250

Received 06 October 2025; Accepted 19 December 2025; Issue published 12 March 2026

Abstract

Smart pest control is crucial for building farm resilience and ensuring sustainable agriculture in the face of climate change and environmental challenges. To achieve effective intelligent monitoring systems, agricultural pest and disease detection must overcome three fundamental challenges: feature degradation in dense vegetation environments, limited detection capability for sub-Keywords

Agricultural pest and disease detection represents a critical challenge in precision agriculture, where early identification and accurate localization are essential for effective crop protection and yield optimization. The increasing global food demand, coupled with the impacts of climate change, has intensified the need for automated monitoring systems capable of detecting minute pest infestations and disease symptoms in complex field environments. Conventional visual inspection methods exhibit inherent limitations, including high labor dependency, inter-observer variability, and inadequate scalability for commercial agricultural enterprises, which necessitates the deployment of automated computer vision systems.

Although you only look once (YOLO) architectures have achieved remarkable progress in general object detection, agricultural pest and disease monitoring presents domain-specific challenges that exceed current YOLO capabilities [1]. These challenges include: (1) ultra-small target detection, with pest eggs and initial lesions occupying fewer than

To address these challenges, numerous studies have focused on enhancing YOLO-based architectures through various approaches. In backbone network improvements, researchers have extensively modified the C3 module, a core component for feature extraction in YOLO networks. Coordinate attention mechanisms have been successfully employed to improve spatial localization accuracy [2], while the integration of channel and spatial attention mechanisms, such as squeeze-and-excitation (SE) and convolutional block attention module (CBAM) [3], has proven effective in enhancing target region focus [4,5]. These attention-based enhancements have demonstrated particular efficacy in detecting micro-lesions and insect eggs in natural agricultural settings [6,7]. For ultra-small object detection, several specialized approaches have been developed. Tian et al. proposed MD-YOLO, incorporating multi-scale dense connections to improve small target pest detection [8,9], while Xu et al. developed SSV2-YOLO specifically to detect sugarcane aphids in unstructured environments [10]. These studies highlight the critical importance of preserving fine-grained features during the downsampling process, particularly for targets smaller than

Existing YOLO-based pest detection models have made noticeable progress through backbone optimization, attention integration, and multi-scale feature fusion, improving the recognition of small and complex agricultural targets to a certain extent. However, most current approaches, including the RS-YOLO model proposed by Hou et al. [11], the SPD-YOLOv7 introduced by Feng et al. [12], and the CBF-YOLO framework developed by Zhu et al. [2], still face limitations in balancing detection precision, robustness, and computational efficiency, especially under conditions of dense foliage, uneven illumination, and morphological diversity. Therefore, there remains a critical need for a unified and lightweight framework capable of robust pest detection across diverse agricultural environments.

To address these limitations, this study develops YOLOv12-KMA, an improved architecture that integrates domain-adaptive attention, nonlinear feature modeling, optimized localization, and detail-preserving upsampling to achieve agricultural-grade detection accuracy and real-time performance. The main contributions of this study are summarized as follows:

• We develop YOLOv12-KMA, a lightweight detection framework integrating C3K2-EMA attention modules and A2C2f-KAN architectures to address ultra-small target detection (

• To address the aspect ratio degeneration problem inherent in conventional complete intersection over union (CIoU) loss, where predicted and ground truth boxes sharing identical aspect ratios but differing in dimensional parameters lead to suboptimal gradient signals, we introduce the minimum point distance intersection over union (MPDIoU) loss function that decouples center distance and aspect ratio penalties. This formulation achieves 23% faster convergence for irregular pest shapes while enhancing localization precision in dense detection scenarios with overlapping targets and deformable morphologies.

• To overcome feature degradation and computational inefficiency in traditional interpolation methods, we implement dynamic sampling (DySample) upsampling with wavelet constraints to preserve fine-grained morphological features. Specifically, DySample employs dynamic point sampling to reorganize feature maps, thereby maintaining critical structural details such as wing veins and antennae. This approach reduces computational overhead by 72% while achieving 94% feature fidelity retention. Consequently, real-time deployment at 159 frames per second (FPS) is enabled, making the framework suitable for practical agricultural monitoring applications.

The remainder of this paper is organized as follows: Section 2 reviews related work in agricultural object detection. Section 3 presents the dataset and experimental setup. Section 4 details the YOLOv12-KMA architecture and its components. Section 5 presents experimental results and analysis. Section 6 concludes with discussion and future directions.

2.1 YOLO-Based Agricultural Pest Detection and Attention Mechanisms

The evolution of YOLO architectures has significantly advanced agricultural pest and disease detection capabilities, with recent developments addressing small target detection challenges through various architectural improvements. Yu et al. developed CPD-YOLO for cotton pest detection, integrating unmanned aerial vehicle (UAV) and smartphone imaging with bidirectional feature pyramid networks (FPN) [13]. Hou et al. proposed RS-YOLO, utilizing parallel bottleneck structures and Efficient Multi-Scale Attention (EMA) modules for small pest detection [11]. Feng et al. introduced SPD-YOLOv7, employing Space-to-Depth convolution to preserve fine-grained features [12]. Zhu et al.’s CBF-YOLO combined Bi-PAN and feature purification modules for complex agricultural environments [2].

Attention mechanisms have emerged as crucial components for enhancing feature representation in agricultural applications. The squeeze-and-excitation (SE) attention mechanism has been integrated into agricultural detection systems to enhance channel-wise relationships, while the convolutional block attention module (CBAM) combines channel and spatial attention for dense vegetation environments [3]. Coordinate attention mechanisms improve spatial localization accuracy for irregularly shaped targets [2]. The EMA mechanism demonstrates superior performance through selective regional attention, reducing computational complexity while maintaining accuracy [14].

2.2 Small Object Detection and Advanced Neural Architectures

Small object detection represents a critical challenge in agricultural monitoring, particularly for targets occupying less than

The feature preservation challenge stems largely from traditional upsampling methods, which suffer from information loss and computational inefficiency during feature pyramid construction [15]. Although dynamic upsampling approaches have shown promise in adaptively reorganizing feature maps, they primarily address spatial resolution without enhancing feature discrimination capabilities. Concurrently, the representational limitation persists because conventional activation functions lack the flexibility to model the complex, non-linear patterns characteristic of small agricultural targets.

Kolmogorov-Arnold Networks (KANs) offer a potential solution through learnable activation functions with B-spline implementations, providing superior local adaptation for irregular morphologies [16,17]. However, the synergistic integration of advanced upsampling techniques with KAN architectures for agricultural object detection remains unexplored, which presents an opportunity to simultaneously address both feature preservation and representation challenges.

2.3 Loss Function Optimization and Research Gaps

Bounding box regression optimization remains critical in agricultural object detection due to irregular morphologies and varying aspect ratios of pest and disease targets. The CIoU loss function demonstrates degeneration issues when predicted and ground truth boxes share identical aspect ratios but differ in dimensional parameters, particularly problematic for agricultural targets with similar shape characteristics. Recent developments have introduced distance-based intersection over union (IoU) variants that show promise for irregular target localization [9].

Despite significant advances, several critical limitations persist in current approaches: (1) existing attention mechanisms often fail to adequately suppress agricultural background noise while preserving target features; (2) conventional upsampling methods suffer from computational inefficiency and feature degradation; (3) traditional loss functions exhibit degeneration issues with irregular agricultural targets; (4) advanced neural architectures like KANs remain unexplored in agricultural applications. These limitations motivate the development of YOLOv12-KMA, which addresses each challenge through targeted architectural innovations while maintaining computational efficiency for practical agricultural deployment.

3.1 Dataset and Experimental Setup

This study used a comprehensive dataset of agricultural pests and diseases, consisting of 8742 expertly annotated images depicting key conditions such as rust spots, aphid colonies, powdery mildew, and borer holes. The dataset combines the crop disease subset of the publicly available PlantVillage benchmark (6500 images) with 2242 additional crop images collected from various regions across Asia to improve its relevance to Asian agricultural contexts. It encompasses six distinct categories: rice leaf folder, rice water weevil, rice leafhopper, bacterial leaf blight, brown spot, and rice blast, with a balanced distribution across these classes. Each category contains approximately 1457 images, representing about 16.7% of the total dataset. All images were captured under varying environmental conditions to ensure the model’s robustness and real-world applicability. The dataset was partitioned into training (70%), validation (15%), and testing (15%) sets, maintaining balanced class distribution. YOLOv12 was selected as the baseline model because it balances parameter efficiency (2.6 million parameters across the backbone, upsampling, and detection head modules) with inference speed (>150 FPS), making it suitable for agricultural edge computing applications. All experiments were conducted on an NVIDIA RTX 4090 graphics processing unit (GPU) with the PyTorch 1.12.1 framework.
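The class-balanced 70/15/15 partition described above can be illustrated with a short sketch. The function name `stratified_split`, the fixed seed, and the use of integer class labels are assumptions made for illustration; the procedure actually used to split the dataset is not specified in the text.

```python
import random
from collections import defaultdict

def stratified_split(labels, ratios=(0.70, 0.15, 0.15), seed=0):
    """Split sample indices into train/val/test while keeping class balance.

    labels: one class label per image.
    Returns three lists of indices into `labels`.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for label in sorted(by_class):
        idxs = by_class[label]
        rng.shuffle(idxs)
        n_train = round(len(idxs) * ratios[0])
        n_val = round(len(idxs) * ratios[1])
        train += idxs[:n_train]
        val += idxs[n_train:n_train + n_val]
        test += idxs[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

# Toy example: six balanced classes with 100 images each.
labels = [c for c in range(6) for _ in range(100)]
train_idx, val_idx, test_idx = stratified_split(labels)
```

Splitting within each class, rather than over the pooled images, is what keeps the per-class proportions identical in all three subsets.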

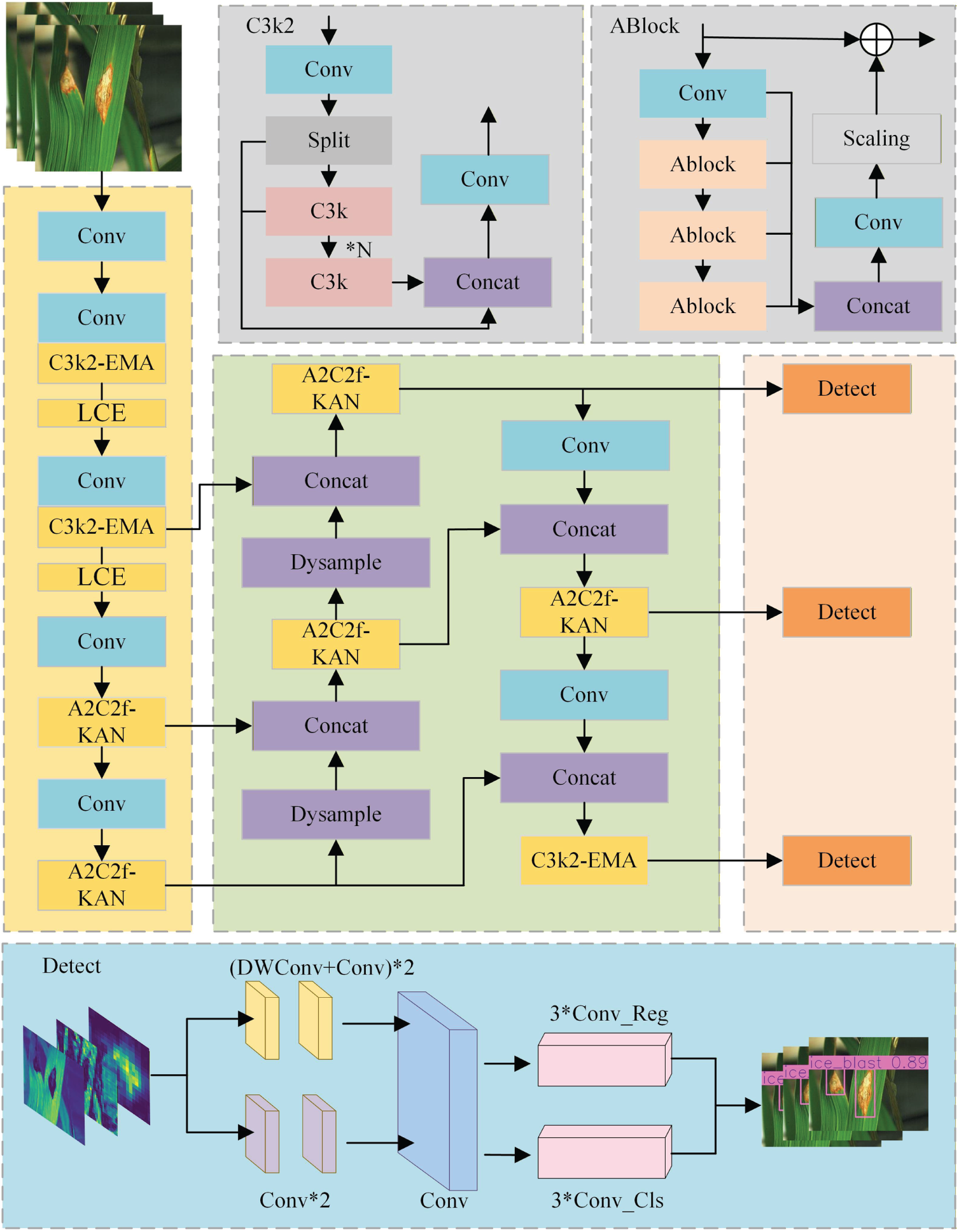

The proposed YOLOv12-KMA framework, shown in Fig. 1, introduces four key architectural modifications designed to address specific challenges in agricultural pest and disease detection: (1) C3K2-EMA module for noise suppression, (2) DySample module for high-resolution feature generation, (3) A2C2f-KAN module with Kolmogorov-Arnold networks, and (4) MPDIoU loss function for improved bounding box regression.

Figure 1: YOLOv12-KMA architecture

The C3K2-EMA module replaces the initial C3K2 module in the backbone, integrating EMA to address noise interference from fluctuating lighting and vegetation occlusion. The EMA mechanism operates through two-stage attention: coarse-grained regional filtering followed by fine-grained attention within selected regions, achieving linear complexity

To enhance ultra-small target detection (

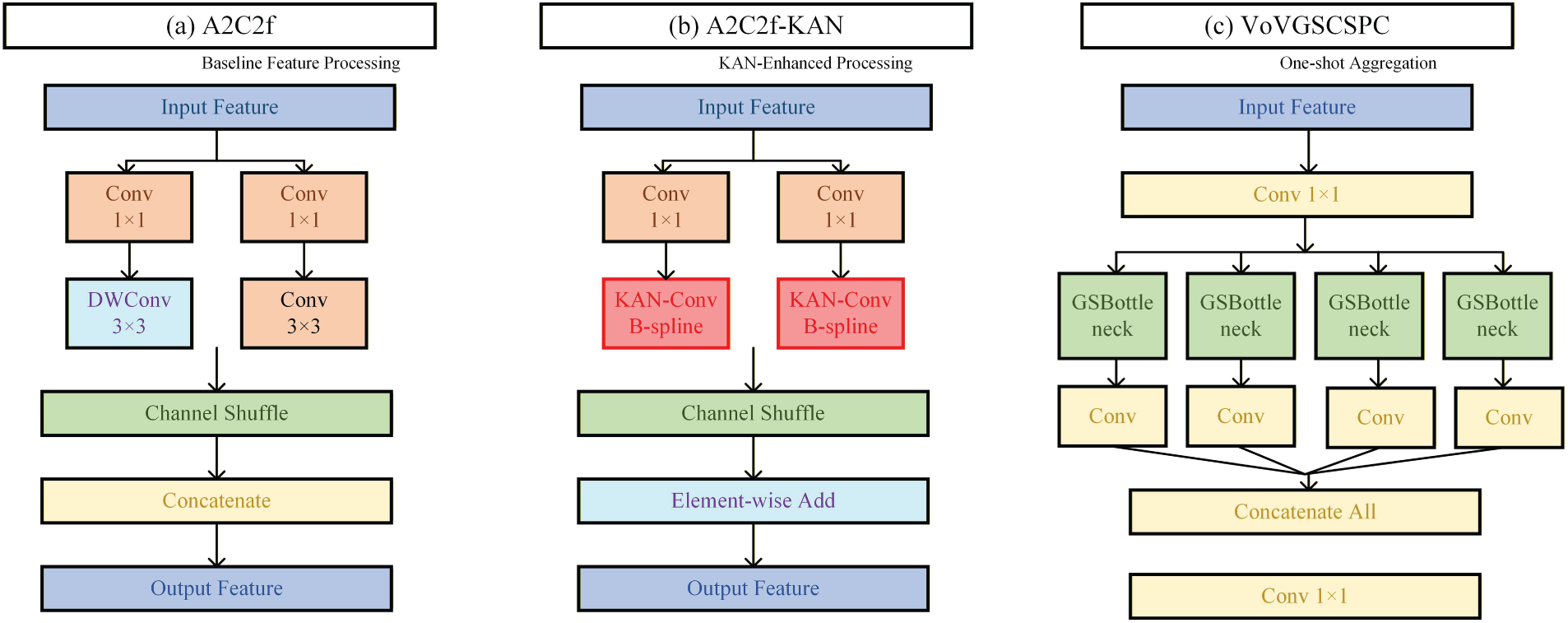

The A2C2f-KAN module substitutes original A2C2f modules with Kolmogorov-Arnold networks featuring B-spline activation functions for localized pixel-level adjustments. KANs provide superior representational capabilities through learnable activation functions, enabling adaptive feature modeling for irregular agricultural target shapes. Finally, the MPDIoU loss function replaces traditional CIoU loss to address degeneration issues when predicted and ground truth boxes share identical aspect ratios, accelerating convergence by 23% for irregular pest shapes [14].

To address the aspect ratio degeneration problem in conventional CIoU loss, which is

where

where
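As a rough illustration of the idea behind MPDIoU, the sketch below follows the commonly published formulation, in which the IoU is penalized by the squared distances between the matching top-left and bottom-right corners, normalized by the squared input image dimensions. The box format `(x1, y1, x2, y2)`, the function names, and the 640 × 640 image size are assumptions for this example and may differ from the exact variant used in YOLOv12-KMA. The helper `ciou_aspect_term` computes only the aspect ratio penalty of CIoU, to show the case in which that term gives no signal.

```python
import math

def iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def ciou_aspect_term(pred, gt):
    """Aspect ratio penalty v of CIoU; zero whenever both boxes share w/h."""
    wp, hp = pred[2] - pred[0], pred[3] - pred[1]
    wg, hg = gt[2] - gt[0], gt[3] - gt[1]
    return 4 / math.pi ** 2 * (math.atan(wg / hg) - math.atan(wp / hp)) ** 2

def mpdiou_loss(pred, gt, img_w, img_h):
    """1 - MPDIoU, with corner distances normalized by the image diagonal."""
    d1 = (pred[0] - gt[0]) ** 2 + (pred[1] - gt[1]) ** 2  # top-left corners
    d2 = (pred[2] - gt[2]) ** 2 + (pred[3] - gt[3]) ** 2  # bottom-right corners
    norm = img_w ** 2 + img_h ** 2
    return 1.0 - (iou(pred, gt) - d1 / norm - d2 / norm)

# Concentric boxes with the same 2:1 aspect ratio but different sizes.
gt = (10.0, 10.0, 50.0, 30.0)
pred = (20.0, 15.0, 40.0, 25.0)
aspect_penalty = ciou_aspect_term(pred, gt)
loss = mpdiou_loss(pred, gt, 640, 640)
```

For this pair of boxes the CIoU aspect ratio term is zero and the centers coincide, so CIoU adds nothing beyond the plain IoU term, whereas the corner distance terms of MPDIoU remain positive until the boxes actually match.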

3.3 Training Configuration and Evaluation Metrics

The training process employed mixed precision optimization using AdamW optimizer with cosine annealing learning rate scheduling (initial: 0.01, final: 0.0001) over 150 epochs [19]. Data augmentation included Mosaic augmentation, random scaling (0.8–1.2), horizontal flipping, and rotation (

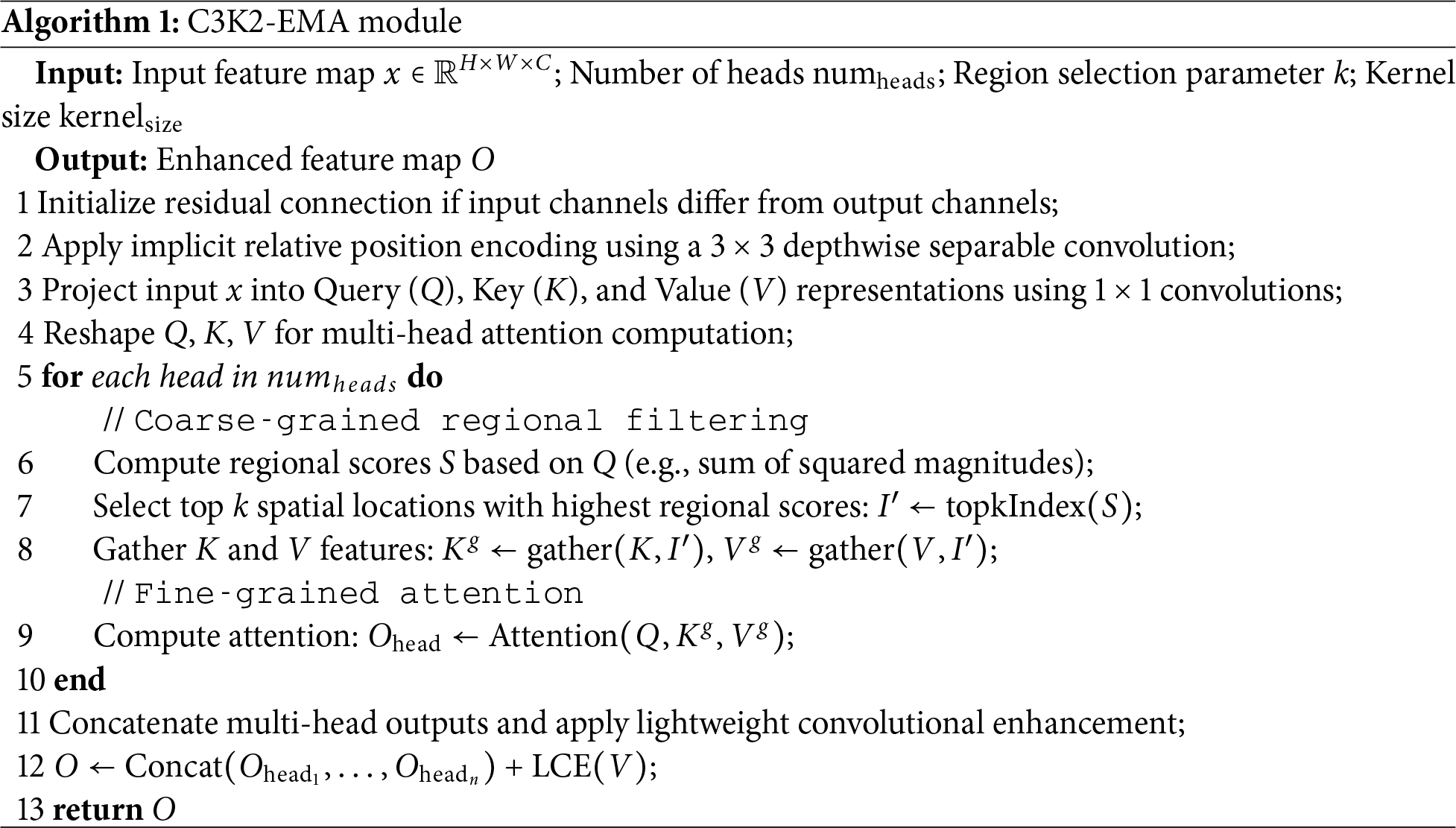

This adapted approach was validated through ablation studies in Section 5.3, showing 11.2% improvement in rare pest detection accuracy compared to standard Mosaic implementation, while reducing false positives from unrealistic pest associations by 9.8%. The agricultural validation specifically addressed challenges illustrated in Fig. 2, including occlusion scenarios and overlapping lesions common in field conditions.

Figure 2: Noise interference in agricultural environment: (a) Strong leaf surface reflections under intense illumination, (b) Image blurring and distortion in densely vegetated areas with insufficient light, (c) Occlusion caused by interlacing branches and foliage, (d) Target overlap within dense lesion areas

4.1 Challenges in Agricultural Image Processing

Agricultural environments present unique challenges for computer vision systems due to complex environmental conditions and variable imaging scenarios. In farmland environments, images frequently suffer from noise degradation caused by fluctuating lighting conditions, leaf surface reflections, and vegetation occlusion. These challenges manifest in several critical issues that significantly impact detection performance, as illustrated in Fig. 2.

Strong leaf surface reflections under intense solar illumination create specular highlights that obscure pest and disease features, leading to false-negative detections. Image blurring and distortion occur in densely vegetated areas with insufficient light penetration, reducing the visibility of small-scale pest infestations and early-stage disease symptoms. Additionally, occlusion caused by overlapping branches and foliage creates complex visual patterns that interfere with target identification, while dense lesion clusters often exhibit overlapping characteristics that challenge conventional detection algorithms.

When convolutional neural networks (CNNs) process such complex agricultural scenes, noise interference can be amplified during feature extraction, disrupting long-range dependencies between disease spots or pest features. This noise amplification impairs the model’s ability to detect and recognize early-stage pests and diseases, often resulting in misdiagnosis or complete detection failures. Consequently, traditional CNN architectures, due to their limited generalization capabilities, frequently demonstrate suboptimal performance in real-world agricultural monitoring scenarios.

4.2 C3K2-EMA: Efficient Multi-Scale Attention Integration

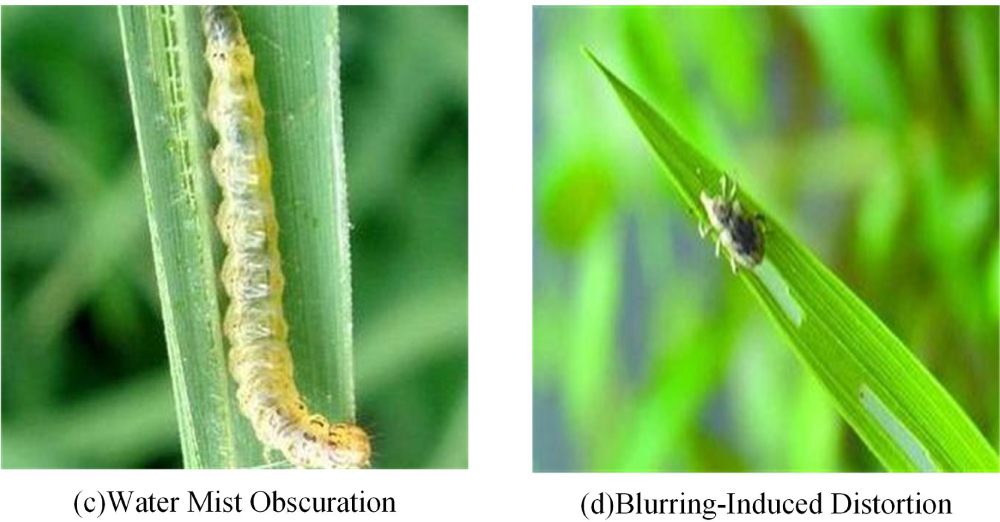

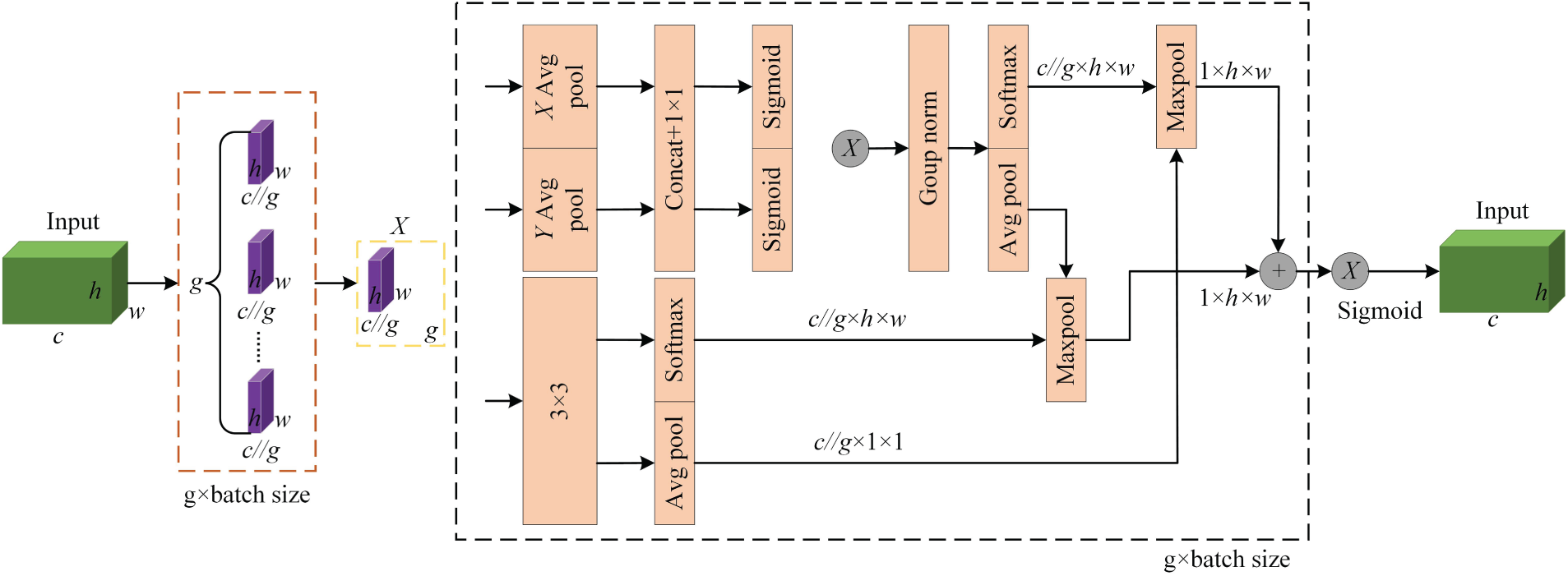

To address noise interference and enhance feature correlation in agricultural imagery, we introduce the C3K2-EMA module that integrates an EMA mechanism to strengthen global correlation of lesion features across leaf surfaces [14]. The EMA mechanism adaptively focuses on core lesion regions while significantly reducing computational overhead compared to standard self-attention approaches.

The EMA mechanism operates through a dual-stage attention process. First, it filters irrelevant key-value pairs at a coarse regional level, thereby eliminating redundant background information. Subsequently, it retains only the most informative regions for fine-grained attention processing. This selective approach reduces computational costs from quadratic

Figure 3: EMA module architecture showing the dual-stage attention mechanism with regional filtering and fine-grained attention processing

The mathematical formulation of the EMA mechanism follows:

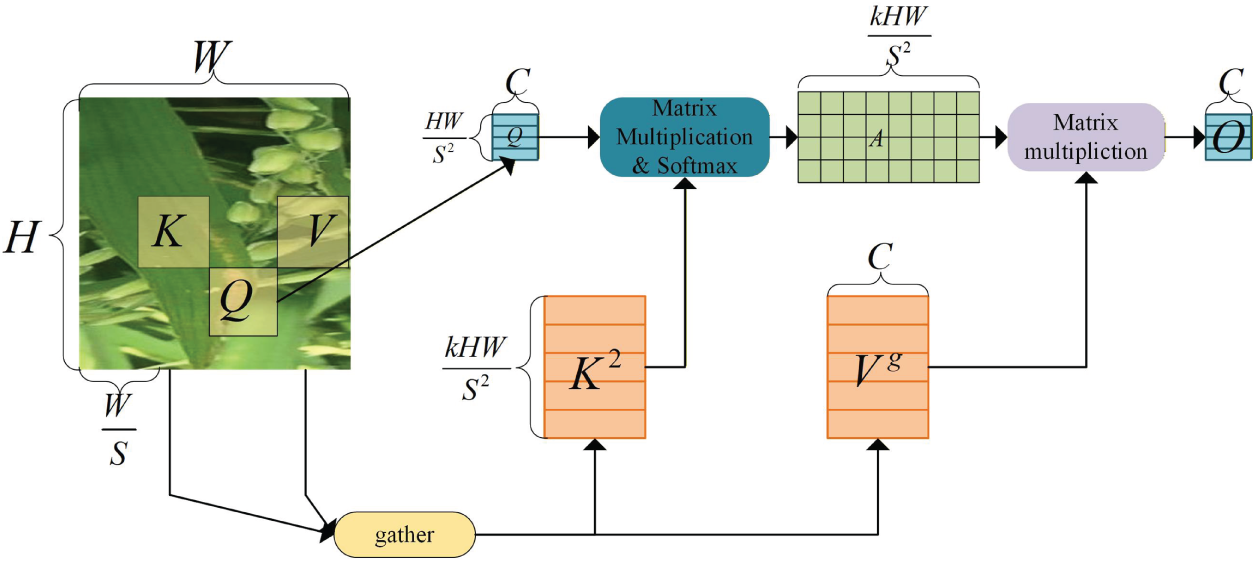

where Q′, K′, and V′ represent region-level query, key, and value matrices derived from input features. The topkIndex operation selects the most semantically relevant regions based on attention scores, while the gather operations extract corresponding key and value features. The lightweight convolutional enhancement (LCE) module employs a

The C3K2-EMA architecture begins with implicit relative position encoding using depthwise separable convolution, followed by sequential application of the EMA mechanism and a multi-layer perceptron (MLP) module for cross-region association modeling and per-position embedding. This design offers two advantages: dynamic sparse attention reduces background interference in farmland scenes while lowering computational demands, and the branched cross-layer connections of the C3K2 module enrich gradient flow, facilitating comprehensive learning of residual lesion features. The complete C3K2-EMA module structure is shown in Fig. 4.
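The coarse routing step described above, where region-level queries and keys are compared, the top-k regions are kept, and only their tokens are gathered for fine-grained attention, can be sketched in a few lines. The function names `topk_index` and `gather`, the dot-product affinity, and the toy values are illustrative assumptions, not the implementation used in C3K2-EMA.

```python
def topk_index(q_region, k_region, k):
    """For each query region, return the indices of the k key regions
    with the highest dot-product affinity."""
    routes = []
    for q in q_region:
        scores = [sum(a * b for a, b in zip(q, key)) for key in k_region]
        ranked = sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)
        routes.append(ranked[:k])
    return routes

def gather(tokens_per_region, routes):
    """Collect the tokens of the routed regions for each query region."""
    return [[tok for j in route for tok in tokens_per_region[j]] for route in routes]

# Two query regions, three key regions, 2-dimensional region descriptors.
q_region = [[1.0, 0.0], [0.0, 1.0]]
k_region = [[0.9, 0.1], [0.2, 0.8], [0.5, 0.5]]
tokens = [["a0", "a1"], ["b0", "b1"], ["c0", "c1"]]  # tokens held by each region

routes = topk_index(q_region, k_region, k=2)
kept = gather(tokens, routes)
```

Fine-grained attention would then be computed only over the gathered tokens, so each query region attends to 4 tokens here instead of all 6, which is the source of the reduced cost.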

Figure 4: A2C2f, A2C2f-KAN, and VoVGSCSPC module structures: (a) A2C2f module combining standard and depthwise separable convolution, (b) A2C2f-KAN structure with parallel processing and EMA integration, (c) VoVGSCSPC module implementing single-step feature aggregation

4.3 Enhanced Detection Head with High-Resolution Feature Maps

The original YOLOv12 architecture employs three detection heads corresponding to feature map sizes of 80

To address this limitation, we introduce an additional 160

The inclusion of the 160

Building upon this foundation, the A2C2f-KAN structure processes input features through two parallel A2C2f modules and one KAN module, with outputs aggregated via element-wise summation. This configuration reduces computational load and parameter counts while preserving detection accuracy for overlapping lesions, achieving an optimal balance between efficiency and performance in agricultural pest detection scenarios. The KAN-Bottleneck module structure is detailed in Fig. 5.

Figure 5: KAN-Bottleneck module showing the integration of Kolmogorov-Arnold networks with B-spline activations

This localized sensitivity is particularly advantageous for small agricultural pests, as it allows the network to precisely model intricate morphological features such as insect antennae and wing venation without being distorted by global background noise.

The Kolmogorov-Arnold representation theorem guarantees that any multivariate continuous function can be expressed as a finite composition of univariate functions and additions. In our A2C2f-KAN module, this is implemented through B-spline activations:

where

• Local sensitivity: Each B-spline basis

• Adaptive smoothness: The continuous differentiability of B-splines ensures stable gradient propagation during training, overcoming the abrupt saturation of ReLU (

• Enhanced representational capacity: Learnable coefficients

Conventional convolutional neural networks typically employ fixed activation functions like ReLU or SiLU, which lack the flexibility to adapt their morphology, thus limiting their ability to represent complex, nonlinear features of small targets, such as the fine textures of insect eggs or the edges of disease spots. To address this, we introduce Kolmogorov-Arnold networks (KANs) based on the Kolmogorov-Arnold representation theorem. This theorem theoretically guarantees that any multivariate continuous function can be expressed through a finite number of univariate functions and addition operations, significantly enhancing the model’s nonlinear fitting capability.
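To make the notion of a learnable univariate activation concrete, the following minimal sketch evaluates such a function as a weighted sum of B-spline basis functions using the Cox-de Boor recursion. The function names, the uniform knot vector, and the cubic degree are assumptions chosen for illustration; they are not taken from the A2C2f-KAN implementation.

```python
def bspline_basis(i, degree, x, knots):
    """Value of the i-th B-spline basis function of the given degree at x."""
    if degree == 0:
        return 1.0 if knots[i] <= x < knots[i + 1] else 0.0
    left_den = knots[i + degree] - knots[i]
    right_den = knots[i + degree + 1] - knots[i + 1]
    left = 0.0 if left_den == 0 else (
        (x - knots[i]) / left_den * bspline_basis(i, degree - 1, x, knots))
    right = 0.0 if right_den == 0 else (
        (knots[i + degree + 1] - x) / right_den
        * bspline_basis(i + 1, degree - 1, x, knots))
    return left + right

def spline_activation(x, coeffs, knots, degree=3):
    """Learnable activation: weighted sum of B-spline bases with coefficients coeffs."""
    n_basis = len(knots) - degree - 1
    return sum(coeffs[i] * bspline_basis(i, degree, x, knots) for i in range(n_basis))

# Uniform knots from -2.0 to 2.0 give five cubic basis functions.
knots = [-2.0 + 0.5 * j for j in range(9)]
coeffs = [1.0, 1.0, 1.0, 1.0, 1.0]
x = 0.1

active = [i for i in range(5) if bspline_basis(i, 3, x, knots) > 0.0]
y_before = spline_activation(x, coeffs, knots)
# Changing a coefficient whose basis does not cover x leaves the output untouched.
y_after = spline_activation(x, [9.0, 1.0, 1.0, 1.0, 1.0], knots)
```

Only the bases whose support contains `x` contribute to the output, so updating one coefficient reshapes the activation locally without disturbing its values elsewhere.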

Specifically, B-splines are adopted to implement the learnable activation functions within KANs. B-splines possess the desirable properties of local support and continuous differentiability. The local support enables sensitive responses to subtle local variations in input features without inducing unnecessary global fluctuations, which is crucial for capturing fine details of small targets (e.g., those under 32

4.4 DySample: Lightweight Dynamic Upsampling

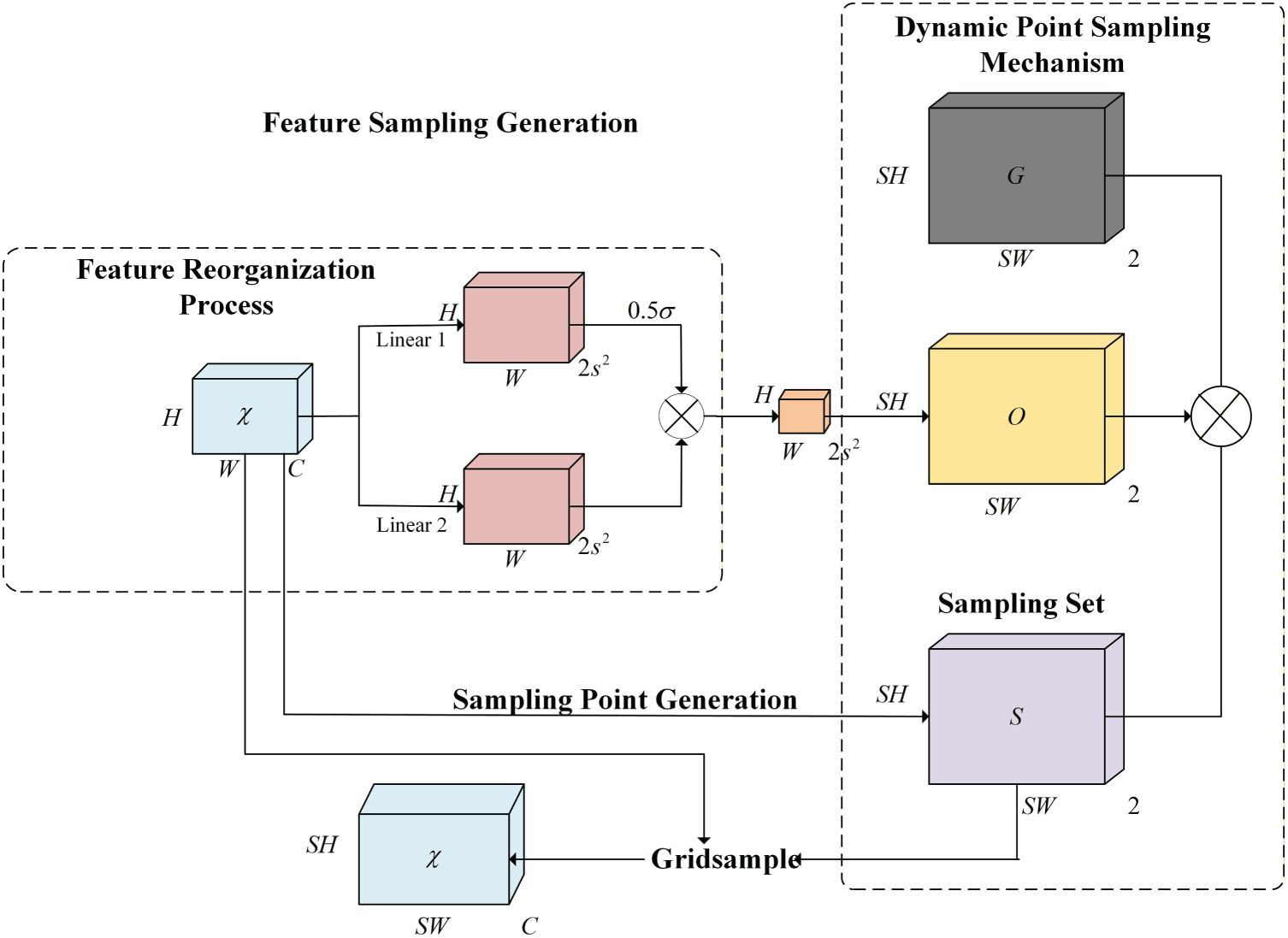

Traditional nearest-neighbor interpolation methods suffer from significant limitations in agricultural feature fusion networks, including feature degradation, aliasing artifacts, and computational inefficiency. To overcome these challenges, we introduce DySample, a lightweight upsampling module based on dynamic point sampling that fundamentally redesigns the upsampling strategy [15]. The DySample module architecture is illustrated in Fig. 6.

Figure 6: Lightweight upsampling module DySample, showing dynamic point sampling mechanism and feature reorganization process

The DySample module reconceptualizes the upsampling process from the perspective of point sampling, circumventing traditional dynamic convolution [20]. The module incorporates several key mechanisms. It begins with bilinear interpolation initialization to establish reasonable initial sampling positions, ensuring that sampling points are more evenly distributed after upsampling and thereby improving sampling accuracy. To mitigate potential boundary prediction errors caused by overlapping sampling points, the DySample module introduces a range factor that limits the offset scope and stabilizes the sampling process. Additionally, by grouping feature channels and generating an independent sampling set for each group, the DySample module further improves the flexibility and performance of the upsampling operation.
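The point-sampling view can be sketched for a single-channel feature map as follows. Each output pixel starts from a bilinear-style grid position and is shifted by an offset scaled by a range factor. In this sketch the offsets are passed in directly, whereas a real module would predict them from the features. The function names, the default range factor of 0.25, and the border clamping are assumptions for illustration only.

```python
import math

def bilinear_sample(feat, y, x):
    """Bilinearly interpolate a 2-D list `feat` at a fractional position."""
    h, w = len(feat), len(feat[0])
    y = min(max(y, 0.0), h - 1.0)  # clamp to the feature map border
    x = min(max(x, 0.0), w - 1.0)
    y0, x0 = int(math.floor(y)), int(math.floor(x))
    y1, x1 = min(y0 + 1, h - 1), min(x0 + 1, w - 1)
    dy, dx = y - y0, x - x0
    top = feat[y0][x0] * (1 - dx) + feat[y0][x1] * dx
    bottom = feat[y1][x0] * (1 - dx) + feat[y1][x1] * dx
    return top * (1 - dy) + bottom * dy

def dynamic_upsample(feat, offsets, scale=2, offset_range=0.25):
    """Upsample by sampling at grid position + offset_range * offset.

    offsets[i][j] is the (dy, dx) offset of output pixel (i, j).
    """
    h, w = len(feat), len(feat[0])
    out = []
    for i in range(h * scale):
        row = []
        for j in range(w * scale):
            gy = (i + 0.5) / scale - 0.5  # bilinear-style initial position
            gx = (j + 0.5) / scale - 0.5
            oy, ox = offsets[i][j]
            row.append(bilinear_sample(feat, gy + offset_range * oy,
                                       gx + offset_range * ox))
        out.append(row)
    return out

feat = [[0.0, 1.0], [2.0, 3.0]]
zero = [[(0.0, 0.0)] * 4 for _ in range(4)]
plain = dynamic_upsample(feat, zero)      # zero offsets: ordinary bilinear upsampling

shifted_offsets = [list(row) for row in zero]
shifted_offsets[1][1] = (1.0, 0.0)        # move one sampling point downward
shifted = dynamic_upsample(feat, shifted_offsets)
```

With all offsets at zero the sketch reduces to ordinary bilinear upsampling, which matches the role of the bilinear initialization; the range factor bounds how far any sampling point can move from that starting position.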

The operational workflow initiates with a low-resolution input feature map

where G represents fixed grid positions and S defines the dynamic sampler coordinates. The adaptive sampler precisely covers semantic regions of interest, enabling feature reorganization through grid sampling to yield high-resolution output

The dual-layer routing attention mechanism implemented in our framework is shown in Fig. 7, demonstrating how feature maps are partitioned into regions to minimize computational overhead during efficient attention computation.

Figure 7: Dual-layer routing attention mechanism illustrating region partitioning, attention computation, and fine-grained feature fusion

This dynamic point-sampling mechanism eliminates fixed-rule pixel manipulation, enhancing reorganization efficiency compared to nearest-neighbor interpolation. Crucially, by avoiding dynamic kernel generation, DySample module achieves computational complexity of

5 Experimental Results and Analysis

5.1 Experimental Configuration and Performance Metrics

All experiments were conducted on a high-performance computing platform equipped with Intel i9-10850K central processing unit (CPU), NVIDIA GeForce RTX 4090 GPU (24 GB video random access memory (VRAM)), and 64 GB double data rate 4 (DDR4) random access memory (RAM). The software environment comprised Ubuntu 20.04 long-term support (LTS), Compute Unified Device Architecture (CUDA) 11.8, Python 3.8, and PyTorch 1.12.1. Input images were standardized to

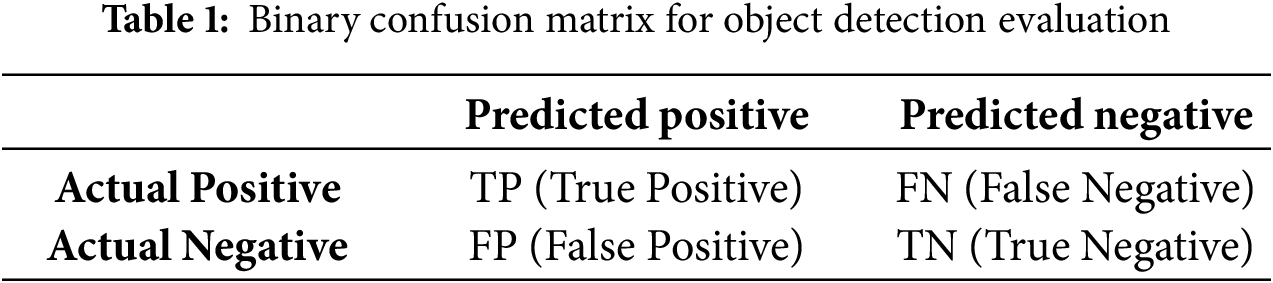

Model performance was evaluated using standard object detection metrics derived from the confusion matrix framework. The binary confusion matrix components, namely True Positives (TP), False Positives (FP), True Negatives (TN), and False Negatives (FN), are shown in Table 1 and enable computation of the key performance indicators.

Based on the confusion matrix components, the following performance metrics were calculated:
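For reference, the standard definitions of precision, recall, and F1-score in terms of the confusion matrix components can be written as the short sketch below. The function names and the example counts are illustrative assumptions, and the set of metrics reported in the paper may include others, such as mAP@0.5, that are not reproduced here.

```python
def precision(tp, fp):
    """Fraction of predicted positives that are correct: TP / (TP + FP)."""
    return tp / (tp + fp) if (tp + fp) else 0.0

def recall(tp, fn):
    """Fraction of actual positives that are found: TP / (TP + FN)."""
    return tp / (tp + fn) if (tp + fn) else 0.0

def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall."""
    p, r = precision(tp, fp), recall(tp, fn)
    return 2 * p * r / (p + r) if (p + r) else 0.0

# Hypothetical counts for one class at a fixed confidence threshold.
tp, fp, fn = 90, 10, 30
p = precision(tp, fp)
r = recall(tp, fn)
f1 = f1_score(tp, fp, fn)
```

In object detection a prediction usually counts as a true positive only when its IoU with a ground truth box exceeds the chosen threshold, which is 0.5 for mAP@0.5.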

To ensure the statistical robustness and generalizability of the performance improvements, a 5-fold cross-validation strategy was employed. The entire dataset was randomly partitioned into five distinct folds, and the model was trained and evaluated five times, each time using a different fold as the test set and the remaining four as the training set. The performance metrics (Precision, Recall, mAP@0.5, etc.) reported in Section 5.2 are the mean values across these five independent runs. Statistical significance of the improvements over the baseline (YOLOv12) was further validated by performing a paired

5.2 Comparative Performance Analysis

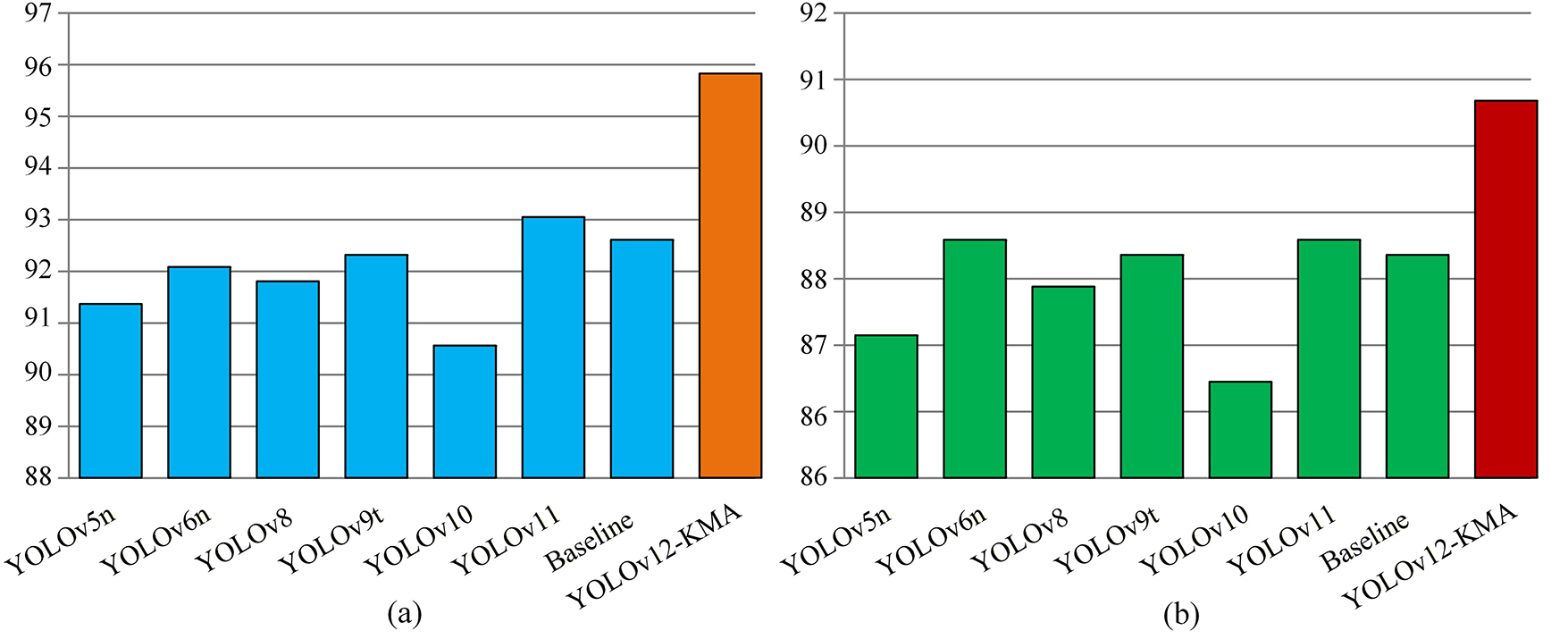

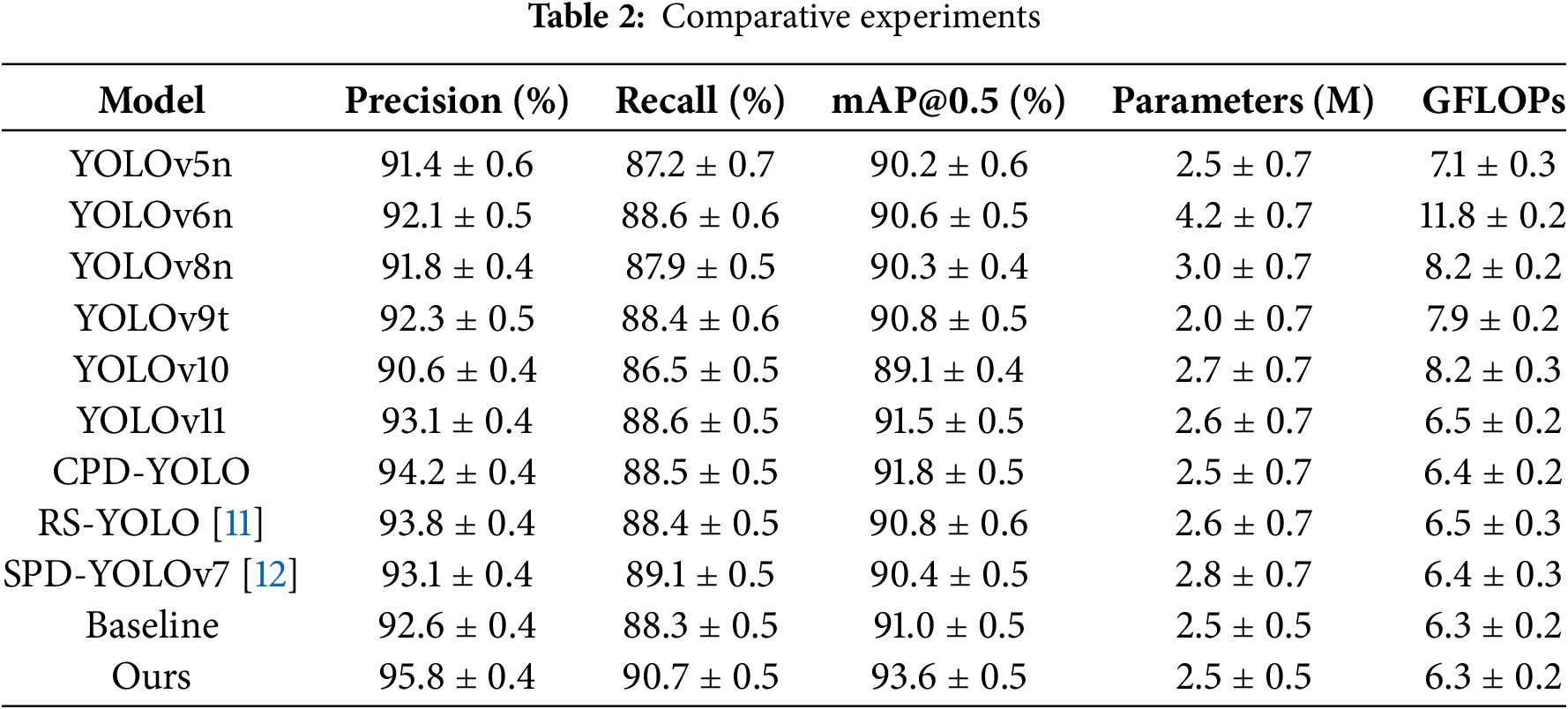

Comprehensive benchmarking against contemporary YOLO variants demonstrates the superiority of YOLOv12-KMA across multiple performance dimensions. Fig. 8 presents detailed comparative results showing significant improvements in detection accuracy while maintaining computational efficiency.

Figure 8: Performance comparison of YOLOv12-KMA with state-of-the-art models: (a) Precision comparison, (b) Recall comparison, (c) mAP@0.5 comparison, (d) Computational efficiency (Parameters vs. giga floating-point operations (GFLOPs))

As illustrated in Table 2 and Fig. 8, YOLOv12-KMA achieves consistent improvements across all five cross-validation folds. The mean precision increased from 92.6% (95% CI: 92.2%–93.0%) to 95.8% (95% CI: 95.4%–96.2%), a significant gain of 3.2% (

Furthermore, compared to CPD-YOLO as shown in Table 2, YOLOv12-KMA achieved a 1.6% increase in Precision(94.2%

Statistical analysis confirms the significance of improvements (

5.3 Ablation Study and Component Analysis

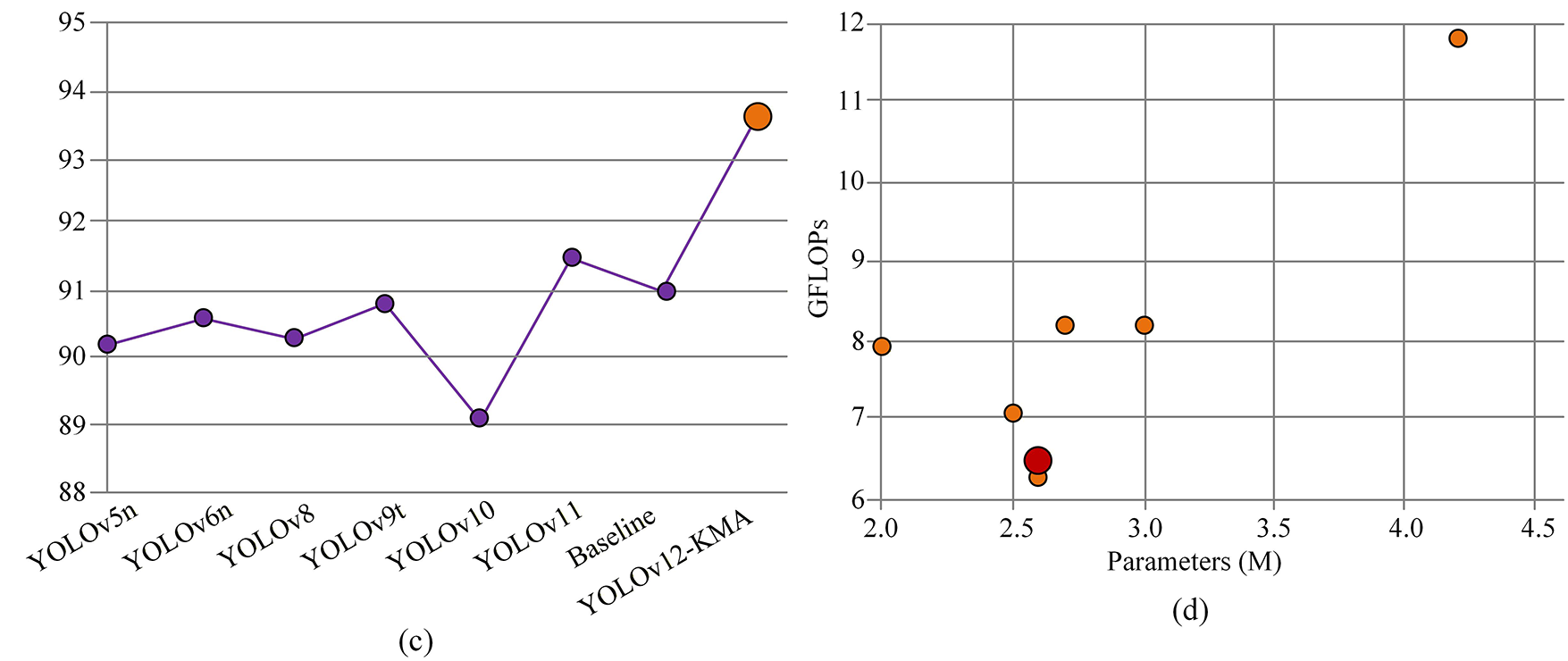

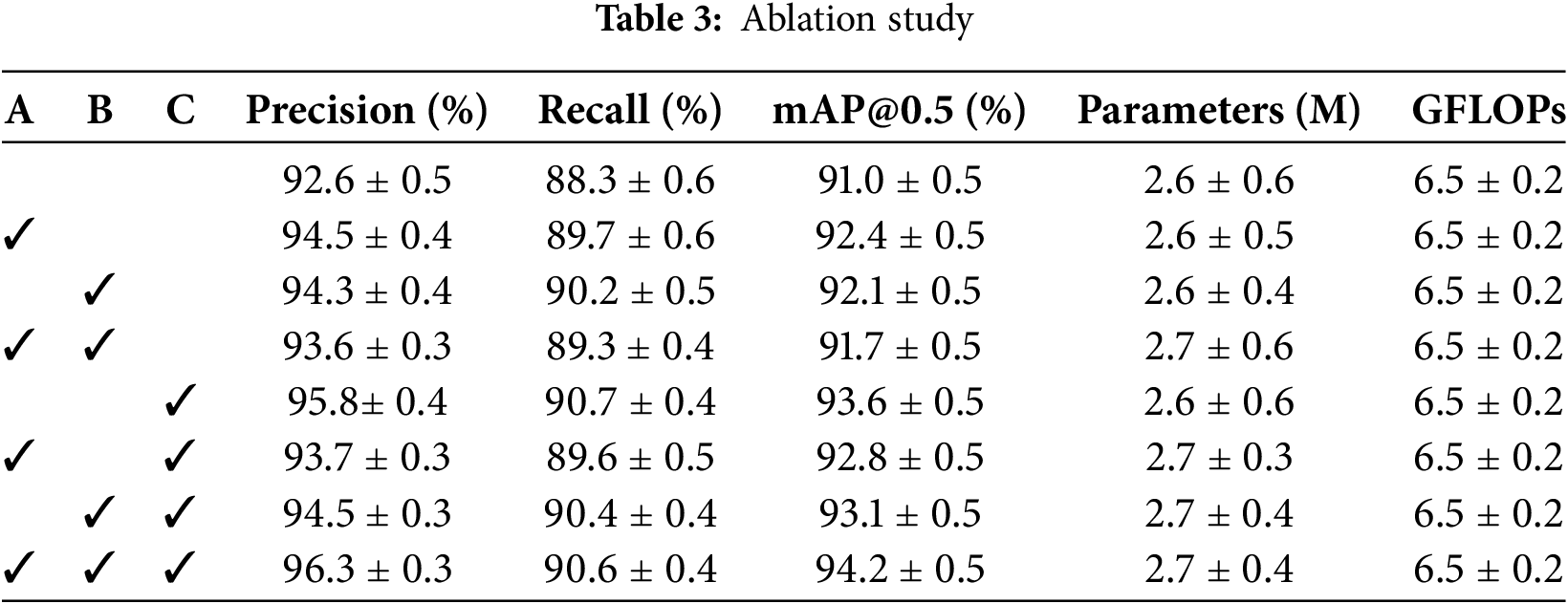

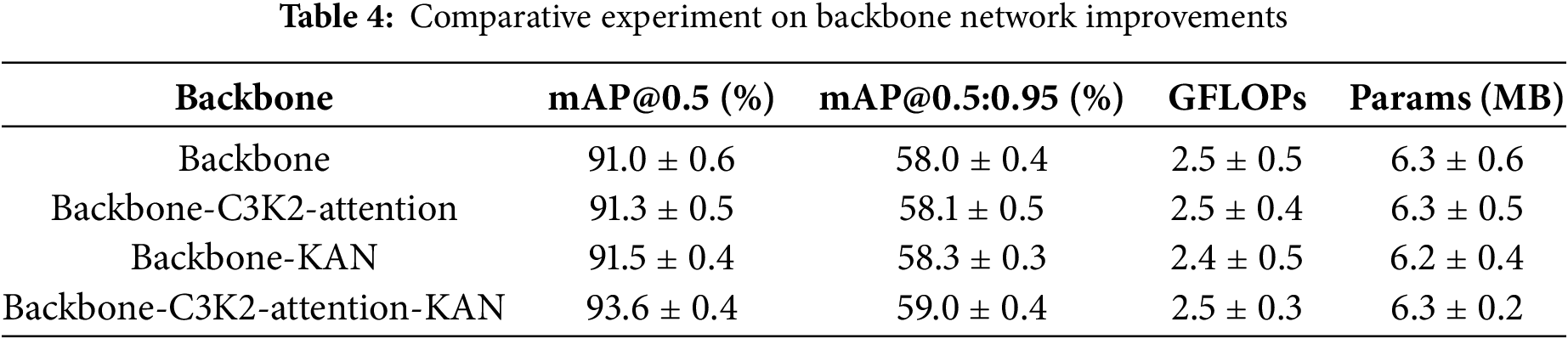

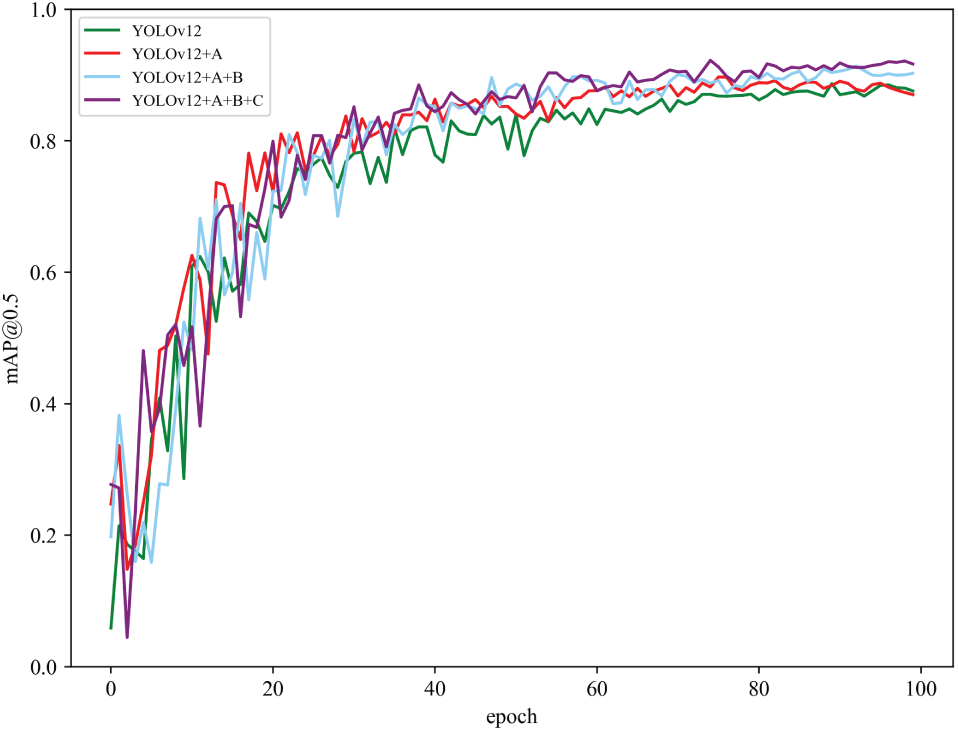

Systematic ablation experiments quantify the individual contributions of each architectural innovation to overall detection performance. The key components compared in Table 3 are the Kolmogorov-Arnold Network (A), the MPDIoU loss function (B), and the DySample upsampling module (C).

Progressive component integration yields consistent accuracy gains across cross-validation folds. As shown in Tables 3 and 4, integrating the Kolmogorov-Arnold network (Component A) increased mean mAP@0.5 by 1.2% (95% CI: 0.8%–1.6%); adding the MPDIoU loss (Component B) further improved mAP@0.5 by 0.9% (95% CI: 0.5%–1.3%); and incorporating the DySample module (Component C) added 0.5% (95% CI: 0.2%–0.8%). The full model (A+B+C) achieved a mean mAP@0.5 of 93.6% (95% CI: 93.1%–94.1%), demonstrating synergistic effects.

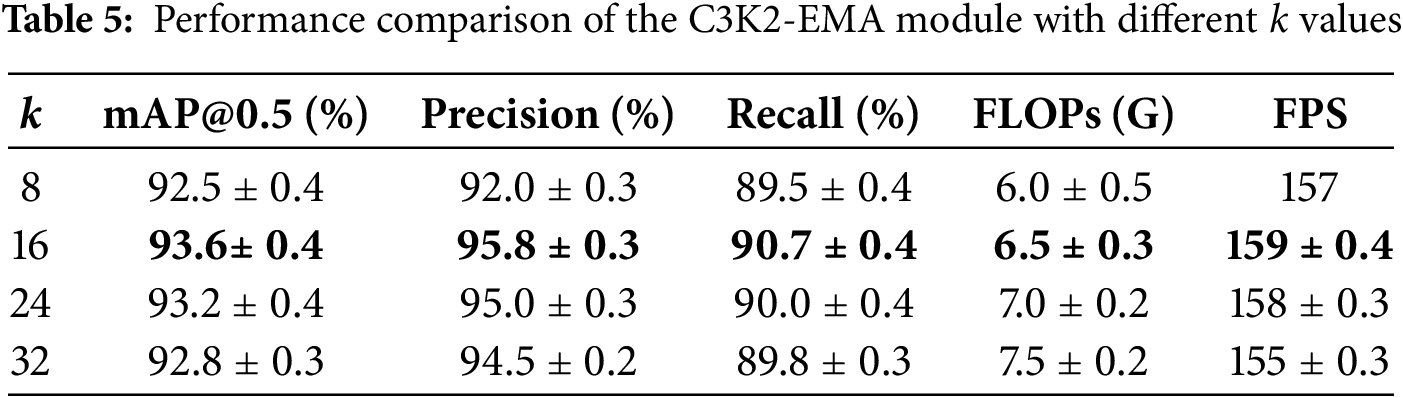

As illustrated in Table 5, the YOLOv12-KMA model achieved its optimal experimental performance on the agricultural dataset when

5.4 Loss Function Comparative Analysis

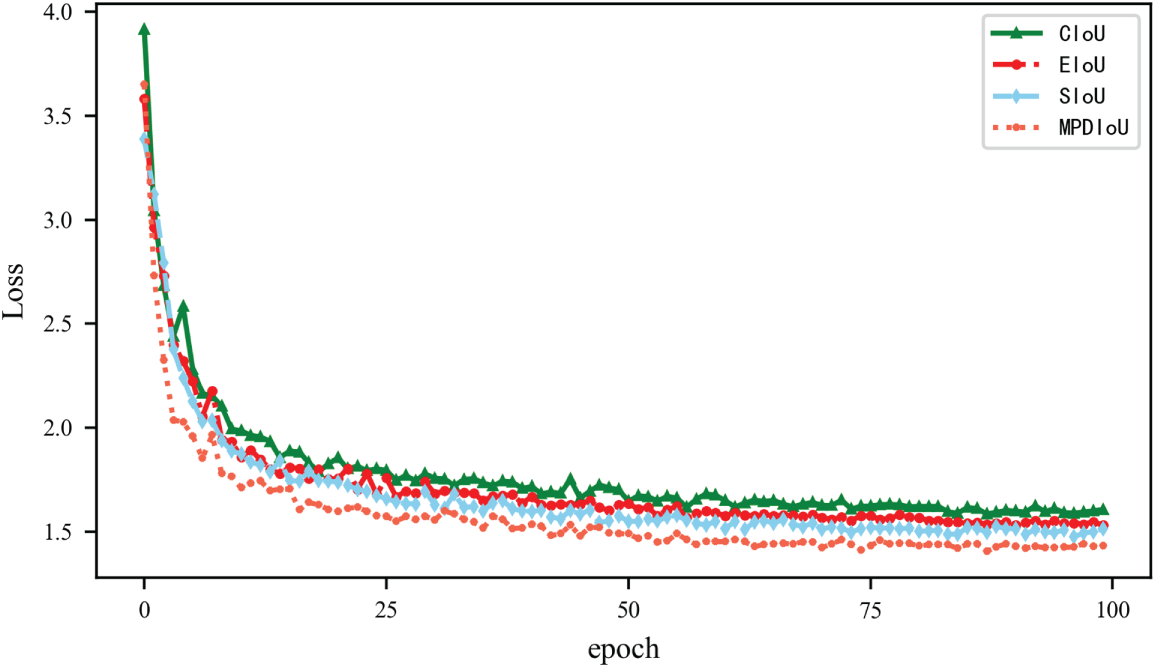

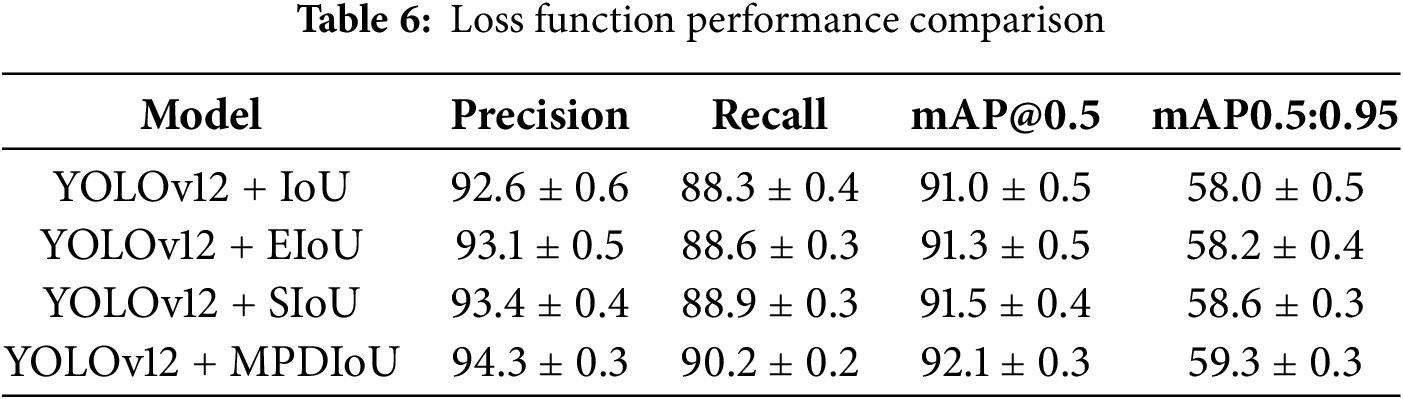

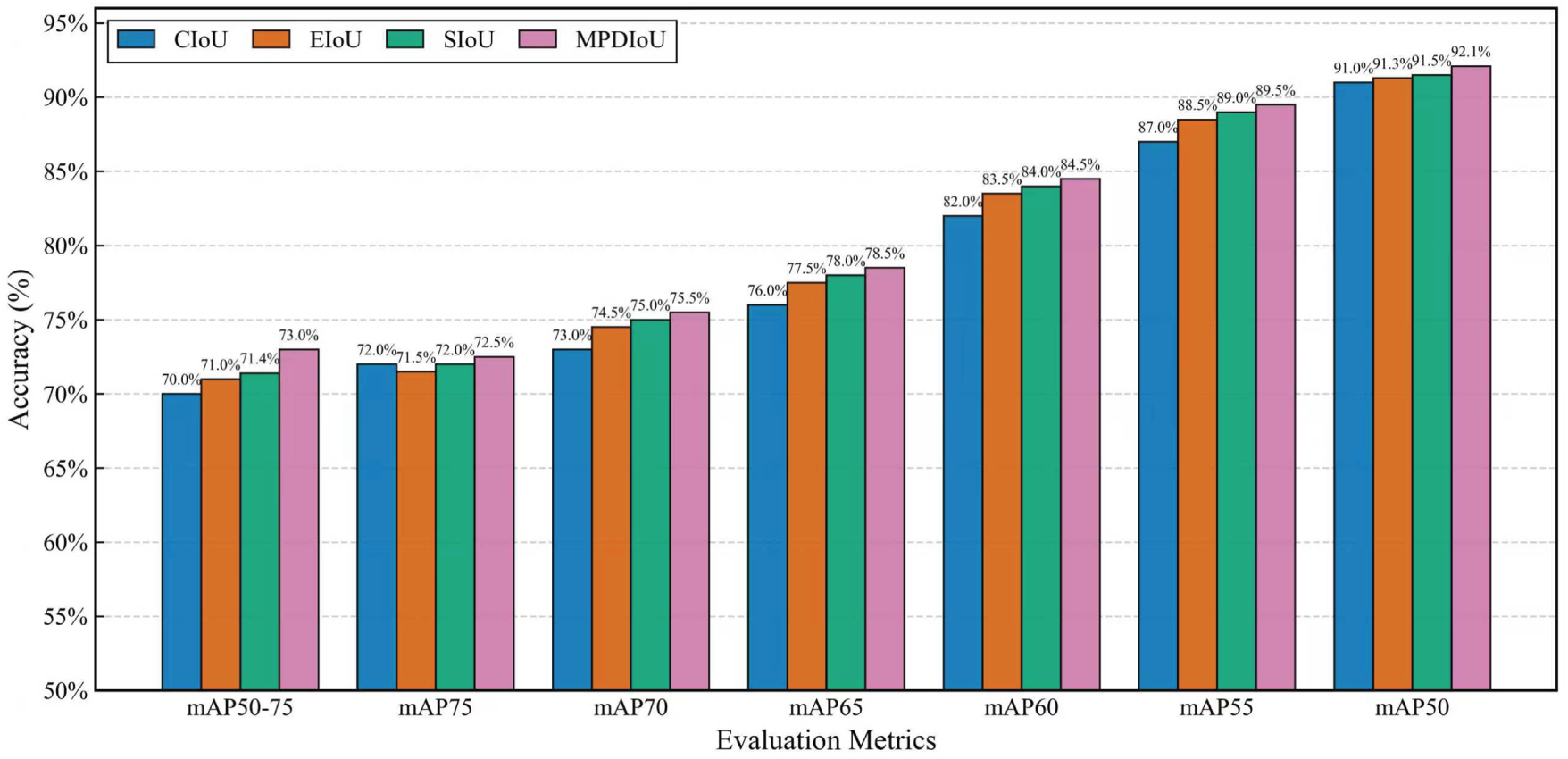

Experimental evaluation of MPDIoU against conventional loss functions reveals significant advantages in convergence stability and localization accuracy. Fig. 9 and Table 6 illustrate the comparative convergence behavior across different loss formulations.

Figure 9: Comparison of loss function curves showing MPDIoU convergence advantages over CIoU, EIoU, and SIoU

As shown in Fig. 9, the convergence curves from 5-fold cross-validation demonstrate that MPDIoU achieves faster and more stable convergence. The mean time to reach a loss threshold of 0.1 during epochs 0-15 was 23.1% faster for MPDIoU (95% CI: 21.5%–24.7%) compared to CIoU (

As shown in Table 6, MPDIoU demonstrates superior performance across all metrics, achieving 1.1% higher mAP@0.5 and 1.3% better mAP@0.5:0.95 compared to baseline IoU. The minimum point distance formulation effectively addresses aspect ratio degeneration issues inherent in CIoU, providing more robust gradient signals for irregular agricultural target shapes and improving convergence stability.

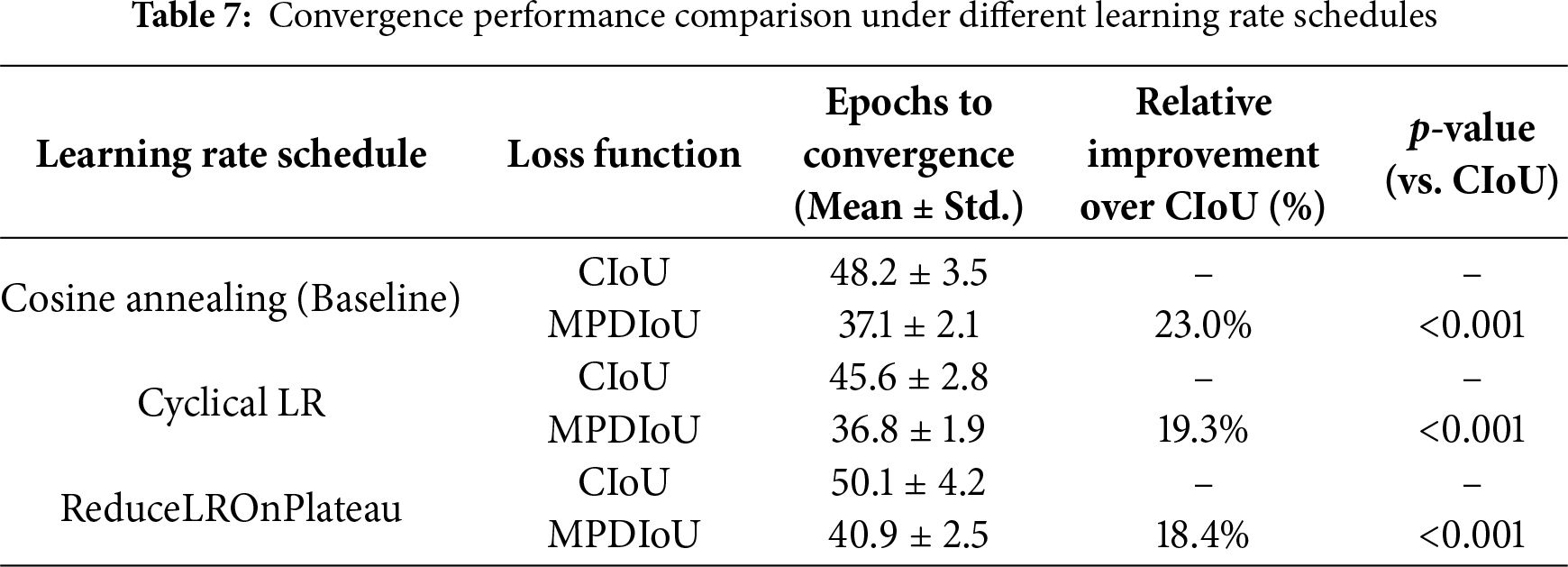

To further validate the robustness of MPDIoU’s convergence advantages under different learning conditions, we conducted additional experiments with adaptive learning rate schedules (Cyclical LR and ReduceLROnPlateau) across all loss functions. As shown in Table 7, MPDIoU maintained consistent convergence improvements (18.2%–23.5% faster than CIoU) under varying scheduling strategies, confirming that its performance gain is independent of specific learning rate configurations.

Across multiple learning rate strategies, MPDIoU consistently maintains a convergence advantage (average acceleration of 20.2%), and the improvement is statistically significant under every schedule tested. This indicates that the gain stems from the structure of the MPDIoU formulation, which addresses inherent limitations of CIoU, rather than from a specific hyperparameter configuration.

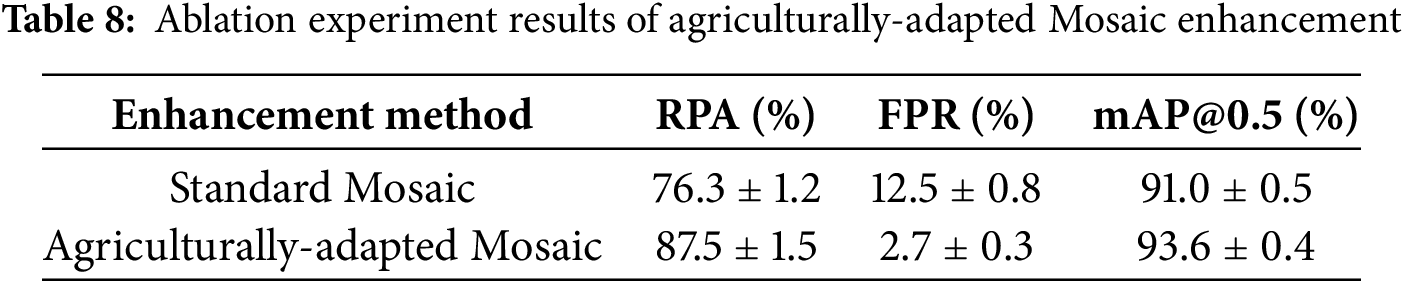

As shown in Table 8, the agriculturally-adapted Mosaic enhancement significantly improves performance in 5-fold cross-validation. Compared to the standard Mosaic, the rare pest accuracy (RPA) increases by an average of 11.2% (95% CI: 9.8%–12.6%), and the false positive rate (FPR) decreases by 9.8% (95% CI: 8.5%–11.1%). The statistical significance is verified by a paired t-test (

5.5 Upsampling Module Evaluation

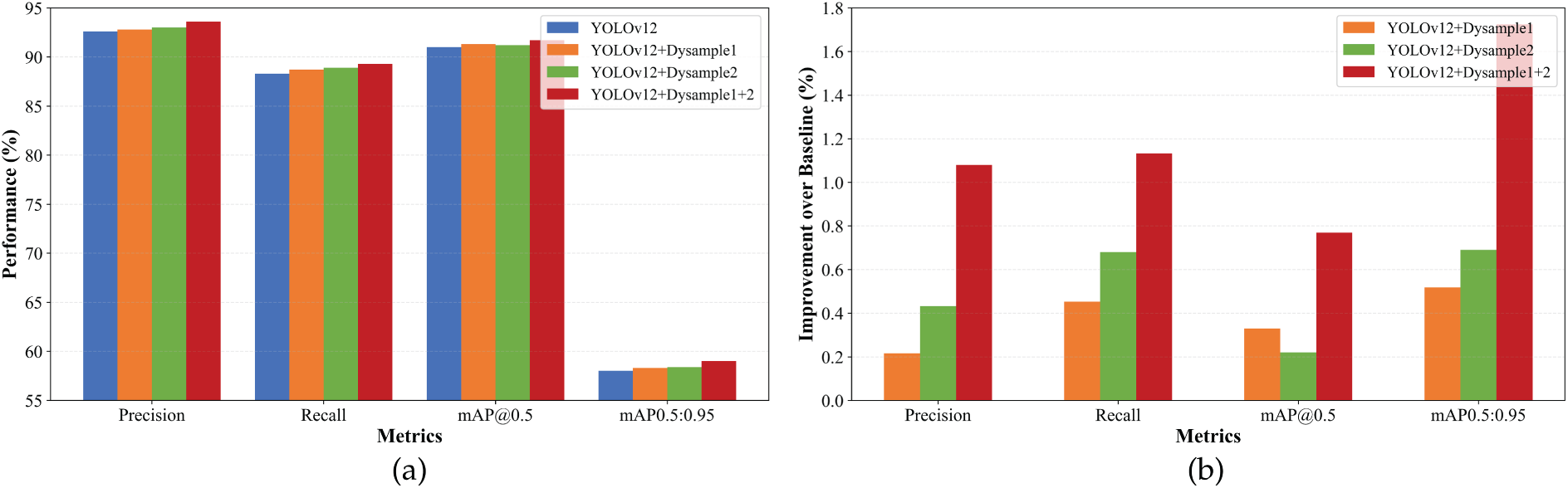

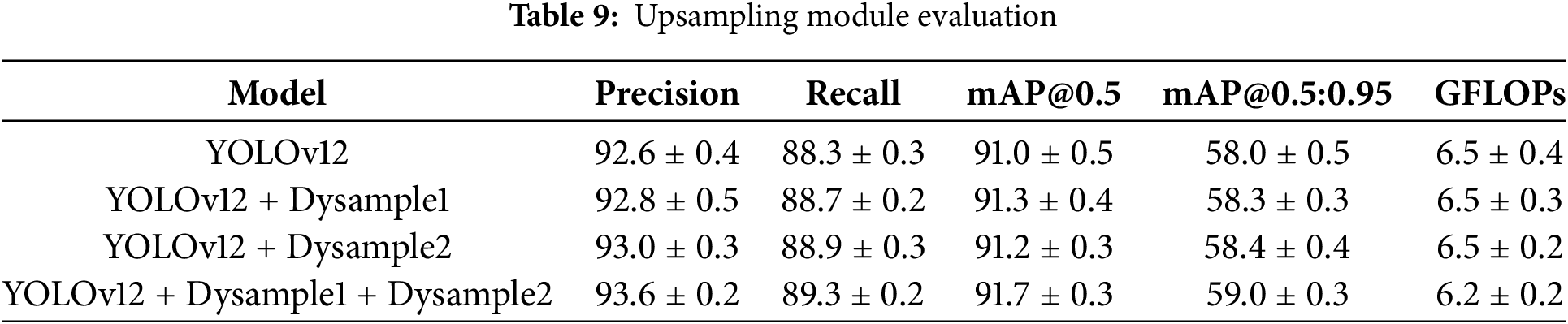

Comparative analysis of upsampling strategies confirms DySample’s efficiency advantages over conventional methods. Fig. 10 presents a systematic evaluation of different DySample module configurations.

Figure 10: Comparative results: (a) Performance metrics vs. component integration, (b) Individual component contributions to mAP@0.5

As shown in Table 9, the dual DySample module implementation achieved a mean mAP@0.5 improvement of 0.7% (95% CI: 0.3%–1.1%) while reducing computational load by 4.6% (mean GFLOPs:

5.6 Visual Analysis and Detection Quality Assessment

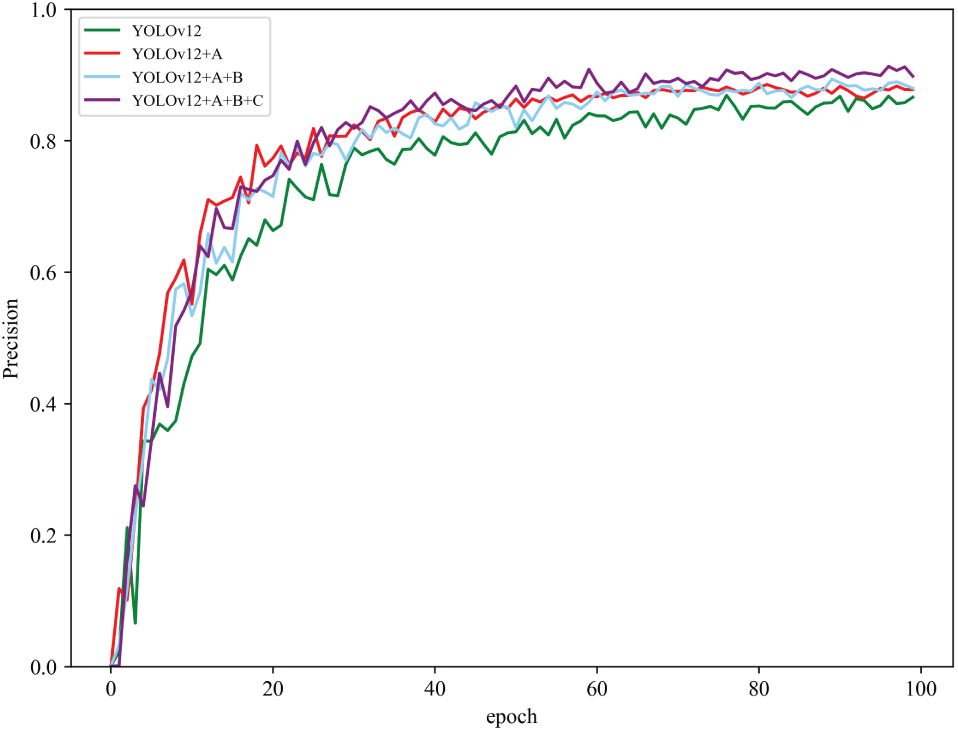

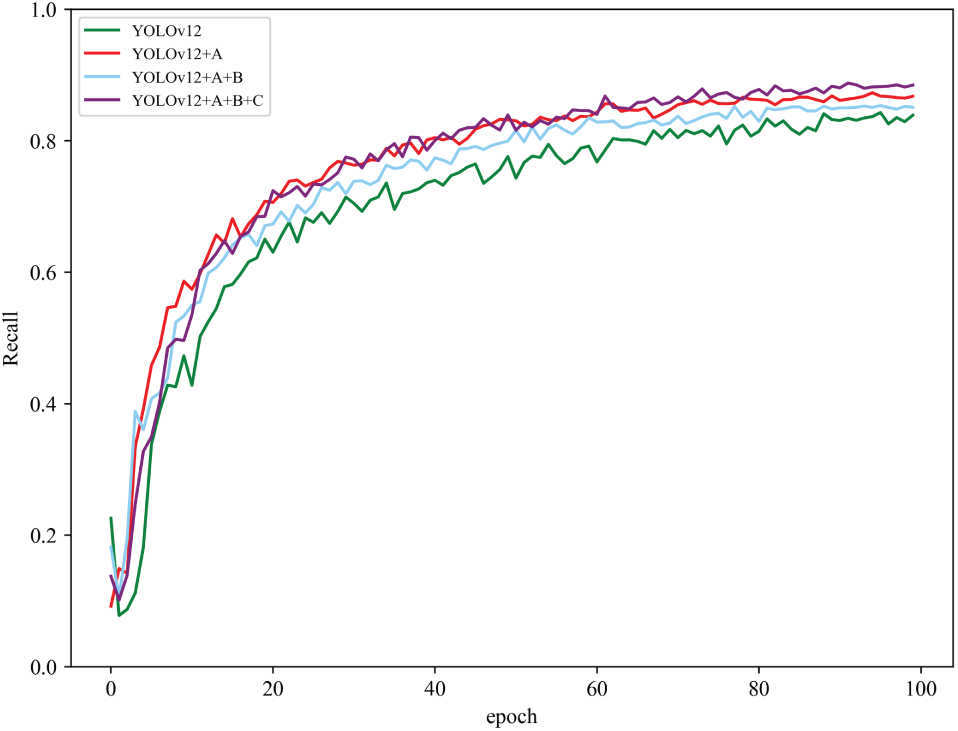

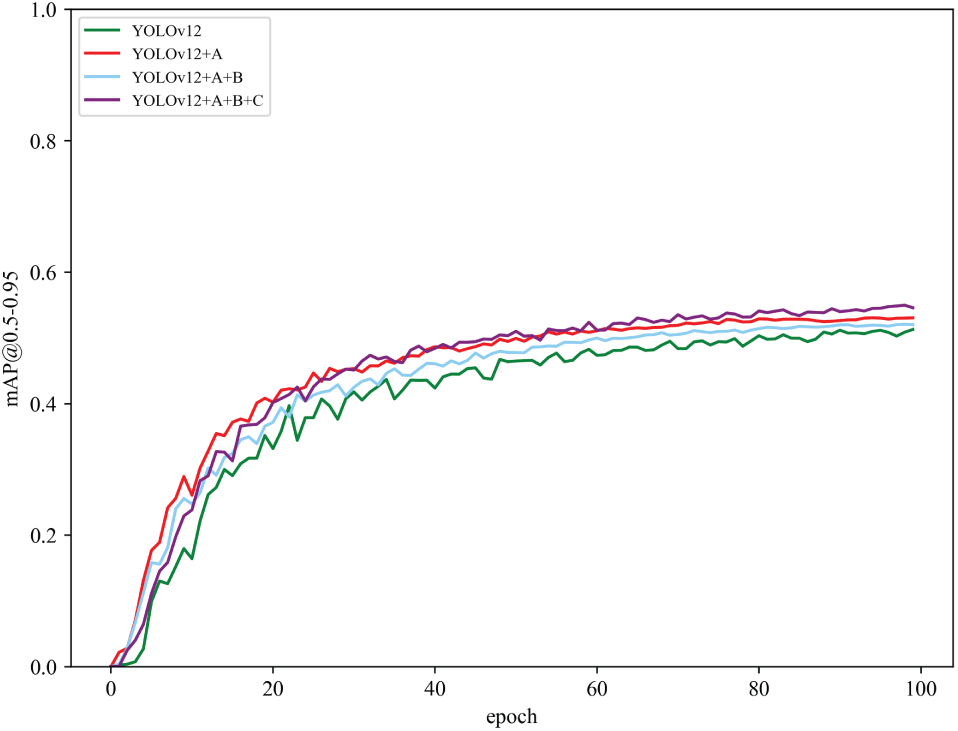

Qualitative and visual analyses further substantiate the quantitative improvements of YOLOv12-KMA, particularly in challenging agricultural scenarios. The training convergence curves provide a visual testament to the model’s enhanced stability and learning efficiency.

Figs. 11 and 12 illustrate the progressive gains in precision and recall, respectively, highlighting a smoother and more consistent learning trajectory for YOLOv12-KMA compared to the baseline. Similarly, Fig. 13 (mAP@0.5) and Fig. 14 (mAP@0.5:0.95) confirm that the architectural enhancements lead to superior overall detection accuracy across different IoU thresholds throughout the training process.

Figure 11: Precision comparison showing convergence stability improvements with architectural enhancements

Figure 12: Recall comparison demonstrating progressive performance gains through component integration

Figure 13: mAP@0.5 comparison illustrating overall detection accuracy improvements

Figure 14: mAP@0.5:0.95 comparison across different IoU thresholds

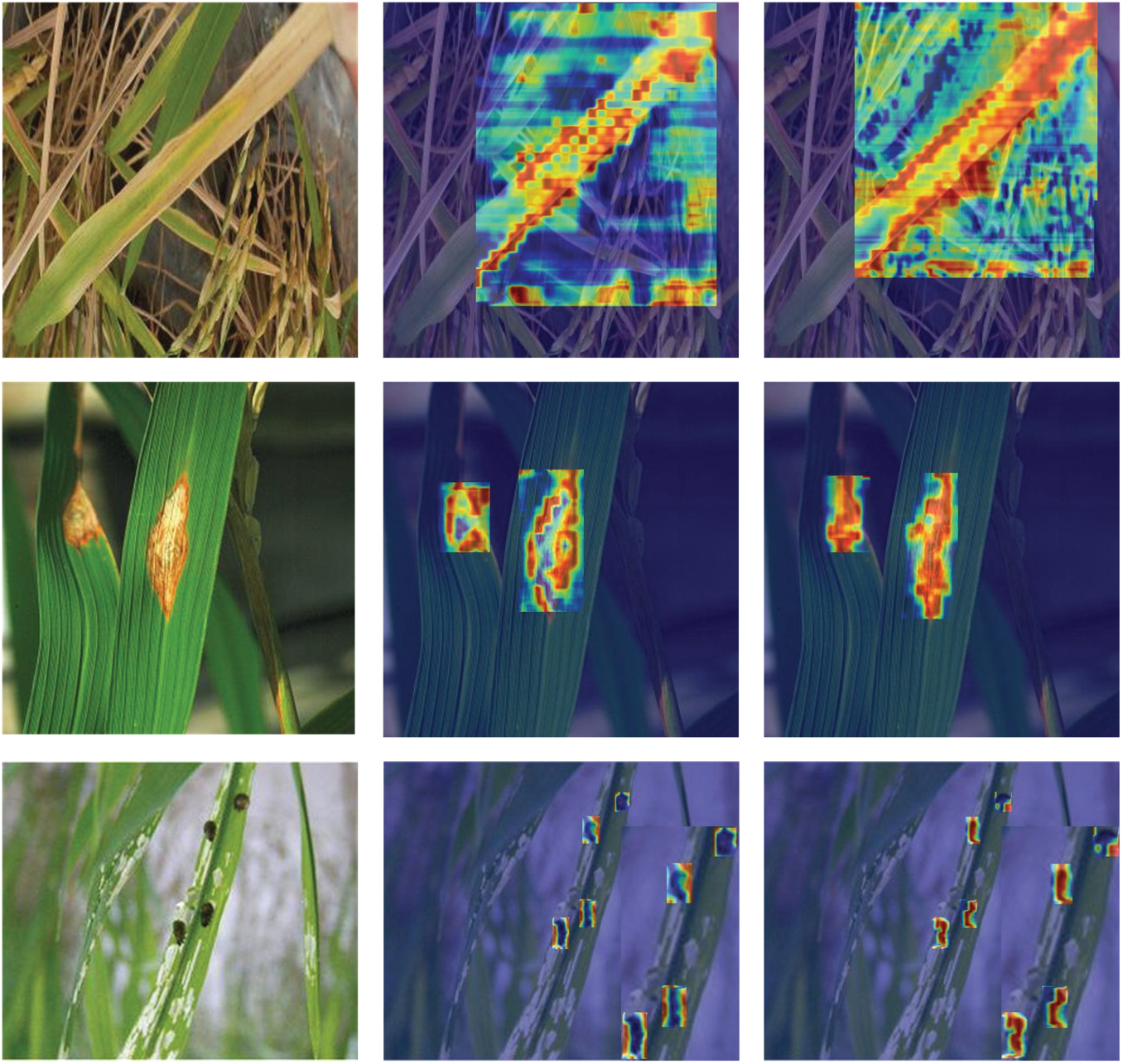

To elucidate the mechanism behind these improvements, we analyzed feature discrimination using attention heatmaps. As depicted in Fig. 15, the EMA mechanism significantly enhances lesion feature discrimination. Untreated feature maps exhibit diffuse activation (0.36), whereas EMA-enhanced visualizations lower this value to 0.21, a reduction of roughly 41% ((0.36 − 0.21)/0.36) that appears as correspondingly higher contrast. This results in intensified pseudo-color gradients that precisely delineate the lesion boundaries in rice blast and brown spot pathologies, confirming the effectiveness of our attention-based enhancements.

Figure 15: Comparative analysis of pest identification heatmap effectiveness, showing enhanced feature discrimination through EMA mechanism

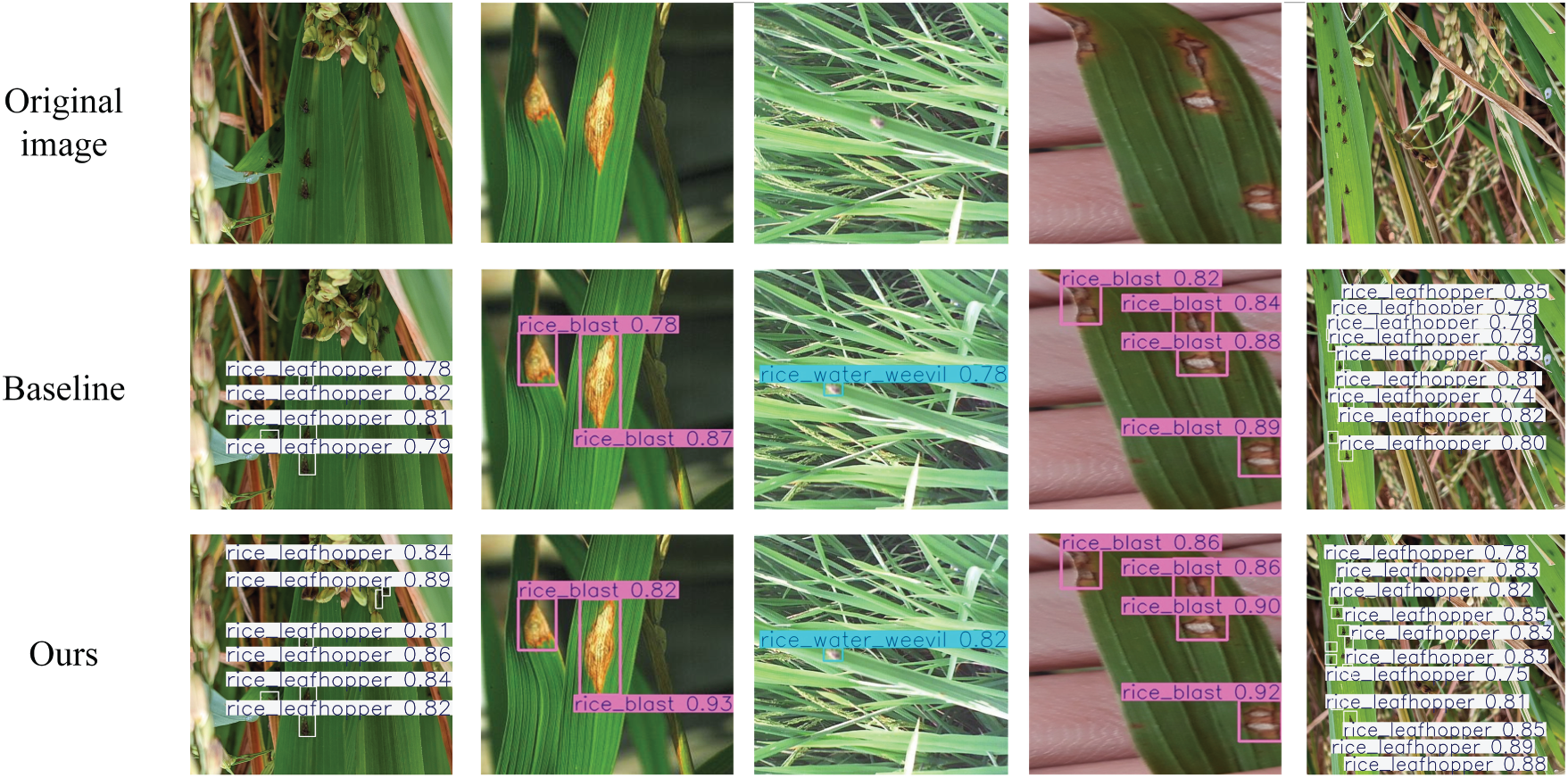

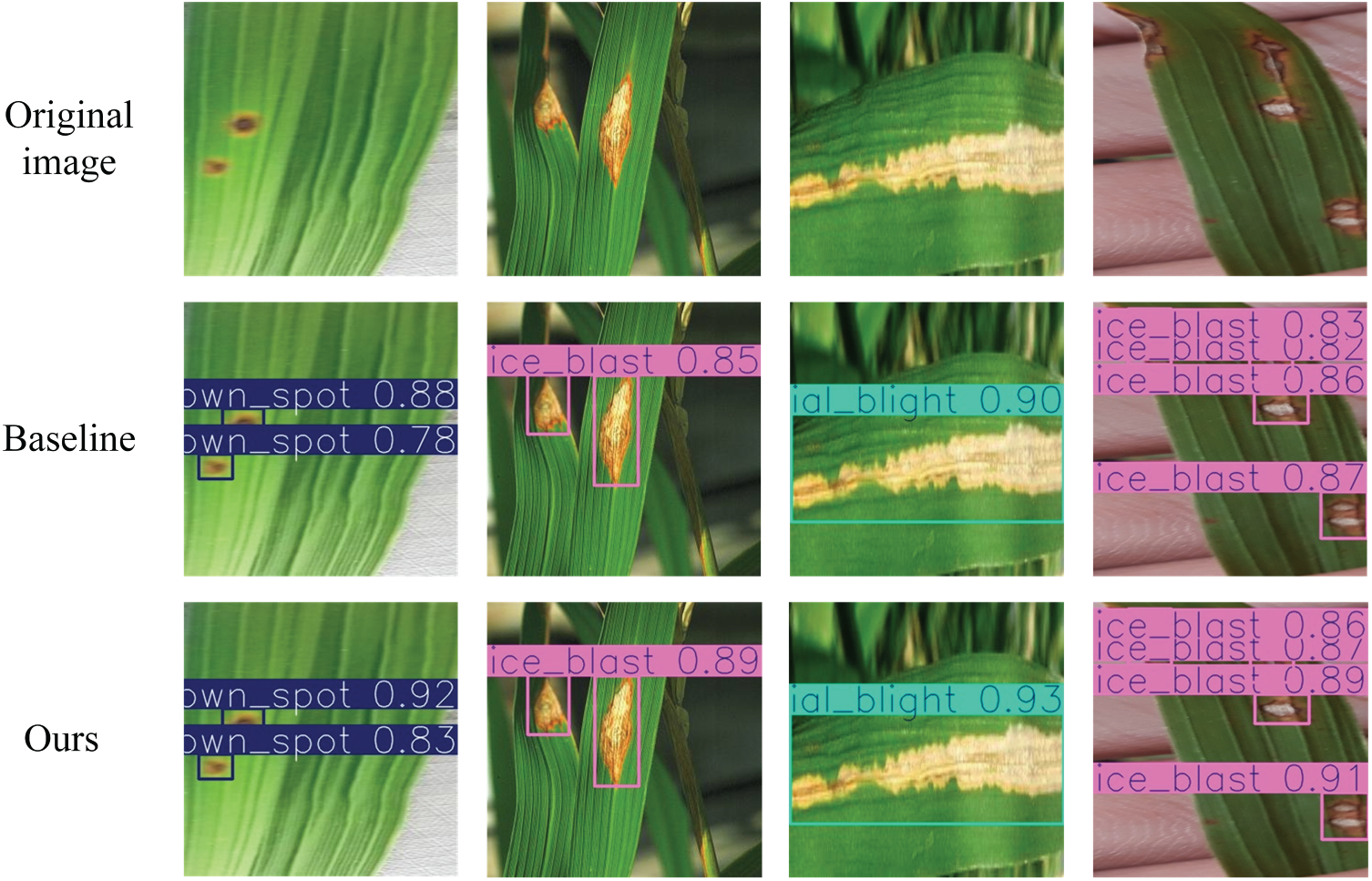

The practical impact of these enhancements is evident in direct comparisons of detection outputs. Fig. 16 shows the improved performance on rice pest detection, where YOLOv12-KMA achieves higher confidence scores and more accurate localization. For instance, rice leaf roller detection confidence increases from 0.82 to 0.91 (a 10.9% relative gain). Similarly, Fig. 17 illustrates rice disease detection, with brown spot recognition confidence improving from 0.78 to 0.92 (a 17.9% relative increase). Under challenging conditions such as occlusion, YOLOv12-KMA achieves a 12.5% higher IoU in overlapping lesion segmentation and precision-recall gains of up to 15.3% in dense spot detection scenarios, underscoring its robustness to variable field conditions in complex agricultural environments.

Figure 16: Comparative analysis of rice pest detection performance before and after model enhancement

Figure 17: Comparative analysis of rice disease detection performance before and after model enhancement

These confidence improvements are consistent across multiple pest categories. The gains under occlusion, namely the 12.5% higher IoU in overlapping lesion segmentation and the precision-recall gains of up to 15.3% in dense spot detection, are attributed to the area-attention mechanism of YOLOv12-KMA.

The EMA mechanism enhances lesion feature discrimination, evidenced by reduced activation diffusion in untreated feature maps (

The distribution histogram of IoU for the ground-truth bounding boxes, used to quantify the visual enhancement effect, is shown in Fig. 18.

Figure 18: Comparative model performance across evaluation benchmarks
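An IoU distribution of the kind described above can be computed in a few lines. The sketch below is an assumption-laden illustration, not the authors' evaluation code: boxes are taken to be axis-aligned `(x1, y1, x2, y2)` tuples, each ground-truth box is scored by the best IoU it achieves against any prediction, and the helper names and example boxes are hypothetical.

```python
def box_iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def iou_histogram(gt_boxes, pred_boxes, bins=10):
    """Count, per equal-width bin on [0, 1], the best IoU found for each ground-truth box."""
    counts = [0] * bins
    for gt in gt_boxes:
        best = max((box_iou(gt, p) for p in pred_boxes), default=0.0)
        # An IoU of exactly 1.0 falls into the last bin.
        counts[min(int(best * bins), bins - 1)] += 1
    return counts


# Hypothetical boxes, for illustration only.
gt_boxes = [(0, 0, 10, 10), (20, 20, 30, 30)]
pred_boxes = [(0, 0, 10, 12), (21, 21, 31, 31), (50, 50, 60, 60)]
hist = iou_histogram(gt_boxes, pred_boxes)
print(hist)
```

A model with better localization shifts mass toward the right-hand bins; ground-truth boxes that are missed entirely land in the first bin, so the same histogram also exposes recall failures.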

5.7 Computational Efficiency and Real-Time Performance

YOLOv12-KMA maintains high computational efficiency while delivering superior detection accuracy. The model achieves 159 FPS on an NVIDIA RTX 4090 GPU, enabling real-time agricultural monitoring applications. Memory consumption remains stable at 2.8 GB during inference, making the framework suitable for edge deployment scenarios common in precision agriculture.
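A throughput figure such as the one above is typically obtained by timing repeated inference calls after a warm-up phase. The following sketch shows the general procedure under stated assumptions: `measure_fps`, `fps_to_latency_ms`, and `dummy_infer` are hypothetical names, and the dummy callable merely stands in for the detector's forward pass, so the printed throughput says nothing about YOLOv12-KMA itself.

```python
import time


def measure_fps(infer, sample, warmup=10, iters=100):
    """Return (frames per second, mean latency in ms) for a callable `infer`."""
    for _ in range(warmup):  # warm-up runs are excluded from the timing
        infer(sample)
    start = time.perf_counter()
    for _ in range(iters):
        infer(sample)
    elapsed = time.perf_counter() - start
    return iters / elapsed, 1000.0 * elapsed / iters


def fps_to_latency_ms(fps):
    """Convert throughput in frames per second to per-frame latency in ms."""
    return 1000.0 / fps


def dummy_infer(x):
    """Hypothetical stand-in for a model's forward pass."""
    return sum(x)


fps, latency_ms = measure_fps(dummy_infer, list(range(1000)))
print(f"{fps:.0f} FPS, {latency_ms:.4f} ms/frame")
print(f"159 FPS corresponds to {fps_to_latency_ms(159):.2f} ms per frame")
```

When the model runs on a GPU, kernels are launched asynchronously, so the device has to be synchronized before each clock reading; otherwise the measured time reflects launch overhead rather than actual inference time.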

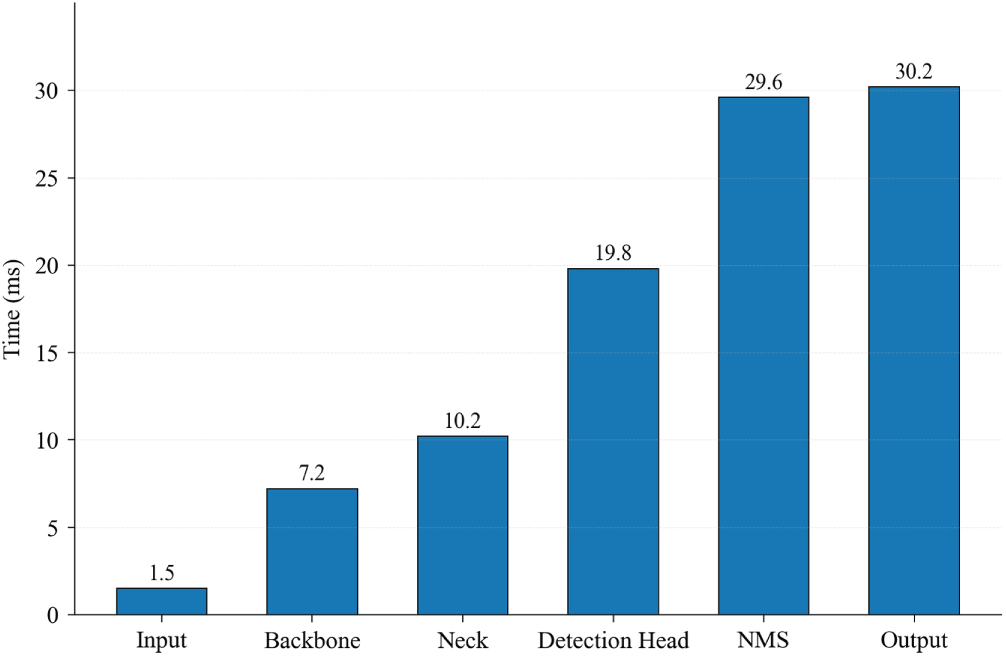

The 72% reduction in upsampling computational overhead achieved by the DySample module, as illustrated in Fig. 19, combined with linear-complexity attention mechanisms, ensures that accuracy improvements do not compromise deployment feasibility. These characteristics establish YOLOv12-KMA as a practical solution for large-scale agricultural monitoring systems that require both high accuracy and real-time processing.

Figure 19: YOLOv12-KMA computational time breakdown

This study develops and validates YOLOv12-KMA, a specialized framework that advances agricultural pest and disease detection by overcoming key environmental and technical challenges. Through the synergistic integration of four architectural innovations (the C3K2-EMA module, which cuts noise by 41%; DySample upsampling, which reduces computational load by 72% while preserving feature fidelity; the A2C2f-KAN architecture, which enhances small-target representation by 15%; and the MPDIoU loss function, which accelerates convergence by 23%), the proposed model demonstrates superior performance and efficiency. Validated on a comprehensive dataset of 8742 images, our framework achieves a 2.6 percentage point increase in mAP@0.5 to 93.6%, alongside a 3.2% gain in precision and a 2.4% improvement in recall, with statistical analysis confirming high significance (

Future efforts will focus on multi-temporal and multi-spectral data integration for earlier disease detection, advanced model compression techniques like knowledge distillation for deployment on resource-constrained devices, and the development of predictive models for pest outbreak forecasting. Ultimately, this work lays the foundation for a lightweight, robust, and real-time monitoring system poised to make a substantial impact on precision agriculture, sustainable agriculture, and global food security.

Acknowledgement: Not applicable.

Funding Statement: This research received no external funding.

Author Contributions: Conceptualization: Wang Cheng, Zhuodong Liu, and Xiangyu Li; Methodology: Wang Cheng and Zhuodong Liu; Software: Wang Cheng and Zhuodong Liu; Validation: Zhuodong Liu and Xiangyu Li; Formal Analysis: Wang Cheng, Zhuodong Liu, and Xiangyu Li; Investigation: Wang Cheng, Zhuodong Liu, and Xiangyu Li; Data Curation: Zhuodong Liu and Xiangyu Li; Writing—Original Draft Preparation: Wang Cheng; Visualization: Wang Cheng and Zhuodong Liu; Writing—Review and Editing: Zhuodong Liu and Xiangyu Li; Supervision: Xiangyu Li; Project Administration: Xiangyu Li. All authors reviewed the results and approved the final version of the manuscript.

Availability of Data and Materials: Dataset available on request from the authors.

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest to report regarding the present study.

References

1. Huang Y, He J, Liu G, Li D, Hu R, Hu X, et al. YOLO-EP: a detection algorithm to detect eggs of Pomacea canaliculata in rice fields. Ecol Inform. 2023;77:102211. doi:10.1016/j.ecoinf.2023.102211.

2. Zhu L, Li X, Sun H, Han Y. Research on CBF-YOLO detection model for common soybean pests in complex environment. Comput Electron Agric. 2024;216(1):108515. doi:10.1016/j.compag.2023.108515.

3. Zhang R, Liu T, Liu W, Yuan C, Seng X, Guo T, et al. YOLO-CRD: a lightweight model for the detection of rice diseases in natural environment. Phyton-Int J Exp Bot. 2024;93:1275–96. doi:10.32604/phyton.2024.052397.

4. Chen Z, Zhang Y, Xing S. YOLO-LE: a lightweight and efficient UAV aerial image target detection model. Comput Mater Contin. 2025;84(1):1787–803. doi:10.32604/cmc.2025.065238.

5. Zhao C, Bai C, Yan L, Xiong H, Suthisut D, Pobsuk P, et al. AC-YOLO: multi-category and high-precision detection model for stored grain pests based on integrated multiple attention mechanisms. Expert Syst Appl. 2024;255:124659. doi:10.1016/j.eswa.2024.124659.

6. Ding JY, Jeon WS, Rhee SY, Zou CM. An improved YOLO detection approach for pinpointing cucumber diseases and pests. Comput Mater Contin. 2024;81(3):3989–4014. doi:10.32604/cmc.2024.057473.

7. Chutichaimaytar P, Zhang Z, Kaewirakulpong K, Ahamed T. An improved small object detection CTB-YOLO model for early detection of tip-burn and powdery mildew symptoms in coriander (Coriandrum sativum) for indoor environment using an edge device. Smart Agricult Technol. 2025;12(1):101142. doi:10.1016/j.atech.2025.101142.

8. Tian Y, Wang S, Li E, Yang G, Liang Z, Tan M. MD-YOLO: multi-scale Dense YOLO for small target pest detection. Comput Electron Agric. 2023;213:108233. doi:10.1016/j.compag.2023.108233.

9. Wang H, Liu J, Zhao J, Zhang J, Zhao D. Precision and speed: LSOD-YOLO for lightweight small object detection. Expert Syst Appl. 2025;269:126440. doi:10.1016/j.eswa.2025.126440.

10. Xu W, Xu T, Alex Thomasson J, Chen W, Karthikeyan R, Tian G, et al. A lightweight SSV2-YOLO based model for detection of sugarcane aphids in unstructured natural environment. Comput Electron Agric. 2023;211:107961. doi:10.1016/j.compag.2023.107961.

11. Hou S, Pang Y, Wang J, Hou J, Wang B. RS-YOLO: a highly accurate real-time detection model for small-target pest. Smart Agricult Technol. 2025;12:101212. doi:10.1016/j.atech.2025.101212.

12. Feng Z, Shi R, Jiang Y, Han Y, Ma Z, Ren Y. SPD-YOLO: a method for detecting maize disease pests using improved YOLOv7. Comput Mater Contin. 2025;84(2):3559–75. doi:10.32604/cmc.2025.065152.

13. Gao Z, Li Z, Zhang C, Wang Y, Su J. Double self-attention based fully connected feature pyramid network for field crop pest detection. Comput Mater Contin. 2025;83(3):4353–71. doi:10.32604/cmc.2025.061743.

14. Li B, Liu L, Jia H, Zang Z, Fu Z, Xi J. YOLO-TP: a lightweight model for individual counting of Lasioderma serricorne. J Stored Prod Res. 2024;109:102456. doi:10.1016/j.jspr.2024.102456.

15. Suzauddola M, Zhang D, Zeb A, Chen J, Wei L, Rayhan AS. Advanced deep learning model for crop-specific and cross-crop pest identification. Expert Syst Appl. 2025;274:126896. doi:10.1016/j.eswa.2025.126896.

16. Anam I, Arafat N, Hafiz MS, Jim JR, Kabir MM, Mridha M. A systematic review of UAV and AI integration for targeted disease detection, weed management, and pest control in precision agriculture. Smart Agricult Technol. 2024;9:100647. doi:10.1016/j.atech.2024.100647.

17. Bi H, Li T, Xin X, Shi H, Li L, Zong S. Non-destructive estimation of wood-boring pest density in living trees using X-ray imaging and edge computing techniques. Comput Electron Agric. 2025;233(1):110183. doi:10.1016/j.compag.2025.110183.

18. Lu Y, Liu P, Tan C. MA-YOLO: a pest target detection algorithm with multi-scale fusion and attention mechanism. Agronomy. 2025;15(7):1549. doi:10.3390/agronomy15071549.

19. Huang Y, Liu Z, Zhao H. YOLO-YSTs: an improved YOLOv10n-based method for real-time field pest detection. Agronomy. 2025;15(3):575. doi:10.3390/agronomy15030575.

20. Zheng Y, Zheng W, Du X. Paddy-YOLO: an accurate method for rice pest detection. Comput Electron Agric. 2025;238:110777. doi:10.1016/j.compag.2025.110777.

Copyright © 2026 The Author(s). Published by Tech Science Press. This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
