Open Access

ARTICLE

Safety-Aware Reinforcement Learning for Self-Healing Intrusion Detection in 5G-Enabled IoT Networks

1 College of Computer Science, Informatics and Computer Systems Department, King Khalid University, Abha, Saudi Arabia

2 Department of Information Systems, College of Computer and Information Sciences, Princess Nourah bint Abdulrahman University, P.O. Box 84428, Riyadh, Saudi Arabia

3 Computer Science and Engineering Department, Yanbu Industrial College, Royal Commission for Jubail and Yanbu, Yanbu, Saudi Arabia

4 Blockpass ID Lab, Edinburgh Napier University, Edinburgh, UK

5 Department of Information Technology, Cybersecurity and Computer Science, The American College of Greece, Athens, Greece

* Corresponding Author: Nikolaos Pitropakis. Email:

Computers, Materials & Continua 2026, 87(2), 88 https://doi.org/10.32604/cmc.2026.074274

Received 07 October 2025; Accepted 20 January 2026; Issue published 12 March 2026

Abstract

The expansion of 5G-enabled Internet of Things (IoT) networks, while enabling transformative applications, significantly increases the attack surface and necessitates security solutions that extend beyond traditional intrusion detection. Existing intrusion detection systems (IDSs) mainly operate in an open-loop manner, excelling at classification but lacking the ability for autonomous, safety-aware remediation. This gap is particularly critical in 5G environments, where manual intervention is too slow and naive automation can lead to severe service disruptions. To address this issue, we propose a novel Self-Healing Intrusion Detection System (SH-IDS) framework that develops a closed-loop cyber defense mechanism. The main technical contribution is the integration of a deep neural network-based threat detector, which offers uncertainty-quantified predictions, with a safety-aware reinforcement learning (RL) engine formulated as a Constrained Markov Decision Process (CMDP). The CMDP explicitly models operational safety as cost constraints, and a new runtime safety shield actively adjusts any unsafe action proposed by the RL agent to the nearest safe alternative, ensuring operational integrity. Additionally, we introduce a composite utility function for the comprehensive evaluation of the system. Empirical analysis on the 5G-NIDD dataset demonstrates the superior performance of our framework: the detector achieves

Keywords

The rapid deployment of 5G networks is reshaping the Internet of Things (IoT) ecosystem by enabling a broad spectrum of applications, including industrial automation, smart cities, and connected healthcare [1,2]. The distinctive features of 5G, including ultra-reliable low-latency communication (URLLC) and massive machine-type communication (mMTC), offer unprecedented connectivity and real-time data exchange [3,4]. However, this expanded and heterogeneous environment also enlarges the attack surface, exposing critical IoT infrastructures to increasingly sophisticated cyber threats [5].

Conventional Intrusion Detection Systems (IDSs), although fundamental to network defense, are not adequately equipped to secure modern, highly dynamic 5G-enabled IoT environments [6,7]. Anomaly-based systems offer some adaptability; however, they often exhibit high false-positive rates and lack the contextual awareness needed for reliable threat interpretation [8]. Moreover, traditional IDSs operate in a reactive, open-loop manner, focusing primarily on detection and alerting while leaving mitigation and remediation to human operators [9]. In 5G settings where latency requirements are measured in milliseconds, any reliance on manual intervention results in unacceptable delays that allow attacks to evolve, propagate, or disrupt services before appropriate countermeasures can be executed [10].

Although recent intrusion detection studies designed for 5G networks have made notable progress in data preprocessing, feature engineering, and the use of advanced deep learning models, the vast majority remain detection-oriented [11]. These systems can identify malicious behavior, but they provide no mechanism for autonomously and safely translating detections into timely corrective actions, a capability crucial to latency-sensitive 5G-IoT infrastructures [12]. Consequently, even high-performing IDS solutions continue to rely on manual or semi-automated responses, which introduce delays and increase the likelihood that threats will propagate [13]. Existing automation efforts also rarely include explicit operational safety constraints, which raises the risk of overly disruptive remediation actions, such as isolating critical nodes in response to minor anomalies [14]. These limitations reveal a substantial gap in the current literature and emphasize the need for a practical self-healing IDS that integrates high-accuracy detection with intelligent, safety-aware remediation while maintaining both effectiveness and service continuity.

To address these limitations, we propose a Self-Healing Intrusion Detection System (SH-IDS), a closed-loop cyber defense framework that combines advanced threat detection with safe, autonomous remediation. The system works in two stages. First, a deep neural network trained on the complexities of 5G network traffic functions as the detection module, delivering real-time, high-accuracy classification of intrusions. Second, the identified threat is sent to a Safe Reinforcement Learning (SRL) decision-making engine, structured as a Constrained Markov Decision Process (CMDP) [15]. The SRL agent learns an optimal policy that maps detected threats to specific healing actions, such as isolating compromised nodes, reducing malicious traffic, or patching vulnerable services, while adhering to explicit safety constraints that penalize disruptive responses. This guarantees both effective and stable autonomous operation.

The primary contributions of this article are summarized below:

1. A closed-loop self-healing intrusion detection architecture is introduced, integrating high-accuracy deep learning–based threat detection with autonomous remediation. This unified design contrasts with traditional IDS approaches that operate exclusively as detection mechanisms and lack integrated, continuous defense loops suitable for real-time 5G-IoT environments.

2. A safety-aware decision-making framework based on a Constrained Markov Decision Process (CMDP) is formulated, enabling reinforcement learning [16] to generate remediation strategies that simultaneously consider mitigation effectiveness and operational safety. This formulation addresses a notable limitation in existing IDS research, where automated responses rarely incorporate explicit safety constraints [16].

3. A runtime safety shield is incorporated to guarantee safety-compliant action selection during both training and deployment. The shield monitors proposed remediation actions, identifies those that violate predefined safety conditions, and projects them onto the nearest safe alternatives. Such mandatory runtime enforcement is absent from current IDS and automated defense systems, which typically lack mechanisms to prevent operationally disruptive actions.

4. A detailed architectural integration of uncertainty-aware deep neural network detection, CMDP-driven safe reinforcement learning, and runtime safety shielding is presented. This combination of calibrated uncertainty estimation, constrained optimization, and safety-enforced action correction distinguishes the proposed SH-IDS from existing schemes that remain detection-centric, do not incorporate prediction uncertainty into decision making, or employ automated responses without verifiable safety guarantees.

5. The proposed framework achieves

The remainder of the article is organized as follows. Section 2 presents an overview of the related studies. Section 3 describes the proposed architecture. Section 4 presents the experimental methodology and a discussion of the results. Section 5 concludes the research.

The increasing deployment of 5G networks, which support critical infrastructure from smart grids to industrial IoT, has heightened the focus on robust cybersecurity measures. The unique design of 5G, defined by network softwarization, network slicing, and the growth of endpoints, creates a broad and complex attack surface. As a result, IDSs have adapted to these new challenges, with recent research using advanced artificial intelligence (AI) and machine learning (ML) techniques. This section reviews current IDS research specifically targeting 5G environments, emphasizing progress toward more adaptive, efficient, and specialized solutions.

Rani et al. [17] proposed a new Target Projection Regressed Gradient Convolutional Neural Network (TPRGCNN) for smart grid security, combining feature selection and classification to achieve high detection accuracy while reducing computational load. Similarly, Gurushanker et al. [18] emphasized the need for 5G-specific IDS by testing ML models on both TCP/IP flow data and Packet Forwarding Control Protocol (PFCP) signaling data, achieving high accuracy with TCP/IP data and highlighting the difficulty of detecting control-plane-specific attacks. These studies show a clear trend toward customizing detection models to suit the unique data features of 5G networks.

Recognizing the evolving threat landscape, several studies have added mechanisms for adaptability and robustness. Neha and Bhatia [19] presented an IDS framework using dynamic neural networks and adversarial training, enabling incremental learning to detect new attacks and resist data poisoning, a key vulnerability in systems that learn continuously. Building on robustness, Reis [20] introduced a hybrid AI-driven framework combining autoencoders, LSTMs, and CNNs to detect a broader range of spatial and temporal anomalies. This work also incorporated federated learning and edge AI, addressing scalability and data privacy issues in distributed smart city environments. These approaches represent a major advancement beyond static, signature-based detection.

Further specialization of the 5G core network has been a key area of innovation. Radoglou-Grammatikis et al. [21] developed 5GCIDS, an IDS specifically designed to protect the N4 interface between the Session Management Function (SMF) and User Plane Function (UPF). Their contribution includes a PFCP flow statistics generator and the integration of explainable AI (XAI) via TreeSHAP, providing crucial transparency for security analysts. In the area of decentralized and resource-efficient learning, Adjewa et al. [22] explored the use of an optimized BERT model within a federated learning framework. Their work demonstrates the viability of large language models for intrusion detection on resource-constrained edge devices while maintaining data privacy and achieving high accuracy even after model compression. Mahmood et al. [23] applied different machine learning algorithms to 5G intrusion detection, finding that a Linear Regression algorithm could achieve high accuracy. This highlights that even traditional models can be effective for specific tasks in 5G security.

While the surveyed literature provides essential building blocks, including specialized feature engineering, adversarial robustness, hybrid model architectures, privacy-preserving federated learning, and core-network-specific detection, a significant gap remains. Most of these studies are primarily detection-focused. They excel at identifying malicious activity but lack an integrated, autonomous, and safety-aware remediation mechanism. Common limitations include the absence of end-to-end evaluations that address detection and mitigation together, insufficient consideration of the operational costs of automated responses, and a lack of explicit constraints to prevent service-disruptive actions. Naive automation, without formal safety guarantees, can cause serious service issues, such as the unnecessary isolation of critical nodes.

Our work directly bridges this gap by proposing a closed-loop SH-IDS. We integrate a high-accuracy deep neural network (DNN) detector, trained on the 5G-NIDD dataset, with a safety-aware reinforcement learning engine designed as a Constrained Markov Decision Process. This is complemented by a runtime safety shield. This combined approach ensures that effective threat mitigation always adheres to explicit operational safety constraints, making the system not only highly effective in detection but also trustworthy and cautious in autonomous actions.

3 The Proposed Self-Healing Intrusion Detection System

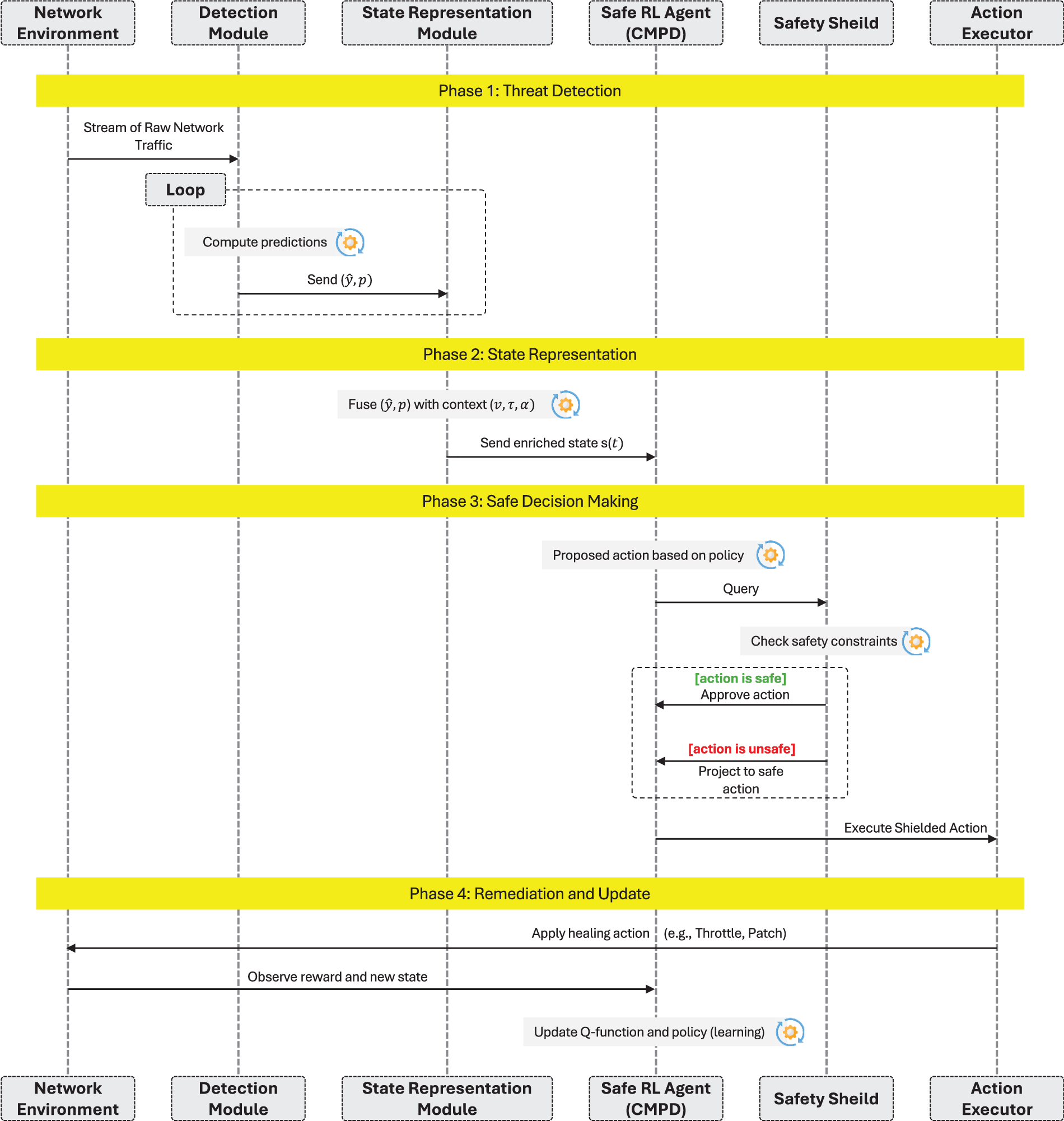

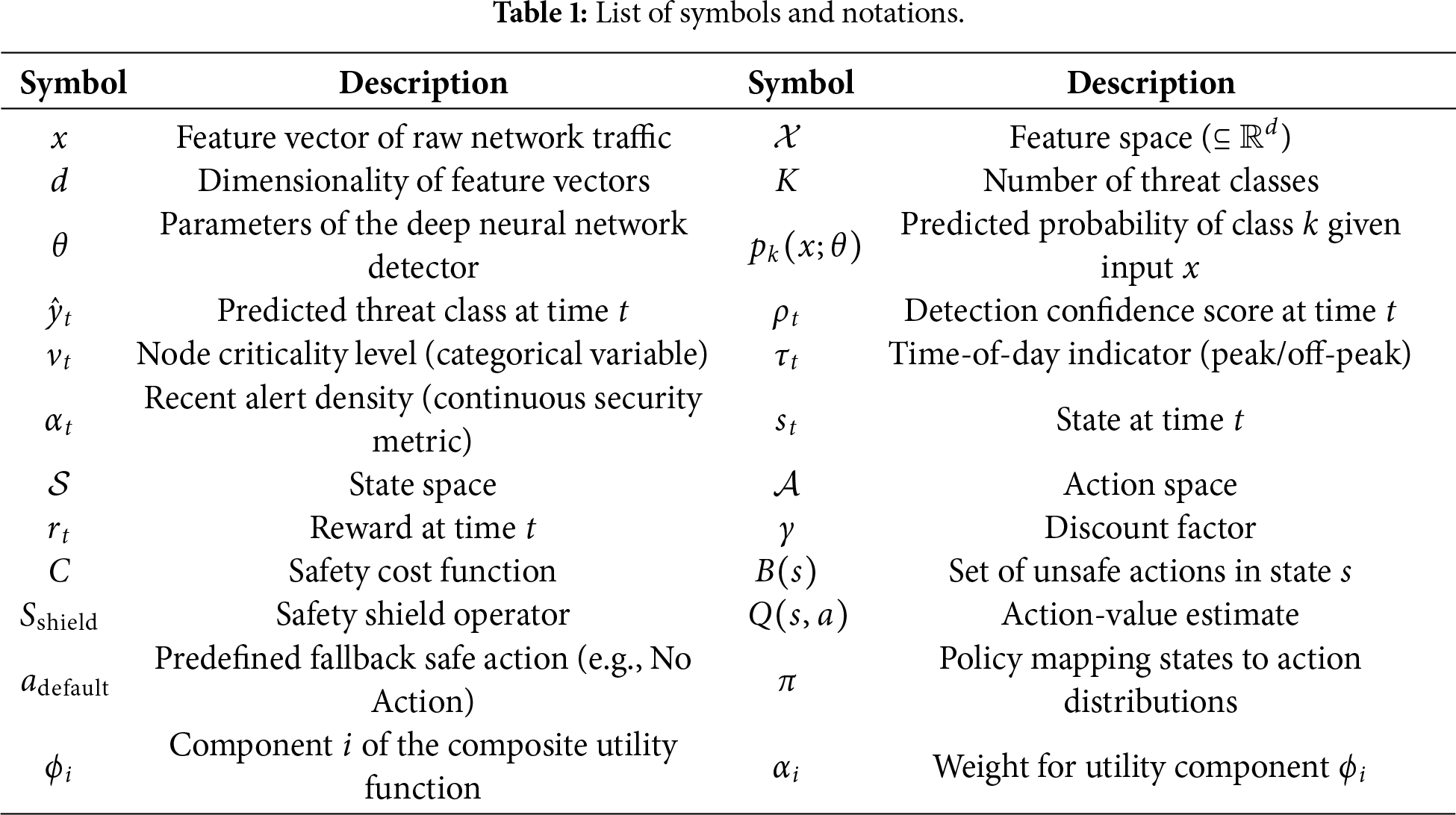

This section presents the mathematical foundation and operational workflow of the proposed SH-IDS, a closed-loop cyber defense framework that integrates high-precision threat detection with safe autonomous remediation for 5G-IoT networks. Fig. 1 shows the workflow of the framework, and Table 1 lists the notation used in this section.

Figure 1: Workflow of the proposed SH-IDS architecture.

3.1 System Overview and Operational Workflow

The SH-IDS operates through four integrated phases that form a complete cyber defense loop:

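1. Threat Detection & Confidence Estimation: Raw network traffic and telemetry data, represented as feature vectors, are classified by the deep neural network detector, which outputs a predicted threat class together with a confidence score.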

2. State Representation & Context Enrichment: The detector’s outputs (predicted labels and confidence scores) are fused with real-time contextual information about the network environment. This enriched state representation provides a comprehensive view of the current security situation and operational context.

3. Safe Autonomous Decision-Making: A Safety-Aware Reinforcement Learning agent, formulated as a Constrained Markov Decision Process, selects optimal remediation actions. A runtime safety shield intercepts each proposed action and enforces operational constraints by projecting unsafe actions to safe alternatives.

4. Remediation Execution & System Update: Approved healing actions are executed in the network environment, which transitions to a new state. The agent receives reward signals based on the effectiveness and safety of the taken action, completing the feedback loop for continuous learning.

This closed-loop architecture enables the system to autonomously adapt to evolving threats while maintaining operational stability through explicit safety constraints.

3.2 Threat Detection with Uncertainty Quantification

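Raw network traffic and telemetry data are represented as feature vectors

$$\mathcal{X} = \{\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_N\},$$

where each $\mathbf{x}_i \in \mathbb{R}^d$ is passed to the deep neural network detector $f_\theta$, which outputs a softmax distribution $p_\theta(k \mid \mathbf{x}_i)$ over the $K$ traffic classes.

The predicted threat class is determined through maximum a posteriori estimation:

$$\hat{y}_i = \arg\max_{k \in \{1, \ldots, K\}} p_\theta(k \mid \mathbf{x}_i)$$

Beyond classification, the module quantifies prediction confidence as the maximum softmax probability:

$$c_i = \max_{k \in \{1, \ldots, K\}} p_\theta(k \mid \mathbf{x}_i)$$

This confidence measure is crucial for downstream safety-aware decision making, particularly in handling uncertain detections. The detector is trained by minimizing the categorical cross-entropy loss over labeled training data:

$$\mathcal{L}(\theta) = -\frac{1}{N} \sum_{i=1}^{N} \sum_{k=1}^{K} y_{i,k} \log p_\theta(k \mid \mathbf{x}_i),$$

where $y_{i,k}$ is the one-hot encoding of the true label of sample $i$.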
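The three quantities described in this subsection (the maximum a posteriori class, the maximum-softmax confidence, and the categorical cross-entropy loss) can be illustrated with a minimal NumPy sketch. The logits, labels, and function names below are illustrative assumptions and not the authors' implementation.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax, subtracting the row maximum for numerical stability."""
    z = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def predict_with_confidence(logits):
    """Return (MAP class index, maximum softmax probability) for each sample."""
    probs = softmax(logits)
    return probs.argmax(axis=1), probs.max(axis=1)

def cross_entropy(logits, labels):
    """Mean categorical cross-entropy for integer class labels."""
    probs = softmax(logits)
    picked = probs[np.arange(len(labels)), labels]
    return float(-np.log(picked + 1e-12).mean())

# Hypothetical detector outputs for two flows and three classes.
logits = np.array([[2.0, 0.5, 0.1],
                   [0.2, 0.1, 3.0]])
labels = np.array([0, 2])

classes, confidence = predict_with_confidence(logits)
loss = cross_entropy(logits, labels)
print(classes, confidence, loss)
```

Because the confidence is just the largest softmax entry, it costs nothing extra at inference time, which is one reason it is a convenient input for the downstream decision engine.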

3.3 Enriched State Representation

The reinforcement learning agent operates on a comprehensive state representation that extends beyond simple threat classification to incorporate detection confidence and operational context [16]. This enriched state enables context-aware remediation policies that consider both the security situation and network operational status.

The state at time

where the components are:

•

•

•

•

•

This multi-faceted state representation allows the agent to make nuanced decisions, such as avoiding aggressive remediation on critical nodes during peak hours or adjusting response strategies based on detection confidence levels.

3.4 Constrained Markov Decision Process Formulation

The core decision-making process is formally modeled as a Constrained Markov Decision Process (CMDP) to optimize threat mitigation while simultaneously respecting operational safety constraints. The CMDP is defined as:

where S is the state space, A the action space, T the transition dynamics,

3.4.1 Reward Function with Uncertainty-Aware Expectation

The reward mechanism explicitly accounts for detection uncertainty to prevent overreaction to potentially false positives. The immediate reward is computed as an expectation over possible true states, weighted by the detector’s confidence distribution:

For an action

where

The reward function decomposes into three components:

where
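The uncertainty-weighted expectation described in this subsection can be sketched as follows. The class list, the "Rate Limit" action, and every entry of the reward table are hypothetical values chosen only to show the mechanics; the actual reward components of SH-IDS are not reproduced here.

```python
import numpy as np

CLASSES = ["Benign", "DoS", "Scan"]                     # hypothetical label set
ACTIONS = ["No Action", "Rate Limit", "Isolate Node"]   # hypothetical action set

# Hypothetical table: REWARD[k, a] is the reward of action a when the true class is k.
REWARD = np.array([
    [ 1.0, -1.0, -5.0],   # true class Benign
    [-4.0,  2.0,  3.0],   # true class DoS
    [-2.0,  2.0,  0.5],   # true class Scan
])

def expected_reward(class_probs, action_idx):
    """Expectation of the reward over the detector's class distribution."""
    return float(np.dot(class_probs, REWARD[:, action_idx]))

def best_action(class_probs):
    """Action with the highest expected reward under the given distribution."""
    scores = [expected_reward(class_probs, a) for a in range(len(ACTIONS))]
    return ACTIONS[int(np.argmax(scores))]

confident = np.array([0.02, 0.95, 0.03])   # detector is sure the flow is DoS
uncertain = np.array([0.50, 0.45, 0.05])   # detector is torn between Benign and DoS

print(best_action(confident), best_action(uncertain))
```

With these illustrative numbers, isolation is preferred only when the detector is confident, while an uncertain detection leads to the milder action, which is the overreaction-avoiding behavior the expectation is meant to produce.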

3.4.2 Safety Shield with Fallback Guarantee

The safety shield constitutes a runtime enforcement mechanism that guarantees operational constraints are never violated. Define the set of unsafe actions in state

The shielded action operator ensures safe action selection:

where
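A minimal sketch of such a shield is given below. It assumes that actions are ordered from least to most disruptive, that "nearest" means closest in that ordering, that the only unsafe case is isolating a critical node, and that "No Action" is the always-safe fallback. All of these are illustrative assumptions rather than the rule set used in SH-IDS.

```python
from dataclasses import dataclass

# Hypothetical actions, ordered from least to most disruptive.
ACTIONS = ["No Action", "Rate Limit", "Patch Service", "Isolate Node"]
FALLBACK = 0  # index of "No Action", assumed to be safe in every state

@dataclass(frozen=True)
class NodeContext:
    node_id: str
    is_critical: bool

def unsafe_actions(ctx):
    """Hypothetical safety rule: a critical node must never be isolated."""
    return {ACTIONS.index("Isolate Node")} if ctx.is_critical else set()

def shield(ctx, proposed):
    """Return (action, blocked). Unsafe proposals are projected to the nearest safe action."""
    unsafe = unsafe_actions(ctx)
    if proposed not in unsafe:
        return proposed, False
    safe = [a for a in range(len(ACTIONS)) if a not in unsafe]
    if not safe:
        return FALLBACK, True
    # Nearest in the disruptiveness ordering; ties go to the less disruptive action.
    nearest = min(safe, key=lambda a: (abs(a - proposed), a))
    return nearest, True

isolate = ACTIONS.index("Isolate Node")
print(shield(NodeContext("upf-1", True), isolate))    # projected to a milder action
print(shield(NodeContext("cam-7", False), isolate))   # passes through unchanged
```

Returning the `blocked` flag alongside the action makes it straightforward to count shield interventions during training and evaluation.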

3.4.3 Constrained Optimization Objective

The learning objective is to find a policy

while satisfying safety requirements expressed as expected cost constraints:
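For orientation, a CMDP objective of this kind is conventionally written in the following generic form. The symbols $r$, $c_i$, $d_i$, $\gamma$, and $m$ follow common textbook usage and are assumptions; they may differ from the notation of Table 1.

```latex
\max_{\pi} \; J_R(\pi) = \mathbb{E}_{\pi}\!\left[ \sum_{t=0}^{\infty} \gamma^{t}\, r(s_t, a_t) \right]
\quad \text{subject to} \quad
J_{C_i}(\pi) = \mathbb{E}_{\pi}\!\left[ \sum_{t=0}^{\infty} \gamma^{t}\, c_i(s_t, a_t) \right] \le d_i,
\qquad i = 1, \ldots, m.
```

Here each $c_i$ is a per-step safety cost and $d_i$ its budget, so a policy is feasible only if its expected discounted cost stays within every budget.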

3.5 Safe Q-Learning with Action Projection

The Q-learning algorithm is augmented with the safety shield to ensure constraint satisfaction throughout the learning process. The Q-function update incorporates shielded next-state actions:

where the temporal difference error is computed as:

The resulting optimal safe policy naturally integrates the safety mechanism:

This formulation guarantees that the deployed policy is both performance-optimized and safe by construction.
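The shielded update can be illustrated with a small tabular sketch in which both exploration and the bootstrap target are restricted to the safe action set. The state and action counts, the hyperparameters, and the unsafe rule are hypothetical, and the form of the update is one plausible reading of "shielded next-state actions", not a reproduction of the authors' algorithm.

```python
import random

N_STATES, N_ACTIONS = 4, 3     # hypothetical sizes
ALPHA, GAMMA = 0.1, 0.95       # hypothetical learning rate and discount factor

def safe_set(state):
    """Hypothetical shield rule: action 2 is unsafe in state 0."""
    return [a for a in range(N_ACTIONS) if not (state == 0 and a == 2)]

def shielded_greedy(q, state):
    """Greedy action restricted to the safe set."""
    return max(safe_set(state), key=lambda a: q[state][a])

def select_action(q, state, epsilon, rng):
    """Epsilon-greedy exploration that only ever samples safe actions."""
    if rng.random() < epsilon:
        return rng.choice(safe_set(state))
    return shielded_greedy(q, state)

def q_update(q, s, a, r, s_next, done):
    """One Q-learning step whose target maximizes over safe next actions only."""
    if done:
        target = r
    else:
        target = r + GAMMA * max(q[s_next][b] for b in safe_set(s_next))
    td_error = target - q[s][a]
    q[s][a] += ALPHA * td_error
    return td_error

q = [[0.0] * N_ACTIONS for _ in range(N_STATES)]
q[0][1] = 1.0    # a safe action with moderate value
q[0][2] = 10.0   # an unsafe action with a tempting value
td = q_update(q, s=1, a=0, r=1.0, s_next=0, done=False)
action = select_action(q, 0, epsilon=0.0, rng=random.Random(0))
print(td, q[1][0], action)
```

In this example the inflated value of the unsafe action never enters the target, so it cannot leak into the values of the states that lead to it.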

3.6 Composite Performance Metric

Given the multi-dimensional nature of system requirements in 5G-IoT environments, we evaluate SH-IDS performance using a composite utility function that balances competing objectives:

The utility components represent key performance indicators:

•

•

•

•

•

•

The weights

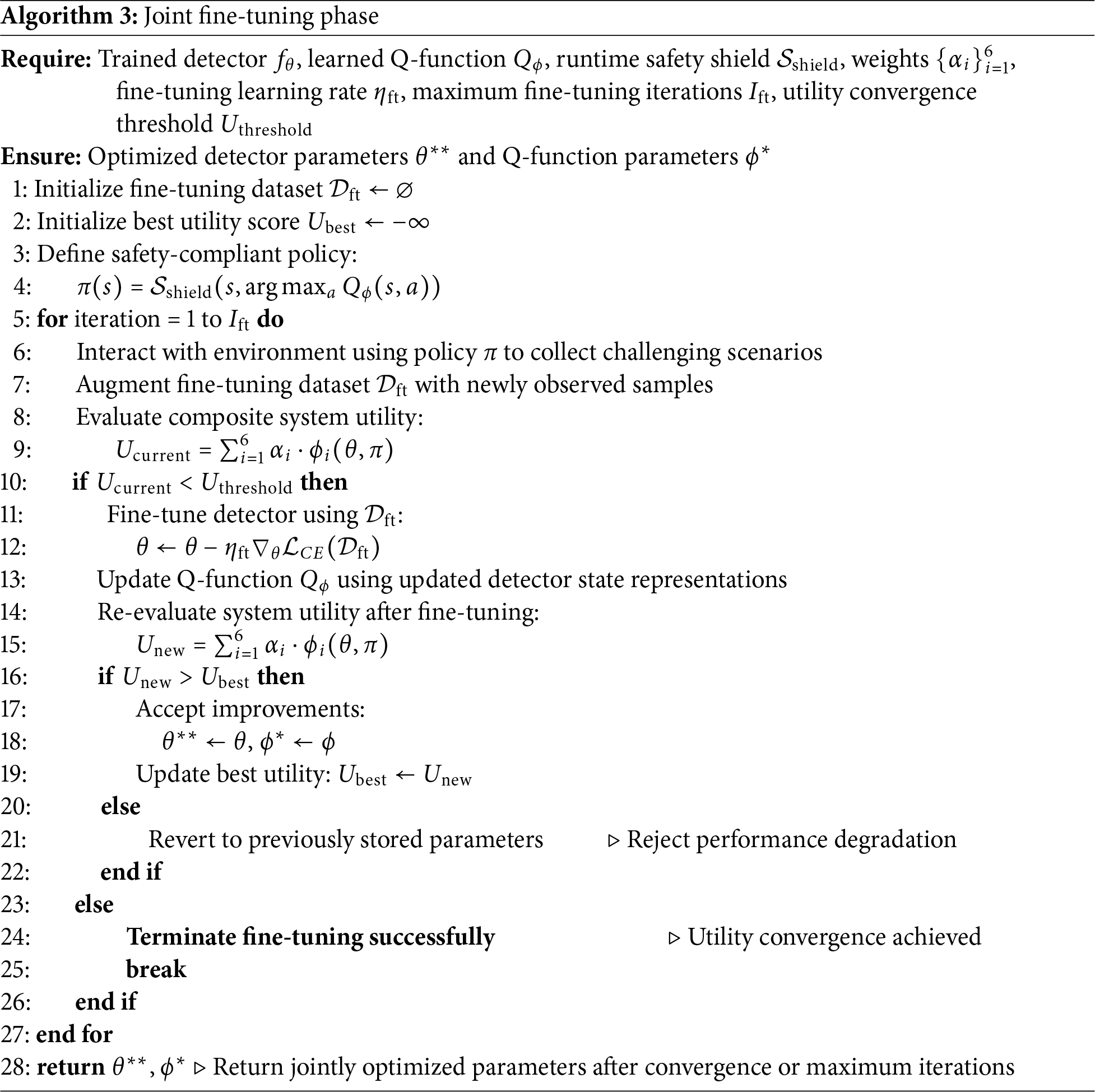

3.7 Training Pipeline of the Proposed SH-IDS

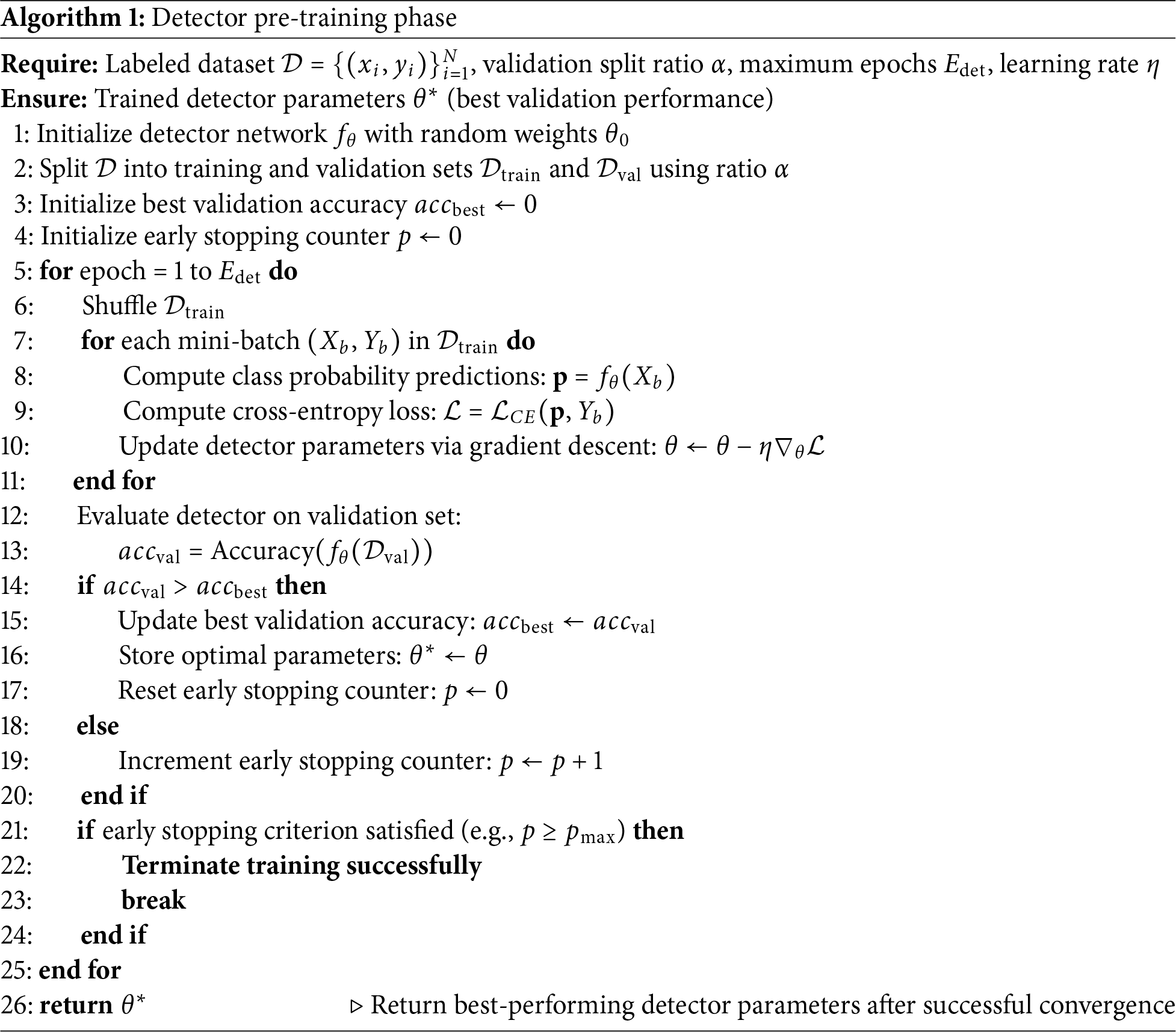

The SH-IDS training pipeline employs a structured three-phase approach that progressively builds from supervised detection learning to safe autonomous decision-making. The pipeline ensures robust performance while maintaining operational safety throughout the training process.

1. Phase 1: Detector Pre-training. This phase, presented in Algorithm 1, establishes the foundation by training a deep neural network for accurate threat classification with calibrated confidence estimates. It uses historical network data to learn discriminative patterns between normal and malicious traffic, employing standard deep learning techniques with validation-based early stopping.

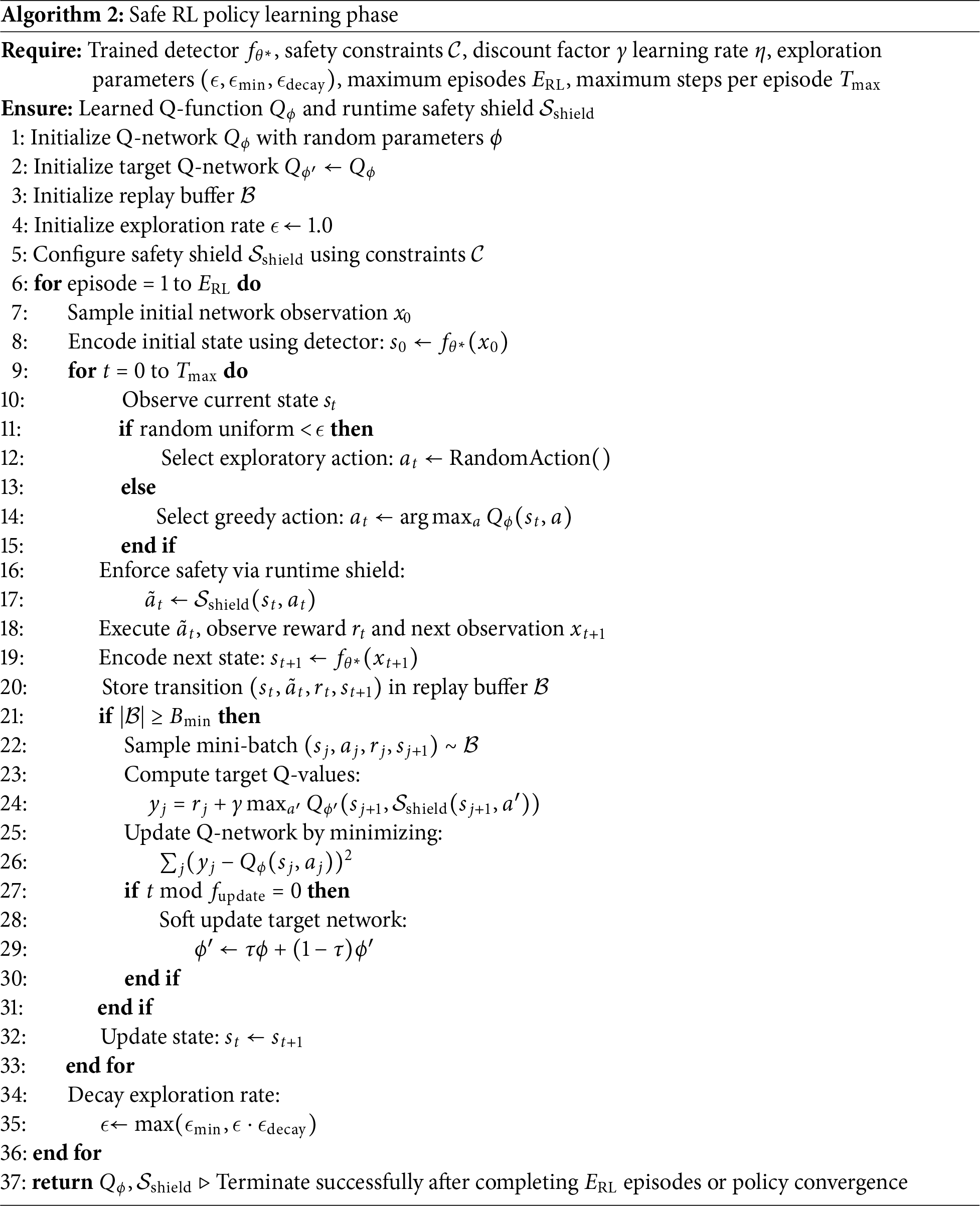

2. Phase 2: Safe RL Policy Learning. This phase, illustrated in Algorithm 2, focuses on learning optimal remediation policies within safety constraints. The pre-trained detector from Phase 1 provides state representations, while the safety shield ensures that all exploratory actions comply with operational limits. Experience replay and target networks are used to stabilize learning.

3. Phase 3: Joint Fine-tuning. This phase, presented in Algorithm 3, iteratively refines both the detection and remediation components based on the composite utility metric. It addresses performance bottlenecks and adapts the system to challenging edge cases, aiming for balanced optimization across all system objectives.

The pipeline incorporates curriculum learning, beginning with basic threat scenarios and progressively introducing complex attack patterns. Safety constraints are enforced at every phase, making the training process suitable for critical infrastructure applications.

This section offers a thorough empirical evaluation of the proposed SH-IDS. We begin by describing the experimental setup and dataset, then detail the specific configurations tested. Subsequently, we analyze the system’s performance, emphasizing threat-detection accuracy, the safety-aware reinforcement learning agent’s effectiveness, and the computational efficiency of the framework.

The experiments were performed on the Google Colab Pro platform, using its cloud-based computing resources, including an NVIDIA T4 Tensor Core GPU and high-RAM capabilities. This environment provides the necessary processing power to efficiently train and evaluate deep learning and reinforcement learning models at the core of our proposed architecture.

To validate the SH-IDS in a relevant and contemporary network environment, we used the 5G-NIDD dataset [24]. This dataset is specifically designed for 5G networks. It includes a realistic mix of normal traffic and various modern cyberattacks, such as flooding, scanning, and Denial-of-Service (DoS). Its use ensures that our evaluation captures the challenges and complexities of securing next-generation IoT networks, making it an ideal benchmark for assessing the proposed system’s performance.

Before training, the 5G-NIDD dataset underwent structured preprocessing to obtain clean and balanced training data. First, all numerical features were scaled to the [0, 1] range using min–max normalization to prevent large-magnitude attributes from dominating neural network training. Second, class imbalance, particularly between benign traffic and minority attack types, was addressed through stratified sampling and moderate oversampling of underrepresented classes, thereby maintaining the statistical integrity of the traffic distribution. Third, incomplete or noisy samples with missing feature values or corrupted network fields were removed after threshold-based validation checks. Finally, all categorical attributes were one-hot encoded and combined into fixed-length feature vectors. These steps ensured that the detector training in Algorithm 1 used clean, balanced, and normalized data, which reduces bias and supports the model's robustness and generalization.
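The preprocessing steps above can be sketched with NumPy as follows. The toy feature values, the protocol codes, and the per-class target count are made up for illustration, and the helper names are assumptions rather than the authors' pipeline.

```python
import numpy as np

def min_max_scale(x):
    """Scale each column to [0, 1]; constant columns map to 0."""
    lo, hi = x.min(axis=0), x.max(axis=0)
    span = np.where(hi > lo, hi - lo, 1.0)
    return (x - lo) / span

def one_hot(codes, n_values):
    """One-hot encode integer category codes."""
    return np.eye(n_values)[codes]

def oversample(x, y, target_per_class, seed=0):
    """Resample minority classes with replacement up to target_per_class rows."""
    rng = np.random.default_rng(seed)
    keep = []
    for label in np.unique(y):
        idx = np.flatnonzero(y == label)
        if len(idx) < target_per_class:
            extra = rng.choice(idx, size=target_per_class - len(idx), replace=True)
            idx = np.concatenate([idx, extra])
        keep.append(idx)
    order = np.concatenate(keep)
    return x[order], y[order]

# Toy data: two numeric features, one categorical protocol code, one label.
numeric = np.array([[10.0, 200.0], [20.0, np.nan], [30.0, 400.0], [40.0, 600.0]])
proto = np.array([0, 1, 1, 2])
y = np.array([0, 0, 1, 0])

mask = ~np.isnan(numeric).any(axis=1)            # drop rows with missing values
features = np.hstack([min_max_scale(numeric[mask]), one_hot(proto[mask], 3)])
x_bal, y_bal = oversample(features, y[mask], target_per_class=2)
print(features.shape, np.bincount(y_bal))
```

In practice, the scaling statistics would normally be computed on the training split only and oversampling applied only to that split, so that validation and test data remain untouched.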

4.2 Performance Evaluation Metrics

To rigorously assess the proposed SH-IDS, we employ a multidimensional evaluation framework that covers threat detection accuracy, reinforcement learning efficacy, and system efficiency. The metrics are summarized below.

4.2.1 Detection Performance Metrics

The detection module is evaluated using standard classification metrics derived from the confusion matrix, where TP, TN, FP, and FN represent True Positives, True Negatives, False Positives, and False Negatives, respectively:

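• Accuracy: The proportion of total instances correctly classified, $\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$.

• Precision: The ratio of correctly predicted positive observations to the total predicted positives, $\text{Precision} = \frac{TP}{TP + FP}$.

• Recall: The ratio of correctly predicted positive observations to all observations in the actual class, $\text{Recall} = \frac{TP}{TP + FN}$.

• F1-Score: The harmonic mean of Precision and Recall, providing a balanced measure for imbalanced datasets, $\text{F1} = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$.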
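As a worked example, the four metrics can be computed directly from confusion-matrix counts. The counts below are invented for illustration, and the sketch covers the binary case only; how the multi-class results are averaged across classes is not specified here.

```python
def detection_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, and F1 from binary confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical counts: 90 attacks caught, 10 missed, 5 false alarms, 95 true negatives.
metrics = detection_metrics(tp=90, tn=95, fp=5, fn=10)
print(metrics)
```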

4.2.2 Self-Healing and RL Metrics

The performance of the autonomous remediation engine is quantified by the cumulative reward and the safety shield’s intervention rate:

• Expected Cumulative Reward: Measures the agent's ability to maximize mitigation success while minimizing operational costs over time.

• Safety Shield Block Count: The total number of proposed actions that the safety shield identified as unsafe and blocked.

4.2.3 Efficiency and Composite Utility

To ensure deployability in 5G-IoT environments, we measure latency and model size, integrated into a composite utility function

• Inference Latency

• Composite Utility: A weighted sum of performance indicators
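A weighted-sum utility of this kind can be sketched as below. The indicator names, the weights, and the latency and size budgets used for normalization are all hypothetical, so the resulting number is not the utility reported for SH-IDS; only the mechanics are illustrated.

```python
def normalise_cost(value, budget):
    """Map a cost (lower is better) to [0, 1], where 1 means zero cost."""
    return max(0.0, 1.0 - value / budget)

def composite_utility(indicators, weights):
    """Weighted sum of indicators that are already scaled to [0, 1]."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[name] * indicators[name] for name in weights)

# Hypothetical indicators; the budgets (1 ms, 1000 KB) are illustrative assumptions.
indicators = {
    "accuracy": 0.9826,
    "f1": 0.9826,
    "latency": normalise_cost(0.05, budget=1.0),      # milliseconds
    "size": normalise_cost(121.7, budget=1000.0),     # kilobytes
}
weights = {"accuracy": 0.4, "f1": 0.3, "latency": 0.2, "size": 0.1}

utility = composite_utility(indicators, weights)
print(round(utility, 5))
```

Normalizing costs before weighting keeps every term on the same scale, so the weights express relative priority rather than compensating for differences in units.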

4.3 Experimental Configurations

To rigorously evaluate the performance and behavior of the SH-IDS, we established seven distinct experimental configurations. Each configuration modifies a specific component or hyperparameter of the system to isolate its impact on the overall performance.

The configurations are described as follows:

1. Baseline SH-IDS: The standard implementation of our proposed architecture, featuring a standard deep neural network for detection and a Q-learning agent with baseline learning parameters and an active safety shield.

2. Complex Detector: This configuration replaces the standard detector with a more complex neural network containing additional hidden layers and neurons to assess the trade-off between model complexity and detection performance.

3. High Learning Rate RL: The reinforcement learning agent’s learning rate is significantly increased to observe its effect on the speed and stability of policy convergence.

4. Fast Epsilon Decay RL: The exploration-exploitation trade-off in the RL agent is shifted by implementing a faster decay for the epsilon parameter, encouraging the agent to exploit known effective actions more quickly.

5. Extended RL Training: The RL agent undergoes a significantly longer training period (5000 episodes) to determine if more extensive training leads to a more optimal and stable remediation policy.

6. High-Safety Constraints: The safety shield is configured with a broader set of critical nodes, making the operational constraints more stringent to evaluate the system’s performance under heightened safety requirements.

7. No Safety Shield (Baseline): This configuration deactivates the safety shield entirely, allowing the RL agent to select any action without constraint. It serves as a crucial baseline to demonstrate the value and impact of the safety-aware decision-making component.
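To make the difference between a baseline and a fast epsilon decay concrete, the sketch below compares two multiplicative decay schedules. The start value, floor, and decay factors are hypothetical and are not the hyperparameters used in these experiments.

```python
def epsilon_schedule(episodes, start=1.0, end=0.05, decay=0.995):
    """Per-episode exploration rate under multiplicative decay with a floor."""
    eps, values = start, []
    for _ in range(episodes):
        values.append(eps)
        eps = max(end, eps * decay)
    return values

def episodes_to_floor(values, end=0.05):
    """Index of the first episode at which epsilon has reached its floor."""
    for episode, eps in enumerate(values):
        if eps <= end:
            return episode
    return len(values)

slow = epsilon_schedule(1000, decay=0.995)   # hypothetical baseline decay
fast = epsilon_schedule(1000, decay=0.97)    # hypothetical fast decay

print(episodes_to_floor(slow), episodes_to_floor(fast))
```

A faster decay moves the agent to exploitation much earlier, which can raise the average reward within a fixed episode budget but also risks locking in a policy before enough of the state space has been explored.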

4.4 Performance Evaluation of the Threat Detection Module

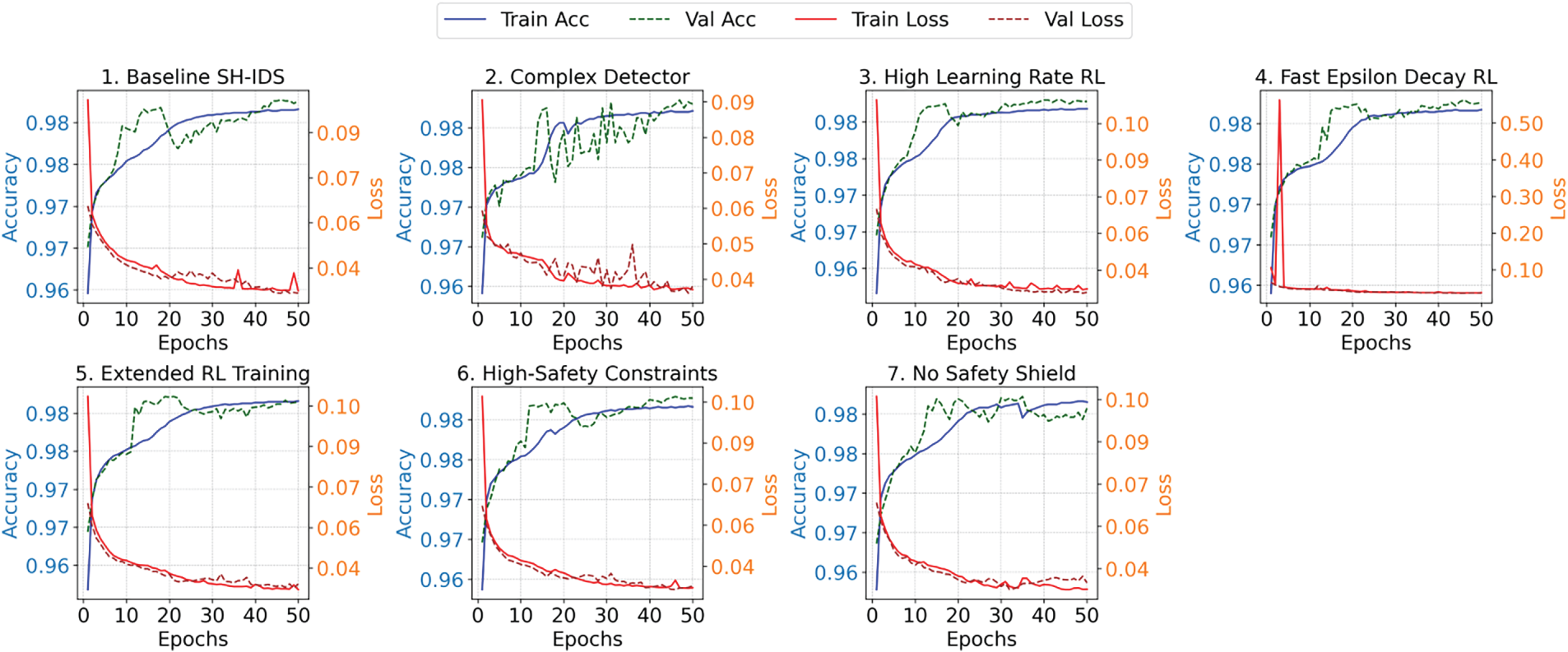

Fig. 2 illustrates the training and validation results for the seven experimental configurations. In all cases, training and validation accuracies increase rapidly and typically stabilize within the first 20–30 epochs. This indicates that the proposed model can efficiently learn useful patterns. Meanwhile, the training and validation loss consistently decline and stabilize at low values, with no significant differences between the two curves.

Figure 2: Training and validation curves under different experimental configurations.

The close match between training and validation performance across all configurations, including the Baseline SH-IDS, Complex Detector, and High-Safety Constraints, suggests that the preprocessing steps and stratified sampling were effective in reducing overfitting. In Configuration 2 (Complex Detector), although the model architecture is more complex, the validation loss remains stable, indicating that the model generalizes well. In comparison, the “No Safety Shield” baseline exhibits slightly greater variation in validation loss (Fig. 2, Plot 7). Since the shield acts on the remediation agent rather than on the detector, this difference should be interpreted cautiously and may partly reflect run-to-run variance. A detailed discussion of system performance is presented below.

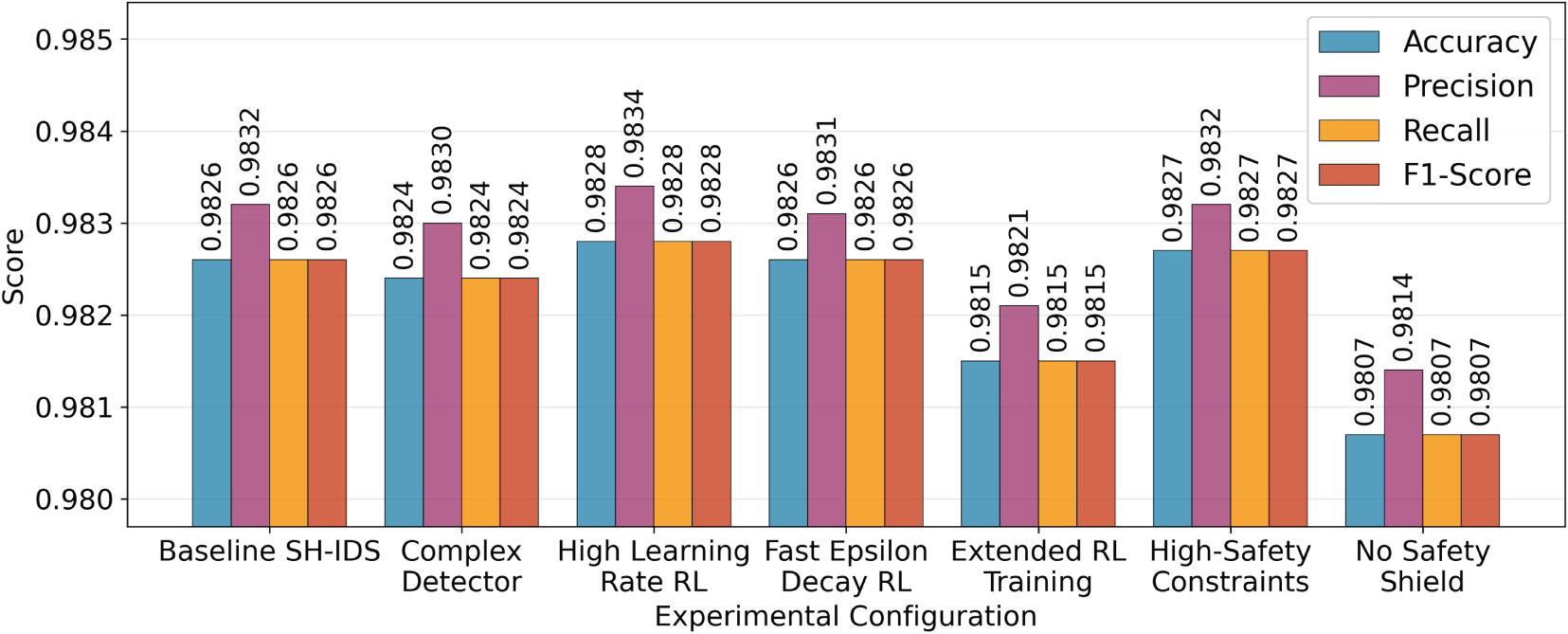

The attack detection performance of the proposed design is presented in Fig. 3. The results consistently demonstrate high performance across all configurations, underscoring the robustness of the chosen approach in identifying threats within the 5G-NIDD dataset.

Figure 3: Attack detection performance under different experimental configurations.

The Baseline SH-IDS setup achieved an accuracy of 98.26%, with a precision of 98.32%, a recall of 98.26%, and an F1-Score of 98.26%. These metrics indicate that the detector identifies both normal and malicious traffic with few false positives and false negatives. The Complex Detector setup did not produce a performance gain and even resulted in a slight decrease in accuracy to 98.24%. This suggests that the standard model architecture already has sufficient capacity to capture the patterns in the data, and that adding further complexity increases computational demands without improving detection. The other setups, which mainly change the RL agent's parameters, showed little variation in detection performance, consistent with the detection module being largely independent of the configuration of the remediation module. The lowest accuracy recorded was 98.07% in the “No Safety Shield” experiment, which is still high.

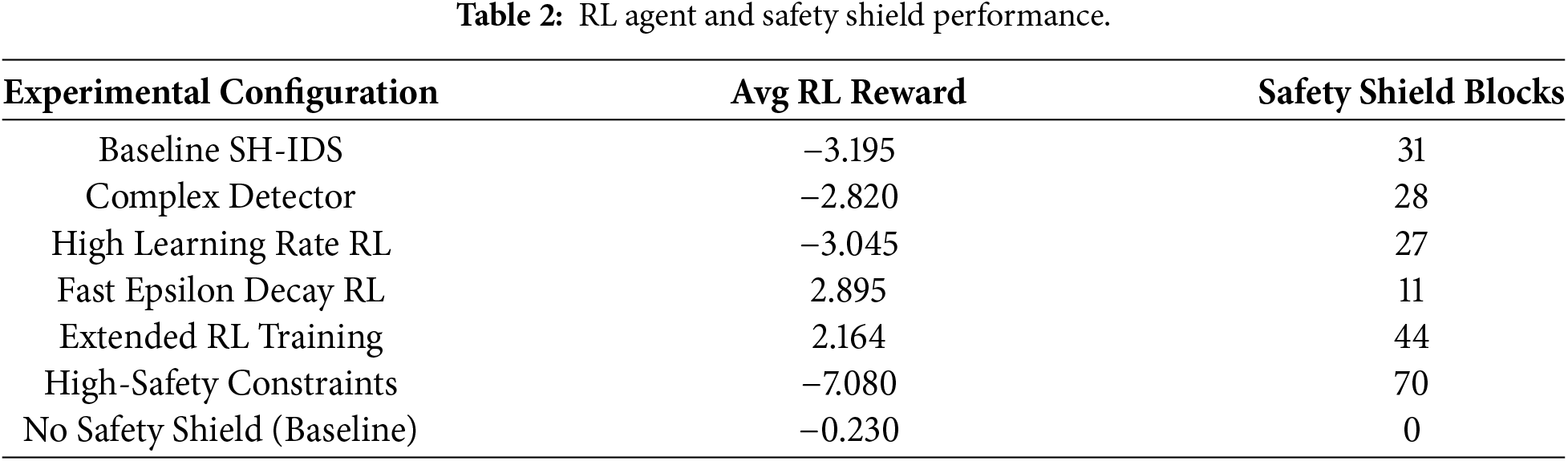

4.5 Analysis of the RL-Based Self-Healing Module

The performance of the reinforcement learning agent and the safety shield is summarized in Table 2. This table provides critical insights into the effectiveness of the autonomous decision-making process. The two key metrics are the average reward obtained by the RL agent and the number of times the safety shield intervened to block a potentially disruptive action.

A key finding is the positive average reward achieved by the Fast Epsilon Decay RL (2.895) and Extended RL Training (2.164) configurations. This demonstrates that the agent has successfully learned a policy that not only effectively reduces threats but also minimizes unnecessary or costly actions, resulting in positive rewards over time. In contrast, the Baseline SH-IDS received a negative average reward (−3.195), indicating that its policy was not fully optimized within the 1000-episode training period.

The importance of the safety mechanism is clearly shown by comparing the Baseline SH-IDS with the No Safety Shield setup. While the “No Safety Shield” agent achieved a nearly neutral average reward (−0.230), it did so without any safety interventions. This means the agent was free to take actions, such as isolating critical nodes, that the shield would have blocked. The Baseline SH-IDS, however, blocked 31 such unsafe actions. This difference is even more evident in the High-Safety Constraints scenario, where the shield blocked 70 actions, resulting in a significantly lower average reward (−7.080) due to the penalties for attempting unsafe operations. This shows a clear trade-off: the safety shield effectively enforces operational limits but at the expense of immediate reward, favouring system stability over aggressive and potentially harmful fixes.

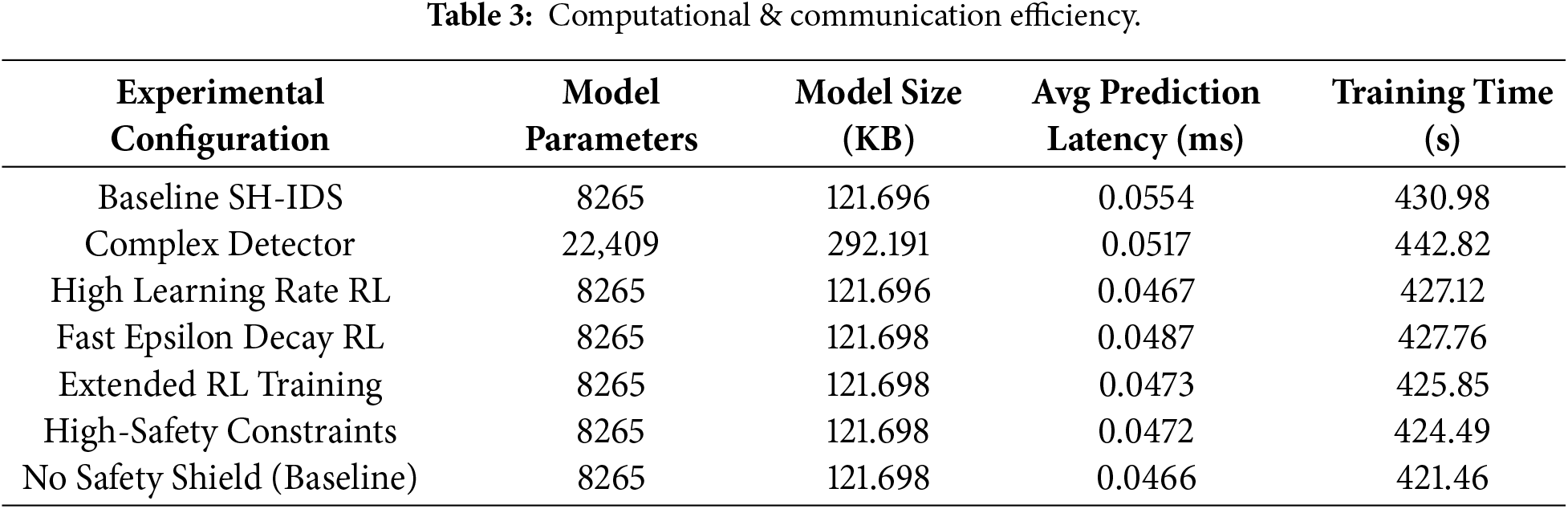

4.6 Computational and Communication Efficiency

Table 3 presents the efficiency metrics of the proposed SH-IDS, which are critical for its practical deployment in resource-constrained IoT environments. The Baseline SH-IDS model is relatively lightweight, with 8265 parameters and a serialized model size of approximately 121.7 KB. This small footprint makes it suitable for deployment on edge devices.

As expected, the Complex Detector has a much larger footprint, with 22,409 parameters and a model size of 292.2 KB. Since it showed no noticeable improvement in detection accuracy, the baseline model offers a better balance between performance and resource use. Latency is a key metric for 5G networks. The average prediction latency across all configurations is very low, ranging from 0.0466 to 0.0554 ms. This almost instant prediction capability meets the stringent low-latency requirements of 5G URLLC applications, enabling the SH-IDS to perform real-time detection without introducing significant network delay. The training times are also reasonable, with the baseline model training taking about 431 s on the specified hardware.
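Parameter counts and per-sample latency of the kind reported above can be measured as in the sketch below. The layer sizes are hypothetical and do not correspond to the SH-IDS detector, and the NumPy forward pass merely stands in for the real model.

```python
import time
import numpy as np

def count_parameters(layer_sizes):
    """Weights plus biases of a fully connected network with the given layer widths."""
    return sum(i * o + o for i, o in zip(layer_sizes, layer_sizes[1:]))

def mean_latency_ms(forward, batch, repeats=50):
    """Average per-sample latency in milliseconds over repeated batched calls."""
    forward(batch)  # warm-up call, excluded from timing
    start = time.perf_counter()
    for _ in range(repeats):
        forward(batch)
    elapsed = time.perf_counter() - start
    return 1000.0 * elapsed / (repeats * len(batch))

layer_sizes = [40, 64, 32, 8]   # hypothetical input, hidden, and output widths
rng = np.random.default_rng(0)
weights = [(rng.normal(size=(i, o)), np.zeros(o))
           for i, o in zip(layer_sizes, layer_sizes[1:])]

def forward(x):
    """ReLU multilayer perceptron forward pass returning class logits."""
    for w, b in weights[:-1]:
        x = np.maximum(x @ w + b, 0.0)
    w, b = weights[-1]
    return x @ w + b

n_params = count_parameters(layer_sizes)
latency = mean_latency_ms(forward, rng.normal(size=(256, 40)))
print(n_params, latency)
```

Per-sample latency measured on batches is usually lower than single-sample latency, so the batch size should be stated alongside any reported figure.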

It is important to note that the reinforcement learning loop and safety shield introduce only constant per-node overhead, making the SH-IDS scalable for large, multi-node 5G-IoT deployments. The RL agent operates on a compact 5-dimensional state and a fixed, small action set, resulting in a single forward pass through the Q-network and a constant-time safety-shield check per decision. The shield itself performs only a bounded filtering step over the available actions, requiring no inter-node coordination. Therefore, the combined RL + shield inference latency (measured at

4.7 Analysis of Learned Remediation Policies

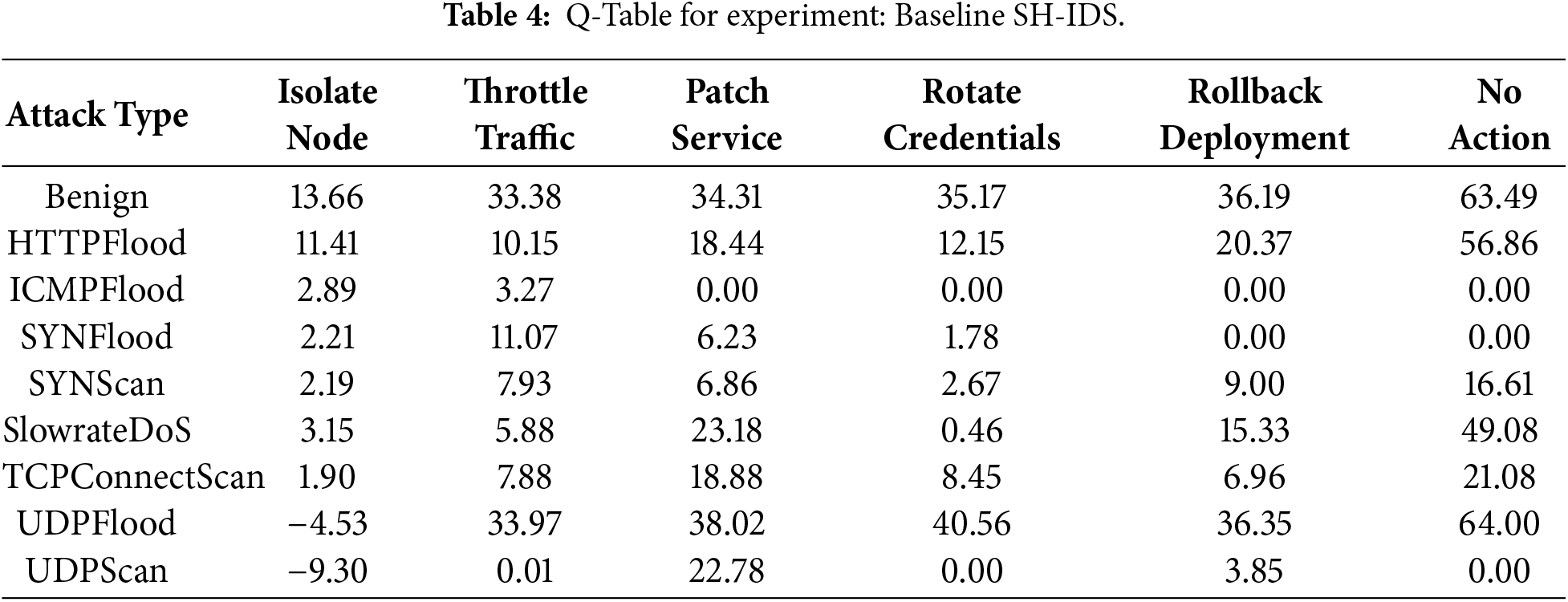

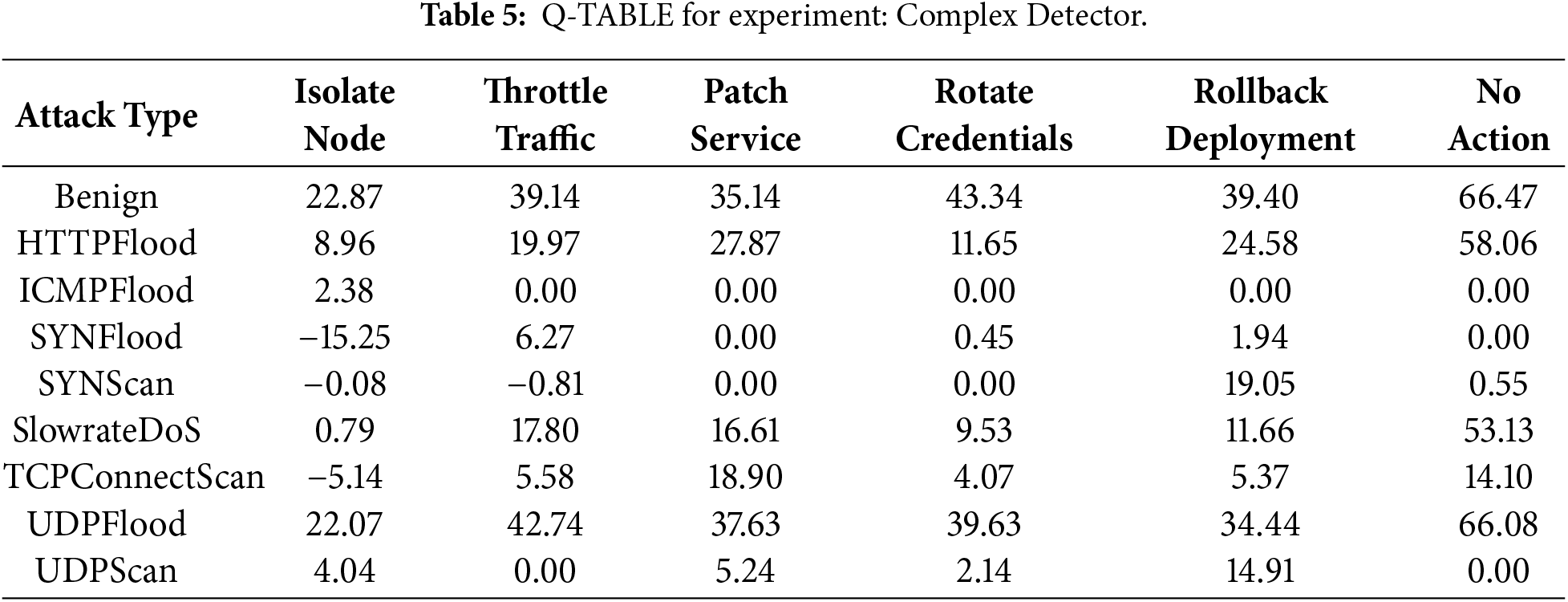

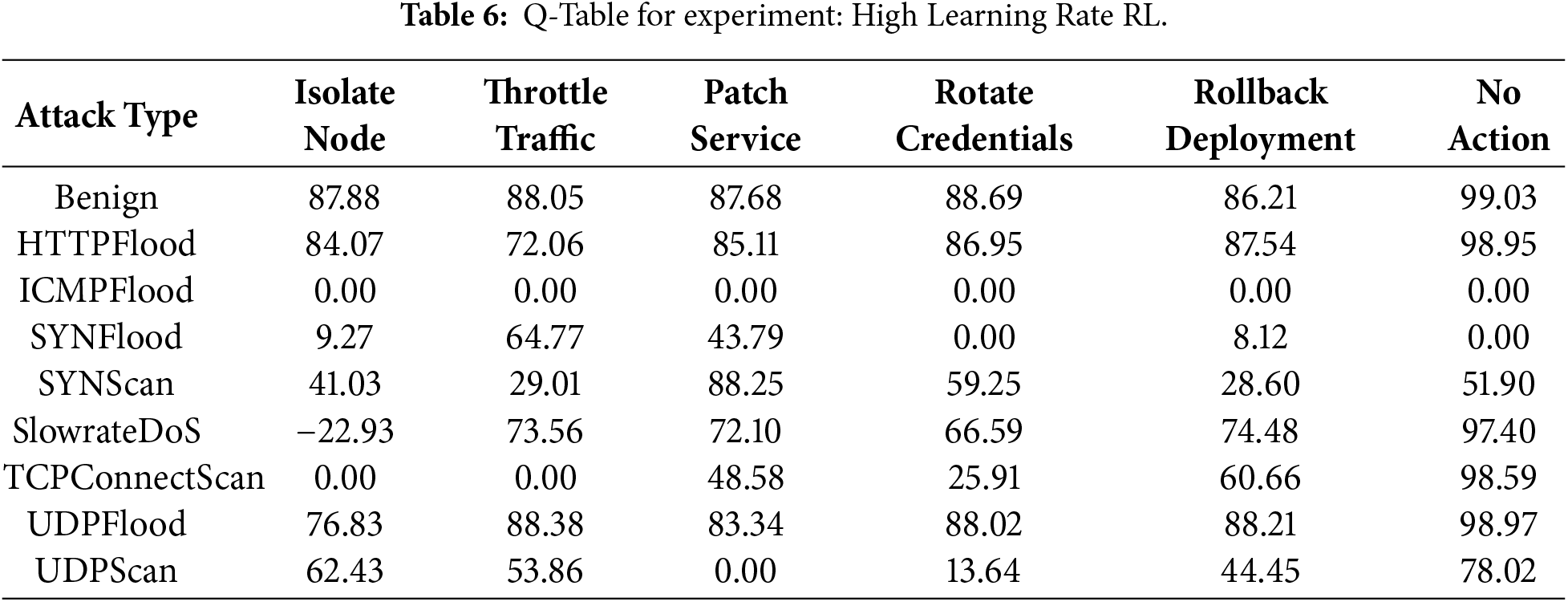

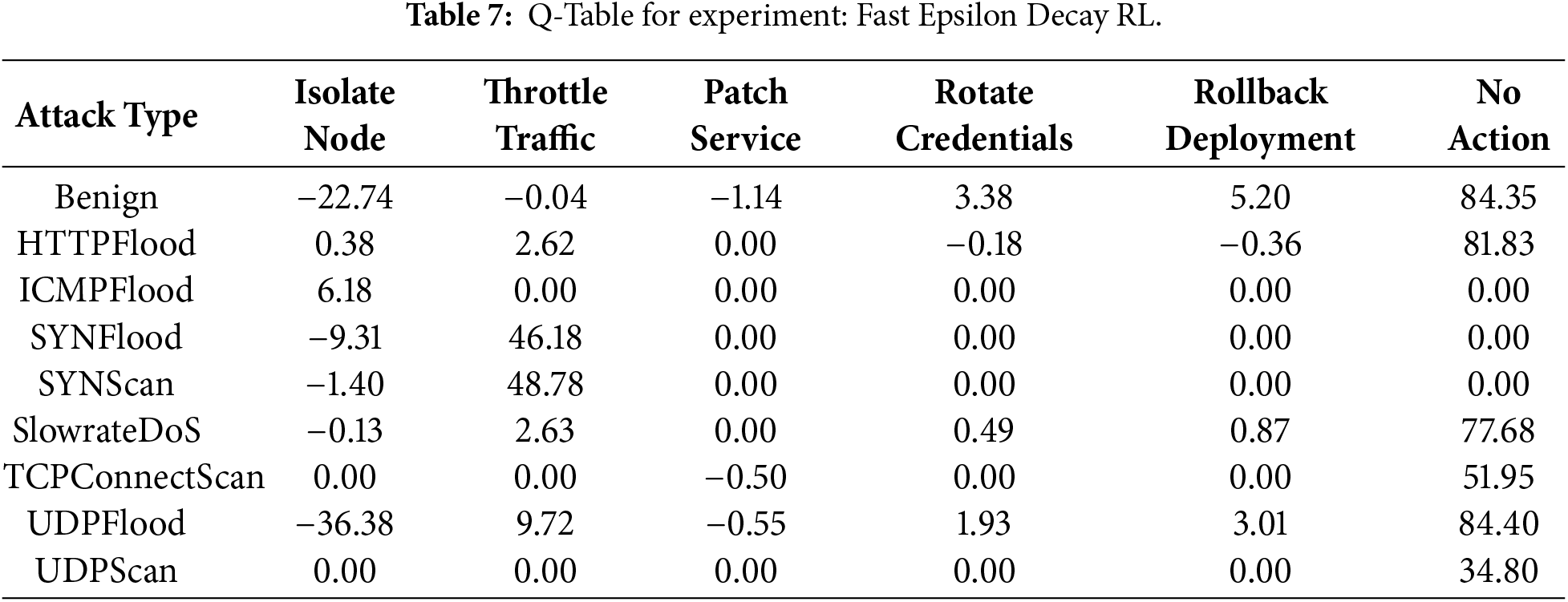

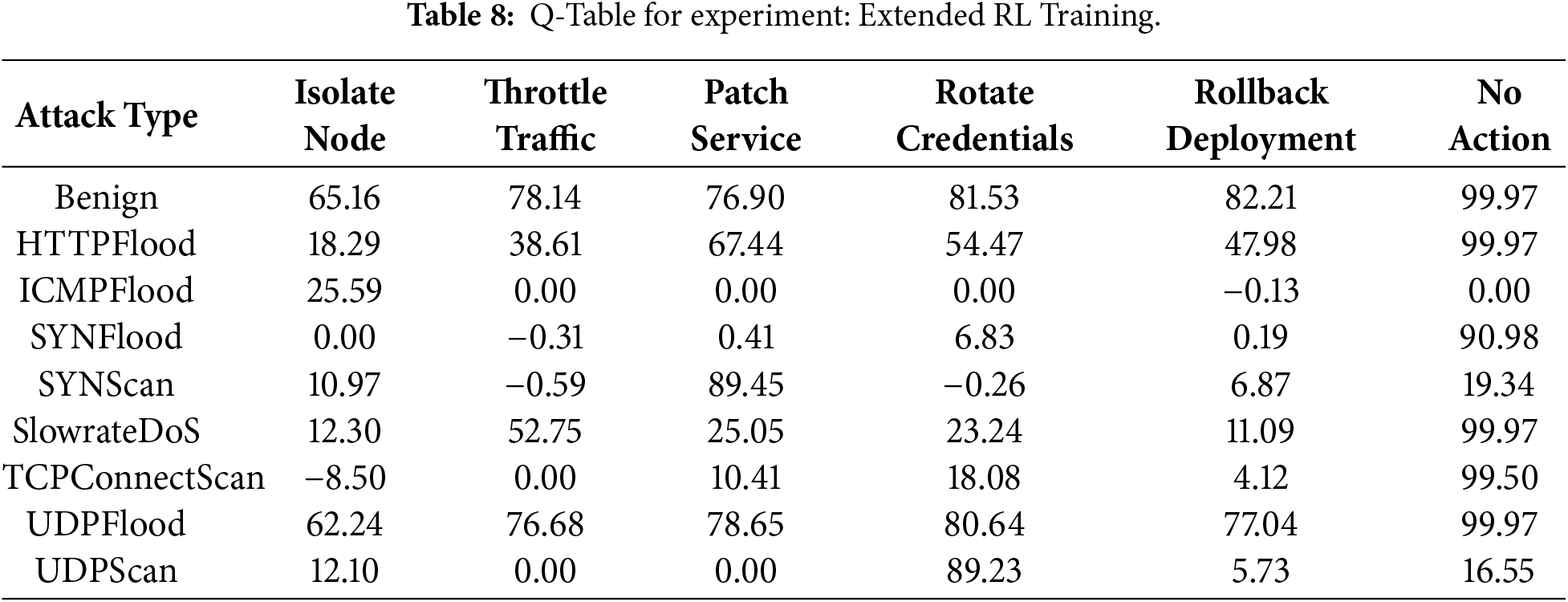

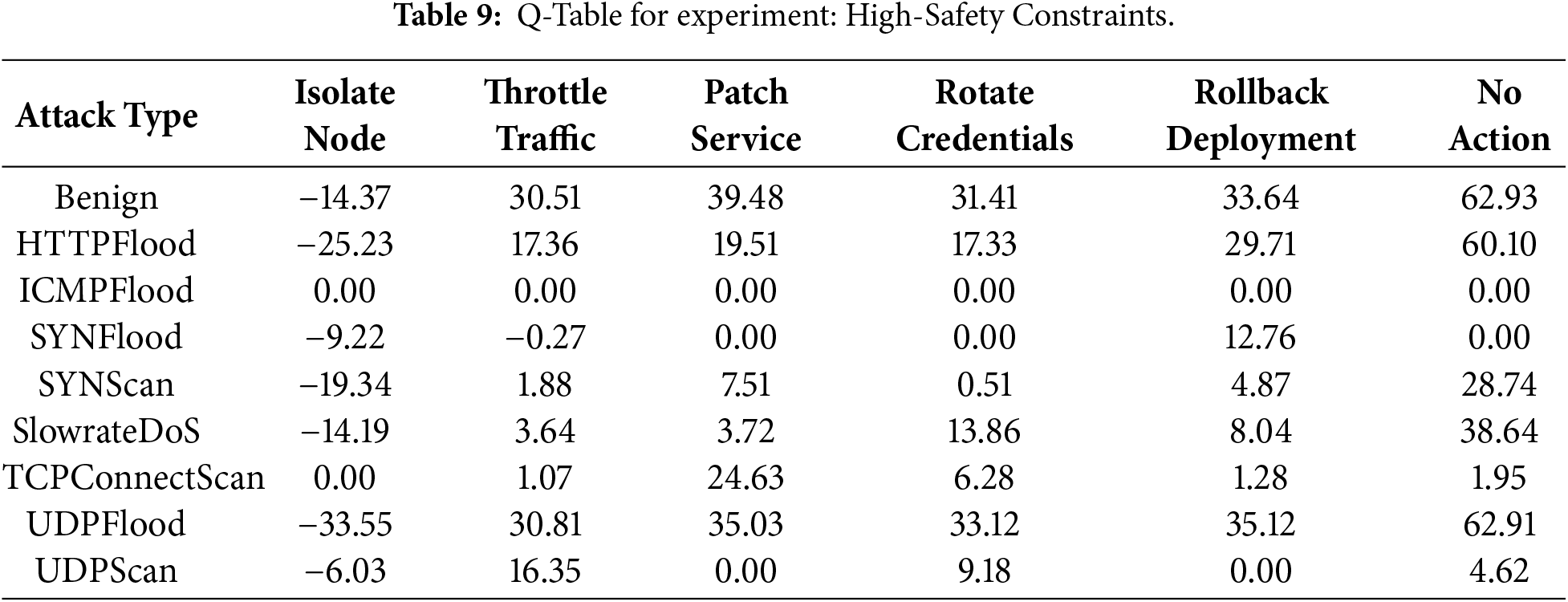

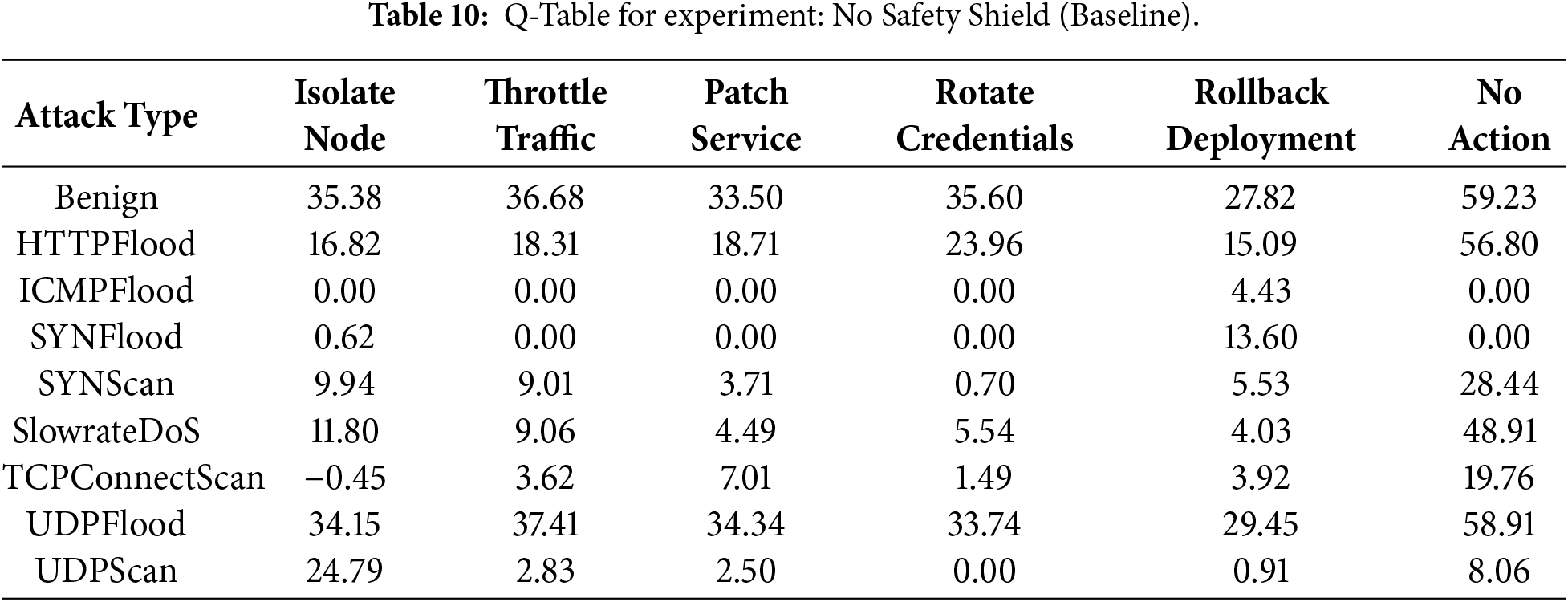

The final set of tables (Tables 4–10) provides the learned Q-tables for each experimental configuration, revealing the specific action policies developed by the RL agent. A comparative analysis of these tables illuminates how different parameters and constraints shape the agent’s decision-making logic.

The Q-table for the Baseline SH-IDS, as demonstrated in Table 4, reveals a nuanced but evolving policy. For ‘Benign’ traffic, the “No Action” choice has the highest Q-value (

The impact of RL hyperparameters is clear in Tables 6–8. The High Learning Rate RL (Table 6) agent exhibits significantly inflated Q-values (e.g.,

The most important insights come from comparing different safety constraint setups. The Q-table for High-Safety Constraints (Table 9) shows the agent learning to be cautious. For almost all attack types, the Q-values for “Isolate Node” are negative, indicating the strong penalties from the safety shield for trying this action on the expanded set of critical nodes. The agent has clearly learned to prefer less disruptive actions. This differs significantly from the No Safety Shield Q-table (Table 10). Without safety constraints, the agent assigns a relatively high Q-value (

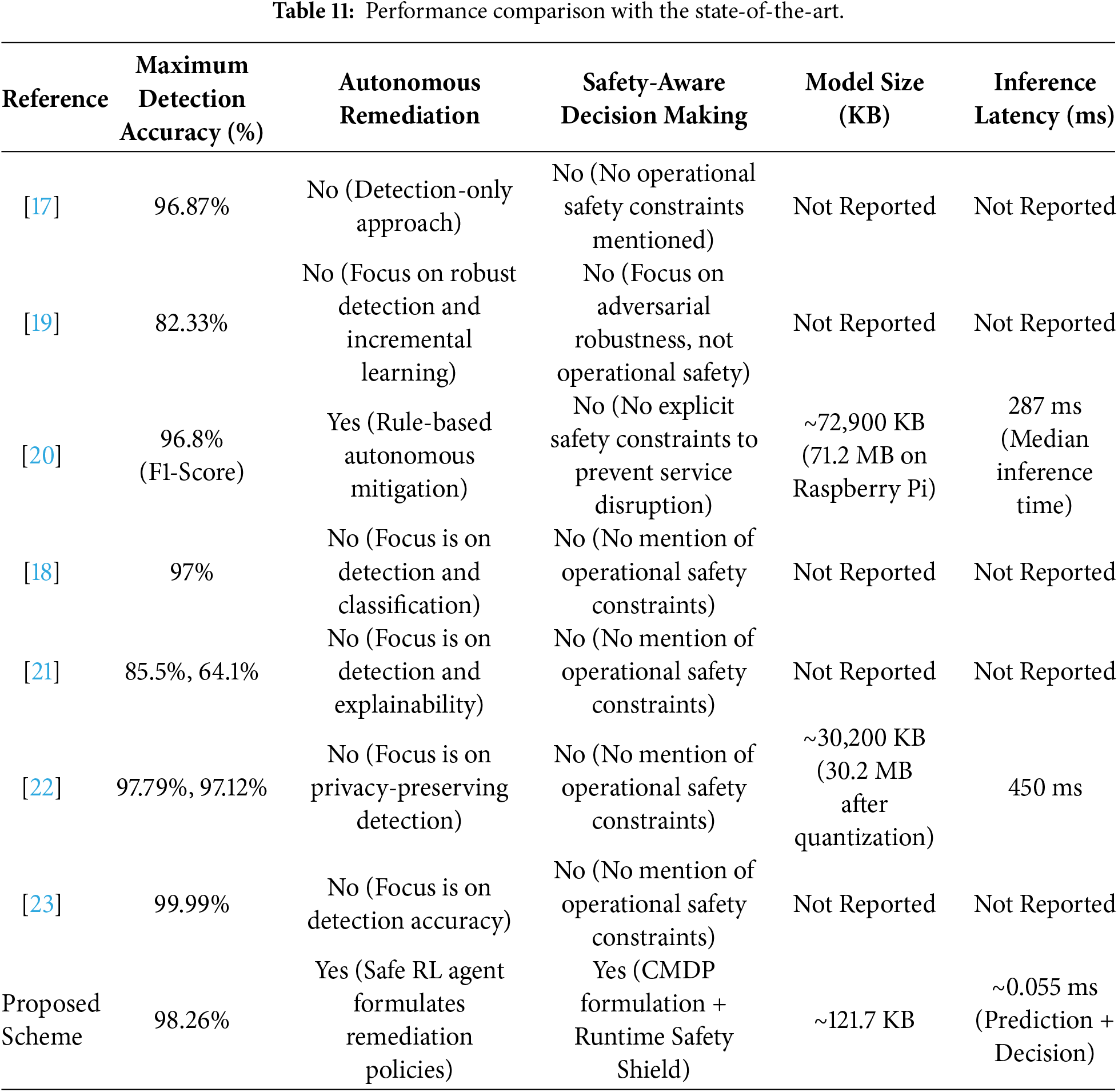

4.8 Performance Comparison with the State-of-the-Art

The analysis shown in Table 11 highlights the key operational benefits of the proposed SH-IDS compared to existing 5G-specific intrusion detection systems. While recent studies such as [23] and [17] have achieved high detection rates of up to

Furthermore, the proposed framework is better suited to resource-constrained 5G-IoT environments than current top models, especially in terms of computational efficiency. As shown in Table 11, complex hybrid models like those in [20] and federated approaches like [22] incur significant resource burdens, with model sizes of

This paper presented a closed-loop, self-healing IDS that effectively bridges the critical gap between accurate threat detection and safe, autonomous remediation in 5G-IoT networks. The core of our contribution is the formalization of the remediation problem as a CMDP and the implementation of a runtime safety shield, which together ensure the reinforcement learning agent’s pursuit of optimal threat mitigation remains within operational safety constraints. Extensive evaluation on the 5G-NIDD benchmark confirms the viability of our approach, demonstrating not only high detection accuracy but also the emergence of intelligent, context-aware mitigation policies. The system’s ability to learn to avoid service-disruptive actions, validated through comparative analysis of Q-tables with and without the safety shield, along with its computational efficiency, underscores its significant advantage over traditional, detection-centric IDS. This work establishes a strong foundation for deploying trustworthy autonomous defense systems in critical and resource-constrained next-generation network environments.

While effective, the system is currently limited by its dependence on static safety constraints and labeled training data. Future work will address these limitations by exploring dynamic constraint adaptation and self-supervised learning to improve resilience against evolving zero-day threats.

Acknowledgement: The authors state that Grammarly’s AI tool was used solely to refine English in a few sections.

Funding Statement: The authors extend their appreciation to the Deanship of Research and Graduate Studies at King Khalid University for funding this work through the Large Group Project under grant number (RGP2/245/46). This work was also supported by the Princess Nourah bint Abdulrahman University Researchers Supporting Project number (PNURSP2026R333), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Author Contributions: Wajdan Al Malwi: writing original draft, visualization, validation, software, project administration, methodology, investigation, formal analysis, conceptualization. Fatima Asiri: writing original draft, visualization, methodology, investigation, formal analysis. Nazik Alturki: review & editing, visualization, methodology. Noha Alnazzawi: review & editing, software, project administration. Dimitrios Kasimatis: writing, review & editing, visualization, validation. Nikolaos Pitropakis: writing original draft, software, methodology, formal analysis. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: The experiments conducted in this study are based exclusively on the publicly available, open-source 5G-NIDD dataset, which can be accessed from its original repository. The processed datasets and the Jupyter Notebooks developed for the proposed methodology are available from the authors upon reasonable request and subject to approval by the research group, for non-commercial research purposes.

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest.

References

1. Varga P, Peto J, Franko A, Balla D, Haja D, Janky F, et al. 5G support for industrial IoT applications—challenges, solutions, and research gaps. Sensors. 2020;20(3):828. doi:10.3390/s20030828.

2. Mukherjee S, Gupta S, Rawlley O, Jain S. Leveraging big data analytics in 5G-enabled IoT and industrial IoT for the development of sustainable smart cities. Trans Emerg Telecomm Technol. 2022;33(12):e4618. doi:10.1002/ett.4618.

3. Pokhrel SR, Ding J, Park J, Park OS, Choi J. Towards enabling critical mMTC: a review of URLLC within mMTC. IEEE Access. 2020;8:131796–813. doi:10.1109/access.2020.3010271.

4. Ji H, Park S, Yeo J, Kim Y, Lee J, Shim B. Ultra-reliable and low-latency communications in 5G downlink: physical layer aspects. IEEE Wirel Commun. 2018;25(3):124–30. doi:10.1109/mwc.2018.1700294.

5. Ahmed SF, Alam MSB, Afrin S, Rafa SJ, Taher SB, Kabir M, et al. Toward a secure 5G-enabled internet of things: a survey on requirements, privacy, security, challenges, and opportunities. IEEE Access. 2024;12(8):13125–45. doi:10.1109/access.2024.3352508.

6. Liao H, Murah MZ, Hasan MK, Aman AHM, Fang J, Hu X, et al. A survey of deep learning technologies for intrusion detection in Internet of Things. IEEE Access. 2024;12(1):4745–61. doi:10.1109/access.2023.3349287.

7. Alshehri M, Saidani O, Malwi W, Asiri F, Latif S, Khattak A, et al. A hybrid Wasserstein GAN and autoencoder model for robust intrusion detection in IoT. Comput Model Eng Sci. 2025;143(3):3899–920. doi:10.32604/cmes.2025.064874.

8. Al-Fuhaidi B, Farae Z, Al-Fahaidy F, Nagi G, Ghallab A, Alameri A. Anomaly-based intrusion detection system in wireless sensor networks using machine learning algorithms. Appl Comput Intell Soft Comput. 2024;2024(1):2625922. doi:10.1155/2024/2625922.

9. Muneer S, Farooq U, Athar A, Ahsan Raza M, Ghazal TM, Sakib S. A critical review of artificial intelligence based approaches in intrusion detection: a comprehensive analysis. J Eng. 2024;2024(1):3909173. doi:10.1155/2024/3909173.

10. Kalodanis K, Papapavlou C, Feretzakis G. Enhancing security in 5G and future 6G networks: machine learning approaches for adaptive intrusion detection and prevention. Future Internet. 2025;17(7):312. doi:10.3390/fi17070312.

11. Alnazzawi N, Asiri F, Alturki N, Laula Z, Zafar S, Latif S, et al. MGNN-IDS: a multi-graph neural network approach for robust intrusion detection in the internet of things. Telecommun Syst. 2025;88(4):1–20. doi:10.1007/s11235-025-01352-5.

12. Ahmad I, Kumar T, Liyanage M, Okwuibe J, Ylianttila M, Gurtov A. Overview of 5G security challenges and solutions. IEEE Commun Stand Mag. 2018;2(1):36–43. doi:10.1109/mcomstd.2018.1700063.

13. Anwar S, Mohamad Zain J, Zolkipli MF, Inayat Z, Khan S, Anthony B, et al. From intrusion detection to an intrusion response system: fundamentals, requirements, and future directions. Algorithms. 2017;10(2):39. doi:10.3390/a10020039.

14. Bashendy M, Tantawy A, Erradi A. Intrusion response systems for cyber-physical systems: a comprehensive survey. Comput Secur. 2023;124(1):102984. doi:10.1016/j.cose.2022.102984.

15. Altman E. Constrained Markov decision processes. Oxfordshire, UK: Routledge; 2021.

16. Kheddar H, Dawoud DW, Awad AI, Himeur Y, Khan MK. Reinforcement-learning-based intrusion detection in communication networks: a review. IEEE Commun Surv Tutor. 2024;27(4):2420–69. doi:10.1109/comst.2024.3484491.

17. Rani SS, Shaaban MF, Ali A. An efficient convolutional neural network based attack detection for smart grid in 5G-IOT. Int J Crit Infrastruct Prot. 2025;48:100738. doi:10.1016/j.ijcip.2024.100738.

18. Gurushanker A, Anandakumaran K, Columbus CC. Enhancing intrusion detection systems for 5G networks using AI. In: Proceedings of the 2024 First International Conference on Software, Systems and Information Technology (SSITCON); 2024 Oct 18–19; Tumkur, India. p. 1–8.

19. Neha, Bhatia T. Adaptive intrusion detection system leveraging dynamic neural models with adversarial learning for 5G/6G networks. In: Proceedings of the 2025 4th International Conference on Computer Technologies (ICCTech); 2025 Feb 20–23; Kuala Lumpur, Malaysia. p. 103–7. doi:10.1109/ICCTech66294.2025.00028.

20. Reis MJ. AI-driven anomaly detection for securing IoT devices in 5G-enabled smart cities. Electronics. 2025;14(12):2492. doi:10.3390/electronics14122492.

21. Radoglou-Grammatikis P, Nakas G, Amponis G, Giannakidou S, Lagkas T, Argyriou V, et al. 5GCIDS: an intrusion detection system for 5G core with AI and explainability mechanisms. In: Proceedings of the 2023 IEEE Globecom Workshops (GC Wkshps); 2023 Dec 4–8; Kuala Lumpur, Malaysia. p. 353–8.

22. Adjewa F, Esseghir M, Merghem-Boulahia L. Efficient federated intrusion detection in 5G ecosystem using optimized BERT-based model. In: Proceedings of the 2024 20th International Conference on Wireless and Mobile Computing, Networking and Communications (WiMob); 2024 Oct 21–23; Paris, France. p. 62–7.

23. Mahmood I, Alyas T, Abbas S, Shahzad T, Abbas Q, Ouahada K. Intrusion detection in 5G cellular network using machine learning. Comput Syst Sci Eng. 2023;47(2):2439–53. doi:10.32604/csse.2023.033842.

24. Samarakoon S, Siriwardhana Y, Porambage P, Liyanage M, Chang SY, Kim J, et al. 5G-NIDD: a comprehensive network intrusion detection dataset generated over 5G wireless network. IEEE DataPort; 2022. doi:10.21227/xtep-hv36.


Copyright © 2026 The Author(s). Published by Tech Science Press. This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
