Open Access

ARTICLE

Secure and Differentially Private Edge-Cloud Federated Learning Framework for Privacy-Preserving Maritime AIS Intelligence

1 Department of Computer Science, CECOS University of IT and Emerging Sciences, Peshawar, Pakistan

2 Department of Computer Engineering, College of Computer Sciences and Information Technology, King Faisal University, Al-Ahsa, Saudi Arabia

3 Department of Computer Networks and Communications, CCSIT, King Faisal University, Al-Ahsa, Saudi Arabia

4 Department of Information Systems, College of Computer Science and Information Technology, King Faisal University, Al-Ahsa, Saudi Arabia

* Corresponding Authors: Abid Iqbal; Ghassan Husnain

(This article belongs to the Special Issue: Cloud Computing Security and Privacy: Advanced Technologies and Practical Applications)

Computers, Materials & Continua 2026, 87(3), 21 https://doi.org/10.32604/cmc.2026.077222

Received 04 December 2025; Accepted 19 January 2026; Issue published 09 April 2026

Abstract

Cloud computing now supports large-scale maritime analytics, yet offloading rich Automatic Identification System (AIS) data to the cloud exposes sensitive operational patterns and complicates compliance with cross-border privacy regulations. This work addresses the gap between growing demand for AI-driven vessel intelligence and the limited availability of practical, privacy-preserving cloud solutions. We introduce a privacy-by-design edge-cloud framework in which ports and vessels serve as federated clients, training vessel-type classifiers on local AIS trajectories while transmitting only clipped, Gaussian-perturbed updates to a zero-trust cloud coordinator employing secure and robust aggregation. Using a public AIS corpus with realistic non-IID client partitions, our evaluation shows that non-private FedAvg attains validation AUC

Keywords

Cloud computing now underpins large-scale analytics in safety-critical domains, including maritime logistics [1]. Modern vessels stream AIS data to the cloud to enable AI-driven services such as route optimisation, traffic forecasting, collision avoidance and anomaly detection [2]. However, centralising raw AIS data from diverse stakeholders raises major security and privacy risks: trajectories can expose commercial strategies and operational weaknesses, and cross-border data sharing must satisfy differing national regulations [3]. Perimeter security and basic anonymisation are inadequate, because AIS trajectories remain highly re-identifiable and cloud insiders can still infer sensitive patterns [4].

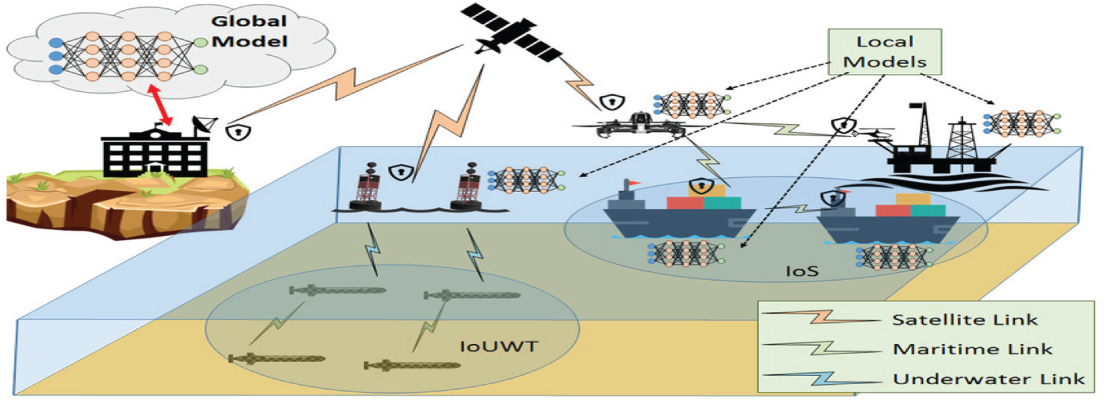

High-quality maritime AI requires diverse data across vessel types, regions and conditions [5]. Yet operators cannot always share raw AIS feeds, and models trained in isolation on local data lack the robustness needed for reliable cloud-based decision support [5]. These tensions highlight the need for privacy-preserving, collaborative edge-cloud analytics in maritime infrastructures [6]. Fig. 1 shows the heterogeneous, distributed environment that motivates applying federated learning in this domain [7].

Figure 1: Federated learning in various maritime scenarios, including Internet of Ships (IoS), maritime IoT with buoys and Internet of Underwater Things (IoUWT), adapted from Giannopoulos et al. [7].

We introduce a privacy-preserving edge-to-cloud collaborative learning framework for AIS-based maritime analytics [8]. In our design, vessels and port infrastructures act as federated clients, retaining raw AIS data locally and transmitting only clipped, noise-perturbed model updates instead of original records [9]. Privacy is enforced at the learning layer through differential privacy, which bounds information leakage from individual trajectories [10], while secure aggregation masks updates so the server observes only jointly aggregated parameters rather than client-specific contributions [11]. This algorithmic protection achieves privacy without depending on external security services. Using a realistic Kaggle AIS dataset, we evaluate performance under non-IID partitions and multiple threat models, considering utility, communication cost, and inference-attack resistance [12]. Results show that anomaly detection and route modelling remain feasible under formal privacy guarantees, supporting practical maritime deployment. The framework therefore provides a reproducible benchmark for federated learning and privacy-enhancing technologies in real-world cyber-physical systems [13].

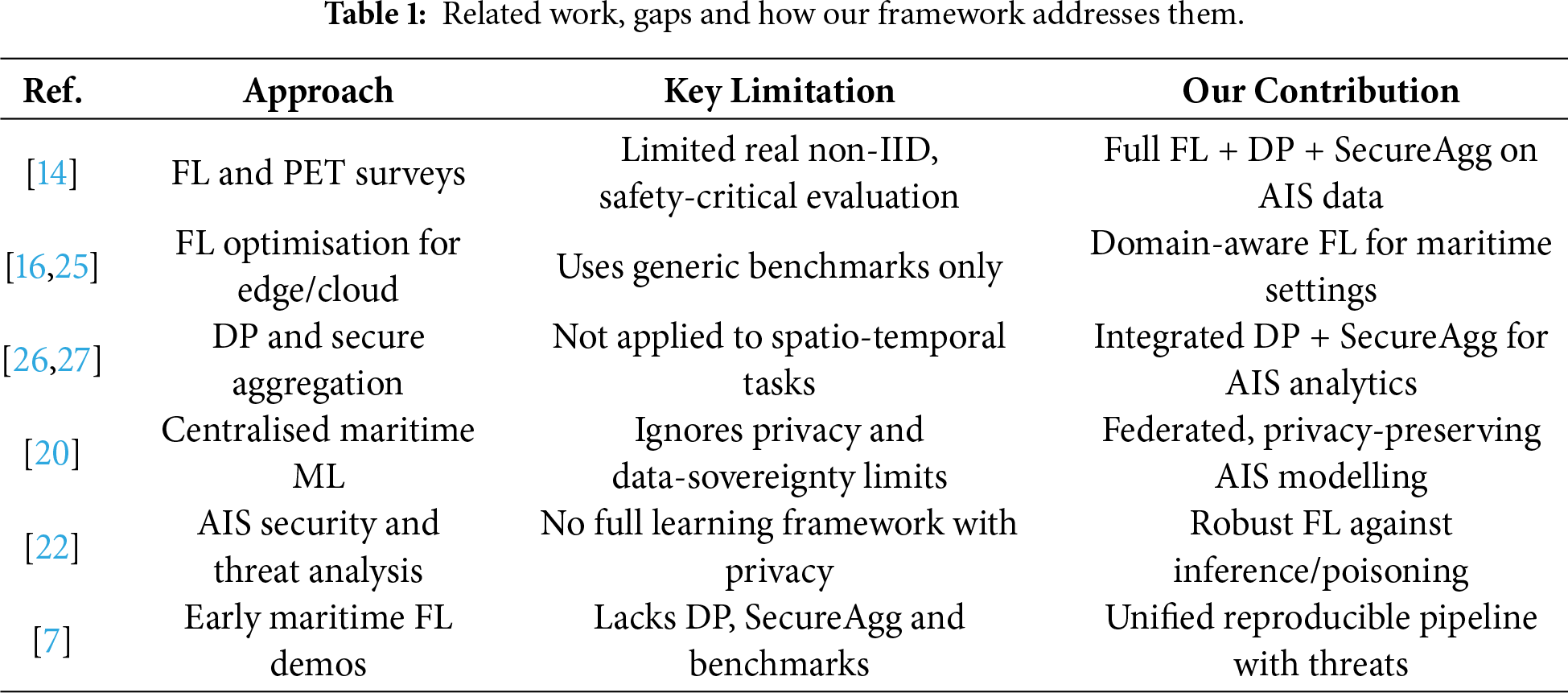

Research on cloud security, privacy-enhancing technologies (PETs) and distributed learning has grown rapidly [14]. Two strands are relevant here: (i) privacy-preserving and federated learning for cloud-edge systems and (ii) AIS-based maritime analytics in security-critical settings [15]. Taken together, they reveal a gap between generic privacy-preserving AI methods and domain-specific maritime needs, which motivates our integrated, privacy-aware edge-to-cloud framework.

2.1 Privacy Preserving and Federated Learning in Cloud and Edge Environments

Federated learning (FL) enables distributed training when data cannot be centrally stored due to confidentiality, regulation, or operational constraints [16]. Early work demonstrated that simple model averaging remains viable despite heterogeneous devices, intermittent participation, and bandwidth limitations, while later research adapted FL to edge–cloud settings to handle non-IID data, unbalanced client sizes, and straggler effects [17]. To strengthen confidentiality, privacy-enhancing technologies such as differential privacy and secure aggregation have been incorporated, supporting zero-trust deployment scenarios [18]. However, much of the literature still relies on simplified benchmarks and overlooks the interoperability, privacy, and safety demands of critical infrastructures, often emphasizing accuracy while downplaying trade-offs involving privacy budgets, model fidelity and communication overhead [19]. Our work addresses this gap using realistic spatio-temporal data and threat-aware evaluation.

2.2 Maritime Analytics and AIS Based Security Applications

AIS data underpins maritime situational awareness, enabling traffic characterisation, vessel behaviour modelling, route prediction, anomaly detection and collision avoidance [20]. Machine and deep learning methods have been applied to ship type classification, ETA prediction and abnormal behaviour detection, often assuming access to large central AIS repositories operated by authorities or commercial providers [21]. While effective, this centralised paradigm overlooks confidentiality, cross-border data regulations and sensitivities around vessel operations [20].

Maritime cybersecurity research has also exposed AIS vulnerabilities including spoofing, jamming and inference attacks [22]. Existing defences mainly rely on message-level cryptography or coarse anonymisation [23,24], which do not support fine-grained learning tasks or provide formal privacy guarantees [7]. To our knowledge, no prior work combines federated learning, differential privacy and secure aggregation for AIS analytics in edge–cloud maritime environments, despite early FL demonstrations in related tasks [7]. By treating vessels and ports as clients and evaluating on a realistic AIS corpus, we address this gap. Table 1 summarizes prior work, limitations and our contributions.

This section outlines our end-to-end privacy-preserving federated AIS analytics framework, covering data preprocessing, client partitioning, model training and attack-defense evaluation.

We use a public AIS trajectory dataset from Kaggle [28], consisting of time-stamped maritime broadcasts with vessel kinematics, identifiers and operational metadata. After removing incomplete entries, timestamp errors, and speed outliers, the final corpus contains approximately

3.2 Data Preprocessing and Feature Engineering

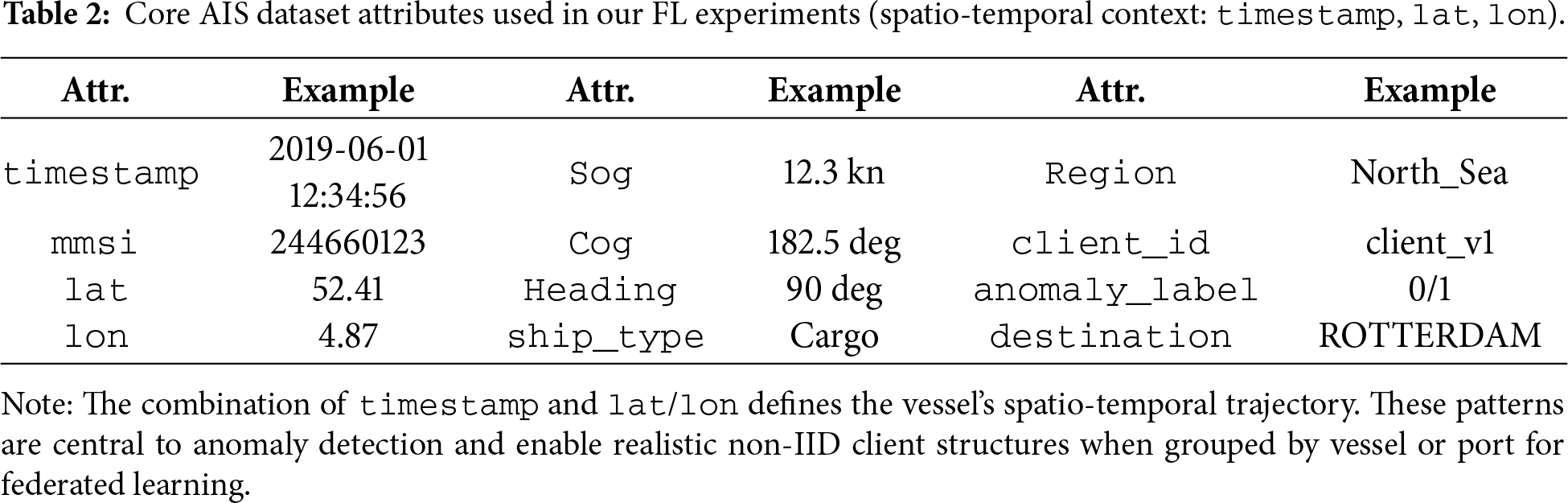

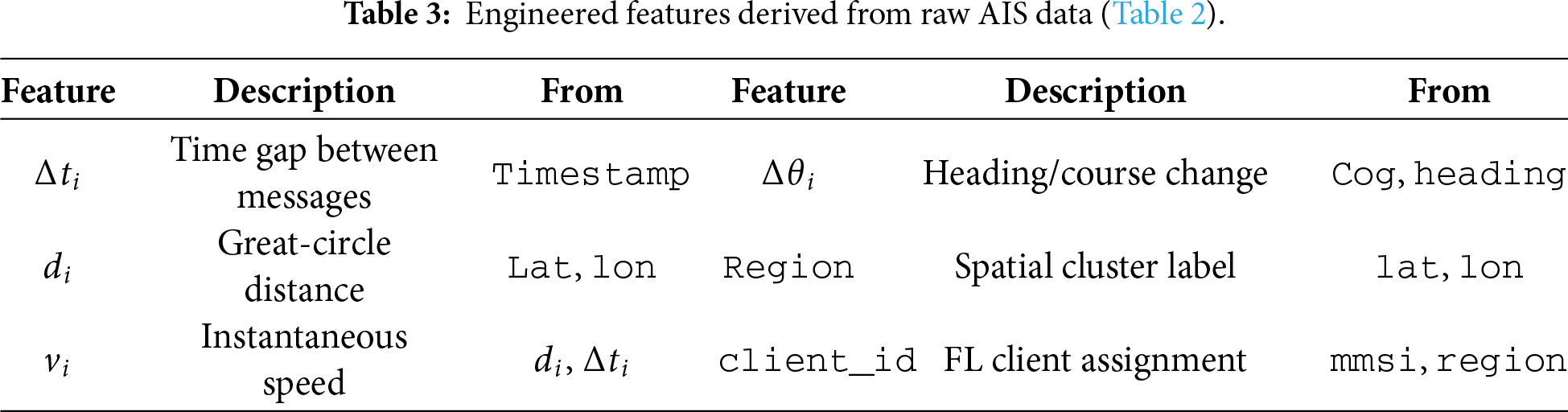

Before training, we preprocess the AIS data in Table 2 to form clean, time-ordered vessel trajectories. For each mmsi, messages are sorted and invalid timestamps removed. To ensure data validity, we apply rule-based cleaning to discard corrupted or inconsistent records: (i) non-monotonic or reversed timestamps (

Given a trajectory

and discard segments with large gaps, while small gaps are linearly interpolated. Spatial displacement is computed using the great-circle (Haversine) distance

where R is the Earth radius. Based on Eqs. (1) and (2), we derive the instantaneous speed over ground

and convert it to knots for consistency with sog. Unrealistic values (e.g.,

where

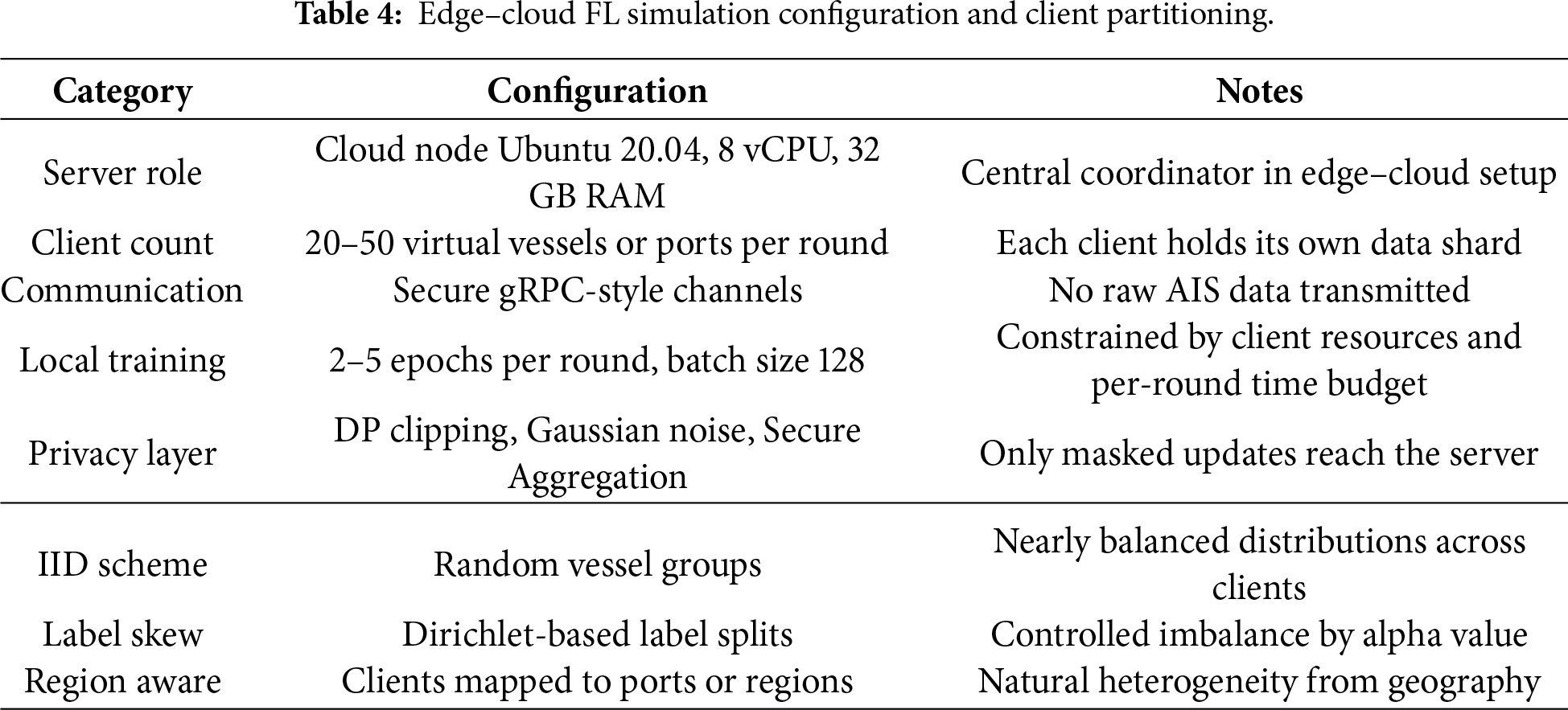
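The displacement and speed derivation described in this subsection can be illustrated with a short sketch. It assumes a mean Earth radius of 6,371 km and the standard conversion of 1 knot = 1852 m per hour. The function names, the `(timestamp_s, lat, lon)` tuple layout and the `max_gap_s` threshold are illustrative assumptions, not values taken from the paper.

```python
import math

EARTH_RADIUS_M = 6_371_000.0      # mean Earth radius in metres (assumed value for R)
MPS_TO_KNOTS = 3600.0 / 1852.0    # 1 knot = 1852 m per hour


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def derived_speed_knots(p, q):
    """Speed between two fixes p and q, each (timestamp_s, lat, lon); None if time does not advance."""
    dt = q[0] - p[0]
    if dt <= 0:
        return None
    return haversine_m(p[1], p[2], q[1], q[2]) / dt * MPS_TO_KNOTS


def split_on_gaps(points, max_gap_s):
    """Split a time-ordered trajectory wherever consecutive fixes are more than max_gap_s apart."""
    segments, current = [], []
    for pt in points:
        if current and pt[0] - current[-1][0] > max_gap_s:
            segments.append(current)
            current = []
        current.append(pt)
    if current:
        segments.append(current)
    return segments
```

A derived speed can then be compared with the reported `sog` field to flag implausible records; the threshold for that comparison would have to follow the paper's own cleaning rules.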

3.3 Federated Client Partitioning and Privacy-Preserving Training

We simulate a practical edge–cloud FL environment in which a central coordinator (cloud node) orchestrates 20–50 virtual maritime clients per round, each emulating either a vessel or a port. The server runs on a single cloud instance (Ubuntu 20.04, 8 vCPUs, 32 GB RAM) with a standard PyTorch-based federated loop, and clients communicate with the coordinator over secure gRPC-style channels. Model updates are clipped and perturbed with Gaussian noise according to the differential privacy parameters in Table 5. Secure Aggregation is applied prior to averaging, ensuring that only masked model updates are visible to the coordinator.
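To make concrete the idea that the coordinator sees only masked updates, the following minimal sketch uses pairwise additive masks that cancel when all client contributions are summed. It is an illustration under simplifying assumptions (no client dropout, no key agreement, masks drawn from a shared NumPy generator instead of a pseudorandom generator keyed by pairwise secrets) and is not the paper's protocol; all names are hypothetical.

```python
import numpy as np


def pairwise_masks(num_clients, dim, seed=0):
    """Return one mask per client; by construction the masks sum to zero."""
    rng = np.random.default_rng(seed)
    masks = np.zeros((num_clients, dim))
    for i in range(num_clients):
        for j in range(i + 1, num_clients):
            shared = rng.normal(size=dim)  # stand-in for a PRG seeded by a secret shared by i and j
            masks[i] += shared             # client i adds the shared mask
            masks[j] -= shared             # client j subtracts it, so the pair cancels in the sum
    return masks


def secure_sum(updates, seed=0):
    """Mask each client update and return (masked updates, their sum)."""
    masks = pairwise_masks(len(updates), updates.shape[1], seed)
    masked = updates + masks               # what the coordinator receives
    return masked, masked.sum(axis=0)      # the masks cancel, leaving the true sum


updates = np.array([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])
masked, total = secure_sum(updates)
```

Each masked row looks like noise on its own, while `total` matches the sum of the unmasked updates up to floating-point error.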

From this setup, virtual clients are instantiated from the cleaned AIS corpus

Each client further splits its data temporally into training, validation and test sets in Eq. (6):

We vary heterogeneity using the three schemes in Table 4. The IID vessel-based scheme randomizes vessel assignment, while the label-skewed scheme samples client label weights from a Dirichlet prior,

where smaller
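A label-skewed partition of this kind can be sketched as follows. The sketch assumes integer-coded labels and draws, for each client, a weight vector over labels from a symmetric Dirichlet distribution; each sample is then assigned to a client with probability proportional to that client's weight for the sample's label. The function name and assignment rule are assumptions made for illustration and may differ from the authors' implementation.

```python
import numpy as np


def dirichlet_label_partition(labels, num_clients, alpha, seed=0):
    """Assign sample indices to clients using per-client Dirichlet label weights."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    num_classes = int(labels.max()) + 1
    # Row k holds client k's label weights; smaller alpha gives more peaked rows.
    client_weights = rng.dirichlet(alpha * np.ones(num_classes), size=num_clients)
    parts = [[] for _ in range(num_clients)]
    for idx, y in enumerate(labels):
        # Probability of each client receiving a sample with label y.
        p = client_weights[:, y] / client_weights[:, y].sum()
        parts[rng.choice(num_clients, p=p)].append(idx)
    return parts
```

With a small concentration parameter most clients end up dominated by one or two vessel types, whereas a large value approaches an IID split.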

Training follows a federated protocol using the global objective in Eq. (9):

Clients compute local updates

To ensure privacy, we adopt DP-FedAvg by clipping client updates in Eq. (11)

and adding Gaussian noise,

yielding
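The clipping, noising and averaging steps can be sketched in a few lines. The sketch assumes client-side Gaussian noise with standard deviation equal to a noise multiplier times the clipping norm, and sample-count-weighted averaging on the server; the names `clip_norm`, `noise_multiplier` and the function names are illustrative, and the exact placement and scale of the noise in the paper's equations may differ.

```python
import numpy as np


def clip_update(delta, clip_norm):
    """Scale delta so that its L2 norm is at most clip_norm."""
    norm = np.linalg.norm(delta)
    return delta * min(1.0, clip_norm / (norm + 1e-12))


def privatize_update(delta, clip_norm, noise_multiplier, rng):
    """Clip a client update, then add isotropic Gaussian noise."""
    clipped = clip_update(delta, clip_norm)
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=clipped.shape)
    return clipped + noise


def fedavg_step(global_weights, updates, num_samples):
    """Apply the sample-count-weighted average of client updates to the global model."""
    weights = np.asarray(num_samples, dtype=float)
    weights /= weights.sum()
    return global_weights + np.tensordot(weights, np.stack(updates), axes=1)
```

Setting `noise_multiplier` to zero recovers plain FedAvg with clipped updates, which is a convenient check when comparing private and non-private runs.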

3.4 Attack Models and Defense Mechanisms

To assess the robustness and privacy of the training procedure in Section 3.3, we examine standard inference and poisoning attacks under the federated AIS threat model. Eq. (13) defines the membership inference attack success rate, which quantifies privacy leakage by measuring how well an attacker can infer whether a sample

Low

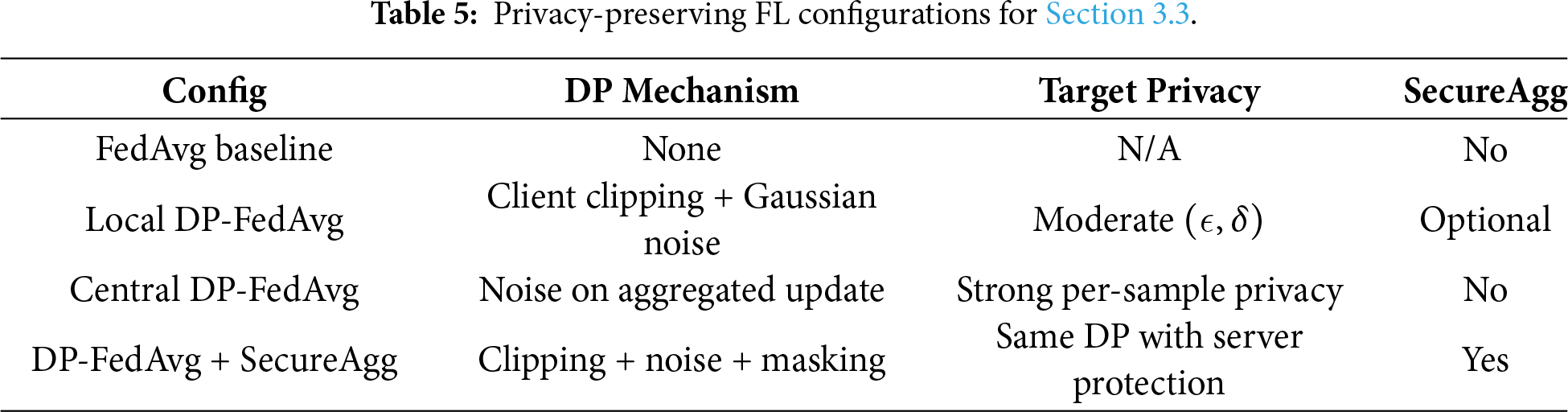
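A membership inference evaluation of this kind can be sketched with a simple score-threshold attacker. The sketch assumes the attacker assigns each sample a score (for example, the negative loss of the global model) and predicts "member" when the score reaches a threshold; the success rate is the fraction of correct guesses and the AUC is threshold-free. These definitions and names are illustrative assumptions and are not necessarily the form used in Eq. (13).

```python
import numpy as np


def attack_success_rate(member_scores, nonmember_scores, threshold):
    """Fraction of samples whose membership the threshold attacker guesses correctly."""
    members_hit = np.asarray(member_scores) >= threshold       # members flagged as members
    nonmembers_hit = np.asarray(nonmember_scores) < threshold  # non-members flagged as non-members
    return (members_hit.sum() + nonmembers_hit.sum()) / (len(members_hit) + len(nonmembers_hit))


def attack_auc(member_scores, nonmember_scores):
    """Probability that a random member outscores a random non-member (ties count one half)."""
    m = np.asarray(member_scores, dtype=float)[:, None]
    n = np.asarray(nonmember_scores, dtype=float)[None, :]
    return float(np.mean((m > n) + 0.5 * (m == n)))
```

On a balanced evaluation set, a success rate or AUC close to 0.5 means the attacker does no better than guessing.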

Robust aggregation and anomaly checks enhance security; see Table 6.
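Two widely used robust aggregation rules, coordinate-wise median and Krum, can be sketched as below. This is a generic illustration of the rules and not the paper's implementation; `num_byzantine` is the assumed upper bound on malicious clients, and Krum is written in its basic single-selection form.

```python
import numpy as np


def coordinate_median(updates):
    """Aggregate by taking the median of each coordinate across clients."""
    return np.median(updates, axis=0)


def krum(updates, num_byzantine):
    """Return the update whose nearest neighbours are closest (basic Krum)."""
    n = len(updates)
    k = n - num_byzantine - 2  # number of neighbours scored per candidate
    if k < 1:
        raise ValueError("Krum needs at least num_byzantine + 3 clients")
    # Squared Euclidean distances between all pairs of updates.
    d2 = ((updates[:, None, :] - updates[None, :, :]) ** 2).sum(axis=-1)
    scores = []
    for i in range(n):
        others = np.delete(d2[i], i)            # drop the distance to itself
        scores.append(np.sort(others)[:k].sum())
    return updates[int(np.argmin(scores))]
```

Krum discards all but one client update per round, which improves resistance to outliers at the cost of using less information than averaging.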

3.5 Framework Architecture Overview

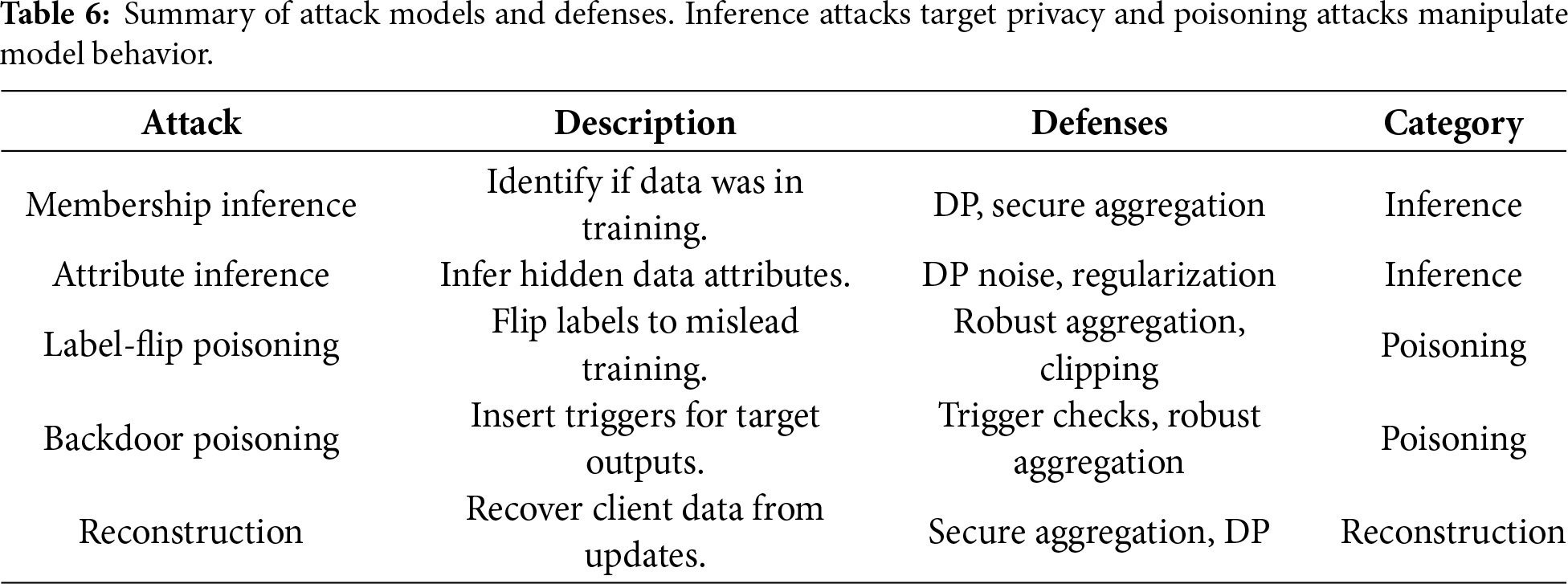

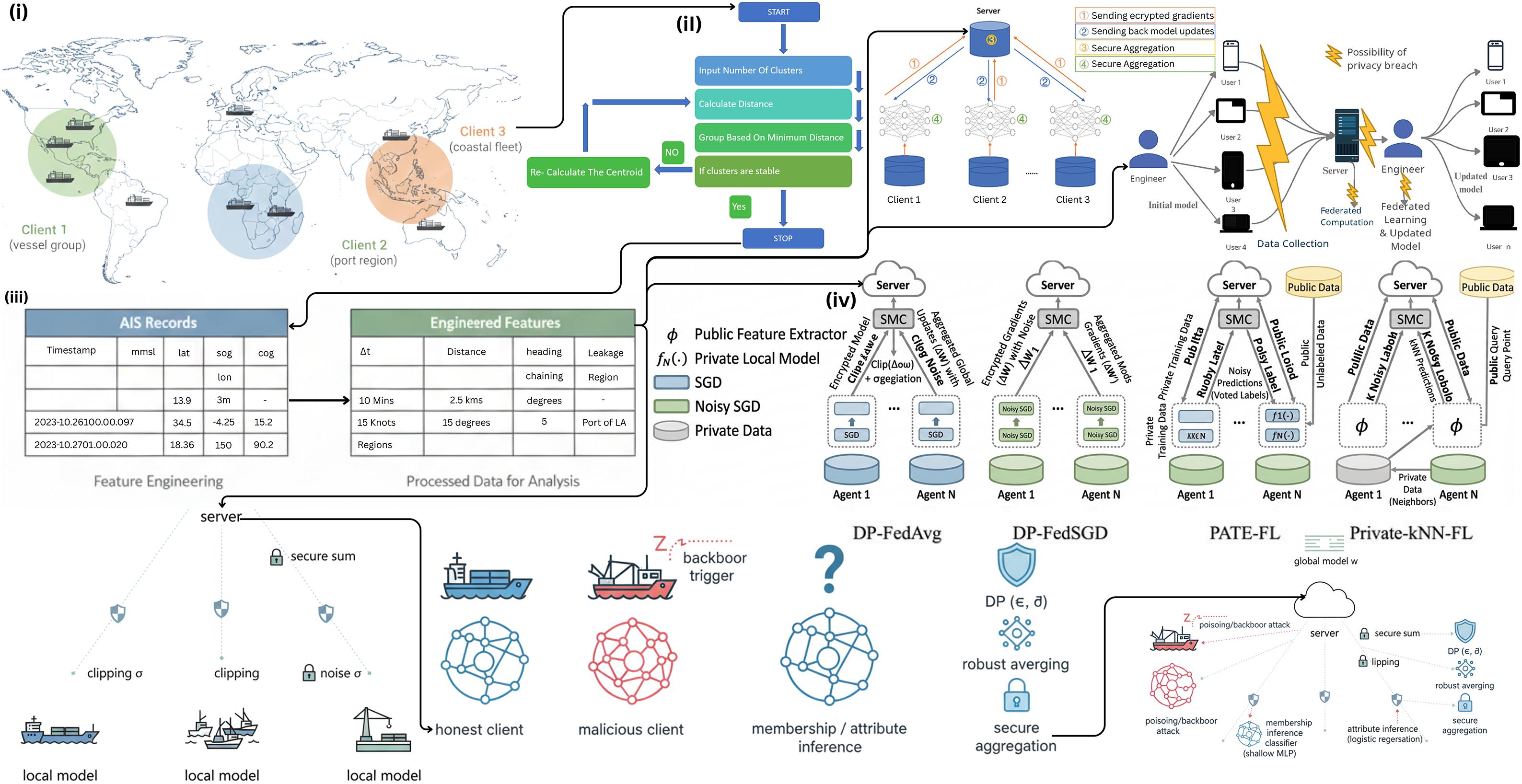

Fig. 2 summarizes the privacy-preserving federated AIS analytics pipeline. Raw AIS records (Table 2) are preprocessed into cleaned trajectories and engineered features (Table 3). Samples are grouped into virtual clients using the partitioning schemes in Table 4 and each client trains locally while the server optimizes the global objective with FedAvg (Eq. (10)) under differential privacy and secure aggregation (Table 5). The right panel illustrates the inference and poisoning threats evaluated in Section 3.4 and their defenses (Table 6). In Algorithm 1, the client update

Figure 2: Overview of the privacy-preserving federated AIS analytics framework. From left to right, the figure shows (i) vessel- and port-level client construction, (ii) AIS feature engineering, (iii) federated and differentially private training with secure aggregation, and (iv) attack and defense mechanisms. These four phases correspond to the components in Sections 3.2–3.4.

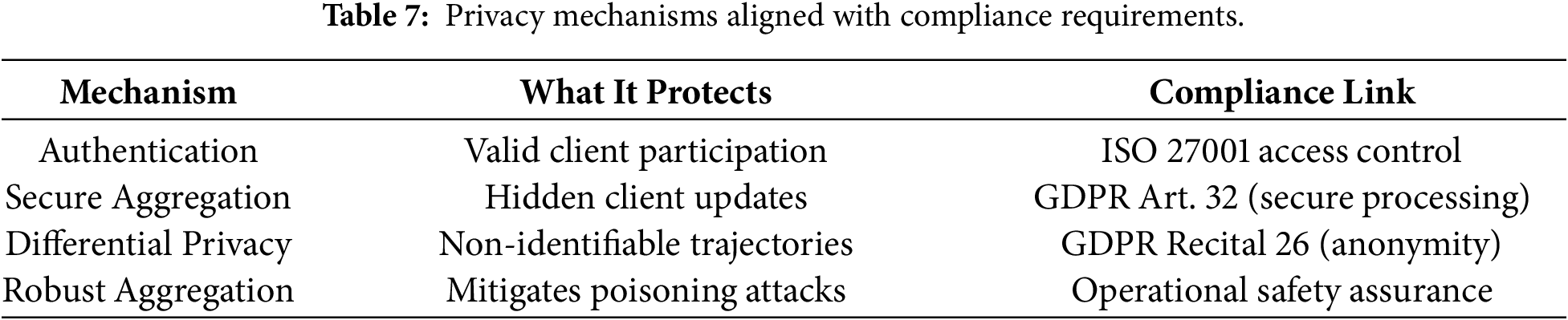

3.6 Mapping Privacy Mechanisms to Compliance

To clarify how privacy is achieved without external security services, we map each mechanism to its compliance role shown in Table 7. Privacy is enforced at the algorithmic level through differential privacy, secure aggregation and authenticated participation, ensuring that raw AIS trajectories never leave local clients.

These mappings show that privacy preservation is embedded directly into the learning workflow rather than outsourced to external security services. As a result, the framework maintains confidentiality and regulatory alignment even in zero-trust edge–cloud deployments.

This section presents the experimental setup and results.

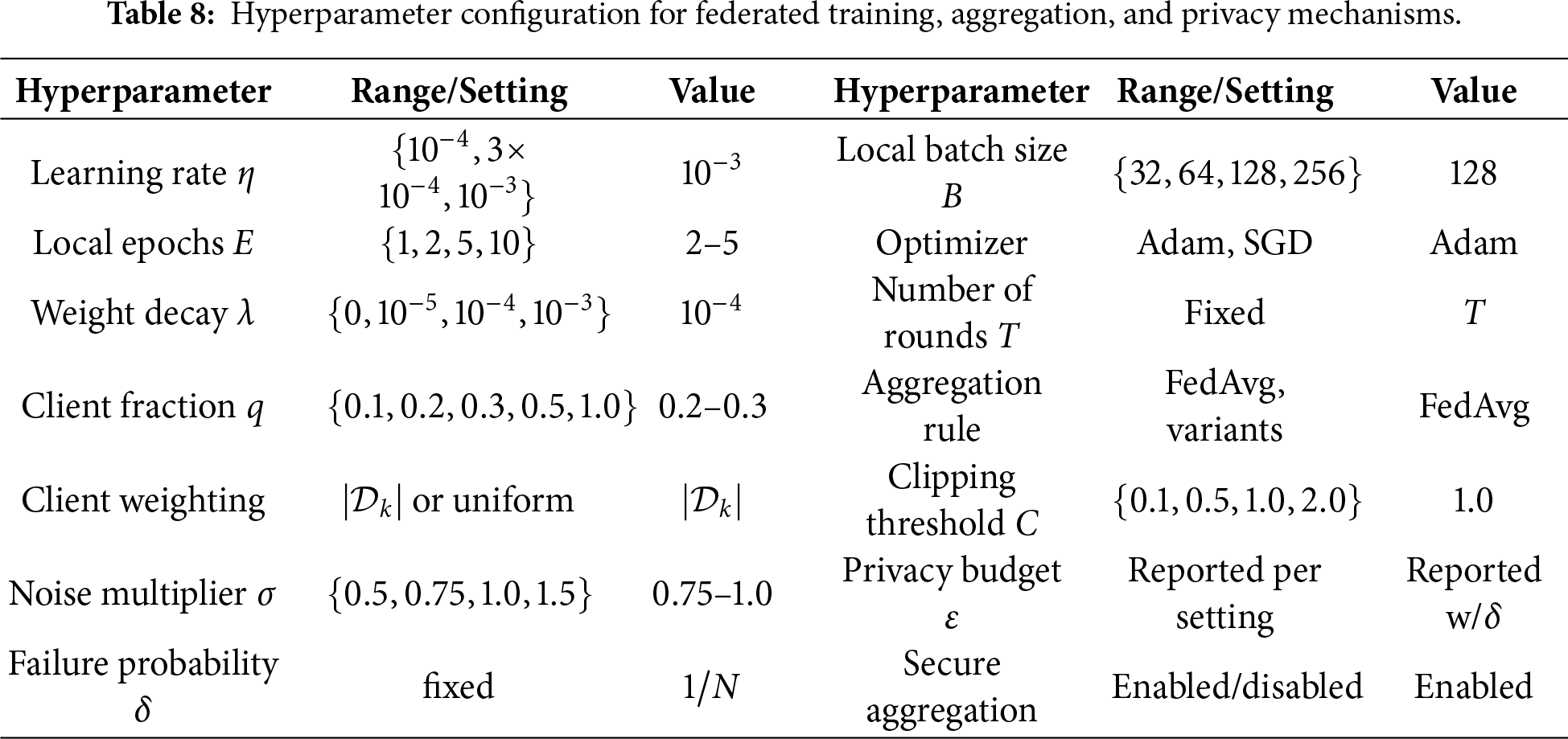

Hyperparameters are optimized through mixed search, with search ranges in Table 8.

4.2 Dataset Samples and Client Heterogeneity

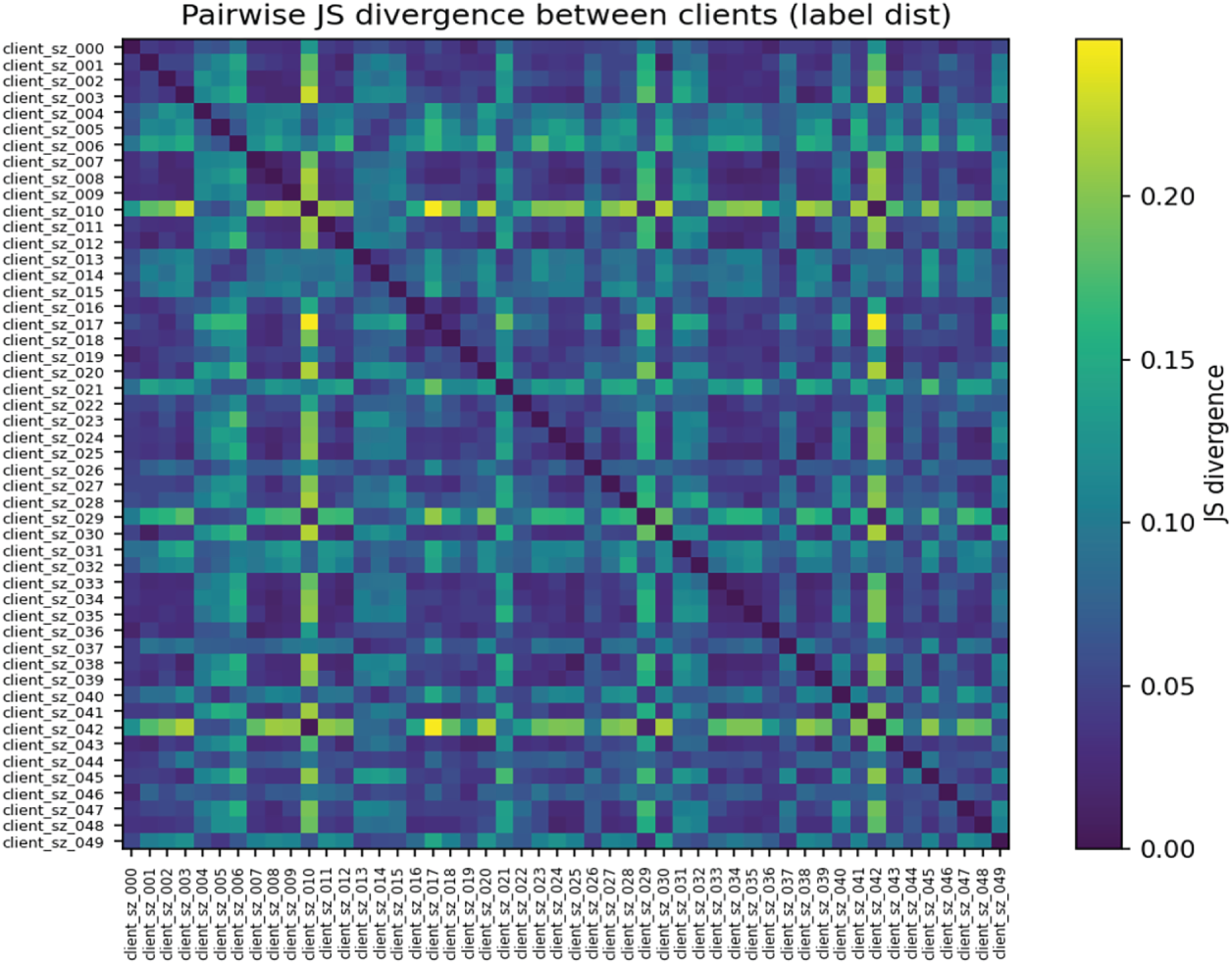

Fig. 3 shows substantial label-distribution heterogeneity across clients, with varied Jensen-Shannon divergence values highlighting the strongly non-IID data partitions used in our federated learning experiments.

Figure 3: Pairwise JS divergence between clients based on label distributions.
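The pairwise Jensen-Shannon (JS) divergence between client label distributions can be computed as in the following sketch. It assumes each client is described by a vector of non-negative label counts with at least one sample, and uses base-2 logarithms so that values lie in [0, 1]; the function names are illustrative.

```python
import numpy as np


def js_divergence(p, q):
    """Jensen-Shannon divergence (base 2) between two count or probability vectors."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    p, q = p / p.sum(), q / q.sum()
    m = 0.5 * (p + q)

    def kl(a, b):
        mask = a > 0  # terms with a == 0 contribute nothing
        return np.sum(a[mask] * np.log2(a[mask] / b[mask]))

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def pairwise_js(label_counts):
    """Symmetric matrix of JS divergences between all pairs of clients."""
    counts = np.asarray(label_counts, dtype=float)
    k = len(counts)
    out = np.zeros((k, k))
    for i in range(k):
        for j in range(i + 1, k):
            out[i, j] = out[j, i] = js_divergence(counts[i], counts[j])
    return out
```

A value of 0 means two clients have identical label proportions, and a value of 1 means their label sets do not overlap at all.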

4.3 Client Label Skew and Secure Aggregation Characteristics

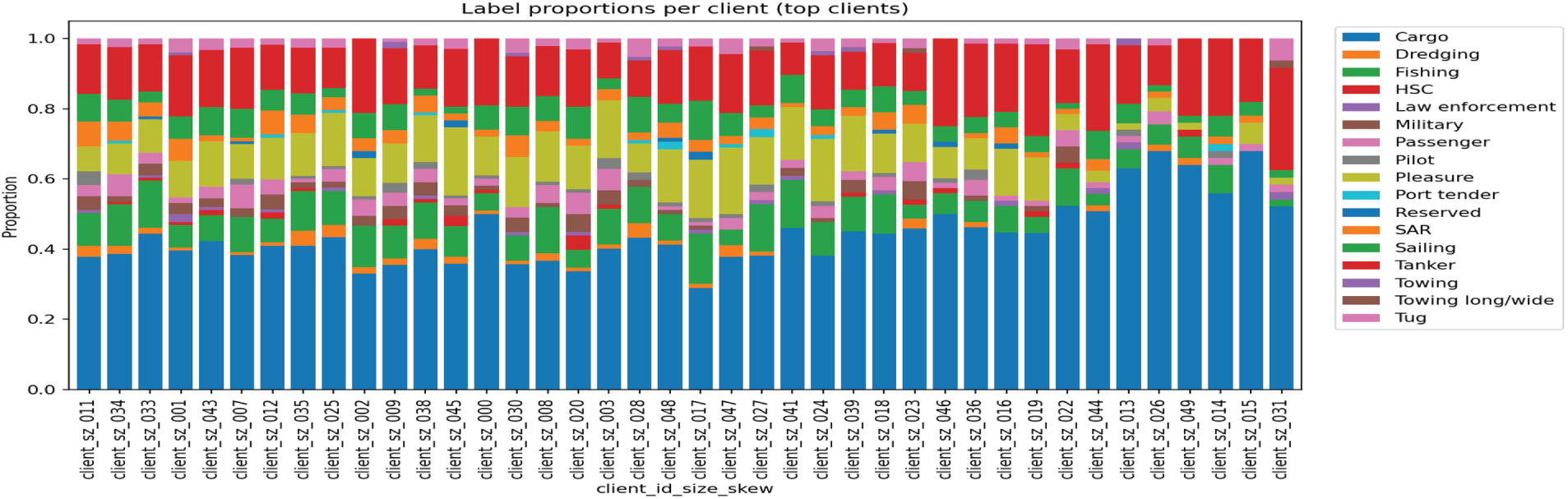

Fig. 4 illustrates pronounced label imbalance across clients, highlighting diverse vessel-type distributions and reinforcing the strongly non-IID characteristics of the federated maritime dataset.

Figure 4: Label distribution proportions for the top clients, illustrating heterogeneity in vessel-type frequencies across the federated network.

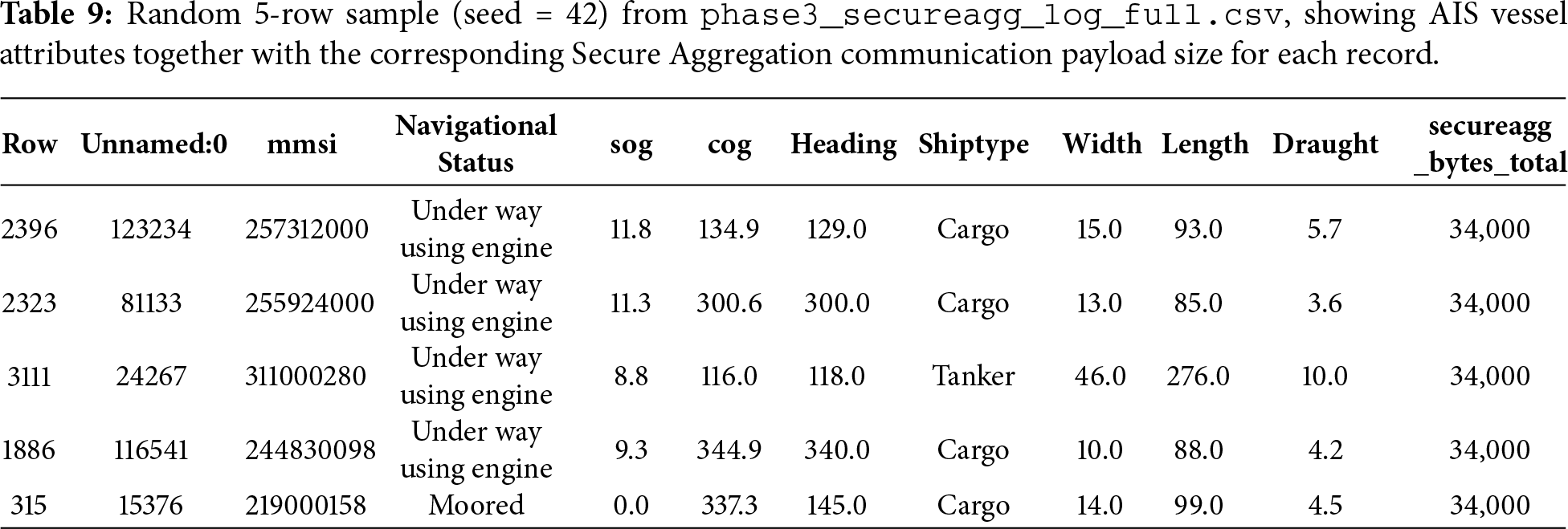

Table 9 shows a 5-row sample illustrating how AIS vessel attributes map to Secure Aggregation payload sizes during federated training. Despite variation in vessel status and characteristics, all clients produce identical 34 kB payloads, reflecting the protocol’s fixed-size encrypted updates. This uniformity prevents information leakage through message length and demonstrates communication-level privacy over heterogeneous maritime data.
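One simple way to obtain length-uniform messages is to pad every serialized update to a fixed envelope before encryption. The sketch below illustrates that idea only; the envelope size of 34 × 1024 bytes, the 4-byte length header and the function names are assumptions for illustration, and the paper does not state how its fixed payload size is produced. When all clients share one model architecture, the serialized updates already have equal length before any padding.

```python
import struct

PAYLOAD_BYTES = 34 * 1024  # hypothetical envelope size, chosen to mirror the 34 kB in Table 9


def pad_payload(body: bytes, size: int = PAYLOAD_BYTES) -> bytes:
    """Prefix the body with its length and zero-pad to exactly `size` bytes."""
    header = struct.pack(">I", len(body))  # 4-byte big-endian length
    if len(header) + len(body) > size:
        raise ValueError("update does not fit in the fixed-size envelope")
    return header + body + b"\x00" * (size - len(header) - len(body))


def unpad_payload(payload: bytes) -> bytes:
    """Recover the original body from a padded payload."""
    (length,) = struct.unpack(">I", payload[:4])
    return payload[4:4 + length]
```

Padding of this kind hides the length of the plaintext only; it would need to be applied before the encryption or masking step to be meaningful.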

4.4 Model Predictions and Utility-Privacy Trade-off

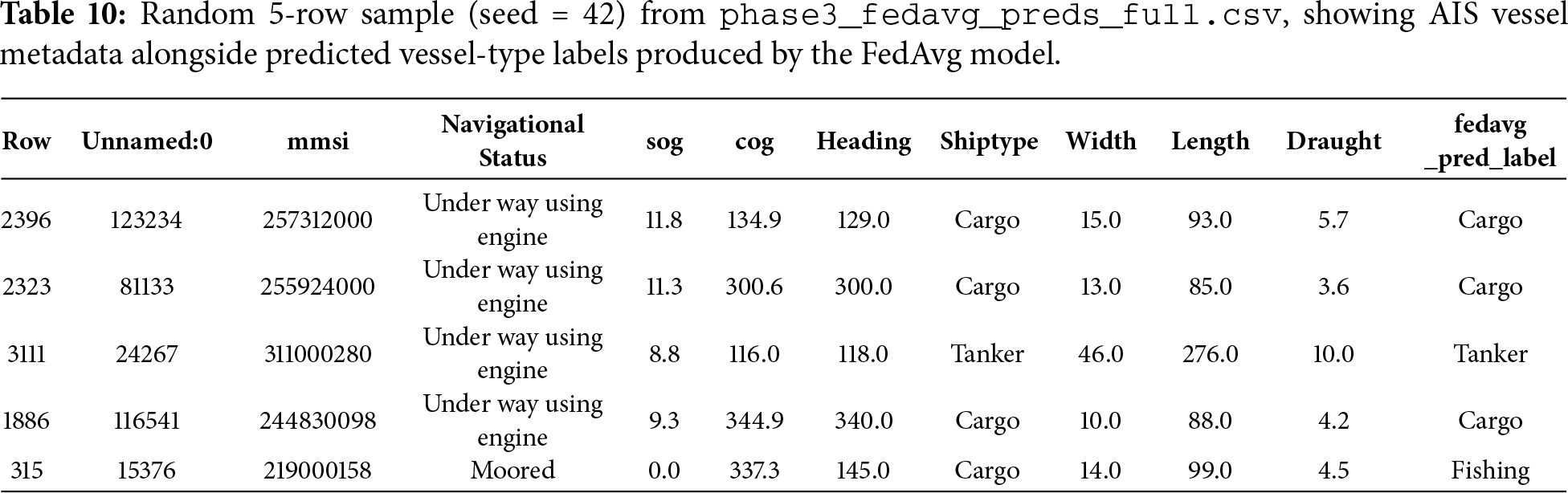

Table 10 shows a sample of AIS records alongside the vessel-type predictions generated by the FedAvg model. The model correctly identifies most Cargo and Tanker vessels, indicating that it captures meaningful motion and dimensional patterns. Occasional errors, often tied to ambiguous behaviour or missing metadata, highlight the challenges of noisy AIS data. The sample demonstrates solid baseline performance while revealing where heterogeneous or sparse client data can limit accuracy.

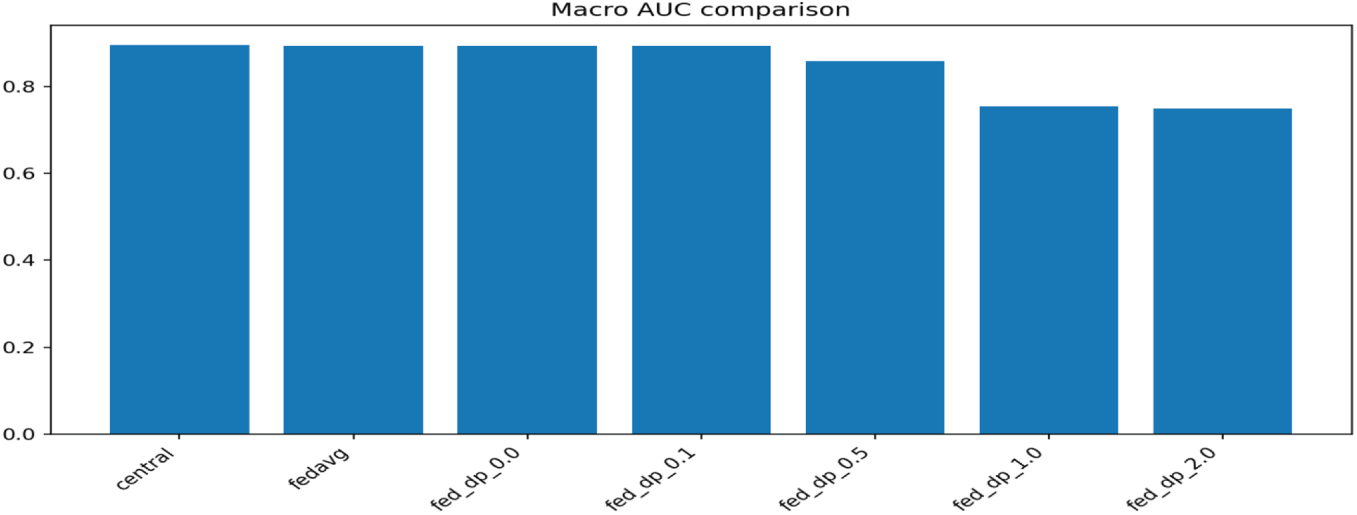

Fig. 5 shows that central and non-private FedAvg models achieve similar macro AUC, while increasing differential privacy noise gradually lowers performance, illustrating the expected utility-privacy trade-off in federated learning.

Figure 5: Macro AUC comparison between central, FedAvg and DP-federated configurations.
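For reference, macro AUC for a multi-class vessel-type classifier is commonly computed as the unweighted mean of one-vs-rest AUC values. The sketch below assumes integer-coded labels and a matrix of per-class scores; it is a generic definition and may differ in detail (for example, in how absent classes are handled) from the evaluation code used for Fig. 5.

```python
import numpy as np


def binary_auc(y_true, scores):
    """AUC as the probability that a positive outscores a negative (ties count one half)."""
    y_true = np.asarray(y_true).astype(bool)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[y_true], scores[~y_true]
    if len(pos) == 0 or len(neg) == 0:
        return float("nan")  # undefined when only one class is present
    diff = pos[:, None] - neg[None, :]
    return float(np.mean((diff > 0) + 0.5 * (diff == 0)))


def macro_auc(labels, probs):
    """Unweighted mean of one-vs-rest AUCs, skipping classes where AUC is undefined."""
    labels = np.asarray(labels)
    probs = np.asarray(probs, dtype=float)
    aucs = [binary_auc(labels == c, probs[:, c]) for c in range(probs.shape[1])]
    return float(np.nanmean(aucs))
```

Because every class contributes equally, macro AUC is sensitive to performance on rare vessel types, which matters under the label skew discussed in Section 4.3.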

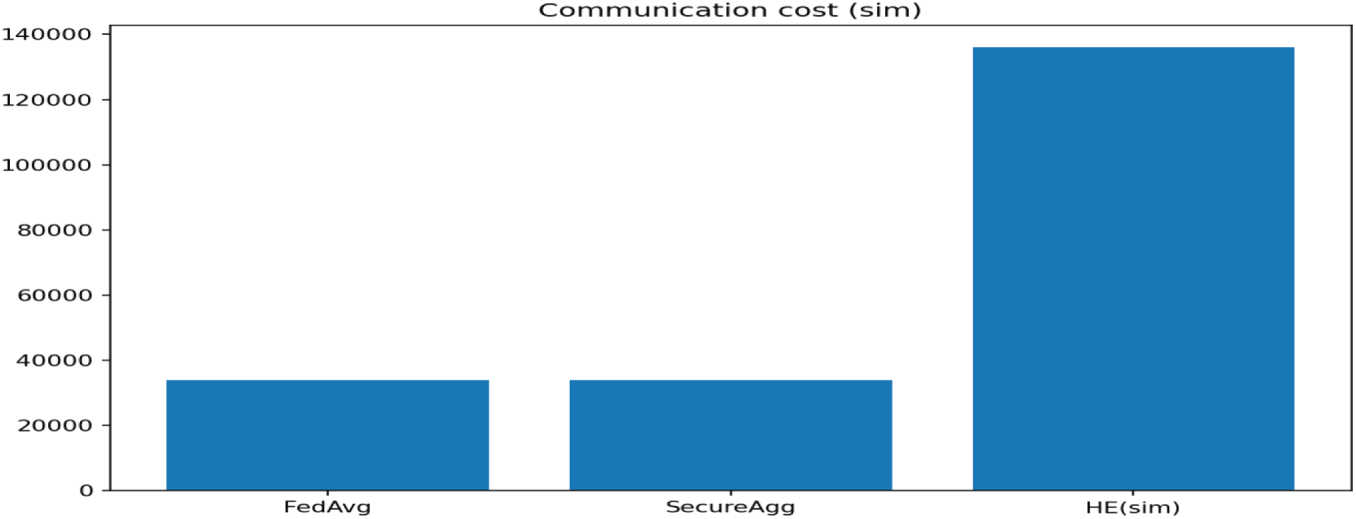

Fig. 6 shows that Secure Aggregation incurs communication costs similar to FedAvg, while Homomorphic Encryption introduces a substantially higher overhead, illustrating the trade-off between cryptographic strength and system efficiency.

Figure 6: Communication cost comparison across FedAvg, Secure Aggregation and Homomorphic Encryption (HE).

4.5 Communication Overhead and Feature Ablation Inputs

4.6 Ablation Results and Privacy Attack Evaluation

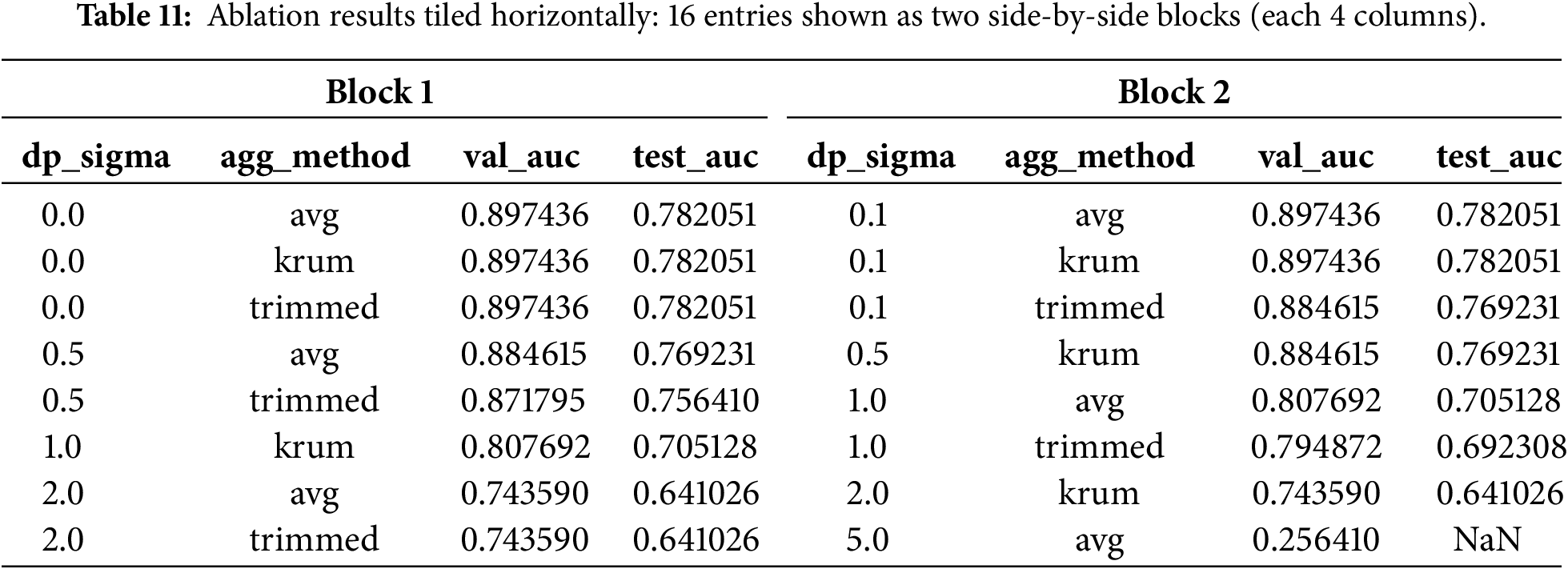

The ablation results in Table 11 show how model performance varies with different differential privacy noise levels and aggregation strategies. When no DP noise is applied (

4.7 Poisoning Robustness and Membership Inference Resistance

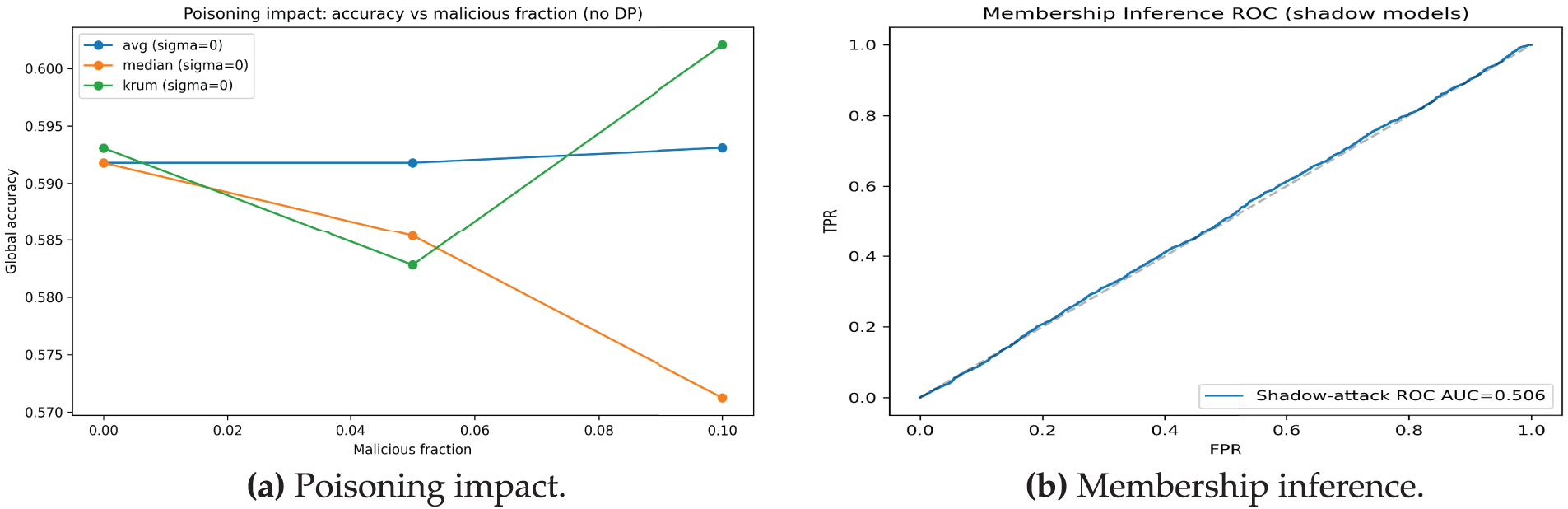

Fig. 7a shows that increasing the malicious client fraction reduces global accuracy, with median aggregation degrading fastest, while KRUM becomes more resilient under higher adversarial participation. Fig. 7b shows that the membership inference attack performs no better than random guessing, as indicated by an ROC AUC near 0.5, demonstrating minimal privacy leakage.

Figure 7: Poisoning robustness and membership inference resistance.

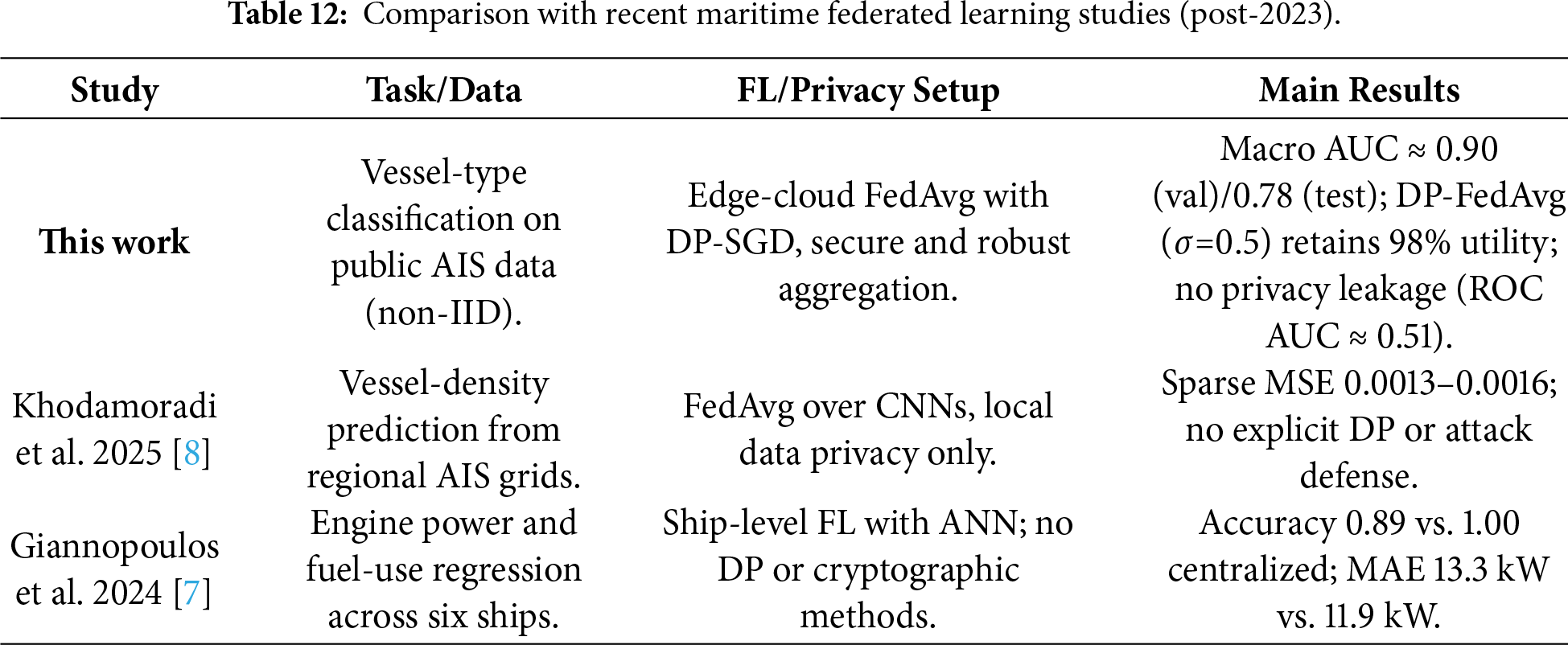

This section discusses the implications of our findings and compares the proposed framework with existing cloud security and privacy-preserving approaches. Table 12 situates our framework within recent maritime federated learning literature, highlighting differences in tasks, privacy mechanisms and resulting performance.

5.1 Utility-Privacy Trade-offs and Non-IID Federated Learning

The empirical results show that our framework maintains strong utility under heterogeneous client data. Exploratory statistics in Tables 2 and 4, together with Figs. 3 and 4, confirm substantial variation and label skew. Despite this, Fig. 5 shows that non-private FedAvg remains close to centralized performance, supported by the feature engineering in Section 3.2 and Table 3. Differential privacy degrades performance gradually (Table 11), while secure aggregation incurs minimal overhead (Table 9, Fig. 6). Although validation AUC remains high, the lower test AUC (approximately 0.78) reflects the increased difficulty of fully held-out clients under our non-IID partitioning schemes. In our setting, test clients correspond to vessels or regions with skewed label distributions (Table 4, Fig. 4), so test performance is intentionally more pessimistic than validation performance and acts as a proxy for deployment across heterogeneous maritime stakeholders. This gap therefore indicates realistic generalization challenges in strongly non-IID federated environments rather than overfitting to a single client or centralized split.

5.2 Robustness to Poisoning and Inference Attacks

The robustness results for the attacks defined in Section 3.4 show that poisoning degrades accuracy, with Fig. 7a showing median aggregation failing fastest while KRUM remains more resilient. Table 6 highlights the value of combining robust aggregation with DP and secure aggregation. Membership inference scores cluster near 0.35 and Fig. 7b shows an AUC near 0.5, consistent with the clipping and noise in Eqs. (11) and (12). Overall, layered defenses remain essential.

5.3 Practical Implications and Limitations

Beyond quantitative results, the framework provides practical advantages for cloud-enabled maritime systems. Treating vessels and ports as federated clients supports cross-organization learning without exposing raw AIS data, reducing regulatory burdens. The fixed-size encrypted payloads in Table 9 and the communication costs in Fig. 6 indicate feasibility over bandwidth-limited links. Strong FedAvg and DP-FedAvg performance under heterogeneous labels indicates that realistic traffic patterns do not prevent effective training. Remaining limitations include reliance on a single AIS dataset, simplified partitions, fixed model architectures and non-adaptive attack assumptions.

This work presented a privacy-preserving edge-cloud framework for AIS-based maritime analytics using federated learning, differential privacy and secure aggregation. Experiments showed that strong privacy protections can be applied while maintaining competitive predictive performance and communication efficiency. Robust aggregation mitigated poisoning, while clipping and noise limited membership inference. Overall, the results demonstrate that secure, collaborative maritime intelligence is feasible and that AIS data provides a strong benchmark for privacy-aware learning. Future work includes validating the framework in real maritime deployments, integrating more expressive sequence or graph models and using adaptive privacy or personalized federated optimization to improve utility. Exploring stronger privacy-enhancing technologies, such as secure multi-party computation (MPC) or post-quantum cryptography, may further enhance robustness. We also plan to extend the framework to other critical infrastructures.

Acknowledgement: We gratefully acknowledge the provider of the public AIS dataset [28].

Funding Statement: This work was supported by the Deanship of Scientific Research, Vice Presidency for Graduate Studies and Scientific Research, King Faisal University, Saudi Arabia (Grant No. KFU254769).

Author Contributions: Conceptualization & methodology: Abuzar Khan; Model & experiments: Abid Iqbal; Writing & analysis: Ghassan Husnain; Validation: Fahad Masood; Review: Mohammed Al-Naeem; Supervision: Sajid Iqbal. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: The AIS dataset used in this study is publicly available at https://www.kaggle.com/datasets/eminserkanerdonmez/ais-dataset. All resources are available at https://github.com/abuzarkhaaan/A-Secure-and-Differentially-Private-Edge-Cloud.

Ethics Approval: Ethical review was not required, as the AIS data are a public dataset.

Conflicts of Interest: The authors declare no conflicts of interest.

References

1. Ahmad Z, Acarer T, Kim W. Optimization of maritime communication workflow execution with a task-oriented scheduling framework in cloud computing. J Mar Sci Eng. 2023;11(11):2133. doi:10.3390/jmse11112133.

2. Lv T, Tang P, Zhang J. A real-time AIS data cleaning and indicator analysis algorithm based on stream computing. Sci Program. 2023;2023:8345603. doi:10.1155/2023/8345603.

3. Wolsing K, Roepert L, Bauer J, Wehrle K. Anomaly detection in maritime AIS tracks: a review of recent approaches. J Mar Sci Eng. 2022;10(1):112. doi:10.3390/jmse10010112.

4. Chen Y, Zhang G, Liu C, Lu C. Privacy-preserving modeling of trajectory data: secure sharing solutions for trajectory data based on granular computing. Mathematics. 2024;12(23):3681. doi:10.3390/math12233681.

5. Yang Y, Liu Y, Li G, Zhang Z, Liu Y. Harnessing the power of machine learning for AIS data-driven maritime research: a comprehensive review. Transport Res Part E Logis Transport Rev. 2024;183:103426. doi:10.1016/j.tre.2024.103426.

6. Charpentier V, Slamnik-Kriještorac N, Landi G, Caenepeel M, Vasseur O, Marquez-Barja JM. Paving the way towards safer and more efficient maritime industry with 5G and beyond edge computing systems. Comput Netw. 2024;250:110499. doi:10.1016/j.comnet.2024.110499.

7. Giannopoulos A, Gkonis PK, Bithas PS, Nomikos N, Ntroulias G, Trakadas P. Federated learning for maritime environments: use cases, experimental results, and open issues. TechRxiv. 2023. doi:10.36227/techrxiv.22133549.

8. Khodamoradi A, Figueiras PA, Grilo A, Lourenço L, Rêga B, Agostinho C, et al. Vessel traffic density prediction: a federated learning approach. ISPRS Int J Geo Inf. 2025;14(9):359. doi:10.3390/ijgi14090359.

9. Zhan S, Huang L, Luo G, Zheng S, Gao Z, Chao HC. A review on federated learning architectures for privacy-preserving AI: lightweight and secure cloud-edge–end collaboration. Electronics. 2025;14(13):2512. doi:10.3390/electronics14132512.

10. Jiao S, Cai L, Meng J, Zhao Y, Cheng K. Efficient DP-FL: efficient differential privacy federated learning based on early stopping mechanism. Comput Syst Sci Eng. 2024;48(1):247–65. doi:10.32604/csse.2023.040194.

11. Guan M, Bao H, Li Z, Pan H, Huang C, Dai HN. SAMFL: secure aggregation mechanism for federated learning with byzantine-robustness by functional encryption. J Syst Archit. 2024;157:103304. doi:10.1016/j.sysarc.2024.103304.

12. Tadi AA, Dayal S, Alhadidi D, Mohammed N. Comparative analysis of membership inference attacks in federated and centralized learning. Information. 2023;14(11):620. doi:10.3390/info14110620.

13. Lim LH, Ong LY, Leow MC. Federated learning for anomaly detection: a systematic review on scalability, adaptability, and benchmarking framework. Future Internet. 2025;17(8):375. doi:10.3390/fi17080375.

14. Li H, Ge L, Tian L. Survey: federated learning data security and privacy-preserving in edge-internet of things. Artif Intell Rev. 2024;57(5):130. doi:10.1007/s10462-024-10774-7.

15. Ribeiro CV, Paes A, de Oliveira D. AIS-based maritime anomaly traffic detection: a review. Expert Syst Appl. 2023;231:120561. doi:10.1016/j.eswa.2023.120561.

16. Xu C, Qu Y, Xiang Y, Gao L. Asynchronous federated learning on heterogeneous devices: a survey. Comput Sci Rev. 2023;50:100595. doi:10.1016/j.cosrev.2023.100595.

17. McMahan B, Moore E, Ramage D, Hampson S, Agüera y Arcas B. Communication-efficient learning of deep networks from decentralized data. In: Proceedings of the 20th International Conference on Artificial Intelligence and Statistics (AISTATS). Vol. 54. London, UK: PMLR; 2017. p. 1273–82.

18. Dwork C. Differential privacy. In: Bugliesi M, Preneel B, Sassone V, Wegener I, editors. Automata, Languages and Programming, 33rd International Colloquium, ICALP 2006; 2006 Jul 10–14; Venice, Italy. Vol. 4052. Cham, Switzerland: Springer; 2006. p. 1–12. doi:10.1007/11787006.

19. Mohammadi S, Balador A, Sinaei S, Flammini F. Balancing privacy and performance in federated learning: a systematic literature review on methods and metrics. J Parallel Distr Comput. 2024;192:104918. doi:10.1016/j.jpdc.2024.104918.

20. Huang P, Chen Q, Wang D, Wang M, Wu X, Huang X. TripleConvTransformer: a deep learning vessel trajectory prediction method fusing discretized meteorological data. Front Environ Sci. 2022;10:1012547. doi:10.3389/fenvs.2022.1012547.

21. Huang IL, Lee MC, Nieh CY, Huang JC. Ship classification based on AIS data and machine learning methods. Electronics. 2024;13(1):98. doi:10.3390/electronics13010098.

22. Louart M, Szkolnik JJ, Boudraa AO, Le Lann JC, Le Roy F. Detection of AIS messages falsifications and spoofing by checking messages compliance with TDMA protocol. Digit Signal Process. 2023;136:103983. doi:10.1016/j.dsp.2023.103983.

23. Varshitha GS, Rupa C, Divya D, Gadekallu TR, Srivastava G. A survey of authentication protocols for enhancing security in underwater communication systems. In: 2025 IEEE 34th Wireless and Optical Communications Conference (WOCC). Piscataway, NJ, USA: IEEE; 2025. p. 1–6.

24. Rupa C, Varshitha GS, Divya D, Gadekallu TR, Srivastava G. A novel and robust authentication protocol for secure underwater communication systems. IEEE Internet Things J. 2025;12(22):47519–31. doi:10.1109/jiot.2025.3601984.

25. Barona López LI, Borja Saltos T. Heterogeneity challenges of federated learning for future wireless communication networks. J Sens Actuator Netw. 2025;14(2):37. doi:10.3390/jsan14020037.

26. Tayyeh HK, AL-Jumaili ASA. Balancing privacy and performance: a differential privacy approach in federated learning. Computers. 2024;13(11):277. doi:10.3390/computers13110277.

27. Zhang X, Luo Y, Li T. A review of research on secure aggregation for federated learning. Future Internet. 2025;17(7):308. doi:10.3390/fi17070308.

28. Erdonmez ES. AIS dataset: transition of ships at Kattegat Strait; 2020. Kaggle Dataset. [cited 2026 Jan 10]. Available from: https://www.kaggle.com/datasets/eminserkanerdonmez/ais-dataset.

Copyright © 2026 The Author(s). Published by Tech Science Press. This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
