Open Access

REVIEW

Large Language Models for Cybersecurity Intelligence: A Systematic Review of Emerging Threats, Defensive Capabilities, and Security Evaluation Frameworks

1 Informatics and Computer Systems Department, College of Computer Science, Center of Artificial Intelligence, King Khalid University, Abha, Saudi Arabia

2 Department of Computer Applications, Shaheed Bhagat Singh State University, Ferozepur, Punjab, India

* Corresponding Author: Gulshan Kumar. Email:

Computers, Materials & Continua 2026, 87(3), 9 https://doi.org/10.32604/cmc.2026.077367

Received 08 December 2025; Accepted 09 February 2026; Issue published 09 April 2026

Abstract

Large Language Models (LLMs) are becoming integral components of modern cybersecurity ecosystems, simultaneously strengthening defensive capabilities while giving rise to a new class of Artificial Intelligence–Generated Content (AIGC)-driven threats. This PRISMA-guided systematic review synthesises 167 peer-reviewed studies published between 2022 and 2025 and proposes a unified threat–defence–evaluation taxonomy as a central analytical framework to consolidate a previously fragmented body of research. Guided by this taxonomy, the review first examines AIGC-enabled threats, including automated and highly personalised phishing, polymorphic malware and exploit generation, jailbreak and adversarial prompting, prompt-injection attack vectors, multimodal deception, persona-steering attacks, and large-scale disinformation campaigns. The surveyed evidence indicates a qualitative escalation in adversarial capabilities, with LLMs significantly enhancing scalability, adaptability, and realism while markedly reducing the technical barriers to conducting sophisticated attacks. Second, the review analyses LLM-enabled defensive applications spanning intrusion and anomaly detection, malware analysis and log-semantic modelling, multilingual threat intelligence extraction, vulnerability discovery and code repair, and Security Operations Center (SOC) automation through Retrieval-Augmented Generation (RAG) and multi-agent systems. Although these approaches demonstrate strong potential as semantic reasoning and decision-support components within hybrid security architectures, their real-world effectiveness remains constrained by hallucination risks, adversarial susceptibility, distributional shifts, and operational overhead. Third, the review synthesises current security evaluation and red-teaming practices, revealing a fragmented assessment landscape characterised by narrow benchmarks, inconsistent evaluation metrics, and limited longitudinal robustness analysis. 
Overall, the taxonomy-driven synthesis highlights a structurally imbalanced ecosystem in which offensive innovation outpaces defensive maturity and governance, and it informs a structured, research-question-aligned roadmap for developing trustworthy, resilient, and policy-aligned LLM-powered cybersecurity systems.

Large Language Models (LLMs), developed using large-scale transformer architectures, have rapidly emerged as foundational components of contemporary artificial intelligence, enabling significant advances in reasoning, code generation, summarisation, automated analysis, and decision support across high-stakes application domains [1,2]. Their capacity to process heterogeneous, unstructured, and multilingual data at scale renders them particularly well suited to cybersecurity environments, where analysts must interpret complex and high-volume artefacts, including system logs, threat intelligence reports, malware analyses, vulnerability disclosures, and Security Operations Center (SOC) narratives [3–5]. At the same time, the same generative and reasoning capabilities that enable defensive augmentation can be readily exploited by adversaries. A rapidly expanding body of peer-reviewed research documents the use of LLMs to craft highly personalised phishing campaigns [6,7], generate polymorphic and evasive malware [8,9], automate reconnaissance and exploit scaffolding [10], conduct multimodal deception and persona-steering attacks [11,12], and orchestrate large-scale misinformation and disinformation operations [13,14]. This dual-use character has profound implications for the evolving balance between cyber offence and cyber defence.

Despite growing interest in this area, existing surveys exhibit substantial variation in scope, methodological rigor, and evidentiary depth. Yao et al. synthesise model-level vulnerabilities and privacy risks associated with LLM deployment [15]. Jaffal et al. provide a detailed examination of LLM capabilities, limitations, and defensive applications within SOC and cyber threat intelligence (CTI) ecosystems [3]. Zhang et al. offer a structured review of LLM-driven cybersecurity applications [16], while Karras et al. discuss broader opportunities and risks in big-data security pipelines [4]. However, these surveys share notable limitations: many rely primarily on narrative synthesis rather than systematic review protocols; several include a substantial proportion of preprint literature; few integrate offensive, defensive, and evaluative research strands within a single analytical framework; and none provide a PRISMA [17]-aligned, SCI/SCIE-restricted taxonomy that jointly captures threats, defences, and security evaluation methodologies. As the field continues to expand rapidly, the absence of a comprehensive and methodologically transparent synthesis impedes cross-study comparability, evidence consolidation, and the standardisation of research practices.

To address these gaps, this article presents the first PRISMA-compliant systematic survey of LLMs for cybersecurity intelligence covering the period 2022–2025, explicitly restricted to peer-reviewed SCI/SCIE publications. The overarching objective is to construct a rigorous and unified evidence base that characterises how LLMs reshape cyber offence, cyber defence, and the evaluation ecosystems governing trustworthy deployment. Specifically, this study pursues four core aims:

• to systematically identify and synthesise Artificial Intelligence–Generated Content (AIGC)-driven cyber threats enabled, amplified, or operationalised by LLMs, including phishing, malware generation, jailbreak attacks, deception, misinformation, and persona steering [11];

• to analyse LLM-enabled defensive capabilities spanning intrusion detection [18], malware semantic modelling [19], threat intelligence extraction [20,21], vulnerability assessment and remediation [22], SOC automation [23], and multi-agent defence architectures [24];

• to examine emerging security evaluation frameworks, including benchmarks [25], harm and refusal metrics [3], adversarial red-teaming methodologies [15,26], and lifecycle-oriented risk assessments [27], alongside the growing need for ethical and governance frameworks [28,29];

• to distil cross-cutting methodological limitations, systemic risks (including privacy [30] and ethical compliance [31]), and unresolved research challenges derived from 167 peer-reviewed primary studies and several high-impact surveys.

These aims motivate the following research questions:

• RQ1: What forms of cybersecurity threats are generated, enhanced, or operationalised through AIGC and LLMs, and how are these threats empirically studied and modelled?

• RQ2: How are LLMs leveraged to strengthen cyber defence capabilities across detection, analysis, and response workflows, and what limitations persist?

• RQ3: What security evaluation frameworks, benchmarks, and red-teaming methodologies exist for assessing LLM robustness, safety, and reliability under adversarial conditions?

• RQ4: What methodological gaps, systemic challenges, and governance limitations hinder the trustworthy deployment of LLM-based cybersecurity systems?

The primary contributions of this survey are as follows:

• a PRISMA-aligned systematic review protocol tailored to LLM-centric cybersecurity research, detailing search strategies, inclusion and exclusion criteria, quality appraisal procedures, and multi-stage screening;

• a critical comparison of influential surveys [3,4,15,16], motivating the unified threat–defence–evaluation taxonomy introduced in this work;

• a structured synthesis of 167 peer-reviewed primary studies addressing AIGC-driven threats [6,13], LLM-based defensive mechanisms [18,19,23], and security evaluation and red-teaming frameworks [25,32];

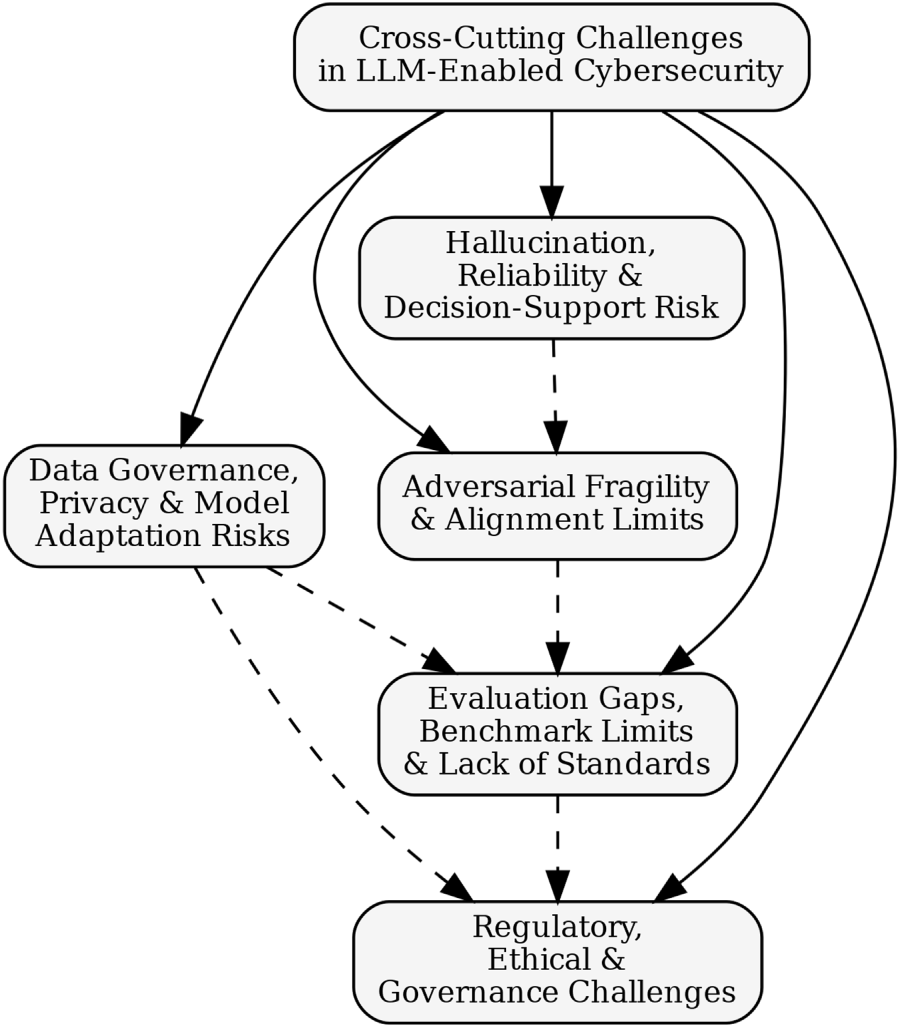

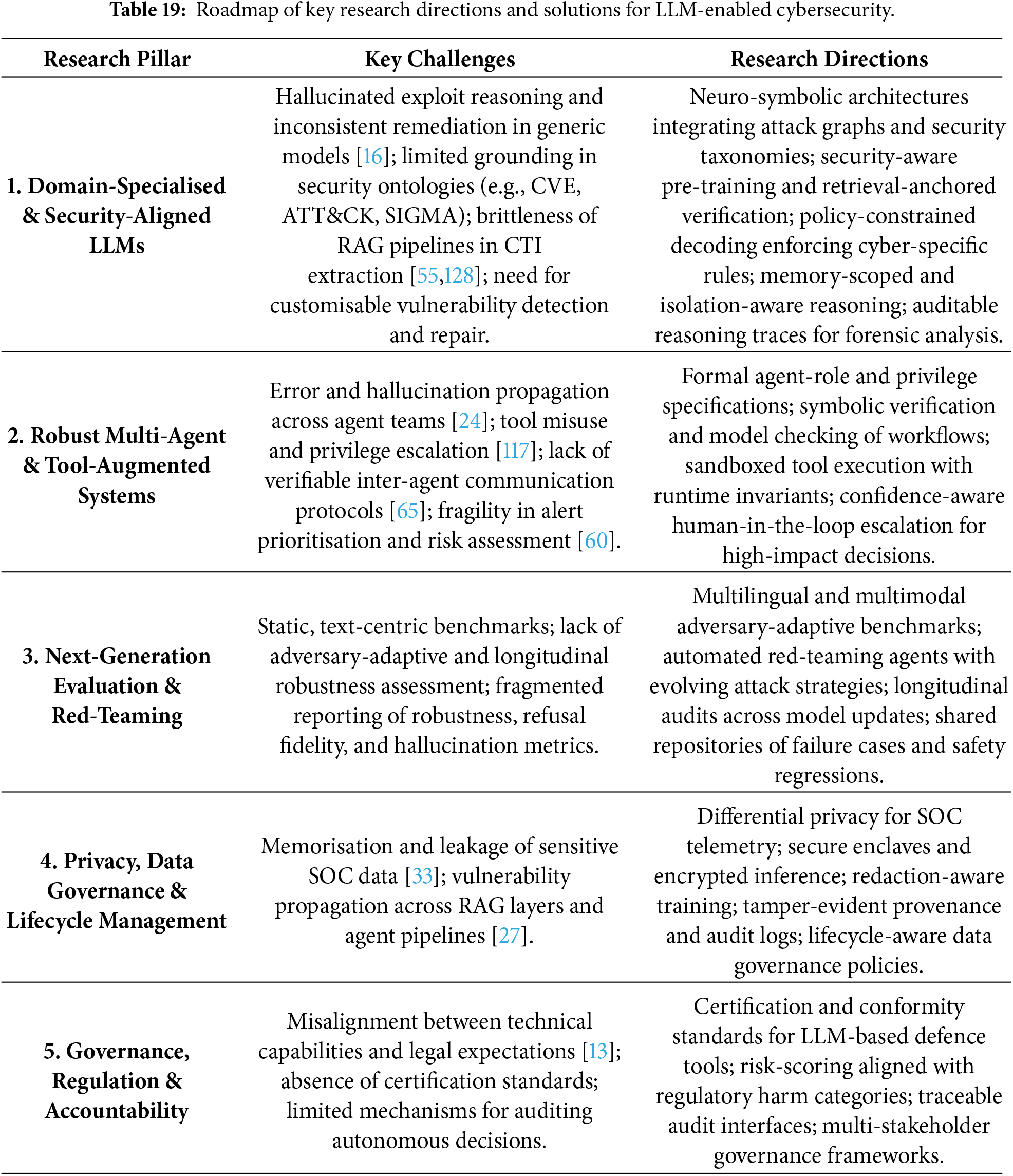

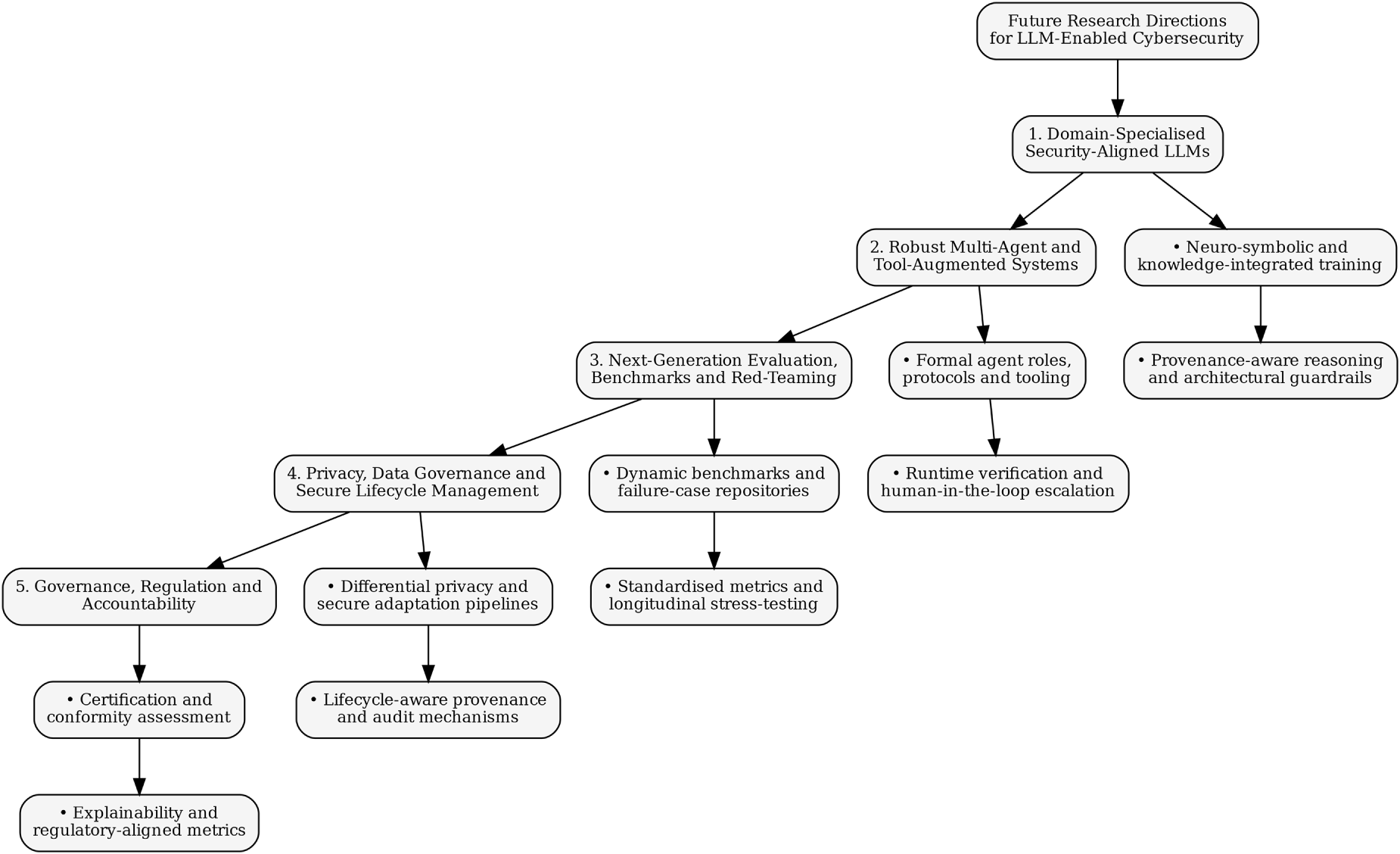

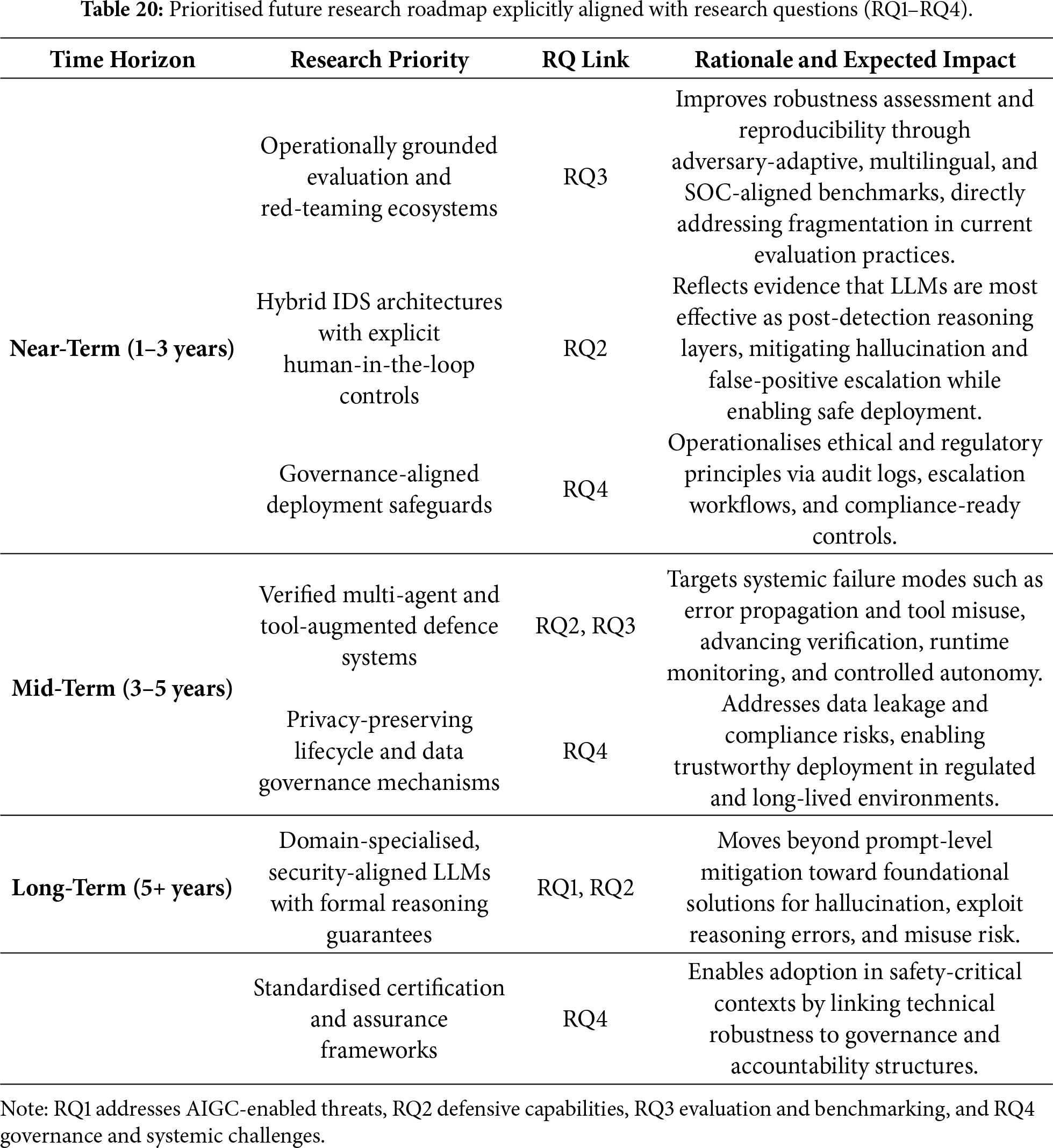

• the identification of cross-cutting challenges—including hallucination, adversarial fragility, privacy and governance risks, evaluation fragmentation, lifecycle vulnerabilities, and the absence of standardised assurance frameworks—followed by a comprehensive future research roadmap for resilient, auditable, and security-aligned LLM deployments.

In contrast to prior surveys, this review grounds each threat and defence category in empirically evaluated case studies and critically analyses their operational limitations under realistic SOC and intrusion detection deployment scenarios. Overall, this survey offers a methodologically rigorous, conceptually integrated, and empirically grounded examination of how LLMs are transforming the cybersecurity landscape, both as powerful enablers of defensive intelligence and as catalysts for increasingly adaptive and scalable adversarial behaviour. By consolidating diverse research trajectories and introducing a unified taxonomy alongside a forward-looking research agenda, this work aims to support the development of trustworthy, verifiable, and operationally reliable LLM-driven cybersecurity systems.

The remainder of this article is organised as follows. Section 2 describes the PRISMA-guided review methodology. Section 3 situates this study within the existing survey literature. Section 4 presents the unified threat–defence–evaluation taxonomy and analytical framework. Sections 5 and 6 analyse AIGC-driven threats and LLM-based defensive capabilities, while Section 7 reviews emerging security evaluation and red-teaming methodologies. Section 8 examines methodological patterns across the evidence base. Section 9 synthesises cross-cutting challenges, and Section 10 outlines strategic future research directions. Section 11 concludes the paper.

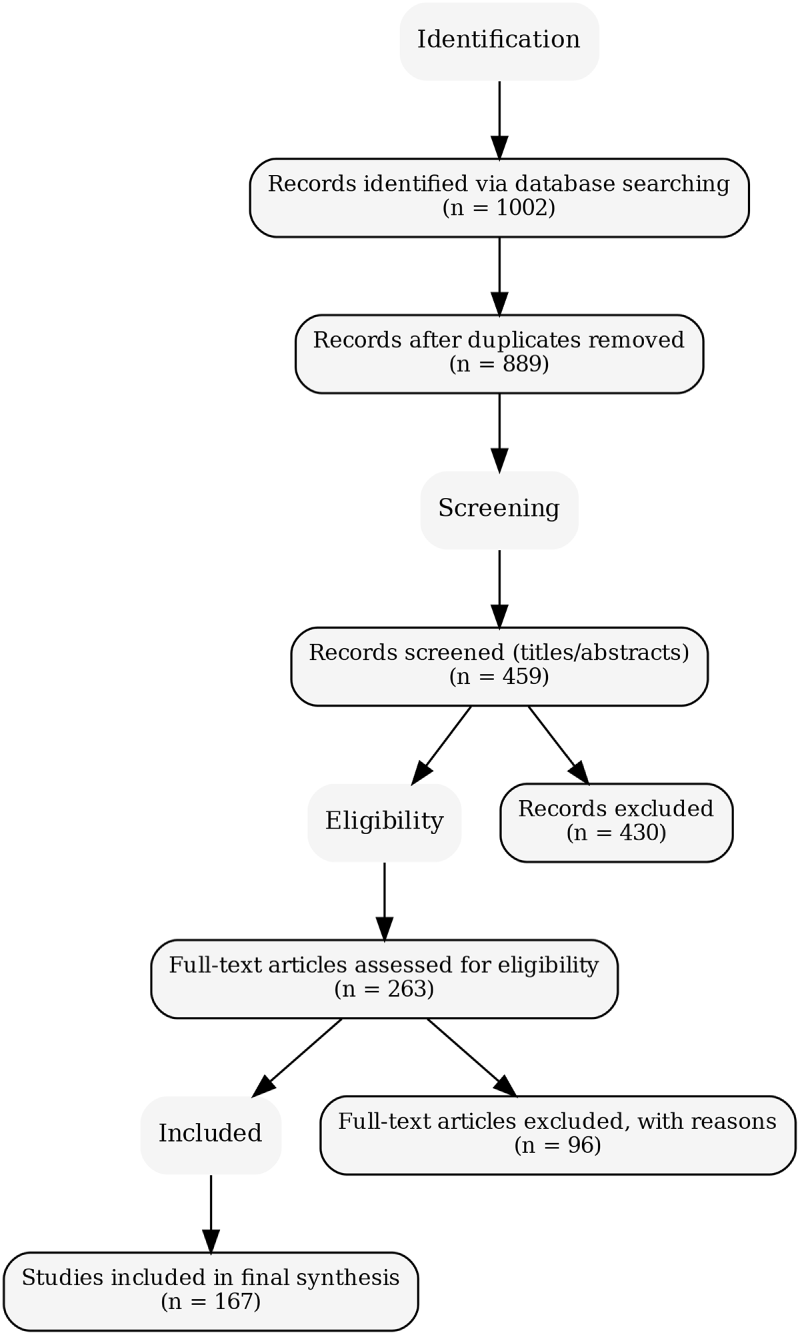

This review adopts a rigorously defined systematic literature review (SLR) methodology grounded in the PRISMA 2020 guidelines to ensure transparency, reproducibility, and analytical completeness. Given the rapid evolution and methodological heterogeneity of Large Language Model (LLM) research in cybersecurity, particular emphasis is placed on bias mitigation, inductive taxonomy construction, and cross-study comparability. The final evidence base comprises 167 peer-reviewed studies published between 2022 and 2025, each systematically mapped to the unified threat–defence–evaluation taxonomy introduced in Section 4. An overview of the study identification and selection process is provided in the PRISMA flow diagram shown in Fig. 1.

Figure 1: PRISMA flow diagram summarising identification, screening, eligibility assessment, and final inclusion of 167 studies.

2.1 Review Protocol and PRISMA Workflow

The review protocol was specified a priori in accordance with PRISMA recommendations to minimise selection bias and enhance methodological transparency. The review workflow comprised four sequential stages: identification, screening, eligibility assessment, and final inclusion. The initial search yielded 1002 records across multiple scholarly databases. Following automated and manual deduplication, 889 unique studies were subjected to title and abstract screening, resulting in the exclusion of 430 records. The remaining 459 studies underwent detailed screening, leading to 263 full-text articles being assessed for eligibility. Of these, 96 studies were excluded due to insufficient cybersecurity relevance, incomplete methodological reporting, or failure to satisfy the predefined inclusion criteria. The final corpus therefore consisted of 167 peer-reviewed studies, representing a balanced cross-section of AIGC-driven threats, LLM-enabled defensive architectures, and security evaluation, safety, and governance frameworks. Fig. 1 provides a transparent visual summary of this selection process.
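
The counts reported above can be cross-checked with simple arithmetic. The following sketch is illustrative only: the variable names are labels chosen here rather than terminology from the protocol, and the two derived figures (duplicates removed and records dropped during detailed screening) are inferred by subtraction and are not stated in the text.

```python
# Counts as reported in Section 2.1; variable names are illustrative labels.
records_identified = 1002
unique_after_dedup = 889
excluded_title_abstract = 430
full_text_assessed = 263
excluded_full_text = 96

# Derived quantities (inferred by subtraction, not reported in the text).
duplicates_removed = records_identified - unique_after_dedup
remaining_after_title_abstract = unique_after_dedup - excluded_title_abstract
dropped_in_detailed_screening = remaining_after_title_abstract - full_text_assessed
final_included = full_text_assessed - excluded_full_text

print(f"duplicates removed:            {duplicates_removed}")
print(f"after title/abstract screen:   {remaining_after_title_abstract}")
print(f"dropped in detailed screening: {dropped_in_detailed_screening}")
print(f"final corpus:                  {final_included}")
```

The reported stage totals (459 records after title and abstract screening, 167 studies in the final corpus) are consistent with one another under this reading of the workflow.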

2.2 Inductive Taxonomy Construction and Coding Rationale

Rather than imposing an externally defined classification scheme, this review employs a bottom-up inductive coding strategy to derive the unified taxonomy presented in Section 4. Following full-text screening, each study was independently coded along multiple analytical dimensions, including: (i) the primary cybersecurity task addressed, (ii) the functional role of the LLM within the system architecture, (iii) the threat or defensive context, (iv) the evaluation or validation methodology, and (v) the assumed deployment environment (e.g., laboratory-scale, SOC-scale, or safety-critical systems).
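
One way to picture the five coding dimensions is as a fixed record completed for each study. The sketch below is a hypothetical coding template, not the instrument used in this review: the class and field names are assumptions introduced for illustration, and the example record is a placeholder that does not describe any study in the corpus.

```python
from dataclasses import dataclass
from enum import Enum


class Deployment(Enum):
    """Deployment environments named in the text (dimension v)."""
    LABORATORY = "laboratory-scale"
    SOC = "SOC-scale"
    SAFETY_CRITICAL = "safety-critical"


@dataclass(frozen=True)
class CodedStudy:
    """Hypothetical coding record; field names are illustrative."""
    study_id: str            # assumed identifier, e.g. a citation key
    task: str                # (i) primary cybersecurity task addressed
    llm_role: str            # (ii) functional role of the LLM in the system
    context: str             # (iii) threat or defensive context
    evaluation: str          # (iv) evaluation or validation methodology
    deployment: Deployment   # (v) assumed deployment environment


# Placeholder record for illustration only; it does not describe a real study.
example = CodedStudy(
    study_id="example-001",
    task="phishing detection",
    llm_role="classifier with natural-language rationale",
    context="defence",
    evaluation="held-out benchmark",
    deployment=Deployment.LABORATORY,
)
print(example)
```

Freezing the record keeps a coded entry from being altered silently after reviewers have agreed on it, which is one plausible way to support the consistency goals described in this section.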

Initial open coding revealed recurring conceptual and methodological patterns across ostensibly diverse contributions. Through iterative axial coding and constant comparative analysis, these patterns converged into four dominant dimensions: (T) AIGC-driven threat vectors, (D) LLM-based defensive capabilities, (E) evaluation and red-teaming methodologies, and (M) methodological and governance orientation. For instance, studies addressing automated phishing, misinformation generation, exploit scaffolding, and prompt-based attacks consistently clustered within a unified threat dimension, whereas contributions focusing on SOC automation, intrusion detection, vulnerability analysis, and agentic response mechanisms formed a distinct defensive axis.

Subcategories within each dimension were further refined through frequency analysis and conceptual differentiation. Within the defensive dimension, intrusion detection, threat intelligence automation, secure code analysis, and autonomous response emerged as separable categories based on differences in data modality, latency constraints, and operational responsibility. Evaluation-oriented studies similarly diverged into benchmark construction, adversarial red-teaming, metric development, and lifecycle robustness analysis. Edge cases and hybrid studies were explicitly retained and cross-mapped to reflect the interdisciplinary and rapidly converging nature of LLM-driven cybersecurity research. This inductive process ensures that the resulting taxonomy faithfully reflects the empirical structure of the literature rather than an a priori conceptual framework.
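
The frequency analysis and cross-mapping of hybrid studies described above can be sketched as follows. The dimension codes (T, D, E, M) follow the text; the study labels and their assignments are placeholders invented for illustration, and the function name is an assumption rather than part of the review protocol.

```python
from collections import Counter

# Dimension codes as named in the text.
DIMENSIONS = {
    "T": "AIGC-driven threat vectors",
    "D": "LLM-based defensive capabilities",
    "E": "evaluation and red-teaming methodologies",
    "M": "methodological and governance orientation",
}

# Placeholder assignments; these are not studies from the reviewed corpus.
coded = {
    "study-a": {"T"},
    "study-b": {"D"},
    "study-c": {"D", "E"},  # hybrid study, cross-mapped to two dimensions
    "study-d": {"T", "E"},  # hybrid study, cross-mapped to two dimensions
}


def dimension_frequencies(assignments):
    """Count how many studies map to each dimension (hybrids count once per dimension)."""
    counts = Counter()
    for study, dims in assignments.items():
        unknown = dims - set(DIMENSIONS)
        if unknown:
            raise ValueError(f"{study}: unknown dimension codes {sorted(unknown)}")
        counts.update(dims)
    return counts


frequencies = dimension_frequencies(coded)
hybrids = sorted(s for s, dims in coded.items() if len(dims) > 1)

for code in DIMENSIONS:
    print(f"{code} ({DIMENSIONS[code]}): {frequencies[code]}")
print("hybrid studies:", hybrids)
```

Because hybrid studies are counted under every dimension they touch, the per-dimension totals can sum to more than the number of studies, which is the expected consequence of retaining and cross-mapping edge cases.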

2.3 Search Strategy and Information Sources

Searches were conducted across eight authoritative scholarly databases: IEEE Xplore, ACM Digital Library, ScienceDirect, SpringerLink, Wiley Online Library, Taylor & Francis, Scopus, and Web of Science. These sources were selected to capture interdisciplinary research spanning cybersecurity, artificial intelligence, software engineering, and digital governance. The review period extends from January 2022 to December 2025, corresponding to the widespread deployment of transformer-based LLMs (e.g., GPT-3+, LLaMA, PaLM, Codex) in both offensive and defensive cybersecurity contexts.

Search queries combined terms related to LLM architectures, cyber threat categories, defensive applications, and evaluation constructs using Boolean operators:

(“large language model” OR LLM OR “foundation model” OR transformer) AND (cybersecurity OR malware OR phishing OR vulnerability OR anomaly OR SOC) AND (AIGC OR “automated phishing” OR jailbreak OR “prompt injection” OR misuse)

Search expressions were iteratively refined through pilot queries to incorporate emerging themes such as adversarial prompting, exploit generation, multi-agent reasoning, and cyber governance. Foundational surveys on LLM security and misuse [33–36] informed construct definition and ensured terminological consistency across the search strategy.
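
The Boolean expression given in this section can be assembled from three term groups, which makes iterative refinement easier to track. The sketch below is a minimal illustration: the helper name and group names are assumptions, and individual databases may require their own field tags or quoting rules that are not modelled here.

```python
def or_group(terms):
    """Join terms with OR, quoting multi-word phrases, and wrap in parentheses."""
    quoted = [f'"{term}"' if " " in term else term for term in terms]
    return "(" + " OR ".join(quoted) + ")"


# Term groups taken from the query reported in Section 2.3.
MODEL_TERMS = ["large language model", "LLM", "foundation model", "transformer"]
DOMAIN_TERMS = ["cybersecurity", "malware", "phishing", "vulnerability", "anomaly", "SOC"]
MISUSE_TERMS = ["AIGC", "automated phishing", "jailbreak", "prompt injection", "misuse"]

query = " AND ".join(or_group(group) for group in (MODEL_TERMS, DOMAIN_TERMS, MISUSE_TERMS))
print(query)
```

Keeping each group as a list means a pilot query can add a term such as an emerging attack name to one group without retyping the whole expression.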

2.4 Inclusion, Exclusion, and Quality Appraisal

Studies were included if they were peer-reviewed journal or conference publications, written in English and published between 2022 and 2025, that examined LLMs or transformer-based models within cybersecurity-relevant contexts. Eligible studies addressed AIGC-enabled threats such as phishing, deception, exploit scaffolding, or jailbreak attacks [33,34]; proposed or evaluated LLM-based defensive mechanisms including SOC automation, threat intelligence extraction, intrusion or anomaly detection, and vulnerability analysis [35,36]; or contributed to evaluation, red-teaming, benchmark design, or governance frameworks for LLM safety.

Exclusion criteria removed preprints, editorials, theses, and other non-peer-reviewed materials; studies lacking substantive cybersecurity relevance; purely generic natural language processing applications without adversarial or security implications; works with insufficient methodological detail; and duplicate publications. Methodological quality was assessed using a bespoke appraisal framework adapted from established SLR guidelines, evaluating the clarity of threat models, transparency of datasets and evaluation pipelines, reproducibility, metric suitability, and the discussion of limitations and ethical risks. Studies exhibiting methodological weaknesses were retained when they offered unique empirical or conceptual insights, with their limitations explicitly acknowledged in subsequent synthesis sections.
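
The eligibility rules above can be read as an ordered sequence of checks applied to each candidate record. The sketch below is a simplified, hypothetical rendering: the record fields and function name are assumptions, relevance and methodological detail are reduced to reviewer-supplied booleans, and neither deduplication nor the discretionary retention of methodologically weak but uniquely insightful studies is modelled.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    """Hypothetical screening record; field names are illustrative."""
    title: str
    year: int
    language: str
    venue_type: str                 # e.g. "journal", "conference", "preprint", "thesis"
    uses_llm: bool                  # examines LLMs or transformer-based models
    security_relevant: bool         # reviewer judgement
    sufficient_method_detail: bool  # reviewer judgement


def exclusion_reason(record: Record) -> Optional[str]:
    """Return the first exclusion reason that applies, or None if the record is eligible."""
    if record.venue_type not in {"journal", "conference"}:
        return "not a peer-reviewed journal or conference publication"
    if record.language.lower() != "english":
        return "not written in English"
    if not 2022 <= record.year <= 2025:
        return "outside the 2022-2025 window"
    if not record.uses_llm:
        return "does not examine LLMs or transformer-based models"
    if not record.security_relevant:
        return "lacks substantive cybersecurity relevance"
    if not record.sufficient_method_detail:
        return "insufficient methodological detail"
    return None


# Placeholder records for illustration only.
candidates = [
    Record("Placeholder A", 2024, "English", "journal", True, True, True),
    Record("Placeholder B", 2023, "English", "preprint", True, True, True),
    Record("Placeholder C", 2021, "English", "conference", True, True, True),
]
for rec in candidates:
    print(rec.title, "->", exclusion_reason(rec) or "eligible")
```

Returning a reason string rather than a bare boolean makes it straightforward to tabulate why records were excluded at each stage.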

2.5 Inter-Reviewer Agreement, Bias Mitigation, and Sensitivity Analysis

To ensure consistency and minimise subjective bias, study selection and coding were independently performed by two reviewers at both the title–abstract and full-text screening stages. Inter-reviewer agreement was quantified using Cohen’s kappa coefficient, yielding substantial agreement during title–abstract screening (

Bias mitigation measures included multi-database coverage to reduce venue bias, a priori inclusion criteria, blinded screening where feasible, and the use of a standardised, taxonomy-aligned coding template to limit interpretive drift. A sensitivity analysis was conducted on borderline studies excluded during full-text screening (e.g., partially relevant preprints or studies with limited evaluation detail). Reintroduction of these studies did not materially affect the taxonomy structure, thematic distributions, or comparative conclusions, indicating that the review findings are robust and not unduly sensitive to marginal inclusion decisions.
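
For readers unfamiliar with the agreement statistic used in this section, Cohen's kappa compares the observed agreement between two raters, p_o, with the agreement expected by chance, p_e, as (p_o - p_e) / (1 - p_e). The sketch below computes it for two lists of screening decisions. The decisions shown are toy data made up for illustration; they are not the reviewers' actual decisions and do not reproduce the agreement values of this review.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items with identical labels.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    labels = set(freq_a) | set(freq_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in labels) / (n * n)
    if p_e == 1:
        return 1.0  # both raters used one identical label throughout
    return (p_o - p_e) / (1 - p_e)


# Toy screening decisions (illustrative only).
reviewer_1 = ["include", "include", "exclude", "exclude", "include",
              "exclude", "exclude", "exclude", "include", "exclude"]
reviewer_2 = ["include", "include", "exclude", "exclude", "exclude",
              "exclude", "exclude", "exclude", "include", "include"]

kappa = cohens_kappa(reviewer_1, reviewer_2)
print(f"kappa = {kappa:.3f}")
```

In this toy case the reviewers agree on 8 of 10 records, but kappa is lower than 0.8 because part of that agreement would be expected by chance alone.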

3 Positioning with Respect to Existing Surveys

A substantial body of survey literature has examined intrusion detection systems (IDS) from the perspectives of machine learning, deep learning, and, more recently, transformer-based architectures. These IDS-centric surveys predominantly focus on network intrusion detection systems (NIDS), host-based intrusion detection systems (HIDS), and industrial or IoT-oriented IDS deployments, with primary emphasis on feature engineering strategies, model architectures, benchmark datasets, and performance metrics [37]. Representative works include surveys on transformer-based NIDS, hybrid deep learning IDS frameworks, and intrusion detection in IIoT and industrial control system (ICS) environments, where LLMs, if mentioned at all, are treated as high-capacity sequence models or feature-level classifiers rather than as reasoning or agentic components. Consequently, while these surveys provide valuable insights into detection accuracy, scalability, and dataset-specific performance, they do not address the broader implications of LLMs as cognitive security primitives operating across detection, reasoning, and response pipelines.

In parallel, a distinct line of survey research has emerged that focuses on the security, reliability, and societal implications of large language models. Foundational overviews by Alawida et al. [33], Esmradi et al. [34], and Lopez et al. [35] provide early mappings of the opportunities and risks introduced by generative AI. Alawida et al. [33] conduct a comprehensive examination of ChatGPT, analysing its capabilities, limitations, misuse potential, and associated ethical and privacy concerns. Esmradi et al. [34] systematically catalogue attack techniques and mitigation strategies related to LLM misuse, including data leakage and adversarial behaviours, while Lopez et al. [35] explore application-level opportunities and challenges. However, these surveys remain largely high-level and conceptual, and they do not engage in systematic comparison with IDS-centric research nor integrate intrusion detection within a broader cybersecurity lifecycle.

More recent contributions further narrow the scope toward governance, lifecycle risk, and domain-specific security applications. Nawara and Kashef [36] investigate LLM-powered recommendation and reasoning systems, highlighting security and governance challenges in decision-support pipelines, while Uddin et al. [38] present a critical analysis of generative AI risks, emphasising lifecycle vulnerabilities, regulatory gaps, and systemic misuse pathways relevant to cybersecurity. Despite their analytical depth, these works continue to treat intrusion detection, SOC automation, and response mechanisms as largely isolated application domains rather than as interdependent components within an end-to-end threat–defence–evaluation ecosystem.

The primary distinction between existing IDS-focused surveys and the present work lies in the level of abstraction and the conceptual role assigned to LLMs. IDS-centric surveys predominantly frame models, including transformers, as feature-level classifiers optimised for detection accuracy on specific datasets. In contrast, this review conceptualises LLMs as cognitive and agentic security components capable of semantic reasoning, contextual correlation, decision support, and autonomous coordination across multiple stages of the cybersecurity pipeline. As a result, intrusion detection is not analysed in isolation but is examined alongside AIGC-driven threats, SOC automation, multi-agent response systems, and security evaluation and red-teaming practices.

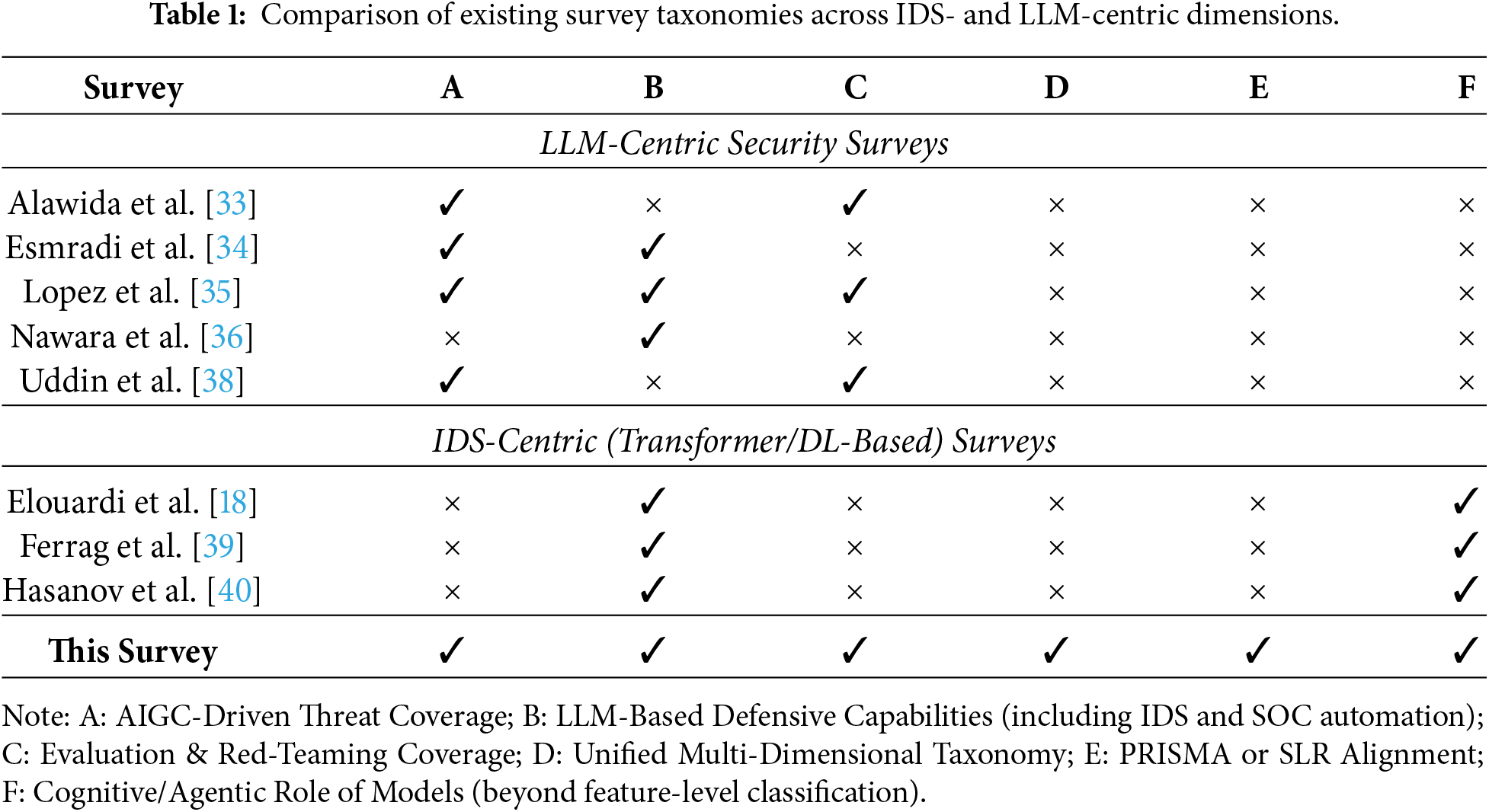

Table 1 systematically positions representative LLM- and IDS-focused surveys within the dimensions of the proposed unified taxonomy. As the comparison illustrates, prior surveys typically concentrate on isolated aspects of the problem space, such as AIGC-driven threat characterisation [33,34], application-level opportunities and limitations [35,36], or governance and lifecycle risk analysis [38]. However, none of these works provides a fully integrated, multi-dimensional perspective that jointly examines offensive misuse, defensive reasoning, evaluation and red-teaming practices, and methodological orientation within a single analytical framework. Furthermore, the absence of PRISMA-aligned screening and eligibility procedures across existing surveys limits reproducibility and systematic coverage.

A further distinguishing factor, highlighted in Table 1, concerns model conceptualisation. Existing IDS-centric surveys, particularly those focusing on transformer-based NIDS, HIDS, and IIoT detection, primarily treat deep learning models as feature-level classifiers optimised for detection performance. In contrast, the present review frames LLMs as cognitive and agentic security components that enable semantic reasoning, contextual awareness, and coordinated decision-making across the full cybersecurity lifecycle, encompassing detection, analysis, response, and evaluation.

Unlike prior IDS-centric and LLM-centric reviews, the present study adopts a PRISMA-guided systematic methodology, restricts its evidence base to 167 peer-reviewed publications from 2022 to 2025, and synthesises findings using a unified four-dimensional taxonomy encompassing AIGC-driven threats, LLM-enabled defensive capabilities (including intrusion detection and SOC automation), security evaluation and red-teaming practices, and methodological orientation. This integrated framework enables a holistic characterisation of LLM-driven cybersecurity research and explicitly bridges the conceptual gap between traditional IDS surveys and emerging LLM security studies. By positioning intrusion detection as one component within a broader cognitive and agentic defence ecosystem, this review provides a more comprehensive, coherent, and methodologically transparent synthesis to inform future research and real-world deployment.

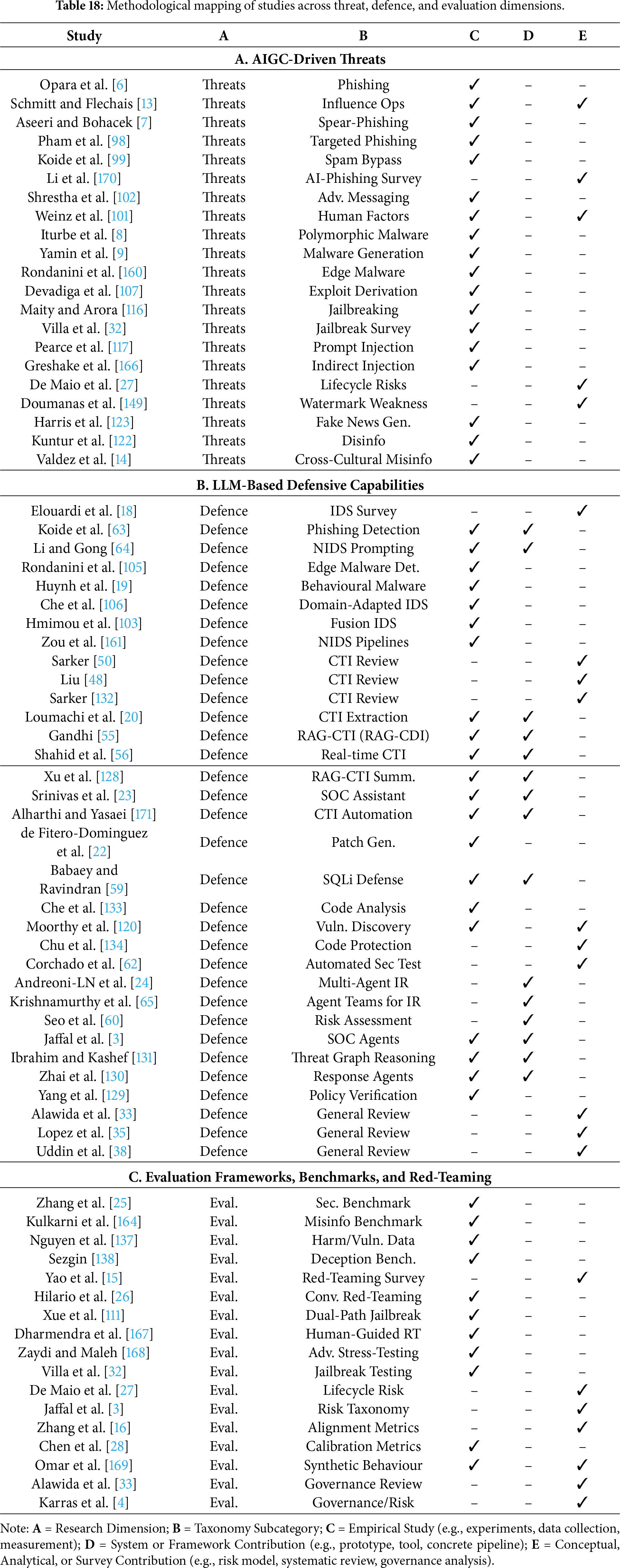

4 A Unified Taxonomy of LLM–Cybersecurity Research

To systematically synthesise the heterogeneous body of literature identified through the PRISMA-guided review process, this section introduces a unified taxonomy of LLM-centric cybersecurity research. The taxonomy is derived through an inductive coding and synthesis procedure applied to all 167 included studies, as described in Section 2.2. Rather than imposing a pre-defined classification scheme, the taxonomy is grounded in empirically observed research patterns spanning adversarial misuse, defensive integration, and security evaluation practices.

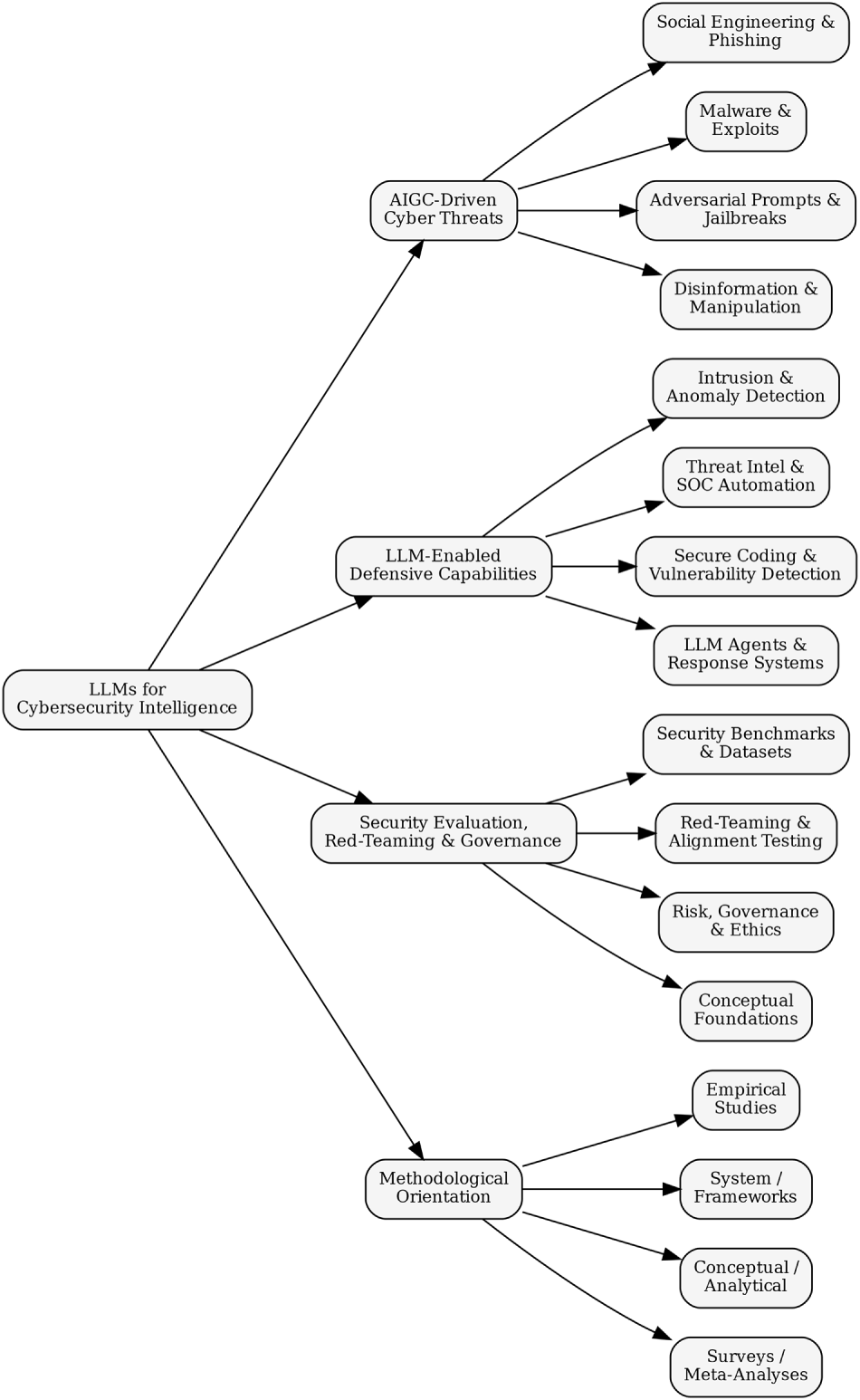

The proposed taxonomy organises the literature along four complementary dimensions: (i) AIGC-driven cyber threats, (ii) LLM-enabled defensive capabilities, (iii) security evaluation, red-teaming, and governance, and (iv) methodological orientation. Collectively, these dimensions capture not only what security problems are addressed, but also how evidence is generated, validated, and operationalised. This structure provides a reproducible analytical framework for comparative analysis, exposes systematic imbalances in the existing research landscape, and supports the identification of underexplored yet high-impact research directions.

4.1 Rationale for the Proposed Taxonomy

The motivation for the proposed taxonomy is threefold. First, existing surveys frequently examine offensive misuse, defensive applications, or ethical considerations of LLMs in isolation, resulting in fragmented insights that obscure the co-evolution of threats and countermeasures [33,34]. By explicitly integrating threat, defence, and evaluation dimensions, the proposed taxonomy captures their interdependencies and reflects the concurrent evolution of attacker innovation, defensive adaptation, and governance constraints.

Second, the taxonomy elevates security evaluation and governance to a primary analytical dimension rather than treating it as a secondary or peripheral concern. An expanding body of work demonstrates that deficiencies in robustness auditing, red-teaming, explainability, and regulatory alignment can undermine otherwise promising technical advances [36,38]. Explicitly foregrounding evaluation and governance therefore aligns the taxonomy with real-world deployment requirements and emerging regulatory expectations.

Third, the taxonomy is directly grounded in the textual, empirical, and methodological evidence of the 167 reviewed studies. Categories and subcategories were iteratively refined through frequency analysis, conceptual differentiation, and cross-mapping of hybrid contributions, ensuring analytical coherence without sacrificing completeness. This inductive grounding strengthens methodological transparency and avoids the conceptual overfitting that often characterises high-level AI taxonomies.

Fig. 2 presents a visual overview of the unified taxonomy, illustrating how threat classes, defensive mechanisms, evaluation frameworks, and methodological orientations collectively structure the emerging LLM–cybersecurity research landscape.

Figure 2: Proposed unified taxonomy of research on LLMs for cybersecurity intelligence.

4.2 AIGC-Driven Cyber Threats

The first dimension of the taxonomy captures how generative AI and LLMs expand the cyber threat surface by automating deception, lowering technical skill barriers, and enabling adaptive attacks at scale. Across the reviewed literature, AIGC-driven threats consistently emerge as a dominant research focus, reflecting widespread concern regarding the misuse potential of generative models [41]. Unlike conventional cyber threats, these attacks exploit linguistic fluency, contextual awareness, and automation, enabling adversaries with limited expertise to execute sophisticated campaigns.

Empirical and analytical studies converge around three recurring threat clusters: (i) social engineering and deception automation, (ii) malware generation, exploit scaffolding, and code abuse, and (iii) model manipulation, prompt injection, and alignment evasion. These clusters recur across security-focused surveys, AI safety analyses, and governance-oriented discussions [42,43], supporting their treatment as distinct yet interrelated subcategories.

4.2.1 Social Engineering and Deception Automation

A substantial portion of the literature documents how LLMs amplify the scale, realism, and adaptability of social engineering attacks, frequently described as the “dual-edged sword” effect of generative AI [44]. Numerous studies demonstrate that models such as ChatGPT can generate linguistically fluent and context-aware messages that closely mimic professional or personal communication styles, thereby eroding traditional detection cues used in phishing and impersonation attacks [33,45].

Beyond static message generation, interactive and multi-turn deception has emerged as a critical escalation vector. Esmradi et al. [34] show how adaptive conversational strategies enable sustained manipulation, while Lopez et al. [35] and Nawara and Kashef [36] highlight risks associated with platform-integrated systems, including chatbots, recommender engines, and customer-service pipelines. Collectively, this body of work indicates a shift from isolated phishing messages toward ecosystem-level influence operations embedded within digital services.

4.2.2 Malware, Exploit Scaffolding, and Code Abuse

A second threat cluster concerns LLM-assisted code generation and its associated dual-use implications. While many studies emphasise the defensive benefits of automated code synthesis, a consistent theme is the risk that generative models may produce insecure logic, reproduce known vulnerabilities, or facilitate exploit construction under ambiguous or adversarial prompts [33,34]. By automating elements of exploit reasoning, LLMs reduce traditional expertise barriers and accelerate attack development cycles.

Although the reviewed corpus contains limited primary experimentation on live malware generation, conceptual and lifecycle-oriented analyses consistently conclude that LLMs can significantly enhance attacker capabilities, particularly when integrated with external knowledge bases or retrieval mechanisms [38,46]. These findings justify treating code abuse as a distinct threat class rather than as a marginal extension of social engineering.

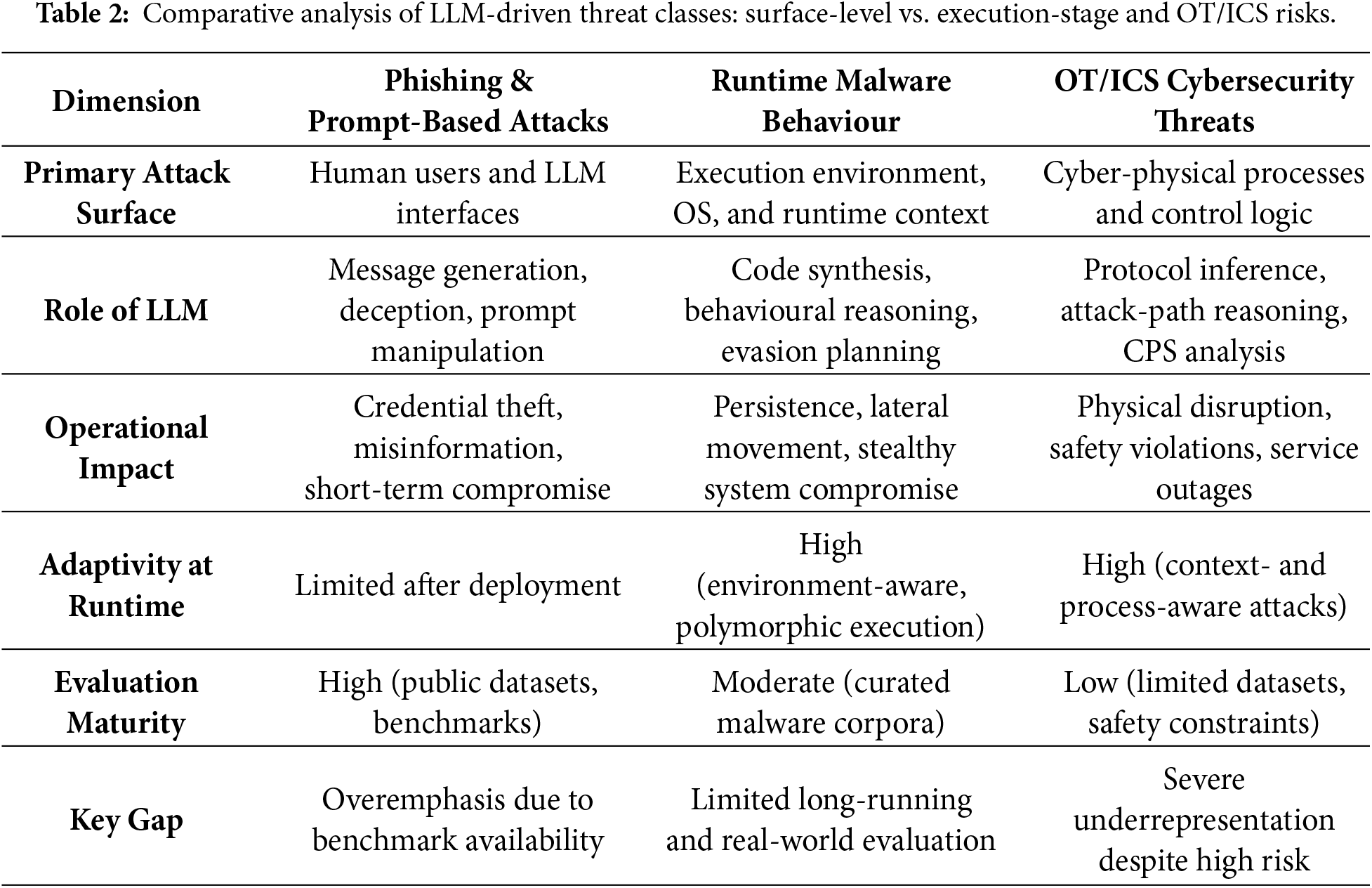

4.2.3 Runtime Malware Behaviour and OT/ICS Implications

Recent studies increasingly recognise that an exclusive focus on text-centric threats underrepresents execution-stage risks. LLMs can assist in generating adaptive malware components, polymorphic payloads, and environment-aware attack logic that evolves at runtime [8,9]. Such threats exhibit increased persistence and lateral movement potential, particularly when targeting operational technology (OT) and industrial control systems (ICS).

Despite their potentially severe impact, OT and ICS contexts remain comparatively underexplored due to data scarcity, safety constraints, and experimental complexity [39,47]. Table 2 explicitly contrasts surface-level attacks with runtime and cyber-physical threats, highlighting a systematic evaluation gap that biases current research toward methodological convenience rather than operational risk.

4.2.4 Model Manipulation, Prompt Injection, and Alignment Evasion

The third threat class encompasses attacks that directly target the behaviour and control mechanisms of large language models themselves. Techniques such as prompt injection, jailbreak attacks, and alignment evasion undermine built-in safety constraints and enable the elicitation of sensitive information or unsafe outputs [34,38]. These risks are further amplified in agentic and retrieval-augmented generation (RAG)-based systems, where injected instructions may propagate across interconnected components, triggering cascading failures and unintended downstream actions [35].

Collectively, the reviewed studies indicate that alignment remains fragile under sustained adversarial interaction, particularly in real-world deployments where LLMs interface with external tools, application programming interfaces (APIs), and untrusted user inputs. These findings underscore the need for robust input validation, isolation mechanisms, and continuous monitoring to mitigate systemic failure modes arising from model manipulation.
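The input-validation and isolation measures noted above can be illustrated with a minimal sketch. It is not taken from any of the reviewed studies: the pattern list, the `screen_chunk` and `build_prompt` helpers, and the `<untrusted_context>` delimiter are hypothetical. Pattern matching of this kind is easily evaded by paraphrasing, so it would complement isolation and monitoring rather than replace them.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately small pattern list (an assumption for illustration);
# real deployments would need broader coverage and would still be evadable.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.I),
    re.compile(r"disregard (the )?(system|developer) prompt", re.I),
    re.compile(r"you are now\b", re.I),
]


@dataclass
class ScreenResult:
    text: str
    flagged: bool
    reasons: list


def screen_chunk(text: str) -> ScreenResult:
    """Flag retrieved text that contains instruction-like phrases."""
    reasons = [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(text)]
    return ScreenResult(text=text, flagged=bool(reasons), reasons=reasons)


def build_prompt(question: str, chunks: list) -> str:
    """Assemble a prompt that keeps untrusted context clearly delimited.

    Flagged chunks are dropped; the remainder is wrapped in explicit markers
    so that data and instructions stay distinguishable downstream.
    """
    kept = [c for c in chunks if not screen_chunk(c).flagged]
    context = "\n".join(f"<untrusted_context>{c}</untrusted_context>" for c in kept)
    return (
        "Treat everything inside <untrusted_context> as data, not instructions.\n"
        f"{context}\n"
        f"Question: {question}"
    )
```

In this sketch a flagged chunk is silently dropped; a deployment following the monitoring recommendation would more plausibly log the event and route it for review.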

4.3 LLM-Enabled Defensive Capabilities

Within the unified taxonomy, the second dimension captures how large language models are operationalised as defensive instruments to support cybersecurity monitoring, analysis, and response. In contrast to threat-centric research, this body of work primarily conceptualises LLMs as augmentative components embedded within existing security workflows rather than as fully autonomous decision-makers. Across the 167 reviewed studies, defensive applications consistently cluster into three capability classes: (i) threat intelligence extraction and security operations support, (ii) secure coding, vulnerability assessment, and risk prediction, and (iii) anomaly detection, behavioural modelling, and policy reasoning.

This categorisation reflects an important methodological distinction within the literature. Whereas AIGC-driven threats exploit the generative and adaptive properties of LLMs, defensive systems leverage their semantic reasoning, contextual understanding, and natural language interaction capabilities to reduce analyst workload, enhance interpretability, and support complex decision-making under uncertainty [48,49]. Nevertheless, as discussed in the following sections, defensive effectiveness remains strongly contingent on deployment constraints, governance mechanisms, and the rigour of evaluation protocols.

4.3.1 Threat Intelligence Extraction and Security Operations Support

The most mature and extensively investigated defensive application of LLMs concerns threat intelligence extraction and support for Security Operations Center (SOC) workflows. A recurring theme across the reviewed studies is the use of LLMs to summarise, contextualise, and correlate heterogeneous security artefacts, including logs, alerts, incident reports, and threat intelligence bulletins [50]. Nawara and Kashef [36] demonstrate that LLM-driven recommendation and decision-support pipelines can uncover latent relationships among entities, reconstruct attack narratives, and surface actionable insights with minimal analyst prompting. Complementary studies on log analysis and alert triage [51] further show that such systems can reduce cognitive burden by transforming low-level telemetry into structured, high-level explanations.

Survey-oriented investigations of generative AI adoption in enterprise and digital platform contexts [52–54] consistently position LLMs as effective intermediaries between raw security data and human analysts. Lopez et al. [35] further highlight that LLM-enabled automation can accelerate response workflows by generating concise summaries, drafting mitigation recommendations, and interpreting alerts expressed in natural language. Architectures based on retrieval-augmented generation (RAG) explicitly address scalability and knowledge grounding by integrating semantic search with generative reasoning [55].

Despite these advantages, multiple studies caution that SOC-oriented deployments remain vulnerable to hallucinated correlations, overconfident explanations, and trust calibration failures when LLM outputs are consumed without systematic validation [33,56]. Consequently, the literature converges on the view that LLMs are most effective as analyst-support tools that augment, rather than replace, expert judgment in operational SOC environments.
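One way to picture the systematic validation called for above is a grounding check applied before an LLM-generated correlation reaches the analyst queue. The sketch below is an assumption-laden illustration rather than a method from the reviewed studies: the `Finding` type, the `validate_findings` helper, and the `min_evidence` threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A correlation claimed by the LLM, with the alert IDs it cites as evidence."""
    summary: str
    cited_alert_ids: tuple


def validate_findings(findings, known_alert_ids, min_evidence=2):
    """Split LLM findings into grounded ones and ones needing analyst review.

    A finding counts as grounded only if it cites at least `min_evidence`
    alerts and every cited alert ID exists in the telemetry store.
    """
    known = set(known_alert_ids)
    grounded, needs_review = [], []
    for finding in findings:
        cited = set(finding.cited_alert_ids)
        all_ids_exist = cited <= known  # rejects invented alert IDs
        if all_ids_exist and len(cited) >= min_evidence:
            grounded.append(finding)
        else:
            needs_review.append(finding)
    return grounded, needs_review
```

A grounded finding here only means that its cited evidence exists; whether the correlation itself is correct still rests with the analyst, consistent with the augment-rather-than-replace position in the literature.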

4.3.2 Secure Coding, Vulnerability Assessment, and Risk Prediction

A second major class of defensive capability concerns the application of LLMs within secure software development lifecycles. Although code synthesis by generative models is widely recognised as a dual-use capability, a substantial body of work demonstrates that, when appropriately constrained, LLMs can assist in identifying insecure constructs, detecting coding antipatterns, and explaining potential vulnerabilities [57,58]. Esmradi et al. [34] show that LLMs can reason over code semantics and generate preliminary vulnerability explanations that complement traditional static analysis techniques.

Specialised studies further demonstrate defensive applications in firewall configuration, secure deployment pipelines, and adaptive policy enforcement [59,60]. Alawida et al. [33] argue that LLMs can function as intelligent static-analysis assistants, particularly in identifying subtle logic flaws that evade rule-based scanners.

However, Uddin et al. [38] emphasise that defensive gains in secure coding are inseparable from governance and lifecycle oversight. In the absence of systematic validation, continuous monitoring, and auditability, LLM-generated recommendations risk propagating insecure patterns at scale. Studies on model trustworthiness and auditing [61] further indicate that higher-level risk prediction tasks, such as vulnerability prioritisation and impact explanation, should be interpreted as decision-support signals rather than authoritative outputs. Collectively, these findings position LLMs as promising yet fragile components within secure development workflows, whose effectiveness depends on rigorous verification and human oversight [62].

4.3.3 Anomaly Detection, Behavioural Modelling, and Policy Reasoning

Beyond intelligence extraction and code analysis, LLMs are increasingly explored for behavioural anomaly detection, system modelling, and policy reasoning. Alawida et al. [33] demonstrate that transformer-based models can capture semantic patterns in logs and behavioural traces, enabling the detection of subtle deviations associated with insider threats, fraud, or anomalous system states. Empirical systems such as ChatPhishDetector [63] and LLM-assisted malicious webpage detection frameworks [64] further illustrate the feasibility of applying LLMs to text-rich security contexts.

At a higher level of abstraction, Lopez et al. [35] highlight the suitability of LLMs for policy reasoning tasks, including interpreting configuration files, validating compliance requirements, and translating complex security policies into human-readable explanations. Such capabilities support automated auditing and continuous compliance monitoring, particularly in specialised domains such as transport and critical infrastructure systems.

Uddin et al. [38] further argue that behavioural modelling and policy reasoning are especially critical in multi-agent or retrieval-augmented systems, where LLMs mediate interactions between user intent, system state, and downstream actions. When properly aligned, these models can detect inconsistencies, enforce safety constraints, and reason about risk propagation across interconnected components [65]. Nevertheless, the literature consistently cautions that such reasoning capabilities must be bounded by explicit control logic to prevent cascading errors or unsafe autonomous behaviour.
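The idea of bounding LLM reasoning with explicit control logic can be sketched as a small gate that sits between a model's proposed action and its execution. The action names, the two impact tiers, and the `gate_action` helper below are hypothetical choices made for illustration and do not come from the reviewed studies.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN = "needs_human"
    DENY = "deny"


# Hypothetical policy: action names and tiers are illustrative assumptions.
LOW_IMPACT = {"annotate_ticket", "summarise_alert"}
HIGH_IMPACT = {"isolate_host", "block_ip"}


def gate_action(action: str, human_approved: bool = False) -> Decision:
    """Decide whether an LLM-proposed action may run.

    Low-impact actions run directly, high-impact actions require explicit
    human approval, and anything not on either list is denied by default.
    """
    if action in LOW_IMPACT:
        return Decision.ALLOW
    if action in HIGH_IMPACT:
        return Decision.ALLOW if human_approved else Decision.NEEDS_HUMAN
    return Decision.DENY
```

The default-deny branch is the relevant design choice: an action the model invents or misnames cannot execute, which limits how far a single reasoning error can cascade.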

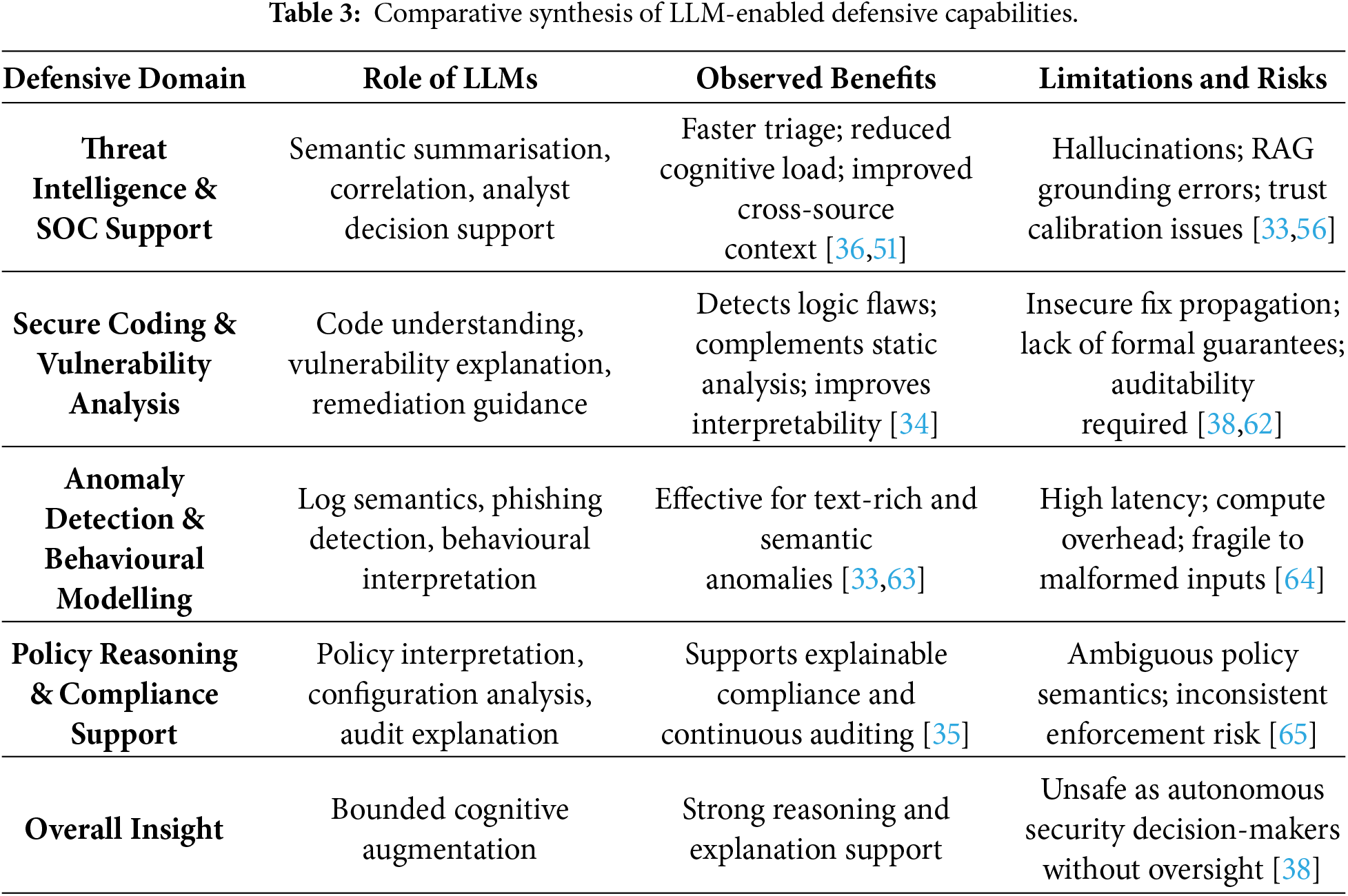

4.3.4 Comparative Synthesis of LLM-Enabled Defensive Capabilities

A cross-cutting synthesis of LLM-enabled defensive studies indicates that, despite notable task-level performance gains, defensive effectiveness is fundamentally determined by how LLMs are embedded within security workflows rather than by model capability alone. Across threat intelligence support, secure coding, and anomaly detection, LLMs consistently function as cognitive amplifiers, enhancing semantic understanding, contextual correlation, and explanation, while remaining dependent on classical detection engines, rule-based controls, and human oversight for operational reliability [35,38,48].

Within SOC and threat intelligence pipelines, LLMs excel at unifying heterogeneous data sources and generating analyst-oriented narratives, yielding measurable reductions in triage time and cognitive workload [36,51]. These benefits, however, are counterbalanced by susceptibility to hallucinated correlations and overconfident reasoning, particularly in retrieval-augmented generation (RAG) settings where corpus incompleteness or temporal drift undermines grounding [33,56]. As a result, the literature consistently advocates hybrid SOC architectures in which LLMs serve advisory roles and validation layers are mandatory.

In secure coding and vulnerability assessment, LLMs demonstrate strong semantic reasoning capabilities, often outperforming purely syntactic static-analysis tools in explaining logic flaws, insecure dependencies, and exploit preconditions [34]. Nevertheless, empirical evidence also highlights the risk of scaled insecurity, whereby unverified LLM recommendations propagate flawed remediation strategies across large codebases [38,57]. Consequently, studies recommend positioning LLMs as assistive auditors integrated with formal verification, testing pipelines, and developer review processes rather than as autonomous security agents [61,62].

For anomaly detection, behavioural modelling, and policy reasoning, LLMs are most effective in text-rich or semantically complex environments—such as log analysis, phishing detection, and compliance interpretation—where traditional feature-based models exhibit limitations [33,63]. However, latency, computational overhead, and sensitivity to input formatting constrain their suitability for real-time detection and control-loop enforcement [64]. In multi-agent and policy-driven systems, LLM-based reasoning enhances interpretability and coordination but introduces new failure modes, including cascading errors and ambiguous policy interpretation, necessitating explicit constraint enforcement and human-in-the-loop safeguards [38,65].

Overall, the comparative evidence indicates that LLM-enabled defensive systems are most robust when deployed as bounded, explainable, and governable components within layered defence architectures. Performance gains are strongest in decision support, correlation, and explanation tasks, whereas autonomous detection or response remains high risk without rigorous validation, continuous monitoring, and governance oversight. Table 3 summarises these trade-offs across representative defensive domains.

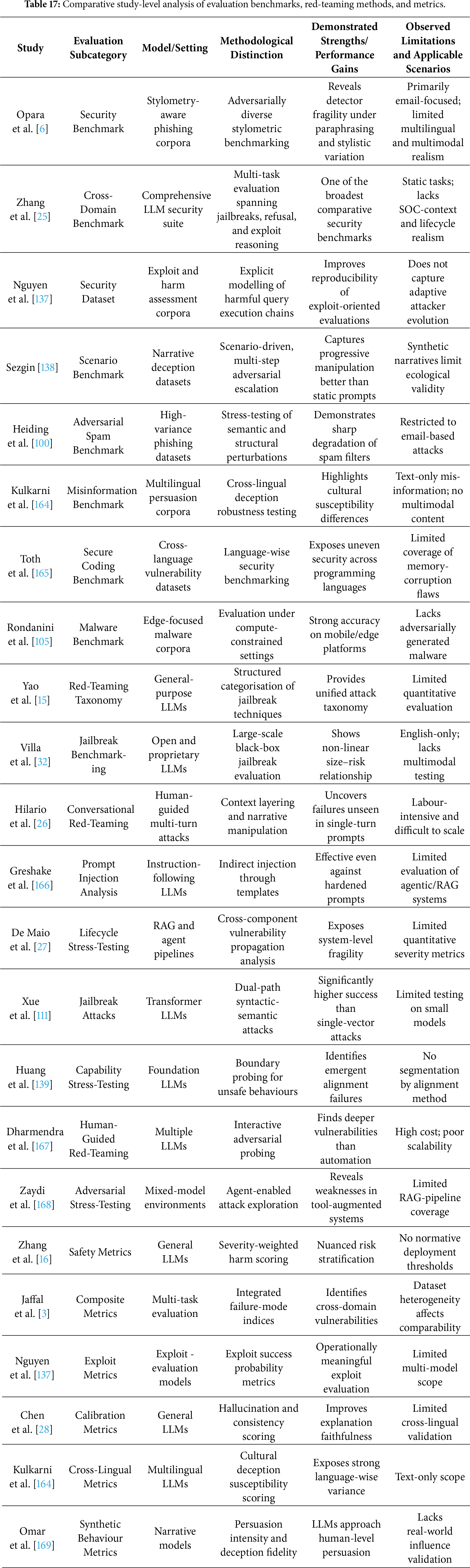

4.4 Security Evaluation, Red-Teaming, and Governance

The third dimension of the unified taxonomy encompasses research focused on evaluating the trustworthiness, robustness, and governance readiness of LLM-enabled cybersecurity systems. Unlike threat- or defence-centric studies, which emphasise capability expansion, this dimension interrogates whether such systems can be safely, reliably, and legally deployed in security-sensitive environments. Across the 167 analysed studies, evaluation and governance emerge as indispensable complements to both offensive and defensive research, reflecting a growing consensus that unregulated or inadequately assessed LLM integration may introduce systemic risks that outweigh operational benefits [66–68].

Within the taxonomy, this dimension is structured around three interrelated themes: (i) security benchmarking and evaluation frameworks, (ii) red-teaming, misuse detection, and lifecycle risk assessment, and (iii) governance, regulatory alignment, and enforceable controls. Together, these themes address not only whether LLMs perform as intended, but also how failures emerge, propagate, and can be constrained under real-world operational and regulatory conditions.

4.4.1 Security Benchmarking and Evaluation Frameworks

A central finding across the reviewed literature is the absence of standardised, security-oriented evaluation frameworks for LLMs. Lopez et al. [35] identify multiple evaluation dimensions, including behavioural stress testing, trustworthiness analysis, and safety auditing, but emphasise that prevailing metrics (e.g., accuracy or BLEU-style scores) are insufficient to capture security-relevant failure modes such as context-sensitive hallucinations, brittle reasoning, and inconsistent safety responses. This critique is reinforced by broader surveys of AI evaluation practices, which consistently highlight the lack of unified, comparable, and deployment-relevant assessment standards [69–71].

Esmradi et al. [34] further argue that evaluation pipelines must explicitly assess susceptibility to adversarial prompting, data leakage, insecure code generation, and alignment failures. In the absence of such targeted benchmarks, empirical claims regarding LLM safety and robustness remain difficult to validate or compare across studies. Alawida et al. [33] similarly identify robustness evaluation as a critical bottleneck, observing that many proposed defence mechanisms are tested only under benign or narrowly scoped experimental conditions.

Recent investigations of specialised security tools [72] and analyses of LLM application ecosystems further underscore the operational consequences of weak evaluation practices, particularly in settings characterised by rapid deployment and frequent model updates. Across the reviewed literature, evaluation is increasingly conceptualised not as a one-time verification activity but as a continuous, lifecycle-spanning process that underpins responsible deployment, model governance, and risk-aware decision-making [73].

4.4.2 Red-Teaming, Misuse Detection, and Lifecycle Risk Assessment

Red-teaming is widely recognised as a critical mechanism for uncovering latent vulnerabilities in LLM-enabled systems. Although the reviewed corpus contains relatively few large-scale empirical red-teaming studies, numerous analytical and survey-oriented works provide detailed examinations of misuse pathways, adversarial prompting strategies, and system-level failure cascades. Uddin et al. [38] present one of the most comprehensive lifecycle-oriented risk assessments, synthesising vulnerabilities across data acquisition, model training, deployment, tool integration, and post-deployment adaptation [74,75].

These analyses consistently reveal that risks often arise not from isolated model behaviour but from interactions between LLMs and surrounding system components. In multi-agent or retrieval-augmented architectures, injected prompts or poisoned contextual data can propagate across subsystems, resulting in cascading failures or unsafe actions [76]. Complementing this perspective, Esmradi et al. [34] catalogue a broad range of adversarial attack surfaces—including jailbreaks, prompt chaining, inference-time manipulation, and information leakage probes—that should form the foundation of systematic red-teaming methodologies. Broader surveys of LLM-enabled cyberattacks [43] further reinforce the need for adversarial evaluation strategies that extend beyond single-prompt testing.

Alawida et al. [33] additionally emphasise that effective misuse detection requires continuous behavioural monitoring, trust calibration, and post-hoc interpretability mechanisms to identify anomalous or unsafe reasoning trajectories. Collectively, these studies converge on the conclusion that red-teaming must evolve from ad hoc adversarial prompting toward structured, repeatable lifecycle assessments that evaluate model behaviour under uncertainty, interaction, and environmental variation [77].

4.4.3 Governance, Regulatory Alignment, and Enforceable Controls

Governance and regulatory considerations constitute a critical cross-cutting pillar of LLM-enabled cybersecurity research, linking technical evaluation with accountability, compliance, and operational safety. While earlier studies extensively discuss ethical concerns such as bias, transparency, privacy, and misuse [78,79], much of this literature remains principle-driven and insufficiently connected to enforceable regulatory requirements or operational security workflows. More recent critical analyses argue that ethical risk mitigation cannot be decoupled from technical robustness and governance enforcement, particularly when LLMs influence security-critical decisions [38,80,81].

A recurring concern across the reviewed studies is the absence of clearly defined accountability structures for LLM-driven systems deployed in high-stakes contexts, including SOC automation, intrusion detection, and incident response. Uddin et al. [38] highlight deficiencies related to explainability, responsibility allocation, and auditability, concluding that governance mechanisms lag behind the pace of operational integration. Similar observations are reported in privacy-sensitive and manipulative-content domains, where ethical shortcomings translate directly into legal, reputational, and operational risks.

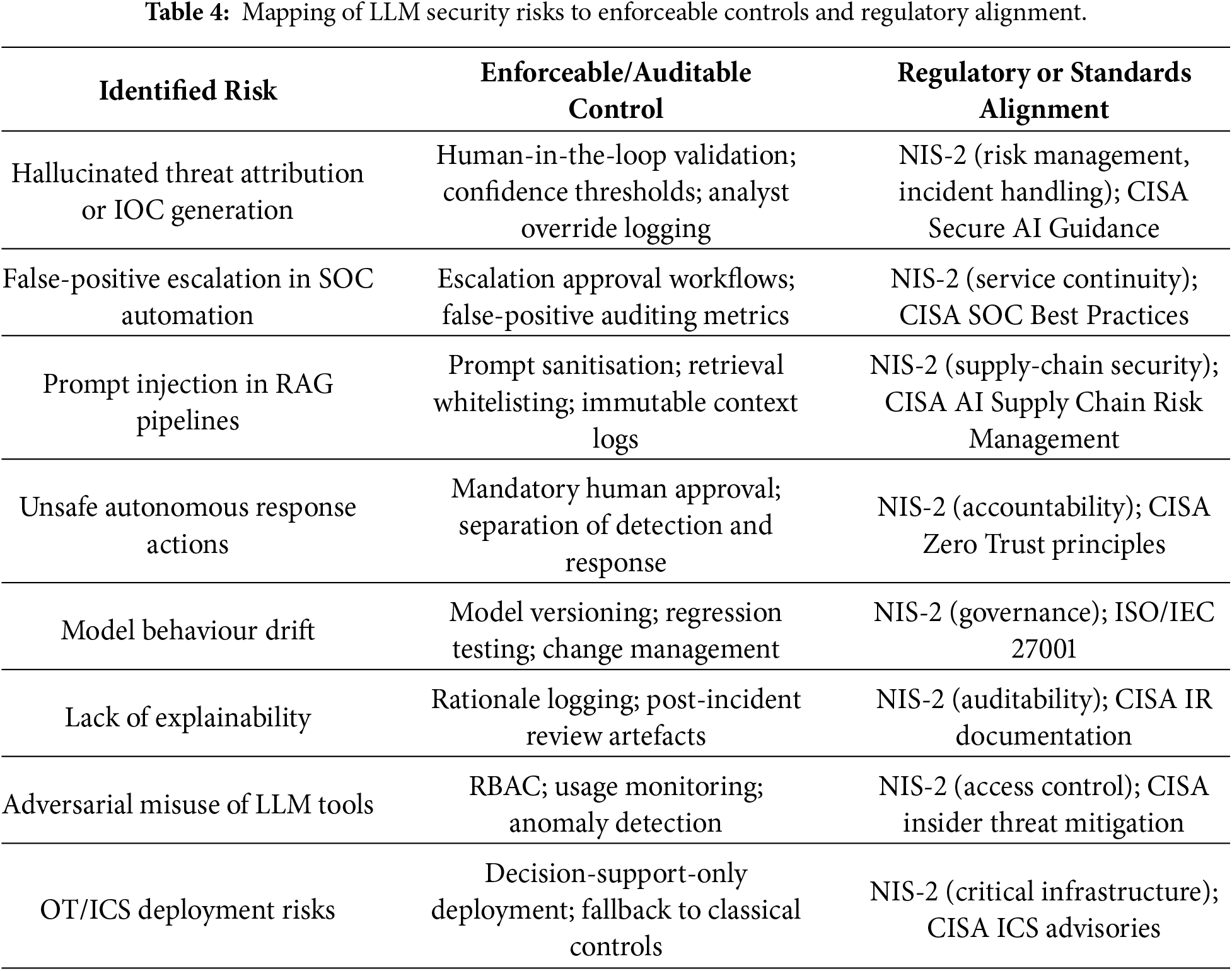

From a regulatory perspective, the EU NIS-2 Directive establishes binding requirements for cybersecurity risk management, incident handling, supply-chain security, and organisational accountability. Many failure modes identified in LLM-enabled cybersecurity systems—including hallucinated threat attribution, false-positive escalation within SOC pipelines, prompt-injection vulnerabilities in RAG architectures, and unsafe autonomous responses—map directly to NIS-2 obligations concerning proportional controls, resilience, and service continuity. Consequently, LLM components that materially influence detection, triage, or response processes fall squarely within the scope of regulated cybersecurity operations.

Complementary guidance issued by the U.S. Cybersecurity and Infrastructure Security Agency (CISA) emphasises defence-in-depth, layered safeguards, and verifiable controls for AI-enabled systems deployed in SOCs and critical infrastructure environments. Consistent with this guidance, the literature increasingly converges on the view that LLMs should function as constrained components within hybrid defence architectures, augmenting classical detection and policy engines rather than acting as autonomous decision-makers [35,82]. Empirical evidence further indicates that hallucinations and reasoning errors can propagate rapidly when LLM outputs are granted excessive operational authority.

Operationalising governance therefore requires translating ethical principles into auditable and enforceable controls. Across the reviewed studies, recurring mechanisms include mandatory human-in-the-loop checkpoints, role-based access control for prompts and policy modification, immutable audit logging, model versioning and change management, and clear separation of duties between detection, reasoning, and response components [83,84]. These controls directly mitigate the operational risks identified in the defensive and evaluation analyses and align with both NIS-2 accountability requirements and CISA deployment guidance.
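Of the controls listed above, audit logging lends itself to a compact illustration. The sketch below uses a SHA-256 hash chain, which makes a log tamper-evident rather than strictly immutable; that substitution, the record fields, and the `append_entry` and `verify_chain` helpers are assumptions made here and are not prescribed by the reviewed studies, NIS-2, or CISA guidance.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash a record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})


def verify_chain(log: list) -> bool:
    """Return True only if every entry still matches its recorded hash and link."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Recording fields such as the acting role and the model version in each entry would tie this mechanism to the access-control and change-management controls mentioned in the same paragraph.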

Table 4 synthesises these insights by explicitly mapping identified LLM security risks to concrete technical and organisational controls, along with corresponding regulatory or standards-based obligations. By grounding governance in enforceable mechanisms, this taxonomy dimension reframes ethics from aspirational guidance into compliance-ready practice, providing actionable direction for researchers, practitioners, and policymakers.

4.5 Methodological Orientation within the Taxonomy

Beyond thematic classification, the proposed taxonomy explicitly incorporates methodological orientation as a cross-cutting analytical dimension. This inclusion is essential for interpreting not only which aspects of LLM-enabled cybersecurity are examined, but also how evidence is generated, validated, and generalised. An analysis of the 167 included studies reveals four dominant methodological orientations—empirical, system-oriented, conceptual/analytical, and survey-based—which collectively shape the maturity, reliability, and operational relevance of the field.

These methodological orientations intersect all three substantive taxonomy dimensions (AIGC-driven threats, LLM-enabled defensive capabilities, and security evaluation and governance), thereby influencing the strength of reported claims, the reproducibility of findings, and the feasibility of real-world deployment. Explicitly embedding methodology within the taxonomy prevents the conflation of conceptual insight with empirical validation and enables a more nuanced and evidence-aware synthesis of the literature.

4.5.1 Empirical Evaluation-Oriented Studies

Empirical studies constitute a substantial portion of the reviewed literature and focus on observing model behaviour, quantifying robustness, and analysing failure modes through controlled experiments or simulations. Representative examples include empirical investigations of hallucination, unsafe code generation, exploit reasoning, and misalignment effects reported by Esmradi et al. [34]. Large-scale comparative assessments of AI-generated code security [85] and experimental evaluations of ChatGPT on log and security datasets [77] further exemplify this orientation.

While empirical studies are indispensable for grounding claims regarding LLM capabilities and risks, recurring limitations across the corpus include the absence of standardised benchmarks, limited reproducibility, and evaluation under constrained or synthetic conditions. These limitations impede cross-study comparability and restrict generalisation to operational cybersecurity environments. As a result, many empirical findings provide primarily local validity rather than system-level assurance, underscoring the need for the unified evaluation frameworks discussed in Dimension III.

4.5.2 System and Architectural Proposals

System-oriented studies represent a second major methodological category and focus on integrating LLMs into operational cybersecurity workflows. These contributions typically propose architectures, pipelines, or decision-support systems that embed LLMs within SOC operations, threat intelligence platforms, or automated response mechanisms. Nawara and Kashef [36], for example, present LLM-driven recommendation systems for contextualising threat intelligence and streamlining analyst workflows. Related efforts include retrieval-augmented cybersecurity intelligence frameworks [55], real-time crime detection systems [56], and scalable log analysis pipelines [51].

Although these system-level contributions signal progress toward operational deployment, they are frequently evaluated under constrained conditions, rely on curated or synthetic inputs, or omit lifecycle-level threat modelling. Consequently, many architectural proposals demonstrate functional feasibility without sufficiently addressing robustness, adversarial resilience, or governance constraints. This methodological shortcoming reinforces the importance of coupling system design with rigorous evaluation, red-teaming, and governance analysis.

4.5.3 Conceptual and Analytical Studies

Conceptual and analytical works form a third methodological orientation, offering taxonomies, risk models, and socio-technical analyses that articulate the broader implications of LLM integration within cybersecurity ecosystems [67]. Uddin et al. [38] provide a comprehensive lifecycle-oriented risk framework that identifies vulnerabilities spanning data provenance, model training, deployment, tool integration, and governance. Other analytical contributions highlight systemic risks, including the erosion of Zero-Trust assumptions induced by generative AI [75] and the cascading effects associated with large-scale LLM adoption [74].

These studies play a critical role in exposing interdependencies and long-term risks that may not be apparent in isolated empirical evaluations. However, their primary limitation is weak empirical grounding, as many conceptual insights remain insufficiently validated through controlled experimentation or real-world case studies. Within the taxonomy, such analyses therefore function primarily as instruments of risk foresight rather than as evidence of deployable solutions.

4.5.4 Surveys and Meta-Analyses

Surveys and meta-analyses constitute the fourth methodological category, synthesising research trends across generative AI, cybersecurity threats, defensive mechanisms, and governance considerations. Foundational surveys by Alawida et al. [33] and Lopez et al. [35] provide broad overviews of AIGC risks, defensive opportunities, and ethical challenges. Additional systematic and narrative reviews further contextualise LLM applications in cybersecurity [40,49], their dual-use implications in network security [86], and recent advancements in generative AI [71].

While surveys play a crucial role in consolidating fragmented research, many existing reviews rely predominantly on narrative synthesis and lack systematic protocols such as PRISMA. This limits transparency, reproducibility, and comparability across surveys. The present work addresses this limitation by embedding methodological orientation directly within a PRISMA-guided taxonomy, enabling structured cross-comparison beyond descriptive aggregation.

4.5.5 Methodological Imbalances and Implications

Taken together, the distribution of methodological orientations reveals a research landscape that is broad yet uneven. Empirical studies frequently lack unified benchmarks and stress-testing protocols; system-oriented proposals often under-evaluate adversarial and governance risks; conceptual analyses are weakly anchored in experimental validation; and surveys expose inconsistencies in terminology, threat definitions, and evaluation practices. These imbalances directly influence the maturity and reliability of conclusions drawn across all three substantive taxonomy dimensions.

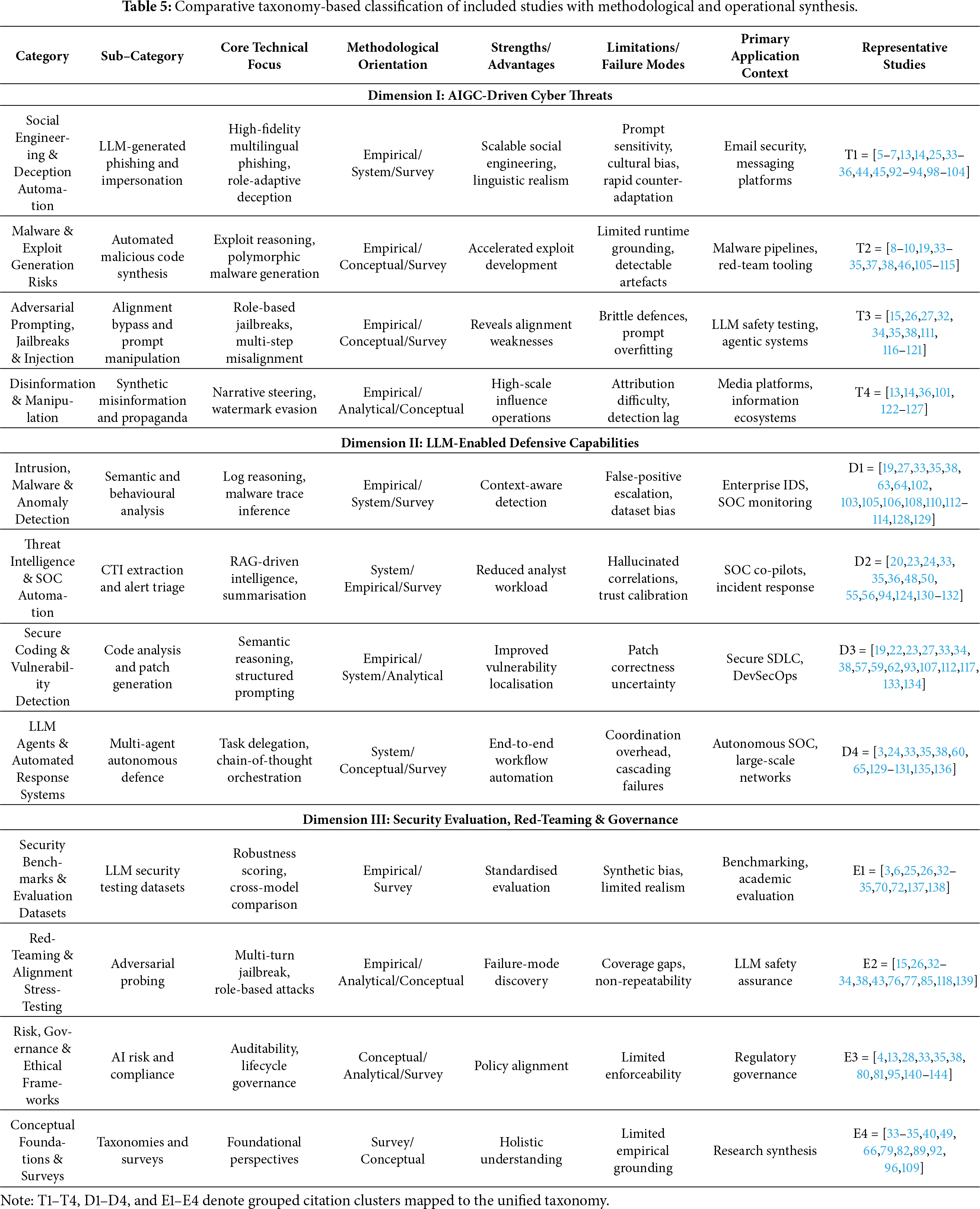

Beyond methodological categorisation, the distribution of studies across the taxonomy (Table 5) highlights several longitudinal trends between 2022 and 2025. Research on AIGC-driven threats remains dominant, particularly in social engineering and adversarial prompting [44,65]. Defensive applications—especially threat intelligence extraction and SOC automation—demonstrate increasing operational focus [36], yet remain unevenly validated. Notably, a growing concentration of studies addresses security evaluation, red-teaming, and governance, reflecting increasing recognition that trustworthiness and accountability are decisive factors for real-world deployment [35,38].

Overall, explicitly positioning methodological orientation within the taxonomy transforms it from a descriptive classification into a critical analytical framework. By revealing how evidence is produced—and where it is systematically weak—this dimension provides a foundation for identifying research gaps, guiding standardisation efforts, and supporting evidence-based advancement of LLM-driven cybersecurity intelligence.

Table 5 consolidates all 167 studies within the unified taxonomy, linking thematic focus, methodological orientation, strengths, and limitations. This synthesis underpins the comparative and longitudinal analyses presented in subsequent sections.

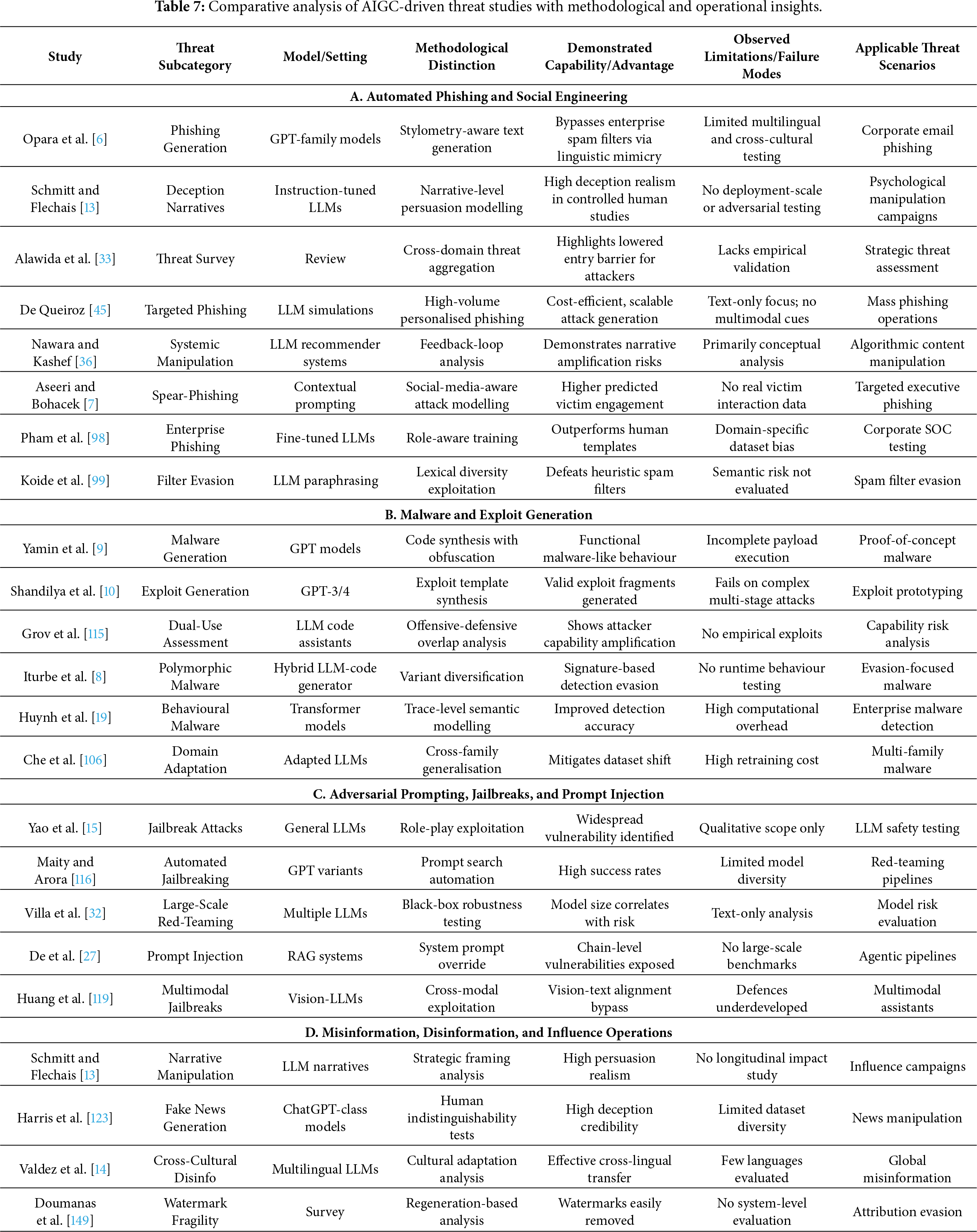

5 AIGC-Driven Threats Enabled by LLMs

Building on the unified threat–defence–evaluation taxonomy introduced in Section 4, this section examines how large language models (LLMs) operate as offensive enablers within contemporary cyber threat ecosystems. Across the 167 studies synthesised in this review, AIGC-enabled attacks consistently emerge not as incremental extensions of existing techniques, but as a qualitative escalation in adversarial capability. LLMs fundamentally alter attacker economics by lowering expertise barriers, automating cognitively complex tasks, and enabling scalable, adaptive, and context-aware malicious behaviour [87–89].

Within the proposed taxonomy, AIGC-driven threats cluster into four dominant and recurrent categories: (i) social engineering and multimodal deception, (ii) malware generation, exploit scaffolding, and runtime behaviour, (iii) prompt injection, jailbreaks, and alignment evasion, and (iv) misinformation, disinformation, and influence operations. These categories are derived inductively through cross-study coding and reflect convergent patterns observed across empirical evaluations, red-teaming analyses, and large-scale surveys. Collectively, they expose the inherent dual-use tension of LLMs, wherein the same generative, reasoning, and contextualisation capabilities that enable defensive innovation are systematically repurposed for adversarial misuse [42,44,90].

5.1 Social Engineering and Multimodal Deception

Social engineering represents the most mature and empirically substantiated class of AIGC-driven threats. A substantial body of literature demonstrates that LLMs enable scalable, highly personalised phishing, impersonation, and deception campaigns characterised by linguistic coherence, contextual sensitivity, and adaptive tone control previously associated with skilled human operators [45,91]. Unlike template-based automation, LLM-driven pipelines dynamically tailor content using inferred organisational roles, conversational context, and cultural cues, thereby significantly increasing attack credibility [33,34,92].

Empirical evaluations reported in [25,93,94] indicate that LLM-generated spear-phishing and business email compromise (BEC) messages consistently degrade the effectiveness of signature-based filters and stylometric detection techniques. Comparative studies further reveal that semantic diversity, multilingual generation, and adversarial paraphrasing undermine feature-driven classifiers, exacerbating the asymmetry between attacker adaptability and defender rigidity [35,95].

Beyond text-only attacks, recent work documents a marked shift toward multimodal deception, wherein adversaries exploit systems capable of jointly reasoning over text, images, code, and structured artefacts. Such cross-modal manipulation produces inconsistencies that evade unimodal detection pipelines [96,97]. Collectively, these findings indicate that social engineering has evolved from isolated phishing attempts into adaptive, multimodal deception workflows that challenge foundational assumptions of existing detection systems.

5.2 Malware, Exploit Scaffolding, and Runtime Behaviour

The second major threat category encompasses LLM-assisted malware generation, exploit scaffolding, and execution-stage behaviour. Across the reviewed corpus, multiple studies demonstrate that LLMs can generate functional malware components, exploit templates, and obfuscation patterns, particularly when safety mechanisms are bypassed through adversarial prompting or indirect instruction leakage [33,109]. Although many generated artefacts are not immediately deployable, they substantially accelerate exploit development cycles and reduce the expertise required for iterative attack refinement [34,145].

Several studies [108,110,111] highlight the dual-use risks inherent to LLM-assisted code generation, showing that models designed for benign development support can emit vulnerable or exploitable logic under ambiguous or underspecified prompts. The integration of structured cybersecurity knowledge bases further amplifies this threat by enabling attackers to rapidly query, adapt, and weaponise technical content at scale [46,104].

A critical limitation identified across this literature is the systematic underrepresentation of runtime behaviour. Most studies validate exploit generation at the code-fragment or proof-of-concept level, without examining execution dynamics, environmental dependencies, or long-term behavioural adaptation [38]. Consequently, current evaluations likely underestimate the persistence, lateral movement, and evasion capabilities enabled by LLM-assisted malware, underscoring the need for execution-aware threat modelling and system-level validation.

5.3 Prompt Injection, Jailbreaks, and Alignment Evasion

A third and rapidly expanding threat class targets LLMs directly through adversarial prompting, jailbreaks, and prompt injection. Extensive empirical evidence demonstrates that contemporary models remain highly susceptible to role-play manipulation, instruction obfuscation, multi-turn coercion, and indirect prompt injection attacks [32,34,111]. Notably, several studies suggest that larger and more capable models may exhibit increased vulnerability due to richer representational capacity and broader behavioural generalisation [116,142].

Importantly, these vulnerabilities extend beyond standalone models to integrated systems. Research on retrieval-augmented generation (RAG) and multi-agent architectures shows that injected prompts can propagate across components, triggering unsafe tool invocation, policy override, or cascading reasoning failures [27,38,118,146]. Across the surveyed literature, no single mitigation strategy—including prompt sanitisation, refusal tuning, or system prompt hardening—consistently defends against the full spectrum of adversarial prompting techniques. This exposes a structural fragility in current alignment approaches and motivates lifecycle-aware evaluation, system-level isolation, and governance-driven safeguards [147,148].
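The notion of system-level isolation can be made concrete with a small sketch. This is an illustration under stated assumptions, not a mechanism taken from the surveyed studies: it assumes a hypothetical agent runtime that can label each proposed tool call with the provenance of the text that triggered it. The names `Provenance`, `ToolCall`, `READ_ONLY_TOOLS`, and `authorise` are invented for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Provenance(Enum):
    SYSTEM = "system"        # operator-authored instructions
    USER = "user"            # authenticated analyst input
    RETRIEVED = "retrieved"  # documents, web pages, tool outputs (untrusted)


@dataclass(frozen=True)
class ToolCall:
    tool: str
    argument: str
    provenance: Provenance  # origin of the instruction that triggered the call


# Hypothetical policy: untrusted content may only trigger read-only tools.
READ_ONLY_TOOLS = {"search_logs", "lookup_ioc"}
TRUSTED = {Provenance.SYSTEM, Provenance.USER}


def authorise(call: ToolCall) -> bool:
    """Return True if the call may run without human approval."""
    if call.provenance in TRUSTED:
        return True
    # Instructions found inside retrieved content are treated as data, so
    # they cannot trigger state-changing tools on their own.
    return call.tool in READ_ONLY_TOOLS


injected = ToolCall("isolate_host", "10.0.0.5", Provenance.RETRIEVED)
lookup = ToolCall("lookup_ioc", "example.test", Provenance.RETRIEVED)
analyst = ToolCall("isolate_host", "10.0.0.5", Provenance.USER)
decisions = [authorise(c) for c in (injected, lookup, analyst)]
```

A gate of this kind does not detect prompt injection; it only limits what injected text can cause. Reliable provenance tracking is itself an open problem, which is consistent with the observation that no single mitigation covers the full attack spectrum.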

5.4 Misinformation and Influence Operations

LLM-enabled misinformation and influence operations constitute the fourth major threat category and represent one of the most societally consequential forms of AIGC-driven attack. Studies consistently demonstrate that LLMs can generate persuasive, culturally adaptive, and emotionally framed narratives at scale that are often indistinguishable from human-authored content [124,149]. Comparative analyses show that LLM-driven campaigns outperform traditional disinformation efforts in adaptability, multilingual reach, and narrative coherence [13,150].

A recurring observation across this literature is the fragility of existing detection and attribution mechanisms. Watermarking, stylometric analysis, and classifier-based approaches degrade substantially under paraphrasing, translation, and multi-model transformation pipelines [125,149]. Moreover, algorithmic recommendation systems may unintentionally amplify harmful narratives, blurring the boundary between deliberate influence operations and emergent systemic manipulation [36,127]. Despite the high potential impact of these operations, longitudinal societal studies and deployment-scale evaluations remain limited, which constrains understanding of sustained real-world effects.

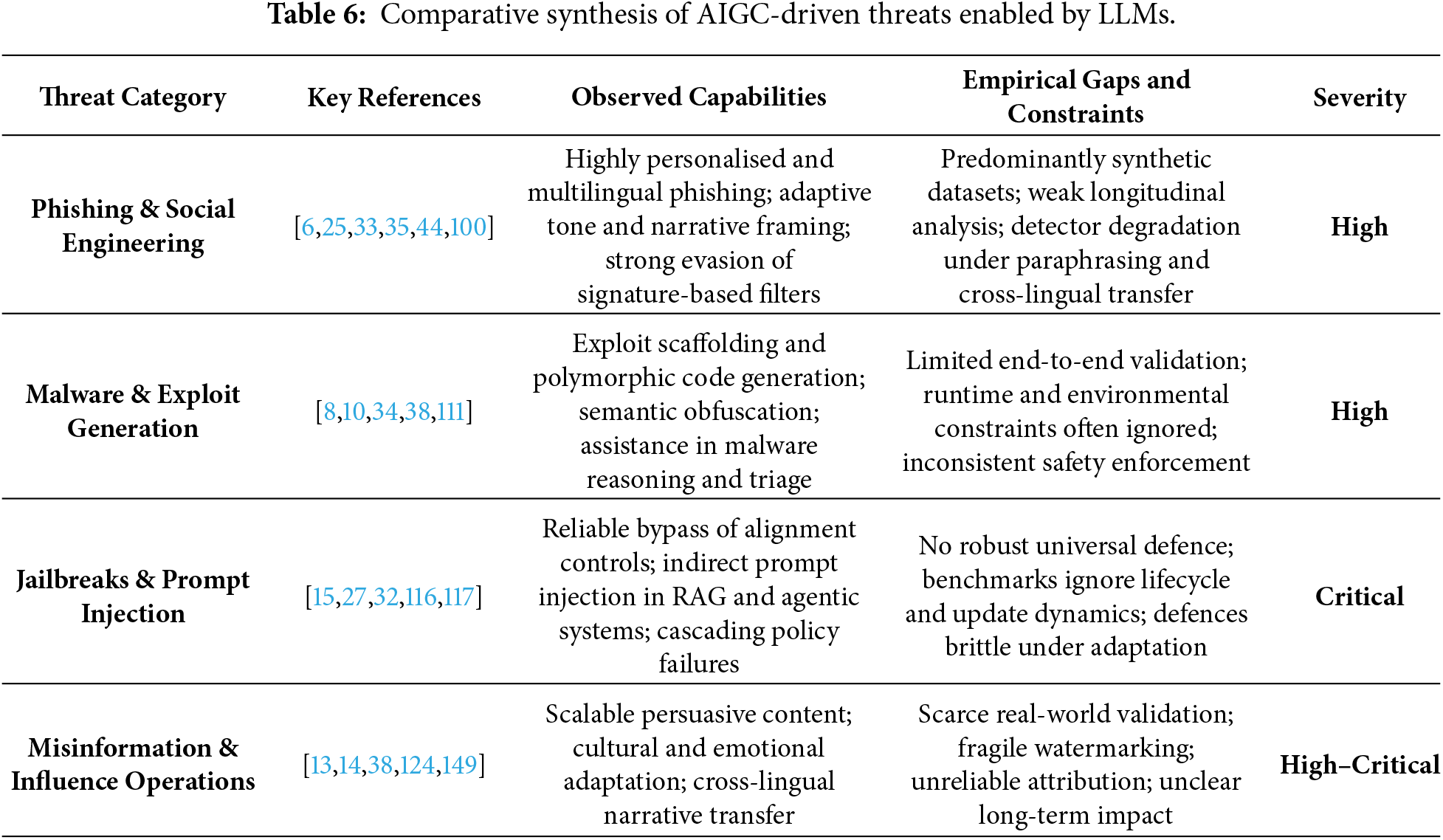

5.5 Synthesis of Threat Findings

Synthesising evidence across all four threat categories reveals a consistent and concerning trajectory: AIGC-enabled threats are advancing more rapidly than corresponding defensive countermeasures. In social engineering, semantic diversity and contextual realism undermine static detection heuristics. In malware generation, LLMs accelerate exploit development, although their effect on runtime behaviour remains largely unvalidated because most studies stop at the code-fragment or proof-of-concept level (Section 5.2). In adversarial prompting, alignment mechanisms remain brittle under sustained or system-level attacks. In misinformation, generative realism and scale overwhelm existing attribution and moderation strategies.

Three cross-cutting insights emerge from the reviewed corpus:

• Escalating attacker capability: LLMs substantially reduce the expertise and resources required to conduct complex cyberattacks, effectively democratising sophisticated offensive techniques.

• Evaluation realism gap: Heavy reliance on synthetic datasets and constrained experimental settings obscures the true operational severity of AIGC-driven threats.

• Absence of comprehensive mitigation: No existing defensive framework robustly addresses the full spectrum of LLM-enabled attack vectors, particularly in multimodal, agentic, and continuously evolving systems.

These findings underscore the need for execution-aware threat modelling, lifecycle-oriented evaluation, and defence architectures that explicitly account for the dual-use nature of generative AI. Tables 6 and 7 consolidate comparative evidence across threat categories and representative studies, demonstrating broad convergence on a central conclusion: AIGC-driven threats represent a systemic and accelerating challenge that existing security controls are structurally ill-equipped to contain.

6 LLM-Based Defensive Capabilities

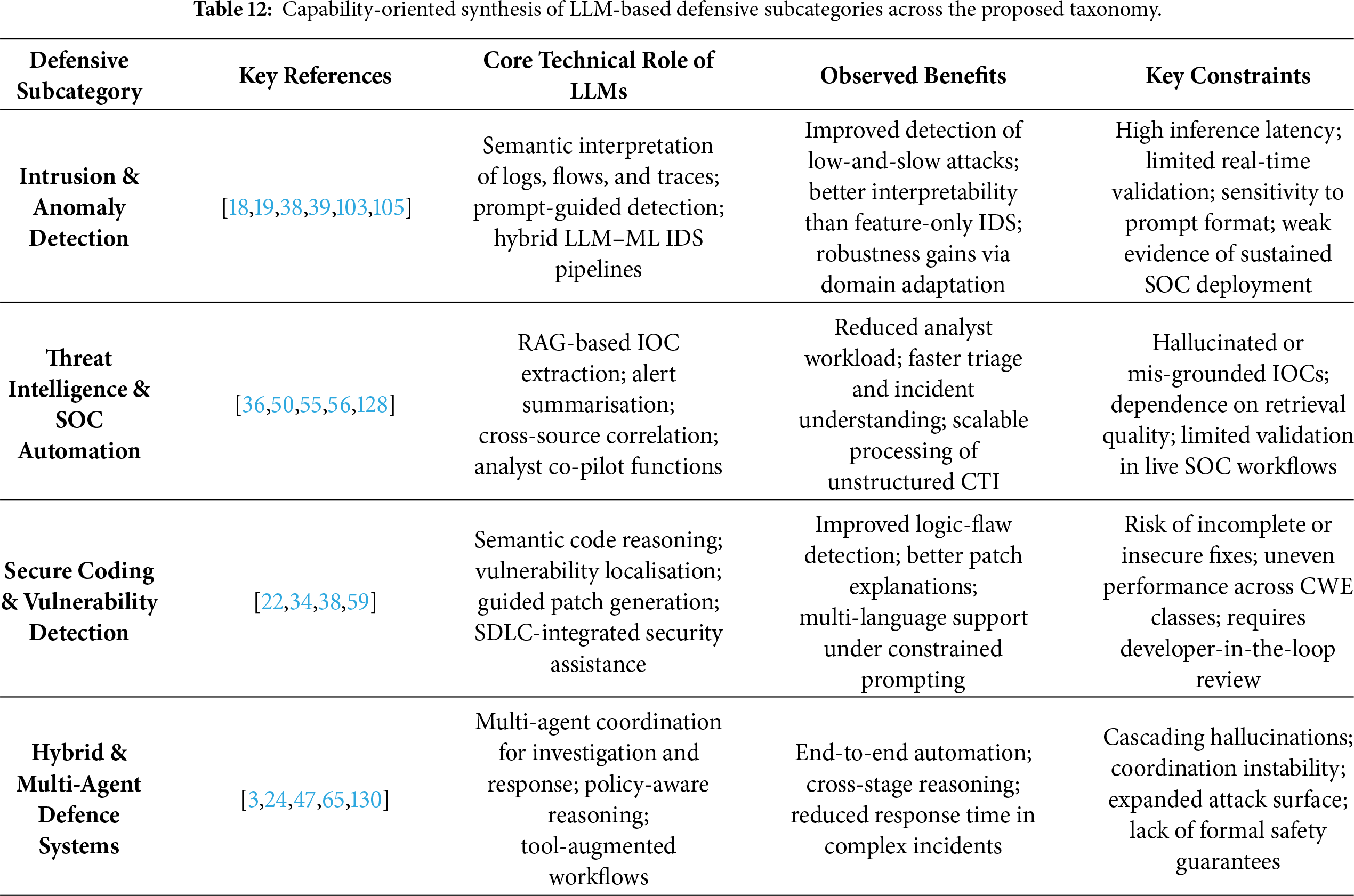

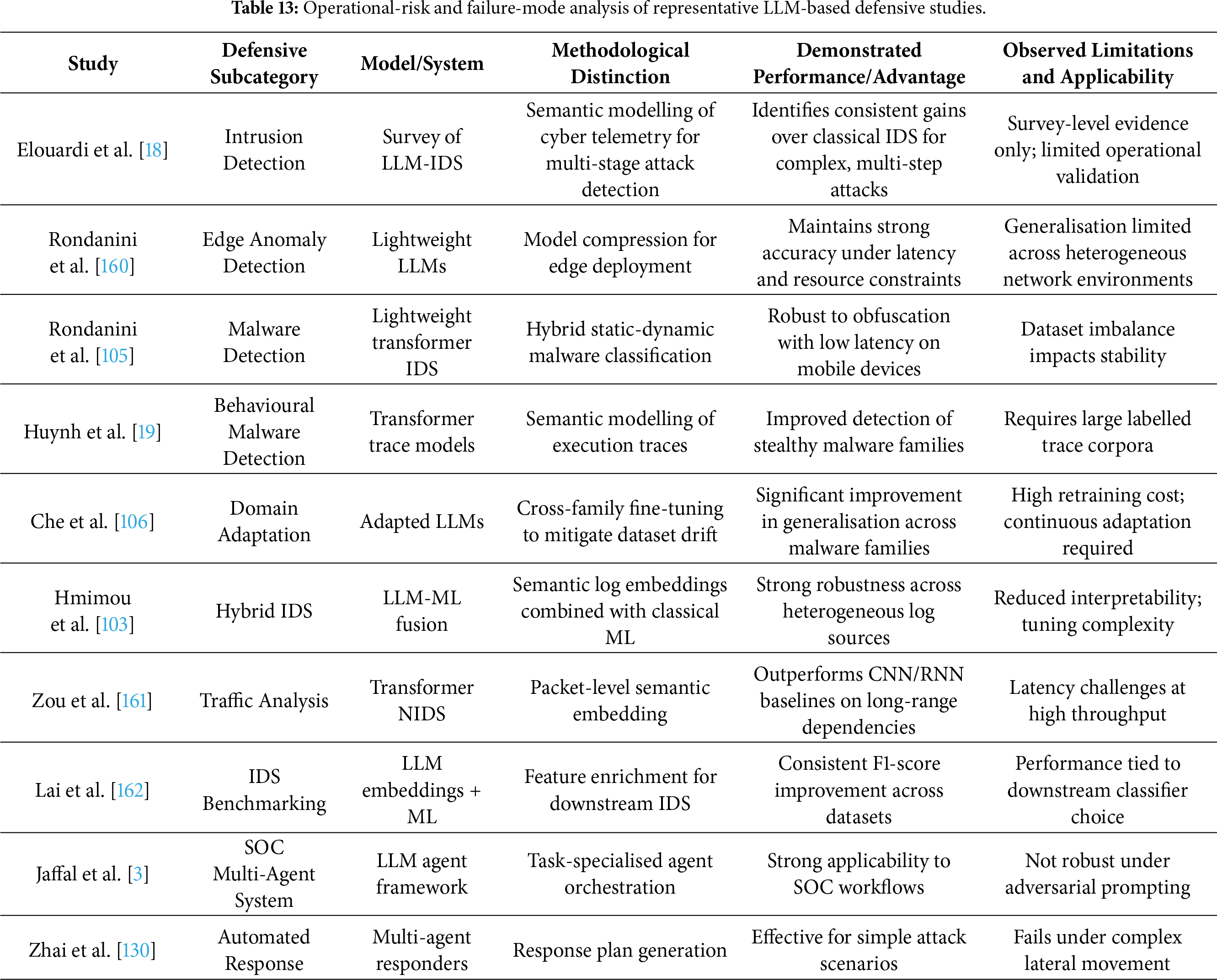

Anchored in the second dimension of the unified threat–defence–evaluation taxonomy, this section synthesises how large language models (LLMs) are operationalised as defensive instruments across contemporary cybersecurity pipelines. In contrast to AIGC-driven threats (Section 5), defensive studies overwhelmingly conceptualise LLMs not as autonomous detectors, but as cognitive and semantic augmentation layers embedded within existing security architectures. An analysis of the 167 reviewed studies published between 2022 and 2025 reveals four dominant defensive domains: (i) intrusion, malware, and anomaly detection; (ii) threat intelligence extraction and Security Operations Center (SOC) automation; (iii) vulnerability detection, secure coding, and automated repair; and (iv) hybrid and multi-agent architectures for investigation and response.

Across these domains, LLMs primarily contribute through enhanced semantic reasoning, cross-context inference, and explainable decision support rather than improvements in raw detection accuracy [151,152]. At the same time, the literature consistently documents structural limitations—including hallucination-induced errors, evaluation fragility, adversarial susceptibility, computational overhead, and governance gaps—that constrain safe deployment in high-assurance operational environments. These tensions motivate a taxonomy-aware and failure-conscious analysis, rather than a purely capability-centric narrative.

6.1 General Cybersecurity Applications vs. IDS-Specific Architectures

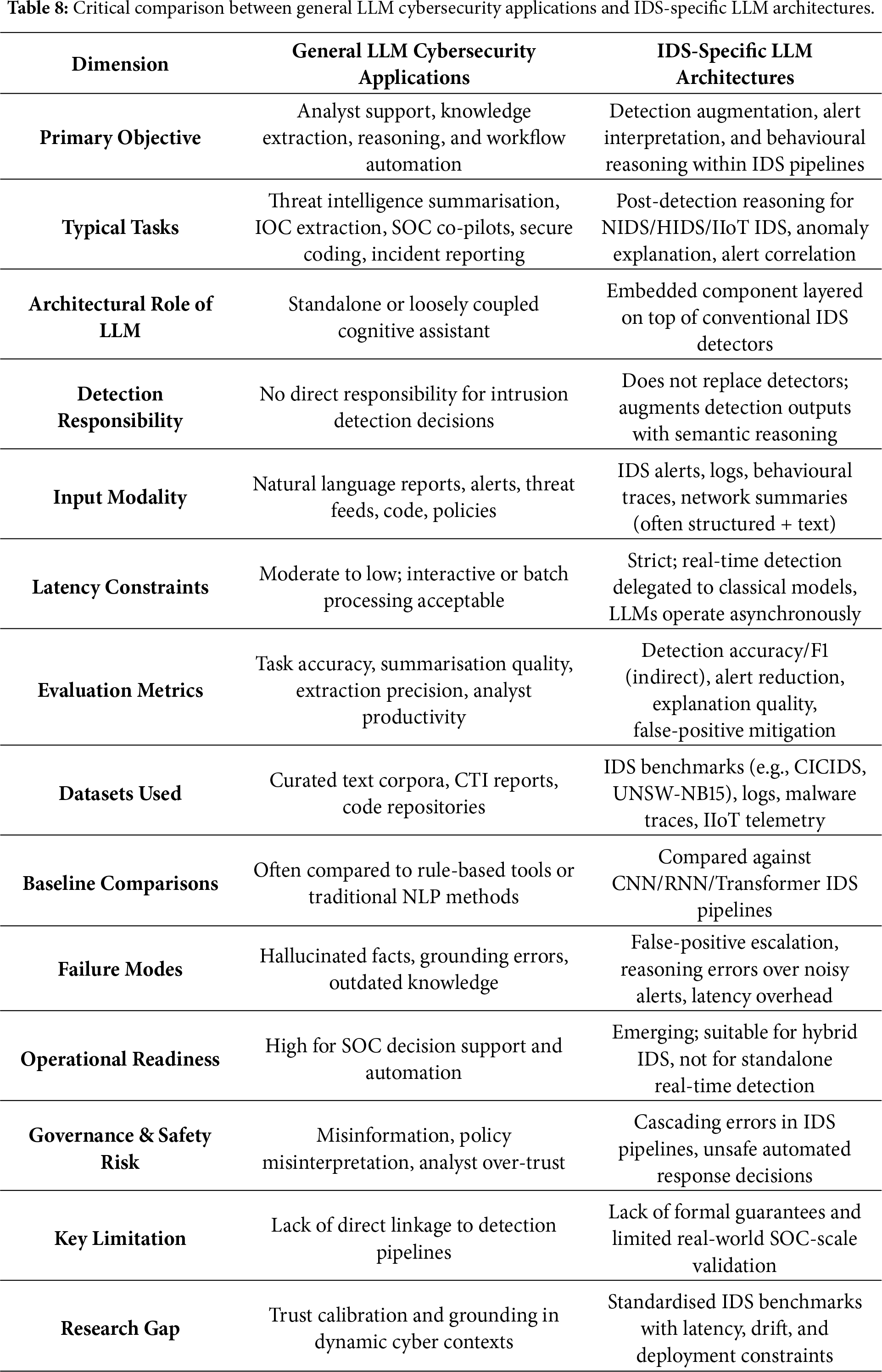

A recurring source of conceptual ambiguity in the existing literature is the frequent conflation of general LLM-enabled cybersecurity applications with IDS-specific LLM architectures. This distinction is not merely terminological; it is foundational for correctly interpreting reported performance gains, assessing deployment readiness, and understanding risk exposure. The absence of a clear separation between these paradigms has contributed to overgeneralised claims regarding the suitability of LLMs for real-time intrusion detection and operational cyber defence.

General LLM-based cybersecurity applications primarily function as analyst-facing cognitive assistants. Representative tasks include threat report summarisation, indicator-of-compromise (IOC) extraction, incident timeline reconstruction, policy and configuration interpretation, and SOC co-pilot support [33,35,36]. Such systems typically operate on curated or semi-structured inputs, tolerate moderate inference latency, and are evaluated using task-centric or qualitative metrics such as extraction accuracy, reasoning coherence, or analyst productivity. Their principal contribution lies in reducing cognitive burden, improving contextual understanding, and accelerating sense-making, rather than in executing primary detection or enforcement decisions.

In contrast, IDS-specific LLM architectures are embedded directly within intrusion detection pipelines and are subject to substantially stricter operational constraints. As synthesised in Sections 6.4 and 6.5, LLMs in IDS contexts do not replace conventional signature-based, statistical, or machine-learning detectors. Instead, they are positioned as auxiliary reasoning layers that augment detection outputs through semantic interpretation of alerts, behavioural abstraction over logs and traces, and contextual explanation of anomalous activity [18,39,40]. These systems must operate under high-throughput data streams, adversarial noise, concept drift, and strict latency budgets, rendering naive adoption of general-purpose LLM deployments impractical without careful architectural mediation.

This distinction has direct implications for both evaluation and deployment. Evaluation protocols commonly applied to general LLM applications, such as standalone reasoning benchmarks or static text-based assessments, are insufficient for IDS-specific scenarios, where errors may propagate downstream and amplify operational risk. In particular, false-positive escalation, inference latency, and reasoning instability can compound across SOC workflows, increasing analyst workload and degrading response effectiveness [38,121,140]. Consequently, performance claims derived from general LLM evaluations cannot be extrapolated to IDS deployments without explicit consideration of these system-level effects.
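The way false positives compound at IDS scale can be illustrated with simple base-rate arithmetic. The sketch below is illustrative only: the event volume, attack prevalence, and error rates are hypothetical inputs chosen for the example, not figures reported in the reviewed studies, and `alert_breakdown` is a name introduced here.

```python
from typing import Tuple


def alert_breakdown(
    events: int, attack_rate: float, tpr: float, fpr: float
) -> Tuple[float, float, float]:
    """Return (true alerts, false alerts, precision) for one batch of events.

    attack_rate is the fraction of events that are malicious, tpr the
    true-positive rate, and fpr the false-positive rate of the detector.
    """
    attacks = events * attack_rate
    benign = events - attacks
    true_alerts = attacks * tpr
    false_alerts = benign * fpr
    total = true_alerts + false_alerts
    precision = true_alerts / total if total else 0.0
    return true_alerts, false_alerts, precision


# Hypothetical day of telemetry: one million events, 0.01% of them malicious,
# scored by a detector with 99% recall and a 1% false-positive rate.
true_alerts, false_alerts, precision = alert_breakdown(
    events=1_000_000, attack_rate=0.0001, tpr=0.99, fpr=0.01
)
```

With these illustrative inputs, about 99 genuine alerts sit among roughly 9,999 false ones, so fewer than one alert in a hundred is real even though the per-event error rate looks small. A standalone benchmark that reports only accuracy would not expose this analyst-facing load.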

Explicitly distinguishing between general cybersecurity applications and IDS-specific architectures therefore prevents overstatement of LLM readiness for real-time intrusion detection and clarifies the necessity of hybrid, defence-in-depth designs. In such architectures, classical detectors provide time-critical guarantees, while LLMs contribute semantic reasoning, correlation, and explainability under human-in-the-loop supervision. Table 8 formalises this distinction and provides a conceptual reference framework for interpreting the task-level and quantitative analyses presented in subsequent sections.
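A minimal sketch of such a hybrid design is given below, assuming a hypothetical alert schema and a threshold rule standing in for a real classical detector. The names `Alert`, `classical_detector`, `enrich`, and `stub_explainer` are illustrative, and the explainer is a placeholder for an LLM call rather than any specific model API. The intent is only to show the separation of roles: the classical path decides, and the LLM path annotates.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Alert:
    source_ip: str
    failed_logins: int
    verdict: str = "benign"
    explanation: Optional[str] = None
    needs_review: bool = False


def classical_detector(alert: Alert, threshold: int = 10) -> Alert:
    """Time-critical path: a simple threshold rule standing in for a real IDS."""
    if alert.failed_logins >= threshold:
        alert.verdict = "malicious"
    return alert


def enrich(alert: Alert, explain: Callable[[Alert], str]) -> Alert:
    """Advisory path: attach an explanation but never change the verdict."""
    if alert.verdict != "malicious":
        return alert
    try:
        alert.explanation = explain(alert)
    except Exception:
        # An explainer failure must not block or alter detection.
        alert.explanation = None
    alert.needs_review = True  # human-in-the-loop: an analyst confirms the response
    return alert


def stub_explainer(alert: Alert) -> str:
    """Placeholder for an LLM call; returns a templated string."""
    return f"{alert.failed_logins} failed logins from {alert.source_ip} suggest brute forcing."


def failing_explainer(alert: Alert) -> str:
    """Simulates an LLM timeout."""
    raise TimeoutError("explainer unavailable")


flagged = enrich(classical_detector(Alert("10.0.0.5", 25)), stub_explainer)
degraded = enrich(classical_detector(Alert("10.0.0.6", 30)), failing_explainer)
quiet = enrich(classical_detector(Alert("10.0.0.7", 2)), stub_explainer)
```

In this sketch the verdict is fixed before the explainer runs, so hallucinated or missing explanations degrade analyst context but not the detection decision itself.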

6.2 Impact of Model Scale and Architecture

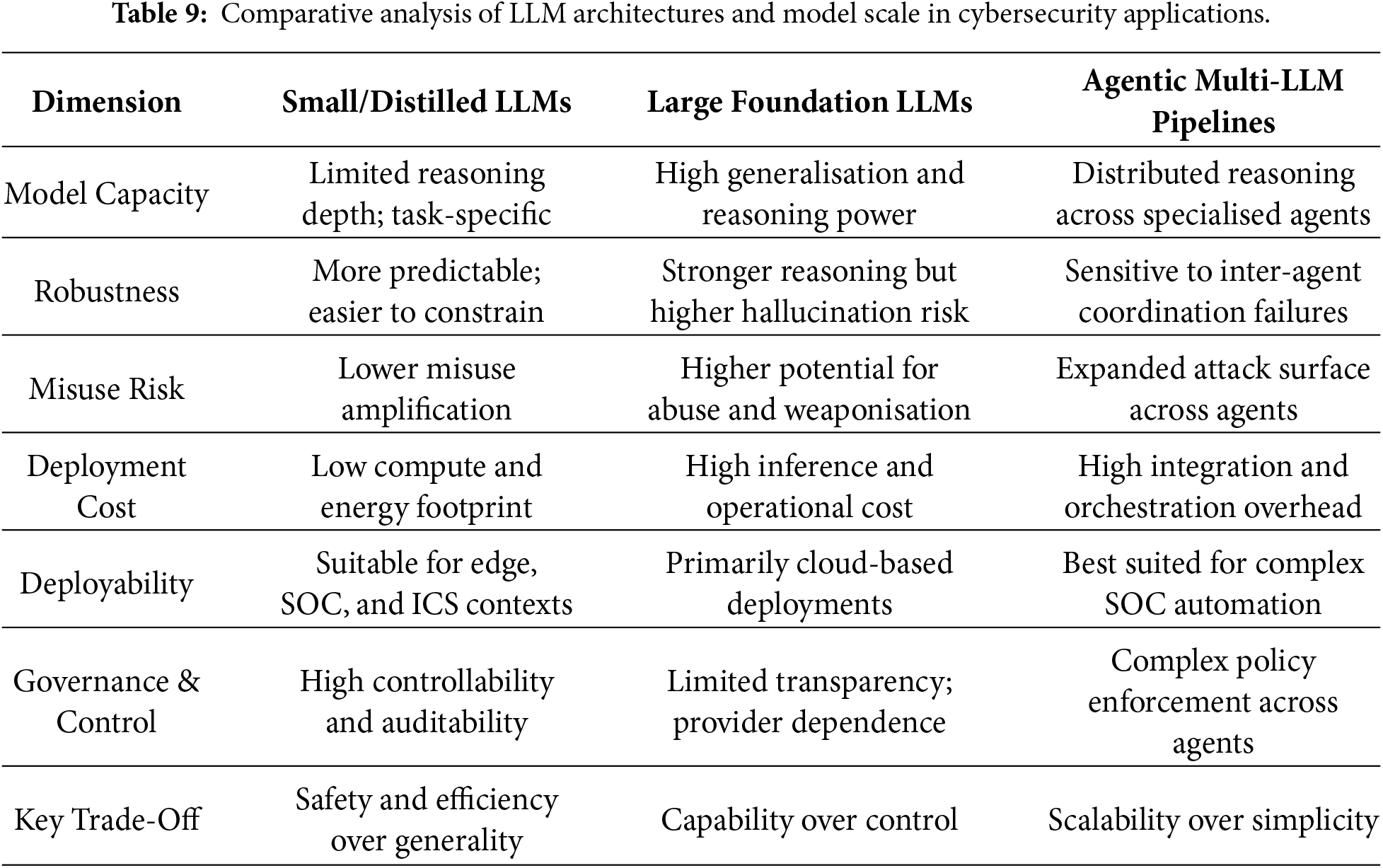

Although LLM-enabled threats and defences have been examined extensively, comparatively limited attention has been devoted to understanding how model scale, architectural design, and deployment modality influence robustness, misuse risk, and operational feasibility [153]. This subsection addresses this gap by synthesising empirical evidence across defensive studies and situating architectural choices within practical deployment constraints.

6.2.1 Model Scale: Small vs. Large LLMs

Small- and medium-scale LLMs, including distilled, quantised, and domain-adapted variants, are increasingly favoured in SOC, edge, and IIoT settings due to their lower inference latency, reduced computational cost, and improved controllability. Multiple studies demonstrate that such models can achieve competitive performance on constrained security tasks, including log interpretation and malware trace reasoning [19,105]. In contrast, large foundation models typically offer superior generalisation and deeper reasoning capabilities but are associated with elevated hallucination risk, greater potential for misuse amplification, and prohibitive operational costs in continuous-deployment environments [33,38]. Collectively, these findings suggest that model scale mediates a fundamental trade-off between defensive capability and deployment risk.

6.2.2 Deployment Modality: API-Based vs. Local Models

Deployment modality further conditions defensive effectiveness and risk exposure. API-based LLM deployments benefit from provider-managed updates, centralised alignment controls, and rapid access to state-of-the-art models, but introduce data sovereignty concerns, external service dependencies, and limited transparency into model behaviour. By contrast, locally deployed and fine-tuned models provide tighter control over data flows, inference logic, and privacy-sensitive logs, making them particularly suitable for SOC operations and critical infrastructure environments [35,36]. However, local deployment shifts responsibility for model maintenance, security hardening, and misuse mitigation to system operators, thereby introducing additional governance and lifecycle-management challenges.

6.2.3 Single-Model vs. Agentic Architectures

Agentic and multi-LLM architectures are increasingly adopted to support complex investigation, correlation, and response workflows [3,24]. While such designs enhance modularity, task specialisation, and workflow automation, they also expand the effective attack surface and introduce new failure modes, including coordination errors, cascading hallucinations, and prompt-injection propagation across agents [38,154]. Single-model architectures, by contrast, are generally easier to audit, reason about, and deploy, but often struggle with multi-stage reasoning, cross-domain correlation, and concurrent task execution.

Taken together, architectural and scale-related design choices exert a first-order influence on defensive robustness and deployability. Larger and agentic systems prioritise capability breadth and scalability, whereas smaller, locally controlled models emphasise predictability, auditability, and operational safety. Table 9 summarises these trade-offs across representative deployment scenarios.

6.3 Intrusion and Anomaly Detection

A substantial subset of the reviewed literature investigates the application of LLMs to intrusion and anomaly detection across heterogeneous telemetry sources, including system logs, network flows, endpoint activity, and malware execution traces. Survey studies such as [18,33] report that language-model-based semantic representations can capture long-range dependencies and behavioural context more effectively than traditional feature-engineered approaches. Practical systems further illustrate complementary strengths: lightweight and domain-adapted models enable deployment in resource-constrained edge environments [105], while semantic trace modelling improves the detection of low-frequency and stealthy attack patterns [19].

Domain adaptation emerges as a critical enabler of robust performance. Studies that integrate LLM-derived embeddings with classical detection mechanisms demonstrate improved resilience to dataset drift and enhanced generalisation across operational environments [103,106]. Nevertheless, persistent limitations remain. Inference latency constrains real-time deployment, prompt sensitivity undermines output stability, and overfitting to benchmark artefacts is frequently observed. As a result, the literature converges on positioning LLMs as post-detection reasoning layers that augment conventional intrusion detection systems rather than as standalone replacements.
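One simple way to combine embeddings with a classical detector is score-level fusion, sketched below. This is an assumption-laden illustration rather than the method of any cited study: the embeddings are toy two-dimensional vectors standing in for LLM-derived representations, the classical score is assumed to lie in [0, 1], and the weighted-sum rule and the names `centroid`, `cosine_distance`, and `fused_score` are introduced here.

```python
import math
from typing import List, Sequence


def centroid(vectors: Sequence[Sequence[float]]) -> List[float]:
    """Component-wise mean of a non-empty set of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine distance in [0, 2]; zero vectors are treated as distance 1."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 1.0
    return 1.0 - dot / (norm_a * norm_b)


def fused_score(
    classical_score: float,
    embedding: Sequence[float],
    benign_centroid: Sequence[float],
    weight: float = 0.5,
) -> float:
    """Weighted sum of a classical anomaly score and a semantic distance score."""
    semantic = cosine_distance(embedding, benign_centroid) / 2.0  # map [0, 2] to [0, 1]
    return (1.0 - weight) * classical_score + weight * semantic


# Toy data: benign events cluster around one direction in embedding space.
benign = centroid([[1.0, 0.0], [1.0, 0.0]])
typical = fused_score(0.2, [1.0, 0.0], benign)
unusual = fused_score(0.2, [-1.0, 0.0], benign)
```

Here two events with the same classical score receive different fused scores because one is semantically far from benign behaviour. In practice the weight and the benign centroid would need periodic recalibration, since drift affects both components.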

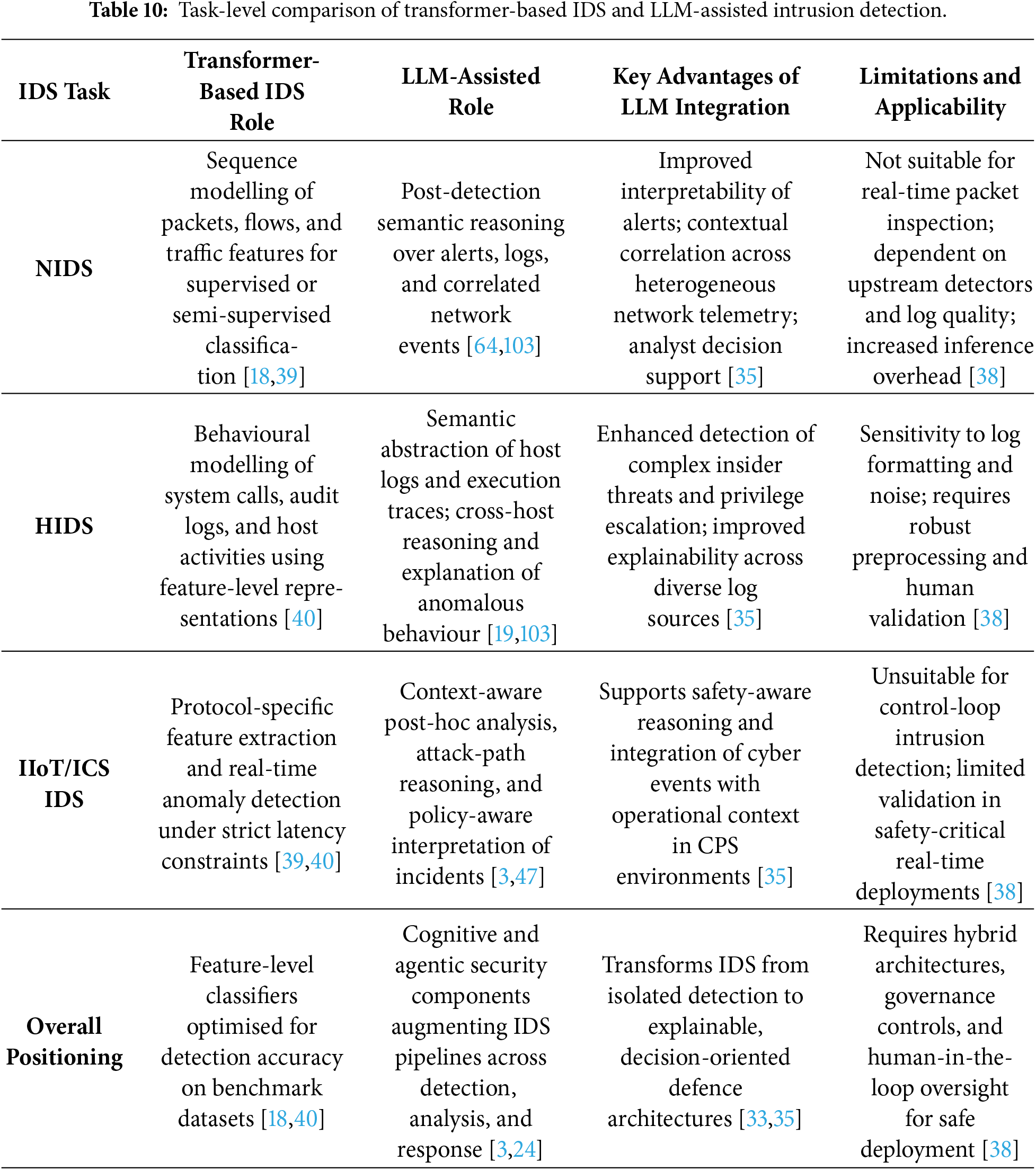

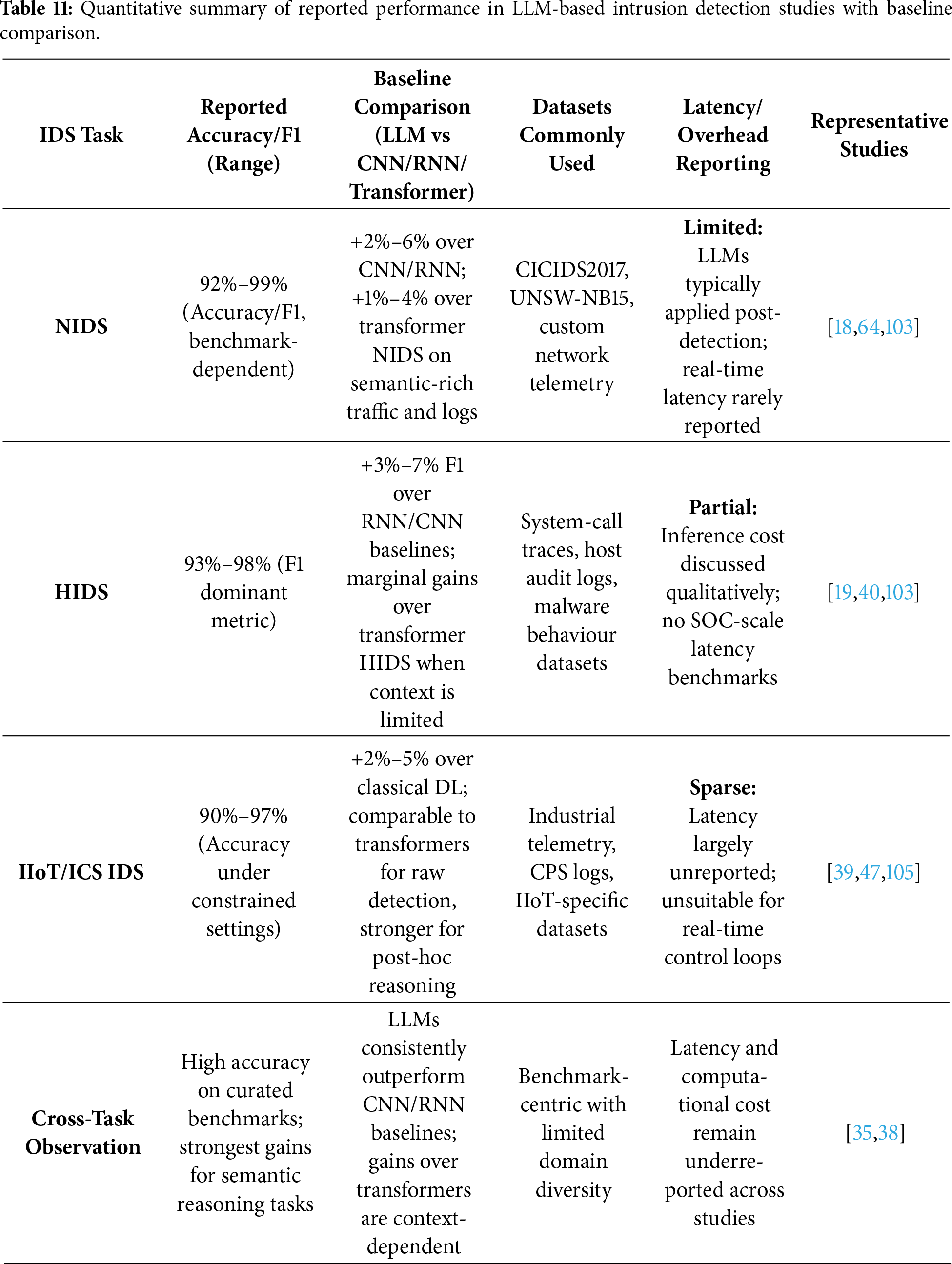

6.4 Task-Level Comparison: NIDS, HIDS, and IIoT/ICS

Traditional IDS surveys typically categorise methods by deployment context, namely network-based intrusion detection systems (NIDS), host-based intrusion detection systems (HIDS), and IIoT/ICS environments, and evaluate transformer models primarily as feature-level classifiers optimised for benchmark accuracy [18,39,40]. LLM-assisted IDS approaches depart fundamentally from this paradigm. Rather than competing directly at the detection stage, LLMs are employed as higher-level cognitive components that support interpretation, correlation, and response.