Open Access

Open Access

REVIEW

Generative Adversarial Networks for Image Super-Resolution: A Survey

1 School of Engineering, The Hong Kong University of Science and Technology, Clear Water Bay, Kowloon, Hong Kong, China

2 School of Software, Northwestern Polytechnical University, Xi’an, China

3 College of Artificial Intelligence, Nanjing University of Aeronautics and Astronautics, Nanjing, China

4 Department of Distributed Systems and IT Devices, Silesian University of Technology, Gliwice, Poland

5 School of Computer Science and Technology, Harbin Institute of Technology, Harbin, China

* Corresponding Author: Chunwei Tian. Email:

Computers, Materials & Continua 2026, 87(3), 3 https://doi.org/10.32604/cmc.2026.078842

Received 09 January 2026; Accepted 05 March 2026; Issue published 09 April 2026

Abstract

Image super-resolution is a significant area in the field of image processing, with broad applications across multiple domains. In recent years, advancements in Generative Adversarial Networks (GANs) have led to an increased adoption of GAN-based methods in image super-resolution, yielding remarkable results. However, there is still a limited amount of research that systematically and comprehensively summarizes the various GAN-based techniques for image super-resolution. This paper provides a comparative study that elucidates the application differences of GANs in this field. We begin by reviewing the development of GANs and introducing their popular variants used in image applications. Subsequently, we systematically analyze the theoretical motivations, implementation approaches, and technical distinctions of GAN-based optimization methods and discriminative learning from three perspectives: supervised, semi-supervised, and unsupervised learning. We examine these methods concerning their integration of different network architectures, prior knowledge, loss functions, and multitask strategies. Furthermore, we conduct a systematic comparison of state-of-the-art GAN methods through quantitative and qualitative analyses using publicly available super-resolution datasets. In addition to traditional metrics such as PSNR and SSIM in our quantitative analysis, we also consider complexity and running time as reference standards to better align the evaluation with practical application demands. Finally, we identify several challenges currently faced by GANs in the domain of image super-resolution, including issues related to training stability and the need for improved evaluation metrics. We outline future research directions aimed at enhancing the robustness and efficiency of GAN-based super-resolution techniques, emphasizing the importance of integration with other machine learning frameworks to further advance this exciting field.Keywords

Single image super-resolution (SISR) is an important branch in the field of image processing [1]. It aims to recover a high-resolution (HR) image over a low-resolution (LR) image [2], leading to its wide applications in medical diagnosis [3], video surveillance [4] and disaster relief [5], etc. For instance, in the medical field, obtaining higher-quality images can help doctors accurately detect diseases [6]. Thus, studying SISR is very meaningful to academia and industry [7].

To address the SISR problem, researchers have developed a variety of methods based on degradation models of low-level vision tasks [8,9]. There are three categories for SISR in general, i.e., image itself’s information, prior knowledge and machine learning. In image itself’s information, directly amplifying resolutions of all pixels in an LR image through an interpolation method to obtain a HR image was a simple and efficient method in SISR [10], i.e., nearest neighbor interpolation [11], bilinear interpolation [12] and bicubic interpolation [13], etc. It is noted that in these interpolation methods, high-frequency information is lost in the up-sampling process [10], which may decrease performance in image super-resolution. Alternatively, reconstruction-based methods were developed for SISR, according to optimization methods [14]. That is, mapping a projection into a convex set to estimate the registration parameters can restore more details of SISR [15]. Although the mentioned methods can overcome the drawbacks of image itself’s information methods, they still suffer from the following challenges: a non-unique solution, slow convergence speed and higher computational costs. To prevent this phenomenon, prior knowledge and image itself’s information were integrated into a frame to find an optimal solution to improve the quality of the predicted SR images [16,17]. Besides, machine learning methods can be presented to deal with SISR, according to relation of data distribution [18]. There are also many other SR methods [18,19] that often adopt sophisticated prior knowledge to restrict the possible solution space with the advantage of generating flexible and sharp detail. However, the performance of these methods rapidly degrades when the scale factor is increased, and these methods tend to be time-consuming [20].

To obtain a better and more efficient SR model, a variety of deep learning methods were applied to a large-scale image dataset to solve the super-resolution tasks [21,22]. For instance, Dong et al. proposed a super-resolution convolutional neural network (SRCNN) based pixel mapping that used only three layers to obtain stronger learning ability than those of some popular machine learning methods for image super-resolution [23]. Although the SRCNN had a good SR effect, it still faced problems in terms of shallow architecture and high complexity. To overcome challenges of shallow architectures, Kim et al. [24] designed a deep architecture by stacking some small convolutions to improve the performance of image super-resolution. Tai et al. [25] relied on recursive and residual operations in a deep network to enhance the learning ability of a SR model. To further improve the SR effect, Lim et al. [26] used weights to adjust residual blocks to achieve a better SR performance. To extract robust information, the combination of traditional machine learning methods and deep networks can restore more detailed information for SISR [27]. For instance, Wang et al. [27] embedded a sparse coding method into a deep neural network to make a tradeoff between performance and efficiency in SISR. To reduce the complexity, an up-sampling operation is used in a deep layer in a deep CNN to increase the resolution of low-frequency features and produce high-quality images [28]. For example, Dong et al. [28] directly exploited the given low-resolution images to train a SR model to improve training efficiency, where the SR network used a deconvolution layer to reconstruct HR images. There are also other effective SR methods. HGSRCNN enhances inter- and intra-channel correlations through the parallel utilization of heterogeneous group blocks to obtain richer low-frequency structural information, thereby significantly improving robustness in complex scenes [29]. Lai et al. [30] used the Laplacian pyramid technique with shared parameters in a deep network to accelerate the training speed for SISR. Tian et al. proposed a tree-guided convolutional neural network which leverages a tree architecture to strengthen hierarchical information propagation among key nodes, thereby effectively improving the reconstruction quality [31]. Zhang et al. [32] guided a CNN by attention mechanisms to extract salient features for improving the performance and visual effects in image SISR. Dynamic super resolution network (DSRNet) [33] proposes a dynamic network architecture that utilizes a dynamic gate mechanism to dynamically adjust network parameters to adapt to different scenarios, significantly enhancing the robustness and applicability of the image super-resolution model in complex scenes. Tian et al. [34] proposed a cosine network-based method for SISR, which introduced odd and even enhancement blocks to extract complementary homologous structural information and incorporated a cosine annealing mechanism to optimize the training process, thereby achieving superior performance on multiple public datasets.

With the development of hardware devices, a wider variety of captured images are available [35]. However, the number of available images is insufficient for real applications since scenes in the real world vary. To address this problem, generative methods, such as flow-based models, VAEs, and diffusion models, are developed. Specifically, flow-based models directly calculate the mapping relationship between the normal distribution and the target distribution function to better generate samples similar to the given ones [36]. Although it has better generative effects, it faces the challenge of high computational costs. To solve the unstable training of GANs [37], variational autoencoders (VAEs) use encoders to extract hidden variable distributions to stabilize the training process [38]. Although VAEs can help accelerate training, they are limited by blurry image super-resolution [39]. To enhance the adaptive ability of the generated super-resolution models, diffusion models can use game strategies involving adding and reducing noise to learn an unsupervised super-resolution model. In recent years, diffusion models have increasingly been employed for image super-resolution tasks [40]. While diffusion models can enhance the robustness of generated super-resolution outputs, they often incur higher computational costs and greater resource consumption [41]. Under controlled comparisons of resources—where architecture scale, dataset size, and computational budget are standardized—GANs can compete effectively with diffusion models in the domain of image super-resolution, offering substantial advantages in processing speed. Furthermore, GAN-based upscalers facilitate faster training times and enable single-step inference [42]. Considering the above analysis, GAN-based game strategies are effective tools for generative methods in image super-resolution.

To address the problem of small samples, generative adversarial nets (GANs) were proposed [43–46]. Due to their strong learning abilities, GANs have become popular methods for image super-resolution [47]. For instance, Park et al. [48] combined kernel ideas and GANs to extract more structural information to enhance image sharpness and edge thickness for image super-resolution. When addressing the challenges of super-resolution tasks, GAN-based methods also provide effective solutions. Large-scale scaling requires deeper networks, which in turn demands greater stability. GANs can achieve stability through improved loss functions [49], progressive learning [50,51], and other methods. At the same time, to address common failure modes, GANs enhance sharpness and edge information by introducing residual dense structures and attention mechanisms [52] to avoid distortion of fine details. They eliminate artifacts using multi-discriminators [53] and address color shifts through range-nullspace decomposition [54] and second-order channel attention mechanisms [55]. Additionally, they tackle geometric distortions by employing gradient branching [56] and utilizing bidirectional structural consistency [57]. However, there are few studies summarizing the use of these GANs for SISR.

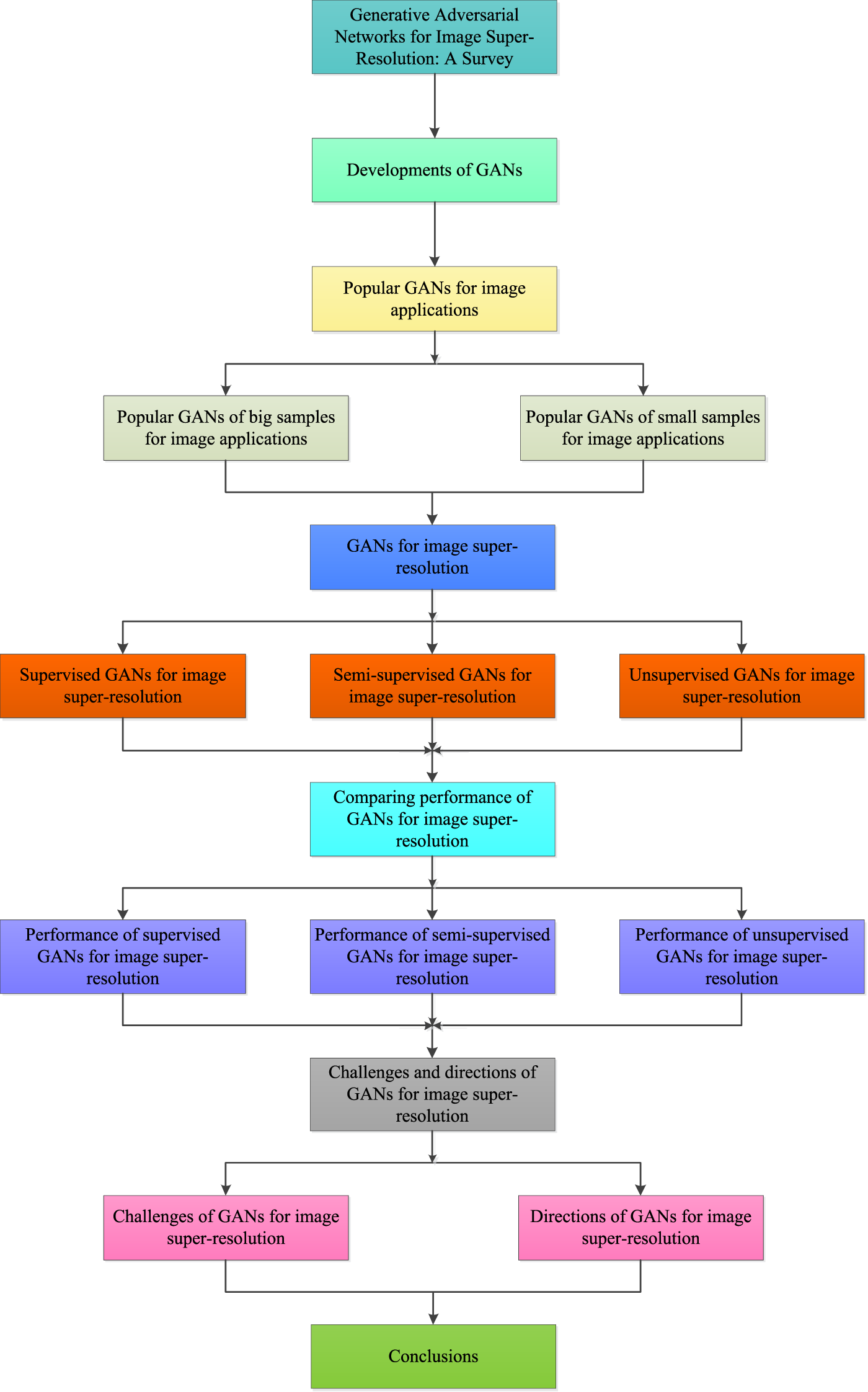

Prior surveys on GANs [58,59] have continuously emerged, elucidating various technical aspects of GANs, including advancements in performance, related technologies, and their evolutionary trajectories. In recent years, surveys specifically addressing GAN-based super-resolution have also been proposed, such as those in references [60–62]. However, these works exhibit certain limitations: (a) the training paradigms covered are incomplete, particularly lacking in the areas of unsupervised and semi-supervised learning, and (b) the evaluation frameworks deviate from practical deployment requirements, failing to incorporate engineering metrics such as model parameter count, inference time, and memory usage. Differing from previous work based on deep learning techniques for image super-resolution, i.e., Refs. [63,64], we not only refer to the importance of GANs for both low- and high-level tasks in the context of small and large samples but also provide a comprehensive summary of GANs in image super-resolution based on a combination of different training methods (i.e., supervised, semi-supervised, and unsupervised), network architectures, prior knowledge, loss functions, and multiple tasks, which makes it easier for readers to understand the principles, improvements, and the strengths and weaknesses of different GANs for image super-resolution. That is, in this paper, we conduct a comprehensive overview of 258 papers to show their performance, pros, cons, complexity, challenges, and potential research points, etc. First, we demonstrate the effects of GANs in image applications. Second, we present popular architectures for GANs in large and small samples for image applications. Third, we analyze the motivations, implementations, and differences of GAN-based optimization methods and discriminative learning for image super-resolution in terms of supervised, semi-supervised, and unsupervised methods to provide a clearer and more comprehensive overview of the relevant technologies, including the latest advancements, where these GANs operate by combining different network architectures, prior knowledge, loss functions, and multiple tasks for image super-resolution. Fifth, we compare these GANs using experimental settings, quantitative analysis (i.e., PSNR, SSIM, complexity, and running time), and qualitative analysis to make a more practical and complete evaluation. Finally, we report on potential research directions and existing challenges associated with GANs for image super-resolution. The overall architecture of this paper is shown in Fig. 1.

Figure 1: The outline of this overview. It mainly consists of basic frameworks, categories (i.e., supervised, semi-supervised, and unsupervised GANs), performance comparison, challenges, and potential directions.

The remainder of this survey is organized as follows: Section 2 reviews the developments of GANs and surveys popular GANs for image applications; Section 3 focuses on introduction of existing GANs via three ways on SISR; Section 4 compares performance of mentioned GANs from Section 2 for SISR; Section 5 offers potential directions and challenges of GANs in image super-resolution; and Section 6 concludes the overview.

Traditional machine learning methods prefer to use prior knowledge to improve the performance of image processing applications [65]. For instance, Sun et al. [65] proposed a gradient profile to restore more detailed information for improving the performance of image super-resolution. Although machine learning methods based on prior knowledge have fast execution speed, they have some drawbacks. First, they require manually set parameters to achieve better performance on image tasks. Second, they require complex optimization methods to find optimized parameters. To address these challenges, deep learning methods have been developed [66]. Deep learning methods use deep networks, such as CNNs, to automatically learn features instead of manually setting parameters to achieve effective results in image processing tasks, such as image classification [66], image inpainting [67], and image super-resolution [1]. Although these methods are effective for large samples, they are limited for tasks involving small samples [43].

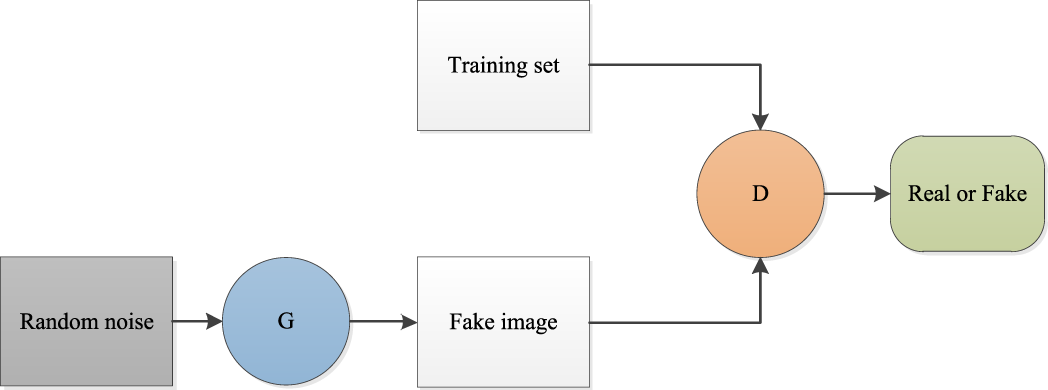

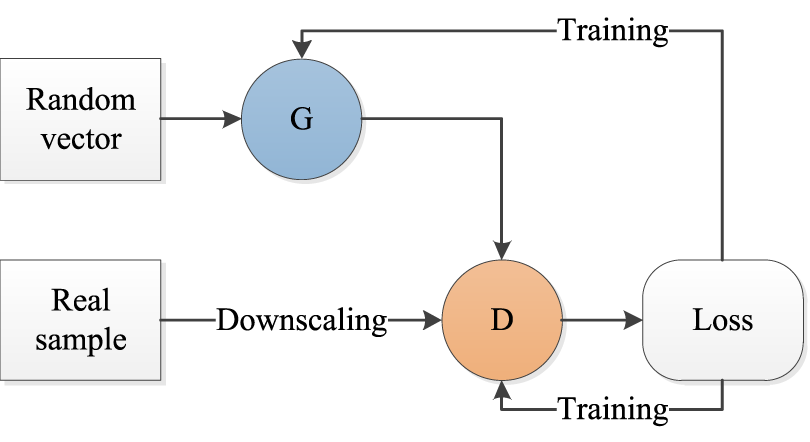

To address the problems mentioned above, GANs are introduced in image processing [43]. GANs consist of a generator network and a discriminator network. The generator network is used to generate new samples according to the given samples. The discriminator network is used to determine the authenticity of the generated new samples. When the generator and discriminator are balanced, the GAN model is complete. The working process of the GAN is shown in Fig. 2, where G and D denote the generator network and the discriminator network, respectively. To better understand GANs, we introduce several basic GANs as follows.

Figure 2: Architecture of generative adversarial network (GAN).

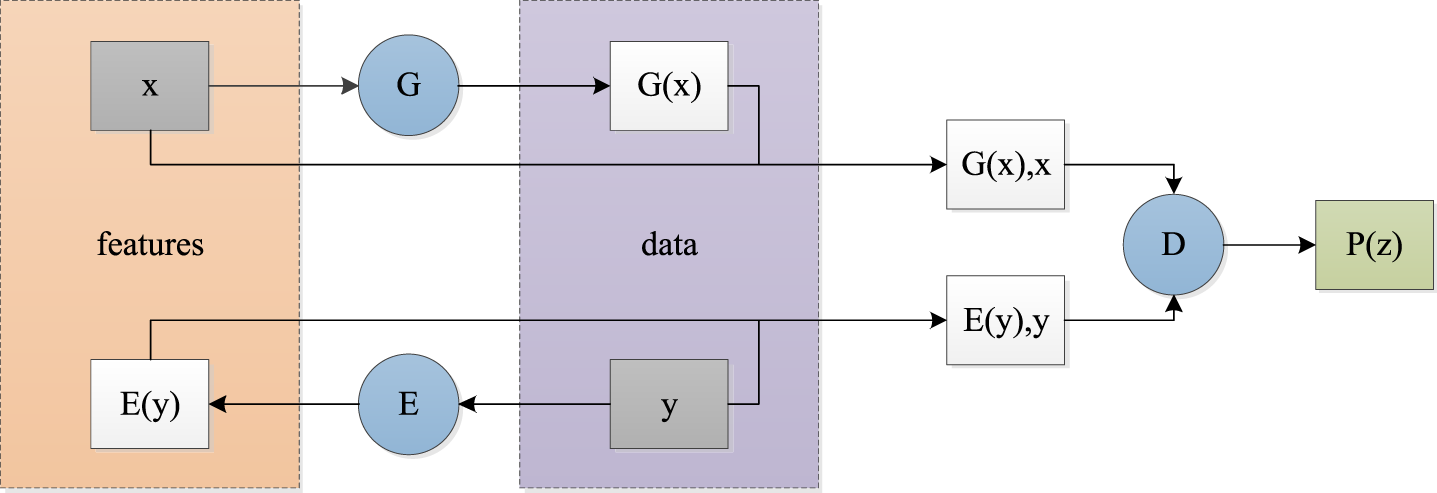

To obtain more realistic effects, conditional information is fused into a GAN (CGAN) to randomly generate images that are closer to real images [68]. CGAN improves GANs to obtain more robust data, which has significant reference value for GANs in computer vision applications. Subsequently, increasing the depth of the GAN instead of using the original multilayer perceptron in a CNN has been developed to improve the expressive ability of GANs for complex vision tasks [69]. To mine more useful information, the bidirectional generative adversarial network (BiGAN) uses dual encoders to collaborate with a generator and a discriminator to obtain richer information for improving performance in anomaly detection, as shown in Fig. 3 [70]. In Fig. 3, x denotes a feature vector, E is an encoder, and y represents an image from the discriminator.

Figure 3: Architecture of bidirectional generative adversarial network (BiGAN).

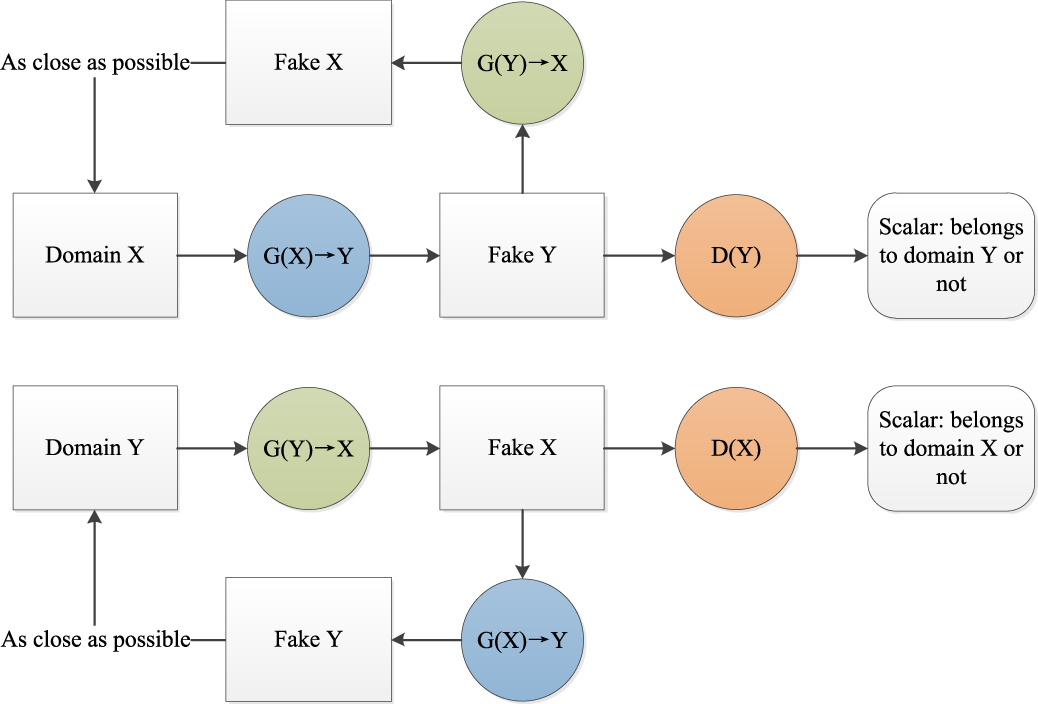

It is known that pretrained operations can be used to accelerate the training speed of CNNs for image recognition [71]. This idea can be described as an energy-driven process. Inspired by that, Zhao et al. proposed an energy-based generative adversarial network (EBGAN) by incorporating a pretraining operation into the discriminator to improve performance in image recognition [72]. To maintain consistency between the obtained features and the original images, the cycle-consistent adversarial network (CycleGAN) relies on a cyclic architecture to achieve excellent style transfer effects [73], as illustrated in Fig. 4. The implementation and application of CycleGAN are detailed in Section 2.2.1.

Figure 4: Architecture of cycle-consistent adversarial network (CycleGAN).

Although pretrained operations are useful for the training efficiency of network models, they may suffer from mode collapse. To address this problem, Wasserstein GAN (WGAN) used weight clipping to enhance the importance of the Lipschitz constraint to improve the stability of training a GAN [74]. WGAN used weight clipping to perform well. However, it is easier to cause gradient vanishing or gradient exploding [75]. To resolve this issue, WGAN used a gradient penalty (treated as WGAN-GP) to break the limitation of Lipschitz for pursuing good performance in computer vision applications [76]. To further improve the results of image generation, GAN enlarged the batch size and used truncation trick, as well as BIGGAN, which can make a tradeoff between variety and fidelity [76]. To better obtain features of different parts of an image (i.e., freckles and hair), style-based GAN (StyleGAN) uses feature decoupling to control different features and finish style transfer for image generation [77]. The architecture of StyleGAN and its generator are shown in Figs. 5 and 6.

Figure 5: Architecture of StyleGAN.

Figure 6: The structure of generator in the StyleGAN.

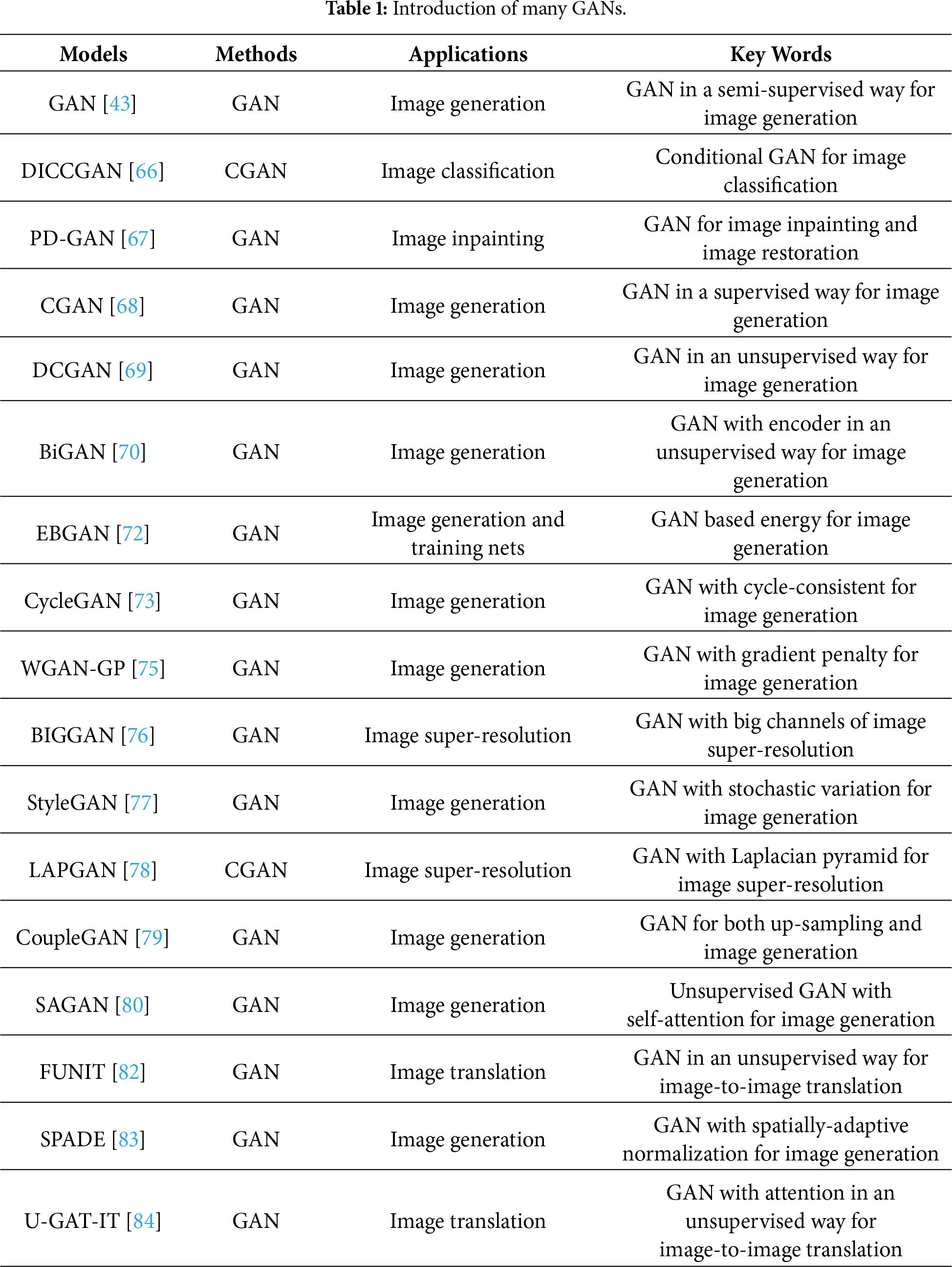

In recent years, GANs with good performance have been applied in the fields of image processing, natural language processing (NLP), and video processing. Also, there are other variants based on GANs for multimedia applications, such as Laplacian pyramid of GAN (LAPGAN) [78], coupled GAN (CoupleGAN) [79], self-attention GAN (SAGAN) [80], and loss-sensitive GAN (LSGAN) [81]. These methods emphasize how to generate high-quality images through various sampling mechanisms. However, researchers have focused on the applications of GANs since 2019, i.e., FUNIT [82], SPADE [83], and U-GAT-IT [84]. Illustrations of more GANs are shown in Table 1.

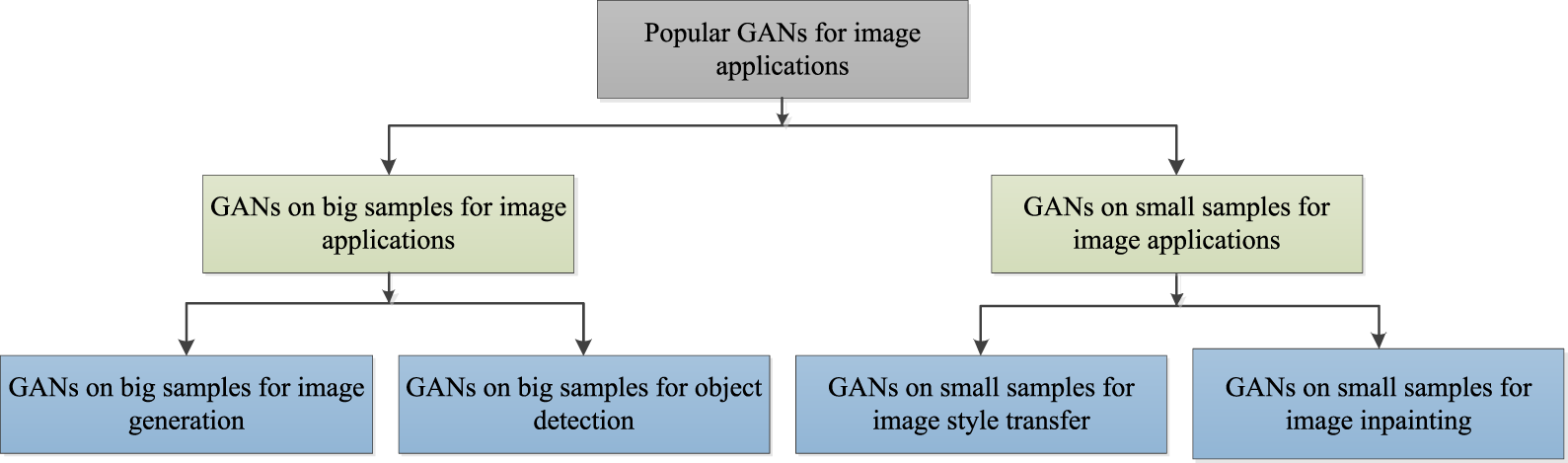

According to the illustrations mentioned, it is known that variants of GANs have been developed based on the properties of vision tasks in Section 2. To further understand GANs, we present different GANs with training data, i.e., big samples and small samples, for various high- and low-level computer vision tasks, as shown in Fig. 7.

Figure 7: Frame of popular GANs for image applications.

2.1 GANs on Big Samples for Image Applications

2.1.1 GANs on Big Samples for Image Generation

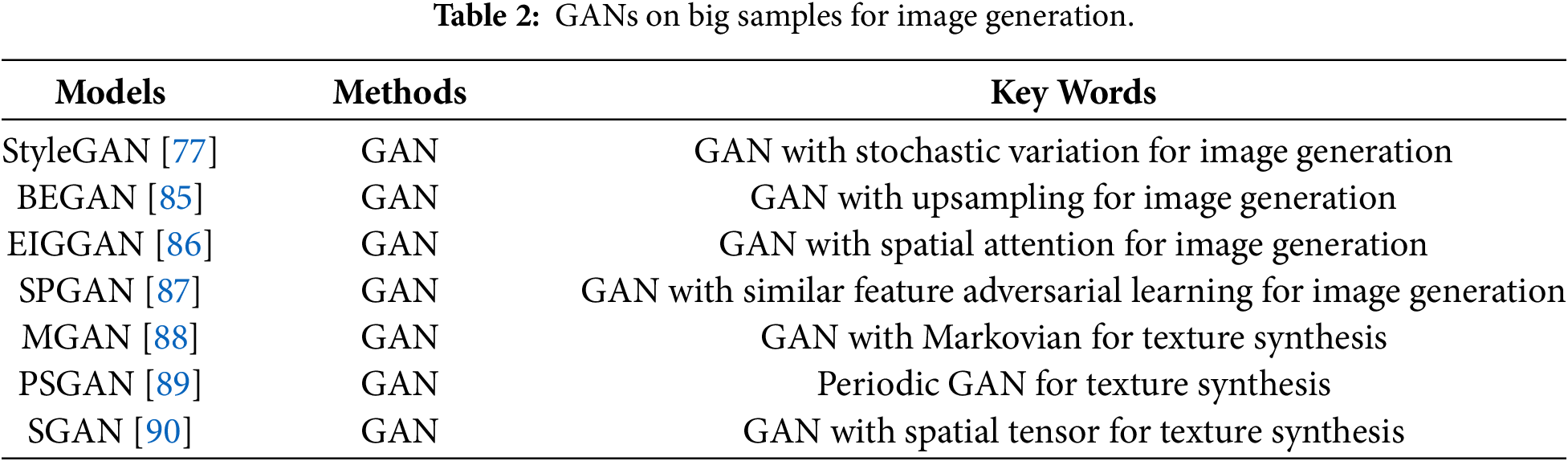

Good performance in image generation depends on rich samples. Inspired by that, GANs have been improved for image generation [45]. That is, GANs use a generator to generate more samples from high-dimensional data to cooperate with the discriminator for promoting the results of image generation. For instance, boundary equilibrium generative adversarial networks (BEGAN) has used the loss obtained from Wasserstein to match the loss of the auto-encoder in the discriminator and achieve a balance between a generator and a discriminator, which can obtain more texture information than common GANs in image generation [85]. To control different parts of a face, StyleGAN decoupled different features to form a feature space to complete the transfer of texture information [77]. Enhanced GAN for image generation (EIGGAN) [86] improves the performance of the generator by incorporating a spatial attention mechanism and parallel residual operations, thereby achieving higher quality and more realistic image generation effects in large-scale image generation tasks. SPGAN leverages a Siamese Projection Network to facilitate adversarial learning of feature similarity; by sharing weights with the discriminator, it reduces parameter complexity, mitigates overfitting, and streamlines the overall model architecture [87]. Besides, texture synthesis is another important application of image generation [88]. For instance, Markovian GANs (MGAN) can quickly capture texture data of Markovian patches to achieve the function of real-time texture synthesis [88], where Markovian patches can be found in Ref. [45]. Periodic spatial GAN (PSGAN) [89] is a variant of spatial GAN (SGAN) [90], which can learn periodic textures of big datasets and a single image. These methods can be summarized in Table 2.

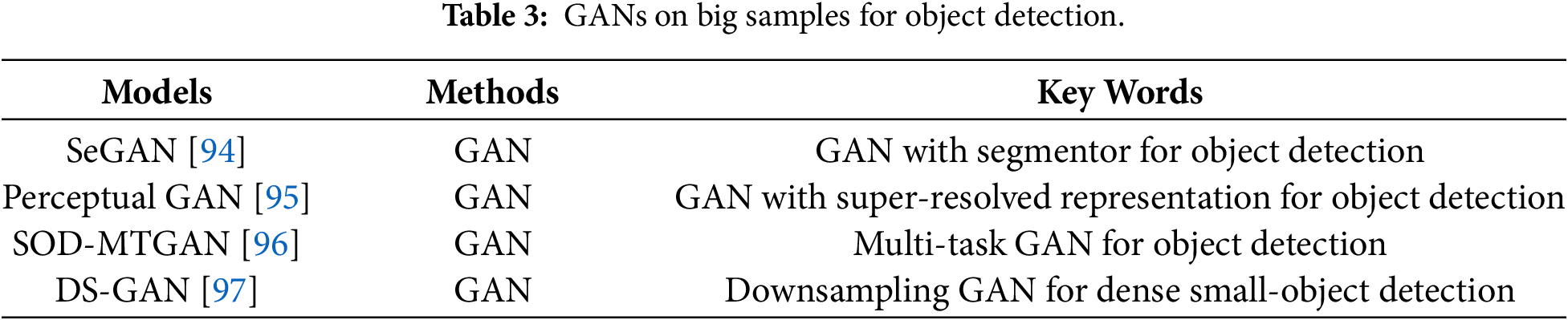

2.1.2 GANs on Big Samples for Object Detection

Object detection has wide applications in the industry, such as smart transportation [91] and medical diagnosis [92], and so on. However, complex environments pose significant challenges to pursuing good performance for object detection methods [93]. Rich data is important for object detection. Existing methods use a data-driven strategy to collect a large-scale dataset, including different object examples under various conditions, to obtain an object detector. However, the obtained dataset does not contain all kinds of deformed and occluded objects, which limits the effectiveness of object detection methods. To resolve this issue, GANs are used for object detection [94,95]. Ehsani et al. used segmentation and generation in GANs of invisible parts of the objects to overcome the challenges of occluded objects [94]. To address the challenge of small object detection on low-resolution and noisy representation, a perceptual GAN (Perceptual GAN) reduced the differences between small objects and large objects to improve performance in small object detection [95]. That is, its generator converts the poorly perceived representations of small objects into high-resolution representations of large objects to fool a discriminator, where the aforementioned large objects resemble real large objects [95]. To obtain sufficient information about objects, an end-to-end multi-task generative adversarial network (SOD-MTGAN) uses a generator to recover detailed information to generate high-quality images to achieve accurate detection [96]. Also, a discriminator transfers classification and regression losses in a back-propagated way into a generator [96]. To address dense small-object detection scenarios, Brais Bosquet et al. proposed Downsampling GAN, wherein the generator synthesizes small-scale object instances from larger targets and the discriminator distinguishes authentic small objects from generated ones; an optical-flow-based spatial selection mechanism is incorporated to ensure the realism and contextual plausibility of the generated samples, thereby improving detector robustness in crowded small-object scenes [97]. Two operations can extract objects from backgrounds to achieve good performance in object detection. More detailed information is shown in Table 3.

2.2 GANs on Small Samples for Image Applications

2.2.1 GANs on Small Samples for Image Style Transfer

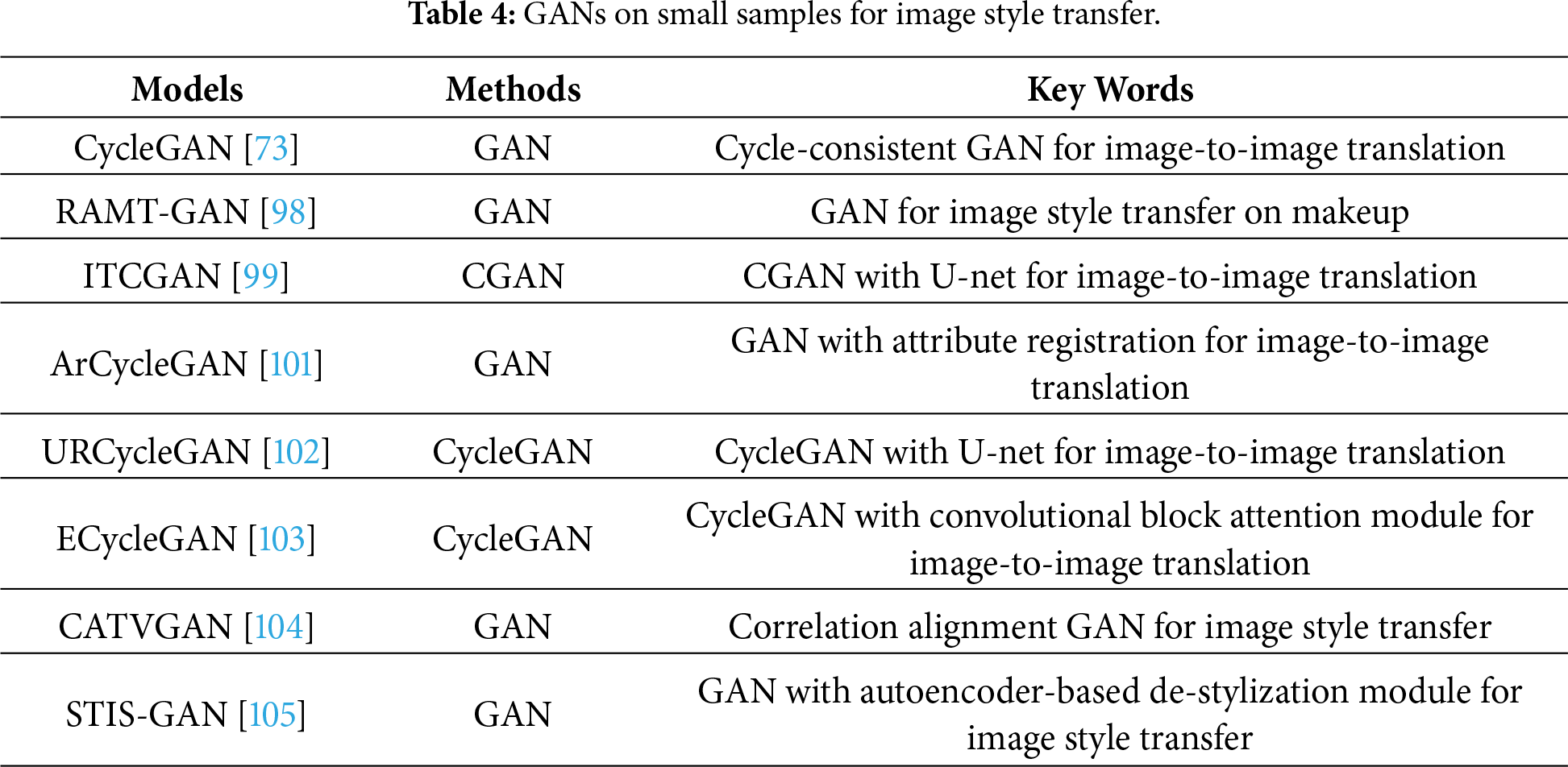

Makeup has important applications in the real world [98]. To save costs, visual makeup software is developed, leading to image style transfer (i.e., image-to-image translation) becoming a research hotspot in the field of computer vision in recent years [45]. GANs are good tools for style transfer on small samples, which can be used for establishing mappings between given images and object images [45]. The obtained mappings are strongly related to aligned image pairs [99]. However, we found that the above mappings do not match the ideal models regarding transfer effects [73]. Motivated by that, CycleGAN used two pairs of a generator and discriminator in a cycle-consistent way to learn two mappings for achieving style transfer [73]. CycleGAN had two phases in style transfer. In the first phase, adversarial loss [83] was used to ensure the quality of generated images. In the second phase, cycle consistency loss [73] was utilized to guarantee that the predicted images fall into the desired domains [100]. CycleGAN had the following merits: it does not require paired training examples [100]. It also does not require the input and output image to share a same low-dimensional embedding space [73]. Due to its excellent properties, many variants of CycleGAN have been conducted for many vision tasks, such as image style transfer [73,101], object transfiguration [102], and image enhancement [103], among others. More GANs on small samples for image style transfer can be found in Table 4.

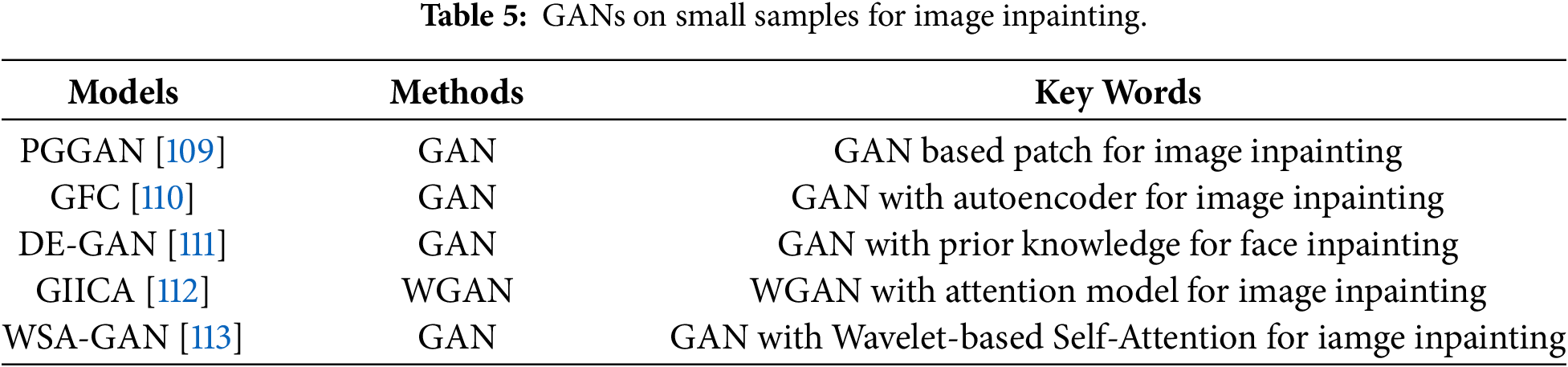

2.2.2 GANs on Small Samples for Image Inpainting

Images have played important roles in human–computer interaction in the real world [106]. However, they may be damaged when collected by digital cameras, which has a negative impact on high-level computer vision tasks. Thus, image inpainting has significant value in the real world [107]. Due to missing pixels, image inpainting faced enormous challenges [108]. To overcome the above shortcomings, GANs are used to generate useful information for repairing damaged images based on the surrounding pixels in the damaged images [109]. For instance, GANs use a reconstruction loss, two adversarial losses, and a semantic parsing loss to guarantee pixel faithfulness and local-global content consistency for face image inpainting [110]. Although this method can generate useful information, it may cause boundary artifacts, distorted structures, and blurry textures inconsistent with surrounding areas [111,112]. To resolve this issue, Zhang et al. embedded prior knowledge into a GAN to generate more detailed information for achieving good performance in image inpainting [111]. Yu et al. exploit a contextual attention mechanism to enhance a GAN for obtaining excellent visual effects in image inpainting [112]. WSA-GAN constructs long-range dependencies of multi-scale frequency information in the wavelet domain and employs dual parallel-coupled streams to dynamically fuse spatial and channel features, thereby effectively addressing structural distortions and high-frequency detail blurring [113]. Typical GANs on small samples for image inpainting are summarized in Table 5.

3 GANs for Image Super-Resolutions

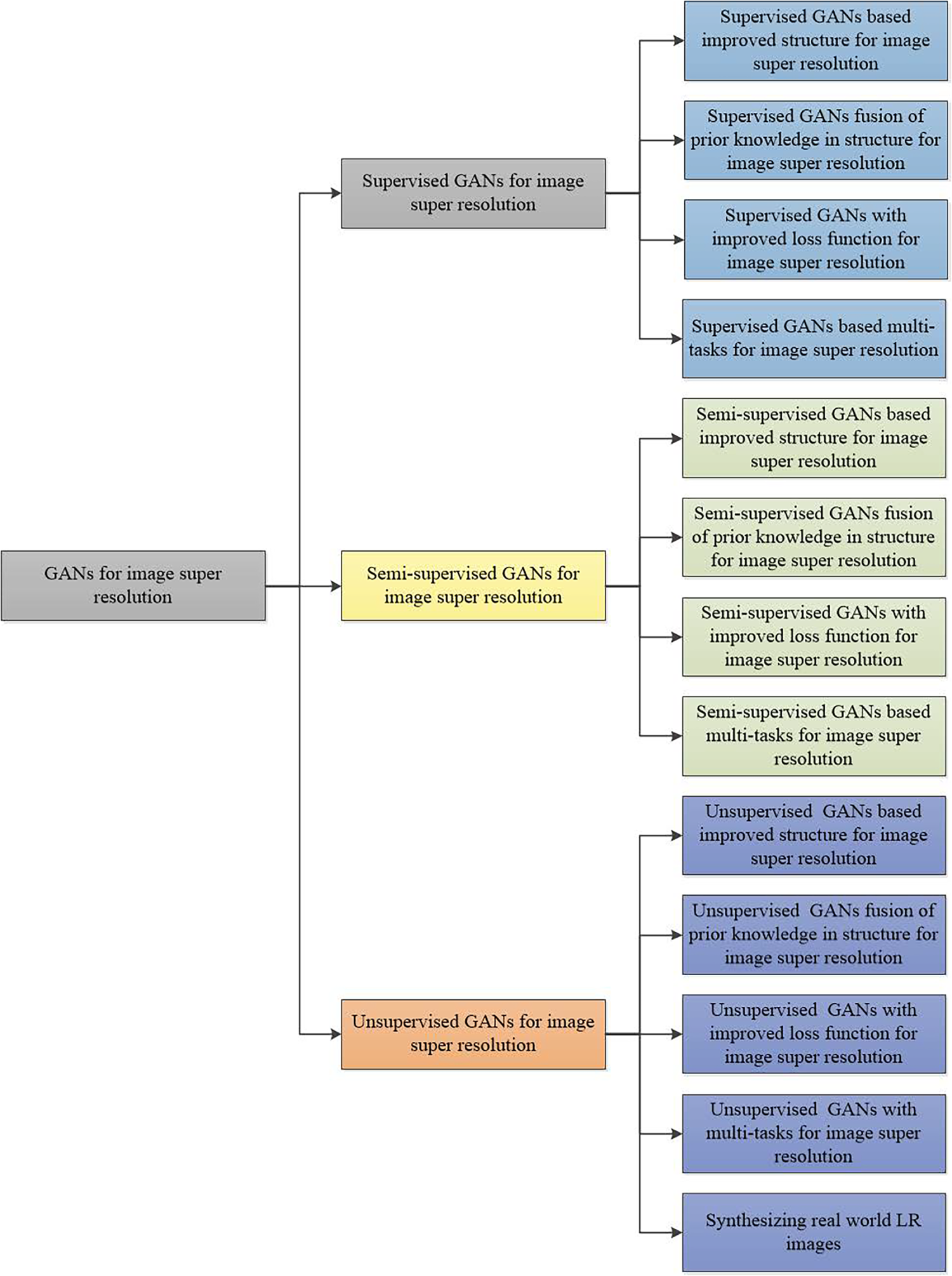

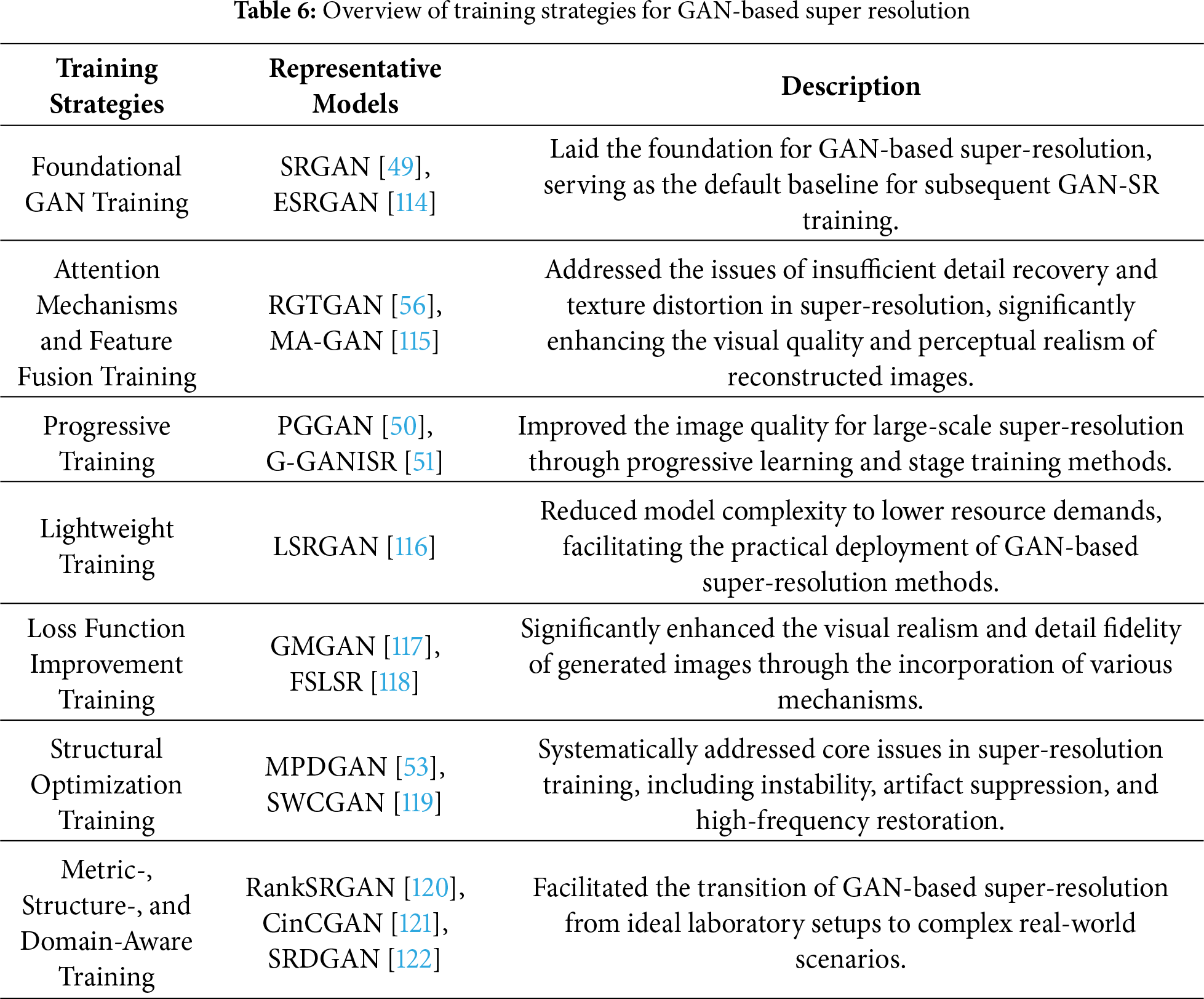

As stated in the illustrations, it is clear that GANs have many important applications in image processing. Also, image super-resolution is crucial for high-level vision tasks, such as medical image diagnosis and weather forecasting. Thus, GANs for image super-resolution are significant for real-world applications. Few comprehensive reviews exist about GANs for image super-resolution. Inspired by that, we present GANs for image super-resolution, categorized into supervised GANs, semi-supervised GANs, and unsupervised GANs for image super-resolution as shown in Fig. 8. To ensure classification rigor and consistency, the dimension of training paradigms (supervised/semi-supervised/unsupervised) should be determined by data provision formats, with the discriminating criterion being the degree of utilization of collected LR-HR paired data during training. Specifically, supervised GANs for image super-resolution include those based on designed network architectures, prior knowledge, improved loss functions, and multi-tasks for image super-resolution. Semi-supervised GANs for image super-resolution comprise those based on designed network architectures, improved loss functions, and multi-tasks for image super-resolution. Unsupervised GANs for image super-resolution consist of those based on designed network architectures, prior knowledge, improved loss functions, and multi-tasks in image super-resolution. Meanwhile, to more intuitively present the diverse training strategies employed in GAN-based SR, we select several representative approaches and summarize them in Table 6. Further information about GANs for image super-resolution is presented below.

Figure 8: Frame of GANs for image super-resolution.

3.1 Supervised GANs for Image Super-Resolution

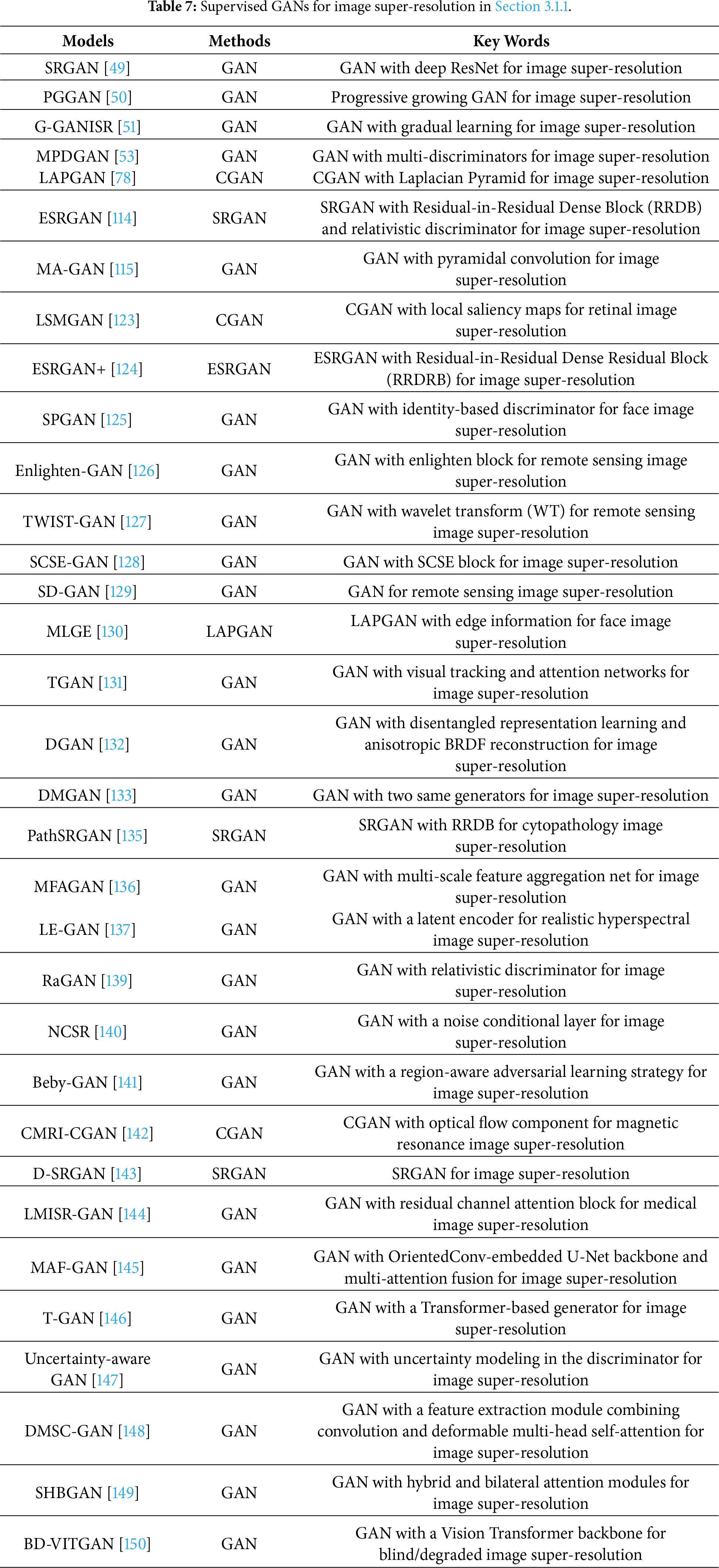

3.1.1 Supervised GANs Based Designed Network Architectures for Image Super-Resolution

GANs trained in a supervised manner for image super-resolution models are very mainstream. Also, designing GANs via improving network architectures are very novel. Thus, improved GANs in a supervised manner for image super-resolution are very popular. This can improve GANs by designing novel discriminator networks, generator networks, attributes of image super-resolution tasks, complexity, and computational costs. For example, the Laplacian pyramid of adversarial networks (LAPGAN) fused a cascade of convolutional networks into a Laplacian pyramid network in a coarse-to-fine way to obtain high-quality images for assisting image recognition tasks [78]. To overcome the effect of large scales, curvature and highlight compact regions can be used to obtain a local salient map for adapting big scales in image resolution [123]. More research on improving discriminators and generators is presented below.

Regarding the design of novel discriminators and generators, progressive growing generative adversarial networks (PGGAN or ProGAN) utilized different convolutional layers to progressively enlarge low-resolution images to improve image quality for image recognition [50]. To achieve better visual quality with more realistic and natural textures, an enhanced SRGAN (ESRGAN) used residual dense blocks within a generator without batch normalization to extract more detailed information for image super-resolution [114]. To eliminate effects of checkerboard artifacts and unpleasant high frequencies, multi-discriminators were proposed for image super-resolution [53]. That is, a perspective discriminator was used to overcome checkerboard artifacts, and a gradient perspective was utilized to address the issue of unpleasant high frequencies in image super-resolution. To improve the perceptual quality of predicted images, ESRGAN+ fused two adjacent layers in a residual learning manner based on residual dense blocks in a generator to enhance memory abilities and added noise in a generator to achieve stochastic variation and capture more details of high-resolution images [124].

Restoring detailed information may generate artifacts, which can seriously affect the quality of restored images [125]. The methods mentioned in this paragraph effectively alleviate this phenomenon. For face image super-resolution, Zhang and Ling used a supervised pixel-wise GAN (SPGAN) to obtain higher-quality face images via given low-resolution face images of multiple scale factors to remove artifacts in image super-resolution [125]. For remote sensing image super-resolution, Gong et al. used enlighten blocks to make a deep network achieve a reliable point and used self-supervised hierarchical perceptual loss to mitigate the effects of artifacts in remote sensing image super-resolution [126]. Dharejo et al. used Wavelet Transform (WT) characteristics into a transferred GAN to eliminate artifacts and improve the quality of predicted remote sensing images [127]. Moustafa and Sayed embedded squeeze-and-excitation blocks and residual blocks into a generator to obtain enhanced high-frequency details [128]. Additionally, Wasserstein distance enhances the stability of training a remote sensing super-resolution model [128]. To address the pseudo-texture problem, a saliency analysis is fused with a GAN to obtain a salient map that can be used to distinguish the differences between a discriminator and a generator [129].

To obtain more detailed information for image super-resolution, many GANs have been developed [130]. Ko and Dai used the Laplacian idea and edge information in a GAN to obtain more useful information to improve the clarity of predicted face images [130]. Using tensor structures in a GAN can enhance texture information for super-resolution [131]. Using multiple generators in a GAN can obtain more realistic texture details, which is useful to recover high-quality images [132,133]. To achieve better visual effects, a gradually growing GAN used gradual growing factors to improve performance in single image super-resolution (SISR) [51]. The RGTGAN enhance texture fidelity by incorporating a dedicated gradient branch that operates in parallel with an image branch, thereby guiding the generator to produce geometrically consistent and richly detailed textures [56]. SA-GAN employs a second-order channel attention mechanism to fully exploit priors inherent to low-resolution inputs, thereby enabling high-quality reconstructions that combine faithful texture preservation with accurate color reproduction [55]. FEGAN prioritizes salient feature extraction and efficient feature fusion, thereby enabling the generation of high-quality super-resolved thermal images under low computational cost [134].

To reduce computational costs and memory, Ma et al. used a two-stage generator in a supervised manner to extract more effective features from cytopathological images, reducing data acquisition costs and saving expenses [135]. Cheng et al. designed a generator using multi-scale feature aggregation and a discriminator based on PatchGAN to reduce memory consumption in a GAN for super-resolution [136]. Besides, distilling a generator and discriminator can enhance training efficiency of a GAN model for super-resolution [136]. To achieve hyperspectral image super-resolution with enhanced robustness to large upsampling factors and noise, LE-GAN integrates a generator incorporating a short-term spectral–spatial relationship window and a latent encoder, jointly capturing localized spectral–spatial dependencies and compact latent representations to improve reconstruction robustness and fidelity [137]. To enable deep feature extraction, SWCGAN employs a generator composed of interleaved convolutional blocks and Swin Transformer layers to synthesize high-resolution images, and a discriminator built exclusively from Swin Transformer blocks to drive adversarial training [119]. NeXtSRGAN employs a relativistic average discriminator that evaluates relative authenticity by contrasting real and generated samples, enabling combined gradient signals for more realistic SR images [138]. More supervised GANs for image super-resolution are shown in Table 7.

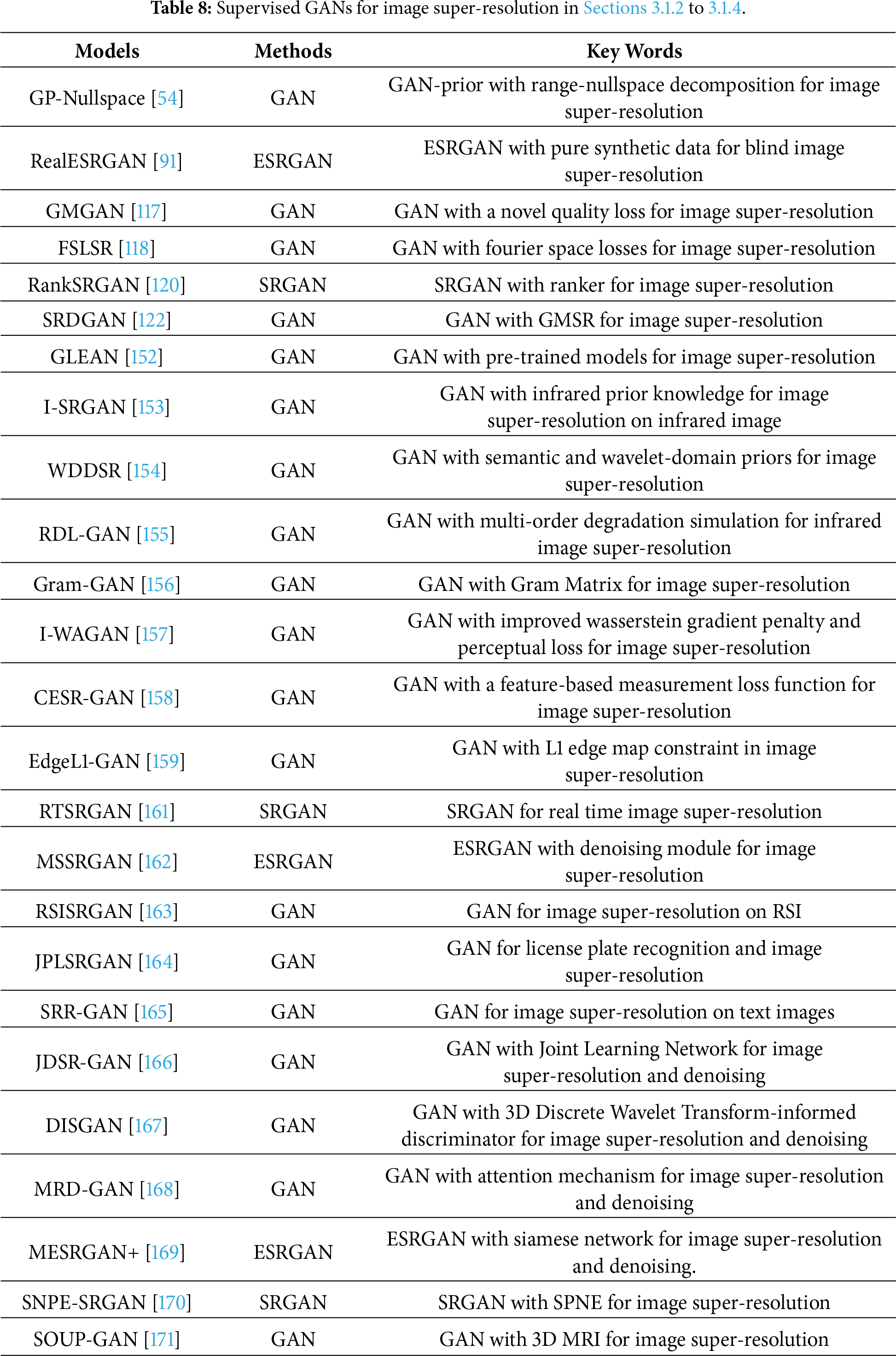

3.1.2 Supervised GANs Based Prior Knowledge for Image Super-Resolution

It is known that the combination of a discriminative method and optimization can create a tradeoff between efficiency and performance [151]. To address the blind distortions in real low-resolution images, Guan et al. utilized a high-resolution to low-resolution image network and a low-resolution to high-resolution image network using the nearest neighbor down-sampling method to learn detailed information and noise priors for image super-resolution [122]. Chan et al. used rich and diverse priors in a given pretrained model to mine latent representative information for generating realistic textures in image super-resolution [152]. Liu et al. incorporated a gradient prior into a GAN to suppress the effects of blur kernel estimation for image super-resolution [153]. Xu et al. proposed the WDDSR, which integrates semantic priors with wavelet-domain priors to enable more accurate image-quality assessment and to provide refined guidance for image generation [154]. In the GAN-prior paradigm, Wang et al. proposed a range–nullspace decomposition for SR to eliminate structural and color inconsistencies [54]. RDL-GAN employs a multi-order degradation simulation framework to model real infrared scene degradation priors, emulating atmospheric, optical, and sensor-induced degradations for enhanced generalization to authentic scenarios [155]. Gram-GAN focuses on the texture similarity of images and overcomes the limitations of spatial location information by leveraging the structural properties of natural images and constructing a Gram matrix [156].

3.1.3 Supervised GANs with Improved Loss Functions for Image Super-Resolution

Loss functions can affect the performance and efficiency of a trained SR model. Thus, we analyze the combination of GANs with various loss functions for image super-resolution [120]. Zhang et al. trained a Ranker to obtain representations of perceptual metrics and used a rank-content loss in a GAN to enhance visual quality in image super-resolution [120]. To eliminate the effects of artifacts, Zhu et al. used an image quality assessment metric to implement a novel loss function to enhance stability in image super-resolution [117]. To decrease the complexity of the GAN model in image super-resolution, Fuoli et al. used a Fourier space supervision loss to recover lost high-frequency information, improve predicted image quality, and accelerate training efficiency in SISR [118]. To enhance the stability of an SR model, using residual blocks and a self-attention layer in a GAN improves the robustness of the trained SR model. Additionally, combining an improved Wasserstein gradient penalty and perceptual loss enhances the stability of an SR model [157]. To extract accurate features, fusing a measurement loss function into a GAN can provide more detailed information for clearer images [158]. Ren et al. imposed an L1 constraint on the edge maps of generated super-resolved and ground-truth high-resolution images to more strongly regularize high-frequency details in the edge domain, thereby producing more realistic reconstructions and better preserving salient edge structures [159].

3.1.4 Supervised GANs Based Multi-Tasks for Image Super-Resolution

Improving image quality is important for high-level vision tasks, such as image recognition [160]. Besides, devices often suffer from the effects of multiple factors, such as device hardware, camera shakes, and shooting distances, which results in collected images being damaged. That may include noise and low-resolution pixels. Thus, addressing the multitasking capabilities of GANs is very necessary [161]. For instance, Adil et al. exploited SRGAN and a denoising module to obtain a clear image. They then used a network to learn unique representative information for person identification [162]. In terms of image super-resolution and object detection, Wang et al. used a multi-class cyclic super-resolution GAN to restore high-quality images and a YOLOv5 detector to complete the object detection task [163]. Zhang et al. used a fully connected network to implement a generator for obtaining high-definition plate images, and a multi-task discriminator was used to enhance super-resolution and recognition tasks [164]. The use of adversarial learning is an effective tool to simultaneously address text recognition and super-resolution [165]. The JDSR-GAN achieves complementarity between the two tasks through joint learning of denoising and super-resolution, thereby improving the overall restoration quality of low-quality faces occluded by masks [166]. DISGAN introduces a 3D Discrete Wavelet Transform-informed discriminator to guide the generator towards minimal noise generation. The model possesses inherent denoising capability while performing super-resolution, enhancing its practicality and generalization [167].

In terms of complex damaged image restoration, GANs are excellent choices [168]. For instance, Li et al. used a multi-scale residual block and an attention mechanism in a GAN to remove noise and restore detailed information in CTA image super-resolution [168]. Nneji et al. improved the VGG19 model to fine-tune two sub-networks with a wavelet technique to simultaneously address COVID-19 image denoising and super-resolution problems [169]. More information can be found in Table 8.

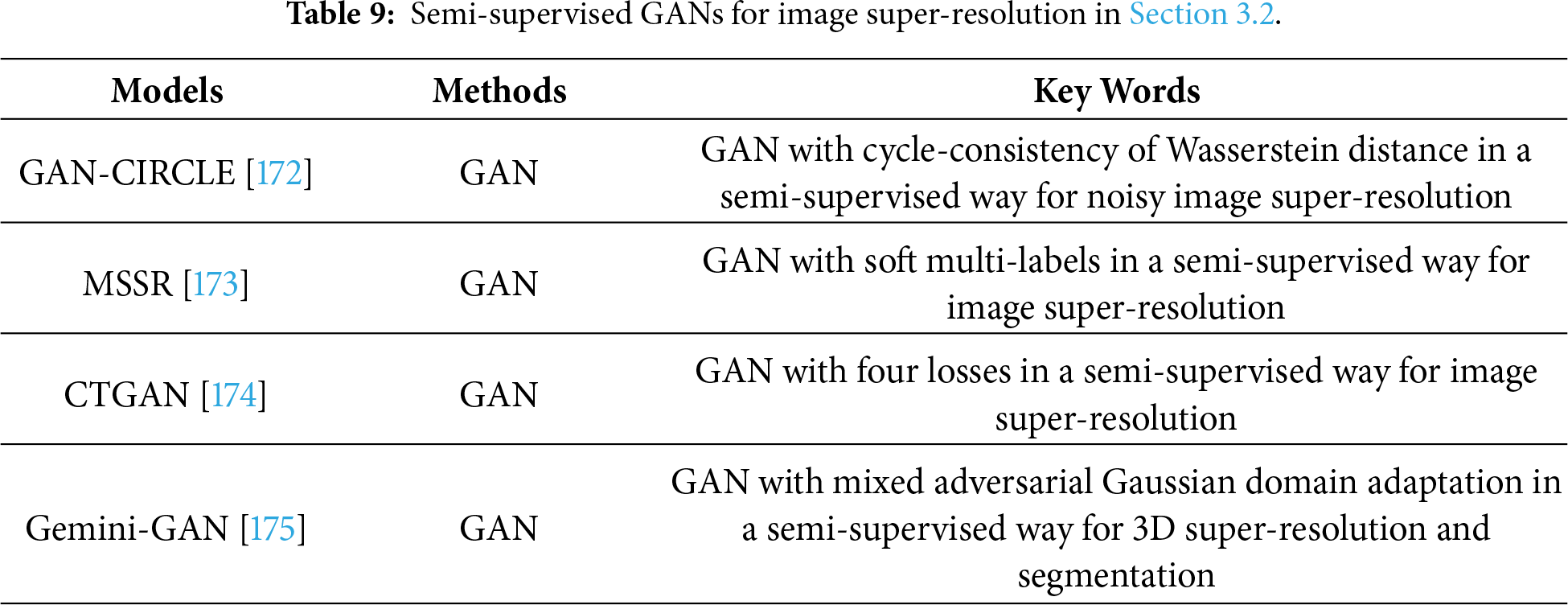

3.2 Semi-Supervised GANs for Image Super-Resolution

3.2.1 Semi-Supervised GANs Based Designed Network Architectures for Image Super-Resolution

For real-world problems with limited data, semi-supervised techniques have been developed. For instance, asking patients to undergo multiple CT scans with additional radiation doses to generate paired CT images for training SR models in clinical practice is unrealistic. Motivated by this, GANs are used in a semi-supervised manner for image super-resolution [172]. For example, You et al. built a mapping from noisy low-resolution images to high-resolution images [172]. Besides, combining a convolutional neural network and residual learning operations in a GAN can facilitate the extraction of more detailed information for image super-resolution [172]. To address super-resolution with limited labeled samples, Xia et al. used soft multi-labels to implement a semi-supervised super-resolution method for person re-identification [173]. That is, first, a GAN is used to conduct an SR model. Second, a graph convolutional network is exploited to construct relationships among the local features of a person. Third, labeled samples are used to train the unlabeled samples via a graph convolutional network.

3.2.2 Semi-Supervised GANs with Improved Loss Functions and Semi-Supervised GANs Based Multi-Tasks for Image Super-Resolution

The combinations of semi-supervised GANs and loss functions are also effective in image super-resolution [174]. For example, Jiang et al. combined an adversarial loss, a cycle-consistency loss, an identity loss, and a joint sparsifying transform loss into a GAN in a semi-supervised way to train a CT image super-resolution model [174]. Although this model made significant progress on some evaluation criteria, it was still disturbed by artifacts and noise.

In terms of multi-tasking, Savioli et al. proposed to use a mixed adversarial Gaussian domain adaptation in a GAN in a semi-supervised way to obtain more useful information for implementing a 3D super-resolution and segmentation [175]. This method achieved better performance on many metrics. More information on semi-supervised GANs in image super-resolution can be illustrated in Table 9.

3.3 Unsupervised GANs for Image Super-Resolution

Collected images in the real world have fewer pairs, which poses a challenge for supervised GANs in SISR. To address this phenomenon, unsupervised GANs have been presented [121]. Unsupervised GANs for image super-resolution can be divided into six types: improved architectures, prior knowledge, loss functions, multi-task learning, real image super-resolution, and real-world LR image synthesis in GAN-based unsupervised super-resolution, as follows.

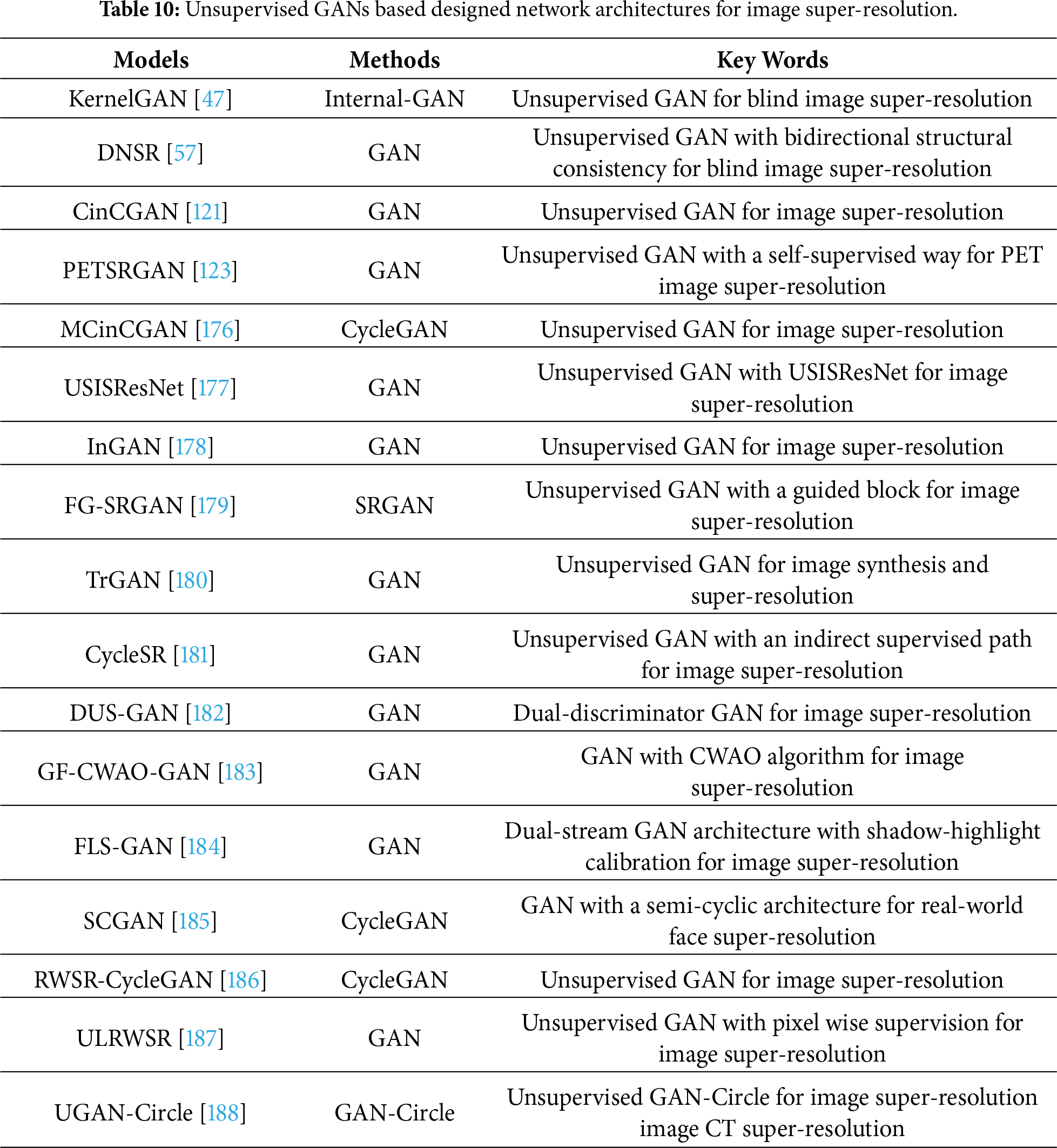

3.3.1 Unsupervised GANs Based Designed Network Architectures for Image Super-Resolution

CycleGANs have obtained success in unsupervised ways in image-to-image translation applications [73]. Accordingly, CycleGANs have been extended into SISR to address unpaired images (i.e., low-resolution and high-resolution) in real-world datasets [121]. Yuan et al. used a CycleGAN for blind super-resolution over the following phases [121]. The first phase removed noise from noisy and low-resolution images. The second phase resorted to an up-sampled operation in a pre-trained deep network to enhance the obtained low-resolution images. The third phase used a fine-tuning mechanism for a GAN to obtain high-resolution images. To address blind super-resolution, bidirectional structural consistency was used in a GAN in an unsupervised way to train a blind SR model and construct high-quality images [57]. Alternatively, Zhang et al. exploited multiple GANs as basis components to implement an improved CycleGAN to train an unsupervised SR model [176]. This method achieves comparable performance with many state-of-the-art supervised models.

There are also other popular methods that use GANs in unsupervised ways for image super-resolution [177]. To improve the learning ability of a SR model in the real world, it combines unsupervised learning and a mean opinion score in a GAN to improve perceptual quality in real-world image super-resolution [177]. To break the fixed downscaling kernel, KernelGAN [47], TVG-KernelGAN [48], and Internal-GAN [178] are used to obtain an internal distribution of patches in the blind image super-resolution. To accelerate the training speed, a guidance module was used in a GAN to quickly seek a correct mapping from a low-resolution domain to a high-resolution domain in unpaired image super-resolution [179]. To improve the accuracy of medical diagnosis, Song et al. used dual GANs in a self-supervised way to mine high-dimensional information for PET image super-resolution [123]. Besides, other SR methods can have an important reference value for unsupervised GANs in terms of image super-resolution. For example, to improve both image synthesis quality and representation learning performance under the unsupervised setting, Wang et al. used an unsupervised method to translate real low-resolution images to real high-resolution images [180]. To achieve better degradation learning and super-resolution performance, Chen et al. resorted to a supervised super-resolution method to convert obtained real low-resolution images into real high-resolution images [181]. In the unsupervised domain, DUS-GAN adopts a dual-discriminator architecture to jointly supervise global content and high-frequency detail generation, thereby improving the fidelity of synthesized images [182]. To promote more stable and reliable training convergence, GF-CWAO-GAN employs the CWAO algorithm to accelerate weight optimization [183]. The FLS-GAN model employs a dual-stream architecture, consisting of an Adaptive Shadow–Highlight Calibration generator and a Near–Far Field Fusion Discriminator, to enable high-fidelity super-resolution reconstruction of deep-sea sonar imagery [184]. The SCGAN model achieves more accurate and robust real-world face super-resolution by establishing a semi-cyclic architecture with two independent degradation branches and a shared restoration branch [185]. More information of mentioned unsupervised GANs for image super-resolution can be shown in Table 10 as follows.

3.3.2 Unsupervised GANs Based Prior Knowledge for Image Super-Resolution

Combining unsupervised GANs and prior knowledge in unsupervised GANs can better address unpaired image super-resolution [189]. Lin et al. combined data error, a regular term, and an adversarial loss to guarantee the consistency of local-global content and pixel faithfulness in a GAN in an unsupervised way to train an image super-resolution model [189]. To better support medical diagnosis, Das et al. combined adversarial learning in a GAN, cycle consistency, and prior knowledge, i.e., identity mapping prior to facilitate more useful information, i.e., spatial correlation, color, and texture information for obtaining cleaner high-quality images [190]. In terms of remote sensing super-resolution, random noise is used in a GAN to reconstruct satellite images [191]. Then, the authors conducted image prior by transforming the reference image into a latent space [191]. Finally, they updated the noise and latent space to transfer the obtained structural information and texture information to improve the resolution of remote sensing images [191].

3.3.3 Unsupervised GANs with Improved Loss Functions for Image Super-Resolution

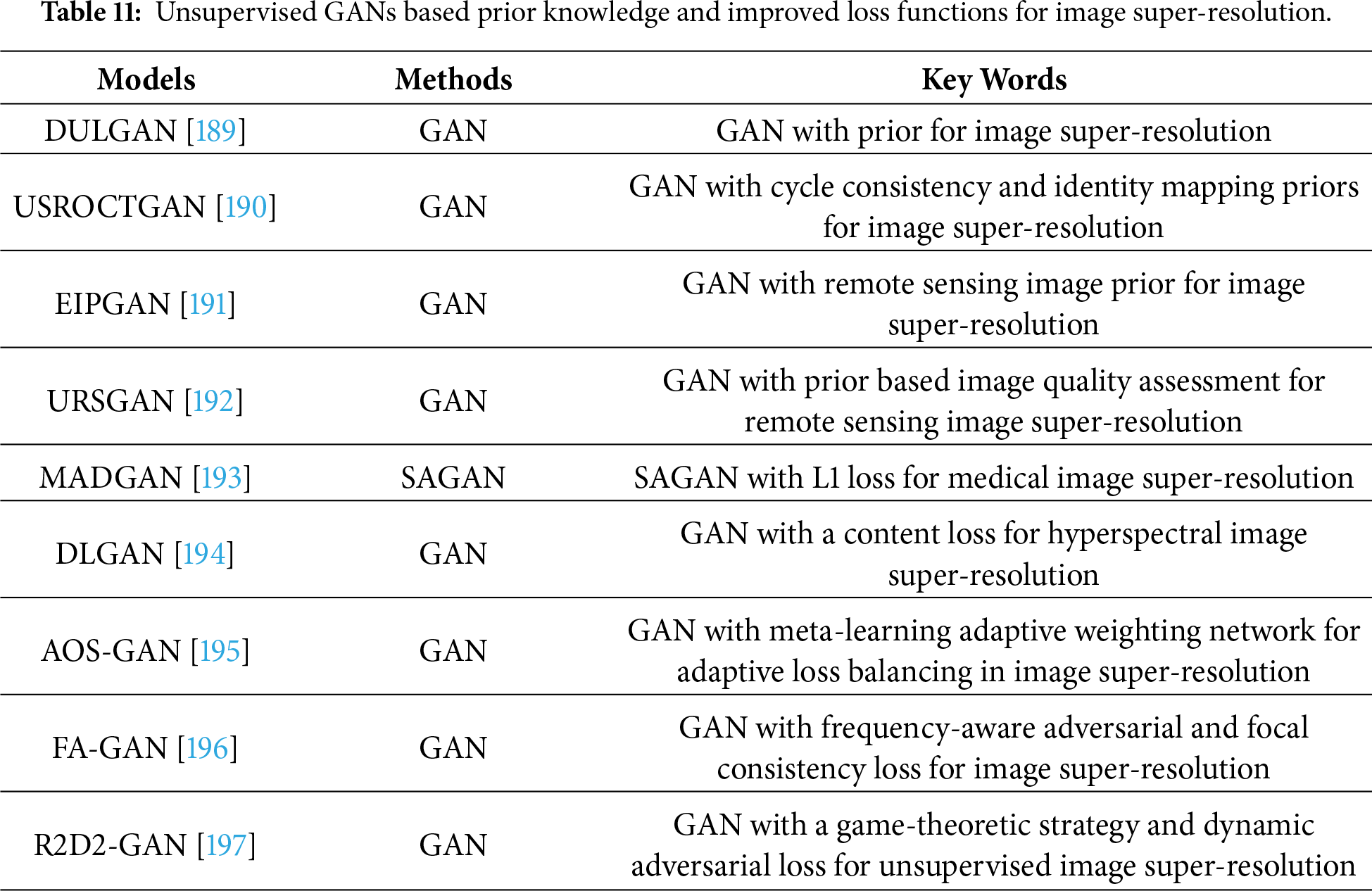

Combining loss functions and GANs in an unsupervised way is useful for training image super-resolution models in the real world [192]. For instance, Zhang et al. used a novel loss function based on image quality assessment in a GAN to obtain accurate texture information and more visual effects [192]. Besides, an encoder-decoder architecture is embedded in this GAN to mine more structural information for pursuing high-quality images of a generator from this GAN [192]. Han et al. depended on SAGAN and L1 loss in a GAN in an unsupervised manner to analyze multi-sequence structural MRI for detecting brain anomalies [193]. Also, Zhang et al. fused a content loss into a GAN in an unsupervised manner to improve the SR results of hyperspectral images [194]. AOS-GAN incorporates an adaptive weighting network that employs meta-learning to automatically learn dynamic weights for individual loss components, thereby enabling adaptive balancing of objectives and more efficient model optimization [195]. Zhang et al. implemented a frequency-aware adversarial loss and a frequency-aware focal consistency loss to dynamically penalize spectral errors and to place additional emphasis on high-frequency components, thereby enhancing the fidelity of super-resolved images [196]. R2D2-GAN better leverages the information consistency between the two modalities during unsupervised training by adopting a game-theoretic strategy and dynamic adversarial loss [197]. Unsupervised GANs based on prior knowledge and improved loss functions for image super-resolution can be summarized in Table 11.

3.3.4 Unsupervised GANs Based Multi-Tasks for Image Super-Resolution

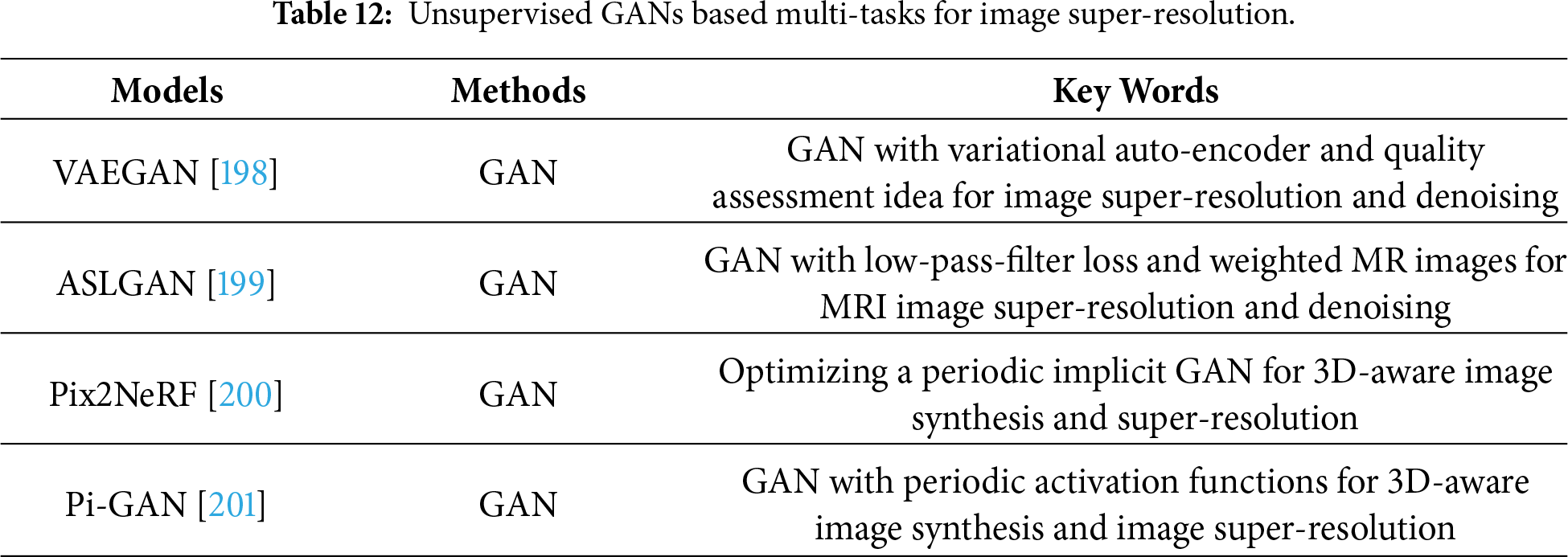

Unsupervised GANs are good tools to address multi-tasking, i.e., noisy low-resolution image super-resolution. For instance, Prajapati et al. transferred a variational auto-encoder and the idea of quality assessment in a GAN to address image denoising and SR tasks [198]. Cui et al. relied on low-pass-filter loss and weighted MR images in an unsupervised GAN to mine texture information for removing noise and recovering the resolution of MRI images [199]. Cai et al. presented a pipeline that optimizes a periodic implicit GAN to obtain neural radiance fields for image synthesis and image super-resolution based on 3D [200]. More unsupervised GANs-based multi-tasking for image super-resolution can be presented in Table 12.

3.3.5 Unsupervised GANs for Real Image Super-Resolution

An important application of GAN-based SR models is dealing with real-world LR images. To eliminate checkerboard artifacts, an upsampling module containing a bilinear interpolation and a transposed convolution was used in an unsupervised CycleGAN to improve the visual effects of restored images in the real world [186]. To recover more natural image characteristics, Lugmayr et al. combined unsupervised and supervised ways for blind image super-resolution [187]. The first step learned to invert the effects of the bicubic downsampling operation in a GAN in an unsupervised way to extract useful information from natural images [187]. To generate image pairs in the real world, the second step used a pixel-wise network in a supervised way to obtain high-resolution images [187]. To remove unpleasing noise and artifacts, Park et al. [202] divided the large and complicated distribution of real-world images into smaller subsets based on similar content. Then, they learned various contents and separable features via multiple discriminators [202]. In terms of computed tomography (CT) images, Li et al. [203] utilized noise injection and a GAN to generate realistic low-resolution images to build training image pairs. This data preprocessing operation enables the proposed KerGAN to generate more precise details and better perceptual quality in medical images [203].

3.3.6 Synthesizing Real World LR Images

An important application of GAN-based SR models is dealing with real-world LR images. So, how to obtain real image pairs with different scale factors to use as training data becomes a problem that we must solve. Starting from SRCNN [23], there are three main ways to prepare super-resolution datasets: synthetic data super-resolution, blind super-resolution, and real image super-resolution. Among them, synthetic data super-resolution is the most common method for data preparation; however, it often exhibits poor generalization due to its inability to account for the complex degradation factors present in real-world scenarios. Therefore, on the basis of the interpolation method, blind super-resolution models add more real-world degradation factors, such as noise, blur, and compression, to the degradation kernel to improve the performance of real-world applications [91,204]. Finally, the best theoretical performance is achieved by directly using a camera to capture real-world images of the same scene at different magnifications. However, in this process, the problem of lens distortion is encountered, which makes image acquisition difficult. Currently, the publicly available real image datasets include RealSR [205] and DRealSR [206].

4 Comparing Performance of GANs for Image Super-Resolution

To make readers understand GANs in image super-resolution more conveniently, we compare the super-resolution performance of these GANs based on datasets and experimental settings, as well as quantitative and qualitative analysis in this section. More information is provided as follows.

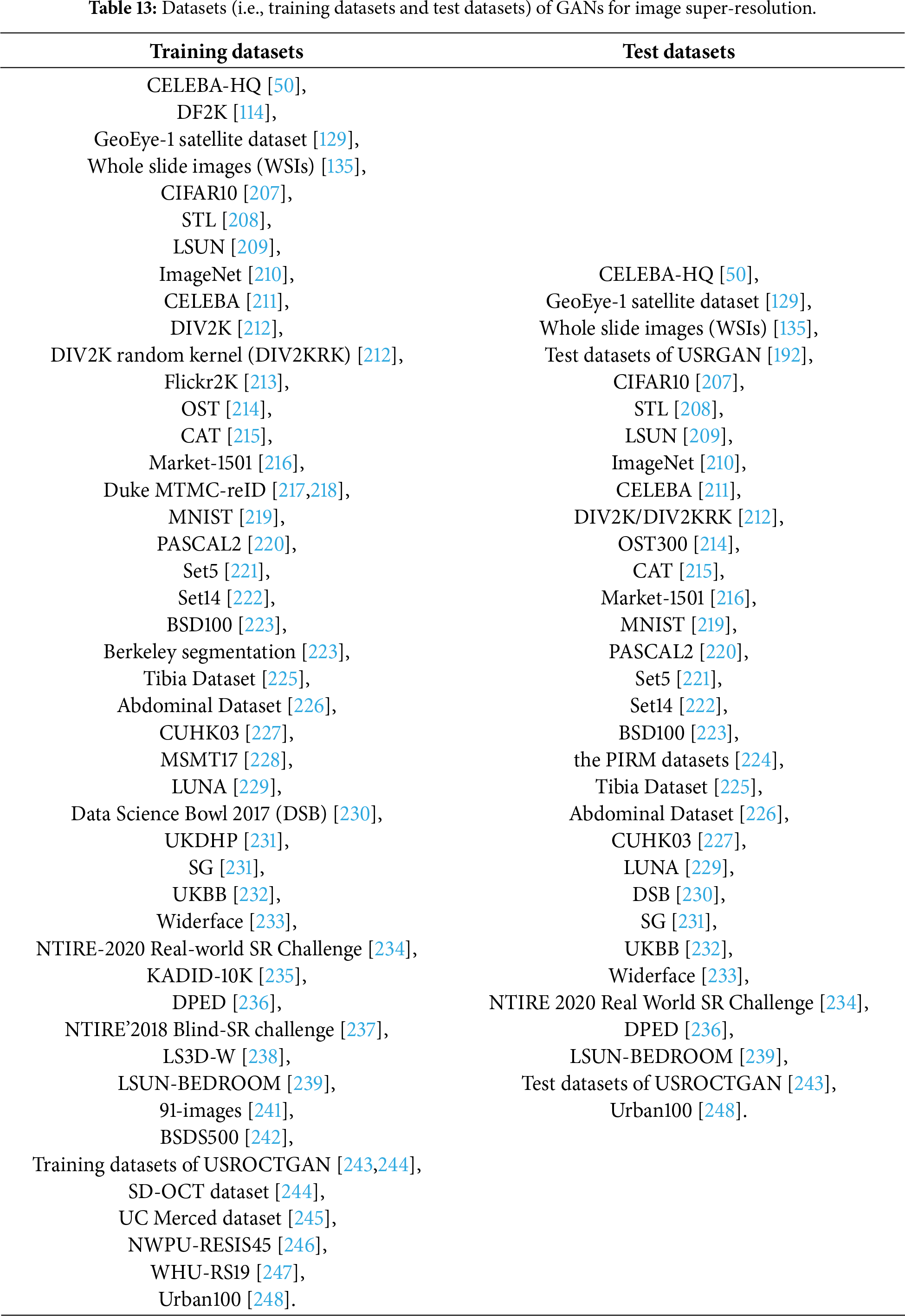

The GANs can be divided into three kinds mentioned above for image super-resolution, with datasets correspondingly categorized into three types for both training and testing. These datasets can be summarized as follows.

(1) Supervised GANs for image-resolution

Training datasets: CIFAR10 [207], STL [208], LSUN [209], ImageNet [210], CELEBA [211], DIV2K [212], Flickr2K [213], OST [214], CAT [215] Market-1501 [216], Duke MTMC-reID [217,218], GeoEye-1 satellite dataset [129], Whole slide images (WSIs) [135], MNIST [219] and PASCAL2 [220].

Test datasets: CIFAR10 [207], STL [208], LSUN [209], Set5 [221], Set14 [222], BSD100 [223], CELEBA [211], OST300 [214], CAT [215], PIRM datasets [224], Market-1501 [216], GeoEye-1 satellite dataset [129], WSIs [135] MNIST [219] and PASCAL2 [220].

(2) Semi-supervised GANs for image-resolution

Training datasets: Market-1501 [216], Tibia Dataset [225], Abdominal Dataset [226], CUHK03 [227], MSMT17 [228], LUNA [229], Data Science Bowl 2017 (DSB) [230], UKDHP [231], SG [231] and UKBB [232].

Test datasets: Tibia Dataset [225], Abdominal Dataset [226], CUHK03 [227], Widerface [233], LUNA [229], DSB [230], SG [231] and UKBB [232].

(3) Unsupervised GANs for image-resolution

Training datasets: CIFAR10 [207], ImageNet [210], DIV2K [212], DIV2K random kernel (DIV2KRK) [212], Flickr2K [213], Widerface [233], NTIRE 2020 Real World SR challenge [234], KADID-10K [235], DPED [236], DF2K [114], NTIRE’ 2018 Blind-SR challenge [237], LS3D-W [238], CELEBA-HQ [50], LSUN-BEDROOM [239], ILSVRC2012 [210,240], NTIRE 2020 [234], 91-images [241], Berkeley segmentation [223], BSDS500 [242], Training datasets of USROCTGAN [243,244], SD-OCT dataset [244], UC Merced dataset [245], NWPU-RESIS45 [246] and WHU-RS19 [247].

Test datasets: CIFAR10 [207], ImageNet [210], Set5 [221], Set14 [222], BSD100 [223], DIV2K [212], Urban100 [248], DIV2KRK [212], Widerface [233], NTIRE 2020 [234], NTIRE 2020 Real-world SR Challenge [234], NTIRE 2020 Real World SR challenge [234], DPED [236], CELEBA-HQ [50], LSUN-BEDROOM [239], Test datasets of USROCTGAN [243,244], and Test datasets of USRGAN [192].

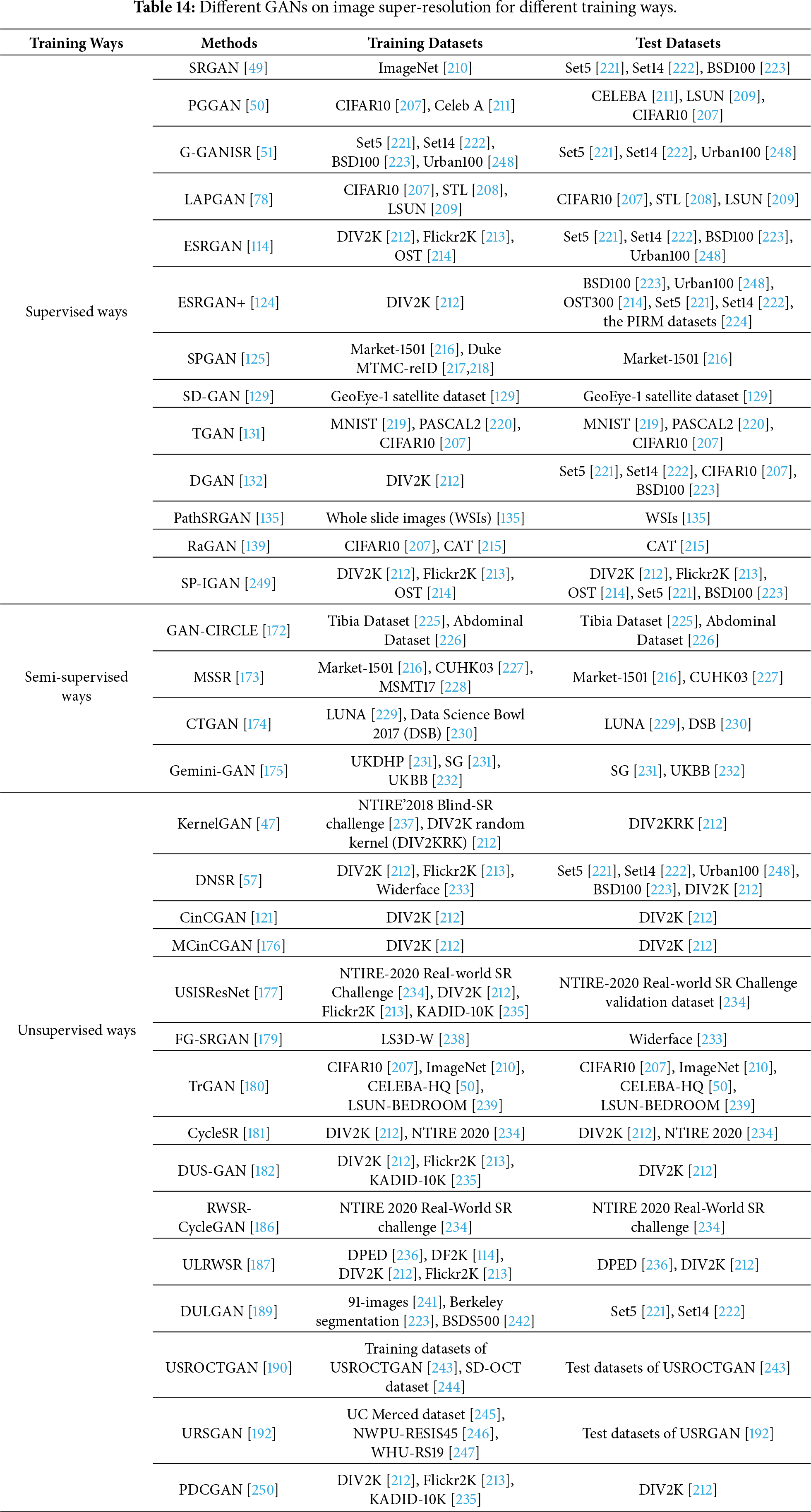

The datasets mentioned regarding GANs for image super-resolution are presented in Table 13. To make readers more easily understand datasets of different methods using various GANs for different training approaches in image super-resolution, we present Table 14 to provide their detailed information.

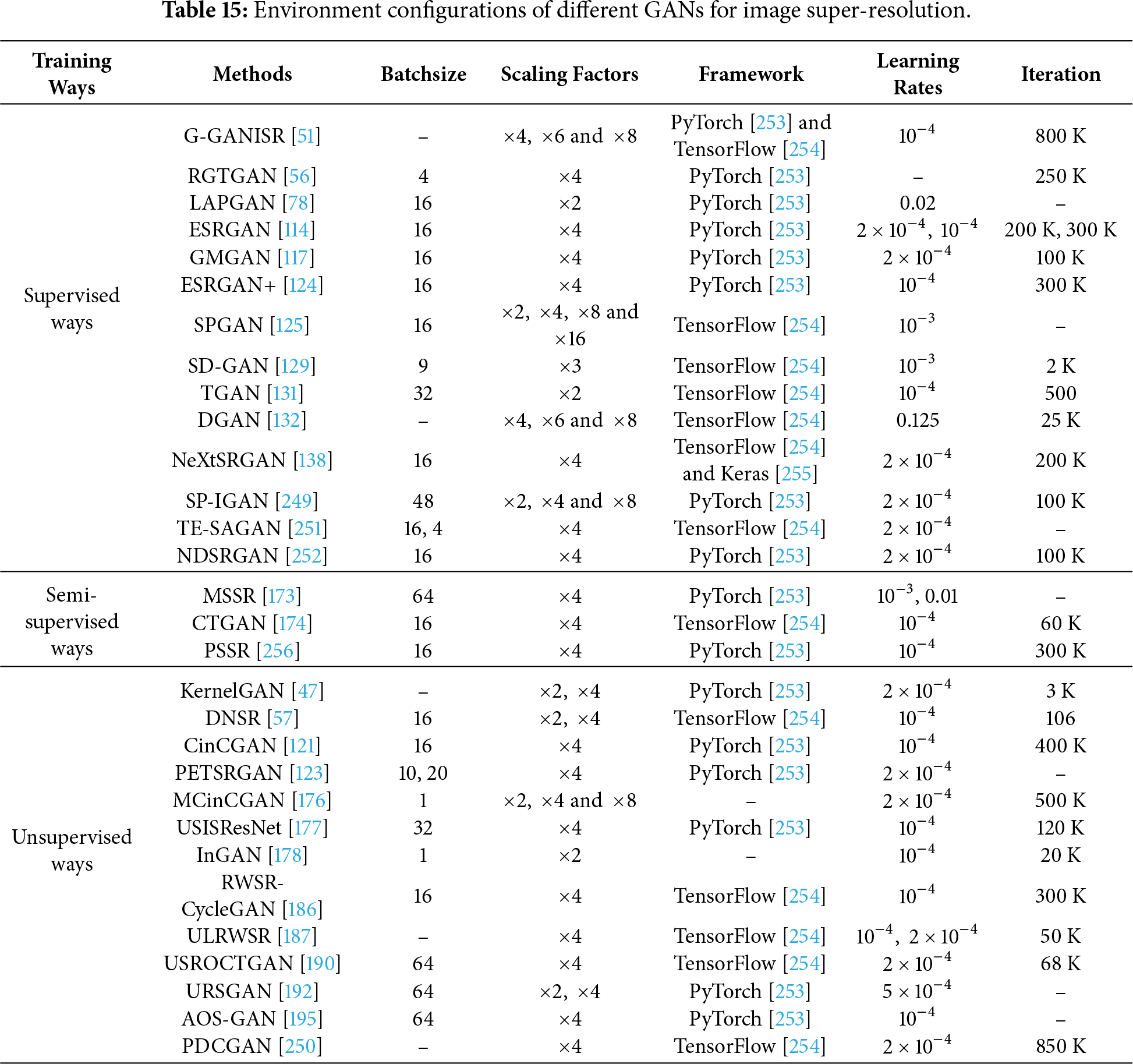

4.2 Environment Configurations

In this section, we compare the differences in environment configurations among different GANs using various training methods (i.e., supervised, semi-supervised, and unsupervised) for image super-resolution, which include batch size, scaling factors, deep learning framework, learning rate, and iterations. This can help readers more easily conduct experiments with GANs for image super-resolution. The information can be summarized as shown in Table 15.

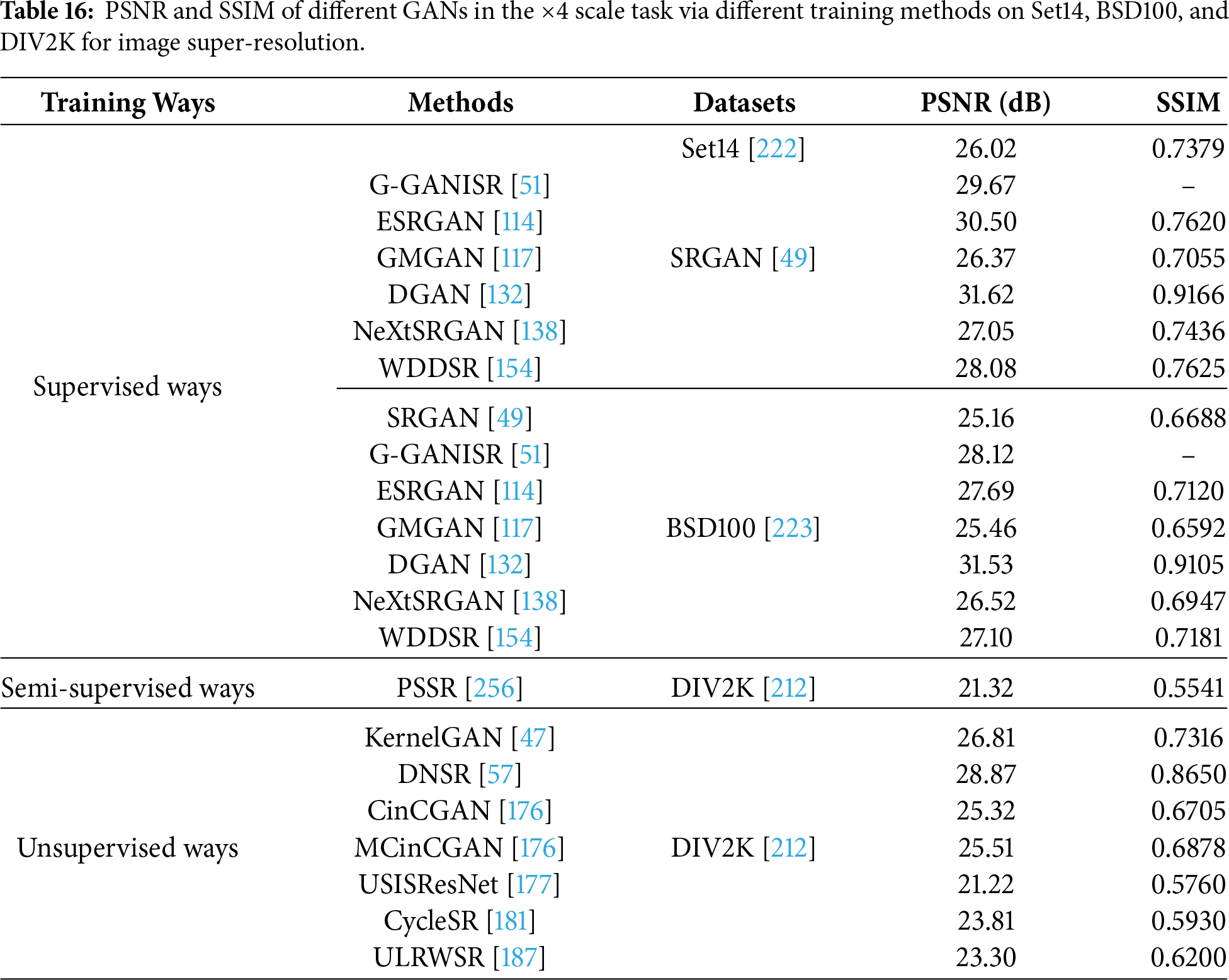

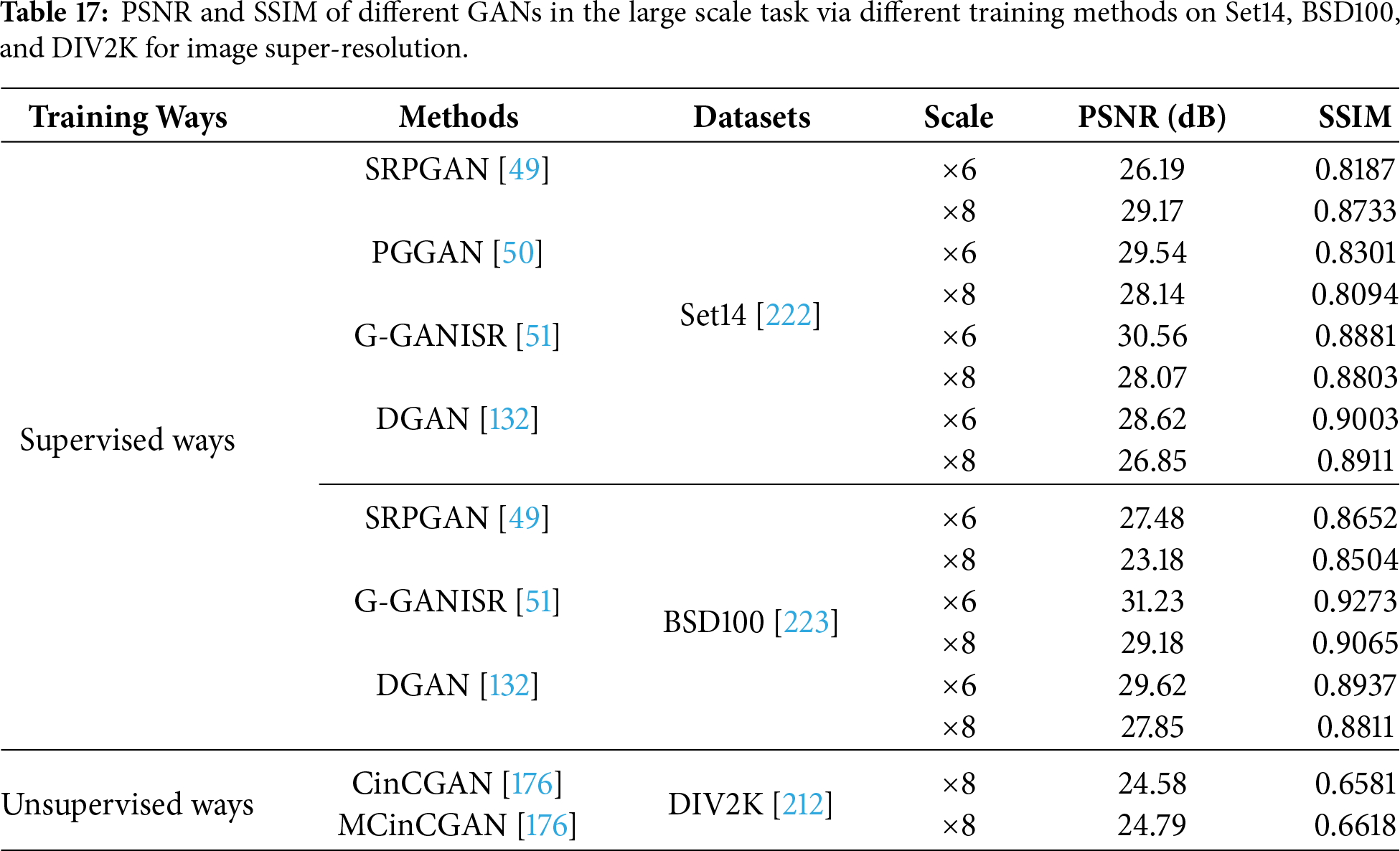

To make readers understand the performance of various GANs in image super-resolution, we use quantitative analysis and qualitative analysis to evaluate the super-resolution effects of these GANs. Quantitative analysis includes PSNR and SSIM of various methods using three training approaches on different datasets for image super-resolution, as well as the running time and complexities of different GANs. Qualitative analysis is used to evaluate the quality of the recovered images.

4.3.1 Quantitative Analysis of Different GANs for Image Super-Resolution

We use SRGAN [49], PGGAN [50], ESRGAN [114], ESRGAN+ [124], DGAN [132], G-GANISR [51], GMGAN [117], SRPGAN [49], DNSR [57], DULGAN [189], CinCGAN [121],MCinCGAN [176], USISResNet [177], ULRWSR [187], KernelGAN [47] and CycleSR [181] in one training way on a public dataset from Set14 [222], BSD100 [223] and DIV2K [212] to test performance for different scales in image super-resolution, as shown in Tables 16 and 17. For instance, ESRGAN [114] outperforms SRGAN [49] in terms of PSNR and SSIM in a supervised manner on Set14 for

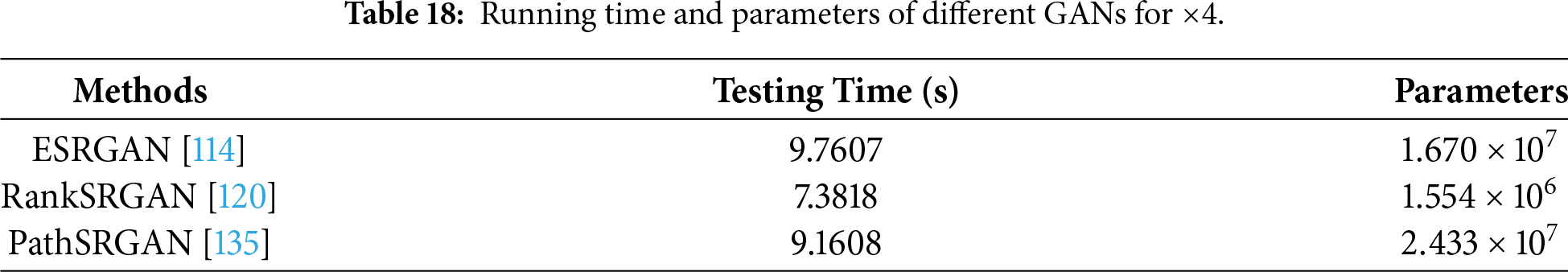

Running time and complexity are important indices to evaluate the performance of image super-resolution techniques on real devices [257]. According to that, we conduct experiments with four GANs (i.e., ESRGAN [114], PathSRGAN [135], RankSRGAN [120], and KernelGAN [47]) on two low-resolution images with sizes and for

4.3.2 Qualitative Analysis of Different GANs for Image Super-Resolution

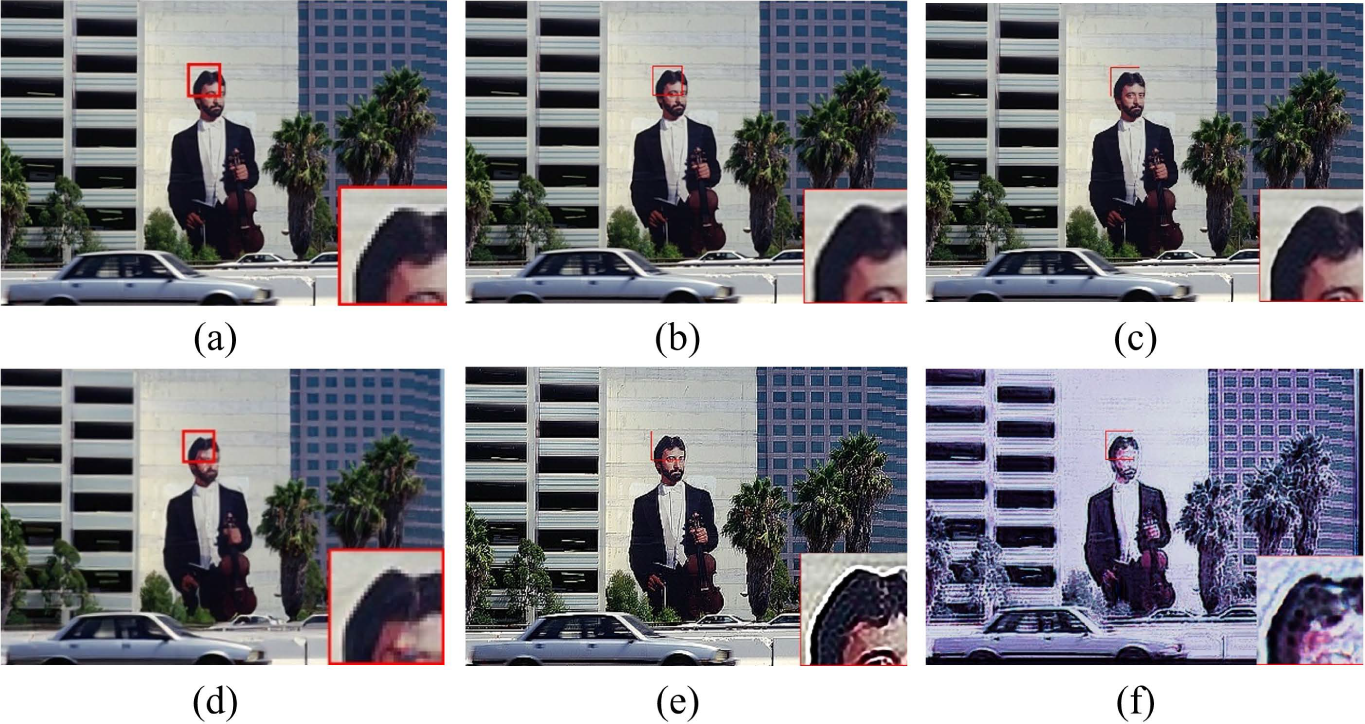

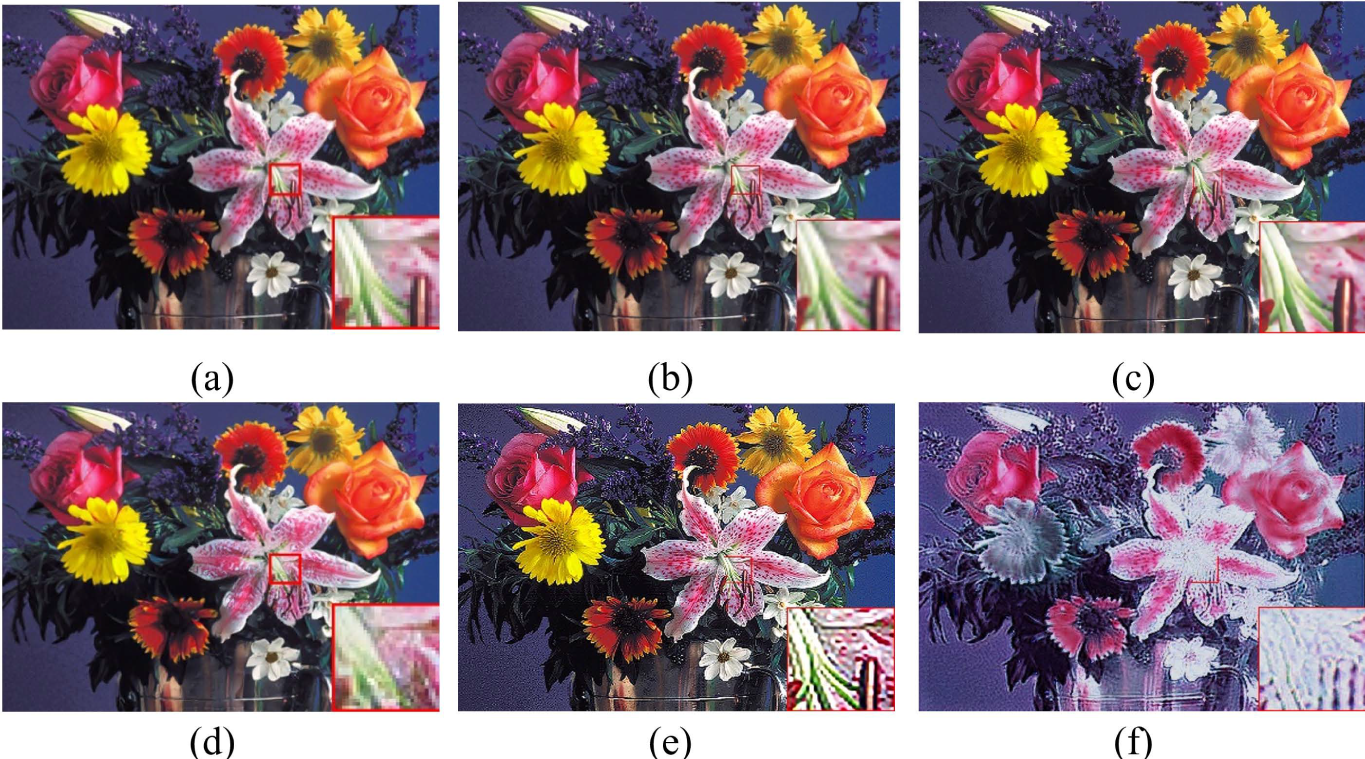

To test the visual effects of different GANs for image super-resolution, we choose Bicubic, ESRGAN [114], RankSRGAN [120], KernelGAN [47], and PathSRGAN [135] to conduct experiments to obtain high-quality images for

Figure 9: Visual images of different GANs on an image of BSD100 for

Figure 10: Visual images of different GANs on an image of Set14 for

5 Challenges and Directions of GANs for Image Super-Resolution

Variations of GANs have achieved excellent performance in image super-resolution. Accordingly, we provide an overview of GANs for image super-resolution to serve as a guide for readers to better understand these methods. In this section, we analyze the challenges faced by current GANs for image super-resolution and provide corresponding solutions to facilitate the development of GANs in image super-resolution.

Although GANs perform well in image super-resolution, they encounter the following challenges:

1) Unstable training. Due to the confrontation between generator and discriminator, GANs are unstable in the training process.

2) Large computational resources and high memory consumption. A GAN is composed of a generator and discriminator, which may increase computational costs and memory consumption. This may lead to a higher demand on digital devices.

3) High-quality images without references. As mentioned in Section 3.3.6, the dependency on paired HR/LR data limits the application of GAN-based super-resolution in real-world scenarios.

4) Complex image super-resolution. Most GANs can deal with a single task, i.e., image super-resolution and synthetic noisy image super-resolution. However, collected images by digital cameras in the real world suffer from drawbacks, i.e., low-resolution and dark-lighting images, complex noisy and low-resolution images. Besides, digital cameras have a higher requirement for the combination of low-resolution images and image recognition. Thus, the existing GANs for image super-resolution cannot effectively repair low-resolution images under the mentioned conditions.

5) Metrics of GANs for image super-resolution. Most of the existing GANs used PSNR and SSIM to test super-resolution performance of GANs. However, PSNR and SSIM cannot fully measure the quality of restored images. Thus, finding effective metrics is very essential for GANs for image super-resolution.

6) GANs are sensitive to hyperparameters and the choice of architecture, which can affect their performance and lead to overfitting.

7) GANs can generate unrealistic artifacts or distortions in the output images, especially in regions with low texture or detail, which can affect the visual quality of the output images.

8) GAN-based image super-resolution methods may not perform well on certain types of images or degradation scenarios.

To address these problems, some potential research points about GANs for image super-resolution are stated below.

1) Enhancing a generator and discriminator extracts salient features to enhance the stability of GANs on image super-resolution. For example, using attention mechanism (i.e., Transformer [258]), residual learning operations, concatenation operations act as the generator and discriminator to extract more effective features to enhance stability, accelerating GAN models in image super-resolution.

2) Designing lightweight GANs for image super-resolution. Reducing convolutional kernels, group convolutions, and combining prior and shallow network architectures can decrease the complexity of GANs for image super-resolution.

3) Using self-supervised methods can obtain high-quality reference images.

4) Combining the attributes of different low-level tasks, along with decomposing complex low-level tasks into a single low-level task via different stages in different GANs, can repair complex low-resolution images, which can help with high-level vision tasks.

5) Using image quality assessment techniques as metrics to evaluate the quality of predicted images from different GANs.

In this paper, we analyze and summarize GANs for image super-resolution. First, we review the development of GANs and popular GANs for image applications; then we give differences of GANs based optimization methods and discriminative learning for image super-resolution in terms of supervised, semi-supervised and unsupervised manners. Next, we compare the performance of these popular GANs on public datasets via quantitative and qualitative analysis in SISR. Then, we highlight challenges of GANs and potential research points on SISR. Finally, we summarize the whole paper.

Acknowledgement: This work was supported in part by the National Natural Science Foundation of China, in part by CAAI-CANN Open Fund developed on OpenI Community, and in part by the Natural Science Foundation of Heilongjiang Province.

Funding Statement: This work was supported in part by the National Natural Science Foundation of China under Grants 62576123, in part by CAAI-CANN Open Fund developed on OpenI Community, and in part by the Natural Science Foundation of Heilongjiang Province under Grant YQ2025F003.

Author Contributions: The authors confirm contribution to the paper as follows: Conceptualization, Chunwei Tian; methodology, Ziang Wu and Chunwei Tian; writing—original draft preparation, Ziang Wu, Xuanyu Zhang and Yinbo Yu; writing—review and editing, Qi Zhu, Jerry Chun-Wei Lin and Chunwei Tian. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: All reviewed materials are publicly available and cited, primarily sourced from major academic repositories and publishers including IEEE Xplore, ACM Digital Library, ScienceDirect (Elsevier), SpringerLink, AAAI, Nature, and the arXiv repository.

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest.

References

1. Wang Z, Chen J, Hoi SC. Deep learning for image super-resolution: a survey. IEEE Trans Pattern Anal Mach Intell. 2020;43(10):3365–87. doi:10.1109/tpami.2020.2982166. [Google Scholar] [PubMed] [CrossRef]

2. Yang W, Zhang X, Tian Y, Wang W, Xue JH, Liao Q. Deep learning for single image super-resolution: a brief review. IEEE Trans Multim. 2019;21(12):3106–21. doi:10.1109/tmm.2019.2919431. [Google Scholar] [CrossRef]

3. Isaac JS, Kulkarni R. Super resolution techniques for medical image processing. In: 2015 International Conference on Technologies for Sustainable Development (ICTSD). Piscataway, NJ, USA: IEEE; 2015. p. 1–6. [Google Scholar]

4. Zhang L, Zhang H, Shen H, Li P. A super-resolution reconstruction algorithm for surveillance images. Signal Process. 2010;90(3):848–59. doi:10.1016/j.sigpro.2009.09.002. [Google Scholar] [CrossRef]

5. Zha Y, Huang Y, Sun Z, Wang Y, Yang J. Bayesian deconvolution for angular super-resolution in forward-looking scanning radar. Sensors. 2015;15(3):6924–46. doi:10.3390/s150306924. [Google Scholar] [PubMed] [CrossRef]

6. Huang Y, Shao L, Frangi AF. Simultaneous super-resolution and cross-modality synthesis of 3D medical images using weakly-supervised joint convolutional sparse coding. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ, USA: IEEE; 2017. p. 6070–9. [Google Scholar]

7. Cheng T, Bi T, Ji W, Tian C. Graph convolutional network for image restoration: a survey. Mathematics. 2024;12(13):2020. doi:10.3390/math12132020. [Google Scholar] [CrossRef]

8. Tian C, Cheng T, Peng Z, Zuo W, Tian Y, Zhang Q, et al. A survey on deep learning fundamentals. Artif Intell Rev. 2025;58(12):381. doi:10.1007/s10462-025-11368-7. [Google Scholar] [CrossRef]

9. Zhou L, Lu X, Yang L. A local structure adaptive super-resolution reconstruction method based on BTV regularization. Multim Tools Applicat. 2014;71(3):1879–92. doi:10.1007/s11042-012-1311-x. [Google Scholar] [CrossRef]

10. Park SC, Park MK, Kang MG. Super-resolution image reconstruction: a technical overview. IEEE Signal Process Magaz. 2003;20(3):21–36. doi:10.1109/msp.2003.1203207. [Google Scholar] [CrossRef]

11. Rukundo O, Cao H. Nearest neighbor value interpolation. arXiv:1211.1768. 2012. [Google Scholar]

12. Li X, Orchard MT. New edge-directed interpolation. IEEE Trans Image Process. 2001;10(10):1521–7. [Google Scholar] [PubMed]

13. Keys R. Cubic convolution interpolation for digital image processing. IEEE Trans Acoust Speech Signal Process. 1981;29(6):1153–60. doi:10.1109/tassp.1981.1163711. [Google Scholar] [CrossRef]

14. Hardeep P, Swadas PB, Joshi M. A survey on techniques and challenges in image super resolution reconstruction. Int J Comput Sci Mobile Comput. 2013;2(4):317–25. doi:10.32657/10356/58567. [Google Scholar] [CrossRef]

15. Stark H, Oskoui P. High-resolution image recovery from image-plane arrays, using convex projections. JOSA A. 1989;6(11):1715–26. doi:10.1364/srs.1989.wc4. [Google Scholar] [CrossRef]

16. Elad M, Feuer A. Restoration of a single superresolution image from several blurred, noisy, and undersampled measured images. IEEE Trans Image Process. 1997;6(12):1646–58. doi:10.1109/83.650118. [Google Scholar] [PubMed] [CrossRef]

17. Irani M, Peleg S. Improving resolution by image registration. CVGIP Graph Models Image Process. 1991;53(3):231–9. [Google Scholar]

18. Sun J, Xu Z, Shum HY. Image super-resolution using gradient profile prior. In: 2008 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ, USA: IEEE; 2008. p. 1–8. [Google Scholar]

19. Yan Q, Xu Y, Yang X, Nguyen TQ. Single image superresolution based on gradient profile sharpness. IEEE Trans Image Process. 2015;24(10):3187–202. doi:10.1109/tip.2015.2414877. [Google Scholar] [PubMed] [CrossRef]

20. Nasrollahi K, Moeslund TB. Super-resolution: a comprehensive survey. Mach Vis Appl. 2014;25(6):1423–68. doi:10.1007/s00138-014-0623-4. [Google Scholar] [CrossRef]

21. Guo H, Li J, Gao G, Li Z, Zeng T. PFT-SSR: parallax fusion transformer for stereo image super-resolution. In: ICASSP 2023-2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). Piscataway, NJ, USA: IEEE; 2023. p. 1–5. [Google Scholar]

22. Gao G, Xu Z, Li J, Yang J, Zeng T, Qi GJ. CTCNET: a CNN-transformer cooperation network for face image super-resolution. IEEE Trans Image Process. 2023;32:1978–91. [Google Scholar] [PubMed]

23. Dong C, Loy CC, He K, Tang X. Image super-resolution using deep convolutional networks. IEEE Trans Pattern Anal Mach Intell. 2015;38(2):295–307. doi:10.1109/tpami.2015.2439281. [Google Scholar] [PubMed] [CrossRef]

24. Kim J, Lee JK, Lee KM. Accurate image super-resolution using very deep convolutional networks. In: Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ, USA: IEEE; 2016. p. 1646–54. [Google Scholar]

25. Tai Y, Yang J, Liu X. Image super-resolution via deep recursive residual network. In: Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ, USA: IEEE; 2017. p. 3147–55. [Google Scholar]

26. Lim B, Son S, Kim H, Nah S, Mu Lee K. Enhanced deep residual networks for single image super-resolution. In: Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops. Piscataway, NJ, USA: IEEE; 2017. p. 136–44. [Google Scholar]

27. Wang Z, Liu D, Yang J, Han W, Huang T. Deep networks for image super-resolution with sparse prior. In: Proceedings of the 2015 IEEE International Conference on Computer Vision. Piscataway, NJ, USA: IEEE; 2015. p. 370–8. [Google Scholar]

28. Dong C, Loy CC, Tang X. Accelerating the super-resolution convolutional neural network. In: European Conference on Computer Vision. Cham, Switzerland: Springer; 2016. p. 391–407. [Google Scholar]

29. Tian C, Zhang Y, Zuo W, Lin CW, Zhang D, Yuan Y. A heterogeneous group CNN for image super-resolution. IEEE Trans Neural Netw Learn Syst. 2024;35(5):6507–19. doi:10.1109/tnnls.2022.3210433. [Google Scholar] [PubMed] [CrossRef]

30. Lai WS, Huang JB, Ahuja N, Yang MH. Deep laplacian pyramid networks for fast and accurate super-resolution. In: Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ, USA: IEEE; 2017. p. 624–32. [Google Scholar]

31. Tian C, Song M, Fan X, Zheng X, Zhang B, Zhang D. A tree-guided CNN for image super-resolution. IEEE Trans Consumer Electr. 2025;71(2):3631–40. doi:10.1109/tce.2025.3572732. [Google Scholar] [CrossRef]

32. Zhang Y, Li K, Li K, Wang L, Zhong B, Fu Y. Image super-resolution using very deep residual channel attention networks. In: Proceedings of the European Conference on Computer Vision (ECCV). Cham, Switzerland: Springer; 2018. p. 286–301. [Google Scholar]

33. Tian C, Zhang X, Zhang Q, Yang M, Ju Z. Image super-resolution via dynamic network. arXiv:2310.10413. 2024. [Google Scholar]

34. Tian C, Zhang C, Zhang B, Li Z, Chen CP, Zhang D. A cosine network for image super-resolution. IEEE Trans Image Proces. 2026;35:305–16. [Google Scholar] [PubMed]

35. Li N, Zhu Z, Wei S, Liu Y. EVASR: edge-based salience-aware super-resolution for enhanced video quality and power efficiency. ACM Trans Multim Comput Commun Appl. 2025;21(9):245. [Google Scholar]

36. Jo Y, Yang S, Kim SJ. Srflow-da: super-resolution using normalizing flow with deep convolutional block. In: Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway, NJ, USA: IEEE; 2021. p. 364–72. [Google Scholar]

37. Gan Y, Yang C, Ye M, Huang R, Ouyang D. Generative adversarial networks with learnable auxiliary module for image synthesis. ACM Trans Multim Comput Commun Appl. 2025;21(4):1–21. doi:10.1145/3653021. [Google Scholar] [CrossRef]

38. Chira D, Haralampiev I, Winther O, Dittadi A, Liévin V. Image super-resolution with deep variational autoencoders. In: 2022 European Conference on Computer Vision. Cham, Switzerland: Springer; 2022. p. 395–411. [Google Scholar]

39. Dhanusha PB, Muthukumar A, Lakshmi A. Deep feature blend attention: a new frontier in super resolution image generation. Neurocomputing. 2025;618:128989. doi:10.1016/j.neucom.2024.128989. [Google Scholar] [CrossRef]

40. Moser BB, Shanbhag AS, Raue F, Frolov S, Palacio S, Dengel A. Diffusion models, image super-resolution, and everything: a survey. IEEE Trans Neural Netw Learn Syst. 2025;36(7):11793–813. doi:10.1109/tnnls.2024.3476671. [Google Scholar] [PubMed] [CrossRef]

41. Li X, Ren Y, Jin X, Lan C, Wang X, Zeng W, et al. Diffusion models for image restoration and enhancement: a comprehensive survey. Int J Comput Visi. 2025;133(11):8078–108. doi:10.1007/s11263-025-02570-9. [Google Scholar] [CrossRef]

42. Kuznedelev D, Startsev V, Shlenskii D, Kastryulin S. Does diffusion beat GAN in image super resolution? arXiv:2405.17261. 2024. [Google Scholar]

43. Goodfellow I, Pouget-Abadie J, Mirza M, Xu B, Warde-Farley D, Ozair S, et al. Generative adversarial nets. In: Advances in neural information processing systems. Red Hook, NY, USA: Curran Associates, Inc.; 2014. 27 p. [Google Scholar]

44. Wang Z, She Q, Ward TE. Generative adversarial networks in computer vision: a survey and taxonomy. ACM Comput Surv (CSUR). 2021;54(2):1–38. doi:10.1145/3439723. [Google Scholar] [CrossRef]

45. Gui J, Sun Z, Wen Y, Tao D, Ye J. A review on generative adversarial networks: algorithms, theory, and applications. IEEE Trans Knowl Data Eng. 2023;35(4):3313–32. doi:10.1109/tkde.2021.3130191. [Google Scholar] [CrossRef]

46. Nayak AA, Venugopala P, Ashwini B. A systematic review on generative adversarial network (GANchallenges and future directions. Arch Computat Meth Eng. 2024;31(8):4739–72. doi:10.1007/s11831-024-10119-1. [Google Scholar] [CrossRef]

47. Bell-Kligler S, Shocher A, Irani M. Blind super-resolution kernel estimation using an internal-GAN. In: Advances in neural information processing systems. Red Hook, NY, USA: Curran Associates, Inc.; 2019. [Google Scholar]

48. Park J, Kim H, Kang MG. Kernel estimation using total variation guided GAN for image super-resolution. Sensors. 2023;23(7):3734. doi:10.3390/s23073734. [Google Scholar] [PubMed] [CrossRef]

49. Ledig C, Theis L, Huszár F, Caballero J, Cunningham A, Acosta A, et al. Photo-realistic single image super-resolution using a generative adversarial network. In: Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ, USA: IEEE; 2017. p. 4681–90. [Google Scholar]

50. Karras T, Aila T, Laine S, Lehtinen J. Progressive growing of GANs for improved quality, stability, and variation. arXiv:1710.10196. 2017. [Google Scholar]

51. Shamsolmoali P, Zareapoor M, Wang R, Jain DK, Yang J. G-GANISR: gradual generative adversarial network for image super resolution. Neurocomputing. 2019;366(4):140–53. doi:10.1016/j.neucom.2019.07.094. [Google Scholar] [CrossRef]

52. Wei Z, Huang Y, Chen Y, Zheng C, Gao J. A-ESRGAN: training real-world blind super-resolution with attention U-Net Discriminators. In: PRICAI 2023: trends in artificial intelligence (PRICAI 2023). Singapore: Springer; 2023. p. 16–27. doi:10.1007/978-981-99-7025-4_2. [Google Scholar] [CrossRef]

53. Lee OY, Shin YH, Kim JO. Multi-perspective discriminators-based generative adversarial network for image super resolution. IEEE Access. 2019;7:136496–510. doi:10.1109/access.2019.2942779. [Google Scholar] [CrossRef]

54. Wang Y, Hu Y, Yu J, Zhang J. GAN prior based null-space learning for consistent super-resolution. arXiv:2211.13524. 2022. [Google Scholar]

55. Zhao J, Ma Y, Chen F, Shang E, Yao W, Zhang S, et al. SA-GAN: a second order attention generator adversarial network with region aware strategy for real satellite images super resolution reconstruction. Remote Sens. 2023;15(5):1391. doi:10.3390/rs15051391. [Google Scholar] [CrossRef]

56. Tu Z, Yang X, He X, Yan J, Xu T. RGTGAN: reference-based gradient-assisted texture-enhancement GAN for remote sensing super-resolution. IEEE Trans Geosci Remote Sens. 2024;62:1–21. [Google Scholar]

57. Zhao T, Ren W, Zhang C, Ren D, Hu Q. Unsupervised degradation learning for single image super-resolution. arXiv:1812.04240. 2018. [Google Scholar]

58. Jabbar A, Li X, Omar B. A survey on generative adversarial networks: variants, applications, and training. ACM Comput Surv. 2022;54(8):1–49. doi:10.1145/3463475. [Google Scholar] [CrossRef]

59. Creswell A, White T, Dumoulin V, Arulkumaran K, Sengupta B, Bharath AA. Generative adversarial networks: an overview. IEEE Signal Proces Magaz. 2018;35(1):53–65. doi:10.1109/msp.2017.2765202. [Google Scholar] [CrossRef]

60. Xue X, Zhang X, Li H, Wang W. Research on GAN-based image super-resolution method. In: 2020 IEEE International Conference on Artificial Intelligence and Computer Applications (ICAICA). Piscataway, NJ, USA: IEEE; 2020. p. 602–5. [Google Scholar]

61. Wang X, Sun L, Chehri A, Song Y. A review of GAN-based super-resolution reconstruction for optical remote sensing images. Remote Sens. 2023;15(20):5062. doi:10.3390/rs15205062. [Google Scholar] [CrossRef]

62. Feng H. Review of GAN-based image super-resolution techniques. Theoret Natural Sci. 2024;52(1):146–52. doi:10.54254/2753-8818/52/2024ch0134. [Google Scholar] [CrossRef]

63. Jiang J, Wang C, Liu X, Ma J. Deep learning-based face super-resolution: a survey. ACM Comput Surv (CSUR). 2021;55(1):1–36. doi:10.1145/3485132. [Google Scholar] [CrossRef]

64. Anwar S, Khan S, Barnes N. A deep journey into super-resolution: a survey. ACM Comput Surv (CSUR). 2020;53(3):1–34. doi:10.1145/3390462. [Google Scholar] [CrossRef]

65. Sun J, Xu Z, Shum HY. Gradient profile prior and its applications in image super-resolution and enhancement. IEEE Trans Image Process. 2010;20(6):1529–42. doi:10.1109/tip.2010.2095871. [Google Scholar] [PubMed] [CrossRef]

66. Bird JJ, Barnes CM, Manso LJ, Ekárt A, Faria DR. Fruit quality and defect image classification with conditional GAN data augmentation. Sci Hortic. 2022;293(9):110684. doi:10.1016/j.scienta.2021.110684. [Google Scholar] [CrossRef]

67. Liu H, Wan Z, Huang W, Song Y, Han X, Liao J. PD-GAN: probabilistic diverse GAN for image inpainting. In: Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Piscataway, NJ, USA: IEEE; 2021. p. 9371–81. [Google Scholar]

68. Mirza M, Osindero S. Conditional generative adversarial nets. arXiv:1411.1784. 2014. [Google Scholar]

69. Radford A, Metz L, Chintala S. Unsupervised representation learning with deep convolutional generative adversarial networks. arXiv:1511.06434. 2015. [Google Scholar]