Open Access


ARTICLE

Hybrid Temporal Convolutional Network-Transformer Model Optimized by Particle Swarm Optimization for State of Charge Estimation of Lithium-Ion Batteries

1 School of Intelligence Science and Technology, Beijing University of Civil Engineering and Architecture, Beijing, 100044, China

2 Institute of Distributed Energy Storage Safety Big Data, Beijing, 100044, China

3 Beijing Key Laboratory of Super Intelligent Technology for Urban Architecture, Beijing University of Civil Engineering and Architecture, Beijing, 100044, China

* Corresponding Author: Hongyan Ma. Email:

Energy Engineering 2026, 123(4), 23 https://doi.org/10.32604/ee.2025.072906

Received 06 September 2025; Accepted 12 November 2025; Issue published 27 March 2026

Abstract

Lithium-ion (Li-ion) batteries are the dominant energy storage solution. Despite their widespread adoption, precisely determining the state of charge (SOC) remains difficult, with direct implications for battery safety, operational reliability, and overall performance. Current SOC estimation techniques often show limited accuracy, particularly in complex operating scenarios and over wide temperature variations, where their generalization capacity and dynamic adaptation prove insufficient. To address these shortcomings, this work presents a PSO-TCN-Transformer network model for SOC estimation. Particle Swarm Optimization (PSO) is used to automatically configure the architectural parameters of the Temporal Convolutional Network (TCN) and Transformer components, which enhances the model's ability to represent the dynamically evolving SOC. The integrated framework also significantly increases the model's capacity to capture SOC dynamics in complex operating scenarios. Trained and evaluated on a comprehensive dataset that covers complex operating conditions and a broad temperature range from −20°C to 40°C, the proposed model achieves a root mean square error (RMSE) of less than 0.6%, a maximum absolute error (MAXE) below 4.0%, and a coefficient of determination (R²) of 99.99%. Additional comparative experiments on data from an energy storage company further verify the model's superior performance, with an RMSE of 1.18% and a MAXE of 1.95%. The optimization strategy and hybrid architecture developed here can also be adapted for state estimation in a range of other complex dynamic systems.

Keywords

The global transition toward new energy vehicles (NEVs) is a strategic priority for addressing climate change and promoting green growth. Driven by dual-carbon policy frameworks and market demand, electric vehicles (EVs) have advanced rapidly in both technological innovation and market expansion [1–4]. Li-ion batteries are the leading choice for energy storage in these applications [5], and their technological advantages have accelerated the evolution of portable power systems. As the energy core of EVs, Li-ion batteries directly determine vehicle range and operational safety. The Battery Management System (BMS) provides real-time battery status monitoring and remaining range prediction and enhances overall vehicle safety. Doing so requires integrating advanced algorithms and hardware architectures to optimize energy efficiency and mitigate safety risks in EV applications.

The BMS is the cornerstone of an electric vehicle's energy management system. Its primary role is to continuously monitor battery performance to ensure vehicle safety and stable operation, and SOC estimation lies at the heart of this function. Accurate SOC estimation not only optimizes battery usage but also reduces costs by minimizing over-engineering in battery pack design [6]. It further ensures safe operation by preventing overcharge and overdischarge, which are major causes of battery degradation and thermal runaway. However, the intricate electrochemistry of Li-ion batteries and their nonlinear, time-varying operating characteristics make SOC prediction considerably challenging. Factors such as battery aging, ambient temperature changes, and cycling history further complicate the problem [7], introducing uncertainties that compromise the reliability of traditional estimation methods. To address these challenges, the research community has developed numerous SOC estimation methods. These approaches primarily rely on readily accessible physical quantities, including terminal voltage, operating current, capacity, and temperature [8]. They can be broadly grouped into four categories: Ampere-Hour Integration (AHI, also known as Coulomb counting), open circuit voltage (OCV) and impedance-based methods, model-based Kalman filtering algorithms, and data-driven techniques.

Ampere-Hour Integration (AHI) is among the simplest and most frequently employed SOC estimation methods. It integrates the measured current over time starting from an initial SOC, so its accuracy depends heavily on the initial SOC value and on the accuracy of the current sensor. This leads to two major limitations. First, the inevitable uncertainty in the initial SOC introduces an inherent estimation bias. Second, the zero drift of current sensors during long-term operation causes errors to accumulate. Because of this error propagation, AHI fails to meet engineering accuracy requirements under long-term dynamic conditions [9]. Methods based on the open circuit voltage (OCV) or on impedance characteristics either establish a nonlinear OCV-SOC relationship or identify the parameters of equivalent circuit models. However, these methods critically rely on the battery reaching electrochemical equilibrium, which requires a long rest period before measurement to eliminate polarization effects. Such conditions are rarely met in real-world dynamic operation, which severely restricts their practical application [10,11]. Model-based Kalman filtering approaches, including the Kalman Filter (KF) and nonlinear variants such as the Extended Kalman Filter (EKF) and the square root cubature Kalman filter (SRCKF), can handle the nonlinear dynamics of battery systems. The authors of Ref. [12] proposed a framework that integrates PSO with an adaptive SRCKF. In Ref. [13], researchers proposed a hybrid model that attains superior SOC estimation accuracy without complex parameter tuning, demonstrating strong performance and generalization in experimental tests. The authors of Ref. [14] developed a multi-characteristic electrochemical-thermal coupling modeling approach to model battery behavior more precisely, which improved the robustness of SOC prediction under complex operating conditions.
However, the estimation performance of these model-based methods depends strongly on the accuracy of the equivalent circuit model parameters and on the precision of the noise characterization. In practical applications, time-varying parameters caused by battery aging, together with disturbances under complex operating conditions, make it difficult to suppress model prediction errors effectively [15–19].
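To make the two AHI limitations concrete, the following minimal Python sketch integrates current as SOC_k = SOC_0 − (η/Q)·Σ i_j·Δt. The cell capacity (3 Ah), 1 s sampling step, constant 1 A discharge, and 0.05 A sensor offset are hypothetical values chosen only for illustration, and discharge current is assumed to be positive.

```python
def coulomb_count(soc0, currents_a, dt_s, capacity_ah, eta=1.0):
    """Ampere-hour integration: SOC_k = SOC_0 - (eta / Q) * sum(i_j * dt).

    Assumes discharge current is positive and a constant sampling step dt_s.
    Returns the SOC trace, including the initial value.
    """
    capacity_as = capacity_ah * 3600.0  # rated capacity Q in ampere-seconds
    soc = soc0
    trace = [soc]
    for i in currents_a:
        soc -= eta * i * dt_s / capacity_as  # rectangular integration of one sample
        trace.append(soc)
    return trace


# Hypothetical scenario: 3 Ah cell, 1 A discharge sampled every 1 s for 2 hours.
true_current = [1.0] * 7200
measured_current = [i + 0.05 for i in true_current]  # constant 0.05 A sensor offset

reference = coulomb_count(1.00, true_current, 1.0, 3.0)
drifted = coulomb_count(1.00, measured_current, 1.0, 3.0)  # sensor offset only
wrong_init = coulomb_count(0.95, true_current, 1.0, 3.0)   # initial SOC error only

for hour in (1, 2):
    k = hour * 3600
    print(f"after {hour} h: offset error = {reference[k] - drifted[k]:.4f}, "
          f"initial-SOC error = {reference[k] - wrong_init[k]:.4f}")
```

Under these assumptions the offset-induced error grows linearly with time (about 0.017 SOC per hour here), whereas the initial-SOC error stays fixed at 0.05, which mirrors the two error sources named for AHI.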

The data-driven methodology offers a model-free solution for SOC estimation. It uncovers the underlying correlations between a battery's state and measurable parameters by analyzing large volumes of operational data, such as voltage and current, collected from real-world battery operation [20,21]. By inferring SOC from empirical data rather than from complex mechanism-based models, these approaches streamline the estimation workflow. Traditional machine learning methods, including support vector machines, random forests, and Gaussian process regression, have been extensively investigated for this application [22,23]. However, the evolution of battery SOC exhibits strong temporal dependencies: the state at each time step is closely linked to the operating history. In addition, the interplay of electrochemical reactions, thermal dynamics, and power management introduces multi-physics coupling effects. These characteristics test the modeling capabilities of traditional methods and, in particular, expose the limitations of mechanistic models and basic filtering algorithms in capturing the complex nonlinear dynamics of battery systems. Deep learning architectures provide an effective solution. They characterize complex input-output relationships through stacked nonlinear transformations, and by optimizing network depth, neuron count, and connection weights, the resulting models achieve greater expressiveness than conventional approaches. For capturing the time-varying SOC dynamics of Li-ion batteries, Long Short-Term Memory (LSTM), Gated Recurrent Unit (GRU), and TCN networks have proven particularly effective [24]. In Ref. [25], researchers proposed a weight-clustered CNN-LSTM model for co-estimating battery State of Temperature (SOT) and SOC, reducing model size by 52.98% while maintaining estimation precision. Ren et al. [26] used PSO to fine-tune the hyperparameters of LSTM recurrent neural networks (RNNs), improving SOC estimation accuracy; their framework added controlled input noise during training to enhance robustness against real-world operational variations. Yao et al. [27] tackled the problem of preserving learned knowledge by integrating a progressive neural network architecture with TCN. Their hybrid design captures temporal patterns and ensures stable knowledge transfer across tasks, allowing the model to adapt quickly to new battery chemistries without discarding previously acquired information. Xu et al. [28] introduced a method that combines the EKF algorithm with PSO and an LSTM model to achieve precise SOC estimation for power batteries. Li et al. [29] combined deep learning models with a PSO-tuned Kalman filter, in which PSO optimizes both the model hyperparameters and the noise covariance matrix of the filter, alleviating estimation errors induced by transient signal oscillations. However, their approach depends on RNN-based architectures, and PSO does not address inter-module parameter coupling; both factors restrict its generalization over ultra-wide temperature ranges. Jiao et al. [30] investigated the impact of momentum terms, noise variance, training epochs, and hidden layer architecture on GRU-RNN performance, using gradient-based optimization to balance training efficiency and estimation precision. Hu et al. [31] proposed a TCN-LSTM cascade model in which the TCN preprocesses input sequences to extract temporal features for subsequent LSTM sequence modeling; empirical results showed improved accuracy over standalone TCN or LSTM architectures.

However, the fixed convolutional kernels of a TCN cannot dynamically adapt to the varying importance of different time steps. Moreover, its receptive field expansion has a theoretical upper limit, which may cause critical aging features to be lost when handling long sequences [32]. In contrast, the attention mechanism, a proven technique for feature selection, enhances model learning capacity and prediction accuracy. It has been widely integrated into deep learning frameworks [33,34] and has demonstrated its efficacy in natural language processing and computer vision. Yang et al. [35] integrated TCN and GRU with an attention mechanism for SOC estimation and validated the model on two datasets; however, all tests were conducted at a fixed ambient temperature of 25°C. Li et al. [36] proposed a PSO-TCN-Attention network model, which was evaluated only on datasets collected at temperatures above 0°C. Unlike standalone attention, the Transformer uses multi-head self-attention and positional encoding to (1) enable parallel data processing, which is critical for real-time BMS applications, (2) capture long-range temporal dependencies without sequential computation, and (3) dynamically weight key features. These properties support real-time battery health analysis and remote management, which optimize operational efficiency and extend system lifespan. Bao et al. [37] proposed a Transformer-LSTM hybrid model that combines the Transformer's capacity for capturing long-term dependencies with LSTM's sequential modeling of short- to medium-term patterns. Experiments showed reduced computational overhead and improved prediction accuracy compared with standalone Transformer or LSTM models. However, most existing hybrids either lack the parallel computation efficiency of TCN or fail to leverage dynamic global feature weighting.
Notably, for long sequences TCN offers higher parallel computation efficiency and fewer parameters than LSTM/GRU, enabling more effective capture of temporal dependencies. To address the real-time computation demands and cross-condition generalization limitations of traditional methods, this work introduces a TCN-Transformer model for Li-ion battery SOC prediction. The framework uses the TCN to efficiently extract temporal features and expand the receptive field, while the Transformer's self-attention mechanism captures global dependencies, adaptively weights key features, and enhances model robustness, achieving a synergy that neither TCN nor Transformer provides alone. Furthermore, because hyperparameters significantly affect SOC prediction, the PSO algorithm is used to optimize the architectural parameters of both the TCN and the Transformer, tailoring the model to diverse operating conditions. The core contributions of this study are as follows:

(1) The effective integration of the TCN with the Transformer markedly improves the accuracy of SOC estimation, achieving an RMSE < 0.6% and a MAXE < 4.0%. The approach outperforms mainstream deep learning models, such as CNN-LSTM-Attention and CNN-GRU-Attention, across diverse operating scenarios.

(2) The TCN-Transformer cascade architecture shows strong generalization capability, maintaining high accuracy and stability over a broad temperature range from −20°C to 40°C and under complex dynamic conditions. This fills a practical gap left by existing models that perform well only above 0°C and are therefore unsuitable for cold climates.

(3) The PSO algorithm streamlines model parameter tuning, reducing computational overhead and enhancing performance. Compared with traditional manual adjustment, PSO automates the optimization process, making the model more scalable for practical engineering applications.

2 PSO-TCN-Transformer Framework for State of Charge Estimation

TCN [38] is a specialized neural architecture for time series processing, distinguished from traditional Convolutional Neural Networks (CNNs) by its exclusive use of causal convolution for local feature extraction. TCN enhances this foundation with residual connections and dilated convolutions, enabling multi-scale temporal dependency capture. By adjusting the dilation coefficient, the receptive field expands to integrate historical information from varying time horizons, while residual connections between convolutional layers mitigate the gradient vanishing issue typical of deep CNN architectures. This design not only accelerates model convergence but also ensures effective learning of long-range temporal patterns in sequential data.

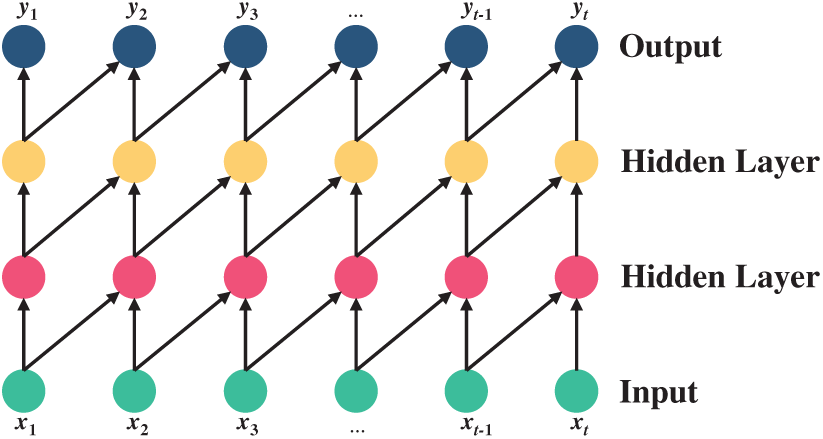

The causal convolution, illustrated in Fig. 1, differs from the convolutions used in traditional convolutional neural networks in that it has no access to future data: its structure is unidirectional rather than bidirectional, making it a strictly time-constrained model. Mathematically, for a time series X = (x1, x2, ..., xT) and a filter F = (f1, f2, ..., fK), the causal convolution at xt can be expressed as

$$(F * X)(x_t) = \sum_{k=1}^{K} f_k \, x_{t-K+k}$$

Figure 1: Causal convolution
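As an illustration, a causal convolution of the form y_t = Σ_{k=1}^{K} f_k x_{t−K+k} can be sketched in plain Python as follows. The single-channel input and the treatment of samples before the sequence start as zeros (left padding) are assumptions of this sketch; the final check shows that changing a future sample leaves all earlier outputs unchanged.

```python
def causal_conv1d(x, f):
    """Causal convolution y_t = sum_{k=1..K} f_k * x_{t-K+k}.

    Samples before the start of the sequence are treated as zeros (left padding),
    so the output at step t depends only on x_1..x_t.
    """
    K = len(f)
    y = []
    for t in range(len(x)):           # t is 0-based here
        acc = 0.0
        for k in range(K):            # k = 0..K-1 corresponds to f_{k+1}
            idx = t - (K - 1) + k     # 0-based form of the index t-K+k
            if idx >= 0:
                acc += f[k] * x[idx]
        y.append(acc)
    return y


y = causal_conv1d([1.0, 2.0, 3.0, 4.0, 5.0], [0.5, 0.5])  # 2-tap causal moving average
print(y)  # [0.5, 1.5, 2.5, 3.5, 4.5]

# Causality check: altering the last sample must not change any earlier output.
a = causal_conv1d([1.0, 2.0, 3.0, 4.0, 5.0], [0.2, 0.3, 0.5])
b = causal_conv1d([1.0, 2.0, 3.0, 4.0, 99.0], [0.2, 0.3, 0.5])
print(a[:4] == b[:4])  # True
```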

The temporal modeling capacity of a conventional convolutional neural network is fundamentally governed by its kernel size and depth. Consequently, capturing long and intricate temporal dependencies often demands a prohibitively deep architecture built by stacking many layers. For an n-layer standard convolutional network with a kernel size of k, the receptive field RF is

$$RF = (k-1)\,n + 1$$

This equation shows that, for a fixed kernel length k, the receptive field RF of a causal convolutional network grows only linearly with the number of layers n. Covering an entire input sequence therefore requires a depth that grows in proportion to the sequence length, i.e., an excessively deep network with a large number of trainable parameters, which significantly slows training.

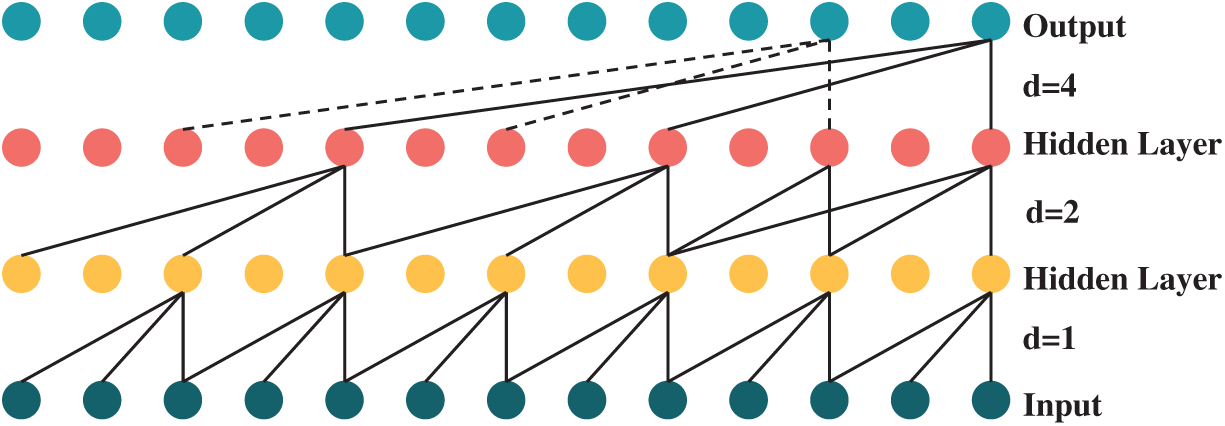

To address this, TCNs introduce dilated convolution, which expands the receptive field while keeping the network shallow. In standard causal convolution, the kernel elements are contiguous and cover consecutive subsequences of the input as the kernel slides. In dilated convolution, by contrast, the spacing between kernel elements increases exponentially as more layers are stacked, as illustrated in Fig. 2.

Figure 2: Dilated causal convolution

The receptive field then becomes

$$RF = 1 + (k-1)\sum_{i=1}^{n} d_i, \qquad d_i = d^{\,i-1}$$

where d denotes the dilation base, and di represents the spacing between elements of the convolutional kernel for the i-th layer.

From the above equation, it is evident that when the convolutional kernel length k remains constant, the number of layers n in a dilated convolutional network exhibits a logarithmic relationship with the receptive field RF. This allows for full coverage of input sequences using a reduced number of layers.
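The linear versus logarithmic growth can be checked numerically with the short sketch below. It assumes one convolution per layer and a dilation of d_i = d^(i−1) with d = 2, so RF = 1 + (k−1)n for standard causal convolution and RF = 1 + (k−1)Σ d_i for dilated causal convolution; the sequence length of 1000 is hypothetical. Practical TCN residual blocks stack two convolutions per dilation level, which doubles the (k−1)Σ d_i term.

```python
def rf_standard(k, n):
    """Receptive field of n stacked causal convolutions with kernel size k."""
    return 1 + (k - 1) * n


def rf_dilated(k, n, d=2):
    """Receptive field when layer i (i = 1..n) uses dilation d_i = d**(i - 1)."""
    return 1 + (k - 1) * sum(d ** (i - 1) for i in range(1, n + 1))


def layers_needed(rf_fn, k, seq_len, **kwargs):
    """Smallest number of layers whose receptive field covers seq_len steps."""
    n = 1
    while rf_fn(k, n, **kwargs) < seq_len:
        n += 1
    return n


seq_len = 1000  # hypothetical input window length
print(layers_needed(rf_standard, 3, seq_len))      # 500
print(layers_needed(rf_dilated, 3, seq_len, d=2))  # 9
```

With k = 3, about 500 standard layers are needed to cover 1000 steps, versus only 9 dilated layers.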

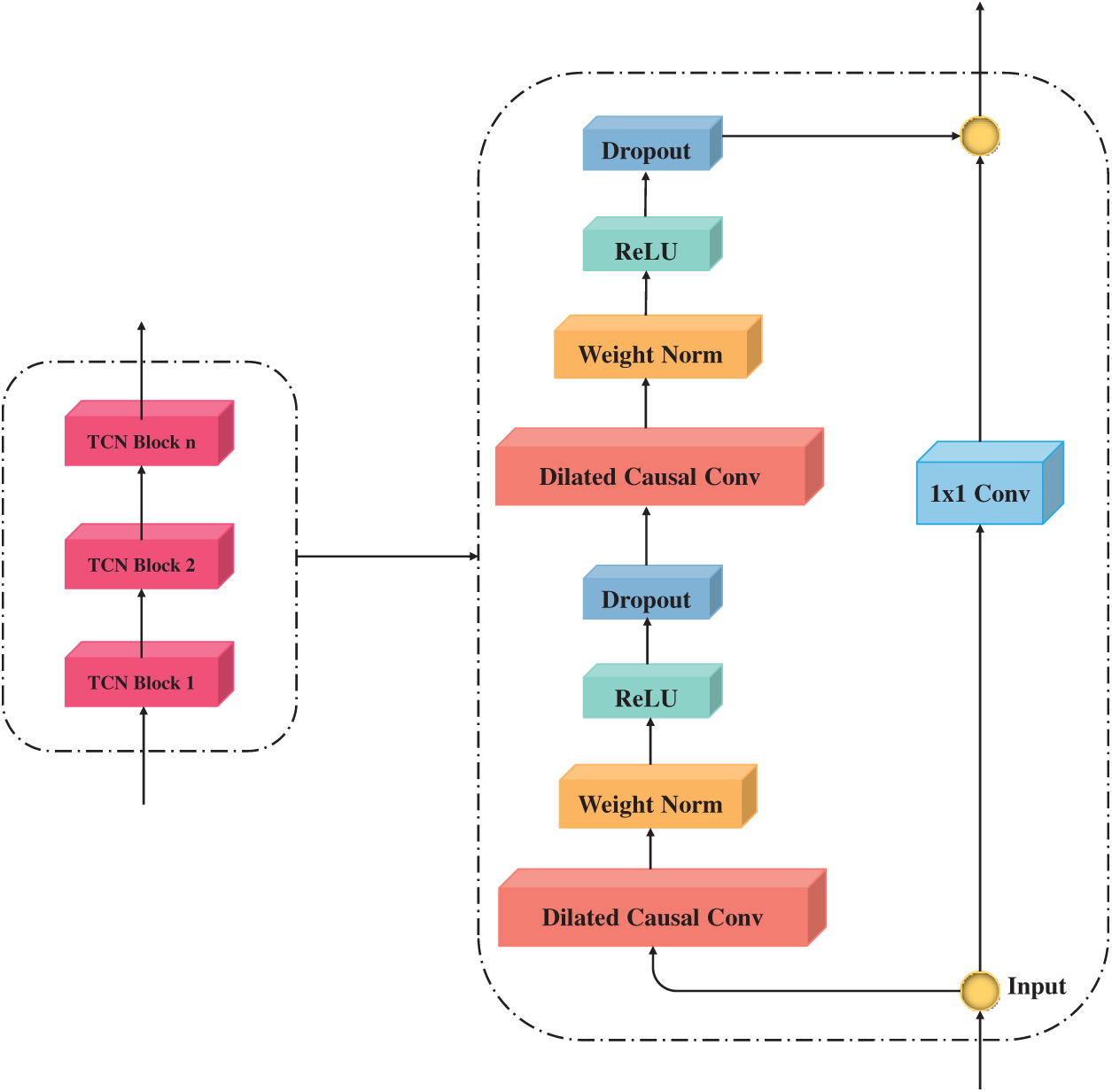

As the depth of the convolutional network increases, the model becomes better at capturing temporal dependencies across multiple scales, but deeper architectures often compromise training stability. To address this, the TCN architecture uses residual connections: the output of the dilated causal convolutional layers is summed with the block's original input before being propagated forward. This design effectively mitigates the vanishing gradient problem. As depicted in Fig. 3, the main pathway of the TCN residual block consists of two dilated causal convolutional layers, each equipped with weight normalization, a ReLU activation function, and a Dropout layer. A 1 × 1 convolutional layer in the residual pathway ensures that the input and the output of the main pathway have the same dimensions. Because the main pathway only needs to learn the difference between the block's input and its desired output, residual connections speed up training and alleviate gradient explosion or vanishing, even in deep architectures. By promoting the transmission and use of historical information, residual pathways strengthen the TCN's ability to model complex time-series dynamics.

Figure 3: Residual block
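A simplified residual block can be sketched as below. Each time step is a list of channel values, kernels are indexed as w[k][out][in], weight normalization and dropout are omitted, and all weights in the demo are hypothetical. Following the common TCN formulation, the block output is taken as ReLU(main path + 1 × 1 skip path).

```python
def relu(v):
    return [max(0.0, a) for a in v]


def dilated_causal_conv(x, w, bias, d):
    """x: T time steps, each a list of C_in values; w[k][o][c]: K x C_out x C_in.

    y_t[o] = bias[o] + sum_k sum_c w[k][o][c] * x_{t-(K-1-k)*d}[c],
    with zeros assumed before the start of the sequence (causal padding).
    """
    K, c_out = len(w), len(w[0])
    y = []
    for t in range(len(x)):
        acc = list(bias)
        for k in range(K):
            idx = t - (K - 1 - k) * d  # only current and past samples are used
            if idx < 0:
                continue
            for o in range(c_out):
                acc[o] += sum(w[k][o][c] * x[idx][c] for c in range(len(x[idx])))
        y.append(acc)
    return y


def residual_block(x, w1, b1, w2, b2, w_skip, d):
    """Simplified TCN residual block (weight normalization and dropout omitted).

    Main path: two dilated causal convolutions, each followed by ReLU.
    Skip path: 1 x 1 convolution so the channel count matches the main path.
    """
    h = [relu(v) for v in dilated_causal_conv(x, w1, b1, d)]
    h = [relu(v) for v in dilated_causal_conv(h, w2, b2, d)]
    skip = dilated_causal_conv(x, [w_skip], [0.0] * len(w_skip), 1)  # K = 1
    return [relu([a + b for a, b in zip(hv, sv)]) for hv, sv in zip(h, skip)]


# Hypothetical normalized [V, I, T] inputs (6 steps) and weights (3 -> 2 channels).
x = [[0.2, -0.5, 0.1], [0.3, 0.4, 0.1], [0.1, -0.2, 0.2],
     [0.4, 0.0, 0.2], [0.5, 0.3, 0.3], [0.2, -0.1, 0.3]]
w1 = [[[0.1, 0.2, 0.0], [0.0, -0.1, 0.3]], [[0.2, 0.0, 0.1], [0.1, 0.1, 0.1]]]
w2 = [[[0.5, 0.1], [0.2, 0.3]], [[0.1, 0.4], [0.0, 0.2]]]
w_skip = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.5]]
out = residual_block(x, w1, [0.0, 0.1], w2, [0.0, 0.0], w_skip, d=2)
print(len(out), len(out[0]))  # 6 2
```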

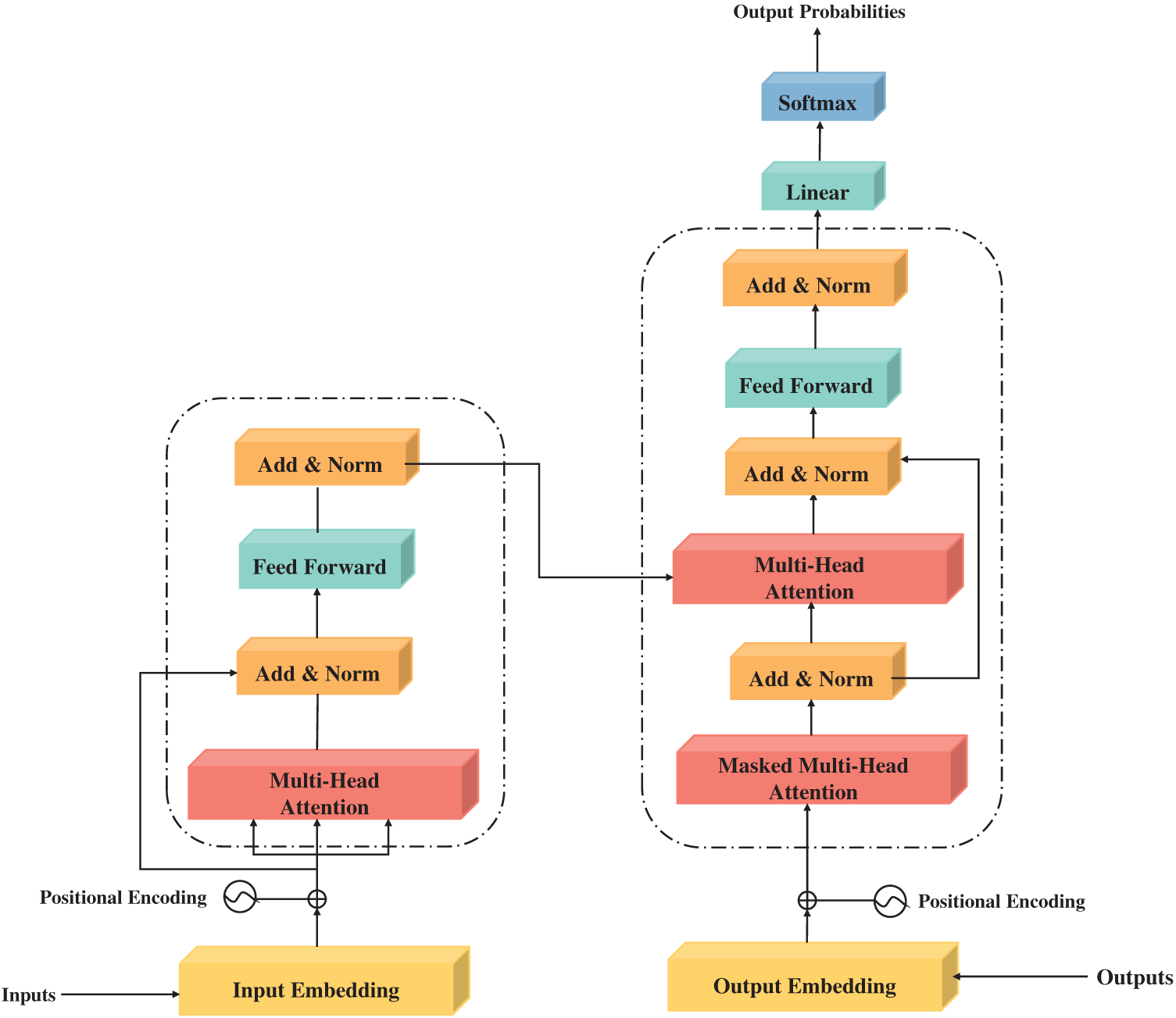

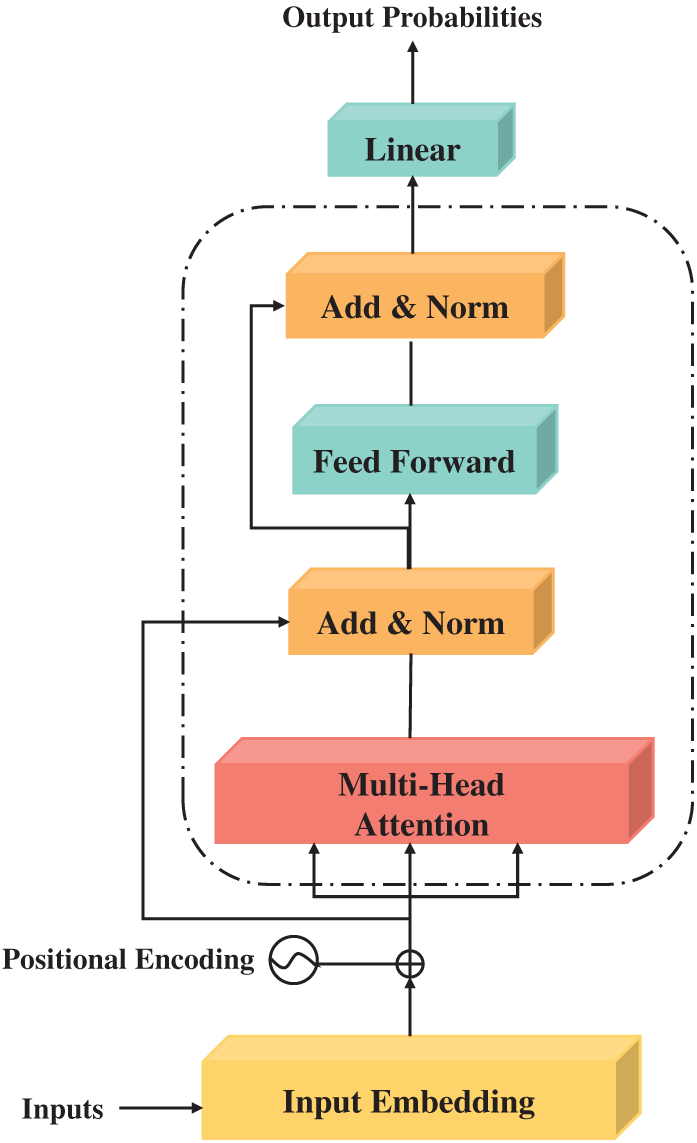

The Transformer architecture [39] is an end-to-end framework originally proposed for sequence-to-sequence tasks such as machine translation. Unlike traditional RNNs, the Transformer uses a self-attention mechanism to capture contextual information across sequence positions, which overcomes the difficulty that conventional RNN-based architectures have in capturing long-term dependencies. As depicted in Fig. 4, the Transformer is built from two primary components, an encoder and a decoder, each of which can be composed of multiple identical layers. A key innovation within these layers is the multi-head attention mechanism, which runs several self-attention units in parallel so that the model can concurrently process information from distinct representation subspaces.

Figure 4: Transformer
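To show how attention weights are formed, here is a plain-Python sketch of scaled dot-product attention, softmax(QKᵀ/√d_k)V, and of multi-head self-attention that splits the projected features into n_heads slices. The identity projection matrices in the demo and the omission of the feed-forward sublayer and layer normalization of a full encoder layer are simplifications for illustration.

```python
import math


def matmul(a, b):
    """Plain-Python matrix product of a (m x n) and b (n x p)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row):
    m = max(row)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in row]
    s = sum(e)
    return [v / s for v in e]


def attention(q, k, v):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(q[0])
    scores = [[sum(a * b for a, b in zip(qr, kr)) / math.sqrt(d_k) for kr in k]
              for qr in q]  # T x T similarity of every step with every step
    weights = [softmax(row) for row in scores]
    return matmul(weights, v), weights


def multi_head_self_attention(x, wq, wk, wv, wo, n_heads):
    """x: T x d_model; wq, wk, wv, wo: d_model x d_model projection matrices."""
    d_model = len(x[0])
    assert d_model % n_heads == 0, "d_model must be divisible by n_heads"
    d_h = d_model // n_heads
    q, k, v = matmul(x, wq), matmul(x, wk), matmul(x, wv)
    heads = []
    for h in range(n_heads):
        cols = slice(h * d_h, (h + 1) * d_h)  # this head's feature subspace
        out, _ = attention([r[cols] for r in q], [r[cols] for r in k],
                           [r[cols] for r in v])
        heads.append(out)
    concat = [sum((head[t] for head in heads), []) for t in range(len(x))]
    return matmul(concat, wo)


identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
x = [[0.1, 0.2, 0.3, 0.4], [0.0, -0.1, 0.5, 0.2],
     [0.3, 0.3, 0.1, 0.0], [-0.2, 0.4, 0.2, 0.1]]
y = multi_head_self_attention(x, identity, identity, identity, identity, n_heads=2)
_, w = attention(x, x, x)
print([round(sum(row), 6) for row in w])  # each row of attention weights sums to 1
```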

However, time series prediction presents distinct challenges compared to text data due to the inherent differences in data characteristics. Direct application of the standard Transformer architecture to time series may lead to escalating training errors during iterations, often yielding prediction accuracies inferior to traditional recurrent neural networks. To address this, this study modifies the standard Transformer architecture for Li-ion battery SOC prediction by removing the decoder module and the final activation function layer. The optimized structure is depicted in Fig. 5.

Figure 5: Improved transformer

The efficacy of an SOC estimation model depends critically on its parameterization, which governs both its predictive performance and its ability to generalize beyond the training data. Parameter optimization of such models is typically a high-dimensional, nonconvex problem. In such settings, the intuitive and straightforward PSO algorithm is well suited to searching for the global optimum or a close approximation of it [36]. A PSO-based hyperparameter optimization strategy was therefore implemented to improve the predictive accuracy of the TCN-Transformer model. It targets key architectural and training parameters: the number of TCN layers, the learning rate, the number of Transformer attention heads, and the maximum number of training epochs. The optimization thus pursues a dual objective: it streamlines the network architecture to reduce complexity while improving training efficiency by refining the model's learning dynamics.

Rooted in swarm intelligence, the PSO algorithm takes inspiration from the collective behavior of natural groups, such as bird flocks or fish schools. Potential solutions are modeled as particles in a high-dimensional space, each with its own velocity and position. Particle trajectories are adjusted iteratively based on both individual experience and information shared across the swarm, emulating the adaptive learning seen in biological swarms. The core of PSO is this iterative update of particle velocities and positions, which steers the swarm toward the global optimum: a population of n particles cooperatively searches an M-dimensional solution space, with one dimension per parameter being optimized.

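After each iteration, particle velocities and positions are updated according to the following equations:

$$V_i^{t+1} = \omega V_i^{t} + c_1 r_1 \left(p_{best,i} - X_i^{t}\right) + c_2 r_2 \left(g_{best} - X_i^{t}\right) \tag{6}$$

$$X_i^{t+1} = X_i^{t} + V_i^{t+1} \tag{7}$$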

Here, Xi is the position of the i-th particle in the population and Vi is its velocity, where i = 1, 2, …, n, and t is the current iteration number. pbest is the best position found so far by the i-th particle, and gbest is the global best position found by the entire population. ω is the inertia weight: a larger ω enhances the algorithm's global search capability and speeds up the search, whereas a smaller ω strengthens its local optimization capability. c1 and c2 are acceleration factors, and r1 and r2 are random numbers uniformly distributed in [0, 1].
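The update rules can be illustrated with a minimal global-best PSO, v ← ωv + c1·r1·(pbest − x) + c2·r2·(gbest − x) followed by x ← x + v. The values ω = 0.7 and c1 = c2 = 1.5 are illustrative assumptions, not necessarily the settings used in this study; positions are clipped to the search bounds, gbest is updated as soon as a better particle is found, and the quadratic objective is a toy stand-in for the model's validation error.

```python
import random


def pso_minimize(fitness, bounds, n_particles=30, n_iter=100,
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    """Minimise `fitness` over a box with a basic global-best PSO.

    Per dimension j (lower fitness is better):
        v <- w*v + c1*r1*(pbest - x) + c2*r2*(gbest - x)
        x <- x + v, then clipped to the search bounds
    """
    rng = random.Random(seed)
    dim = len(bounds)
    X = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    V = [[0.1 * rng.uniform(lo - hi, hi - lo) for lo, hi in bounds]
         for _ in range(n_particles)]
    pbest = [x[:] for x in X]
    pbest_f = [fitness(x) for x in X]
    g = min(range(n_particles), key=pbest_f.__getitem__)
    gbest, gbest_f = pbest[g][:], pbest_f[g]

    for _ in range(n_iter):
        for i in range(n_particles):
            for j in range(dim):
                r1, r2 = rng.random(), rng.random()
                V[i][j] = (w * V[i][j]
                           + c1 * r1 * (pbest[i][j] - X[i][j])
                           + c2 * r2 * (gbest[j] - X[i][j]))
                lo, hi = bounds[j]
                X[i][j] = min(max(X[i][j] + V[i][j], lo), hi)
            f = fitness(X[i])
            if f < pbest_f[i]:              # new personal best
                pbest[i], pbest_f[i] = X[i][:], f
                if f < gbest_f:             # new global best
                    gbest, gbest_f = X[i][:], f
    return gbest, gbest_f


# Toy objective with its minimum at (1, -2); stands in for a validation error.
best, best_f = pso_minimize(lambda p: (p[0] - 1) ** 2 + (p[1] + 2) ** 2,
                            bounds=[(-5.0, 5.0), (-5.0, 5.0)])
print(best, best_f)
```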

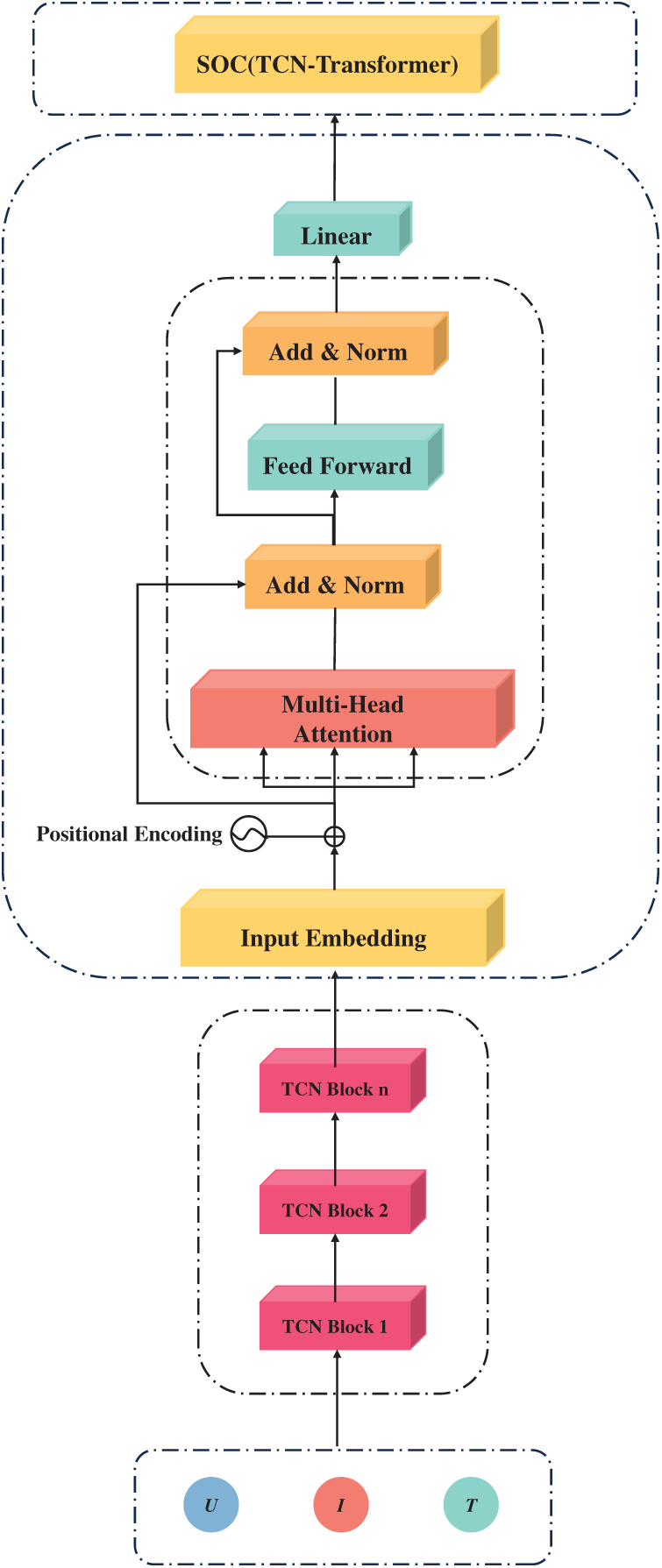

Both TCN and Transformer models have distinct advantages in time series forecasting. The TCN excels at capturing local temporal dependencies through sliding convolutional kernels. Within the Transformer framework, by contrast, the self-attention mechanism assigns importance weights to all temporal positions concurrently, enabling dynamic assessment of contextual relevance and better modeling of long-range dependencies. This study integrates these complementary strengths in a TCN-Transformer hybrid framework for battery SOC prediction. As illustrated in Fig. 6, the fusion model combines the local feature extraction capability of the TCN with the global contextual understanding of the Transformer, enabling more precise modeling of complex battery dynamics.

Figure 6: TCN-Transformer

The TCN-Transformer fusion model comprises an input layer, TCN layer, Transformer layer, and output layer. The specific procedures for performing SOC prediction are as follows:

First, the input layer processes time-series features constructed from sequential voltage, current, and temperature data. The TCN layer employs dilated causal convolutional layers and residual blocks to thoroughly learn the dependency relationships within the sequence data, extracting features from the input layer’s output. The extracted feature data undergo positional encoding to incorporate sequence order information before being fed into the Transformer layer. In the proposed architecture, the input sequence undergoes successive stages of processing through a stack of identical encoder layers within the Transformer. These layers are responsible for refining the features, wherein the multi-head self-attention mechanism computes adaptive weights to capture interdependencies between different time steps. The output from this encoder stack is subsequently passed to a linear projection layer to estimate the final SOC value.
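The positional-encoding step can be sketched as follows. The text does not specify the encoding type, so this sketch assumes the sinusoidal encoding of the original Transformer, PE(pos, 2i) = sin(pos/10000^(2i/d_model)) and PE(pos, 2i+1) = cos(pos/10000^(2i/d_model)), added element-wise to the TCN output; the all-zero feature matrix is a hypothetical placeholder.

```python
import math


def sinusoidal_positional_encoding(seq_len, d_model):
    """PE[pos][2i] = sin(pos / 10000**(2i/d_model)), PE[pos][2i+1] = cos(same)."""
    pe = [[0.0] * d_model for _ in range(seq_len)]
    for pos in range(seq_len):
        for i in range(0, d_model, 2):  # i plays the role of 2i in the formula
            angle = pos / (10000 ** (i / d_model))
            pe[pos][i] = math.sin(angle)
            if i + 1 < d_model:
                pe[pos][i + 1] = math.cos(angle)
    return pe


def add_positional_encoding(features):
    """features: T x d_model TCN output; returns features + PE element-wise."""
    pe = sinusoidal_positional_encoding(len(features), len(features[0]))
    return [[f + p for f, p in zip(fr, pr)] for fr, pr in zip(features, pe)]


tcn_features = [[0.0] * 4 for _ in range(5)]  # hypothetical 5-step, 4-channel TCN output
encoded = add_positional_encoding(tcn_features)
print(encoded[0])  # [0.0, 1.0, 0.0, 1.0]
```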

3.1 Dataset Description and Preprocessing

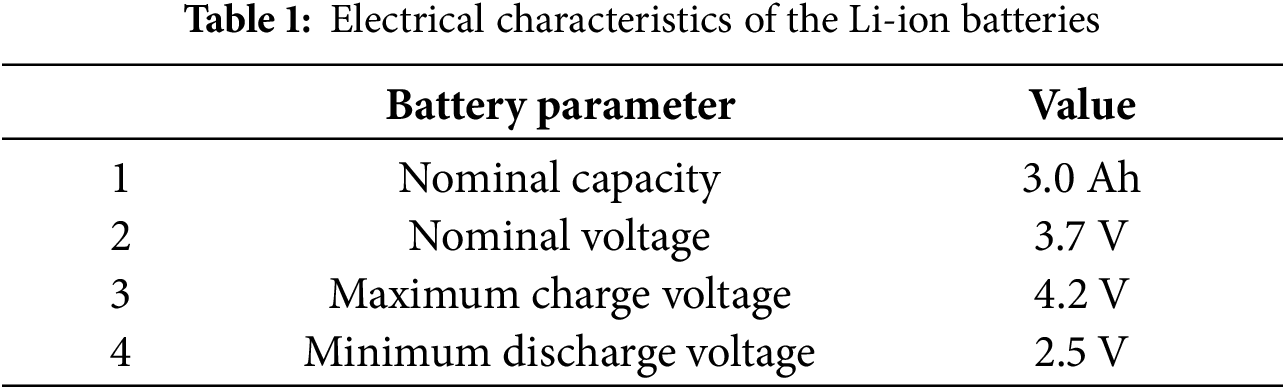

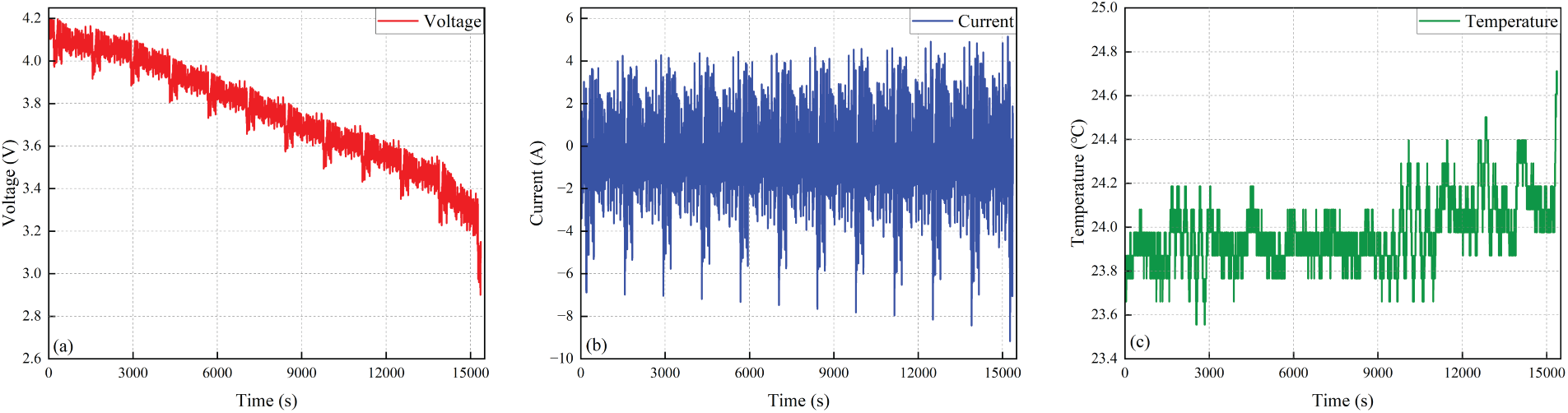

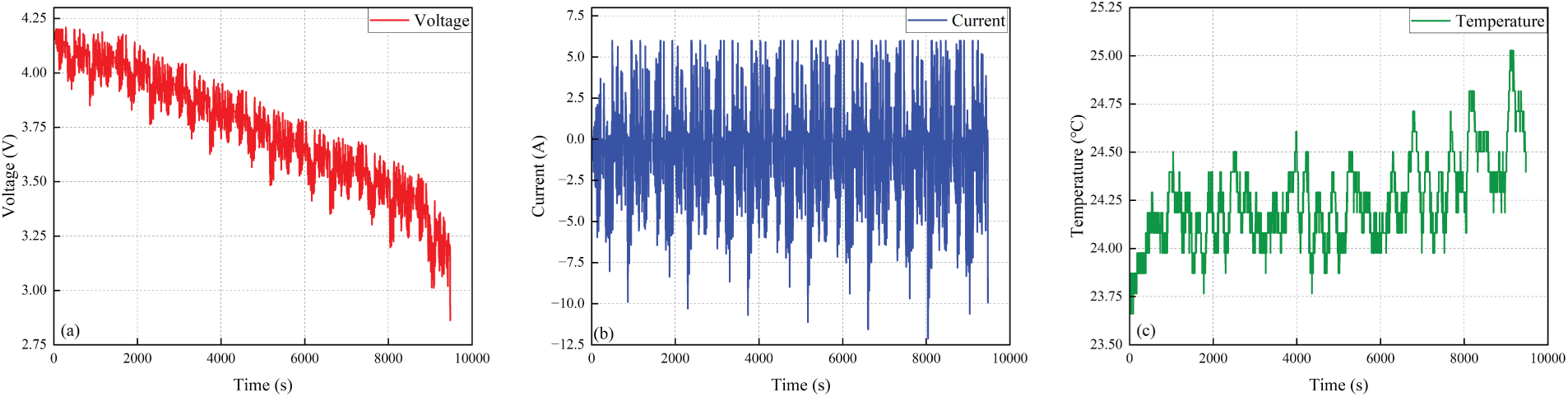

The dataset used in this work was obtained from McMaster University and was collected on an LG18650 battery, whose parameters are detailed in Table 1. It contains results from a series of tests performed on the LG18650 battery at different temperatures (−20°C to 40°C), covering typical driving profiles such as Hybrid 1–8, UDDS, HWFET, US06, and LA92 [40], and thus effectively simulates the complexity and unpredictability of real-world electric vehicle (EV) driving. In this study, data from the LG18650 battery at five temperatures (excluding 10°C) are selected, and the Hybrid 1–8 profiles are used as training data, with 25% of the training data designated as a validation set during model training. The UDDS, HWFET, US06, and LA92 driving cycles are used as test sets for assessing the proposed method. This data selection and partitioning strategy enables realistic simulation of various EV operating scenarios. Figs. 7 and 8 show the voltage, current, and temperature profiles of the UDDS and LA92 datasets at 25°C.

Figure 7: Input variable profiles under the UDDS driving cycle at 25°C: (a) Voltage; (b) Current; (c) Temperature

Figure 8: Input variable profiles under the LA92 driving cycle at 25°C: (a) Voltage; (b) Current; (c) Temperature
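The sketch below shows one possible way to turn such recordings into model inputs: sliding windows of [voltage, current, temperature] vectors, each labelled with the SOC at its last step, followed by a 25% validation hold-out. The window length, the last-step SOC target, the unshuffled tail split, and the toy arrays are assumptions for illustration rather than details given in the text.

```python
def make_windows(voltage, current, temperature, soc, window):
    """Build (sequence, target) samples from synchronized measurement arrays.

    Each sample is `window` consecutive [V, I, T] vectors; its target is the
    SOC at the last step of the window.
    """
    feats = [[v, i, t] for v, i, t in zip(voltage, current, temperature)]
    X, y = [], []
    for end in range(window, len(feats) + 1):
        X.append(feats[end - window:end])
        y.append(soc[end - 1])
    return X, y


def holdout_split(X, y, val_fraction=0.25):
    """Keep the last `val_fraction` of samples for validation (no shuffling)."""
    n_val = int(round(len(X) * val_fraction))
    cut = len(X) - n_val
    return (X[:cut], y[:cut]), (X[cut:], y[cut:])


# Hypothetical toy arrays standing in for one Hybrid-profile recording.
voltage = list(range(10))
current = [10 + i for i in range(10)]
temperature = [20 + i for i in range(10)]
soc = [100 - i for i in range(10)]
X, y = make_windows(voltage, current, temperature, soc, window=4)
(train_X, train_y), (val_X, val_y) = holdout_split(X, y)
print(len(X), len(train_X), len(val_X))  # 7 5 2
```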

Because voltage, current, and temperature differ in magnitude and units, we employ min-max normalization to map each variable to the interval [−1, 1]:

$$x_{nor} = \frac{2\,(x_{ori} - x_{min})}{x_{max} - x_{min}} - 1$$

Here, xmax and xmin are the extreme values within the measurement range, xori is the raw data point, and xnor is the resulting normalized value, which is what the network input layer receives.
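A minimal sketch of this normalization and its inverse (useful for recovering physical values) follows; the 2.5 V to 4.5 V range in the example is hypothetical, and in practice xmin and xmax would be taken from the measurement range.

```python
def minmax_scale(values, x_min, x_max):
    """x_nor = 2 * (x_ori - x_min) / (x_max - x_min) - 1, mapping to [-1, 1]."""
    span = x_max - x_min
    return [2.0 * (v - x_min) / span - 1.0 for v in values]


def minmax_unscale(values, x_min, x_max):
    """Inverse mapping from [-1, 1] back to physical units."""
    return [(v + 1.0) * (x_max - x_min) / 2.0 + x_min for v in values]


scaled = minmax_scale([2.5, 3.5, 4.5], x_min=2.5, x_max=4.5)  # hypothetical voltages
print(scaled)                            # [-1.0, 0.0, 1.0]
print(minmax_unscale(scaled, 2.5, 4.5))  # [2.5, 3.5, 4.5]
```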

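RMSE and MAXE are used to evaluate the SOC estimation performance of the method. RMSE measures the average magnitude of the estimation error and thus the precision of the results, while MAXE measures the largest absolute error and thus the worst-case deviation. The coefficient of determination (R²) is also reported. The metrics are defined as

$$RMSE = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(SOC_{real,i} - SOC_i\right)^2}$$

$$MAXE = \max_{1 \le i \le n}\left|SOC_{real,i} - SOC_i\right|$$

$$R^2 = 1 - \frac{\sum_{i=1}^{n}\left(SOC_{real,i} - SOC_i\right)^2}{\sum_{i=1}^{n}\left(SOC_{real,i} - SOC_{average}\right)^2}$$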

In these equations, n is the total number of samples, SOCreal,i and SOCi are the ground-truth SOC (obtained via the Coulomb counting method) and the model's estimate for the i-th sample, and SOCaverage is the mean of all ground-truth SOC values.
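The three metrics can be computed as in the following sketch; the four-sample SOC arrays (expressed as fractions) are hypothetical.

```python
import math


def rmse(soc_real, soc_est):
    """Root mean square error over n samples."""
    n = len(soc_real)
    return math.sqrt(sum((r - e) ** 2 for r, e in zip(soc_real, soc_est)) / n)


def maxe(soc_real, soc_est):
    """Largest absolute error (worst-case deviation)."""
    return max(abs(r - e) for r, e in zip(soc_real, soc_est))


def r2(soc_real, soc_est):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    soc_average = sum(soc_real) / len(soc_real)
    ss_res = sum((r - e) ** 2 for r, e in zip(soc_real, soc_est))
    ss_tot = sum((r - soc_average) ** 2 for r in soc_real)
    return 1.0 - ss_res / ss_tot


soc_real = [1.00, 0.80, 0.60, 0.40]  # hypothetical ground truth (fractions)
soc_est = [0.99, 0.81, 0.60, 0.42]   # hypothetical model estimates
print(rmse(soc_real, soc_est), maxe(soc_real, soc_est), r2(soc_real, soc_est))
```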

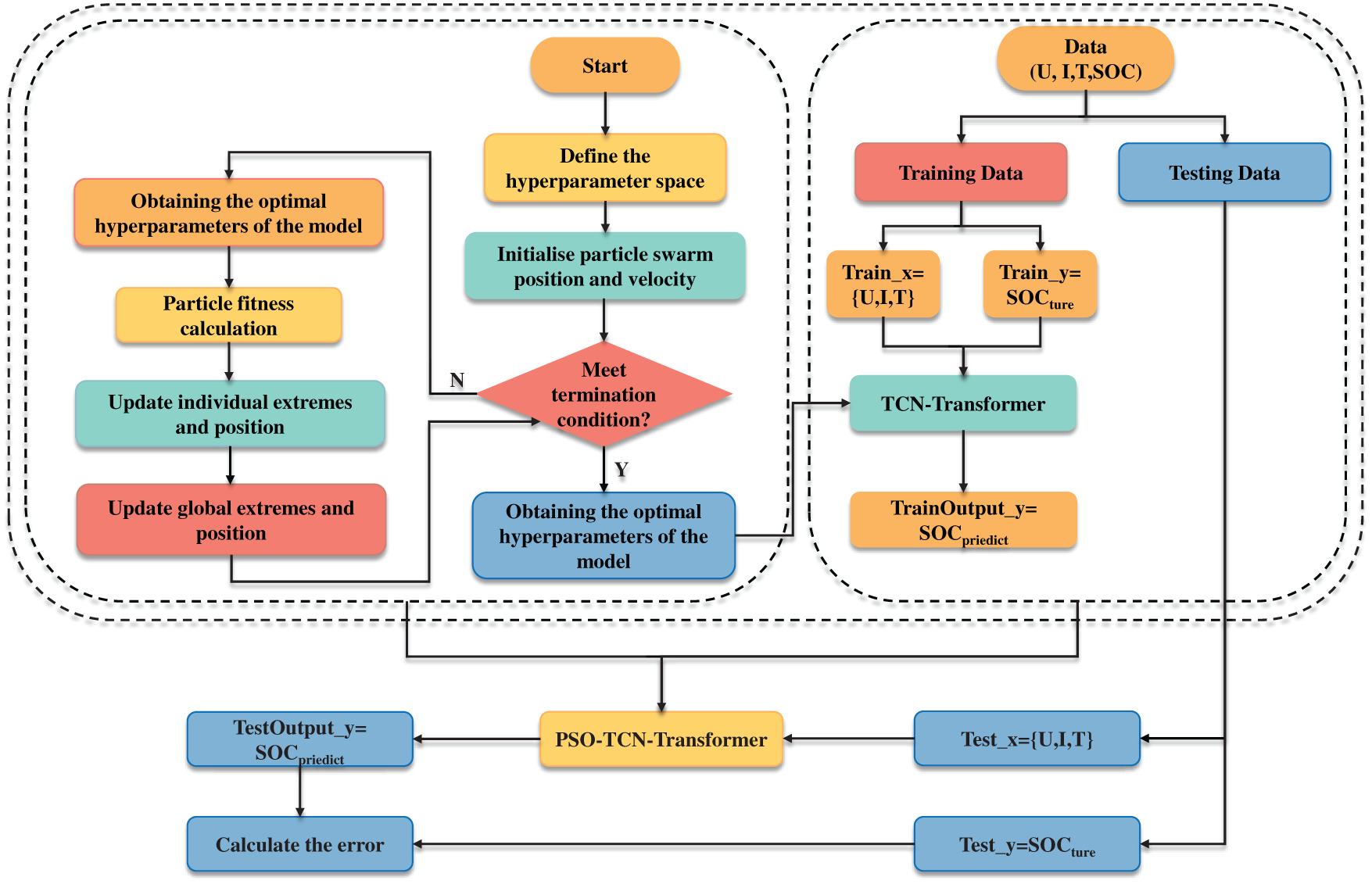

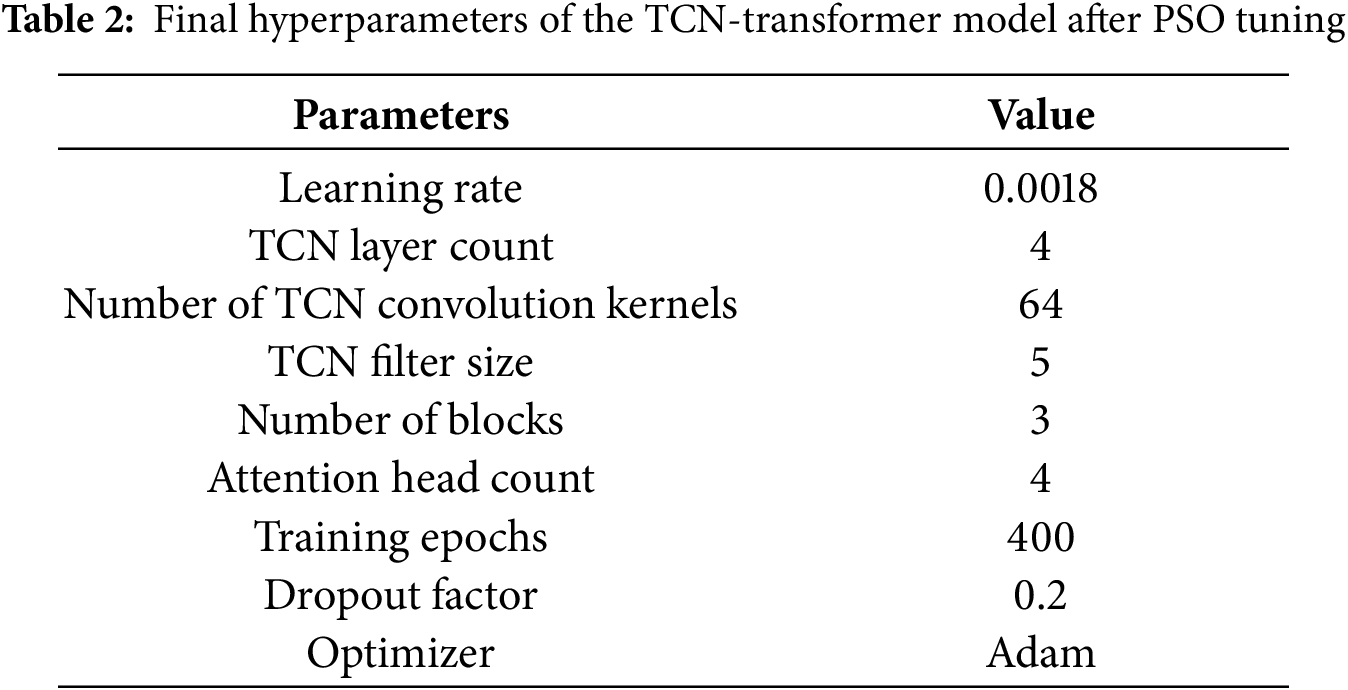

3.3 PSO-TCN-Transformer Simulation Steps

The PSO algorithm was used to tune key hyperparameters of the TCN-Transformer model: the number of TCN layers, the learning rate, the number of Transformer attention heads, and the maximum number of training epochs. The search ranges were as follows: TCN layer count, integers in [1, 4]; learning rate, [0.0001, 0.005]; attention head count, [1, 6]; and training epochs, [100, 500]. The population size was set to 30 and the iteration count to 100. The Adam optimizer was chosen for network training, and a regularization parameter was introduced to prevent overfitting. The optimization workflow is illustrated in Fig. 9, and the final hyperparameters obtained by PSO are presented in Table 2. The process involves the following steps:

(1) Configure the search space. The key TCN-Transformer parameters to be optimized are defined: the number of TCN layers, the global learning rate, the number of Transformer attention heads, and the maximum number of training epochs. The feasible range of each parameter is bounded based on preliminary experiments and established domain knowledge.

(2) Initialize the particle swarm. Each particle is assigned a random initial position and velocity within the predefined bounds, where each position vector encodes a distinct hyperparameter set for the TCN-Transformer network. This stochastic initialization ensures broad exploration of the search space at the outset.

(3) For each particle, update its velocity and position using Eqs. (6) and (7), and compute the fitness value at the new position.

(4) Update the individual and global best positions. Each particle's current fitness is compared with the fitness of its pbest; if the current fitness is better, pbest is replaced by the current position. The swarm's gbest is then set to the best of all pbest positions. Steps (3) and (4) are repeated until the iteration limit is reached.

(5) After confirming the optimal parameters from PSO, construct the TCN-Transformer model with these parameters and conduct network training according to the preset training process.

(6) Use the trained TCN-Transformer model to perform SOC prediction on the test set, and calculate evaluation metrics such as RMSE and MAXE to assess the model’s prediction error.

Figure 9: Flowchart of the TCN-Transformer model for PSO optimization
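To connect the PSO steps with the search ranges listed above, the sketch below decodes a continuous particle position into a hyperparameter set by clipping each coordinate to its range and rounding the integer-valued ones. The rounding rule and the `train_and_validate` callback, a hypothetical placeholder for building, training, and scoring a TCN-Transformer on the validation set, are assumptions rather than details given in the text.

```python
SEARCH_SPACE = {  # name: (lower bound, upper bound, type), ranges as stated in the text
    "tcn_layers": (1, 4, int),
    "learning_rate": (1e-4, 5e-3, float),
    "attention_heads": (1, 6, int),
    "max_epochs": (100, 500, int),
}


def decode_particle(position):
    """Map a continuous PSO position vector to one hyperparameter set."""
    params = {}
    for value, (name, (lo, hi, kind)) in zip(position, SEARCH_SPACE.items()):
        value = min(max(value, lo), hi)  # keep the coordinate inside its range
        params[name] = int(round(value)) if kind is int else float(value)
    return params


def fitness(position, train_and_validate):
    """Lower is better. `train_and_validate` is a hypothetical callback that
    builds and trains a TCN-Transformer with the given hyperparameters and
    returns its validation RMSE."""
    return train_and_validate(**decode_particle(position))


print(decode_particle([2.6, 0.02, 0.4, 333.3]))
# {'tcn_layers': 3, 'learning_rate': 0.005, 'attention_heads': 1, 'max_epochs': 333}
```

In a full run, this fitness would be evaluated for every particle after each velocity and position update.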

4.1 McMaster University Dataset Prediction Results and Discussion

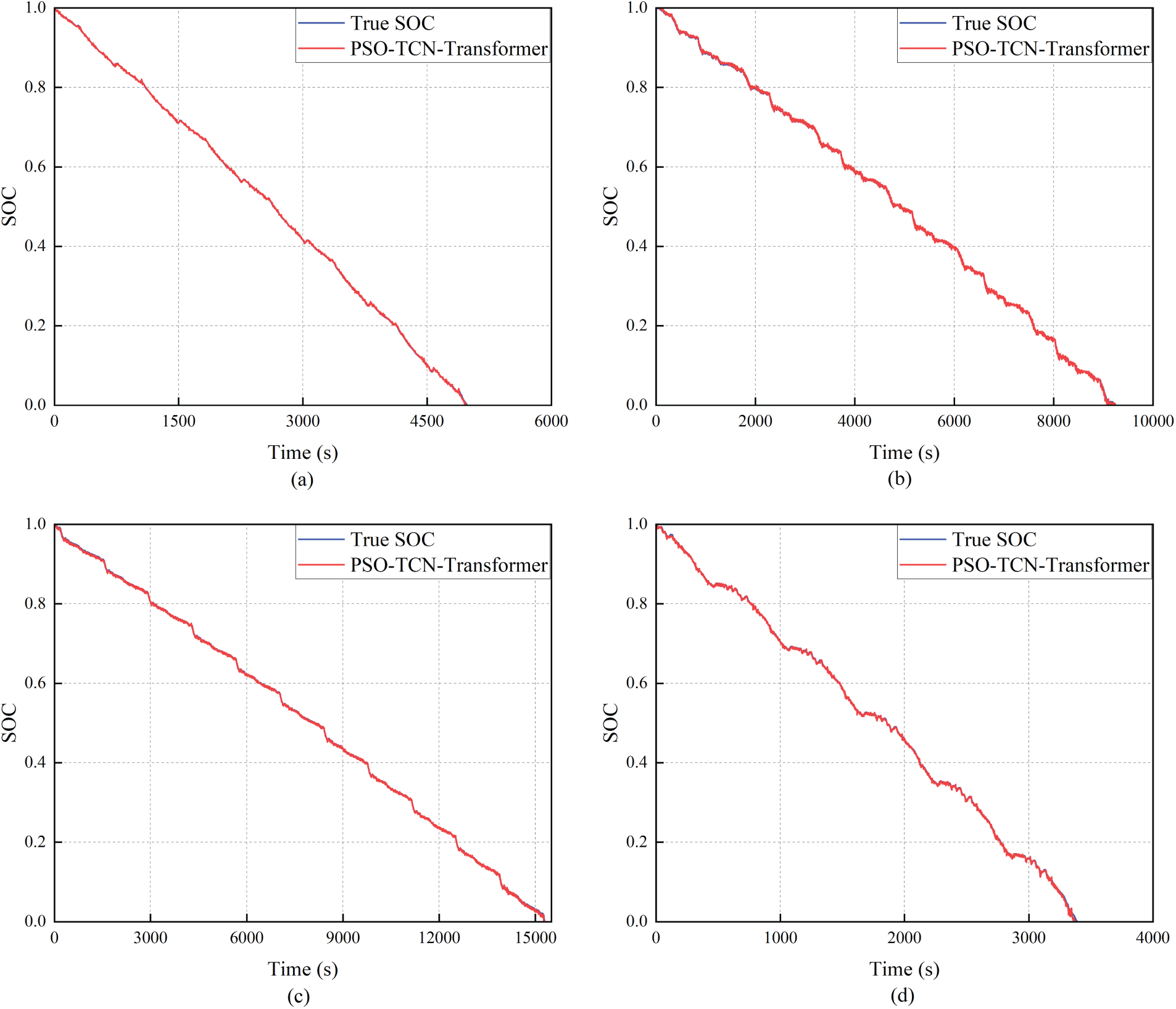

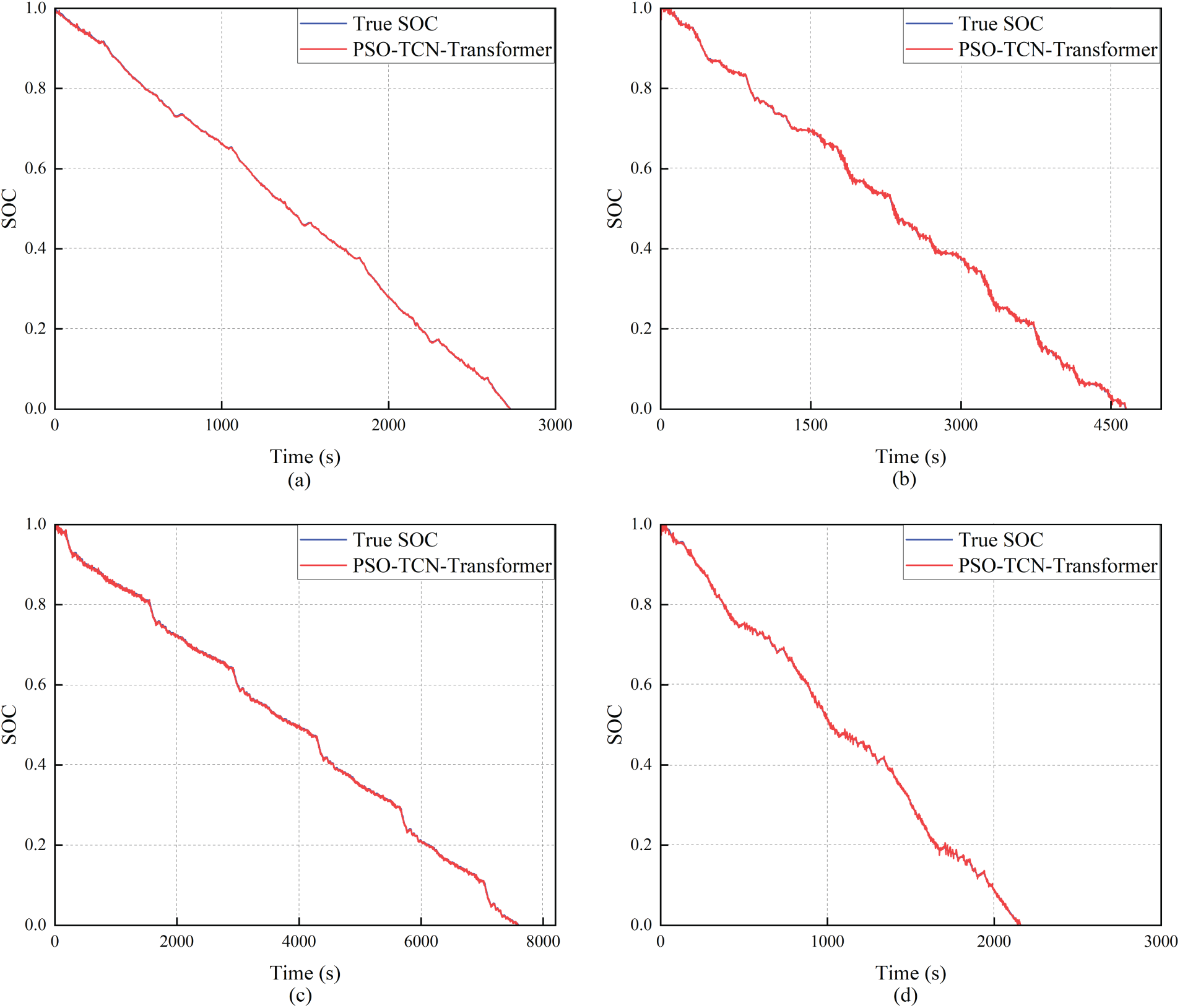

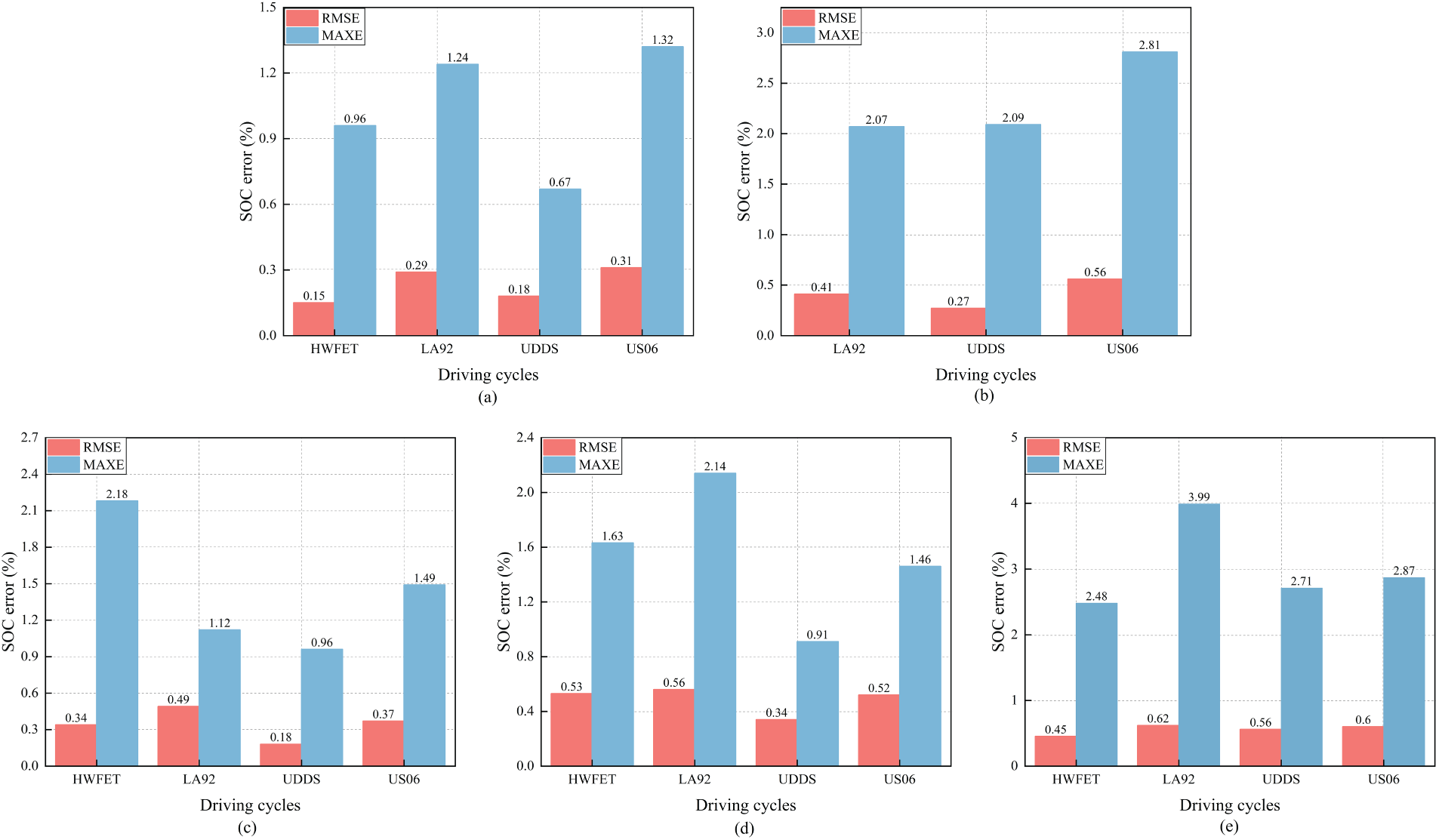

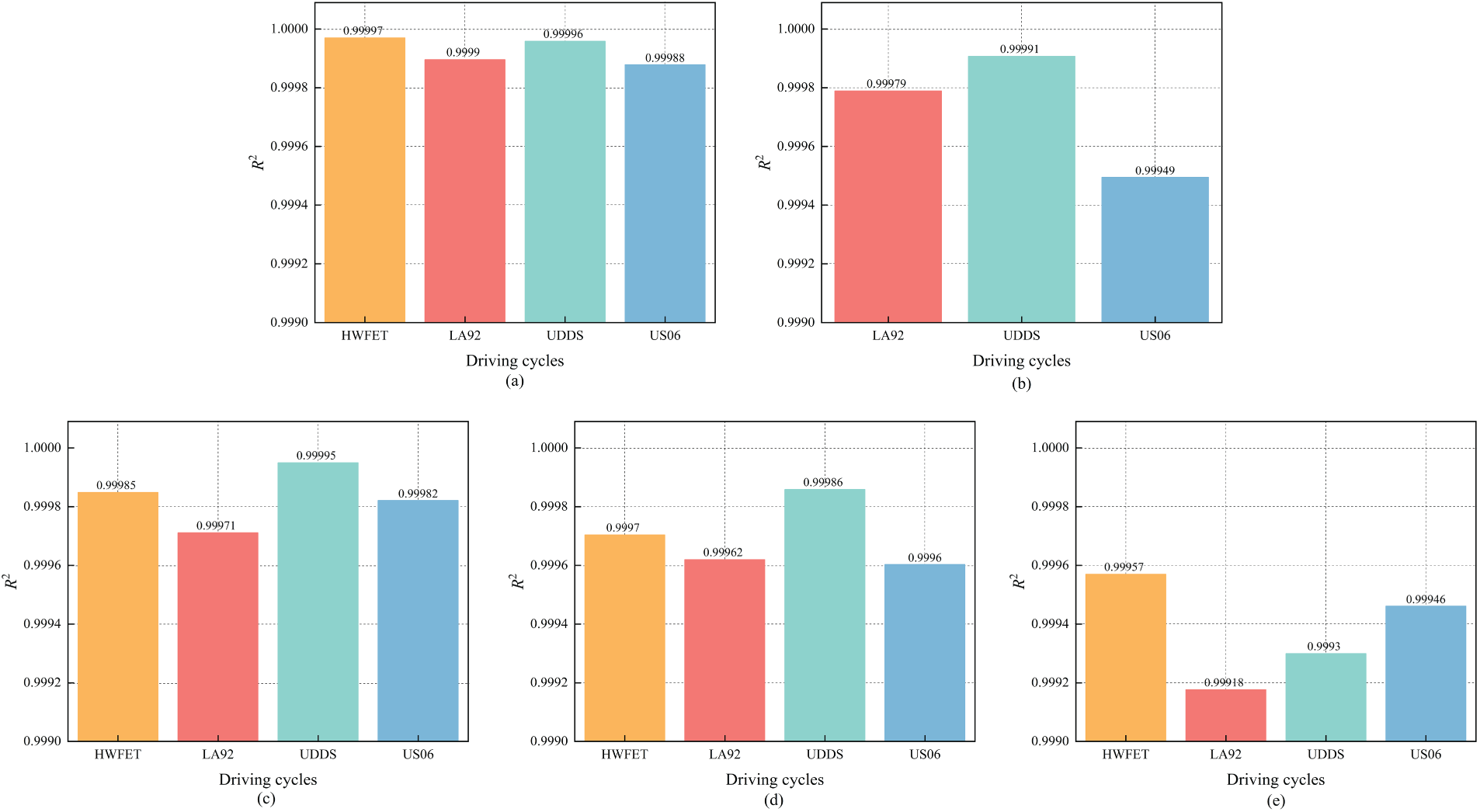

For the purpose of model validation, simulation experiments were conducted using the publicly available McMaster University dataset. The corresponding results, which illustrate the predictive performance and key evaluation metrics, are presented in Figs. 10–16.

Figure 10: Performance of the PSO-TCN-Transformer model under 40°C (Subfigures present SOC estimation results for (a) HWFET, (b) LA92, (c) UDDS, and (d) US06 profiles)

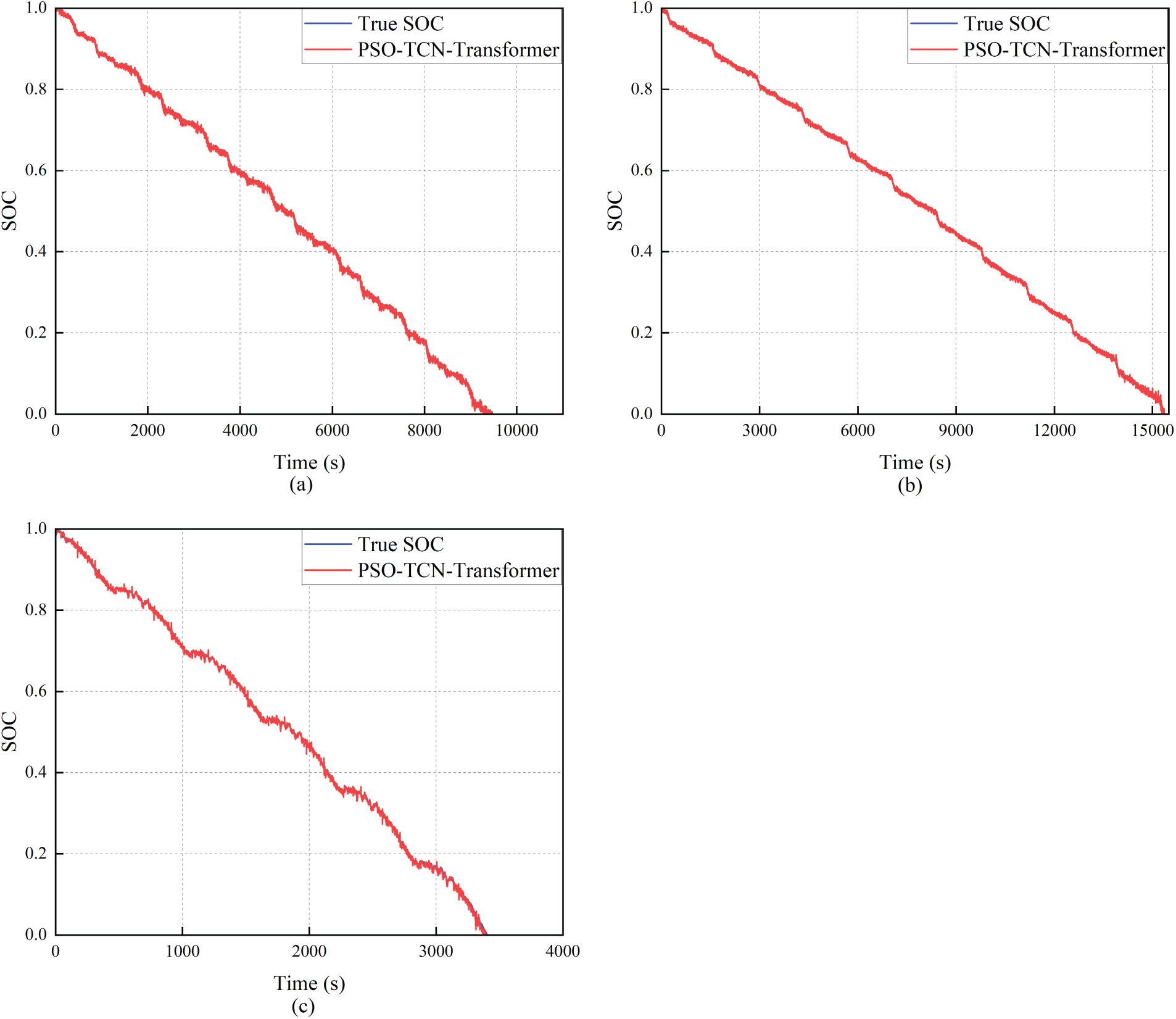

Figure 11: Performance of the PSO-TCN-Transformer model under 25°C (Subfigures present SOC estimation results for (a) LA92, (b) UDDS, and (c) US06 profiles)

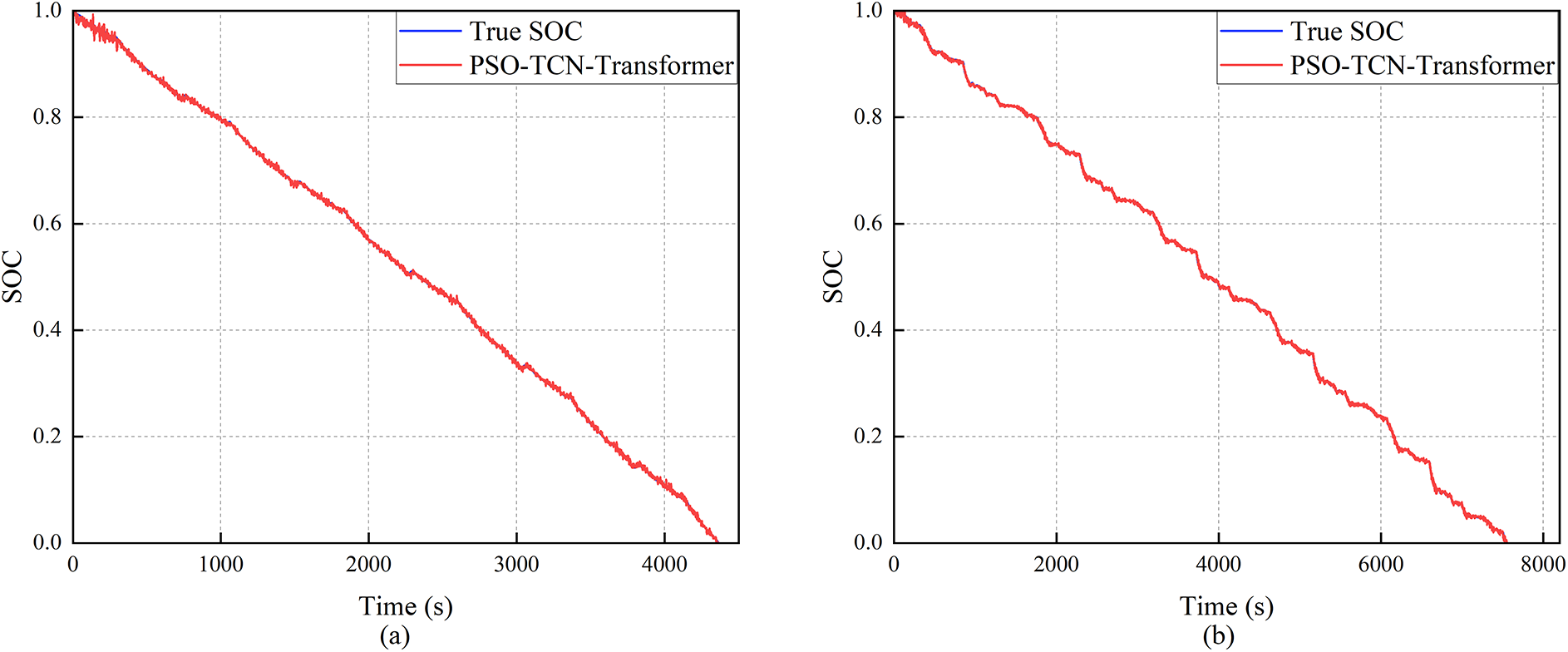

Figure 12: Performance of the PSO-TCN-Transformer model under 0°C (Subfigures present SOC estimation results for (a) HWFET, (b) LA92, (c) UDDS, and (d) US06 profiles)

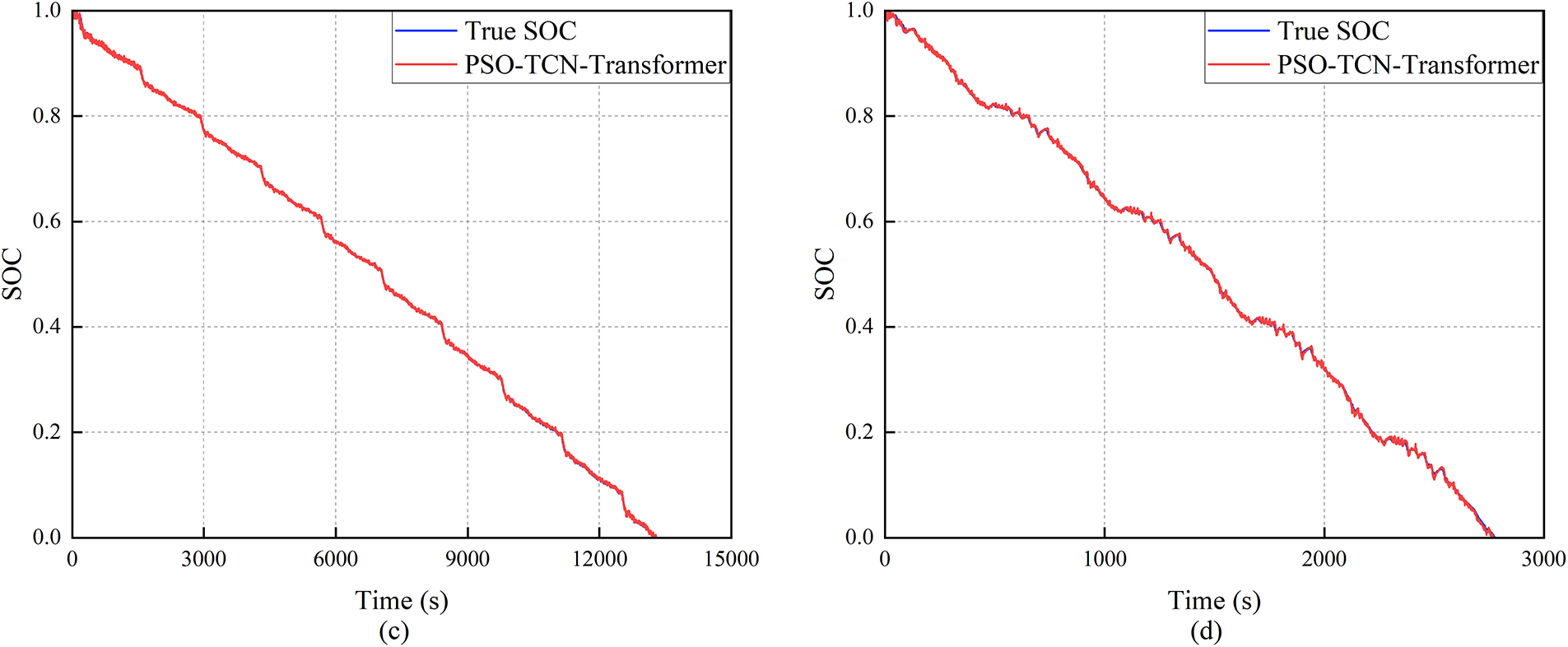

Figure 13: Performance of the PSO-TCN-Transformer model under −10°C (Subfigures present SOC estimation results for (a) HWFET, (b) LA92, (c) UDDS, and (d) US06 profiles)

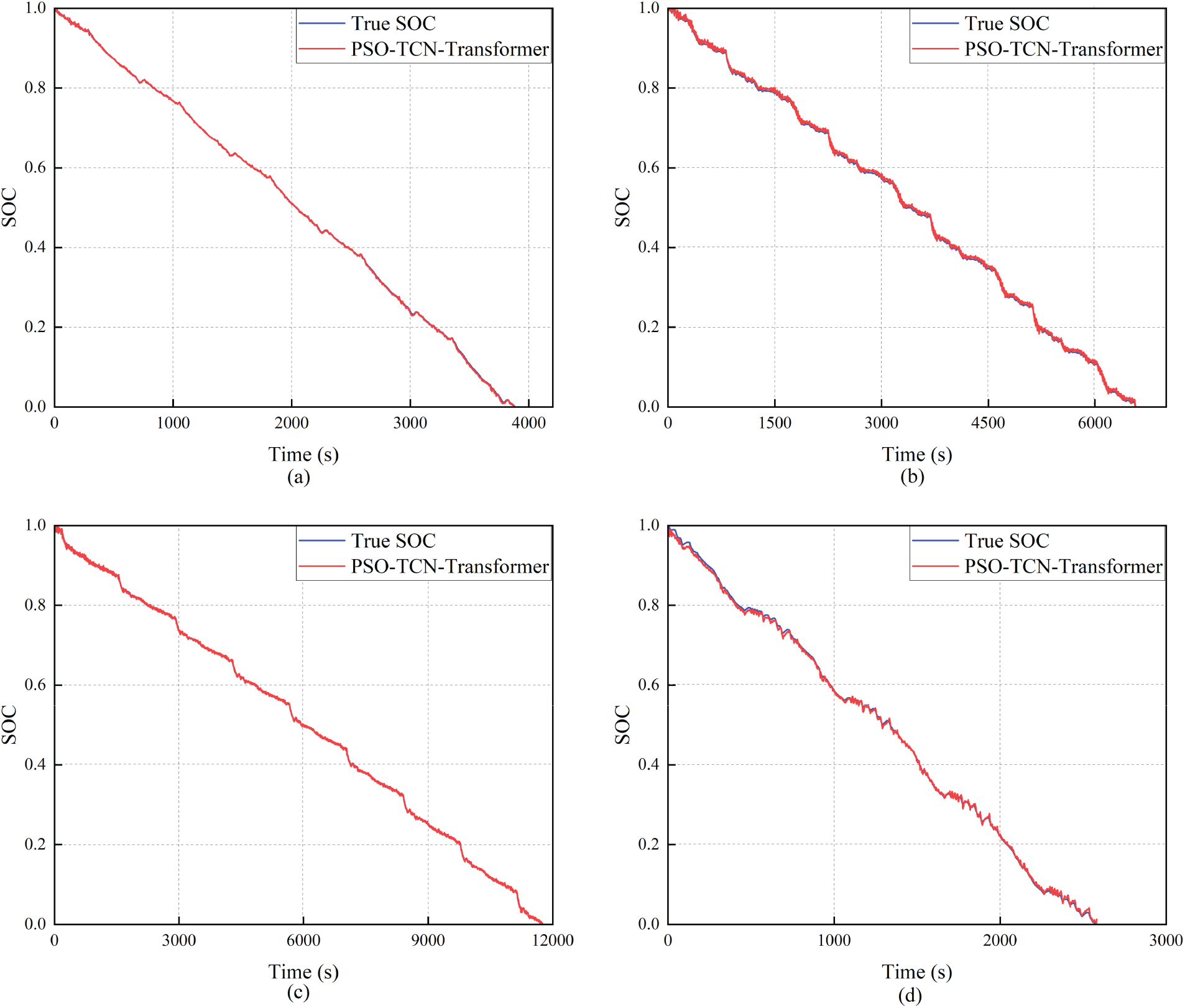

Figure 14: Performance of the PSO-TCN-Transformer model under −20°C (Subfigures present SOC estimation results for (a) HWFET, (b) LA92, (c) UDDS, and (d) US06 profiles)

Figure 15: Performance consistency: RMSE and MAXE of the proposed model across temperatures from 40°C to −20°C (Subfigures correspond to (a) 40°C, (b) 25°C, (c) 0°C, (d) −10°C, and (e) −20°C)

Figure 16: Prediction accuracy (R2) of SOC estimations under a broad temperature spectrum. Results are shown for (a) 40°C, (b) 25°C, (c) 0°C, (d) −10°C, and (e) −20°C

LSTM and GRU networks are well suited to sequence modeling and are particularly effective at capturing long-term dependencies. Compared with traditional RNNs, LSTM introduces a gating mechanism that handles long sequences more effectively and mitigates the vanishing-gradient problem. GRU uses a simplified gating structure that achieves comparable sequence modeling capability with fewer parameters.
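The parameter saving attributed to GRU can be made concrete by counting weights. This sketch assumes that each gate or candidate block has one input matrix, one recurrent matrix and a single bias vector. Some frameworks, for example PyTorch, use two bias vectors per block, which changes the totals slightly. Under this assumption an LSTM layer has four such blocks and a GRU layer three. The input size of 3 (voltage, current, temperature) and the 64 hidden units are illustrative choices only.

```python
def recurrent_params(input_size: int, hidden_size: int, n_blocks: int) -> int:
    """Weights of one recurrent layer with `n_blocks` gate/candidate blocks.

    Each block has an input matrix (hidden x input), a recurrent matrix
    (hidden x hidden) and one bias vector (hidden)."""
    per_block = hidden_size * input_size + hidden_size * hidden_size + hidden_size
    return n_blocks * per_block

def lstm_params(input_size: int, hidden_size: int) -> int:
    # Input, forget and output gates plus the cell candidate.
    return recurrent_params(input_size, hidden_size, 4)

def gru_params(input_size: int, hidden_size: int) -> int:
    # Update and reset gates plus the candidate hidden state.
    return recurrent_params(input_size, hidden_size, 3)

# Illustrative sizes: 3 input features, 64 hidden units.
print(lstm_params(3, 64), gru_params(3, 64))
```

For equal layer sizes, the GRU therefore carries three quarters of the LSTM's weights.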

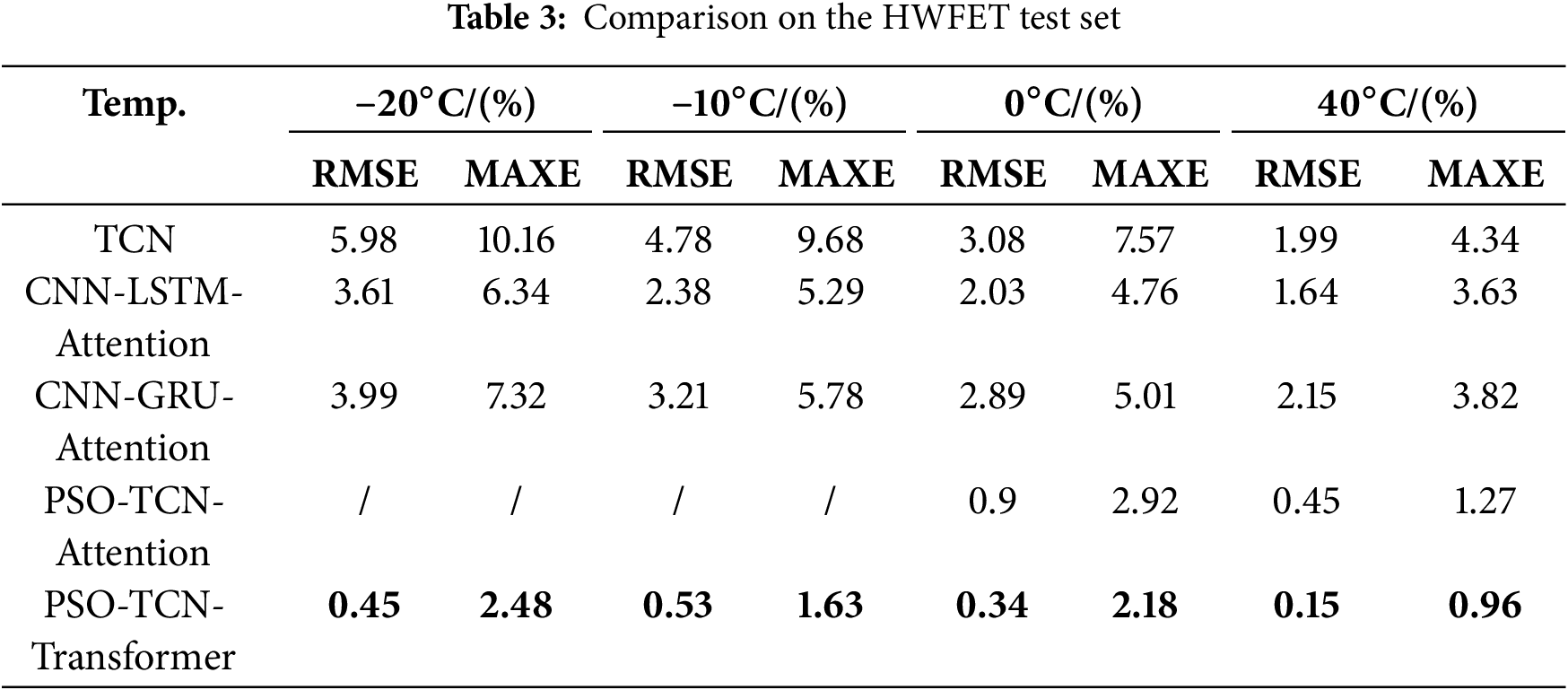

However, the Temporal Convolutional Network (TCN) inherently addresses key limitations of standalone LSTM/GRU architectures. First, TCNs use convolutional operations that process all time steps of a sequence in parallel. This substantially reduces training time compared with the serial computation inherent in LSTM/GRU architectures. The parallel design also keeps the parameter footprint compact, avoiding the redundant parameter overhead of recurrent units. Second, the dilation mechanism in TCNs expands the receptive field more efficiently than the stepwise sequence traversal of LSTM/GRU. This efficiency enables TCNs to capture multi-scale temporal features in battery operation data, such as short-term current pulses and medium-term temperature trends, which are critical for accurate SOC estimation. To avoid redundant comparisons with standalone LSTM/GRU models, this study focused on benchmarking against hybrid LSTM/GRU-based architectures such as CNN-GRU-Attention and CNN-LSTM-Attention. These hybrids integrate additional modules to address the limitations of standalone recurrent networks.
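The receptive-field argument can be checked with a short calculation. The sketch assumes a common TCN layout in which each residual block contains two dilated causal convolutions with the same kernel size and the dilation doubles from block to block. The kernel size and number of blocks are illustrative, not the values selected by PSO in this study. A naive single-channel dilated causal convolution is also included to show that output t depends only on inputs at t, t−d, t−2d, and so on, and never on future samples.

```python
from typing import List, Sequence

def tcn_receptive_field(kernel_size: int, dilations: Sequence[int],
                        convs_per_block: int = 2) -> int:
    """Receptive field (in time steps) of stacked dilated causal convolutions."""
    return 1 + convs_per_block * (kernel_size - 1) * sum(dilations)

def dilated_causal_conv(x: Sequence[float], weights: Sequence[float],
                        dilation: int) -> List[float]:
    """Single-channel dilated causal convolution with implicit zero left-padding.

    y[t] = sum_j weights[j] * x[t - j * dilation]; terms with a negative
    index are treated as zero, so y[t] never uses future samples."""
    y = []
    for t in range(len(x)):
        acc = 0.0
        for j, w in enumerate(weights):
            idx = t - j * dilation
            if idx >= 0:
                acc += w * x[idx]
        y.append(acc)
    return y

# Kernel size 3 with dilations 1, 2, 4, 8 (four blocks, eight convolutions).
print(tcn_receptive_field(3, [1, 2, 4, 8]))
print(dilated_causal_conv([1, 2, 3, 4, 5], weights=[1.0, 1.0], dilation=2))
```

With dilations 1, 2, 4, 8 and kernel size 3, eight convolutions cover 61 time steps. A stack of undilated convolutions with the same kernel would need 30 layers to reach the same span.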

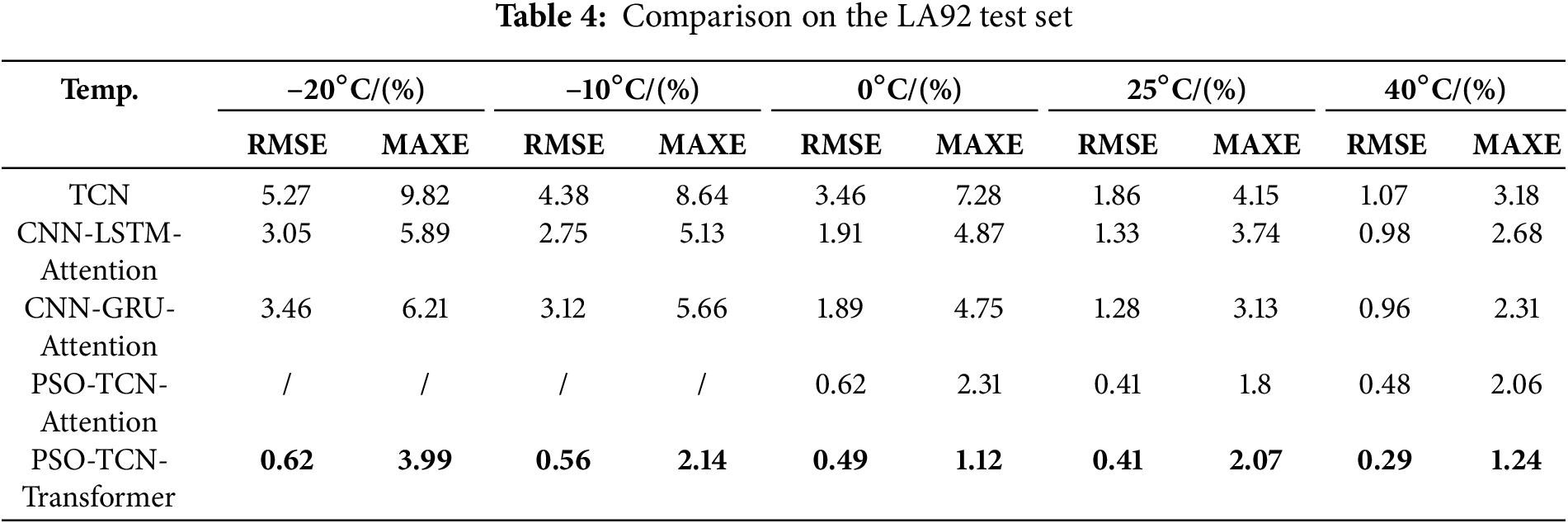

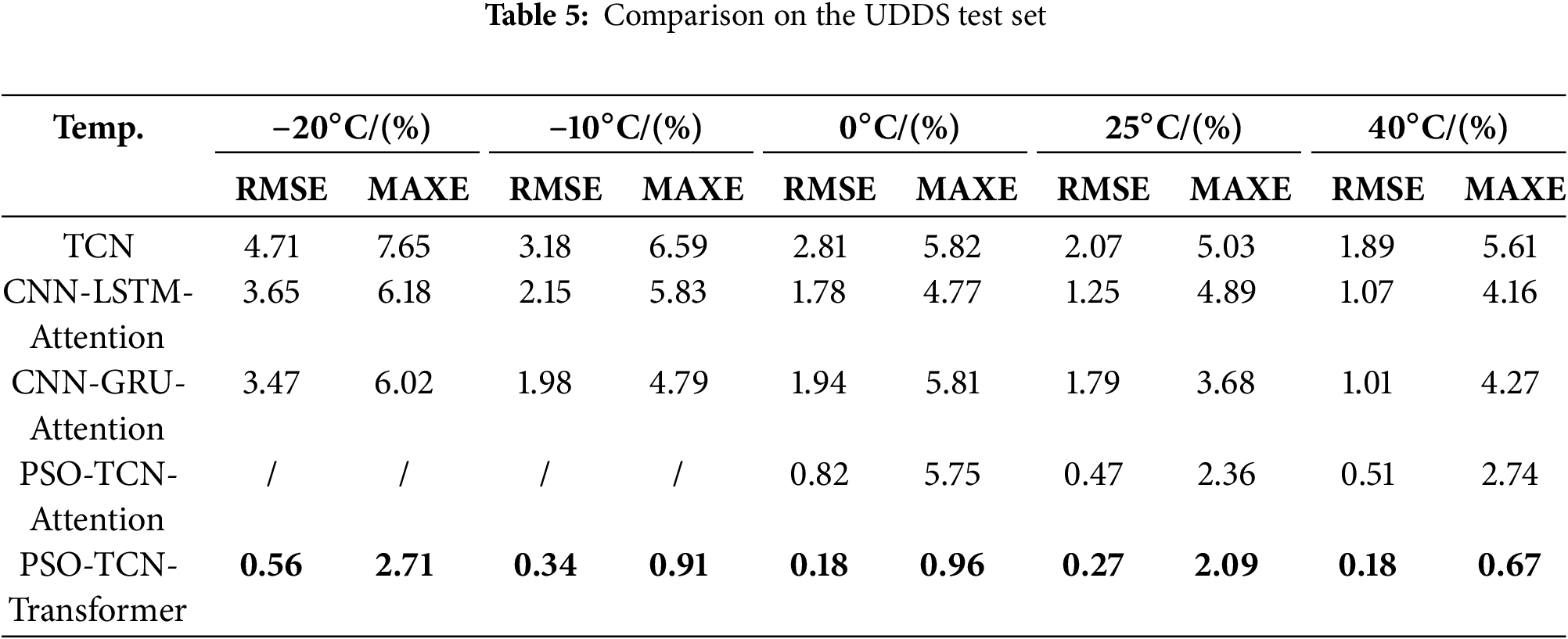

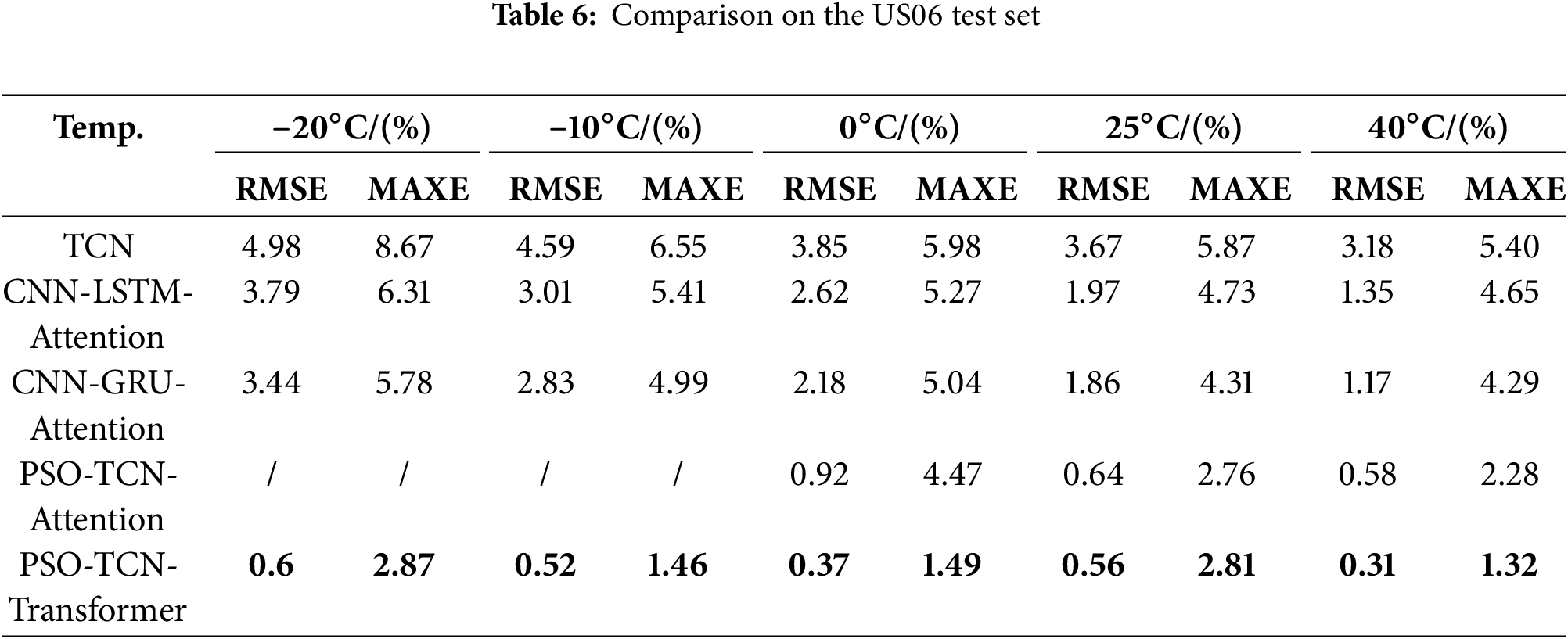

SOC estimation results of the PSO-TCN-Transformer model are shown in Figs. 10–14. As depicted in Fig. 15, the model performed well across all test scenarios.

- At 40°C, the RMSE values for the HWFET, LA92, UDDS, and US06 test sets were 0.15%, 0.29%, 0.18%, and 0.31%, respectively, with corresponding MAXE values of 0.96%, 1.24%, 0.67%, and 1.32%.
- At 25°C, the RMSE values for the LA92, UDDS, and US06 test sets were 0.41%, 0.27%, and 0.56%, and the MAXE values were 2.07%, 2.09%, and 2.81%.
- At 0°C, the RMSE values for the HWFET, LA92, UDDS, and US06 test sets were 0.34%, 0.49%, 0.18%, and 0.37%, with MAXE values of 2.18%, 1.12%, 0.96%, and 1.49%.
- At −10°C, the RMSE values were 0.53%, 0.56%, 0.34%, and 0.52%, and the MAXE values were 1.63%, 2.14%, 0.91%, and 1.46%, respectively.
- At −20°C, the RMSE values for the four test sets were 0.45%, 0.62%, 0.56%, and 0.60%, and the MAXE values were 2.48%, 3.99%, 2.71%, and 2.87%.

The coefficient of determination (R2) was also used to assess the SOC estimation error more comprehensively. As illustrated in Fig. 16, the R2 values of the PSO-TCN-Transformer model exceeded 99.94% across the tested operating conditions and temperatures, approaching 1. This highlights the model’s high accuracy and robustness.

As shown in Tables 3–6, the PSO-TCN-Transformer framework consistently outperforms the hybrid CNN-GRU-Attention and CNN-LSTM-Attention architectures. This provides a rigorous evaluation against enhanced LSTM/GRU-based methods without redundant comparisons against basic recurrent models.
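For reference, the three metrics used throughout this section can be computed as shown below. This minimal sketch assumes SOC is given as a fraction in [0, 1] and reports each metric in percent. It uses the standard R2 definition, with the mean of the reference SOC in the denominator, which is assumed rather than taken from the paper. The values in the example are made up.

```python
import math
from typing import Sequence, Tuple

def soc_metrics(y_true: Sequence[float],
                y_pred: Sequence[float]) -> Tuple[float, float, float]:
    """Return (RMSE %, MAXE %, R2 %) for SOC values given as fractions in [0, 1]."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    rmse = math.sqrt(ss_res / len(errors))      # root mean square error
    maxe = max(abs(e) for e in errors)          # maximum absolute error
    mean_true = sum(y_true) / len(y_true)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - ss_res / ss_tot                  # coefficient of determination
    return 100 * rmse, 100 * maxe, 100 * r2

# Hypothetical example: predictions within +/-1% SOC of the reference.
true_soc = [1.00, 0.80, 0.60, 0.40, 0.20]
pred_soc = [0.99, 0.81, 0.60, 0.39, 0.21]
rmse_pct, maxe_pct, r2_pct = soc_metrics(true_soc, pred_soc)
print(f"RMSE={rmse_pct:.3f}%  MAXE={maxe_pct:.3f}%  R2={r2_pct:.3f}%")
```

RMSE summarizes the average error, while MAXE exposes the single worst deviation. This is why both are reported alongside R2.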

Compared with the PSO-TCN-Attention method proposed in [36], our model achieved lower RMSE on all test cycles at 40°C:

- HWFET: our RMSE (0.15%) was 71% lower than the 0.52% reported in [36].
- LA92: our RMSE (0.29%) was 59% lower than 0.71%.
- UDDS and US06: the RMSE reductions were 64% and 58%, respectively.

At 0°C, the method of [36] exhibited a maximum MAXE of 5.75% (LA92 cycle), whereas our model's MAXE remained below 2.20% on all cycles. We attribute this improvement to the Transformer's ability to capture long-range, temperature-dependent SOC dynamics, which the attention mechanism in [36] does not fully exploit. Importantly, [36] validated performance only above 0°C, which limits its use in cold regions. In contrast, our model was also tested at −10°C and −20°C. At −10°C it achieved RMSE ≤ 0.56% and MAXE ≤ 2.14%, and at −20°C it achieved RMSE ≤ 0.62% and MAXE < 4.0%, demonstrating superior low-temperature adaptability.
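The percentage reductions quoted above follow from the relative change (RMSE_ref − RMSE_ours)/RMSE_ref. The short check below uses the two 40°C cycles for which both RMSE values are stated explicitly. The function name is illustrative.

```python
def relative_reduction(reference: float, ours: float) -> float:
    """Percentage by which `ours` is lower than `reference`."""
    return 100.0 * (reference - ours) / reference

# 40 °C RMSE values (%) from the comparison with [36].
for cycle, ref, ours in [("HWFET", 0.52, 0.15), ("LA92", 0.71, 0.29)]:
    print(f"{cycle}: {relative_reduction(ref, ours):.0f}% lower")
```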

In summary, the SOC estimation accuracy of the PSO-TCN-Transformer model decreased slightly as the temperature decreased, but the errors and their fluctuations remained small. The model performed consistently across a wide temperature range, indicating superior robustness and generalization in both high- and low-temperature environments.

4.2 Results and Analysis on Self-Built Datasets

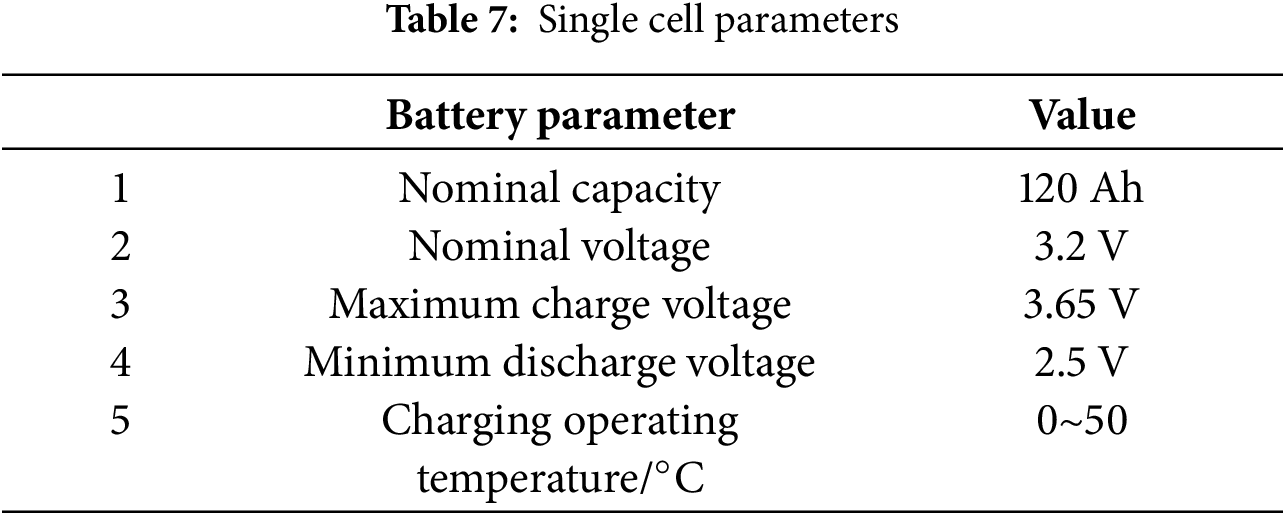

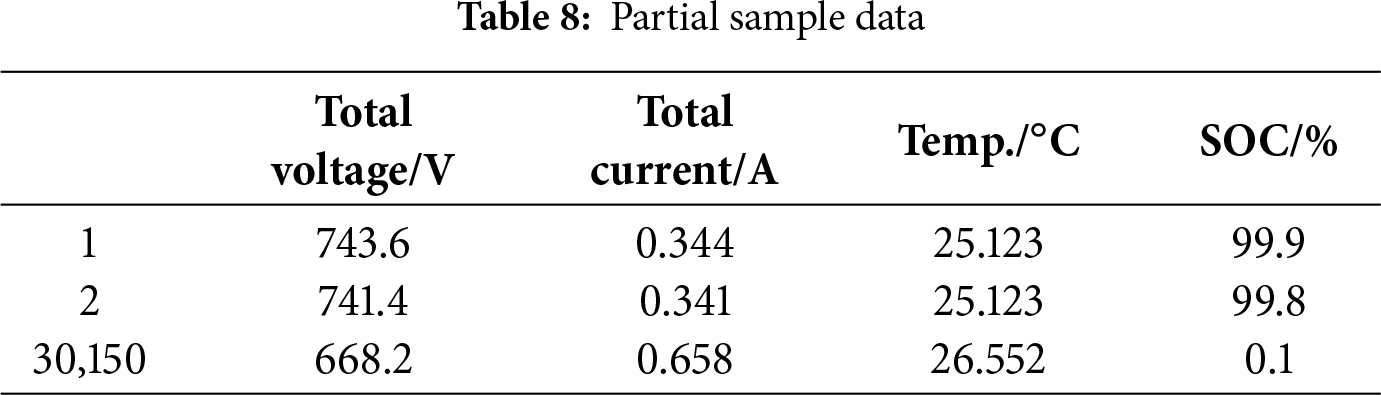

The generalizability of the proposed method was further evaluated using a small-sample dataset to assess its performance with limited training data. Validation was performed on historical discharge data sourced from an industrial energy storage facility [13]. The battery system was configured with 120 Ah lithium iron phosphate (LiFePO4) cells. A 2P16S topology was employed at the pack level, where two cells in parallel form a unit, and 16 such units are connected in series, yielding a pack capacity of 240 Ah and a nominal voltage of 51.2 V. The full system integrated fourteen of these packs in series, resulting in a total rated voltage of 716.8 V, a capacity of 240 Ah, and an overall energy rating of 172 kWh. Detailed cell specifications are provided in Table 7.
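The pack and system ratings follow directly from the series/parallel arrangement. The sketch assumes a nominal LiFePO4 cell voltage of 3.2 V, which matches the stated 51.2 V for 16 cells in series. The function name `string_rating` is illustrative.

```python
from typing import Tuple

def string_rating(cell_voltage_v: float, cell_capacity_ah: float,
                  n_parallel: int, n_series: int) -> Tuple[float, float]:
    """Nominal voltage and capacity of an nP-mS arrangement.

    Parallel cells add capacity; series units add voltage."""
    return cell_voltage_v * n_series, cell_capacity_ah * n_parallel

# Pack: 2P16S of 120 Ah LiFePO4 cells (3.2 V nominal assumed).
pack_v, pack_ah = string_rating(3.2, 120.0, n_parallel=2, n_series=16)
# System: 14 packs in series, so voltage adds and capacity is unchanged.
sys_v, sys_ah = string_rating(pack_v, pack_ah, n_parallel=1, n_series=14)
energy_kwh = sys_v * sys_ah / 1000.0
print(f"pack: {pack_v:.1f} V, {pack_ah:.0f} Ah; "
      f"system: {sys_v:.1f} V, {sys_ah:.0f} Ah, {energy_kwh:.1f} kWh")
```

The result, about 172.0 kWh, is consistent with the stated 172 kWh energy rating.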

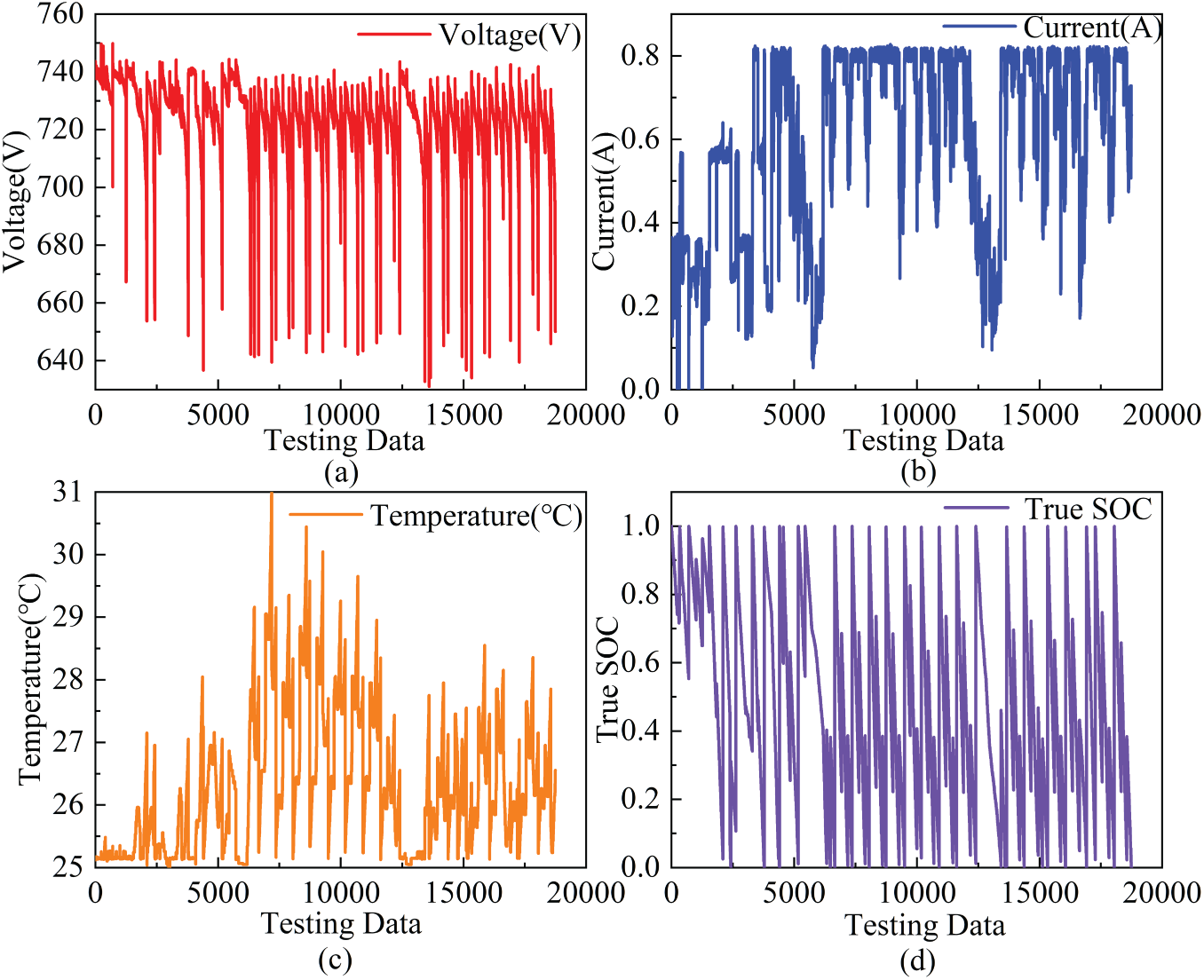

To predict the SOC dynamics of the Li-ion battery pack during discharge, we selected historical discharge data recorded from 14 August 2020 to 15 September 2020. The dataset comprised pack voltage, current, temperature, and measured SOC. It was cleaned to discard abnormal readings, yielding 30,150 valid records, as documented in Table 8. The evolution of these parameters during discharge is plotted in Fig. 17.

Figure 17: Evolution of critical discharge parameters: (a) Voltage, (b) Current, (c) Temperature, and (d) SOC

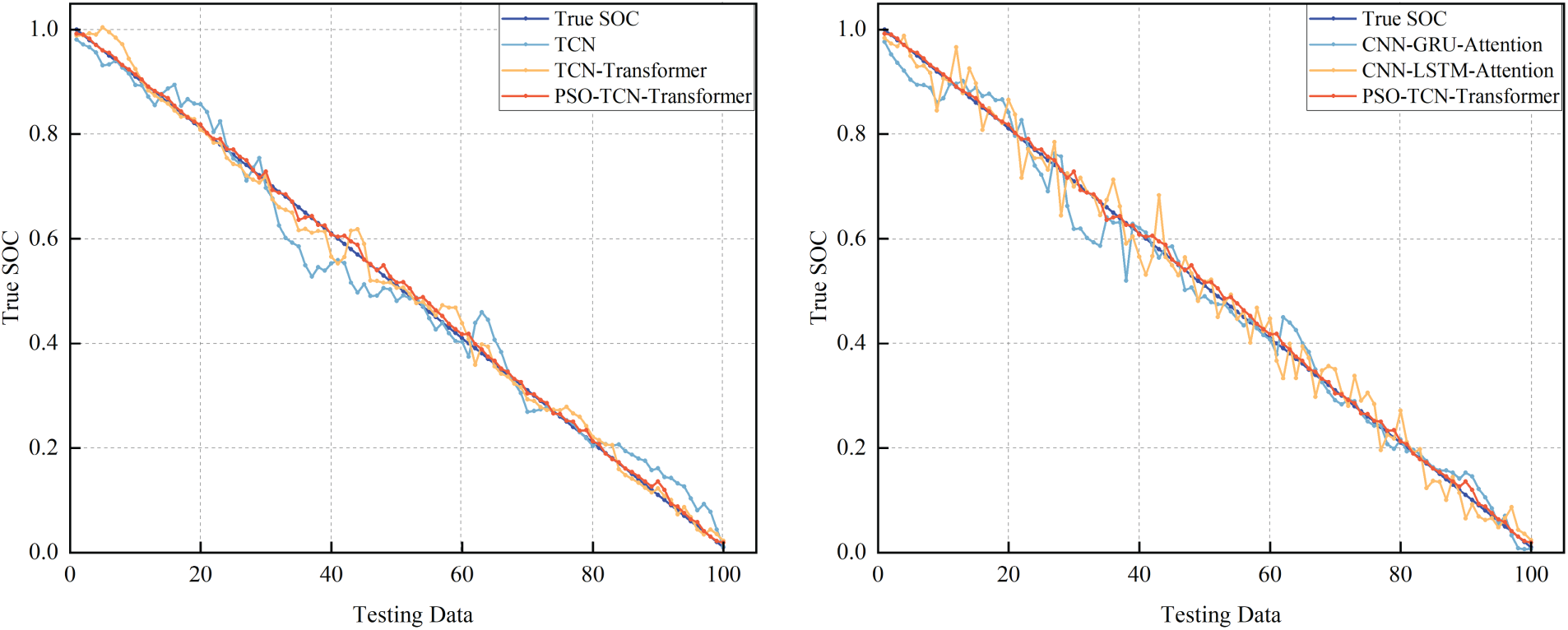

From the 30,150 historical discharge records, 100 data points sampled uniformly over the SOC range from 100% to 1% were extracted as the test set. The remaining data served as the training set. Because the fitting trends are clearly visible in the plots under this sampling strategy, the R2 goodness-of-fit metric was not included in the comparison. The prediction results are shown in Fig. 18.
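One way to implement this split is to take 100 evenly spaced SOC targets from 100% down to 1%. For each target, the not-yet-used record whose measured SOC is closest is assigned to the test set. The source states only that the points were "uniformly sampled", so this nearest-target rule and the function name `split_by_soc` are assumptions. The synthetic linear SOC trace below stands in for the 30,150 cleaned records.

```python
from typing import List, Sequence, Tuple

def split_by_soc(soc: Sequence[float], n_test: int = 100,
                 soc_max: float = 100.0,
                 soc_min: float = 1.0) -> Tuple[List[int], List[int]]:
    """Return (test_indices, train_indices).

    Test records are those whose SOC is nearest to n_test evenly spaced
    targets from soc_max down to soc_min; each record is used at most once."""
    step = (soc_max - soc_min) / (n_test - 1)
    targets = [soc_max - k * step for k in range(n_test)]
    used = set()
    test_idx = []
    for target in targets:
        best = min((i for i in range(len(soc)) if i not in used),
                   key=lambda i: abs(soc[i] - target))
        used.add(best)
        test_idx.append(best)
    train_idx = [i for i in range(len(soc)) if i not in used]
    return test_idx, train_idx

# Synthetic discharge trace: SOC falls linearly from 100% to 0% over 30,150 records.
n_records = 30150
soc_trace = [100.0 * (1 - i / (n_records - 1)) for i in range(n_records)]
test, train = split_by_soc(soc_trace)
print(len(test), len(train), round(soc_trace[test[0]], 2), round(soc_trace[test[-1]], 2))
```

Sampling by SOC rather than by time keeps the test points spread across the whole discharge curve, even if the pack spends more time in some SOC ranges than others.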

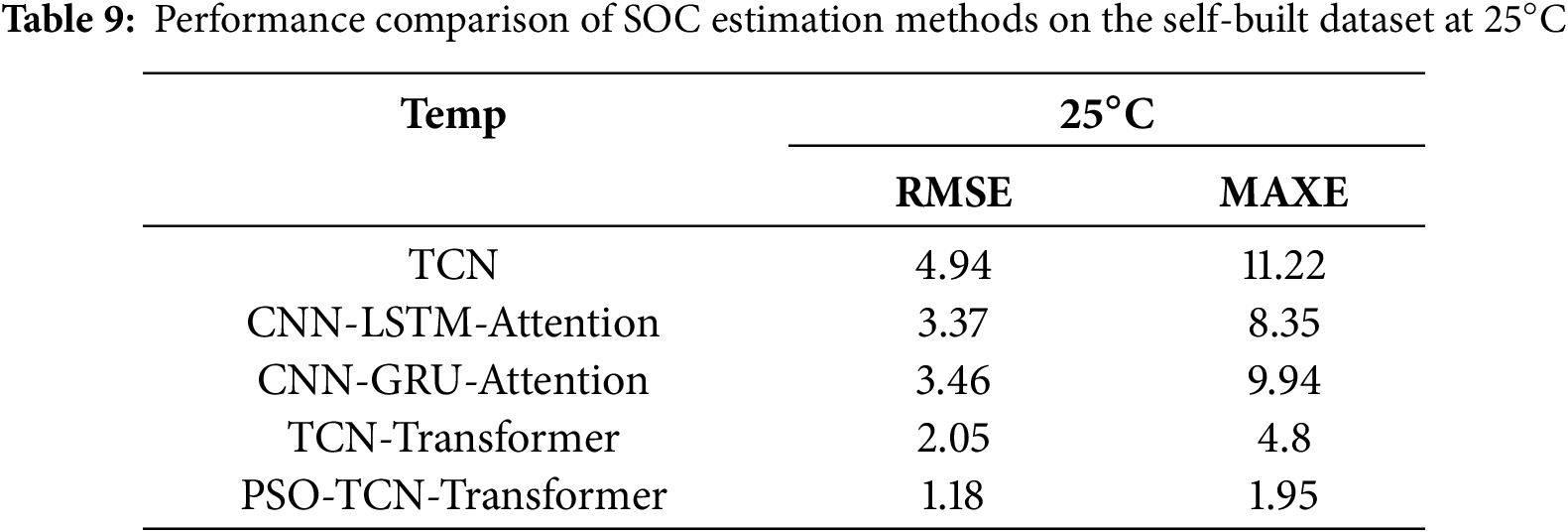

Figure 18: SOC estimations by different models

To ensure a fair comparison, all benchmark models were configured to match the PSO-TCN-Transformer model in network depth, number of nodes, and training hyperparameters. The same lithium battery dataset was then fed into each model, and the prediction results are illustrated in Fig. 18.

- The standalone TCN model showed large fluctuations and errors in lithium battery SOC estimation. It failed to meet the accuracy and robustness requirements of practical applications.
- Hybrid models such as CNN-GRU-Attention and CNN-LSTM-Attention mitigated some of these issues and reduced the variability of the SOC estimates, but their predictive accuracy remained inadequate.

The quantitative results in Table 9 confirm these observations:

- Standalone TCN: RMSE of 4.94% and MAXE of 11.22%.
- CNN-GRU-Attention and CNN-LSTM-Attention: RMSE values of 3.46% and 3.37%, with corresponding MAXE values of 9.94% and 8.35%.
- TCN-Transformer: RMSE of 2.05% and MAXE of 4.8%.
- PSO-optimized variant: RMSE of 1.18% and MAXE of 1.95%, the best of all models, highlighting the effectiveness of the proposed optimization approach.

The key strengths of this work stem from three distinct contributions.

First, by integrating TCN and Transformer modules, it addresses the long-standing trade-off between capturing fine-grained local features and modeling long-range temporal dynamics, a critical gap in single-model and traditional hybrid architectures. This fusion captures both short-term electrochemical transients (e.g., voltage fluctuations during charging) and long-term environmental effects on SOC, which conventional designs have not fully achieved.

Second, it tunes the hyperparameters of the TCN and Transformer modules jointly, so that neither component is optimized in isolation.

Third, it closes a practical gap in ultra-wide-temperature SOC estimation. The proposed model maintains reliable performance from −20°C to 40°C, whereas most existing approaches are restricted to environments above 0°C. Within this operating window, it achieves an RMSE of at most 0.62%, a MAXE below 4.0%, and an R2 of 99.99%. Its practical utility was further validated on real-world data from an energy storage company, where it outperformed established methods with an RMSE of 1.18% and a MAXE of 1.95%. These results underscore both its technical robustness and its engineering applicability.

The study also has several limitations. The model was trained and tested only on datasets of commercial cylindrical cells. Its generalization to non-cylindrical form factors and emerging chemistries therefore remains unverified, which limits its applicability across the diverse range of industrial battery types. In addition, the model does not account for the cross-coupling between SOC and state of health (SOH). Consequently, estimation accuracy could be compromised in aged batteries, where capacity fade and impedance growth significantly affect the correlation between SOC and electrochemical signals.

Future research will focus on the influence of battery aging on SOC estimation, with the aim of designing more advanced methods that improve long-term reliability. We will also work on SOC estimation algorithms that remain accurate under battery aging and capacity balancing, both of which are driven by cyclic capacity degradation. Finally, the model will be deployed on dedicated hardware platforms for experimental verification.

Acknowledgement: Not applicable.

Funding Statement: This research was funded in part by the Doctoral Scientific Research Foundation of Beijing University of Civil Engineering and Architecture under Grant ZF15054, in part by the Pyramid Talent Training Project of Beijing University of Civil Engineering and Architecture under Grant GJZJ20220802, and in part by the BUCEA Post Graduate Innovation Project under Grant PG2024095.

Author Contributions: The authors confirm contribution to the paper as follows: study conception and design: Xincheng Han, Hongyan Ma; data collection: Xincheng Han, Hongyan Ma; analysis and interpretation of results: Xincheng Han; draft manuscript preparation: Xincheng Han; writing and revising papers: Xincheng Han, Hongyan Ma, Chengzhi Ren, Shuo Meng; funding and resource support: Hongyan Ma. All authors reviewed the results and approved the final version of the manuscript.

Availability of Data and Materials: The data used to support the findings of this study are available from the corresponding author upon request.

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest to report regarding the present study.

References

1. Zhao J, Zhu Y, Zhang B, Liu M, Wang J, Liu C, et al. Review of state estimation and remaining useful life prediction methods for lithium-ion batteries. Sustainability. 2023;15(6):5014. doi:10.3390/su15065014.

2. Zhang RP, Shi XG, Wei DC, Wang YQ, Yin W, Cheng Y. Multistage variable current pulse charging strategy based on polarization characteristics of lithium-ion battery. IEEE Trans Energy Convers. 2025;40(1):422–36. doi:10.1109/TEC.2024.3446168.

3. Ren Z, Du C. A review of machine learning state-of-charge and state-of-health estimation algorithms for lithium-ion batteries. Energy Rep. 2023;9(12):2993–3021. doi:10.1016/j.egyr.2023.01.108.

4. Li P, Ju S, Bai S, Zhao H, Zhang H. State of charge estimation for lithium-ion batteries based on physics-embedded neural network. J Power Sources. 2025;640(1):236785. doi:10.1016/j.jpowsour.2025.236785.

5. Zhang Z, Peng Z, Guan Y, Wu L. A nonlinear prediction method of lithium-ion battery remaining useful life considering recovery phenomenon. Int J Electrochem Sci. 2020;15(9):8674–93. doi:10.20964/2020.09.30.

6. Shrivastava P, Soon TK, Bin Idris MYI, Mekhilef S, Adnan SBRS. Combined state of charge and state of energy estimation of lithium-ion battery using dual forgetting factor-based adaptive extended Kalman filter for electric vehicle applications. IEEE Trans Veh Technol. 2021;70(2):1200–15. doi:10.1109/TVT.2021.3051655.

7. Tavakol-Moghaddam Y, Boroushaki M, Astaneh M. Reinforcement learning for battery energy management: a new balancing approach for Li-ion battery packs. Results Eng. 2024;23(33):102532. doi:10.1016/j.rineng.2024.102532.

8. Feng X, Chen J, Zhang Z, Miao S, Zhu Q. State-of-charge estimation of lithium-ion battery based on clockwork recurrent neural network. Energy. 2021;236:121360. doi:10.1016/j.energy.2021.121360.

9. Sánchez L, Otero J, Anseán D, Couso I. Health assessment of LFP automotive batteries using a fractional-order neural network. Neurocomputing. 2020;391(1–2):345–54. doi:10.1016/j.neucom.2019.06.107.

10. Xing Y, He W, Pecht M, Tsui KL. State of charge estimation of lithium-ion batteries using the open-circuit voltage at various ambient temperatures. Appl Energy. 2014;113:106–15. doi:10.1016/j.apenergy.2013.07.008.

11. Lu L, Han X, Li J, Hua J, Ouyang M. A review on the key issues for lithium-ion battery management in electric vehicles. J Power Sources. 2013;226(3):272–88. doi:10.1016/j.jpowsour.2012.10.060.

12. Wang S, Zhang S, Wen S, Fernandez C. An accurate state-of-charge estimation of lithium-ion batteries based on improved particle swarm optimization-adaptive square root cubature Kalman filter. J Power Sources. 2024;624:235594. doi:10.1016/j.jpowsour.2024.235594.

13. He W, Ma H, Guo R, Xu J, Xie Z, Wen H. Enhancing the state-of-charge estimation of lithium-ion batteries using a CNN-BiGRU and AUKF fusion model. Comput Electr Eng. 2024;120(9):109729. doi:10.1016/j.compeleceng.2024.109729.

14. Wang S, Gao H, Takyi-Aninakwa P, Guerrero JM, Fernandez C, Huang Q. Improved multiple feature-electrochemical thermal coupling modeling of lithium-ion batteries at low-temperature with real-time coefficient correction. Prot Control Mod Power Syst. 2024;9(3):157–73. doi:10.23919/PCMP.2023.000257.

15. Feng F, Teng S, Liu K, Xie J, Xie Y, Liu B, et al. Co-estimation of lithium-ion battery state of charge and state of temperature based on a hybrid electrochemical-thermal-neural-network model. J Power Sources. 2020;455(4):227935. doi:10.1016/j.jpowsour.2020.227935.

16. Zhuang S, Gao Y, Chen A, Ma T, Cai Y, Liu M, et al. Research on estimation of state of charge of Li-ion battery based on cubature Kalman filter. J Electrochem Soc. 2022;169(10):100521. doi:10.1149/1945-7111/ac95cf.

17. Zhou Z, Duan B, Kang Y, Cui N, Shang Y, Zhang C. A low-complexity state of charge estimation method for series-connected lithium-ion battery pack used in electric vehicles. J Power Sources. 2019;441:226972. doi:10.1016/j.jpowsour.2019.226972.

18. Shrivastava P, Soon TK, Idris MYIB, Mekhilef S. Overview of model-based online state-of-charge estimation using Kalman filter family for lithium-ion batteries. Renew Sustain Energy Rev. 2019;113(4):109233. doi:10.1016/j.rser.2019.06.040.

19. Dong G, Wei J, Chen Z, Sun H, Yu X. Remaining dischargeable time prediction for lithium-ion batteries using unscented Kalman filter. J Power Sources. 2017;364(Part 1):316–27. doi:10.1016/j.jpowsour.2017.08.040.

20. Shah A, Shah K, Shah C, Shah M. State of charge, remaining useful life and knee point estimation based on artificial intelligence and machine learning in lithium-ion EV batteries: a comprehensive review. Renew Energy Focus. 2022;42(3):146–64. doi:10.1016/j.ref.2022.06.001.

21. Dos Reis G, Strange C, Yadav M, Li S. Lithium-ion battery data and where to find it. Energy AI. 2021;5(4):100081. doi:10.1016/j.egyai.2021.100081.

22. Chang WY. Estimation of the state of charge for a LFP battery using a hybrid method that combines a RBF neural network, an OLS algorithm and AGA. Int J Electr Power Energy Syst. 2013;53(3):603–11. doi:10.1016/j.ijepes.2013.05.038.

23. Hu JN, Hu JJ, Lin HB, Li XP, Jiang CL, Qiu XH, et al. State-of-charge estimation for battery management system using optimized support vector machine for regression. J Power Sources. 2014;269:682–93. doi:10.1016/j.jpowsour.2014.07.016.

24. Liu Y, Li J, Zhang G, Hua B, Xiong N. State of charge estimation of lithium-ion batteries based on temporal convolutional network and transfer learning. IEEE Access. 2021;9:34177–87. doi:10.1109/ACCESS.2021.3057371.

25. Li C, Zhu S, Zhang L, Liu X, Li M, Zhou H, et al. State of charge estimation of lithium-ion battery based on state of temperature estimation using weight clustered-convolutional neural network-long short-term memory. Green Energy Intell Transp. 2025;4(1):100226. doi:10.1016/j.geits.2024.100226.

26. Ren X, Liu S, Yu X, Dong X. A method for state-of-charge estimation of lithium-ion batteries based on PSO-LSTM. Energy. 2021;234(8):121236. doi:10.1016/j.energy.2021.121236.

27. Yao J, Zheng B, Kowal J. Continual learning for online state of charge estimation across diverse lithium-ion batteries. J Energy Storage. 2025;117(6):116086. doi:10.1016/j.est.2025.116086.

28. Xu H, Xu Q, Duanmu F, Shen J, Jin L, Gou B, et al. State-of-charge estimation of lithium-ion batteries based on EKF integrated with PSO-LSTM for electric vehicles. IEEE Trans Transp Electrific. 2025;11(1):2311–21. doi:10.1109/tte.2024.3421260.

29. Li M, Li C, Zhang Q, Liao W, Rao Z. State of charge estimation of Li-ion batteries based on deep learning methods and particle-swarm-optimized Kalman filter. J Energy Storage. 2023;64:107191. doi:10.1016/j.est.2023.107191.

30. Jiao M, Wang D, Qiu J. A GRU-RNN based momentum optimized algorithm for SOC estimation. J Power Sources. 2020;459(4):228051. doi:10.1016/j.jpowsour.2020.228051.

31. Hu C, Cheng F, Ma L, Li B. State of charge estimation for lithium-ion batteries based on TCN-LSTM neural networks. J Electrochem Soc. 2022;169(3):030544. doi:10.1149/1945-7111/ac5cf2.

32. Zhang B, Wang S, Deng L, Jia M, Xu J. Ship motion attitude prediction model based on IWOA-TCN-Attention. Ocean Eng. 2023;272(2):113911. doi:10.1016/j.oceaneng.2023.113911.

33. Bhunia AK, Konwer A, Bhunia AK, Bhowmick A, Roy PP, Pal U. Script identification in natural scene image and video frames using an attention based Convolutional-LSTM network. Pattern Recognit. 2019;85(21):172–84. doi:10.1016/j.patcog.2018.07.034.

34. Niu Z, Zhong G, Yu H. A review on the attention mechanism of deep learning. Neurocomputing. 2021;452:48–62. doi:10.1016/j.neucom.2021.03.091.

35. Yang K, Wang Y, Tang Y, Zhang S, Zhang Z. A temporal convolution and gated recurrent unit network with attention for state of charge estimation of lithium-ion batteries. J Energy Storage. 2023;72:108774. doi:10.1016/j.est.2023.108774.

36. Li F, Zuo W, Zhou K, Li Q, Huang Y. State of charge estimation of lithium-ion batteries based on PSO-TCN-Attention neural network. J Energy Storage. 2024;84(9):110806. doi:10.1016/j.est.2024.110806.

37. Bao G, Liu X, Zou B, Yang K, Zhao J, Zhang L, et al. Collaborative framework of transformer and LSTM for enhanced state-of-charge estimation in lithium-ion batteries. Energy. 2025;322(2):135548. doi:10.1016/j.energy.2025.135548.

38. Lara-Benítez P, Carranza-García M, Luna-Romera JM, Riquelme JC. Temporal convolutional networks applied to energy-related time series forecasting. Appl Sci. 2020;10(7):2322. doi:10.3390/app10072322.

39. Zhao J, Han X, Wu Y, Wang Z, Burke AF. Opportunities and challenges in transformer neural networks for battery state estimation: charge, health, lifetime, and safety. J Energy Chem. 2025;102(4):463–96. doi:10.1016/j.jechem.2024.11.011.

40. Kollmeyer P, Vidal C, Naguib M, Skells M. LG 18650HG2 Li-ion battery data and example deep neural network xEV SOC estimator script [DB/OL]. Mendeley Data. 2020. doi:10.17632/cp3473x7xv.3.

Copyright © 2026 The Author(s). Published by Tech Science Press. This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.