Open Access

REVIEW

Artificial intelligence assisted 3D in the robotic urooncology? A systematic review and narrative synthesis of current applications, challenges and future directions

1 Department of Urology Robot-Assisted Urology and Uro-Oncology, Hospital Kassel, Kassel, 34125, Germany

2 Department of Urology, University Hospital Essen, Essen, 45147, Germany

3 Department of Urology, Paracelsus Hospital Golzheim, Duesseldorf, 40474, Germany

* Corresponding Author: Bara Barakat. Email:

Canadian Journal of Urology 2026, 33(1), 105-116. https://doi.org/10.32604/cju.2026.071284

Received 04 August 2025; Accepted 26 November 2025; Issue published 28 February 2026

Abstract

Background: Artificial intelligence (AI)-assisted three-dimensional (3D) surgical platforms, integrated with augmented reality, have the potential to improve intraoperative anatomical recognition and provide surgeons with an immersive, dynamic operating environment during uro-oncological procedures. This review aims to examine the current applications of AI in robotic uro-oncology, with a particular focus on its role in facilitating intraoperative navigation during complex surgeries. Methods: A systematic literature search was performed across PubMed, the National Library of Medicine, MEDLINE, the Cochrane Central Register of Controlled Trials (CENTRAL), ClinicalTrials.gov, and Google Scholar to identify relevant studies published up to July 2025. The search strategy incorporated a predefined set of keywords, including AI, machine learning, radical prostatectomy (RP), robotic-assisted radical prostatectomy (RARP), robot-assisted partial nephrectomy (RAPN), and robot-assisted radical cystectomy (RARC). Only clinical trials, full-text peer-reviewed publications, and original research articles were included. Studies were eligible for inclusion if they evaluated or described applications of AI in RARP, RAPN, or RARC. Results: Technological advancements have substantially transformed the field of uro-oncologic surgery. In particular, AI and AI-assisted intraoperative navigation in RARP show considerable potential to objectively assess surgical performance and predict clinical outcomes. In RAPN, preoperative interactive 3D virtual models used for surgical planning have influenced surgical decisions, and more precise resection planning correlates with superior nephron-sparing outcomes and optimized selective clamping.
In RARC, AI applications such as augmented reality (AR) can overlay critical information on the surgical field, facilitating navigation through complex anatomical planes and enhancing identification of critical structures. Conclusion: AI appears to enhance robotic uro-oncologic procedures by increasing operative precision and supporting individualised surgical treatment strategies.

Since its inception, artificial intelligence (AI) has spread rapidly, revolutionising almost every aspect of human life. AI involves the study and development of algorithms that give machines the ability to reason and perform cognitive functions such as problem solving and decision making.1,2 AI could have one of its biggest impacts on medicine, helping clinicians to make more accurate decisions and to predict patient outcomes more reliably. Uro-oncological surgery, in particular, has benefited from technological innovation, and robot-assisted surgery has become an important tool in modern urological practice. In recent years, the integration of AI into robotic systems has attracted considerable attention. Over the past few decades, significant efforts have been made to develop technical modifications to traditional open radical prostatectomy (RP), open partial nephrectomy and open radical cystectomy, with the aim of improving oncological and functional outcomes and minimising postoperative morbidity.
One of the most significant complications of RP is post-prostatectomy incontinence (PPI), which can negatively affect patients’ quality of life.3,4 According to the ‘trifecta’ concept, the goals of RP should be cancer control, complete urinary continence and preservation of erectile function.5 Surgical trauma is not the only risk factor for PPI; preoperative factors such as increasing age, body mass index (BMI), comorbidities, previous radiotherapy and preoperative lower urinary tract symptoms (LUTS) also contribute to its development.6–10 Our recently published study revealed that, in addition to age and BMI, anatomical factors such as the length of the membranous urethra, which relates to urethral sphincter function, strongly influence the early return to continence following RP.11 However, improving functional outcomes remains difficult because nerve-sparing planes and tumour margins are hard to delineate accurately during surgery, which also increases the risk of positive surgical margins (PSMs).

AI could therefore significantly enhance the ability of uro-oncologists to mark tumour locations and preserve the neurovascular bundles during surgery, thereby reducing PSMs. In recent years, 3D virtual models have also become increasingly common in kidney and bladder surgery, with the aim of performing precise and oncologically safe procedures. This systematic literature review and narrative synthesis focuses on recent advances in AI for robotic uro-oncology, particularly intraoperative applications, current management strategies and emerging research directions. We also outline important ethical considerations for incorporating AI into robotic surgery.

Search strategy and criteria for considering studies for this review

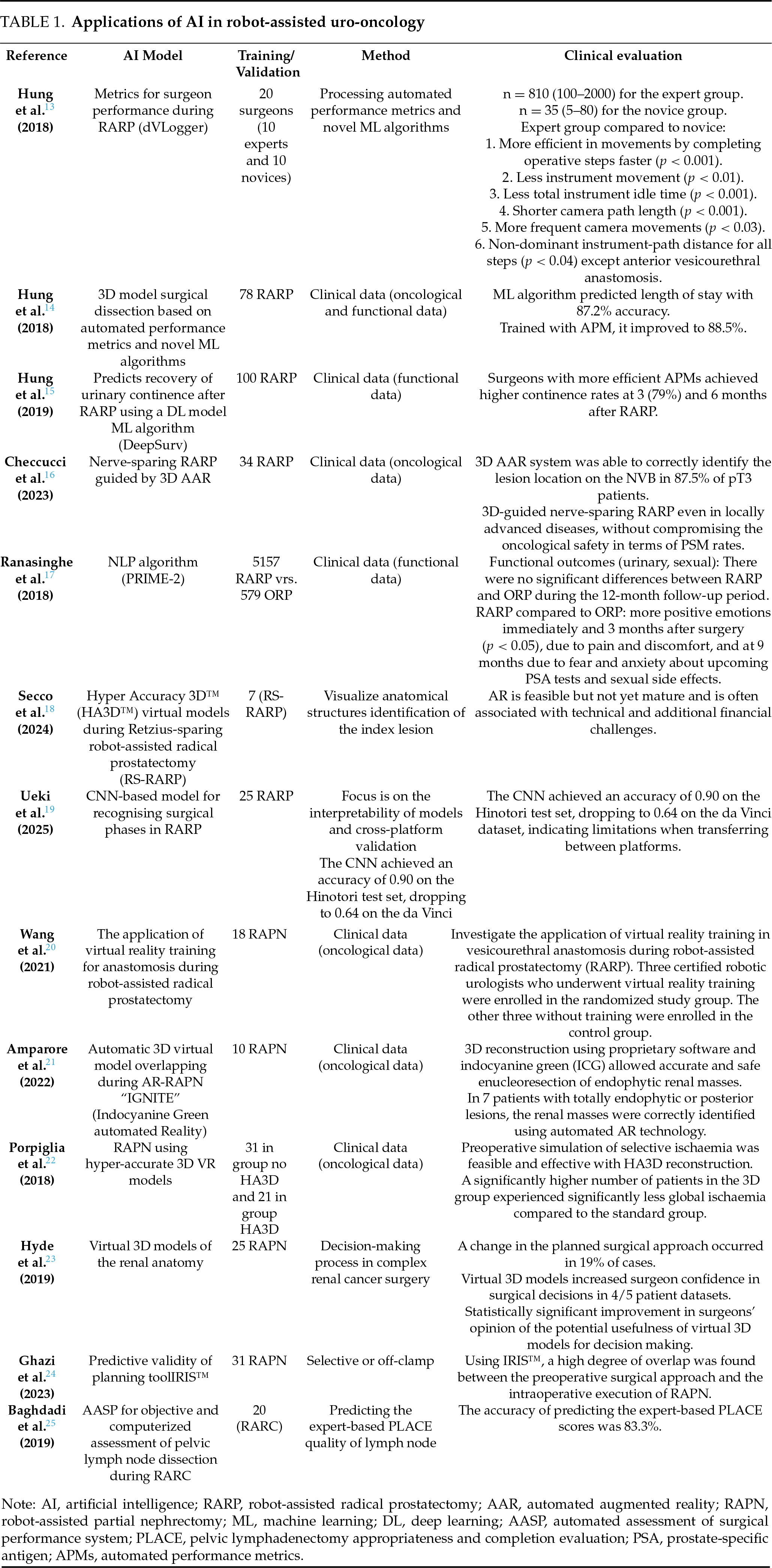

A selective systematic literature review with narrative synthesis was conducted by searching for studies addressing artificial intelligence in uro-oncology in the following databases: PubMed, the National Library of Medicine, the Medical Literature Analysis and Retrieval System Online (MEDLINE), the Cochrane Central Register of Controlled Trials (CENTRAL), Google Scholar and ClinicalTrials.gov. Two independent reviewers conducted the literature search, covering the period between 01 January 2020 and 10 July 2025. The search string was based on the keywords ‘urology’, ‘artificial intelligence’, ‘machine learning’, ‘artificial intelligence-guided automatic navigation in urology’ and ‘robot-assisted radical prostatectomy, partial nephrectomy and cystectomy’. The search was limited to clinical trials, full papers and original articles; randomised controlled trials (RCTs) as well as prospective and retrospective studies were selected. The reference lists of the articles were also checked for additional relevant articles. Articles related to AI in robotic radical prostatectomy, partial nephrectomy and cystectomy were included (Table 1). Abstracts, review articles and studies involving animals, laboratories or cadavers were excluded. This systematic literature review adheres to the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement;12 the PRISMA checklist is available in Supplementary Table S1.

The included studies investigated a key future direction of robotic uro-oncology: automatic, artificial intelligence-guided navigation. Articles were initially selected on the basis of their abstracts and subsequently reviewed in full.

The selection of articles was made by consensus among all authors. Two independent researchers reviewed the articles before the final decision to include them in this review. To assess the risk of bias, two reviewers (Bauer J. and Barakat B.) independently identified all studies that met the inclusion criteria for full review and then selected the studies to be included. Any disagreements between the extracting authors were resolved by consensus or referred to a third author.
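Agreement between two independent reviewers on include/exclude decisions is often summarised with Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is an illustration only and is not part of the authors' reported methods; the function name and the screening decisions are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters who labelled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same, non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    p_expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used one identical label for every item
    return (p_observed - p_expected) / (1.0 - p_expected)


# Hypothetical screening decisions for four records (not data from this review).
reviewer_1 = ["include", "include", "exclude", "exclude"]
reviewer_2 = ["include", "exclude", "exclude", "exclude"]
print(cohens_kappa(reviewer_1, reviewer_2))  # 0.5
```

Here the reviewers agree on three of four records (0.75), but half of that agreement would be expected by chance, so kappa is 0.5.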

The following information was extracted from studies that met the inclusion criteria: first author’s name, year of publication, study design, type of AI model, type of procedure, validation and technical feasibility of the AI model. Relevant endpoints were also extracted, including oncological and functional outcomes and complications. Studies involving animal experiments, laboratories or cadavers were excluded. Because this review summarises the results of different studies, the definitions and outcomes of interest may vary between articles.
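The extraction items listed above map naturally onto a fixed record structure, which makes missing or inconsistent entries easy to detect. The sketch below shows one possible schema as a Python dataclass with a simple validation step; the class name, field names, allowed procedure codes and example values are hypothetical and do not represent the authors' actual extraction form.

```python
from dataclasses import dataclass, field


@dataclass
class ExtractionRecord:
    """One row of a hypothetical data-extraction sheet."""
    first_author: str
    year: int
    study_design: str
    ai_model: str
    procedure: str  # expected to be "RARP", "RAPN" or "RARC"
    validation: str
    technical_feasibility: str
    # Endpoint definitions vary between articles, so they are kept free-form.
    outcomes: dict[str, str] = field(default_factory=dict)
    complications: list[str] = field(default_factory=list)


ALLOWED_PROCEDURES = {"RARP", "RAPN", "RARC"}


def validate(record: ExtractionRecord) -> list[str]:
    """Return a list of problems; an empty list means the record passed these checks."""
    problems = []
    if record.procedure not in ALLOWED_PROCEDURES:
        problems.append(f"unexpected procedure: {record.procedure}")
    if not record.first_author.strip():
        problems.append("missing first author")
    return problems


# A placeholder record, not extracted from any real study.
example = ExtractionRecord(
    first_author="Author A",
    year=2024,
    study_design="retrospective cohort",
    ai_model="convolutional neural network",
    procedure="RARP",
    validation="external",
    technical_feasibility="feasible",
    outcomes={"positive surgical margin": "not reported"},
)
print(validate(example))  # []
```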

Study selection, characteristics and outcomes

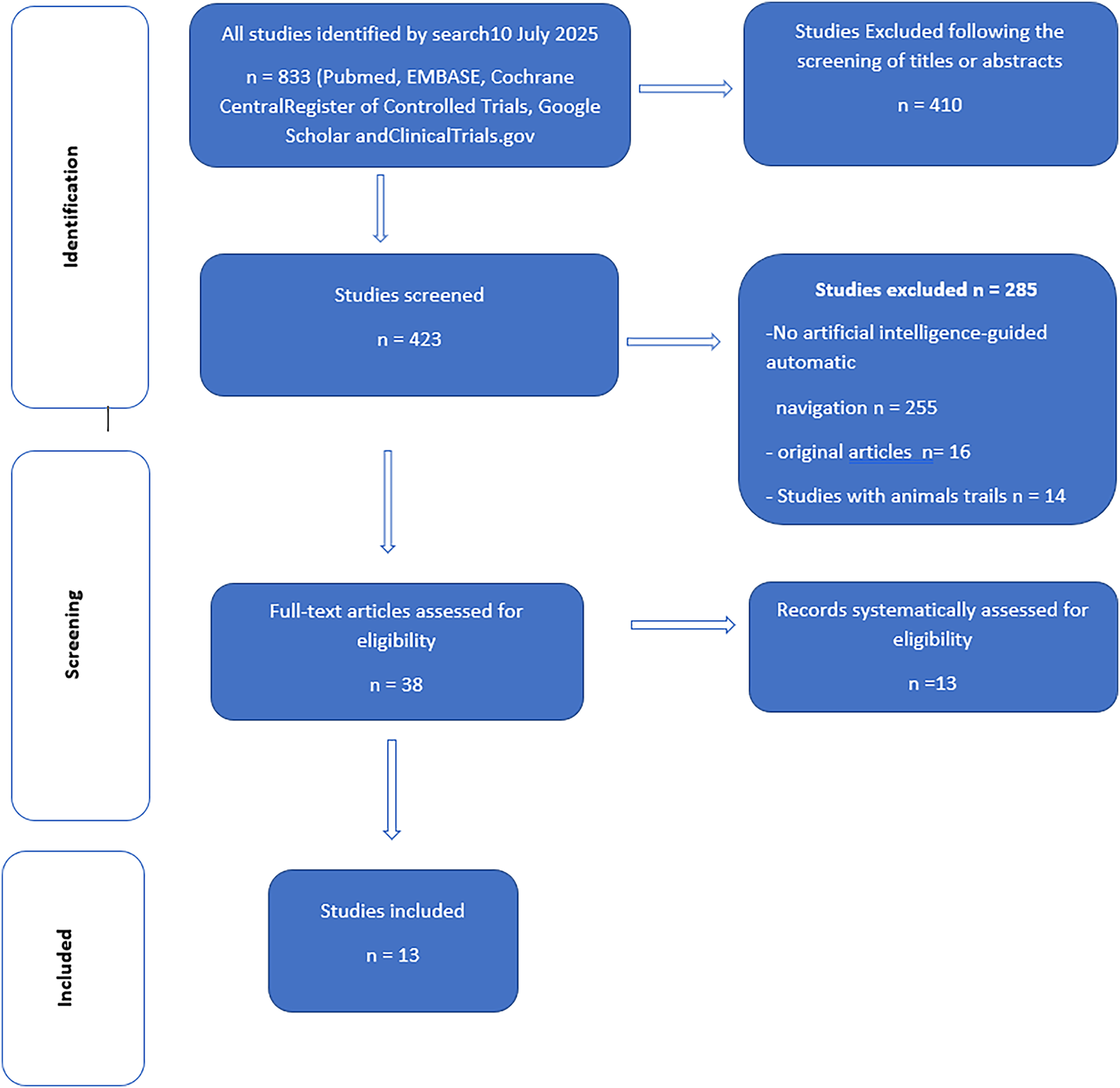

The search strategy and results of this systematic literature review and narrative synthesis are presented in Figure 2. Following de-duplication, 114 articles were screened for further analysis, resulting in the identification of 17 studies.

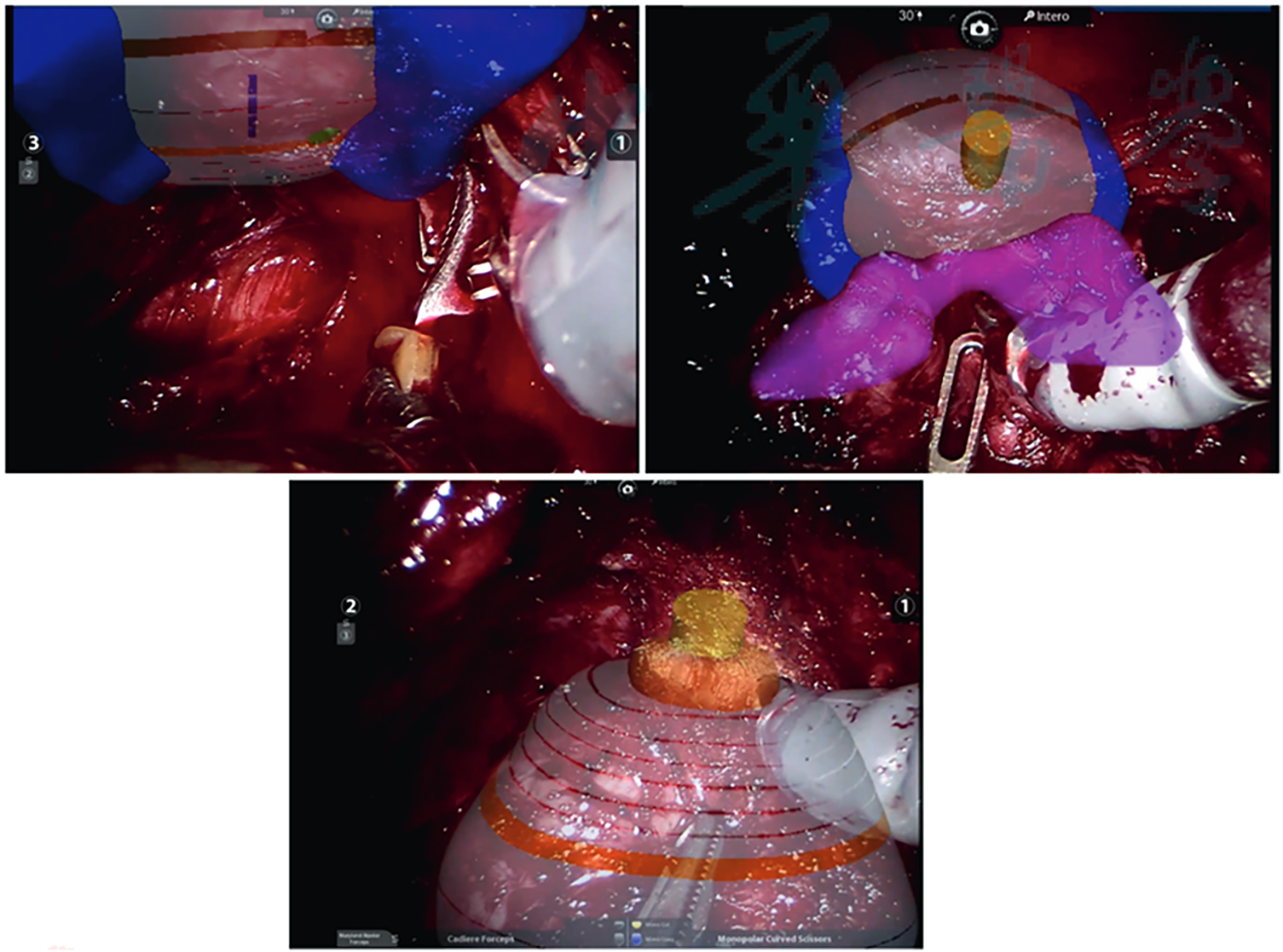

FIGURE 1. A Hyper Accuracy three-dimensional (HA3D™) model superimposed on the endoscopic view, showing adaptation of the model to different surgical phases (left: isolation of the prostate bundle; right: mobilization of the prostate with the Cadiere forceps; bottom: dissection of the urethra). Secco et al.18

The included studies comprised cohorts of patients undergoing AI-assisted robotic uro-oncological surgery that met the Population, Intervention, Control, Outcome (PICO) quality assessment criteria. Of the 23 relevant articles, 13 clinical trials met the inclusion criteria (Figure 2, Table 1). The number of operating surgeons was not quantified in the included studies.

FIGURE 2. Study selection flowchart based on the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) search strategy

Initially, our search yielded 833 publications. After removing duplicates, 410 articles remained and were screened on the basis of their titles and abstracts. This left 423 articles that were selected for full-text review. Another 185 studies were excluded from the review: 285 studies did not use artificial intelligence-guided automatic navigation, and 16 studies were not original articles. Fourteen studies were excluded because they involved animal trials (Figure 2).
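In a PRISMA flow diagram, each stage count follows from the previous one: records screened equal records identified minus duplicates, records assessed in full text equal records screened minus those excluded at screening, and included studies equal full-text records minus the full-text exclusions. The sketch below encodes this bookkeeping and rejects impossible combinations; the function name and all counts are hypothetical.

```python
def prisma_flow(
    identified: int,
    duplicates: int,
    excluded_screening: int,
    excluded_full_text: dict[str, int],
) -> dict[str, int]:
    """Derive PRISMA stage counts and raise ValueError for impossible combinations."""
    screened = identified - duplicates
    full_text = screened - excluded_screening
    # Full-text exclusions are summed across all stated reasons.
    included = full_text - sum(excluded_full_text.values())
    stages = {"screened": screened, "full_text": full_text, "included": included}
    for stage, count in stages.items():
        if count < 0:
            raise ValueError(f"negative count at stage '{stage}': {count}")
    return {"identified": identified, **stages}


# Hypothetical counts, chosen only to illustrate the arithmetic.
flow = prisma_flow(
    identified=500,
    duplicates=100,
    excluded_screening=300,
    excluded_full_text={"no AI-guided navigation": 70, "not an original article": 15, "animal study": 5},
)
print(flow)  # {'identified': 500, 'screened': 400, 'full_text': 100, 'included': 10}
```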

Applications of AI in robot-assisted radical prostatectomy and intraoperative navigation

In recent years, applications of AI in prostate cancer have expanded beyond imaging and pathology to include surgical interventions such as RARP. Overall, robotic prostatectomy is regarded as a safe and highly precise procedure that offers excellent oncological control when performed by experienced surgeons. In one study, five key anatomical structures (prostate, image-visible biopsy-proven ‘index’ cancer lesion, neurovascular bundles, urethra and recorded biopsy trajectories) were image-fused into a 3D model and displayed on the TilePro™ display feature of the robotic console.26

The 3D model enabled the surgeon to perform careful dissection in the vicinity of the biopsy-proven index lesion. Negative surgical margins were achieved in 90% of cases; the only exception was one case of extensive extraprostatic extension.26 In three papers, Hung and colleagues developed an objective method for processing automated performance metrics and used these metrics as training data for novel machine learning (ML) algorithms to evaluate RARP performance.13–15 In the first project, they developed and validated objective metrics of surgical performance during selected steps of RARP using a novel recorder (‘dVLogger’) that directly captures the surgeon’s actions on the da Vinci® Surgical System.13 The objective metrics revealed that experts were more efficient and purposeful during the selected steps of RARP: they completed the operative steps more quickly (p < 0.001) and travelled a shorter distance with the instruments (p < 0.01). In the second project, Hung and colleagues used automated performance metrics and novel ML algorithms to evaluate RARP performance and predict clinical outcomes. They concluded that, as clinical data continue to grow, this technique will become increasingly relevant and valuable for surgical practice and training. Of the algorithms tested, “Random Forest-50” (RF-50) performed best, achieving 87.2% accuracy in predicting length of hospital stay (LOS) (73 cases were classified as “pExp-LOS” and five as “pExt-LOS”).14 Across all criteria of bimanual dexterity and movement efficiency, experts performed significantly better than novices. 
The authors illustrated this higher efficiency with 3D instrument trajectory tracking, which showed shorter instrument trajectories travelled at higher speed for both the dominant and non-dominant instruments in the expert group.14 The third study by Hung and colleagues predicted recovery of urinary continence after RARP using a deep learning (DL) model, which was then used to assess the surgeons’ historical patient outcomes. In this feasibility study, surgeons with more efficient automated performance metrics (APMs) achieved higher continence rates at 3 and 6 months after RARP. In the historical cohort, patients in Group 1 (more efficient APMs) had superior urinary continence rates at 3 and 6 months postoperatively (47.5% vs. 36.7%, p = 0.034, and 68.3% vs. 59.2%, p = 0.047, respectively).15 Of the three models evaluated, the Random Forest-50 machine learning model achieved a predictive accuracy of 88.5%, giving it significant prognostic value for duration of surgery, length of hospital stay and Foley catheter duration.27,28 Another study, published in 2023, evaluated the accuracy of an AI-guided 3D automated augmented reality (AAR) system in nerve-sparing RARP for identifying tumour location at the level of the preserved neurovascular bundle (NVB).16 The 3D AAR system correctly identified the lesion location on the NVB in 87.5% of pT3 patients, raising the possibility of performing 3D-guided tailored nerve sparing (NS) even in locally advanced disease without compromising oncological safety in terms of PSM rate.16 Overall, AI, and AI-guided navigation in particular, shows great potential in RARP for objectively assessing surgical performance and predicting clinical outcomes.
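Hung and colleagues derived performance metrics such as instrument travel distance and speed from kinematic data recorded by the dVLogger. The sketch below illustrates how two such metrics, path length and mean speed, could be computed from timestamped 3D instrument-tip positions. The input format and the function names `path_length` and `mean_speed` are assumptions for illustration; they do not reproduce the dVLogger output or the authors' processing pipeline.

```python
import math
from typing import Sequence

Point = tuple[float, float, float]


def path_length(positions: Sequence[Point]) -> float:
    """Total distance travelled: sum of Euclidean distances between consecutive samples."""
    return sum(math.dist(p, q) for p, q in zip(positions, positions[1:]))


def mean_speed(positions: Sequence[Point], timestamps: Sequence[float]) -> float:
    """Path length divided by elapsed time (length units per time unit)."""
    if len(positions) != len(timestamps) or len(positions) < 2:
        raise ValueError("need at least two timestamped positions")
    elapsed = timestamps[-1] - timestamps[0]
    if elapsed <= 0:
        raise ValueError("timestamps must increase")
    return path_length(positions) / elapsed


# Hypothetical instrument-tip positions (mm) sampled once per second.
tip = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0), (3.0, 4.0, 12.0)]
t = [0.0, 1.0, 2.0]
print(path_length(tip))    # 17.0
print(mean_speed(tip, t))  # 8.5
```

Computed separately for the dominant and non-dominant instruments, metrics like these are the kind of features that could then be fed to an ML classifier.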

Ranasinghe et al. used the DeepSurv algorithm to compare the outcomes of RARP and open radical prostatectomy (ORP). Using this model, the authors reported more positive emotions in the RARP group immediately and 3 months after surgery (p < 0.05), related to pain and discomfort, and at 9 months, related to fear and anxiety about upcoming prostate-specific antigen (PSA) tests and sexual side effects.17

Secco et al. demonstrated that AAR and 3D imaging improved the real-time identification of prostate lesions, enabling RARP to be performed with Hyper Accuracy 3D™ (HA3D™) virtual models for preoperative planning and intraoperative support. The authors concluded that augmented reality (AR) is a feasible approach, although it remains at an early stage of development and is frequently associated with technical limitations and financial constraints (Figure 1).18
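Overlaying a virtual model such as an HA3D™ reconstruction onto the endoscopic view ultimately requires mapping 3D model coordinates to 2D image pixels. A minimal sketch is shown below, assuming a calibrated pinhole camera (hypothetical intrinsics `fx`, `fy`, `cx`, `cy`) and an already known model-to-camera pose (`rotation`, `translation`). Estimating that pose intraoperatively, and updating it as tissue moves, is the difficult part and is not shown; this is a generic projection step, not the method used by Secco et al.

```python
from typing import Optional

Vec3 = tuple[float, float, float]
Mat3 = tuple[Vec3, Vec3, Vec3]

IDENTITY: Mat3 = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))


def project_point(
    point_model: Vec3,
    rotation: Mat3,
    translation: Vec3,
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> Optional[tuple[float, float]]:
    """Project a 3D model point to pixel coordinates (u, v) with a pinhole camera model.

    Returns None when the point lies at or behind the camera plane.
    """
    # Rigid transform from the model frame to the camera frame: X_cam = R X_model + t.
    x, y, z = (
        sum(rotation[row][col] * point_model[col] for col in range(3)) + translation[row]
        for row in range(3)
    )
    if z <= 0:
        return None
    # Perspective division, then scaling and offset by the camera intrinsics.
    return (fx * x / z + cx, fy * y / z + cy)


# Hypothetical example: a model landmark placed 100 mm in front of the endoscope.
landmark = (10.0, -5.0, 0.0)
pixel = project_point(landmark, IDENTITY, (0.0, 0.0, 100.0), fx=800.0, fy=800.0, cx=320.0, cy=240.0)
print(pixel)  # (400.0, 200.0)
```

Projecting every vertex of the model this way yields the 2D outline that an AR system would draw over the live video frame.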

Ueki developed a convolutional neural network (CNN)-based model for detecting surgical phases in RARP.19 The model was trained on video data from 75 RARP cases performed with the Hinotori robotic system, annotated with seven phases: bladder prolapse, prostate preparation, bladder neck dissection, seminal vesicle dissection, posterior dissection, apical dissection and vesicourethral anastomosis. Validation was conducted on 25 RARP cases performed with the da Vinci robotic system to evaluate the generalisability of the approach across surgical platforms.

Although the model demonstrated high accuracy on a single robotic platform, it requires further refinement to achieve consistent cross-platform performance.19 Another study investigated virtual reality training for vesicourethral anastomosis during RARP. The overall virtual training score improved significantly from 65.0 ± 10.8 to 92.7 ± 3.5 (p = 0.014), and anastomosis time, movement economy and instrument collisions all decreased (p < 0.05).20

Virtual reality training thus enabled surgeons to become familiar with robotic manipulation quickly and to improve their vesicourethral anastomosis skills, shortening the learning curve and helping them achieve high surgical efficiency and quality. Step recognition serves as the basis for numerous potential applications of AI in surgery, including surgeon education and training, quality benchmarking, optimisation of operating room (OR) logistics and, possibly, intraoperative real-time decision support in the future.
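Phase-recognition models such as the one described by Ueki are typically evaluated frame by frame against expert annotations. The sketch below computes frame-wise accuracy and per-phase recall for the seven RARP phases listed above; the label strings, function names and example sequences are hypothetical, and the metrics reported in the cited study may differ.

```python
from collections import Counter

# The seven RARP phases named in the text; label spellings are illustrative.
PHASES = (
    "bladder prolapse",
    "prostate preparation",
    "bladder neck dissection",
    "seminal vesicle dissection",
    "posterior dissection",
    "apical dissection",
    "vesicourethral anastomosis",
)


def _check(truth: list[str], predicted: list[str]) -> None:
    """Reject sequences of different lengths or with unknown phase labels."""
    if len(truth) != len(predicted) or not truth:
        raise ValueError("sequences must be non-empty and of equal length")
    unknown = (set(truth) | set(predicted)) - set(PHASES)
    if unknown:
        raise ValueError(f"unknown phase labels: {sorted(unknown)}")


def frame_accuracy(truth: list[str], predicted: list[str]) -> float:
    """Fraction of video frames whose predicted phase matches the annotation."""
    _check(truth, predicted)
    return sum(t == p for t, p in zip(truth, predicted)) / len(truth)


def per_phase_recall(truth: list[str], predicted: list[str]) -> dict[str, float]:
    """For each annotated phase, the fraction of its frames that were recognised."""
    _check(truth, predicted)
    totals = Counter(truth)
    hits = Counter(t for t, p in zip(truth, predicted) if t == p)
    return {phase: hits[phase] / totals[phase] for phase in PHASES if totals[phase]}


# Hypothetical per-frame labels for a short clip (one label per sampled frame).
truth = ["bladder prolapse"] * 4 + ["prostate preparation"] * 4
predicted = ["bladder prolapse"] * 3 + ["prostate preparation"] * 5
print(frame_accuracy(truth, predicted))    # 0.875
print(per_phase_recall(truth, predicted))  # {'bladder prolapse': 0.75, 'prostate preparation': 1.0}
```

Per-phase recall matters because short phases can be missed almost entirely while overall frame accuracy still looks high; comparing these figures between platforms is one way to quantify the cross-platform gap.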

Applications of AI in robot-assisted partial nephrectomy and intraoperative navigation

A detailed understanding of anatomy is key to personalised surgical planning, especially in renal surgery, where it plays a central role in preserving renal function21,29 (Table 1). The integration of 3D models with robotic platforms opens up the possibility of mixed reality surgery, increasing surgeons’ familiarity and confidence with the pathology and allowing procedures to be tailored to patients’ needs. In recent years, 3D models and augmented reality have become increasingly important. Preoperative 3D reconstruction of the kidney allows the surgeon to choose the appropriate surgical approach and thus guides surgical planning, while intraoperative identification of anatomical structures maximises safety during dissection. In addition, a precise resection strategy is directly related to more effective renal preservation and precise medullary and cortical reconstruction, which reduces postoperative complication rates. Furthermore, precise planning of the resection area can minimise renal artery clamping. 3D renal reconstruction technology for robot-assisted partial nephrectomy (RAPN) has been investigated in several published studies for preoperative planning and precision surgery27,30 (Table 1). However, these studies do not report the overlap between preoperative planning and intraoperative performance. 3D reconstruction using specially developed software and indocyanine green (ICG) enabled precise and safe enucleoresection of endophytic renal masses.28 Porpiglia et al. conducted a comparative study of standard RAPN (n = 31) and RAPN using hyper-accurate 3D virtual reality (VR) models (n = 21) in patients with complex renal tumours (PADUA score ≥ 10). A significantly higher proportion of patients in the 3D group were spared global ischaemia compared with the standard group (80% vs. 24%, p < 0.01).22 Hyde et al. presented interactive, patient-specific virtual 3D models of renal anatomy to evaluate the impact of their visualisation on preoperative decision-making in complex renal cancer surgery.23 Using these models for surgical planning influenced surgical decisions in 80% of cases. In a case report, the researchers used specially developed software to overlay 3D models onto live endoscopic images during RAPN; this technique enabled safe enucleation of an endophytic renal mass with a PADUA score of 9, with selective clamping and without major complications.31 Ghazi et al. conducted a prospective study to evaluate the predictive validity of the IRIS (Intuitive Surgical) software as a planning tool for RAPN, based on the degree of overlap between the preoperative plan and the intraoperative procedure. For 31 patients, surgeons reviewed the 3D IRIS model in an iOS app before surgery and used it to outline the surgical plan, including the surgical approach and ischaemia technique, and to rate their confidence in carrying out this plan. For plans involving selective clamping or off-clamp surgery, the preoperative plan was executed intraoperatively in 90.0% of cases. The authors concluded that there was a high degree of overlap between the preoperative surgical approach and the intraoperative execution of RAPN using IRIS.24
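The overlap reported by Ghazi et al. is, in essence, the share of cases in which the intraoperative execution matched the preoperative plan. A minimal sketch of that calculation, overall and per planned ischaemia technique, is shown below; the category labels, function names and example data are hypothetical and are not taken from the study.

```python
from collections import Counter


def concordance(planned: list[str], executed: list[str]) -> float:
    """Share of cases in which the executed technique matched the preoperative plan."""
    if len(planned) != len(executed) or not planned:
        raise ValueError("need one executed technique per planned case")
    return sum(p == e for p, e in zip(planned, executed)) / len(planned)


def concordance_by_plan(planned: list[str], executed: list[str]) -> dict[str, float]:
    """Concordance stratified by the planned technique."""
    if len(planned) != len(executed) or not planned:
        raise ValueError("need one executed technique per planned case")
    n_planned = Counter(planned)
    n_matched = Counter(p for p, e in zip(planned, executed) if p == e)
    return {plan: n_matched[plan] / n for plan, n in n_planned.items()}


# Hypothetical ischaemia techniques for five cases (not study data).
plans = ["selective clamping", "selective clamping", "off-clamp", "global clamping", "selective clamping"]
executed = ["selective clamping", "global clamping", "off-clamp", "global clamping", "selective clamping"]
print(concordance(plans, executed))  # 0.8
print(concordance_by_plan(plans, executed))
```

Stratifying by plan shows where a planning tool is least reliable; in this toy data only one planned selective-clamping case converted to global clamping.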

Applications of AI in robot-assisted radical cystectomy and intraoperative navigation

AI is increasingly being integrated into surgical procedures, including robotic-assisted radical cystectomy (RARC), and into intraoperative navigation. A comparison of the available data on intraoperative guidance in urological oncology shows that surgical navigation in RARP has received the most attention, followed by RAPN.

The aim of using AI in RARC is not only to enhance the precision of nerve sparing, oncological safety and functional outcomes, but also to optimise intraoperative decision-making and urinary diversion reconstruction. A 3D model can facilitate careful surgical dissection, improving both oncological safety and functional outcomes.25,32

AI enhances real-time imaging during RARC, providing surgeons with a superior view of tumours and surrounding tissue. Techniques like augmented reality (AR) can overlay critical information on the surgical field, guiding surgeons through complex anatomical landscapes and highlighting vital structures.33 AI algorithms can also improve the accuracy of robotic movements, enabling precise tumour resection while minimising damage to healthy tissues.34 Furthermore, motion compensation algorithms predict and adjust for patient movements, ensuring stable surgical manoeuvres.
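The motion compensation algorithms mentioned above are not specified in the cited work. As a generic illustration of how a system can predict and adjust for movement, the sketch below implements a one-dimensional alpha-beta filter, a simplified constant-velocity tracker that estimates position and velocity from successive measurements and predicts the next position. The class name, gains and example signal are hypothetical; clinical systems would use more elaborate, validated models.

```python
class AlphaBetaTracker:
    """One-dimensional alpha-beta filter that tracks position and velocity."""

    def __init__(self, alpha: float, beta: float, dt: float, initial_position: float) -> None:
        # With 0 < alpha < 1 and 0 < beta < 2 the filter's error dynamics are stable.
        if not (0.0 < alpha < 1.0 and 0.0 < beta < 2.0 and dt > 0.0):
            raise ValueError("expected 0 < alpha < 1, 0 < beta < 2 and dt > 0")
        self.alpha = alpha
        self.beta = beta
        self.dt = dt
        self.position = initial_position
        self.velocity = 0.0

    def predict(self) -> float:
        """Position expected at the next sample under a constant-velocity assumption."""
        return self.position + self.velocity * self.dt

    def update(self, measurement: float) -> float:
        """Blend a new measurement into the estimates and return the prediction residual."""
        predicted = self.predict()
        residual = measurement - predicted
        self.position = predicted + self.alpha * residual
        self.velocity += (self.beta / self.dt) * residual
        return residual


# Hypothetical tissue displacement drifting by 2 mm per sample (dt = 1 sample).
tracker = AlphaBetaTracker(alpha=0.5, beta=0.1, dt=1.0, initial_position=0.0)
for k in range(1, 201):
    tracker.update(2.0 * k)
print(round(tracker.predict(), 3))  # 402.0
```

Larger gains react faster to new measurements but pass through more noise; smaller gains smooth more but lag behind sudden movements.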

This systematic review focuses on the latest developments in the use of AI in robotic uro-oncology. The most significant finding is that effective use of AI algorithms in robotic uro-oncology requires high-quality, annotated data. To our knowledge, this is the first systematic review on the topic of 3D automatic artificial intelligence navigation in robotic uro-oncology.

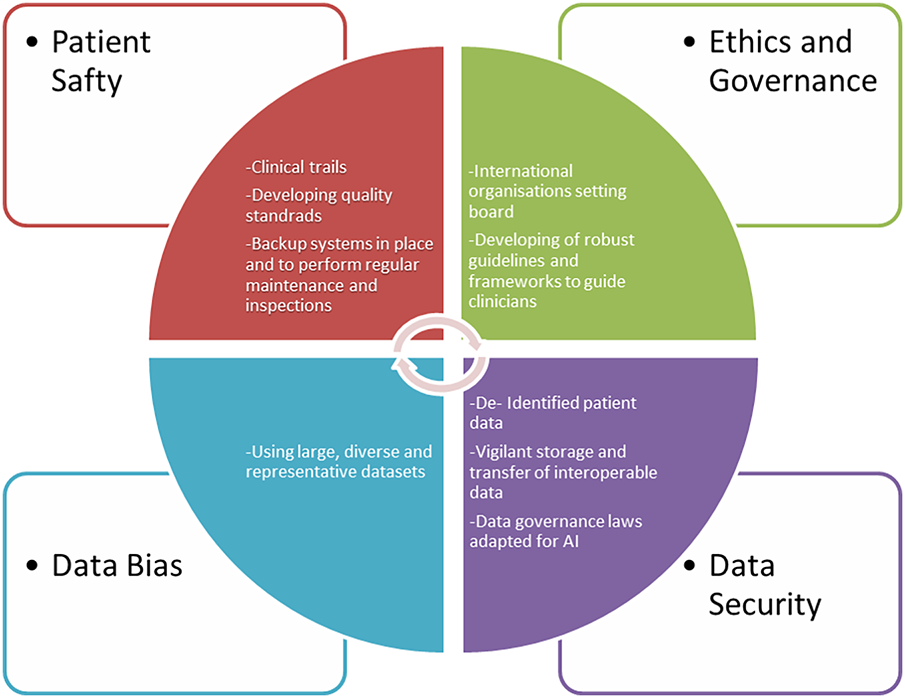

Challenges of artificial intelligence

AI algorithms require high-quality, annotated data to work effectively. Inconsistent or inaccurate data can lead to unreliable results. However, collecting large amounts of specific medical data, particularly in uro-oncology, can be challenging due to privacy concerns and the relative rarity of certain conditions (Figure 3).

FIGURE 3. Challenges and barriers to implementing AI technology in uro-oncology, with proposed solutions (graphic created in Microsoft Word, 2024)

Although we have entered the era of precision robotic surgery, there is still an unmet need for optimal surgical planning, navigation and training for most genitourinary conditions. One of the major challenges to introducing AI and robotic surgery is ensuring the safety of robotics in uro-oncology. There is a constant risk of mechanical failure, software errors or system malfunctions during robotic surgery. To mitigate these risks, it is essential to implement backup systems and to conduct regular maintenance and inspection of the robotic surgical platform. Other barriers to implementing AI in robotic surgery include ethics, governance and cybersecurity. The increasing autonomy of AI in surgery raises ethical questions about responsibility and liability in the event of adverse incidents, and clear guidelines and regulations are required to ensure patient safety and clarify medico-legal issues. In general, AI is considered an aid to the surgical decision-making process, but in medicine difficult decisions are always made by human doctors, often against a complex background of ethical and moral issues in which the right decision is not always obvious.34 In the future, AI may play a key role in resolving such complex clinical decisions. In this context, the development of surgical robots has been categorised into the following generations: stereotactic, endoscopic, bio-inspired and microbots.35 Finally, fifth-generation autonomous surgical robots in the form of cyborg humanoids or swarm-intelligent platforms could be deployed in remote areas or conflict zones and controlled by surgeons using stereoscopic lenses and holographic technology (‘human-in-the-loop’).36 To avoid medical and ethical dilemmas, AI tools should be considered one of many sources of clinical decision support, which will require robust guidelines and frameworks to guide clinicians in their use. 
By addressing the challenges and leveraging the applications, AI has the potential to significantly enhance the field of robot-assisted uro-oncology, leading to improved patient outcomes, increased surgical precision, and more efficient healthcare delivery.

The most common limitations identified in the included studies were a single-centre design and a small sample size, which restrict the generalisability and statistical power of the findings on AI-assisted tools. Ten studies were underpowered owing to small sample sizes, and 11 of the included studies (84%) enrolled fewer than 100 participants. Another common limitation is the open-label design. Despite numerous claims that AI can improve clinical outcomes, robust evidence is lacking: in this systematic literature review with narrative synthesis, we identified only one randomised controlled trial (RCT) that compared AI-assisted care with standard care. AI tools for robot-assisted uro-oncology must demonstrate an unequivocal improvement in clinically relevant outcomes in properly designed RCTs in which AI-assisted management is compared with standard care.

The integration of AI is undoubtedly ushering in a new and exciting era for urology. The way in which these emerging opportunities are leveraged and the way in which the associated challenges are addressed on a large scale are likely to have profound implications for the future of medical practice. Future research should aim to provide valuable insights into the ethical and regulatory challenges posed by AI technologies, particularly in the field of robotic-assisted uro-oncology. Addressing these challenges will contribute to the responsible development and practical implementation of AI-driven robotic surgery applications.

In conclusion, AI applications in robot-assisted uro-oncology are transforming the field by enhancing surgical precision, facilitating personalised treatment, improving diagnostic accuracy and optimising postoperative care. As AI technology advances further, its integration into uro-oncology is likely to bring further improvements in patient outcomes and in the overall effectiveness of cancer treatment. The future of uro-oncology lies in continued collaboration between AI and robotic technologies, paving the way for innovative solutions and enhanced patient care.

Acknowledgement

Not applicable.

Funding Statement

The authors received no specific funding for this study.

Author Contributions

Bara Barakat had full access to all the data in the study and took responsibility for the integrity of the data and the accuracy of the data analysis. Manuscript writing/editing: Bara Barakat; Protocol/project development: Joerg Bauer; Data collection or management: Bara Barakat and Joerg Bauer; Drafting of the manuscript: Boris Hadaschik, Christian Rehme, Bilal Al-Absi and Samer Schakaki; Critical revision of the manuscript: Boris Hadaschik and Christian Rehme; Administrative, technical and material support: Bara Barakat and Samer Schakaki; Supervision: Boris Hadaschik and Christian Rehme. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials

Not applicable.

Ethics Approval

This is a systematic literature review and narrative synthesis of published work using anonymized data. According to the relevant ethics committee, no ethical approval was required for this study. All procedures performed in studies involving human participants complied with the ethical standards of the institutional and/or national research committee as well as with the 1964 Helsinki Declaration and its later amendments, or comparable ethical standards.

Informed Consent

Because the data are anonymized, written informed consent from participants is not required.

Conflicts of Interest

The authors declare no conflicts of interest.

Supplementary Materials: The supplementary material is available online at https://www.techscience.com/doi/10.32604/cju.2026.071284/s1.

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial intelligence |
| RP | Radical prostatectomy |
| PPI | Post-prostatectomy incontinence |
| BMI | Body mass index |
| LUTS | Lower urinary tract symptoms |
| SUI | Stress urinary incontinence |
| MUL | Membranous urethral length |
| NS | Nerve-sparing |
| PSMs | Positive surgical margins |
| NVB | Neurovascular bundle |
| RARP | Robotic-assisted radical prostatectomy |
| ML | Machine learning |
| 3D | Three-dimensional |
| DL | Deep learning |
| AAR | Automated augmented reality |
| OR | Operating room |
| RAPN | Robot-assisted partial nephrectomy |
| RARC | Robotic-assisted radical cystectomy |
| APMs | Automated performance metrics |
| PRISMA | Preferred reporting items for systematic reviews and meta-analyses |

References

1. Ray TR, Kellogg RT, Fargen KM, Hui F, Vargas J. The perils and promises of generative artificial intelligence in neurointerventional surgery. J Neurointerv Surg 2023;16(1):4–7. doi:10.1136/jnis-2023-020353. [Google Scholar] [PubMed] [CrossRef]

2. Kaplan A, Haenlein M. Siri, Siri, in my hand: who’s the fairest in the land? On the interpretations, illustrations, and implications of artificial intelligence. Bus Horiz 2019;62(1):15–25. doi:10.1016/j.bushor.2018.08.004. [Google Scholar] [CrossRef]

3. Park JJ, Hong Y, Kwon A, Shim SR, Kim JH. Efficacy of surgical treatment for post-prostatectomy urinary incontinence: a systematic review and network meta-analysis. Int J Surg 2023;109(3):401–411. doi:10.1097/JS9.0000000000000170. [Google Scholar] [PubMed] [CrossRef]

4. Lardas M, Grivas N, Debray TPA, et al. Patient- and tumour-related prognostic factors for urinary incontinence after radical prostatectomy for nonmetastatic prostate cancer: a systematic review and meta-analysis. Eur Urol Focus 2022;8(3):674–689. doi:10.1016/j.euf.2021.04.020. [Google Scholar] [PubMed] [CrossRef]

5. Patel VR, Abdul-Muhsin HM, Schatloff O, et al. Critical review of ‘pentafecta’ outcomes after robot-assisted laparoscopic prostatectomy in high-volume centres. BJU Int 2011;108(6 Pt 2):1007–1017. doi:10.1111/j.1464-410X.2011.10521.x. [Google Scholar] [PubMed] [CrossRef]

6. Barakat B, Hadaschik B, Al-Nader M, Schakaki S. Factors contributing to early recovery of urinary continence following radical prostatectomy: a narrative review. J Clin Med 2024;13(22):6780. doi:10.3390/jcm13226780. [Google Scholar] [PubMed] [CrossRef]

7. Hoeh B, Hohenhorst JL, Wenzel M, et al. Full functional-length urethral sphincter- and neurovascular bundle preservation improves long-term continence rates after robotic-assisted radical prostatectomy. J Robot Surg 2023;17(1):177–184. doi:10.1007/s11701-022-01408-7. [Google Scholar] [PubMed] [CrossRef]

8. Spinos T, Kyriazis I, Tsaturyan A, et al. Sphincter preservation techniques during radical prostatectomies: lessons learned. Urol Ann 2023;15(4):353–359. doi:10.4103/ua.ua_126_22. [Google Scholar] [PubMed] [CrossRef]

9. Li X, Zhang H, Jia Z, et al. Urinary continence outcomes of four years of follow-up and predictors of early and late urinary continence in patients undergoing robot-assisted radical prostatectomy. BMC Urol 2020;20(1):29. doi:10.1186/s12894-020-00601-w. [Google Scholar] [PubMed] [CrossRef]

10. Deng W, Chen R, Jiang X, et al. Independent factors affecting postoperative short-term urinary continence recovery after robot-assisted radical prostatectomy. J Oncol 2021;2021(4):9523442. doi:10.1155/2021/9523442.

11. Barakat B, Addali M, Hadaschik B, Rehme C, Hijazi S, Zaqout S. Predictors of early continence recovery following radical prostatectomy, including transperineal ultrasound to evaluate the membranous urethra length (CHECK-MUL study). Diagnostics 2024;14(8):853. doi:10.3390/diagnostics14080853.

12. Moher D, Liberati A, Tetzlaff J, Altman DG. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. Int J Surg 2010;8(5):336–341. doi:10.1016/j.ijsu.2010.02.007.

13. Hung AJ, Chen J, Jarc A, Hatcher D, Djaladat H, Gill IS. Development and validation of objective performance metrics for robot-assisted radical prostatectomy: a pilot study. J Urol 2018;199(1):296–304. doi:10.1016/j.juro.2017.07.081.

14. Hung AJ, Chen J, Che Z, et al. Utilizing machine learning and automated performance metrics to evaluate robot-assisted radical prostatectomy performance and predict outcomes. J Endourol 2018;32(5):438–444. doi:10.1089/end.2018.0035.

15. Hung AJ, Chen J, Ghodoussipour S, et al. A deep-learning model using automated performance metrics and clinical features to predict urinary continence recovery after robot-assisted radical prostatectomy. BJU Int 2019;124(3):487–495. doi:10.1111/bju.14735.

16. Checcucci E, Piana A, Volpi G, et al. Three-dimensional automatic artificial intelligence driven augmented-reality selective biopsy during nerve-sparing robot-assisted radical prostatectomy: a feasibility and accuracy study. Asian J Urol 2023;10(4):407–415. doi:10.1016/j.ajur.2023.08.001.

17. Ranasinghe W, de Silva D, Bandaragoda T, et al. Robotic-assisted vs. open radical prostatectomy: a machine learning framework for intelligent analysis of patient-reported outcomes from online cancer support groups. Urol Oncol 2018;36(12):529.e1–529.e9. doi:10.1016/j.urolonc.2018.08.012.

18. Secco S, Olivero A, Tappero S, et al. Three-dimensional (3D) augmented reality during Retzius-sparing robot-assisted radical prostatectomy (RS-RARP): first experience. Cancer Pathog Ther 2025;3(1):81–84. doi:10.1016/j.cpt.2024.06.003.

19. Ueki H, Uemura M, Chinzei K, et al. Developing an artificial intelligence model for phase recognition in robot-assisted radical prostatectomy. BJU Int 2025;136(5):891–901. doi:10.1111/bju.16862.

20. Wang F, Zhang C, Guo F, et al. The application of virtual reality training for anastomosis during robot-assisted radical prostatectomy. Asian J Urol 2021;8(2):204–208. doi:10.1016/j.ajur.2019.11.005.

21. Amparore D, Checcucci E, Piazzolla P, et al. Indocyanine green drives computer vision based 3D augmented reality robot assisted partial nephrectomy: the beginning of automatic overlapping era. Urology 2022;164:e312–e316. doi:10.1016/j.urology.2021.10.053.

22. Porpiglia F, Fiori C, Checcucci E, Amparore D, Bertolo R. Hyperaccuracy three-dimensional reconstruction is able to maximize the efficacy of selective clamping during robot-assisted partial nephrectomy for complex renal masses. Eur Urol 2018;74(5):651–660. doi:10.1016/j.eururo.2017.12.027.

23. Hyde ER, Berger LU, Ramachandran N, et al. Interactive virtual 3D models of renal cancer patient anatomies alter partial nephrectomy surgical planning decisions and increase surgeon confidence compared to volume-rendered images. Int J Comput Assist Radiol Surg 2019;14(4):723–732. doi:10.1007/s11548-019-01913-5.

24. Ghazi A, Sharma N, Radwan A, et al. Can preoperative planning using IRIS™ three-dimensional anatomical virtual models predict operative findings during robot-assisted partial nephrectomy? Asian J Urol 2023;10(4):431–439. doi:10.1016/j.ajur.2022.12.003.

25. Baghdadi A, Hussein AA, Ahmed Y, Cavuoto LA, Guru KA. A computer vision technique for automated assessment of surgical performance using surgeons’ console-feed videos. Int J Comput Assist Radiol Surg 2019;14(4):697–707. doi:10.1007/s11548-018-1881-9.

26. Ukimura O, Aron M, Nakamoto M, et al. Three-dimensional surgical navigation model with TilePro display during robot-assisted radical prostatectomy. J Endourol 2014;28(6):625–630. doi:10.1089/end.2013.0749.

27. Porpiglia F, Bertolo R, Checcucci E, et al. Development and validation of 3D printed virtual models for robot-assisted radical prostatectomy and partial nephrectomy: urologists’ and patients’ perception. World J Urol 2018;36(2):201–207. doi:10.1007/s00345-017-2126-1.

28. Checcucci E, Amparore D, Fiori C, et al. 3D imaging applications for robotic urologic surgery: an ESUT YAUWP review. World J Urol 2020;38(4):869–881. doi:10.1007/s00345-019-02922-4.

29. Bertolo R, Fiori C, Piramide F, et al. Assessment of the relationship between renal volume and renal function after minimally-invasive partial nephrectomy: the role of computed tomography and nuclear renal scan. Minerva Urol Nefrol 2018;70(5):509–517. doi:10.23736/S0393-2249.18.03140-5.

30. Grivas N, Group JRUW, Kalampokis N, et al. Robot-assisted versus open partial nephrectomy: comparison of outcomes. A systematic review. Minerva Urol Nefrol 2019;71(2):113–120. doi:10.23736/S0393-2249.19.03391-5.

31. Sica M, Piazzolla P, Amparore D, et al. 3D model artificial intelligence-guided automatic augmented reality images during robotic partial nephrectomy. Diagnostics 2023;13(22):3454. doi:10.3390/diagnostics13223454.

32. Hung AJ, Chen J, Gill IS. Automated performance metrics and machine learning algorithms to measure surgeon performance and anticipate clinical outcomes in robotic surgery. JAMA Surg 2018;153(8):770–771. doi:10.1001/jamasurg.2018.1512.

33. Zhao B, Waterman RS, Urman RD, Gabriel RA. A machine learning approach to predicting case duration for robot-assisted surgery. J Med Syst 2019;43(2):32. doi:10.1007/s10916-018-1151-y.

34. Cobianchi L, Verde JM, Loftus TJ, et al. Artificial intelligence and surgery: ethical dilemmas and open issues. J Am Coll Surg 2022;235(2):268–275. doi:10.1097/XCS.0000000000000242.

35. Ashrafian H, Clancy O, Grover V, Darzi A. The evolution of robotic surgery: surgical and anaesthetic aspects. Br J Anaesth 2017;119(suppl_1):i72–i84. doi:10.1093/bja/aex383.

36. O’Sullivan S, Nevejans N, Allen C, et al. Legal, regulatory, and ethical frameworks for development of standards in artificial intelligence (AI) and autonomous robotic surgery. Int J Med Robot 2019;15(1):e1968. doi:10.1002/rcs.1968.

Copyright © 2026 The Author(s). Published by Tech Science Press.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.