Open Access

ARTICLE

Interior Point Method-Assisted Differential Evolution for Expensive Optimization of Secondary Source Deployment

1 School of Information Science and Technology, Donghua University, Shanghai, China

2 Engineering Research Center of Digitized Textile & Apparel Technology, Ministry of Education, Donghua University, Shanghai, China

* Corresponding Author: Weijian Kong. Email:

(This article belongs to the Special Issue: Computational Intelligent Systems for Solving Complex Engineering Problems: Principles and Applications-III)

Computer Modeling in Engineering & Sciences 2026, 146(2), 14 https://doi.org/10.32604/cmes.2026.077613

Received 13 December 2025; Accepted 26 January 2026; Issue published 26 February 2026

Abstract

In active noise control, the optimal deployment of secondary sources is a critical factor influencing the noise reduction performance due to the spatial inhomogeneity of the sound field. Traditional methods, which rely on finite element analysis to model the sound field, are accurate but computationally intensive, leading to high costs in solving the deployment optimization problem. To address this issue, this paper proposes an expensive optimization method for secondary source deployment based on Interior Point Method-assisted Differential Evolution with Weibull distribution (IPMDEW). During the optimization process, a Kriging model is employed to construct a response surface, i.e., a surrogate model, of the objective function. The surrogate model is used for the initial evaluation of the population, while the finite element model is utilized to verify promising individuals. A surrogate model update algorithm based on k-means clustering is designed to iteratively refine the model and enhance its accuracy. The IPMDEW algorithm utilizes the Weibull distribution-based weighted differential evolution for global exploration and switches to the gradient-based interior point method for refined local optimization when the population approaches convergence. The results demonstrate that the Kriging surrogate-assisted optimization method for secondary source deployment reduces the optimization time by 85.79%, i.e., by 347.64 h, significantly improving optimization efficiency. Furthermore, the accuracy of the Kriging model continuously improves during the optimization process. The proposed method achieves a noise reduction of 58.32 dB, ensuring high optimization accuracy while substantially increasing efficiency.

In the study of active noise control (ANC), particularly in multi-channel systems, the deployment of secondary sources significantly influences the noise reduction performance. Researchers have employed non-deterministic methods, such as enumeration and sensitivity analysis [1,2], to determine the positions of secondary sources, which have, to some extent, addressed the deployment issue. However, in complex, open-field environments, these methods demonstrate limited optimization effectiveness and precision. Modeling the sound field using Finite Element Analysis (FEA) provides a foundation for optimizing secondary source deployment by enabling accurate calculation of the sound pressure levels in the target region based on a given configuration. While the finite element model is accurate, it is computationally intensive. Achieving effective spatial noise reduction necessitates deploying a sufficient number of secondary sources to cover the target area. As the number of secondary sources increases, the dimensionality of the optimization problem’s decision variables grows rapidly, and the computational complexity of calculating the sound pressure in the target region rises exponentially.

Surrogate-assisted nature-inspired algorithms demonstrate significant advantages in solving expensive optimization problems [3]. The general approach involves constructing a low-cost surrogate model and leveraging its synergy with the global search capability of a nature-inspired algorithm. The computational cost of fitness evaluations can be substantially reduced by collaboratively using the surrogate model and the complex physical (mechanistic) model. To overcome the poor model accuracy and low computational efficiency associated with a single training data strategy in high-dimensional scenarios, various advanced methods have been proposed. Guo et al. [4] introduced a classifier-based distributed surrogate-assisted evolutionary algorithm for distributed data-driven optimization problems. Zhou et al. [5] employed a two-level surrogate model structure and achieved adaptive resource allocation through a dynamic management mechanism. Liu et al. [6] proposed a fast generalized surrogate-assisted evolutionary algorithm based on a multi-strategy hybrid sparrow search algorithm (FGSAEA-MSHSSA), which, by introducing an innovative model management framework, significantly reduces the number of function evaluations and enhances optimization performance. Rahi et al. [7] proposed a partial-evaluation-based strategy for expensive constrained optimization problems, which sequentially evaluates constraints according to their likelihood of violation and aborts evaluation upon detecting a violation. This approach, combined with an improved ranking mechanism, significantly reduces evaluation cost. Zhang et al. [8] proposed a hierarchical surrogate-assisted optimization algorithm, which locates promising regions during the global search phase and employs a dynamic ensemble surrogate model in the local search phase to accelerate convergence. Pan et al. [9] proposed a hybrid surrogate-assisted optimization algorithm that integrates global exploration and local exploitation, incorporating novel pre-screening and dual control strategies, and employs local RBF models to efficiently solve expensive optimization problems.

Among various heuristic algorithms, Differential Evolution (DE) offers potential for extension to expensive optimization problems due to its lower space complexity compared to many other Evolutionary Algorithms (EA) [10]. Initially proposed by Storn and Price in 1997 [11], the DE algorithm has since been significantly improved by researchers. Notable enhancements include: Farda et al. [12], who integrated adaptive mutation operators with a stochastic learning-based population improvement strategy; and Liu et al. [13], who proposed a learnable evolutionary framework based on reinforcement learning, enabling autonomous adaptation of algorithm parameters and intelligent optimization of search strategies through deep reinforcement learning.

In distributed active noise control systems, increasing the number of secondary sources can enhance global noise attenuation performance. However, this also increases the parameter dimensionality due to the inclusion of spatial coordinates and initial phase parameters for each source. This paper formulates the co-optimization of secondary source placement and initial phases as a high-dimensional constrained non-convex nonlinear programming problem. To address the non-convexity of the model, our previous work proposed the IPMDEW algorithm, which integrates the global exploration capability of an improved differential evolution with the local optimization of an interior point method and solves the problem effectively under a non-surrogate framework. However, this algorithm requires multiple calls to computationally expensive finite element simulations during iteration. To improve optimization efficiency, this paper further introduces a surrogate model: a Kriging surrogate is constructed for calculating the superimposed sound pressure of the secondary sources, and an iterative update mechanism based on k-means clustering is designed to ensure its reliability. The proposed expensive optimization algorithm employs a dynamic switching strategy between global stochastic search and local gradient-based optimization, significantly improving search efficiency in high-dimensional decision spaces while balancing convergence speed and accuracy. Furthermore, by applying k-means clustering to dynamically select representative candidate solutions for finite element evaluation, the method effectively avoids frequent, computationally intensive FEA.

The remainder of this paper is organized as follows: Section 2 describes the optimization problem of secondary source deployment and presents the proposed Kriging surrogate-assisted Interior Point Method-assisted Differential Evolution with Weibull distribution (IPMDEW) method. Section 3 presents the simulation experiments that validate the accuracy improvement of the Kriging model during the iterative process and the optimization effectiveness of the IPMDEW algorithm. Finally, Section 4 concludes with a summary and outlines directions for future research.

The proposed approach utilizes the Kriging surrogate model and incorporates hybrid differential evolution with clustering-based iterative model updates to address efficiency challenges in expensive optimization problems.

2.1 Description of the Secondary Source Optimization Problem

Active Noise Control is based on the principle of destructive interference of sound waves. In an ideal fluid medium, neglecting the reflection of spherical waves, the sound pressure at any point in space can be expressed as:

where,

By defining the field point as the coordinate origin and taking into account the attenuation effect of each sound source, the resultant sound pressure at the field point derived from the principle of coherent wave superposition can be expressed as:

Typically, the sound pressure level (SPL) is used to evaluate the noise reduction performance, where a lower SPL indicates better performance. The SPL function can be defined as:

where,

Finally, the optimization problem can be formulated as follows:

where
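The superposition and SPL evaluation described in this subsection can be illustrated with a minimal sketch. It assumes free-field monopole sources whose complex amplitude at distance r is (A/r)·exp(j(φ − kr)), a reference pressure of 20 µPa, and the field point at the coordinate origin. The function names, source positions, amplitudes and frequency are illustrative assumptions and are not taken from the paper.

```python
import cmath
import math

P_REF = 20e-6  # reference sound pressure in air, Pa


def resultant_pressure(sources, freq_hz, c=343.0):
    """Complex pressure amplitude at the origin from coherent point sources.

    Each source is (x, y, z, amplitude, phase). A spherical wave decays as 1/r
    and accumulates a phase lag of k*r over the propagation distance r.
    """
    k = 2.0 * math.pi * freq_hz / c  # wavenumber
    total = 0j
    for x, y, z, amp, phase in sources:
        r = math.sqrt(x * x + y * y + z * z)
        total += (amp / r) * cmath.exp(1j * (phase - k * r))
    return total


def spl_db(p_complex):
    """Sound pressure level in dB from a complex peak amplitude."""
    p_rms = abs(p_complex) / math.sqrt(2.0)
    return 20.0 * math.log10(max(p_rms, 1e-12) / P_REF)


# Hypothetical layout: one primary source and one secondary source at the
# same distance from the field point, driven in anti-phase and slightly weaker.
primary = (2.0, 0.0, 0.0, 1.0, 0.0)
secondary = (0.0, 2.0, 0.0, 0.9, math.pi)

spl_before = spl_db(resultant_pressure([primary], 100.0))
spl_after = spl_db(resultant_pressure([primary, secondary], 100.0))
print(f"SPL before: {spl_before:.2f} dB, after: {spl_after:.2f} dB")
```

In this toy case the residual amplitude is one tenth of the primary amplitude, so the level at the field point drops by 20 dB. In the deployment problem the positions and initial phases of the secondary sources are the decision variables.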

2.2 Construction of the Kriging Model

For a given dataset

Based on the mean and variance, the conditional probability for a given value

To ensure the obtained conditional probability is maximized, the maximum likelihood estimation method is employed to compute the partial derivatives with respect to

Next, prediction is performed using the aforementioned Kriging model. Assuming a prediction point

where,

By taking the partial derivative of Eq. (10), the predicted value
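As a rough illustration of the construction outlined in this subsection, the sketch below fits an ordinary Kriging model with a Gaussian correlation function and predicts at a new point. It is a simplified stand-in under stated assumptions: a single correlation parameter `theta` is chosen from a small candidate list by the concentrated log-likelihood (rather than by solving the derivative conditions), a small `nugget` is added for numerical stability, and all function names and test data are hypothetical.

```python
import math


def gauss_corr(a, b, theta):
    """Gaussian correlation exp(-theta * squared Euclidean distance)."""
    return math.exp(-theta * sum((ai - bi) ** 2 for ai, bi in zip(a, b)))


def cholesky(m):
    """Lower-triangular Cholesky factor of a symmetric positive definite matrix."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = m[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            low[i][j] = math.sqrt(s) if i == j else s / low[j][j]
    return low


def chol_solve(low, b):
    """Solve (L L^T) x = b by forward and back substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(low[i][k] * y[k] for k in range(i))) / low[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


def fit_kriging(xs, ys, thetas=(5.0, 20.0, 80.0), nugget=1e-12):
    """Pick theta by concentrated log-likelihood and store the predictor weights."""
    n = len(xs)
    best = None
    for theta in thetas:
        corr = [[gauss_corr(xs[i], xs[j], theta) + (nugget if i == j else 0.0)
                 for j in range(n)] for i in range(n)]
        low = cholesky(corr)
        # Closed-form estimate of the constant mean: (1' R^-1 y) / (1' R^-1 1)
        mu = sum(chol_solve(low, ys)) / sum(chol_solve(low, [1.0] * n))
        resid = [y - mu for y in ys]
        weights = chol_solve(low, resid)  # R^-1 (y - mu 1)
        sigma2 = sum(r * w for r, w in zip(resid, weights)) / n
        log_det = 2.0 * sum(math.log(low[i][i]) for i in range(n))
        log_like = -0.5 * n * math.log(sigma2) - 0.5 * log_det
        if best is None or log_like > best["log_like"]:
            best = {"theta": theta, "mu": mu, "weights": weights,
                    "xs": xs, "log_like": log_like}
    return best


def predict(model, x):
    """Kriging mean prediction: mu + r(x)' R^-1 (y - mu 1)."""
    r = [gauss_corr(x, xi, model["theta"]) for xi in model["xs"]]
    return model["mu"] + sum(ri * wi for ri, wi in zip(r, model["weights"]))


# Hypothetical 1-D training data standing in for expensive FEA evaluations.
xs = [[0.0], [0.2], [0.4], [0.6], [0.8], [1.0]]
ys = [math.sin(3.0 * x[0]) for x in xs]
model = fit_kriging(xs, ys)
pred = predict(model, [0.5])
print(model["theta"], pred)
```

The predictor interpolates the training data (up to the nugget), which is why newly validated FEA samples directly correct the surrogate in the regions where they are added.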

2.3 The Kriging Surrogate-Assisted Optimization Method

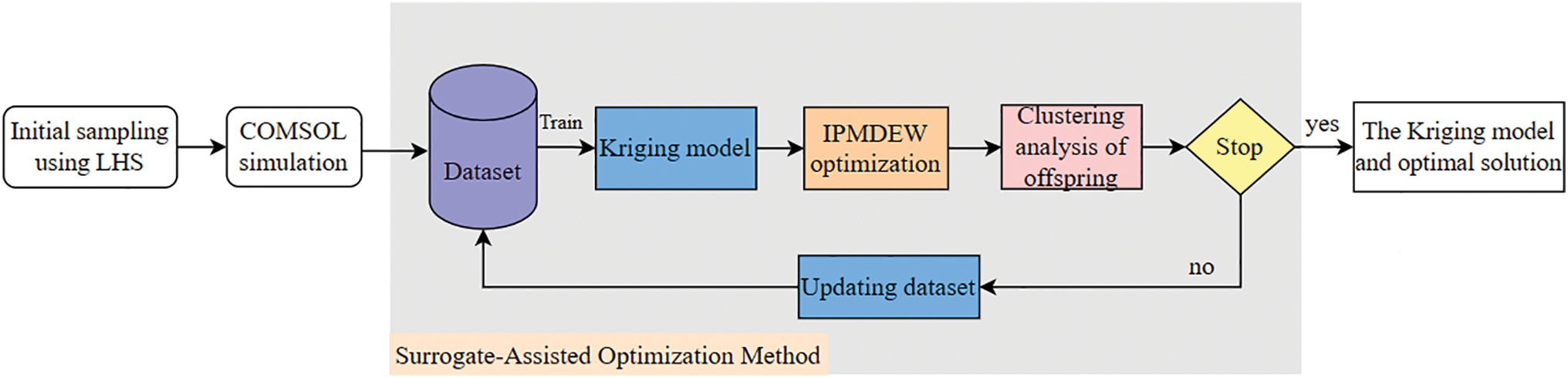

Given the challenges of computational complexity and cost in the secondary source deployment optimization problem, this study proposes an optimization framework based on Kriging surrogate assistance, which integrates the IPMDEW algorithm. The proposed strategy achieves an effective balance between global exploration and local search capabilities, as illustrated in Fig. 1.

Figure 1: Framework of the Kriging surrogate optimization algorithm.

During the initialization phase, a high-information-density initial sample set is constructed via design of experiments, combining Latin Hypercube Sampling (LHS) [16] with historical datasets. Throughout the iterative optimization process, the Kriging model approximates the objective response values in real time to reduce computational overhead. To overcome the limitations of traditional passive surrogate modeling, an active learning mechanism is proposed: in each iteration, k-means clustering is applied to the offspring population generated by differential evolution. This method screens individuals based on diversity, selecting the most spatially representative candidate solutions for finite element validation. This strategy ensures that newly added sample points effectively probe undersampled design subspaces and mitigates the local overfitting of the surrogate model that can result from sample clustering.
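The Latin Hypercube Sampling used for the initial design can be sketched as follows. This is a generic LHS routine, not the conditioned variant of [16]; the function name, the bounds (two coordinates and one phase per sample) and the sample count are illustrative assumptions.

```python
import random


def latin_hypercube(n_samples, bounds, seed=None):
    """Draw n_samples points so that each variable hits every stratum exactly once."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One random point inside each of n equal-width strata of [0, 1),
        # then shuffle so strata are paired randomly across variables.
        strata = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(strata)
        columns.append([low + u * (high - low) for u in strata])
    return [list(point) for point in zip(*columns)]


# Hypothetical decision variables: x and y position in metres, phase in radians.
bounds = [(-1.0, 1.0), (-1.0, 1.0), (0.0, 2.0 * 3.141592653589793)]
samples = latin_hypercube(10, bounds, seed=0)
for s in samples[:3]:
    print([round(v, 3) for v in s])
```

Because every variable is stratified, even a small initial sample covers the full range of each decision variable, which suits a setting where each sample costs one finite element run.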

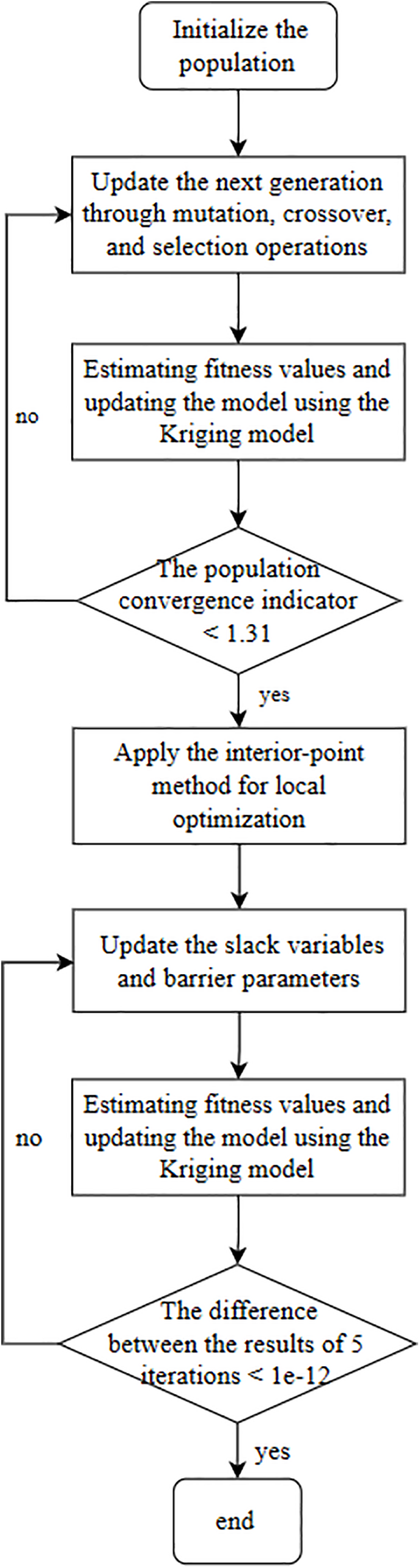

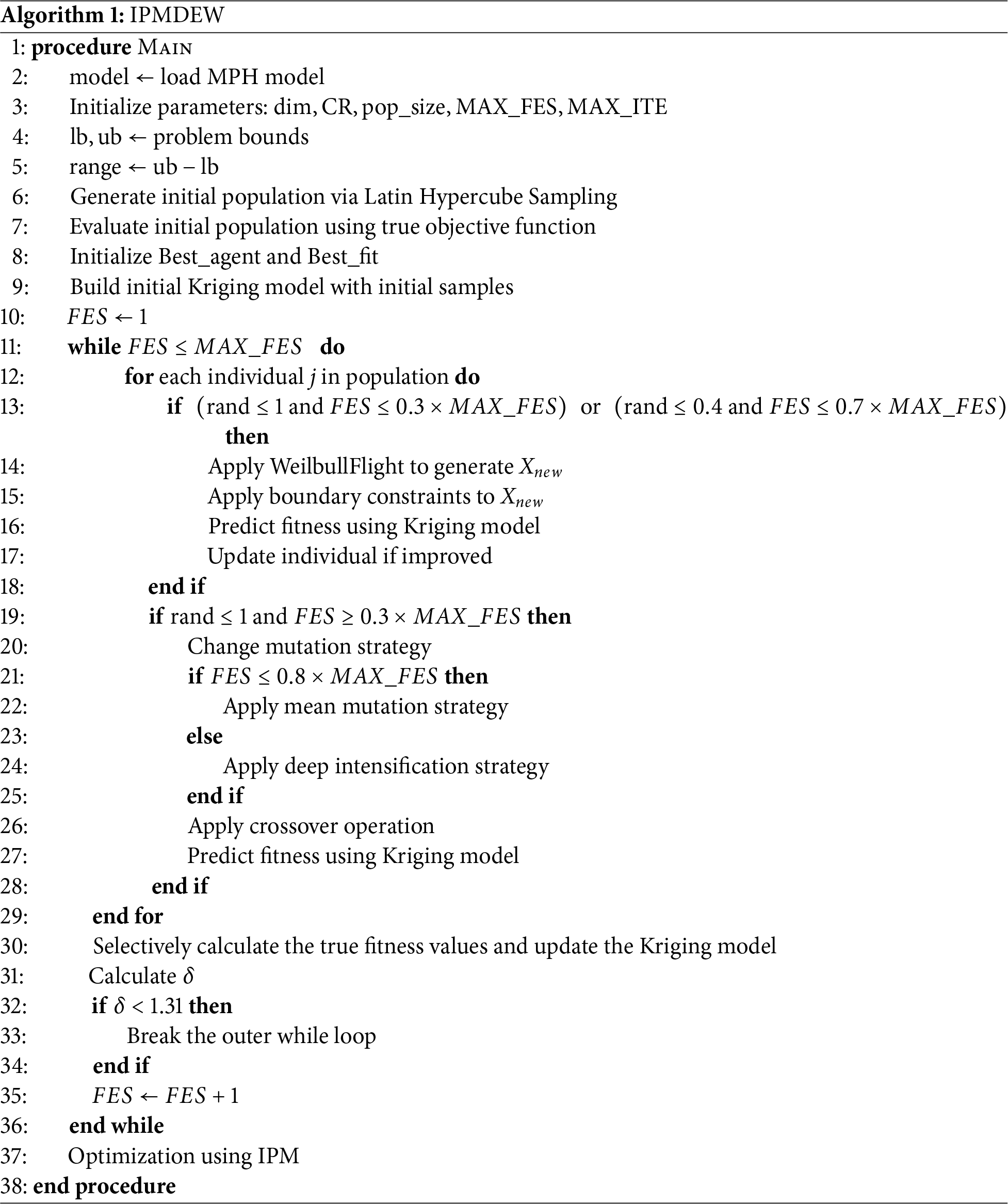

To optimize over the high-dimensional Kriging model, this paper adopts the integrated IPMDEW method (Algorithm 1). The algorithm employs the Weibull distribution-based Weighted Differential Evolution (MDEW) for global exploration. When the population converges, it switches to the Interior Point Method (IPM) for local refinement within the neighborhood of the best solution found. The detailed procedure is illustrated in Fig. 2.

Figure 2: Flowchart of the IPMDEW algorithm.

Step (1) Initialize the population. First, the MDEW algorithm initializes a population randomly, where each individual represents a potential solution

where

Step (2) Generate the offspring. Updating the population involves the following procedures.

(1) Introduce three mutation strategies [17], and generate the mutation vector

where

where

(2) Generate the new crossover vectors according to the following rule:

where

(3) Push the trial vector to the next generation if it has better fitness.

where
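The mutation, crossover and selection cycle of Step (2) can be sketched with a classic DE/rand/1/bin generation. This is not the three-strategy mutation scheme of [17]: the single mutation rule, the Weibull parameters used to draw the scale factor, the crossover rate and the sphere test function are all illustrative assumptions.

```python
import random


def de_generation(pop, fitness, objective, bounds, cr=0.9, rng=random):
    """One DE/rand/1/bin generation with greedy one-to-one selection."""
    n, dim = len(pop), len(pop[0])
    new_pop, new_fit = [], []
    for i in range(n):
        r1, r2, r3 = rng.sample([j for j in range(n) if j != i], 3)
        # Assumption: scale factor drawn from a Weibull distribution
        # (scale 0.5, shape 2.0) and capped at 1.0.
        f = min(rng.weibullvariate(0.5, 2.0), 1.0)
        mutant = [pop[r1][d] + f * (pop[r2][d] - pop[r3][d]) for d in range(dim)]
        # Clip the mutant back into the box constraints.
        mutant = [min(max(v, lo), hi) for v, (lo, hi) in zip(mutant, bounds)]
        # Binomial crossover; j_rand guarantees at least one mutant component.
        j_rand = rng.randrange(dim)
        trial = [mutant[d] if (rng.random() < cr or d == j_rand) else pop[i][d]
                 for d in range(dim)]
        trial_fit = objective(trial)
        # Keep the trial vector only if it is at least as good as its parent.
        if trial_fit <= fitness[i]:
            new_pop.append(trial)
            new_fit.append(trial_fit)
        else:
            new_pop.append(pop[i])
            new_fit.append(fitness[i])
    return new_pop, new_fit


def sphere(x):
    """Cheap stand-in for the surrogate-predicted objective."""
    return sum(v * v for v in x)


rng = random.Random(1)
bounds = [(-5.0, 5.0)] * 4
pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(20)]
fit = [sphere(p) for p in pop]
initial_fit = list(fit)
for _ in range(30):
    pop, fit = de_generation(pop, fit, sphere, bounds, rng=rng)
print(min(initial_fit), min(fit))
```

In the surrogate-assisted setting, `objective` would be the Kriging prediction rather than a finite element run, so a full generation costs almost nothing to evaluate.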

Step (3) Update the Kriging model. Offspring are selected using k-means clustering. The selected individuals are then validated through FEA to update both the dataset and the Kriging model.
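The clustering-based selection in Step (3) can be sketched as below: the offspring are clustered, and the individual nearest to each centroid is picked for finite element validation. The farthest-point initialization, the fixed iteration count, the number of clusters and the example offspring are assumptions made for this illustration.

```python
import math


def kmeans_representatives(points, k, n_iter=20):
    """Return indices of the points closest to the k cluster centroids."""
    # Assumption: deterministic farthest-point initialization of the centroids.
    centroids = [list(points[0])]
    while len(centroids) < k:
        farthest = max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        centroids.append(list(farthest))
    # Lloyd iterations: assign points to the nearest centroid, then recompute means.
    for _ in range(n_iter):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: math.dist(p, centroids[c]))
            clusters[nearest].append(p)
        for c, members in enumerate(clusters):
            if members:  # an empty cluster keeps its previous centroid
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    # One representative per cluster: the actual individual nearest the centroid.
    chosen = []
    for centroid in centroids:
        idx = min(range(len(points)), key=lambda i: math.dist(points[i], centroid))
        if idx not in chosen:
            chosen.append(idx)
    return chosen


# Hypothetical offspring forming three groups in a 2-D decision space.
offspring = [
    [0.0, 0.0], [0.2, 0.1], [0.1, 0.3],
    [5.0, 5.0], [5.1, 4.8], [4.9, 5.2],
    [10.0, 0.0], [9.8, 0.2], [10.1, -0.1],
]
chosen = kmeans_representatives(offspring, k=3)
print(chosen)
```

Only the chosen individuals would be sent to the finite element model, so the number of expensive evaluations per iteration is bounded by `k` regardless of the population size.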

Step (4) Evaluate the population convergence threshold. To enhance the global search capability of MDEW, a population convergence threshold is defined. The population’s standard deviation in each dimension is calculated as follows:

where

The population convergence threshold

Through multiple convergence experiments, the convergence threshold
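A minimal sketch of the convergence test in Step (4) follows. The exact aggregation rule and threshold value are not shown in this text, so the sketch assumes that the switch to the interior point method is triggered when the standard deviation of every decision variable falls below a given threshold; the function name and the example threshold of 1e-2 are hypothetical.

```python
import statistics


def population_converged(pop, threshold):
    """Return (flag, per-dimension std); flag is True when every std is below threshold."""
    per_dim_std = [statistics.pstdev(column) for column in zip(*pop)]
    return max(per_dim_std) < threshold, per_dim_std


# A spread-out population keeps the global search running ...
spread = [[0.0, 1.0], [2.0, 3.0], [4.0, 5.0]]
# ... while a tightly clustered one would trigger the local refinement phase.
tight = [[1.000, 2.000], [1.001, 2.001], [0.999, 1.999]]

print(population_converged(spread, 1e-2)[0])
print(population_converged(tight, 1e-2)[0])
```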

Step (5) Construct the approximation function. The approximation function is constructed using the logarithmic potential function and the Lagrange multiplier as follows:

The added logarithmic term acts as a barrier function,
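The effect of the logarithmic barrier in Step (5) can be seen on a one-dimensional toy problem. The sketch assumes inequality constraints written as g(x) ≤ 0 with the slack eliminated as s = −g(x), and it omits the Lagrange multiplier term of the full approximation function; the problem (minimize x subject to x ≥ 1) and the helper names are illustrative.

```python
import math


def barrier_objective(f, constraints, x, mu):
    """f(x) - mu * sum(ln s_i) with slacks s_i = -g_i(x) for constraints g_i(x) <= 0."""
    slacks = [-g(x) for g in constraints]
    if min(slacks) <= 0.0:
        return math.inf  # outside the strict interior of the feasible set
    return f(x) - mu * sum(math.log(s) for s in slacks)


def minimize_1d(func, lo, hi, iters=200):
    """Ternary search for the minimizer of a unimodal function on [lo, hi]."""
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if func(m1) < func(m2):
            hi = m2
        else:
            lo = m1
    return 0.5 * (lo + hi)


def objective(x):
    return x


constraints = [lambda x: 1.0 - x]  # encodes x >= 1

minimizers = {}
for mu in (0.1, 0.001):
    minimizers[mu] = minimize_1d(
        lambda x, mu=mu: barrier_objective(objective, constraints, x, mu), 1.0, 3.0
    )
print(minimizers)
```

For this problem the barrier minimizer is x = 1 + μ, so shrinking the barrier parameter moves the iterate toward the constrained optimum at x = 1 while keeping it strictly feasible.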

Step (6) Update the slack variable and barrier parameter. Each iteration applies either a direct step or a conjugate gradient step, based on an evaluation function defined as:

(1) The direct step

where

(2) The conjugate gradient step obtains the Lagrangian multipliers by solving the following equation:

When satisfying

Due to the black-box nature of the distributed active noise optimal control model, the finite difference method was employed to compute all first- and second-order derivatives. The validity of this method is supported by Refs. [18,19].
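The finite difference derivatives mentioned above can be sketched with central differences. The step sizes and the quadratic test function are illustrative assumptions; the paper does not state which difference scheme or step size was used.

```python
def fd_gradient(f, x, h=1e-5):
    """Central-difference approximation of the gradient of f at x."""
    grad = []
    for i in range(len(x)):
        forward, backward = list(x), list(x)
        forward[i] += h
        backward[i] -= h
        grad.append((f(forward) - f(backward)) / (2.0 * h))
    return grad


def fd_hessian(f, x, h=1e-4):
    """Central-difference approximation of the symmetric Hessian of f at x."""
    n = len(x)
    hess = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i, n):
            def shifted(di, dj):
                y = list(x)
                y[i] += di * h
                y[j] += dj * h
                return f(y)
            value = (shifted(1, 1) - shifted(1, -1)
                     - shifted(-1, 1) + shifted(-1, -1)) / (4.0 * h * h)
            hess[i][j] = hess[j][i] = value
    return hess


def quadratic(x):
    """Test function with known gradient and Hessian."""
    return x[0] ** 2 + 3.0 * x[0] * x[1] + 2.0 * x[1] ** 2


grad = fd_gradient(quadratic, [1.0, 2.0])
hess = fd_hessian(quadratic, [1.0, 2.0])
print(grad, hess)
```

Each gradient costs 2n objective evaluations and each Hessian on the order of 2n² evaluations, which is affordable only because the objective here is the cheap Kriging prediction rather than a finite element run.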

The parameter

The barrier parameter is updated using the predictor-corrector step [20]. In the predictor step, a linearized step size is employed, and with

Define and as the maximum step sizes that satisfy the constraints.

To evaluate the performance of the predictor-corrector step in updating the barrier parameter, a complementary parameter

where

The second correction step, or centering step, is computed by solving the following equation:

where

Step (7) Perform iterative operations. If the difference between the results of the last five iterations is less than

The experiments were conducted on a PC running Windows 10 Pro (64-bit), equipped with an Intel(R) Core(TM) i7-6700 CPU @ 3.40 GHz and 16 GB of RAM. All proposed algorithms were implemented in MATLAB 9.9 (R2020b).

In the finite element simulation, the computational domain is configured as follows: each wavelength is discretized with no fewer than 8 mesh elements to ensure convergence, and a perfectly matched layer (PML) is applied as the absorbing boundary condition. The MUltifrontal Massively Parallel Sparse Direct Solver (MUMPS) is selected for computation, with the relative tolerance set to 0.001. The Kriging model uses a Gaussian radial basis function (RBF) kernel to characterize the correlation structure of the spatial data; this kernel captures the similarity between sample points through an exponential function of the squared radial distance. The hyperparameters are estimated by maximum likelihood estimation, i.e., by maximizing the likelihood function to fit the model, thereby enhancing prediction accuracy and robustness.

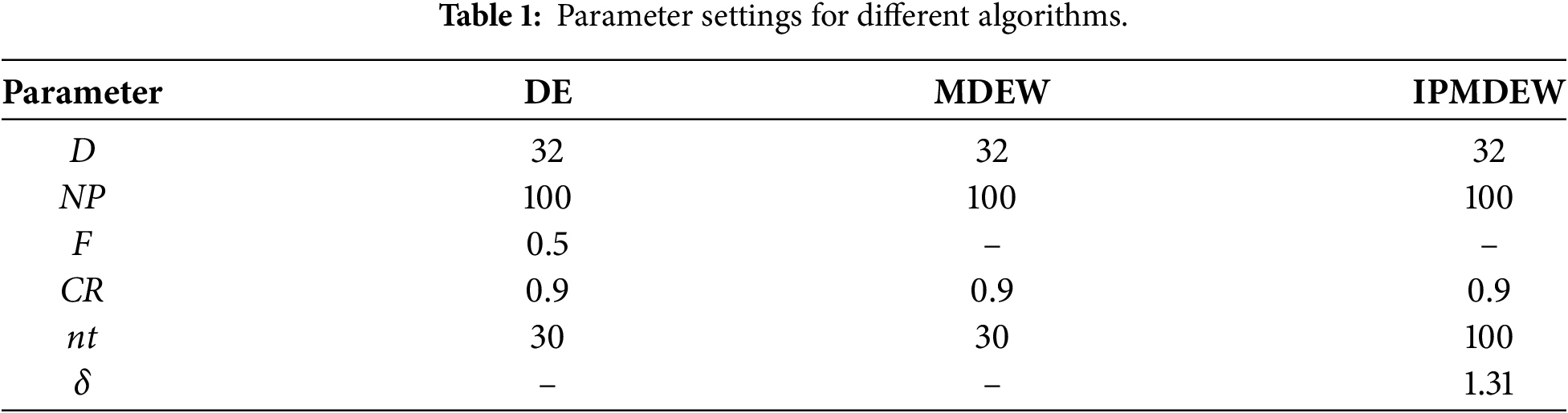

In the experimental design, the IPMDEW algorithm was employed to optimize the Kriging model. To systematically evaluate both the optimization performance of IPMDEW and the prediction accuracy of the Kriging model, multiple comparative schemes were established. For comparative analysis, both the standard DE algorithm and the MDEW algorithm—the latter of which exhibits enhanced global exploration capabilities—were introduced. During the FEA optimization process, key algorithm parameters—including population size (NP), mutation factor (F), crossover probability (CR) and number of iterations (

To assess the prediction accuracy of the Kriging surrogate model, a test sample set was generated within the parameter space using the LHS method, and the predictions of the Kriging model were compared against FEA.

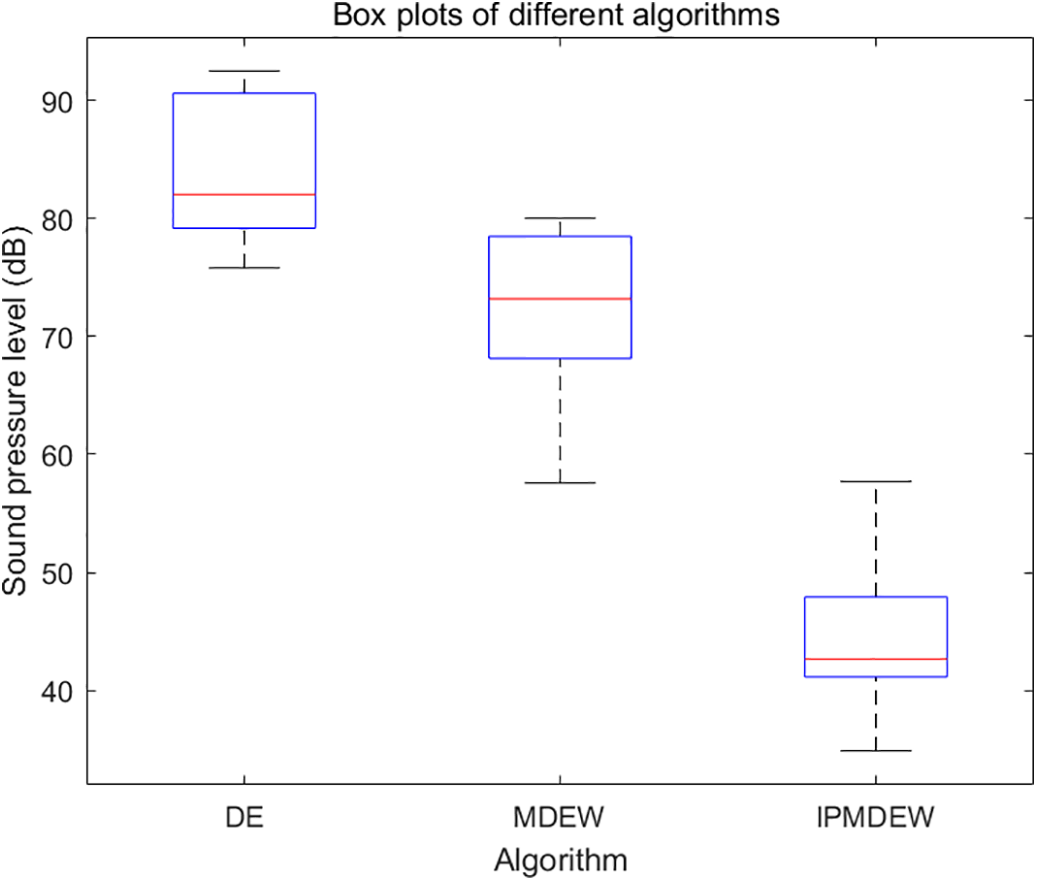

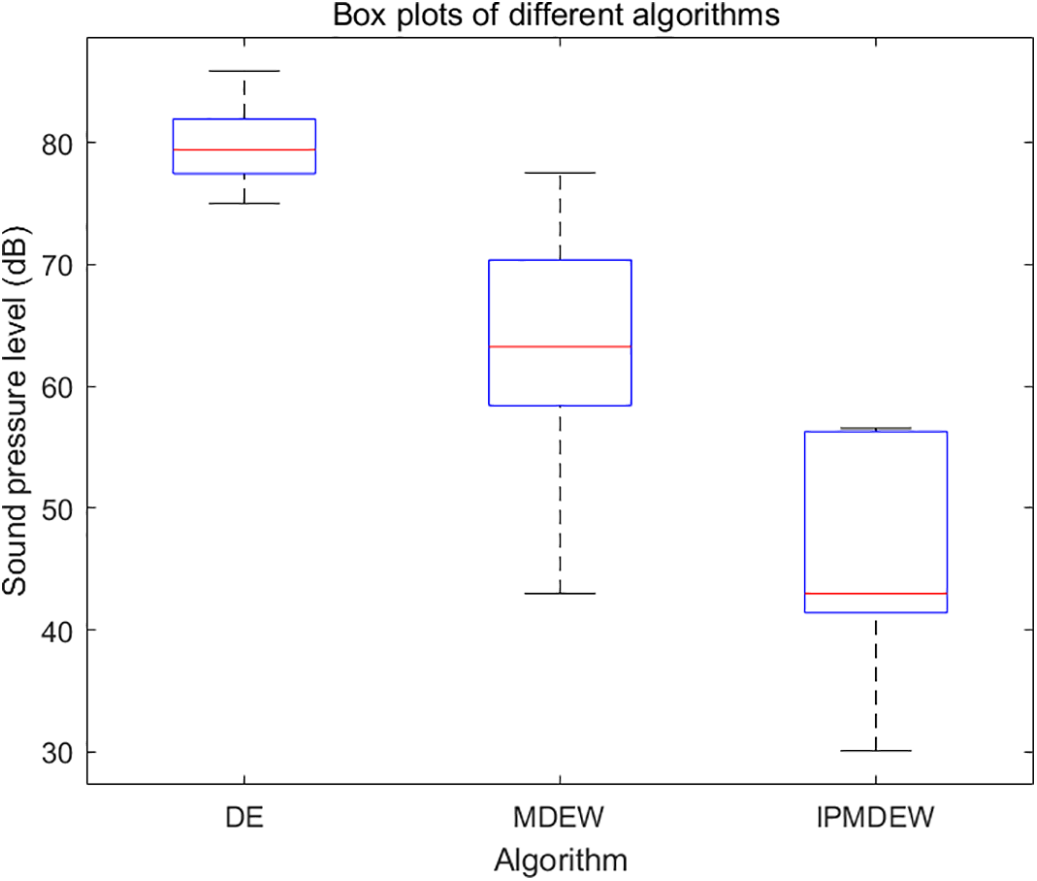

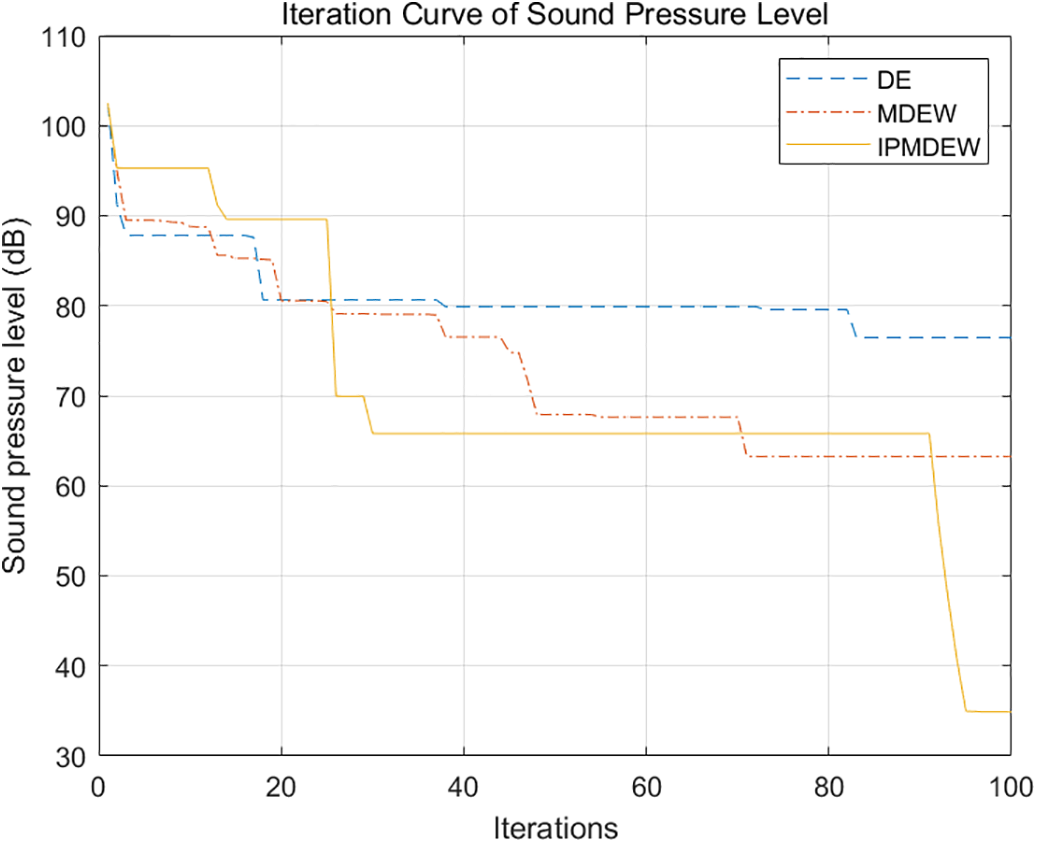

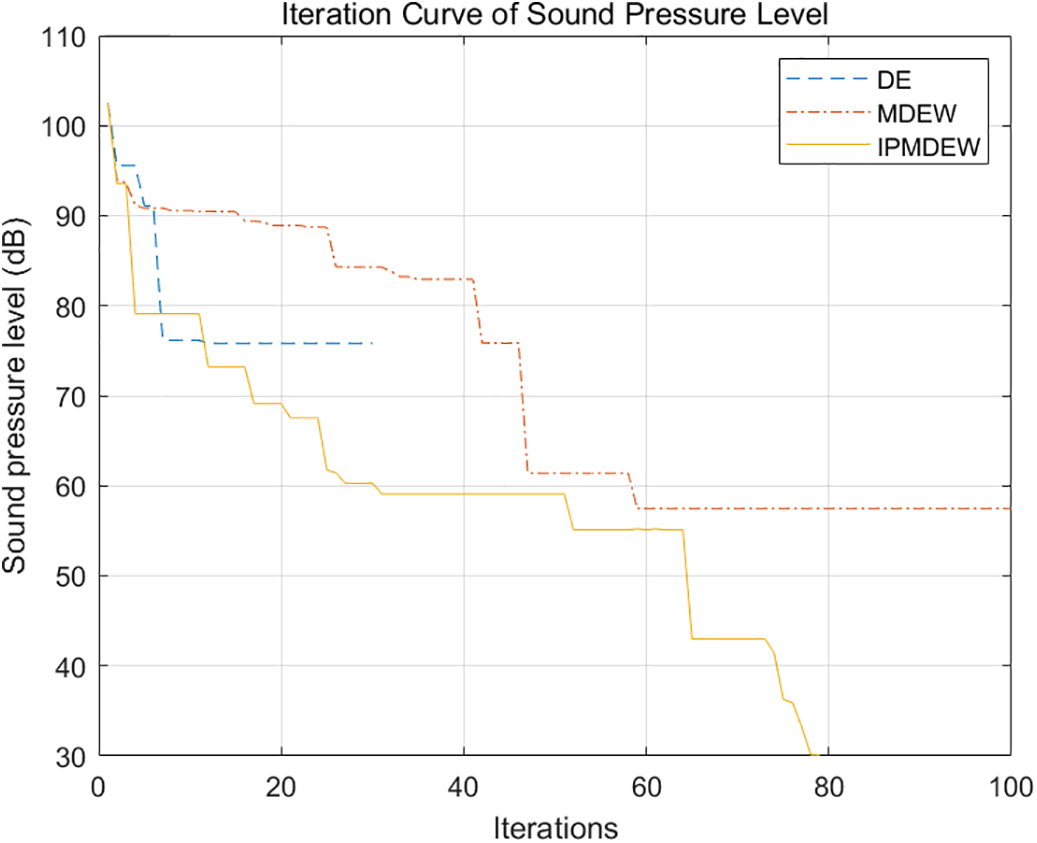

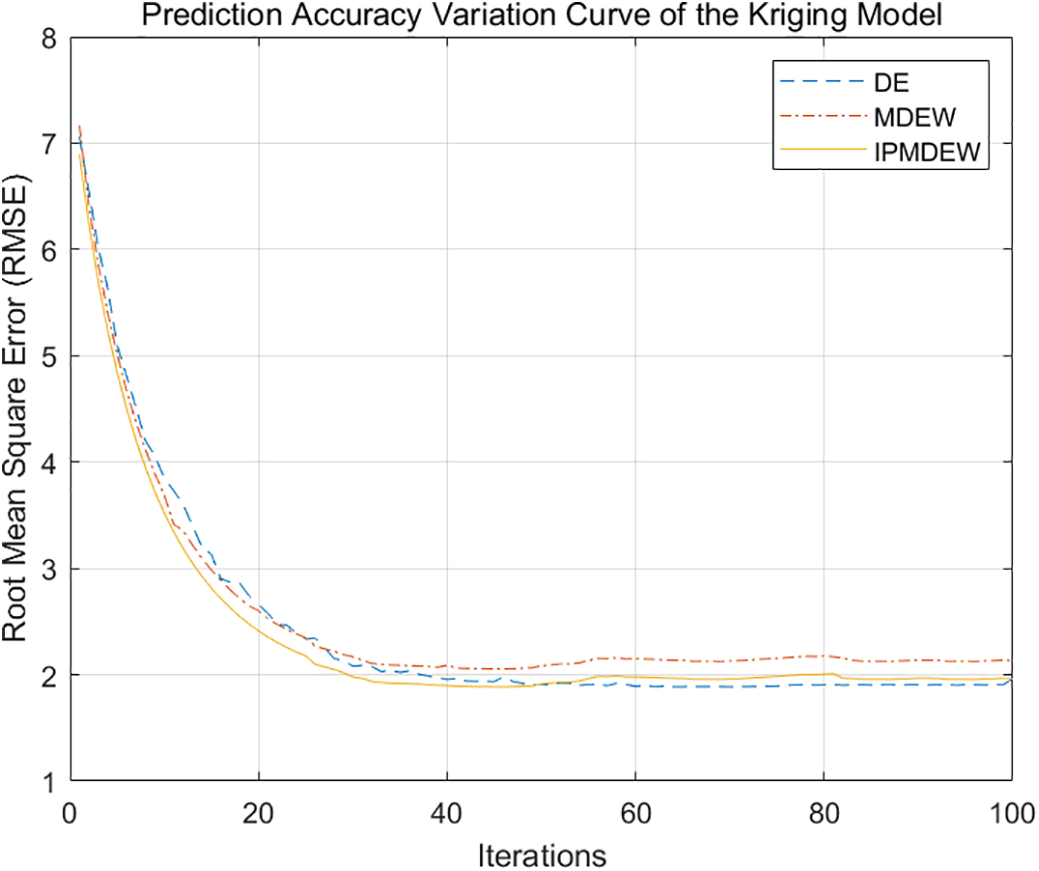

The DE, MDEW, and IPMDEW algorithms were used to perform twenty independent optimization runs based on the Kriging surrogate model (Figs. 3 and 4). As shown by the convergence curves in Figs. 5 and 6, which compare the three algorithms under Kriging-based surrogate optimization and non-surrogate optimization, the IPMDEW algorithm exhibits significant performance advantages in optimizing both the surrogate model and the direct simulation model. These advantages are evident in its faster convergence speed and superior solution quality. Furthermore, Fig. 7 shows that the prediction accuracy of the Kriging model improved consistently throughout the iterative process, eventually stabilizing with minor fluctuations. This validates its reliability as an efficient surrogate model.

Figure 3: Box plots of multiple runs for different algorithms under surrogate optimization.

Figure 4: Box plots of multiple runs for different algorithms under non-surrogate optimization.

Figure 5: Iteration curves of different algorithms for Kriging surrogate optimization.

Figure 6: Iteration curves of different algorithms for non-surrogate optimization.

Figure 7: Prediction accuracy variation curve of the Kriging model.

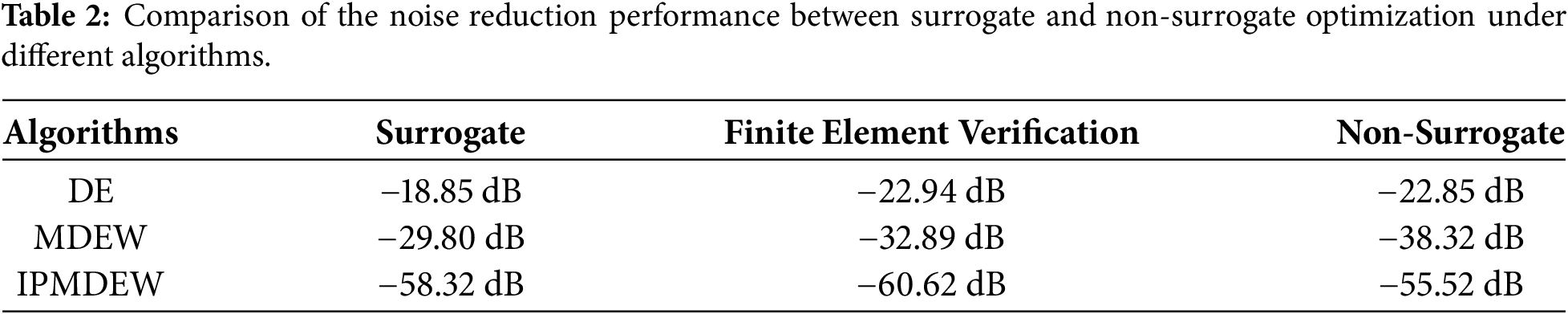

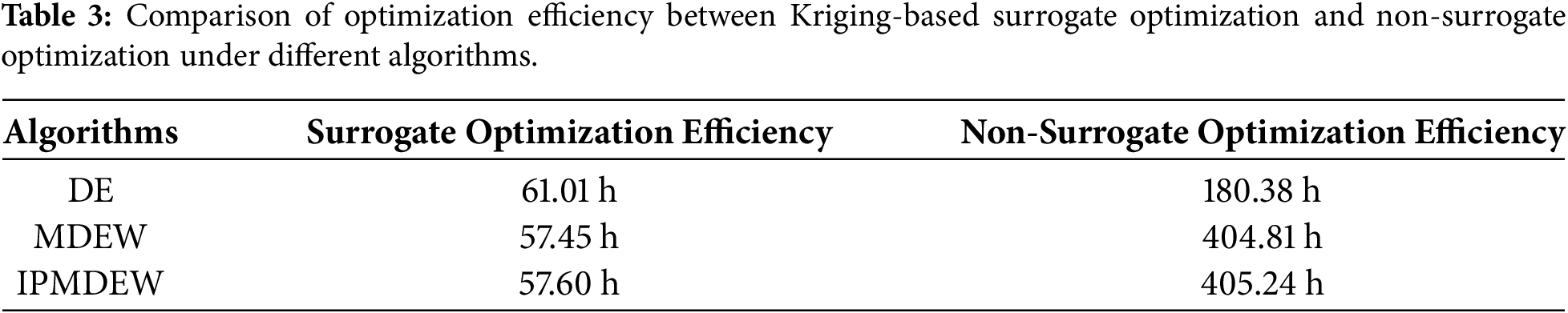

A comparison of the results in Tables 2 and 3 reveals the average performance of the different algorithms under Kriging-based surrogate optimization and non-surrogate optimization, with the optimization outcomes further validated via finite element analysis. The DE algorithm exhibited premature convergence due to its weak global search capability. Although more effective than DE, the MDEW algorithm was limited by the Kriging model’s prediction accuracy during iterations; as a result, its final noise reduction performance did not reach the optimum achieved by direct simulation. Despite these limitations, the Kriging-assisted optimization approach dramatically improved the optimization efficiency of all algorithms evaluated. The IPMDEW algorithm, by contrast, effectively balanced global exploration and local exploitation. It achieved a noise reduction that closely approximated the optimum while substantially improving computational efficiency, successfully co-optimizing both performance and efficiency.

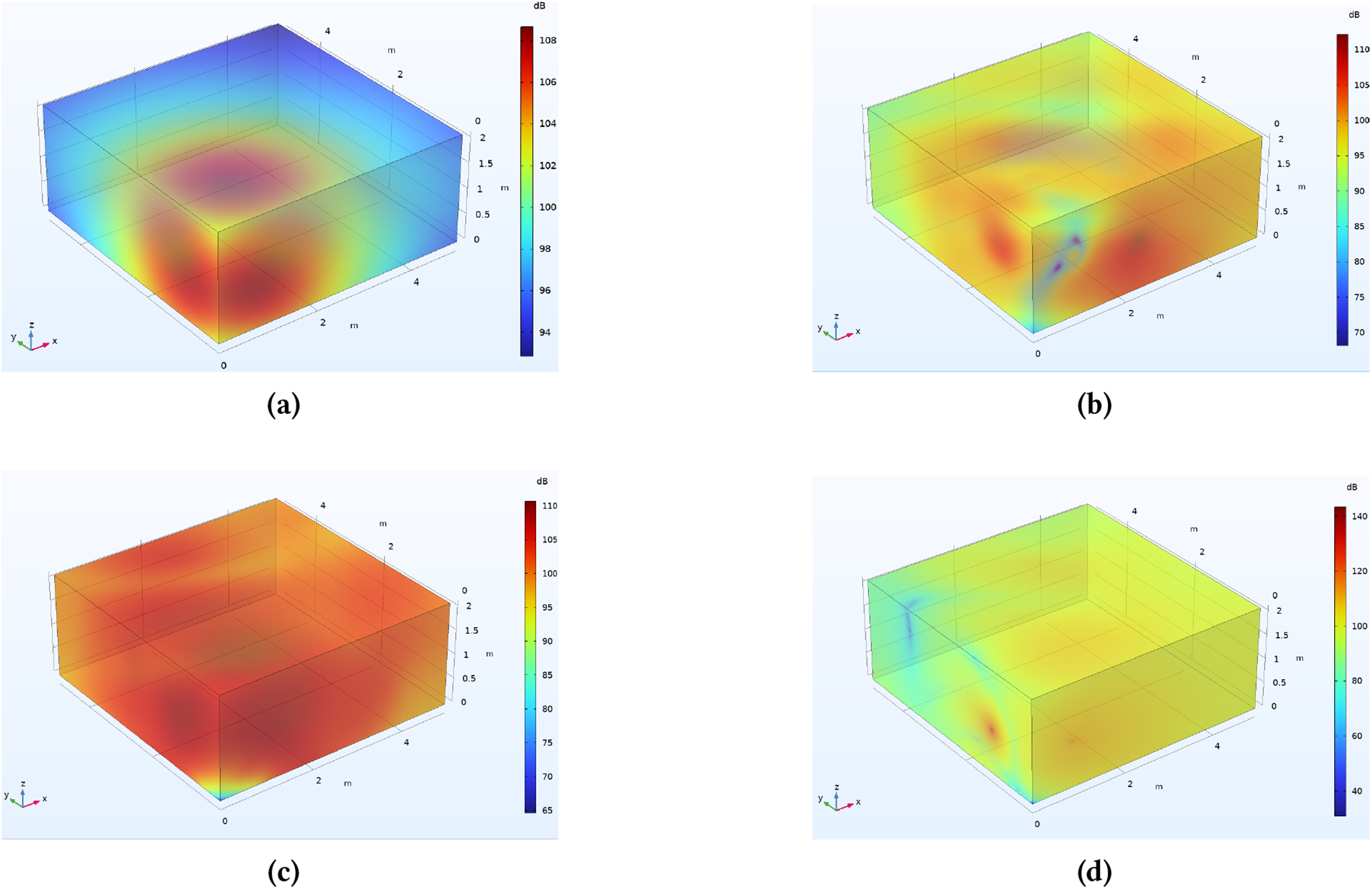

To evaluate the predictive capability of the Kriging model for optimal solutions, we validated its optimization results through finite element simulation. Fig. 8 shows a comparison of the spatial sound pressure level distributions before and after optimization achieved by the three algorithms. The errors between the Kriging-assisted optimization results and the FEA results were all within 3 dB, confirming the reliability of the surrogate model.

Figure 8: Finite Element validation of Kriging surrogate-assisted optimization under different algorithms. (a) baseline sound pressure level distribution; (b) DE algorithm; (c) MDEW algorithm; (d) IPMDEW algorithm.

This paper presents a surrogate-assisted hybrid differential evolution algorithm to address the high-dimensional expensive optimization problem of secondary source deployment in active noise control. By employing a Kriging model as a surrogate for finite element-based sound field simulations, combined with a k-means clustering-based dynamic update mechanism, the optimization time was reduced by 85.79%, while model reliability in non-uniform sound fields was maintained. The IPMDEW algorithm integrates the global exploration capability of MDEW with the gradient-based local optimization of the IPM, achieving dynamic switching via a population diversity threshold. Compared to conventional DE and MDEW algorithms, IPMDEW improves noise reduction performance by 67.7% and 48.9%, respectively. The optimized secondary source deployment achieved a noise reduction of 58.32 dB, approaching the accuracy of finite element simulations and validating the algorithm’s effectiveness and precision in complex acoustic environments. This work introduces a surrogate-based hybrid differential evolution approach to acoustic source deployment, providing a practical and efficient solution for optimizing secondary source arrangements in multi-channel spatial active noise control systems. The method has been validated for its reliability in steady sound fields; however, its adaptability to dynamic time-varying acoustic environments, such as those with real-time noise source variations, remains to be investigated. In such scenarios, the existing offline surrogate model update mechanism may struggle to cope with rapid changes, potentially leading to delays in updates and a decline in prediction accuracy.

Acknowledgement: The author would like to express sincere gratitude to the supervisor of this work for his invaluable guidance, continuous support, and patience throughout this study. His profound knowledge and rigorous scientific attitude have been a great asset to the author's research career.

Funding Statement: The authors received no specific funding for this study.

Author Contributions: Chao Wu: writing—original draft, software, experiment, validation. Weijian Kong: writing—review & editing, methodology, conceptualization. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: Data used to support the findings of this study are included in the article.

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest.

References

1. Brancatia A, Aliabadi MH, Mallardo V. ABEM sensitivity formulation for three-dimensional active noise control. Int J Numer Methods Eng. 2012;90(9):1183–206. doi:10.1002/nme.3369.

2. Sahu N, Wu L, Babu P, Bhavani Shankar MR, Ottersten B. Optimal sensor placement for source localization: a unified ADMM approach. IEEE Trans Veh Technol. 2022;71(4):4359–72. doi:10.1109/TVT.2022.3146603.

3. Liu HQ, Ren ZG, He CL, Du W. A surrogate-assisted differential evolution algorithm with a dual-space-driven selection strategy for expensive optimization problems. Complex Intell Syst. 2025;11(5):230. doi:10.1007/s40747-025-01812-0.

4. Guo XQ, Wei FF, Zhang J, Chen WN. A classifier-ensemble-based surrogate-assisted evolutionary algorithm for distributed data-driven optimization. IEEE Trans Evol Comput. 2025;29(3):711–25. doi:10.1109/TEVC.2024.3361000.

5. Zhou RF, Ren CL, Meng ZY, Zhu HB. Surrogate-assisted differential evolution with search space tightening for high-dimensional expensive optimization problems. IEEE Trans Syst Man Cybern Syst. 2025;55(10):7356–68. doi:10.1109/TSMC.2025.3582897.

6. Liu G, Su X, Zhou R, Liu N, Zhang L, Tian Y. A fast generalized surrogate-assisted evolutionary algorithm for solving expensive constrained optimization problems. J Supercomput. 2025;81(11):1185. doi:10.1007/s11227-025-07660-6.

7. Rahi KH, Singh HK, Ray T. Partial evaluation strategies for expensive evolutionary constrained optimization. IEEE Trans Evol Comput. 2021;25(6):1103–17.

8. Zhang J, Li M, Yue X, Wang X, Shi M. A hierarchical surrogate assisted optimization algorithm using teaching-learning-based optimization and differential evolution for high-dimensional expensive problems. Appl Soft Comput. 2024;152:111212. doi:10.1016/j.asoc.2023.111212.

9. Pan JS, Liu N, Chu SC, Lai T. An efficient surrogate-assisted hybrid optimization algorithm for expensive optimization problems. Inf Sci. 2021;561(2):304–25. doi:10.1016/j.ins.2020.11.056.

10. Jin YC. Surrogate-assisted evolutionary computation: recent advances and future challenges. Swarm Evol Comput. 2011;1(2):61–70. doi:10.1016/j.swevo.2011.05.001.

11. Storn R, Price K. Differential evolution: a simple and efficient heuristic for global optimization over continuous spaces. J Glob Optim. 1997;11(4):341–59. doi:10.1023/A:1008202821328.

12. Farda I, Thammano A, Morris J. Differential evolution with self-adaptive mutation and population improvement strategy for optimization problems. IEEE Access. 2024;12:131809–29. doi:10.1109/ACCESS.2024.3460385.

13. Liu X, Sun JY, Zhang QF, Wang Z, Xu Z. Learning to learn evolutionary algorithm: a learnable differential evolution. IEEE Trans Emerg Top Comput Intell. 2023;7(6):1605–20. doi:10.1109/TETCI.2023.3251441.

14. Chew WC, Liu QH. Using perfectly matched layers for elastodynamics. In: Proceedings of the IEEE Antennas and Propagation Society International Symposium, 1996 Digest; 1996 Jul 21–26; Baltimore, MD, USA. p. 366–9. doi:10.1109/APS.1996.549614.

15. Zhan DW, Cheng YS, Liu J. Expected improvement matrix-based infill criteria for expensive multiobjective optimization. IEEE Trans Evol Comput. 2017;21(6):956–75. doi:10.1109/TEVC.2017.2697503.

16. Ioardanis I, Koukouvino C, Silou I. Classification accuracy improvement using conditioned latin hypercube sampling in supervised machine learning. In: Proceedings of the 2022 12th International Conference on Dependable Systems, Services and Technologies (DESSERT); 2022 Dec 9–11; Athens, Greece. p. 1–5. doi:10.1109/DESSERT58054.2022.10018677.

17. Layeb A. Differential evolution algorithms with novel mutations, adaptive parameters, and Weibull flight operator. Soft Comput. 2024;28:7039–91. doi:10.1007/s00500-023-09561-3.

18. Grapiglia GN, Gonçalves MLN, Silva GN. A cubic regularization of Newton’s method with finite difference Hessian approximations. Numer Algorithms. 2022;99(2):607–30. doi:10.1007/s11075-021-01200-y.

19. Andrei N. Diagonal approximation of the hessian by finite differences for unconstrained optimization. J Optim Theory Appl. 2020;185(3):859–79. doi:10.1007/s10957-020-01676-z.

20. Nocedal J, Wright SJ. Interior-point methods for nonlinear programming. In: Numerical optimization. Springer series in operations research and financial engineering. New York, NY, USA: Springer; 2006. p. 563–97. doi:10.1007/978-0-387-40065-5_19.

Copyright © 2026 The Author(s). Published by Tech Science Press.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.