Open Access

ARTICLE

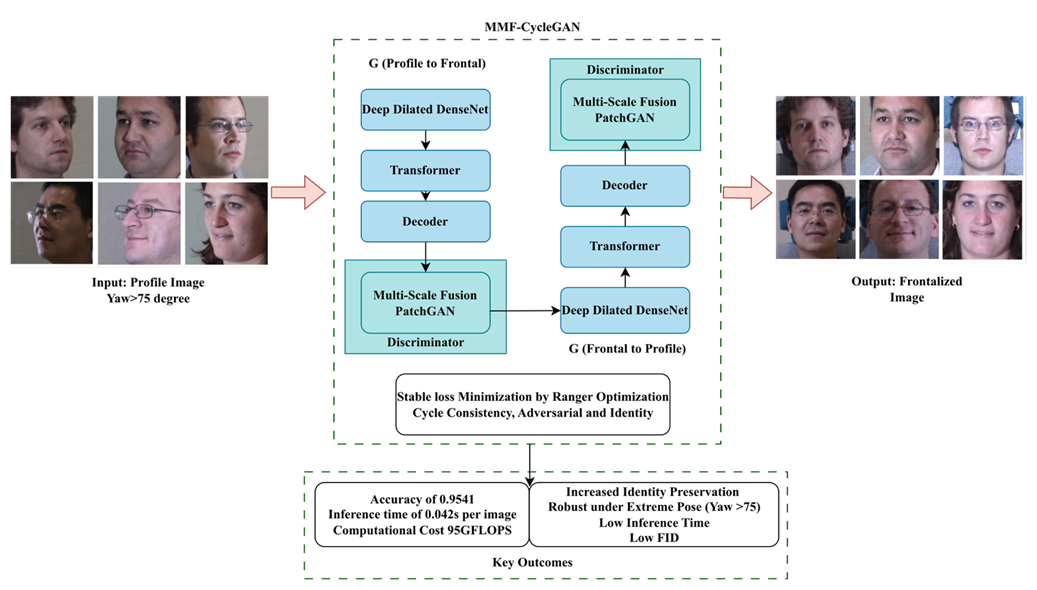

MMF-CycleGAN: A Multi-Scale Generative Framework for Robust and Identity-Preserving Face Frontalization

1 Department of Computer Science and Engineering, College of Engineering Guindy, Anna University, Chennai, India

2 Department of Information Technology, Madras Institute of Technology, Anna University, Chrompet, Chennai, India

* Corresponding Authors: Shiloah Elizabeth Darmanayagam.

Computer Modeling in Engineering & Sciences 2026, 146(3), 34 https://doi.org/10.32604/cmes.2026.077293

Received 06 December 2025; Accepted 04 February 2026; Issue published 30 March 2026

Abstract

Recognizing frontal faces from non-frontal or profile images is a major challenge: pose changes, self-occlusions, and the complete loss of important structural and textural components depress both recognition accuracy and visual fidelity. This paper introduces a new deep generative framework, Modified Multi-Scale Fused CycleGAN (MMF-CycleGAN), for robust and photo-realistic profile-to-frontal face synthesis. After a pre-processing stage, the generator performs hierarchical feature extraction with a Deep Dilated DenseNet encoder, followed by a transformer and a decoder. The proposed Multi-Scale Fusion PatchGAN discriminator enforces consistency at multiple spatial resolutions, leading to sharper textures and improved global facial geometry. In addition, the Ranger optimizer improves GAN training stability and identity preservation by effectively balancing the adversarial, identity, and cycle-consistency losses. Experiments on three benchmark datasets show that MMF-CycleGAN achieves accuracies of 0.9541, 0.9455, and 0.9422, F1-scores of 0.9654, 0.9641, and 0.9614, and AUC values of 0.9742, 0.9714, and 0.9698, respectively, with an extreme-pose accuracy (yaw > 60°) of 0.92. Despite its enhanced architecture, the framework maintains an efficient inference time of 0.042 s per image, making it suitable for real-time biometric authentication, surveillance, and security applications in unconstrained environments.
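
To make the balance of adversarial, identity, and cycle-consistency losses concrete, the following is a minimal sketch of how such a generator objective is commonly assembled in CycleGAN-style training. It is not the authors' implementation. The function names, the least-squares adversarial form, the L1 form of the identity and cycle terms, and the weights `lambda_cyc` and `lambda_id` are all assumptions made for illustration; the paper's identity term in particular may be defined differently. Images and discriminator patch scores are represented as flat lists of floats to keep the example dependency-free.

```python
from typing import Sequence


def l1(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean absolute error between two equal-length flat sequences."""
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def lsgan_generator_loss(patch_scores: Sequence[float]) -> float:
    """Least-squares adversarial loss: push every patch score toward 1 (assumed form)."""
    return sum((s - 1.0) ** 2 for s in patch_scores) / len(patch_scores)


def multi_scale_adversarial(scores_per_scale: Sequence[Sequence[float]]) -> float:
    """Average the adversarial loss over discriminator outputs at several resolutions."""
    losses = [lsgan_generator_loss(scores) for scores in scores_per_scale]
    return sum(losses) / len(losses)


def generator_objective(
    scores_per_scale: Sequence[Sequence[float]],
    real: Sequence[float],
    reconstructed: Sequence[float],
    identity_out: Sequence[float],
    lambda_cyc: float = 10.0,  # hypothetical weight, not taken from the paper
    lambda_id: float = 5.0,    # hypothetical weight, not taken from the paper
) -> float:
    """Weighted sum of adversarial, cycle-consistency, and identity terms."""
    adv = multi_scale_adversarial(scores_per_scale)
    cyc = l1(real, reconstructed)   # profile -> frontal -> profile should match the input
    idt = l1(real, identity_out)    # assumed CycleGAN-style identity-mapping term
    return adv + lambda_cyc * cyc + lambda_id * idt


# Toy inputs: two discriminator scales, two-pixel "images".
total = generator_objective(
    scores_per_scale=[[1.0, 1.0], [0.0, 1.0]],
    real=[0.0, 1.0],
    reconstructed=[0.0, 0.5],
    identity_out=[0.0, 1.0],
)
```

In this sketch the optimizer only ever sees the single scalar returned by `generator_objective`, so the relative sizes of the two weights determine how strongly reconstruction fidelity is traded against realism at each discriminator scale.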

Copyright © 2026 The Author(s). Published by Tech Science Press. This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
