Open Access


ARTICLE

Mobility-Aware Federated Learning for Energy and Threat Optimization in Intelligent Transportation Systems

1 Computer Science Department, College of Computer Science and Information Systems, Najran University, Najran, 61441, Saudi Arabia

2 Department of Computer Science, CECOS University of IT and Emerging Sciences, Peshawar, 25000, Pakistan

3 Department of Information Systems, College of Computer and Information Sciences, Princess Nourah bint Abdulrahman University, Riyadh, 11671, Saudi Arabia

4 Department of Telecommunication, Hazara University, Mansehra, 21120, Pakistan

5 The Faculty of Science and Engineering, Abo Akademi University, Turku, 20520, Finland

* Corresponding Author: Jarallah Alqahtani. Email:

Computers, Materials & Continua 2026, 87(2), 47 https://doi.org/10.32604/cmc.2026.075250

Received 28 October 2025; Accepted 26 December 2025; Issue published 12 March 2026

Abstract

The technological advancement of the vehicular Internet of Things (IoT) has transformed Intelligent Transportation Systems (ITS) into next-generation ITS. The connectivity of IoT nodes improves data availability and facilitates automatic control in the ITS environment. The exponential increase in IoT nodes has significantly increased the demand for an energy-efficient, mobility-aware, and secure system for distributed intelligence. This article presents a mobility-aware Deep Reinforcement Learning based Federated Learning (DRL-FL) approach to design an energy-efficient and threat-resilient ITS. In this approach, a Proximal Policy Optimization (PPO)-based DRL agent is first employed for adaptive client selection. Second, an autoencoder-based anomaly detection module is used for malicious node detection. Results reveal that the proposed framework achieved 8% higher accuracy and 15% lower energy consumption. The model also demonstrates greater resilience under adversarial conditions than state-of-the-art federated learning approaches. Its adaptability makes the proposed approach a compelling choice for next-generation vehicular networks.

The vehicular Internet of Things (IoT) has grown rapidly across a wide range of applications in Intelligent Transportation Systems (ITSs). The incorporation of IoT into ITS has transformed it into next-generation ITS with higher connectivity and intelligence [1,2]. This transformation can be leveraged to enhance traffic management, route optimization, and accident prevention. Federated Learning (FL) has emerged as an innovative learning paradigm to address the challenges of high communication cost, privacy risks, and latency. FL enables cooperative model training on edge nodes, which improves data privacy and reduces network congestion [3].

Despite its potential, deploying FL in heterogeneous environments is challenging [4]. High vehicular mobility and frequent disconnections can significantly degrade model convergence and accuracy. Because vehicular nodes move continuously in ITSs, learning stability suffers. Studies have shown that learning performance can be improved with reliable connections and timely node engagement [5]. However, existing FL frameworks have largely ignored the direct influence of mobility patterns, leading to aggregation delays and suboptimal accuracy in dense scenarios [6].

Energy efficiency is another challenging concern in FL-oriented IoT environments [7]. In vehicular networks, IoT devices are battery-powered, which limits their energy reserves. A significant amount of energy is consumed during communication and model training, which not only shortens device lifetime but also disrupts network connectivity. Existing energy-aware FL algorithms mainly reduce energy consumption by optimizing communication cost, whereas the impact of node mobility on energy consumption in the ITS-FL environment remains an open challenge [8,9].

Privacy and trust management are further critical issues in addition to energy and mobility constraints [10–12]. The distributed architecture of FL systems makes them vulnerable to data manipulation and adversarial attacks. Compromised nodes in vehicular networks can inject malicious information that corrupts the global model. Various detection methods and trust-based schemes have been proposed to address these risks, but most rely on centralized verification [13,14]. An intelligent mechanism that accounts for node mobility characteristics is therefore needed to achieve secure and efficient FL.

This research proposes an enhanced IoT-based DRL-FL framework for ITS. The main contributions of the paper are as follows. A DRL agent jointly optimizes accuracy, energy usage, and trust level. An analytical mobility model estimates client stability to minimize data loss. The framework continuously monitors each node's energy and adjusts local parameters accordingly. Finally, an autoencoder-based mechanism detects malicious nodes to secure model aggregation.

Existing mobility-aware federated learning frameworks (such as MOB-FL, ESAFL, and MDFL) focus on synchronous or semi-asynchronous aggregation under vehicle mobility. In contrast, the proposed framework jointly optimizes energy efficiency, mobility stability, and robustness against malicious nodes in a unified architecture. It introduces a deep reinforcement learning (DRL) based node selection mechanism together with trust-weighted aggregation. Specifically, it integrates a proximal policy optimization (PPO) based DRL agent for adaptive client selection, autoencoder-driven anomaly detection, and a trust-weighted aggregation scheme that uses predicted connectivity and trust scores for threat mitigation and reliable global model updates. The novelty of the proposed model lies in integrating DRL and FL functionality in a mobile environment. The framework is intended both to improve the efficiency of intelligent traffic management and to strengthen smart vehicular networks.

The remainder of this paper is organized as follows. Section 2 reviews related studies on federated learning in ITS, energy optimization, and security enhancement. Section 3 presents the system model and detailed methods of the proposed framework. Section 4 discusses the experimental setup and evaluation, followed by a conclusion and future work in Section 5.

Federated learning (FL) for vehicular and intelligent connected vehicle (ICV) environments is gaining increasing attention because it enables collaborative model training without sharing the raw data held by each vehicle. Much recent literature addresses the unique challenges posed by vehicle mobility (short contact times, frequent handovers, and intermittent connectivity) and aims to improve FL convergence and robustness under these dynamic conditions. Below, we summarize and critically assess representative recent contributions addressing mobility, synchronization/aggregation strategies, decentralized learning, client selection, and self-supervised pre-training in vehicular FL settings.

Xie et al. proposed MOB-FL, a mobility-aware federated learning framework that explicitly optimizes the duration of each training round and the number of local iterations to maximize resource utilization over short-lived wireless connections [13]. MOB-FL tunes these parameters to make effective use of each vehicle's available contact time, thereby reducing wasted computation and communication opportunities and accelerating convergence. The approach was validated on beam selection and trajectory prediction tasks, and the results show that adapting round duration and per-client workload to mobility substantially improves FL convergence in high-mobility scenarios. The strength of MOB-FL lies in operationally estimating contact times and mapping them to FL parameters; however, it primarily targets convergence speed through scheduling and does not address complementary issues such as client energy sustainability or adversarial resilience of updates.

Jin et al. recognized the combined challenges of mobility and heterogeneity and proposed ESAFL, a semi-asynchronous FL scheme designed explicitly for vehicular networks [14]. ESAFL mitigates the straggler effect by grouping connected vehicles into layers based on arrival order and employing an Age-of-Information (AoI) based aggregation strategy to balance delayed contributions. This semi-asynchronous design mitigates the negative impact of late or missing client uploads and empirically improves accuracy and convergence on standard image datasets. A notable strength of ESAFL is its practical focus on asynchronous aggregation and its use of AoI to weight delayed updates. However, ESAFL assumes a certain level of layering and coordination at the RSUs. Moreover, while it addresses availability and delay, it does not explicitly optimize per-client energy usage or incorporate vehicle reliability into aggregation weights.

Recent research has also explored decentralized or leader-based formulations, which are more resilient to infrastructure failures and scale better in dense vehicular deployments. The Mobility-Aware Decentralized Federated Learning (MDFL) framework formulates local iteration and leader election as a joint optimization problem and solves it using a multi-agent RL approach (MAPPO) under a Dec-POMDP formulation [15]. MDFL aims to improve training efficiency in vehicular networks by enabling neighboring vehicles to collaborate in a decentralized manner and by selecting a leader that optimally coordinates local aggregation. The multi-agent perspective is powerful for fully distributed settings and can better leverage local vehicle clusters; however, MDFL focuses primarily on iteration and leader selection and on decentralized coordination, leaving open questions about continuous energy monitoring, client safety thresholds, and security against malicious updates in realistic ITS environments.

Client selection has also been studied from a more classical optimization perspective. Chang et al. proposed a mobility-aware vehicle selection strategy that jointly considers geographic location, speed, and data quality to select vehicles capable of completing training and upload within the available time frame [16]. This dynamic selection approach demonstrated that combining mobility metrics with data utility and resource capability yields faster convergence and greater accuracy than naive selection. The strength of this line of work lies in selecting the highest-value participants under time constraints; its limitation is that the selection heuristics are generally reactive and do not learn from long-term outcomes (e.g., they do not use DRL to optimize the long-term trade-off between energy, trust, and accuracy).

Integrating self-supervised learning (SSL) with FL for vehicle perception tasks is another complementary avenue. A mobility-adaptive federated self-supervised learning scheme, FLSimCo, has been presented [17]. It uses image blur level as a quality metric for aggregation during pre-training, addressing the practical problem that high vehicle speeds produce blurred images that, if naively aggregated, would corrupt the global model. By weighting or filtering updates based on modality-specific quality, FLSimCo improves the stability and convergence of SSL in the vehicular context. This work underscores the importance of accounting for data quality in FL aggregation, but it focuses on the pre-training/SSL task rather than broader issues such as schedulability, energy consumption, or security.

Recent advances in energy-efficient and privacy-preserving federated learning (FL) have significantly influenced the development of ITS and smart vehicle networks [18]. A hybrid FL-based model for energy-efficient IoT systems demonstrates energy-aware aggregation and lightweight local optimization to reduce communication overhead. It also improves model accuracy and device sustainability, exceeding 93% accuracy with lower communication latency. An Explainable Federated Learning (XFL) framework has been introduced for vehicular energy control in smart cities; it combines hierarchical FL with explainable AI to improve transparency and achieves superior predictive accuracy (R² up to 99.83%) [19]. This has been complemented by a Weighted Explainable FL (WEFL-XAI) approach that adaptively weights client updates based on data relevance and local model performance to improve privacy and scalability.

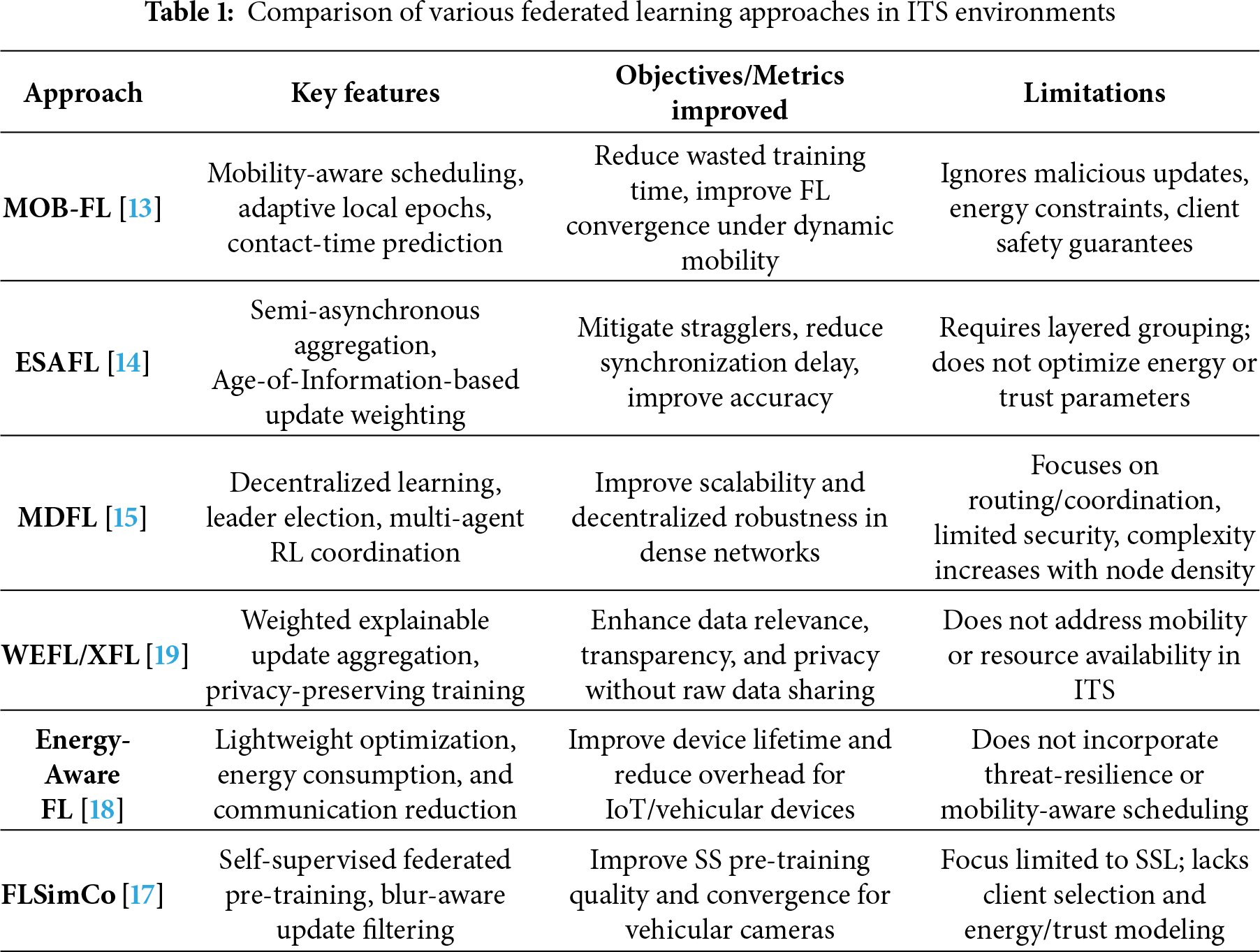

A collaborative air-to-ground transportation FL system integrating Bayesian prediction and incentive mechanisms has also been proposed [20], with the objective of managing large-scale, energy-efficient, privacy-preserving intelligent traffic networks. These studies [21–24] reflect the growing convergence of energy, privacy, and urban mobility. However, adaptive decision-making in critical mobility scenarios is still lacking, underscoring the need for an improved, energy-aware, and threat-resilient urban mobility framework for next-generation vehicular networks. Table 1 compares various federated learning approaches in ITS environments.

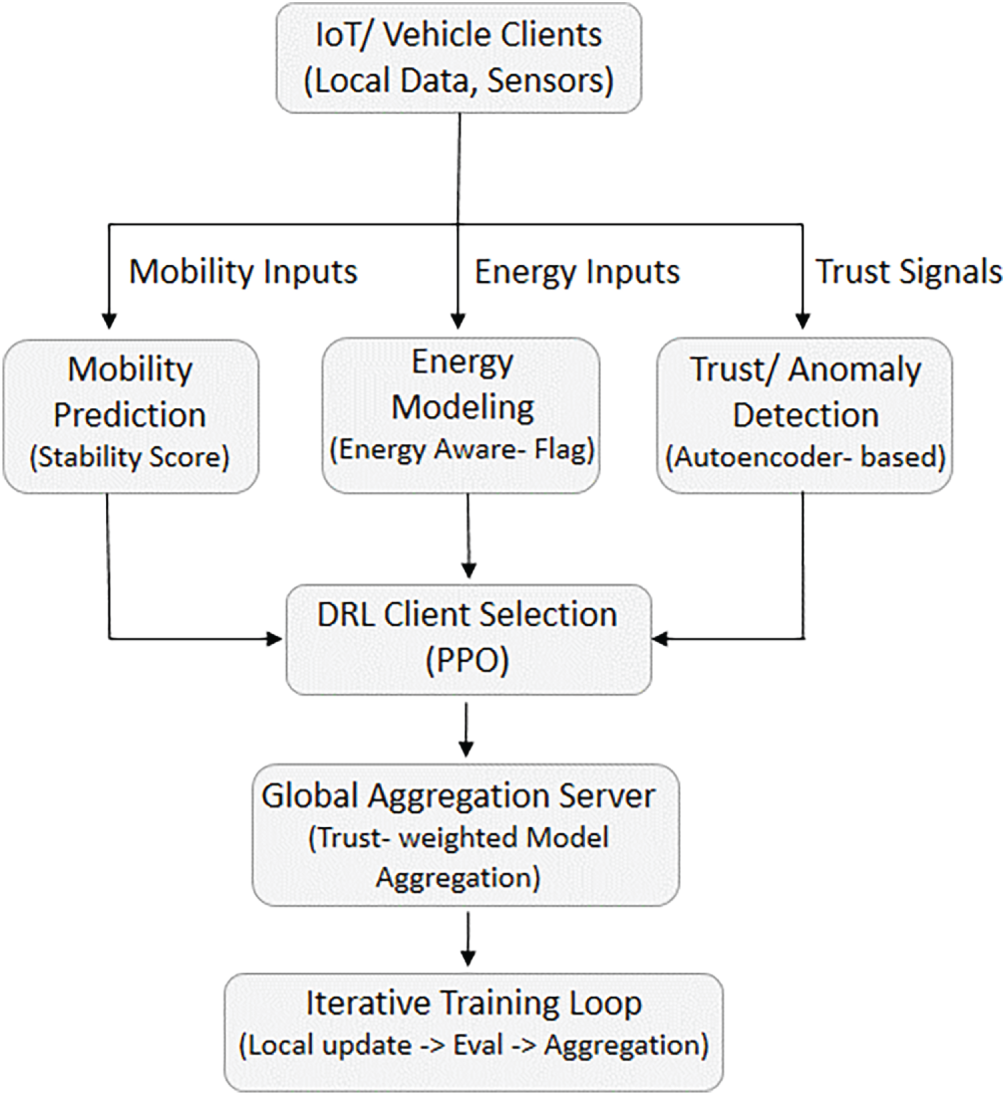

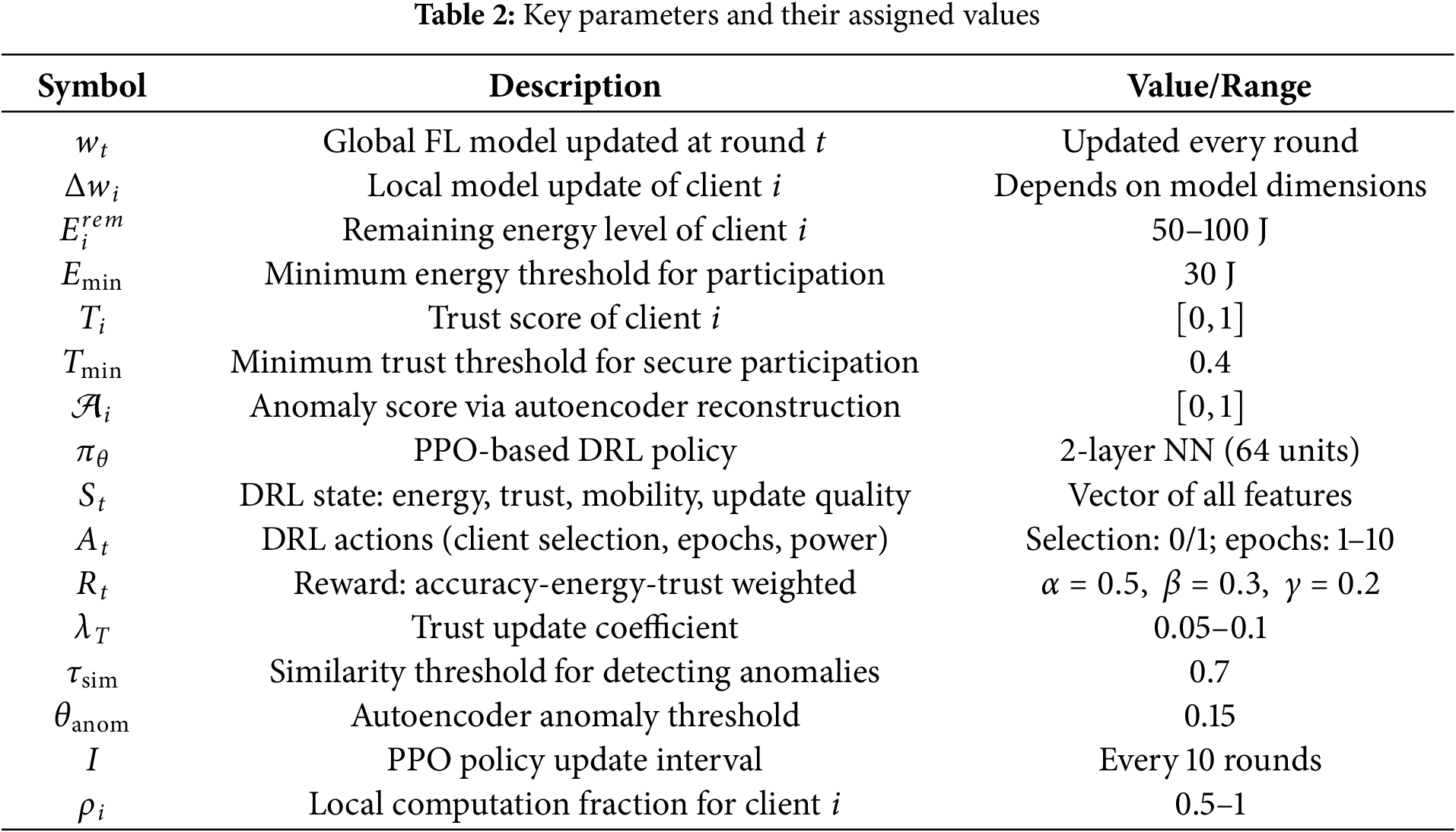

This research develops a mobility-aware Deep Reinforcement Learning based Federated Learning (DRL-FL) framework for an IoT-assisted intelligent transportation system (ITS), as shown in Fig. 1. Data for the IoT nodes, including mobility and energy constraints, were derived from the Udacity Self-Driving Car Dataset. The global objective and local updates are computed via federated optimization, followed by mobility prediction and a stability score. Energy is modeled per client as the sum of computational and communication energy. Device-level anomalies are detected with a local autoencoder, and trust scores are updated accordingly. The combined energy-trust-mobility client selection is posed as a round-by-round constrained optimization problem. This formulation allows the DRL-FL components to explore a broader range of possible schemes and improves the framework's ability to enhance learning performance and optimize ITS solutions in dynamic situations. The key parameters of the proposed framework are listed in Table 2.

Figure 1: DRL-FL architecture

3.1 Global Objective and Local Updates

The set of N clients is denoted by

The Eq. (1) defines the global loss to be minimized by federated optimization, where

where

where
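Eq. (1) and the accompanying local-update equations did not survive extraction. As a hedged illustration only, the sketch below assumes the standard FedAvg-style formulation, in which the global loss is the sample-size-weighted average of client losses, F(w) = Σᵢ (nᵢ/n) Fᵢ(w), and each client runs a few gradient-descent steps on its own data. The function names and the full-batch update are assumptions, not the authors' exact equations.

```python
from typing import Callable, List, Sequence

Vector = List[float]


def global_loss(local_losses: Sequence[float], sample_counts: Sequence[int]) -> float:
    """Assumed FedAvg-style objective: F(w) = sum_i (n_i / n) * F_i(w)."""
    total = sum(sample_counts)
    return sum(n / total * loss for loss, n in zip(local_losses, sample_counts))


def local_update(w: Vector, grad_fn: Callable[[Vector], Vector],
                 lr: float, epochs: int) -> Vector:
    """Client-side update: `epochs` full-batch steps of w <- w - lr * grad F_i(w)."""
    w = list(w)
    for _ in range(epochs):
        g = grad_fn(w)
        w = [wj - lr * gj for wj, gj in zip(w, g)]
    return w


# Toy client with loss F_i(w) = (w - 1)^2, whose gradient is 2 (w - 1).
w_new = local_update([0.0], lambda w: [2.0 * (w[0] - 1.0)], lr=0.25, epochs=2)
print(w_new)  # [0.75]
print(global_loss([1.0, 3.0], [1, 3]))  # 2.5
```

Weighting by nᵢ/n lets clients with more local data influence the global objective proportionally, which is the usual convention in federated optimization.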

3.2 Mobility Prediction and Stability Score

Each client

where

The dwell-time predictor estimates how long client

where
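The dwell-time and stability-score equations were also lost in extraction. The sketch below shows one plausible way to compute them, assuming (hypothetically) a circular RSU coverage area of radius R and constant vehicle velocity: the dwell time is the positive root t of |p + t v| = R, where p is the vehicle position relative to the RSU, and the stability score is the dwell time divided by the time a training round needs, capped at 1. Both the geometry and the score definition are assumptions.

```python
import math


def dwell_time(x: float, y: float, vx: float, vy: float, radius: float) -> float:
    """Seconds until a vehicle at (x, y) relative to the RSU, moving at constant
    velocity (vx, vy), leaves a circular coverage area of the given radius."""
    c = x * x + y * y - radius * radius  # negative while inside coverage
    if c >= 0.0:
        return 0.0  # already at or beyond the coverage boundary
    speed2 = vx * vx + vy * vy
    if speed2 == 0.0:
        return math.inf  # a stationary vehicle never leaves
    b = x * vx + y * vy
    # Positive root of speed2 * t^2 + 2 b t + c = 0, i.e. |p + t v| = R.
    return (-b + math.sqrt(b * b - speed2 * c)) / speed2


def stability_score(t_dwell: float, t_round: float) -> float:
    """Hypothetical score in [0, 1]: fraction of the required round time for
    which the client is expected to remain connected."""
    return min(1.0, t_dwell / t_round)


t = dwell_time(100.0, 0.0, 10.0, 0.0, radius=300.0)
print(t)  # 20.0
print(stability_score(t, 40.0))  # 0.5
```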

3.3 Energy Modeling and Feasibility Constraints

The energy consumption per client is modeled as the sum of the computational and communication energy required to participate in one round. The computational (local training) energy for client

where

where

The feasibility of computation time and transmission time must comply with the estimated dwell time:

where

where

with
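The per-client energy and feasibility equations are missing from the extracted text. A minimal sketch is shown below, assuming the CMOS computation model and Shannon-rate uplink that are common in energy-aware FL studies: computation energy κ·C·f² for C CPU cycles at frequency f, and communication energy p·S/r with r = B log₂(1 + p h / N₀). All symbols, default constants, and the battery check are illustrative assumptions. The article's own simulation instead draws per-epoch and per-transmission energies from fixed ranges.

```python
import math
from dataclasses import dataclass


@dataclass
class ClientProfile:
    cycles_per_sample: float  # CPU cycles needed per training sample
    num_samples: int          # local dataset size
    cpu_freq_hz: float        # CPU frequency f
    tx_power_w: float         # uplink transmit power p
    channel_gain: float       # channel power gain h


def round_cost(cl: ClientProfile, local_epochs: int, model_bits: float,
               bandwidth_hz: float, noise_w: float, kappa: float = 1e-28):
    """Return (energy in J, time in s) for one round: local training + upload."""
    cycles = local_epochs * cl.cycles_per_sample * cl.num_samples
    t_cmp = cycles / cl.cpu_freq_hz
    e_cmp = kappa * cycles * cl.cpu_freq_hz ** 2  # CMOS dynamic-power model
    rate = bandwidth_hz * math.log2(1.0 + cl.tx_power_w * cl.channel_gain / noise_w)
    t_com = model_bits / rate
    e_com = cl.tx_power_w * t_com
    return e_cmp + e_com, t_cmp + t_com


def is_feasible(round_time: float, t_dwell: float, energy: float, battery: float) -> bool:
    """The round must finish before the vehicle leaves coverage and fit the battery budget."""
    return round_time <= t_dwell and energy <= battery


client = ClientProfile(1e4, 1000, 1e9, 0.3, 1e-6)
energy, duration = round_cost(client, local_epochs=1, model_bits=1e6,
                              bandwidth_hz=1e6, noise_w=1e-7)
print(round(energy, 4), round(duration, 4))  # 0.151 0.51
print(is_feasible(duration, t_dwell=20.0, energy=energy, battery=1.0))  # True
```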

3.4 Anomaly Detection and Trust Update Equations

Device-level anomaly detection uses a local autoencoder to reconstruct the telemetry vector

and the normalized anomaly score for trust weighting is computed as,

where:

If

where
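The anomaly-score and trust-update equations of this subsection are also incomplete in the extracted text. The sketch below illustrates the general idea under stated assumptions. The reconstruction error is the mean squared error between the telemetry vector and its autoencoder reconstruction; the autoencoder itself is omitted. The score is normalized against a hypothetical calibration error and capped at 1. Trust is updated as an exponential moving average of (1 − score), with a multiplicative penalty when the score exceeds a threshold. The smoothing factor, threshold, and penalty are placeholders, not values reported in the article.

```python
from typing import Sequence


def reconstruction_error(x: Sequence[float], x_hat: Sequence[float]) -> float:
    """Mean squared error between telemetry x and its autoencoder reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def anomaly_score(err: float, err_ref: float) -> float:
    """Normalize to [0, 1] against a calibration error err_ref (assumed, e.g. a
    high percentile of reconstruction errors on benign telemetry)."""
    return min(1.0, err / err_ref)


def update_trust(trust: float, score: float, beta: float = 0.8,
                 threshold: float = 0.6, penalty: float = 0.5) -> float:
    """EMA trust update; clients whose score exceeds the threshold are penalized."""
    new_trust = beta * trust + (1.0 - beta) * (1.0 - score)
    if score > threshold:
        new_trust *= penalty
    return max(0.0, min(1.0, new_trust))


s = anomaly_score(reconstruction_error([1.0, 2.0], [1.0, 4.0]), err_ref=4.0)
print(s)  # 0.5
print(round(update_trust(1.0, s), 3))  # 0.9
print(round(update_trust(1.0, 1.0), 3))  # 0.4
```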

The proposed framework considers model poisoning and backdoor injection attacks and integrates them with the trust evaluation mechanism to identify and suppress malicious contributions. A subset of adversarial clients manipulates its local gradients before transmission to the RSU. The poisoning is calculated as,

where

where

where lower values indicate potential poisoning. The final trust score is then updated as,

where
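The poisoning, similarity, and final-trust equations in this subsection were lost, although the text notes that lower similarity values indicate potential poisoning. The sketch below makes three assumptions: (i) a hypothetical scaled sign-flip attack as the gradient manipulation, (ii) cosine similarity to the coordinate-wise median of all received updates as the similarity measure, and (iii) a convex combination of anomaly-based trust and clipped similarity as the final trust score. These are common choices in robust FL, not the article's confirmed equations.

```python
import math
from statistics import median
from typing import List, Sequence


def sign_flip_attack(grad: Sequence[float], scale: float = 3.0) -> List[float]:
    """Hypothetical model-poisoning attack: reverse and amplify the gradient."""
    return [-scale * g for g in grad]


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    if nu == 0.0 or nv == 0.0:
        return 0.0
    return sum(a * b for a, b in zip(u, v)) / (nu * nv)


def coordinate_median(updates: Sequence[Sequence[float]]) -> List[float]:
    """Robust reference update: per-coordinate median over all clients."""
    return [median(col) for col in zip(*updates)]


def final_trust(anomaly_trust: float, similarity: float, alpha: float = 0.5) -> float:
    """Blend anomaly-based trust with similarity; negative similarity counts as 0."""
    return alpha * anomaly_trust + (1.0 - alpha) * max(0.0, similarity)


benign = [[1.0, 1.0], [1.0, 1.5], [0.5, 1.0]]
poisoned = sign_flip_attack([1.0, 1.0])
reference = coordinate_median(benign + [poisoned])
print(reference)  # [0.75, 1.0]
print(cosine(poisoned, reference) < 0)  # True
print(final_trust(0.9, cosine(poisoned, reference)))  # 0.45
```

The coordinate-wise median is used as the reference because a single strongly scaled malicious update cannot drag it far, unlike a plain mean.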

3.6 MDP Formulation and PPO-Based DRL Optimization

This scheduling problem is transformed into an MDP with states

where

with selection flags

where:

•

•

•

•

•

•

The agent learns a policy

where

where
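The policy and reward equations of the MDP are incomplete in the extracted text, and the exact state, action, and reward definitions cannot be recovered. For orientation only, the sketch below shows the standard PPO clipped surrogate objective, L = mean(min(rₜAₜ, clip(rₜ, 1 − ε, 1 + ε)Aₜ)) with rₜ = exp(log π_new − log π_old). It also includes a hypothetical per-round reward that credits accuracy gain and mean trust and penalizes energy. The reward weights are placeholders, not the article's values. In practice, an RL library such as Stable-Baselines, which the article reports using, computes the PPO loss internally.

```python
import math
from typing import Sequence


def ppo_clip_objective(logp_new: Sequence[float], logp_old: Sequence[float],
                       advantages: Sequence[float], eps: float = 0.2) -> float:
    """Standard PPO clipped surrogate (to be maximized), averaged over samples."""
    total = 0.0
    for ln, lo, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(ln - lo)  # pi_new(a|s) / pi_old(a|s)
        clipped = max(1.0 - eps, min(ratio, 1.0 + eps))
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)


def round_reward(acc_gain: float, energy_j: float, mean_trust: float,
                 w_acc: float = 1.0, w_energy: float = 0.01, w_trust: float = 0.5) -> float:
    """Hypothetical scalar reward trading off accuracy, energy, and trust."""
    return w_acc * acc_gain - w_energy * energy_j + w_trust * mean_trust


# A ratio of 1.5 with positive advantage is clipped to 1.2; a ratio of 0.5 with
# negative advantage is clipped to 0.8, giving the more pessimistic -0.8.
obj = ppo_clip_objective([math.log(1.5), math.log(0.5)], [0.0, 0.0], [1.0, -1.0])
print(round(obj, 6))  # 0.2
```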

3.7 Optimization Problem (Mixed-Integer Form) and Relaxation

The combined energy-trust-mobility selection can be posed as a round-by-round constrained optimization problem expressed as,

where K is the number of selected target clients. Eq. (26) presents a mixed-integer nonlinear program (MINLP); in practice, the DRL policy approximates the solution online. For analysis, relax
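Eq. (26) and its relaxation cannot be recovered from the extracted text. As a point of comparison only, the sketch below shows a simple greedy approximation of the round-by-round selection problem. It discards clients that fail the dwell-time and energy feasibility check, scores the remaining clients with a hypothetical weighted utility of stability, trust, and energy, and keeps the top K. This is not the article's DRL policy. It is the kind of heuristic selection strategy against which a learned PPO policy can be compared.

```python
from typing import List, NamedTuple, Sequence


class Candidate(NamedTuple):
    cid: int
    stability: float  # mobility stability score in [0, 1]
    trust: float      # trust score in [0, 1]
    energy_j: float   # predicted energy for this round (J)
    feasible: bool    # passes dwell-time and battery constraints


def greedy_select(cands: Sequence[Candidate], k: int, w_stab: float = 1.0,
                  w_trust: float = 1.0, w_energy: float = 0.1) -> List[int]:
    """Pick up to k feasible clients with the highest weighted utility."""
    def utility(c: Candidate) -> float:
        return w_stab * c.stability + w_trust * c.trust - w_energy * c.energy_j

    feasible = [c for c in cands if c.feasible]
    ranked = sorted(feasible, key=utility, reverse=True)
    return [c.cid for c in ranked[:k]]


pool = [
    Candidate(0, 0.9, 0.9, 1.0, True),   # utility 1.7
    Candidate(1, 0.2, 0.9, 1.0, True),   # utility 1.0
    Candidate(2, 1.0, 1.0, 0.5, False),  # infeasible, e.g. predicted to leave coverage
    Candidate(3, 0.8, 0.7, 2.0, True),   # utility 1.3
]
print(greedy_select(pool, k=2))  # [0, 3]
```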

3.8 Metrics and Derived Quantities

The average energy per round is defined as,

This equation gives the mean energy consumption over all devices selected in a round. The energy efficiency (EE), defined as accuracy per unit energy, is calculated as,

where Acc

This criterion assesses training convergence from the global model's loss: convergence is declared once the loss falls below the threshold. The detection rate (DR) and false alarm rate (FAR) are calculated from the anomaly detector output as,

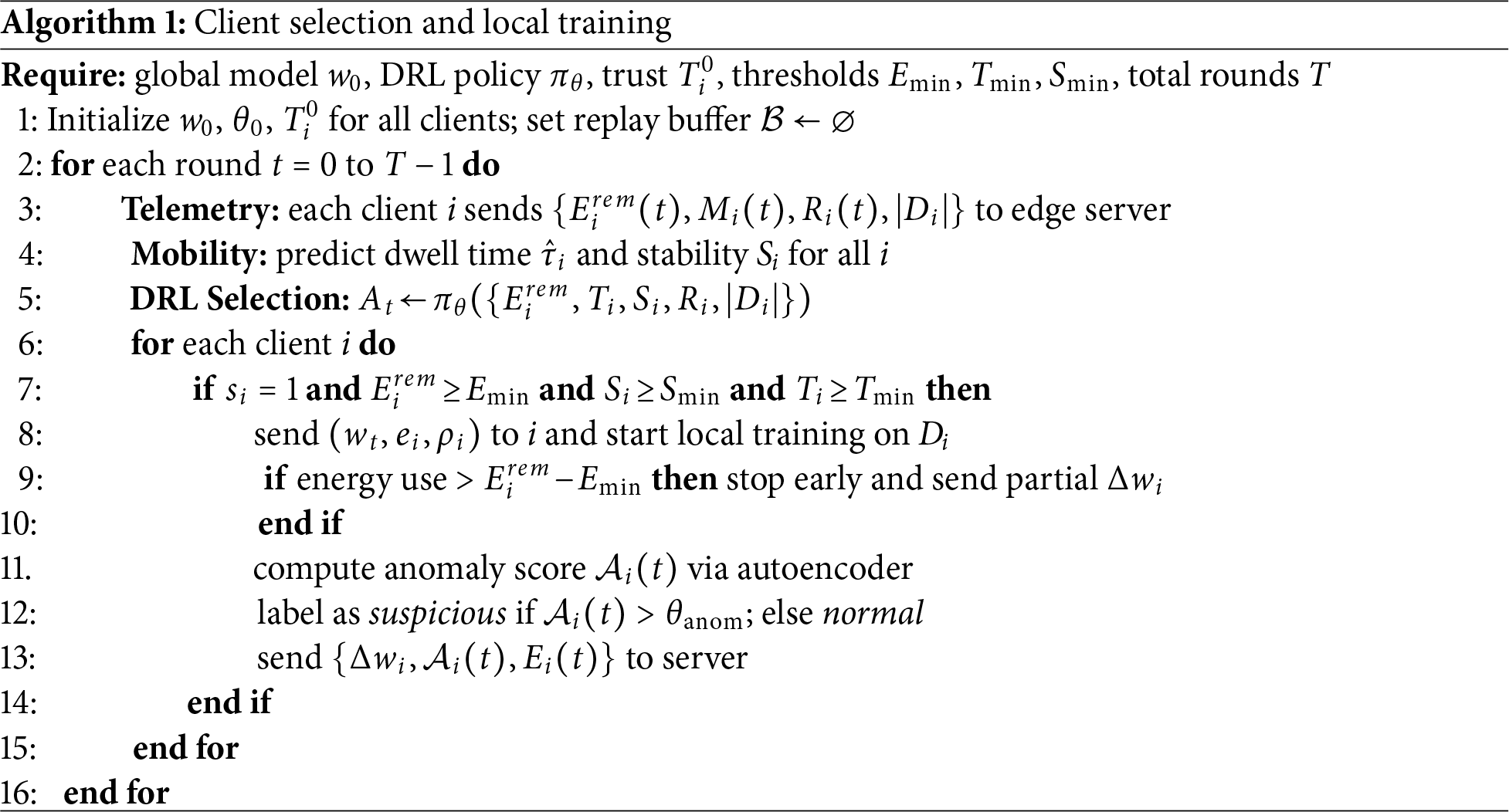

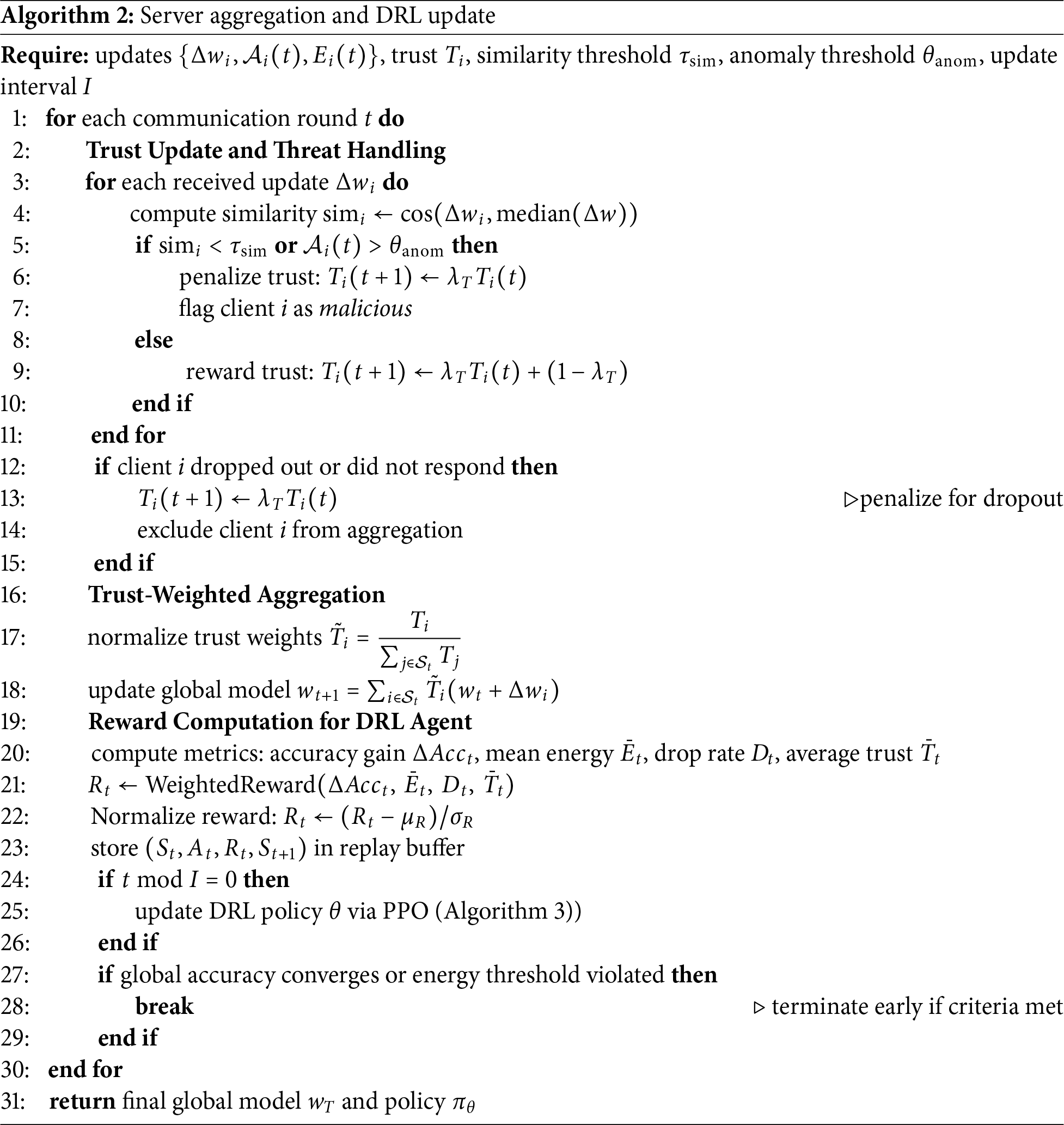

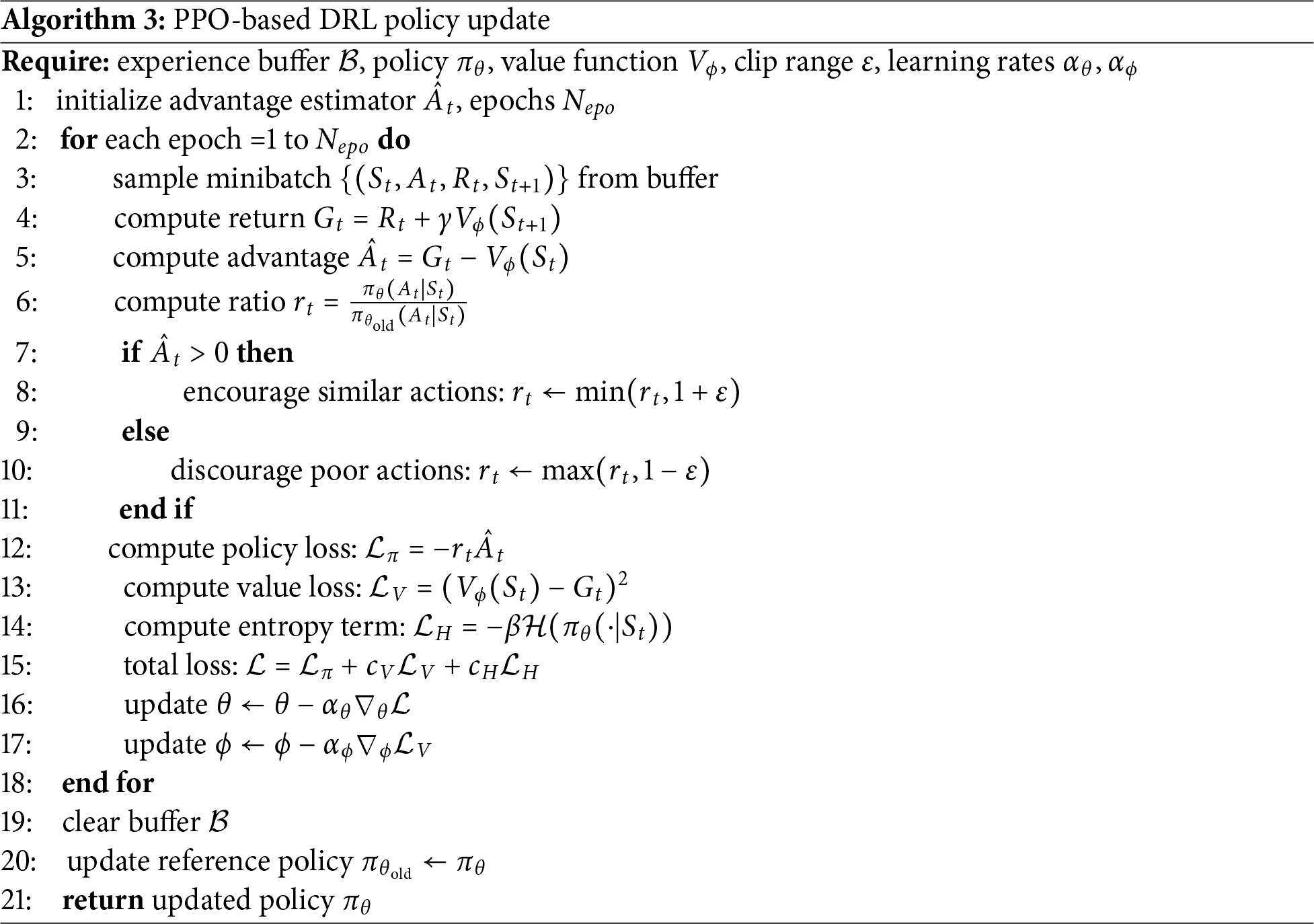
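The metric equations in this subsection are rendered as images. The sketch below assumes the conventional definitions: mean energy over selected clients, EE as accuracy divided by energy, convergence when the global loss drops below a threshold, DR = TP/(TP + FN), and FAR = FP/(FP + TN). The function names are illustrative.

```python
from typing import Sequence, Tuple


def average_energy(energies: Sequence[float]) -> float:
    """Mean energy (J) over the clients selected in a round."""
    return sum(energies) / len(energies)


def energy_efficiency(accuracy: float, energy_j: float) -> float:
    """Accuracy obtained per joule consumed."""
    return accuracy / energy_j


def has_converged(loss: float, threshold: float) -> bool:
    return loss < threshold


def detection_rates(flagged: Sequence[bool], malicious: Sequence[bool]) -> Tuple[float, float]:
    """Return (DR, FAR) from detector flags and ground-truth labels."""
    tp = sum(f and m for f, m in zip(flagged, malicious))
    fn = sum((not f) and m for f, m in zip(flagged, malicious))
    fp = sum(f and (not m) for f, m in zip(flagged, malicious))
    tn = sum((not f) and (not m) for f, m in zip(flagged, malicious))
    dr = tp / (tp + fn) if tp + fn else 0.0
    far = fp / (fp + tn) if fp + tn else 0.0
    return dr, far


dr, far = detection_rates([True, True, False, True, False],
                          [True, True, True, False, False])
print(round(dr, 3), far)  # 0.667 0.5
```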

Algorithmic Framework Overview

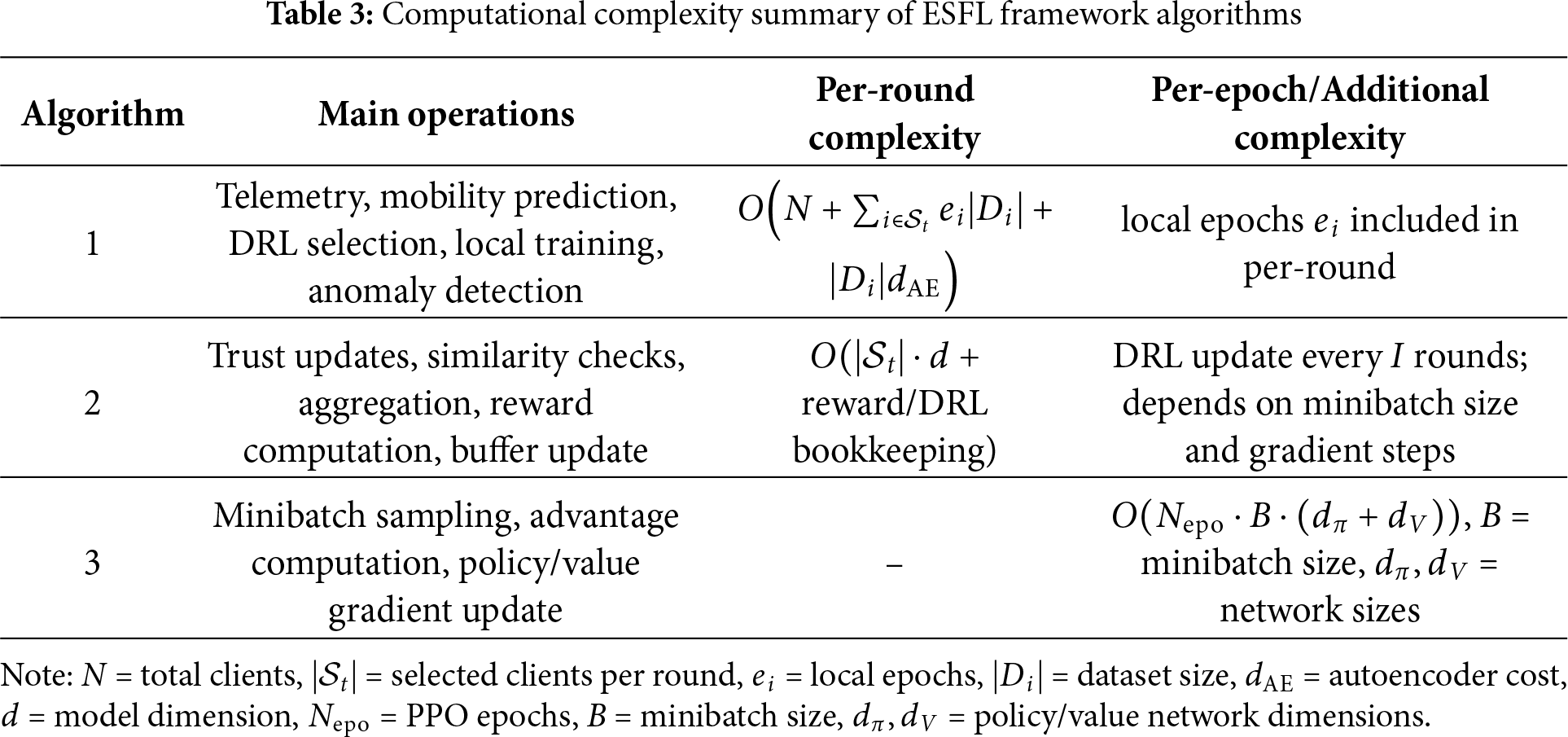

The proposed framework consists of three integrated algorithms (Algorithms 1–3) that collaboratively achieve adaptive, secure, and energy-efficient federated learning for intelligent transportation systems (ITS). Each algorithm corresponds to a specific operational stage of the federated learning (FL) cycle: client-side participation, server-side aggregation, and policy optimization through deep reinforcement learning (DRL). Together, they form a mobility-aware, self-learning system that optimizes communication, computation, and trust in dynamic IoT-based vehicular environments. The framework uses single-tier centralized aggregation: in each round, global model aggregation is performed at the Roadside Unit (RSU), which acts as the centralized FL coordinator. IoT nodes send their local updates to the RSU, which aggregates them using an averaging rule. This keeps the communication pipeline simple while node participation, energy allocation, and trust-aware updates are optimized directly. Table 3 shows the computational complexity of the proposed algorithms.
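The RSU-side rule is described here only as averaging, while elsewhere the article refers to trust-weighted aggregation. One hedged way to combine the two is sketched below. Each client's update is weighted by its trust score times its sample count, and clients below a minimum trust value are excluded. The weighting form and the `min_trust` cut-off of 0.5 are assumptions.

```python
from typing import List, Sequence


def trust_weighted_average(updates: Sequence[Sequence[float]], trusts: Sequence[float],
                           sample_counts: Sequence[int], min_trust: float = 0.5) -> List[float]:
    """Aggregate client updates at the RSU with weights proportional to trust * n_i.

    Clients whose trust is below min_trust receive zero weight.
    """
    weights = [t * n if t >= min_trust else 0.0 for t, n in zip(trusts, sample_counts)]
    total = sum(weights)
    if total == 0.0:
        raise ValueError("no client met the trust threshold")
    dim = len(updates[0])
    return [sum(w * u[j] for w, u in zip(weights, updates)) / total for j in range(dim)]


updates = [[1.0, 0.0], [3.0, 2.0], [100.0, 100.0]]  # third client is an outlier
print(trust_weighted_average(updates, trusts=[1.0, 1.0, 0.1], sample_counts=[1, 1, 10]))
# [2.0, 1.0]
```

Without the trust term, the outlier's ten samples would dominate a plain sample-weighted average. Zeroing its weight keeps the aggregate at the mean of the two trusted clients.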

This section presents detailed results and a discussion of the proposed framework for intelligent transportation systems. TensorFlow Federated and PyTorch were used to perform the simulations. One hundred IoT vehicle clients operating under mobility and energy constraints derived from the Udacity Autonomous Vehicle Dataset were deployed. The framework integrates a deep reinforcement learning (DRL) based client-selection mechanism and an autoencoder-based threat-detection module. The evaluation focuses on six key metrics: model accuracy, energy consumption, energy efficiency, threat resilience, communication overhead, and DRL reward convergence. A comparative study of several benchmark FL methods was also performed, including FedAvg, FedProx, MOFedAvg, and Energy-Aware FL.

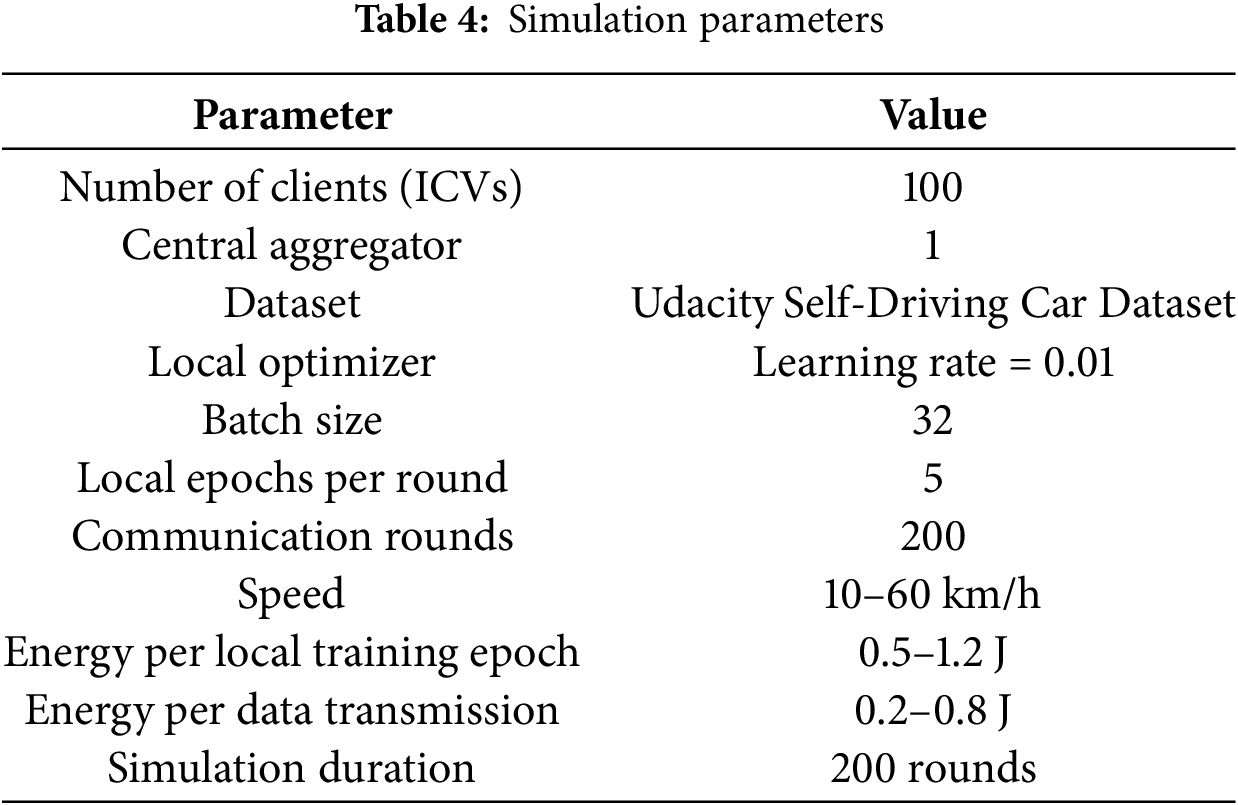

4.1 Simulation and Network Setup

A comprehensive simulation framework was developed in Python using TensorFlow Federated (TFF), PyTorch, and Stable-Baselines. Experiments were run on a workstation equipped with an Intel Core i9 CPU, 32 GB of RAM, and an NVIDIA RTX 4070 GPU. Table 4 lists the detailed simulation parameters and their descriptions. A network of 100 IoT vehicle nodes was created with a single central aggregator. Telemetry, sensor readings, and steering features were used as node data, and node movement was generated with a random waypoint mobility model. The energy per local training epoch was set to 0.5–1.2 J, and the energy per data transmission to 0.2–0.8 J. Simulations were performed over 200 communication rounds.
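The setup above combines a random waypoint mobility model with per-round energy costs drawn from fixed ranges. A minimal stdlib sketch of both is given below. The area size, speed range, time step, step count, and seed are hypothetical values chosen for illustration; the article's settings are in Table 4. The pause time that many random waypoint variants include is omitted for brevity.

```python
import math
import random
from typing import List, Tuple


def random_waypoint(steps: int, rng: random.Random, area: float = 1000.0,
                    v_min: float = 5.0, v_max: float = 30.0,
                    dt: float = 1.0) -> List[Tuple[float, float]]:
    """Positions of one vehicle under a random waypoint model (no pause time)."""
    x, y = rng.uniform(0, area), rng.uniform(0, area)
    tx, ty = rng.uniform(0, area), rng.uniform(0, area)
    speed = rng.uniform(v_min, v_max)
    trace = [(x, y)]
    for _ in range(steps):
        dx, dy = tx - x, ty - y
        dist = math.hypot(dx, dy)
        step = speed * dt
        if dist <= step:
            # Waypoint reached: choose a new destination and speed.
            x, y = tx, ty
            tx, ty = rng.uniform(0, area), rng.uniform(0, area)
            speed = rng.uniform(v_min, v_max)
        else:
            x += step * dx / dist
            y += step * dy / dist
        trace.append((x, y))
    return trace


def sample_round_energy(rng: random.Random, local_epochs: int = 1) -> float:
    """Energy (J) for one round: 0.5-1.2 J per local epoch plus 0.2-0.8 J per upload."""
    training = sum(rng.uniform(0.5, 1.2) for _ in range(local_epochs))
    transmission = rng.uniform(0.2, 0.8)
    return training + transmission


rng = random.Random(42)
traces = [random_waypoint(200, rng) for _ in range(100)]  # 100 nodes, 200 mobility steps
energies = [sample_round_energy(rng) for _ in range(100)]
print(len(traces), len(traces[0]))  # 100 201
```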

The experimental setup was simulated for 100 IoT nodes, a practical and widely adopted configuration consistent with the MoFeL, ESAFL, and FedProx models and with typical FL-based systems, which usually consider 50–200 clients. Increasing the number of nodes does not change the algorithm's performance trend but increases the computational load. The training analysis covers an average of 200 rounds, which provides sufficient iterations for convergence analysis; model performance typically saturates after approximately 150–300 rounds. The Udacity Autonomous Vehicle Dataset was selected because it contains real-world sensor data and driving scenarios. Mobility features, including acceleration, change of direction, and duration, were considered together with the parameters required for energy consumption, transmission power, and latency. The baseline models, FedAvg, FedProx, MoFeL, and Energy-Aware FL, were selected for a fair comparison.

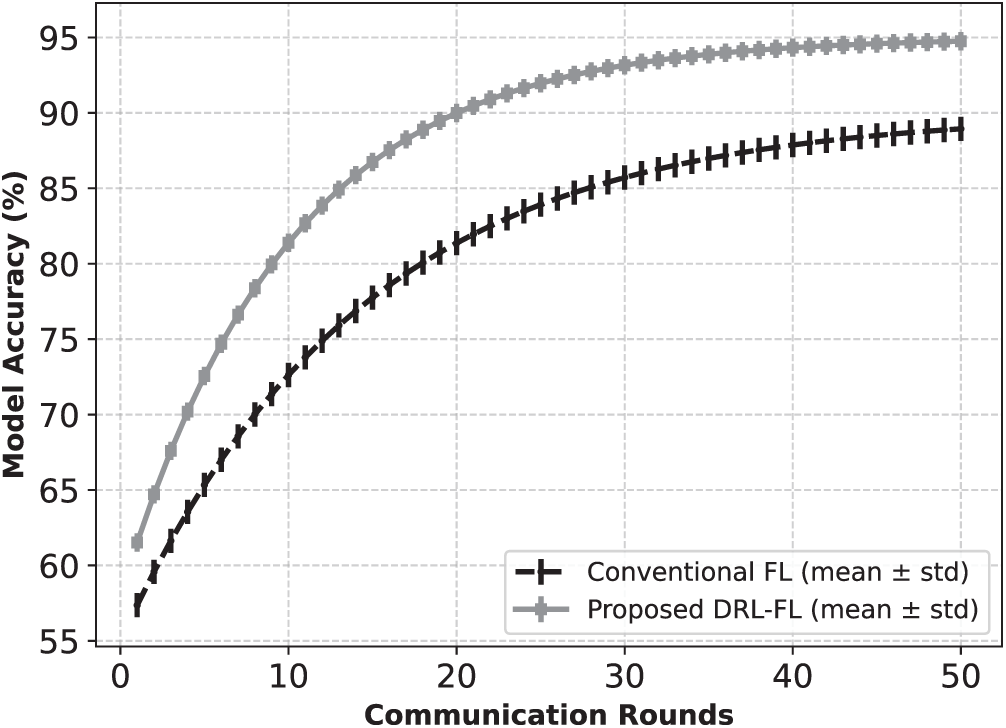

Fig. 2 illustrates the variation in global model accuracy over 50 communication rounds for conventional FL and the proposed DRL-FL model. Conventional FL shows a gradual increase in accuracy, reaching 89% after 50 rounds, while DRL-FL reaches 93%. This indicates that mobility-aware optimization accelerates learning and that the faster convergence stems from a better balance between local updates and global aggregation.

Figure 2: Accuracy vs. communication rounds for FL and DRL-FL. Error bars show standard deviation across rounds

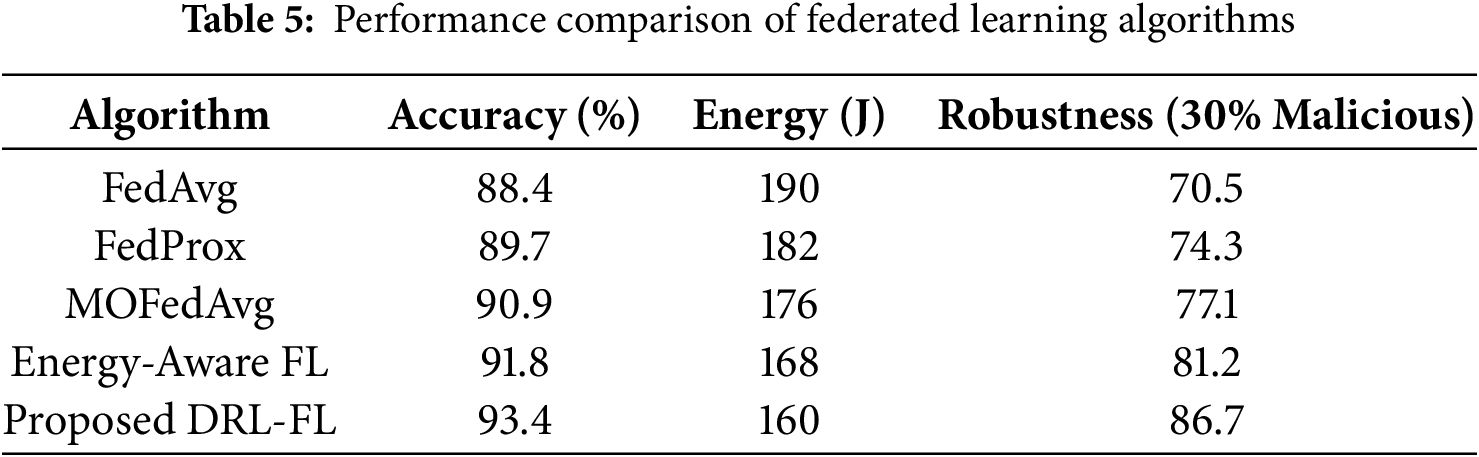

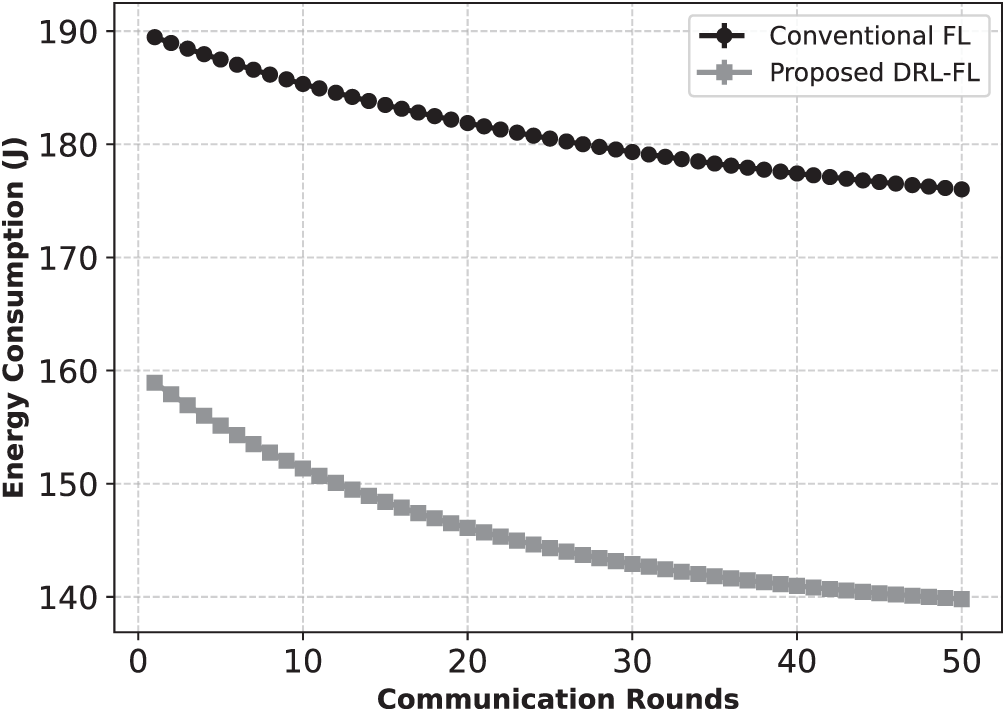

Table 5 compares the proposed DRL-FL with the baseline models. DRL-FL outperforms the other models, achieving the highest accuracy (93.4%), the lowest energy consumption (160 J), and the highest robustness (86.7%).

Table 6 presents the mobility evaluation and scalability comparison. Both models show a gradual decrease in accuracy and an increase in training latency as the number of IoT nodes increases from 10 to 50; DRL-FL nevertheless outperforms FL, maintaining 10% higher accuracy and up to 40% lower latency. The mobility analysis illustrates learning performance and energy consumption as vehicle speed increases. Higher vehicle speed reduces accuracy for both models because of lower communication stability and shorter connection time, but DRL-FL maintains comparatively higher accuracy while consuming less energy.

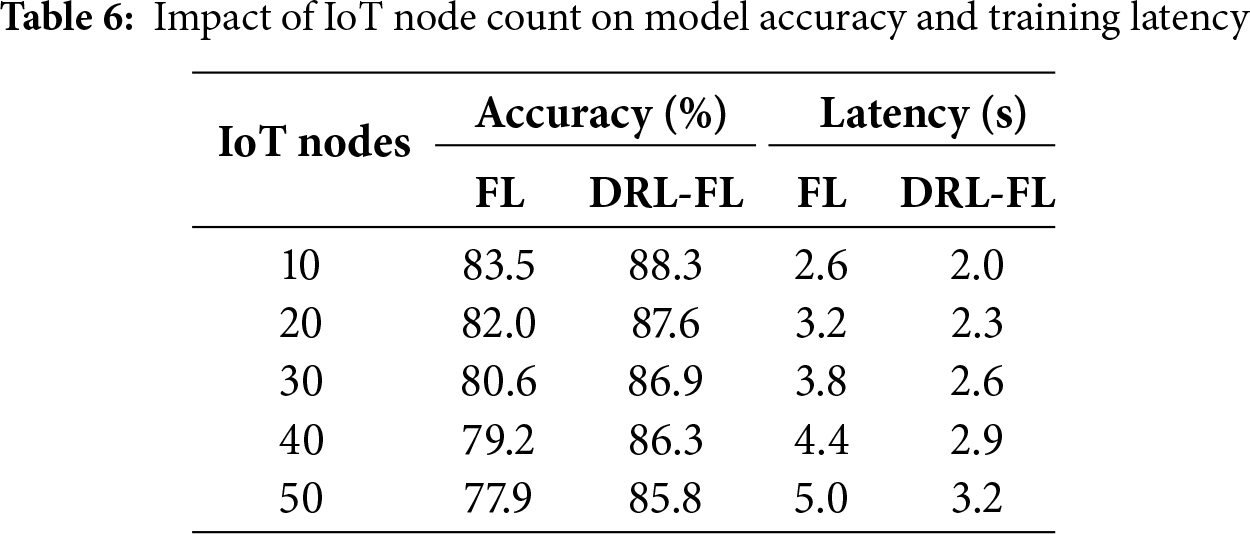

Fig. 3 shows the energy consumption per communication round for both approaches. The proposed DRL-FL model converges to approximately 150 J, whereas the FL model converges to approximately 180 J; that is, DRL-FL consumes up to 16.7% less energy than conventional FL. This saving results from reduced data transmission overhead, which also sustains the continued participation of the IoT nodes.

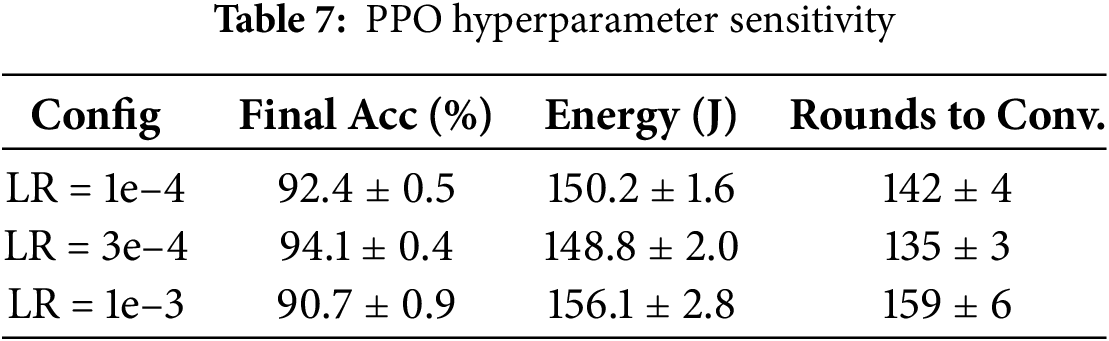

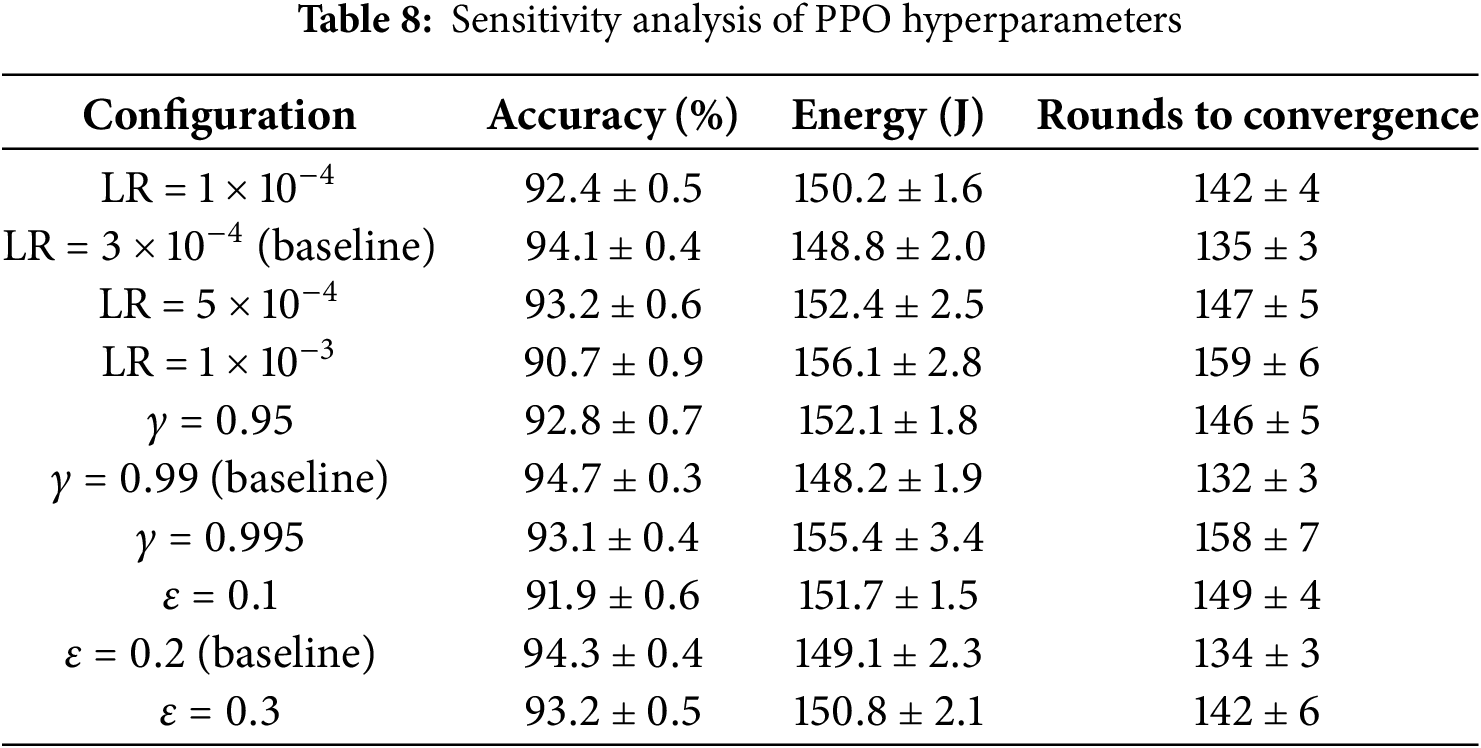

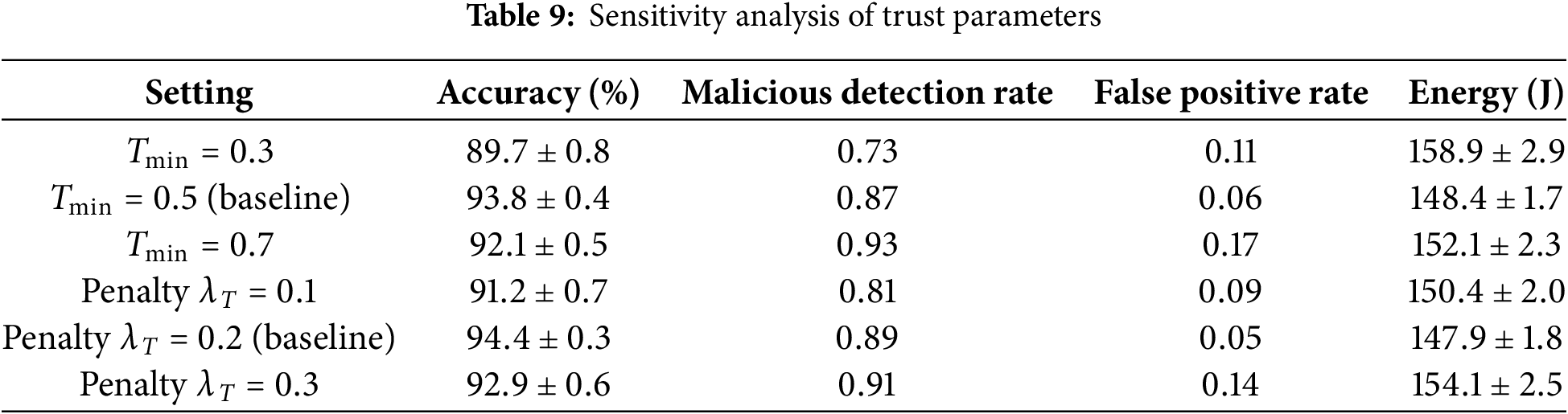

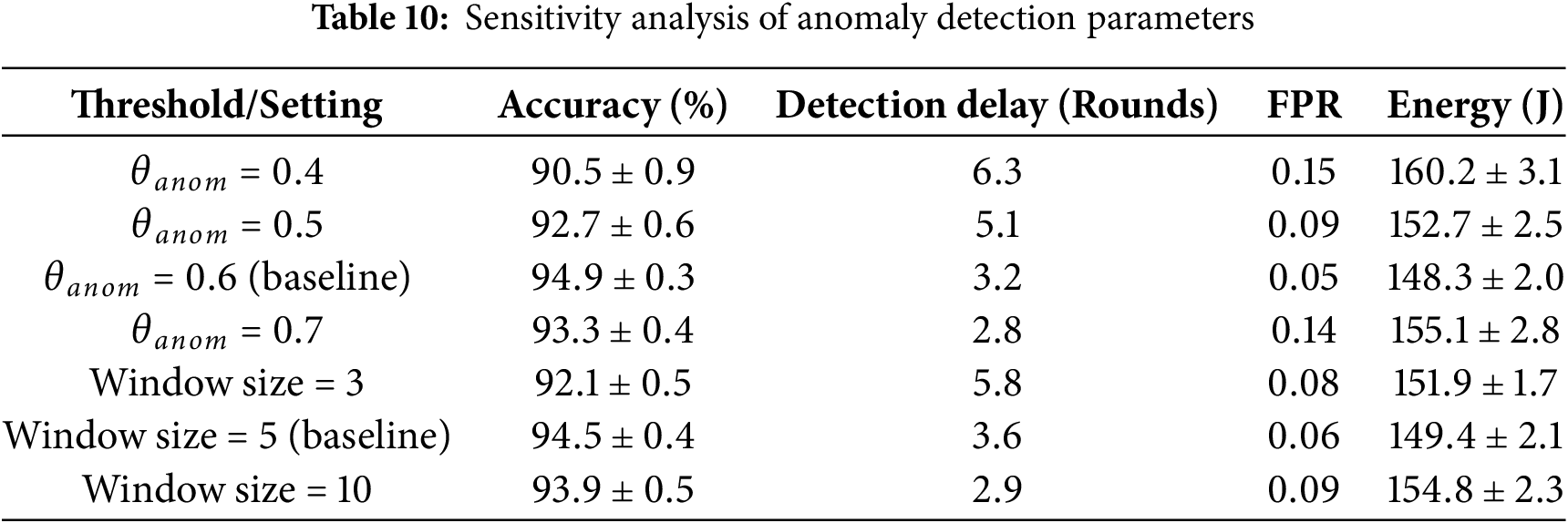

Figure 3: Average energy consumption per communication round. DRL-FL reduces consumption through adaptive selection and power control

The robustness of the proposed framework was evaluated through a sensitivity analysis of key design parameters, including the PPO hyperparameters, trust thresholds, and anomaly-detection parameters. The detailed results, presented in Tables 7–10, demonstrate that the proposed model outperforms the baseline models in terms of stability and consistency. PPO responded predictably to aggressive learning-rate and discount-factor values, while moderate settings achieved a reasonable balance. The trust threshold was the most influential parameter, with values in the range 0.5–0.7 performing effectively without increasing the false-positive rate. The anomaly-detection parameters showed resilient behavior, avoiding unnecessary exclusion of benign nodes. Overall, the framework is not overly sensitive to narrow hyperparameter tuning.

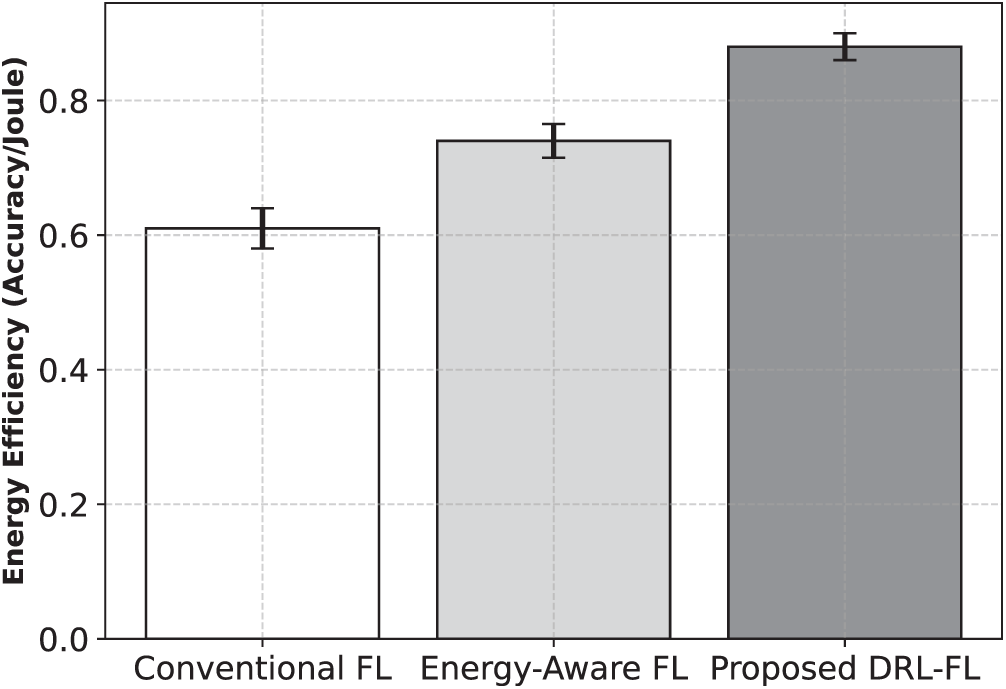

Fig. 4 shows the energy efficiency of various learning configurations. FL achieves an efficiency of 0.62, while DRL-FL achieves 0.89, an overall improvement of 43.5% that reflects the reduction of redundant computation. Moreover, each training round contributes effectively to the overall learning process without wasted energy.

Figure 4: Energy efficiency comparison among baseline algorithms. DRL-FL achieves the highest accuracy-per-joule ratio

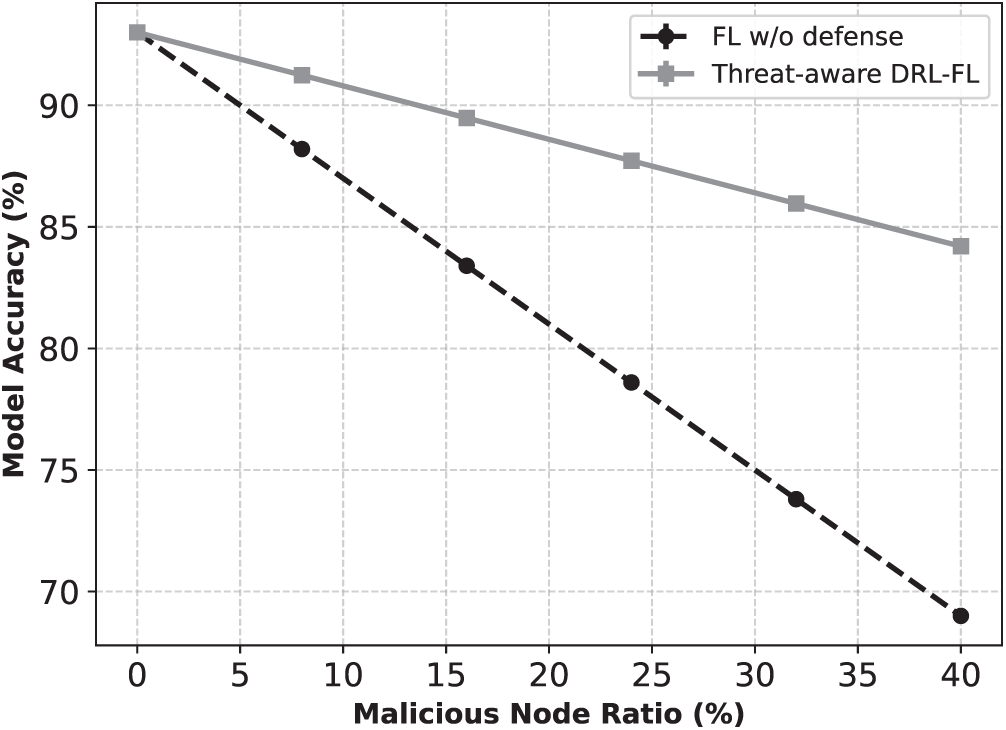

Fig. 5 shows the impact of malicious nodes on accuracy. FL accuracy decreases gradually from 93% to 69% as the proportion of malicious nodes increases from 0% to 40%. DRL-FL shows a much smaller decrease, maintaining accuracy of around 82%. This shows that the proposed model effectively detects suspicious updates, confirming its robust threat detection.

Figure 5: Accuracy degradation under increasing malicious-client ratio. DRL-FL maintains robustness due to trust and anomaly filtering

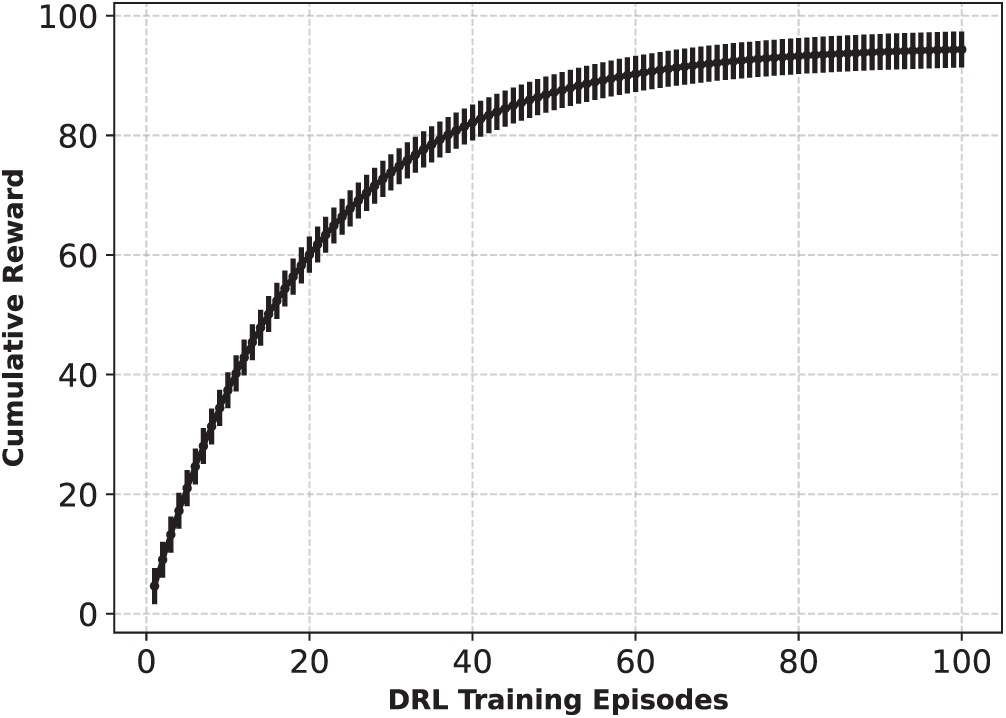

Fig. 6 shows the learning behavior of the DRL agent over 100 training episodes. The cumulative reward increases rapidly in the early episodes and stabilizes around episode 80. This indicates that the DRL agent effectively learns to optimize energy use while balancing competing objectives.

Figure 6: DRL reward convergence across training episodes, showing stable policy learning

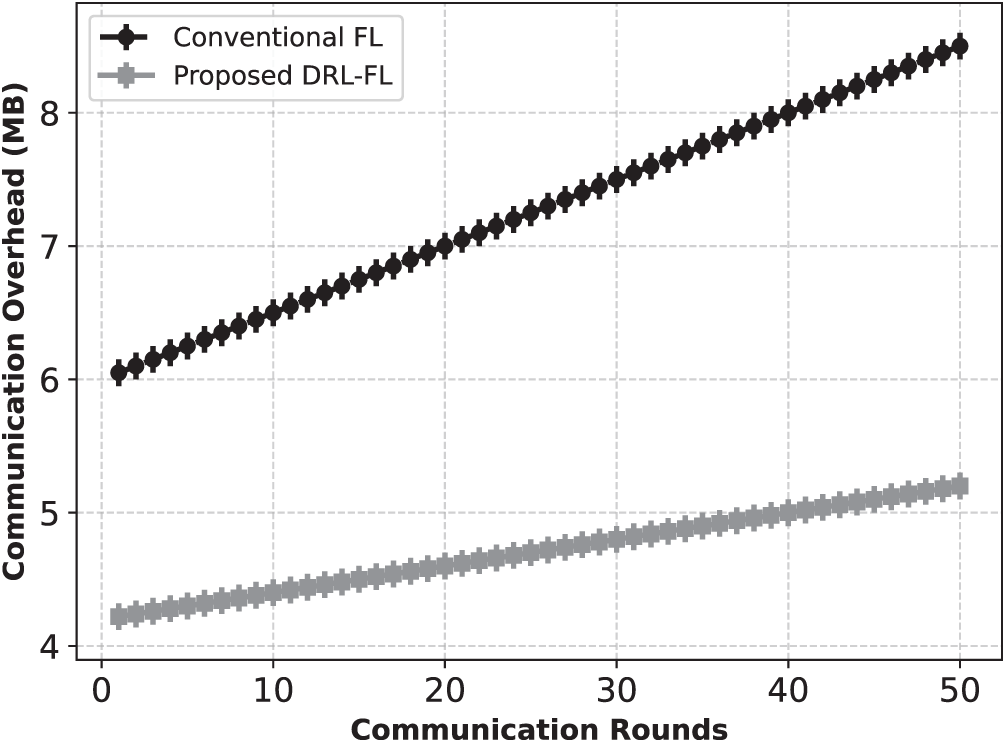

Fig. 7 shows the communication efficiency of the FL and DRL-FL models. The average communication overhead per round was 6.5 MB for FL and 4.7 MB for DRL-FL. In DRL-FL, nodes transmit model updates through an adaptive sharing mechanism, which is well suited to ITS because it reduces bandwidth usage while maintaining low-latency communication.
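The percentage improvements quoted in this section follow directly from the reported values. A short check is sketched below. The communication reduction of about 27.7% is computed here from the 6.5 MB and 4.7 MB figures; the text does not state it explicitly.

```python
def relative_reduction(baseline: float, proposed: float) -> float:
    """Percentage by which `proposed` is lower than `baseline`."""
    return (baseline - proposed) / baseline * 100.0


def relative_gain(baseline: float, proposed: float) -> float:
    """Percentage by which `proposed` exceeds `baseline`."""
    return (proposed - baseline) / baseline * 100.0


print(f"Energy per round (Fig. 3): {relative_reduction(180.0, 150.0):.1f}% lower")
# Energy per round (Fig. 3): 16.7% lower
print(f"Energy efficiency (Fig. 4): {relative_gain(0.62, 0.89):.1f}% higher")
# Energy efficiency (Fig. 4): 43.5% higher
print(f"Communication overhead (Fig. 7): {relative_reduction(6.5, 4.7):.1f}% lower")
# Communication overhead (Fig. 7): 27.7% lower
```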

Figure 7: Communication overhead per round. DRL-FL reduces bandwidth usage through optimized participation

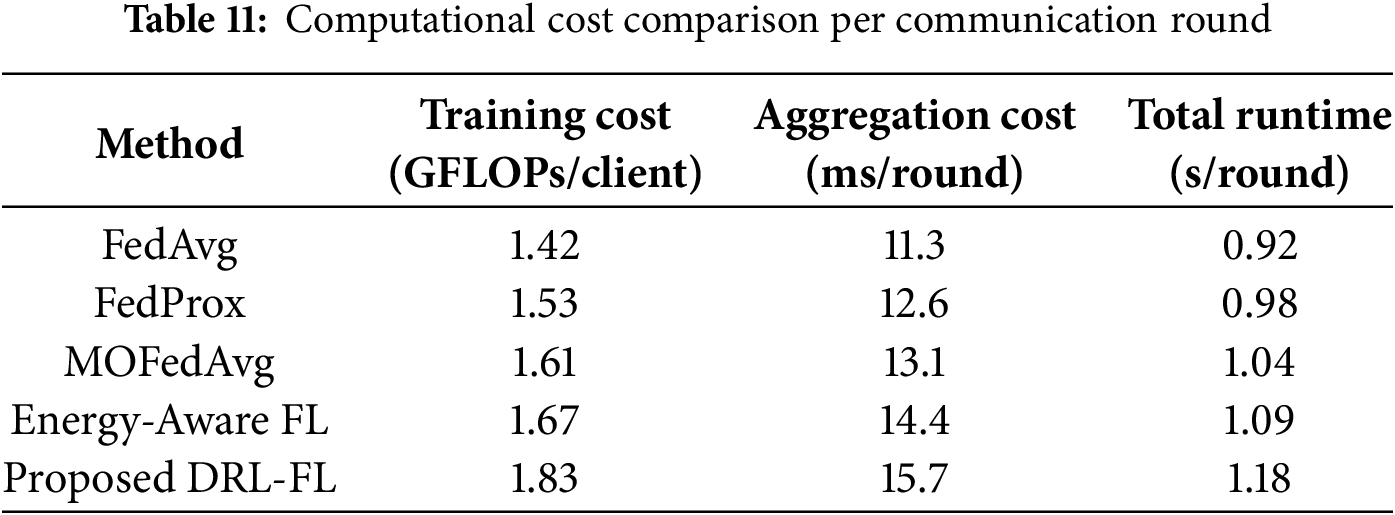

The superior performance of the proposed framework stems from its core design elements: mobility-aware client selection, dynamic energy management, trust-weighted aggregation, and anomaly detection. Algorithms such as FedAvg and FedProx assume static connectivity and may therefore converge unstably when client availability changes rapidly. The proposed DRL-FL model continuously evaluates client stability, avoiding wasted computation on nodes that are likely to disconnect. The energy-aware DRL policy prevents erratic update quality by adjusting the local training workload according to battery level. Trust-weighted aggregation down-weights model updates with high anomaly scores, limiting the influence of malicious clients. The PPO-based policy simultaneously balances accuracy, energy consumption, and robustness, achieving faster convergence and lower energy consumption. Table 11 compares the computational cost per communication round of the proposed framework and several other models.

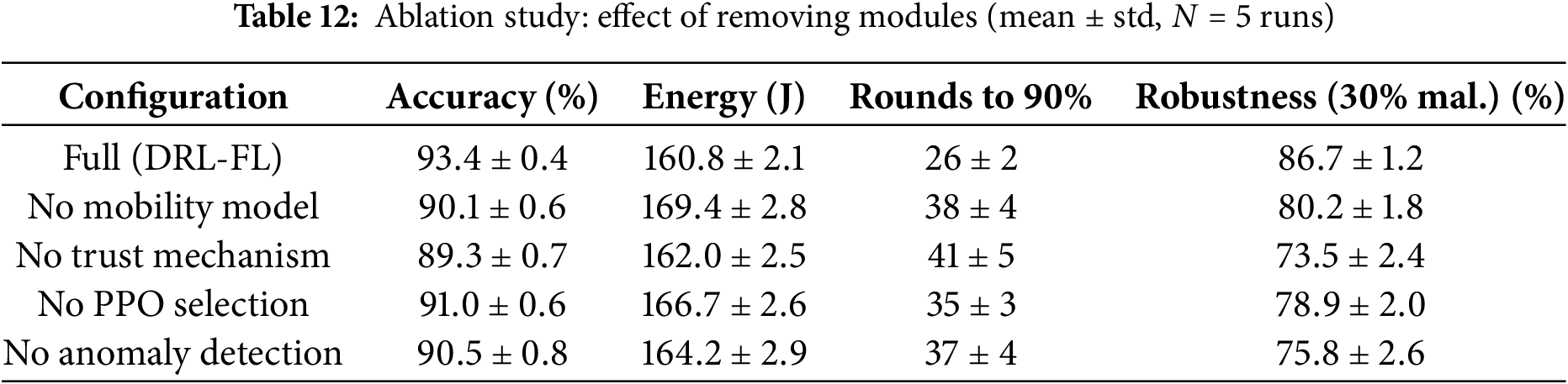

Table 12 presents the ablation study, showing the contribution of each system module. Removing the mobility predictor may increase energy consumption and reduce accuracy because unstable clients are selected. Removing trust-weighted aggregation reduces robustness to adversarial nodes. Replacing PPO with a heuristic selection strategy can delay convergence, and removing the autoencoder-based anomaly detector increases exposure to malicious activity. The analysis confirms that each component of the proposed framework contributes meaningfully to the observed performance.

In this study, an integrated Deep Reinforcement Learning (DRL) and Federated Learning (FL) framework has been presented for intelligent transportation systems (ITS). The framework is designed for IoT environments to address energy efficiency, mobility management, and threat detection. Client selection is performed using DRL, while threat detection uses an autoencoder to identify malicious nodes in a highly dynamic ITS. The proposed model accounts for energy availability, trust levels, and mobility dynamics, with a focus on learning stability and reduced energy consumption. Results reveal that DRL-FL outperforms existing models, achieving higher accuracy, lower bandwidth usage, and lower energy consumption. The mobility evaluations further confirm the model's effectiveness across different network densities and vehicle speeds. This research can readily be extended to edge computing environments and blockchain-based trust management for dense ITSs.

Acknowledgement: The authors express thanks to Princess Nourah bint Abdulrahman University for supporting this research through the Researchers Supporting Project number (PNURSP2025R510), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Funding Statement: This research work is supported by Princess Nourah bint Abdulrahman University Researchers Supporting Project No. PNURSP2025R510, Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Author Contributions: The authors confirm contribution to the paper as follows: Conceptualization, Hamad Ali Abosaq and Fahad Masood; methodology, Jarallah Alqahtani and Fahad Masood; software, Alanoud Al Mazroa and Muhammad Asad Khan; validation, Muhammad Asad Khan and AKM Bahalul Haque; formal analysis, Hamad Ali Abosaq, Jarallah Alqahtani, and Alanoud Al Mazroa; investigation, Hamad Ali Abosaq, and Alanoud Al Mazroa; resources, AKM Bahalul Haque; data curation, Fahad Masood and Muhammad Asad Khan; writing—original draft preparation, AKM Bahalul Haque and Fahad Masood; writing—review and editing, AKM Bahalul Haque, and Muhammad Asad Khan; visualization, Hamad Ali Abosaq, and Jarallah Alqahtani; supervision, project administration, Hamad Ali Abosaq, and Jarallah Alqahtani. All authors reviewed and approved the final version of the manuscript.

Availability of Data and Materials: The dataset has been obtained from https://public.roboflow.com/object-detection/self-driving-car.

Ethics Approval: Not applicable.

Conflicts of Interest: The authors declare no conflicts of interest.

References

1. Musa AA, Malami SI, Alanazi F, Ounaies W, Alshammari M, Haruna SI. Sustainable traffic management for smart cities using Internet-of-Things-oriented intelligent transportation systems (ITS): challenges and recommendations. Sustainability. 2023;15(13):9859. doi:10.3390/su15139859.

2. Elassy M, Al-Hattab M, Takruri M, Badawi S. Intelligent transportation systems for sustainable smart cities. Transp Eng. 2024;16(17):100252. doi:10.1016/j.treng.2024.100252. [Google Scholar] [CrossRef]

3. Kairouz P, McMahan HB, Avent B, Bellet A, Bennis M, Bhagoji AN, et al. Advances and open problems in federated learning. Found Trends Mach Learn. 2021;14(1–2):1–210. doi:10.1561/2200000083. [Google Scholar] [CrossRef]

4. Zhang S, Li J, Shi L, Ding M, Nguyen DC, Tan W, et al. Federated learning in intelligent transportation systems: recent applications and open problems. IEEE Trans Intell Transp Syst. 2023;25(5):3259–85. doi:10.1109/tits.2023.3324962. [Google Scholar] [CrossRef]

5. Macedo D, Santos D, Perkusich A, Valadares DC. Mobility-aware federated learning considering multiple networks. Sensors. 2023;23(14):6286. doi:10.3390/s23146286. [Google Scholar] [PubMed] [CrossRef]

6. Alasbali N, Masood F, Alnazzawi N, Ghaban W, Alazeb A, Basurra S, et al. IoT-UAV enabled intelligent resource management in low-carbon smart agriculture using federated reinforcement learning. IEEE Trans Consum Electron. 2025;71(2):6933–41. doi:10.1109/tce.2025.3572552. [Google Scholar] [CrossRef]

7. Masood F, Khan MA, Alshehri MS, Ghaban W, Saeed F, Albarakati HM, et al. AI-based wireless sensor IoT networks for energy-efficient consumer electronics using stochastic optimization. IEEE Trans Consum Electron. 2024;70(4):6855–62. doi:10.1109/tce.2024.3416035. [Google Scholar] [CrossRef]

8. Yang Q, Liu Y, Chen T, Tong Y. Federated machine learning: concept and applications. ACM Trans Intell Syst Technol. 2019;10(2):1–19. doi:10.1145/3298981.

9. Lim WYB, Luong NC, Hoang DT, Jiao Y, Liang YC, Yang Q, et al. Federated learning in mobile edge networks: a comprehensive survey. IEEE Commun Surv Tutorials. 2020;22(3):2031–63. doi:10.1109/comst.2020.2986024.

10. Lamssaggad A, Benamar N, Hafid AS, Msahli M. A survey on the current security landscape of intelligent transportation systems. IEEE Access. 2021;9:9180–208. doi:10.1109/access.2021.3050038.

11. Demertzi V, Demertzis S, Demertzis K. An overview of cyber threats, attacks and countermeasures on the primary domains of smart cities. Appl Sci. 2023;13(2):790. doi:10.3390/app13020790.

12. Asiri F, Malwi WA, Masood F, Alshehri MS, Zhukabayeva T, Shah SA, et al. Privacy preserving federated anomaly detection in IoT edge computing using Bayesian game reinforcement learning. Comput Mater Contin. 2025;84(2):3943–60. doi:10.32604/cmc.2025.066498.

13. Xie B, Sun Y, Zhou S, Niu Z, Xu Y, Chen J, et al. MOB-FL: mobility-aware federated learning for intelligent connected vehicles. In: ICC 2023—IEEE International Conference on Communications. Piscataway, NJ, USA: IEEE; 2023. p. 3951–7.

14. Jin Z, Yang C, Ye Y, Zhang L, Shen J, Su J. Mobility-aware semi-asynchronous federated learning for vehicular networks. IEEE Trans Veh Technol. 2025. doi:10.1109/TVT.2025.3603978.

15. Chen D, Deng T, Jia J, Feng S, Yuan D. Mobility-aware decentralized federated learning with joint optimization of local iteration and leader selection for vehicular networks. Comput Netw. 2025;263(2):111232. doi:10.1016/j.comnet.2025.111232.

16. Chang X, Obaidat MS, Xue X, Ma J, Duan X. Mobility-aware vehicle selection strategy for federated learning in the internet of vehicles. In: 2024 International Conference on Communications, Computing, Cybersecurity, and Informatics (CCCI). Piscataway, NJ, USA: IEEE; 2024. p. 1–6.

17. Gu X, Wu Q, Fan Q, Fan P. Mobility-aware federated self-supervised learning in vehicular network. Urban Lifeline. 2024;2(1):10. doi:10.1007/s44285-024-00020-5.

18. Saleem M, Arishi A, Farooq MS, Khan MA, Adnan KM. Weighted explainable federated learning for privacy-preserving and scalable energy optimization in autonomous vehicular networks. Egypt Inform J. 2025;31(5):100758. doi:10.1016/j.eij.2025.100758.

19. Almaazmi KIA, Almheiri SJ, Khan MA, Shah AA, Abbas S, Ahmad M. Enhancing smart city sustainability with explainable federated learning for vehicular energy control. Sci Rep. 2025;15(1):23888. doi:10.1038/s41598-025-07844-3.

20. Wang Y, Sui M, Xia T, Liu M, Yang J, Zhao H. Energy-efficient federated learning-driven intelligent traffic monitoring: Bayesian prediction and incentive mechanism design. Electronics. 2025;14(9):1891. doi:10.3390/electronics14091891.

21. Firdaus M, Larasati HT. A blockchain-assisted distributed edge intelligence for privacy-preserving vehicular networks. Comput Mater Contin. 2023;76(3):2959–78. doi:10.32604/cmc.2023.039487.

22. Chen S, Yang L, Shi Y, Wang Q. Blockchain-enabled secure and privacy-preserving data aggregation for fog-based ITS. Comput Mater Contin. 2023;75(2):3781–96. doi:10.32604/cmc.2023.036437.

23. Pacharla N, Srinivasa Reddy K. Trusted certified auditor using cryptography for secure data outsourcing and privacy preservation in fog-enabled VANETs. Comput Mater Contin. 2024;79(2):3089–110. doi:10.32604/cmc.2024.048133.

24. Bakirci M. Internet of Things-enabled unmanned aerial vehicles for real-time traffic mobility analysis in smart cities. Comput Electr Eng. 2025;123(2):110313. doi:10.1016/j.compeleceng.2025.110313.

Copyright © 2026 The Author(s). Published by Tech Science Press.

This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.