Open Access

REVIEW

Physics-Informed Neural Networks: Current Progress and Challenges in Computational Solid and Structural Mechanics

1 Department of Mechanical Engineering, Faculty of Engineering, Chiang Mai University, Chiang Mai, Thailand

2 Office of Research Administration, Chiang Mai University, Chiang Mai, Thailand

3 Duy Tan Research Institute for Computational Engineering (DTRICE), Duy Tan University, Ho Chi Minh City, Vietnam

4 Faculty of Civil Engineering, Duy Tan University, Da Nang, Vietnam

* Corresponding Author: Pana Suttakul. Email:

(This article belongs to the Special Issue: Data-Driven Artificial Intelligence and Machine Learning in Computational Modelling for Engineering and Applied Sciences)

Computer Modeling in Engineering & Sciences 2026, 146(2), 2 https://doi.org/10.32604/cmes.2026.077044

Received 01 December 2025; Accepted 30 January 2026; Issue published 26 February 2026

Abstract

Physics-informed neural networks (PINNs) have emerged as a promising class of scientific machine learning techniques that integrate governing physical laws into neural network training. Their ability to enforce differential equations, constitutive relations, and boundary conditions within the loss function provides a physically grounded alternative to traditional data-driven models, particularly for solid and structural mechanics, where data are often limited or noisy. This review offers a comprehensive assessment of recent developments in PINNs, combining bibliometric analysis, theoretical foundations, application-oriented insights, and methodological innovations. A bibliometric survey indicates a rapid increase in publications on PINNs since 2018, with prominent research clusters focused on numerical methods, structural analysis, and forecasting. Building upon this trend, the review consolidates advancements across five principal application domains, including forward structural analysis, inverse modeling and parameter identification, structural and topology optimization, assessment of structural integrity, and manufacturing processes. These applications are propelled by substantial methodological advancements, encompassing rigorous enforcement of boundary conditions, modified loss functions, adaptive training, domain decomposition strategies, multi-fidelity and transfer learning approaches, as well as hybrid finite element–PINN integration. These advances address recurring challenges in solid mechanics, such as high-order governing equations, material heterogeneity, complex geometries, localized phenomena, and limited experimental data. Despite remaining challenges in computational cost, scalability, and experimental validation, PINNs are increasingly evolving into specialized, physics-aware tools for practical solid and structural mechanics applications.

Machine learning is a rapidly evolving field capable of processing and analyzing data from diverse perspectives [1–5]. It has become a widely used tool for understanding and predicting the behavior of target variables, with various machine learning algorithms demonstrating strong performance in identifying influential factors associated with these variables. As a data-driven approach, the effectiveness of machine learning largely depends on the quality and quantity of the training data. While machine learning models can capture complex relationships between inputs and outputs to predict the behavior of physical systems, they do not inherently incorporate or understand the underlying physical laws governing those systems.

In recent years, the integration of machine learning with traditional computational methods has led to significant advancements in tackling complex scientific and engineering challenges [6–10]. One of the notable innovations in this area is physics-informed neural networks (PINNs), which incorporate fundamental physical laws, such as partial differential equations (PDEs), directly into the training process of neural networks [11–13]. By embedding governing equations into their structure, PINNs enable the modeling of physical systems in a way that leverages both data-driven learning and prior physical knowledge. As a result, PINNs can provide accurate and physically consistent solutions for both forward and inverse problems across a wide range of engineering applications [14–18].

Classical numerical methods, such as the finite element method (FEM) and the finite difference method (FDM), have long been standard tools for solving PDEs in engineering problems. While these methods are reliable for complex systems, they often encounter challenges when addressing inverse analysis, high-dimensional problems, strong nonlinearities, and sparse data, all of which are common in solid mechanics. PINNs address these limitations by embedding known physical laws directly into their loss function, reducing reliance on extensive datasets and enhancing the physical consistency of predictions [19]. By leveraging both empirical data and fundamental equations, PINNs present a promising alternative that can significantly improve the efficiency and accuracy of simulations compared to purely data-driven approaches [20].

PINNs have had a substantial impact across various areas of computational mechanics [21–25]. Especially in the field of solid and structural mechanics, they have been employed to model complex material behavior, perform structural analyses, predict stress–strain responses under various loading conditions, and identify unknown material properties or damage through inverse modeling.

Despite their advantages, implementing PINNs poses challenges, particularly concerning computational efficiency and training complexity [25,26]. Optimizing network parameters while ensuring compliance with physical laws can lead to high computational costs, especially for high-dimensional problems. Furthermore, the performance of PINNs is sensitive to network architecture and initialization strategies, which can affect convergence rates and solution accuracy [27,28]. Researchers have been focusing on overcoming these challenges by employing advanced training methods, such as domain decomposition techniques and adaptive loss balancing, to make PINNs more suitable for large-scale and real-time applications [29–31]. In addition, recent studies have explored the integration of discretized physics, such as finite element formulations, into neural network architectures as an alternative to continuous governing equations, with the aim of reducing computational burden while preserving physical fidelity [32–36].

Although several review articles have examined PINNs from broad, cross-disciplinary perspectives, a focused synthesis dedicated to solid and structural mechanics remains limited. This study aims to provide a focused bibliometric analysis and critical review of PINNs with explicit emphasis on computational solid and structural mechanics, a domain that poses distinct challenges compared to generic PDE problems. This work makes three specific contributions. First, it presents a solid-mechanics-centered bibliometric analysis that identifies research trends, influential publications, and thematic clusters. Second, the review organizes existing studies into a mechanics-oriented classification, distinguishing between forward analysis, inverse identification, optimization, damage and fracture modeling, and manufacturing-related applications. Third, by synthesizing methodological advances alongside application case studies, this paper highlights mechanics-specific limitations and research gaps that hinder practical adoption. Note that, during the preparation of this manuscript, an AI-assisted tool was used as a support tool to summarize key ideas from the literature and improve clarity of language. The tool did not contribute to the generation of scientific content, data analysis, or interpretation. Through this combined bibliometric, application, and technical perspective, the study advances understanding of how PINNs can be effectively deployed in solid and structural mechanics and provides actionable directions for future research.

Bibliometric analysis is a valuable approach for systematically understanding scholarly research landscapes [37,38]. In this study, bibliometric techniques were applied to evaluate the contributions of authors, countries, and journals to the advancement of PINNs. The influence of research articles was further assessed through citation counts, while keyword analysis was conducted to reveal thematic trends within the field. To visualize these relationships, VOSviewer software was employed [39–42]. VOSviewer is a specialized tool for constructing and exploring bibliometric networks, including co-authorship, co-citation, and keyword co-occurrence analyses. By generating network maps from bibliographic data, it enables the identification of research structures, emerging themes, and collaboration patterns. The software also provides diverse visualization and quantitative metrics, supporting a deeper interpretation of complex bibliometric information [43–45].

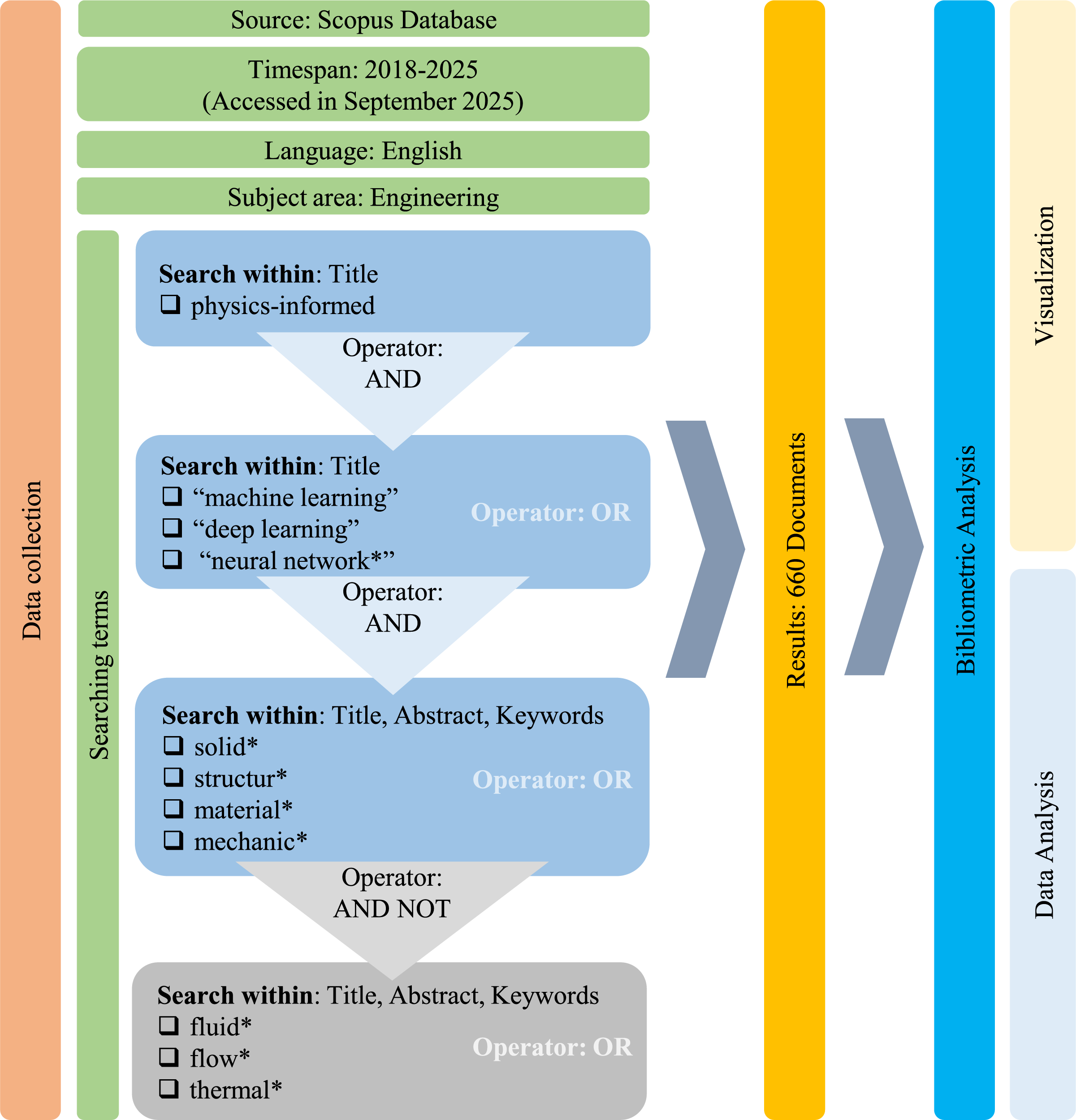

Data collection, an essential step prior to the bibliometric analysis, involved sourcing relevant publications from a database according to predefined criteria. The overall methodological framework is illustrated in Fig. 1. To ensure consistency and avoid duplication, a single database was selected [46]. Scopus was chosen due to its accessibility and comprehensive coverage of scientific documents [47–49]. The timespan was restricted to 2018–2025 (data accessed on 27 September 2025), with results limited to documents written in English and classified under the subject area of Engineering.

Figure 1: The detailed procedure of bibliometric analysis. The asterisk symbol (

The search strategy was carefully designed to capture the most relevant studies. Initially, the keyword “physics-informed” was required to appear in the title field. This was combined with machine learning-related terms, namely “machine learning”, “deep learning”, or “neural network*”, also within the title field. To further refine the scope toward solid and structural mechanics, the additional terms solid*, structur*, material*, and mechanic* were searched within the title, abstract, and keywords. At the same time, unrelated domains were excluded by filtering out publications containing fluid*, flow*, or thermal* in these fields. Accordingly, the final advanced search query was formulated as follows:

(TITLE(physics-informed) AND TITLE (“machine learning” OR “deep learning” OR “neural network*”) AND TITLE-ABS-KEY (solid* OR structur* OR material* OR mechanic*) AND NOT TITLE-ABS-KEY (fluid* OR flow* OR thermal*)) AND PUBYEAR > 2017 AND PUBYEAR < 2026 AND (LIMIT-TO (SUBJAREA, “ENGI”)) AND (LIMIT-TO (LANGUAGE, “English”))
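For reproducibility, a query of this form can be assembled from its term lists rather than typed by hand. The following is a minimal sketch in plain Python; the function names `or_group` and `build_scopus_query` are hypothetical helpers introduced here for illustration, and the sketch only builds the string (it does not contact Scopus).

```python
def or_group(terms):
    """Join search terms with OR inside one pair of parentheses."""
    return "(" + " OR ".join(terms) + ")"


def build_scopus_query(title_ml_terms, include_terms, exclude_terms,
                       year_after=2017, year_before=2026,
                       subject_area="ENGI", language="English"):
    """Assemble an advanced-search string with the structure shown above."""
    core = (
        "TITLE(physics-informed)"
        f" AND TITLE{or_group(title_ml_terms)}"
        f" AND TITLE-ABS-KEY{or_group(include_terms)}"
        f" AND NOT TITLE-ABS-KEY{or_group(exclude_terms)}"
    )
    return (
        f"({core})"
        f" AND PUBYEAR > {year_after} AND PUBYEAR < {year_before}"
        f' AND (LIMIT-TO(SUBJAREA, "{subject_area}"))'
        f' AND (LIMIT-TO(LANGUAGE, "{language}"))'
    )


# Term lists taken from the search strategy described in the text.
query = build_scopus_query(
    title_ml_terms=['"machine learning"', '"deep learning"', '"neural network*"'],
    include_terms=["solid*", "structur*", "material*", "mechanic*"],
    exclude_terms=["fluid*", "flow*", "thermal*"],
)
print(query)
```

Keeping the inclusion and exclusion terms as lists makes it straightforward to document variants of the query, for example when testing how sensitive the retrieved set is to the excluded terms.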

The above search strategy was intentionally designed to prioritize precision and reproducibility in bibliometric trend and cluster analyses. As a consequence, relevant studies that employ PINN methodologies but use alternative terminology (e.g., “scientific machine learning,” “physics-guided,” or application-specific naming) or focus on strongly coupled thermomechanical problems may not be fully captured in the bibliometric dataset. This represents a trade-off between precision and coverage. To mitigate this limitation, the narrative review presented in later sections of this paper was based on broader searches across titles, abstracts, and keywords, using expanded keyword combinations and manual screening, ensuring comprehensive coverage of PINN applications in solid and structural mechanics.

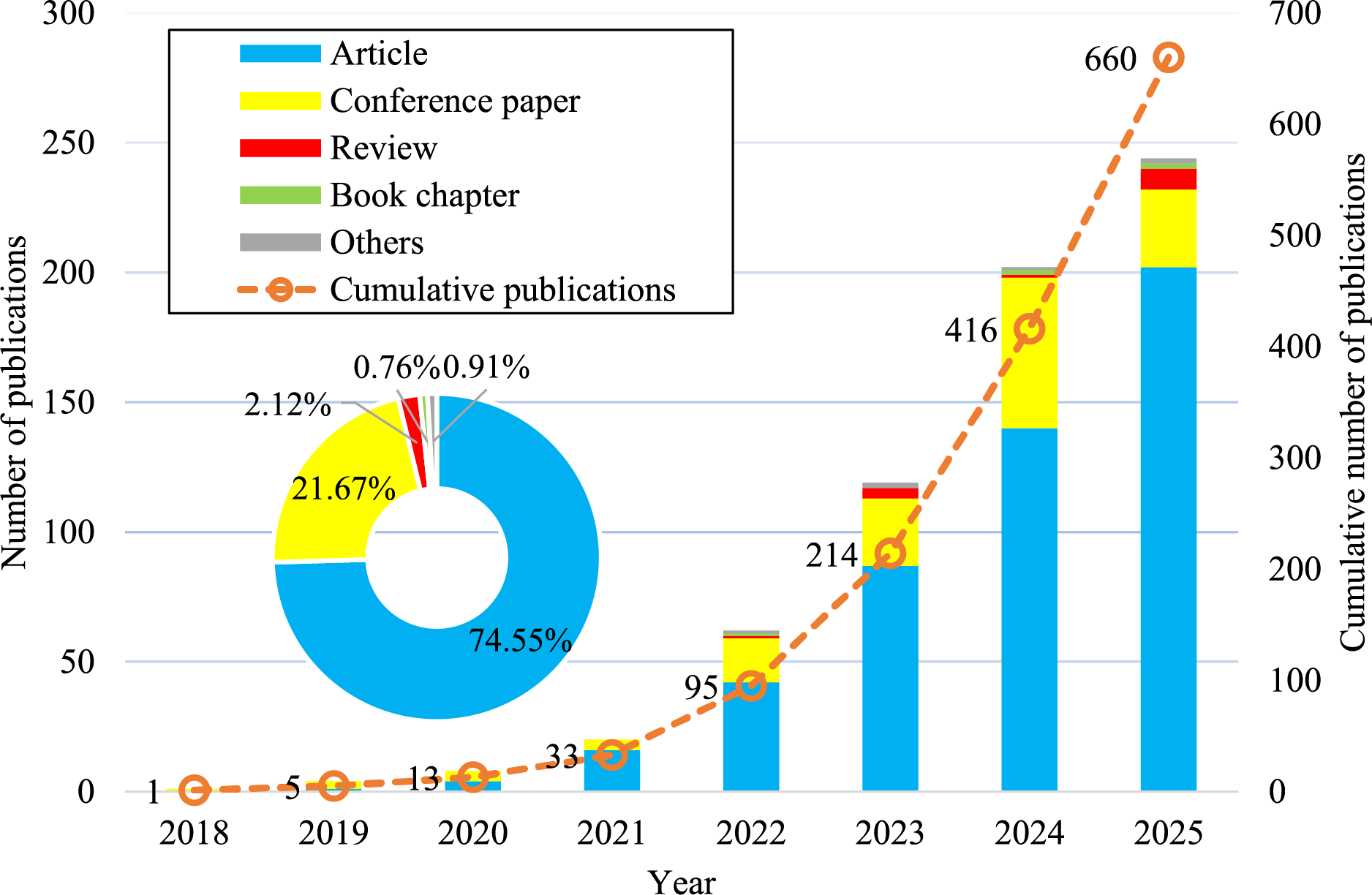

Using this bibliometric search strategy, a total of 660 documents related to the field were identified during the period 2018–2025 (up to 27 September 2025). The bibliographic dataset, comprising information such as authors, titles, keywords, and document types, was retrieved from the Scopus database for subsequent analysis. Fig. 2 illustrates the annual distribution of publications, which demonstrates a steady upward trajectory over the study period. The research output expanded from a single publication in 2018 to 244 publications by September 2025, highlighting the growing scholarly interest in the topic.

Figure 2: Annual and cumulative publication trends (2018–2025), highlighting rapid growth and the predominance of journal articles (up to 27 September 2025).

In terms of document types, journal articles dominate the dataset, accounting for 74.55% of total publications, followed by conference papers (21.67%), review papers (2.12%), book chapters (0.76%), and other categories (0.91%). This distribution underscores the central role of peer-reviewed journal articles in advancing the field, while conference papers also contribute significantly to disseminating emerging findings.
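As a consistency check, the reported shares can be converted back into approximate document counts. The sketch below assumes the total of 660 documents stated earlier and simple rounding to the nearest integer; the resulting counts are inferred from the percentages and are not values reported in the dataset itself.

```python
TOTAL_DOCUMENTS = 660  # total reported for 2018-2025

# Percentage shares by document type, as quoted in the text.
shares = {
    "journal article": 74.55,
    "conference paper": 21.67,
    "review paper": 2.12,
    "book chapter": 0.76,
    "other": 0.91,
}

# Inferred counts: nearest integer to share * total.
counts = {kind: round(TOTAL_DOCUMENTS * pct / 100) for kind, pct in shares.items()}

# Recompute the shares from the inferred counts to confirm they round back
# to the quoted two-decimal percentages.
recomputed = {kind: round(100 * n / TOTAL_DOCUMENTS, 2) for kind, n in counts.items()}

for kind in shares:
    print(f"{kind:17s} {counts[kind]:4d} documents ({recomputed[kind]:.2f}%)")
print("sum of inferred counts:", sum(counts.values()))
```

Under these assumptions the inferred counts add up to the full dataset, which suggests that the small excess of the quoted percentages over 100% is a rounding effect.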

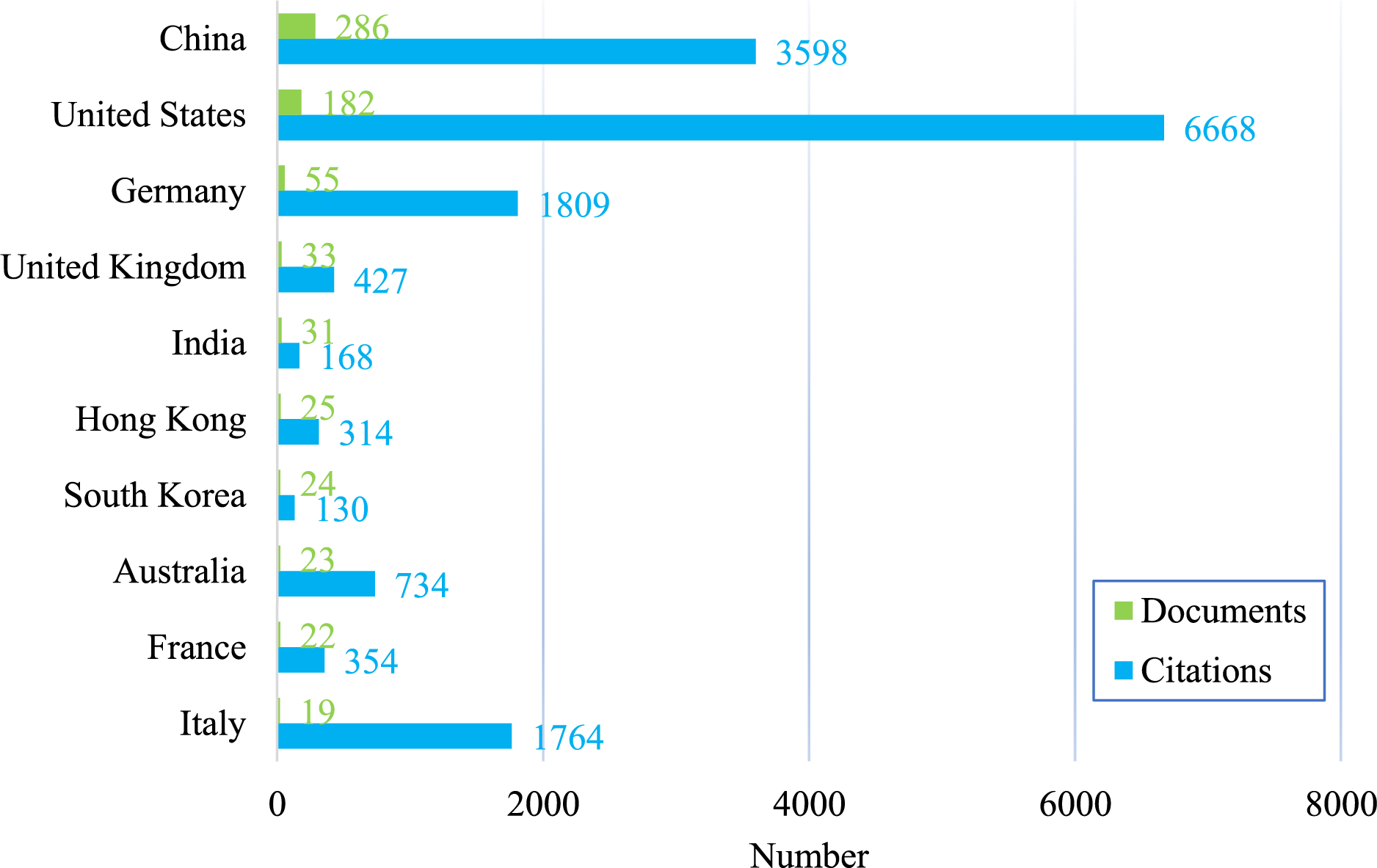

The ranking of the top 10 countries/territories by publication output is presented in Fig. 3. China and the United States dominate the field, with 286 and 182 publications, respectively. Despite producing fewer documents, the United States demonstrates a comparatively higher citation count, reflecting a strong impact within the scholarly community. Germany, the United Kingdom, and India follow, each contributing more than 30 publications. Hong Kong, South Korea, Australia, and France each produced over 20 publications, while Italy contributed 19 publications accompanied by relatively higher citation counts.

Figure 3: Top 10 countries/territories contributing to PINNs in solid and structural mechanics.

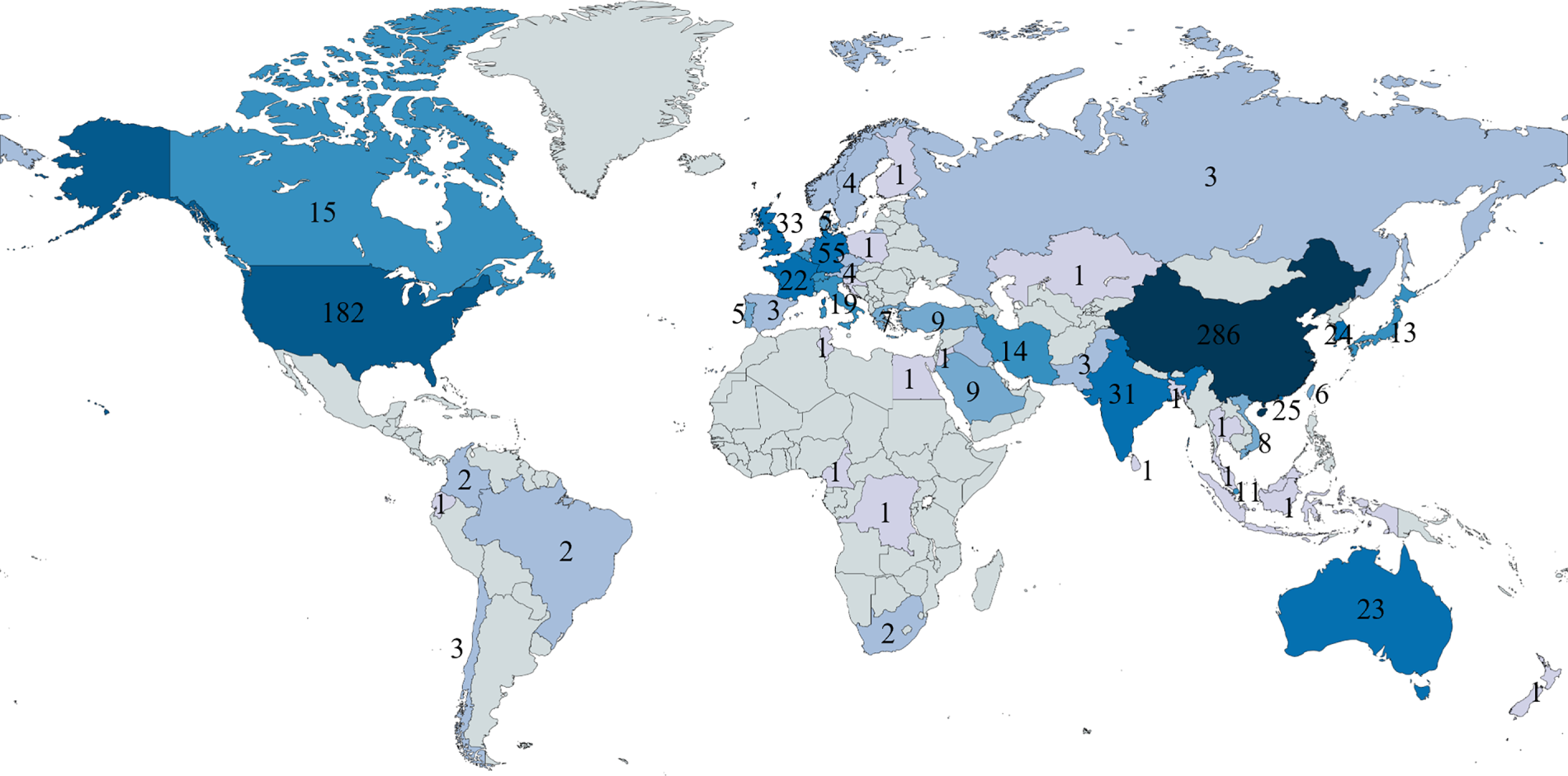

To highlight the global distribution of research, Fig. 4 presents scientific production in a world map format. Darker shades indicate countries with higher research output, while lighter shades correspond to lower levels of activity. The analysis underscores substantial international engagement with PINNs in solid and structural mechanics, with China and the United States emerging as the leading contributors. European countries such as Germany, the United Kingdom, and France make notable contributions, and significant outputs are also evident in India, Hong Kong, South Korea, and Australia. The dataset also includes countries with only a single publication, demonstrating the global spread of research efforts in this field.

Figure 4: Global map of PINN-related scientific production in solid and structural mechanics.

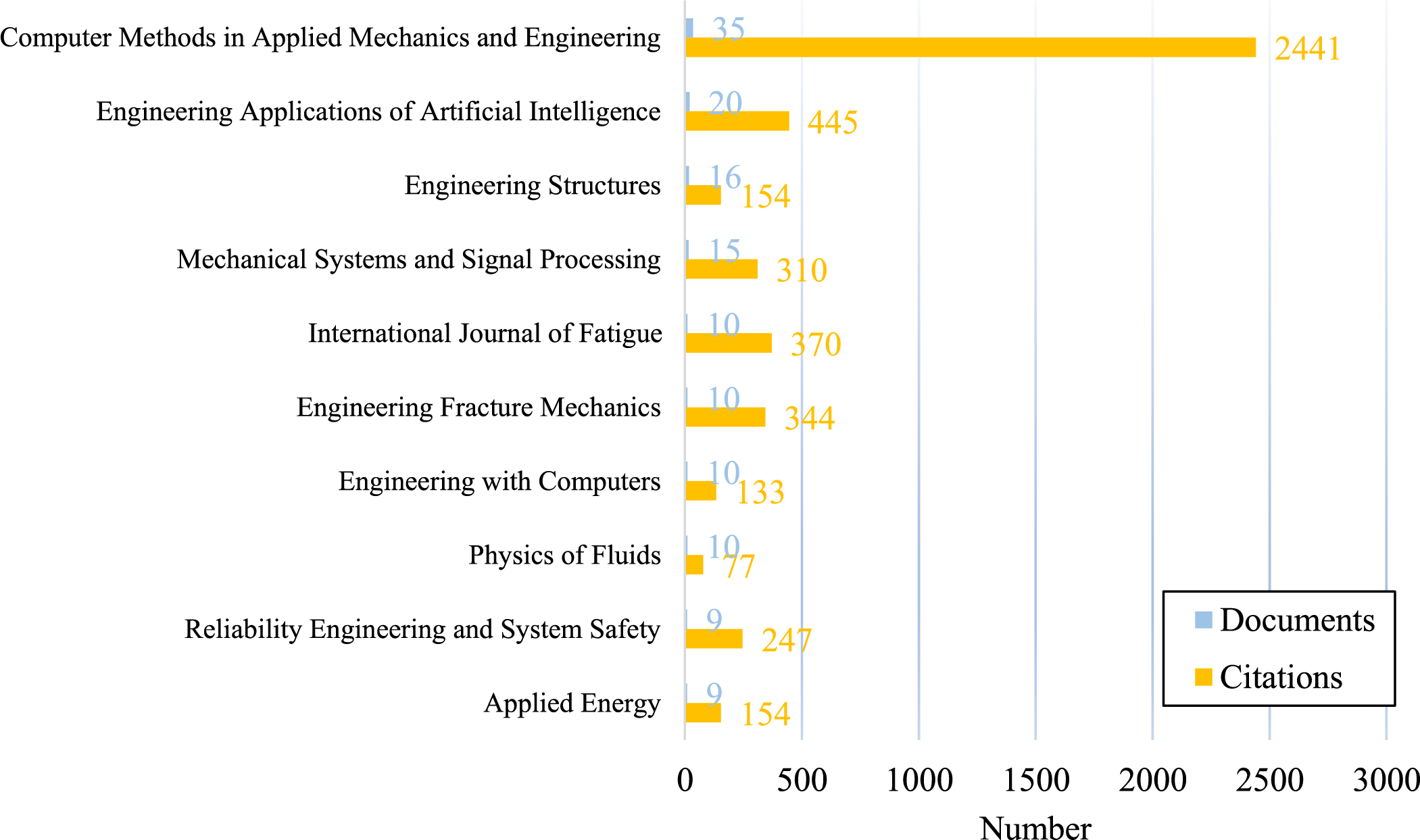

Publications in this field are primarily concentrated in peer-reviewed journals, as illustrated in Fig. 5, which presents the top 10 publication sources ranked by document output. The analysis highlights the dual dimensions of journal productivity and scholarly impact. The leading source is Computer Methods in Applied Mechanics and Engineering, with 35 publications and the highest citation count (2441 citations), indicating its central role in disseminating influential research. This is followed by Engineering Applications of Artificial Intelligence with 20 publications and 445 citations. Other notable sources include Engineering Structures and Mechanical Systems and Signal Processing, each contributing more than 10 publications. Additional journals such as the International Journal of Fatigue, Engineering Fracture Mechanics and Engineering with Computers also exhibit substantial activity, reflecting their significance as outlets for advancing knowledge in this domain. Collectively, these findings underscore that high-impact research on PINNs in solid and structural mechanics is being published across both general computational mechanics journals and specialized engineering outlets.

Figure 5: Top 10 publication sources for PINNs in solid and structural mechanics.

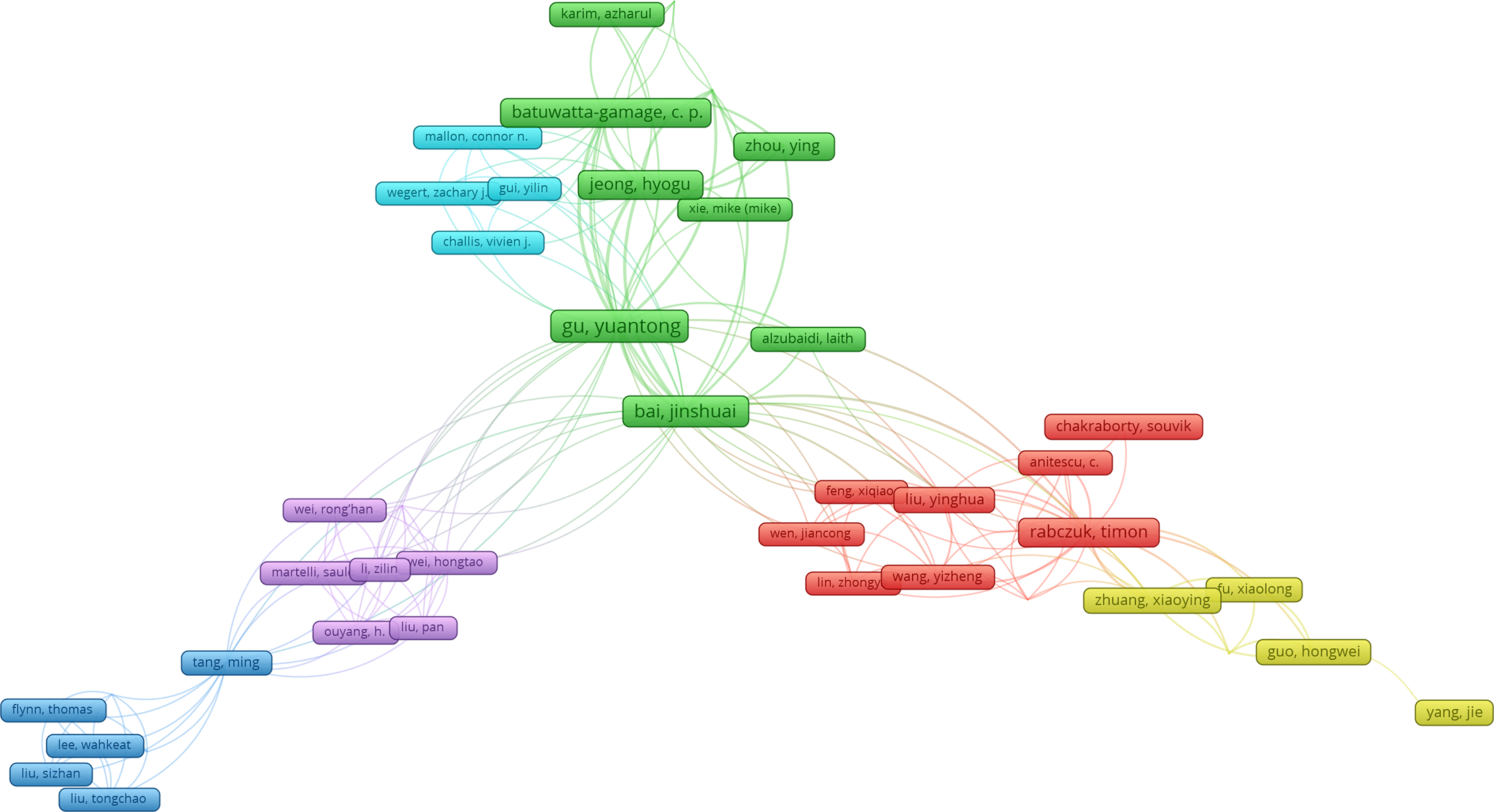

PINNs have attracted considerable global attention, fostering extensive collaboration among researchers. Fig. 6, generated using VOSviewer, illustrates the largest co-authorship network in this field, where different colors denote distinct collaborative clusters. Larger nodes represent more prolific authors, while the proximity between nodes indicates the strength of co-authorship links. The analysis highlights several prominent clusters. Notably, Gu, Yuantong and Bai, Jinshuai form central nodes in one of the largest clusters (green), connecting with researchers such as Jeong, Hyogu, Batuwatta-Gamage, C.P., and Zhou, Ying, reflecting strong collaborative activity. Another influential cluster (red) is centered on Rabczuk, Timon, demonstrating a tightly connected research community. Additional clusters include groups led by Tang, Ming (blue), Li, Zilin (purple), Zhuang, Xiaoying (yellow), and Gui, Yilin (light blue), each linking networks of researchers working on related topics. Overall, the network structure demonstrates that while the field is composed of multiple independent research groups, several key authors act as central connectors. These authors facilitate cross-group collaborations, shaping research patterns and advancing the development of PINNs in solid and structural mechanics.

Figure 6: Visualization of the author network in PINN research for solid and structural mechanics, showing clustered collaboration patterns and key researchers acting as central hubs across research groups.

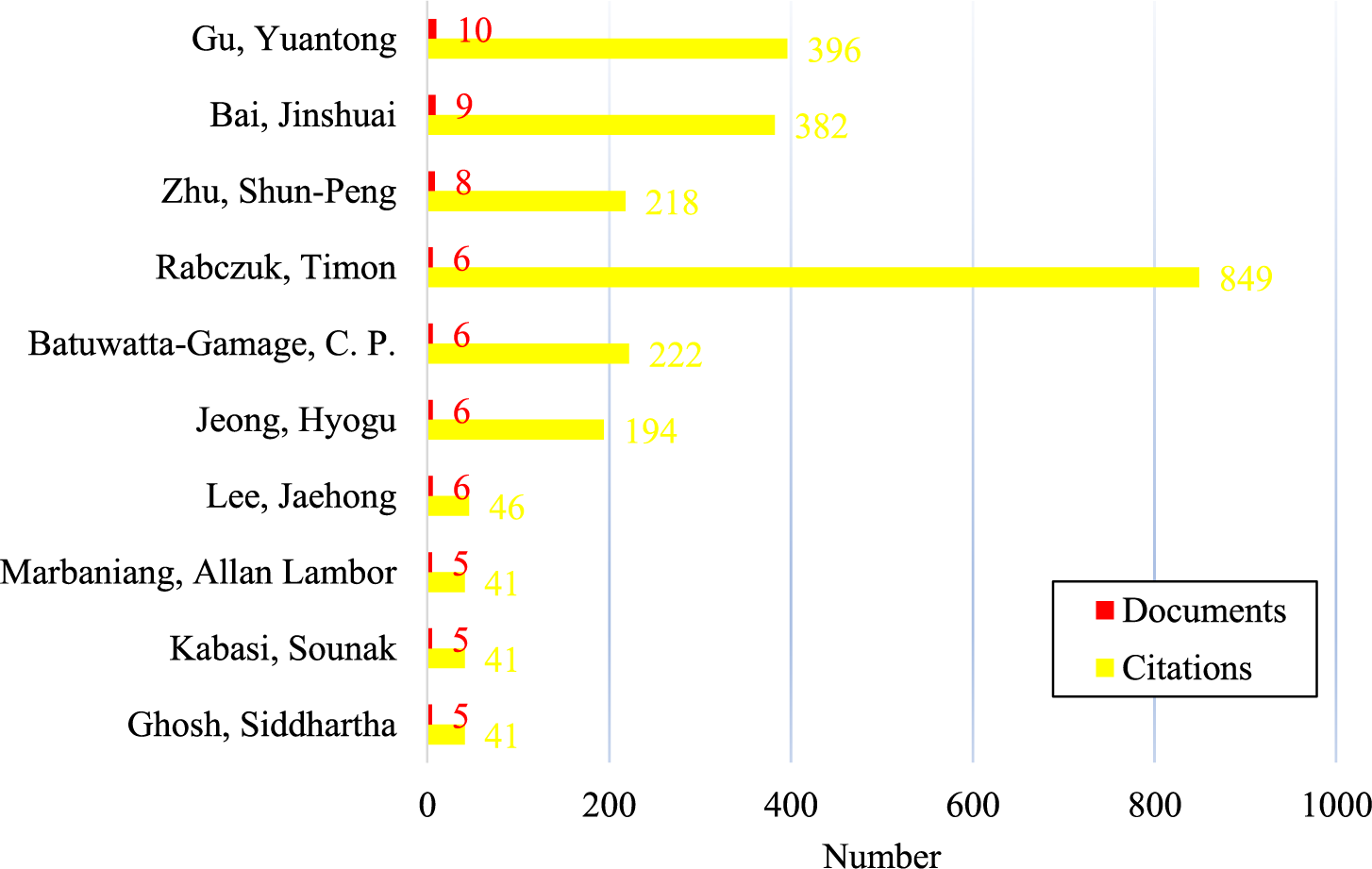

The top 10 authors by number of publications are listed in Fig. 7, consistent with the larger nodes shown in Fig. 6. The results reveal that while several authors have produced a relatively modest number of publications, their impact, as measured by citations, is substantial. For instance, Gu, Yuantong leads the list with 10 publications and 396 citations, followed closely by Bai, Jinshuai with 9 publications and 382 citations. Notably, Rabczuk, Timon has received the highest citation count (849 citations) despite publishing only 6 documents, underscoring his significant scholarly influence in the field.

Figure 7: Top 10 authors in PINN-related solid and structural mechanics with the highest number of publications.
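The contrast between output and impact can be made concrete with a citations-per-publication ratio. The short sketch below uses only the three author figures quoted in the text. The ratio is an illustrative indicator chosen here, not a metric used in the original analysis, and it ignores publication age and co-authorship.

```python
# (publications, citations) for the authors quoted in the text.
authors = {
    "Gu, Yuantong": (10, 396),
    "Bai, Jinshuai": (9, 382),
    "Rabczuk, Timon": (6, 849),
}

# Average citations received per publication.
citations_per_publication = {
    name: cites / pubs for name, (pubs, cites) in authors.items()
}

# Rank from highest to lowest ratio.
ranking = sorted(citations_per_publication, key=citations_per_publication.get, reverse=True)

for name in ranking:
    print(f"{name:15s} {citations_per_publication[name]:6.1f} citations per publication")
```

On this indicator the author with the fewest documents ranks first by a wide margin, which matches the observation that publication counts alone understate influence.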

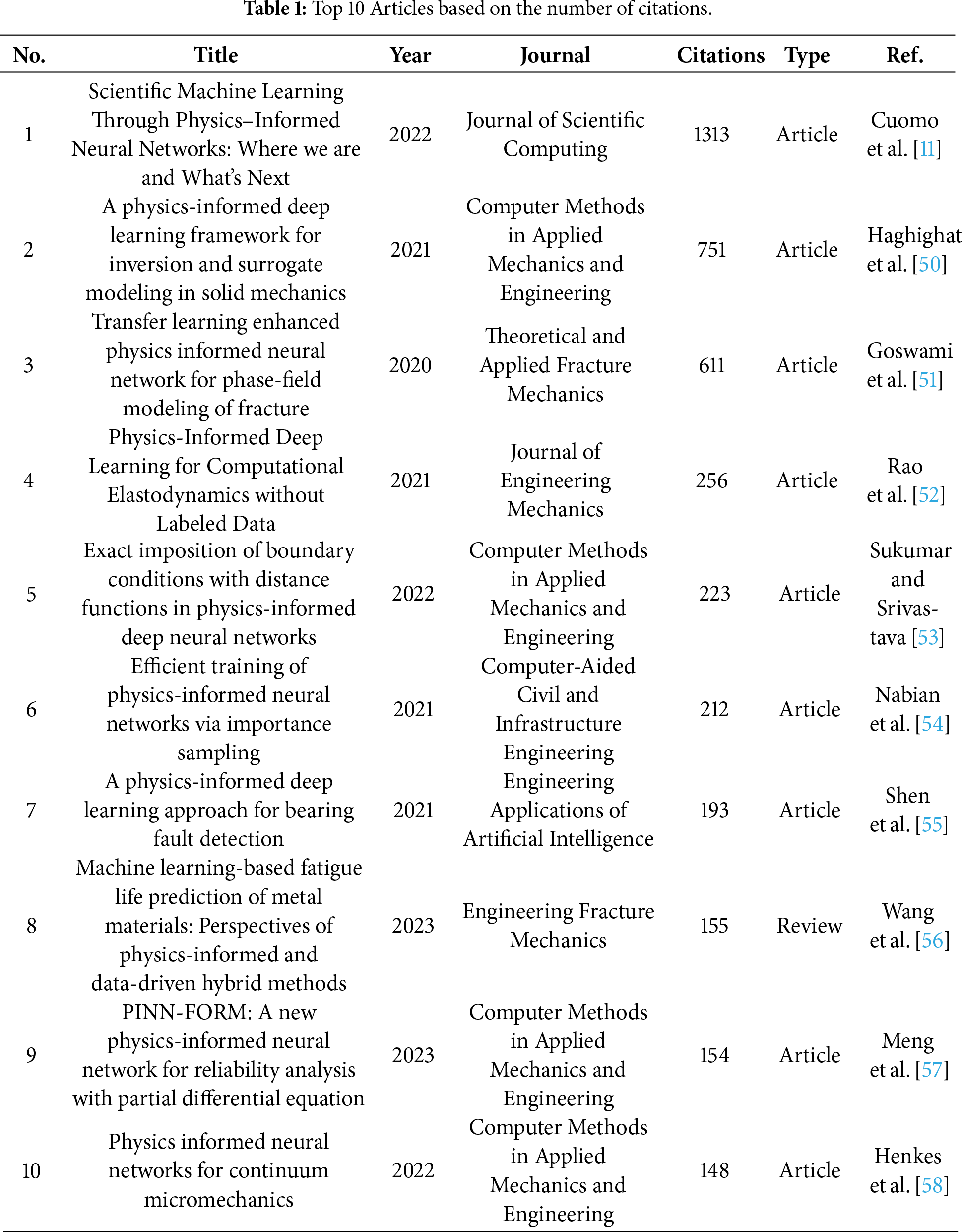

The 10 most-cited papers in the field are summarized in Table 1. The most influential work is Cuomo et al. [11] in the Journal of Scientific Computing with 1313 citations, followed by Haghighat et al. [50] and Goswami et al. [51]. Computer Methods in Applied Mechanics and Engineering accounts for 4 of the top 10 papers, underscoring its central role in disseminating PINNs research in mechanics. Of these publications, nine are research articles and one is a review, indicating that impact is largely driven by original contributions. The temporal distribution spans 2020–2023; while earlier works (2020–2022) have naturally accumulated more citations, several 2023 publications have already surpassed 150 citations, reflecting rapid uptake in the community. Thematically, the most-cited studies cluster around methodological innovations (e.g., inverse problems, boundary condition enforcement, training efficiency) and applications in mechanics (fracture, elastodynamics, reliability, micromechanics, and fatigue).

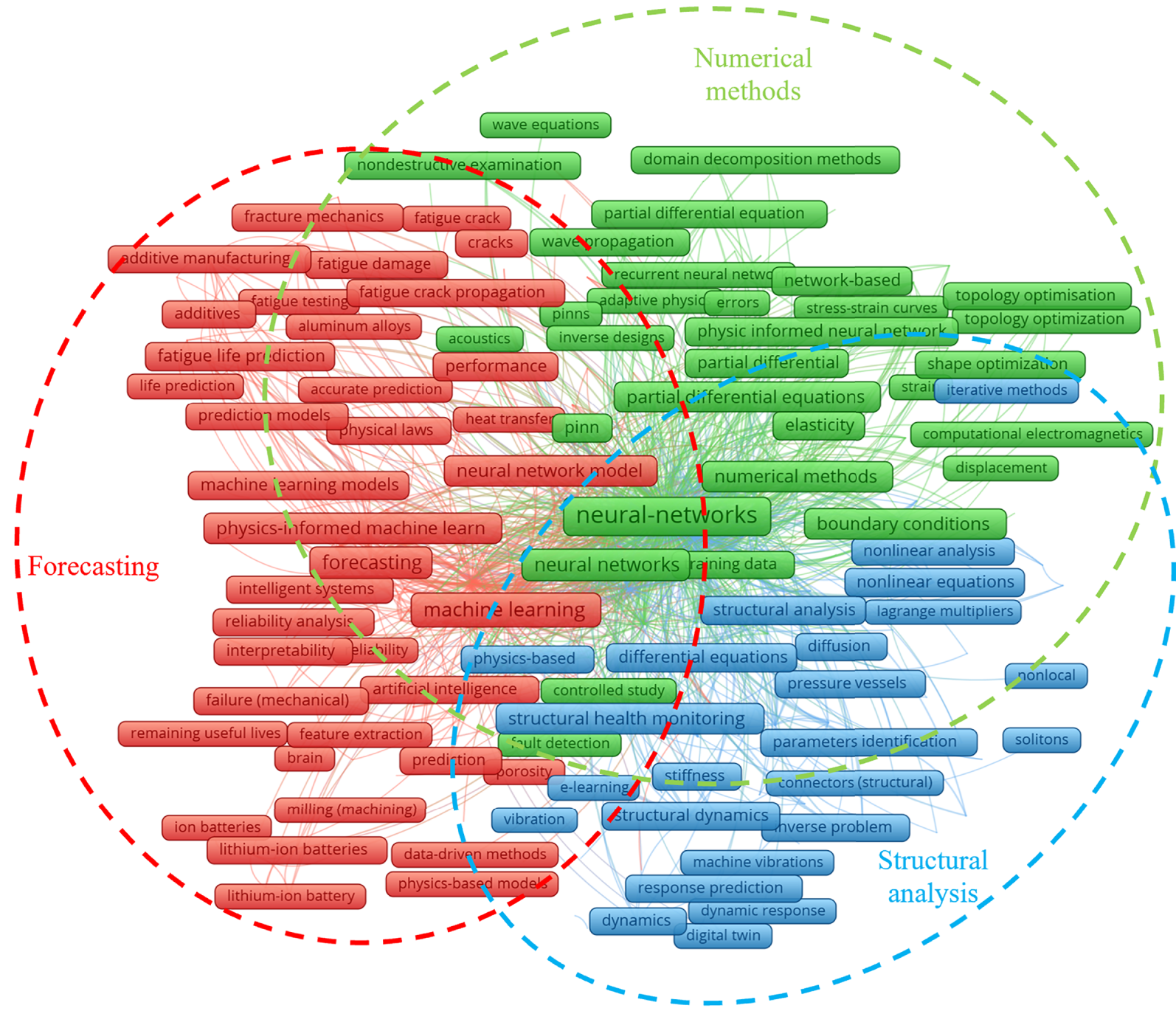

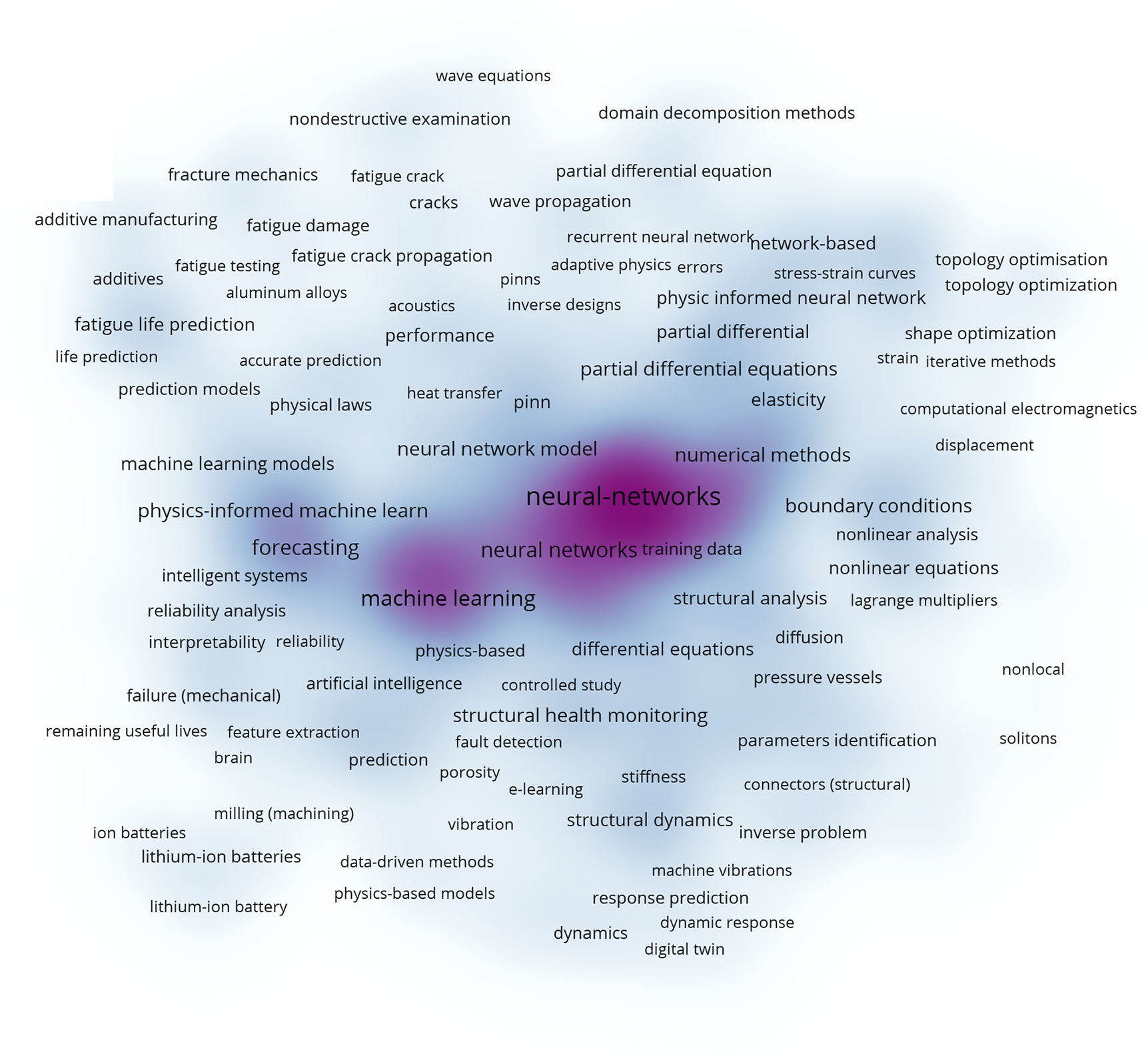

The current research areas involving PINNs were identified through a keyword co-occurrence analysis of the publication network, as illustrated in Fig. 8. The visualization highlights three dominant thematic clusters. The first cluster, shown in green, is associated with numerical methods, encompassing terms such as partial differential equations, boundary conditions, elasticity, and topology optimization. This cluster reflects the methodological foundation of PINNs, emphasizing their role as computational frameworks for solving complex PDEs and for optimizing the size, shape, and topology of structures. The second cluster, represented in blue, focuses on structural analysis, with keywords including nonlinear analysis, structural health monitoring, structural dynamics, inverse problems, and parameter identification. These terms indicate the increasing application of PINNs in solid and structural mechanics, where traditional numerical approaches often face challenges in handling complex boundary conditions, real-time monitoring, or inverse modeling. The third cluster, depicted in red, emphasizes forecasting and reliability-oriented applications, characterized by terms such as reliability analysis, fault detection, fatigue life prediction, fracture mechanics, additive manufacturing, and battery technologies. This demonstrates the extension of PINNs into predictive modeling and performance assessment, particularly in engineering systems where safety and durability are critical.

Figure 8: Visualization of keyword co-occurrence in PINN research for solid and structural mechanics, revealing three major thematic clusters associated with numerical methods, structural analysis, and forecasting.
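The counting idea behind a keyword co-occurrence map can be illustrated in a few lines. In the sketch below, the three records are hypothetical toy data (not drawn from the Scopus dataset), and the link weight is simply the number of records in which two keywords appear together. VOSviewer's own normalization, layout, and clustering steps are not reproduced.

```python
from collections import Counter
from itertools import combinations

# Hypothetical author-keyword sets for three publications (toy data).
records = [
    {"inverse problems", "parameter identification", "elasticity"},
    {"inverse problems", "structural health monitoring"},
    {"elasticity", "partial differential equations", "inverse problems"},
]


def keyword_statistics(records):
    """Count keyword occurrences and pairwise co-occurrences over all records."""
    occurrences = Counter()
    links = Counter()
    for keywords in records:
        occurrences.update(keywords)
        # Sort so that each unordered pair is stored under a single key.
        for pair in combinations(sorted(keywords), 2):
            links[pair] += 1
    return occurrences, links


occurrences, links = keyword_statistics(records)
print(occurrences.most_common(2))
print(links[("elasticity", "inverse problems")])
```

Node size in a map of this kind corresponds to the occurrence count, and link strength corresponds to the pair count. Thematic clusters are then obtained by grouping keywords that are strongly linked to one another.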

Complementing this analysis, the keyword density map in Fig. 9 illustrates the frequency and strength of associations among terms within the domain. Darker regions indicate concentrated research interest, with prominent hotspots around neural networks, machine learning, numerical methods, partial differential equations, forecasting, and structural analysis. This distribution suggests that PINNs research is most active at the intersection of numerical modeling, structural mechanics, and predictive applications, where the dual strengths of physics constraints and data-driven learning are most beneficial. Meanwhile, emerging topics such as additive manufacturing, structural health monitoring, and parameter identification highlight the field’s diversification, pointing to future opportunities for applying PINNs in advanced manufacturing, infrastructure resilience, and reliability engineering.

Figure 9: Keyword density map showing core research themes in PINN research for solid and structural mechanics.

These maps reveal both the maturity of PINNs in mechanics-focused applications and their growing adaptability across broader engineering and scientific domains. The prominence of methodological terms indicates a strong emphasis on refining computational efficiency and accuracy, while the spread of application-oriented keywords underscores the community’s drive to translate these methods into practical solutions with tangible industrial and societal impact.

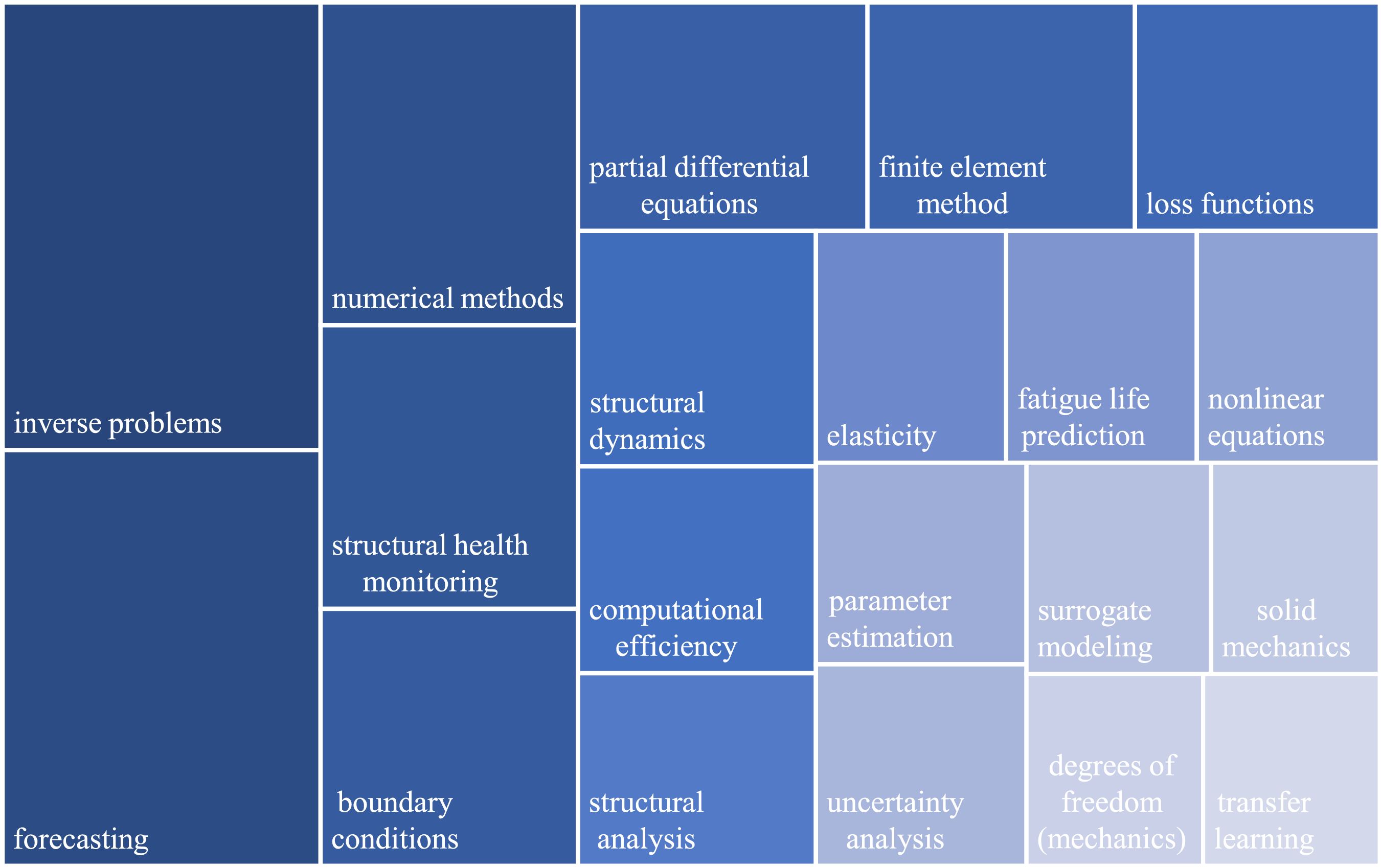

Another perspective is provided by the treemap in Fig. 10, which displays the most frequent keywords found in publications related to PINNs, excluding terms such as neural network and deep learning to focus solely on applications. The most frequent keyword, inverse problems, underscores the central role of PINNs in addressing ill-posed formulations where traditional methods often face limitations. The next most frequent, forecasting, reflects their growing importance in predictive modeling across diverse engineering fields. Numerical methods further emphasize the methodological foundation of PINNs, while structural health monitoring illustrates their increasing use in diagnostics and condition assessment. Other prominent terms, including boundary conditions, partial differential equations, and nonlinear equations, highlight the integration of physics-based constraints into learning frameworks, and finite element method reflects the synergy between PINNs and established computational mechanics approaches. Keywords such as loss functions, computational efficiency, and transfer learning indicate strong interest in improving training strategies, performance, and adaptability. Meanwhile, entries such as structural dynamics, structural analysis, elasticity, solid mechanics, and degrees of freedom point to their widespread applications in classical mechanics. Additional high-frequency terms, fatigue life prediction and parameter estimation, emphasize PINNs’ applications in damage prediction and inverse modeling. Uncertainty analysis reflects ongoing efforts to quantify prediction reliability, while surrogate modeling demonstrates interest in efficient approximations and model reduction. Collectively, the treemap portrays a field deeply rooted in computational solid and structural mechanics, while simultaneously diversifying into broader engineering domains.

Figure 10: Treemap of the 20 keywords most frequently associated with PINNs.

3 Fundamentals of Physics-Informed Neural Networks

This section briefly describes the mathematical fundamentals of PINNs. Since this review focuses on applications of PINNs in solid and structural mechanics, linear elasticity is used as the model problem for conciseness. However, all aspects of the discussion extend straightforwardly to other physical phenomena.
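For orientation, the boundary-value problem of linear elasticity referred to throughout this section can be summarized in one common notation. The symbols below are assumptions chosen for illustration and need not match the article's own notation or equation numbering: $\mathbf{u}$ is the displacement, $\boldsymbol{\varepsilon}$ the strain, $\boldsymbol{\sigma}$ the stress, $\mathbb{C}$ the elasticity tensor, $\mathbf{b}$ the body force, and $\bar{\mathbf{u}}$, $\bar{\mathbf{t}}$ the prescribed displacement and traction on the boundary parts $\Gamma_u$ and $\Gamma_t$ with outward normal $\mathbf{n}$.

```latex
\begin{aligned}
\nabla \cdot \boldsymbol{\sigma} + \mathbf{b} &= \mathbf{0}
  && \text{in } \Omega && \text{(equilibrium)} \\
\boldsymbol{\varepsilon} &= \tfrac{1}{2}\left(\nabla \mathbf{u} + \nabla \mathbf{u}^{\mathsf{T}}\right)
  && \text{in } \Omega && \text{(kinematics)} \\
\boldsymbol{\sigma} &= \mathbb{C} : \boldsymbol{\varepsilon}
  && \text{in } \Omega && \text{(constitutive relation)} \\
\mathbf{u} &= \bar{\mathbf{u}}
  && \text{on } \Gamma_u && \text{(displacement boundary condition)} \\
\boldsymbol{\sigma}\,\mathbf{n} &= \bar{\mathbf{t}}
  && \text{on } \Gamma_t && \text{(traction boundary condition)}
\end{aligned}
```

In this form the equilibrium residual has units of force per volume, whereas the constitutive residual has units of force per area.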

In the context of linear elasticity with Cauchy–Boltzmann continuum theory, the deformation of a continuum body is governed by

where

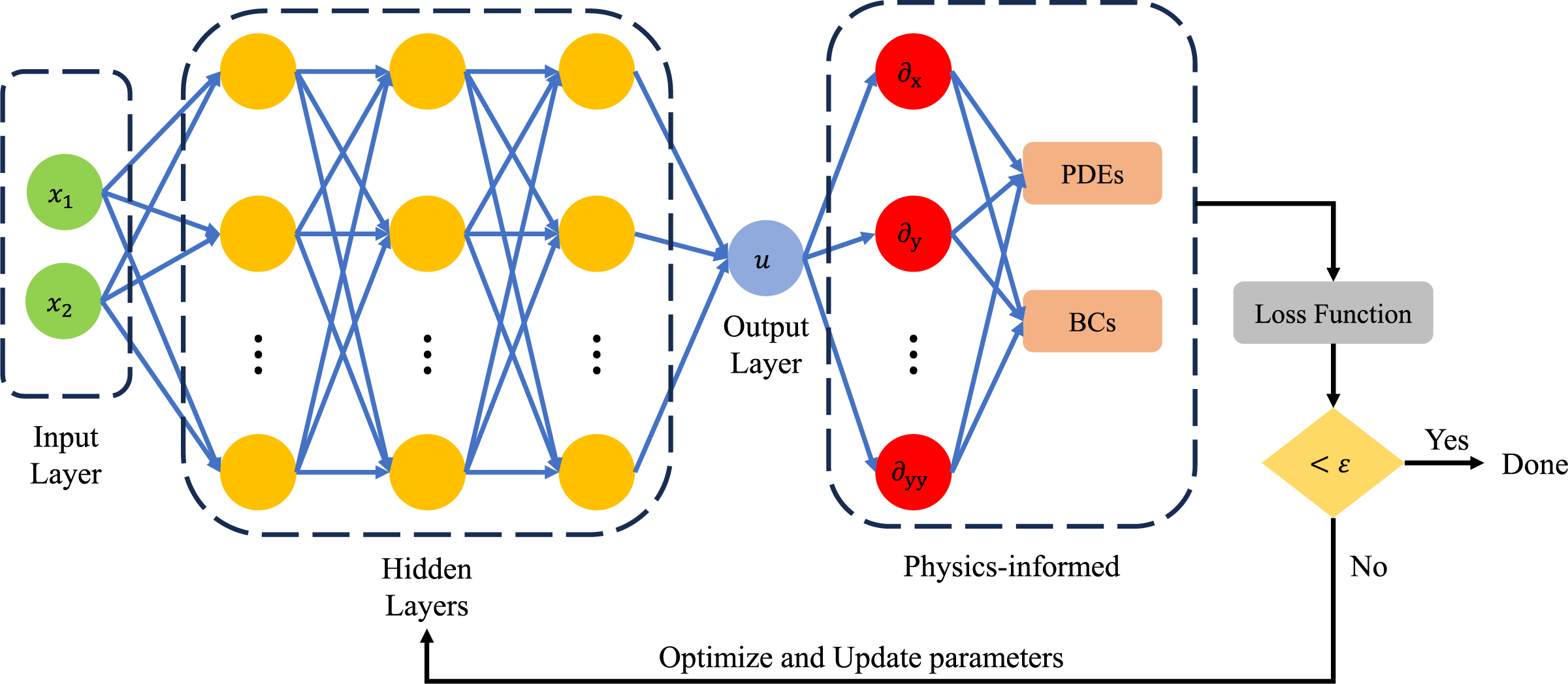

To solve the above boundary-value problem with PINNs, neural networks are used as approximators of the field quantities, e.g., displacement and stress components. The most common implementation approximates the displacement components by neural networks, and other quantities such as strain and stress components are obtained by differentiating the displacement components, i.e., Eqs. (2) and (3). This is equivalent to the classical displacement formulation. Alternatively, displacement components together with stress or strain components are approximated by neural networks; this approach is known as the mixed formulation. The mixed formulation requires more trainable parameters than its displacement counterpart, but it involves lower-order derivatives. In this discussion, the mixed formulation is selected, and therefore the displacement components and stress components are approximated with independent neural networks, i.e.,

Here,

Once specific architectures of neural networks are selected, a loss function

where the norm

with

Figure 11: Basic architecture of a PINN.
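The ingredients discussed in this section (a mixed formulation with independent approximators for displacement and stress, boundary conditions satisfied by construction, and a weighted sum of residuals) can be sketched for a one-dimensional bar. Everything in the sketch is an illustrative assumption: the bar data, the tiny tanh networks with hand-coded derivatives, the multiplicative functions `x` and `x - L` used to build in the boundary conditions, and the weights `lam_eq` and `lam_c`. Training, i.e., minimizing the loss over the network parameters with a gradient-based optimizer and automatic differentiation, is deliberately omitted.

```python
import math
import random

# Hypothetical 1D bar: d(sigma)/dx + b = 0 and sigma = E * du/dx on (0, L),
# with u(0) = 0 (displacement BC) and sigma(L) = t_bar (traction BC).
E, L, B, T_BAR = 1.0, 1.0, 1.0, 0.5


def init_net(width, rng):
    """Random parameters of a one-hidden-layer tanh network mapping R to R."""
    return {name: [rng.uniform(-1.0, 1.0) for _ in range(width)] for name in "wcv"}


def net(p, x):
    """Return the network value N(x) and its derivative dN/dx."""
    val = dval = 0.0
    for w, c, v in zip(p["w"], p["c"], p["v"]):
        t = math.tanh(w * x + c)
        val += v * t
        dval += v * w * (1.0 - t * t)
    return val, dval


def u_hat(p, x):
    """Displacement u = x * N_u(x), so u(0) = 0 holds for any parameters."""
    n, dn = net(p, x)
    return x * n, n + x * dn


def sigma_hat(p, x):
    """Stress sigma = t_bar + (x - L) * N_s(x), so sigma(L) = t_bar always holds."""
    n, dn = net(p, x)
    return T_BAR + (x - L) * n, n + (x - L) * dn


def mixed_loss(u_field, s_field, xs, lam_eq=1.0, lam_c=1.0):
    """Weighted mean-square residuals of equilibrium and the constitutive law."""
    r_eq = r_c = 0.0
    for x in xs:
        _, du = u_field(x)
        s, ds = s_field(x)
        r_eq += (ds + B) ** 2       # equilibrium residual (force per volume)
        r_c += (s - E * du) ** 2    # constitutive residual (force per area)
    return (lam_eq * r_eq + lam_c * r_c) / len(xs)


rng = random.Random(0)
p_u, p_s = init_net(8, rng), init_net(8, rng)
xs = [L * i / 20 for i in range(21)]  # collocation points
loss_untrained = mixed_loss(lambda x: u_hat(p_u, x), lambda x: sigma_hat(p_s, x), xs)


def u_exact(x):
    """Closed-form displacement and its derivative, used only as a check."""
    return (T_BAR * x + B * (L * x - 0.5 * x * x)) / E, (T_BAR + B * (L - x)) / E


def s_exact(x):
    """Closed-form stress and its derivative, used only as a check."""
    return T_BAR + B * (L - x), -B


loss_exact = mixed_loss(u_exact, s_exact, xs)
print(loss_untrained, loss_exact)
```

Because both boundary conditions are built into `u_hat` and `sigma_hat`, the loss contains only the two interior residuals. The weights `lam_eq` and `lam_c` are what remains to be chosen to balance terms that carry different units.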

In practice, the boundary conditions can be satisfied by construction, i.e.,

Here,

The reduction of terms in the loss function promotes faster and easier convergence of the training process [59]. Nevertheless, the residuals of the equilibrium equations and constitutive relations still have different units, i.e., force per volume and force per area. Therefore, careful selection of the penalty coefficients

However, direct minimization of the governing-equation residuals is not the only way to obtain the solution in PINNs. For linear elasticity, there exists an “energy functional”

If the displacement components are considered as kinematic unknowns and neural networks are used to approximate these quantities, the training process is carried out by minimizing the loss function

For the forward problems where the interest is the solution for displacement components, the minimization of the loss function

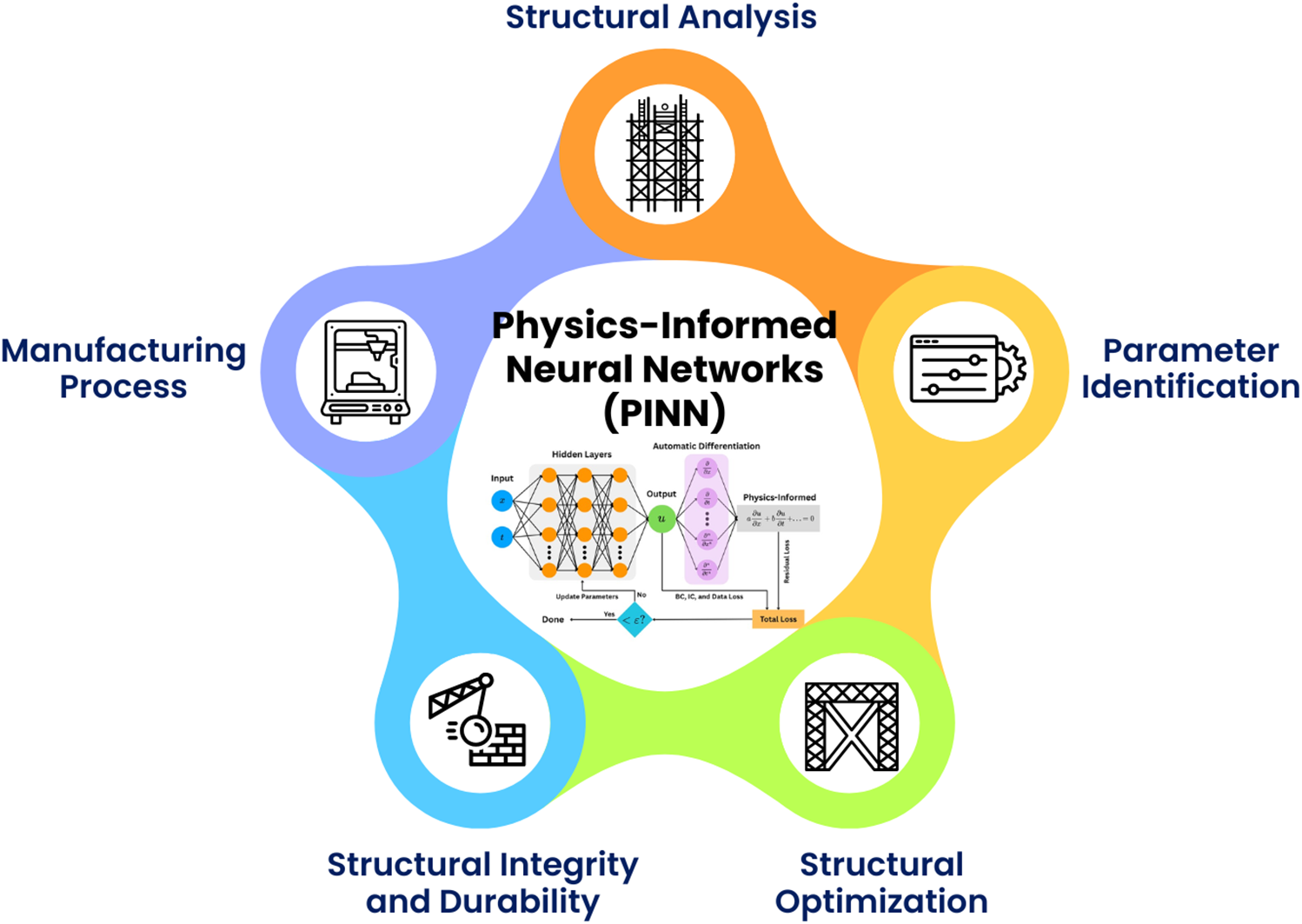
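The energy-based alternative described in this section can be illustrated on a one-dimensional bar by minimizing the total potential energy directly. The sketch below rests on several stated assumptions: a two-parameter polynomial trial function stands in for the neural network to keep the example dependency-free, central finite differences stand in for automatic differentiation, Simpson's rule evaluates the integrals, and the bar data, step size, and iteration count are hypothetical.

```python
# Hypothetical bar data: stiffness E, length L, body force B, end traction T_BAR.
E, L, B, T_BAR = 1.0, 1.0, 1.0, 0.5


def u_trial(a, x):
    """Trial displacement u = x * (a0 + a1 * x), so u(0) = 0 by construction."""
    return x * (a[0] + a[1] * x)


def du_trial(a, x):
    """Derivative du/dx of the trial displacement."""
    return a[0] + 2.0 * a[1] * x


def simpson(f, lo, hi, n=20):
    """Composite Simpson rule with an even number n of sub-intervals."""
    h = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(lo + i * h)
    return total * h / 3.0


def potential_energy(a):
    """Strain energy minus the work of the body force and the end traction."""
    strain = simpson(lambda x: 0.5 * E * du_trial(a, x) ** 2, 0.0, L)
    work = simpson(lambda x: B * u_trial(a, x), 0.0, L) + T_BAR * u_trial(a, L)
    return strain - work


def gradient(f, a, h=1e-4):
    """Central finite-difference gradient (stand-in for automatic differentiation)."""
    g = []
    for i in range(len(a)):
        up, down = list(a), list(a)
        up[i] += h
        down[i] -= h
        g.append((f(up) - f(down)) / (2.0 * h))
    return g


a = [0.0, 0.0]
for _ in range(2000):  # plain gradient descent with a fixed step size
    a = [ai - 0.5 * gi for ai, gi in zip(a, gradient(potential_energy, a))]

tip_displacement = u_trial(a, L)
print(a, tip_displacement)
```

In this setting the energy plays the role of the loss function. Only first derivatives of the displacement appear, and the traction boundary condition enters through the work term rather than through a separate penalty, so no weighting between residuals of different units is needed.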

4 Current Research of Physics-Informed Neural Networks in Computational Solid and Structural Mechanics

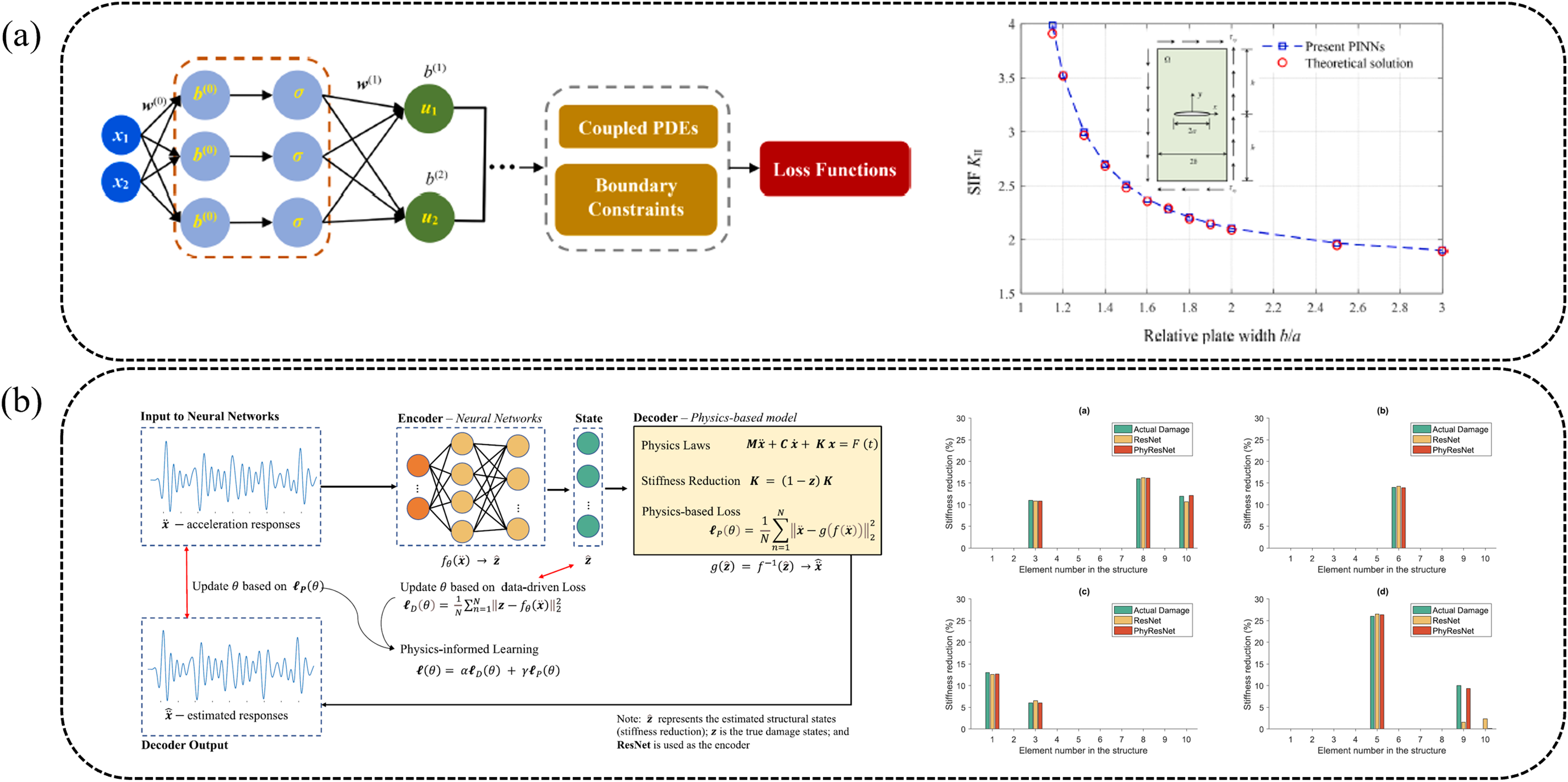

Physics-informed neural networks have been extended to a broad range of solid and structural mechanics problems. As illustrated in Fig. 12, their applications can be classified into five major domains: structural analysis, parameter identification, structural optimization, structural integrity and durability, and manufacturing processes. Representative studies within each category demonstrate how different PINN variants are formulated and tailored to address specific physical challenges. The following subsections discuss these domains in detail, highlighting the flexibility and effectiveness of PINNs across different solid mechanics problems.

Figure 12: Major application domains of PINNs in solid and structural mechanics.

Structural analysis is a fundamental task in solid and structural mechanics, aiming to determine displacement, strain, and stress fields under prescribed loads, boundary conditions, and material properties. Classical numerical solvers, particularly the FEM, have long been the dominant tools for such analyses. Recently, PINNs have emerged as an alternative computational paradigm by embedding governing equations directly into neural network training, enabling mesh-free approximation of physical responses and offering increased flexibility for parametric and data-scarce scenarios. Most PINN-based formulations in this context take spatial coordinates as primary inputs, together with boundary conditions and material parameters (e.g., Young’s modulus and Poisson’s ratio), and predict displacement or stress fields as outputs [63–65]. These models have demonstrated strong performance across a wide range of material behaviors, including linear elasticity [50,66,67], hyperelasticity [66,68], plasticity [65,66,69,70], and elastoplasticity [50,64,70–72], in one-, two-, and three-dimensional domains [63,73–75], highlighting their versatility for forward structural analysis.

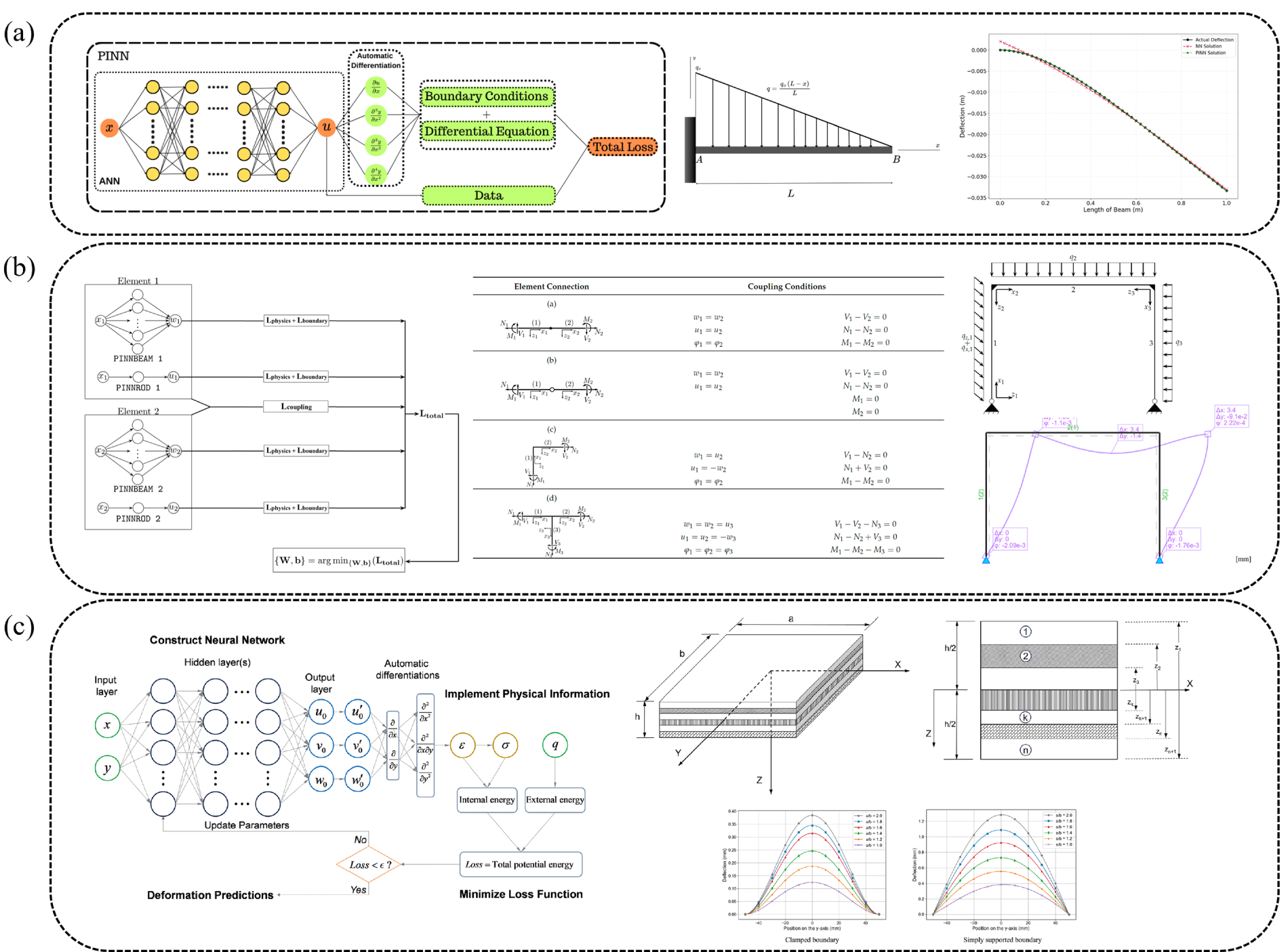

A significant body of work has focused on beam-type structural elements, where governing equations involve high-order spatial derivatives and accurate recovery of internal forces is critical. For example, the PINN formulation illustrated in Fig. 13a, applied to a classical Euler–Bernoulli cantilever beam, enables simultaneous prediction of deflection, rotation, bending moment, and shear force by directly enforcing the governing physics [76]. This capability is particularly attractive for beam, plate, and shell structures, where high-order operators and derivative-based postprocessing often amplify numerical errors in conventional data-driven models. These studies collectively demonstrate that PINNs can serve as continuous-field solvers that deliver smooth, physically consistent solutions for structural members governed by high-order differential equations.

Figure 13: Representative applications of PINNs in structural analysis: (a) analysis of a 1D cantilever beam [76], (b) analysis of 2D frame structures [77], and (c) simulation of laminated composite plates [79].
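For the Euler–Bernoulli cantilever discussed above, the link between the deflection and the remaining quantities (rotation, bending moment, and shear force) is successive differentiation. The sketch below illustrates this under stated assumptions: the beam data are hypothetical, the closed-form deflection of a uniformly loaded cantilever stands in for a trained network output, and polynomial differentiation stands in for the automatic differentiation a PINN would use. The signs of the moment and shear depend on the chosen convention.

```python
def derivative(coeffs):
    """Differentiate a polynomial given as [c0, c1, c2, ...] (ascending powers)."""
    return [k * c for k, c in enumerate(coeffs)][1:]


def evaluate(coeffs, x):
    """Evaluate the polynomial with Horner's scheme."""
    result = 0.0
    for c in reversed(coeffs):
        result = result * x + c
    return result


# Hypothetical cantilever: clamped at x = 0, free at x = L, uniform load q.
EI, L, q = 1.0, 1.0, 1.0

# Closed-form deflection w(x) = q x^2 (6 L^2 - 4 L x + x^2) / (24 EI).
w = [0.0, 0.0, 6 * L**2 * q / (24 * EI), -4 * L * q / (24 * EI), q / (24 * EI)]
dw = derivative(w)      # rotation w'
d2w = derivative(dw)    # curvature w''
d3w = derivative(d2w)   # third derivative w'''
d4w = derivative(d3w)   # fourth derivative w''''


def beam_fields(x):
    """Deflection, rotation, bending moment, and shear force at position x."""
    return {
        "deflection": evaluate(w, x),
        "rotation": evaluate(dw, x),
        "moment": EI * evaluate(d2w, x),  # sign depends on convention
        "shear": EI * evaluate(d3w, x),   # sign depends on convention
    }


def pde_residual(x):
    """Residual of the governing equation EI * w'''' - q = 0."""
    return EI * evaluate(d4w, x) - q


print(beam_fields(L), pde_residual(0.5 * L))
```

Each additional derivative amplifies any error in the approximated deflection. This is why the fourth-order operator and the recovery of internal forces are sensitive points for beam, plate, and shell problems.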

Beyond individual structural components, PINNs have also been applied to multi-member systems such as frame structures. In these formulations, separate neural networks represent each structural member, while compatibility and equilibrium conditions are imposed at joints, as shown in Fig. 13b [77]. This framework enables the simultaneous analysis of interconnected systems without explicit mesh connectivity, making it attractive for parametric studies and topology modifications. However, such applications also reveal challenges specific to structural assemblies, including the need to properly represent force transmission, stiffness discontinuities, and load redistribution across interfaces. These issues highlight the importance of robust boundary and interface treatments, which are discussed in detail in the next section.

For 2D structural components, PINNs have been successfully applied to thin-walled structures and plate-like systems. Early studies highlighted their ability to handle geometric complexity and small thickness-to-length ratios more flexibly than traditional mesh-based schemes [63]. PINN frameworks based on the Föppl–von Kármán equations have been developed for finite-deformation analysis of thin elastic plates, achieving high accuracy at reduced computational cost [20]. Subsequent extensions have addressed more complex plate behaviors, including variable thickness and stiffness distributions [78]. These applications demonstrate that PINNs can naturally accommodate nonlinear kinematics and complex geometries while providing smooth approximations of displacement and stress fields.

PINNs have also shown strong potential for laminated and composite structures, where anisotropy, heterogeneity, and interlayer coupling pose substantial challenges for conventional solvers. As illustrated in Fig. 13c, physics-informed formulations grounded in laminate theory enable accurate prediction of layerwise displacement and stress fields without explicit meshing of material interfaces [79], highlighting the ability of PINNs to alleviate the computational burden associated with mesh generation and interface tracking in layered structures. To address heterogeneity and discontinuities in more general settings, domain-decomposition-based PINN frameworks have been developed to accurately capture interface behavior in multi-material systems [80]. At the structural member level, PINN-based models have been reported for functionally graded porous beams [81] and for large-deflection responses in functionally graded graphene-platelet–reinforced auxetic sandwich beams on nonlinear foundations [82], highlighting the applicability of PINNs to problems involving strong material heterogeneity and geometric nonlinearity. Additional applications include wave propagation in laminated structures [83] and accelerated characterization of composite micromechanics using semi-supervised learning strategies [84]. Methodological developments that support reliable treatment of heterogeneity and interfaces (e.g., subdomain- or interface-aware formulations) are consolidated in the next section.

Multiscale and size-dependent structural behavior is another important application area for PINNs, particularly in problems where classical continuum models struggle to capture scale effects and long-range interactions. Integrating numerical simulations with machine learning has been recognized as a promising approach to addressing such multiscale challenges [85]. In this context, PINN-based frameworks grounded in nonlocal strain-gradient theories have been developed to model wave propagation in nanostructured sandwich plates [86], homogenize lattice materials [87], and capture the bending of nanobeams on nonlinear elastic foundations [88]. These applications highlight PINNs’ ability to represent size effects, long-range interactions, and multiscale coupling within a unified computational framework, offering new opportunities for modeling architected materials and nanostructured systems.

More recently, PINNs have been explored as surrogate models for real-time structural analysis and health monitoring. By learning continuous mappings from limited input data to full-field stress and displacement distributions, PINN-based surrogates enable rapid inference that would otherwise require expensive numerical simulations. For example, Go et al. [89] developed a PINN surrogate capable of predicting full-field displacement and stress distributions in near real time for a 2D plate with a hole under unknown biaxial tension. Such approaches highlight the potential of PINNs for real-time decision-making, digital twins, and condition-based maintenance of structural systems.

The studies reviewed in this subsection demonstrate that PINNs are increasingly adopted as flexible forward solvers for a wide range of structural analysis problems, from simple beams to complex multiscale and heterogeneous systems. While these applications highlight their potential, they also reveal recurring challenges related to stability, boundary-condition enforcement, interface treatment, and scalability. The methodological innovations developed to address these issues, such as adaptive training, hard boundary constraints, domain decomposition, and hybrid FEM–PINN formulations, are consolidated and discussed in Section 5.

Material parameter identification is a central inverse problem in solid and structural mechanics, aimed at determining mechanical properties, such as Young’s modulus and Poisson’s ratio, from indirect measurements, including displacement, strain, or vibration responses [90,91]. This process plays a critical role in various fields, including structural health monitoring [92–94], non-destructive testing [95–97], and failure analysis [98]. It is also particularly important for multi-material systems [99], composite structures [100], and functionally graded materials (FGMs) [23], in which mechanical properties vary spatially and cannot be characterized by a single set of parameters. Additionally, parameter identification plays a vital role in biomedical applications, where accurate characterization of tissue stiffness supports the diagnosis of pathological conditions, including cancer and fibrosis [101–104]. In many of these contexts, direct measurement of material properties is either impractical or impossible, motivating the use of physics-informed learning frameworks that can infer unknown parameters from limited observational data.

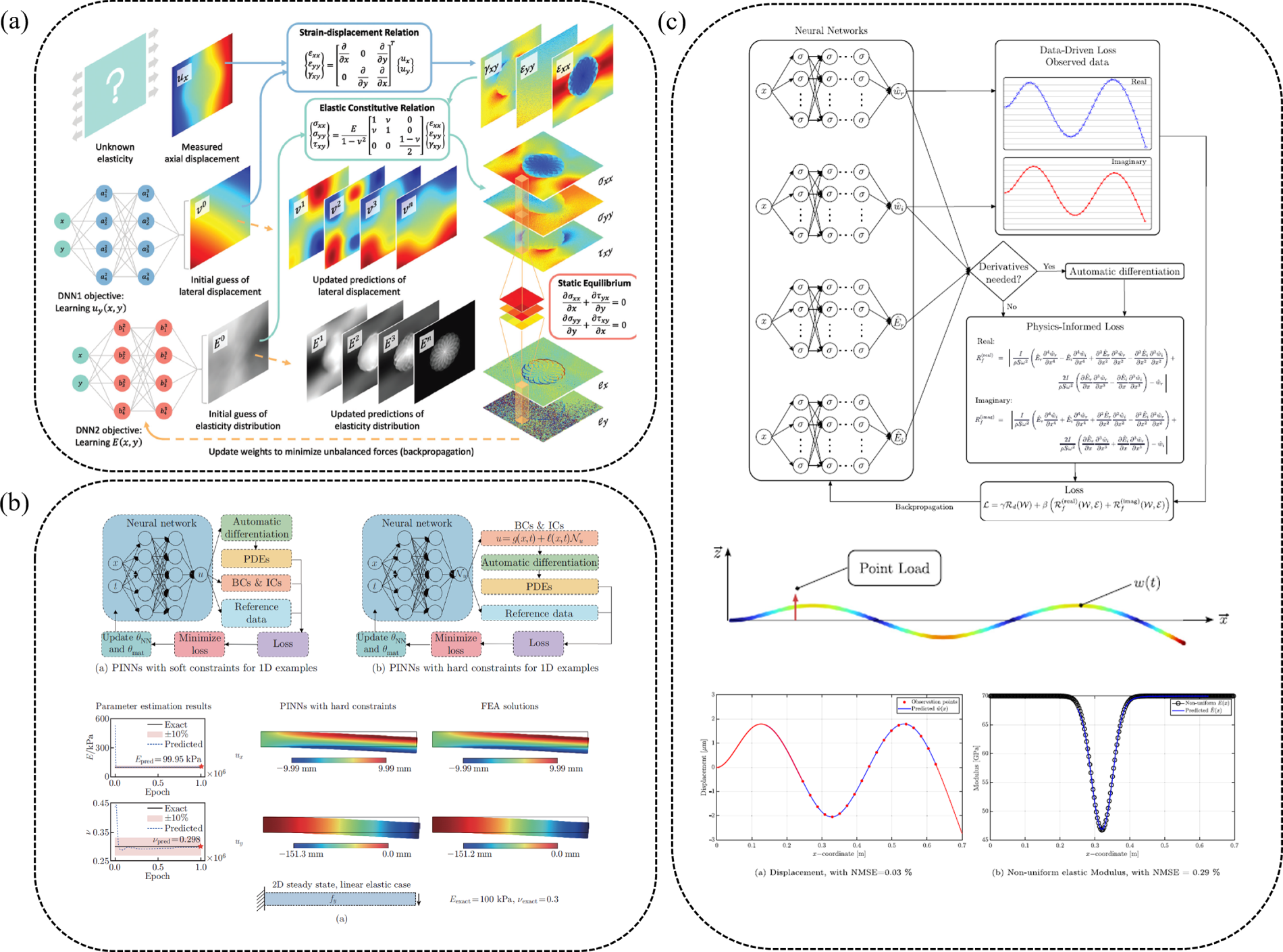

In medical elastography, PINN-based frameworks have made significant advances in reconstructing heterogeneous material fields. Chen and Gu [101] introduced the ElastNet model, which accurately predicts spatially varying Young’s modulus and displacement fields in soft tissues with complex stiffness profiles, as illustrated in Fig. 14a. Similarly, Kamali et al. [102] used PINNs for elasticity imaging in hydrogel-like materials, estimating variations in both Young’s modulus and Poisson’s ratio by combining strain measurements with physical constraints. These approaches improve image resolution and diagnostic reliability in biomedical applications, where non-invasive stiffness estimation is essential. In biomechanics, Wu et al. [105] introduced a Fourier-feature PINN that reconstructs full-field heterogeneous hyperelastic parameters in tissues undergoing large deformation. Capable of learning Neo-Hookean, Mooney–Rivlin, and Gent constitutive models from a strain snapshot and robust to 10% noise, this method offers a high-fidelity solution for nonlinear inverse elasticity problems with very limited experimental data.

Figure 14: Representative applications of PINNs in parameter identification: (a) ElastNet model for elastography [101], (b) Identification of constant material properties in beams [106], and (c) Reconstruction of spatially varying elastic modulus in beams [22].

For engineered materials, Yan et al. [100] addressed inverse problems in composite materials using a hybrid PINN and extreme learning machine (ELM) framework. Combining the fast training of ELM with domain decomposition enabled the framework to handle large and complex structural assemblies efficiently. The method identified unknown material parameters, such as fiber orientation and stiffness, with errors below 2% relative to FEM solutions. Additionally, Bharadwaja et al. [99] applied PINNs to heterogeneous materials, showing that PINNs can accurately capture stress discontinuities at material interfaces. Their study reported that PINNs outperformed traditional methods in predicting displacement fields, stress distributions, and effective material properties in multi-material systems.

Recent developments have further expanded the reach of PINN-based parameter identification across static, dynamic, and nonlinear regimes. As a baseline case, Wu et al. [106] proposed a PINN-based inverse framework for static beam problems with constant material properties, demonstrating accurate identification of Young’s modulus and Poisson’s ratio from sparse displacement data, as depicted in Fig. 14b. Their results show strong robustness to measurement noise and good agreement with exact solutions, establishing the effectiveness of PINNs for material parameter identification in quasi-static solid mechanics. For dynamic systems, Söyleyici and Ünver [107] introduced a PINN-based approach enhanced with neural tangent kernel (NTK) theory to identify stiffness, damping, and modal parameters in free-vibration beams, achieving inverse errors below 1.41% relative to FEM simulations and experimental measurements. In addition, de O Teloli et al. [22] proposed a PINN-based framework for Euler–Bernoulli beams that estimates displacement, a spatially varying elastic modulus, and damping directly from vibration data. Their formulation enables accurate recovery of both the real and imaginary components of the complex modulus in the frequency domain, as illustrated in Fig. 14c, representing one of the few successful demonstrations of damping identification using PINNs in structural systems.
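To make the inverse setting concrete, the following is a minimal sketch and not the method of any study cited above. It assumes a tip-loaded Euler–Bernoulli cantilever, whose deflection is w(x) = P x²(3L − x)/(6EI), and recovers Young's modulus from sparse deflection samples by least squares. In a PINN, the same data misfit would be minimized jointly with the governing-equation residual, with E treated as a trainable parameter. All function names and numerical values are illustrative assumptions.

```python
def cantilever_shape(x, load, length):
    """Deflection per unit compliance 1/(E*I) for a tip-loaded cantilever."""
    return load * x**2 * (3.0 * length - x) / 6.0


def identify_youngs_modulus(xs, ws, load, length, inertia):
    """Least-squares estimate of E from deflections ws measured at positions xs.

    The model w = c * phi(x) is linear in the compliance c = 1/(E*I),
    so the minimizer of sum (w_i - c*phi_i)^2 has a closed form.
    """
    phis = [cantilever_shape(x, load, length) for x in xs]
    compliance = sum(p * w for p, w in zip(phis, ws)) / sum(p * p for p in phis)
    return 1.0 / (compliance * inertia)


# Synthetic, noise-free "measurements" (assumed values, SI units).
E_TRUE, INERTIA, LOAD, LENGTH = 70e9, 8.0e-9, 50.0, 1.0
sensor_x = [0.25, 0.5, 0.75, 1.0]
sensor_w = [cantilever_shape(x, LOAD, LENGTH) / (E_TRUE * INERTIA) for x in sensor_x]

E_est = identify_youngs_modulus(sensor_x, sensor_w, LOAD, LENGTH, INERTIA)
```

With noisy data the estimate becomes a best fit rather than an exact recovery, which is where the regularizing effect of the physics residual in a PINN loss becomes relevant.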

Beyond neural-network-based approaches, physics-informed probabilistic models have also been explored for inverse mechanics. For example, Tondo et al. [108] proposed a physics-informed Gaussian process (PIGP) framework for static inverse problems in Euler–Bernoulli beams, enabling the identification of unknown elastic parameters and distributed loads while simultaneously providing uncertainty quantification. This Bayesian formulation improves robustness against noise and sparse measurements, offering an attractive alternative for inverse mechanics applications.

These studies demonstrate that physics-informed learning frameworks are increasingly effective tools for identifying material and structural parameters across a wide range of applications. Their ability to infer stiffness, damping, and distributed forces from limited or noisy measurements highlights their promise for structural health monitoring, biomedical diagnostics, and multiscale material characterization. These applications also reveal recurring challenges with stability, noise sensitivity, and scalability, which have motivated the development of specialized training strategies, loss formulations, and hybrid solvers. These methodological advances are consolidated and discussed in Section 5.

Structural optimization is an essential part of modern engineering design, especially for creating lightweight and efficient systems. Traditional methods, mainly FEM-based analyses combined with gradient-based optimization, often face high computational costs, mesh dependency, and limited scalability when handling complex geometries or nonlinear material behavior. Recent progress in PINNs has introduced mesh-free and differentiable optimization frameworks that can enhance both computational efficiency and solution accuracy.

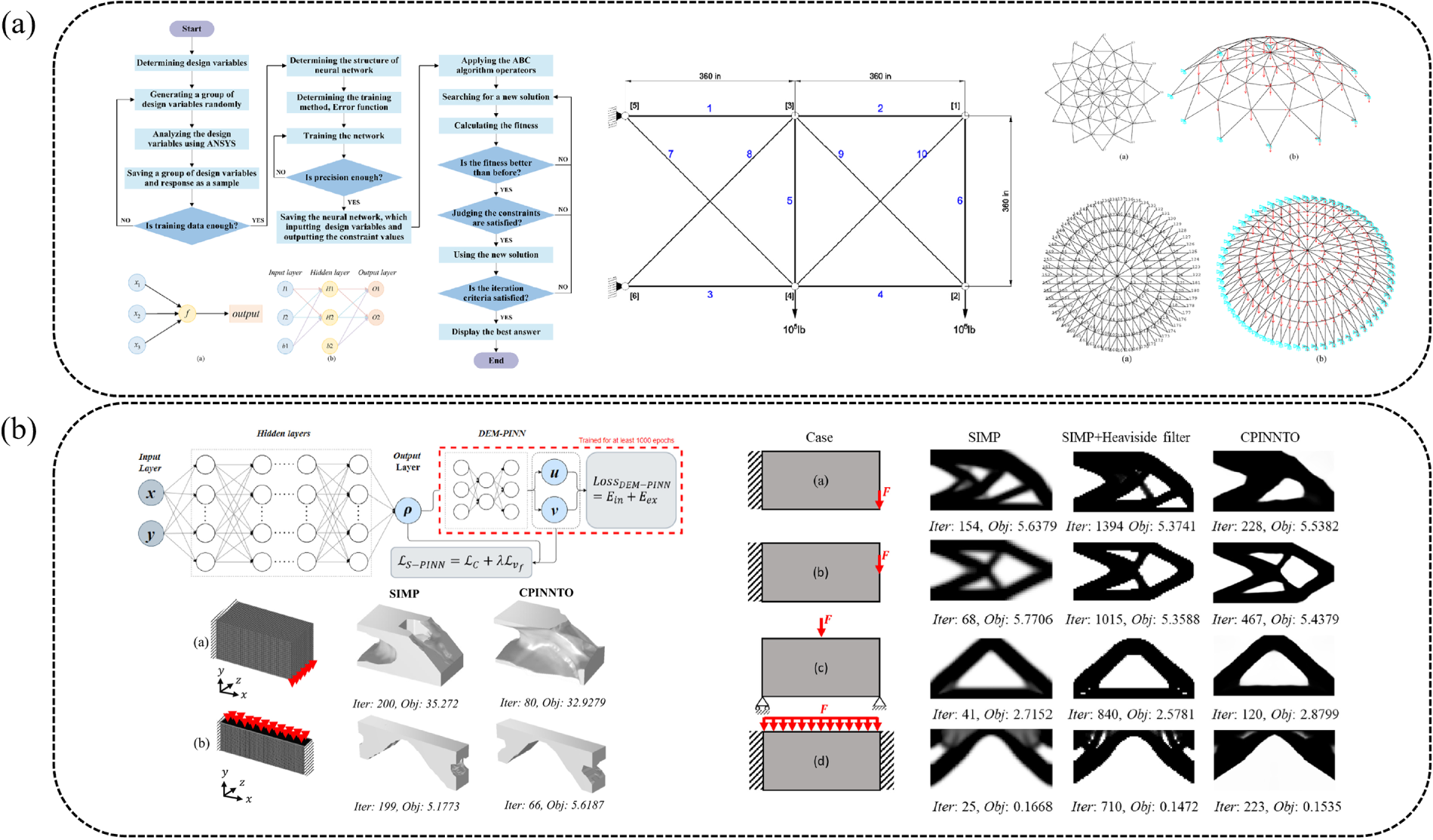

Early demonstrations of PINN-based structural optimization focused on replacing or augmenting conventional solvers. For instance, Mai et al. [109] proposed the physics-informed neural energy-force network (PINEFN), which embeds governing equations directly into the optimization process and eliminates the need for traditional structural analysis. This framework enables efficient optimization of truss structures, yielding competitive designs with significantly reduced computational demands. Similarly, Wu et al. [110] proposed a PINN-enabled surrogate-based optimization framework for geometrically nonlinear structural systems, where a trained neural network replaces repeated finite element analyses during optimization, as illustrated in Fig. 15a. By coupling the surrogate PINN with a metaheuristic optimizer, their approach enables efficient size, shape, and topology optimization of dome and truss structures while maintaining accuracy comparable to FEM-based optimization. The reported results show reductions in computational cost, further highlighting the potential of PINN-based and PINN-assisted frameworks for large-scale, solver-free structural optimization.

Figure 15: Representative applications of PINNs in structural optimization: (a) Truss and dome optimization [110], (b) Topology optimization for 2D and 3D structures [112], and (c) Topology optimization for multi-material systems [113].

Topology optimization has emerged as a major application area for PINNs, where the goal is to determine the optimal material distribution within a prescribed design domain. Jeong et al. [111] introduced a physics-informed neural network-based topology optimization (PINNTO) framework that replaces conventional FEM-based solvers. By integrating energy-based PINNs with the solid isotropic material with penalization (SIMP) scheme, their method achieved accuracy comparable to classical approaches while avoiding explicit mesh-based discretization. Building on this idea, Jeong et al. [112] proposed the Complete physics-informed neural network topology optimization (CPINNTO) framework, shown in Fig. 15b, which employs two coupled PINNs to estimate stress fields and update material distributions. This approach was validated on tip-loaded cantilever beams, demonstrating robustness and improved computational efficiency.
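For readers unfamiliar with SIMP, the sketch below shows the widely used modified SIMP interpolation, E(ρ) = E_void + ρ^p (E_solid − E_void), which penalizes intermediate densities so that the optimizer is pushed toward solid or void. Whether the frameworks cited above use exactly this variant is not stated here; the default values and the function name are assumptions chosen for illustration.

```python
def simp_modulus(density, e_solid=1.0, e_void=1e-9, penalty=3.0):
    """Modified SIMP rule: E(rho) = E_void + rho**p * (E_solid - E_void)."""
    if not 0.0 <= density <= 1.0:
        raise ValueError("density must lie in [0, 1]")
    return e_void + density**penalty * (e_solid - e_void)


# With p = 3, a half-dense element contributes roughly one eighth of the solid stiffness.
stiffness_half = simp_modulus(0.5)
```

In a PINN-based setting, this interpolated modulus would enter the strain-energy or equilibrium residual evaluated at each collocation point, with the density field supplied by a second network or a design variable.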

In addition to classical compliance minimization problems, recent studies have extended PINN-based approaches to more challenging topology optimization tasks, including multiscale, multimaterial, and geometrically nonlinear designs [113]. An example of multi-material topology optimization is illustrated in Fig. 15c. Furthermore, Lai et al. [114] proposed a dual-energy physics-informed multi-material topology optimization approach that integrates a dual-network deep-energy PINN with a phase-field formulation. This unified framework enables the construction of smooth material interfaces and high-quality multi-material layouts. Jeong et al. [115] enhanced PINN-based topology optimization by incorporating Fourier-feature embeddings and periodic activation functions, enabling robust optimization under strongly nonlinear, large-deformation hyperelastic behavior and improving convergence in geometrically nonlinear design scenarios. Yin et al. [116] proposed a discrete PINN (dPINN) framework that allows topology optimization in large-scale three-dimensional domains by using local mesh-based interpolation, enabling the treatment of problems with millions of degrees of freedom. These developments demonstrate that PINNs can be adapted to increasingly complex design settings that are difficult to handle using conventional solvers.

PINNs have also been applied to optimizing discontinuous or voxel-based structures, which are common in digital and additive manufacturing (AM) contexts. Zhang et al. [117] proposed a weak-form PINN formulation tailored to digital materials, enabling accurate treatment of sharp stiffness jumps without requiring explicit analytical sensitivities. Moreover, Zhao et al. [118] developed a PINN-based topology optimization framework using a deep energy method (DEM) and continuous adjoint sensitivity analysis evaluated by automatic differentiation, removing discretization errors and providing accurate solutions for both self-adjoint and non-self-adjoint optimization problems. These applications demonstrate that PINNs can naturally accommodate non-smooth material distributions and complex design spaces.

These studies show that PINN-based structural optimization is evolving into a versatile alternative to conventional FEM-driven design pipelines. Their ability to support solver-free or solver-accelerated workflows, reduce mesh dependence, and handle nonlinear, multi-material, and large-deformation problems highlights their promise for next-generation computational design. At the same time, these applications reveal recurring challenges related to training stability, scalability, and boundary enforcement, which have motivated the development of specialized formulations, adaptive strategies, and hybrid frameworks. These methodological advances are synthesized and discussed in Section 5.

4.4 Structural Integrity and Durability

Assessing structural integrity and durability is essential for ensuring the long-term performance and safety of engineering components, particularly under conditions involving damage initiation, crack propagation, and repeated cyclic loading. Recent developments in physics-informed learning provide a promising pathway, improving physical consistency, extrapolation capability, accuracy, interpretability, and data efficiency in structural integrity applications [119,120]. Within this context, fracture mechanics has emerged as a natural starting point, as understanding crack initiation and propagation forms the basis for predicting more complex durability phenomena such as fatigue.

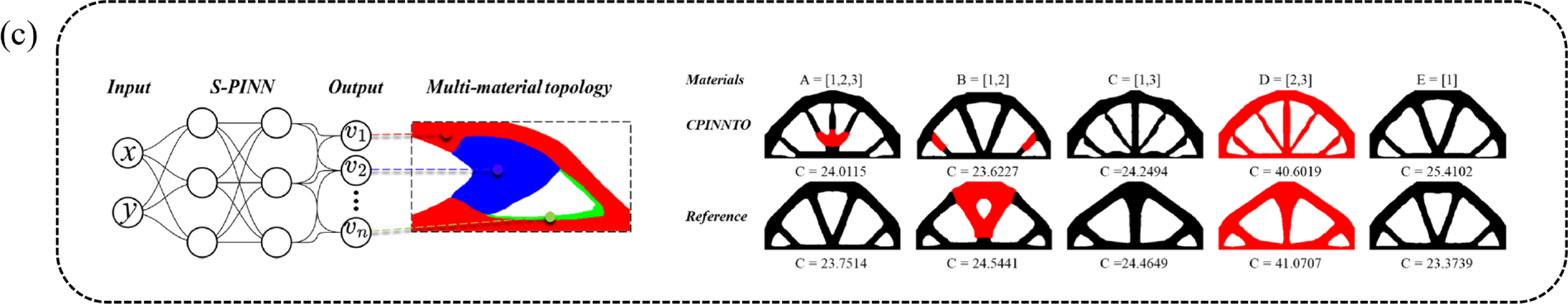

Fracture mechanics and crack modeling, characterized by crack propagation, discontinuities, and material failure in complex structures, present significant challenges that can be effectively addressed using PINNs. Gu et al. [121] developed an enriched PINN framework specifically designed for fracture mechanics applications. By incorporating crack-tip singularities and discontinuities directly into the model, the enriched PINNs accurately predicted stress intensity factors (SIFs), as illustrated in Fig. 16a. The framework offers a mesh-free and efficient alternative for modeling complex fracture behaviors without the need for traditional meshing techniques. Ning et al. [122] introduced a peridynamic-informed neural network (PD-ENN), which combines peridynamics with neural networks to predict crack initiation and propagation in brittle materials. The PD-ENN was shown to accurately capture both crack paths and displacement fields, with high prediction accuracy in crack propagation patterns. Furthermore, the energy-informed neural network (EINN) was developed to model solids with cracks by leveraging nonlocal theories and energy principles [123,124]. The EINN framework integrates peridynamic theory, which enables it to handle discontinuous displacements and thermomechanical effects, providing robust modeling of crack propagation even under complex loading conditions. Guo and Song [125] proposed another PINN formulation that embeds the Airy stress function and linear elastic fracture mechanics (LEFM) formulations to recover SIFs and transverse stress directly from measurements. Their method demonstrates strong generalization across mixed-mode I/II/III loading, achieves high accuracy even under noise, and shows potential for real-time structural health monitoring applications.

Figure 16: Representative applications of PINNs in structural integrity and durability: (a) 2D in-plane crack analysis [121], (b) Structural damage identification [94], and (c) A PINN framework for fatigue life prediction [126].

Beyond crack propagation, physics-informed learning has also been applied to vibration-based damage detection and structural health monitoring. Wang et al. [94] proposed a physics-guided residual neural network (PhyResNet), shown in Fig. 16b, that integrates governing equations of structural dynamics into the learning process. Their framework significantly improves damage localization and quantification accuracy under noisy and data-scarce conditions. Zhou and Xu [127] proposed a baseline-free PINN method for damage detection in thin plates using measured flexural guided wavefields. By enforcing the Kirchhoff–Love plate equation, the trained network reconstructs a pseudo-pristine wavefield that represents the undamaged plate, enabling damage-induced anomalies to be isolated by comparing measured and reconstructed responses. The approach identifies damage locations through an energy-based index and demonstrates strong accuracy and noise robustness without requiring labeled data or prior baseline measurements.

While physics-informed learning has shown strong capability in modeling fracture initiation, crack propagation, and damage localization, ensuring structural integrity over an entire service lifetime also requires accurate prediction of fatigue behavior under repeated cyclic loading. Knowledge of fatigue life is fundamental to safety, reliability, and cost-effective design, especially in high-risk sectors such as aerospace, automotive, and maritime engineering, where components are subjected to long-term cyclic stresses. Reliable fatigue assessment supports material and design optimization, informed maintenance scheduling, and life-extension strategies, ultimately reducing costs and preventing unexpected failures. Building on advances in fracture modeling, PINNs have increasingly been adopted for fatigue analysis, offering a physics-consistent and data-efficient framework for predicting fatigue damage evolution and remaining useful life [128–132].

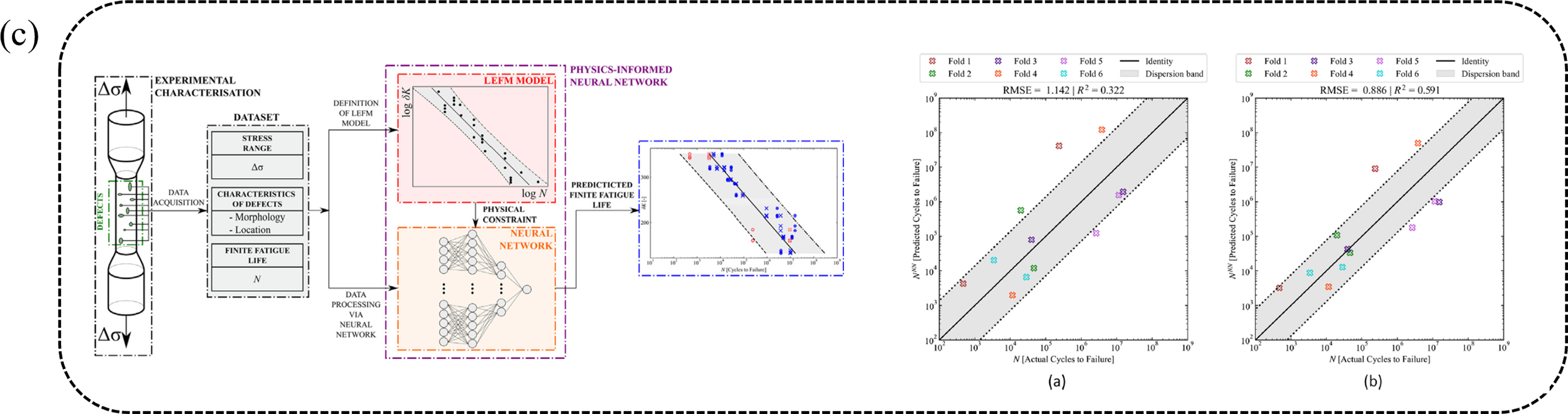

Several studies have applied PINNs to fatigue life prediction. A notable example is the use of PINNs to predict creep–fatigue life in 316 stainless steels at elevated temperatures [133]. By incorporating physical laws into the loss function, this method improves accuracy on small datasets and outperforms traditional machine learning and empirical models, demonstrating the advantage of physics-based feature integration. Fatigue life prediction under mixed-mode loading, which is common in engineering components but difficult to model because of complex stress interactions, was explored by Salvati et al. [126], as shown in Fig. 16c. The study detailed the formulation of a PINN that incorporates mixed-mode fracture mechanics into its architecture, allowing for more accurate predictions of fatigue crack growth paths than conventional models. Another study introduced a multi-fidelity PINN for fatigue life prediction using only a limited number of experimental samples [134]. By embedding physical models into activation functions, the network effectively handles data of varying fidelity, improving prediction accuracy and outperforming random forest (RF), support vector machine (SVM), and conventional neural networks when data are scarce.

Recent advances have broadened the applicability of PINN-based fatigue modeling across materials, manufacturing processes, and loading regimes. For AM alloys, Abiria et al. [135] developed a hybrid PINN (HPINN) that integrates data-driven artificial neural network (ANN) components with physics-based constraints derived from Basquin’s law, a modified Paris law, and a non-negativity condition encoded as activation functions. Designed for predicting high-cycle fatigue behavior under limited data, the HPINN was validated on AM Al-Mg4.5Mn and Ti-6Al-4V alloys and outperformed conventional ANN and PINN models, achieving predictions within a two-factor scatter band. Dang et al. [136] extended PINNs to pore-driven fatigue in laser-directed energy deposition (L-DED) Ti-6Al-4V, showing that incorporating defect morphology and microstructure significantly improves fatigue response modeling compared to regression-based methods. Feng et al. [137] introduced a probabilistic fatigue framework that couples PINNs with extreme-value statistics, generating fatigue-life distributions that capture uncertainty inherent in AM components. Wang et al. [138] further advanced multi-fidelity fatigue prediction, enabling accurate modeling of defect–fatigue interactions using combined low- and high-fidelity datasets.
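As a point of reference for the physics-based constraints mentioned above, Basquin's law relates stress amplitude to fatigue life as σ_a = σ_f′ (2N_f)^b, with b < 0 for metals. The sketch below only evaluates and inverts this relation; it does not reproduce how the HPINN of [135] encodes it, and the material constants are placeholder assumptions rather than measured values.

```python
def basquin_stress_amplitude(cycles_to_failure, strength_coeff, exponent):
    """Basquin's law: sigma_a = sigma_f' * (2 * N_f) ** b, with b < 0."""
    return strength_coeff * (2.0 * cycles_to_failure) ** exponent


def basquin_life(stress_amplitude, strength_coeff, exponent):
    """Invert Basquin's law for the number of cycles to failure N_f."""
    return 0.5 * (stress_amplitude / strength_coeff) ** (1.0 / exponent)


# Placeholder constants (MPa, dimensionless), chosen only for illustration.
SIGMA_F, B = 1000.0, -0.1
amplitude = basquin_stress_amplitude(1.0e5, SIGMA_F, B)
recovered_life = basquin_life(amplitude, SIGMA_F, B)
```

A physics-informed model can use such a closed-form relation either as a soft penalty on predicted lives or as a structural prior on the network output, so that predictions respect the expected monotonic decrease of life with stress amplitude.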

These studies demonstrate that PINNs are becoming powerful tools for modeling fracture, damage evolution, and fatigue behavior in structural systems. Their ability to incorporate governing physics, handle sparse and noisy data, represent discontinuities, and quantify uncertainty makes them particularly attractive for safety-critical applications. At the same time, these applications reveal recurring challenges with training stability, discontinuity handling, and scalability, which have motivated the development of specialized formulations, adaptive strategies, and transfer learning. These methodological advances are consolidated and discussed in Section 5.

In the manufacturing sector, PINNs have gained increasing attention in AM [126,139,140], primarily because they link process-induced effects to the resulting mechanical behavior and structural integrity of solid components. Although AM inherently involves coupled phenomena, the PINN-based studies reviewed here are considered from a solid mechanics perspective, focusing on how fabrication-induced conditions influence porosity, residual stress, fatigue life, and the overall mechanical performance of printed parts. By embedding physical constraints into data-driven learning, PINNs provide an effective framework for evaluating these mechanical outcomes under limited or noisy data.

Beyond the fatigue life prediction challenges in AM materials discussed in the previous subsection [135–138], PINNs have been extended to assess defect formation and its impact on mechanical durability. In particular, the approach presented in [140] demonstrated that using simple physics-informed features from manufacturing conditions can predict porosity with accuracy comparable to more complex deep learning models, ultimately enhancing process control and quality in AM. Recent developments further reinforce the significance of physics-informed learning in porosity evaluation. Skiadopoulos et al. [141] introduced a transfer-learning PINN framework that estimates volumetric porosity and pore size in AM AlSi10Mg from ultrasonic data. The model is first pretrained on high-fidelity synthetic ultrasonic responses generated through finite element simulations and subsequently fine-tuned using a limited number of experimental measurements. By embedding scattering-based physical relations into the PINN formulation, the framework enables accurate defect quantification even under sparse labeled datasets.

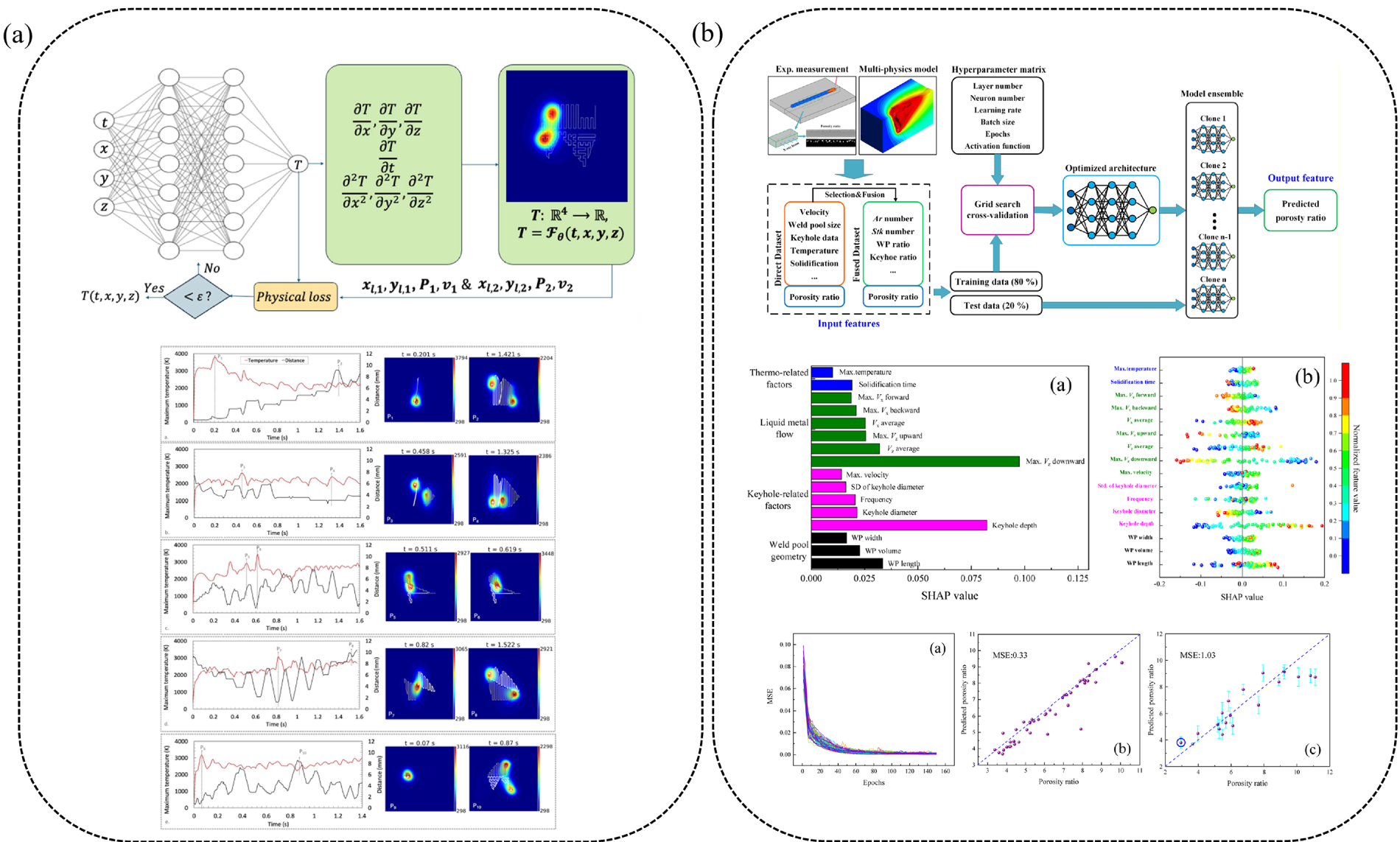

PINN-based models have also been explored as surrogates to support mechanical performance assessment influenced by manufacturing processes. Faegh et al. [142] presented a path-aware PINN framework for multi-laser metal AM that predicts temperature distributions across various part decompositions and scan-path strategies, as shown in Fig. 17a. By integrating thermal physics into a mesh-free PINN surrogate, this approach enables optimized laser path planning to achieve uniform thermal distributions, thereby reducing residual stress and distortion.

Figure 17: Representative applications of PINNs in manufacturing processes: (a) Thermal field prediction [142] and (b) Porosity prediction [143].

A broader overview of physics-informed machine learning in AM is provided by Farrag et al. [144], who reviewed physics-informed approaches, including PINNs, for defect evaluation, parameter optimization, and quality monitoring. Their review highlights how physics-informed frameworks can bridge simulation-based knowledge and sparse experimental data to improve the generalizability, interpretability, and efficiency of AM process models.

Physics-informed learning has also been extended to closely related post-processing and joining techniques. Zhao et al. [145] developed a physics-informed ANN for laser shock peening, accurately predicting residual stresses and microhardness by incorporating shock-wave attenuation mechanics into the model inputs, resulting in significantly higher accuracy than empirical or purely data-driven models. Similarly, Meng et al. [143] introduced a physics-informed deep learning framework for porosity prediction in laser beam welding of aluminum alloys, identifying the dominant physical factors governing defect formation and improving prediction accuracy compared with models relying solely on process parameters, as illustrated in Fig. 17b.

These studies demonstrate that PINNs and physics-informed machine learning are becoming essential tools in manufacturing-related solid mechanics. Their ability to predict fatigue life, characterize porosity and defects, model thermomechanical histories, and support process optimization highlights their value for next-generation digital manufacturing. At the same time, these applications reveal challenges with scalability, multiphysics coupling, and real-time deployment, which have motivated the development of specialized formulations, transfer-learning strategies, and hybrid solvers. These methodological advances are consolidated and discussed in Section 5.

5 Technical Advances in PINNs for Solid and Structural Mechanics

While Section 4 focused on how PINNs have been applied across different classes of solid and structural mechanics problems, this section consolidates methodological and algorithmic advances that generalize across these applications. Rather than isolated developments, these advances can largely be understood as targeted responses to recurring numerical and physical challenges specific to solid mechanics, such as high-order governing equations, localized fields, material heterogeneity, interface treatment, discontinuity handling, complex boundary conditions and geometries, measurement noise, and limited experimental data. By framing recent PINN developments around the specific problems they aim to solve, this section highlights how physics-informed learning is evolving from a generic neural modeling paradigm into a specialized computational tool for solid and structural mechanics.

From this perspective, adaptive training strategies aim to resolve localized features such as stress concentrations and crack-tip singularities; advanced loss formulations and activation designs target stiff and multi-scale governing equations; domain decomposition methods enable accurate treatment of heterogeneity and interfaces; hard-constraint formulations improve boundary-condition enforcement; and multi-fidelity, transfer-learning, and FEM-integrated approaches address data scarcity, scalability, and industrial applicability. This problem-driven organization clarifies the functional role of each methodological advance.

5.1 Adaptive Training and Residual-Based Refinement

Many solid mechanics problems exhibit strong spatial localization, such as stress concentrations, crack-tip singularities, boundary layers, and sharp material interfaces. These features are difficult to resolve with uniform collocation strategies, often leading to slow convergence and poor accuracy in standard PINNs. To address this limitation, recent research has focused on adaptive training strategies that dynamically redistribute learning effort toward regions where the governing physics is most difficult to satisfy.

Instead of relying on fixed collocation point distributions and uniform loss weighting, adaptive methods identify regions with large residuals, typically corresponding to steep gradients, discontinuities, or highly nonlinear responses, and selectively refine the sampling density or increase the corresponding loss penalties [58]. By concentrating model capacity where it is most needed, these strategies significantly improve the resolution of localized phenomena and enhance stability in elasticity, fracture, and multiscale problems.
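The resampling idea described above can be sketched in a few lines. This is a generic illustration under stated assumptions, not the scheme of [58]: collocation points are drawn from a candidate pool with probability proportional to the magnitude of the current residual, and `toy_residual` is a hypothetical stand-in for a PDE residual evaluated by a partially trained network.

```python
import math
import random


def resample_by_residual(candidates, residual_fn, n_points, power=1.0, rng=None):
    """Draw collocation points with probability proportional to |residual|**power."""
    rng = rng or random.Random()
    weights = [abs(residual_fn(x)) ** power for x in candidates]
    return rng.choices(candidates, weights=weights, k=n_points)


def toy_residual(x):
    """Stand-in residual: small everywhere except near x = 0.5."""
    return 0.01 + 100.0 * math.exp(-(((x - 0.5) / 0.05) ** 2))


candidates = [i / 1000.0 for i in range(1001)]
points = resample_by_residual(candidates, toy_residual, 200, rng=random.Random(0))
fraction_near_peak = sum(abs(x - 0.5) < 0.15 for x in points) / len(points)
```

In practice, such a step would be repeated every few hundred optimizer iterations, and a fraction of uniformly sampled points is usually retained so that low-residual regions are not forgotten. The `power` argument controls how aggressively sampling concentrates on the worst regions.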

5.2 Loss Function and Activation Function Design

Governing equations in solid mechanics are often stiff, multi-scale, and dimensionally heterogeneous, leading to large imbalances among residual terms in standard PINN loss functions. These imbalances can cause training instability, slow convergence, and biased solutions. To address this issue, recent studies have focused on designing physics-aware, automatically balanced loss formulations.

A notable example is the least squares weighted residual (LSWR) loss proposed by Bai et al. [74], which introduces dimensionless scaling and weighted-residual integration to mitigate inconsistencies among physical terms. This approach has been shown to significantly improve the accuracy of displacement and stress predictions in two- and three-dimensional elasticity problems. Beyond loss formulation, the design of activation functions has also been shown to influence optimization stability. Zhang and Ding [146] demonstrated that adaptive combinations of smooth activation functions can accelerate convergence and improve solution accuracy across a range of linear and nonlinear solid mechanics problems. Together, these developments enhance the robustness of PINNs in stiffness-dominated and multi-scale settings.
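The effect of dimensionless scaling on loss balance can be illustrated with a small numerical sketch. It is not the LSWR loss of [74]. It only assumes that the equilibrium residual scales as E·u₀/L² and the boundary residual as u₀, where u₀ is a characteristic displacement, and divides each term by its scale before squaring. All values and names are hypothetical.

```python
def scaled_loss_terms(pde_residuals, bc_residuals, modulus, length, disp_scale):
    """Mean-squared residuals after division by their characteristic scales."""
    pde_scale = modulus * disp_scale / length**2  # scale of the equilibrium residual
    pde_term = sum((r / pde_scale) ** 2 for r in pde_residuals) / len(pde_residuals)
    bc_term = sum((r / disp_scale) ** 2 for r in bc_residuals) / len(bc_residuals)
    return pde_term, bc_term


# Assumed steel-like values in SI units; residual samples are made up.
E, L, U0 = 210e9, 1.0, 1e-3
pde_res = [2.1e5, -2.1e5]   # N/m^3
bc_res = [1e-6, -1e-6]      # m

raw_pde = sum(r**2 for r in pde_res) / len(pde_res)
raw_bc = sum(r**2 for r in bc_res) / len(bc_res)
pde_term, bc_term = scaled_loss_terms(pde_res, bc_res, E, L, U0)
```

In this example the raw terms differ by more than twenty orders of magnitude, whereas the scaled terms are of comparable size, which is the imbalance that physics-aware loss formulations are designed to remove.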

5.3 Domain Decomposition and Subdomain Learning

Many solid mechanics problems involve strong material heterogeneity, multi-phase domains, sharp interfaces, and multi-scale behavior. In such settings, global neural representations often struggle to capture localized features without excessive network capacity. To address this limitation, domain decomposition and subdomain learning strategies have been introduced.

By partitioning the computational domain into subregions, each represented by a separate neural network, PINNs can learn localized behaviors such as wave interactions, interfacial effects, and sharp gradients while maintaining global consistency through interface constraints. This strategy has been shown to be effective in composite materials [83,100] and micromechanical systems [58]. More broadly, subdomain learning mitigates spectral bias and capacity limitations, making PINNs more suitable for large-scale and heterogeneous solid mechanics simulations.
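The interface constraints mentioned above typically penalize jumps in displacement and traction between neighboring subdomain networks. The sketch below illustrates this for a one-dimensional two-material bar, using closed-form functions in place of trained networks and finite differences in place of automatic differentiation. Both substitutions, and all names and values, are assumptions made to keep the example self-contained.

```python
def central_diff(fn, x, step=1e-4):
    """Central finite-difference derivative (autodiff would be used in a PINN)."""
    return (fn(x + step) - fn(x - step)) / (2.0 * step)


def interface_loss(u_left, u_right, e_left, e_right, x_interface):
    """Penalty on displacement and traction jumps between two subdomain models."""
    disp_jump = u_left(x_interface) - u_right(x_interface)
    traction_jump = (e_left * central_diff(u_left, x_interface)
                     - e_right * central_diff(u_right, x_interface))
    return disp_jump**2 + traction_jump**2


# Two-material bar under uniform axial stress (nondimensional, assumed values).
E1, E2, STRESS, X_I = 1.0, 4.0, 1.0, 0.5


def u1(x):
    return STRESS * x / E1


def u2(x):
    return STRESS * X_I / E1 + STRESS * (x - X_I) / E2


def u2_wrong(x):
    return STRESS * x / E2  # ignores the displacement accumulated in material 1


loss_exact = interface_loss(u1, u2, E1, E2, X_I)
loss_wrong = interface_loss(u1, u2_wrong, E1, E2, X_I)
```

The exact pair of subdomain solutions gives an interface loss at round-off level, while the inconsistent pair is penalized through the displacement jump. In a decomposed PINN this term is added to the residual losses of the individual subdomains.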

5.4 Boundary-Condition Enforcement and Hard Constraints

In solid mechanics, small boundary errors can propagate into large inaccuracies in stress and strain, particularly in stiff or high-order systems. Traditional PINNs impose boundary conditions through soft penalty terms, which can cause boundary drift, slow convergence, or inaccurate stress recovery.

To mitigate this issue, hard-constraint formulations have been introduced, in which boundary conditions are enforced a priori through the construction of the trial solution rather than penalized during optimization. Distance-function-based approaches embed Dirichlet boundary conditions analytically into the neural network approximation by multiplying the network output with a geometry-aware distance function that vanishes exactly on the boundary, thereby eliminating the need for explicit penalty terms and significantly improving accuracy and training stability [53]. This strategy not only guarantees exact satisfaction of essential boundary conditions but also simplifies the loss function to focus exclusively on governing-equation residuals in the interior domain. Similarly, exact Dirichlet PINNs (EPINNs) enforce boundary conditions directly within the network architecture, reducing computational overhead and accelerating convergence [75]. The parametric extended PINN (P-XPINN) framework proposed by Cao and Wang [147] further combines hard constraints with subdomain decomposition, enabling robust handling of irregular geometries and mixed boundary conditions.
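A one-dimensional sketch of the distance-function construction is given below. It assumes a trial solution of the form u(x) = g(x) + d(x)·N(x), where g interpolates the prescribed end displacements, d(x) = x(L − x) vanishes at both ends, and N is an arbitrary network. The untrained random network and all names are illustrative assumptions, not the architecture of [53] or [75].

```python
import math
import random


def make_tiny_mlp(hidden=8, seed=0):
    """Untrained one-hidden-layer tanh network standing in for the PINN."""
    rng = random.Random(seed)
    w1 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b1 = [rng.uniform(-1, 1) for _ in range(hidden)]
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]

    def network(x):
        return sum(w * math.tanh(a * x + b) for w, a, b in zip(w2, w1, b1))

    return network


def hard_constrained(network, length, u_left, u_right):
    """Trial solution u(x) = g(x) + d(x) * N(x) with exact Dirichlet values."""
    def trial(x):
        lift = u_left + (u_right - u_left) * x / length  # g: matches boundary data
        distance = x * (length - x)                      # d: zero at x = 0 and x = L
        return lift + distance * network(x)

    return trial


u = hard_constrained(make_tiny_mlp(), length=2.0, u_left=0.0, u_right=0.5)
```

Because the boundary values hold for any network weights, including the random ones used here, the optimizer never has to trade boundary accuracy against the interior residual. For two- and three-dimensional geometries, d would be replaced by an approximate distance function to the Dirichlet boundary.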

5.5 Multi-Fidelity Learning and Transfer Learning

Experimental data in solid mechanics are often sparse, noisy, or costly to acquire, particularly for long-term degradation, fatigue, and failure processes. This scarcity limits the applicability of purely data-driven or single-fidelity PINN models. To overcome this limitation, multi-fidelity and transfer-learning strategies have been developed.

Multi-fidelity PINNs combine inexpensive low-fidelity physics models with sparse high-fidelity data, significantly improving prediction accuracy while reducing computational cost [134,138]. Complementarily, transfer learning accelerates convergence by pretraining models on synthetic or simulation-generated datasets and subsequently fine-tuning them using limited experimental data. This paradigm has been demonstrated in porosity estimation for AM components [141] and long-duration structural dynamics analyses [148].
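The idea of correcting a cheap model with a few expensive samples can be sketched as follows. This is a deliberately simplified illustration, not the architecture of the cited studies: it assumes a linear correlation between fidelities, y_high ≈ rho · y_low + delta, omits the physics residual entirely, and uses synthetic data. The function names and sample values are hypothetical.

```python
import math


def low_fidelity(x):
    """Cheap surrogate, e.g. a coarse or simplified model (hypothetical)."""
    return math.sin(2.0 * math.pi * x)


def fit_linear_correction(xs, ys_high):
    """Least-squares fit of y_high ~ rho * y_low(x) + delta."""
    ys_low = [low_fidelity(x) for x in xs]
    n = len(xs)
    mean_l = sum(ys_low) / n
    mean_h = sum(ys_high) / n
    cov = sum((l - mean_l) * (h - mean_h) for l, h in zip(ys_low, ys_high))
    var = sum((l - mean_l) ** 2 for l in ys_low)
    rho = cov / var
    delta = mean_h - rho * mean_l
    return rho, delta


# Four sparse "high-fidelity" samples, generated synthetically for the demo.
x_high = [0.1, 0.3, 0.6, 0.8]
y_high = [1.8 * low_fidelity(x) + 0.25 for x in x_high]

rho, delta = fit_linear_correction(x_high, y_high)


def multi_fidelity(x):
    """Corrected prediction built on top of the low-fidelity model."""
    return rho * low_fidelity(x) + delta
```

In a multi-fidelity PINN the correction would typically be a trainable network rather than two scalars, and the governing-equation residual would enter the loss alongside the sparse high-fidelity data.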

The effectiveness of transfer learning within PINN frameworks has been further demonstrated in solid mechanics applications [14,35]. In these approaches, PINN models are first trained on simplified scenarios that are easier to converge and then fine-tuned under more complex loading or geometric conditions that would otherwise be difficult to learn directly. By reusing previously learned physics-informed representations, transfer learning enhances robustness, improves data efficiency, and reduces training time in practical engineering problems with limited measurements. Collectively, these strategies enable PINNs to generalize across geometries, loading conditions, and materials while preserving physical consistency.

5.6 Hybridization with Finite Element Methods

Despite their flexibility, standalone PINNs often face challenges related to robustness, scalability, and industrial trust. For structural systems composed of multiple components, separate neural networks are typically required to represent individual structural members, while compatibility and equilibrium conditions must be enforced at joints [77]. When discontinuities arise at interfaces, such as jumps in internal forces, material properties, or stiffness, these discontinuities must be known or estimated a priori and explicitly enforced through additional loss terms. This requirement complicates training and often leads to slow convergence or reduced stability. Moreover, classical PINN formulations are most naturally defined for simple geometries, such as straight beams or plates, and may struggle to represent complex domains with internal boundaries, notches, or irregular topologies.

In contrast, conventional numerical methods such as FEM can naturally handle complex geometries, multiple components, and interface discontinuities through discretization and weak-form formulations, without requiring explicit prior knowledge of jump magnitudes. To bridge the gap between physics-informed learning and established engineering practice, recent research has increasingly focused on hybrid PINN–FEM frameworks, which aim to combine the geometric flexibility and numerical robustness of FEM with the data efficiency and interpretability of physics-informed learning [149–151].

Finite element–integrated neural network formulations embed weak-form discretization directly into the PINN architecture, enabling stable and accurate forward simulations [36] as well as efficient inverse identification of material properties [34] in both elastic and elastoplastic solids. More recent extensions incorporate transfer learning into the finite element–integrated neural network formulation, where pretrained forward models using coarse meshes are employed to initialize analyses on finer meshes, yielding substantially faster convergence and improved robustness [35]. These developments demonstrate that FEM-inspired formulations can significantly enhance the stability and scalability of PINNs while preserving their differentiable and physics-consistent structure.

A further limitation of classical PINNs is their difficulty in representing complex finite geometries, topological connectivity, and internal boundaries. To address these challenges, a recent hybrid approach termed Finite-PINN incorporates finite-domain geometric information through geometric encoding based on the eigenfunctions of the Laplace–Beltrami operator [152]. By augmenting Euclidean coordinates with topology-aware basis functions, this framework enables PINNs to more faithfully represent the geometry and connectivity of solid structures, improving robustness and convergence for problems involving notches, internal boundaries, and complex domains.

Inspired by variational principles in FEM, the parametric deep energy method (P-DEM) formulates PINN training as the minimization of total potential energy in a parametric reference domain mapped to the physical domain via NURBS-based geometry representations [153]. This approach enables accurate treatment of complex geometries, efficient numerical integration, and natural enforcement of Neumann boundary conditions, while producing smooth displacement fields suitable for higher-order continuum theories. Together, these geometry-aware and energy-based hybrid formulations alleviate key geometric and stability limitations of classical PINNs while preserving their mesh-free and solver-free characteristics.
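The energy-minimization idea can be illustrated on a one-dimensional bar. The sketch below is not P-DEM itself: it replaces the neural network with a two-coefficient polynomial trial field, uses no NURBS mapping, and approximates gradients by central differences instead of automatic differentiation. The values of `EA`, `BODY_FORCE`, `END_LOAD`, and `LENGTH` are hypothetical. It shows that minimizing the total potential energy recovers the traction (Neumann) condition at the free end without any explicit boundary term in the loss.

```python
import math

EA, BODY_FORCE, END_LOAD, LENGTH = 1.0, 1.0, 0.5, 1.0  # hypothetical values

# Two-point Gauss-Legendre rule on [0, LENGTH]; exact for cubic integrands.
GAUSS_X = [0.5 * LENGTH * (1.0 - 1.0 / math.sqrt(3.0)),
           0.5 * LENGTH * (1.0 + 1.0 / math.sqrt(3.0))]
GAUSS_W = [0.5 * LENGTH, 0.5 * LENGTH]


def displacement(x, a):
    """Trial field u(x) = a0*x + a1*x^2; u(0) = 0 holds by construction."""
    return a[0] * x + a[1] * x * x


def strain(x, a):
    """Derivative of the trial field, u'(x) = a0 + 2*a1*x."""
    return a[0] + 2.0 * a[1] * x


def potential_energy(a):
    """Strain energy minus the work of the body force and the end load."""
    pairs = list(zip(GAUSS_X, GAUSS_W))
    internal = sum(w * 0.5 * EA * strain(x, a) ** 2 for x, w in pairs)
    body = sum(w * BODY_FORCE * displacement(x, a) for x, w in pairs)
    return internal - body - END_LOAD * displacement(LENGTH, a)


def gradient(a, h=1e-4):
    """Central differences; a stand-in for automatic differentiation."""
    grad = []
    for i in range(len(a)):
        up = list(a)
        down = list(a)
        up[i] += h
        down[i] -= h
        grad.append((potential_energy(up) - potential_energy(down)) / (2.0 * h))
    return grad


# Plain gradient descent on the two trial-field coefficients.
coeffs = [0.0, 0.0]
for _ in range(2000):
    g = gradient(coeffs)
    coeffs = [c - 0.5 * gi for c, gi in zip(coeffs, g)]
```

For these values the analytical solution is u(x) = 1.5x − 0.5x², which lies in the trial space, so the minimizer should reproduce it and give EA·u′(LENGTH) equal to the end load.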

Overall, hybrid PINN–FEM approaches provide a promising pathway toward industrial adoption by combining the numerical robustness, geometric fidelity, and weak-form stability of classical solvers with the flexibility and differentiability of physics-informed learning. These frameworks enable PINNs to move beyond simplified benchmark problems and toward realistic, large-scale engineering applications involving complex geometries, discontinuities, and heterogeneous materials.

Physics-informed neural networks have rapidly evolved from simple PDE-residual formulations into a diverse family of methods, including variational PINNs, enhanced constitutive networks, domain-decomposition strategies, multi-fidelity architectures, and hybrid FEM–PINN frameworks. Together, these developments substantially expand the scope of PINNs in solid and structural mechanics and are expected to further extend the applicability of physics-informed methodologies to nonlinear, multiscale, and data-scarce engineering problems.

Viewed collectively, these methodological advances can be interpreted as targeted solutions to the core numerical and physical challenges of solid and structural mechanics. Adaptive training resolves localization and singularities; balanced loss formulations improve stability in stiff and multi-scale systems; domain decomposition enables accurate treatment of heterogeneity and interfaces; hard-constraint formulations ensure physically consistent boundary enforcement; and multi-fidelity and FEM-integrated strategies address data scarcity, scalability, and engineering trust. This problem-driven evolution highlights how PINNs are transitioning from generic neural solvers into specialized, physics-aware computational tools for solid and structural mechanics.

Looking ahead, several promising opportunities emerge. A major research trajectory involves developing scalable PINN frameworks for large-scale 3D structural systems. Domain-decomposition strategies have shown strong potential for reducing computational costs and improving local accuracy, particularly in simulations characterized by steep gradients, material interfaces, or multi-region physics. When integrated with adaptive mesh refinement or data-driven subdomain partitioning, these strategies could enable efficient and robust modeling of full-scale engineering structures. Variational, weak-form, and FEM-integrated formulations are also expected to play an increasingly pivotal role in future computational mechanics. These approaches mitigate the need for high-order derivatives, improve numerical conditioning, and naturally incorporate constitutive models and finite-element operators. As a result, they offer a viable pathway toward industrial-scale solvers capable of addressing various complex problems without requiring dense collocation sets.

Another important direction is the development of task-specific PINN architectures tailored to key classes of problems in solid mechanics, such as multiscale homogenization, fracture and fatigue, structural health monitoring, and topology optimization. Existing studies show that when the architecture, feature embeddings, and loss design are carefully aligned with the governing physics, PINNs can effectively capture complex phenomena, such as size effects, strong material heterogeneity, large deformations, and defect-driven fatigue. Establishing systematic, domain-specific design principles, covering trial-space selection, regularization strategies, and boundary-condition enforcement, is likely to enhance reliability and reduce the current dependence on manual hyperparameter tuning.

A growing area of opportunity lies in the integration of physics-informed surrogates into digital twins, real-time monitoring, and decision-support systems. Surrogate PINNs trained on combined simulation data and sparse measurements [22,127] have already demonstrated strong capabilities in predicting stress, strain, and damage fields. When coupled with multi-fidelity and transfer-learning techniques, these surrogates can be rapidly adapted across different geometries, loading conditions, and materials, making them well-suited for structural integrity assessment, condition-based maintenance, and process control in manufacturing. Expanding research on uncertainty quantification and reliability highlights the increasingly important role of physics-informed learning in safety-critical applications. By embedding physics within the predictive model while providing calibrated uncertainty measures, these approaches offer a pathway toward trustworthy and certifiable surrogates suitable for design codes, reliability assessment, and risk-informed engineering decision making.

Despite these promising prospects, several fundamental challenges remain. The primary limitation is computational cost and scalability: training PINNs for large-scale 3D domains [154,155], long-time dynamics, or fine multiscale features remains expensive. Although domain decomposition, multi-fidelity training, and FE-integrated strategies mitigate some of these costs, further advances in parallelization, adaptive sampling, model reduction, and efficient automatic differentiation on modern hardware are still needed. Another critical challenge is training robustness and hyperparameter sensitivity. PINN performance strongly depends on architecture design, activation functions, loss formulation, and weighting between data and physics terms. While improved loss functions and adaptive strategies have enhanced robustness, no unified guidelines exist for selecting these components across different solid mechanics problems. Systematic weighting schemes, diagnostic tools, and automated training protocols remain important open areas. Handling discontinuities and localized phenomena constitutes another major challenge. Cracks, interfaces, contact, and sharp material transitions introduce non-smooth fields that classical PINNs struggle to represent. Moreover, experimental validation and standardized benchmarks remain limited. Many studies rely primarily on synthetic or small-scale examples; rigorous comparisons against high-fidelity FEM simulations and controlled experiments are critical for establishing trustworthiness and enabling adoption in engineering practice.

Recently, several efforts have been made to combine PINNs with the mature FEM [34,36,150,152]. This combination offers useful features that address known limitations of PINNs, e.g., the analysis of irregular domains and high computational costs. It also offers great potential for incorporating PINNs into commercial finite element packages. If this can be achieved, applications of PINNs are expected to grow rapidly, since the bottleneck of computational cost would be eased.

Furthermore, gradients of the loss function with respect to the trainable weights and biases are required when neural networks are trained by gradient-based optimization algorithms. Automatic differentiation is widely employed for this purpose owing to its precision. However, it must be performed at every iteration, which imposes a computational burden. Training typically involves thousands of iterations; if symbolic expressions of the gradients are obtained once at the initial stage of training, they can be reused thousands of times. The execution time of the symbolic expressions is likely to be much shorter than that of performing automatic differentiation over thousands of iterations. Further work is needed to explore the potential advantages of this approach.
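The reuse of gradient expressions derived once can be illustrated with a minimal sketch. It assumes a hypothetical one-neuron model tanh(w·x + b) fitted to synthetic targets; the gradient expressions are derived by hand with the chain rule and then called unchanged at every training step. A finite-difference routine is included only as an independent check. The sketch makes no claim about relative speed against automatic differentiation, which would have to be measured for realistic networks.

```python
import math

XS = [-1.0, -0.5, 0.0, 0.5, 1.0]
YS = [math.tanh(0.8 * x - 0.3) for x in XS]  # synthetic targets


def loss(w, b):
    """Mean squared error of the one-neuron model tanh(w*x + b)."""
    return sum((math.tanh(w * x + b) - y) ** 2 for x, y in zip(XS, YS)) / len(XS)


def closed_form_gradient(w, b):
    """Gradient expressions derived once by hand with the chain rule."""
    grad_w = 0.0
    grad_b = 0.0
    for x, y in zip(XS, YS):
        t = math.tanh(w * x + b)
        common = 2.0 * (t - y) * (1.0 - t * t) / len(XS)
        grad_w += common * x
        grad_b += common
    return grad_w, grad_b


def finite_difference_gradient(w, b, h=1e-6):
    """Independent numerical check of the closed-form expressions."""
    gw = (loss(w + h, b) - loss(w - h, b)) / (2.0 * h)
    gb = (loss(w, b + h) - loss(w, b - h)) / (2.0 * h)
    return gw, gb


w_init, b_init = 0.1, 0.0
w, b = w_init, b_init
for _ in range(5000):
    gw, gb = closed_form_gradient(w, b)  # same expressions reused every step
    w, b = w - 0.1 * gw, b - 0.1 * gb
```

For deeper networks the hand derivation would be replaced by a symbolic algebra tool, and the size of the resulting expressions becomes a practical concern of its own.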