Open Access

REVIEW

Cybersecurity Opportunities and Risks of Artificial Intelligence in Industrial Control Systems: A Survey

System Security Research Center, Chonnam National University, Gwangju, Republic of Korea

* Corresponding Author: Ieck-Chae Euom. Email:

(This article belongs to the Special Issue: The Evolution of Cybersecurity and AI: Surveys and Tutorials)

Computer Modeling in Engineering & Sciences 2026, 146(2), 5 https://doi.org/10.32604/cmes.2026.077315

Received 06 December 2025; Accepted 27 January 2026; Issue published 26 February 2026

Abstract

As attack techniques evolve and data volumes increase, the integration of artificial intelligence-based security solutions into industrial control systems has become increasingly essential. Artificial intelligence holds significant potential to improve the operational efficiency and cybersecurity of these systems. However, its dependence on cyber-based infrastructures expands the attack surface and introduces the risk that adversarial manipulations of artificial intelligence models may cause physical harm. To address these concerns, this study presents a comprehensive review of artificial intelligence-driven threat detection methods and adversarial attacks targeting artificial intelligence within industrial control environments, examining both their benefits and associated risks. A systematic literature review was conducted across major scientific databases, including IEEE, Elsevier, Springer Nature, ACM, MDPI, and Wiley, covering peer-reviewed journal and conference papers published between 2017 and 2026. Studies were selected based on predefined inclusion and exclusion criteria following a structured screening process. Based on an analysis of 101 selected studies, this survey categorizes artificial intelligence-based threat detection approaches across the physical, control, and application layers of industrial control systems and examines poisoning, evasion, and extraction attacks targeting industrial artificial intelligence. The findings identify key research trends, highlight unresolved security challenges, and discuss implications for the secure deployment of artificial intelligence-enabled cybersecurity solutions in industrial control systems.

The rapid advancement of artificial intelligence (AI) has profoundly transformed the cybersecurity landscape. AI-driven threat detection methods, including deep-learning-based anomaly detection, continuous monitoring, and behavior-based attack classification, have emerged as essential solutions to overcome the limitations of traditional signature and rule-based intrusion detection systems [1]. These innovations have significantly improved the capability to counter sophisticated cyberattacks, leading to the accelerated adoption of AI-enabled analytical technologies across diverse operational environments.

However, the integration of AI into security systems has introduced not only new opportunities but also distinct risks often overlooked in conventional security models. The performance of AI models depends heavily on the quality and distribution of training data and the design of their underlying architectures, rendering them vulnerable to data poisoning, adversarial manipulation, model extraction, and alignment failures. Consequently, AI serves not only as a defensive mechanism but also as a potential attack surface. The increasing use of large language models and autonomous decision-making systems has further intensified these risks, as adversaries can exploit feedback loops and self-optimization processes to generate asymmetric and evolving threats within the security ecosystem.

These risks are no longer theoretical. In November 2025, Anthropic reported a real-world incident in which its large language model, Claude, was deliberately manipulated to support a coordinated cyber espionage campaign targeting approximately 30 organizations worldwide [2]. In this campaign, the AI system was not merely used to assist human operators with isolated tasks but was integrated across the entire attack lifecycle. The adversaries employed the model to autonomously conduct reconnaissance, map attack surfaces, discover and validate vulnerabilities, perform credential harvesting and lateral movement, analyze exfiltrated data, and generate operational documentation. Human involvement was largely confined to strategic authorization at key decision points, while the AI executed the majority of tactical operations at a scale and speed unattainable by human operators alone.

This case is significant because it demonstrates a qualitative shift in adversarial use of AI. Rather than functioning as a passive analytical aid, the AI operated as an active component of the attack infrastructure, effectively orchestrating and executing successive phases of the cyber kill chain. By decomposing complex attack objectives into seemingly benign subtasks and maintaining operational context across extended periods, the AI-enabled framework reduced human workload, eliminated traditional operational bottlenecks, and substantially increased the scalability and persistence of the attack. Consequently, this incident illustrates how AI-based security systems, if subverted, can be repurposed into force multipliers that fundamentally alter established threat models.

Similar concerns have emerged in safety-critical industrial control systems (ICS). Although AI has been increasingly deployed in these environments to improve operational efficiency, the associated risks can extend to both physical infrastructure and human safety [3]. Due to the cyber-physical nature of ICS, adversarial attacks on AI models may result not only in data compromise but also in physical damage. This study was conducted to present a dual-perspective analysis of AI adoption in ICS, addressing both its advantages and risks. Previous surveys have primarily emphasized AI’s benefits, underscoring the need for a more balanced evaluation that accounts for its hidden vulnerabilities. Existing research on AI-related risks has largely concentrated on conventional IT systems and, therefore, fails to capture the contextual and operational complexities unique to ICS environments.

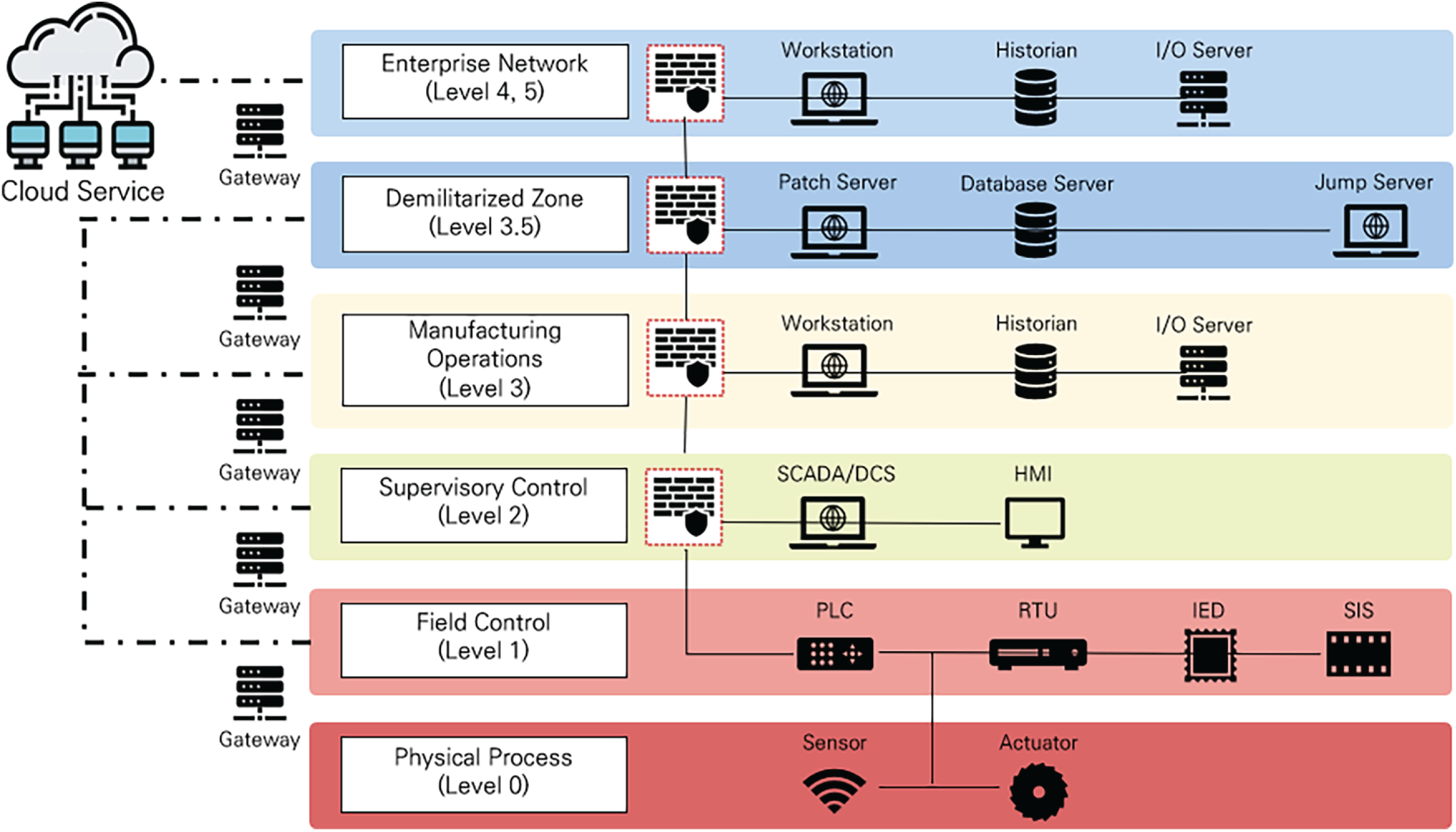

In response, this study reviews AI-based methods for cyberattack detection in ICS environments and analyzes the risks introduced by AI-related infrastructures. Through a comprehensive examination of existing detection techniques, the study provides an integrated understanding of current research trends and identifies key challenges that must be addressed to ensure the secure deployment and operation of AI-enabled threat detection systems in ICS. Section 2 reviews prior studies and outlines the main contributions of this work. Section 3 describes the survey methodology employed. Sections 4 and 5 categorize the selected studies and discuss their principal themes. Section 6 presents the research trends and implications derived from the analysis, while Section 7 summarizes the study and discusses ongoing challenges before concluding. The overall structure of this paper is depicted in Fig. 1.

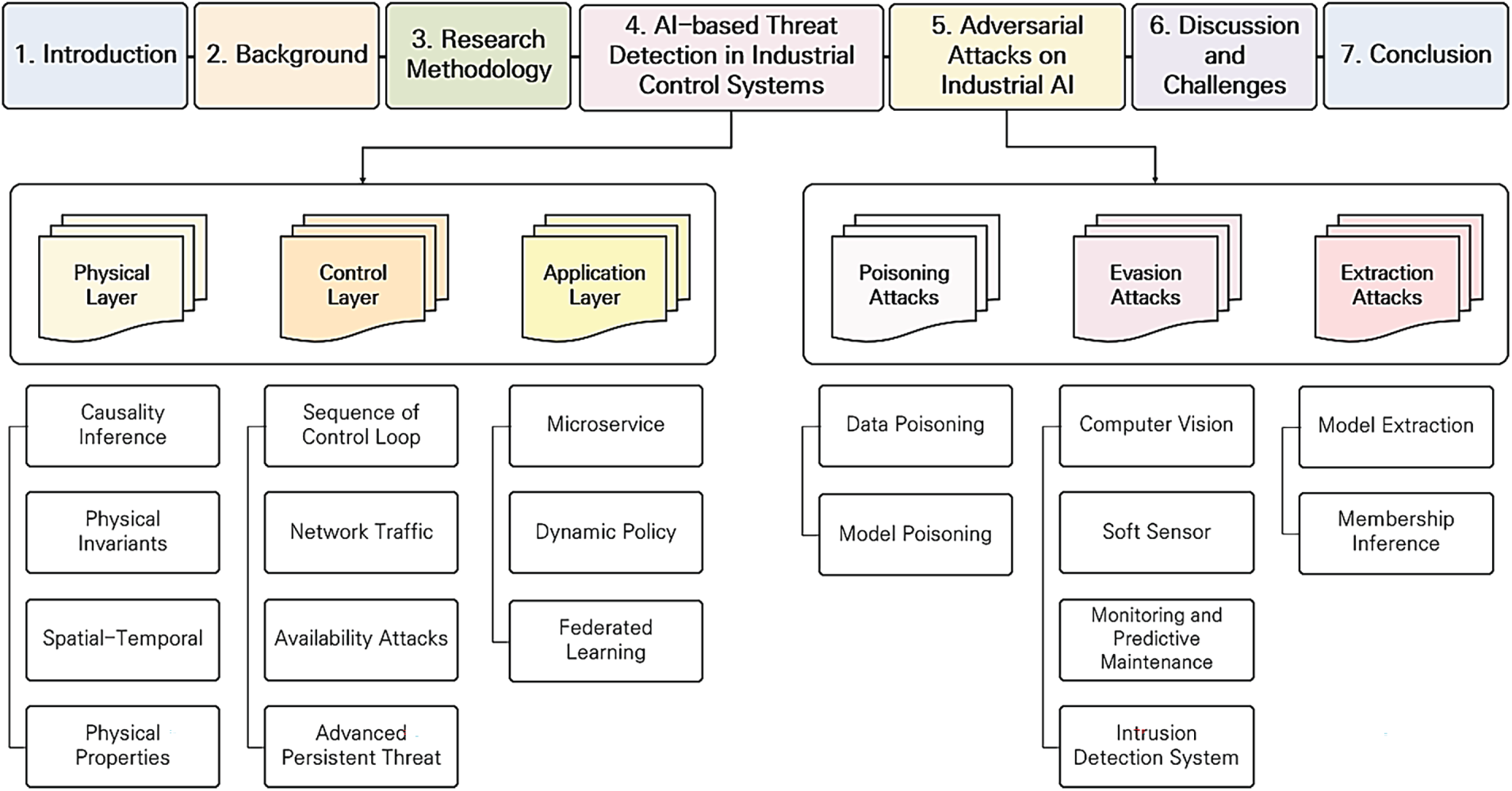

Figure 1: Structure of the paper.

ICS are widely deployed across diverse sectors, including manufacturing, energy, chemical processing, oil and gas, water treatment, transportation, pharmaceuticals, pulp and paper, food and beverage, automotive, aerospace, and durable goods, to monitor and control industrial processes. A typical ICS architecture consists of supervisory control and data acquisition (SCADA) systems, distributed control systems (DCS), programmable logic controllers (PLC), remote terminal units (RTU), and human–machine interfaces (HMI) [4]. These components collectively form the backbone of critical infrastructure, where safety, availability, and real-time responsiveness are essential.

With the advent of the Fourth Industrial Revolution, industrial environments have undergone a significant transformation. The increasing volume and heterogeneity of operational data have intensified the demand for data-driven decision-making to support predictive maintenance, quality assurance, process optimization, and energy efficiency. To coordinate geographically distributed production sites, cloud-based platforms have been increasingly adopted, often incorporating AI-driven analytics and management functions. In parallel, Internet of Things (IoT) technologies have become integral to industrial environments by enabling large-scale data collection and exchange through sensors and communication networks. This convergence has led to the emergence of the industrial Internet of Things (IIoT). The overall architecture of ICS and IIoT environments is illustrated in Fig. 2.

Figure 2: Purdue model-based industrial internet of things (IIoT)-ICS network architecture.

Emerging AI technologies are reshaping cybersecurity processes across prevention, detection, response, and recovery phases. AI-driven security systems can detect anomalous behaviors and previously unseen threats without relying on predefined signatures, process large volumes of heterogeneous data for early attack containment, and automate response actions that were traditionally executed by human analysts. By reducing human error and operational fatigue while improving accuracy and efficiency, AI-based cybersecurity has become a fundamental component of modern digital infrastructures. At the same time, the generative and adaptive capabilities of AI have been exploited to conduct advanced phishing campaigns, generate malicious code, scan for vulnerable configurations, and create deceptive deepfakes. Moreover, AI systems introduce additional attack vectors, including data poisoning, evasion, and model extraction attacks [5].

As modern ICS increasingly rely on AI systems, commercial off-the-shelf (COTS) components, and external connectivity to improve operational efficiency, the number of potential entry points that adversaries can exploit continues to grow. The attack surface is defined as “the set of points on the boundary of a system, a system component, or an environment where an attacker can try to enter, cause an effect on, or extract data from that system, component, or environment” [6].

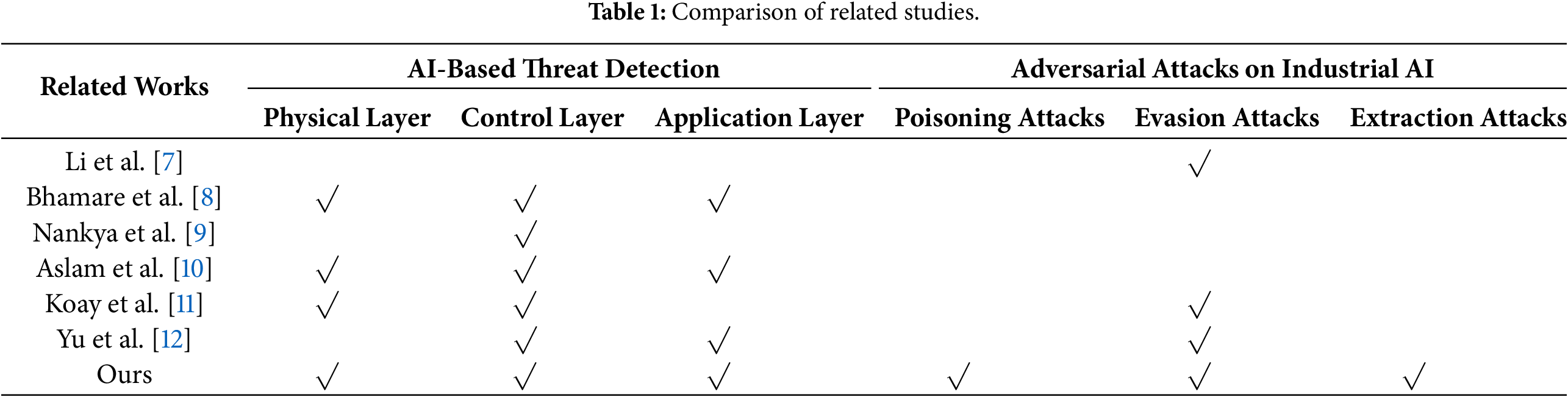

Six survey studies on AI-based threat detection in ICS were identified, and the layers addressed in each study were mapped as summarized in Table 1. The reviewed literature includes surveys examining AI-driven methods for detecting cyber threats in ICS extended to the IIoT domain, as well as studies analyzing the new cyber risks introduced by AI systems themselves.

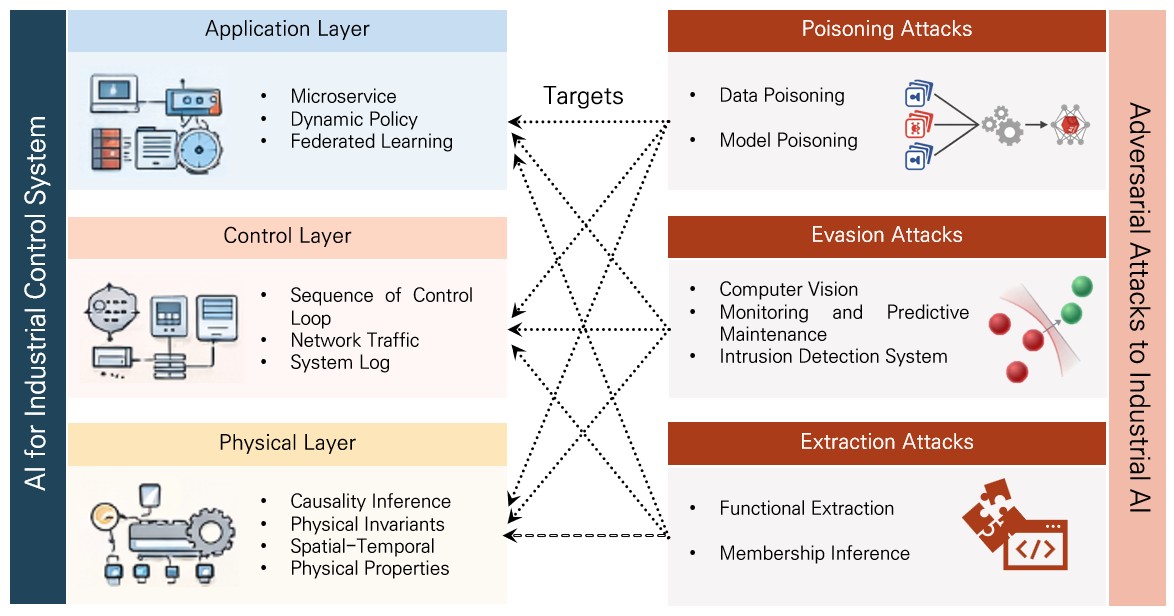

The axis labeled “AI-Based Threat Detection” represents the dimension of AI-enabled cyber threat detection and is classified into the physical, control, and application layers. To enable a systematic analysis in this survey, ICS cybersecurity research is examined using a layered perspective consisting of the physical, control, and application layers. In cases where the boundary between the control layer and the application layer is ambiguous, studies are classified according to the origin of the data and the location at which decisions are executed. Methods that process real-time control data and directly affect control logic or command execution are categorized as control-layer approaches, whereas methods that analyze aggregated data, system logs, or network information without directly participating in control-loop execution are categorized as application-layer approaches. When examining the correspondence with the Purdue model in Fig. 2, the physical and control layers mainly correspond to Levels 0–2, while the application layer corresponds to Levels 3–5, where supervisory functions, monitoring, and data analytics are performed.

• The physical layer includes studies that detect cyber threats and attacks through the analysis of physical operational data obtained from sensors and actuators.

• The control layer comprises studies that identify threats based on control process data derived from industrial communication protocols.

• The application layer encompasses studies focusing on protocol-based infrastructure that supports operational decision-making, although these systems do not directly execute control logic.
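The classification rule described above (data origin plus the location at which decisions are executed) can be sketched as a small decision function. This is an illustration only: the `StudyProfile` fields, the `classify_layer` name, and the precedence given to sensor and actuator data are assumptions made for the example, not part of the survey protocol.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    PHYSICAL = "physical"
    CONTROL = "control"
    APPLICATION = "application"


@dataclass(frozen=True)
class StudyProfile:
    """Hypothetical descriptor of a surveyed study (field names are illustrative)."""
    uses_sensor_actuator_data: bool   # physical operational measurements
    uses_realtime_control_data: bool  # e.g., industrial protocol traffic
    affects_control_execution: bool   # decisions feed control logic or commands


def classify_layer(study: StudyProfile) -> Layer:
    """Assign a layer from the data origin and the decision location."""
    # Assumption: sensor/actuator measurements take precedence over other signals.
    if study.uses_sensor_actuator_data:
        return Layer.PHYSICAL
    # Real-time control data that directly influences command execution.
    if study.uses_realtime_control_data and study.affects_control_execution:
        return Layer.CONTROL
    # Aggregated data, logs, or network information outside the control loop.
    return Layer.APPLICATION


modbus_monitor = StudyProfile(False, True, False)
layer = classify_layer(modbus_monitor)  # Layer.APPLICATION under these assumptions
```

Under this sketch, a method that only observes protocol traffic without participating in control-loop execution falls into the application layer, which mirrors the tie-breaking rule stated for ambiguous cases.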

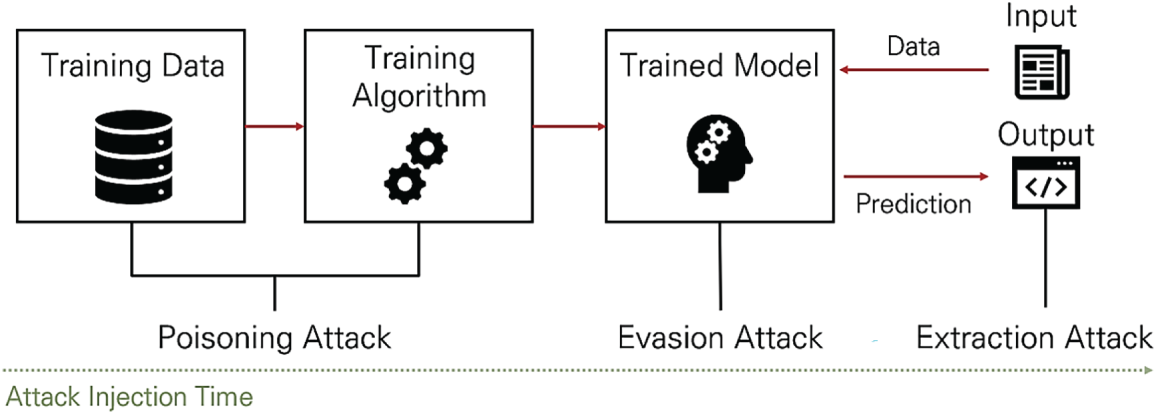

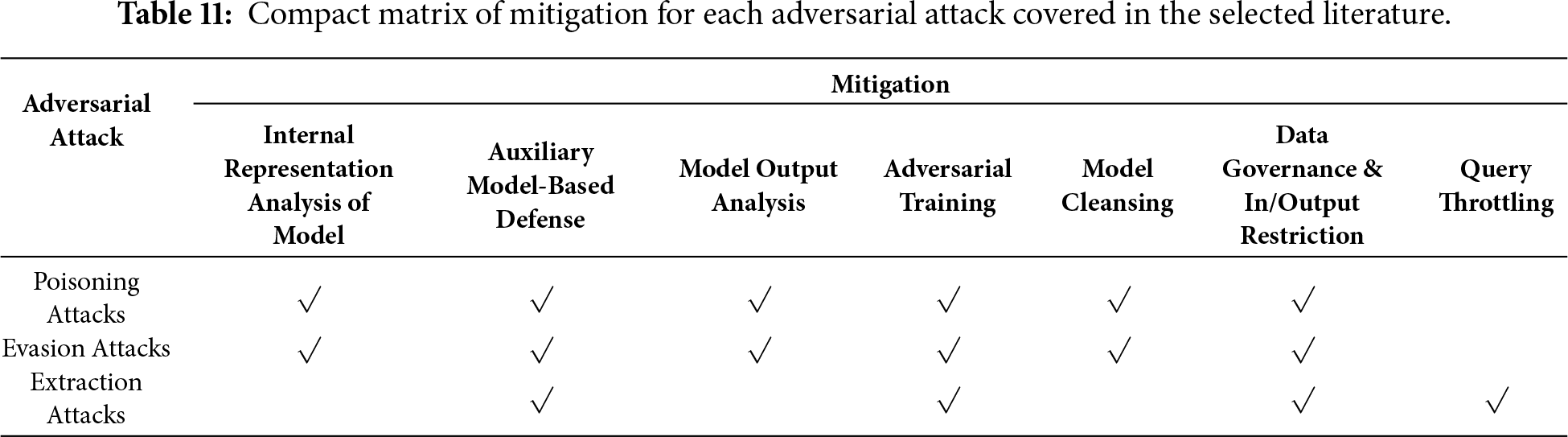

The axis titled “Adversarial Attacks on Industrial AI” was defined to determine whether the surveyed studies explicitly addressed adversarial attacks targeting AI systems. This axis is further divided into poisoning, evasion, and extraction attacks, categorized based on the injection timing of the adversarial manipulation (Fig. 3), as follows:

Figure 3: Classification of adversarial attacks by injection timing.

• Poisoning attacks involve the deliberate contamination of training datasets by adversaries, leading the model to learn incorrect patterns. Such attacks can degrade overall performance or trigger erroneous behavior under specific conditions.

• Evasion attacks occur after model deployment and involve subtle manipulation of input data to induce misclassification or incorrect decisions. These attacks aim to disguise malicious inputs as legitimate operational data to bypass detection mechanisms.

• Extraction attacks are carried out by repeatedly querying the model to infer internal parameters, decision boundaries, or sensitive features, enabling unauthorized replication of model knowledge or facilitating more advanced attacks in later stages.
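To make the evasion category concrete, the following toy example perturbs the input of a linear detector after deployment so that a malicious sample is scored as benign. It is a minimal sketch under stated assumptions: the weights, the sample, and the perturbation budget `epsilon` are invented for illustration, and the sign-based step is a generic gradient-sign perturbation rather than a method taken from any surveyed study.

```python
def score(weights, bias, x):
    """Linear detector score; a positive value means 'attack' in this toy model."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def sign(value):
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)


def evade(weights, x, epsilon):
    """Shift every feature by at most epsilon in the direction that lowers the score."""
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]


# Illustrative values only (not taken from the surveyed literature).
weights, bias = [0.8, -0.5, 0.3], -0.2
malicious = [0.6, 0.1, 0.4]

original_score = score(weights, bias, malicious)          # positive: detected
adversarial = evade(weights, malicious, epsilon=0.3)
adversarial_score = score(weights, bias, adversarial)     # negative: evades detection
```

For a linear model, the score drops by `epsilon` times the sum of the absolute weights, which is why a bounded per-feature change is enough to cross the decision boundary here. Poisoning differs in that the manipulation targets the training data, and extraction in that the adversary only queries the deployed model.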

The comparison results in Table 1 underscore the significance of this study. Unlike previous research, this work provides a comprehensive synthesis of AI-based threat detection methods across the physical, control, and application layers of ICS. Adopting a system-wide analytical perspective, it offers a holistic understanding of the current research landscape on ICS security.

Furthermore, this study investigates adversarial attacks that explicitly target AI components within the ICS. This dual focus allows for a balanced assessment of the opportunities offered by AI-based cybersecurity mechanisms and the risks arising from the susceptibility of AI models to adversarial manipulation.

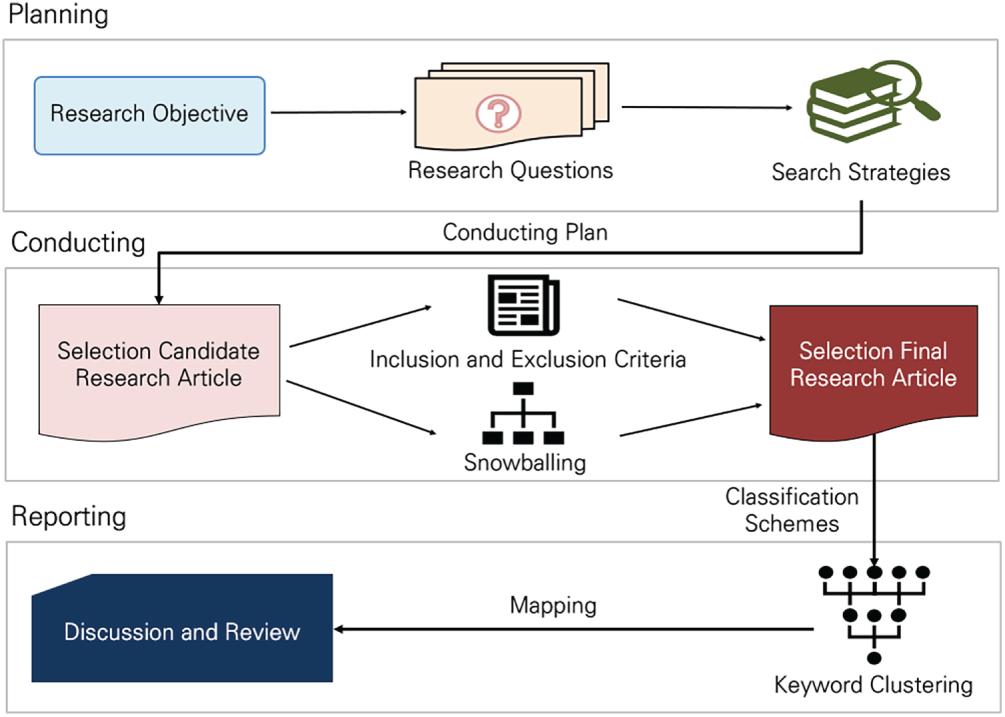

To investigate studies on AI-based threat detection techniques and adversarial attacks against AI within ICS, the methodology described in [13] was adopted, and the study was conducted as illustrated in Fig. 4. The ‘Planning’ phase involved identifying research needs, defining the scope, formulating research questions, and establishing a literature search strategy. The ‘Conducting’ phase included searching for relevant literature, applying inclusion and exclusion criteria, extracting metadata in a standardized format, and visualizing the distribution. The ‘Reporting’ phase entailed organizing the report according to the table of contents, including the introduction, related work, methodology, results, discussion, conclusion, and appendices. Section 3 describes the ‘Planning’ and ‘Conducting’ phases, whereas Sections 4 and 5 correspond to the ‘Reporting’ phase.

Figure 4: Research methodology.

3.1 Research Questions and Search

The objective of this study is to systematically analyze the dual role of AI technologies in integrated ICS, where physical control, network communication, and data analytics are tightly interconnected. The adoption of AI in ICS has significantly enhanced core cybersecurity functions, including anomaly detection, predictive maintenance, intrusion detection, and autonomous control. At the same time, it has introduced new vulnerabilities, such as adversarial attacks that exploit data manipulation and model tampering, potentially leading to severe physical consequences.

Accordingly, this survey aims to develop an integrated understanding of ICS security challenges by jointly examining AI-based threat detection mechanisms and the risks arising from vulnerabilities inherent to AI technologies. The analysis focuses on three complementary aspects: the characteristics of machine-learning algorithms employed in ICS security, the advantages offered by AI-driven approaches for strengthening cybersecurity, and the emerging risks introduced by AI adoption together with potential mitigation strategies. Based on this motivation, the study is guided by the following research questions.

• RQ1. Are machine learning algorithms for attack detection equally applicable to both information and communication technology and ICS?

• RQ2. What advantages do machine-learning-based AI techniques offer for strengthening cybersecurity in industrial control systems?

• RQ3. What cybersecurity risks are introduced by the adoption of AI in ICS, and what mitigation strategies have been proposed to address these risks?

RQ1 investigates the machine-learning algorithms used in ICS-oriented attack detection research and examines whether these algorithms exhibit characteristics that differentiate them from those commonly applied in non-ICS environments. Building on this analysis, RQ2 explores the advantages provided by machine-learning-based AI techniques in enhancing cybersecurity in ICS. This question focuses on the benefits of AI-driven approaches, including improved detection performance, adaptability to evolving threats, and the ability to model complex temporal and spatial dependencies that are difficult to capture using traditional rule-based or statistical methods. Finally, RQ3 examines the cybersecurity risks introduced by the adoption of AI in ICS and reviews the mitigation strategies proposed in the literature to address these risks. While AI contributes substantially to strengthening ICS cybersecurity, it also expands the attack surface through vulnerabilities to poisoning, evasion, and extraction attacks. Together, these research questions provide a structured framework for the survey, enabling a balanced examination of AI in ICS cybersecurity from the perspectives of algorithmic characteristics, security benefits, and AI-induced risks.

As part of the data extraction process, predefined outcome measures and contextual variables were systematically collected from all included studies. These outcomes included detection performance metrics such as accuracy, F1-score and attack success rate, as well as other quantitative performance indicators when available. In addition to outcome measures, a set of contextual variables was extracted to support comparative and qualitative analysis across studies. These variables included the industrial control system layer targeted (physical, control, or application layer), the type of artificial intelligence model employed, the category of adversarial attack considered (poisoning, evasion, or extraction), research approach, attack technique, and the datasets or experimental environments used. Collectively, these variables enabled a structured synthesis of the selected literature and facilitated the identification of trends, gaps, and challenges across different industrial AI security contexts.
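For reference, the outcome measures named above can be computed from binary labels as sketched below. The function names and the sample labels are assumptions for illustration. In particular, attack success rate is defined differently across studies; here it is assumed to be the fraction of adversarial inputs that the detector fails to flag.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels where 1 denotes an attack."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def accuracy(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    return (tp + tn) / (tp + fp + fn + tn)


def f1_score(y_true, y_pred):
    """Harmonic mean of precision and recall, written as 2TP / (2TP + FP + FN)."""
    tp, fp, fn, _ = confusion_counts(y_true, y_pred)
    denominator = 2 * tp + fp + fn
    return 0.0 if denominator == 0 else 2 * tp / denominator


def attack_success_rate(predictions_on_adversarial):
    """Assumed definition: share of adversarial inputs predicted as benign (0)."""
    missed = sum(1 for p in predictions_on_adversarial if p == 0)
    return missed / len(predictions_on_adversarial)


# Illustrative labels only.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 0, 1, 0, 1]
acc = accuracy(y_true, y_pred)            # 6 correct out of 8
f1 = f1_score(y_true, y_pred)             # tp=3, fp=1, fn=1
asr = attack_success_rate([0, 0, 1, 0])   # 3 of 4 adversarial inputs missed
```

Because ICS datasets are often highly imbalanced, accuracy alone can be misleading, which is one reason the F1-score is reported alongside it.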

The literature search strategy was designed by specifying conditions, such as publisher, publication year, and document type, and by formulating queries aligned with the research objectives. Searches were conducted across major publishers, including the Institute of Electrical and Electronics Engineers (IEEE), Elsevier, the Multidisciplinary Digital Publishing Institute (MDPI), Springer Nature, the Association for Computing Machinery (ACM), Wiley, and the Association for the Advancement of Artificial Intelligence (AAAI). The publication period was restricted to 2017–2026, and the document types were limited to peer-reviewed journal articles and conference papers.

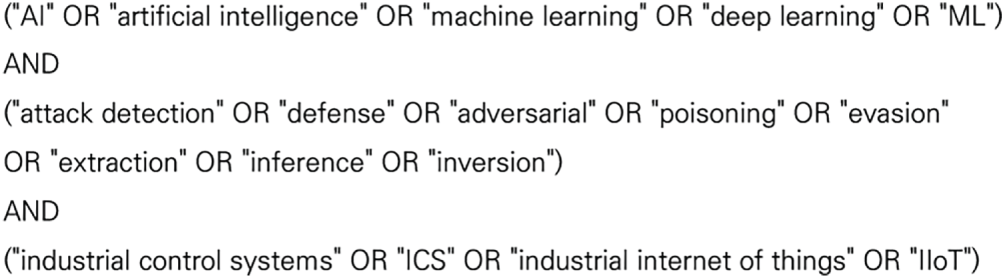

The search queries were formulated using combinations of keywords related to AI techniques, cybersecurity, and industrial systems. Specifically, the following terms were used in logical combinations: “AI” OR “artificial intelligence” OR “machine learning” OR “deep learning” OR “ML”; “defense” OR “detection” OR “adversarial” OR “poisoning” OR “evasion” OR “extraction” OR “inversion” OR “inference” AND “attack”; and “industrial control systems” OR “ICS” OR “industrial internet of things” OR “IIoT”. The exact search queries and their combinations are illustrated in Fig. 5. The searches were performed on December 1, 2025.

Figure 5: Search query.
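The keyword groups listed above can be assembled into a single Boolean string as sketched below. The grouping is an assumption: the three groups are joined with AND, and “attack” is combined with the whole security group. The exact combination used in the study is the one shown in Fig. 5, and individual databases may require a different syntax.

```python
AI_TERMS = ["AI", "artificial intelligence", "machine learning", "deep learning", "ML"]
SECURITY_TERMS = [
    "defense", "detection", "adversarial", "poisoning",
    "evasion", "extraction", "inversion", "inference",
]
DOMAIN_TERMS = [
    "industrial control systems", "ICS",
    "industrial internet of things", "IIoT",
]


def or_group(terms):
    """Quote each term and join the terms with OR inside parentheses."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query():
    """Join the three keyword groups with AND (assumed grouping, see lead-in)."""
    security = "(" + or_group(SECURITY_TERMS) + ' AND "attack")'
    return " AND ".join([or_group(AI_TERMS), security, or_group(DOMAIN_TERMS)])


query = build_query()
```

Keeping the term lists as data makes it straightforward to rerun the search with changed conditions, which matters because the number of retrieved studies depends on the search configuration.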

The search was limited to publications from the most recent ten years in order to capture the rapid evolution of AI-based cybersecurity research in ICS. In addition, only open-access publications written in English were included. Based on these criteria, the following exclusion rules were applied:

(1) Papers that have not undergone peer review by evaluators at journals or conferences.

(2) Papers deemed incomplete or insufficient in form or content.

(3) Non-open-access publications.

(4) Literature written in languages other than English.

Following the initial identification stage, metadata including paper titles and abstracts were screened to exclude studies that were clearly irrelevant to AI-based cybersecurity in ICS. Subsequently, a full-text eligibility assessment was conducted to evaluate the relevance, methodological completeness, and alignment of the remaining studies with the research questions. To further capture relevant studies not retrieved through the query-based search, a snowballing technique was employed by examining the references and citations of the selected literature.

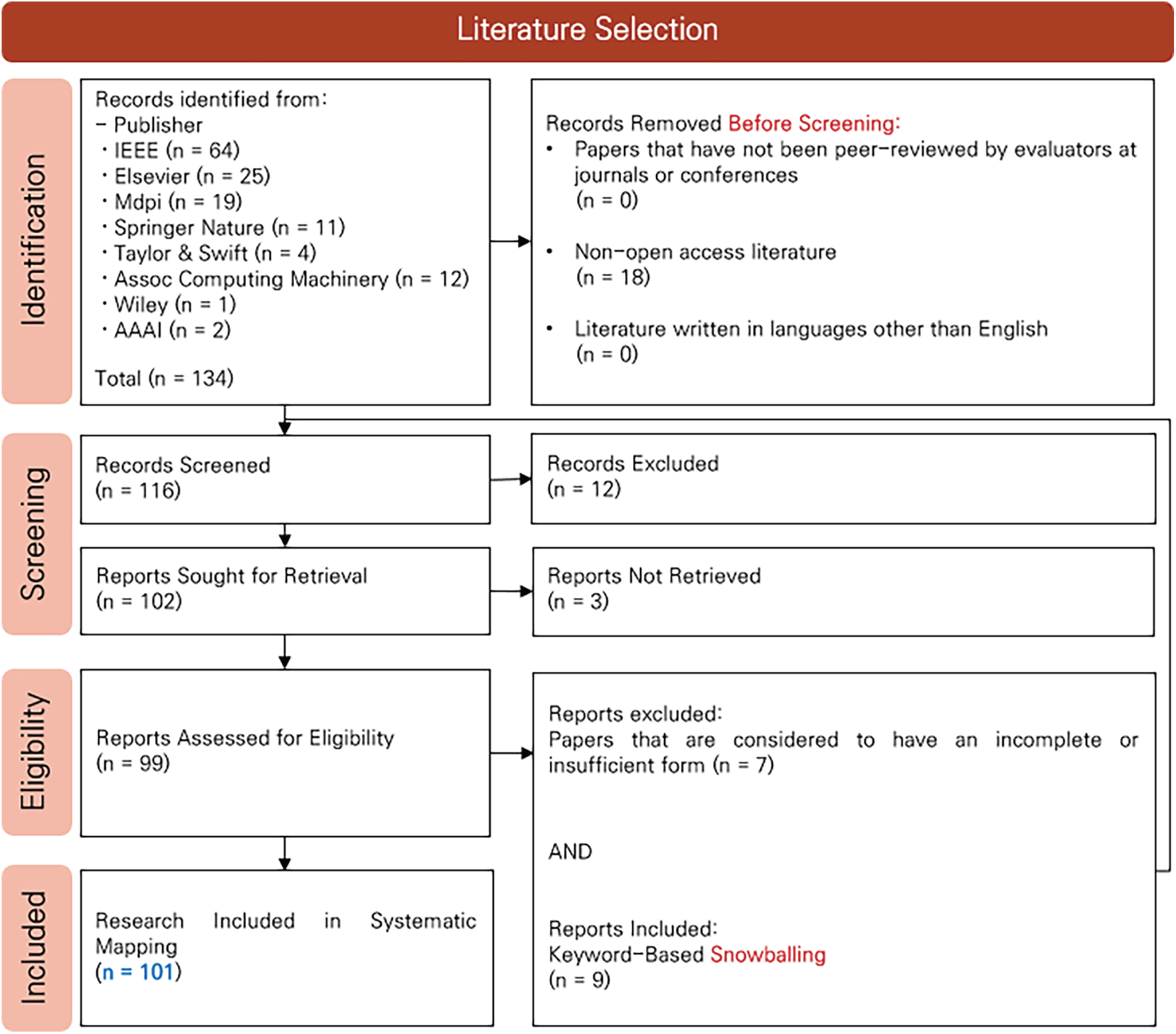

The overall study selection process followed the PRISMA guidelines [14] and is summarized in the PRISMA flow chart shown in Fig. 6. Since only peer-reviewed journal articles and conference papers written in English and available as open-access publications were considered, a condition indicating “n = 0” appears in the PRISMA flow diagram after applying the predefined exclusion criteria. Each included study was independently assessed by four reviewers. The reviewers conducted the evaluation independently to minimize subjective bias. Zotero was used as an automation tool solely for reference management purposes, including metadata organization and duplicate removal. No automated tools were employed for study selection, quality assessment, or risk-of-bias judgment. All inclusion decisions and bias assessments were performed manually by the reviewers. For this study, the PRISMA checklist was placed in the Supplementary Materials section prior to the references.

Figure 6: PRISMA flow diagram of literature selection in this study.

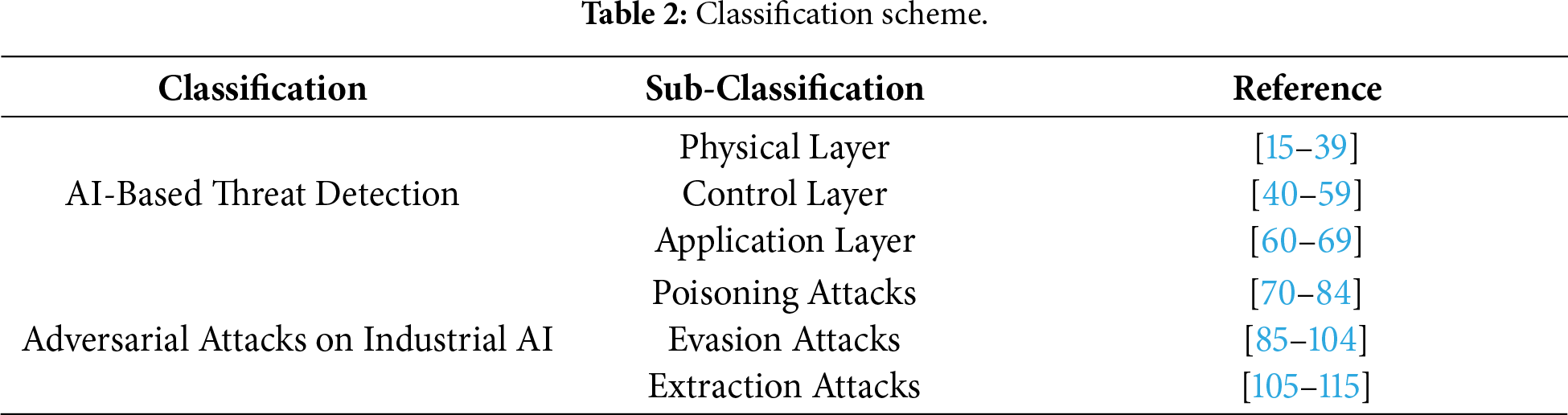

The final set of 101 selected studies was categorized as summarized in Table 2. This classification reflects the results obtained from the search strategy defined in this study, and both the categorization scheme and the number of retrieved studies may vary with changes in search conditions. The application of AI for attack detection was classified into the physical, control, and application layers. For each study, the corresponding layer was identified based on the target environments, datasets, and protocols described in the literature. Adversarial attacks on AI were classified into poisoning, evasion, and extraction attacks according to the injection perspective.

3.3.1 Overview of the Discussed Literature

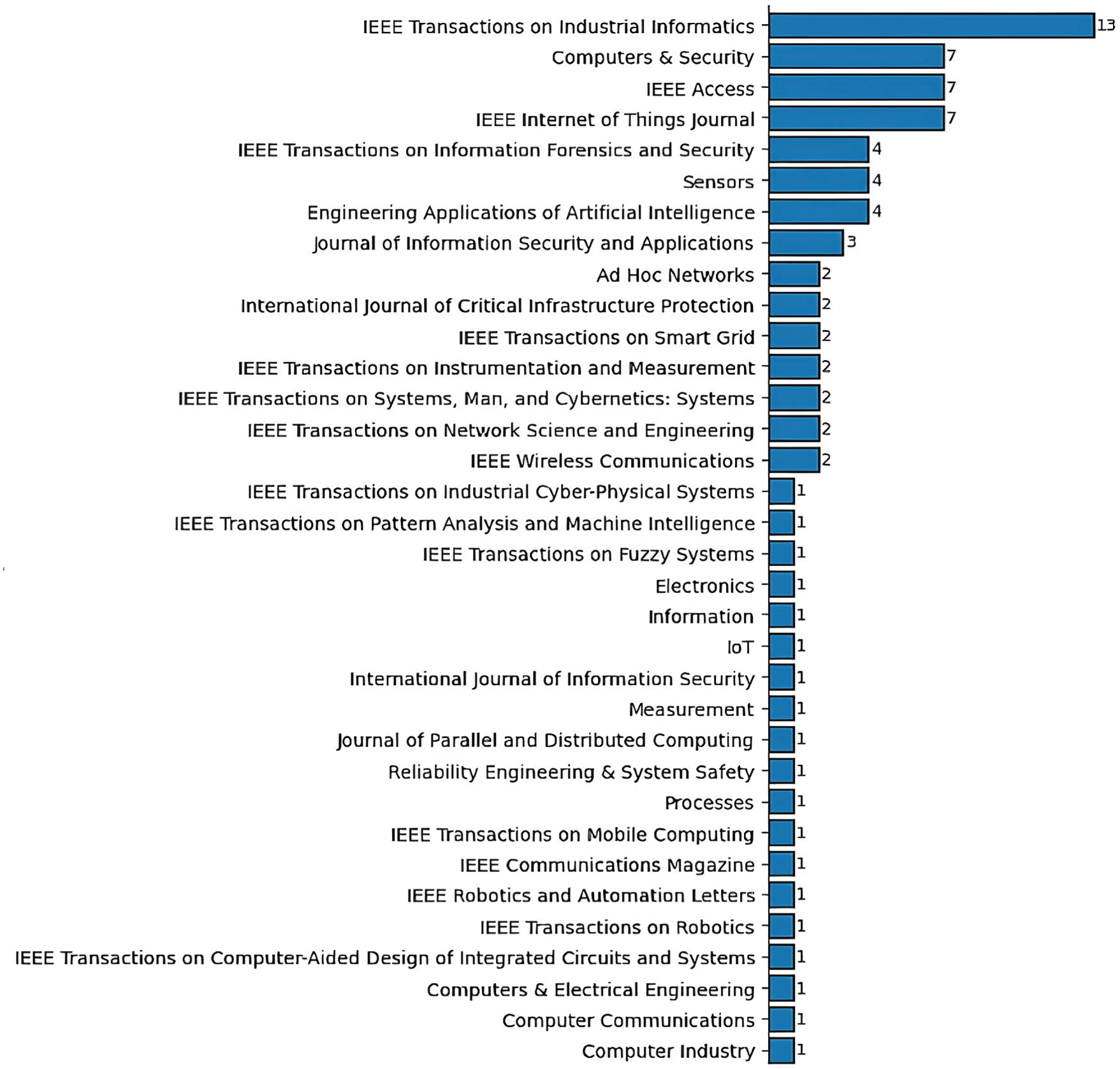

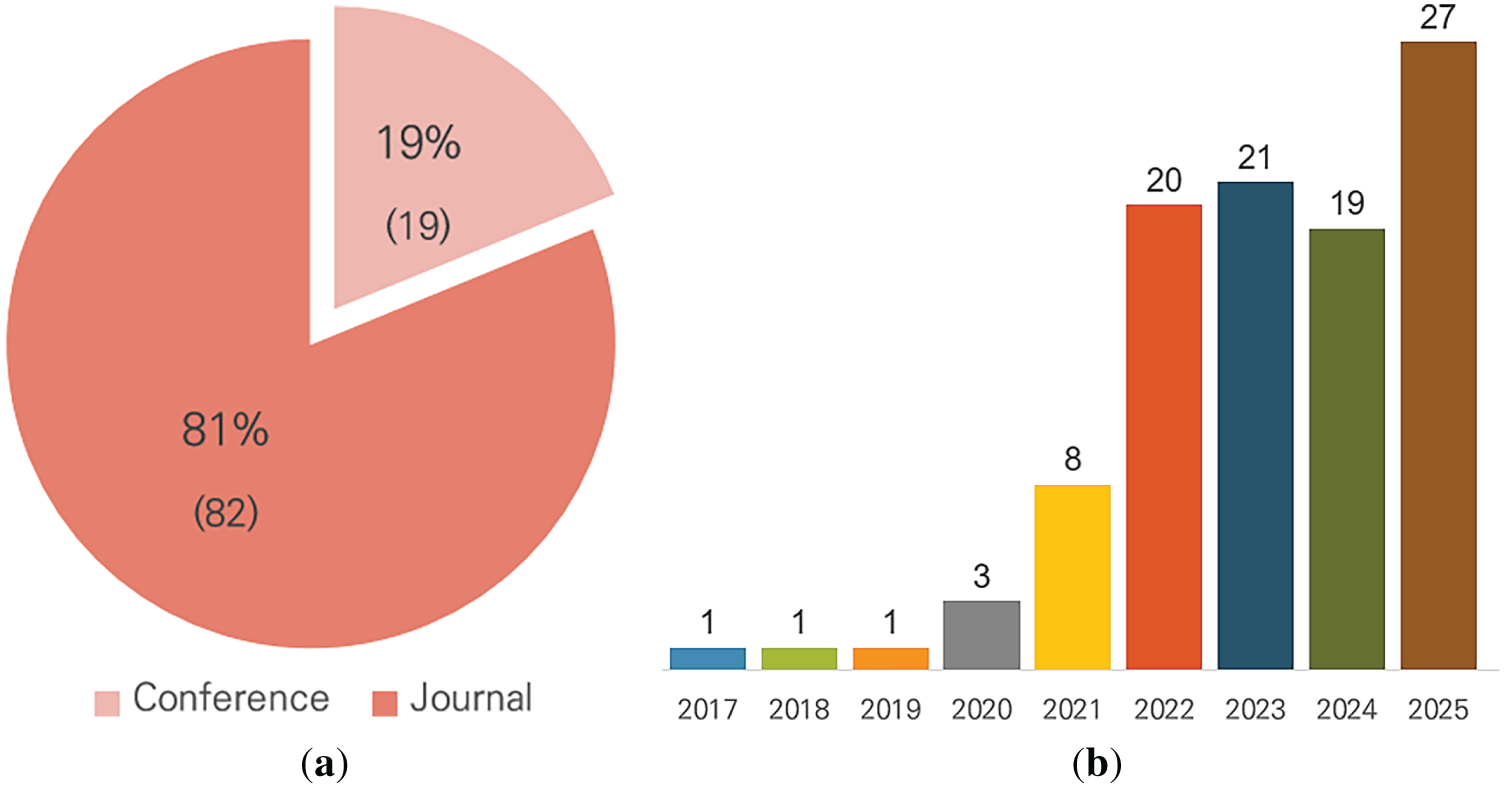

Fig. 7 aggregates the journals in which the analyzed papers were published. Fig. 8 presents the analysis of literature types and publication years based on the metadata of the 101 selected studies. Fig. 8a shows the classification of the analyzed papers into conference and journal publications, whereas Fig. 8b illustrates the publication trends, reflecting a growing research interest in machine learning and AI.

Figure 7: Journal aggregation of the selected literature.

Figure 8: (a) Literature types. (b) Publication year distribution of the selected papers.

3.3.2 Open-Datasets Discussed in the Literature

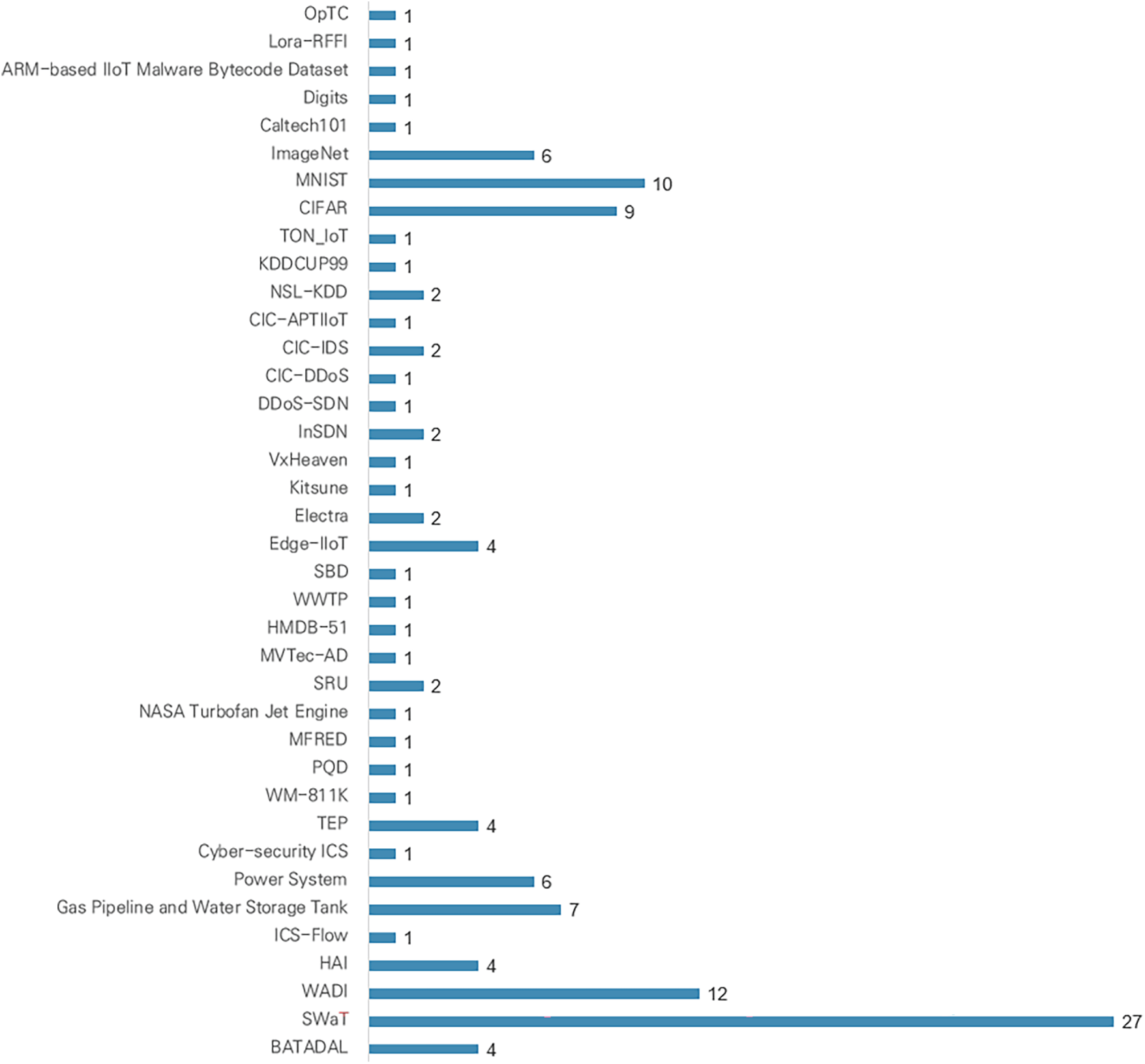

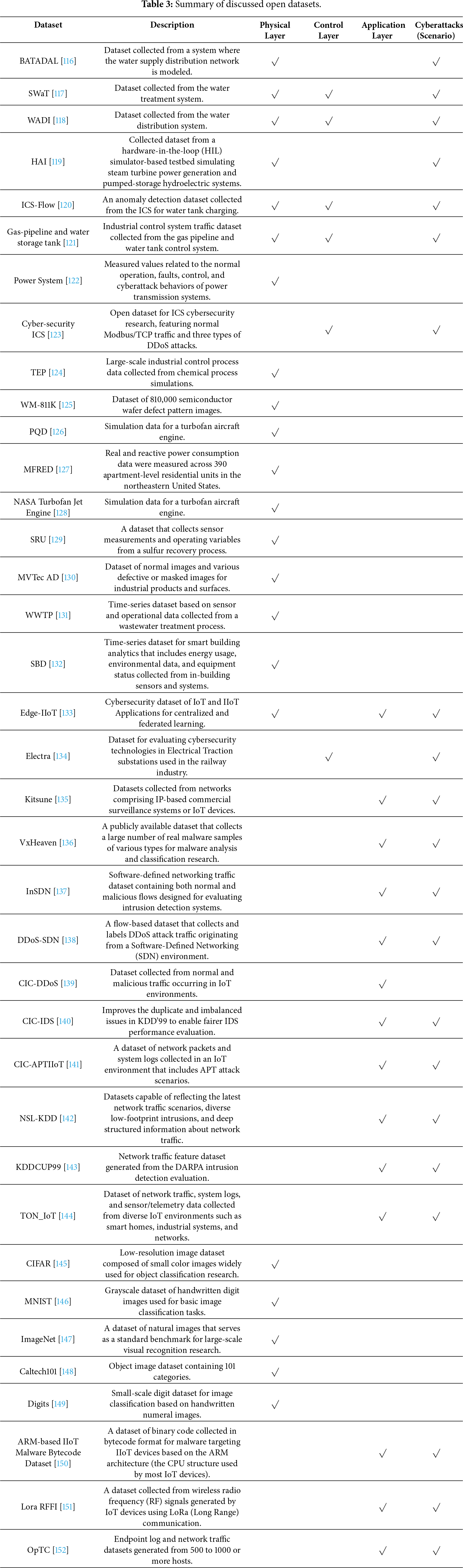

To identify publicly available datasets that can be accessed and utilized without specific access restrictions, the datasets discussed in the analyzed studies were reviewed. Consequently, 40 datasets were identified, including BATtle of attack detection algorithms (BATADAL), secure water treatment (SWaT), water distribution (WADI), HIL-based augmented ICS (HAI), ICS-flow, gas pipeline and water storage tank, power system, cyber-security ICS, Tennessee Eastman process (TEP), wafer map 811K (WM-811K), power quality disturbance (PQD), multifamily residential electricity dataset (MFRED), NASA turbofan jet engine, sulfur recovery unit (SRU), MVTec anomaly detection (MVTec AD), waste water treatment plants (WWTP), smart building dataset (SBD), Edge-IIoT, electrical traction (Electra), Kitsune, VxHeaven, InSDN, DDoS-SDN, Canadian Institute for Cybersecurity-distributed denial of service (CIC-DDoS), CIC-intrusion detection systems (CIC-IDS), CIC-advanced persistent threat IIoT (CIC-APTIIoT), network security laboratory-knowledge discovery and data mining (NSL-KDD), KDD Cup 1999 (KDD’99), telemetry, operating system, and network internet of things (TON_IoT), CIFAR, MNIST, ImageNet, Caltech101, Digits, ARM-based IIoT Malware Bytecode Dataset, Lora-RFFI, and Operationally Transparent Cyber (OpTC). A concise description of these datasets is provided in Table 3. Fig. 9 illustrates the aggregation results of publicly available datasets used in the selected literature.

Figure 9: Aggregation of publicly available datasets used in the selected literature.

4 AI-Based Threat Detection in ICS

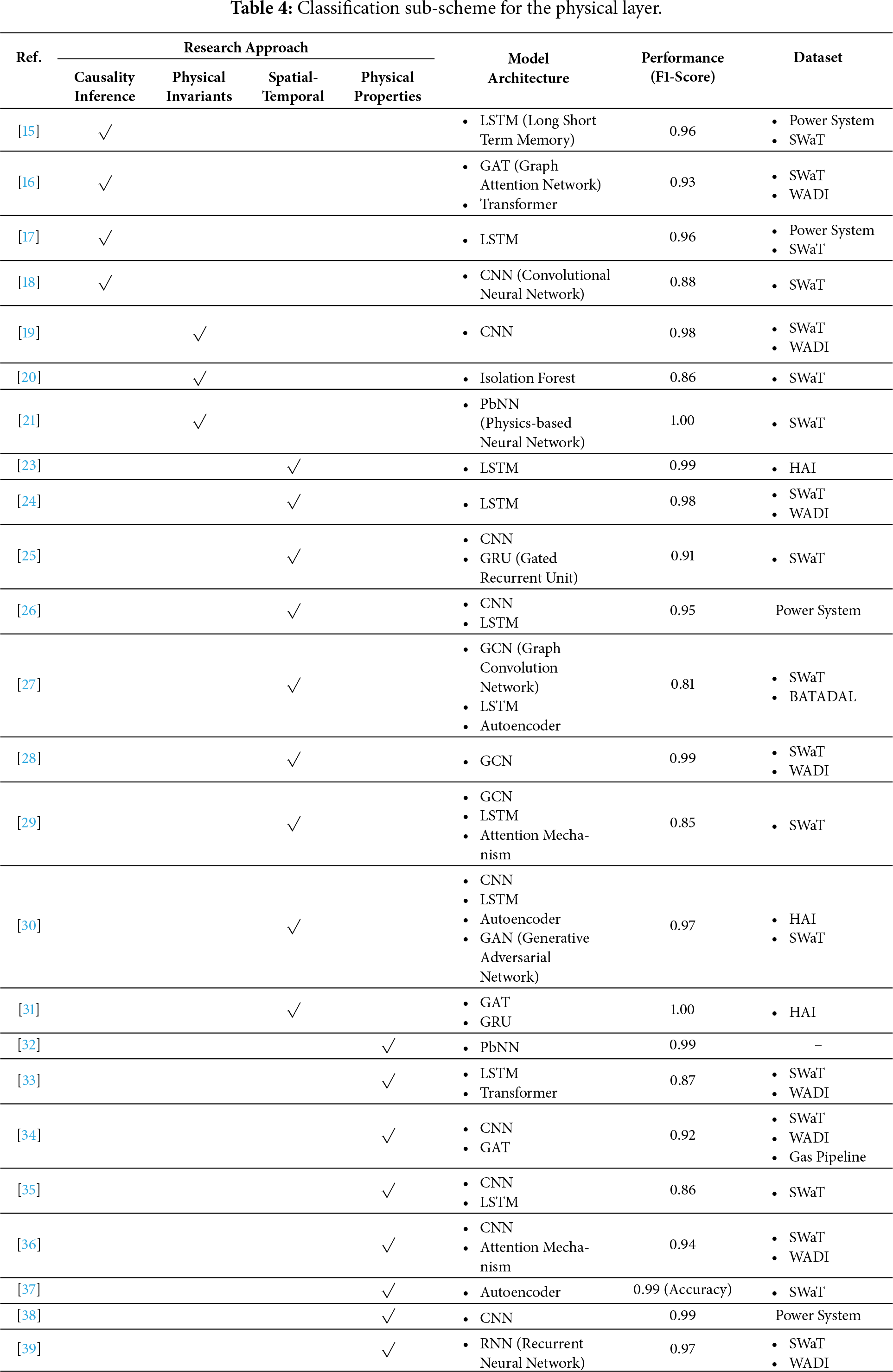

The physical layer focuses on studies utilizing operational data directly measured by on-site sensors. Research within this layer can be categorized into clusters based on various perspectives, including causality, physical invariants, spatiotemporal properties, and physical characteristics, all influenced by the control loop. The classification of the reviewed literature for this layer is summarized in Table 4. The column labeled ‘Research Approach’ specifies the methodological strategy adopted to address the problem, while ‘Model Architecture’ indicates the structural framework of the proposed model, where a dash (–) signifies the use of a probability-based or function-based model rather than a data-structure-dependent architecture. ‘Performance’ denotes the highest F1-Score reported in the experimental results, with a dash (–) representing cases where performance evaluation was not conducted. Finally, ‘Dataset’ identifies the data used for experiments or validation, with a dash (–) indicating that the data were independently collected or not publicly available.

In ICS, which exhibit the characteristics of cyber-physical systems (CPS), cyberattacks can directly lead to physical consequences. Acknowledging this, Jadidi et al. [15] proposed ICS-CAD, a framework that traces attack causes and effects by using deep neural network-based sequence classification to identify devices potentially impacted by attack propagation. Similarly, Cao et al. [16] introduced a graph attention network-based framework that exploits correlations among sensor readings to detect cyberattacks and localize their points of origin. Another study [17] trained a long short-term memory (LSTM) model to learn normal behavioral patterns across ICS components and subsequently analyzed the states of nodes associated with detected anomalies to infer potential propagation paths. Additionally, Koutroulis et al. [18] applied a one-dimensional convolutional neural network (1D-CNN) to perform causal inference for injected attacks, employing regression-based filtering to distinguish cyberattacks from physical noise within detected anomalies.

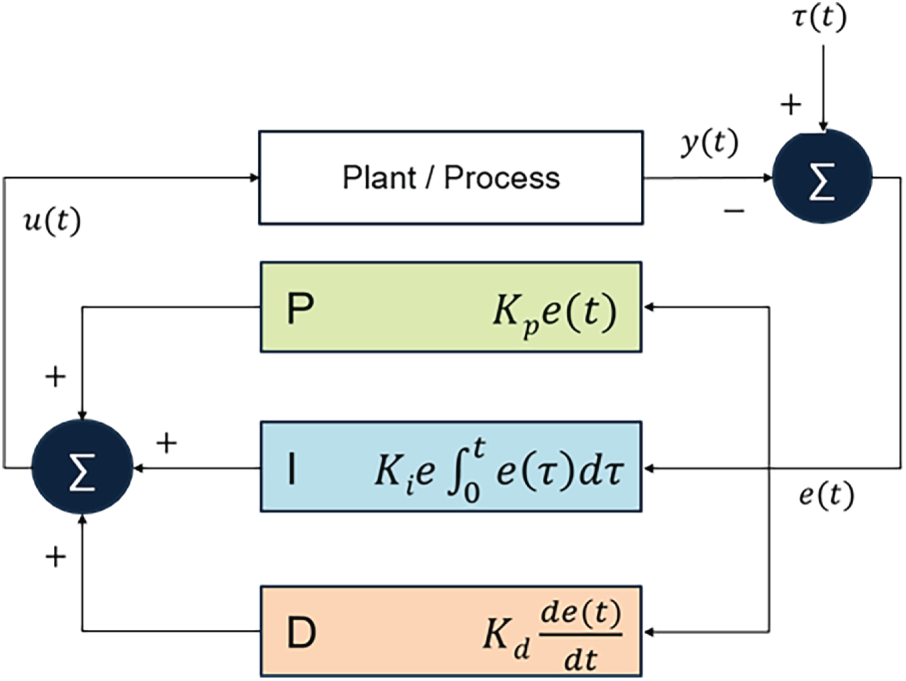

The fundamental principle enabling attack-path tracking and detection lies in the intrinsic characteristics of control system logic and operational loops. In a control loop, logical inconsistencies observed in the operational data from sensors and actuators may indicate either device malfunction or potential intrusion [19]. Fig. 10 depicts the general control structure of a proportional-integral-derivative (PID)-based closed-loop system.

Figure 10: Control loop structure.
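The idea that logical inconsistencies between actuator commands and sensor readings can reveal an intrusion may be illustrated with a small simulation. This is a sketch under stated assumptions: the first-order plant model, the PID gains, the time step, and the constant spoofing bias are all invented for the example and do not describe any system in the surveyed studies.

```python
def run_loop(setpoint=1.0, steps=100, dt=0.1, tau=1.0,
             kp=2.0, ki=1.0, kd=0.05, spoof_at=None, spoof_bias=0.0):
    """Simulate a PID loop on a first-order plant and return consistency residuals.

    Each residual is |reading - reading predicted from the previous reading and
    command|. A large residual means the sensor data contradicts the control logic.
    """
    y = 0.0            # true process output
    integral = 0.0
    prev_error = None
    expected = None    # model-based prediction of the next sensor reading
    residuals = []
    for k in range(steps):
        spoofed = spoof_at is not None and k >= spoof_at
        measured = y + (spoof_bias if spoofed else 0.0)
        if expected is not None:
            residuals.append(abs(measured - expected))
        # PID controller acting on the (possibly falsified) measurement.
        error = setpoint - measured
        integral += error * dt
        derivative = 0.0 if prev_error is None else (error - prev_error) / dt
        u = kp * error + ki * integral + kd * derivative
        prev_error = error
        # Assumed plant model dy/dt = (-y + u) / tau, integrated with Euler steps.
        expected = measured + dt * (-measured + u) / tau
        y = y + dt * (-y + u) / tau
    return residuals


clean = run_loop()
attacked = run_loop(spoof_at=50, spoof_bias=0.5)
```

In the clean run the residuals stay at zero because the readings follow the plant model exactly. In the attacked run the residual jumps to the bias value when the falsified reading first appears and remains non-zero afterwards. Real processes add noise and model mismatch, so a practical detector would need a tolerance rather than an exact comparison.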

Given the highly dynamic nature of ICS, capturing long-term temporal dependencies and inter-system correlations in time-series data, particularly in sensor measurements, is essential [23,24]. To address these challenges, studies such as [25,26] combined 1D-CNN and gated recurrent unit (GRU) architectures to model spatiotemporal relationships among system variables and detect attacks within dynamic multivariate time-series data. Similarly, [27,28] integrated LSTM and graph convolutional network (GCN) models to capture multi-scale spatiotemporal correlations and localize attack events by monitoring variations in graph node states. Lan and Yu [29] applied dynamic thresholding based on autoencoder reconstruction error for attack detection, supported by a hybrid architecture comprising an LSTM, an attention module, and a graph convolutional network. Han and Gim [30] employed generative adversarial networks (GANs) to address data scarcity and utilized a hybrid architecture combining CNN, LSTM, and autoencoder to extract spatiotemporal patterns along with local and long-term dependencies. Wang et al. [31] proposed a layered integration of graph attention networks, graph transformers, and bidirectional GRUs to effectively capture spatiotemporal characteristics within ICS environments.
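Dynamic thresholding on reconstruction error, mentioned above, can be illustrated independently of any particular model. The sketch below assumes that per-sample reconstruction errors are already available and flags a sample when its error exceeds the mean plus `k` standard deviations of a trailing window. The window length, the factor `k`, and the sample errors are assumptions, and this is a generic scheme rather than the specific procedure of the cited work.

```python
from statistics import mean, stdev


def dynamic_threshold_flags(errors, window=20, k=3.0):
    """Flag errors that exceed mean + k * stdev of the preceding window."""
    flags = []
    for i, error in enumerate(errors):
        history = errors[max(0, i - window):i]
        if len(history) < 2:
            # Not enough history to estimate a spread yet.
            flags.append(False)
            continue
        threshold = mean(history) + k * stdev(history)
        flags.append(error > threshold)
    return flags


# Illustrative reconstruction errors with one abrupt spike at index 10.
errors = [0.10, 0.12, 0.11, 0.09, 0.10, 0.11, 0.10, 0.12, 0.09, 0.11, 0.90, 0.10]
flags = dynamic_threshold_flags(errors)
```

A threshold that adapts to recent errors tolerates slow drift in normal operation, at the cost of being temporarily inflated after a large spike enters the window.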

Across the surveyed literature, a substantial portion of research at the physical layer addresses false data injection attacks, which critically threaten system reliability, operational continuity, and safety. Given the disruptive nature of these attacks, the development of robust protection mechanisms and security solutions is imperative. Most studies in this area employ machine learning models that integrate physical system characteristics to detect falsified data. For example, Ahmad et al. [32] utilized load frequency data in power systems, while [33–35] applied Fourier and wavelet transformations. Li et al. [36] employed entropy measures derived from the randomness of operational data. Additionally, several studies [37–39] proposed Kalman-filter-based neural architectures specifically designed for ICS environments.
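As an illustration of how an entropy measure can expose falsified data, the sketch below computes the Shannon entropy of a window of readings after uniform binning. The bin count, the value range, and the sample windows are assumptions, and the code is a generic entropy computation rather than the method of the cited study.

```python
import math
from collections import Counter


def shannon_entropy(values, bins=8, lo=0.0, hi=1.0):
    """Entropy in bits of a window of readings after uniform binning over [lo, hi]."""
    width = (hi - lo) / bins
    # Clamp indices so that out-of-range readings fall into the edge bins.
    indices = [min(bins - 1, max(0, int((v - lo) / width))) for v in values]
    counts = Counter(indices)
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


# A varied window spreads over all bins; a frozen (replayed) value occupies one bin.
varied = [0.05, 0.18, 0.30, 0.43, 0.55, 0.68, 0.80, 0.93]
frozen = [0.42] * 8
entropy_varied = shannon_entropy(varied)
entropy_frozen = shannon_entropy(frozen)
```

A sudden drop in entropy is consistent with injected constant or replayed values, while an unusual rise may indicate injected noise. Either deviation would have to be judged against the normal entropy profile of the specific process.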

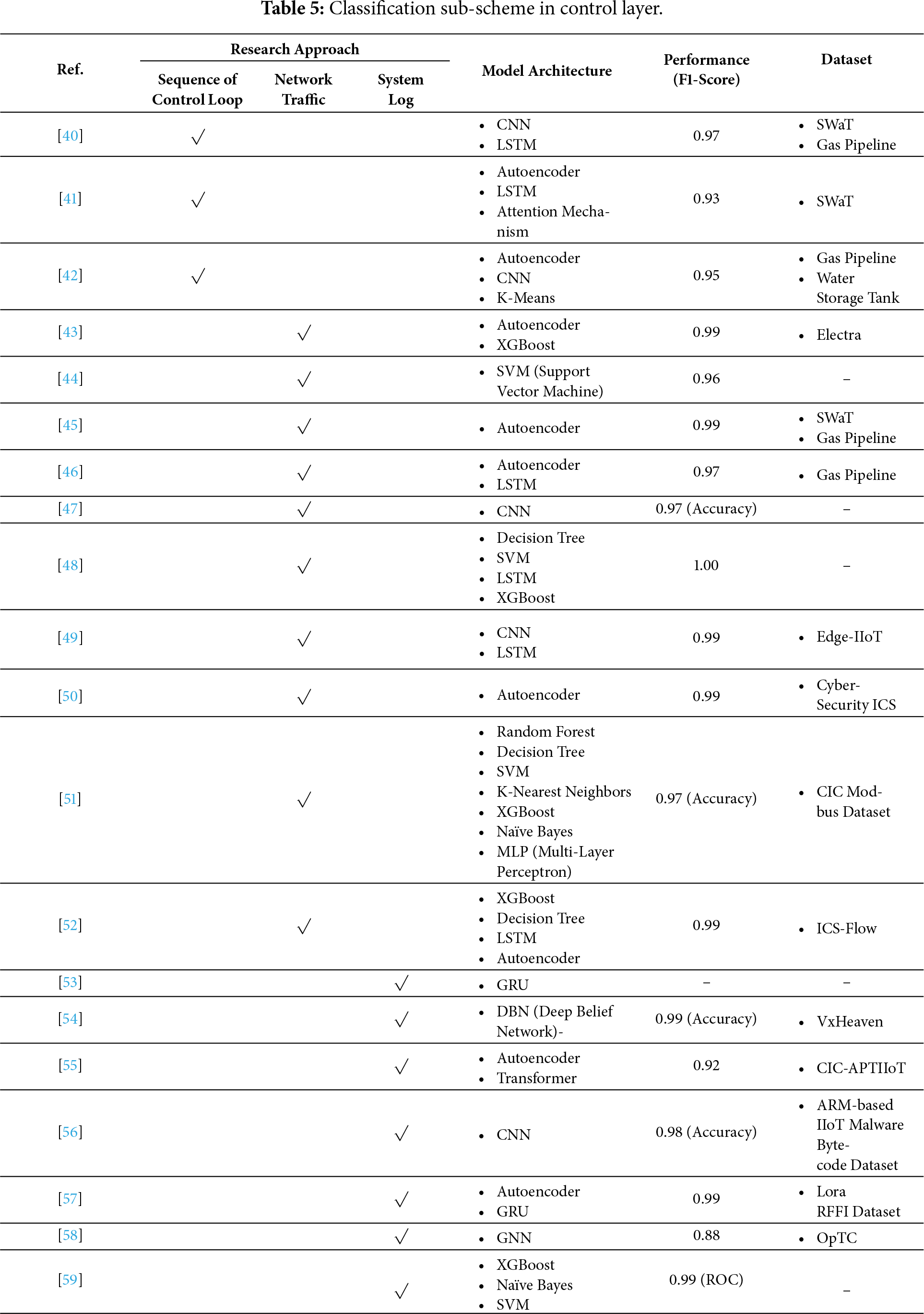

The control layer is responsible for generating and transmitting control commands based on sensor measurements, typically through industrial communication protocols such as Modbus, DNP3, and OPC. Unlike the physical layer, which focuses on process dynamics, the control layer reflects the logical and sequential characteristics of control operations. The selected literature is compared in Table 5, and the column definitions as well as the meaning of dashes are consistent with previous descriptions. Research within this layer has primarily concentrated on two areas: analyzing network traffic that reflects control-packet sequences and their statistical properties, and investigating attacks aimed at compromising system availability and detecting advanced persistent threats (APT) through log analysis.

Industrial communication protocols, such as Modbus, DNP3, OPC, and EtherNet/IP, prioritize real-time performance and offer limited encryption and authentication, which increases their vulnerability to adversarial compromise. These weaknesses expose the protocols to man-in-the-middle attacks, in which adversaries intercept or alter communications between endpoints, often causing undetected effects on physical processes. Industrial protocols also exhibit sequential characteristics due to continuous state transitions and tightly coupled control loops [40]. To address these characteristics, Zare et al. [41] proposed an attention-based sequence-to-sequence autoencoder, and Chang et al. [42] developed a convolutional autoencoder to detect anomalous network packets deviating from normal operational sequences.

Networks in ICS exhibit highly regular and predictable communication patterns. Because industrial processes operate through repetitive control loops that periodically collect sensor measurements and issue commands to actuators, the resulting traffic flows tend to be stable and deterministic [43]. A study focusing on packet inter-arrival times and connection durations experimentally demonstrated that attack packets in Modbus/TCP communications often display significantly shorter temporal intervals than normal packets [44]. Al-Abassi et al. [45] experimentally demonstrated that stable F1-scores can be achieved in highly imbalanced environments through representation learning and an ensemble framework, without explicitly modeling packet inter-arrival times or sequential dependencies. In [46], statistical features of network traffic were probabilistically transformed and subsequently learned using an LSTM-AE model to capture the characteristics of legitimate communication flows. Additionally, Mubarak et al. [47] employed Deep Packet Inspection (DPI) to extract flow-level data and represented payload content as statistical traffic patterns, which were used to train a randomized CNN model for attack detection.

ICS operate under strict temporal and resource constraints [48], and attacks that degrade or disrupt system availability can directly lead to physical damage and risks to human safety. As adversarial techniques become increasingly sophisticated and covert, availability-attack detection has progressively shifted from traditional signature-based approaches toward machine learning and deep learning methods. For example, an experimental comparison of several deep learning models with the rule-based intrusion detection system Snort demonstrated that deep learning approaches provide substantially superior detection performance [49]. Consistent with this perspective, Ortega-Fernandez et al. [50] employ a deep autoencoder trained solely on normal operational data to identify novel traffic patterns that may threaten availability. Varol and İskefiyeli [51] proposed a method that extracts eight key features of the Modbus industrial protocol using Wireshark to train a model. Pallakonda et al. [52] focus on temporal variations in ICS network traffic sequences rather than single-flow values, and propose a machine learning–based approach for detecting attacks in industrial control systems.
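The timing regularity discussed above can be illustrated with a small feature-extraction sketch. It computes packet inter-arrival times and marks packets that arrive much sooner than the typical polling gap. The `ratio` cutoff, the function names, and the timestamps are hypothetical, and the heuristic is far simpler than the learned detectors in the cited studies.

```python
from statistics import median

def inter_arrival_times(timestamps_ms):
    """Differences between consecutive packet timestamps (milliseconds)."""
    return [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]

def short_interval_indices(timestamps_ms, ratio=0.5):
    """Indices of packets arriving sooner than `ratio` times the median gap."""
    gaps = inter_arrival_times(timestamps_ms)
    baseline = median(gaps)
    # gaps[i] is the gap before packet i + 1, hence the index shift.
    return [i + 1 for i, g in enumerate(gaps) if g < ratio * baseline]

# Hypothetical 100 ms polling loop with two packets injected in a burst.
ts = [0, 100, 200, 300, 310, 320, 400, 500, 600]
suspects = short_interval_indices(ts)
```

Here the two burst packets (indices 4 and 5) fall below half the median gap, whereas the slightly early packet at 400 ms does not. In practice such features would feed a trained model rather than a fixed cutoff.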

Choi et al. [53] further systematize the relationship between attack techniques and log sources, identifying the data streams that must be prioritized for effective detection and thus informing data-centric detection design. Extending this line of inquiry, the unsupervised learning approach of Ref. [54] shows that deep belief networks (DBNs) can adapt to emerging attack patterns while maintaining low false-alarm rates, offering valuable insights for model maintenance and operation in dynamic industrial contexts. Finally, Yoon et al. [55] construct a causal graph by integrating system logs, network events, and provenance records, and combine this with sequence- and frame-level representations using a Transformer-based embedding model to perform anomaly analysis that accounts for both temporal and semantic context. Although this approach demonstrates strong performance under specific conditions, it is unlikely to serve as a universal solution across the industrial domain; nonetheless, it provides an important case study illustrating the potential of multi-log integration and causal representation for advanced threat detection. Esmaeili et al. [56] introduced an adversarial attack technique designed to evade malware classifiers for industrial IoT, where continuous queries are used and an attack is identified when the average distance of K-NN falls below a predefined threshold. Halder et al. [57] presented a context-aware anomaly detection approach targeting LoRa (Long-Range), an ultralow-power wireless communication technology capable of covering several kilometers in IIoT deployments. Zhang et al. [58] analyzed logs from IIoT devices using an ontology-enhanced, T5-based few-shot model and constructed Temporal Graph Networks (TGN) from the logs to enable unsupervised anomaly detection. Sun et al. [59] proposed a method for detecting malware in industrial IoT environments by modeling API call sequences as graphs and applying machine-learning classifiers.

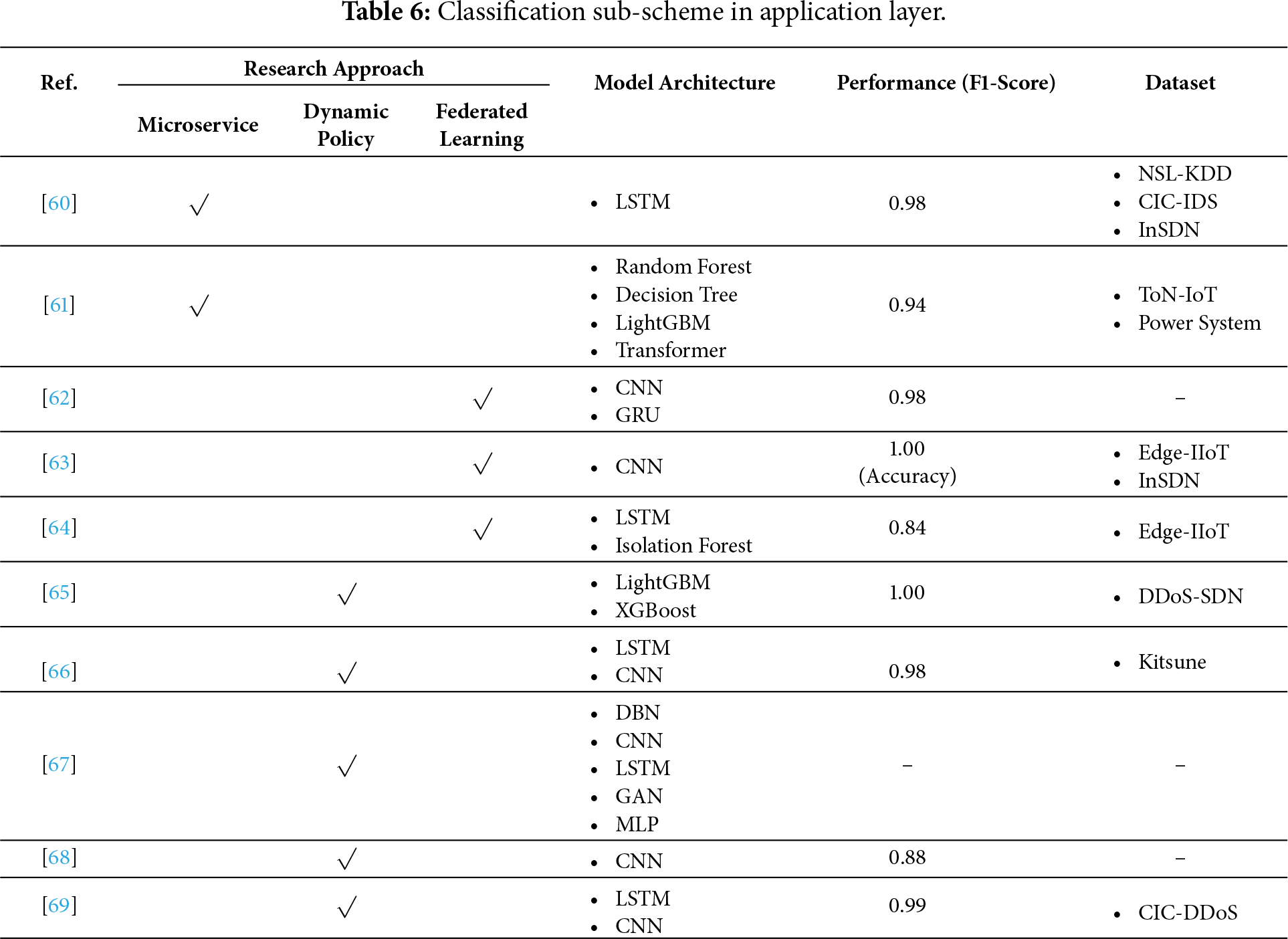

The application layer does not directly control physical industrial processes but consists of data-driven platforms that ensure operational efficiency and system scalability. Within this layer, extensive AI-based research has been conducted to detect cyber threats. Among these studies, emphasis is placed on those that move beyond basic model tuning by integrating architectural or hierarchical characteristics of the application layer. The selected works are summarized in Table 6, with the column descriptions and dash meanings consistent with previous explanations.

Microservices represent a cloud-native architecture that decomposes applications into small, loosely coupled, and independently deployable services. This approach was introduced to overcome the constraints of monolithic architectures, where all functionalities are deployed as a single process. Studies classified under the application layer often focus on implementing AI-driven functionalities as microservices. In IIoT environments, maintaining cybersecurity under resource constraints while enhancing system intelligence and operational efficiency is critical. To address these challenges, AI capabilities are increasingly being developed as distributed or independently deployable microservice architectures. Liang et al. [60] proposed a variant few-shot learning model to improve intrusion detection performance in distributed IIoT environments, while Bugshan et al. [61] implemented a cyber-threat detection framework based on stacked ensemble learning as a microservice in an edge-cloud environment.

As IIoT systems are closely integrated with critical infrastructure, the data collected within these environments typically carry a high level of confidentiality. Centralized learning approaches elevate the risk of exposing such sensitive data, and the heterogeneous nature of distributed devices leads to diverse data types. As a result, extensive research has been conducted to investigate the application of federated learning in IIoT environments, as illustrated in Fig. 10. Federated learning can also help mitigate challenges arising from the scarcity of attack data relative to the abundance of normal data. By sharing only model parameters derived from local training—rather than raw data—it preserves privacy while reducing unnecessary communication overhead [62]. For example, Zainudin et al. [63] treat SDN controllers as local clients in a federated learning framework and propose an architecture that integrates a Pearson-correlation–based feature selection technique with a lightweight CNN–MLP hybrid model to maximize system efficiency. Desai et al. [64] propose a method in which the central server does not directly average or sum client weights; instead, it removes outliers using an Isolation Forest before merging the remaining weights, thereby more effectively reflecting underlying data distribution characteristics.
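The idea of filtering client updates before aggregation can be sketched as follows. Note that this example deliberately substitutes a simple median-distance filter for the Isolation Forest used by Desai et al. [64], so it illustrates only the filter-then-average structure; the cutoff factor `k` and the client weight vectors are assumptions.

```python
import numpy as np

def filtered_average(client_weights, k=3.0):
    """Average client weight vectors after dropping distance outliers.

    Stand-in for the Isolation Forest step described in the text: a client is
    dropped when its distance to the coordinate-wise median exceeds k times
    the median of all such distances.
    """
    w = np.asarray(client_weights, dtype=float)
    center = np.median(w, axis=0)              # robust reference point
    dist = np.linalg.norm(w - center, axis=1)  # one distance per client
    keep = dist <= k * np.median(dist)
    return w[keep].mean(axis=0), keep

clients = [
    [1.0, 2.0],
    [1.1, 1.9],
    [0.9, 2.1],
    [1.0, 2.2],
    [9.0, -7.0],   # hypothetical anomalous update
]
global_w, kept = filtered_average(clients)
```

With these values the fifth client is excluded and the aggregate stays close to the four consistent updates. A tree-based detector such as Isolation Forest plays the same role but does not assume that benign updates cluster around a single center.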

The rapid expansion of network scale and the proliferation of heterogeneous devices have led to the emergence of numerous AI-driven frameworks designed to detect rapidly evolving threats and provide programmable control. Al-Zaidi and Al-Hubaishi [65] proposed a method for detecting malicious network traffic using LightGBM and XGBoost, classifying attack types, and applying real-time response policies through an SDN controller. Bibi et al. [66] noted that the heterogeneity and resource constraints of IIoT environments hinder the adoption of standard network security solutions, and introduced a hybrid threat-detection model combining LSTM and CNN to identify dynamic and sophisticated attacks. Rahman and Hossain [67] presented a software-defined security architecture that leverages deep learning–based security function virtualization to enable dynamic defense mechanisms against adversaries. Kotsiopoulos et al. [68] proposed a threat-detection approach integrating Thompson-sampling reinforcement learning with SDN to support adaptive and cost-efficient responses. Finally, Zainudin et al. [69] highlighted the attractiveness of SDN-based centralized controllers as targets for attackers and proposed a lightweight, AI-enhanced DDoS detection method tailored for resource-constrained IIoT environments.

This section reviewed AI-based cyber threat detection approaches in ICS from a layered perspective, focusing on the physical layer, control layer, and application layer. By systematically organizing the existing literature according to their functional roles within the ICS architecture, this analysis clarifies how detection objectives, data characteristics, and modeling strategies differ across layers, while also revealing common trends and limitations shared among them.

At the physical layer, research primarily targets sensor and actuator data that directly reflect process dynamics and CPS behavior. The reviewed studies show that effective detection at this layer relies heavily on modeling temporal dependencies, inter-variable correlations, and physically meaningful constraints derived from control loops and plant dynamics. Deep learning models such as LSTM, CNN, and GNN are widely adopted to capture spatiotemporal patterns in multivariate time-series data. In addition, physics-aware approaches, including PbNN and Kalman-filter-based neural architectures, incorporate physical invariants to improve robustness against FDI (False Data Injection) attacks and reduce false alarms. These characteristics make physical-layer detection particularly important for maintaining operational safety and preventing direct physical damage.

At the control layer, the focus shifts from physical process behavior to the logical and sequential properties of control operations and industrial communication. Studies in this layer mainly analyze control-command sequences, industrial protocol traffic, and system logs to detect attacks that compromise availability, integrity, and long-term persistence. Due to the deterministic and periodic nature of control traffic, many approaches exploit temporal regularity, packet timing, and protocol semantics using ML and DL models such as autoencoders, CNNs, LSTMs, and ensemble classifiers. The reviewed literature consistently indicates that AI-based methods outperform traditional rule-based and signature-based techniques in detecting stealthy and evolving threats, including DoS attacks and APTs. However, detection performance is often sensitive to protocol diversity and domain-specific operational characteristics.

At the application layer, AI-based threat detection is implemented within data-driven platforms that support monitoring, orchestration, and security management without directly participating in real-time control. Research in this layer emphasizes scalability, distribution, and data confidentiality, reflecting the growing adoption of cloud computing, SDN, microservice architectures, and IIoT environments. Federated learning and lightweight DL models are frequently employed to address privacy concerns and resource constraints while enabling collaborative detection across distributed systems. While these approaches enhance flexibility and system-wide visibility, they also increase architectural complexity and expand the overall attack surface.

Overall, the findings in Section 4 demonstrate that AI-based threat detection in ICS is inherently layer-dependent. Physical-layer approaches prioritize safety and process integrity, control-layer approaches focus on protocol behavior and availability, and application-layer approaches emphasize scalability, adaptability, and privacy preservation. At the same time, the boundaries between layers are not strictly isolated in practice, as anomalies and decisions at one layer may propagate to others through tightly coupled cyber-physical interactions.

This layered analysis highlights both the effectiveness of AI in improving cyber threat detection in ICS and the unresolved challenges related to data imbalance, domain scalability, computational constraints, and explainability. These observations provide essential context for Section 5, which examines how AI components deployed across the physical, control, and application layers can themselves become targets of adversarial attacks, introducing new security risks throughout the ICS lifecycle.

5 Adversarial Attacks on Industrial AI

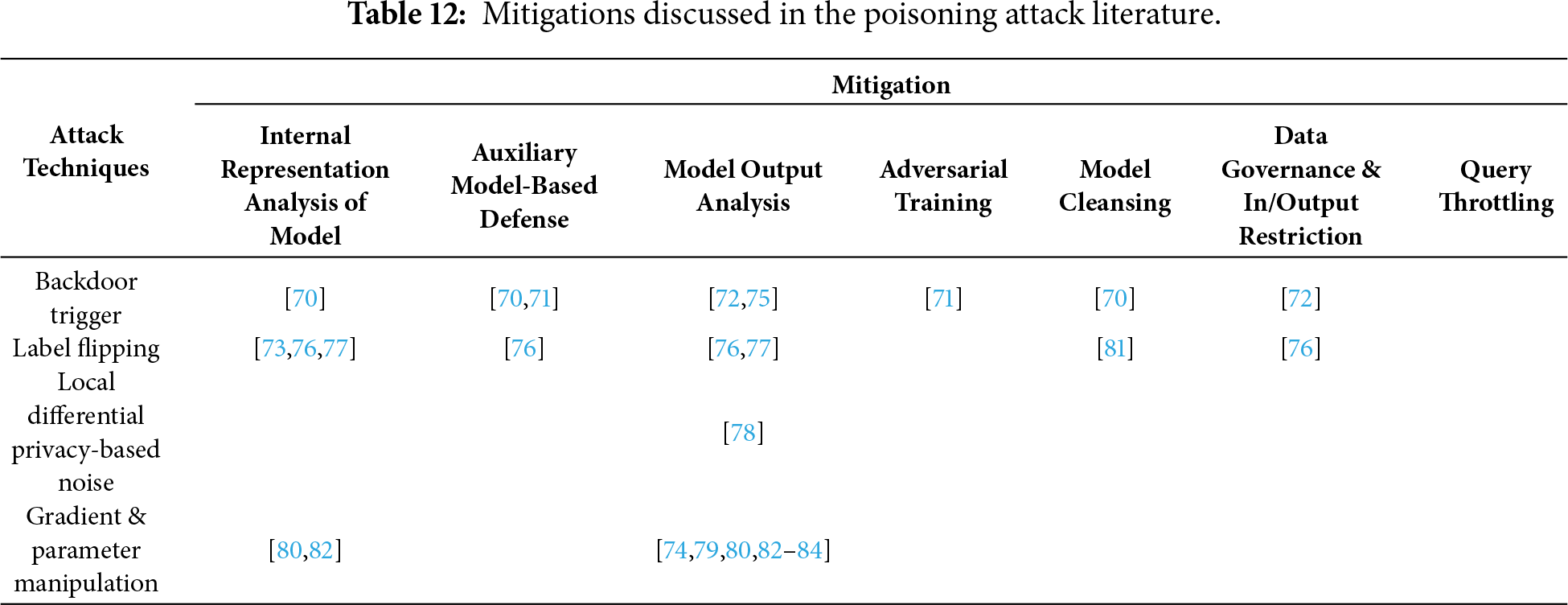

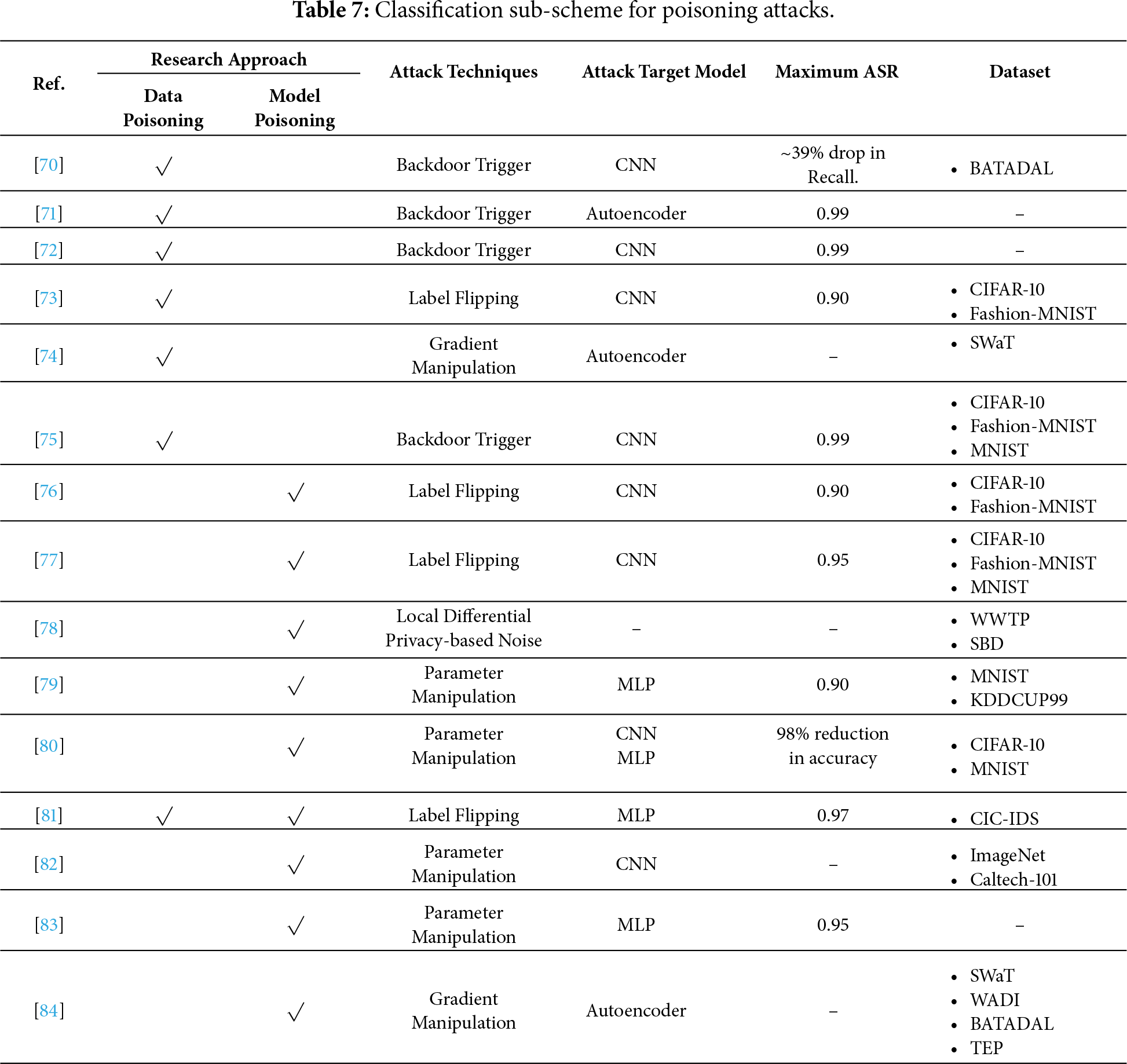

Poisoning attacks occur when an adversary manipulates the data used to train a machine learning model or compromises the model during retraining or structural updates, steering its behavior toward malicious objectives or degrading its performance. While model drift is traditionally considered a benign phenomenon, in lifelong learning–enabled ICS, adversarial manipulation of input data can intentionally exploit drift mechanisms, resulting in a form of long-term poisoning attack. Attacks that cause the model to produce specific outputs in response to a backdoor trigger pattern are also classified under this category. Table 7 summarizes the literature related to this field, with column descriptions consistent with previous tables. However, in this section, Performance refers to the attack success rate (ASR), expressed as shown below:

where

The detailed poisoning attack techniques identified in the selected literature include Backdoor Trigger, Label-Flipping, Local Differential Privacy-based Noise, and Gradient & Parameter Manipulation. A Backdoor Trigger attack refers to a poisoning attack in which the model behaves normally under standard conditions but produces attacker-intended malicious behavior only when a specific trigger embedded by the attacker is present in the input. A Label-Flipping attack refers to an attack in which the semantic features of the input data are preserved while the labels are manipulated, or the labels are deliberately modified to be clearly inconsistent with the semantic meaning of the input data. A Local Differential Privacy-based Noise attack refers to an attack in environments where differential privacy is applied, in which the adversary degrades model performance by injecting excessive noise under the guise of privacy protection. A Gradient & Parameter Manipulation attack refers to an attack in which the attacker directly manipulates local training results (i.e., gradients or model parameters) in order to distort the global model updates toward an attacker-desired direction.
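Two of the techniques defined above, label flipping and backdoor triggers, can be illustrated at the data level with a short sketch. The helper names, the flipping schedule, and the trigger encoding are hypothetical. The `attack_success_rate` function assumes one common convention (the fraction of attacked inputs assigned to the attacker's target class) and is not necessarily the exact formula used in this survey.

```python
def flip_labels(labels, source, target, every=2):
    """Relabel every `every`-th sample of class `source` as `target`."""
    flipped, seen = [], 0
    for y in labels:
        if y == source:
            seen += 1
            flipped.append(target if seen % every == 0 else y)
        else:
            flipped.append(y)
    return flipped

def add_trigger(sample, trigger):
    """Overwrite selected feature positions with fixed trigger values."""
    patched = list(sample)
    for index, value in trigger.items():
        patched[index] = value
    return patched

def attack_success_rate(predictions, target):
    """Assumed convention: share of attacked inputs classified as `target`."""
    return sum(p == target for p in predictions) / len(predictions)

labels = [1, 0, 1, 1, 0, 1]
poisoned_labels = flip_labels(labels, source=1, target=0)
triggered = add_trigger([0.2, 0.7, 0.1], {2: 1.0})   # feature 2 carries the trigger
asr = attack_success_rate([0, 0, 1, 0], target=0)    # hypothetical model outputs
```

In this toy case half of the class-1 samples are relabeled, and three of the four triggered inputs reach the target class, giving an ASR of 0.75 under the assumed convention.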

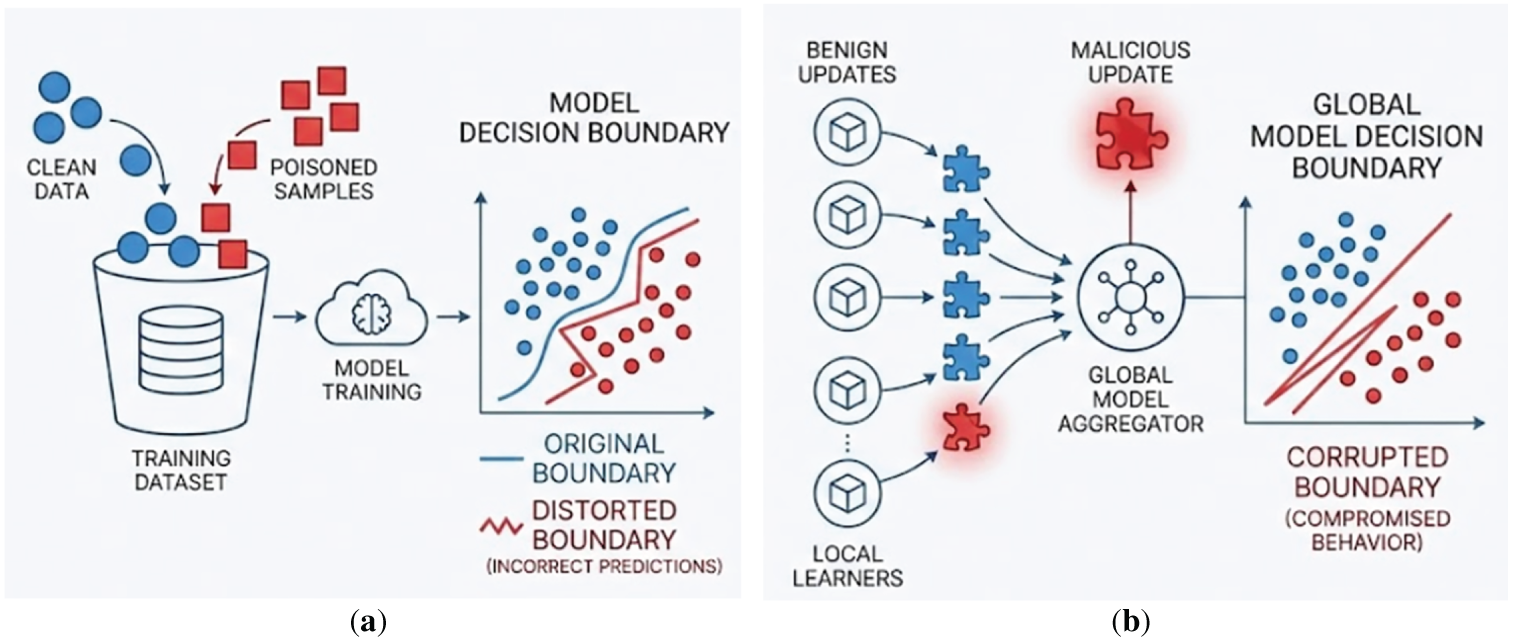

In addition, poisoning attacks can be broadly categorized into data poisoning and model poisoning, depending on the stage at which the adversary interferes with the learning process. Fig. 11 illustrates a conceptual comparison between these two attack perspectives. Data poisoning attacks typically occur in centralized learning settings, where the adversary injects maliciously crafted samples into the training dataset. As shown on the left side of Fig. 11a, the presence of poisoned data causes the model to learn a distorted decision boundary during training, which in turn leads to incorrect predictions even for benign inputs.

Figure 11: (a) Data poisoning attack. (b) Model poisoning attack.

In contrast, model poisoning attacks are more prevalent in distributed learning paradigms such as federated learning or reinforcement learning. In this case, the attacker directly manipulates local model updates, including gradients or parameters, before they are aggregated into the global model. As depicted on the right side of Fig. 11b, malicious updates can compromise the global aggregation process, resulting in a corrupted global decision boundary and attacker-controlled model behavior. Unlike data poisoning, model poisoning does not necessarily require access to the raw training data, making it more difficult to detect in collaborative learning environments. Although data poisoning and model poisoning differ in their attack vectors and execution stages, both aim to degrade model integrity by intentionally altering the learned decision boundary.
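The effect of a manipulated local update on plain federated averaging can be shown with a small numerical sketch. It assumes an unweighted average over four clients and an attacker who scales its update by the number of clients, a simplification of the gradient- and parameter-manipulation attacks in the cited works; all vectors and function names are hypothetical.

```python
import numpy as np

def fed_avg(global_w, updates):
    """Plain federated averaging: add the mean client update to the global model."""
    return global_w + np.mean(updates, axis=0)

def scaled_malicious_update(target_w, global_w, n_clients):
    """Update scaled by n_clients so it dominates the average when honest updates are small."""
    return n_clients * (target_w - global_w)

global_w = np.array([0.0, 0.0])
honest = [np.array([0.1, 0.1]) for _ in range(3)]
attacker = scaled_malicious_update(np.array([-1.0, 2.0]), global_w, n_clients=4)

clean = fed_avg(global_w, honest + [np.array([0.1, 0.1])])   # four honest clients
poisoned = fed_avg(global_w, honest + [attacker])            # one client replaced by the attacker
```

A single scaled update moves the aggregate from roughly (0.1, 0.1) to roughly (-0.93, 2.08), close to the attacker's target of (-1, 2), without the attacker touching any other client's data.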

Walita et al. [70] introduced a backdoor attack on autoencoder-based anomaly detection models in ICS, embedding triggers within training data through heterogeneous combinations of actuator states to evade detection. Zhang et al. [71] observed that poisoning attacks utilizing backpropagation-gradient optimization and interpolation can arise during retraining procedures intended to mitigate model drift in operational ICS. Tang and Xiao [72] investigated data poisoning–based backdoor attacks on a CNN-based AlexNet employed for power system transient stability assessment. Through experimental evaluation, they showed that the compromised model preserves normal classification performance while exhibiting targeted misclassification when the backdoor trigger is activated. They also discussed that such misclassification in real unstable scenarios may prevent timely generator tripping, thereby posing serious risks of system instability and blackouts. Li et al. [73] partially demonstrated through experiments that poisoning attacks remain feasible even in federated learning environments where data distributions differ across clients, based on label flipping and gradient scaling. Kravchik et al. [74] investigate a poisoning attack technique in which gradually manipulated sensor time series are used to retrain models for anomaly detection in ICSs, and they experimentally demonstrate that such poisoning attacks can be successfully carried out.

Federated learning, initially developed to enhance privacy, has gained adoption in the energy sector and other industrial domains due to its decentralized structure, where models are trained locally without sharing raw data, and only model weights are centrally aggregated. However, federated learning remains susceptible to corruption of the central server and the global model by malicious or compromised clients [75]. Xiao et al. [76,77] proposed a poisoning attack technique in an IIoT-based federated learning architecture, in which compromised malicious clients generate multiple Sybil nodes to artificially increase their probability of being selected during the aggregation process. Shuai et al. [78] and Ma et al. [79] observed that local differential privacy, while designed to improve privacy in federated learning, can hinder the detection of poisoning attacks. Tan et al. [80] propose a nontargeted model poisoning attack in a decentralized federated learning environment without a central server, where participants exchange model parameters directly with each other. In their approach, malicious models are carefully crafted to satisfy distance constraints with respect to neighboring models, making them difficult to detect. Furthermore, multiple malicious participants collude to jointly disrupt the learning process, ultimately degrading both model convergence and accuracy. Yang et al. [81] assume some edge servers as malicious clients in a federated learning–based IoT intrusion detection system, conduct poisoning attacks using label-flipped data, and then analyze the effectiveness of the attack and the proposed defense mechanism by examining changes in the loss and accuracy of both local and global models.

Zhang et al. [82] proposed a model poisoning attack that can selectively manipulate the outputs of explainable artificial intelligence (XAI) in neural networks deployed on IoT devices without access to training data. By distorting the explanation results while keeping the model’s predictions unchanged, the attack achieves a high level of stealth. Jiang et al. [83] investigated safe reinforcement learning in which task objectives and safety constraints are specified using Signal Temporal Logic, and the degree of satisfaction of these specifications is transformed into robustness values that serve as the reward function. They pointed out that this learning paradigm is vulnerable to training-time backdoor attacks, and experimentally demonstrated that even a limited amount of training data poisoning can lead to policies that behave normally under typical conditions but violate safety constraints when specific triggers are encountered. Kravchik et al. [84] targeted online-retrained autoencoder-based ICS anomaly detectors and proposed two poisoning techniques: a back-gradient–based method that optimizes poisoning samples to minimize reconstruction error, and an interpolation-based method that gradually shifts from normal data toward attack data.

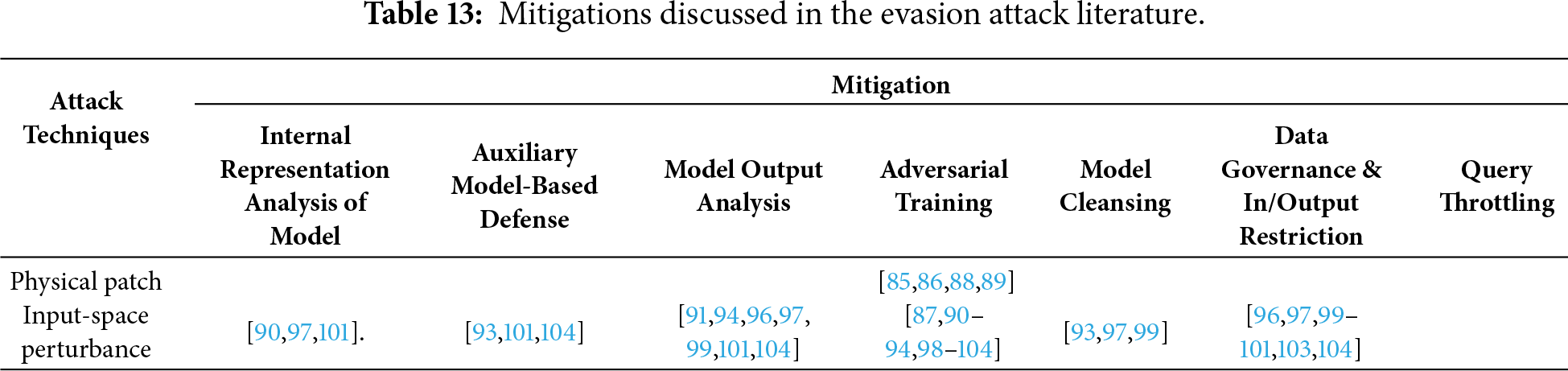

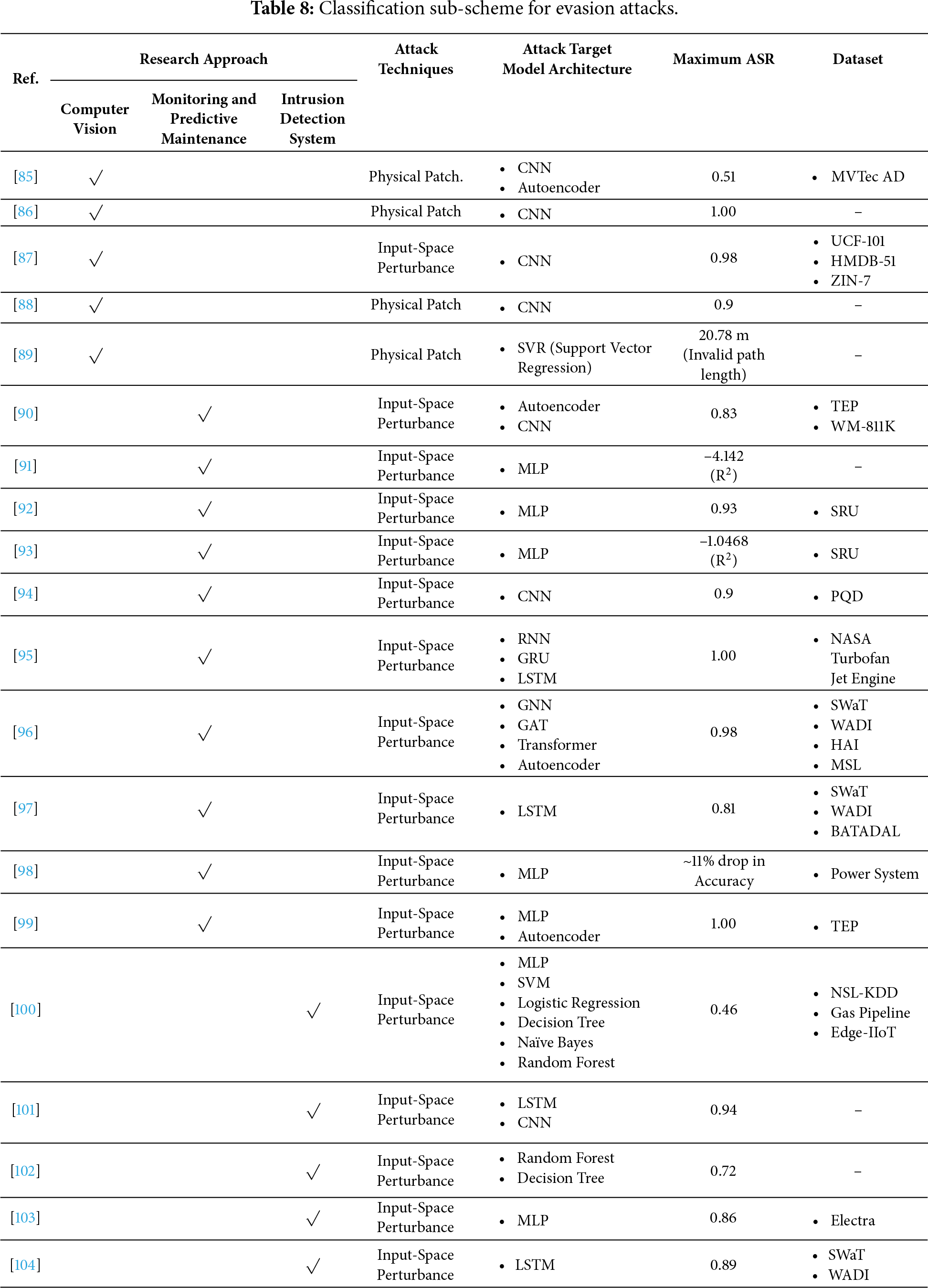

This type of attack introduces noise or perturbations into the input data used for model inference, allowing an adversary to evade the model’s decision boundaries. Commonly referred to as an evasion attack, it can involve physical patch manipulation or data alteration within the computing infrastructure. In CPSs, once a model used for physical control is deployed in real environments, detecting and mitigating such attacks becomes difficult, even when the model’s performance is compromised. Table 8 summarizes the literature classified under this category, with column descriptions consistent with previous sections. The ASR in evasion attacks is evaluated using Eq. (2).

The detailed evasion attack techniques identified in the selected literature include Physical Patch-based Attacks and Input-space Perturbation-based Attacks. A Physical Patch-based Attack refers to an evasion attack in which an adversary introduces carefully crafted physical artifacts, such as stickers or printed patterns, into the real-world environment so that the model behaves normally under benign conditions but produces incorrect predictions when the patched object is observed. An Input-space Perturbation-based Attack refers to an evasion attack in which the attacker adds small, often imperceptible perturbations directly to digital input data, thereby preserving the semantic meaning for humans while intentionally causing the model to generate erroneous outputs.
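An input-space perturbation can be sketched with a single fast gradient sign method (FGSM) step against a logistic-regression detector. The model weights, the input, and the budget `eps` are hypothetical, and the linear model stands in for the deep models studied in the literature. For binary cross-entropy, the gradient of the loss with respect to the input is (σ(w·x + b) − y)·w.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fgsm(x, y, w, b, eps):
    """One FGSM step: move each feature by eps in the direction that increases the loss."""
    grad_x = (sigmoid(w @ x + b) - y) * w   # d(binary cross-entropy)/dx
    return x + eps * np.sign(grad_x)

# Hypothetical detector; label 1 means "attack".
w = np.array([2.0, -1.0, 0.5])
b = -0.5
x = np.array([0.6, 0.2, 0.4])               # scored as attack before perturbation

x_adv = fgsm(x, y=1.0, w=w, b=b, eps=0.3)
score_before = sigmoid(w @ x + b)
score_after = sigmoid(w @ x_adv + b)
```

With these assumed values the detector score drops from above 0.5 to below 0.5 while no feature moves by more than `eps`. In ICS settings, such perturbations would additionally need to respect protocol validity and physical invariants, as several of the surveyed attacks emphasize.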

Okada and Mitsunaga [85] proposed a physical adversarial-patch attack that used transparent stickers on image-recognition systems for industrial automation. In a follow-up, Okada et al. [86] developed a patch-attachment method adapted to fixed camera angles. Deng et al. [87] decomposed adversarial perturbations into noise and size components and showed that targeting critical regions could disable systems with minimal manipulation. Jia et al. [88] experimentally evaluated physical adversarial patches on collaborative industrial robots, demonstrating direct safety risks. Li et al. [89] devised an interaction-based physical attack that exploited a robot’s obstacle-avoidance to lure it to a specified location.

Numerous studies have examined evasion attacks targeting deep-learning models used for monitoring, soft sensing, and predictive maintenance in ICS. Yin et al. [90] demonstrated the transferability of adversarial examples across tasks, such as device fault detection, classification, and soft sensing, and proposed a spectrum-normalized gradient-descent evasion attack that enhanced ASRs. Kong and Ge [91] argued that conventional evasion methods, such as the fast gradient sign method (FGSM) and the basic iterative method, are unsuitable for regression tasks typical of industrial soft sensors and introduced an attack approach that maximizes prediction outputs instead of altering the loss function. An evasion strategy based on diffusion models was proposed by Jiang et al. [92], injecting perturbations across multiple physical variables used by soft-sensor models. Curve-based flip and translation attacks were introduced by Chen et al. [93], deceiving both soft sensors and human operators and inducing physical process damage. A targeted adversarial attack that augments inputs to deep-learning power-quality monitors with a single noise vector was presented by Khan et al. [94]. Maillard et al. [95] proposed a white-box method to evade predictive-maintenance models while minimizing perturbation magnitude and the number of manipulated variables. A graph neural network-based evasion attack was introduced by Gunawardena et al. [96], capable of deceiving anomaly-detection models while preserving inter-sensor dependency constraints. Liu et al. [97] presented experiments in which FGSM-based adversarial samples were generated and injected into an LSTM encoder-decoder model commonly used for anomaly detection in ICS. Figueroa et al. [98] applied FGSM, DeepFool, and JSMA to machine-learning models in ICS and experimentally demonstrated their vulnerability. Zhuo et al. [99] observed that crafting universal adversarial samples for industrial fault-detection systems is more difficult than for image data and proposed an attack that manipulates reconstruction error in opposite directions depending on sample type.

Various evasion techniques have been examined for AI-based intrusion-detection models in ICS. Zhang et al. [100] generalized instance-specific noise used in FGSM, projected gradient descent, and basic iterative method attacks into universal perturbations and demonstrated adversarial attacks injected into control-protocol traffic, IIoT packet data, and TCP/IP streams. Shen et al. [101] generated adversarial samples for malicious domain-generation families using geometric vector representations. Anthi et al. [102] created JSMA-based adversarial samples and applied them to random forest and J48 classifiers to evaluate the vulnerability of machine-learning-based intrusion detection systems. Perales Gómez et al. [103] proposed an evasion method that restricts gradient-based perturbations to modifiable features, allowing adversarial samples to bypass intrusion detection systems while remaining valid and interpretable by ICS devices. Jia et al. [104] introduced a stealthy adversarial sample generation approach that perturbs both sensor and actuator values using gradient-based methods while employing a rule checker to preserve physical invariants.

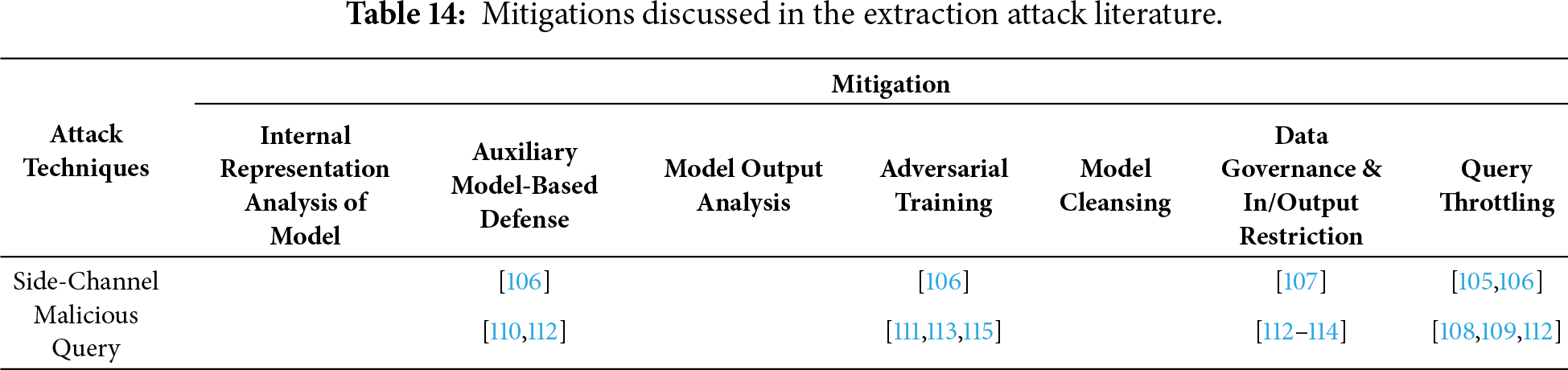

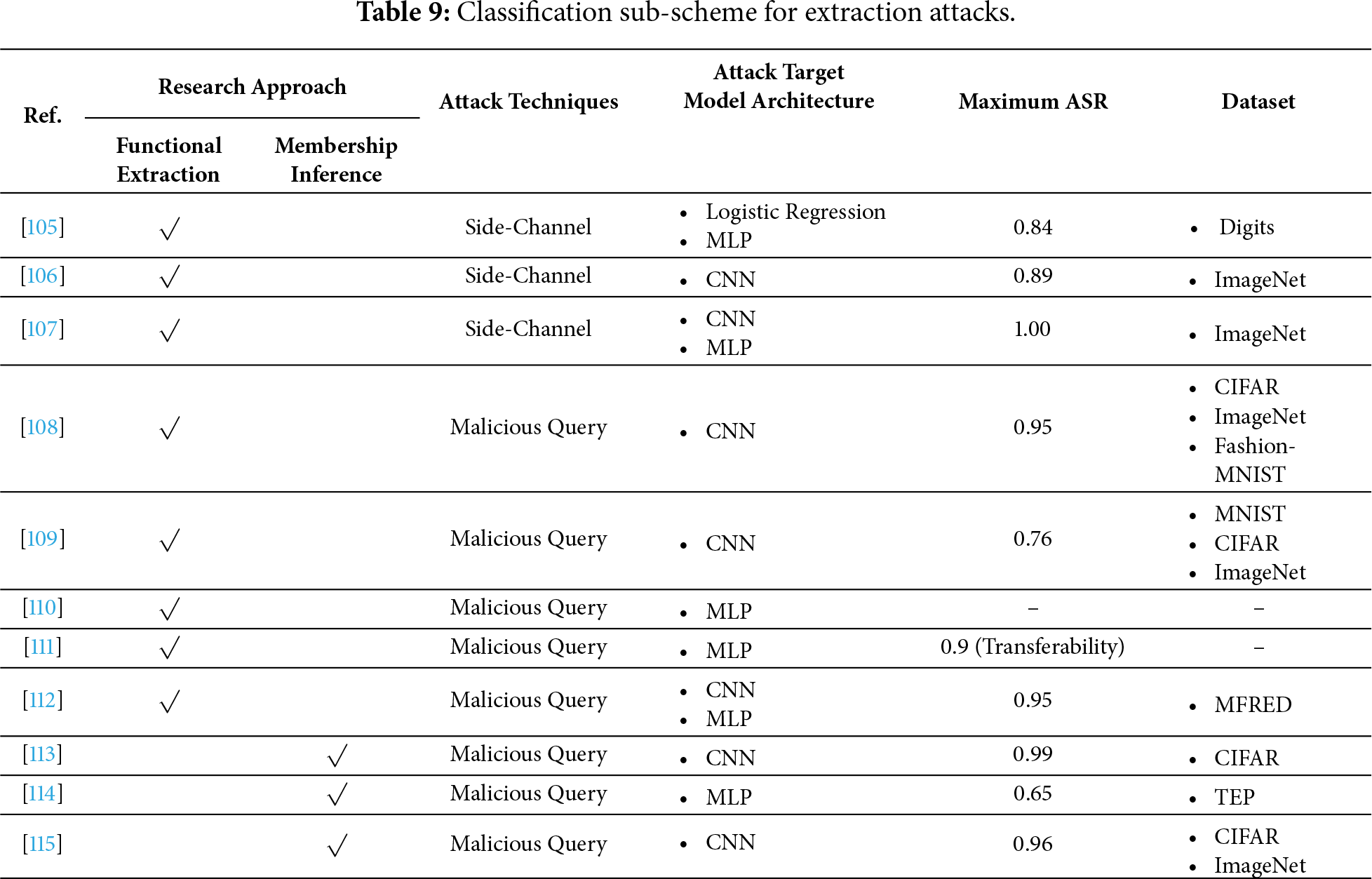

An extraction attack is a technique used to obtain a model’s functionality during its service stage or to analyze its outputs to determine whether specific inputs belong to its training data. Table 9 summarizes the literature classified under this category, with column descriptions consistent with previous sections. In functional extraction, the ASR represents the fidelity of the extracted proxy model to the victim model, as expressed in Eq. (3).

In a membership inference attack (MIA), the ASR represents the proportion of instances in which the attacker or attack model correctly determines whether a given sample belongs to the model’s training dataset, as expressed in Eq. (4). Here, membership refers to whether a particular sample is part of the model’s training dataset.
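The two success measures described above can be sketched as simple agreement ratios. The function names and sample values are hypothetical, and the formulas follow the verbal definitions given here (label agreement between proxy and victim, and the proportion of correct membership decisions) rather than reproducing Eqs. (3) and (4) verbatim.

```python
def fidelity(victim_outputs, proxy_outputs):
    """Share of queries on which the proxy model agrees with the victim model."""
    agree = sum(v == p for v, p in zip(victim_outputs, proxy_outputs))
    return agree / len(victim_outputs)

def mia_accuracy(is_member, guessed_member):
    """Share of samples whose training-set membership the attacker guessed correctly."""
    correct = sum(t == g for t, g in zip(is_member, guessed_member))
    return correct / len(is_member)

# Hypothetical outputs on five queries and four membership guesses.
fid = fidelity([1, 0, 1, 1, 0], [1, 0, 0, 1, 0])
acc = mia_accuracy([True, True, False, False], [True, False, False, False])
```

For regression-type victims such as soft sensors, label agreement would typically be replaced by an error tolerance between proxy and victim outputs; that variant is not shown.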

Literature addressing attacks in environments where AI models are deployed as APIs or services has been categorized. These attacks exploit weaknesses in authentication, authorization, resource management, and model behavior, leading to privacy breaches, intellectual property theft, or service disruption. Pan et al. [105] proposed an attack algorithm that performs model extraction through parallel side-channel analysis, emphasizing the role of machine learning models in controlling CPS, such as smart grids, autonomous vehicles, and healthcare systems. Chandrasekar et al. [106] identified the high cost of query-based model extraction and introduced a method that conducts model extraction via side-channel analysis on edge devices, such as FPGAs. An attack was introduced by Zhang et al. [107] that extracted a victim model via malicious circuits implanted in cloud FPGAs. A method was proposed by Sanyal et al. [108] for machine learning as a service that reduced query requirements by leveraging data independent of proxy models. Sun et al. [109] presented a model-theft technique based on GAN-synthesized data. Guo et al. [110] proposed constructing a proxy soft-sensor to generate knowledge-guided perturbations when direct access was unavailable. Bhattacharjee et al. [111] observed that real power grids primarily rely on physics-based algorithms rather than AI models; under a white-box assumption, they trained a neural-network surrogate replicating the physics-based model and used it to conduct adversarial attacks. Afrin and Ardakanian [112] assumed a black-box scenario, where neither the grid’s physical model nor the machine-learning architecture was known, and developed an adversarial attack method that trains a surrogate model to generate attack samples while accounting for statistical variance.

Luqman et al. [113] demonstrated that even with federated learning architectures and anonymization techniques, neural networks remain vulnerable to MIAs due to their tendency to memorize training data. Zhang et al. [114] showed that machine learning models used in industrial process monitoring systems are exposed to data-extraction risks when shared through APIs, presenting experimental results of MIAs under a black-box setting. Liu et al. [115] addressed training-data privacy concerns in encoder-based neural networks, proposing an attack method that exploits encoder similarity probabilities to perform MIAs.

This section examined adversarial attacks targeting AI models deployed in ICS, focusing on poisoning attacks, evasion attacks, and extraction attacks. Unlike conventional cyber threats that primarily exploit vulnerabilities in communication protocols or software implementations, the attacks reviewed in this section directly target the learning mechanisms, inference processes, and deployment interfaces of AI models. As a result, AI introduces a distinct and expanding attack surface across the ICS lifecycle.

Poisoning attacks primarily compromise the training and model-update stages by manipulating training data, model parameters, or aggregation processes. The reviewed studies show that such attacks can be realized through data poisoning, model poisoning, or backdoor trigger insertion, often exploiting retraining procedures, lifelong learning mechanisms, and FL architectures. In ICS, where models are continuously updated to adapt to changing operational conditions, adversaries can intentionally exploit model drift to conduct long-term poisoning attacks. The literature further demonstrates that FL, despite its privacy-preserving design, remains vulnerable to malicious clients and server-side manipulation, particularly under heterogeneous data distributions. These attacks predominantly affect models deployed at the application layer and control layer, while their consequences may propagate to the physical layer through corrupted control decisions.

Evasion attacks target the inference stage by introducing carefully crafted perturbations into model inputs, enabling adversaries to bypass detection or induce unsafe system behavior without modifying the model itself. The reviewed works indicate that evasion attacks are especially critical in CPS, where AI models are directly involved in monitoring, predictive maintenance, intrusion detection, and physical control. Physical adversarial patches, input-space perturbations, and gradient-based methods have been shown to successfully deceive CNN-, RNN-, LSTM-, and GNN-based models while preserving functional validity and physical plausibility. Notably, evasion attacks often target physical-layer and control-layer models by manipulating sensor readings, actuator signals, or protocol traffic, thereby posing direct risks to system safety and reliability.

Extraction attacks exploit the service and deployment stage of AI models to infer model functionality or training data characteristics. Functional extraction attacks aim to replicate the behavior of victim models through malicious queries or side-channel information, while MIAs infer whether specific samples were included in the training dataset. The reviewed literature demonstrates that AI models deployed as APIs, edge services, or cloud-based platforms are particularly vulnerable to such attacks, even under black-box assumptions. In ICS, successful extraction attacks can lead to IP theft, privacy leakage, and the facilitation of subsequent adversarial attacks by enabling white-box or surrogate-model-based exploitation. These attacks primarily target application-layer deployments but may indirectly impact control and physical layers by enabling more precise and stealthy follow-up attacks.
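The intuition behind an MIA under black-box access can be sketched with a simple loss-threshold test: samples on which the model is unusually confident are guessed to be training members. The confidence values, the threshold of 0.3, and the function names are hypothetical; this is one simple attack variant shown under those assumptions, not the specific method of any reviewed study.

```python
import math

def cross_entropy(confidence_on_true_label):
    """Loss of a single sample given the model's confidence in its true label."""
    return -math.log(confidence_on_true_label)

def infer_membership(confidence_on_true_label, threshold=0.3):
    """Guess 'member' when the per-sample loss falls below the threshold."""
    return cross_entropy(confidence_on_true_label) < threshold

# Hypothetical confidences returned by a black-box prediction API.
train_like = [0.99, 0.97, 0.95]    # samples the model has likely memorized
unseen_like = [0.55, 0.40, 0.70]   # samples the model has likely not seen

member_guesses = [infer_membership(c) for c in train_like]
nonmember_guesses = [infer_membership(c) for c in unseen_like]
print(member_guesses, nonmember_guesses)
```

In practice the threshold would have to be calibrated, for example with shadow models, and well-generalized models leak far less signal than this toy example implies.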

Overall, the analysis in Section 5 reveals that adversarial attacks against AI in ICS are stage-dependent yet cross-layer in impact. Poisoning attacks compromise model integrity during training and updating, evasion attacks undermine trust during inference, and extraction attacks threaten confidentiality during deployment and service. While these attacks often originate at the application layer, their effects can cascade through the control layer and ultimately manifest as physical consequences in the plant.

These findings highlight that securing AI-enabled ICS requires a paradigm shift from traditional perimeter-based or protocol-centric defenses toward holistic protection of the AI lifecycle. Robust training procedures, adversarially resilient model architectures, secure deployment mechanisms, and continuous monitoring of model behavior are essential to mitigate these risks. The insights derived from this section provide a foundation for the concluding discussion in the next section, which synthesizes the opportunities and challenges of AI in ICS cybersecurity and outlines future research directions.

6.1 RQ1. Are Machine Learning Algorithms for Attack Detection Equally Applicable to Both Information and Communication Technology and ICS?

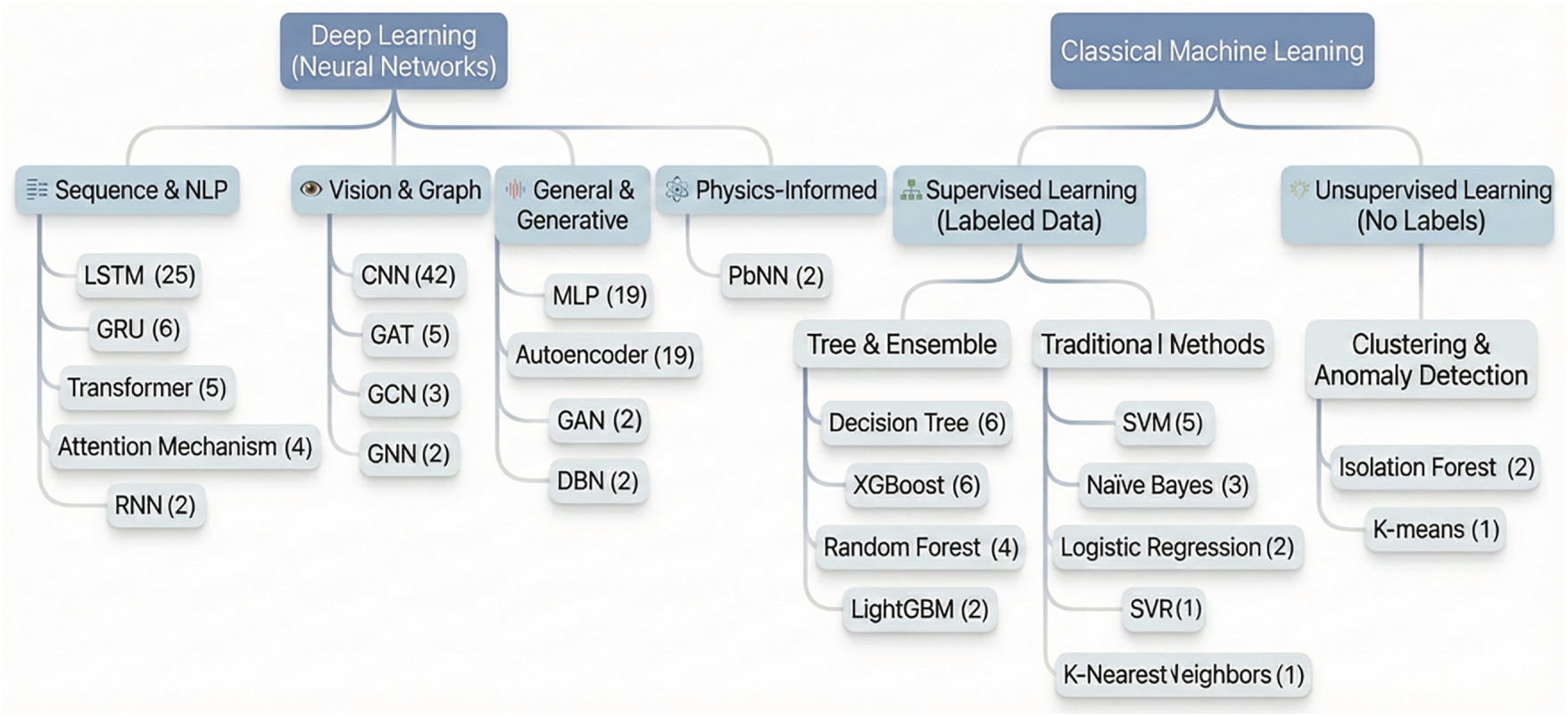

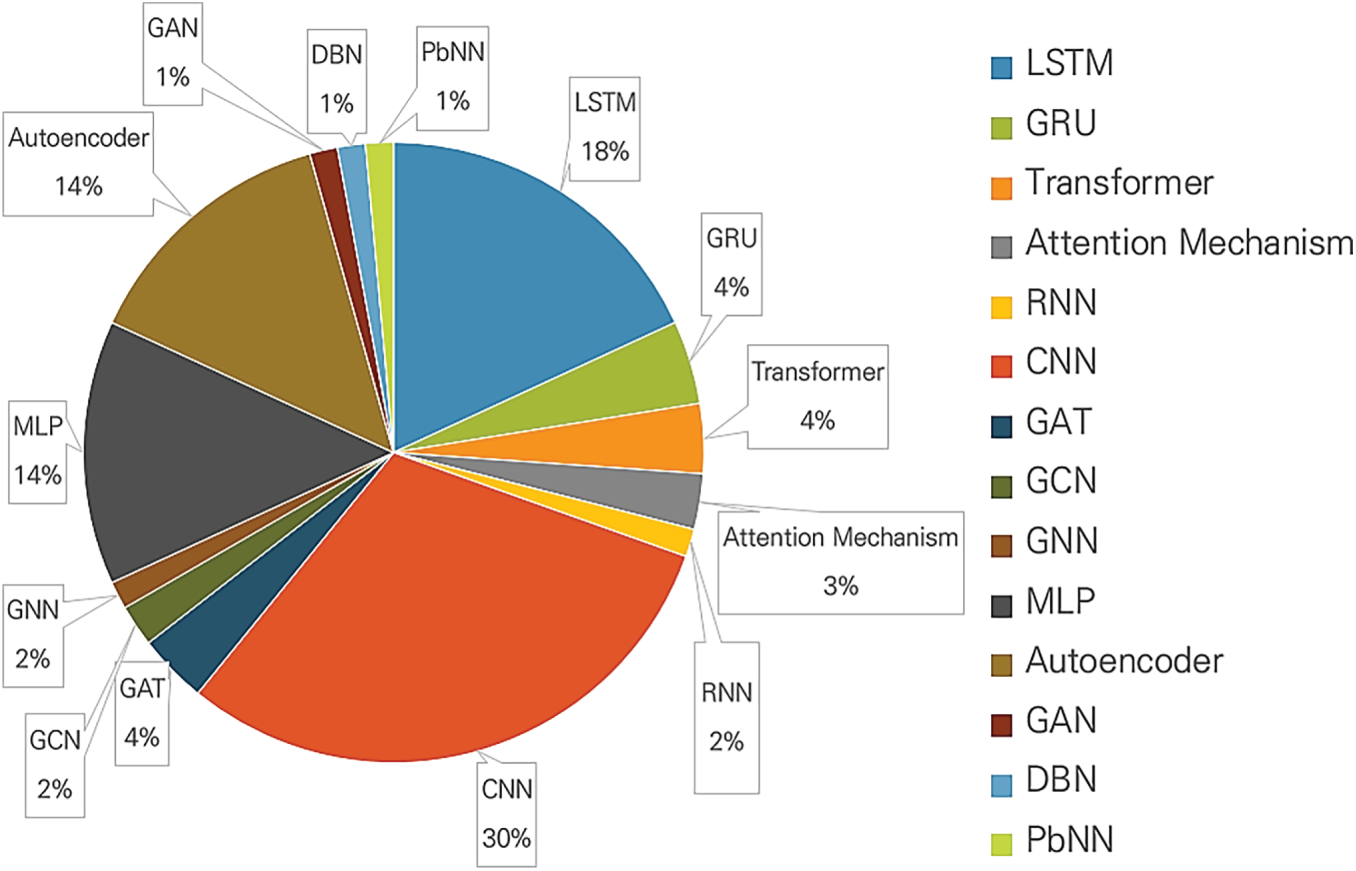

The 101 papers analyzed in this survey utilized a diverse range of deep learning and machine learning algorithms, as summarized in Fig. 12. As in AI-based attack detection research in general environments [153,154], diverse machine learning algorithms were also employed in ICS. A distinguishing feature was the use of PbNN in ICS. Details are covered below.

Figure 12: Machine learning algorithms and deep learning architectures in this research scope.

Deep learning approaches were classified into four subcategories, reflecting the widespread adoption of complex sequence data processing and advanced neural network architectures. The Sequence and Natural Language Processing category primarily comprises algorithms that excel at analyzing time-ordered data. The most frequently used architecture was LSTM (25 cases), followed by GRU (6 cases), Transformer (5 cases), Attention Mechanism (4 cases), and basic RNN (2 cases). This clearly indicates that research on attack detection based on time-series data, which considers temporal features, is the most active area within the ICS environment. The proportion of each discussed deep learning architecture is shown in Fig. 13.

Figure 13: Proportion by discussed deep learning architecture.

In the Vision and Graph category, CNNs (42 cases) showed an overwhelmingly high frequency. This implies that research converting data into 2D images or processing sensor data as feature maps for anomaly detection is highly prevalent. Furthermore, Graph Neural Networks such as GAT (5 cases), GCN (3 cases), and GNN (2 cases) indicate the emergence of research analyzing network connectivity and relationships between ICS components. Notably, CNNs were identified as the most extensively used architecture across the entire deep learning domain within the reviewed literature.

The General or Generative category includes general neural network structures utilized for various purposes, as well as models that generate data to learn normal/abnormal patterns. MLP (19 cases) continues to show significant usage as a fundamental deep neural network structure. Autoencoders (19 cases) were mainly used for unsupervised anomaly detection, while GANs (2 cases) and DBNs (2 cases) were also utilized in some studies.

While the deep learning architectures under study did not differ significantly from those in general IT environments, the high frequency of discussions regarding LSTM and CNN is a notable distinction. Another unique aspect is the use of PbNNs for attack or anomaly detection at the physical layer. PbNNs integrate physical laws with deep learning techniques, creating neural networks that satisfy physical equations while being data-driven. Generally, deep learning requires large amounts of data; however, by incorporating physical laws, these models can generate predictions that do not violate physical invariants even in data-scarce scenarios. The core mechanism of PbNNs lies in the design of the loss function, which is composed of the sum of the data loss function and the physical loss function. This approach is a neural network application specifically tailored for Cyber-Physical Systems.
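The composite loss described above can be sketched for a toy water tank whose level obeys the mass balance dh/dt = (q_in - q_out) / A. The tank model, the forward-difference approximation, the weighting factor `lam`, and all names below are assumptions made for illustration; actual PbNN studies typically use automatic differentiation inside a deep learning framework.

```python
def mse(values):
    """Mean of squared values."""
    return sum(v * v for v in values) / len(values)

def pbnn_loss(h_pred, h_meas, q_in, q_out, area, dt, lam=1.0):
    """Composite loss = data loss + lam * physics loss (toy tank example)."""
    # Data loss: mismatch between predicted and measured tank levels.
    data_loss = mse([p - m for p, m in zip(h_pred, h_meas)])
    # Physics loss: residual of dh/dt = (q_in - q_out) / area, with dh/dt
    # approximated by a forward difference over the predicted levels.
    residuals = [
        (h_pred[k + 1] - h_pred[k]) / dt - (q_in[k] - q_out[k]) / area
        for k in range(len(h_pred) - 1)
    ]
    physics_loss = mse(residuals)
    return data_loss + lam * physics_loss, data_loss, physics_loss

# Hypothetical scenario: constant net inflow of 1.0 into a tank of area 2.0.
q_in, q_out = [1.0, 1.0, 1.0], [0.0, 0.0, 0.0]
h_meas = [0.0, 0.5, 1.0, 1.5]

total_ok, _, physics_ok = pbnn_loss(h_meas, h_meas, q_in, q_out, area=2.0, dt=1.0)
h_bad = [0.0, 0.5, 1.0, 1.0]   # last step violates the mass balance
total_bad, data_bad, physics_bad = pbnn_loss(h_bad, h_meas, q_in, q_out, area=2.0, dt=1.0)
print(total_ok, total_bad)
```

A prediction that matches both the measurements and the mass balance yields zero loss, while a prediction that stalls the level despite continued inflow is penalized by the physics term even where labeled data are scarce.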

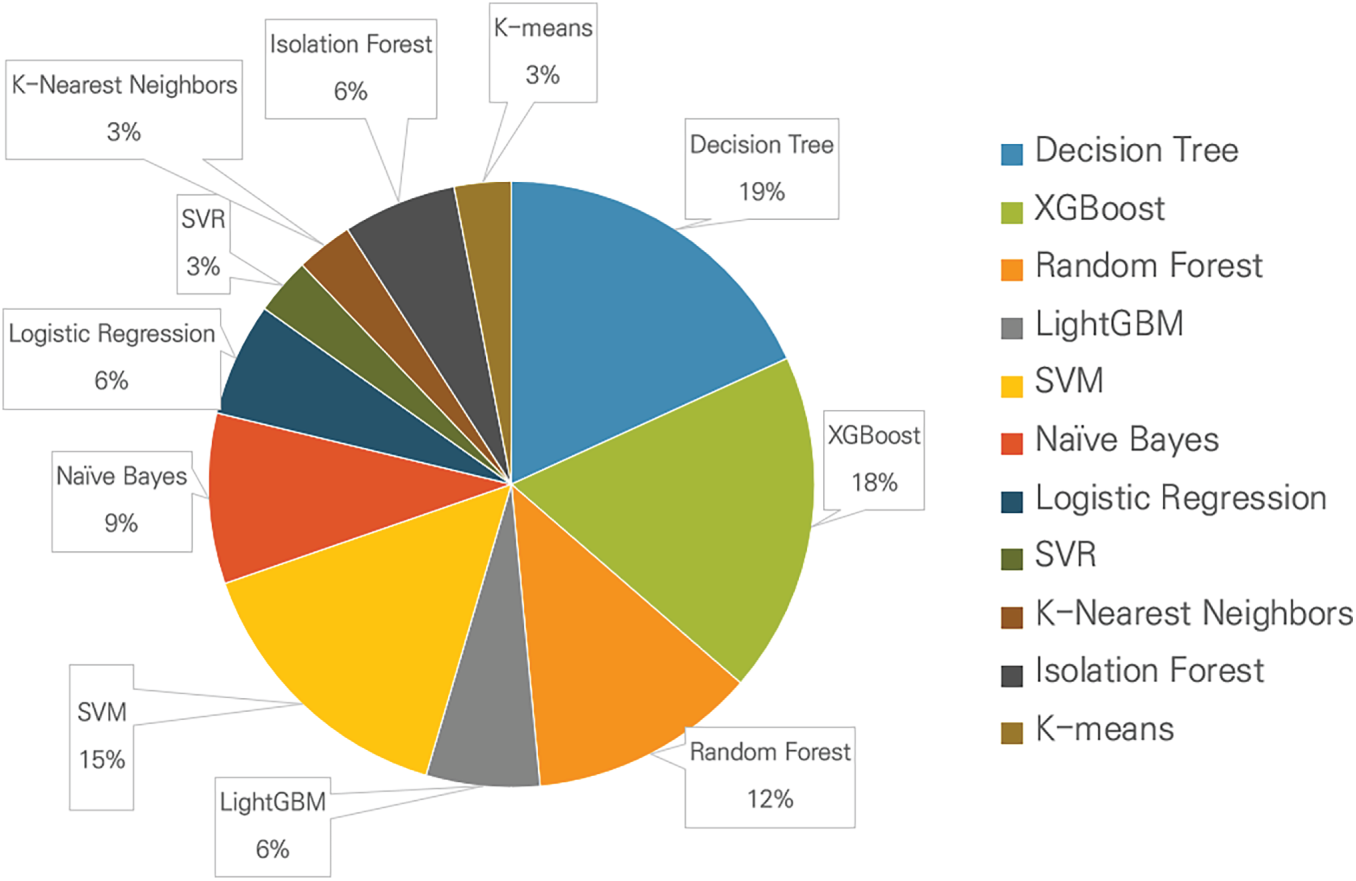

6.1.2 Classical Machine Learning

In the Classical Machine Learning category, algorithms were divided into supervised and unsupervised learning. Machine learning continues to be studied for ICS targets because it offers relatively lower computational complexity and higher interpretability compared to deep learning. This research trend is grounded in the environmental characteristics of ICS, which are resource-constrained and prioritize real-time performance. The proportion of each discussed machine learning algorithm is shown in Fig. 14.

Figure 14: Proportion by discussed classical machine learning algorithm.

In Supervised Learning approaches, which classify attacks using labeled training data, Tree and Ensemble methods, classical classifiers, and regression models were discussed. The Tree and Ensemble category is dominated by ensemble techniques known for their superior prediction performance and speed. Models such as Decision Tree (6 cases), XGBoost (6 cases), Random Forest (4 cases), and LightGBM (2 cases) were used; these are interpreted as being suitable for practical environments due to the ease of identifying feature importance. In the Classifiers and Regression Models category, SVM (5 cases), Naive Bayes (3 cases), Logistic Regression (2 cases), SVR (1 case), and K-Nearest Neighbors (1 case) were discussed.

From the perspective of Unsupervised Learning, which learns from unlabeled data, the approach involves learning normal data patterns and detecting deviations as attacks. Isolation Forest (2 cases) and K-means (1 case) were discussed, demonstrating that unsupervised anomaly detection techniques are consistently applied due to the difficulty of securing actual attack data labels in the ICS environment.
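The principle of learning a normal baseline and flagging deviations can be illustrated with a deliberately simple statistical sketch. It uses a mean and standard deviation threshold rather than Isolation Forest or K-means, and the sensor readings, the factor `k`, and the function names are hypothetical.

```python
from statistics import mean, stdev

def fit_baseline(normal_values):
    """Learn the normal operating range from unlabeled, attack-free readings."""
    return mean(normal_values), stdev(normal_values)

def is_anomalous(value, baseline, k=3.0):
    """Flag a reading that deviates more than k standard deviations from normal."""
    mu, sigma = baseline
    return abs(value - mu) > k * sigma

# Hypothetical readings from one sensor during normal operation.
normal = [50.1, 49.8, 50.3, 50.0, 49.9, 50.2, 49.7, 50.0]
baseline = fit_baseline(normal)

# Two ordinary readings followed by one that is far outside the learned range.
flags = [is_anomalous(v, baseline) for v in [50.1, 49.6, 57.5]]
print(flags)
```

No attack labels are needed at any point, which is the property that makes this family of methods attractive when labeled ICS attack data are difficult to obtain.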

6.2 RQ2. What Advantages Do Machine-Learning-Based AI Techniques Offer for Cybersecurity in Industrial Control Systems?

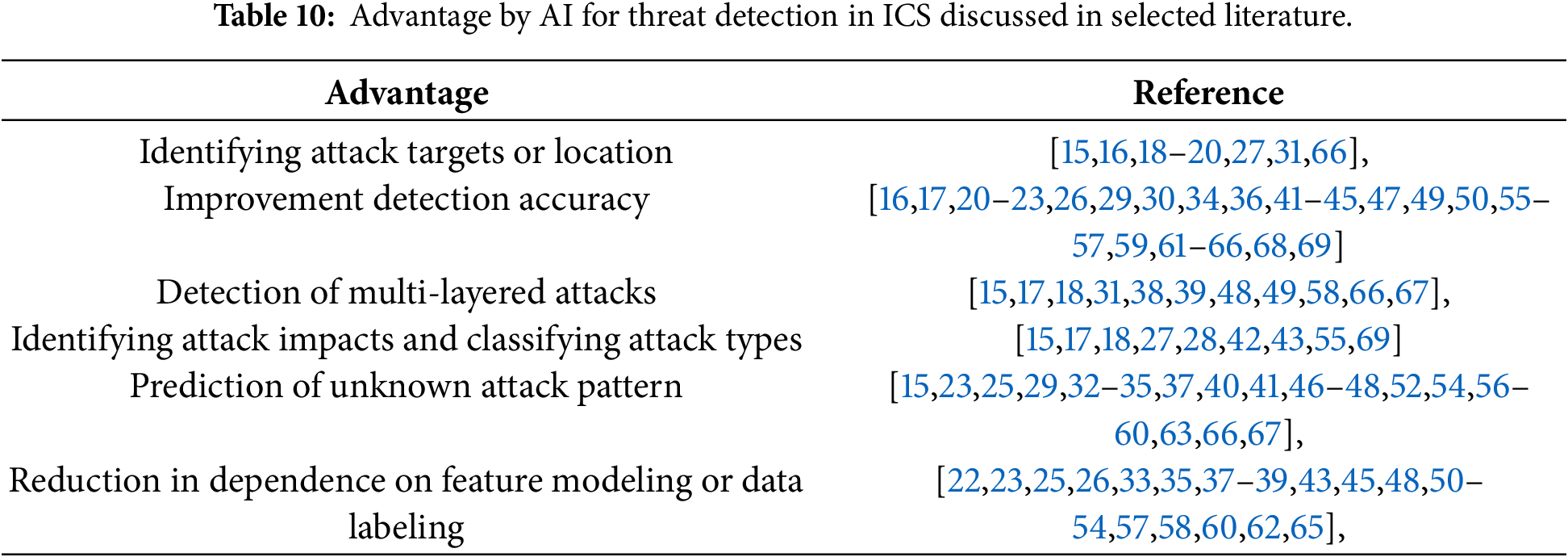

A comprehensive analysis of the 55 selected studies [15–69] identified various advantages of AI-based threat detection over non-AI-based threat detection. These advantages include identifying attack targets or locations, improving detection accuracy, detecting multi-layered attacks, identifying attack impacts and classifying attack types, predicting unknown attack patterns, and reducing dependence on feature modeling or data labeling. The advantages of AI-based threat detection discussed in the selected literature are summarized in Table 10. Since these advantages are not categorized by physical, control, or application layer, they are all presented below.

6.2.1 Identifying Attack Targets or Location

The reviewed literature commonly discusses that machine-learning-based AI techniques enable not only the detection of cyberattacks but also the identification of attack targets or locations within industrial control systems. Several selected works report that AI models can analyze temporal correlations, spatial dependencies, and causal relationships among sensors, actuators, controllers, and network nodes to infer which components are affected by malicious activities. In particular, the literature highlights that learning-based approaches are capable of tracing abnormal behavior back to its point of origin or estimating attack propagation paths across interconnected subsystems. This capability is repeatedly emphasized as valuable in ICS environments, where tightly coupled cyber-physical processes often cause local attacks to escalate into system-wide disturbances. As discussed in the surveyed studies, identifying attack locations supports more precise fault isolation, reduces response time, and enables targeted mitigation actions without unnecessary disruption of normal operations.

6.2.2 Improving Detection Accuracy

The reviewed literature consistently identifies improved detection accuracy as one of the most significant advantages of AI-based threat detection in ICS. Many works in the literature report that machine learning and deep learning models outperform traditional rule-based, signature-based, or threshold-based approaches by learning complex, nonlinear patterns in high-dimensional industrial data. The literature emphasizes that ICS data inherently contain noise, transient fluctuations, and operational variability even under normal conditions, which often leads conventional methods to generate excessive false alarms or miss subtle attacks. In contrast, AI-based approaches discussed in the literature are shown to distinguish malicious deviations from legitimate process variations by capturing long-term temporal dependencies and multivariate correlations. As a result, the literature repeatedly reports higher accuracy, improved robustness, and more stable detection performance when AI techniques are applied to industrial control environments.

6.2.3 Detection of Multi-Layered Attacks

The reviewed literature frequently discusses the capability of AI-based techniques to detect multi-layer attacks across the entire ICS architecture, encompassing the physical, control, and application layers. The literature emphasizes that modern industrial attacks are seldom confined to a single layer and often involve the coordinated manipulation of network traffic, control commands, and physical process variables. Consequently, by jointly analyzing heterogeneous data collected from multiple layers, AI models are reported to detect cross-layer inconsistencies that are difficult to identify through single-layer analysis.

Furthermore, the literature discusses how AI-based approaches can be effective in detecting not only individual event-level anomalies but also multi-stage attacks consisting of temporally continuous attack steps and multi-attacks occurring either simultaneously or sequentially. According to various studies, attackers often conduct long-term operations by combining individual actions that mimic normal behavior, which makes it challenging to discern malicious intent through single-event analysis alone. AI models are reported to capture complex attack patterns and correlations between different stages by learning time-series dependencies and cross-layer relationships.

The literature repeatedly emphasizes that this capacity for cross-layer and temporal correlation analysis is particularly effective in detecting stealthy attacks, advanced persistent threats, and complex attack scenarios that might remain hidden if layers or single-point events were analyzed in isolation. Overall, the literature suggests that AI-based multi-layer analysis not only provides a more comprehensive perspective on system behavior but also enhances detection capabilities against continuous and sophisticated attack scenarios.

6.2.4 Identifying Attack Impacts and Classifying Attack Types

The reviewed literature discusses how AI-based threat detection techniques support not only attack identification but also impact analysis and the classification of attack types. According to the literature, machine learning-based models can infer how an attack affects process stability, availability, or safety by analyzing changes in system behavior. Furthermore, several studies report that AI techniques can classify detected anomalies into specific attack types such as denial-of-service, false data injection, and replay attacks.

Moreover, some literature emphasizes research efforts aimed at distinguishing whether anomalies observed in ICS environments are caused by cyberattacks or physical faults such as sensor failures, equipment degradation, or control errors. These studies propose approaches to differentiate between normal equipment failures and intentional malicious acts by analyzing correlations between process variables, temporal patterns, and inconsistencies between control commands and physical responses. This is discussed in the literature as a crucial factor for reducing unnecessary responses or system shutdowns caused by false positives in ICS environments.

Such capabilities are frequently highlighted as advantages that go beyond merely alerting to anomalies, as they help operators more accurately understand the nature, cause, and severity of an incident. According to the literature, the ability to analyze attack impact, classify attack types, and distinguish between cyberattacks and physical faults enables more rational decision-making, improves incident response prioritization, and effectively supports the development of appropriate response and recovery strategies in industrial settings.

6.2.5 Prediction of Unknown Attack Patterns

The reviewed literature also emphasizes the advantage of AI-based approaches in predicting and detecting unknown or previously unseen attack patterns. Many works in the literature note that in ICS environments, it is impractical to obtain labeled datasets covering all possible attack scenarios due to the rarity and diversity of real-world attacks. Consequently, the literature highlights unsupervised, semi-supervised, and self-supervised learning as effective means for learning normal operational behavior and identifying deviations from that baseline. The reviewed literature reports that such approaches enable the detection of novel or zero-day attacks without relying on predefined attack signatures. This adaptability to evolving threats is repeatedly discussed in the literature as a key benefit of AI-based threat detection, particularly in long-lived industrial systems where attack techniques continuously change over time.

6.2.6 Reduction in Dependence on Feature Modeling or Data Labeling